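This chapter explains how WebRTC captures audio and video data. It covers:

1. Getting a video stream from a webcam

2. Capturing an audio stream

3. Setting the camera resolution

4. Rendering video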

6-1 [Groundwork: concepts before you start] WebRTC audio and video capture

The correct constraints object is:

    var constraints = {
      video: true,
      audio: true
    };
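Per the Media Capture and Streams spec, `getUserMedia()` rejects with a `TypeError` when the constraints request neither audio nor video, for example `{ video: false, audio: false }`. A minimal sketch of a pre-check; the helper name `hasRequestedTrack` is hypothetical and not part of any API:

```javascript
// Hypothetical helper: returns true if the constraints ask for at least one
// kind of track. A value counts as "requested" when it is true or a
// constraints object (e.g. { width: 640 }); false or undefined does not.
function hasRequestedTrack(constraints) {
  if (!constraints) {
    return false;
  }
  return ['audio', 'video'].some(function (kind) {
    var value = constraints[kind];
    return value === true || (typeof value === 'object' && value !== null);
  });
}

console.log(hasRequestedTrack({ video: true, audio: true }));   // true
console.log(hasRequestedTrack({ video: false, audio: false })); // false
console.log(hasRequestedTrack({ video: { width: 640 } }));      // true
```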

    <!DOCTYPE html>
    <html>
      <head>
        <meta charset="utf-8">
        <title>WebRTC: capture video and audio</title>
      </head>
      <body>
        <video autoplay playsinline id="player"></video>
        <script src="https://webrtc.github.io/adapter/adapter-latest.js"></script>
        <script src="./js/client.js"></script>
      </body>
    </html>

    'use strict';

    var videoplay = document.querySelector('video#player');

    function gotMediaStream(stream) {
      // Show the captured stream in the <video> element
      videoplay.srcObject = stream;
      return navigator.mediaDevices.enumerateDevices();
    }

    function handleError(err) {
      console.log('getUserMedia error:', err);
    }

    if (!navigator.mediaDevices || !navigator.mediaDevices.getUserMedia) {
      console.log('getUserMedia is not supported!');
    } else {
      var constraints = {
        video: true,
        audio: true
      };
      navigator.mediaDevices.getUserMedia(constraints)
        .then(gotMediaStream)
        .catch(handleError);
    }
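The same 6-1 flow can be written with async/await. This is an illustrative sketch, not the course code: `startCapture` is a hypothetical name, and `mediaDevices` and the video element are passed in as parameters (an assumption made so the function can also run against stand-in objects outside a browser).

```javascript
// Same flow as client.js in 6-1, written with async/await.
// mediaDevices and videoElement are parameters (an assumption of this
// sketch) instead of being read from globals.
async function startCapture(mediaDevices, videoElement, constraints) {
  if (!mediaDevices || typeof mediaDevices.getUserMedia !== 'function') {
    throw new Error('getUserMedia is not supported!');
  }
  // Asks the user for permission and resolves with a MediaStream
  var stream = await mediaDevices.getUserMedia(constraints);
  // Render the stream in the <video> element
  videoElement.srcObject = stream;
  // Device labels are only filled in once permission has been granted
  return mediaDevices.enumerateDevices();
}

// In the browser:
// startCapture(navigator.mediaDevices,
//              document.querySelector('video#player'),
//              { video: true, audio: true })
//   .then(function (devices) { console.log(devices); })
//   .catch(function (err) { console.log('getUserMedia error:', err); });
```

Passing the dependencies in keeps the logic identical to the promise chain above while making it easy to exercise with fake objects.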

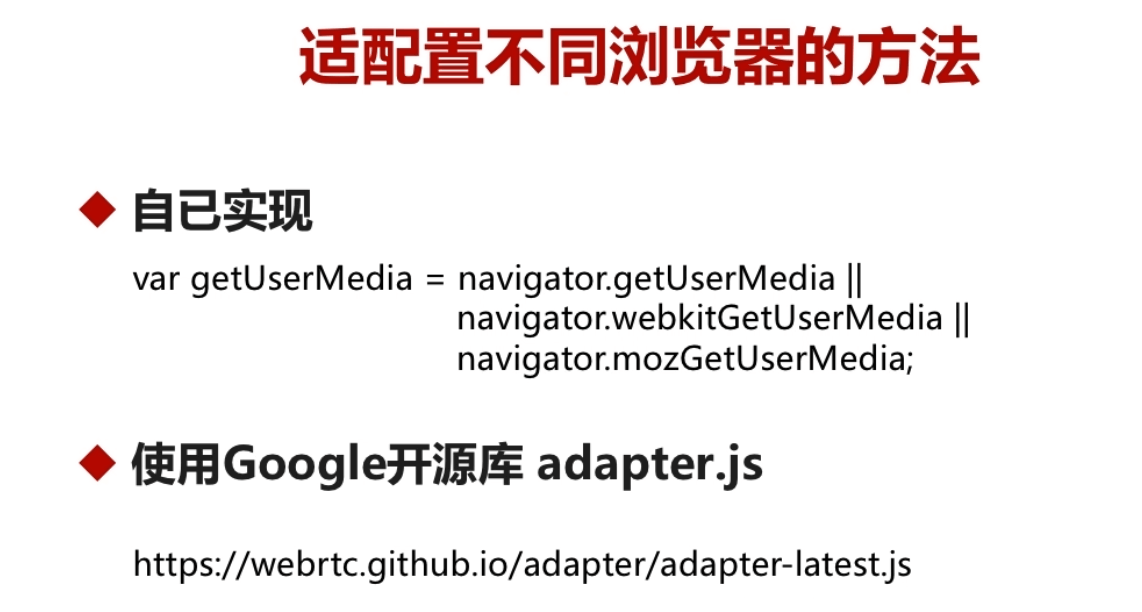

6-2 [Browser compatibility] Adapting the WebRTC API

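The page is the same as in 6-1. The compatibility layer is adapter.js, loaded by the first `<script>` tag before client.js, so that client.js can call the standard `navigator.mediaDevices.getUserMedia()` API across browsers.

    <!DOCTYPE html>
    <html>
      <head>
        <meta charset="utf-8">
        <title>WebRTC: capture video and audio</title>
      </head>
      <body>
        <video autoplay playsinline id="player"></video>
        <script src="https://webrtc.github.io/adapter/adapter-latest.js"></script>
        <script src="./js/client.js"></script>
      </body>
    </html>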
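adapter.js patches browser differences, but pages usually still test for the standard API, as client.js does. A sketch of that check as a standalone function; `supportsGetUserMedia` is a hypothetical name, and taking the navigator object as a parameter is an assumption so it can be tested on its own. Note that `navigator.mediaDevices` is only exposed in secure contexts, so on an insecure `http://` page the check also fails.

```javascript
// Returns true when the standard promise-based getUserMedia API exists.
// nav is normally window.navigator (passed in for this sketch).
function supportsGetUserMedia(nav) {
  return Boolean(
    nav &&
    nav.mediaDevices &&
    typeof nav.mediaDevices.getUserMedia === 'function'
  );
}

// In the browser:
// if (!supportsGetUserMedia(navigator)) {
//   console.log('getUserMedia is not supported!');
// }
```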

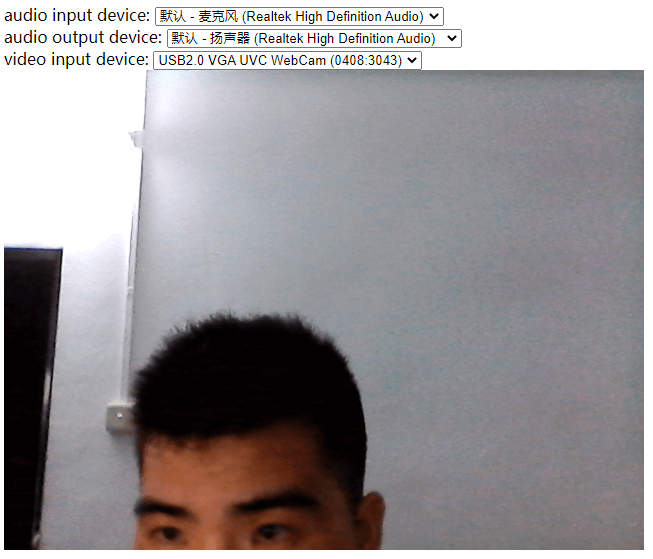

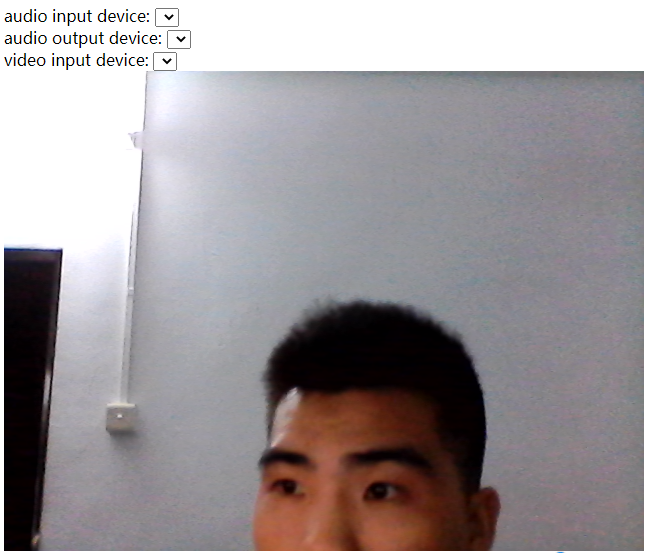

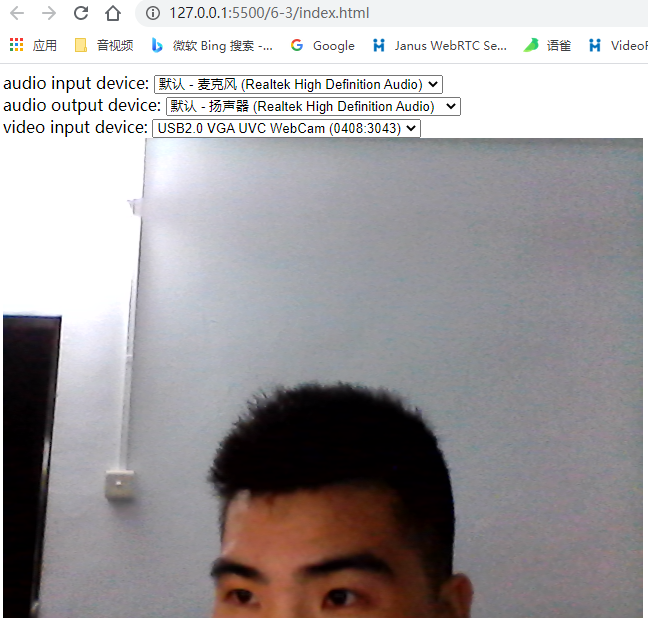

6-3 [Security] Getting permission to access audio and video devices

    <!DOCTYPE html>
    <html>
      <head>
        <meta charset="utf-8">
        <title>WebRTC: capture video and audio</title>
      </head>
      <body>
        <div>
          <label>audio input device:</label>
          <select id="audioSource"></select>
        </div>
        <div>
          <label>audio output device:</label>
          <select id="audioOutput"></select>
        </div>
        <div>
          <label>video input device:</label>
          <select id="videoSource"></select>
        </div>
        <video autoplay playsinline id="player"></video>
        <script src="https://webrtc.github.io/adapter/adapter-latest.js"></script>
        <script src="./js/client.js"></script>
      </body>
    </html>

    'use strict';

    // devices
    var audioSource = document.querySelector('select#audioSource');
    var audioOutput = document.querySelector('select#audioOutput');
    var videoSource = document.querySelector('select#videoSource');

    var videoplay = document.querySelector('video#player');

    // Add one <option> per device to the matching <select>
    function gotDevices(deviceInfos) {
      deviceInfos.forEach(function (deviceinfo) {
        var option = document.createElement('option');
        option.text = deviceinfo.label;
        option.value = deviceinfo.deviceId;
        if (deviceinfo.kind === 'audioinput') {
          audioSource.appendChild(option);
        } else if (deviceinfo.kind === 'audiooutput') {
          audioOutput.appendChild(option);
        } else if (deviceinfo.kind === 'videoinput') {
          videoSource.appendChild(option);
        }
      });
    }

    function gotMediaStream(stream) {
      videoplay.srcObject = stream;
      // Enumerate only after permission is granted, so labels are filled in
      return navigator.mediaDevices.enumerateDevices();
    }

    function handleError(err) {
      console.log('getUserMedia error:', err);
    }

    if (!navigator.mediaDevices || !navigator.mediaDevices.getUserMedia) {
      console.log('getUserMedia is not supported!');
    } else {
      var constraints = {
        video: true,
        audio: false
      };
      navigator.mediaDevices.getUserMedia(constraints)
        .then(gotMediaStream)
        .then(gotDevices)
        .catch(handleError);
    }
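Until the user grants permission, `enumerateDevices()` returns entries whose `label` is an empty string, so the lists above would show blank options. A minimal sketch of a helper (hypothetical name `groupDevicesByKind`) that groups the entries by `kind` and substitutes placeholder text for missing labels; the placeholder format is an assumption.

```javascript
// Hypothetical helper: splits the array returned by enumerateDevices()
// into the three lists used by the <select> elements. An empty label
// (no permission yet) is replaced with a placeholder like "videoinput 1".
function groupDevicesByKind(deviceInfos) {
  var groups = { audioinput: [], audiooutput: [], videoinput: [] };
  deviceInfos.forEach(function (info) {
    var list = groups[info.kind];
    if (!list) {
      return; // ignore kinds this page does not display
    }
    list.push({
      value: info.deviceId,
      text: info.label || info.kind + ' ' + (list.length + 1)
    });
  });
  return groups;
}
```

In the page, each `{ value, text }` entry would become an `<option>` in the corresponding `<select>`.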

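`getUserMedia()` is only available in a secure context: the page must be served over HTTPS or from the local machine (for example `http://localhost`). Device labels are also only returned after the user grants permission, which is why `gotDevices()` runs after `getUserMedia()` succeeds.

Deploy the page to a server and access it over HTTPS.

Run it locally by double-clicking index.html.

Finally, serve it with a local server from VS Code.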
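The HTTPS requirement can be illustrated with a simplified check. This is a rough approximation, not the browser's full secure-context algorithm (which covers more cases); inside a page, the built-in `window.isSecureContext` flag is the reliable source. `looksSecureForCapture` is a hypothetical name.

```javascript
// Simplified approximation of the secure-context rule for getUserMedia:
// HTTPS pages, or HTTP pages served from the local machine.
// Real browsers apply more detailed rules; prefer window.isSecureContext.
function looksSecureForCapture(pageUrl) {
  var url = new URL(pageUrl);
  if (url.protocol === 'https:') {
    return true;
  }
  var localHosts = ['localhost', '127.0.0.1', '[::1]'];
  return url.protocol === 'http:' && localHosts.indexOf(url.hostname) !== -1;
}

// In the browser:
// console.log(window.isSecureContext, looksSecureForCapture(location.href));
```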

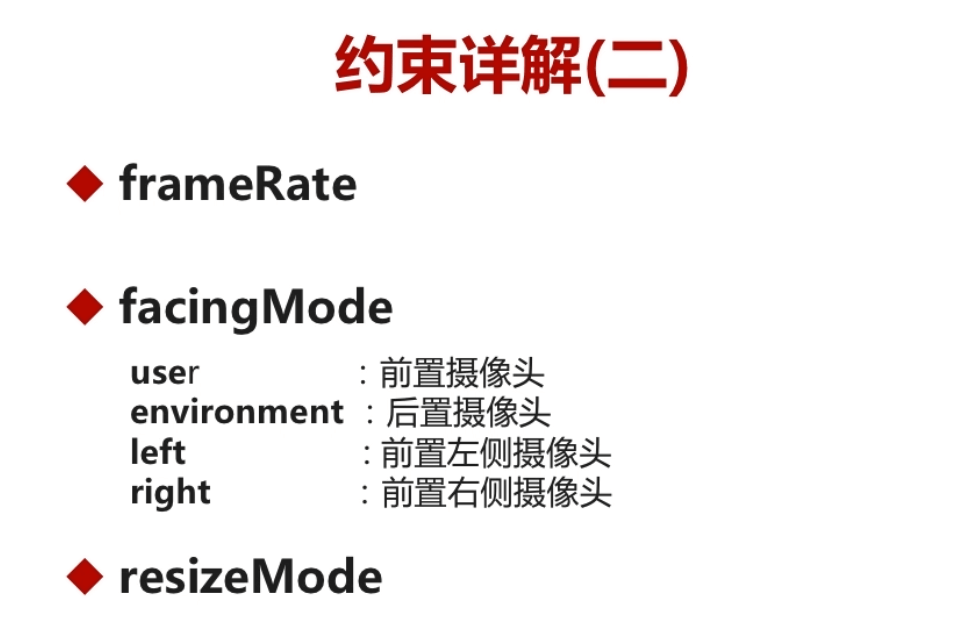

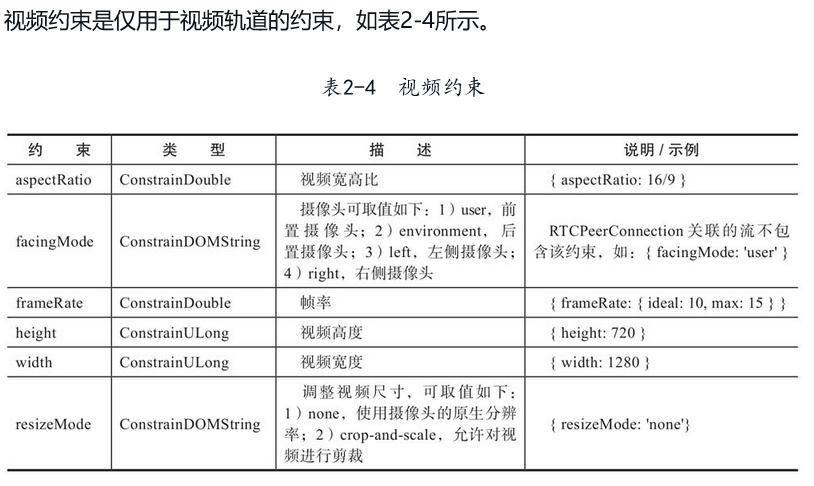

6-4 [Tuning video parameters] Video constraints

aspectRatio is usually left unset, since width and height already determine it (640 / 480 = 4:3).

    var constraints = {
      video: {
        width: 640,
        height: 480,
        frameRate: 30,
        facingMode: 'environment' // rear camera; 'user' selects the front camera
      },
      audio: false
    };
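In `getUserMedia()`, a bare value such as `width: 640` is treated as an ideal: the browser tries to get close to it but may deliver something else. Wrapping a value as `{ exact: ... }` makes it mandatory, and the call rejects with an `OverconstrainedError` if the camera cannot satisfy it. A minimal sketch with resolution presets; the preset names, sizes, and the function `videoConstraintsFor` are hypothetical.

```javascript
// Hypothetical resolution presets; names and sizes are examples.
var RESOLUTIONS = {
  qvga: { width: 320, height: 240 },
  vga: { width: 640, height: 480 },
  hd: { width: 1280, height: 720 }
};

// Builds a video constraint object for a preset. With strict = false the
// sizes are ideals (same effect as bare numbers); with strict = true they
// are exact, so getUserMedia fails if the camera cannot match them.
function videoConstraintsFor(preset, strict, facingMode) {
  var size = RESOLUTIONS[preset];
  if (!size) {
    throw new Error('unknown preset: ' + preset);
  }
  var wrap = function (v) { return strict ? { exact: v } : { ideal: v }; };
  return {
    width: wrap(size.width),
    height: wrap(size.height),
    frameRate: { ideal: 30 },
    facingMode: facingMode || 'user' // 'user' = front, 'environment' = rear
  };
}

// In the browser:
// navigator.mediaDevices.getUserMedia({
//   video: videoConstraintsFor('vga', false, 'environment'),
//   audio: false
// });
```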

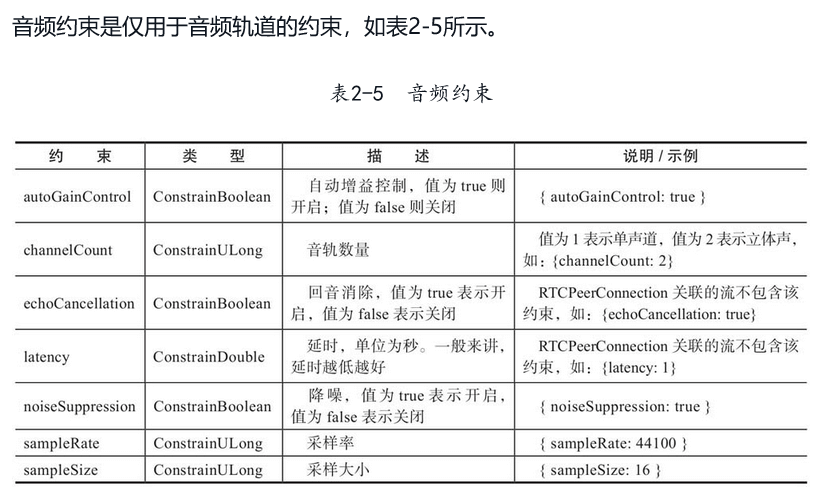

6-5 [Tuning audio parameters] Audio constraints

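    // deviceId is the value of the video input <select>
    // (var deviceId = videoSource.value; in the 6-6 code)
    var constraints = {
      video: {
        width: 640,
        height: 480,
        frameRate: 30,
        facingMode: 'environment',
        deviceId: deviceId ? { exact: deviceId } : undefined
      },
      audio: {
        noiseSuppression: true, // reduce background noise
        echoCancellation: true  // cancel echo picked up from the speakers
      }
    };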
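The `deviceId ? { exact: deviceId } : undefined` expression handles the first call, when the `<select>` is still empty and its value is `''`: the constraint is left undefined so the browser picks a camera. Once a device is chosen, `exact` makes the call fail instead of silently falling back to another camera. A sketch that wraps the 6-5 constraints in a function (hypothetical name `buildConstraints`) so this behavior can be checked:

```javascript
// Same constraints as 6-5, built from the selected video device id.
function buildConstraints(deviceId) {
  return {
    video: {
      width: 640,
      height: 480,
      frameRate: 30,
      facingMode: 'environment',
      // Empty string (nothing selected yet) -> let the browser choose
      deviceId: deviceId ? { exact: deviceId } : undefined
    },
    audio: {
      noiseSuppression: true,
      echoCancellation: true
    }
  };
}
```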

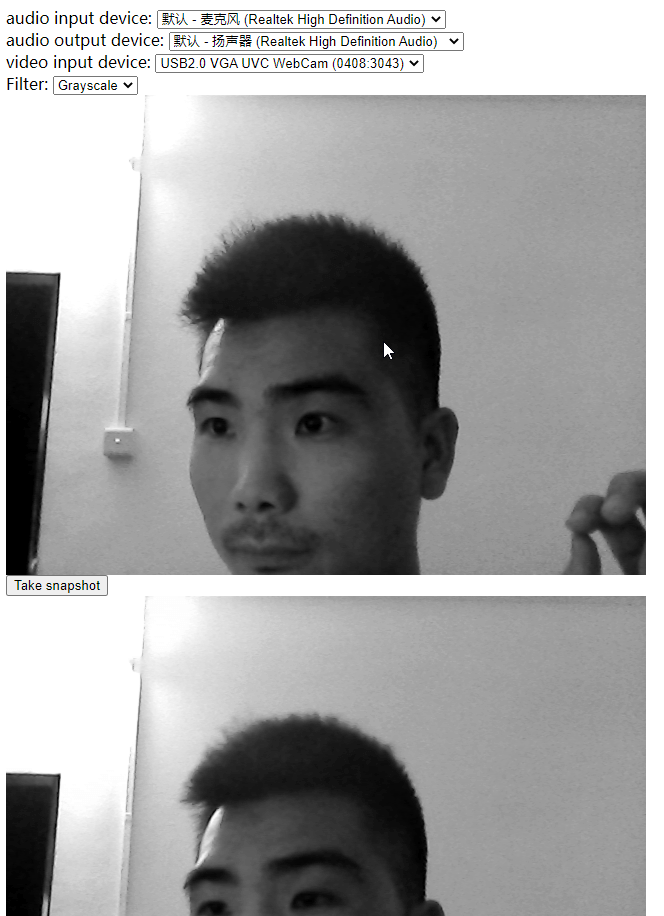

6-6 [Hands-on] Video effects

CSS `filter` property reference: https://www.runoob.com/cssref/css3-pr-filter.html

    <!DOCTYPE html>
    <html>
      <head>
        <meta charset="utf-8">
        <title>WebRTC: capture video and audio</title>
        <style>
          /* -webkit-filter for older WebKit browsers, filter for the rest */
          .none { -webkit-filter: none; filter: none; }
          .blur { -webkit-filter: blur(3px); filter: blur(3px); }
          .grayscale { -webkit-filter: grayscale(1); filter: grayscale(1); }
          .invert { -webkit-filter: invert(1); filter: invert(1); }
          .sepia { -webkit-filter: sepia(1); filter: sepia(1); }
        </style>
      </head>
      <body>
        <div>
          <label>audio input device:</label>
          <select id="audioSource"></select>
        </div>
        <div>
          <label>audio output device:</label>
          <select id="audioOutput"></select>
        </div>
        <div>
          <label>video input device:</label>
          <select id="videoSource"></select>
        </div>
        <div>
          <label>Filter:</label>
          <select id="filter">
            <option value="none">None</option>
            <option value="blur">Blur</option>
            <option value="grayscale">Grayscale</option>
            <option value="invert">Invert</option>
            <option value="sepia">Sepia</option>
          </select>
        </div>
        <div>
          <video autoplay playsinline id="player"></video>
        </div>
        <script src="https://webrtc.github.io/adapter/adapter-latest.js"></script>
        <script src="./js/client.js"></script>
      </body>
    </html>

client.js

    'use strict';

    // devices
    var audioSource = document.querySelector('select#audioSource');
    var audioOutput = document.querySelector('select#audioOutput');
    var videoSource = document.querySelector('select#videoSource');

    // filter
    var filtersSelect = document.querySelector('select#filter');

    var videoplay = document.querySelector('video#player');

    function gotDevices(deviceInfos) {
      // Clear the lists so repeated calls to start() do not add duplicate
      // entries, but keep the camera the user picked
      var selectedVideo = videoSource.value;
      audioSource.innerHTML = '';
      audioOutput.innerHTML = '';
      videoSource.innerHTML = '';

      deviceInfos.forEach(function (deviceinfo) {
        var option = document.createElement('option');
        option.text = deviceinfo.label;
        option.value = deviceinfo.deviceId;
        if (deviceinfo.kind === 'audioinput') {
          audioSource.appendChild(option);
        } else if (deviceinfo.kind === 'audiooutput') {
          audioOutput.appendChild(option);
        } else if (deviceinfo.kind === 'videoinput') {
          videoSource.appendChild(option);
        }
      });

      if (selectedVideo) {
        videoSource.value = selectedVideo;
      }
    }

    function gotMediaStream(stream) {
      window.stream = stream; // kept so start() can stop it later
      videoplay.srcObject = stream;
      return navigator.mediaDevices.enumerateDevices();
    }

    function handleError(err) {
      console.log('getUserMedia error:', err);
    }

    // (Re)start capture with the selected video device
    function start() {
      if (!navigator.mediaDevices || !navigator.mediaDevices.getUserMedia) {
        console.log('getUserMedia is not supported!');
        return;
      }
      // Release the previous camera and microphone before opening new ones
      if (window.stream) {
        window.stream.getTracks().forEach(function (track) {
          track.stop();
        });
      }
      var deviceId = videoSource.value;
      var constraints = {
        video: {
          width: 640,
          height: 480,
          frameRate: 30,
          facingMode: 'environment',
          deviceId: deviceId ? { exact: deviceId } : undefined
        },
        audio: {
          noiseSuppression: true,
          echoCancellation: true
        }
      };
      navigator.mediaDevices.getUserMedia(constraints)
        .then(gotMediaStream)
        .then(gotDevices)
        .catch(handleError);
    }

    start();

    videoSource.onchange = start;

    // Apply the selected CSS filter class to the <video> element
    filtersSelect.onchange = function () {
      videoplay.className = filtersSelect.value;
    };
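The CSS classes only change how an element is displayed. When the filter should become part of the pixel data (for example in a canvas snapshot), `CanvasRenderingContext2D.filter` accepts the same filter-function syntax, though browser support for it varies and should be checked first. A sketch that maps the `<select>` values to filter strings mirroring the `<style>` block; `FILTERS` and `filterFor` are hypothetical names.

```javascript
// Maps the Filter <select> values to CSS filter strings, mirroring the
// .none/.blur/.grayscale/.invert/.sepia classes in index.html.
var FILTERS = {
  none: 'none',
  blur: 'blur(3px)',
  grayscale: 'grayscale(1)',
  invert: 'invert(1)',
  sepia: 'sepia(1)'
};

function filterFor(name) {
  // Unknown values (including inherited keys like "toString") -> no filter
  return Object.prototype.hasOwnProperty.call(FILTERS, name) ? FILTERS[name] : 'none';
}

// In the browser, as an inline style instead of a class:
// videoplay.style.filter = filterFor(filtersSelect.value);
// Or baked into a canvas before drawing a frame:
// ctx.filter = filterFor(filtersSelect.value);
```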

https://coding.imooc.com/learn/questiondetail/pylDvPyrvK2YkBNm.html

6-7 [Hands-on] Capturing a picture from the video

1. First, get the video stream.

2. Add a snapshot button, plus a canvas to display the captured picture.

The implementation is as follows:

    <!DOCTYPE html>
    <html>
      <head>
        <meta charset="utf-8">
        <title>WebRTC: capture video and audio</title>
        <style>
          .none { -webkit-filter: none; filter: none; }
          .blur { -webkit-filter: blur(3px); filter: blur(3px); }
          .grayscale { -webkit-filter: grayscale(1); filter: grayscale(1); }
          .invert { -webkit-filter: invert(1); filter: invert(1); }
          .sepia { -webkit-filter: sepia(1); filter: sepia(1); }
        </style>
      </head>
      <body>
        <div>
          <label>audio input device:</label>
          <select id="audioSource"></select>
        </div>
        <div>
          <label>audio output device:</label>
          <select id="audioOutput"></select>
        </div>
        <div>
          <label>video input device:</label>
          <select id="videoSource"></select>
        </div>
        <div>
          <label>Filter:</label>
          <select id="filter">
            <option value="none">None</option>
            <option value="blur">Blur</option>
            <option value="grayscale">Grayscale</option>
            <option value="invert">Invert</option>
            <option value="sepia">Sepia</option>
          </select>
        </div>
        <div>
          <video autoplay playsinline id="player"></video>
        </div>
        <div>
          <button id="snapshot">Take snapshot</button>
        </div>
        <div>
          <canvas id="picture"></canvas>
        </div>
        <script src="https://webrtc.github.io/adapter/adapter-latest.js"></script>
        <script src="./js/client.js"></script>
      </body>
    </html>

    'use strict';

    // devices
    var audioSource = document.querySelector('select#audioSource');
    var audioOutput = document.querySelector('select#audioOutput');
    var videoSource = document.querySelector('select#videoSource');

    // filter
    var filtersSelect = document.querySelector('select#filter');

    // picture
    var snapshot = document.querySelector('button#snapshot');
    var picture = document.querySelector('canvas#picture');
    picture.width = 640;
    picture.height = 480;

    // videoplay
    var videoplay = document.querySelector('video#player');

    function gotDevices(deviceInfos) {
      // Clear the lists so repeated calls to start() do not add duplicates
      var selectedVideo = videoSource.value;
      audioSource.innerHTML = '';
      audioOutput.innerHTML = '';
      videoSource.innerHTML = '';

      deviceInfos.forEach(function (deviceinfo) {
        var option = document.createElement('option');
        option.text = deviceinfo.label;
        option.value = deviceinfo.deviceId;
        if (deviceinfo.kind === 'audioinput') {
          audioSource.appendChild(option);
        } else if (deviceinfo.kind === 'audiooutput') {
          audioOutput.appendChild(option);
        } else if (deviceinfo.kind === 'videoinput') {
          videoSource.appendChild(option);
        }
      });

      if (selectedVideo) {
        videoSource.value = selectedVideo;
      }
    }

    function gotMediaStream(stream) {
      window.stream = stream;
      videoplay.srcObject = stream;
      return navigator.mediaDevices.enumerateDevices();
    }

    function handleError(err) {
      console.log('getUserMedia error:', err);
    }

    // (Re)start capture with the selected video device
    function start() {
      if (!navigator.mediaDevices || !navigator.mediaDevices.getUserMedia) {
        console.log('getUserMedia is not supported!');
        return;
      }
      // Release the previous devices before opening new ones
      if (window.stream) {
        window.stream.getTracks().forEach(function (track) {
          track.stop();
        });
      }
      var deviceId = videoSource.value;
      var constraints = {
        video: {
          width: 640,
          height: 480,
          frameRate: 30,
          facingMode: 'environment',
          deviceId: deviceId ? { exact: deviceId } : undefined
        },
        audio: {
          noiseSuppression: true,
          echoCancellation: true
        }
      };
      navigator.mediaDevices.getUserMedia(constraints)
        .then(gotMediaStream)
        .then(gotDevices)
        .catch(handleError);
    }

    start();

    videoSource.onchange = start;

    filtersSelect.onchange = function () {
      videoplay.className = filtersSelect.value;
    };

    // Copy the current video frame into the canvas
    snapshot.onclick = function () {
      // If a filter was selected earlier, show the snapshot with it as well.
      // The class only changes how the canvas is displayed; the filter is
      // not written into the canvas pixels.
      picture.className = filtersSelect.value;
      picture.getContext('2d').drawImage(videoplay, 0, 0, picture.width, picture.height);
    };
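`drawImage(videoplay, 0, 0, 640, 480)` stretches the frame to the canvas, so a 1280x720 camera image ends up distorted in the 4:3 canvas. A minimal sketch of a letterboxing calculation, assuming the frame size is read from the `<video>` element's `videoWidth`/`videoHeight`; `fitFrame` is a hypothetical helper.

```javascript
// Computes where to draw a video frame inside a canvas so the frame keeps
// its aspect ratio (letterboxing) instead of being stretched.
// Returns null while the video has no frame yet (size 0).
function fitFrame(videoWidth, videoHeight, canvasWidth, canvasHeight) {
  if (!videoWidth || !videoHeight) {
    return null;
  }
  var scale = Math.min(canvasWidth / videoWidth, canvasHeight / videoHeight);
  var dw = Math.round(videoWidth * scale);
  var dh = Math.round(videoHeight * scale);
  return {
    dx: Math.round((canvasWidth - dw) / 2),
    dy: Math.round((canvasHeight - dh) / 2),
    dw: dw,
    dh: dh
  };
}

console.log(fitFrame(1280, 720, 640, 480)); // { dx: 0, dy: 60, dw: 640, dh: 360 }

// In the browser:
// var r = fitFrame(videoplay.videoWidth, videoplay.videoHeight, picture.width, picture.height);
// if (r) picture.getContext('2d').drawImage(videoplay, r.dx, r.dy, r.dw, r.dh);
```

A simpler alternative is to resize the canvas to `videoplay.videoWidth` x `videoplay.videoHeight` before each snapshot, which also avoids distortion.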

6-8 [Hands-on] Capturing audio only with WebRTC

    <!DOCTYPE html>
    <html>
      <head>
        <meta charset="utf-8">
        <title>WebRTC: capture video and audio</title>
        <style>
          .none { -webkit-filter: none; filter: none; }
          .blur { -webkit-filter: blur(3px); filter: blur(3px); }
          .grayscale { -webkit-filter: grayscale(1); filter: grayscale(1); }
          .invert { -webkit-filter: invert(1); filter: invert(1); }
          .sepia { -webkit-filter: sepia(1); filter: sepia(1); }
        </style>
      </head>
      <body>
        <div>
          <label>audio input device:</label>
          <select id="audioSource"></select>
        </div>
        <div>
          <label>audio output device:</label>
          <select id="audioOutput"></select>
        </div>
        <div>
          <label>video input device:</label>
          <select id="videoSource"></select>
        </div>
        <div>
          <label>Filter:</label>
          <select id="filter">
            <option value="none">None</option>
            <option value="blur">Blur</option>
            <option value="grayscale">Grayscale</option>
            <option value="invert">Invert</option>
            <option value="sepia">Sepia</option>
          </select>
        </div>
        <div>
          <audio autoplay controls id="audioplayer"></audio>
          <!-- <video autoplay playsinline id="player"></video> -->
        </div>
        <div>
          <button id="snapshot">Take snapshot</button>
        </div>
        <div>
          <canvas id="picture"></canvas>
        </div>
        <script src="https://webrtc.github.io/adapter/adapter-latest.js"></script>
        <script src="./js/client.js"></script>
      </body>
    </html>

    'use strict';

    // devices
    var audioSource = document.querySelector('select#audioSource');
    var audioOutput = document.querySelector('select#audioOutput');
    var videoSource = document.querySelector('select#videoSource');

    // filter
    var filtersSelect = document.querySelector('select#filter');

    // picture
    var snapshot = document.querySelector('button#snapshot');
    var picture = document.querySelector('canvas#picture');
    picture.width = 640;
    picture.height = 480;

    // player: audio only in this step
    //var videoplay = document.querySelector('video#player');
    var audioplay = document.querySelector('audio#audioplayer');

    function gotDevices(deviceInfos) {
      // Clear the lists so repeated calls to start() do not add duplicates
      var selectedVideo = videoSource.value;
      audioSource.innerHTML = '';
      audioOutput.innerHTML = '';
      videoSource.innerHTML = '';

      deviceInfos.forEach(function (deviceinfo) {
        var option = document.createElement('option');
        option.text = deviceinfo.label;
        option.value = deviceinfo.deviceId;
        if (deviceinfo.kind === 'audioinput') {
          audioSource.appendChild(option);
        } else if (deviceinfo.kind === 'audiooutput') {
          audioOutput.appendChild(option);
        } else if (deviceinfo.kind === 'videoinput') {
          videoSource.appendChild(option);
        }
      });

      if (selectedVideo) {
        videoSource.value = selectedVideo;
      }
    }

    function gotMediaStream(stream) {
      window.stream = stream;
      //videoplay.srcObject = stream;
      audioplay.srcObject = stream;
      return navigator.mediaDevices.enumerateDevices();
    }

    function handleError(err) {
      console.log('getUserMedia error:', err);
    }

    // (Re)start capture
    function start() {
      if (!navigator.mediaDevices || !navigator.mediaDevices.getUserMedia) {
        console.log('getUserMedia is not supported!');
        return;
      }
      if (window.stream) {
        window.stream.getTracks().forEach(function (track) {
          track.stop();
        });
      }
      var deviceId = videoSource.value;
      /* var constraints = {
        video: {
          width: 640,
          height: 480,
          frameRate: 30,
          facingMode: 'environment',
          deviceId: deviceId ? { exact: deviceId } : undefined
        },
        audio: {
          noiseSuppression: true,
          echoCancellation: true
        }
      }; */
      var constraints = {
        video: false,
        audio: true
      };
      navigator.mediaDevices.getUserMedia(constraints)
        .then(gotMediaStream)
        .then(gotDevices)
        .catch(handleError);
    }

    start();

    videoSource.onchange = start;

    // The filter and snapshot handlers need the <video> element, which is
    // commented out in this step, so they are disabled too (otherwise they
    // would throw a ReferenceError for videoplay)
    //filtersSelect.onchange = function () {
    //  videoplay.className = filtersSelect.value;
    //};
    //snapshot.onclick = function () {
    //  picture.className = filtersSelect.value;
    //  picture.getContext('2d').drawImage(videoplay, 0, 0, picture.width, picture.height);
    //};
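In the code above, `start()` still reads `videoSource.value`, although only audio is captured. A minimal sketch that instead builds audio-only constraints from the microphone chosen in the `audioSource` list; `audioOnlyConstraints` is a hypothetical name, and reusing the 6-5 audio processing options is an assumption.

```javascript
// Builds audio-only constraints for the microphone chosen in the
// audioSource <select>; an empty value lets the browser pick.
function audioOnlyConstraints(audioDeviceId) {
  var audio = {
    noiseSuppression: true,
    echoCancellation: true
  };
  if (audioDeviceId) {
    audio.deviceId = { exact: audioDeviceId };
  }
  return { video: false, audio: audio };
}

// In the browser:
// navigator.mediaDevices.getUserMedia(audioOnlyConstraints(audioSource.value))
//   .then(gotMediaStream).then(gotDevices).catch(handleError);
```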

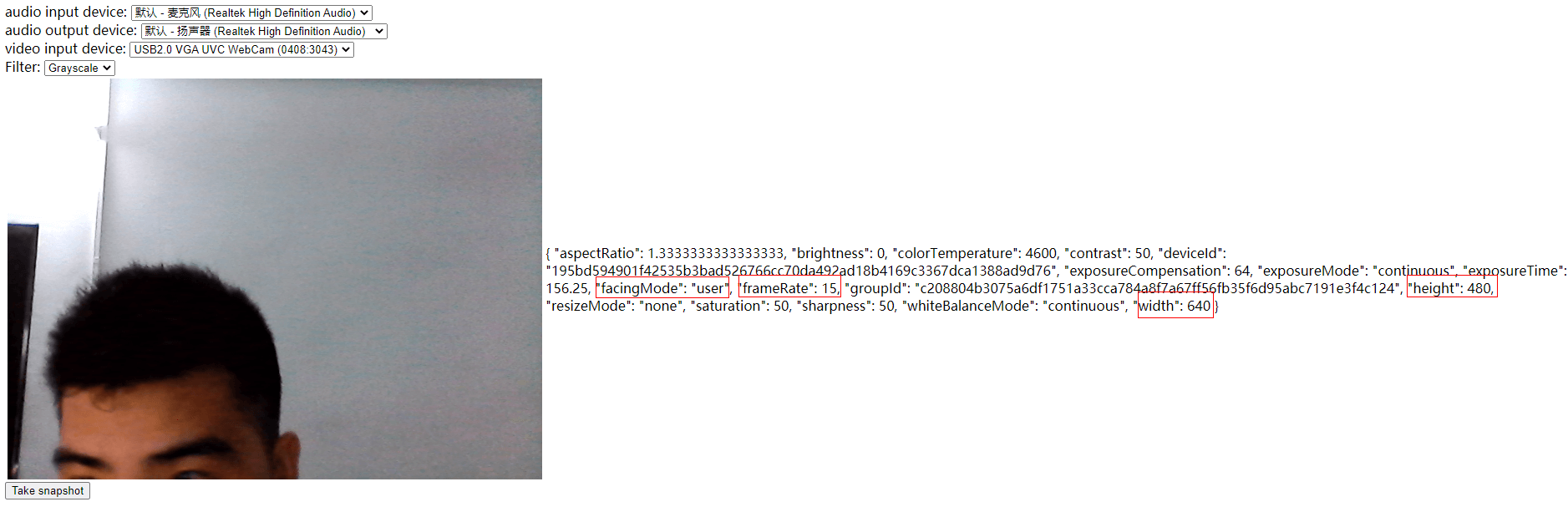

6-9 [Hands-on] The MediaStream API and reading the video settings

    <!DOCTYPE html>
    <html>
      <head>
        <meta charset="utf-8">
        <title>WebRTC: capture video and audio</title>
        <style>
          .none { -webkit-filter: none; filter: none; }
          .blur { -webkit-filter: blur(3px); filter: blur(3px); }
          .grayscale { -webkit-filter: grayscale(1); filter: grayscale(1); }
          .invert { -webkit-filter: invert(1); filter: invert(1); }
          .sepia { -webkit-filter: sepia(1); filter: sepia(1); }
          /* keep the line breaks of the JSON shown in div#constraints */
          .output { white-space: pre; }
        </style>
      </head>
      <body>
        <section>
          <div>
            <label>audio input device:</label>
            <select id="audioSource"></select>
          </div>
          <div>
            <label>audio output device:</label>
            <select id="audioOutput"></select>
          </div>
          <div>
            <label>video input device:</label>
            <select id="videoSource"></select>
          </div>
        </section>
        <section>
          <div>
            <label>Filter:</label>
            <select id="filter">
              <option value="none">None</option>
              <option value="blur">Blur</option>
              <option value="grayscale">Grayscale</option>
              <option value="invert">Invert</option>
              <option value="sepia">Sepia</option>
            </select>
          </div>
        </section>
        <section>
          <table>
            <!-- <audio autoplay controls id="audioplayer"></audio> -->
            <tr>
              <td><video autoplay playsinline id="player"></video></td>
              <td><div id='constraints' class='output'></div></td>
            </tr>
          </table>
        </section>
        <section>
          <div>
            <button id="snapshot">Take snapshot</button>
          </div>
          <div>
            <canvas id="picture"></canvas>
          </div>
        </section>
        <script src="https://webrtc.github.io/adapter/adapter-latest.js"></script>
        <script src="./js/client.js"></script>
      </body>
    </html>

    'use strict';

    // devices
    var audioSource = document.querySelector('select#audioSource');
    var audioOutput = document.querySelector('select#audioOutput');
    var videoSource = document.querySelector('select#videoSource');

    // filter
    var filtersSelect = document.querySelector('select#filter');

    // picture
    var snapshot = document.querySelector('button#snapshot');
    var picture = document.querySelector('canvas#picture');
    picture.width = 640;
    picture.height = 480;

    // videoplay
    var videoplay = document.querySelector('video#player');
    //var audioplay = document.querySelector('audio#audioplayer');

    // div that displays the video track settings
    var divConstraints = document.querySelector('div#constraints');

    function gotDevices(deviceInfos) {
      // Clear the lists so repeated calls to start() do not add duplicates
      var selectedVideo = videoSource.value;
      audioSource.innerHTML = '';
      audioOutput.innerHTML = '';
      videoSource.innerHTML = '';

      deviceInfos.forEach(function (deviceinfo) {
        var option = document.createElement('option');
        option.text = deviceinfo.label;
        option.value = deviceinfo.deviceId;
        if (deviceinfo.kind === 'audioinput') {
          audioSource.appendChild(option);
        } else if (deviceinfo.kind === 'audiooutput') {
          audioOutput.appendChild(option);
        } else if (deviceinfo.kind === 'videoinput') {
          videoSource.appendChild(option);
        }
      });

      if (selectedVideo) {
        videoSource.value = selectedVideo;
      }
    }

    function gotMediaStream(stream) {
      // getSettings() reports the values actually in use (width, height,
      // frameRate, deviceId, ...), which can differ from the request
      var videoTrack = stream.getVideoTracks()[0];
      var videoConstraints = videoTrack.getSettings();
      divConstraints.textContent = JSON.stringify(videoConstraints, null, 2);

      window.stream = stream;
      videoplay.srcObject = stream;
      //audioplay.srcObject = stream;
      return navigator.mediaDevices.enumerateDevices();
    }

    function handleError(err) {
      console.log('getUserMedia error:', err);
    }

    // (Re)start capture with the selected video device
    function start() {
      if (!navigator.mediaDevices || !navigator.mediaDevices.getUserMedia) {
        console.log('getUserMedia is not supported!');
        return;
      }
      if (window.stream) {
        window.stream.getTracks().forEach(function (track) {
          track.stop();
        });
      }
      var deviceId = videoSource.value;
      var constraints = {
        video: {
          width: 640,
          height: 480,
          frameRate: 15,
          facingMode: 'environment',
          deviceId: deviceId ? { exact: deviceId } : undefined
        },
        audio: false
        //audio: {
        //  noiseSuppression: true,
        //  echoCancellation: true
        //}
      };
      navigator.mediaDevices.getUserMedia(constraints)
        .then(gotMediaStream)
        .then(gotDevices)
        .catch(handleError);
    }

    start();

    videoSource.onchange = start;

    filtersSelect.onchange = function () {
      videoplay.className = filtersSelect.value;
    };

    snapshot.onclick = function () {
      // Show the snapshot with the selected filter class (display only)
      picture.className = filtersSelect.value;
      picture.getContext('2d').drawImage(videoplay, 0, 0, picture.width, picture.height);
    };
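Because bare constraint values are only ideals, what `getSettings()` shows may differ from what was requested (for example a different height, or no `facingMode` at all on a desktop webcam). A minimal sketch that lists those differences; `settingsMismatches` is a hypothetical helper, and it assumes requested values are bare values or `{ exact }` / `{ ideal }` objects (`{ min, max }` ranges are skipped).

```javascript
// Compares requested video values with track.getSettings() output and
// returns the keys where the browser reported something else.
function settingsMismatches(requested, settings) {
  var result = [];
  ['width', 'height', 'frameRate', 'facingMode'].forEach(function (key) {
    var want = requested[key];
    if (want === undefined) {
      return; // not requested
    }
    if (typeof want === 'object' && want !== null) {
      // { exact } wins over { ideal }; range-only objects give undefined
      want = want.exact !== undefined ? want.exact : want.ideal;
    }
    if (want !== undefined && settings[key] !== want) {
      result.push({ key: key, requested: want, actual: settings[key] });
    }
  });
  return result;
}

// In the browser, inside gotMediaStream (with the requested video
// constraints made available there):
// console.log(settingsMismatches(requestedVideo, videoTrack.getSettings()));
```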