Preface

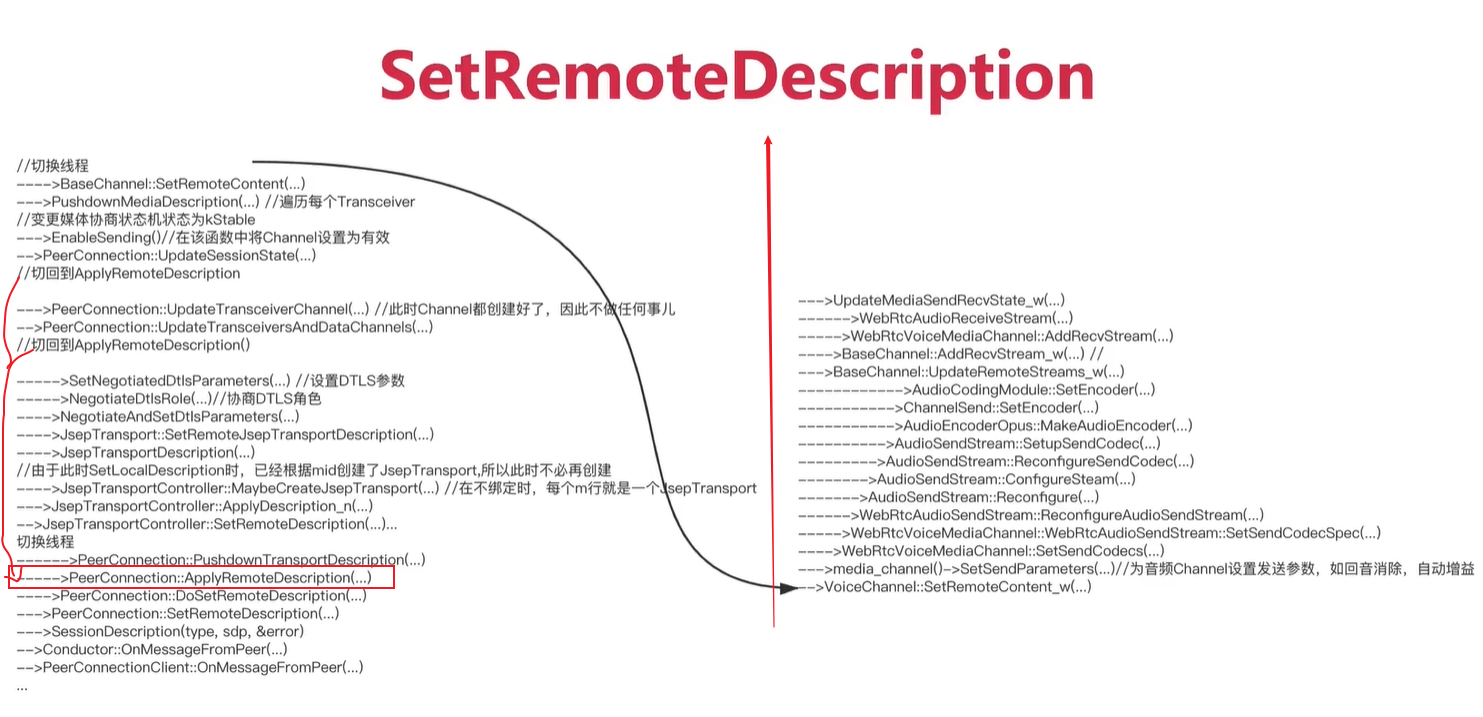

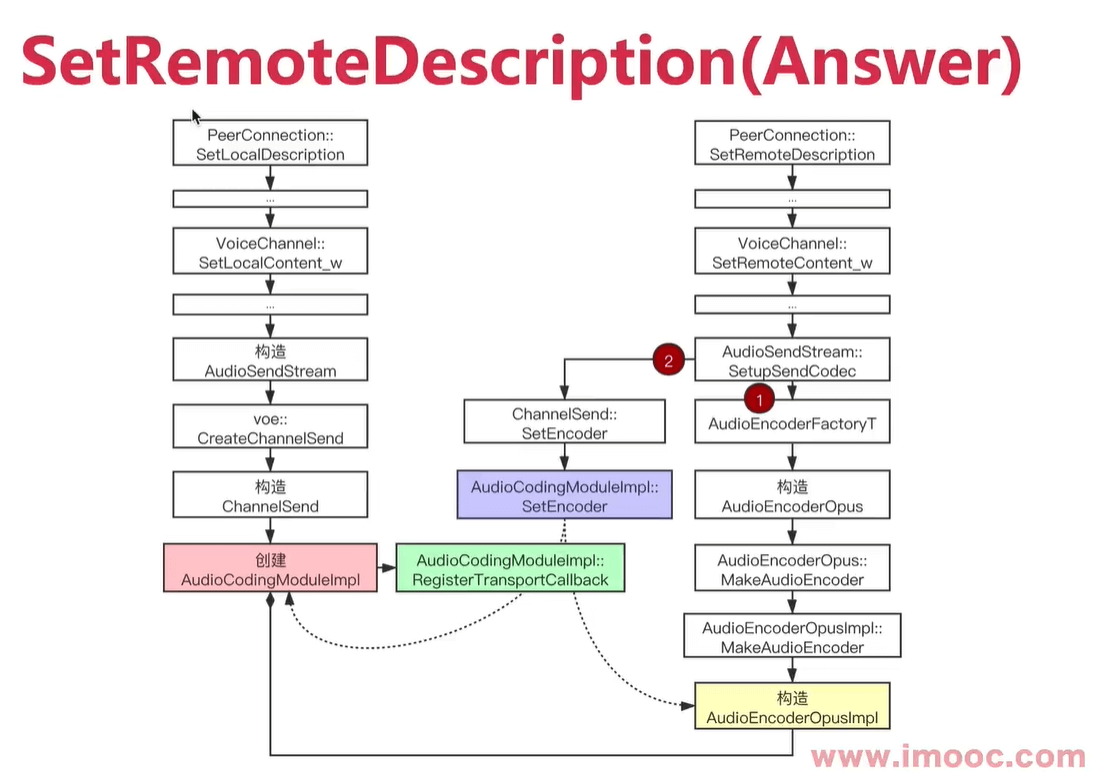

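The caller calls SetLocalDescription, and the callee calls SetRemoteDescription.

In both cases, audio is handled first.

Note that AudioCodingModule::SetEncoder is called only now, after the audio media negotiation has been settled. Before this point, the encoders were merely collected.

Note that the last step constructs an AudioEncoderOpusImpl and passes it to the AudioCodingModuleImpl created on the left side of the diagram. AudioCodingModuleImpl::RegisterTransportCallback is then called to register the callback object. Once registered, the callback object can hand the work off to other objects.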
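The order described above (negotiate, build the encoder, hand it to the coding module, then register the transport callback) can be sketched roughly as follows. This is a minimal illustration, not the WebRTC implementation: SketchEncoder, SketchOpusEncoder, SketchCodingModule, SketchTransportCallback, CountingCallback and PushAudio are all hypothetical names, and the "encoding" is a placeholder that emits one zero byte per sample.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <memory>
#include <string>
#include <utility>
#include <vector>

// Hypothetical stand-in for an audio encoder interface (not the WebRTC one).
class SketchEncoder {
 public:
  virtual ~SketchEncoder() = default;
  virtual std::string Name() const = 0;
  virtual std::vector<uint8_t> Encode(const std::vector<int16_t>& pcm) = 0;
};

// Hypothetical stand-in for the Opus encoder chosen by negotiation.
class SketchOpusEncoder : public SketchEncoder {
 public:
  std::string Name() const override { return "opus"; }
  // Placeholder "encoding": one zero byte per input sample.
  std::vector<uint8_t> Encode(const std::vector<int16_t>& pcm) override {
    return std::vector<uint8_t>(pcm.size(), 0);
  }
};

// Hypothetical callback interface that receives encoded payloads.
class SketchTransportCallback {
 public:
  virtual ~SketchTransportCallback() = default;
  virtual void SendData(const std::vector<uint8_t>& payload) = 0;
};

// Hypothetical coding module: owns the encoder, forwards output to the callback.
class SketchCodingModule {
 public:
  // Called only once negotiation has settled on a codec.
  void SetEncoder(std::unique_ptr<SketchEncoder> encoder) {
    assert(encoder != nullptr);
    encoder_ = std::move(encoder);
  }
  void RegisterTransportCallback(SketchTransportCallback* callback) {
    callback_ = callback;
  }
  // Returns false while either the encoder or the callback is missing.
  bool PushAudio(const std::vector<int16_t>& pcm) {
    if (!encoder_ || !callback_) return false;
    callback_->SendData(encoder_->Encode(pcm));
    return true;
  }

 private:
  std::unique_ptr<SketchEncoder> encoder_;
  SketchTransportCallback* callback_ = nullptr;
};

// Example callback that just counts the bytes it was handed.
class CountingCallback : public SketchTransportCallback {
 public:
  void SendData(const std::vector<uint8_t>& payload) override {
    bytes_received += payload.size();
  }
  std::size_t bytes_received = 0;
};
```

In this sketch, PushAudio fails until both SetEncoder and RegisterTransportCallback have been called, which mirrors the point that nothing is encoded or forwarded before negotiation completes.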

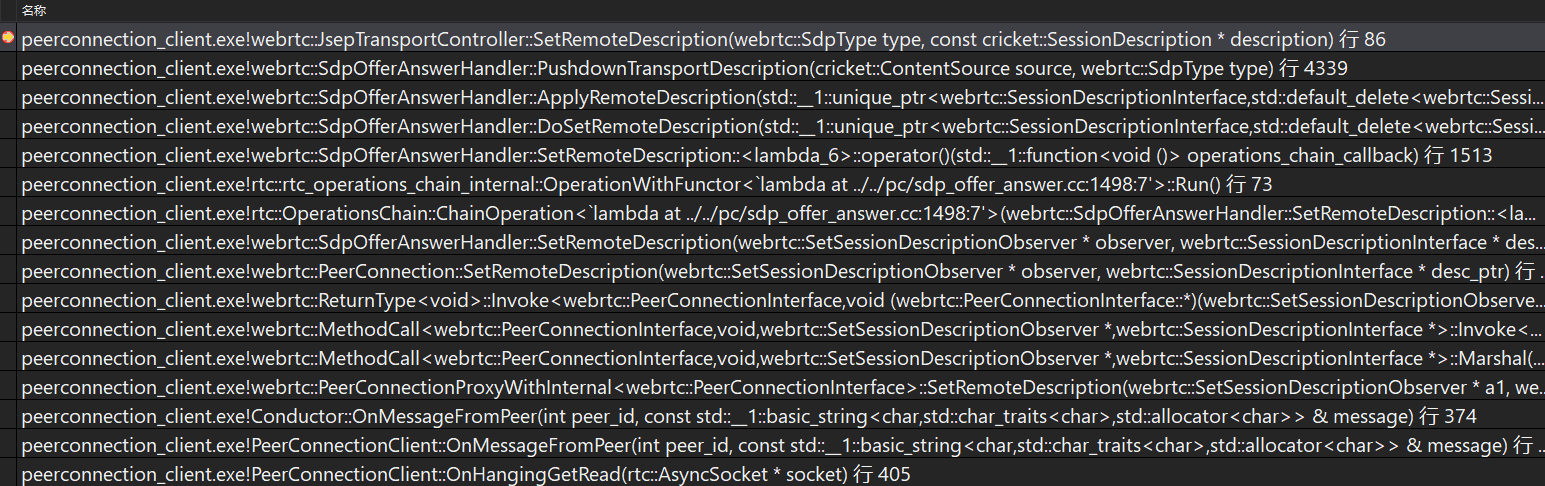

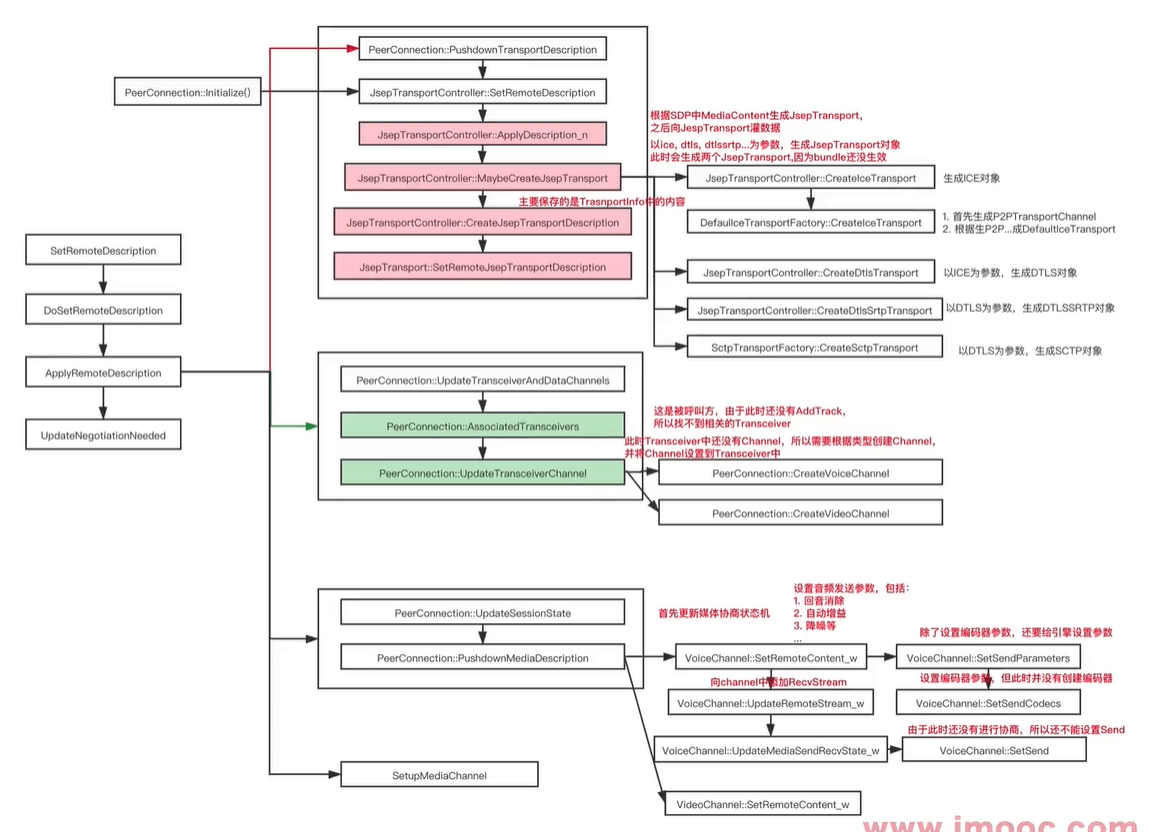

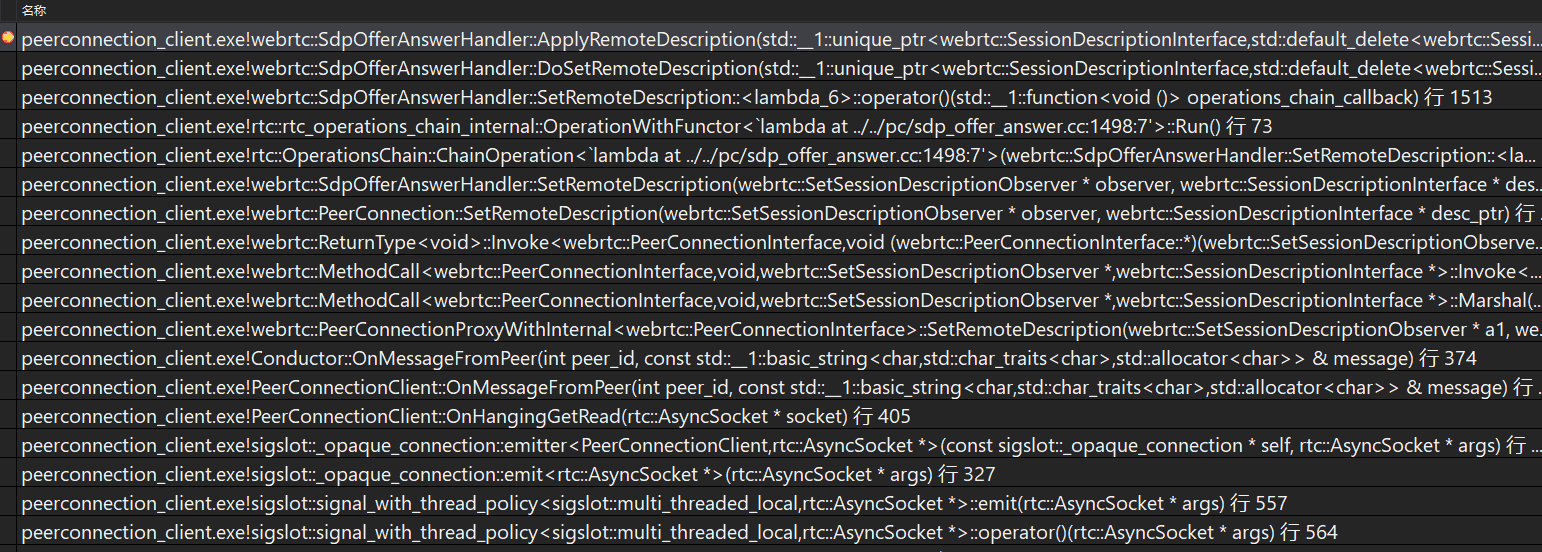

SdpOfferAnswerHandler::DoSetRemoteDescription

    SdpOfferAnswerHandler::DoSetRemoteDescription
    {
        SdpOfferAnswerHandler::FillInMissingRemoteMids
        SdpOfferAnswerHandler::ApplyRemoteDescription
        OnSetRemoteDescriptionComplete
        SdpOfferAnswerHandler::UpdateNegotiationNeeded
    }

SdpOfferAnswerHandler::ApplyRemoteDescription

    SdpOfferAnswerHandler::ApplyRemoteDescription
    {
        SdpOfferAnswerHandler::PushdownTransportDescription
        SdpOfferAnswerHandler::UpdateTransceiversAndDataChannels
        SdpOfferAnswerHandler::UpdateSessionState
        CheckForRemoteIceRestart
        UpdateRemoteSendersList
    }

SdpOfferAnswerHandler::UpdateTransceiversAndDataChannels

    SdpOfferAnswerHandler::UpdateTransceiversAndDataChannels
    {
        SdpOfferAnswerHandler::AssociateTransceiver
        SdpOfferAnswerHandler::UpdateTransceiverChannel
    }

    SdpOfferAnswerHandler::UpdateTransceiverChannel
    {
        ***
        if (transceiver->media_type() == cricket::MEDIA_TYPE_AUDIO) {
          channel = CreateVoiceChannel(content.name);
        } else {
          RTC_DCHECK_EQ(cricket::MEDIA_TYPE_VIDEO, transceiver->media_type());
          channel = CreateVideoChannel(content.name);
        }
        ***
    }
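The branch in UpdateTransceiverChannel is a small factory keyed on the media type. A stripped-down sketch of that idea, assuming hypothetical types (SketchMediaType, DemoChannel, DemoVoiceChannel, DemoVideoChannel, CreateChannelFor) that only imitate the shape of the excerpt above:

```cpp
#include <cassert>
#include <memory>
#include <string>
#include <utility>

// Hypothetical media type enum; the real code uses cricket::MediaType.
enum class SketchMediaType { kAudio, kVideo };

// Hypothetical channel base class, keyed by the media section name (mid).
class DemoChannel {
 public:
  explicit DemoChannel(std::string mid) : mid_(std::move(mid)) {}
  virtual ~DemoChannel() = default;
  virtual std::string Kind() const = 0;
  const std::string& mid() const { return mid_; }

 private:
  std::string mid_;
};

class DemoVoiceChannel : public DemoChannel {
 public:
  using DemoChannel::DemoChannel;
  std::string Kind() const override { return "voice"; }
};

class DemoVideoChannel : public DemoChannel {
 public:
  using DemoChannel::DemoChannel;
  std::string Kind() const override { return "video"; }
};

// Same shape as the excerpt: audio gets a voice channel, anything else
// is asserted to be video and gets a video channel.
std::unique_ptr<DemoChannel> CreateChannelFor(SketchMediaType type,
                                              const std::string& mid) {
  if (type == SketchMediaType::kAudio) {
    return std::make_unique<DemoVoiceChannel>(mid);
  }
  assert(type == SketchMediaType::kVideo);
  return std::make_unique<DemoVideoChannel>(mid);
}
```

The caller only ever holds a base-class pointer, which is why the later SetRemoteContent call can be written once for both audio and video.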

JsepTransportController::SetRemoteDescription

    RTCError JsepTransportController::SetRemoteDescription(
        SdpType type,
        const cricket::SessionDescription* description) {
      if (!network_thread_->IsCurrent()) {
        return network_thread_->Invoke<RTCError>(
            RTC_FROM_HERE, [=] { return SetRemoteDescription(type, description); });
      }
      RTC_DCHECK_RUN_ON(network_thread_);
      return ApplyDescription_n(/*local=*/false, type, description);
    }
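The function above uses a common pattern: if the caller is not on the network thread, the method re-invokes itself on that thread and blocks for the result. Below is a rough, self-contained sketch of that pattern using only the standard library. SketchThread and SketchTransportController are hypothetical, and the blocking Invoke here is a simplification that assumes nothing about how WebRTC's own thread class is implemented.

```cpp
#include <cassert>
#include <condition_variable>
#include <functional>
#include <future>
#include <memory>
#include <mutex>
#include <queue>
#include <thread>
#include <utility>

// Hypothetical single-thread task runner with a blocking Invoke.
class SketchThread {
 public:
  SketchThread() : worker_([this] { Run(); }) {}
  ~SketchThread() {
    {
      std::lock_guard<std::mutex> lock(mutex_);
      quit_ = true;
    }
    cv_.notify_one();
    worker_.join();
  }
  SketchThread(const SketchThread&) = delete;
  SketchThread& operator=(const SketchThread&) = delete;

  bool IsCurrent() const {
    return std::this_thread::get_id() == worker_.get_id();
  }

  // Runs fn on the worker and blocks until it returns.
  // Must not be called from the worker itself (it would deadlock).
  template <typename R>
  R Invoke(std::function<R()> fn) {
    auto promise = std::make_shared<std::promise<R>>();
    std::future<R> result = promise->get_future();
    {
      std::lock_guard<std::mutex> lock(mutex_);
      tasks_.push([promise, fn] { promise->set_value(fn()); });
    }
    cv_.notify_one();
    return result.get();
  }

 private:
  void Run() {
    for (;;) {
      std::function<void()> task;
      {
        std::unique_lock<std::mutex> lock(mutex_);
        cv_.wait(lock, [this] { return quit_ || !tasks_.empty(); });
        if (tasks_.empty()) return;  // quit requested, queue drained
        task = std::move(tasks_.front());
        tasks_.pop();
      }
      task();
    }
  }

  std::mutex mutex_;
  std::condition_variable cv_;
  std::queue<std::function<void()>> tasks_;
  bool quit_ = false;
  std::thread worker_;  // declared last so the other members exist first
};

// Hypothetical controller showing the "hop to the right thread" shape.
class SketchTransportController {
 public:
  explicit SketchTransportController(SketchThread* network_thread)
      : network_thread_(network_thread) {}

  // Returns true if the description was applied on the network thread.
  bool SetRemoteDescription(int description) {
    if (!network_thread_->IsCurrent()) {
      return network_thread_->Invoke<bool>(
          [this, description] { return SetRemoteDescription(description); });
    }
    assert(network_thread_->IsCurrent());
    return ApplyDescription_n(description);
  }
  int last_description() const { return last_description_; }

 private:
  bool ApplyDescription_n(int description) {
    last_description_ = description;
    return network_thread_->IsCurrent();
  }

  SketchThread* network_thread_;
  int last_description_ = -1;
};
```

Calling SetRemoteDescription from any other thread ends up running ApplyDescription_n on the worker, so code with the _n suffix can assume it always runs on the network thread.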

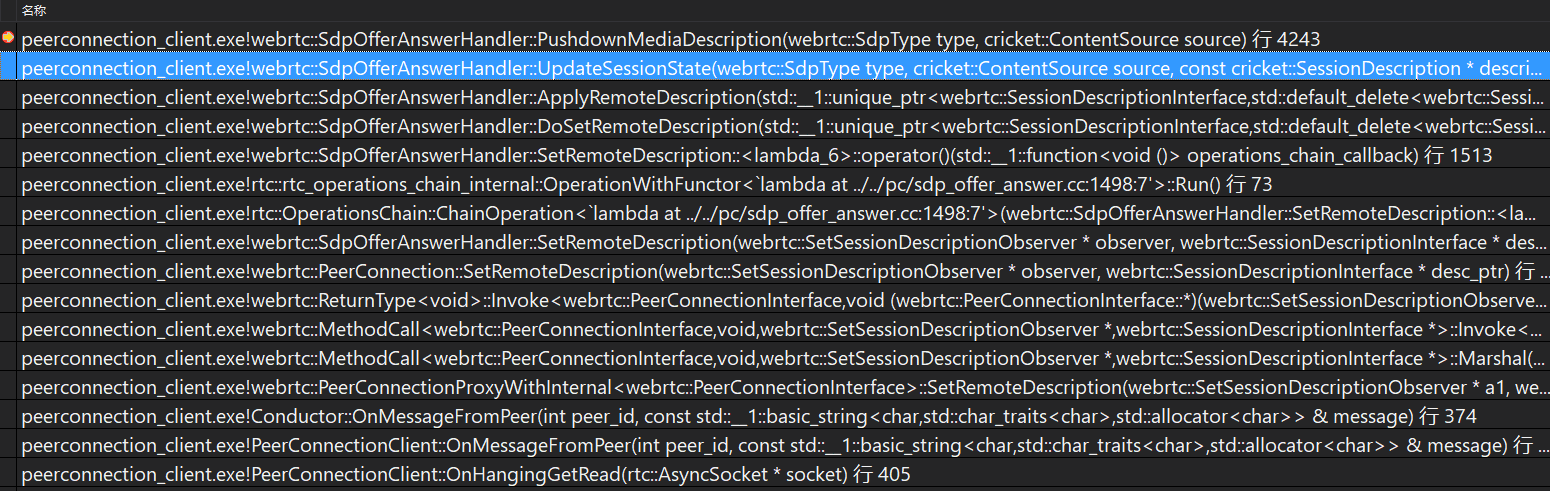

SdpOfferAnswerHandler::PushdownMediaDescription

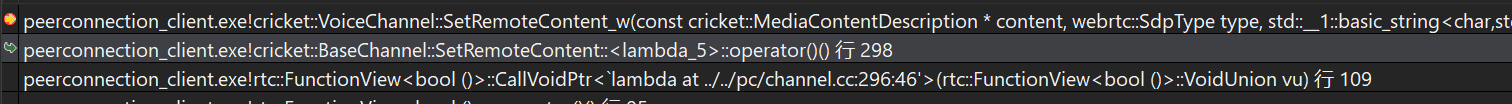

    SdpOfferAnswerHandler::PushdownMediaDescription
    {
      ***
      // Push down the new SDP media section for each audio/video transceiver.
      for (const auto& transceiver : transceivers()->List()) {
        const ContentInfo* content_info =
            FindMediaSectionForTransceiver(transceiver, sdesc);
        cricket::ChannelInterface* channel = transceiver->internal()->channel();
        if (!channel || !content_info || content_info->rejected) {
          continue;
        }
        const MediaContentDescription* content_desc =
            content_info->media_description();
        if (!content_desc) {
          continue;
        }
        std::string error;
        bool success = (source == cricket::CS_LOCAL)
                           ? channel->SetLocalContent(content_desc, type, &error)
                           : channel->SetRemoteContent(content_desc, type, &error);
        if (!success) {
          LOG_AND_RETURN_ERROR(RTCErrorType::INVALID_PARAMETER, error);
        }
      }
    }
    ****

->

    bool BaseChannel::SetRemoteContent(const MediaContentDescription* content,
                                       SdpType type,
                                       std::string* error_desc) {
      TRACE_EVENT0("webrtc", "BaseChannel::SetRemoteContent");
      return InvokeOnWorker<bool>(RTC_FROM_HERE, [this, content, type, error_desc] {
        RTC_DCHECK_RUN_ON(worker_thread());
        return SetRemoteContent_w(content, type, error_desc);
      });
    }

->

    H:\webrtc-20210315\webrtc-20210315\webrtc\webrtc-checkout\src\pc\channel.cc
    VoiceChannel::SetRemoteContent_w

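Consider the call channel->SetRemoteContent(content_desc, type, &error);. The media here is audio, so channel is a VoiceChannel. Because VoiceChannel inherits from BaseChannel, the parent class's SetRemoteContent is called first, and it in turn calls VoiceChannel::SetRemoteContent_w on the worker thread. By the same reasoning, video follows the same path.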
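The dispatch described here (a non-virtual entry point in the base class that forwards to a virtual _w method overridden per media type) can be illustrated with a small sketch. TracingBaseChannel, TracingVoiceChannel and TracingVideoChannel are hypothetical classes, the content is reduced to a string, and the hop to the worker thread is omitted so that only the call order is shown.

```cpp
#include <cassert>
#include <string>
#include <vector>

// Hypothetical base channel: the public entry point lives here.
class TracingBaseChannel {
 public:
  virtual ~TracingBaseChannel() = default;

  // The real code would hop to the worker thread at this point;
  // this sketch calls the virtual method directly.
  bool SetRemoteContent(const std::string& content) {
    trace_.push_back("BaseChannel::SetRemoteContent");
    return SetRemoteContent_w(content);
  }
  const std::vector<std::string>& trace() const { return trace_; }

 protected:
  // Overridden by each media-specific channel.
  virtual bool SetRemoteContent_w(const std::string& content) = 0;
  std::vector<std::string> trace_;
};

class TracingVoiceChannel : public TracingBaseChannel {
 protected:
  bool SetRemoteContent_w(const std::string& content) override {
    trace_.push_back("VoiceChannel::SetRemoteContent_w");
    return !content.empty();  // placeholder validation
  }
};

class TracingVideoChannel : public TracingBaseChannel {
 protected:
  bool SetRemoteContent_w(const std::string& content) override {
    trace_.push_back("VideoChannel::SetRemoteContent_w");
    return !content.empty();  // placeholder validation
  }
};
```

Calling SetRemoteContent through a base-class pointer to a TracingVoiceChannel records the base-class entry first and the voice-specific method second, and the video class behaves the same way with its own override.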