Background concepts

The WindowsCore constructor

STA and MTA

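STA: Single-Threaded Apartment. When the COM library is initialized, it creates a memory structure and associates it with the thread that called CoInitialize. Every thread initialized this way gets its own structure. A COM object that lives in an STA can only be called directly on the thread that created it; calls from other threads must be marshaled, and COM delivers them to the owning thread as messages, one at a time.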

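MTA: Multi-Threaded Apartment. The COM library creates one memory structure for the whole process, and a process can have only one. Every thread that calls CoInitializeEx with COINIT_MULTITHREADED is associated with it. A COM object that lives in the MTA can be used from any of those threads. COM does not serialize those calls, so the object has to protect its own state.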

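STA and MTA are the threading models that COM defines to keep component code synchronized when multiple threads are involved. For example, suppose a COM object has a static variable gHello. No matter how many instances of the object are created, there is only one copy of gHello in memory. If two different instances read and write it at the same time on two threads, things can go wrong. Some mechanism is needed to synchronize that access, and the STA/MTA model defines how it is done.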
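
The gHello scenario can be sketched in portable C++ without any COM at all. The class `Hello`, the mutex `g_mutex`, and the helper `RunTwoInstances` below are hypothetical names made up for this illustration; the lock stands in for the protection that an object in the MTA would have to provide for itself. Treat it as a sketch of the problem, not of how COM implements apartments.

```cpp
#include <cassert>
#include <mutex>
#include <thread>

// Hypothetical component: every instance shares the single static gHello.
class Hello {
 public:
  // Guarded increment; without the lock, two threads could lose updates.
  void Increment() {
    std::lock_guard<std::mutex> lock(g_mutex);
    ++gHello;
  }

  static int Value() {
    std::lock_guard<std::mutex> lock(g_mutex);
    return gHello;
  }

 private:
  static int gHello;          // one copy, regardless of instance count
  static std::mutex g_mutex;  // assumption: a plain mutex as the sync mechanism
};

int Hello::gHello = 0;
std::mutex Hello::g_mutex;

// Two instances on two threads modify the same static variable.
// Returns how much gHello grew during this call.
int RunTwoInstances(int iterations) {
  assert(iterations >= 0);
  const int before = Hello::Value();
  Hello a;
  Hello b;
  std::thread t1([&a, iterations] {
    for (int i = 0; i < iterations; ++i) a.Increment();
  });
  std::thread t2([&b, iterations] {
    for (int i = 0; i < iterations; ++i) b.Increment();
  });
  t1.join();
  t2.join();
  return Hello::Value() - before;
}
```

With the lock in place, `RunTwoInstances(n)` returns `2 * n`. Removing the `lock_guard` in `Increment()` makes the result unpredictable, which is the kind of error the paragraph above describes.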

The AVRT library

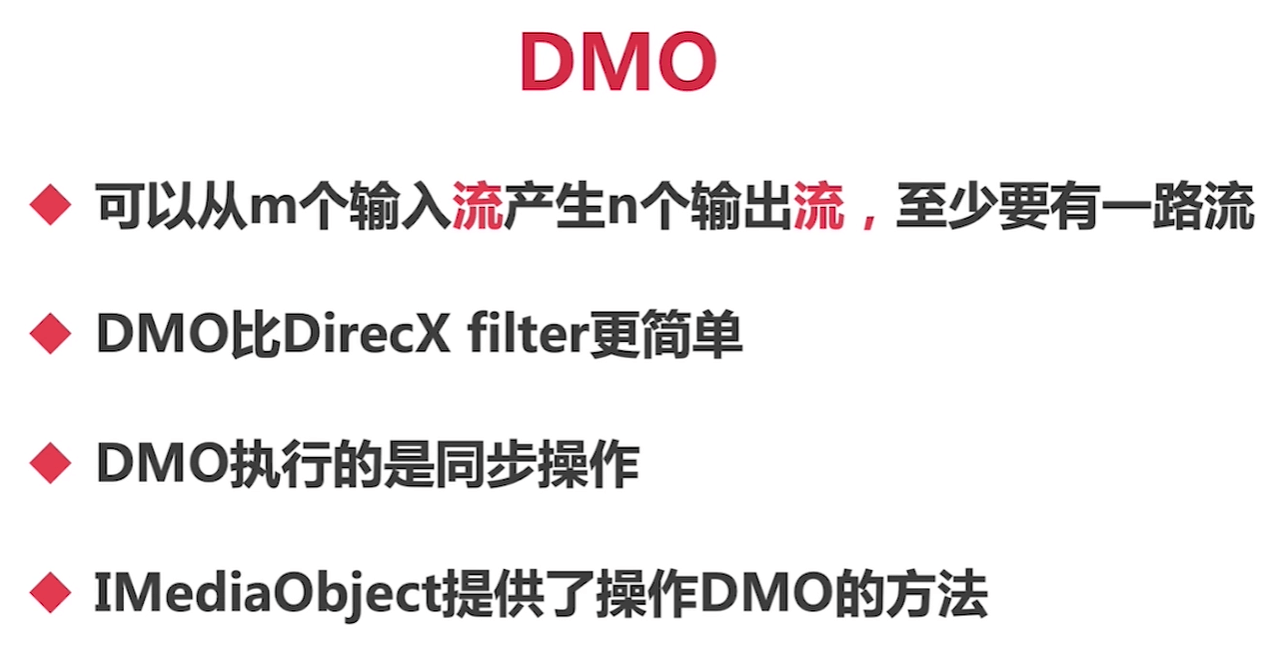

DMO

Source code analysis

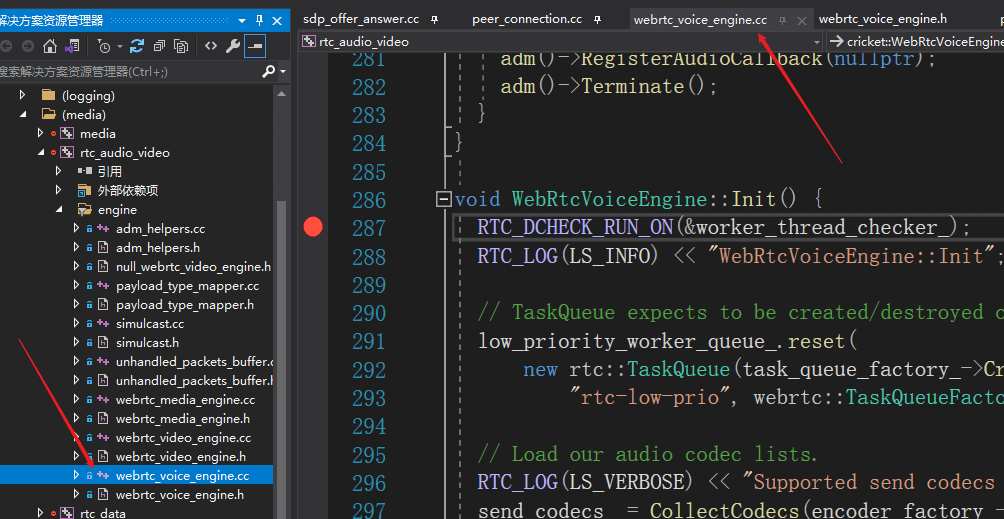

WebRtcVoiceEngine::Init

Set a breakpoint directly in:

H:\webrtc-20210315\webrtc-20210315\webrtc\webrtc-checkout\src\media\engine\webrtc_voice_engine.cc

WebRtcVoiceEngine::Init

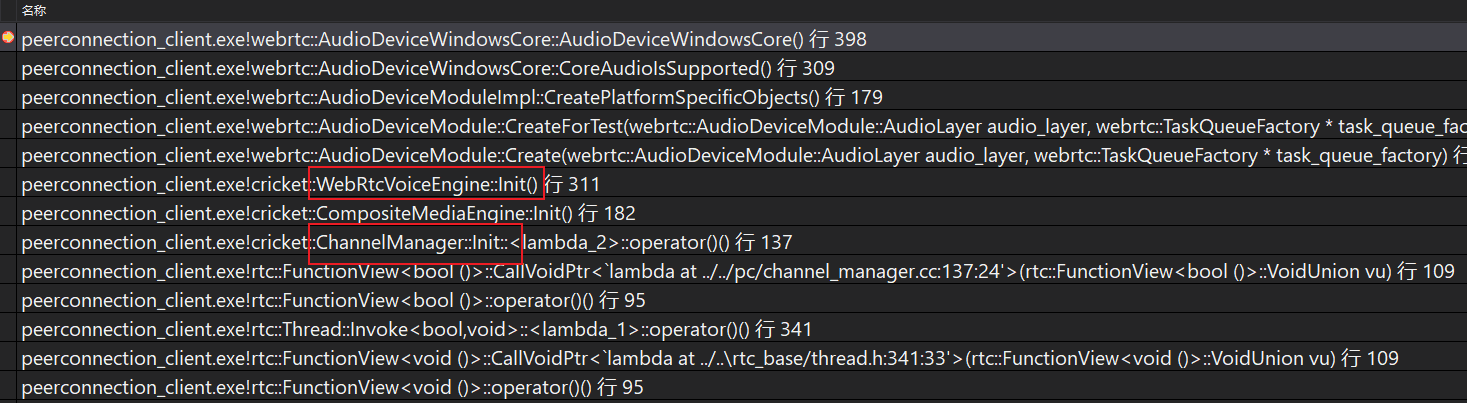

Call stack

cricket::ChannelManager::Init() -> cricket::CompositeMediaEngine::Init() -> cricket::WebRtcVoiceEngine::Init()

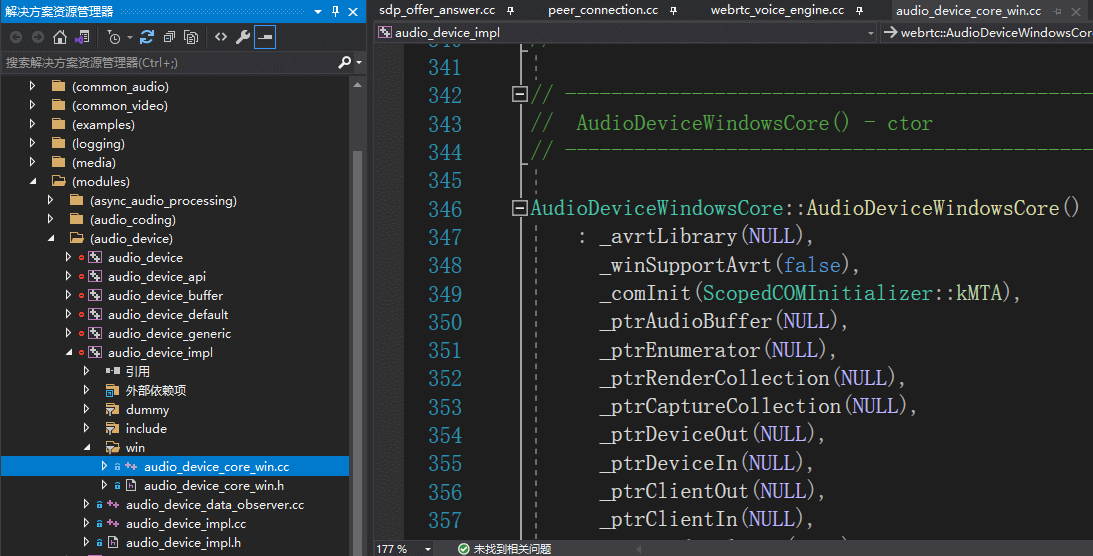

AudioDeviceWindowsCore::AudioDeviceWindowsCore

Set a breakpoint

H:\webrtc-20210315\webrtc-20210315\webrtc\webrtc-checkout\src\modules\audio_device\win\audio_device_core_win.cc, line 398, inside the constructor

Call stack

    AudioDeviceWindowsCore::AudioDeviceWindowsCore()
        : _avrtLibrary(NULL),
          _winSupportAvrt(false),
          _comInit(ScopedCOMInitializer::kMTA),
          _ptrAudioBuffer(NULL),
          _ptrEnumerator(NULL),
          _ptrRenderCollection(NULL),
          _ptrCaptureCollection(NULL),
          _ptrDeviceOut(NULL),
          _ptrDeviceIn(NULL),
          _ptrClientOut(NULL),
          _ptrClientIn(NULL),
          _ptrRenderClient(NULL),
          _ptrCaptureClient(NULL),
          _ptrCaptureVolume(NULL),
          _ptrRenderSimpleVolume(NULL),
          _dmo(NULL),
          _mediaBuffer(NULL),
          _builtInAecEnabled(false),
          _hRenderSamplesReadyEvent(NULL),
          _hPlayThread(NULL),
          _hRenderStartedEvent(NULL),
          _hShutdownRenderEvent(NULL),
          _hCaptureSamplesReadyEvent(NULL),
          _hRecThread(NULL),
          _hCaptureStartedEvent(NULL),
          _hShutdownCaptureEvent(NULL),
          _hMmTask(NULL),
          _playAudioFrameSize(0),
          _playSampleRate(0),
          _playBlockSize(0),
          _playChannels(2),
          _sndCardPlayDelay(0),
          _writtenSamples(0),
          _readSamples(0),
          _recAudioFrameSize(0),
          _recSampleRate(0),
          _recBlockSize(0),
          _recChannels(2),
          _initialized(false),
          _recording(false),
          _playing(false),
          _recIsInitialized(false),
          _playIsInitialized(false),
          _speakerIsInitialized(false),
          _microphoneIsInitialized(false),
          _usingInputDeviceIndex(false),
          _usingOutputDeviceIndex(false),
          _inputDevice(AudioDeviceModule::kDefaultCommunicationDevice),
          _outputDevice(AudioDeviceModule::kDefaultCommunicationDevice),
          _inputDeviceIndex(0),
          _outputDeviceIndex(0) {
      RTC_DLOG(LS_INFO) << __FUNCTION__ << " created";
      RTC_DCHECK(_comInit.Succeeded());

      // Try to load the Avrt DLL
      if (!_avrtLibrary) {
        // Get handle to the Avrt DLL module.
        _avrtLibrary = LoadLibrary(TEXT("Avrt.dll"));
        if (_avrtLibrary) {
          // Handle is valid (should only happen if OS larger than vista & win7).
          // Try to get the function addresses.
          RTC_LOG(LS_VERBOSE) << "AudioDeviceWindowsCore::AudioDeviceWindowsCore()"
                                 " The Avrt DLL module is now loaded";

          // Get the three functions
          _PAvRevertMmThreadCharacteristics =
              (PAvRevertMmThreadCharacteristics)GetProcAddress(
                  _avrtLibrary, "AvRevertMmThreadCharacteristics");
          _PAvSetMmThreadCharacteristicsA =
              (PAvSetMmThreadCharacteristicsA)GetProcAddress(
                  _avrtLibrary, "AvSetMmThreadCharacteristicsA");
          _PAvSetMmThreadPriority = (PAvSetMmThreadPriority)GetProcAddress(
              _avrtLibrary, "AvSetMmThreadPriority");

          if (_PAvRevertMmThreadCharacteristics &&
              _PAvSetMmThreadCharacteristicsA && _PAvSetMmThreadPriority) {
            RTC_LOG(LS_VERBOSE)
                << "AudioDeviceWindowsCore::AudioDeviceWindowsCore()"
                   " AvRevertMmThreadCharacteristics() is OK";
            RTC_LOG(LS_VERBOSE)
                << "AudioDeviceWindowsCore::AudioDeviceWindowsCore()"
                   " AvSetMmThreadCharacteristicsA() is OK";
            RTC_LOG(LS_VERBOSE)
                << "AudioDeviceWindowsCore::AudioDeviceWindowsCore()"
                   " AvSetMmThreadPriority() is OK";
            // Set the flag
            _winSupportAvrt = true;
          }
        }
      }

      // Create our samples ready events - we want auto reset events that start in
      // the not-signaled state. The state of an auto-reset event object remains
      // signaled until a single waiting thread is released, at which time the
      // system automatically sets the state to nonsignaled. If no threads are
      // waiting, the event object's state remains signaled. (Except for
      // _hShutdownCaptureEvent, which is used to shutdown multiple threads).
      _hRenderSamplesReadyEvent = CreateEvent(NULL, FALSE, FALSE, NULL);
      _hCaptureSamplesReadyEvent = CreateEvent(NULL, FALSE, FALSE, NULL);
      _hShutdownRenderEvent = CreateEvent(NULL, FALSE, FALSE, NULL);
      _hShutdownCaptureEvent = CreateEvent(NULL, TRUE, FALSE, NULL);
      _hRenderStartedEvent = CreateEvent(NULL, FALSE, FALSE, NULL);
      _hCaptureStartedEvent = CreateEvent(NULL, FALSE, FALSE, NULL);

      _perfCounterFreq.QuadPart = 1;
      _perfCounterFactor = 0.0;

      // list of number of channels to use on recording side
      _recChannelsPrioList[0] = 2;  // stereo is prio 1
      _recChannelsPrioList[1] = 1;  // mono is prio 2
      _recChannelsPrioList[2] = 4;  // quad is prio 3

      // list of number of channels to use on playout side
      _playChannelsPrioList[0] = 2;  // stereo is prio 1
      _playChannelsPrioList[1] = 1;  // mono is prio 2

      HRESULT hr;

      // We know that this API will work since it has already been verified in
      // CoreAudioIsSupported, hence no need to check for errors here as well.

      // Retrieve the IMMDeviceEnumerator API (should load the MMDevAPI.dll)
      // TODO(henrika): we should probably move this allocation to Init() instead
      // and deallocate in Terminate() to make the implementation more symmetric.
      CoCreateInstance(__uuidof(MMDeviceEnumerator), NULL, CLSCTX_ALL,
                       __uuidof(IMMDeviceEnumerator),
                       reinterpret_cast<void**>(&_ptrEnumerator));
      assert(NULL != _ptrEnumerator);

      // DMO initialization, mainly used for the platform's built-in echo
      // cancellation. The software-level alternative is WebRTC's own algorithm.
      // iPhones use the built-in one; Windows generally uses the software one.
      // DMO initialization for built-in WASAPI AEC.
      {
        IMediaObject* ptrDMO = NULL;
        hr = CoCreateInstance(CLSID_CWMAudioAEC, NULL, CLSCTX_INPROC_SERVER,
                              IID_IMediaObject, reinterpret_cast<void**>(&ptrDMO));
        if (FAILED(hr) || ptrDMO == NULL) {
          // Since we check that _dmo is non-NULL in EnableBuiltInAEC(), the
          // feature is prevented from being enabled.
          _builtInAecEnabled = false;
          _TraceCOMError(hr);
        }
        _dmo = ptrDMO;
        SAFE_RELEASE(ptrDMO);
      }
    }
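
The constructor's comment about auto-reset events is easier to follow with a small model. The sketch below is not Win32 or WebRTC code: `EventModel` and `DemoEventModel` are hypothetical names, and the class only imitates the behavior described in the comment using a standard mutex and condition variable. The `manual_reset` flag plays the role of the second argument to CreateEvent.

```cpp
#include <cassert>
#include <chrono>
#include <condition_variable>
#include <mutex>

// Hypothetical portable model of a Win32 event object.
class EventModel {
 public:
  explicit EventModel(bool manual_reset) : manual_reset_(manual_reset) {}

  // Roughly SetEvent(): mark the event signaled and wake waiters.
  void Set() {
    {
      std::lock_guard<std::mutex> lock(mutex_);
      signaled_ = true;
    }
    if (manual_reset_) {
      cv_.notify_all();  // every waiter may proceed
    } else {
      cv_.notify_one();  // only one waiter will consume the signal
    }
  }

  // Roughly ResetEvent(): needed only for manual-reset events.
  void Reset() {
    std::lock_guard<std::mutex> lock(mutex_);
    signaled_ = false;
  }

  // Roughly WaitForSingleObject(): true if signaled before the timeout.
  bool Wait(std::chrono::milliseconds timeout) {
    std::unique_lock<std::mutex> lock(mutex_);
    if (!cv_.wait_for(lock, timeout, [this] { return signaled_; })) {
      return false;
    }
    if (!manual_reset_) {
      signaled_ = false;  // auto-reset: the released waiter clears the state
    }
    return true;
  }

 private:
  const bool manual_reset_;
  bool signaled_ = false;  // starts not-signaled, like the events above
  std::mutex mutex_;
  std::condition_variable cv_;
};

void DemoEventModel() {
  using std::chrono::milliseconds;

  // Models CreateEvent(NULL, FALSE, FALSE, NULL): auto-reset.
  EventModel samples_ready(false);
  samples_ready.Set();
  const bool first_wait = samples_ready.Wait(milliseconds(0));
  const bool second_wait = samples_ready.Wait(milliseconds(0));
  assert(first_wait && !second_wait);  // one Set() releases one wait

  // Models CreateEvent(NULL, TRUE, FALSE, NULL): manual-reset.
  EventModel shutdown_capture(true);
  shutdown_capture.Set();
  const bool wait_a = shutdown_capture.Wait(milliseconds(0));
  const bool wait_b = shutdown_capture.Wait(milliseconds(0));
  assert(wait_a && wait_b);  // stays signaled for every waiter
}
```

In this model, a single `Set()` on the manual-reset event satisfies any number of waits. That matches the constructor's comment that _hShutdownCaptureEvent is the exception because it has to shut down multiple threads.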

Here, the _comInit member is declared as ScopedCOMInitializer _comInit;

Go to the definition of that class and you can see that MTA, the multi-threaded apartment, is what gets selected.

    class ScopedCOMInitializer {
     public:
      enum SelectMTA { kMTA };
      explicit ScopedCOMInitializer(SelectMTA mta);
    };
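
A scoped initializer of this kind is usually an RAII wrapper: the constructor enters the apartment and the destructor leaves it. The sketch below shows that pattern under stated assumptions and is not the WebRTC implementation. `ScopedComMta` and `DemoScopedComMta` are hypothetical names. On Windows the sketch calls the real CoInitializeEx and CoUninitialize (linking against ole32 is assumed); on other platforms, clearly marked stand-ins replace them so the pattern still compiles.

```cpp
#include <cassert>

#ifdef _WIN32
#include <objbase.h>  // CoInitializeEx, CoUninitialize, COINIT_MULTITHREADED
#else
// Stand-ins for non-Windows builds. These are NOT COM; they only count
// init/uninit calls so the pairing can be observed.
typedef long HRESULT;
static const HRESULT S_OK = 0;
static const unsigned long COINIT_MULTITHREADED = 0x0;
static int g_fake_com_refs = 0;
static HRESULT CoInitializeEx(void*, unsigned long) {
  ++g_fake_com_refs;
  return S_OK;
}
static void CoUninitialize() { --g_fake_com_refs; }
#define SUCCEEDED(hr) (((HRESULT)(hr)) >= 0)
#endif

// Hypothetical RAII wrapper: joins the MTA on construction and leaves it
// on destruction, but only if joining succeeded.
class ScopedComMta {
 public:
  ScopedComMta() : hr_(CoInitializeEx(nullptr, COINIT_MULTITHREADED)) {}

  ~ScopedComMta() {
    if (SUCCEEDED(hr_)) {
      CoUninitialize();  // balance the successful CoInitializeEx
    }
  }

  // Copying would unbalance the init/uninit pairing.
  ScopedComMta(const ScopedComMta&) = delete;
  ScopedComMta& operator=(const ScopedComMta&) = delete;

  bool Succeeded() const { return SUCCEEDED(hr_); }

 private:
  HRESULT hr_;
};

void DemoScopedComMta() {
  ScopedComMta outer;
  assert(outer.Succeeded());
  {
    ScopedComMta inner;  // nested use on the same thread is allowed
    assert(inner.Succeeded());
  }  // inner leaves here; outer is still active
  assert(outer.Succeeded());
}
```

Declaring such an object as a class member, as _comInit is in AudioDeviceWindowsCore, ties the COM initialization to the lifetime of the owning object. The RTC_DCHECK(_comInit.Succeeded()) at the top of the constructor then checks that joining the apartment worked.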