Linear Equations:

Linear Equations, for example:

can be written in matrix form, m rows and n columns:

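or in general: $Ax = b$, where $A$ is an $m \times n$ matrix, $x$ is an $n \times 1$ vector of unknowns, and $b$ is an $m \times 1$ vector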

Matrix transpose:

and

Matrix multiplication:

- What matrices can be multiplied by each other?

number of columns of the 1st matrix == number of rows of the 2nd matrix

- What’s the size of the result of the multiplication?

(number of rows of the 1st matrix) × (number of columns of the 2nd matrix)

For example:

$(2 \times 4)(4 \times 3) = (2 \times 3)$

- Matrix multiplication is not commutative: $AB \neq BA$ in general,

but it is associative: $(AB)C = A(BC)$
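The rules above can be checked with a small sketch. The helper `matmul` and the sample matrices are illustrative assumptions, not part of the original notes; matrices are stored as plain lists of rows.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    rows_a, cols_a = len(a), len(a[0])
    rows_b, cols_b = len(b), len(b[0])
    # The product exists only if the inner dimensions agree.
    if cols_a != rows_b:
        raise ValueError(
            f"cannot multiply {rows_a}x{cols_a} by {rows_b}x{cols_b}"
        )
    # Entry (i, j) is the dot product of row i of a with column j of b.
    return [
        [sum(a[i][k] * b[k][j] for k in range(cols_a)) for j in range(cols_b)]
        for i in range(rows_a)
    ]


# Shape rule: (2x4)(4x3) gives a 2x3 result.
A = [[1, 2, 3, 4], [5, 6, 7, 8]]
B = [[1, 0, 2], [0, 1, 0], [3, 0, 1], [0, 2, 0]]
AB = matmul(A, B)
shape_AB = (len(AB), len(AB[0]))

# Square matrices: the order of the factors matters, the grouping does not.
P = [[1, 2], [3, 4]]
Q = [[0, 1], [1, 0]]
R = [[2, 0], [1, 3]]
not_commutative = matmul(P, Q) != matmul(Q, P)
associative = matmul(matmul(P, Q), R) == matmul(P, matmul(Q, R))
```

Calling `matmul(B, A)` raises `ValueError`, because a 4x3 matrix cannot be multiplied by a 2x4 matrix.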

Inverse of matrix:

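- The inverse of a matrix $A$ is written as $A^{-1}$, and it satisfies $A A^{-1} = A^{-1} A = I$,

where $I$ is the identity matrix:

$$I = \begin{bmatrix} 1 & 0 & \cdots & 0 \\ 0 & 1 & \cdots & 0 \\ \vdots & \vdots & \ddots & \vdots \\ 0 & 0 & \cdots & 1 \end{bmatrix}$$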
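As a minimal sketch of the defining property $A A^{-1} = A^{-1} A = I$, the following inverts a 2x2 matrix with the closed-form formula and multiplies it back. The function names and the sample matrix are assumptions made for illustration; exact fractions are used so the comparison with the identity is not affected by rounding.

```python
from fractions import Fraction


def inverse_2x2(m):
    """Invert a 2x2 matrix [[a, b], [c, d]] using the closed-form formula."""
    (a, b), (c, d) = m
    det = Fraction(a * d - b * c)
    if det == 0:
        raise ValueError("matrix is singular, no inverse exists")
    return [[d / det, -b / det], [-c / det, a / det]]


def matmul_2x2(x, y):
    """Product of two 2x2 matrices."""
    return [
        [sum(x[i][k] * y[k][j] for k in range(2)) for j in range(2)]
        for i in range(2)
    ]


A = [[4, 7], [2, 6]]
A_inv = inverse_2x2(A)

identity = [[1, 0], [0, 1]]
left = matmul_2x2(A, A_inv)   # A times its inverse
right = matmul_2x2(A_inv, A)  # inverse times A
```

Both `left` and `right` compare equal to `identity`.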

Vectors:

- a kind of matrix that only includes one column

such as

length/norm of a vector: $\|v\| = \sqrt{v_1^2 + v_2^2 + \cdots + v_n^2}$

e.g.

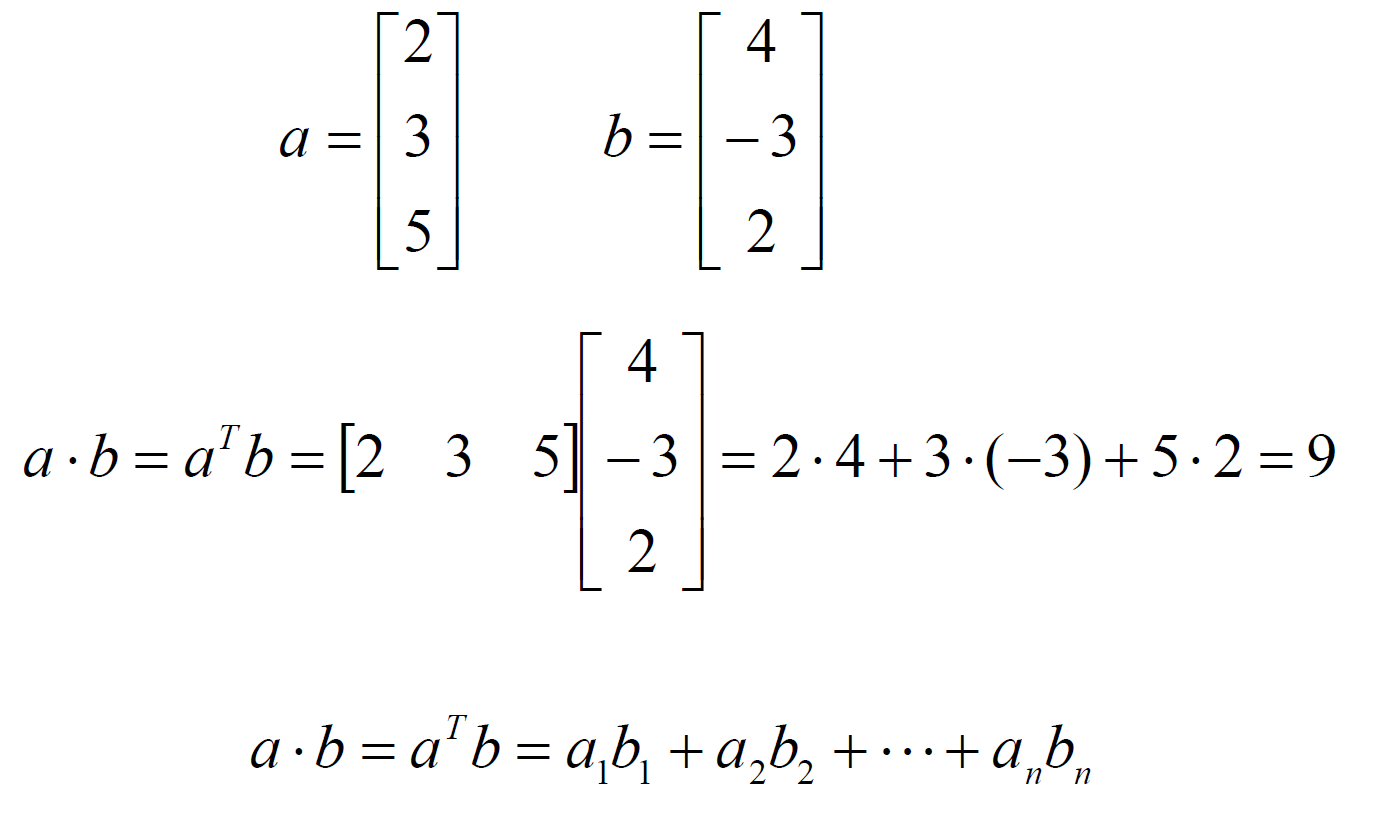
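A short sketch of the Euclidean norm, the square root of the sum of squared components. The function name `norm` and the sample vector are illustrative choices.

```python
import math


def norm(v):
    """Euclidean length of a vector given as a list of numbers."""
    return math.sqrt(sum(x * x for x in v))


length = norm([3, 4])  # sqrt(9 + 16)
```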

Vector Dot Product/Inner Product:

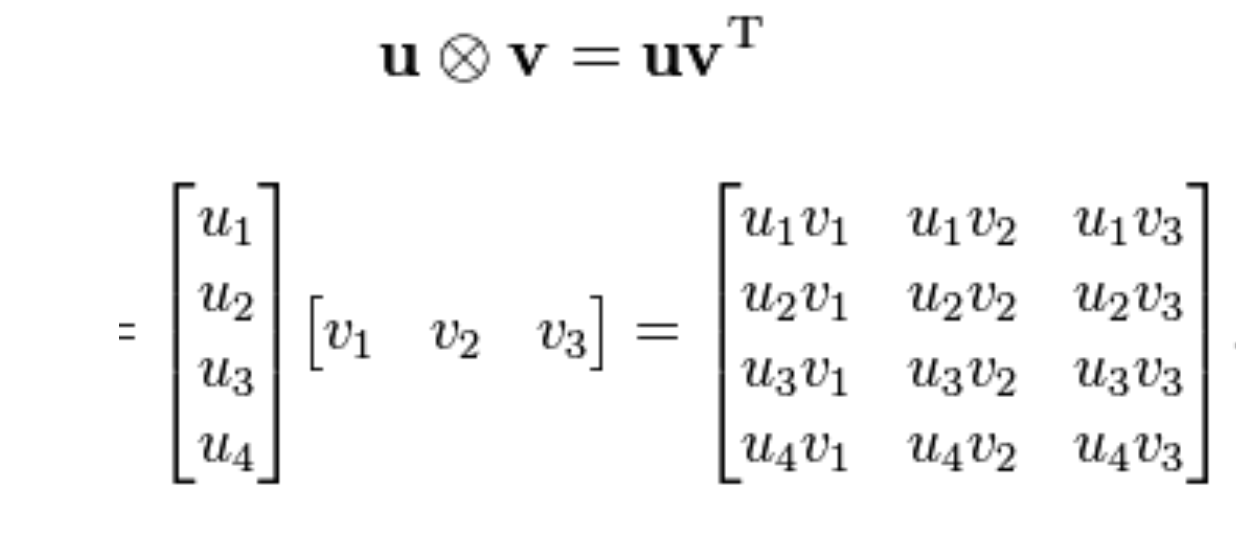
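The dot product can be sketched as a sum of element-wise products. The example below also uses the standard identity $u \cdot v = \|u\| \|v\| \cos\theta$ to recover the angle between two vectors; the helper names are assumptions for illustration.

```python
import math


def dot(u, v):
    """Sum of element-wise products of two equal-length vectors."""
    if len(u) != len(v):
        raise ValueError("vectors must have the same length")
    return sum(a * b for a, b in zip(u, v))


def angle_between(u, v):
    """Angle in radians, from u . v = |u| |v| cos(theta)."""
    cos_theta = dot(u, v) / (math.sqrt(dot(u, u)) * math.sqrt(dot(v, v)))
    return math.acos(cos_theta)


uv = dot([1, 2, 3], [4, -5, 6])  # 1*4 + 2*(-5) + 3*6

# Perpendicular vectors have a dot product of zero (angle of pi / 2).
right_angle = angle_between([1, 0], [0, 1])
```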

Vector Outer Product:
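A minimal sketch, assuming the standard definition of the outer product $u v^T$: for a vector $u$ of length $m$ and a vector $v$ of length $n$, the result is an $m \times n$ matrix whose entry $(i, j)$ is $u_i v_j$. The function name `outer` is an illustrative choice.

```python
def outer(u, v):
    """Outer product u v^T: entry (i, j) is u[i] * v[j]."""
    return [[a * b for b in v] for a in u]


# A length-3 vector times a length-2 vector gives a 3x2 matrix.
M = outer([1, 2, 3], [4, 5])
```

Unlike the dot product, the two vectors do not need to have the same length.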

Coordinate Rotation:

- $\theta$ is the angle of rotation

- x and y are coordinates of a point in x-axis and y-axis

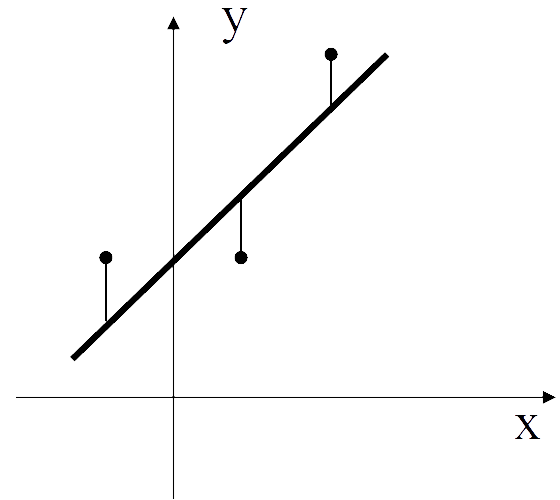
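A sketch of a 2D rotation, assuming the convention that a point is rotated counterclockwise about the origin by the rotation angle. If the coordinate axes are rotated instead of the point, the signs of the sine terms flip. The function name `rotate` is hypothetical.

```python
import math


def rotate(point, theta):
    """Rotate a 2D point counterclockwise about the origin by theta radians."""
    x, y = point
    c, s = math.cos(theta), math.sin(theta)
    # Apply the rotation matrix [[cos, -sin], [sin, cos]] to (x, y).
    return (c * x - s * y, s * x + c * y)


# Rotating the point (1, 0) by 90 degrees moves it onto the y-axis.
x_new, y_new = rotate((1.0, 0.0), math.pi / 2)
```

Because of floating-point rounding, `x_new` is very close to 0 rather than exactly 0.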

Least Squares Fitting example:

Problem: find the line that best fits these three points: $(-1, 1)$, $(1, 1)$, $(2, 3)$.

Solution:

- Model the line with the equation $y = c + dx$, where

c = intercept

d = slope

- From 3 points, we can get:

c - d = 1

c + d = 1

c + 2d = 3

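OR, in matrix form $Ax = b$:

$$\begin{bmatrix} 1 & -1 \\ 1 & 1 \\ 1 & 2 \end{bmatrix} \begin{bmatrix} c \\ d \end{bmatrix} = \begin{bmatrix} 1 \\ 1 \\ 3 \end{bmatrix}$$

- These three equations have no exact solution, so we solve the normal equations $A^T A x = A^T b$ instead, which is

$$\begin{bmatrix} 3 & 2 \\ 2 & 6 \end{bmatrix} \begin{bmatrix} c \\ d \end{bmatrix} = \begin{bmatrix} 5 \\ 6 \end{bmatrix}$$

OR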

3c + 2d = 5

2c + 6d = 6

- Finally we can get $c = 9/7$, $d = 4/7$,

and the best line to fit those 3 points is $y = \frac{9}{7} + \frac{4}{7}x$

Least squares fitting is used when $Ax = b$ has no exact solution, which is typically the case when the system is overdetermined ($m > n$ for an $m \times n$ matrix $A$).
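The worked example above can be reproduced with a short sketch. The data points are the ones implied by the three equations (an $x$ value of $-1$, $1$, and $2$ with $y$ values $1$, $1$, and $3$); the variable names are illustrative, and exact fractions are used so the result matches $9/7$ and $4/7$ exactly.

```python
from fractions import Fraction

# The three data points as (x, y) pairs.
points = [(-1, 1), (1, 1), (2, 3)]

# Design matrix A (a column of ones and a column of x values) and vector b.
A = [[1, x] for x, _ in points]
b = [y for _, y in points]

# Normal equations: (A^T A) [c, d]^T = A^T b.
ata = [[sum(row[i] * row[j] for row in A) for j in range(2)] for i in range(2)]
atb = [sum(row[i] * y for row, y in zip(A, b)) for i in range(2)]

# Solve the 2x2 system with Cramer's rule, using exact fractions.
det = Fraction(ata[0][0] * ata[1][1] - ata[0][1] * ata[1][0])
c = (atb[0] * ata[1][1] - ata[0][1] * atb[1]) / det  # intercept
d = (ata[0][0] * atb[1] - ata[1][0] * atb[0]) / det  # slope
```

Here `ata` is `[[3, 2], [2, 6]]` and `atb` is `[5, 6]`, matching the normal equations $3c + 2d = 5$ and $2c + 6d = 6$.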

Eigenvalue & Eigenvector:

For a square matrix $A$, if $Ax = \lambda x$ for some non-zero vector $x$, then $\lambda$ is called an eigenvalue and $x$ is called an eigenvector.

Example:

and

5 and 2 are eigenvalues,

and

are eigenvectors.
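A minimal sketch of checking the definition $Ax = \lambda x$. The matrix below is a hypothetical one chosen to have eigenvalues 5 and 2 (an assumption, not necessarily the matrix intended in the example above), and the helper `matvec` is illustrative.

```python
def matvec(m, v):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(a * x for a, x in zip(row, v)) for row in m]


# Hypothetical matrix with eigenvalues 5 and 2.
A = [[4, 1], [2, 3]]

# Candidate (eigenvalue, eigenvector) pairs.
pairs = [(5, [1, 1]), (2, [1, -2])]

# Each pair must satisfy A x == lambda * x.
checks = [matvec(A, x) == [lam * xi for xi in x] for lam, x in pairs]

# The two eigenvectors are linearly independent: the 2x2 determinant of
# the matrix with the eigenvectors as columns is non-zero.
(x1, y1), (x2, y2) = pairs[0][1], pairs[1][1]
independent = x1 * y2 - x2 * y1 != 0
```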

If $\lambda_1, \dots, \lambda_n$ are distinct eigenvalues of a matrix, then the corresponding eigenvectors are linearly independent.

(none of them can be expressed as a linear combination of the others)

(The rank of a square matrix is the number of linearly independent rows or columns)

A real, symmetric matrix has real eigenvalues, and its eigenvectors can be chosen to be orthonormal.

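( $x_i^T x_j = 0$ for $i \neq j$ and $x_i^T x_i = 1$, OR $Q^T Q = I$, where the columns of $Q$ are the eigenvectors )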

Singular Value Decomposition (SVD):

An $m \times n$ matrix $A$ can be decomposed into: $A = U D V^T$

$U$ is $m \times m$ and $V$ is $n \times n$; both of them are orthogonal matrices: $U^T U = I$ and $V^T V = I$

$D$ is an $m \times n$ diagonal matrix (all positions except the diagonal elements are 0), and the diagonal elements are called the singular values; they are all non-negative ($\sigma_i \geq 0$)

Also, a square matrix is non-singular iff all of its singular values are non-zero

Example:

If $A$ is a square, non-singular matrix, its inverse can be written as $A^{-1} = V D^{-1} U^T$

- The non-zero singular values in $D$ are the square roots of the non-zero eigenvalues of both the $n \times n$ matrix $A^T A$

and of the $m \times m$ matrix $A A^T$

- The columns of $U$ are the eigenvectors of $A A^T$

- The columns of $V$ are the eigenvectors of $A^T A$
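The relationship between singular values and eigenvalues can be sketched for a small case. The example below is restricted to a 2x2 matrix so that the eigenvalues of the symmetric matrices $A^T A$ and $A A^T$ can be found with the quadratic formula; it does not compute $U$ or $V$. The sample matrix and helper names are assumptions for illustration. For general matrices a numerical library routine such as NumPy's `numpy.linalg.svd` would be the usual choice.

```python
import math


def transpose(m):
    return [list(col) for col in zip(*m)]


def matmul(a, b):
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def eigenvalues_sym_2x2(s):
    """Eigenvalues of a symmetric 2x2 matrix, largest first."""
    tr = s[0][0] + s[1][1]
    det = s[0][0] * s[1][1] - s[0][1] * s[1][0]
    # Roots of the characteristic polynomial lambda^2 - tr * lambda + det.
    root = math.sqrt(tr * tr - 4 * det)
    return [(tr + root) / 2, (tr - root) / 2]


A = [[3, 0], [4, 5]]
At = transpose(A)

eig_ata = eigenvalues_sym_2x2(matmul(At, A))  # eigenvalues of A^T A
eig_aat = eigenvalues_sym_2x2(matmul(A, At))  # eigenvalues of A A^T

# Singular values are the square roots of those shared eigenvalues.
singular_values = [math.sqrt(lam) for lam in eig_ata]
```

Both products give the same eigenvalues, 45 and 5, so the singular values are $\sqrt{45}$ and $\sqrt{5}$. Since neither is zero, this $A$ is non-singular, and their product equals $|\det A| = 15$.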

Reference:

- handout of COMP4102: Introduction to Computer Vision from Carleton University School of Computer Science, 2019