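When dealing with overfitting, simplifying the model and enlarging the dataset are effective remedies, but neither is used much in practice: simplifying the model means discarding some features, and enlarging the dataset runs into the difficulty of obtaining more data. Weight decay is a more commonly used method.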

Weight decay is equivalent to L2 norm regularization.
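For reference, the equivalence can be written out as below. The notation is an assumption chosen to match how the code in this post adds the penalty: ℓ is the squared loss (with its 1/2 factor) averaged over a minibatch $\mathcal{B}$, λ is the penalty coefficient `lambd`, η is the learning rate, and the bias b is not penalized.

$$
\ell(\mathbf{w}, b) + \frac{\lambda}{2}\|\mathbf{w}\|^2,
\qquad
\ell(\mathbf{w}, b) = \frac{1}{|\mathcal{B}|}\sum_{i\in\mathcal{B}} \frac{1}{2}\left(\mathbf{x}^{(i)\top}\mathbf{w} + b - y^{(i)}\right)^2
$$

Since the gradient of $\frac{\lambda}{2}\|\mathbf{w}\|^2$ is $\lambda\mathbf{w}$, one SGD step on this objective is

$$
\mathbf{w} \leftarrow (1-\eta\lambda)\,\mathbf{w} - \frac{\eta}{|\mathcal{B}|}\sum_{i\in\mathcal{B}} \mathbf{x}^{(i)}\left(\mathbf{x}^{(i)\top}\mathbf{w} + b - y^{(i)}\right)
$$

The factor $(1-\eta\lambda)$ shrinks the weights at every step before the usual gradient update, which is where the name "weight decay" comes from.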

For the theory, review the tutorial. The focus here is on implementing it in code and seeing the effect of regularization.

Generating the dataset

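The data are generated from the following relationship, where p is the dimension of the feature vector, each feature $x_i$ is drawn from a standard normal distribution, and ε is Gaussian noise with mean 0 and standard deviation 0.01:

$$
y = 0.05 + \sum_{i=1}^{p} 0.01\, x_i + \epsilon,\qquad \epsilon \sim \mathcal{N}(0,\ 0.01^2)
$$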

To provoke overfitting, the training set is kept small (20 samples) and the number of features is increased to 200.

```python
import torch
import d2lzh  # helper module used in this series (linreg, squared_loss, sgd, semilogy)

# 20 training samples, 100 test samples, 200 features
n_train, n_test, num_inputs = 20, 100, 200
true_w, true_b = torch.ones(num_inputs, 1) * 0.01, 0.05

features = torch.randn((n_train + n_test, num_inputs))
labels = torch.matmul(features, true_w) + true_b
# Gaussian noise with standard deviation 0.01
labels += torch.normal(mean=0.0, std=0.01, size=labels.size())

train_features, test_features = features[:n_train, :], features[n_train:, :]
train_labels, test_labels = labels[:n_train], labels[n_train:]
```
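Why does this setup overfit? With 20 samples and 200 unknown weights, the linear system is underdetermined, so some weight vector fits the training data exactly. The sketch below regenerates data the same way (in float64, with a fixed seed, both assumptions for numerical stability and reproducibility) and uses `torch.linalg.pinv` to get the minimum-norm exact fit. It fits no separate bias term, for brevity.

```python
import torch

torch.manual_seed(0)
n_train, n_test, num_inputs = 20, 100, 200

# Same data-generating process as above, in float64
true_w = torch.ones(num_inputs, 1, dtype=torch.float64) * 0.01
true_b = 0.05
features = torch.randn(n_train + n_test, num_inputs, dtype=torch.float64)
labels = features @ true_w + true_b
labels += 0.01 * torch.randn(labels.shape, dtype=torch.float64)

X_train, X_test = features[:n_train], features[n_train:]
y_train, y_test = labels[:n_train], labels[n_train:]

# 20 equations, 200 unknowns: an exact fit exists, and the
# pseudo-inverse returns the one with the smallest norm
w_hat = torch.linalg.pinv(X_train) @ y_train

train_mse = ((X_train @ w_hat - y_train) ** 2).mean().item()
test_mse = ((X_test @ w_hat - y_test) ** 2).mean().item()
print(f"train MSE: {train_mse:.2e}, test MSE: {test_mse:.2e}")
```

The training error is essentially zero while the test error is orders of magnitude larger: the model has memorized the 20 training points rather than recovered the true weights.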

L2 norm penalty

Next we define the L2 norm penalty. Only the model's weight parameters are penalized here; the bias is not.

```python
def l2_penalty(w):
    # Half the squared L2 norm of the weights: ||w||^2 / 2
    return (w ** 2).sum() / 2
```
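The factor 1/2 is there so that the gradient of the penalty is simply w itself. A quick autograd check on a hypothetical three-element weight vector:

```python
import torch


def l2_penalty(w):
    # Half the squared L2 norm: ||w||^2 / 2
    return (w ** 2).sum() / 2


w = torch.tensor([[1.0], [-2.0], [3.0]], requires_grad=True)
penalty = l2_penalty(w)
penalty.backward()

print(penalty.item())             # (1 + 4 + 9) / 2 = 7.0
print(w.grad.flatten().tolist())  # gradient equals w: [1.0, -2.0, 3.0]
```

So adding `lambd * l2_penalty(w)` to the loss adds `lambd * w` to the gradient of the weights.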

Training and testing

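The training function is similar to the one in the previous section. The only change is that the L2 penalty, scaled by `lambd`, is added to the loss.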

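```python
# Reuses the data and l2_penalty defined above
batch_size, num_epochs, lr = 1, 100, 0.003
net, loss = d2lzh.linreg, d2lzh.squared_loss

dataset = torch.utils.data.TensorDataset(train_features, train_labels)
train_iter = torch.utils.data.DataLoader(dataset, batch_size, shuffle=True)


def fit_and_plot(lambd):
    # Fresh parameters on every call, so runs with different lambd are independent
    w = torch.randn((num_inputs, 1), requires_grad=True)
    b = torch.zeros(1, requires_grad=True)
    train_ls, test_ls = [], []
    for _ in range(num_epochs):
        for x, y in train_iter:
            # Squared loss plus the L2 penalty on the weights (bias not penalized)
            l = loss(net(x, w, b), y) + lambd * l2_penalty(w)
            l = l.sum()
            # Clear gradients left over from the previous step
            if w.grad is not None:
                w.grad.data.zero_()
                b.grad.data.zero_()
            l.backward()
            d2lzh.sgd([w, b], lr, batch_size)
        train_ls.append(loss(net(train_features, w, b), train_labels).mean().item())
        test_ls.append(loss(net(test_features, w, b), test_labels).mean().item())
    # Training and test loss per epoch, log-scale y axis
    d2lzh.semilogy(range(1, num_epochs + 1), train_ls, 'epochs', 'loss',
                   range(1, num_epochs + 1), test_ls, ['train', 'test'])
    print('L2 norm of w:', w.norm().item())
```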
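To connect the loop above with the "decay" in weight decay, the sketch below takes one plain SGD step on squared loss plus `lambd * ||w||^2 / 2` for a single sample, and checks it against the closed form `(1 - lr * lambd) * w - lr * data_gradient`. The toy sample and the 5-dimensional weights are hypothetical.

```python
import torch

torch.manual_seed(0)
lr, lambd = 0.003, 10.0
x = torch.randn(1, 5)
y = torch.randn(1, 1)
w = torch.randn(5, 1, requires_grad=True)
b = torch.zeros(1, requires_grad=True)

# Penalized loss for one sample: squared loss + lambd * ||w||^2 / 2
loss = ((x @ w + b - y) ** 2 / 2).sum() + lambd * (w ** 2).sum() / 2
loss.backward()
w_sgd = w.detach() - lr * w.grad  # plain SGD step on the penalized loss

# Closed form: shrink w by (1 - lr * lambd), then step along the data gradient
residual = (x @ w + b - y).detach()
data_grad = x.T @ residual  # gradient of the squared loss alone w.r.t. w
w_decay = (1 - lr * lambd) * w.detach() - lr * data_grad

print(torch.allclose(w_sgd, w_decay, atol=1e-6))  # True
```

With `lr = 0.003` and `lambd = 10`, each step multiplies the weights by 0.97 before the data-driven update.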

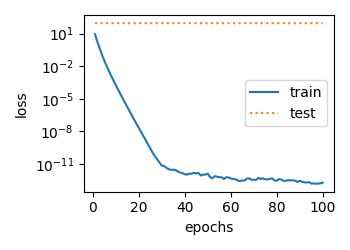

Observing overfitting

First, look at the behavior without weight decay, i.e. with the penalty coefficient `lambd` set to 0:

```python
# Without weight decay: lambd = 0
if __name__ == "__main__":
    fit_and_plot(lambd=0)
```

Although the training error is close to 0, the generalization (test) error stays high.

```
# norm of the weight matrix w
L2 norm of w: 13.747507095336914
```
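One way to see why the norm stays large without the penalty: the gradient of the squared loss with respect to w is always a combination of the training inputs, so with 20 samples in 200 dimensions the part of the random initial w that is orthogonal to all training inputs never changes. With standard-normal initialization that part has a norm of roughly sqrt(200 - 20), about 13.4, in the same range as the norm printed above. The sketch below assumes plain full-batch gradient descent (the batch-size-1 loop behaves the same way, since each per-sample gradient is also a multiple of one input) and float64 for precision.

```python
import torch

torch.manual_seed(0)
n_train, num_inputs, lr = 20, 200, 0.003
X = torch.randn(n_train, num_inputs, dtype=torch.float64)
y = X @ (torch.ones(num_inputs, 1, dtype=torch.float64) * 0.01) + 0.05

w0 = torch.randn(num_inputs, 1, dtype=torch.float64)  # random init, as in the manual version
w, b = w0.clone(), torch.zeros(1, dtype=torch.float64)

# Full-batch gradient descent on the mean squared loss, no penalty
for _ in range(2000):
    residual = X @ w + b - y
    w -= lr * X.T @ residual / n_train
    b -= lr * residual.mean()

# Projector onto the null space of X: directions no training input touches
P_null = torch.eye(num_inputs, dtype=torch.float64) - torch.linalg.pinv(X) @ X

print(torch.allclose(P_null @ w, P_null @ w0))  # True: this component never moved
print((P_null @ w0).norm().item())              # roughly sqrt(180)
```

With weight decay, the factor (1 - lr * lambd) shrinks this untouched component at every step, which is consistent with the much smaller norm obtained with `lambd=10`.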

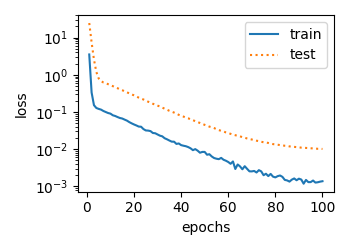

Now look at the effect of adding weight decay:

```python
# With weight decay
if __name__ == "__main__":
    fit_and_plot(lambd=10)
```

The generalization error is now much lower, and the final norm of w is far smaller.

```
# norm of the weight matrix w
L2 norm of w: 0.02657567523419857
```

The complete code:

```python
import torch
import d2lzh  # helper module used in this series (linreg, squared_loss, sgd, semilogy)

n_train, n_test, num_inputs = 20, 100, 200
true_w, true_b = torch.ones(num_inputs, 1) * 0.01, 0.05

features = torch.randn((n_train + n_test, num_inputs))
labels = torch.matmul(features, true_w) + true_b
labels += torch.normal(mean=0.0, std=0.01, size=labels.size())
train_features, test_features = features[:n_train, :], features[n_train:, :]
train_labels, test_labels = labels[:n_train], labels[n_train:]


def l2_penalty(w):
    # Half the squared L2 norm: ||w||^2 / 2
    return (w ** 2).sum() / 2


batch_size, num_epochs, lr = 1, 100, 0.003
net, loss = d2lzh.linreg, d2lzh.squared_loss
dataset = torch.utils.data.TensorDataset(train_features, train_labels)
train_iter = torch.utils.data.DataLoader(dataset, batch_size, shuffle=True)


def fit_and_plot(lambd):
    # Fresh parameters on every call, so runs with different lambd are independent
    w = torch.randn((num_inputs, 1), requires_grad=True)
    b = torch.zeros(1, requires_grad=True)
    train_ls, test_ls = [], []
    for _ in range(num_epochs):
        for x, y in train_iter:
            # Squared loss plus the L2 penalty on the weights only
            l = loss(net(x, w, b), y) + lambd * l2_penalty(w)
            l = l.sum()
            if w.grad is not None:
                w.grad.data.zero_()
                b.grad.data.zero_()
            l.backward()
            d2lzh.sgd([w, b], lr, batch_size)
        train_ls.append(loss(net(train_features, w, b), train_labels).mean().item())
        test_ls.append(loss(net(test_features, w, b), test_labels).mean().item())
    d2lzh.semilogy(range(1, num_epochs + 1), train_ls, 'epochs', 'loss',
                   range(1, num_epochs + 1), test_ls, ['train', 'test'])
    print('L2 norm of w:', w.norm().item())


if __name__ == "__main__":
    fit_and_plot(lambd=10)  # use lambd=0 to reproduce the overfitting run
```

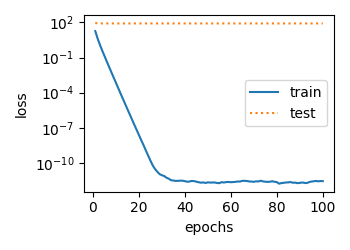

Concise implementation

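Weight decay can be set directly when defining the optimizer, through the `weight_decay` argument of `torch.optim.SGD`. Here two optimizers are used so that only the weights are decayed, not the bias.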

```python
import torch
from torch import nn

# Reuses the data, train_iter, loss, lr, num_epochs and num_inputs from the complete code


def fit_and_plot_pytorch(wd):
    net = nn.Linear(num_inputs, 1)
    nn.init.normal_(net.weight, mean=0, std=1)
    nn.init.normal_(net.bias, mean=0, std=1)
    # Weight decay on the weights only
    optimizer_w = torch.optim.SGD(params=[net.weight], lr=lr, weight_decay=wd)
    # No weight decay on the bias
    optimizer_b = torch.optim.SGD(params=[net.bias], lr=lr)

    train_ls, test_ls = [], []
    for _ in range(num_epochs):
        for x, y in train_iter:
            l = loss(net(x), y).mean()
            optimizer_w.zero_grad()
            optimizer_b.zero_grad()
            l.backward()
            # Step each optimizer to update the weights and the bias separately
            optimizer_w.step()
            optimizer_b.step()
        train_ls.append(loss(net(train_features), train_labels).mean().item())
        test_ls.append(loss(net(test_features), test_labels).mean().item())
    d2lzh.semilogy(range(1, num_epochs + 1), train_ls, 'epochs', 'loss',
                   range(1, num_epochs + 1), test_ls, ['train', 'test'])
    print('L2 norm of w:', net.weight.data.norm().item())


if __name__ == "__main__":
    fit_and_plot_pytorch(0)
    fit_and_plot_pytorch(10)
```

The results are close to those of the manual implementation (first without, then with weight decay):

```
L2 norm of w: 13.253257751464844
L2 norm of w: 0.022790107876062393
```
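The two implementations agree because, for plain SGD, `weight_decay=wd` adds `wd * w` to the gradient, which is exactly the gradient of the manual penalty `wd * ||w||^2 / 2`. The sketch below checks this for one step on a hypothetical single sample, comparing two identically initialized `nn.Linear` layers.

```python
import torch
from torch import nn

torch.manual_seed(0)
lr, wd = 0.003, 10.0
x, y = torch.randn(1, 5), torch.randn(1, 1)

net_a = nn.Linear(5, 1)
net_b = nn.Linear(5, 1)
net_b.load_state_dict(net_a.state_dict())  # identical starting parameters

# (a) Weight decay handled by the optimizer, weights only
opt_w = torch.optim.SGD([net_a.weight], lr=lr, weight_decay=wd)
opt_bias = torch.optim.SGD([net_a.bias], lr=lr)
loss_a = ((net_a(x) - y) ** 2 / 2).mean()
opt_w.zero_grad()
opt_bias.zero_grad()
loss_a.backward()
opt_w.step()
opt_bias.step()

# (b) The same penalty written into the loss, plain SGD on all parameters
opt = torch.optim.SGD(net_b.parameters(), lr=lr)
loss_b = ((net_b(x) - y) ** 2 / 2).mean() + wd * (net_b.weight ** 2).sum() / 2
opt.zero_grad()
loss_b.backward()
opt.step()

print(torch.allclose(net_a.weight.detach(), net_b.weight.detach(), atol=1e-6))  # True
print(torch.allclose(net_a.bias.detach(), net_b.bias.detach()))                 # True
```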

Summary

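- Regularization adds a penalty term to the model's loss function so that the learned parameter values are small; it is a common way to combat overfitting.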

- Weight decay is equivalent to L2 norm regularization and usually drives the elements of the learned weights close to 0.

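- Weight decay can be specified through the optimizer's `weight_decay` hyperparameter.

- Multiple optimizer instances can be defined to apply different update settings to different model parameters.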
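As an alternative to two optimizer instances, PyTorch optimizers also accept per-parameter groups, each with its own options. The sketch below decays only the weights of a hypothetical `nn.Linear(200, 1)`; setting all gradients to zero isolates the decay term, so one step should scale the weights by `1 - lr * wd` and leave the bias unchanged.

```python
import torch
from torch import nn

torch.manual_seed(0)
lr, wd = 0.003, 10.0
net = nn.Linear(200, 1)

# One optimizer, two parameter groups: decay the weights, leave the bias alone
optimizer = torch.optim.SGD(
    [
        {"params": [net.weight], "weight_decay": wd},
        {"params": [net.bias]},  # uses the default weight_decay=0
    ],
    lr=lr,
)

# With all gradients zero, a step applies only the decay term
w_before = net.weight.detach().clone()
b_before = net.bias.detach().clone()
net.weight.grad = torch.zeros_like(net.weight)
net.bias.grad = torch.zeros_like(net.bias)
optimizer.step()

print(torch.allclose(net.weight.detach(), (1 - lr * wd) * w_before))  # True: weights shrank by 3%
print(torch.equal(net.bias.detach(), b_before))                       # True: bias untouched
```

Either approach works; parameter groups keep a single `zero_grad()`/`step()` pair in the training loop.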