C4W1 Quiz - The basics of ConvNets

Ans: C

Ans: D

Note: $100 \times (300 \times 300 \times 3) + 100 = 27{,}000{,}100$
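As a quick check of the arithmetic above, here is a minimal sketch that counts the parameters of a fully connected layer. The helper name `dense_param_count` is hypothetical and only used for illustration.

```python
def dense_param_count(n_inputs: int, n_units: int) -> int:
    """One weight per (input, unit) pair, plus one bias per unit."""
    return n_inputs * n_units + n_units


# A 300 x 300 RGB image flattened into a single input vector.
n_inputs = 300 * 300 * 3
fc_params = dense_param_count(n_inputs, n_units=100)
print(fc_params)  # 27000100
```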

Ans: B

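Note: each filter spans all 3 input channels, so $(5 \times 5 \times 3 + 1) \times 100 = 7600$. The count $5 \times 5 \times 100 + 100 = 2600$ holds only for a single-channel input.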
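The count depends on the filter depth, which must match the number of input channels. The sketch below makes that explicit; `conv_param_count` is a hypothetical helper, not part of any library.

```python
def conv_param_count(f: int, in_channels: int, n_filters: int) -> int:
    """Each filter has f * f * in_channels weights plus one bias."""
    return (f * f * in_channels + 1) * n_filters


# RGB input: every 5x5 filter is really 5x5x3.
rgb_params = conv_param_count(f=5, in_channels=3, n_filters=100)
# Single-channel (grayscale) input, for comparison.
gray_params = conv_param_count(f=5, in_channels=1, n_filters=100)
print(rgb_params, gray_params)  # 7600 2600
```

Note that neither count depends on the 300 x 300 image size, unlike the fully connected case.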

Ans: C

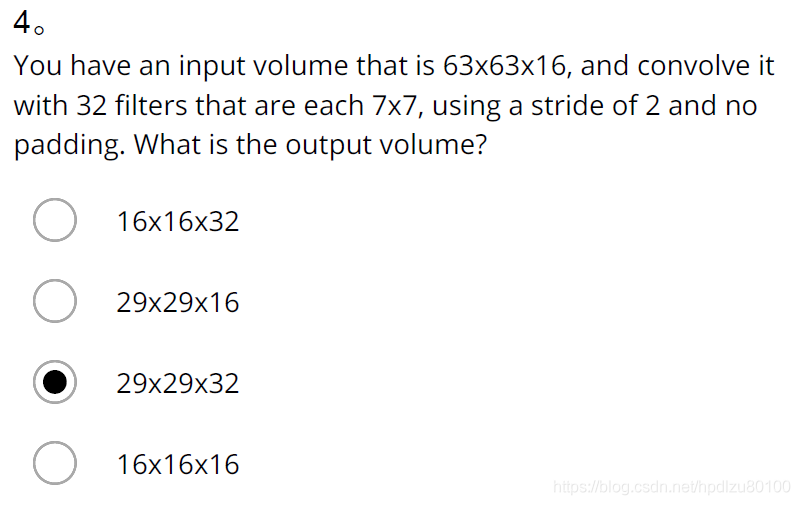

Note: $n=63$, $f=7$, $n_C=32$, $s=2$, $p=0$

$n_H = n_W = \frac{n+2p-f}{s}+1 = \frac{63-7}{2}+1 = 29$

Shape of output: $n_H \times n_W \times n_C = 29 \times 29 \times 32$
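The output-size formula can be sketched as a small function. The name `conv_output_shape` is an assumption for illustration, and floor division is used for cases where the stride does not divide evenly.

```python
def conv_output_shape(n: int, f: int, n_filters: int, stride: int = 1, pad: int = 0):
    """Output (height, width, channels) for a square n x n input."""
    side = (n + 2 * pad - f) // stride + 1
    # The number of output channels equals the number of filters.
    return (side, side, n_filters)


out_shape = conv_output_shape(n=63, f=7, n_filters=32, stride=2, pad=0)
print(out_shape)  # (29, 29, 32)
```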

Ans: C

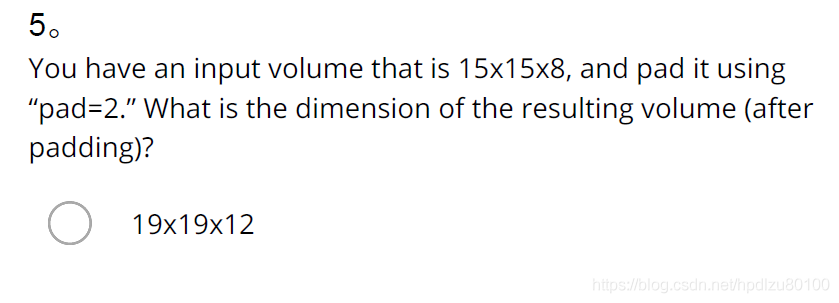

Note: $n=15$, $p=2$, $n_C=8$

$n_H = n_W = n+2p = 19$

Shape of input: $n_H \times n_W \times n_C = 19 \times 19 \times 8$
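To illustrate that padding grows only the height and width and leaves the channel count alone, here is a minimal pure-Python sketch on nested lists. `zero_pad` is a hypothetical helper written for this note.

```python
def zero_pad(volume, pad):
    """Zero-pad the height and width of an H x W x C nested list."""
    width = len(volume[0])
    channels = len(volume[0][0])

    def zero_pixels(count):
        return [[0.0] * channels for _ in range(count)]

    padded = [zero_pixels(width + 2 * pad) for _ in range(pad)]
    for row in volume:
        padded.append(zero_pixels(pad) + [list(px) for px in row] + zero_pixels(pad))
    padded += [zero_pixels(width + 2 * pad) for _ in range(pad)]
    return padded


volume = [[[1.0] * 8 for _ in range(15)] for _ in range(15)]
padded = zero_pad(volume, pad=2)
padded_shape = (len(padded), len(padded[0]), len(padded[0][0]))
print(padded_shape)  # (19, 19, 8)
```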

Ans: C

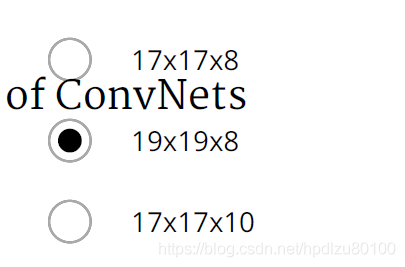

Note: $n=63$, $s=1$, $f=7$

$\frac{n+2p-f}{s}+1 = n \Rightarrow p = \frac{(n-1)s-n+f}{2} = \frac{f-1}{2} = \frac{7-1}{2} = 3$
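A short sketch can confirm that this padding keeps the spatial size unchanged. `same_padding` is a hypothetical helper, restricted here to stride 1 and odd filter sizes, which is the case the derivation covers.

```python
def same_padding(f: int, stride: int = 1) -> int:
    """Padding that keeps output size equal to input size (stride 1, odd f)."""
    if stride != 1 or f % 2 == 0:
        raise ValueError("this sketch only handles stride 1 and odd filter sizes")
    return (f - 1) // 2


n, f = 63, 7
p = same_padding(f)
out_side = (n + 2 * p - f) // 1 + 1
print(p, out_side)  # 3 63
```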

Ans:

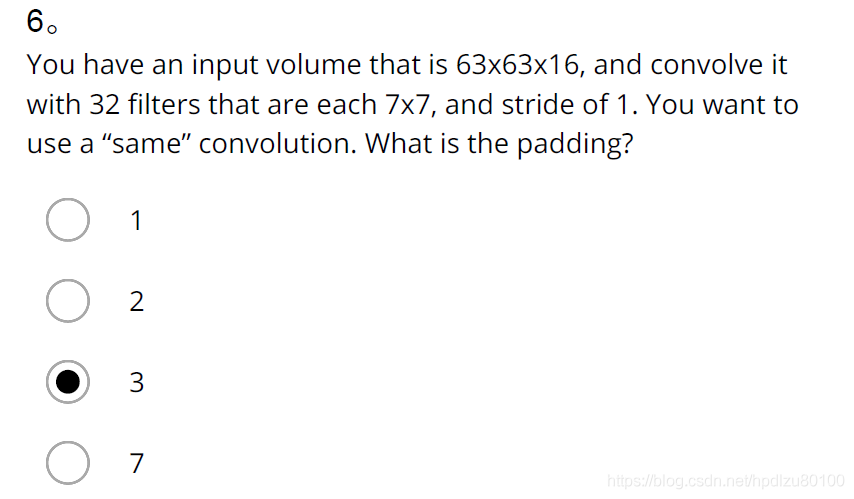

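Note: $n=32$, $n_C=16$, $f=2$, $s=2$

$n_H = n_W = \frac{n-f}{s}+1 = \frac{32-2}{2}+1 = 16$ (equal to $n/s$ here because $f=s$)

Shape of output: $n_H \times n_W \times n_C = 16 \times 16 \times 16$. Pooling acts on each channel independently, so $n_C$ is unchanged.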
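For illustration, here is a minimal max-pooling sketch on a single 32 x 32 channel. The function `max_pool_2d` is a hypothetical helper; a real volume would apply it to each of the 16 channels separately, leaving the channel count unchanged.

```python
def max_pool_2d(x, f=2, stride=2):
    """Max pooling over one channel stored as a list of rows."""
    out_h = (len(x) - f) // stride + 1
    out_w = (len(x[0]) - f) // stride + 1
    return [
        [
            max(x[i * stride + di][j * stride + dj] for di in range(f) for dj in range(f))
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]


# One 32 x 32 channel with distinct values so the maxima are easy to check.
channel = [[r * 32 + c for c in range(32)] for r in range(32)]
pooled = max_pool_2d(channel, f=2, stride=2)
pooled_shape = (len(pooled), len(pooled[0]))
print(pooled_shape)  # (16, 16)
```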

Ans: False

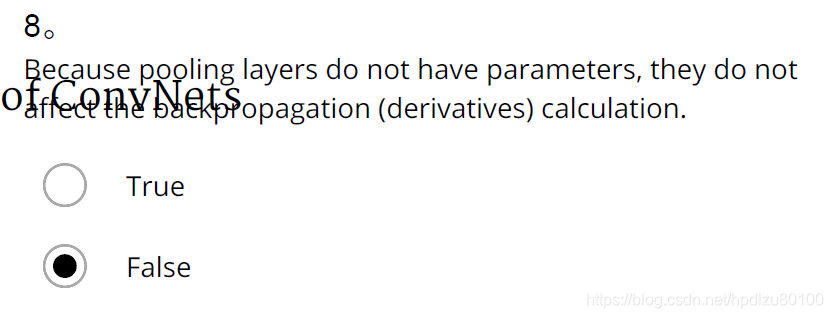

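Note: Treat conv layer -> pooling layer as a single layer. During forward propagation, the pooling layer holds the max/average value of each region of the conv layer's output and passes it on to the next layer. During backpropagation, the gradient arrives from the next layer. "Does not affect the backpropagation calculation" would mean that no gradient flows from the pooling layer back to the conv layer, i.e. the gradient is 0. With a zero gradient, the update W[l] = W[l] − α × dW[l] becomes W[l] = W[l] − α × 0, so the parameters stop updating and backpropagation breaks off at that point. Therefore pooling layers do affect the backpropagation calculation.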
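To make this concrete, the following sketch shows one common way the backward pass of max pooling is written: each upstream gradient is routed to the input position that produced the maximum, and every other position in the window receives zero. `max_pool_backward` is a hypothetical helper; ties are broken arbitrarily here (by largest index).

```python
def max_pool_backward(x, d_out, f=2, stride=2):
    """Route each upstream gradient to the argmax of its pooling window."""
    d_x = [[0.0] * len(x[0]) for _ in x]
    for i, grad_row in enumerate(d_out):
        for j, grad in enumerate(grad_row):
            window = [
                (x[i * stride + di][j * stride + dj], i * stride + di, j * stride + dj)
                for di in range(f)
                for dj in range(f)
            ]
            _, r, c = max(window)  # position of the largest value in the window
            d_x[r][c] += grad
    return d_x


x = [[1.0, 3.0], [2.0, 0.0]]      # forward input; the max is 3.0 at (0, 1)
d_x = max_pool_backward(x, d_out=[[5.0]])
print(d_x)  # [[0.0, 5.0], [0.0, 0.0]]
```

Even though the pooling layer has no parameters of its own, it decides which earlier activations receive gradient, so it is part of the derivative calculation.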

Ans: B, C

Ans: B

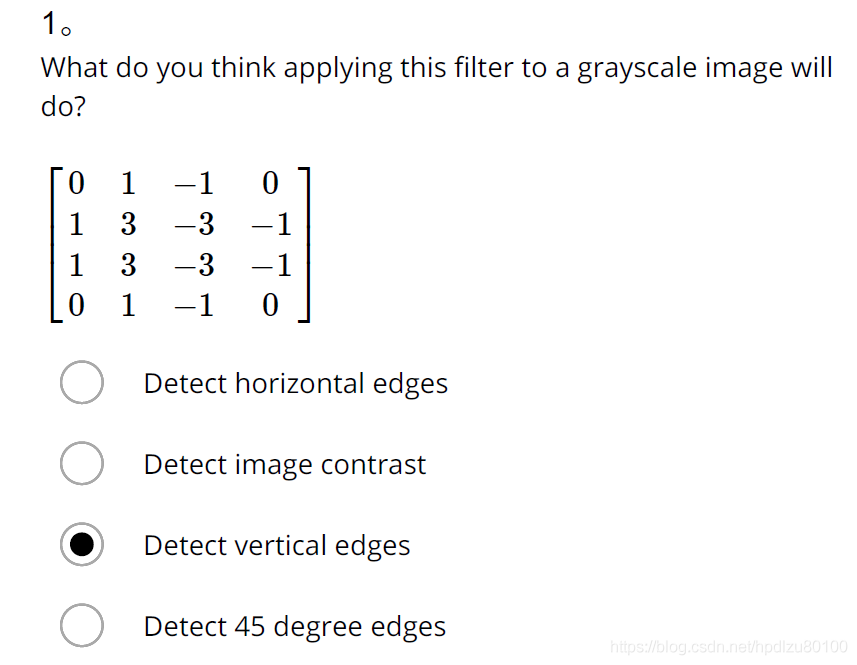

- What do you think applying this filter to a grayscale image will do?

$$\begin{bmatrix} 0 & 1 & -1 & 0 \\ 1 & 3 & -3 & -1 \\ 1 & 3 & -3 & -1 \\ 0 & 1 & -1 & 0 \end{bmatrix}$$

Ans: Detect vertical edges
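One way to see why is to apply the filter to two toy images, one with a vertical edge and one with a horizontal edge. The sketch below uses a plain "valid" cross-correlation written in pure Python; `correlate_valid` and both test images are assumptions made for illustration.

```python
KERNEL = [
    [0, 1, -1, 0],
    [1, 3, -3, -1],
    [1, 3, -3, -1],
    [0, 1, -1, 0],
]


def correlate_valid(image, kernel):
    """Slide a square kernel over the image with no padding and stride 1."""
    k = len(kernel)
    out_h = len(image) - k + 1
    out_w = len(image[0]) - k + 1
    return [
        [
            sum(image[i + di][j + dj] * kernel[di][dj] for di in range(k) for dj in range(k))
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]


# Bright left half, dark right half: a vertical edge down the middle.
vertical_edge = [[10] * 4 + [0] * 4 for _ in range(8)]
# Bright top half, dark bottom half: a horizontal edge across the middle.
horizontal_edge = [[10] * 8 for _ in range(4)] + [[0] * 8 for _ in range(4)]

vertical_response = correlate_valid(vertical_edge, KERNEL)
horizontal_response = correlate_valid(horizontal_edge, KERNEL)
print(vertical_response[0])    # [0, 20, 100, 20, 0]
print(horizontal_response[0])  # [0, 0, 0, 0, 0]
```

Every row of the kernel sums to zero, so the filter ignores changes from top to bottom and responds only to changes from left to right.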

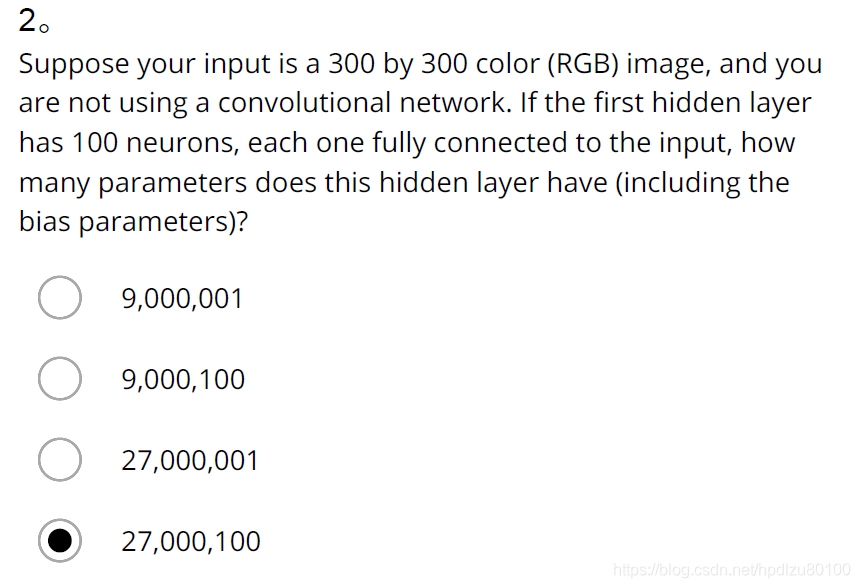

- Suppose your input is a 300 by 300 color (RGB) image, and you are not using a convolutional network. If the first hidden layer has 100 neurons, each one fully connected to the input, how many parameters does this hidden layer have (including the bias parameters)?

Ans: 27,000,100

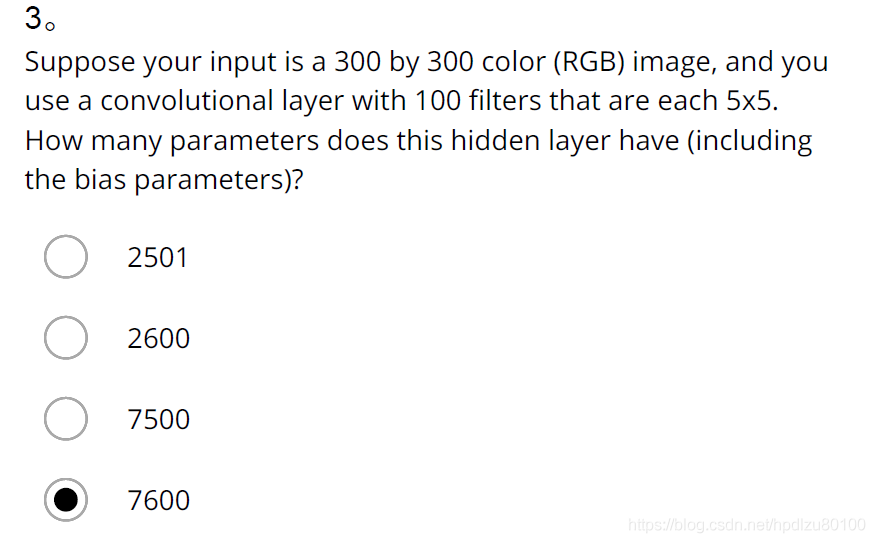

- Suppose your input is a 300 by 300 color (RGB) image, and you use a convolutional layer with 100 filters that are each 5x5. How many parameters does this hidden layer have (including the bias parameters)?

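Ans: 7600 (each 5x5 filter spans the 3 input channels: (5x5x3 + 1) x 100 = 7600; 2600 would be the count for a single-channel input)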

- You have an input volume that is 63x63x16, and convolve it with 32 filters that are each 7x7, using a stride of 2 and no padding. What is the output volume?

Ans: 29x29x32

- You have an input volume that is 15x15x8, and pad it using “pad=2.” What is the dimension of the resulting volume (after padding)?

Ans: 19x19x8

- You have an input volume that is 63x63x16, and convolve it with 32 filters that are each 7x7, and stride of 1. You want to use a “same” convolution. What is the padding?

Ans: 3

- You have an input volume that is 32x32x16, and apply max pooling with a stride of 2 and a filter size of 2. What is the output volume?

Ans: 16x16x16

- Because pooling layers do not have parameters, they do not affect the backpropagation (derivatives) calculation.

Ans: False

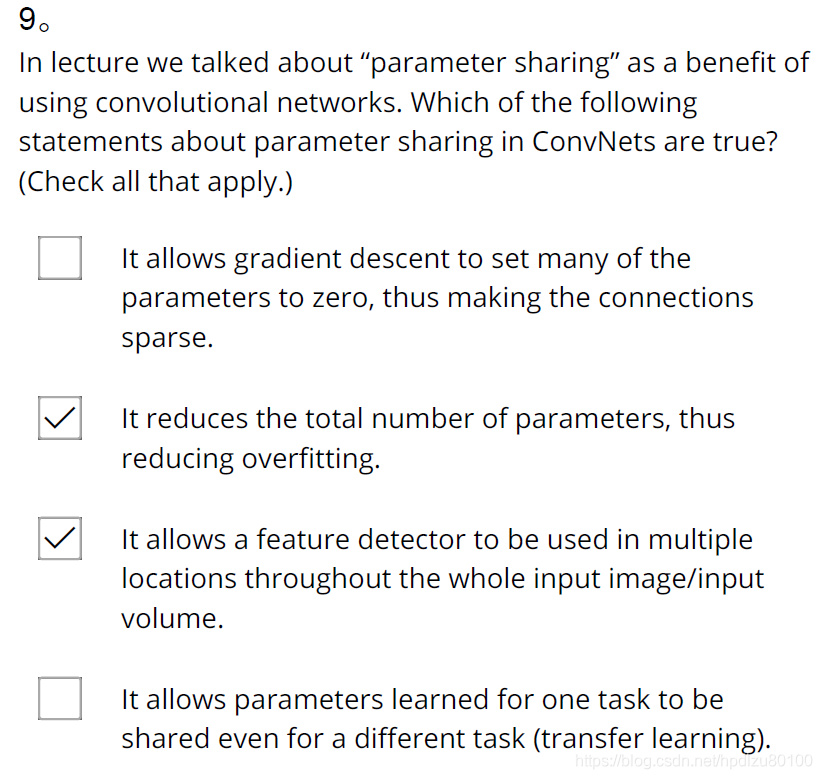

- In lecture we talked about “parameter sharing” as a benefit of using convolutional networks. Which of the following statements about parameter sharing in ConvNets are true? (Check all that apply.)

Ans: It reduces the total number of parameters, thus reducing overfitting.

It allows a feature detector to be used in multiple locations throughout the whole input image/input volume.
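A small 1D sketch can illustrate the second point: the same two weights detect a step wherever it occurs in the input. The helper `correlate_1d` and the toy signals are assumptions for illustration only.

```python
def correlate_1d(signal, kernel):
    """Slide one shared kernel across the signal (no padding, stride 1)."""
    k = len(kernel)
    return [
        sum(signal[i + j] * kernel[j] for j in range(k))
        for i in range(len(signal) - k + 1)
    ]


EDGE_KERNEL = [1, -1]  # two shared weights, reused at every position

early_step = [5, 5, 0, 0, 0, 0]
late_step = [5, 5, 5, 5, 0, 0]

early_response = correlate_1d(early_step, EDGE_KERNEL)
late_response = correlate_1d(late_step, EDGE_KERNEL)
print(early_response)  # [0, 5, 0, 0, 0]
print(late_response)   # [0, 0, 0, 5, 0]
```

The response moves with the step, while the number of parameters stays at two regardless of the signal length.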

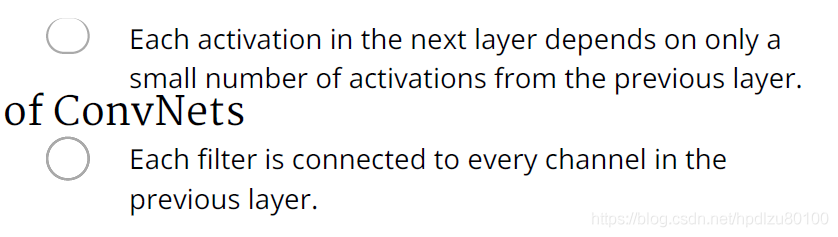

- In lecture we talked about “sparsity of connections” as a benefit of using convolutional layers. What does this mean?

Ans: Each activation in the next layer depends on only a small number of activations from the previous layer.
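As a rough illustration, the sketch below lists which input positions a single output activation depends on for a 3x3 filter over a 6x6 input. The function `receptive_field` and the chosen sizes are assumptions, not taken from the quiz.

```python
def receptive_field(out_row, out_col, f, stride=1):
    """Input (row, col) positions that feed one output activation."""
    return {
        (out_row * stride + di, out_col * stride + dj)
        for di in range(f)
        for dj in range(f)
    }


field = receptive_field(0, 0, f=3)
total_inputs = 6 * 6
print(len(field), total_inputs)  # 9 36
```

In a fully connected layer the same output would depend on all 36 inputs; here it depends on only 9 of them.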