引言

TensorFlow 是 Google 基于 DistBelief 进行研发的第二代人工智能学习系统,被广泛用于语音识别或图像识别等多项机器深度学习领域。其命名来源于本身的运行原理。Tensor(张量)意味着 N 维数组,Flow(流)意味着基于数据流图的计算,TensorFlow 代表着张量从图象的一端流动到另一端计算过程,是将复杂的数据结构传输至人工智能神经网中进行分析和处理的过程。

TensorFlow 完全开源,任何人都可以使用。可在小到一部智能手机、大到数千台数据中心服务器的各种设备上运行。

『机器学习进阶笔记』系列将深入解析 TensorFlow 系统的技术实践,从零开始,由浅入深,与大家一起走上机器学习的进阶之路。

今天的文章是关于 VGG 和 Deep Residual 的两篇 Paper 解读。

VGGnet

VGG 解读

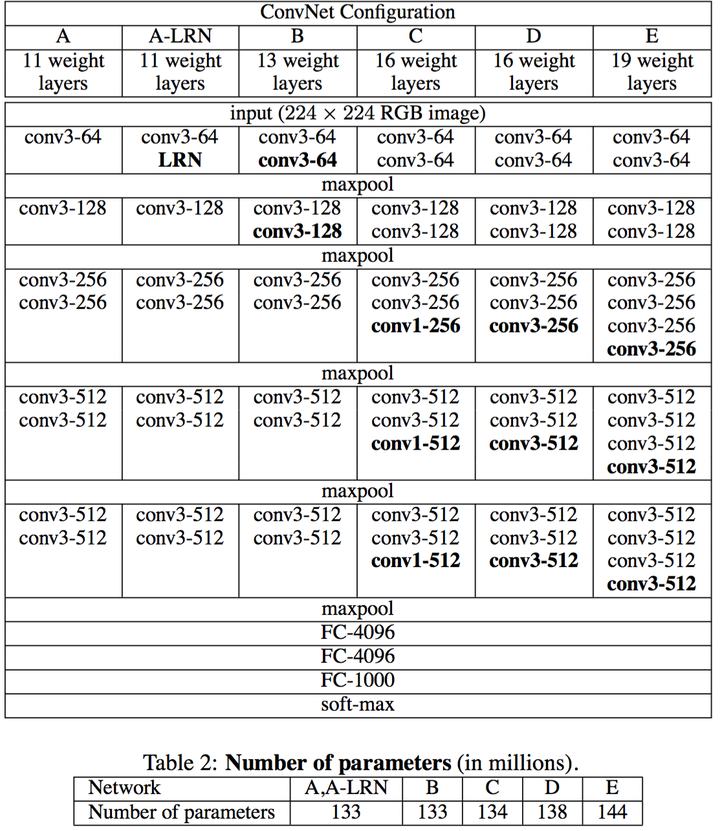

VGGnet 是 Oxford 的 Visual Geometry Group 的 team,在 ILSVRC 2014 上的相关工作,主要工作是证明了增加网络的深度能够在一定程度上影响网络最终的性能,如下图,文章通过逐步增加网络深度来提高性能,虽然看起来有一点小暴力,没有特别多取巧的,但是确实有效,很多 pretrained 的方法就是使用 VGG 的 model(主要是 16 和 19),VGG 相对其他的方法,参数空间很大,最终的 model 有 500 多 m,alnext 只有 200m,googlenet 更少,所以 train 一个 vgg 模型通常要花费更长的时间,所幸有公开的 pretrained model 让我们很方便的使用,前面 neural style 这篇文章就使用的 pretrained 的 model,paper 中的几种模型如下:

可以从图中看出,从 A 到最后的 E,他们增加的是每一个卷积组中的卷积层数,最后 D,E 是我们常见的 VGG-16,VGG-19 模型,C 中作者说明,在引入 1*1 是考虑做线性变换(这里 channel 一致, 不做降维),后面在最终数据的分析上来看 C 相对于 B 确实有一定程度的提升,但不如 D、VGG 主要得优势在于

- 减少参数的措施,对于一组(假定 3 个,paper 里面只 stack of three 33)卷积相对于 77 在使用 3 层的非线性关系(3 层 RELU)的同时保证参数数量为 3(32)=27C^2 的,而 77 为 49C^2,参数约为 7*7 的 81%。

- 去掉了 LRN,减少了内存的小消耗和计算时间

VGG-16 tflearn 实现

tflearn 官方 github 上有给出基于 tflearn 下的 VGG-16 的实现

from future import division, print_function, absolute_import

import tflearnfrom tflearn.layers.core import input_data, dropout, fully_connectedfrom tflearn.layers.conv import conv_2d, max_pool_2dfrom tflearn.layers.estimator import regression# Data loading and preprocessingimport tflearn.datasets.oxflower17 as oxflower17X, Y = oxflower17.load_data(one_hot=True)# Building 'VGG Network'network = input_data(shape=[None, 224, 224, 3])network = conv_2d(network, 64, 3, activation='relu')network = conv_2d(network, 64, 3, activation='relu')network = max_pool_2d(network, 2, strides=2)network = conv_2d(network, 128, 3, activation='relu')network = conv_2d(network, 128, 3, activation='relu')network = max_pool_2d(network, 2, strides=2)network = conv_2d(network, 256, 3, activation='relu')network = conv_2d(network, 256, 3, activation='relu')network = conv_2d(network, 256, 3, activation='relu')network = max_pool_2d(network, 2, strides=2)network = conv_2d(network, 512, 3, activation='relu')network = conv_2d(network, 512, 3, activation='relu')network = conv_2d(network, 512, 3, activation='relu')network = max_pool_2d(network, 2, strides=2)network = conv_2d(network, 512, 3, activation='relu')network = conv_2d(network, 512, 3, activation='relu')network = conv_2d(network, 512, 3, activation='relu')network = max_pool_2d(network, 2, strides=2)network = fully_connected(network, 4096, activation='relu')network = dropout(network, 0.5)network = fully_connected(network, 4096, activation='relu')network = dropout(network, 0.5)network = fully_connected(network, 17, activation='softmax')network = regression(network, optimizer='rmsprop',loss='categorical_crossentropy',learning_rate=0.001)# Trainingmodel = tflearn.DNN(network, checkpoint_path='model_vgg',max_checkpoints=1, tensorboard_verbose=0)model.fit(X, Y, n_epoch=500, shuffle=True,show_metric=True, batch_size=32, snapshot_step=500,snapshot_epoch=False, run_id='vgg_oxflowers17')

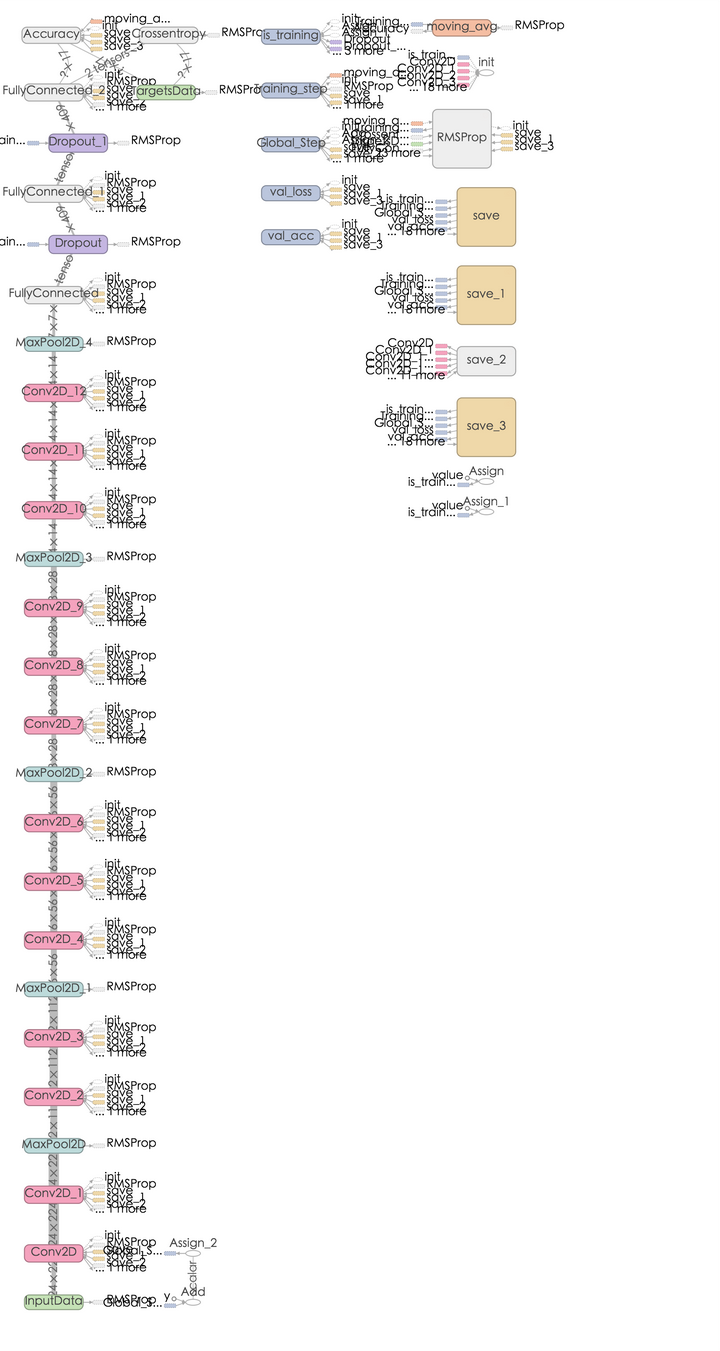

VGG-16 graph 如下:

对 VGG,我个人觉得他的亮点不多,pre-trained 的 model 我们可以很好的使用,但是不如 GoogLeNet 那样让我有眼前一亮的感觉。

Deep Residual Network

Deep Residual Network 解读

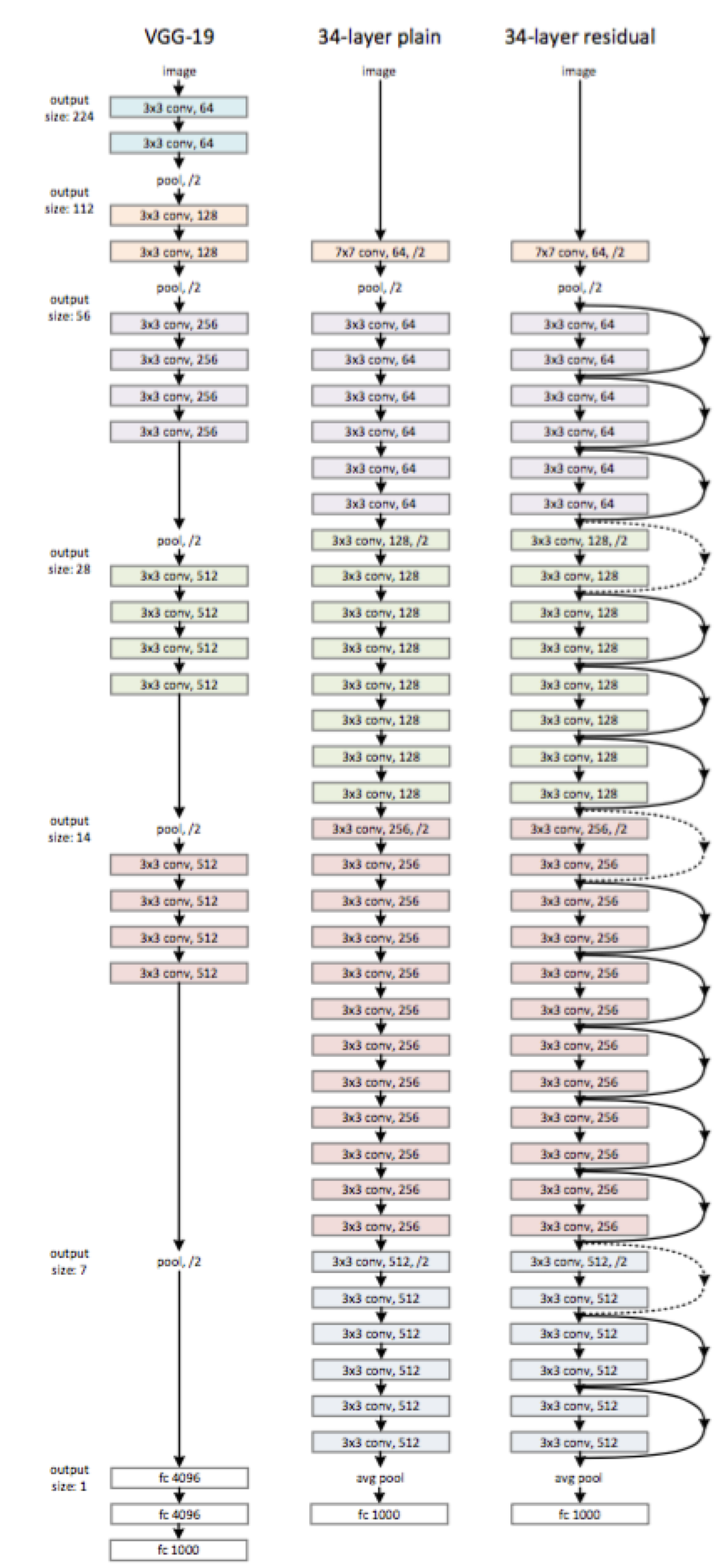

一般来说越深的网络,越难被训练,Deep Residual Learning for Image Recognition中提出一种 residual learning 的框架,能够大大简化模型网络的训练时间,使得在可接受时间内,模型能够更深 (152 甚至尝试了 1000),该方法在 ILSVRC2015 上取得最好的成绩。

随着模型深度的增加,会产生以下问题:

- vanishing/exploding gradient,导致了训练十分难收敛,这类问题能够通过 norimalized initialization 和 intermediate normalization layers 解决;

- 对合适的额深度模型再次增加层数,模型准确率会迅速下滑(不是 overfit 造成),training error 和 test error 都会很高,相应的现象在 CIFAR-10 和 ImageNet 都有提及

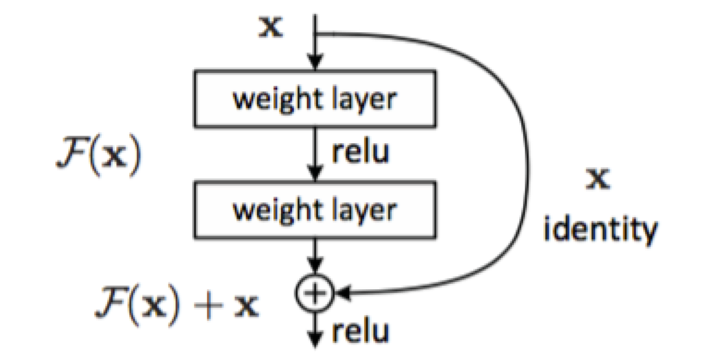

为了解决因深度增加而产生的性能下降问题,作者提出下面一种结构来做 residual learning:

假设潜在映射为 H(x),使 stacked nonlinear layers 去拟合 F(x):=H(x)-x,残差优化比优化 H(x) 更容易。

F(x)+x 能够很容易通过”shortcut connections” 来实现。

这篇文章主要得改善就是对传统的卷积模型增加 residual learning,通过残差优化来找到近似最优 identity mappings。

paper 当中的一个网络结构:

Deep Residual Network tflearn 实现

tflearn 官方有一个 cifar10 的实现, 代码如下:

from __future__ import division, print_function, absolute_importimport tflearn# Residual blocks# 32 layers: n=5, 56 layers: n=9, 110 layers: n=18n = 5# Data loadingfrom tflearn.datasets import cifar10(X, Y), (testX, testY) = cifar10.load_data()Y = tflearn.data_utils.to_categorical(Y, 10)testY = tflearn.data_utils.to_categorical(testY, 10)# Real-time data preprocessingimg_prep = tflearn.ImagePreprocessing()img_prep.add_featurewise_zero_center(per_channel=True)# Real-time data augmentationimg_aug = tflearn.ImageAugmentation()img_aug.add_random_flip_leftright()img_aug.add_random_crop([32, 32], padding=4)# Building Residual Networknet = tflearn.input_data(shape=[None, 32, 32, 3],data_preprocessing=img_prep,data_augmentation=img_aug)net = tflearn.conv_2d(net, 16, 3, regularizer='L2', weight_decay=0.0001)net = tflearn.residual_block(net, n, 16)net = tflearn.residual_block(net, 1, 32, downsample=True)net = tflearn.residual_block(net, n-1, 32)net = tflearn.residual_block(net, 1, 64, downsample=True)net = tflearn.residual_block(net, n-1, 64)net = tflearn.batch_normalization(net)net = tflearn.activation(net, 'relu')net = tflearn.global_avg_pool(net)# Regressionnet = tflearn.fully_connected(net, 10, activation='softmax')mom = tflearn.Momentum(0.1, lr_decay=0.1, decay_step=32000, staircase=True)net = tflearn.regression(net, optimizer=mom,loss='categorical_crossentropy')# Trainingmodel = tflearn.DNN(net, checkpoint_path='model_resnet_cifar10',max_checkpoints=10, tensorboard_verbose=0,clip_gradients=0.)model.fit(X, Y, n_epoch=200, validation_set=(testX, testY),snapshot_epoch=False, snapshot_step=500,show_metric=True, batch_size=128, shuffle=True,run_id='resnet_cifar10')

其中,residual_block 实现了 shortcut,代码写的十分棒:

def residual_block(incoming, nb_blocks, out_channels, downsample=False,downsample_strides=2, activation='relu', batch_norm=True,bias=True, weights_init='variance_scaling',bias_init='zeros', regularizer='L2', weight_decay=0.0001,trainable=True, restore=True, reuse=False, scope=None,name="ResidualBlock"):""" Residual Block.A residual block as described in MSRA's Deep Residual Network paper.Full pre-activation architecture is used here.Input:4-D Tensor [batch, height, width, in_channels].Output:4-D Tensor [batch, new height, new width, nb_filter].Arguments:incoming: `Tensor`. Incoming 4-D Layer.nb_blocks: `int`. Number of layer blocks.out_channels: `int`. The number of convolutional filters of theconvolution layers.downsample: `bool`. If True, apply downsampling using'downsample_strides' for strides.downsample_strides: `int`. The strides to use when downsampling.activation: `str` (name) or `function` (returning a `Tensor`).Activation applied to this layer (see tflearn.activations).Default: 'linear'.batch_norm: `bool`. If True, apply batch normalization.bias: `bool`. If True, a bias is used.weights_init: `str` (name) or `Tensor`. Weights initialization.(see tflearn.initializations) Default: 'uniform_scaling'.bias_init: `str` (name) or `tf.Tensor`. Bias initialization.(see tflearn.initializations) Default: 'zeros'.regularizer: `str` (name) or `Tensor`. Add a regularizer to thislayer weights (see tflearn.regularizers). Default: None.weight_decay: `float`. Regularizer decay parameter. Default: 0.001.trainable: `bool`. If True, weights will be trainable.restore: `bool`. If True, this layer weights will be restored whenloading a model.reuse: `bool`. If True and 'scope' is provided, this layer variableswill be reused (shared).scope: `str`. Define this layer scope (optional). A scope can beused to share variables between layers. Note that scope willoverride name.name: A name for this layer (optional). Default: 'ShallowBottleneck'.References:- Deep Residual Learning for Image Recognition. Kaiming He, XiangyuZhang, Shaoqing Ren, Jian Sun. 2015.- Identity Mappings in Deep Residual Networks. Kaiming He, XiangyuZhang, Shaoqing Ren, Jian Sun. 2015.Links:- [http://arxiv.org/pdf/1512.03385v1.pdf]([http://arxiv.org/pdf/1512.03385v1.pdf](http://arxiv.org/pdf/1512.03385v1.pdf))- [Identity Mappings in Deep Residual Networks]([https://arxiv.org/pdf/1603.05027v2.pdf](https://arxiv.org/pdf/1603.05027v2.pdf))"""resnet = incomingin_channels = incoming.get_shape().as_list()[-1]with tf.variable_op_scope([incoming], scope, name, reuse=reuse) as scope:name = scope.name #TODOfor i in range(nb_blocks):identity = resnetif not downsample:downsample_strides = 1if batch_norm:resnet = tflearn.batch_normalization(resnet)resnet = tflearn.activation(resnet, activation)resnet = conv_2d(resnet, out_channels, 3,downsample_strides, 'same', 'linear',bias, weights_init, bias_init,regularizer, weight_decay, trainable,restore)if batch_norm:resnet = tflearn.batch_normalization(resnet)resnet = tflearn.activation(resnet, activation)resnet = conv_2d(resnet, out_channels, 3, 1, 'same','linear', bias, weights_init,bias_init, regularizer, weight_decay,trainable, restore)# Downsamplingif downsample_strides > 1:identity = tflearn.avg_pool_2d(identity, 1,downsample_strides)# Projection to new dimensionif in_channels != out_channels:ch = (out_channels - in_channels)//2identity = tf.pad(identity,[[0, 0], [0, 0], [0, 0], [ch, ch]])in_channels = out_channelsresnet = resnet + identityreturn resnet

Deep Residual Network tflearn 这个里面有一个 downsample, 我在 run 这段代码的时候出现一个 error,是 tensorflow 提示 kernel size 1 小于 stride,我看了好久, sample 确实要这样,莫非是 tensorflow 不支持 kernel 小于 stride 的情况?我这里往 tflearn 里提了个 issue issue-331

kaiming He 在新的 paper 里面提了 proposed Residualk Unit,相比于上面提到的采用 pre-activation 的理念,相对于原始的 residual unit 能够更容易的训练,并且得到更好的泛化能力。

总结

前面一段时间,大部分花在看 CV 模型上,研究其中的原理,从 AlexNet 到 deep residual network, 从大牛的 paper 里面学到了很多,接下来一段时间,我会去 github 找一些特别有意思的相关项目,可能会包括 GAN 等等的东西来玩玩,还有在 DL meetup 上听周昌大神说的那些 neural style 的各种升级版本,也许还有强化学习的一些框架以及好玩的东西。

——————

相关阅读推荐:

机器学习进阶笔记之二 | 深入理解 Neural Style

本文由『UCloud 内核与虚拟化研发团队』提供。

关于作者:

Burness( ), UCloud 平台研发中心深度学习研发工程师,tflearn Contributor & tensorflow Contributor,做过电商推荐、精准化营销相关算法工作,专注于分布式深度学习框架、计算机视觉算法研究,平时喜欢玩玩算法,研究研究开源的项目,偶尔也会去一些数据比赛打打酱油,生活中是个极客,对新技术、新技能痴迷。

你可以在 Github 上找到他:http://hacker.duanshishi.com/

「UCloud 机构号」将独家分享云计算领域的技术洞见、行业资讯以及一切你想知道的相关讯息。

欢迎提问 & 求关注 o(////▽////)q~