Hu, W., Cai, X., Hou, J., Yi, S., & Lin, Z. (AAAI 2020). GTC: Guided Training of CTC Towards Efficient and Accurate Scene Text Recognition. Retrieved from http://arxiv.org/abs/2002.01276

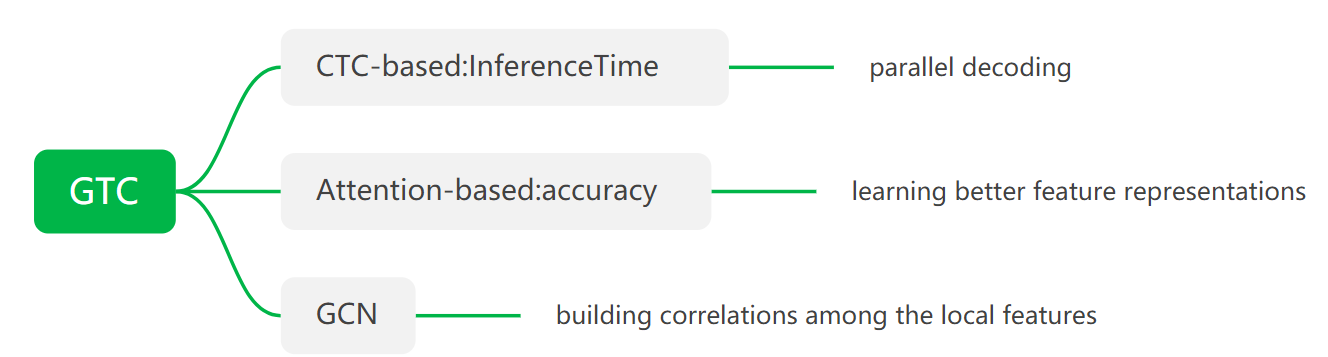

Abstract: The paper proposes guided training of CTC (GTC), in which the CTC model learns better alignment and feature representations from a more powerful attentional guidance. It is also presented as the first attempt to apply graphs to scene text recognition, building sequence correlations with a GCN.<br /> Keywords: Graph Convolutional Network (GCN), Connectionist Temporal Classification (CTC)

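In the paper, the authors argue that current recognition algorithms all have limitations in the trade-off between accuracy and inference time: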

- Attention-based methods do not decode in parallel, so inference is slow.

- CTC-based methods are prone to errors in feature alignment and feature representation, and are not optimized as well as attention-based methods. For example, for the label AB with 3 output time steps there are five valid alignments: ‘A-B’, ‘-AB’, ‘AB-’, ‘AAB’, or ‘ABB’.

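- CTC decoding involves blanks and repeated characters, so the authors argue that adjacent time steps carry supplementary features, and that looking at a single time step alone may be incomplete. For example, ‘H’ may be recognized as ‘I’ when only part of the character's features is considered.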
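The five alignments for the label AB can be checked by brute force. The sketch below is only an illustration of the standard CTC collapse rule (merge repeats, then drop blanks); the helper names `collapse` and `alignments` are made up for these notes and do not come from the paper.

```python
from itertools import product

BLANK = "-"  # symbol used for the CTC blank in these notes


def collapse(path):
    """Apply the CTC collapse rule: merge repeated symbols, then drop blanks."""
    out = []
    prev = None
    for ch in path:
        if ch != prev and ch != BLANK:
            out.append(ch)
        prev = ch
    return "".join(out)


def alignments(label, steps):
    """Enumerate every length-`steps` path that collapses to `label`."""
    alphabet = sorted(set(label)) + [BLANK]
    return sorted(
        "".join(p)
        for p in product(alphabet, repeat=steps)
        if collapse(p) == label
    )


paths = alignments("AB", 3)
print(paths)  # ['-AB', 'A-B', 'AAB', 'AB-', 'ABB']
```

Because CTC sums over all of these paths during training, nothing forces the model to prefer the alignment that actually matches where each character sits in the image, which is the ambiguity the authors point to.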

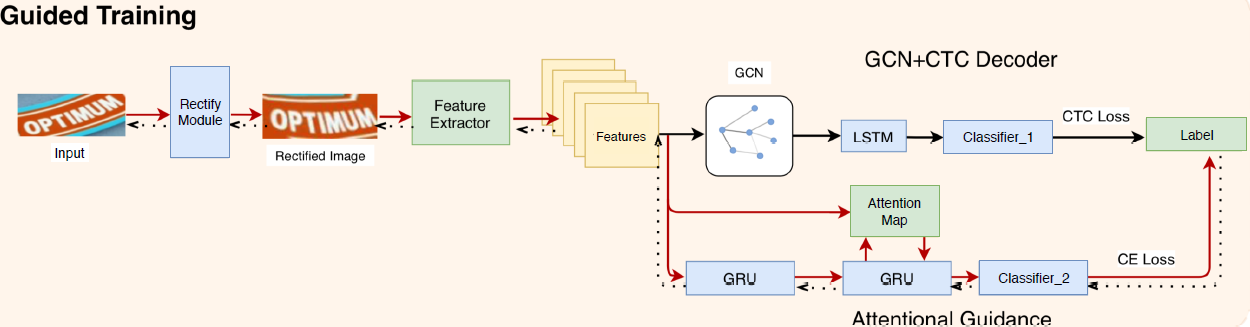

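Building on these observations, the authors propose the following scheme. During training, the attention-based cross-entropy loss optimizes the STN and the feature-extraction network, while the CTC loss optimizes the GCN and the CTC decoding part. At inference, the CTC decoder runs on top of the feature-extraction weights that were optimized by the attention branch. The authors also propose a GCN module that captures the dependencies among sequence slices and merges them, improving the model's robustness and accuracy.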
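To make the idea of merging dependent sequence slices concrete, here is a rough, weight-free sketch of one graph-aggregation step. Everything in it is an assumption chosen for illustration: the adjacency built from cosine similarity, the row normalisation, and the function name `aggregate_slices` are not taken from the paper, and the learned projection that a real GCN layer applies is omitted.

```python
import math


def cosine(u, v):
    """Cosine similarity of two vectors; 0.0 if either vector is all zeros."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def aggregate_slices(features):
    """Replace each slice by a similarity-weighted average of all slices."""
    n = len(features)
    # Assumed adjacency: non-negative cosine similarity between slice pairs.
    adj = [
        [max(0.0, cosine(features[i], features[j])) for j in range(n)]
        for i in range(n)
    ]
    out = []
    for i in range(n):
        total = sum(adj[i])
        if total:
            weights = [a / total for a in adj[i]]  # row-normalise
        else:
            weights = [1.0 if j == i else 0.0 for j in range(n)]
        dim = len(features[i])
        out.append(
            [sum(w * features[j][d] for j, w in enumerate(weights)) for d in range(dim)]
        )
    return out


# Three toy slices: the first two look alike, the third is different.
slices = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0]]
mixed = aggregate_slices(slices)
```

In this toy input the first slice borrows mostly from its similar neighbour and nothing from the dissimilar third slice, which is the kind of behaviour one would want when a character such as ‘H’ is spread over several adjacent time steps.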

The STN, ResNet-CNN and the attentional guidance are solely trained with cross entropy loss, while the GCN+CTC decoder is trained with CTC loss.
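One way to write this split down is shown here. The notation is an assumption of these notes rather than the paper's own: $\theta_f$ stands for the STN and ResNet-CNN parameters, $\theta_a$ for the attentional guidance, $\theta_g$ for the GCN+CTC decoder, $F = f(x;\theta_f)$ for the extracted feature sequence, $y$ for the label, and $\mathrm{sg}(\cdot)$ for a stop-gradient operator.

$$
\begin{aligned}
\mathcal{L}_{\mathrm{att}}(\theta_f, \theta_a) &= \mathrm{CE}\big(\mathrm{Att}(F;\theta_a),\, y\big), \\
\mathcal{L}_{\mathrm{CTC}}(\theta_g) &= \mathrm{CTC}\big(\mathrm{Dec}(\mathrm{sg}(F);\theta_g),\, y\big), \\
\frac{\partial \mathcal{L}_{\mathrm{CTC}}}{\partial \theta_f} &= 0 .
\end{aligned}
$$

Under this reading the CTC loss never reshapes the shared features, so the decoder used at inference inherits features shaped only by the attention branch. Whether the split is implemented with a stop-gradient or with separate optimizers is not stated in these notes.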

Implementation details (mainly the following two parts):

- Attentional Guidance

- GCN+CTC Decoder

- Conclusion