Planning and Preparation

The Consul cluster deployed here has 3 nodes, all of type server:

| Container IP | Node | Type |
|---|---|---|
| 172.17.0.2 | server1 | server |
| 172.17.0.3 | server2 | server |
| 172.17.0.4 | server3 | server |

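Mapping Consul's data files to the host makes backups easier and lets the containers be rebuilt later without losing data.

Create the directories server1, server2 and server3 on the host, one for each Consul node's files:

    [root@wuli-centOS7 ~]# mkdir -p /data/consul/server1/config
    [root@wuli-centOS7 ~]# mkdir -p /data/consul/server1/data
    ## Configuring a log directory causes a "permission denied" error at startup, so it is not used for now
    [root@wuli-centOS7 ~]# mkdir -p /data/consul/server1/log
    [root@wuli-centOS7 ~]# mkdir -p /data/consul/server2/config
    [root@wuli-centOS7 ~]# mkdir -p /data/consul/server2/data
    ## Configuring a log directory causes a "permission denied" error at startup, so it is not used for now
    [root@wuli-centOS7 ~]# mkdir -p /data/consul/server2/log
    [root@wuli-centOS7 ~]# mkdir -p /data/consul/server3/config
    [root@wuli-centOS7 ~]# mkdir -p /data/consul/server3/data
    ## Configuring a log directory causes a "permission denied" error at startup, so it is not used for now
    [root@wuli-centOS7 ~]# mkdir -p /data/consul/server3/log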
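The nine mkdir commands above follow one pattern, so they can be collapsed into a loop. The following is a minimal sketch; make_node_dirs is a hypothetical helper name introduced here, and the base directory is passed in as an argument so the function can be tried against a scratch directory first.

```shell
#!/usr/bin/env bash
# Create config/, data/ and log/ under <base>/<node> for every node given.
make_node_dirs() {
  local base="$1"
  shift
  local node sub
  for node in "$@"; do
    for sub in config data log; do
      mkdir -p "$base/$node/$sub"
    done
  done
}

# Usage on the host (needs write access to /data):
#   make_node_dirs /data/consul server1 server2 server3
```

Keeping the base path as a parameter also makes it easy to add a fourth node later without repeating the three commands by hand.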

Building the Consul Cluster

Create the server1 config file and start the server1 node

- Create the config file for server1:

    [root@wuli-centOS7 ~]# vi /data/consul/server1/config/config.json

    {
      "datacenter": "dc1",
      "bootstrap_expect": 3,
      "data_dir": "/consul/data",
      "log_level": "INFO",
      "node_name": "consul_server_1",
      "client_addr": "0.0.0.0",
      "server": true,
      "ui": true,
      "enable_script_checks": true,
      "addresses": {
        "https": "0.0.0.0",
        "dns": "0.0.0.0"
      }
    }

Adding the parameter "log_file": "/consul/log/" causes a permission-denied error at startup, so it is left out until the cause has been investigated.

- Start node consul_server_1

    docker run -d -p 8510:8500 -v /data/consul/server1/data:/consul/data -v /data/consul/server1/config:/consul/config --privileged=true -e CONSUL_BIND_INTERFACE='eth0' --name=consul_server_1 consul:1.9.5 agent -config-dir=/consul/config

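Notes on the docker run command:

- Environment variable:

CONSUL_BIND_INTERFACE=eth0: when the container starts, Consul automatically binds to the IP address of the eth0 interface

- docker parameters:

-e: sets an environment variable inside the container (here CONSUL_BIND_INTERFACE)

-d: runs the container in detached (daemon) mode

-p: publishes a container port on the host (here host port 8510 maps to container port 8500)

--privileged: gives the container extended privileges on the host

--name: sets the container name

consul:1.9.5 agent: the image to run, followed by the Consul start command and its arguments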

After startup, because bootstrap_expect=3 is configured but only one server is running, the agent reports the error "No cluster leader":

    [root@wuli-centOS7 ~]# docker exec consul_server_1 consul monitor
    2020-05-03T18:01:40.453Z [ERROR] agent.anti_entropy: failed to sync remote state: error="No cluster leader"
    2020-05-03T18:01:57.802Z [ERROR] agent: Coordinate update error: error="No cluster leader"

Create the server2 config file and start the server2 node

- Create the config file for server2:

    [root@wuli-centOS7 ~]# vi /data/consul/server2/config/config.json

    {
      "datacenter": "dc1",
      "bootstrap_expect": 3,
      "data_dir": "/consul/data",
      "log_level": "INFO",
      "node_name": "consul_server_2",
      "client_addr": "0.0.0.0",
      "server": true,
      "ui": true,
      "enable_script_checks": true,
      "addresses": {
        "https": "0.0.0.0",
        "dns": "0.0.0.0"
      }
    }

As with server1, the "log_file": "/consul/log/" parameter is left out because it causes a permission-denied error at startup.

- Start node consul_server_2

    docker run -d -p 8520:8500 -v /data/consul/server2/data:/consul/data -v /data/consul/server2/config:/consul/config --privileged=true -e CONSUL_BIND_INTERFACE='eth0' --name=consul_server_2 consul:1.9.5 agent -config-dir=/consul/config

Create the server3 config file and start the server3 node

- Create the config file for server3:

    [root@wuli-centOS7 ~]# vi /data/consul/server3/config/config.json

    {
      "datacenter": "dc1",
      "bootstrap_expect": 3,
      "data_dir": "/consul/data",
      "log_level": "INFO",
      "node_name": "consul_server_3",
      "client_addr": "0.0.0.0",
      "server": true,
      "ui": true,
      "enable_script_checks": true,
      "addresses": {
        "https": "0.0.0.0",
        "dns": "0.0.0.0"
      }
    }

As with server1, the "log_file": "/consul/log/" parameter is left out because it causes a permission-denied error at startup.

- Start node consul_server_3

    docker run -d -p 8530:8500 -v /data/consul/server3/data:/consul/data -v /data/consul/server3/config:/consul/config --privileged=true -e CONSUL_BIND_INTERFACE='eth0' --name=consul_server_3 consul:1.9.5 agent -config-dir=/consul/config
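The three config.json files above differ only in node_name, so they can be generated from one template instead of being edited by hand three times. This is a sketch under that assumption; write_server_config is a hypothetical helper name, and the JSON body is copied from the files shown above.

```shell
#!/usr/bin/env bash
# Write a server config.json into <config_dir>, varying only node_name.
write_server_config() {
  local config_dir="$1" node_name="$2"
  mkdir -p "$config_dir"
  cat > "$config_dir/config.json" <<EOF
{
  "datacenter": "dc1",
  "bootstrap_expect": 3,
  "data_dir": "/consul/data",
  "log_level": "INFO",
  "node_name": "$node_name",
  "client_addr": "0.0.0.0",
  "server": true,
  "ui": true,
  "enable_script_checks": true,
  "addresses": {
    "https": "0.0.0.0",
    "dns": "0.0.0.0"
  }
}
EOF
}

# Usage on the host:
#   for i in 1 2 3; do
#     write_server_config "/data/consul/server$i/config" "consul_server_$i"
#   done
```

Generating the files from one template keeps the copies from drifting apart, for example a node_name left over from another server after copy and paste.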

Joining the cluster

- Look up the IPs of server2 and server3, then use the join command to add all nodes to the cluster

    [root@wuli-centOS7 ~]# docker inspect --format '{{ .NetworkSettings.IPAddress }}' consul_server_2
    172.17.0.3
    [root@wuli-centOS7 ~]# docker inspect --format '{{ .NetworkSettings.IPAddress }}' consul_server_3
    172.17.0.4

    [root@wuli-centOS7 ~]# docker exec consul_server_1 consul join 172.17.0.3
    [root@wuli-centOS7 ~]# docker exec consul_server_1 consul join 172.17.0.4

- Check the agent log:

    [root@wuli-centOS7 ~]# docker exec consul_server_1 consul monitor
    2020-05-03T19:04:39.254Z [INFO] agent: (LAN) joining: lan_addresses=[172.17.0.3]
    2020-05-03T19:04:39.260Z [INFO] agent.server.serf.lan: serf: EventMemberJoin: consul_server_2 172.17.0.3
    2020-05-03T19:04:39.261Z [INFO] agent: (LAN) joined: number_of_nodes=1
    2020-05-03T19:04:39.261Z [INFO] agent.server: Adding LAN server: server="consul_server_2 (Addr: tcp/172.17.0.3:8300) (DC: dc1)"
    2020-05-03T19:04:39.265Z [INFO] agent.server.serf.wan: serf: EventMemberJoin: consul_server_2.dc1 172.17.0.3
    2020-05-03T19:04:39.266Z [INFO] agent.server: Handled event for server in area: event=member-join server=consul_server_2.dc1 area=wan
    2020-05-03T19:04:40.362Z [ERROR] agent: Coordinate update error: error="No cluster leader"
    2020-05-03T19:04:44.019Z [INFO] agent: (LAN) joining: lan_addresses=[172.17.0.4]
    2020-05-03T19:04:44.021Z [INFO] agent.server.serf.lan: serf: EventMemberJoin: consul_server_3 172.17.0.4
    2020-05-03T19:04:44.021Z [INFO] agent: (LAN) joined: number_of_nodes=1
    2020-05-03T19:04:44.022Z [INFO] agent.server: Adding LAN server: server="consul_server_3 (Addr: tcp/172.17.0.4:8300) (DC: dc1)"
    2020-05-03T19:04:44.027Z [INFO] agent.server: Found expected number of peers, attempting bootstrap: peers=172.17.0.2:8300,172.17.0.3:8300,172.17.0.4:8300
    2020-05-03T19:04:44.049Z [INFO] agent.server.serf.wan: serf: EventMemberJoin: consul_server_3.dc1 172.17.0.4
    2020-05-03T19:04:44.049Z [INFO] agent.server: Handled event for server in area: event=member-join server=consul_server_3.dc1 area=wan
    2020-05-03T19:04:48.088Z [WARN] agent.server.raft: heartbeat timeout reached, starting election: last-leader=
    2020-05-03T19:04:48.088Z [INFO] agent.server.raft: entering candidate state: node="Node at 172.17.0.2:8300 [Candidate]" term=2
    2020-05-03T19:04:48.100Z [INFO] agent.server.raft: election won: tally=2
    2020-05-03T19:04:48.101Z [INFO] agent.server.raft: entering leader state: leader="Node at 172.17.0.2:8300 [Leader]"
    2020-05-03T19:04:48.101Z [INFO] agent.server.raft: added peer, starting replication: peer=78293668-16a6-1de0-673f-455d594e7447
    2020-05-03T19:04:48.101Z [INFO] agent.server.raft: added peer, starting replication: peer=0b6169d8-7acc-ed24-682f-56ffd12b486c
    2020-05-03T19:04:48.102Z [INFO] agent.server: cluster leadership acquired
    2020-05-03T19:04:48.103Z [INFO] agent.server: New leader elected: payload=consul_server_1
    2020-05-03T19:04:48.104Z [WARN] agent.server.raft: appendEntries rejected, sending older logs: peer="{Voter 78293668-16a6-1de0-673f-455d594e7447 172.17.0.3:8300}" next=1
    2020-05-03T19:04:48.107Z [INFO] agent.server.raft: pipelining replication: peer="{Voter 0b6169d8-7acc-ed24-682f-56ffd12b486c 172.17.0.4:8300}"
    2020-05-03T19:04:48.112Z [INFO] agent.server.raft: pipelining replication: peer="{Voter 78293668-16a6-1de0-673f-455d594e7447 172.17.0.3:8300}"
    2020-05-03T19:04:48.120Z [INFO] agent.server: Cannot upgrade to new ACLs: leaderMode=0 mode=0 found=true leader=172.17.0.2:8300
    2020-05-03T19:04:48.129Z [INFO] agent.leader: started routine: routine="CA root pruning"
    2020-05-03T19:04:48.129Z [INFO] agent.server: member joined, marking health alive: member=consul_server_1
    2020-05-03T19:04:48.146Z [INFO] agent.server: member joined, marking health alive: member=consul_server_2
    2020-05-03T19:04:48.156Z [INFO] agent.server: member joined, marking health alive: member=consul_server_3
    2020-05-03T19:04:48.720Z [INFO] agent: Synced node info

- List the members

    [root@wuli-centOS7 ~]# docker exec consul_server_1 consul members
    Node             Address          Status  Type    Build  Protocol  DC   Segment
    consul_server_1  172.17.0.2:8301  alive   server  1.7.2  2         dc1  <all>
    consul_server_2  172.17.0.3:8301  alive   server  1.7.2  2         dc1  <all>
    consul_server_3  172.17.0.4:8301  alive   server  1.7.2  2         dc1  <all>

- Check the election result, including which node is the leader

    [root@wuli-centOS7 ~]# docker exec consul_server_1 consul operator raft list-peers
    Node             ID                                    Address          State     Voter  RaftProtocol
    consul_server_1  0214e0b9-04fe-e8ad-c855-b5091dfc8e2e  172.17.0.2:8300  leader    true   3
    consul_server_2  78293668-16a6-1de0-673f-455d594e7447  172.17.0.3:8300  follower  true   3
    consul_server_3  0b6169d8-7acc-ed24-682f-56ffd12b486c  172.17.0.4:8300  follower  true   3

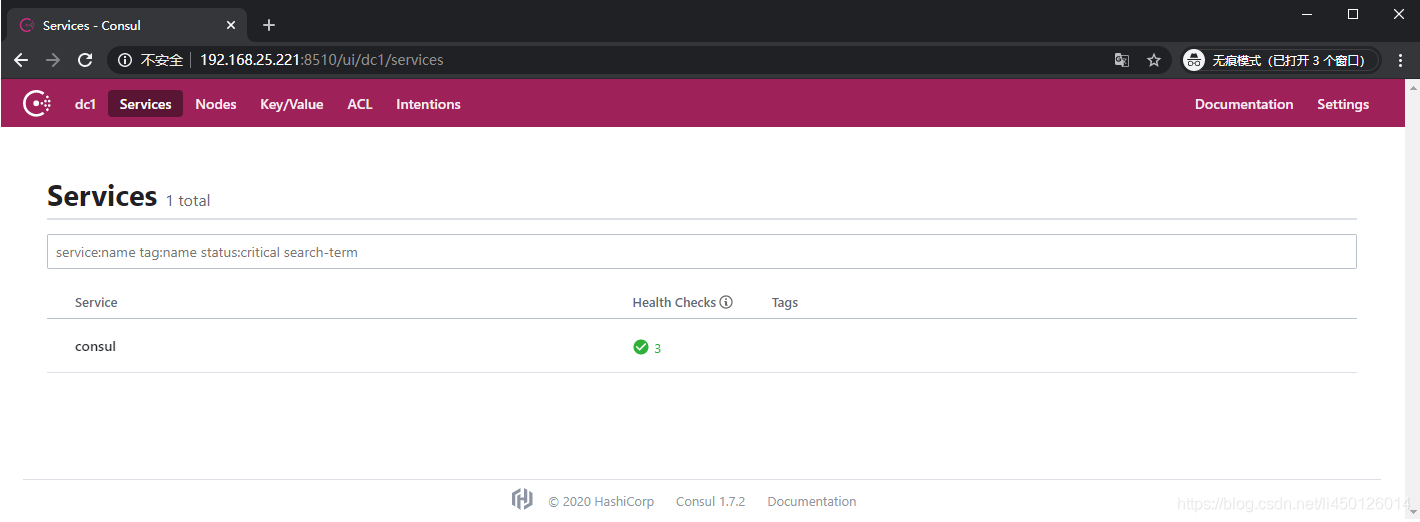

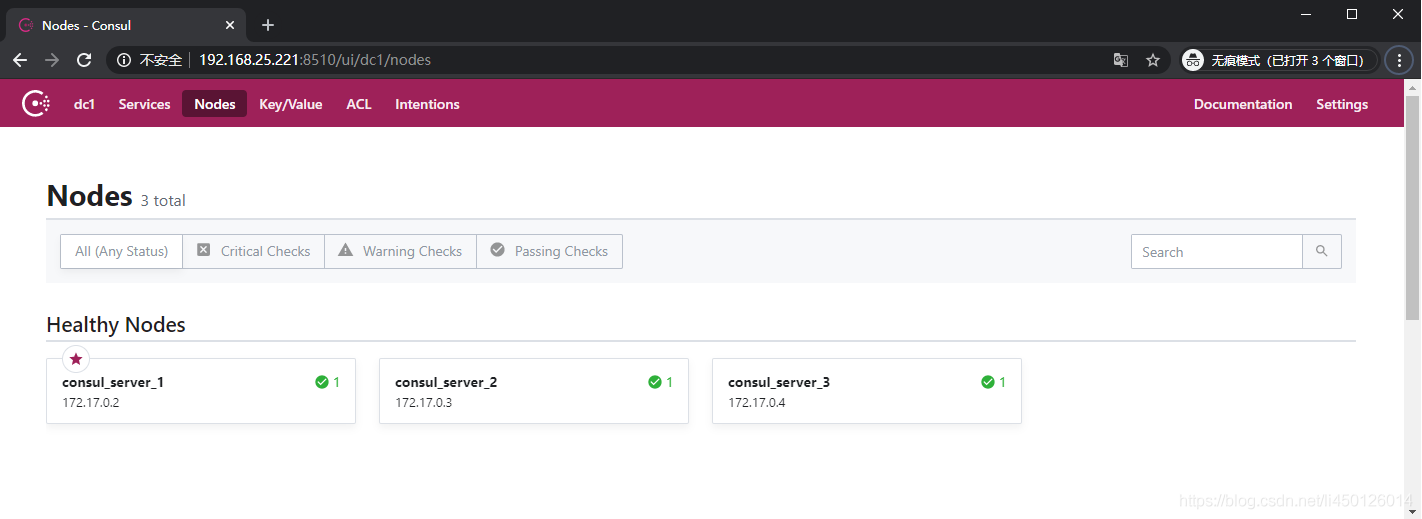

- Open the UI: all nodes are healthy, and the node marked with a star is the leader
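When the leader has to be picked out in a script rather than read off the UI, the list-peers output can be filtered by its State column. The sketch below assumes the column order shown in this article (Node, ID, Address, State, Voter, RaftProtocol); raft_leader is a hypothetical helper name, and the sample input is copied from the output above.

```shell
#!/usr/bin/env bash
# Print the node name of the Raft leader from `consul operator raft list-peers`
# output read on stdin. Assumes State is the fourth whitespace-separated column.
raft_leader() {
  awk '$4 == "leader" { print $1 }'
}

# Sample input copied from the output shown in this article.
sample_peers='Node             ID                                    Address          State     Voter  RaftProtocol
consul_server_1  0214e0b9-04fe-e8ad-c855-b5091dfc8e2e  172.17.0.2:8300  leader    true   3
consul_server_2  78293668-16a6-1de0-673f-455d594e7447  172.17.0.3:8300  follower  true   3
consul_server_3  0b6169d8-7acc-ed24-682f-56ffd12b486c  172.17.0.4:8300  follower  true   3'

printf '%s\n' "$sample_peers" | raft_leader   # prints consul_server_1
```

Against the running cluster the same filter would be fed from the real command, for example docker exec consul_server_1 consul operator raft list-peers piped into raft_leader.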

Verifying the Consul Cluster Election Mechanism

In Consul only server nodes take part in the Raft algorithm and belong to the peer set. A Raft node is always in one of three states: follower, candidate or leader. server1 is currently the leader, so we restart the consul_server_1 container below and watch how the cluster reacts.

- Restart consul_server_1

    [root@wuli-centOS7 ~]# docker restart consul_server_1

- Watch the logs of server2 and server3. The consul_server_2 log:

    [root@wuli-centOS7 ~]# docker exec consul_server_2 consul monitor
    2020-05-03T19:26:29.564Z [INFO] agent.server.memberlist.lan: memberlist: Suspect consul_server_1 has failed, no acks received
    2020-05-03T19:26:30.533Z [INFO] agent.server.serf.wan: serf: EventMemberUpdate: consul_server_1.dc1
    2020-05-03T19:26:30.533Z [INFO] agent.server: Handled event for server in area: event=member-update server=consul_server_1.dc1 area=wan
    2020-05-03T19:26:30.564Z [INFO] agent.server.serf.lan: serf: EventMemberUpdate: consul_server_1
    2020-05-03T19:26:30.565Z [INFO] agent.server: Updating LAN server: server="consul_server_1 (Addr: tcp/172.17.0.2:8300) (DC: dc1)"
    2020-05-03T19:26:33.542Z [WARN] agent.server.raft: rejecting vote request since we have a leader: from=172.17.0.4:8300 leader=172.17.0.2:8300
    2020-05-03T19:26:33.565Z [INFO] agent.server: New leader elected: payload=consul_server_3

- The consul_server_3 log:

    [root@wuli-centOS7 ~]# docker exec consul_server_3 consul monitor
    2020-05-03T19:26:28.151Z [ERROR] agent.server.memberlist.lan: memberlist: Push/Pull with consul_server_1 failed: dial tcp 172.17.0.2:8301: connect: connection refused
    2020-05-03T19:26:30.235Z [INFO] agent.server.memberlist.lan: memberlist: Suspect consul_server_1 has failed, no acks received
    2020-05-03T19:26:30.420Z [ERROR] agent: Coordinate update error: error="rpc error making call: stream closed"
    2020-05-03T19:26:30.536Z [INFO] agent.server.serf.wan: serf: EventMemberUpdate: consul_server_1.dc1
    2020-05-03T19:26:30.536Z [INFO] agent.server: Handled event for server in area: event=member-update server=consul_server_1.dc1 area=wan
    2020-05-03T19:26:30.729Z [INFO] agent.server.serf.lan: serf: EventMemberUpdate: consul_server_1
    2020-05-03T19:26:30.729Z [INFO] agent.server: Updating LAN server: server="consul_server_1 (Addr: tcp/172.17.0.2:8300) (DC: dc1)"
    2020-05-03T19:26:32.234Z [WARN] agent.server.memberlist.wan: memberlist: Was able to connect to consul_server_1.dc1 but other probes failed, network may be misconfigured
    2020-05-03T19:26:33.536Z [WARN] agent.server.raft: heartbeat timeout reached, starting election: last-leader=172.17.0.2:8300
    2020-05-03T19:26:33.536Z [INFO] agent.server.raft: entering candidate state: node="Node at 172.17.0.4:8300 [Candidate]" term=3
    2020-05-03T19:26:33.546Z [INFO] agent.server.raft: election won: tally=2
    2020-05-03T19:26:33.546Z [INFO] agent.server.raft: entering leader state: leader="Node at 172.17.0.4:8300 [Leader]"
    2020-05-03T19:26:33.546Z [INFO] agent.server.raft: added peer, starting replication: peer=0214e0b9-04fe-e8ad-c855-b5091dfc8e2e
    2020-05-03T19:26:33.546Z [INFO] agent.server.raft: added peer, starting replication: peer=78293668-16a6-1de0-673f-455d594e7447
    2020-05-03T19:26:33.547Z [INFO] agent.server: cluster leadership acquired
    2020-05-03T19:26:33.548Z [INFO] agent.server: New leader elected: payload=consul_server_3
    2020-05-03T19:26:33.550Z [INFO] agent.server.raft: pipelining replication: peer="{Voter 78293668-16a6-1de0-673f-455d594e7447 172.17.0.3:8300}"
    2020-05-03T19:26:33.551Z [INFO] agent.server.raft: pipelining replication: peer="{Voter 0214e0b9-04fe-e8ad-c855-b5091dfc8e2e 172.17.0.2:8300}"
    2020-05-03T19:26:33.554Z [INFO] agent.server: Cannot upgrade to new ACLs: leaderMode=0 mode=0 found=true leader=172.17.0.4:8300
    2020-05-03T19:26:33.555Z [INFO] agent.leader: started routine: routine="CA root pruning"

- After consul_server_1 has restarted, check the leader again. consul_server_3 has now been elected leader:

    [root@wuli-centOS7 ~]# docker exec -it consul_server_1 consul operator raft list-peers
    Node             ID                                    Address          State     Voter  RaftProtocol
    consul_server_3  0b6169d8-7acc-ed24-682f-56ffd12b486c  172.17.0.4:8300  leader    true   3
    consul_server_1  0214e0b9-04fe-e8ad-c855-b5091dfc8e2e  172.17.0.2:8300  follower  true   3
    consul_server_2  78293668-16a6-1de0-673f-455d594e7447  172.17.0.3:8300  follower  true   3
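Both election logs in this article report "election won: tally=2". Raft needs a majority of the voting servers, and with three voters a majority is two. The arithmetic can be sketched as follows; quorum and fault_tolerance are hypothetical helper names used only for this illustration.

```shell
#!/usr/bin/env bash
# Majority needed to elect a leader or commit a write among n voting servers.
quorum() {
  echo $(( $1 / 2 + 1 ))
}

# Number of voting servers that can fail while a majority still remains.
fault_tolerance() {
  echo $(( $1 - ($1 / 2 + 1) ))
}

for n in 1 3 5; do
  echo "servers=$n quorum=$(quorum "$n") tolerated_failures=$(fault_tolerance "$n")"
done
# prints:
# servers=1 quorum=1 tolerated_failures=0
# servers=3 quorum=2 tolerated_failures=1
# servers=5 quorum=3 tolerated_failures=2
```

This is why the three-server cluster above could elect consul_server_3 while consul_server_1 was restarting: the two remaining servers still formed a majority.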

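Configuring join Parameters So Nodes Join the Cluster Automatically

In a Consul cluster, a node that leaves gracefully does not affect the other nodes. The datacenter marks the departed node as left, and when the node rejoins the cluster its status returns to alive. By default the datacenter keeps the information of a departed node for 72 hours; if the node has not rejoined after 72 hours, its information is removed.

A server node leaves the cluster

The following shows what happens when a node leaves:

- consul_server_2 leaves gracefully

    [root@wuli-centOS7 ~]# docker exec consul_server_2 consul leave
    Graceful leave complete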

- Check the member status

    [root@wuli-centOS7 ~]# docker exec consul_server_1 consul members
    Node             Address          Status  Type    Build  Protocol  DC   Segment
    consul_server_1  172.17.0.2:8301  alive   server  1.7.2  2         dc1  <all>
    consul_server_2  172.17.0.3:8301  left    server  1.7.2  2         dc1  <all>
    consul_server_3  172.17.0.4:8301  alive   server  1.7.2  2         dc1  <all>

- Check the leader

    [root@wuli-centOS7 ~]# docker exec consul_server_1 consul operator raft list-peers
    Node             ID                                    Address          State     Voter  RaftProtocol
    consul_server_3  0b6169d8-7acc-ed24-682f-56ffd12b486c  172.17.0.4:8300  leader    true   3
    consul_server_1  0214e0b9-04fe-e8ad-c855-b5091dfc8e2e  172.17.0.2:8300  follower  true   3

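The logs show no errors and the UI works normally. This shows that bootstrap_expect=3 in the config file is only the number of servers expected when the cluster is first created. Until that number is reached the cluster is not bootstrapped, but once 3 servers are present and the cluster has been created, a server that later leaves gracefully does not affect cluster health. The cluster keeps running, and the node that left gracefully is marked "left".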

- After consul_server_2 is started again it does not rejoin the cluster automatically, because its config file has no start_join or retry_join parameter. It has to be joined with the consul join command, which can be issued from consul_server_2 or from any other server in the cluster:

    [root@wuli-centOS7 ~]# docker exec consul_server_2 consul join 172.17.0.2
    Successfully joined cluster by contacting 1 nodes.

Or:

    [root@wuli-centOS7 ~]# docker exec consul_server_1 consul join 172.17.0.3
    Successfully joined cluster by contacting 1 nodes.
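To confirm from a script that a node has moved between alive and left, the consul members output can be summarised by its Status column. This is a sketch that assumes Status is the third column, as in the output shown in this section; members_by_status is a hypothetical helper name and the sample input is the output captured after consul_server_2 left.

```shell
#!/usr/bin/env bash
# Count members per status from `consul members` output read on stdin.
# Skips the header line and assumes Status is the third column.
members_by_status() {
  awk 'NR > 1 { count[$3]++ } END { for (s in count) print s, count[s] }' | sort
}

# Sample input copied from this section.
sample_members='Node             Address          Status  Type    Build  Protocol  DC   Segment
consul_server_1  172.17.0.2:8301  alive   server  1.7.2  2         dc1  <all>
consul_server_2  172.17.0.3:8301  left    server  1.7.2  2         dc1  <all>
consul_server_3  172.17.0.4:8301  alive   server  1.7.2  2         dc1  <all>'

printf '%s\n' "$sample_members" | members_by_status
# prints:
# alive 2
# left 1
```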

Nodes join the cluster automatically

Add the start_join and retry_join parameters to every server's config file so that servers rejoin the cluster automatically after a restart.

- Look up the IP of each server:

    [root@wuli-centOS7 server4]# docker inspect --format '{{ .NetworkSettings.IPAddress }}' consul_server_1
    172.17.0.2
    [root@wuli-centOS7 server4]# docker inspect --format '{{ .NetworkSettings.IPAddress }}' consul_server_2
    172.17.0.3
    [root@wuli-centOS7 server4]# docker inspect --format '{{ .NetworkSettings.IPAddress }}' consul_server_3
    172.17.0.4

- Edit each server's config file in turn (server1 is shown here; the others differ only in node_name)

    [root@wuli-centOS7 ~]# vi /data/consul/server1/config/config.json

    {
      "datacenter": "dc1",
      "bootstrap_expect": 3,
      "data_dir": "/consul/data",
      "log_level": "INFO",
      "node_name": "consul_server_1",
      "client_addr": "0.0.0.0",
      "server": true,
      "ui": true,
      "enable_script_checks": true,
      "addresses": {
        "https": "0.0.0.0",
        "dns": "0.0.0.0"
      },
      "start_join": ["172.17.0.2", "172.17.0.3", "172.17.0.4"],
      "retry_join": ["172.17.0.2", "172.17.0.3", "172.17.0.4"]
    }

As before, the "log_file": "/consul/log/" parameter is left out because it causes a permission-denied error at startup.

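- Reload the config file to check that the configuration is valid

    [root@wuli-centOS7 ~]# docker exec consul_server_1 consul reload
    Configuration reload triggered

- With the configuration in place, a Consul server that is restarted, or that leaves gracefully and is started again, joins the cluster automatically without the join command.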
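Conceptually, retry_join keeps attempting the join until one of the listed addresses answers, which is what makes it robust when servers start in an arbitrary order. The loop below only illustrates that idea from the outside and is not how Consul implements it; retry is a hypothetical helper, and the attempt count and delay in the usage line are arbitrary example values.

```shell
#!/usr/bin/env bash
# Run a command until it succeeds, up to <max_attempts> times, sleeping
# <delay_seconds> between attempts. Returns 0 on success, 1 if all attempts fail.
retry() {
  local max_attempts="$1" delay_seconds="$2"
  shift 2
  local attempt=1
  while true; do
    if "$@"; then
      return 0
    fi
    if [ "$attempt" -ge "$max_attempts" ]; then
      return 1
    fi
    attempt=$((attempt + 1))
    sleep "$delay_seconds"
  done
}

# Usage against the cluster in this article (not executed here):
#   retry 10 30 docker exec consul_server_2 consul join 172.17.0.2
```

With retry_join in the config file this kind of wrapper is unnecessary; it is shown only to make the behaviour of the parameter concrete.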

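Adding a Gossip Encryption Key Between Nodes

Adding an encryption key prevents nodes that do not hold the key from joining the cluster. The steps are:

- Generate the key with the consul keygen command

    [root@wuli-centOS7 ~]# docker exec consul_server_1 consul keygen
    zVCGFgICqf5MAU61Wd/1wDP1hoQ37rQQFVvMkhzpM1c=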

- Write the key into the config files of all 3 servers

    [root@wuli-centOS7 ~]# vim /data/consul/server1/config/config.json

    {
      "datacenter": "dc1",
      "bootstrap_expect": 3,
      "data_dir": "/consul/data",
      "log_level": "INFO",
      "node_name": "consul_server_1",
      "client_addr": "0.0.0.0",
      "server": true,
      "ui": true,
      "enable_script_checks": true,
      "addresses": {
        "https": "0.0.0.0",
        "dns": "0.0.0.0"
      },
      "encrypt": "zVCGFgICqf5MAU61Wd/1wDP1hoQ37rQQFVvMkhzpM1c="
    }

As before, the "log_file": "/consul/log/" parameter is left out because it causes a permission-denied error at startup.

- Have Consul reload the config file

    [root@wuli-centOS7 ~]# docker exec consul_server_1 consul reload
    Configuration reload triggered

- In testing, other nodes could still join the cluster after the reload; Consul has to be restarted for the key to take effect

    [root@wuli-centOS7 ~]# docker restart consul_server_1
    consul_server_1
    [root@wuli-centOS7 ~]# docker restart consul_server_2
    consul_server_2
    [root@wuli-centOS7 ~]# docker restart consul_server_3
    consul_server_3

From now on, any new node that wants to join the cluster must provide the encrypt key.

- Create one more node, server4, and try to join the encrypted cluster:

5.1 Create the config file:

    [root@wuli-centOS7 ~]# vi /data/consul/server4/config/config.json

    {
      "datacenter": "dc1",
      "bootstrap_expect": 1,
      "data_dir": "/consul/data",
      "log_level": "INFO",
      "node_name": "consul_server_4",
      "client_addr": "0.0.0.0",
      "server": true,
      "ui": true,
      "enable_script_checks": true,
      "addresses": {
        "https": "0.0.0.0",
        "dns": "0.0.0.0"
      }
    }

As before, the "log_file": "/consul/log/" parameter is left out because it causes a permission-denied error at startup.

5.2 Start server4

    [root@wuli-centOS7 ~]# docker run -d -p 8540:8500 -v /data/consul/server4/data:/consul/data -v /data/consul/server4/config:/consul/config -e CONSUL_BIND_INTERFACE='eth0' --privileged=true --name=consul_server_4 consul agent -data-dir=/consul/data;
    cdf6edee727f92baf2f7d324cb9522644c851f81fe62356fb2fb9aad126eaf13

5.3 Try to join the cluster; this fails:

    [root@wuli-centOS7 ~]# docker exec consul_server_4 consul join 172.17.0.2
    Error joining address '172.17.0.2': Unexpected response code: 500 (1 error occurred:
    * Failed to join 172.17.0.2: Remote state is encrypted and encryption is not configured
    )
    Failed to join any nodes.

5.4 Add the encryption key to the config file

    [root@wuli-centOS7 ~]# vi /data/consul/server4/config/config.json

    {
      "datacenter": "dc1",
      "bootstrap_expect": 1,
      "data_dir": "/consul/data",
      "log_level": "INFO",
      "node_name": "consul_server_4",
      "client_addr": "0.0.0.0",
      "server": true,
      "ui": true,
      "enable_script_checks": true,
      "addresses": {
        "https": "0.0.0.0",
        "dns": "0.0.0.0"
      },
      "encrypt": "zVCGFgICqf5MAU61Wd/1wDP1hoQ37rQQFVvMkhzpM1c="
    }

As before, the "log_file": "/consul/log/" parameter is left out because it causes a permission-denied error at startup.

5.5 Reload the config file and try to join again. It still fails, which shows that adding the key only takes effect after Consul is restarted

    [root@wuli-centOS7 ~]# docker exec consul_server_4 consul reload
    Configuration reload triggered
    [root@wuli-centOS7 ~]# docker exec consul_server_4 consul join 172.17.0.2
    Error joining address '172.17.0.2': Unexpected response code: 500 (1 error occurred:
    * Failed to join 172.17.0.2: Remote state is encrypted and encryption is not configured
    )
    Failed to join any nodes.

5.6 Restart server4; joining the cluster now succeeds!

    [root@wuli-centOS7 server4]# docker restart consul_server_4
    consul_server_4
    [root@wuli-centOS7 server4]# docker exec consul_server_4 consul join 172.17.0.2
    Successfully joined cluster by contacting 1 nodes.
    [root@wuli-centOS7 server4]# docker exec consul_server_1 consul members
    Node             Address          Status  Type    Build  Protocol  DC   Segment
    consul_server_1  172.17.0.2:8301  alive   server  1.7.2  2         dc1  <all>
    consul_server_2  172.17.0.3:8301  alive   server  1.7.2  2         dc1  <all>
    consul_server_3  172.17.0.4:8301  alive   server  1.7.2  2         dc1  <all>
    consul_server_4  172.17.0.5:8301  alive   server  1.7.2  2         dc1  <all>
    [root@wuli-centOS7 server4]# docker exec consul_server_1 consul operator raft list-peers
    Node             ID                                    Address          State     Voter  RaftProtocol
    consul_server_3  0b6169d8-7acc-ed24-682f-56ffd12b486c  172.17.0.4:8300  leader    true   3
    consul_server_2  78293668-16a6-1de0-673f-455d594e7447  172.17.0.3:8300  follower  true   3
    consul_server_1  0214e0b9-04fe-e8ad-c855-b5091dfc8e2e  172.17.0.2:8300  follower  true   3
    consul_server_4  587e81c7-f1b5-5f19-311c-741c06ca446d  172.17.0.5:8300  follower  false  3
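Because the same key string has to be pasted into several config files, a quick sanity check on its length can catch a truncated copy before a restart. The sketch below decodes the key generated in this section and counts the raw bytes; gossip_key_bytes is a hypothetical helper name, and it assumes a base64 command that accepts -d (GNU coreutils does).

```shell
#!/usr/bin/env bash
# Print how many raw bytes a base64-encoded gossip key decodes to.
# Assumes a `base64` binary that accepts -d for decoding.
gossip_key_bytes() {
  printf '%s' "$1" | base64 -d | wc -c | tr -d ' '
}

# The key generated with `consul keygen` earlier in this section.
gossip_key_bytes 'zVCGFgICqf5MAU61Wd/1wDP1hoQ37rQQFVvMkhzpM1c='   # prints 32
```

The check only verifies the encoding and length; it says nothing about whether every server is configured with the same key.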

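Adding ACL Token Access Control

Configure the master token. The master token can be any string, but to keep it in the same format as other tokens the official recommendation is to use a UUID. Consul can load several config files, with either a json or an hcl extension; hcl is used for this demonstration.

Enable ACLs and configure the master token

Consul's ACL feature must be enabled explicitly, by setting acl.enabled=true in the config file.

Two ways of configuring ACLs are shown below; I recommend method 2.

Method 1: set acl.enabled=true, generate a token with the consul acl bootstrap command, and then use that token as the master token.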

- Add the config file acl.hcl:

    [root@wuli-centOS7 ~]# vim /data/consul/server1/config/acl.hcl

    primary_datacenter = "dc1"
    acl {
      enabled = true
      default_policy = "deny"
      enable_token_persistence = true
      tokens {
      }
    }

- Reload the config file and create the initial token; the generated SecretID is the token

    [root@wuli-centOS7 ~]# docker exec consul_server_1 consul reload
    Configuration reload triggered
    [root@wuli-centOS7 ~]# consul acl bootstrap
    AccessorID:   b6676320-dbef-4020-ae69-8a47ae13dcef
    SecretID:     474dcea7-ee4d-3f11-1af1-a38eb37d3f5d
    Description:  Bootstrap Token (Global Management)
    Local:        false
    Create Time:  2020-04-28 11:30:42.2992871 +0800 CST
    Policies:
       00000000-0000-0000-0000-000000000001 - global-management

- Edit acl.hcl and add the master token

    [root@wuli-centOS7 ~]# vi /data/consul/server1/config/acl.hcl

    primary_datacenter = "dc1"
    acl {
      enabled = true
      default_policy = "deny"
      enable_token_persistence = true
      tokens {
        master = "474dcea7-ee4d-3f11-1af1-a38eb37d3f5d"
      }
    }

- Restart the service and verify

Method 2:

- Use the Linux uuidgen command to generate a UUID to use as the master token

    [root@wuli-centOS7 ~]# uuidgen
    dcb93655-0661-4ea1-bfc4-e5744317f99e

- Write the acl.hcl file

    [root@wuli-centOS7 ~]# vi /data/consul/server1/config/acl.hcl

    primary_datacenter = "dc1"
    acl {
      enabled = true
      default_policy = "deny"
      enable_token_persistence = true
      tokens {
        master = "dcb93655-0661-4ea1-bfc4-e5744317f99e"
      }
    }

Edit config.json and change bootstrap_expect to 1

- Reload the config file to check that it is valid.

    [root@wuli-centOS7 ~]# docker exec consul_server_1 consul reload
    Configuration reload triggered

- Gracefully stop the other servers and restart the consul_server_1 container

    [root@wuli-centOS7 ~]# docker exec consul_server_2 consul leave
    [root@wuli-centOS7 ~]# docker exec consul_server_3 consul leave
    [root@wuli-centOS7 ~]# docker exec consul_server_4 consul leave
    [root@wuli-centOS7 ~]# docker restart consul_server_1

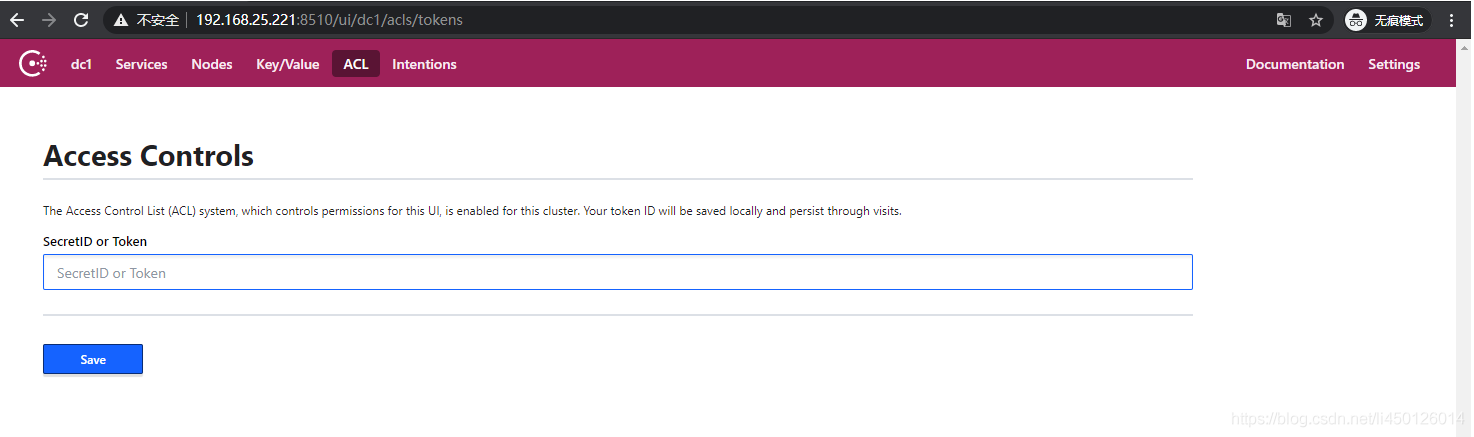

- Open the UI; it prompts for a token. Enter the master token configured above

- From now on most consul commands need the token, otherwise they fail:

    [root@wuli-centOS7 ~]# docker exec consul_server_1 consul info
    Error querying agent: Unexpected response code: 403 (Permission denied)

With the token parameter:

    [root@wuli-centOS7 ~]# docker exec consul_server_1 consul info -token=dcb93655-0661-4ea1-bfc4-e5744317f99e
    agent:
        check_monitors = 0
        check_ttls = 0
        checks = 0
        services = 0
    ……

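- When Consul is not running in Docker, the CONSUL_HTTP_TOKEN environment variable can be set instead of passing the token parameter with every command

    [root@wuli-centOS7 ~]# vi /etc/profile
    export CONSUL_HTTP_TOKEN=dcb93655-0661-4ea1-bfc4-e5744317f99e
    [root@wuli-centOS7 ~]# source /etc/profile

For a Consul container that is already running in Docker, I have not found a way to change its environment variables permanently. The old container can be removed with rm and a new one started with the environment variable added to the run command; that is not shown here.

List all environment variables of the consul_server_1 container:

    [root@wuli-centOS7 ~]# docker exec consul_server_1 env
    PATH=/usr/local/sbin:/usr/local/bin:/usr/sbin:/usr/bin:/sbin:/bin
    HOSTNAME=2c2c68c4058f
    CONSUL_BIND_INTERFACE=eth0
    CONSUL_VERSION=1.7.2
    HASHICORP_RELEASES=https://releases.hashicorp.com
    HOME=/root

CONSUL_BIND_INTERFACE in this list is the variable passed with -e in the docker run command earlier: it names the network interface whose IP address Consul binds to.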
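For the containerised case, one option that avoids recreating the container is to pass the variable for a single command with docker exec -e. The wrapper below is a sketch of that approach; consul_in is a hypothetical helper name, not part of Docker or Consul, and the token in the usage line is the master token from this article.

```shell
#!/usr/bin/env bash
# Run a consul subcommand inside a container, passing the ACL token through
# the CONSUL_HTTP_TOKEN environment variable for that one invocation only.
# consul_in is a hypothetical helper name introduced for this example.
consul_in() {
  local container="$1" token="$2"
  shift 2
  docker exec -e "CONSUL_HTTP_TOKEN=$token" "$container" consul "$@"
}

# Usage (not executed here):
#   consul_in consul_server_1 dcb93655-0661-4ea1-bfc4-e5744317f99e members
```

The variable set this way exists only for that one exec call, so the container's own environment, as listed by docker exec consul_server_1 env, is left unchanged.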

Configuring the agent token

Every agent in the cluster needs an agent token, which it uses for its own internal operations such as coordinate updates.

Without an agent token, the log shows the following warning:

    [root@wuli-centOS7 ~]# docker exec consul_server_1 consul monitor -token=dcb93655-0661-4ea1-bfc4-e5744317f99e
    2020-05-06T02:40:09.386Z [WARN] agent: Coordinate update blocked by ACLs: accessorID=

- Use the API to create a token with full permissions to serve as the agent token; in practice the agent token's permissions can be restricted as needed

    [root@wuli-centOS7 ~]# curl -X PUT \
    http://localhost:8510/v1/acl/create \
    -H 'X-Consul-Token: dcb93655-0661-4ea1-bfc4-e5744317f99e' \
    -d '{"Name": "dc1","Type": "management"}'
    {"ID":"7f587432-3650-9073-e3f4-445a2463b11f"}

- Write the generated token into the acl.hcl config file

    [root@wuli-centOS7 ~]# vim /data/consul/server1/config/acl.hcl

    primary_datacenter = "dc1"
    acl {
      enabled = true
      default_policy = "deny"
      enable_token_persistence = true
      tokens {
        master = "dcb93655-0661-4ea1-bfc4-e5744317f99e"
        agent = "7f587432-3650-9073-e3f4-445a2463b11f"
      }
    }

- Reload the config file; no restart of Consul or of the container is needed here

    [root@wuli-centOS7 ~]# docker exec consul_server_1 consul reload -token=dcb93655-0661-4ea1-bfc4-e5744317f99e
    Configuration reload triggered

- Check the log: after the reload there are no more warnings

    [root@wuli-centOS7 ~]# docker exec consul_server_1 consul monitor -token=dcb93655-0661-4ea1-bfc4-e5744317f99e
    2020-05-06T03:00:20.034Z [INFO] agent: Caught: signal=hangup
    2020-05-06T03:00:20.034Z [INFO] agent: Reloading configuration...

- The ACL configuration is identical across the cluster, so server1's acl.hcl can simply be copied into the config directories of the other servers

    [root@wuli-centOS7 config]# cp /data/consul/server1/config/acl.hcl /data/consul/server2/config/
    [root@wuli-centOS7 config]# cp /data/consul/server1/config/acl.hcl /data/consul/server3/config/

- Restart the other containers and check the cluster

    [root@wuli-centOS7 config]# docker restart consul_server_2
    consul_server_2
    [root@wuli-centOS7 config]# docker restart consul_server_3
    consul_server_3
    [root@wuli-centOS7 config]# docker exec consul_server_1 consul members -token=dcb93655-0661-4ea1-bfc4-e5744317f99e
    Node             Address          Status  Type    Build  Protocol  DC   Segment
    consul_server_1  172.17.0.2:8301  alive   server  1.7.2  2         dc1  <all>
    consul_server_2  172.17.0.3:8301  alive   server  1.7.2  2         dc1  <all>
    consul_server_3  172.17.0.4:8301  alive   server  1.7.2  2         dc1  <all>
    [root@wuli-centOS7 config]# docker exec consul_server_1 consul operator raft list-peers -token=dcb93655-0661-4ea1-bfc4-e5744317f99e
    Node             ID                                    Address          State     Voter  RaftProtocol
    consul_server_1  0214e0b9-04fe-e8ad-c855-b5091dfc8e2e  172.17.0.2:8300  leader    true   3
    consul_server_2  78293668-16a6-1de0-673f-455d594e7447  172.17.0.3:8300  follower  true   3
    consul_server_3  0b6169d8-7acc-ed24-682f-56ffd12b486c  172.17.0.4:8300  follower  true   3

As shown, the nodes join the cluster automatically because the join parameters were configured earlier.

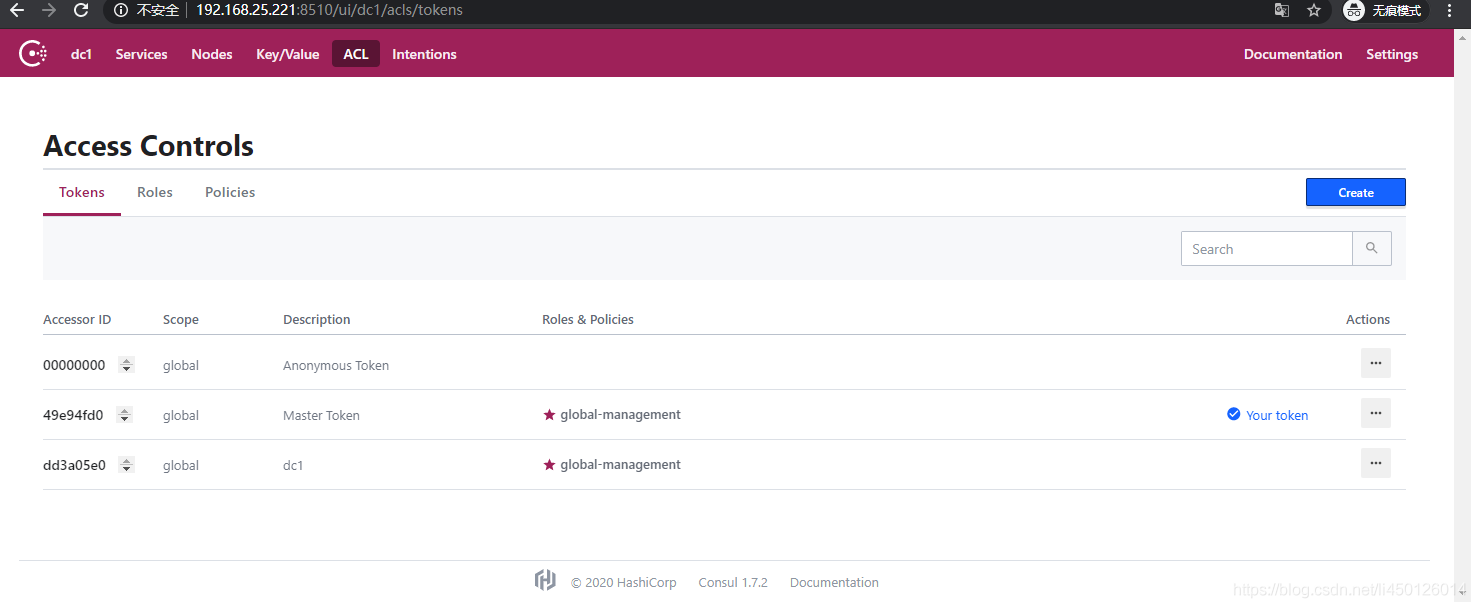

- Open the UI of each server in turn: the token information shown is the same on all of them

This completes the Consul cluster setup and the ACL token configuration!
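Since every server ends up with the same acl.hcl, the file can also be written from one template instead of being edited on server1 and copied. This is a sketch under that assumption; write_acl_hcl is a hypothetical helper name, and the HCL body mirrors the file shown in this section.

```shell
#!/usr/bin/env bash
# Write an acl.hcl with the given master and agent tokens into <config_dir>.
write_acl_hcl() {
  local config_dir="$1" master_token="$2" agent_token="$3"
  mkdir -p "$config_dir"
  cat > "$config_dir/acl.hcl" <<EOF
primary_datacenter = "dc1"
acl {
  enabled = true
  default_policy = "deny"
  enable_token_persistence = true
  tokens {
    master = "$master_token"
    agent = "$agent_token"
  }
}
EOF
}

# Usage on the host, one call per server (tokens are the ones from this article):
#   for i in 1 2 3; do
#     write_acl_hcl "/data/consul/server$i/config" \
#       dcb93655-0661-4ea1-bfc4-e5744317f99e 7f587432-3650-9073-e3f4-445a2463b11f
#   done
```

Passing the tokens as arguments keeps them out of the script itself, so the same helper can be reused when the tokens are rotated.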