
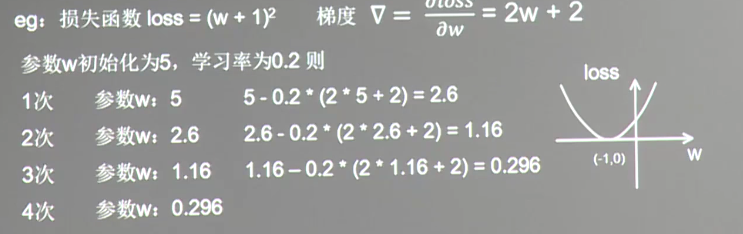

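1.2 A worked example

Code implementation

```python
# coding: utf-8
# Let the loss be loss = (w + 1)^2 with w initialized to the constant 5.
# Backpropagation searches for the optimal w, i.e. the w that minimizes the loss.
# (TensorFlow 1.x API)
import tensorflow as tf

# Parameter to optimize, initialized to 5
w = tf.Variable(tf.constant(5, dtype=tf.float32))
# Loss function; tf.square() squares every element of its argument
loss = tf.square(w + 1)
# Backpropagation method: gradient descent with a learning rate of 0.2
train_step = tf.train.GradientDescentOptimizer(0.2).minimize(loss)

# Create a session and train for 40 steps
with tf.Session() as sess:
    init_op = tf.global_variables_initializer()
    sess.run(init_op)                # initialize the variables
    for i in range(40):
        sess.run(train_step)         # one training step
        w_val = sess.run(w)          # current weight
        loss_val = sess.run(loss)    # current loss
        print("After %s steps: w is %f, loss is %f." % (i, w_val, loss_val))
```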

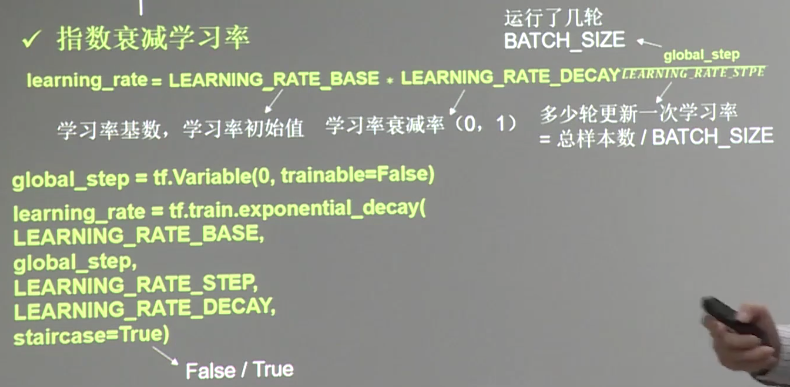
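The same descent can be traced by hand without TensorFlow. The sketch below is not part of the original listing: it assumes plain gradient descent with the hand-derived gradient d(loss)/dw = 2(w + 1), and the helper name `descend` is hypothetical.

```python
def descend(w, learning_rate, steps):
    """Run plain gradient descent on loss = (w + 1)^2 and return every value of w."""
    history = [w]
    for _ in range(steps):
        grad = 2 * (w + 1)              # derivative of (w + 1)^2 with respect to w
        w = w - learning_rate * grad    # gradient descent update
        history.append(w)
    return history


history = descend(w=5.0, learning_rate=0.2, steps=40)
for step in (1, 2, 3, 40):
    w = history[step]
    print("After %d updates: w is %f, loss is %f" % (step, w, (w + 1) ** 2))
```

With a learning rate of 0.2, each update multiplies w + 1 by 1 - 0.4 = 0.6, so w moves steadily toward -1, where the loss is smallest.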

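1.3 Choosing the learning rate

If the learning rate is too large, the parameter oscillates and does not converge; if it is too small, convergence is slow.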
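Both failure modes can be seen on the loss (w + 1)^2 used in section 1.2. The following is a minimal sketch in plain Python, assuming the hand-derived gradient 2(w + 1); the helper `final_w` and the three sample learning rates are chosen here for illustration and do not come from the original notes.

```python
def final_w(learning_rate, steps=40, w=5.0):
    """Return w after `steps` gradient descent updates on loss = (w + 1)^2."""
    for _ in range(steps):
        w -= learning_rate * 2 * (w + 1)
    return w


for lr in (1.0, 0.2, 0.0001):
    print("learning rate %g -> w after 40 steps: %f" % (lr, final_w(lr)))
```

With a learning rate of 1 the update flips w between 5 and -7 forever, with 0.0001 it has barely left the starting point after 40 steps, and with 0.2 it is already very close to the optimum at -1.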

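An exponentially decaying learning rate is used to balance the two:

learning_rate = LEARNING_RATE_BASE * LEARNING_RATE_DECAY ^ (global_step / LEARNING_RATE_STEP)

The exponent global_step / LEARNING_RATE_STEP is the number of batches run so far divided by the number of batches between learning-rate updates. LEARNING_RATE_STEP is usually set to total number of samples / BATCH_SIZE.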
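To make the formula concrete, here is a small plain-Python sketch of the decay schedule. It follows the formula documented for `tf.train.exponential_decay`, including the `staircase` option; the function name `decayed_learning_rate` is a hypothetical helper, not a TensorFlow call.

```python
def decayed_learning_rate(base, decay_rate, global_step, decay_steps, staircase=False):
    """Learning rate after `global_step` batches under exponential decay."""
    if staircase:
        # Integer division: the rate drops in discrete jumps every decay_steps batches
        exponent = global_step // decay_steps
    else:
        # Smooth decay: the exponent grows continuously with global_step
        exponent = float(global_step) / decay_steps
    return base * decay_rate ** exponent


for step in (0, 1, 2, 10, 39):
    print("global_step %2d -> learning rate %f"
          % (step, decayed_learning_rate(0.1, 0.99, step, 1, staircase=True)))
```

With a decay step of 1, as in the listing that follows, the staircase and smooth variants give the same values; they differ only when several batches pass between updates.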

```python
# coding: utf-8
# Let the loss be loss = (w + 1)^2 with w initialized to the constant 5.
# Backpropagation searches for the optimal w, i.e. the w that minimizes the loss.
# An exponentially decaying learning rate descends quickly in the early
# iterations, so good convergence is reached in fewer training steps.
import tensorflow as tf

LEARNING_RATE_BASE = 0.1    # initial learning rate
LEARNING_RATE_DECAY = 0.99  # learning-rate decay rate
# Number of batches fed before the learning rate is updated;
# usually set to total number of samples / BATCH_SIZE
LEARNING_RATE_STEP = 1

# Counter of batches run so far, initialized to 0 and excluded from training
global_step = tf.Variable(0, trainable=False)
# Exponentially decaying learning rate
learning_rate = tf.train.exponential_decay(
    LEARNING_RATE_BASE, global_step, LEARNING_RATE_STEP,
    LEARNING_RATE_DECAY, staircase=True)
# Parameter to optimize, initialized to 5
w = tf.Variable(tf.constant(5, dtype=tf.float32))
# Loss function; tf.square() squares every element of its argument
loss = tf.square(w + 1)
# Backpropagation method. minimize() updates the model parameters and,
# when given global_step, also increments that counter.
train_step = tf.train.GradientDescentOptimizer(learning_rate).minimize(
    loss, global_step=global_step)

# Create a session and train for 40 steps
with tf.Session() as sess:
    init_op = tf.global_variables_initializer()
    sess.run(init_op)
    for i in range(40):
        sess.run(train_step)
        learning_rate_val = sess.run(learning_rate)  # current learning rate
        global_step_val = sess.run(global_step)      # current counter value
        w_val = sess.run(w)                          # current weight
        loss_val = sess.run(loss)                    # current loss
        print("After %s steps: global_step is %f, w is %f, learning rate is %f, loss is %f"
              % (i, global_step_val, w_val, learning_rate_val, loss_val))
```

2 Moving average

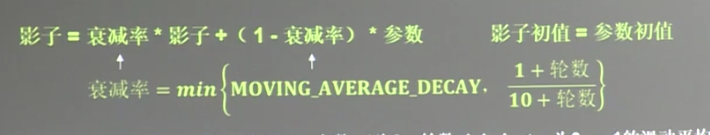

2.1 Concept

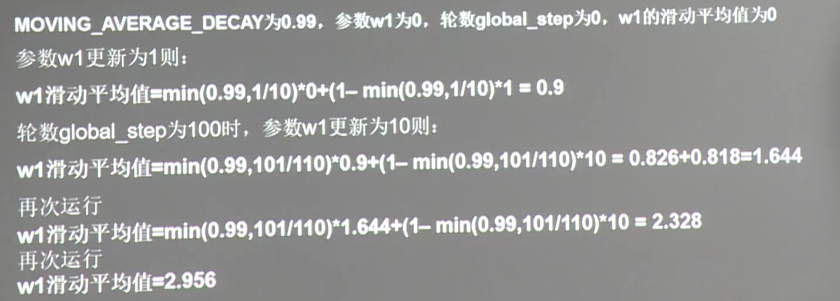

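Moving average (shadow value): it records the average of each parameter's past values over a period of time, which improves the model's ability to generalize.

It is applied to the weights and biases. It is as if each parameter had a shadow: when the parameter changes, the shadow follows it slowly.

An example follows.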
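The update behind the shadow value can be written in a few lines of plain Python. This sketch assumes the rule documented for `tf.train.ExponentialMovingAverage`: the shadow becomes decay * shadow + (1 - decay) * value, and when a step counter is supplied the decay actually used is min(decay, (1 + num_updates) / (10 + num_updates)). The helper name `ema_update` is hypothetical.

```python
def ema_update(shadow, value, decay, num_updates=None):
    """Apply one moving-average update and return the new shadow value."""
    if num_updates is not None:
        # Early in training the effective decay is smaller, so the shadow moves faster
        decay = min(decay, (1.0 + num_updates) / (10.0 + num_updates))
    return decay * shadow + (1.0 - decay) * value


shadow = 0.0
# The parameter becomes 1 at step 0: effective decay is min(0.99, 1/10) = 0.1
shadow = ema_update(shadow, value=1.0, decay=0.99, num_updates=0)
print("shadow after first update:", shadow)
# The parameter becomes 10 at step 100: effective decay is min(0.99, 101/110)
shadow = ema_update(shadow, value=10.0, decay=0.99, num_updates=100)
print("shadow after second update:", shadow)
```

The two results, about 0.9 and about 1.6445, agree with the shadow values printed by the full listing in section 2.2.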

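2.2 Implementing the moving average

Core code

```python
# Create the moving-average object from the decay rate (MOVING_AVERAGE_DECAY)
# and the current step count (global_step)
ema = tf.train.ExponentialMovingAverage(MOVING_AVERAGE_DECAY, global_step)
# Each run of ema_op updates the moving average of every trainable parameter
ema_op = ema.apply(tf.trainable_variables())
# The moving average is usually bound to the training step,
# so that the two are combined into a single training node
with tf.control_dependencies([train_step, ema_op]):
    train_op = tf.no_op(name='train')
# ema.average(variable) returns the moving average of that parameter
```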

Full code

```python
# coding: utf-8
# TensorFlow study notes (Peking University), tf4_6.py fully annotated: moving average
import tensorflow as tf

# 1. Define the variables and the moving-average object
# A 32-bit float variable initialized to 0.0. The script keeps changing w1,
# and the moving average maintains a shadow of w1.
w1 = tf.Variable(0, dtype=tf.float32)
# num_updates (the number of NN iterations), initialized to 0 and not trainable
global_step = tf.Variable(0, trainable=False)
# Instantiate the moving-average class with decay rate 0.99 and the current step count
MOVING_AVERAGE_DECAY = 0.99
ema = tf.train.ExponentialMovingAverage(MOVING_AVERAGE_DECAY, global_step)
# The argument of ema.apply() is the update list: each sess.run(ema_op) updates
# the moving average of every variable in that list. In practice,
# tf.trainable_variables() collects all trainable parameters into a list.
# ema_op = ema.apply([w1])
ema_op = ema.apply(tf.trainable_variables())

# 2. Watch how the values change across iterations
with tf.Session() as sess:
    init_op = tf.global_variables_initializer()
    sess.run(init_op)
    # ema.average(w1) returns the moving average of w1. To evaluate several
    # nodes at once, pass them to sess.run as a list.
    print("current global_step:", sess.run(global_step))
    print("current w1", sess.run([w1, ema.average(w1)]))

    # Set w1 to 1. tf.assign(A, new_number) changes the value of A to new_number.
    sess.run(tf.assign(w1, 1))
    sess.run(ema_op)
    print("current global_step:", sess.run(global_step))
    print("current w1", sess.run([w1, ema.average(w1)]))

    # Set global_step to 100 and w1 to 10 to simulate the state after 100 steps.
    # global_step stays at 100 from here on; every run of ema_op updates the shadow.
    sess.run(tf.assign(global_step, 100))
    sess.run(tf.assign(w1, 10))
    sess.run(ema_op)
    print("current global_step:", sess.run(global_step))
    print("current w1:", sess.run([w1, ema.average(w1)]))

    # Each sess.run(ema_op) updates the moving average of w1 once
    for _ in range(6):
        sess.run(ema_op)
        print("current global_step:", sess.run(global_step))
        print("current w1:", sess.run([w1, ema.average(w1)]))

# Change MOVING_AVERAGE_DECAY to 0.1 to see how quickly the shadow follows
"""
current global_step: 0
current w1 [0.0, 0.0]
current global_step: 0
current w1 [1.0, 0.9]
current global_step: 100
current w1: [10.0, 1.6445453]
current global_step: 100
current w1: [10.0, 2.3281732]
current global_step: 100
current w1: [10.0, 2.955868]
current global_step: 100
current w1: [10.0, 3.532206]
current global_step: 100
current w1: [10.0, 4.061389]
current global_step: 100
current w1: [10.0, 4.547275]
current global_step: 100
current w1: [10.0, 4.9934072]
"""
```
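The suggestion to try a decay of 0.1 can be explored without TensorFlow. The sketch below assumes the documented update rule of `tf.train.ExponentialMovingAverage` with a step counter, where the decay actually used is min(decay, (1 + num_updates) / (10 + num_updates)). The helper `track` and its default arguments (shadow starting at 0.9, parameter fixed at 10, step counter fixed at 100) are hypothetical and mirror the second half of the listing.

```python
def track(decay, steps, target=10.0, shadow=0.9, num_updates=100):
    """Return the shadow value after each of `steps` moving-average updates."""
    # With num_updates = 100 the dynamic cap is 101/110, about 0.918
    effective = min(decay, (1.0 + num_updates) / (10.0 + num_updates))
    trace = []
    for _ in range(steps):
        shadow = effective * shadow + (1.0 - effective) * target
        trace.append(shadow)
    return trace


slow = track(decay=0.99, steps=7)
fast = track(decay=0.1, steps=7)
for i, (s, f) in enumerate(zip(slow, fast), start=1):
    print("update %d: decay 0.99 -> %f, decay 0.1 -> %f" % (i, s, f))
```

One detail worth noticing: at step 100 the nominal decay of 0.99 is capped at about 0.918, which is why the printed shadow climbs toward 10 faster than a fixed decay of 0.99 would allow. With a decay of 0.1 the shadow is within 1 of the parameter after a single update.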

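3 Regularization

3.1 Concept

Regularization mitigates overfitting. It adds a measure of model complexity to the loss function by penalizing the weights W, which reduces the influence of noise in the training data. The bias b is usually not regularized.

3.2 Implementation

```python
# There are two regularizers, L1 and L2; use one of them
tf.contrib.layers.l1_regularizer(regularizer)(w)
tf.contrib.layers.l2_regularizer(regularizer)(w)

# Usage: collect the penalty of each weight, then add the sum to the data loss
# (cem is the data-loss term, defined elsewhere)
tf.add_to_collection('losses', tf.contrib.layers.l1_regularizer(regularizer)(w))
loss = cem + tf.add_n(tf.get_collection('losses'))
```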
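What the two regularizers compute can be shown on a small weight matrix in plain Python. This sketch assumes the behaviour of the TensorFlow 1.x contrib regularizers: L1 returns scale * sum(|w|), and L2 returns scale * sum(w^2) / 2, because it is built on `tf.nn.l2_loss`. The function names, the sample weights and the value of `data_loss` are hypothetical.

```python
def l1_penalty(weights, scale):
    """L1 penalty: scale times the sum of absolute weights."""
    return scale * sum(abs(w) for row in weights for w in row)


def l2_penalty(weights, scale):
    """L2 penalty: scale times half the sum of squared weights."""
    return scale * sum(w * w for row in weights for w in row) / 2.0


weights = [[1.0, -2.0], [3.0, -4.0]]   # hypothetical 2 x 2 weight matrix
data_loss = 0.25                        # hypothetical loss on the training data

print("L1 penalty:", l1_penalty(weights, 0.01))
print("L2 penalty:", l2_penalty(weights, 0.01))
print("total loss with L2:", data_loss + l2_penalty(weights, 0.01))
```

Because the L2 penalty grows with the square of each weight, it pushes large weights down harder than small ones, whereas the L1 penalty applies the same pressure to every nonzero weight.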

3.3 Example

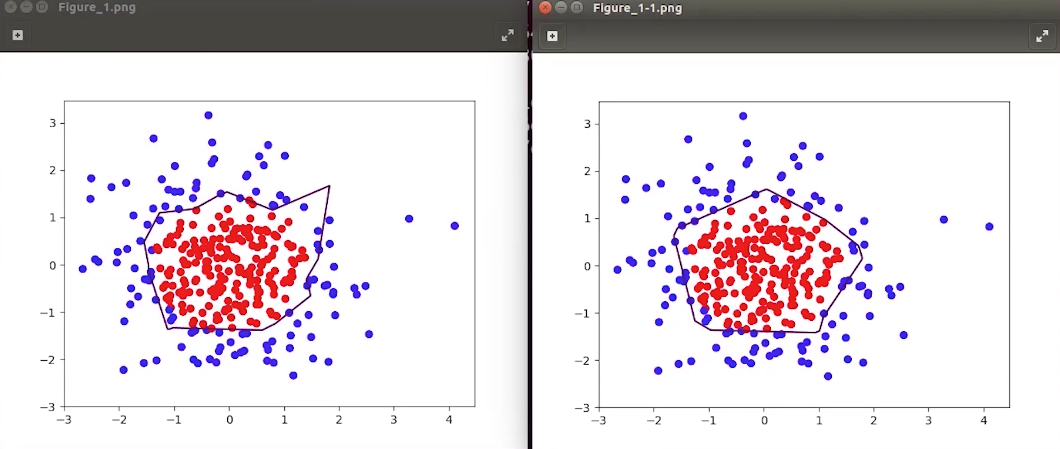

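The data X = [x0, x1] are normally distributed random points. The label Y_ is 1 when x0^2 + x1^2 < 2 (plotted in red) and 0 otherwise (plotted in blue).

The example uses a visualization module as follows. The names x_coords, y_coords, start, stop, step and contour_height are placeholders to be filled in.

```python
import numpy as np
import matplotlib.pyplot as plt

# Color each point by the value in the matching row of Y_c (c is short for color)
plt.scatter(x_coords, y_coords, c=np.squeeze(Y_c))
plt.show()

# Build the grid of coordinate points
xx, yy = np.mgrid[start:stop:step, start:stop:step]
# Flatten xx and yy and pair them up: ravel() flattens an array to one
# dimension, np.c_ stacks the two columns into a matrix
grid = np.c_[xx.ravel(), yy.ravel()]
# Feed the collected grid points to the network
probs = sess.run(y, feed_dict={x: grid})
probs = probs.reshape(xx.shape)
# Draw the contour: x values, y values, height at each point, contour height(s)
plt.contour(xx, yy, probs, levels=[contour_height])
plt.show()
```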
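The grid-building steps are easier to follow on a tiny grid. The sketch below uses only NumPy and replaces the network call `sess.run(y, ...)` with a stand-in score x0^2 + x1^2; that stand-in and the 5 x 5 grid are assumptions made for illustration.

```python
import numpy as np

# 5 x 5 grid covering -2..2 in both directions with step 1
xx, yy = np.mgrid[-2:3:1, -2:3:1]
print("xx shape:", xx.shape)

# Flatten both arrays and pair them: one (x0, x1) row per grid point
grid = np.c_[xx.ravel(), yy.ravel()]
print("grid shape:", grid.shape)
print("first three points:", grid[:3].tolist())

# Stand-in for the network output: one score per grid point
scores = grid[:, 0] ** 2 + grid[:, 1] ** 2
# Reshape back to the grid layout so the scores line up with xx and yy
probs = scores.reshape(xx.shape)
print("points with score below 2:", int((probs < 2).sum()))
```

After the reshape, `probs[i, j]` is the score at the point (`xx[i, j]`, `yy[i, j]`), which is the layout `plt.contour` expects.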

The full implementation follows.

```python
# coding: utf-8
# 0. Import modules and generate a simulated data set
import tensorflow as tf
import numpy as np
import matplotlib.pyplot as plt

BATCH_SIZE = 30
seed = 2
# Random number generator based on seed
rdm = np.random.RandomState(seed)
# 300 x 2 matrix of random numbers: 300 points (x0, x1) used as the input data set
X = rdm.randn(300, 2)
# For each row of X, the label is 1 if the sum of the squared coordinates
# is below 2 and 0 otherwise. These are the labels (correct answers).
Y_ = [int(x0*x0 + x1*x1 < 2) for (x0, x1) in X]
# Map each label to a color for plotting: 1 -> 'red', 0 -> 'blue'
Y_c = [['red' if y else 'blue'] for y in Y_]
# Reshape X to n rows x 2 columns and Y_ to n rows x 1 column;
# -1 means that dimension is inferred from the other one
X = np.vstack(X).reshape(-1, 2)
Y_ = np.vstack(Y_).reshape(-1, 1)
print(X)
print(Y_)
print(Y_c)
# Scatter plot of the points (x0, x1), colored by Y_c (c is short for color)
plt.scatter(X[:, 0], X[:, 1], c=np.squeeze(Y_c))
plt.show()


# Define the network's input, parameters and output, and the forward pass
def get_weight(shape, regularizer):
    w = tf.Variable(tf.random_normal(shape), dtype=tf.float32)
    tf.add_to_collection('losses', tf.contrib.layers.l2_regularizer(regularizer)(w))
    return w


def get_bias(shape):
    b = tf.Variable(tf.constant(0.01, shape=shape))
    return b


x = tf.placeholder(tf.float32, shape=(None, 2))
y_ = tf.placeholder(tf.float32, shape=(None, 1))

w1 = get_weight([2, 11], 0.01)
b1 = get_bias([11])
y1 = tf.nn.relu(tf.matmul(x, w1) + b1)

w2 = get_weight([11, 1], 0.01)
b2 = get_bias([1])
y = tf.matmul(y1, w2) + b2

# Loss functions
# Plain loss
loss_mse = tf.reduce_mean(tf.square(y - y_))
# Loss with regularization
loss_total = loss_mse + tf.add_n(tf.get_collection('losses'))

# Backpropagation method: without regularization
train_step = tf.train.AdamOptimizer(0.0001).minimize(loss_mse)

with tf.Session() as sess:
    init_op = tf.global_variables_initializer()
    sess.run(init_op)
    STEPS = 40000
    for i in range(STEPS):
        start = (i*BATCH_SIZE) % 300
        end = start + BATCH_SIZE
        sess.run(train_step, feed_dict={x: X[start:end], y_: Y_[start:end]})
        if i % 2000 == 0:
            loss_mse_v = sess.run(loss_mse, feed_dict={x: X, y_: Y_})
            print("After %d steps, loss is: %f" % (i, loss_mse_v))
    # xx and yy each run from -3 to 3 in steps of 0.01, giving a 2-D grid of points
    xx, yy = np.mgrid[-3:3:.01, -3:3:.01]
    # Flatten xx and yy and combine them into a 2-column matrix of grid points
    grid = np.c_[xx.ravel(), yy.ravel()]
    # Feed the grid points to the network; probs is the output
    probs = sess.run(y, feed_dict={x: grid})
    # Reshape probs to the shape of xx
    probs = probs.reshape(xx.shape)
    print("w1:\n", sess.run(w1))
    print("b1:\n", sess.run(b1))
    print("w2:\n", sess.run(w2))
    print("b2:\n", sess.run(b2))

plt.scatter(X[:, 0], X[:, 1], c=np.squeeze(Y_c))
plt.contour(xx, yy, probs, levels=[.5])
plt.show()

# Backpropagation method: with regularization
train_step = tf.train.AdamOptimizer(0.0001).minimize(loss_total)

with tf.Session() as sess:
    init_op = tf.global_variables_initializer()
    sess.run(init_op)
    STEPS = 40000
    for i in range(STEPS):
        start = (i*BATCH_SIZE) % 300
        end = start + BATCH_SIZE
        sess.run(train_step, feed_dict={x: X[start:end], y_: Y_[start:end]})
        if i % 2000 == 0:
            loss_v = sess.run(loss_total, feed_dict={x: X, y_: Y_})
            print("After %d steps, loss is: %f" % (i, loss_v))

    xx, yy = np.mgrid[-3:3:.01, -3:3:.01]
    grid = np.c_[xx.ravel(), yy.ravel()]
    probs = sess.run(y, feed_dict={x: grid})
    probs = probs.reshape(xx.shape)
    print("w1:\n", sess.run(w1))
    print("b1:\n", sess.run(b1))
    print("w2:\n", sess.run(w2))
    print("b2:\n", sess.run(b2))

plt.scatter(X[:, 0], X[:, 1], c=np.squeeze(Y_c))
plt.contour(xx, yy, probs, levels=[.5])
plt.show()
```

The left plot shows the result without regularization; the right plot shows the result with regularization.