1 Project Overview

1.1 Functionality

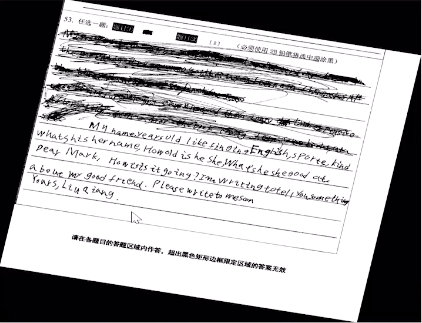

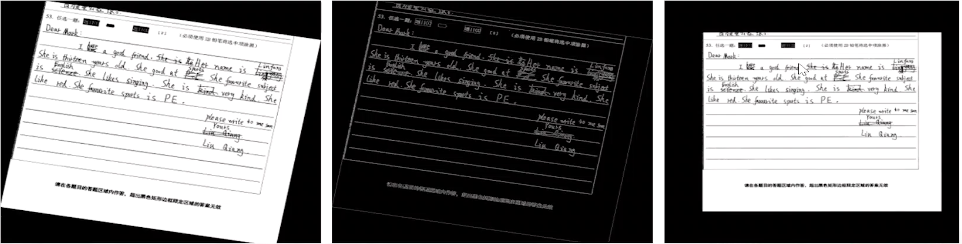

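(1) Functionality: recognition of handwritten English. A typical input is a scanned image of a handwritten English essay. Technique: OCR.

(2) Application scenarios:

- Electronic grading of English essays in exam-oriented settings such as the Gaokao (China's college entrance examination)

- Uploading handwritten English notes in electronic form

1.2 Evaluation Metric

(1) Model evaluation metric: string similarity based on edit distance, computed with dynamic programming. The reported error rate is the edit distance between the predicted text and the reference text divided by the length of the reference text (see calEditDistance in ocr_test.py).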
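The recurrence behind this metric can be written out as follows. This is a reconstruction from calEditDistance in ocr_test.py, not a formula taken from the original text; the symbols are introduced here for illustration: $a$ is the predicted text, $b$ is the reference text, and $D_{i,j}$ is the edit distance between the first $i$ characters of $a$ and the first $j$ characters of $b$.

$$
D_{i,0} = i,\qquad D_{0,j} = j,\qquad
D_{i,j} =
\begin{cases}
D_{i-1,j-1}, & a_i = b_j,\\
1 + \min\bigl(D_{i-1,j},\; D_{i,j-1},\; D_{i-1,j-1}\bigr), & a_i \neq b_j,
\end{cases}
\qquad
\text{error rate} = \frac{D_{|a|,|b|}}{|b|}
$$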

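2 Dataset

2.1 Data Characteristics

Problems and difficulties in the dataset:

(1) The dataset is not large enough.

(2) Scanned images are skewed, so text regions either cannot be located at all or cannot be located precisely.

(3) The images contain a lot of noise, such as ruled lines under the text.

(4) The handwritten English contains many joined strokes, corrections, and crossings-out.

3 Data Preprocessing

3.1 Data Augmentation

To improve model performance, the training images were augmented in the following ways:

(1) Rotating the image

(2) Scaling the image

(3) Adding noise to the image

(4) Blurring the image

(5) Shifting the image by a specified number of pixels along the x and y directions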
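The five operations above can be sketched as follows. This is a minimal pure-Python illustration on nested lists of grayscale values in [0, 1], not the project's actual augmentation code; all function names are hypothetical, and a real pipeline would more likely use the equivalent PIL or OpenCV calls.

```python
import math
import random


def shift(img, dx, dy, fill=0.0):
    """Move pixels dx columns right and dy rows down, padding with `fill`."""
    h, w = len(img), len(img[0])
    out = [[fill] * w for _ in range(h)]
    for r in range(h):
        for c in range(w):
            nr, nc = r + dy, c + dx
            if 0 <= nr < h and 0 <= nc < w:
                out[nr][nc] = img[r][c]
    return out


def scale(img, factor):
    """Nearest-neighbour resize by `factor`."""
    h, w = len(img), len(img[0])
    nh, nw = max(1, round(h * factor)), max(1, round(w * factor))
    return [[img[min(h - 1, int(r / factor))][min(w - 1, int(c / factor))]
             for c in range(nw)] for r in range(nh)]


def rotate(img, degrees, fill=0.0):
    """Rotate about the image centre using inverse nearest-neighbour mapping."""
    h, w = len(img), len(img[0])
    cy, cx = (h - 1) / 2.0, (w - 1) / 2.0
    cos_a, sin_a = math.cos(math.radians(degrees)), math.sin(math.radians(degrees))
    out = [[fill] * w for _ in range(h)]
    for r in range(h):
        for c in range(w):
            # Map each output pixel back to its source location.
            x, y = c - cx, r - cy
            sc = int(round(cos_a * x + sin_a * y + cx))
            sr = int(round(-sin_a * x + cos_a * y + cy))
            if 0 <= sr < h and 0 <= sc < w:
                out[r][c] = img[sr][sc]
    return out


def add_noise(img, sigma, rng):
    """Add Gaussian noise and clip the result to [0, 1]."""
    return [[min(1.0, max(0.0, v + rng.gauss(0.0, sigma))) for v in row]
            for row in img]


def box_blur(img):
    """3x3 mean filter; border pixels average the neighbours that exist."""
    h, w = len(img), len(img[0])
    out = []
    for r in range(h):
        row = []
        for c in range(w):
            vals = [img[rr][cc]
                    for rr in range(max(0, r - 1), min(h, r + 2))
                    for cc in range(max(0, c - 1), min(w, c + 2))]
            row.append(sum(vals) / len(vals))
        out.append(row)
    return out
```

Each function returns a new image, so the operations can be chained with randomly drawn parameters to produce several variants of one training sample.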

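3.2 Skew Correction

Scanned or photographed images are often skewed, which greatly degrades recognition, so the images are deskewed as a preprocessing step.

Principle:

(1) Run edge (contour) detection on the image.

(2) Apply Hough-transform skew correction to the edge image.

How Hough skew correction works: straight lines are detected in the image, and the image is rotated according to the detected line angles and positions. It worked well in practice, and the edge detection step noticeably helps the Hough step.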
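The voting idea behind the Hough step can be sketched as below. This is a simplified pure-Python illustration, not the project's implementation: it assumes the edge pixels have already been extracted as (x, y) coordinates, searches only near-horizontal angles, and the function name `estimate_skew` and its parameters are hypothetical. In practice a library routine such as OpenCV's Hough line detector would normally be used.

```python
import math


def estimate_skew(points, angle_range=15.0, angle_step=0.5, rho_step=1.0):
    """Return the tilt (in degrees) of the dominant near-horizontal line.

    A line tilted by angle t from the x axis satisfies
    y*cos(t) - x*sin(t) = rho for a constant rho, so points on such a line
    all vote for the same (t, rho) bin.
    """
    best_angle, best_votes = 0.0, -1
    steps = int(round(2 * angle_range / angle_step))
    for k in range(steps + 1):
        angle = -angle_range + k * angle_step
        t = math.radians(angle)
        votes = {}
        for x, y in points:
            rho = round((y * math.cos(t) - x * math.sin(t)) / rho_step)
            votes[rho] = votes.get(rho, 0) + 1
        top = max(votes.values())
        if top > best_votes:
            best_angle, best_votes = angle, top
    return best_angle


# Usage: edge points sampled from a line tilted by 5 degrees.
points = [(x, 20 + x * math.tan(math.radians(5.0))) for x in range(200)]
skew = estimate_skew(points)
```

Rotating the image by the negative of the estimated angle then brings the text lines back to horizontal. The sign convention depends on whether the y axis points up or down, which is left as an assumption here.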

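3.3 Removing Ruled Lines

Horizontal ruled lines interfere with text recognition, so they are removed before recognition. The removal is done with the Leptonica library.

Principle:

(1) Rotate the image to correct the skew so that the ruled lines become horizontal, then extract the horizontal lines by calling a function that removes the background, leaving only the lines.

(2) Threshold the line image: lines above the threshold are made black and everything below it becomes white. The black lines are then inverted to white in the processed image.

(3) After step 2 the lines have been removed from the original image, but the parts of the foreground that the lines cross (the body of the figure in the Leptonica example image) have been erased as well.

(4) Finally, call the relevant functions to combine the line image with the image containing the erased parts, filling the erased parts back in. This gives a good line-removal result.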
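The goal of steps (1) to (4), erasing the ruled lines while keeping the strokes that cross them, can be illustrated with a much simpler stand-in. The sketch below is not the Leptonica routine: it works on a binary image (1 = ink), assumes the page is already deskewed and the lines are one pixel thick, and the name `remove_horizontal_lines` and the `min_run` parameter are hypothetical.

```python
def remove_horizontal_lines(img, min_run=20):
    """Erase horizontal ink runs of at least `min_run` pixels.

    A pixel on such a run is kept when there is ink directly above and
    directly below it, which is treated as a stroke crossing the line.
    """
    h, w = len(img), len(img[0])
    out = [row[:] for row in img]
    for r in range(h):
        c = 0
        while c < w:
            if img[r][c] != 1:
                c += 1
                continue
            start = c
            while c < w and img[r][c] == 1:
                c += 1
            if c - start >= min_run:
                for k in range(start, c):
                    above = r > 0 and img[r - 1][k] == 1
                    below = r + 1 < h and img[r + 1][k] == 1
                    if not (above and below):
                        out[r][k] = 0
    return out
```

Short runs such as the horizontal bar of a "t" stay untouched because they are shorter than `min_run`, which plays the role of the length threshold in the morphological approach.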

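3.4 Text Region Localization

This step automatically locates the English text lines across the whole page. Text regions must be located before the image is fed to the neural network. The method is an improved version of the MSER algorithm.

Principle: the local text regions produced by MSER are cluttered, so the MSER bounding boxes go through the following four filtering steps, which greatly improves text region localization.

(1) Discard rectangles that are too large or too small.

(2) If a large rectangle and a small rectangle overlap by more than a set threshold, discard the small rectangle.

(3) This step does not discard rectangles. Instead, rectangles of the same class are merged into one large rectangle by taking min_left_top_x, min_left_y, max_right_bottom_x, and max_right_bottom_y.

(4) Keep only the final boxes that satisfy height > min_height and width > min_width.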
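The four filtering steps can be sketched as follows. This is an illustration under stated assumptions rather than the project's code: boxes are (x1, y1, x2, y2) tuples, "too large or too small" is measured by area, overlap is measured relative to the smaller box, and boxes are treated as the same class when their vertical extents overlap (the source does not say how classes are formed). All names and thresholds are hypothetical.

```python
def area(b):
    return max(0, b[2] - b[0]) * max(0, b[3] - b[1])


def intersection(a, b):
    w = min(a[2], b[2]) - max(a[0], b[0])
    h = min(a[3], b[3]) - max(a[1], b[1])
    return max(0, w) * max(0, h)


def filter_boxes(boxes, min_area, max_area, overlap_thresh, min_height, min_width):
    # Step 1: drop boxes that are too small or too large.
    boxes = [b for b in boxes if min_area <= area(b) <= max_area]

    # Step 2: visit boxes from largest to smallest and drop a box when a
    # kept (larger) box covers more than overlap_thresh of its area.
    kept = []
    for b in sorted(boxes, key=area, reverse=True):
        if all(intersection(b, k) <= overlap_thresh * area(b) for k in kept):
            kept.append(b)

    # Step 3: merge boxes of the same class (assumed: overlapping vertical
    # extents) by taking the min top-left and max bottom-right corners.
    merged = []
    for b in sorted(kept, key=lambda box: box[1]):
        if merged and b[1] < merged[-1][3]:
            m = merged[-1]
            merged[-1] = (min(m[0], b[0]), min(m[1], b[1]),
                          max(m[2], b[2]), max(m[3], b[3]))
        else:
            merged.append(b)

    # Step 4: keep only boxes that are tall and wide enough.
    return [b for b in merged
            if b[3] - b[1] > min_height and b[2] - b[0] > min_width]


# Usage: two word boxes on one line, a nested box, a speck, and a page-sized box.
boxes = [(0, 0, 40, 20), (5, 5, 15, 15), (50, 2, 90, 22),
         (0, 100, 3, 102), (0, 0, 1000, 1000)]
lines = filter_boxes(boxes, 50, 5000, 0.5, 10, 30)
```

In this usage example the speck and the page-sized box are removed in step 1, the nested box in step 2, and the two remaining word boxes are merged into a single line box in step 3.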

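4 Network Architecture

(1) Convolutional part: four convolutional layers plus four pooling layers

(2) Recurrent part: bidirectional LSTM

(3) Structure analysis

The network uses a bidirectional recurrent neural network, built with tf.nn.bidirectional_dynamic_rnn().

A bidirectional RNN whose cell is an LSTM is a bidirectional LSTM. A unidirectional RNN only models how earlier context influences later positions. A bidirectional RNN lets the output at each position depend on both the preceding and the following context.

The forward RNN behaves exactly like dynamic_rnn. The backward RNN processes the input sequence in reverse order.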
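The forward and backward passes can be illustrated with a toy example. The sketch below deliberately replaces the LSTM cell with a scalar tanh recurrence to keep it short; the function names and weights are hypothetical and unrelated to the TensorFlow implementation.

```python
import math


def run_rnn(xs, w_x=0.5, w_h=0.8):
    """Simple recurrence h_t = tanh(w_x * x_t + w_h * h_{t-1})."""
    h, outs = 0.0, []
    for x in xs:
        h = math.tanh(w_x * x + w_h * h)
        outs.append(h)
    return outs


def bidirectional(xs):
    """Pair a left-to-right pass with a right-to-left pass at every step."""
    fw = run_rnn(xs)
    # Reverse the input, run the same recurrence, then reverse the outputs
    # so that bw[t] lines up with fw[t].
    bw = run_rnn(xs[::-1])[::-1]
    return list(zip(fw, bw))


# Usage: each timestep gets (forward_state, backward_state).
states = bidirectional([1.0, -2.0, 0.5])
```

At the first timestep the forward state has seen only the first input while the backward state has seen the whole sequence, and the reverse holds at the last timestep. Concatenating the two, as ocr_forward.py does with tf.concat, gives every position access to both contexts.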

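(4) Optimization algorithm

The network's parameters are optimized with AdamOptimizer. Momentum is another commonly used optimizer.

- Momentum computes an exponentially weighted average of the gradients, which speeds up convergence.

- Adam combines the advantages of gradient descent with momentum and of RMSprop.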
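The two update rules can be written out for a single scalar parameter. This is a textbook-style sketch, not TensorFlow's implementation: the momentum rule uses the exponentially-weighted-average form described above (some libraries accumulate the raw gradient instead), and the function names and default hyperparameters are illustrative assumptions.

```python
import math


def momentum_step(w, grad, v, lr=0.1, beta=0.9):
    """Gradient descent on an exponentially weighted average of gradients."""
    v = beta * v + (1 - beta) * grad
    return w - lr * v, v


def adam_step(w, grad, m, s, t, lr=0.1, beta1=0.9, beta2=0.999, eps=1e-8):
    """Adam: momentum-style first moment plus RMSprop-style second moment."""
    m = beta1 * m + (1 - beta1) * grad          # average of gradients
    s = beta2 * s + (1 - beta2) * grad * grad   # average of squared gradients
    m_hat = m / (1 - beta1 ** t)                # bias correction, t starts at 1
    s_hat = s / (1 - beta2 ** t)
    return w - lr * m_hat / (math.sqrt(s_hat) + eps), m, s


# Usage: minimise f(w) = (w - 3)^2, whose gradient is 2 * (w - 3).
w, v = 0.0, 0.0
for _ in range(200):
    w, v = momentum_step(w, 2 * (w - 3), v)
```

The division by the square root of the second moment is what Adam takes from RMSprop: it normalises the step size per parameter, so the first Adam step has a magnitude close to the learning rate regardless of the gradient's scale.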

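(5) Loss function

CTC loss (connectionist temporal classification)

CTC defines a loss for sequence data whose inputs and labels have not been aligned in advance: it aligns them automatically during training. Typical applications are speech recognition and OCR.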
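How CTC relates a per-timestep output to a shorter label sequence can be shown with the standard collapsing rule: merge consecutive repeats, then drop the blank symbol. The sketch below illustrates that rule only; it is not the loss computation or TensorFlow's decoder, and the function name is hypothetical.

```python
def ctc_collapse(path, blank):
    """Merge consecutive repeated symbols, then remove blanks."""
    out = []
    prev = None
    for s in path:
        if s != prev and s != blank:
            out.append(s)
        prev = s
    return out


# Usage: "-" stands for the blank; the blank between the two "l" runs is
# what lets a doubled letter survive the merge.
text = "".join(ctc_collapse("hh-e-ll-lo", "-"))
```

Many different per-timestep paths collapse to the same label, and the CTC loss sums the probability of all of them, which is why no manual alignment is needed. TensorFlow 1.x reserves the last class index for the blank, which is consistent with ocr_forward.py setting num_classes to len(charactersNo) + 1.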

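(6) Pooling

Pooling uses max pooling (max_pool).

Reason: max pooling and average pooling both downsample the data, but max pooling also acts as feature selection, keeping the features that are more discriminative for recognition.

- Max pooling reduces the shift in the estimated mean caused by errors in the convolutional layer parameters and preserves more texture information.

- Max pooling is a nonlinear operation, which is one reason it tends to work better here.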
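The difference between the two pooling types is easy to see on a small example. The sketch below is a pure-Python illustration with non-overlapping 2x2 windows; the function name is hypothetical and it is not the tf.nn.max_pool call used in ocr_forward.py.

```python
def pool2x2(img, reduce_fn):
    """Apply reduce_fn to each non-overlapping 2x2 window of img."""
    return [[reduce_fn([img[r][c], img[r][c + 1],
                        img[r + 1][c], img[r + 1][c + 1]])
             for c in range(0, len(img[0]) - 1, 2)]
            for r in range(0, len(img) - 1, 2)]


def mean(values):
    return sum(values) / len(values)


# Usage: one strong activation (9) in the top-left window.
img = [[0, 0, 0, 0],
       [0, 9, 0, 0],
       [1, 1, 2, 2],
       [1, 1, 2, 2]]
max_pooled = pool2x2(img, max)    # [[9, 0], [1, 2]]
avg_pooled = pool2x2(img, mean)   # [[2.25, 0.0], [1.0, 2.0]]
```

Max pooling passes the strong response through unchanged, while average pooling dilutes it with the surrounding zeros, which matches the feature-selection argument above.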

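(7) Dropout

Dropout simplifies the network that is effectively trained at each step and reduces the risk of overfitting. After tuning, a suitable dropout parameter was found (ocr_forward.py uses keep_prob = 0.6 during training and 1.0 at test time).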
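What keep_prob does can be sketched in a few lines. This is a minimal illustration of inverted dropout, the scheme tf.nn.dropout follows (kept values are scaled by 1/keep_prob); the function itself is hypothetical and works on a plain list.

```python
import random


def dropout(values, keep_prob, rng):
    """Keep each value with probability keep_prob and rescale the survivors."""
    if keep_prob >= 1.0:
        return list(values)  # test time: nothing is dropped
    return [v / keep_prob if rng.random() < keep_prob else 0.0
            for v in values]


# Usage: roughly 60% of the activations survive, scaled up by 1/0.6.
rng = random.Random(0)
activations = dropout([1.0] * 10, 0.6, rng)
```

Because the survivors are rescaled, the expected value of each activation is unchanged, so the same weights can be used at test time with keep_prob = 1.0.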

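(8) Activation function

The network uses the ReLU function, which reduces the risk of vanishing gradients while the network parameters are being trained.

(9) Resuming from a checkpoint

Checkpointing ensures that training can continue from the last saved state after it has been interrupted.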

5 OCR Implementation

ocr_generated.py

    import os
    import glob
    import random
    import numpy as np
    from PIL import Image
    from PIL import ImageFilter

    # Note on a problem encountered: tf.placeholder raised
    # InvalidArgumentError: You must feed a value for placeholder tensor 'inputs/x_input'

    # chr(): converts an integer code to a character
    # ord(): converts a character to its integer code
    # charactersNo dict: keys are a-z, A-Z, 0-9 and " .,?\'-:;!/\"<>&(+",
    #                    mapped to 0-25, 26-51, 52-61, 62...
    # characters list: the keys of charactersNo, in index order
    # The dict converts the sentences in the label txt files into integer
    # codes, which are easier to store and to compute distances on.
    charactersNo = {}
    characters = []
    length = []

    for i in range(26):
        charactersNo[chr(ord('a') + i)] = i
        characters.append(chr(ord('a') + i))
    for i in range(26):
        charactersNo[chr(ord('A') + i)] = i + 26
        characters.append(chr(ord('A') + i))
    for i in range(10):
        charactersNo[chr(ord('0') + i)] = i + 52
        characters.append(chr(ord('0') + i))
    punctuations = " .,?\'-:;!/\"<>&(+"
    for p in punctuations:
        charactersNo[p] = len(charactersNo)
        characters.append(p)


    def get_data():
        # Collect the paths of all files under train_img and train_txt.
        # Imgs:   list of handwritten English images
        # Y:      array of label sentences, stored as integer codes
        # length: array with the number of characters in each label sentence
        imgFiles = glob.glob(os.path.join("train_img", "*"))
        imgFiles.sort()
        txtFiles = glob.glob(os.path.join("train_txt", "*"))
        txtFiles.sort()
        Imgs = []
        Y = []
        length = []
        for i in range(len(imgFiles)):
            fin = open(txtFiles[i])
            line = fin.readlines()
            line = line[0]
            fin.close()
            y = np.asarray([0] * (len(line)))
            succ = True
            for j in range(len(line)):
                if line[j] not in charactersNo:
                    succ = False
                    break
                y[j] = charactersNo[line[j]]
            if not succ:
                # Skip samples that contain characters outside the alphabet.
                continue
            Y.append(y)
            length.append(len(line))
            im = Image.open(imgFiles[i])
            width, height = im.size  # 1499,1386
            im = im.convert("L")
            Imgs.append(im)

        # np.asarray() and np.array() both convert lists to arrays.
        # np.asarray() avoids a copy when the input is already an ndarray,
        # while np.array() copies by default.
        print("train:", len(Imgs), len(Y))
        # Labels have different lengths, so store them as an object array.
        Y = np.asarray(Y, dtype=object)
        length = np.asarray(length)
        return Imgs, Y

ocr_forward.py

    import tensorflow as tf
    import os
    import glob
    import random
    import numpy as np
    from PIL import Image
    from PIL import ImageFilter
    import ocr_generated

    conv1_filter = 32
    conv2_filter = 64
    conv3_filter = 128
    conv4_filter = 256


    def get_weight(shape, regularizer):
        # Initialise the weights w and register an L2 regularization term
        # to reduce overfitting.
        w = tf.Variable(tf.truncated_normal((shape), stddev=0.1, dtype=tf.float32))
        if regularizer is not None:
            tf.add_to_collection('losses', tf.contrib.layers.l2_regularizer(regularizer)(w))
        return w


    def get_bias(shape):
        # Initialise the bias b
        b = tf.Variable(tf.constant(0., shape=shape, dtype=tf.float32))
        return b


    def conv2d(x, w):
        # Convolution layer, tf.nn.conv2d
        return tf.nn.conv2d(x, w, strides=[1, 1, 1, 1], padding='SAME')


    def max_pool_2x2(x, kernel_size):
        # Pooling layer: max pooling, which extracts features effectively
        return tf.nn.max_pool(x, ksize=kernel_size, strides=kernel_size, padding='VALID')


    def forward(x, train, regularizer):
        # The forward pass uses four convolution + pooling stages.
        # Stage 1: convolution and pooling
        conv1_w = get_weight([3, 3, 1, conv1_filter], regularizer)
        conv1_b = get_bias([conv1_filter])
        conv1 = conv2d(x, conv1_w)
        relu1 = tf.nn.relu(tf.nn.bias_add(conv1, conv1_b))
        pool1 = max_pool_2x2(relu1, [1, 2, 2, 1])

        # keep_prob controls how many units dropout keeps
        if train:
            keep_prob = 0.6  # reduce overfitting during training
        else:
            keep_prob = 1.0

        # Stage 2: convolution and pooling
        conv2_w = get_weight([5, 5, conv1_filter, conv2_filter], regularizer)
        conv2_b = get_bias([conv2_filter])
        conv2 = conv2d(tf.nn.dropout(pool1, keep_prob), conv2_w)
        relu2 = tf.nn.relu(tf.nn.bias_add(conv2, conv2_b))
        pool2 = max_pool_2x2(relu2, [1, 2, 1, 1])

        # Stage 3: convolution and pooling
        conv3_w = get_weight([5, 5, conv2_filter, conv3_filter], regularizer)
        conv3_b = get_bias([conv3_filter])
        conv3 = conv2d(tf.nn.dropout(pool2, keep_prob), conv3_w)
        relu3 = tf.nn.relu(tf.nn.bias_add(conv3, conv3_b))
        pool3 = max_pool_2x2(relu3, [1, 4, 2, 1])

        # Stage 4: convolution and pooling
        conv4_w = get_weight([5, 5, conv3_filter, conv4_filter], regularizer)
        conv4_b = get_bias([conv4_filter])
        conv4 = conv2d(tf.nn.dropout(pool3, keep_prob), conv4_w)
        relu4 = tf.nn.relu(tf.nn.bias_add(conv4, conv4_b))
        pool4 = max_pool_2x2(relu4, [1, 7, 1, 1])

        # A 112x1024 input is now 1x256 with conv4_filter channels:
        # 256 timesteps for the recurrent layer.
        rnn_inputs = tf.reshape(tf.nn.dropout(pool4, keep_prob), [-1, 256, conv4_filter])

        num_hidden = 512
        # One extra class for the CTC blank
        num_classes = len(ocr_generated.charactersNo) + 1
        W = tf.Variable(tf.truncated_normal([num_hidden, num_classes], stddev=0.1), name="W")
        b = tf.Variable(tf.constant(0., shape=[num_classes]), name="b")

        # Forward and backward passes with a bidirectional LSTM
        # seq_len = tf.placeholder(tf.int32, shape=[None])
        # labels=tf.sparse_placeholder(tf.int32, shape=[None,2])
        cell_fw = tf.nn.rnn_cell.LSTMCell(num_hidden >> 1, state_is_tuple=True)
        cell_bw = tf.nn.rnn_cell.LSTMCell(num_hidden >> 1, state_is_tuple=True)

        # outputs_fw_bw is the tuple (output_fw, output_bw)
        outputs_fw_bw, _ = tf.nn.bidirectional_dynamic_rnn(cell_fw, cell_bw, rnn_inputs, dtype=tf.float32)

        # tf.concat joins the forward and backward outputs along the given axis
        outputs1 = tf.concat(outputs_fw_bw, 2)

        shape = tf.shape(x)
        batch_s, max_timesteps = shape[0], shape[1]
        outputs = tf.reshape(outputs1, [-1, num_hidden])

        # Fully connected layer
        logits0 = tf.matmul(tf.nn.dropout(outputs, keep_prob), W) + b
        logits1 = tf.reshape(logits0, [batch_s, -1, num_classes])
        # Time-major layout [timesteps, batch, classes], as expected by ctc_loss
        logits = tf.transpose(logits1, (1, 0, 2))
        y = tf.cast(logits, tf.float32)
        return y

ocr_backward.py

    import tensorflow as tf
    import ocr_forward
    import ocr_generated
    import os
    import glob
    import random
    import numpy as np
    from PIL import Image
    from PIL import ImageFilter

    REGULARIZER = 0.0001
    graphSize = (112, 1024)
    MODEL_SAVE_PATH = "./model/"
    MODEL_NAME = "ocr_model"


    def transform(im, flag=True):
        '''
        Preprocess an input image: scale it and apply data augmentation.
        Args:
            im : the image to process
        Return:
            graph : the preprocessed image
        random.uniform(a, b) returns a random float between a and b
        '''
        graph = np.zeros(graphSize[1] * graphSize[0] * 1).reshape(graphSize[0], graphSize[1], 1)
        deltaX = 0
        deltaY = 0
        ratio = 1.464
        if flag:
            # Training: random scale and random placement inside the canvas
            lowerRatio = max(1.269, im.size[1] * 1.0 / graphSize[0], im.size[0] * 1.0 / graphSize[1])
            upperRatio = max(lowerRatio, 2.0)
            ratio = random.uniform(lowerRatio, upperRatio)
            deltaX = random.randint(0, int(graphSize[0] - im.size[1] / ratio))
            deltaY = random.randint(0, int(graphSize[1] - im.size[0] / ratio))
        else:
            # Inference: fixed scale, centred inside the canvas
            ratio = max(1.464, im.size[1] * 1.0 / graphSize[0], im.size[0] * 1.0 / graphSize[1])
            deltaX = int(graphSize[0] - im.size[1] / ratio) >> 1
            deltaY = int(graphSize[1] - im.size[0] / ratio) >> 1
        height = int(im.size[1] / ratio)
        width = int(im.size[0] / ratio)
        # Image.ANTIALIAS was removed in Pillow 10; Image.LANCZOS is the equivalent.
        data = im.resize((width, height), Image.ANTIALIAS).getdata()
        data = 1 - np.asarray(data, dtype='float') / 255.0  # ink becomes 1, paper 0
        data = data.reshape(height, width)
        graph[deltaX:deltaX + height, deltaY:deltaY + width, 0] = data
        return graph


    def create_sparse(Y, dtype=np.int32):
        '''
        Convert the integer label sequences Y into a sparse representation.
        Args:
            Y
        Return:
            indices: (row, column) index of every label character
            values: the integer code stored at each index
            shape: [batch size, longest label length]
        '''
        indices = []
        values = []
        for i in range(len(Y)):
            for j in range(len(Y[i])):
                indices.append((i, j))
                values.append(Y[i][j])
        indices = np.asarray(indices, dtype=np.int64)
        values = np.asarray(values, dtype=dtype)
        shape = np.asarray([len(Y), np.asarray(indices).max(0)[1] + 1], dtype=np.int64)  # [64,180]
        return (indices, values, shape)


    def backward():
        x = tf.placeholder(tf.float32, shape=[None, graphSize[0], graphSize[1], 1])
        y = ocr_forward.forward(x, True, REGULARIZER)
        # y_: ground-truth labels
        # Y : labels read from the text files, fed to y_ during training
        # y : labels predicted by the network
        global_step = tf.Variable(0, trainable=False)  # global step counter
        seq_len = tf.placeholder(tf.int32, shape=[None])
        y_ = tf.sparse_placeholder(tf.int32, shape=[None, 2])
        Imgs, Y = ocr_generated.get_data()

        # Loss function: ctc_loss
        loss = tf.nn.ctc_loss(y_, y, seq_len)
        cost = tf.reduce_mean(loss)

        # Optimizer: Adam, with a lower learning rate for the later epochs
        optimizer1 = tf.train.AdamOptimizer(learning_rate=0.0003).minimize(cost, global_step=global_step)
        optimizer2 = tf.train.AdamOptimizer(learning_rate=0.0001).minimize(cost, global_step=global_step)

        width1_decoded, width1_log_prob = tf.nn.ctc_beam_search_decoder(y, seq_len, merge_repeated=False, beam_width=1)
        decoded, log_prob = tf.nn.ctc_beam_search_decoder(y, seq_len, merge_repeated=False)
        width1_acc = tf.reduce_mean(tf.edit_distance(tf.cast(width1_decoded[0], tf.int32), y_))
        acc = tf.reduce_mean(tf.edit_distance(tf.cast(decoded[0], tf.int32), y_))

        nBatchArray = np.arange(Y.shape[0])
        epoch = 100
        batchSize = 32
        saver = tf.train.Saver(max_to_keep=1)
        config = tf.ConfigProto()
        config.gpu_options.allow_growth = True
        sess = tf.Session(config=config)
        bestDevErr = 100.0
        with sess:
            sess.run(tf.global_variables_initializer())
            # Resume from the latest checkpoint if one exists
            ckpt = tf.train.get_checkpoint_state(MODEL_SAVE_PATH)
            if ckpt and ckpt.model_checkpoint_path:
                saver.restore(sess, ckpt.model_checkpoint_path)
            # saver.restore(sess, "model/model.ckpt")
            # print(outputs.get_shape())
            for ep in range(epoch):
                np.random.shuffle(nBatchArray)
                for i in range(0, Y.shape[0], batchSize):
                    batch_output = create_sparse(Y[nBatchArray[i:i + batchSize]])
                    X = [None] * min(Y.shape[0] - i, batchSize)
                    for j in range(len(X)):
                        X[j] = transform(Imgs[nBatchArray[i + j]])
                    feed_dict = {x: X, seq_len: np.ones(min(Y.shape[0] - i, batchSize)) * 256, y_: batch_output}
                    if ep < 50:
                        sess.run(optimizer1, feed_dict=feed_dict)
                    else:
                        sess.run(optimizer2, feed_dict=feed_dict)
                    # Evaluate existing ops instead of adding new graph nodes each step
                    batch_loss, batch_err = sess.run([cost, width1_acc], feed_dict=feed_dict)
                    print(ep, i, "loss:", batch_loss, "err:", batch_err)
                # saver.save(sess, "model/model.ckpt")
                saver.save(sess, os.path.join(MODEL_SAVE_PATH, MODEL_NAME))


    def main():
        backward()


    if __name__ == '__main__':
        main()

ocr_test.py

    import os
    import glob
    import random
    import numpy as np
    from PIL import Image
    from PIL import ImageFilter
    import ocr_forward
    import ocr_generated
    import tensorflow as tf

    REGULARIZER = 0.0001
    graphSize = (112, 1024)
    MODEL_SAVE_PATH = "./model/"  # directory written by ocr_backward.py


    def transform(im, flag=True):
        '''
        Preprocess an image into an ndarray of shape (112, 1024, 1).
        Args:
            im = Image object
        Return:
            graph = ndarray object
        '''
        graph = np.zeros(graphSize[1] * graphSize[0] * 1).reshape(graphSize[0], graphSize[1], 1)
        deltaX = 0
        deltaY = 0
        ratio = 1.464
        if flag:
            lowerRatio = max(1.269, im.size[1] * 1.0 / graphSize[0], im.size[0] * 1.0 / graphSize[1])
            upperRatio = max(lowerRatio, 1.659)
            ratio = random.uniform(lowerRatio, upperRatio)
            deltaX = random.randint(0, int(graphSize[0] - im.size[1] / ratio))
            deltaY = random.randint(0, int(graphSize[1] - im.size[0] / ratio))
        else:
            ratio = max(1.464, im.size[1] * 1.0 / graphSize[0], im.size[0] * 1.0 / graphSize[1])
            deltaX = int(graphSize[0] - im.size[1] / ratio) >> 1
            deltaY = int(graphSize[1] - im.size[0] / ratio) >> 1
        height = int(im.size[1] / ratio)
        width = int(im.size[0] / ratio)
        # Image.ANTIALIAS was removed in Pillow 10; Image.LANCZOS is the equivalent.
        data = im.resize((width, height), Image.ANTIALIAS).getdata()
        data = 1 - np.asarray(data, dtype='float') / 255.0
        data = data.reshape(height, width)
        graph[deltaX:deltaX + height, deltaY:deltaY + width, 0] = data
        return graph


    def countMargin(v, minSum, direction=True):
        '''
        Args:
            v = list
            minSum = int
        Return:
            the number of leading (direction=True) or trailing
            (direction=False) items of v that do not exceed minSum
        '''
        if direction:
            for i in range(len(v)):
                if v[i] > minSum:
                    return i
            return len(v)
        for i in range(len(v) - 1, -1, -1):
            if v[i] > minSum:
                return len(v) - i - 1
        return len(v)


    def splitLine(seg, dataSum, h, maxHeight):
        # Split segments taller than maxHeight at their emptiest row,
        # provided that row lies in the middle third of the segment.
        i = 0
        while i < len(seg) - 1:
            if seg[i + 1] - seg[i] < maxHeight:
                i += 1
                continue
            x = countMargin(dataSum[seg[i]:], 3, True)
            y = countMargin(dataSum[:seg[i + 1]], 3, False)
            if seg[i + 1] - seg[i] - x - y < maxHeight:
                i += 1
                continue
            idx = dataSum[seg[i] + x + h:seg[i + 1] - h - y].argmin() + h
            if 0.33 <= idx / (seg[i + 1] - seg[i] - x - y) <= 0.67:
                seg.insert(i + 1, dataSum[seg[i] + x + h:seg[i + 1] - y - h].argmin() + seg[i] + x + h)
            else:
                i += 1


    def getLine(im, data, upperbound=8, lowerbound=25, threshold=30, h=40, minHeight=35, maxHeight=120,
                beginX=20, endX=-20, beginY=125, endY=1100, merged=True):
        '''Cut a page image into text-line images using the row sums of ink.'''
        dataSum = data[:, beginX:endX].sum(1)  # dataSum is a 1-D vector
        lastPosition = beginY
        seg = []
        flag = True
        cnt = 0
        for i in range(beginY, endY):
            if dataSum[i] <= lowerbound:
                flag = True
                if dataSum[i] <= upperbound:
                    cnt = 0
                continue
            if flag:
                cnt += 1
                if cnt >= threshold:
                    lineNo = np.argmin(dataSum[lastPosition:i]) + lastPosition if threshold <= i - beginY else beginY
                    if not merged or len(seg) == 0 or lineNo - seg[-1] - countMargin(dataSum[seg[-1]:], 5, True) - countMargin(dataSum[:lineNo], 5, False) > minHeight:
                        seg.append(lineNo)
                    else:
                        avg1 = dataSum[max(0, seg[-1] - 1):seg[-1] + 2]
                        avg1 = avg1.sum() / avg1.shape[0]
                        avg2 = dataSum[max(0, lineNo - 1):lineNo + 2]
                        avg2 = avg2.sum() / avg2.shape[0]
                        if avg1 > avg2:
                            seg[-1] = lineNo
                    lastPosition = i
                    flag = False
        lineNo = np.argmin(dataSum[lastPosition:] > 10) + lastPosition if threshold < i else beginY
        if not merged or len(seg) == 0 or lineNo - seg[-1] - countMargin(dataSum[seg[-1]:], 10, True) - countMargin(dataSum[:lineNo], 10, False) > minHeight:
            seg.append(lineNo)
        else:
            avg1 = dataSum[max(0, seg[-1] - 1):seg[-1] + 2]
            avg1 = avg1.sum() / avg1.shape[0]
            avg2 = dataSum[max(0, lineNo - 1):lineNo + 2]
            avg2 = avg2.sum() / avg2.shape[0]
            if avg1 > avg2:
                seg[-1] = lineNo
        splitLine(seg, dataSum, h, maxHeight)
        results = []
        for i in range(0, len(seg) - 1):
            results.append(im.crop((0, seg[i] + countMargin(dataSum[seg[i]:], 0), im.size[0],
                                    seg[i + 1] - countMargin(dataSum[:seg[i + 1]], 0, False))))
        return results


    def calEditDistance(text1, text2):
        # Edit distance between text1 and text2 by dynamic programming
        dp = np.asarray([0] * (len(text1) + 1) * (len(text2) + 1)).reshape(len(text1) + 1, len(text2) + 1)
        dp[0] = np.arange(len(text2) + 1)
        dp[:, 0] = np.arange(len(text1) + 1)
        for i in range(1, len(text1) + 1):
            for j in range(1, len(text2) + 1):
                if text1[i - 1] == text2[j - 1]:
                    dp[i, j] = dp[i - 1, j - 1]
                else:
                    dp[i, j] = min(dp[i, j - 1], dp[i - 1, j], dp[i - 1, j - 1]) + 1
        return dp[-1, -1]


    def test():
        x = tf.placeholder(tf.float32, shape=[None, graphSize[0], graphSize[1], 1])
        y = ocr_forward.forward(x, False, REGULARIZER)
        seq_len = tf.placeholder(tf.int32, shape=[None])
        labels = tf.sparse_placeholder(tf.int32, shape=[None, 2])
        loss = tf.nn.ctc_loss(labels, y, seq_len)
        cost = tf.reduce_mean(loss)
        width1_decoded, width1_log_prob = tf.nn.ctc_beam_search_decoder(y, seq_len, merge_repeated=False, beam_width=1)
        decoded, log_prob = tf.nn.ctc_beam_search_decoder(y, seq_len, merge_repeated=False)
        width1_acc = tf.reduce_mean(tf.edit_distance(tf.cast(width1_decoded[0], tf.int32), labels))
        acc = tf.reduce_mean(tf.edit_distance(tf.cast(decoded[0], tf.int32), labels))
        saver = tf.train.Saver(max_to_keep=1)
        result = 0
        imgFiles = glob.glob(os.path.join("test_img", "*"))
        imgFiles.sort()
        txtFiles = glob.glob(os.path.join("test_txt", "*"))
        txtFiles.sort()
        for i in range(len(imgFiles)):
            goldLines = []
            fin = open(txtFiles[i])
            lines = fin.readlines()
            fin.close()
            for j in range(len(lines)):
                goldLines.append(lines[j])
            im = Image.open(imgFiles[i])
            width, height = im.size
            im = im.convert("L")
            data = im.getdata()
            data = 1 - np.asarray(data, dtype='float') / 255.0
            data = data.reshape(height, width)
            # getLine() cuts the page image into one image per text line
            Imgs = getLine(im, data)
            config = tf.ConfigProto()
            config.gpu_options.allow_growth = True
            sess = tf.Session(config=config)
            with sess:
                # Restore the latest checkpoint written by ocr_backward.py
                ckpt = tf.train.get_checkpoint_state(MODEL_SAVE_PATH)
                saver.restore(sess, ckpt.model_checkpoint_path)
                X = [None] * len(Imgs)
                for j in range(len(Imgs)):
                    X[j] = transform(Imgs[j], False)
                # The input placeholder is x (the original fed an undefined name, inputs)
                feed_dict = {x: X, seq_len: np.ones(len(X)) * 256}
                predict = decoded[0].eval(feed_dict=feed_dict)
                j = 0
                predict_text = ""
                gold_text = "".join(goldLines)
                for k in range(predict.dense_shape[0]):
                    while j < len(predict.indices) and predict.indices[j][0] == k:
                        # Map integer codes back to characters
                        predict_text += ocr_generated.characters[predict.values[j]]
                        j += 1
                    predict_text += "\n"
                predict_text = predict_text.rstrip("\n")
                print("predict_text:")
                print(predict_text)
                fout = open("predict%s%s.txt" % (os.sep, txtFiles[i][txtFiles[i].find(os.sep) + 1:txtFiles[i].rfind('.')]), 'w')
                fout.write(predict_text)
                fout.close()
                print("gold_text:")
                print(gold_text)
                cer = calEditDistance(predict_text, gold_text) * 1.0 / len(gold_text)
                # cer is an error rate (edit distance / reference length), not an accuracy
                print("character error rate: ", end='')
                print(cer)
                print()
                result += cer
        print("test composition err:", result * 1.0 / len(imgFiles))


    def main():
        test()


    if __name__ == '__main__':
        main()