
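Spark cross-cluster multi-data-source usage example

This example uses spark-sql accessing Hive data sources across clusters to show how to configure and test the setup.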

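1. Configuring cross-cluster reads of Hive data sources

1) On the compute node, edit hdfs-site.xml in the $HADOOP_HOME/conf directory and add the cross-cluster nameservice (Ucluster ID) configuration. Here Ucluster is the nameservice of the current compute node, and Ucluster1 and Ucluster2 are the nameservices of the remote clusters.

The configuration is as follows: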

<property><name>dfs.nameservices</name><value>Ucluster,Ucluster1,Ucluster2</value></property>
<property><name>dfs.ha.namenodes.Ucluster</name><value>nn1,nn2</value></property>
<property><name>dfs.namenode.rpc-address.Ucluster.nn1</name><value>uhadoop-xxscq5sk-master1:8020</value></property>
<property><name>dfs.namenode.rpc-address.Ucluster.nn2</name><value>uhadoop-xxscq5sk-master2:8020</value></property>
<property><name>dfs.namenode.http-address.Ucluster.nn1</name><value>uhadoop-xxscq5sk-master1:50070</value></property>
<property><name>dfs.namenode.http-address.Ucluster.nn2</name><value>uhadoop-xxscq5sk-master2:50070</value></property>
<property><name>dfs.ha.namenodes.Ucluster1</name><value>nn1,nn2</value></property>
<property><name>dfs.namenode.rpc-address.Ucluster1.nn1</name><value>uhadoop-vqa44mp2-master1:8020</value></property>
<property><name>dfs.namenode.rpc-address.Ucluster1.nn2</name><value>uhadoop-vqa44mp2-master2:8020</value></property>
<property><name>dfs.namenode.http-address.Ucluster1.nn1</name><value>uhadoop-vqa44mp2-master1:50070</value></property>
<property><name>dfs.namenode.http-address.Ucluster1.nn2</name><value>uhadoop-vqa44mp2-master2:50070</value></property>
<property><name>dfs.ha.namenodes.Ucluster2</name><value>nn1,nn2</value></property>
<property><name>dfs.namenode.rpc-address.Ucluster2.nn1</name><value>uhadoop-1dcag42a-master1:8020</value></property>
<property><name>dfs.namenode.rpc-address.Ucluster2.nn2</name><value>uhadoop-1dcag42a-master2:8020</value></property>
<property><name>dfs.namenode.http-address.Ucluster2.nn1</name><value>uhadoop-1dcag42a-master1:50070</value></property>
<property><name>dfs.namenode.http-address.Ucluster2.nn2</name><value>uhadoop-1dcag42a-master2:50070</value></property>
<property><name>dfs.client.failover.proxy.provider.Ucluster</name><value>org.apache.hadoop.hdfs.server.namenode.ha.ConfiguredFailoverProxyProvider</value></property>
<property><name>dfs.client.failover.proxy.provider.Ucluster1</name><value>org.apache.hadoop.hdfs.server.namenode.ha.ConfiguredFailoverProxyProvider</value></property>
<property><name>dfs.client.failover.proxy.provider.Ucluster2</name><value>org.apache.hadoop.hdfs.server.namenode.ha.ConfiguredFailoverProxyProvider</value></property>
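Each remote nameservice needs the same six properties, so the block for a new cluster can be generated rather than typed by hand. The following is a minimal sketch only: gen_ns_props is a hypothetical helper that is not part of the original setup, and it assumes the same ports (8020 for RPC, 50070 for HTTP) and namenode IDs (nn1, nn2) used in the configuration above.

```shell
# Hypothetical helper (an assumption, not from the original document):
# print the HA client properties for one nameservice.
# Usage: gen_ns_props <nameservice> <nn1-host> <nn2-host>
gen_ns_props() {
  gnp_ns="$1"
  gnp_nn1="$2"
  gnp_nn2="$3"
  gnp_provider="org.apache.hadoop.hdfs.server.namenode.ha.ConfiguredFailoverProxyProvider"

  # The two namenode IDs that belong to this nameservice.
  echo "<property><name>dfs.ha.namenodes.${gnp_ns}</name><value>nn1,nn2</value></property>"

  # RPC and HTTP addresses for each namenode ID.
  for gnp_id in nn1 nn2; do
    if [ "$gnp_id" = "nn1" ]; then gnp_host="$gnp_nn1"; else gnp_host="$gnp_nn2"; fi
    echo "<property><name>dfs.namenode.rpc-address.${gnp_ns}.${gnp_id}</name><value>${gnp_host}:8020</value></property>"
    echo "<property><name>dfs.namenode.http-address.${gnp_ns}.${gnp_id}</name><value>${gnp_host}:50070</value></property>"
  done

  # Client-side failover provider for this nameservice.
  echo "<property><name>dfs.client.failover.proxy.provider.${gnp_ns}</name><value>${gnp_provider}</value></property>"
}

# Example: gen_ns_props Ucluster1 uhadoop-vqa44mp2-master1 uhadoop-vqa44mp2-master2
```

The helper does not touch dfs.nameservices; that value still has to list every nameservice, local and remote, by hand.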

2) Restart the namenode, datanode, and hive-metastore

/etc/init.d/hadoop-hdfs-namenode restart

/etc/init.d/hadoop-hdfs-datanode restart

/etc/init.d/hive-metastore restart

3) Verify cross-cluster HDFS file access

hdfs dfs -ls hdfs://Ucluster1/

hdfs dfs -ls hdfs://Ucluster2/
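With more than a couple of remote clusters, the same check can be wrapped in a loop. This is a rough sketch under stated assumptions: check_nameservices is a hypothetical function name, and it relies only on the hdfs dfs -ls command already shown above, treating a non-zero exit status as "not reachable".

```shell
# Hypothetical helper (an assumption, not from the original document):
# list the root directory of each nameservice given as an argument and
# report whether the listing succeeded from this node.
check_nameservices() {
  cns_rc=0
  for cns_ns in "$@"; do
    if hdfs dfs -ls "hdfs://${cns_ns}/" >/dev/null 2>&1; then
      echo "OK ${cns_ns}"
    else
      echo "FAIL ${cns_ns}"
      cns_rc=1
    fi
  done
  # Non-zero when at least one nameservice could not be listed.
  return "$cns_rc"
}

# Example: check_nameservices Ucluster1 Ucluster2
```

A FAIL line usually means the nameservice is missing from hdfs-site.xml on this node or the remote namenodes are unreachable; running the plain hdfs dfs -ls command for that nameservice shows the underlying error.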

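Note:

1) This test uses a shared Hive metastore. With independent (per-cluster) metastores, the same approach did not work when tried in spark-sql; that case can be handled by writing a Spark program instead.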

2. Testing

1) Start the Hive client

2) Create tables across storage clusters

hive> CREATE TABLE spark_1(id int, name string)
ROW FORMAT SERDE
'org.apache.hadoop.hive.serde2.lazy.LazySimpleSerDe'
STORED AS INPUTFORMAT
'org.apache.hadoop.mapred.TextInputFormat'
OUTPUTFORMAT
'org.apache.hadoop.hive.ql.io.HiveIgnoreKeyTextOutputFormat'
LOCATION
'hdfs://Ucluster1/user/hive/warehouse/spark_1';

hive> insert into spark_1(id,name) values(1,'zhangsan');
hive> insert into spark_1(id,name) values(2,'lisi');

hive> CREATE TABLE spark_2(id int, age string)
ROW FORMAT SERDE
'org.apache.hadoop.hive.serde2.lazy.LazySimpleSerDe'
STORED AS INPUTFORMAT
'org.apache.hadoop.mapred.TextInputFormat'
OUTPUTFORMAT
'org.apache.hadoop.hive.ql.io.HiveIgnoreKeyTextOutputFormat'
LOCATION
'hdfs://Ucluster2/user/hive/warehouse/spark_2';

hive> insert into spark_2(id,age) values(1,'30');
hive> insert into spark_2(id,age) values(2,'40');

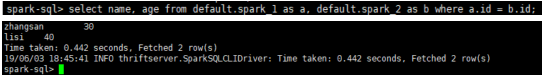

3) Open spark-sql and run a join query
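The original does not show the query itself, so the statement below is an assumption: a plain inner join of spark_1 and spark_2 on their shared id column, run non-interactively through the -e option of spark-sql. The same SQL can also be typed at the interactive spark-sql prompt.

```shell
# Assumed query (not from the original document): join the table stored on
# Ucluster1 with the table stored on Ucluster2 on the shared id column.
JOIN_SQL="SELECT a.id, a.name, b.age FROM spark_1 a JOIN spark_2 b ON a.id = b.id"

# Run the query if spark-sql is available on this node; -e executes a SQL string.
if command -v spark-sql >/dev/null 2>&1; then
  spark-sql -e "$JOIN_SQL"
else
  echo "spark-sql not found on PATH; run the query on a node with Spark installed" >&2
fi
```

Given the rows inserted in step 2, the join should pair zhangsan with age 30 and lisi with age 40, provided both remote nameservices are reachable from the compute node.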