10分钟pandas教程

这是一个简短的介绍pandas用法,主要面向新用户。 在Cookbook你可以看到更复杂的方法。

通常,我们导入以下模块:

In [1]: import pandas as pdIn [2]: import numpy as npIn [3]: import matplotlib.pyplot as plt

创建对象

创建一个Series对象:

In [4]: s = pd.Series([1,3,5,np.nan,6,8])In [5]: sOut[5]:0 1.01 3.02 5.03 NaN4 6.05 8.0dtype: float64

通过numpy数组创建一个DateFrame对象,包括索引和列标签:

In [6]: dates = pd.date_range('20130101', periods=6)In [7]: datesOut[7]:DatetimeIndex(['2013-01-01', '2013-01-02', '2013-01-03', '2013-01-04','2013-01-05', '2013-01-06'],dtype='datetime64[ns]', freq='D')In [8]: df = pd.DataFrame(np.random.randn(6,4), index=dates, columns=list('ABCD'))In [9]: dfOut[9]:A B C D2013-01-01 0.469112 -0.282863 -1.509059 -1.1356322013-01-02 1.212112 -0.173215 0.119209 -1.0442362013-01-03 -0.861849 -2.104569 -0.494929 1.0718042013-01-04 0.721555 -0.706771 -1.039575 0.2718602013-01-05 -0.424972 0.567020 0.276232 -1.0874012013-01-06 -0.673690 0.113648 -1.478427 0.524988

通过字典方式创建DataFrame对象:

In [10]: df2 = pd.DataFrame({ 'A' : 1.,....: 'B' : pd.Timestamp('20130102'),....: 'C' : pd.Series(1,index=list(range(4)),dtype='float32'),....: 'D' : np.array([3] * 4,dtype='int32'),....: 'E' : pd.Categorical(["test","train","test","train"]),....: 'F' : 'foo' })....:In [11]: df2Out[11]:A B C D E F0 1.0 2013-01-02 1.0 3 test foo1 1.0 2013-01-02 1.0 3 train foo2 1.0 2013-01-02 1.0 3 test foo3 1.0 2013-01-02 1.0 3 train foo

查看各列的类型:

In [12]: df2.dtypesOut[12]:A float64B datetime64[ns]C float32D int32E categoryF objectdtype: object

可视化数据

查看首尾行数:

In [14]: df.head()Out[14]:A B C D2013-01-01 0.469112 -0.282863 -1.509059 -1.1356322013-01-02 1.212112 -0.173215 0.119209 -1.0442362013-01-03 -0.861849 -2.104569 -0.494929 1.0718042013-01-04 0.721555 -0.706771 -1.039575 0.2718602013-01-05 -0.424972 0.567020 0.276232 -1.087401In [15]: df.tail(3)Out[15]:A B C D2013-01-04 0.721555 -0.706771 -1.039575 0.2718602013-01-05 -0.424972 0.567020 0.276232 -1.0874012013-01-06 -0.673690 0.113648 -1.478427 0.524988

显示索引,列标签和底层numpy数据:

In [16]: df.indexOut[16]:DatetimeIndex(['2013-01-01', '2013-01-02', '2013-01-03', '2013-01-04','2013-01-05', '2013-01-06'],dtype='datetime64[ns]', freq='D')In [17]: df.columnsOut[17]: Index([u'A', u'B', u'C', u'D'], dtype='object')In [18]: df.valuesOut[18]:array([[ 0.4691, -0.2829, -1.5091, -1.1356],[ 1.2121, -0.1732, 0.1192, -1.0442],[-0.8618, -2.1046, -0.4949, 1.0718],[ 0.7216, -0.7068, -1.0396, 0.2719],[-0.425 , 0.567 , 0.2762, -1.0874],[-0.6737, 0.1136, -1.4784, 0.525 ]])

describe方法显示数据的快速统计汇总结果:

In [19]: df.describe()Out[19]:A B C Dcount 6.000000 6.000000 6.000000 6.000000mean 0.073711 -0.431125 -0.687758 -0.233103std 0.843157 0.922818 0.779887 0.973118min -0.861849 -2.104569 -1.509059 -1.13563225% -0.611510 -0.600794 -1.368714 -1.07661050% 0.022070 -0.228039 -0.767252 -0.38618875% 0.658444 0.041933 -0.034326 0.461706max 1.212112 0.567020 0.276232 1.071804

转置数据:

In [20]: df.TOut[20]:2013-01-01 2013-01-02 2013-01-03 2013-01-04 2013-01-05 2013-01-06A 0.469112 1.212112 -0.861849 0.721555 -0.424972 -0.673690B -0.282863 -0.173215 -2.104569 -0.706771 0.567020 0.113648C -1.509059 0.119209 -0.494929 -1.039575 0.276232 -1.478427D -1.135632 -1.044236 1.071804 0.271860 -1.087401 0.524988

按索引排序:

In [21]: df.sort_index(axis=1, ascending=False)Out[21]:D C B A2013-01-01 -1.135632 -1.509059 -0.282863 0.4691122013-01-02 -1.044236 0.119209 -0.173215 1.2121122013-01-03 1.071804 -0.494929 -2.104569 -0.8618492013-01-04 0.271860 -1.039575 -0.706771 0.7215552013-01-05 -1.087401 0.276232 0.567020 -0.4249722013-01-06 0.524988 -1.478427 0.113648 -0.673690

按指定列的值排序:

In [22]: df.sort_values(by='B')Out[22]:A B C D2013-01-03 -0.861849 -2.104569 -0.494929 1.0718042013-01-04 0.721555 -0.706771 -1.039575 0.2718602013-01-01 0.469112 -0.282863 -1.509059 -1.1356322013-01-02 1.212112 -0.173215 0.119209 -1.0442362013-01-06 -0.673690 0.113648 -1.478427 0.5249882013-01-05 -0.424972 0.567020 0.276232 -1.087401

选择数据

Note:标准Python/Numpy的数据选择和设置很直观和方便,但是在生产环境,我们推荐优化的pandas方法,如at, .iat, .loc, .iloc 和 .ix

Geting数据

选择一列数据,返回Series数据类型,和 df.A 命令等价:

In [23]: df['A']Out[23]:2013-01-01 0.4691122013-01-02 1.2121122013-01-03 -0.8618492013-01-04 0.7215552013-01-05 -0.4249722013-01-06 -0.673690Freq: D, Name: A, dtype: float64

通过 [] 选择行数据:

In [24]: df[0:3]Out[24]:A B C D2013-01-01 0.469112 -0.282863 -1.509059 -1.1356322013-01-02 1.212112 -0.173215 0.119209 -1.0442362013-01-03 -0.861849 -2.104569 -0.494929 1.071804In [25]: df['20130102':'20130104']Out[25]:A B C D2013-01-02 1.212112 -0.173215 0.119209 -1.0442362013-01-03 -0.861849 -2.104569 -0.494929 1.0718042013-01-04 0.721555 -0.706771 -1.039575 0.271860

列标签选择数据

通过date索引获取一个横截面(cross section)数据:

In [26]: df.loc[dates[0]]Out[26]:A 0.469112B -0.282863C -1.509059D -1.135632Name: 2013-01-01 00:00:00, dtype: float64

多个标签获取数据:

In [27]: df.loc[:,['A','B']]Out[27]:A B2013-01-01 0.469112 -0.2828632013-01-02 1.212112 -0.1732152013-01-03 -0.861849 -2.1045692013-01-04 0.721555 -0.7067712013-01-05 -0.424972 0.5670202013-01-06 -0.673690 0.113648

切片数据:

In [28]: df.loc['20130102':'20130104',['A','B']]Out[28]:A B2013-01-02 1.212112 -0.1732152013-01-03 -0.861849 -2.1045692013-01-04 0.721555 -0.706771

在切片数据上精简维度:

In [29]: df.loc['20130102',['A','B']]Out[29]:A 1.212112B -0.173215Name: 2013-01-02 00:00:00, dtype: float64

获取一个标量数据:

In [30]: df.loc[dates[0],'A']Out[30]: 0.46911229990718628

一个更快速获取标量数据的方法(和上一个方法等同):

In [31]: df.at[dates[0],'A']Out[31]: 0.46911229990718628

通过位置获取数据

通过传递一个整数值定位:

In [32]: df.iloc[3]Out[32]:A 0.721555B -0.706771C -1.039575D 0.271860Name: 2013-01-04 00:00:00, dtype: float64

类似Numpy/python,通过切片定位:

In [33]: df.iloc[3:5,0:2]Out[33]:A B2013-01-04 0.721555 -0.7067712013-01-05 -0.424972 0.567020

通过整数列表定位:

In [34]: df.iloc[[1,2,4],[0,2]]Out[34]:A C2013-01-02 1.212112 0.1192092013-01-03 -0.861849 -0.4949292013-01-05 -0.424972 0.276232

指定行切片:

In [35]: df.iloc[1:3,:]Out[35]:A B C D2013-01-02 1.212112 -0.173215 0.119209 -1.0442362013-01-03 -0.861849 -2.104569 -0.494929 1.071804

指定列切片:

In [36]: df.iloc[:,1:3]Out[36]:B C2013-01-01 -0.282863 -1.5090592013-01-02 -0.173215 0.1192092013-01-03 -2.104569 -0.4949292013-01-04 -0.706771 -1.0395752013-01-05 0.567020 0.2762322013-01-06 0.113648 -1.478427

通过位置获取值:

In [37]: df.iloc[1,1]Out[37]: -0.17321464905330858

类似的iat方法:

In [38]: df.iat[1,1]Out[38]: -0.17321464905330858

布尔索引

通过单列值取数:

In [39]: df[df.A > 0]Out[39]:A B C D2013-01-01 0.469112 -0.282863 -1.509059 -1.1356322013-01-02 1.212112 -0.173215 0.119209 -1.0442362013-01-04 0.721555 -0.706771 -1.039575 0.271860

一个where操作取值:

In [40]: df[df > 0]Out[40]:A B C D2013-01-01 0.469112 NaN NaN NaN2013-01-02 1.212112 NaN 0.119209 NaN2013-01-03 NaN NaN NaN 1.0718042013-01-04 0.721555 NaN NaN 0.2718602013-01-05 NaN 0.567020 0.276232 NaN2013-01-06 NaN 0.113648 NaN 0.524988

isin()方法:

In [41]: df2 = df.copy()In [42]: df2['E'] = ['one', 'one','two','three','four','three']In [43]: df2Out[43]:A B C D E2013-01-01 0.469112 -0.282863 -1.509059 -1.135632 one2013-01-02 1.212112 -0.173215 0.119209 -1.044236 one2013-01-03 -0.861849 -2.104569 -0.494929 1.071804 two2013-01-04 0.721555 -0.706771 -1.039575 0.271860 three2013-01-05 -0.424972 0.567020 0.276232 -1.087401 four2013-01-06 -0.673690 0.113648 -1.478427 0.524988 threeIn [44]: df2[df2['E'].isin(['two','four'])]Out[44]:A B C D E2013-01-03 -0.861849 -2.104569 -0.494929 1.071804 two2013-01-05 -0.424972 0.567020 0.276232 -1.087401 four

赋值

相同索引赋值一列数据:

In [45]: s1 = pd.Series([1,2,3,4,5,6], index=pd.date_range('20130102', periods=6))In [46]: s1Out[46]:2013-01-02 12013-01-03 22013-01-04 32013-01-05 42013-01-06 52013-01-07 6Freq: D, dtype: int64In [47]: df['F'] = s1

通过标签赋值:

In [48]: df.at[dates[0],'A'] = 0

位置赋值:

In [49]: df.iat[0,1] = 0

numpy数组赋值:

In [50]: df.loc[:,'D'] = np.array([5] * len(df))

前面操作的结果展示:

In [51]: dfOut[51]:A B C D F2013-01-01 0.000000 0.000000 -1.509059 5 NaN2013-01-02 1.212112 -0.173215 0.119209 5 1.02013-01-03 -0.861849 -2.104569 -0.494929 5 2.02013-01-04 0.721555 -0.706771 -1.039575 5 3.02013-01-05 -0.424972 0.567020 0.276232 5 4.02013-01-06 -0.673690 0.113648 -1.478427 5 5.0

where操作赋值:

In [52]: df2 = df.copy()In [53]: df2[df2 > 0] = -df2In [54]: df2Out[54]:A B C D F2013-01-01 0.000000 0.000000 -1.509059 -5 NaN2013-01-02 -1.212112 -0.173215 -0.119209 -5 -1.02013-01-03 -0.861849 -2.104569 -0.494929 -5 -2.02013-01-04 -0.721555 -0.706771 -1.039575 -5 -3.02013-01-05 -0.424972 -0.567020 -0.276232 -5 -4.02013-01-06 -0.673690 -0.113648 -1.478427 -5 -5.0

缺失数据处理

pandas 主要用np.nan表示缺失数据,默认不列入计算。

reindex方法允许在指定的轴上增/删/改原索引,返回一个副本:

In [55]: df1 = df.reindex(index=dates[0:4], columns=list(df.columns) + ['E'])In [56]: df1.loc[dates[0]:dates[1],'E'] = 1In [57]: df1Out[57]:A B C D E2018-03-01 0.004023 -1.776898 0.689760 0.753648 1.02018-03-02 1.979978 0.965044 0.512416 -2.538019 1.02018-03-03 -0.991052 -0.690239 0.077280 0.860828 NaN2018-03-04 0.147670 0.886152 1.991110 -0.514134 NaN

删除有缺失值的行:

In [58]: df1.dropna(how='any')Out[58]:A B C D F E2013-01-02 1.212112 -0.173215 0.119209 5 1.0 1.0

在缺失值位置填充:

In [40]: df1.fillna(value=5)Out[40]:A B C D E2018-03-01 0.004023 -1.776898 0.689760 0.753648 1.02018-03-02 1.979978 0.965044 0.512416 -2.538019 1.02018-03-03 -0.991052 -0.690239 0.077280 0.860828 5.02018-03-04 0.147670 0.886152 1.991110 -0.514134 5.0

判断是否缺失,返回布尔集:

In [41]: df1.isnull()Out[41]:A B C D E2018-03-01 False False False False False2018-03-02 False False False False False2018-03-03 False False False False True2018-03-04 False False False False True

数据操作

Operations 通常排除缺失数据

描述统计:

In [61]: df.mean()Out[61]:A -0.004474B -0.383981C -0.687758D 5.000000F 3.000000dtype: float64

同样操作在标签维度:

In [62]: df.mean(1)Out[62]:2013-01-01 0.8727352013-01-02 1.4316212013-01-03 0.7077312013-01-04 1.3950422013-01-05 1.8836562013-01-06 1.592306Freq: D, dtype: float64

pandas操作不同维度的数据需要对齐,另外它会按指定的维度方向计算:

In [63]: s = pd.Series([1,3,5,np.nan,6,8], index=dates).shift(2)In [64]: sOut[64]:2013-01-01 NaN2013-01-02 NaN2013-01-03 1.02013-01-04 3.02013-01-05 5.02013-01-06 NaNFreq: D, dtype: float64In [65]: df.sub(s, axis='index')Out[65]:A B C D F2013-01-01 NaN NaN NaN NaN NaN2013-01-02 NaN NaN NaN NaN NaN2013-01-03 -1.861849 -3.104569 -1.494929 4.0 1.02013-01-04 -2.278445 -3.706771 -4.039575 2.0 0.02013-01-05 -5.424972 -4.432980 -4.723768 0.0 -1.02013-01-06 NaN NaN NaN NaN NaN

apply方法

applying 函数:

In [66]: df.apply(np.cumsum)Out[66]:A B C D F2013-01-01 0.000000 0.000000 -1.509059 5 NaN2013-01-02 1.212112 -0.173215 -1.389850 10 1.02013-01-03 0.350263 -2.277784 -1.884779 15 3.02013-01-04 1.071818 -2.984555 -2.924354 20 6.02013-01-05 0.646846 -2.417535 -2.648122 25 10.02013-01-06 -0.026844 -2.303886 -4.126549 30 15.0In [67]: df.apply(lambda x: x.max() - x.min())Out[67]:A 2.073961B 2.671590C 1.785291D 0.000000F 4.000000dtype: float64

直方图

In [68]: s = pd.Series(np.random.randint(0, 7, size=10))In [69]: sOut[69]:0 41 22 13 24 65 46 47 68 49 4dtype: int64In [70]: s.value_counts()Out[70]:4 56 22 21 1dtype: int64

字符串方法

Series中的字符处理方法和Python中的str方法一样。另外str方法默认在模式匹配的时候默认使用正则表达。

In [71]: s = pd.Series(['A', 'B', 'C', 'Aaba', 'Baca', np.nan, 'CABA', 'dog', 'cat'])In [72]: s.str.lower()Out[72]:0 a1 b2 c3 aaba4 baca5 NaN6 caba7 dog8 catdtype: object

合并(merge)

concat方法:

In [73]: df = pd.DataFrame(np.random.randn(10, 4))In [74]: dfOut[74]:0 1 2 30 -0.548702 1.467327 -1.015962 -0.4830751 1.637550 -1.217659 -0.291519 -1.7455052 -0.263952 0.991460 -0.919069 0.2660463 -0.709661 1.669052 1.037882 -1.7057754 -0.919854 -0.042379 1.247642 -0.0099205 0.290213 0.495767 0.362949 1.5481066 -1.131345 -0.089329 0.337863 -0.9458677 -0.932132 1.956030 0.017587 -0.0166928 -0.575247 0.254161 -1.143704 0.2158979 1.193555 -0.077118 -0.408530 -0.862495# break it into piecesIn [75]: pieces = [df[:3], df[3:7], df[7:]]In [76]: pd.concat(pieces)Out[76]:0 1 2 30 -0.548702 1.467327 -1.015962 -0.4830751 1.637550 -1.217659 -0.291519 -1.7455052 -0.263952 0.991460 -0.919069 0.2660463 -0.709661 1.669052 1.037882 -1.7057754 -0.919854 -0.042379 1.247642 -0.0099205 0.290213 0.495767 0.362949 1.5481066 -1.131345 -0.089329 0.337863 -0.9458677 -0.932132 1.956030 0.017587 -0.0166928 -0.575247 0.254161 -1.143704 0.2158979 1.193555 -0.077118 -0.408530 -0.862495

join方法

In [77]: left = pd.DataFrame({'key': ['foo', 'foo'], 'lval': [1, 2]})In [78]: right = pd.DataFrame({'key': ['foo', 'foo'], 'rval': [4, 5]})In [79]: leftOut[79]:key lval0 foo 11 foo 2In [80]: rightOut[80]:key rval0 foo 41 foo 5In [81]: pd.merge(left, right, on='key')Out[81]:key lval rval0 foo 1 41 foo 1 52 foo 2 43 foo 2 5

append方法

在DataFrame中增加一列:

In [82]: df = pd.DataFrame(np.random.randn(8, 4), columns=['A','B','C','D'])In [83]: dfOut[83]:A B C D0 1.346061 1.511763 1.627081 -0.9905821 -0.441652 1.211526 0.268520 0.0245802 -1.577585 0.396823 -0.105381 -0.5325323 1.453749 1.208843 -0.080952 -0.2646104 -0.727965 -0.589346 0.339969 -0.6932055 -0.339355 0.593616 0.884345 1.5914316 0.141809 0.220390 0.435589 0.1924517 -0.096701 0.803351 1.715071 -0.708758In [84]: s = df.iloc[3]In [85]: df.append(s, ignore_index=True)Out[85]:A B C D0 1.346061 1.511763 1.627081 -0.9905821 -0.441652 1.211526 0.268520 0.0245802 -1.577585 0.396823 -0.105381 -0.5325323 1.453749 1.208843 -0.080952 -0.2646104 -0.727965 -0.589346 0.339969 -0.6932055 -0.339355 0.593616 0.884345 1.5914316 0.141809 0.220390 0.435589 0.1924517 -0.096701 0.803351 1.715071 -0.708758

分组(grouping)

在”group by”的时候涉及到以下几步:

- Spliting 按条件分割数据

- Applying 在每组上应用函数

- Combing 合并成一个数据集

In [86]: df = pd.DataFrame({'A' : ['foo', 'bar', 'foo', 'bar',....: 'foo', 'bar', 'foo', 'foo'],....: 'B' : ['one', 'one', 'two', 'three',....: 'two', 'two', 'one', 'three'],....: 'C' : np.random.randn(8),....: 'D' : np.random.randn(8)})....:In [87]: dfOut[87]:A B C D0 foo one -1.202872 -0.0552241 bar one -1.814470 2.3959852 foo two 1.018601 1.5528253 bar three -0.595447 0.1665994 foo two 1.395433 0.0476095 bar two -0.392670 -0.1364736 foo one 0.007207 -0.5617577 foo three 1.928123 -1.623033

先分组然后应用sum函数:

In [88]: df.groupby('A').sum()Out[88]:C DAbar -2.802588 2.42611foo 3.146492 -0.63958

通过多列分组并生成层次索引,然后应用函数:

In [89]: df.groupby(['A','B']).sum()Out[89]:C DA Bbar one -1.814470 2.395985three -0.595447 0.166599two -0.392670 -0.136473foo one -1.195665 -0.616981three 1.928123 -1.623033two 2.414034 1.600434

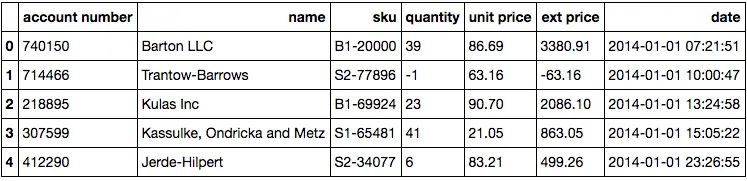

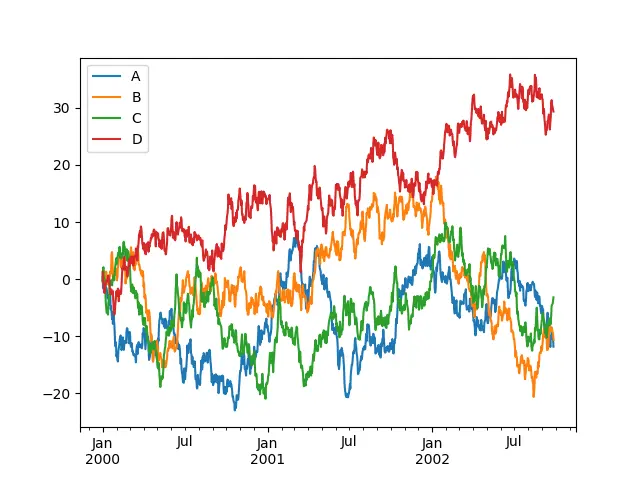

groupby、Grouper和agg函数的使用(重要重要重要!!!)

(1) groupby && Grouper

import pandas as pddf = pd.read_excel("https://github.com/chris1610/pbpython/blob/master/data/sample-salesv3.xlsx?raw=True")df["date"] = pd.to_datetime(df['date'])df.head()

统计’ext price’这个属性在每个月的累和(sum)值,resample 只有在index为date类型的时候才能用:

df.set_index('date').resample('M')['ext price'].sum()

date2014-01-31 185361.662014-02-28 146211.622014-03-31 203921.382014-04-30 174574.112014-05-31 165418.552014-06-30 174089.332014-07-31 191662.112014-08-31 153778.592014-09-30 168443.172014-10-31 171495.322014-11-30 119961.222014-12-31 163867.26Freq: M, Name: ext price, dtype: float64

进一步的,我们想知道每个用户每个月的sum值,那么就需要一个groupby了

# df.set_index('date').groupby('name')['ext price'].resample("M").sum()df.groupby(['name', pd.Grouper(key='date', freq='M')])['ext price'].sum()

name dateBarton LLC 2014-01-31 6177.572014-02-28 12218.032014-03-31 3513.532014-04-30 11474.202014-05-31 10220.172014-06-30 10463.732014-07-31 6750.482014-08-31 17541.462014-09-30 14053.612014-10-31 9351.682014-11-30 4901.142014-12-31 2772.90Cronin, Oberbrunner and Spencer 2014-01-31 1141.752014-02-28 13976.262014-03-31 11691.622014-04-30 3685.442014-05-31 6760.112014-06-30 5379.672014-07-31 6020.302014-08-31 5399.582014-09-30 12693.742014-10-31 9324.372014-11-30 6021.112014-12-31 7640.60Frami, Hills and Schmidt 2014-01-31 5112.342014-02-28 4124.532014-03-31 10397.442014-04-30 5036.182014-05-31 4097.872014-06-30 13192.19...Trantow-Barrows 2014-07-31 11987.342014-08-31 17251.652014-09-30 6992.482014-10-31 10064.272014-11-30 6550.102014-12-31 10124.23White-Trantow 2014-01-31 13703.772014-02-28 11783.982014-03-31 8583.052014-04-30 19009.202014-05-31 5877.292014-06-30 14791.322014-07-31 10242.622014-08-31 12287.212014-09-30 5315.162014-10-31 19896.852014-11-30 9544.612014-12-31 4806.93Will LLC 2014-01-31 20953.872014-02-28 13613.062014-03-31 9838.932014-04-30 6094.942014-05-31 11856.952014-06-30 2419.522014-07-31 11017.542014-08-31 1439.822014-09-30 4345.992014-10-31 7085.332014-11-30 3210.442014-12-31 12561.21Name: ext price, Length: 240, dtype: float64

显然,这种写法多敲了很多次键盘,那么它的好处是啥呢?

首先,逻辑上更加直接,当你敲代码完成以上统计的时候,你首先想到的就是groupby操作,而set_index, resample好像不会立马想到。想到了groupby这个’动作’之后,你就会紧接着想按照哪个key来操作,此时你只需要用字符串,或者Grouper把key定义好就行了。最后使用聚合函数,就得到了结果。所以,从人类的思考角度看,后者更容易记忆。

Grouper里的freq可以方便的改成其他周期参数(resample也可以),比如:

# 按照年度,且截止到12月最后一天统计ext price的sum值df.groupby(['name', pd.Grouper(key='date', freq='A-DEC')])['ext price'].sum()

name dateBarton LLC 2014-12-31 109438.50Cronin, Oberbrunner and Spencer 2014-12-31 89734.55Frami, Hills and Schmidt 2014-12-31 103569.59Fritsch, Russel and Anderson 2014-12-31 112214.71Halvorson, Crona and Champlin 2014-12-31 70004.36Herman LLC 2014-12-31 82865.00Jerde-Hilpert 2014-12-31 112591.43Kassulke, Ondricka and Metz 2014-12-31 86451.07Keeling LLC 2014-12-31 100934.30Kiehn-Spinka 2014-12-31 99608.77Koepp Ltd 2014-12-31 103660.54Kuhn-Gusikowski 2014-12-31 91094.28Kulas Inc 2014-12-31 137351.96Pollich LLC 2014-12-31 87347.18Purdy-Kunde 2014-12-31 77898.21Sanford and Sons 2014-12-31 98822.98Stokes LLC 2014-12-31 91535.92Trantow-Barrows 2014-12-31 123381.38White-Trantow 2014-12-31 135841.99Will LLC 2014-12-31 104437.60Name: ext price, dtype: float64

(2) agg

从0.20.1开始,pandas引入了agg函数,它提供基于列的聚合操作。而groupby可以看做是基于行,或者说index的聚合操作。

从实现上看,groupby返回的是一个DataFrameGroupBy结构,这个结构必须调用聚合函数(如sum)之后,才会得到结构为Series的数据结果。

而agg是DataFrame的直接方法,返回的也是一个DataFrame。当然,很多功能用sum、mean等等也可以实现。但是agg更加简洁, 而且传给它的函数可以是字符串,也可以自定义,参数是column对应的子DataFrame

举个例子:

df[["ext price", "quantity", "unit price"]].agg(['sum', 'mean'])

你还可以针对不同的列使用不同的聚合函数:

df.agg({'ext price': ['sum', 'mean'], 'quantity': ['sum', 'mean'], 'unit price': ['mean']})

自定义函数怎么用呢,也是so easy。比如,我想统计sku中,购买次数最多的产品编号,可以这样做:

# 这里的x是sku对应的columnget_max = lambda x: x.value_counts(dropna=False).index[0]df.agg({'ext price': ['sum', 'mean'],'quantity': ['sum', 'mean'],'unit price': ['mean'],'sku': [get_max]})

重塑(reshape)

stack方法

In [90]: tuples = list(zip(*[['bar', 'bar', 'baz', 'baz',....: 'foo', 'foo', 'qux', 'qux'],....: ['one', 'two', 'one', 'two',....: 'one', 'two', 'one', 'two']]))....:In [91]: index = pd.MultiIndex.from_tuples(tuples, names=['first', 'second'])In [92]: df = pd.DataFrame(np.random.randn(8, 2), index=index, columns=['A', 'B'])In [93]: df2 = df[:4]In [94]: df2Out[94]:A Bfirst secondbar one 0.029399 -0.542108two 0.282696 -0.087302baz one -1.575170 1.771208two 0.816482 1.100230

stack方法用列标签新增一层索引:

In [95]: stacked = df2.stack()In [96]: stackedOut[96]:first secondbar one A 0.029399B -0.542108two A 0.282696B -0.087302baz one A -1.575170B 1.771208two A 0.816482B 1.100230dtype: float64

stack方法的逆操作为unstack,默认解压最后一层:

In [97]: stacked.unstack()Out[97]:A Bfirst secondbar one 0.029399 -0.542108two 0.282696 -0.087302baz one -1.575170 1.771208two 0.816482 1.100230In [98]: stacked.unstack(1)Out[98]:second one twofirstbar A 0.029399 0.282696B -0.542108 -0.087302baz A -1.575170 0.816482B 1.771208 1.100230In [99]: stacked.unstack(0)Out[99]:first bar bazsecondone A 0.029399 -1.575170B -0.542108 1.771208two A 0.282696 0.816482B -0.087302 1.100230

透视表(Pivot table)

In [100]: df = pd.DataFrame({'A' : ['one', 'one', 'two', 'three'] * 3,.....: 'B' : ['A', 'B', 'C'] * 4,.....: 'C' : ['foo', 'foo', 'foo', 'bar', 'bar', 'bar'] * 2,.....: 'D' : np.random.randn(12),.....: 'E' : np.random.randn(12)}).....:In [101]: dfOut[101]:A B C D E0 one A foo 1.418757 -0.1796661 one B foo -1.879024 1.2918362 two C foo 0.536826 -0.0096143 three A bar 1.006160 0.3921494 one B bar -0.029716 0.2645995 one C bar -1.146178 -0.0574096 two A foo 0.100900 -1.4256387 three B foo -1.035018 1.0240988 one C foo 0.314665 -0.1060629 one A bar -0.773723 1.82437510 two B bar -1.170653 0.59597411 three C bar 0.648740 1.167115

可以通过pivot_table方法很轻松的透视数据:

In [102]: pd.pivot_table(df, values='D', index=['A', 'B'], columns=['C'])Out[102]:C bar fooA Bone A -0.773723 1.418757B -0.029716 -1.879024C -1.146178 0.314665three A 1.006160 NaNB NaN -1.035018C 0.648740 NaNtwo A NaN 0.100900B -1.170653 NaNC NaN 0.536826

时间序列(Time Series)

pandas 拥有简单,强大,高效的函数用来处理频率转换中的重采样问题(例如将秒数据转换为5分钟数据)。

In [103]: rng = pd.date_range('1/1/2012', periods=100, freq='S')In [104]: ts = pd.Series(np.random.randint(0, 500, len(rng)), index=rng)In [105]: ts.resample('5Min').sum()Out[105]:2012-01-01 25083Freq: 5T, dtype: int64

时区表示:

In [106]: rng = pd.date_range('3/6/2012 00:00', periods=5, freq='D')In [107]: ts = pd.Series(np.random.randn(len(rng)), rng)In [108]: tsOut[108]:2012-03-06 0.4640002012-03-07 0.2273712012-03-08 -0.4969222012-03-09 0.3063892012-03-10 -2.290613Freq: D, dtype: float64In [109]: ts_utc = ts.tz_localize('UTC')In [110]: ts_utcOut[110]:2012-03-06 00:00:00+00:00 0.4640002012-03-07 00:00:00+00:00 0.2273712012-03-08 00:00:00+00:00 -0.4969222012-03-09 00:00:00+00:00 0.3063892012-03-10 00:00:00+00:00 -2.290613Freq: D, dtype: float64

转换时区:

In [111]: ts_utc.tz_convert('US/Eastern')Out[111]:2012-03-05 19:00:00-05:00 0.4640002012-03-06 19:00:00-05:00 0.2273712012-03-07 19:00:00-05:00 -0.4969222012-03-08 19:00:00-05:00 0.3063892012-03-09 19:00:00-05:00 -2.290613Freq: D, dtype: float64

时区跨度转换:

In [112]: rng = pd.date_range('1/1/2012', periods=5, freq='M')In [113]: ts = pd.Series(np.random.randn(len(rng)), index=rng)In [114]: tsOut[114]:2012-01-31 -1.1346232012-02-29 -1.5618192012-03-31 -0.2608382012-04-30 0.2819572012-05-31 1.523962Freq: M, dtype: float64In [115]: ps = ts.to_period()In [116]: psOut[116]:2012-01 -1.1346232012-02 -1.5618192012-03 -0.2608382012-04 0.2819572012-05 1.523962Freq: M, dtype: float64In [117]: ps.to_timestamp()Out[117]:2012-01-01 -1.1346232012-02-01 -1.5618192012-03-01 -0.2608382012-04-01 0.2819572012-05-01 1.523962Freq: MS, dtype: float64

period和timestamp之间的转换让某些算术函数应用起来非常方便。下面的例子将一个quarterly frequency with year ending in November 转化成 9am of the end of the month following the quarter end:

In [118]: prng = pd.period_range('1990Q1', '2000Q4', freq='Q-NOV')In [119]: ts = pd.Series(np.random.randn(len(prng)), prng)In [120]: ts.index = (prng.asfreq('M', 'e') + 1).asfreq('H', 's') + 9In [121]: ts.head()Out[121]:1990-03-01 09:00 -0.9029371990-06-01 09:00 0.0681591990-09-01 09:00 -0.0578731990-12-01 09:00 -0.3682041991-03-01 09:00 -1.144073Freq: H, dtype: float64

Categoricals

从0.15版开始,DateFrame已经包含了categorical类型

将原始数据转换为categorical类型:

In [123]: df["grade"] = df["raw_grade"].astype("category")In [124]: df["grade"]Out[124]:0 a1 b2 b3 a4 a5 eName: grade, dtype: categoryCategories (3, object): [a, b, e]

重命名categorical类型:

In [125]: df["grade"].cat.categories = ["very good","good","very bad"]

重新排列并新增缺失数据:

In [126]: df["grade"] = df["grade"].cat.set_categories(["very bad", "bad", "medium", "good", "very good"])In [127]: df["grade"]Out[127]:0 very good1 good2 good3 very good4 very good5 very badName: grade, dtype: categoryCategories (5, object): [very bad, bad, medium, good, very good]

排序:

In [128]: df.sort_values(by="grade")Out[128]:id raw_grade grade5 6 e very bad1 2 b good2 3 b good0 1 a very good3 4 a very good4 5 a very good

分组:

In [129]: df.groupby("grade").size()Out[129]:gradevery bad 1bad 0medium 0good 2very good 3dtype: int64

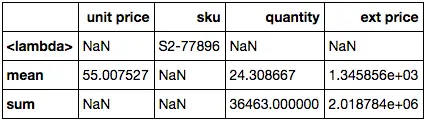

画图

In [130]: ts = pd.Series(np.random.randn(1000), index=pd.date_range('1/1/2000', periods=1000))In [131]: ts = ts.cumsum()In [132]: ts.plot()Out[132]: <matplotlib.axes._subplots.AxesSubplot at 0x10efd5a90>

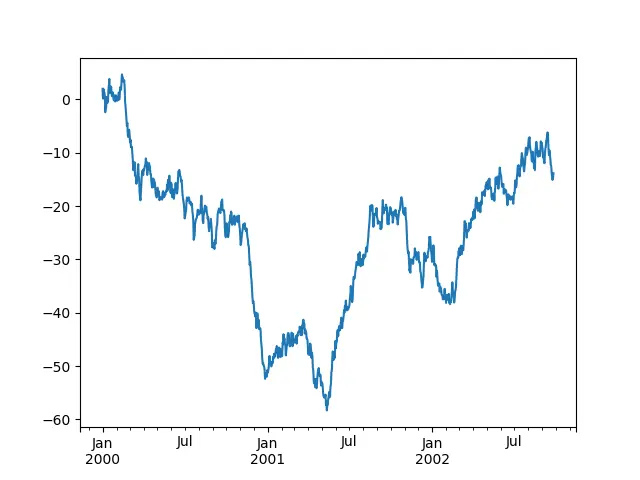

在DataFrame中画出所有列:

In [133]: df = pd.DataFrame(np.random.randn(1000, 4), index=ts.index,.....: columns=['A', 'B', 'C', 'D']).....:In [134]: df = df.cumsum()In [135]: plt.figure(); df.plot(); plt.legend(loc='best')Out[135]: <matplotlib.legend.Legend at 0x112854d90>

文件输入输出获取数据(Getting Data In/Out)

csv

将数据写入一个csv文件:

In [136]: df.to_csv('foo.csv')

读取csv数据文件:

In [137]: pd.read_csv('foo.csv')Out[137]:Unnamed: 0 A B C D0 2000-01-01 0.266457 -0.399641 -0.219582 1.1868601 2000-01-02 -1.170732 -0.345873 1.653061 -0.2829532 2000-01-03 -1.734933 0.530468 2.060811 -0.5155363 2000-01-04 -1.555121 1.452620 0.239859 -1.1568964 2000-01-05 0.578117 0.511371 0.103552 -2.4282025 2000-01-06 0.478344 0.449933 -0.741620 -1.9624096 2000-01-07 1.235339 -0.091757 -1.543861 -1.084753.. ... ... ... ... ...993 2002-09-20 -10.628548 -9.153563 -7.883146 28.313940994 2002-09-21 -10.390377 -8.727491 -6.399645 30.914107995 2002-09-22 -8.985362 -8.485624 -4.669462 31.367740996 2002-09-23 -9.558560 -8.781216 -4.499815 30.518439997 2002-09-24 -9.902058 -9.340490 -4.386639 30.105593998 2002-09-25 -10.216020 -9.480682 -3.933802 29.758560999 2002-09-26 -11.856774 -10.671012 -3.216025 29.369368[1000 rows x 5 columns]

HDF5

写入HDF5:

In [138]: df.to_hdf('foo.h5','df')

读取HDF5文件:

In [139]: pd.read_hdf('foo.h5','df')Out[139]:A B C D2000-01-01 0.266457 -0.399641 -0.219582 1.1868602000-01-02 -1.170732 -0.345873 1.653061 -0.2829532000-01-03 -1.734933 0.530468 2.060811 -0.5155362000-01-04 -1.555121 1.452620 0.239859 -1.1568962000-01-05 0.578117 0.511371 0.103552 -2.4282022000-01-06 0.478344 0.449933 -0.741620 -1.9624092000-01-07 1.235339 -0.091757 -1.543861 -1.084753... ... ... ... ...2002-09-20 -10.628548 -9.153563 -7.883146 28.3139402002-09-21 -10.390377 -8.727491 -6.399645 30.9141072002-09-22 -8.985362 -8.485624 -4.669462 31.3677402002-09-23 -9.558560 -8.781216 -4.499815 30.5184392002-09-24 -9.902058 -9.340490 -4.386639 30.1055932002-09-25 -10.216020 -9.480682 -3.933802 29.7585602002-09-26 -11.856774 -10.671012 -3.216025 29.369368[1000 rows x 4 columns]

Excel

写入excel:

In [140]: df.to_excel('foo.xlsx', sheet_name='Sheet1')

读取Excel:

In [141]: pd.read_excel('foo.xlsx', 'Sheet1', index_col=None, na_values=['NA'])Out[141]:A B C D2000-01-01 0.266457 -0.399641 -0.219582 1.1868602000-01-02 -1.170732 -0.345873 1.653061 -0.2829532000-01-03 -1.734933 0.530468 2.060811 -0.5155362000-01-04 -1.555121 1.452620 0.239859 -1.1568962000-01-05 0.578117 0.511371 0.103552 -2.4282022000-01-06 0.478344 0.449933 -0.741620 -1.9624092000-01-07 1.235339 -0.091757 -1.543861 -1.084753... ... ... ... ...2002-09-20 -10.628548 -9.153563 -7.883146 28.3139402002-09-21 -10.390377 -8.727491 -6.399645 30.9141072002-09-22 -8.985362 -8.485624 -4.669462 31.3677402002-09-23 -9.558560 -8.781216 -4.499815 30.5184392002-09-24 -9.902058 -9.340490 -4.386639 30.1055932002-09-25 -10.216020 -9.480682 -3.933802 29.7585602002-09-26 -11.856774 -10.671012 -3.216025 29.369368[1000 rows x 4 columns]