Reference: https://www.cnblogs.com/noah-luo/

Document: 📎k8s课程.html

1: Installing the k8s cluster

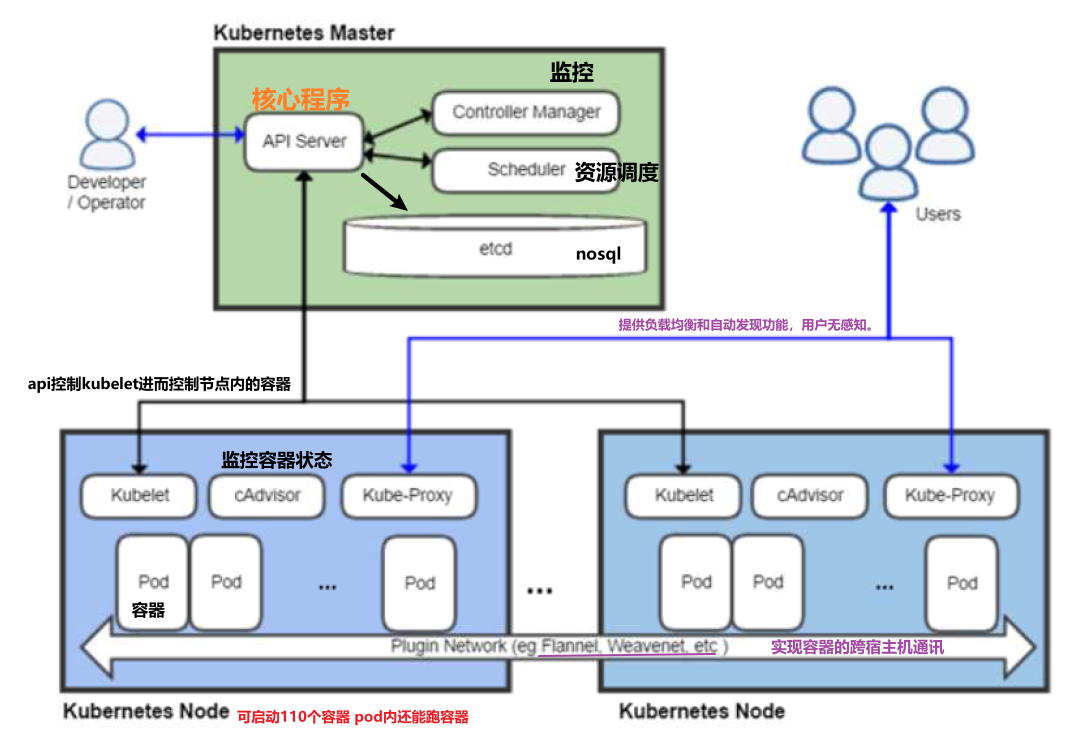

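1.1 k8s architecture

- Basic architecture (Jianshu):

- Besides the core components, the following add-ons are recommended:

| Component | Description |
| --- | :---: |
| kube-dns | Provides DNS for the whole cluster |
| Ingress Controller | Provides an external entry point for services |
| Heapster/Prometheus | Provides resource monitoring |
| Dashboard | Provides a GUI |
| Federation | Provides clusters that span availability zones |
| Fluentd-elasticsearch | Provides cluster log collection, storage, and querying |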

1.2: Set IP addresses, hostnames, and hosts resolution

10.0.0.11 k8s-master 1G

10.0.0.12 k8s-node-1 1G

10.0.0.13 k8s-node-2 1G

Every node needs these entries in its hosts file.

1.3: Install etcd on the master node

yum install etcd -y

vim /etc/etcd/etcd.conf

Line 6: ETCD_LISTEN_CLIENT_URLS="http://0.0.0.0:2379"

Line 21: ETCD_ADVERTISE_CLIENT_URLS="http://10.0.0.11:2379"

systemctl start etcd.service

systemctl enable etcd.service

etcdctl set testdir/testkey0 0

etcdctl get testdir/testkey0

etcdctl -C http://10.0.0.11:2379 cluster-health

etcd natively supports clustering.

Exercise: deploy an etcd cluster with three nodes.

1.4: Install kubernetes on the master node

yum install kubernetes-master.x86_64 -y

vim /etc/kubernetes/apiserver

Line 8: KUBE_API_ADDRESS="--insecure-bind-address=0.0.0.0"

Line 11: KUBE_API_PORT="--port=8080"

Line 14: KUBELET_PORT="--kubelet-port=10250"

Line 17: KUBE_ETCD_SERVERS="--etcd-servers=http://10.0.0.11:2379" # for an etcd cluster, append more endpoints separated by commas

Line 23: KUBE_ADMISSION_CONTROL="--admission-control=NamespaceLifecycle,NamespaceExists,LimitRanger,SecurityContextDeny,ResourceQuota" # remove ...account from the default value

vim /etc/kubernetes/config

Line 22: KUBE_MASTER="--master=http://10.0.0.11:8080"

systemctl enable kube-apiserver.service

systemctl restart kube-apiserver.service

systemctl enable kube-controller-manager.service

systemctl restart kube-controller-manager.service

systemctl enable kube-scheduler.service

systemctl restart kube-scheduler.service

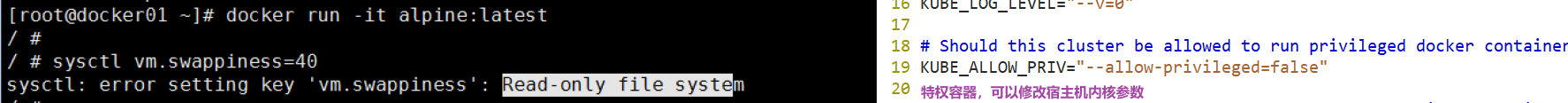

Ps: /etc/kubernetes/config

- Log output

15 # journal message level, 0 is debug

16 KUBE_LOG_LEVEL="--v=0" # level 0 helps troubleshooting; change it in production because it uses a lot of disk

- Privileged containers (generally not recommended)

19 KUBE_ALLOW_PRIV="--allow-privileged=false"

Check that the services are running correctly

[root@k8s-master ~]# kubectl get componentstatus

NAME STATUS MESSAGE ERROR

scheduler Healthy ok

controller-manager Healthy ok

etcd-0 Healthy {"health":"true"}

1.5: Install kubernetes on the node machines

yum install kubernetes-node.x86_64 -y

vim /etc/kubernetes/config

Line 22: KUBE_MASTER="--master=http://10.0.0.11:8080"

vim /etc/kubernetes/kubelet

Line 5: KUBELET_ADDRESS="--address=0.0.0.0"

Line 8: KUBELET_PORT="--port=10250"

Line 11: KUBELET_HOSTNAME="--hostname-override=10.0.0.12"

Line 14: KUBELET_API_SERVER="--api-servers=http://10.0.0.11:8080"

systemctl enable kubelet.service

systemctl restart kubelet.service

systemctl enable kube-proxy.service

systemctl restart kube-proxy.service

Check from the master node

[root@k8s-master ~]# kubectl get nodes

NAME STATUS AGE

10.0.0.12 Ready 6m

10.0.0.13 Ready 3s

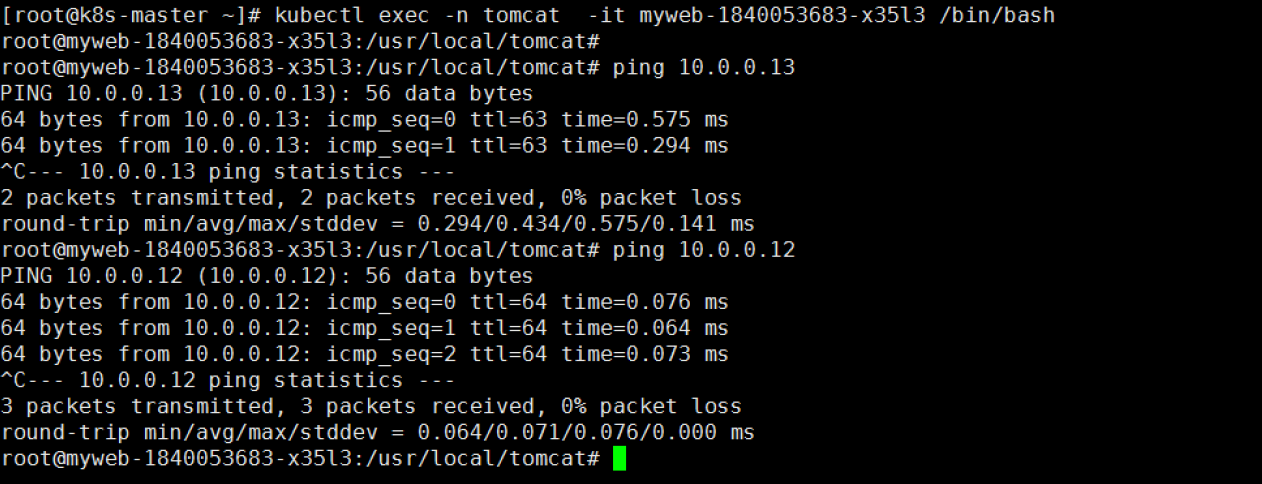

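1.6: Configure the flannel network on all nodes

- Network overview:

Flannel, from CoreOS, is one solution for cross-host container communication. It supports three backends: UDP, VXLAN, and host-gw.

udp mode: packets are encapsulated and decapsulated in user space through the flannel0 device. There is no native kernel support, context switching is heavy, and performance is very poor.

vxlan mode: packets are encapsulated and decapsulated by flannel.1 with native kernel support, so performance is good.

host-gw mode: needs no intermediate device such as flannel.1; each host is used directly as the next hop for the other hosts' subnets, which gives the best performance. Route tables must be maintained, and it cannot be used in cloud environments.

host-gw loses roughly 10% in performance, while every other network solution built on a VXLAN-style "tunnel" mechanism loses roughly 20%~3

- Install the service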

yum install flannel -y

sed -i 's#http://127.0.0.1:2379#http://10.0.0.11:2379#g' /etc/sysconfig/flanneld

### master node:

[root@k8s-master ~]# etcdctl mk /atomic.io/network/config '{"Network":"172.18.0.0/16","Backend": {"Type": "vxlan"}}'

systemctl enable flanneld.service

systemctl restart flanneld.service

### node machines:

systemctl enable flanneld.service

systemctl restart flanneld.service

systemctl restart docker

systemctl restart kubelet.service

systemctl restart kube-proxy.service

vim /usr/lib/systemd/system/docker.service

# add one line under the [Service] section

ExecStartPost=/usr/sbin/iptables -P FORWARD ACCEPT

systemctl daemon-reload

systemctl restart docker
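To make the addressing concrete, here is a small Python sketch of how the 172.18.0.0/16 flannel network can be split into per-host subnets. It assumes flannel's default per-host subnet length of /24, since the config above does not set SubnetLen. The helper name `host_subnets` is made up for illustration, and real flannel leases subnets through etcd rather than handing them out in order.

```python
import ipaddress


def host_subnets(network: str, subnet_len: int = 24, count: int = 3):
    """Return the first `count` per-host subnets carved out of `network`."""
    net = ipaddress.ip_network(network)
    blocks = net.subnets(new_prefix=subnet_len)  # generator of /subnet_len blocks
    return [str(next(blocks)) for _ in range(count)]


if __name__ == "__main__":
    # One subnet per machine: master, node-1, node-2 (order is illustrative only).
    for subnet in host_subnets("172.18.0.0/16"):
        print(subnet)
```

Each docker daemon then hands out container addresses from the subnet leased to its own host, which is why docker has to be restarted after flanneld.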

1.7: Use the master as the image registry (to be upgraded to harbor later)

# configure the registries on all nodes:

vi /etc/docker/daemon.json

{

"registry-mirrors": ["https://registry.docker-cn.com"],

"insecure-registries": ["10.0.0.11:5000"]

}

systemctl restart docker

# set up the image registry on the master:

yum install docker -y

systemctl enable docker

systemctl restart docker

# upload the image archive and run it:

[root@k8s-master ~]# docker load -i docker-registry.tar.gz

[root@k8s-master ~]# docker run -d -p 5000:5000 --restart=always --name registry -v /opt/myregistry:/var/lib/registry registry

# test:

[root@k8s-node-1 ~]# docker tag alpine:latest 10.0.0.11:5000/alpine:latest

[root@k8s-node-1 ~]# docker push 10.0.0.11:5000/alpine:latest

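2: What is k8s, and what can it do?

k8s is a management tool for docker clusters.

k8s is a container orchestration tool.

2.1 Core features of k8s

Self-healing: restarts failed containers; replaces and reschedules the containers of a node that becomes unavailable; kills containers that do not respond to user-defined health checks; and does not advertise a container to clients until it is ready to serve.

Elastic scaling: monitors the CPU load of the containers. If the average rises above 80%, the number of containers is increased; if it falls below 10%, the number is decreased.

Service discovery and load balancing: applications do not need to be modified to use an unfamiliar service discovery mechanism. Kubernetes gives containers their own IP addresses and a single DNS name for a group of containers, and can load-balance across them.

Rolling upgrade and one-click rollback: Kubernetes rolls out changes to an application or its configuration gradually while monitoring application health, so that it never terminates all instances at the same time. If something goes wrong, Kubernetes rolls the change back for you, drawing on a growing ecosystem of deployment solutions.

Secret configuration management: for example, the database account and password used inside a web container (a test-database password).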

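2.2 History of k8s

2014: the docker container orchestration tool is started as a project

July 2015: kubernetes 1.0 is released and the project joins the CNCF as an incubating project

2016: kubernetes beats its two rivals, docker swarm and mesos marathon; version 1.2

2017: 1.5 - 1.9

2018: k8s graduates from the CNCF; 1.10, 1.11, 1.12

2019: 1.13, 1.14, 1.15, 1.16, 1.17

cncf: Cloud Native Computing Foundation, the incubator

kubernetes (k8s): Greek for helmsman or pilot; the leader in container orchestration.

It builds on Google's 15 years of experience running containers on the borg container management platform; kubernetes is borg rebuilt in golang.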

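2.3 Ways to install k8s

1. yum install: version 1.5; the easiest to get working and the best suited to learning

2. Build from source: the hardest; can install the latest version

3. Binary install: many steps (a production install method); can install the latest version; usually automated with shell, ansible, or saltstack

4. kubeadm: the easiest install (a production install method); needs network access; can install the latest version

5. minikube: single-machine version, suited to developers who want to try k8s.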

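2.4 k8s use cases

k8s is best suited to running microservice projects!

### Earlier:

# MVC architecture: the development architecture of early applications

# Java microservices: the development architecture of current applications

1. dubbo microservices

2. spring cloud microservices

- docker solves fast deployment and environment consistency.

- k8s solves the problem of managing docker.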

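3: Commonly used k8s resources

3.1 Creating a pod resource ✨

A pod is the smallest resource unit.

# The pod abstraction exists to support k8s's advanced features. Its own resource usage is small enough to ignore, and it is started together with its containers.

Main parts of a k8s yaml file

# Any k8s resource can be defined by a yaml manifest

apiVersion: v1 # API version

kind: Pod # resource type

metadata: # attributes

spec: # details: how the resource should be started

k8s_pod.yml

vi k8s_pod.yml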

apiVersion: v1
kind: Pod
metadata:
  name: nginx
  labels:
    app: web
spec:
  containers:
    - name: nginx
      image: 10.0.0.11:5000/nginx:1.13
      ports:
        - containerPort: 80

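kubectl create -f k8s_pod.yml # create

kubectl get pod # list

NAME READY STATUS RESTARTS AGE

nginx 0/1 ContainerCreating 0 31s

If the image is not in the registry yet, fetch it first (prepare it yourself):

wget http://192.168.14.251/file/docker_nginx1.13.tar.gz # intranet resource

docker load -i docker_nginx1.13.tar.gz

docker tag docker.io/nginx:1.13 10.0.0.11:5000/nginx:1.13

docker push 10.0.0.11:5000/nginx:1.13

Check again whether the pod has started:

kubectl get pod -o wide

It has not started, so look at the details:

kubectl describe pod nginx

The events below show that the pod infrastructure image is being pulled from the Red Hat registry and fails on a missing certificate. That overseas source is also too slow, so switch to an image pulled from docker hub.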

51m 51m 1 {default-scheduler } Normal Scheduled Successfully assigned nginx to 10.0.0.13

51m 45m 6 {kubelet 10.0.0.13} Warning FailedSync Error syncing pod, skipping: failed to "StartContainer" for "POD" with ErrImagePull: "image pull failed for registry.access.redhat.com/rhel7/pod-infrastructure:latest, this may be because there are no credentials on this request. details: (open /etc/docker/certs.d/registry.access.redhat.com/redhat-ca.crt: no such file or directory)"

51m 43m 33 {kubelet 10.0.0.13} Warning FailedSync Error syncing pod, skipping: failed to "StartContainer" for "POD" with ImagePullBackOff: "Back-off pulling image \"registry.access.redhat.com/rhel7/pod-infrastructure:latest\""

Prepare the pod-infrastructure image

wget http://192.168.14.251/file/pod-infrastructure-latest.tar.gz # can also be found on docker hub

docker load -i pod-infrastructure-latest.tar.gz

docker tag docker.io/tianyebj/pod-infrastructure:latest 10.0.0.11:5000/pod-infrastructure:latest

docker push 10.0.0.11:5000/pod-infrastructure:latest

On the node: kubectl get pod -o wide shows that the pod was scheduled to node-2, so edit the kubelet configuration there and restart the service.

vim /etc/kubernetes/kubelet

# pod infrastructure container

KUBELET_POD_INFRA_CONTAINER="--pod-infra-container-image=10.0.0.11:5000/pod-infrastructure:latest"

systemctl restart kubelet.service

> Pod resource: made up of at least two containers, the pod infrastructure container plus the business containers (at most 1+4)

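# What a pod is

A pod is the equivalent of a logical host; every pod has its own IP address.

Containers inside a pod share the same IP and port space.

By default each container's file system is fully isolated from the other containers. Containers in the same pod can talk to each other over 127.0.0.1.

Pod manifest 2: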

apiVersion: v1
kind: Pod
metadata:
  name: test
  labels:
    app: web
spec:
  containers:
    - name: nginx
      image: 10.0.0.11:5000/nginx:1.13
      ports:
        - containerPort: 80
    - name: alpine
      image: 10.0.0.11:5000/alpine:latest
      command: ["sleep","1000"]

A pod is the smallest resource unit in k8s.

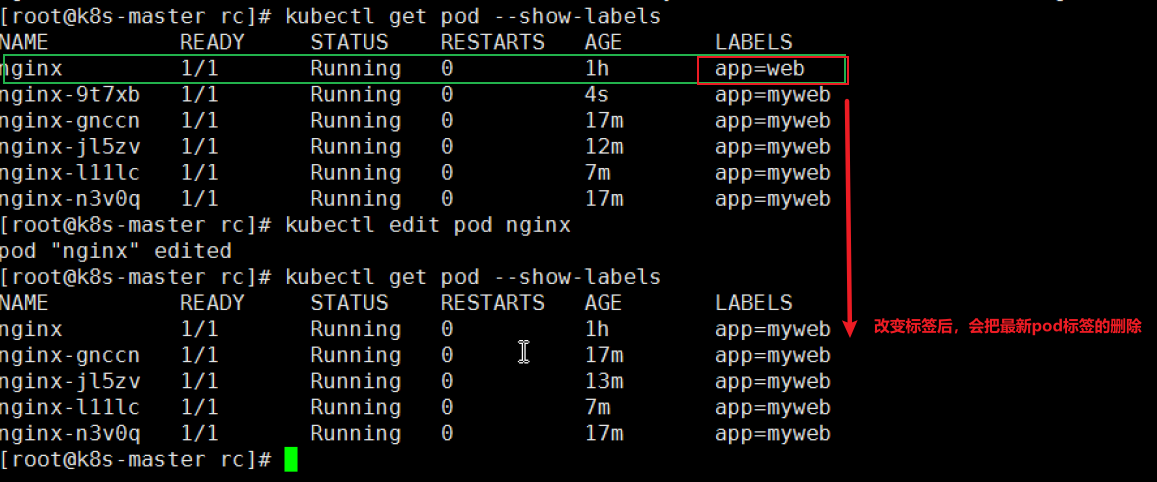

3.2 ReplicationController resource

rc: keeps the specified number of pods alive at all times. An rc is tied to its pods through a label selector.
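The label-selector relationship can be illustrated with a short Python sketch. It assumes the equality-based selectors an rc uses; `selector_matches` and `pods_to_create` are hypothetical helper names, not part of any Kubernetes client library.

```python
def selector_matches(selector: dict, labels: dict) -> bool:
    """True when every key/value pair in the selector appears in the pod labels."""
    return all(labels.get(key) == value for key, value in selector.items())


def pods_to_create(replicas: int, selector: dict, pods: list) -> int:
    """How many pods the rc still has to start (negative: how many to delete)."""
    matching = [labels for labels in pods if selector_matches(selector, labels)]
    return replicas - len(matching)


if __name__ == "__main__":
    # Labels of the pods currently running; only two carry app=myweb.
    running = [{"app": "myweb"}, {"app": "myweb"}, {"app": "web"}]
    print(pods_to_create(5, {"app": "myweb"}, running))
```

A pod whose labels stop matching the selector no longer counts toward the replica total, so the rc starts a replacement for it.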

Common operations on k8s resources:

# create, delete, query, modify:

kubectl create -f xxx.yaml

kubectl delete pod nginx (or: kubectl delete -f xxx.yaml)

kubectl get pod (or: kubectl get rc)

kubectl describe pod nginx

kubectl edit pod nginx

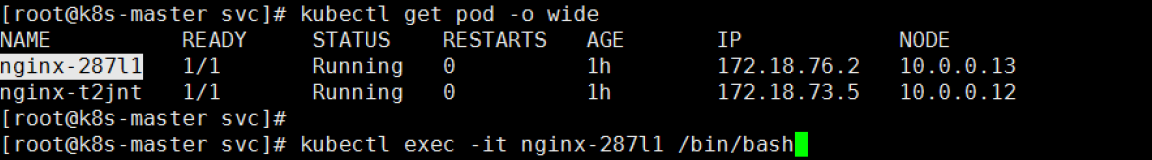

- Enter a pod:

- Create an rc

apiVersion: v1
kind: ReplicationController
metadata:
  name: nginx
spec:
  replicas: 5 # five replicas
  selector: # label selector
    app: myweb
  template: # pod template
    metadata:
      labels:
        app: myweb
    spec: # how to start: image, ports, start command, and so on
      containers:
        - name: myweb
          image: 10.0.0.11:5000/nginx:1.13
          ports:
            - containerPort: 80

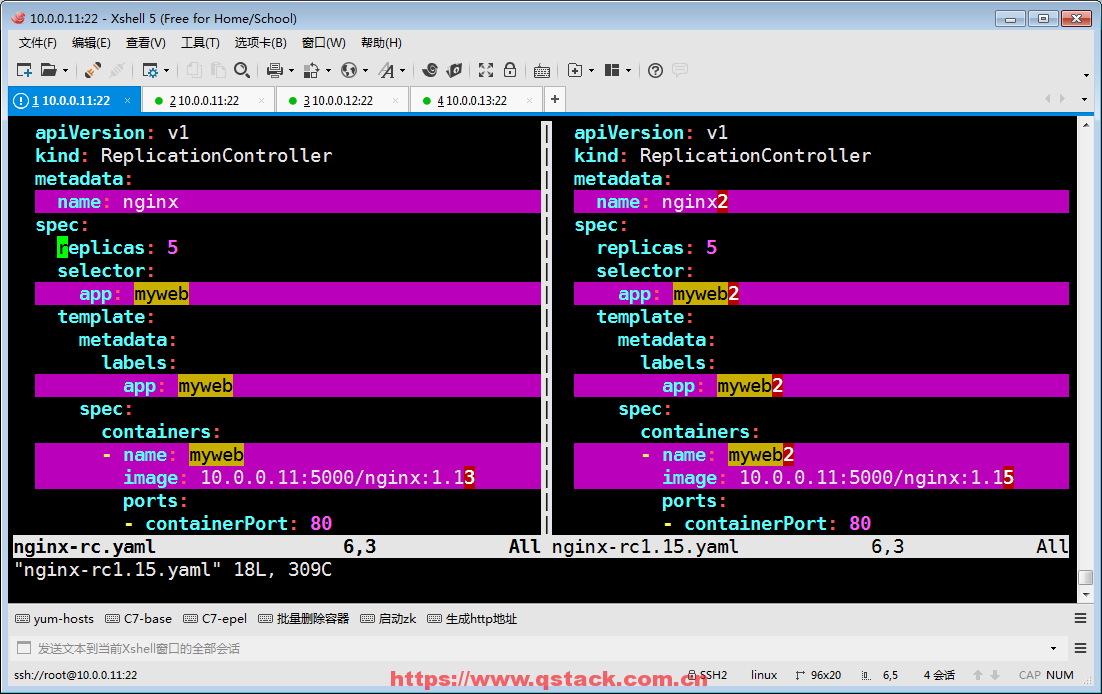

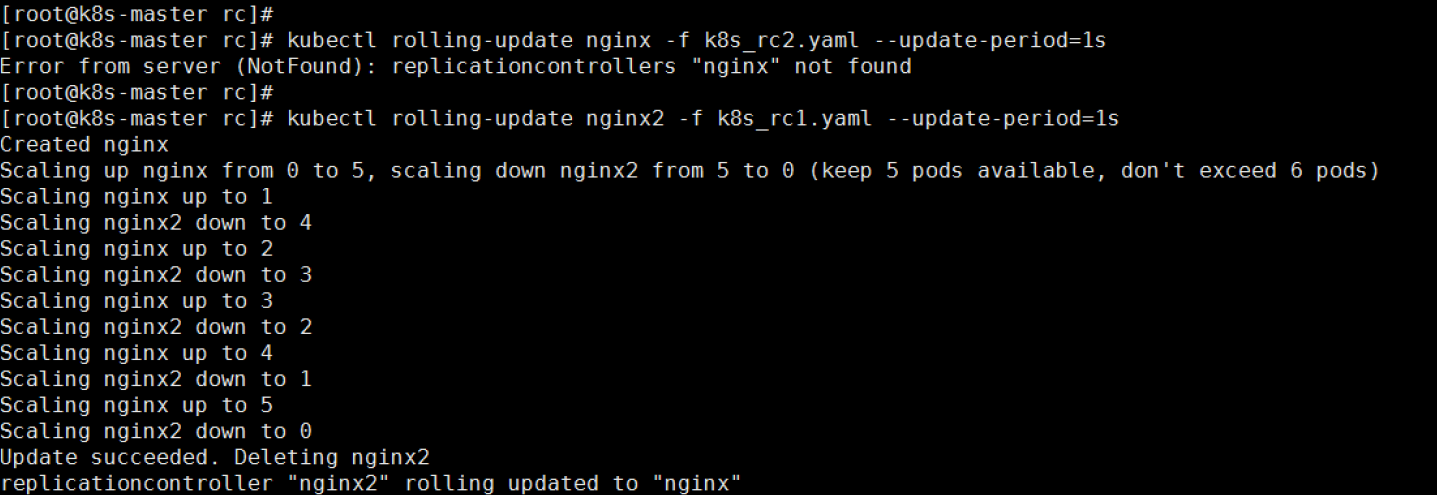

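Rolling upgrade of an rc: create a new file nginx-rc1.15.yaml

# For reference only:

Upgrade

kubectl rolling-update nginx -f nginx-rc1.15.yaml --update-period=10s

Rollback

kubectl rolling-update nginx2 -f nginx-rc.yaml --update-period=1s

- Prepare the images for the upgrade and the downgrade beforehand: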

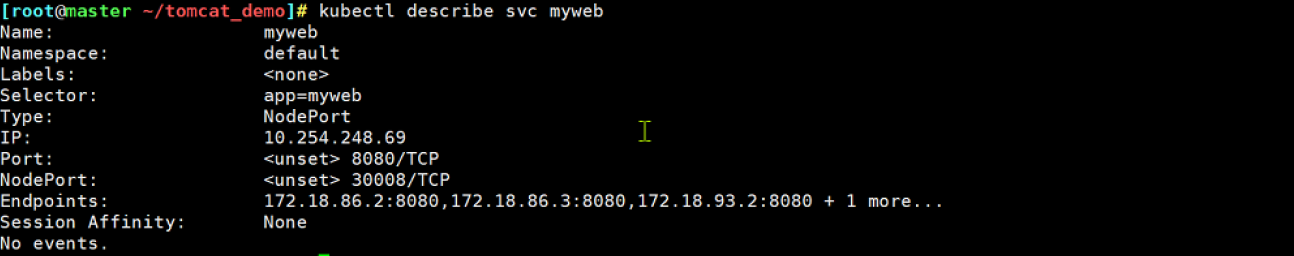

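3.3 service (svc) resource

A service exposes ports for pods.

Create a service

apiVersion: v1
kind: Service # svc for short
metadata:
  name: myweb
spec:
  type: NodePort # the default is ClusterIP
  ports:
    - port: 80 # port on the cluster IP
      nodePort: 30000 # port on the node
      targetPort: 80 # port on the pod
  selector:
    app: myweb2

Services provide automatic service discovery.

Services provide load balancing.

- Common commands:

# change the rc's replica count

kubectl scale rc nginx --replicas=2

# enter a pod's container

kubectl exec -it pod_name /bin/bash

Change the nodePort range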

vim /etc/kubernetes/apiserver

KUBE_API_ARGS="--service-node-port-range=3000-50000"
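As a rough illustration of what this flag changes, the Python sketch below checks whether a nodePort falls inside a configured range. The default range of 30000-32767 is the Kubernetes default; the function names are hypothetical, and the real validation happens inside the apiserver.

```python
def parse_port_range(value: str) -> range:
    """Parse a 'low-high' string such as '3000-50000' into an inclusive range."""
    low, high = (int(part) for part in value.split("-"))
    if not 0 < low <= high <= 65535:
        raise ValueError(f"invalid port range: {value}")
    return range(low, high + 1)


def node_port_allowed(port: int, port_range: str = "30000-32767") -> bool:
    """True when `port` may be used as a nodePort under `port_range`."""
    return port in parse_port_range(port_range)


if __name__ == "__main__":
    print(node_port_allowed(3000))                 # default range
    print(node_port_allowed(3000, "3000-50000"))   # range configured above
```

With the wider range, low ports such as 3000 become valid nodePort values, at the cost of possible clashes with other services listening on the nodes.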

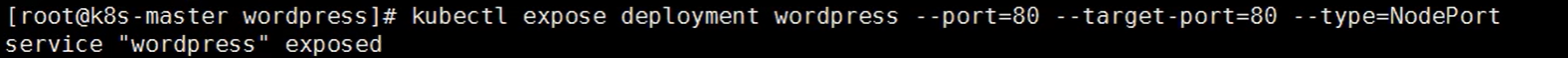

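Create a service from the command line

kubectl expose rc nginx --type=NodePort --port=80

By default a service uses iptables for load balancing. From k8s 1.8 on, lvs (ipvs) is recommended; it is a layer-4 load balancer that works at the transport layer (tcp, udp).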

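3.4 deployment resource ✨

A rolling upgrade of an rc interrupts access to the service (reason: the upgrade changes the labels, and the service's selector has to be edited by hand).

So k8s introduced the deployment resource to replace rc.

# rc versus deployment:

In common: both control the number of pods, both support rolling upgrades, and both are tied to pods through a label selector.

Differences: an rc upgrade needs a yaml file, while a change to a deployment's configuration takes effect immediately; a deployment upgrade does not interrupt the service.

- Create a deployment

Parameters explained:

apiVersion: extensions/v1beta1 # required: API version, for example v1
kind: Deployment # required: Pod/ReplicationController/Deployment
metadata:
  name: nginx # required: name of the deployment
spec:
  replicas: 3
  strategy:
    rollingUpdate: # rolling upgrade
      maxSurge: 1 # start at most 1 pod above the desired count
      maxUnavailable: 1 # at most 1 pod may be unavailable
    type: RollingUpdate
  minReadySeconds: 30 # a new pod must stay ready for 30s before it counts as available
  template:
    metadata:
      labels:
        app: nginx # custom label
    spec:
      containers:
        - name: nginx # container name
          image: 10.0.0.11:5000/nginx:1.13 # container image
          ports:
            - containerPort: 80
          resources:
            limits:
              cpu: 100m
            requests:
              cpu: 100m

Ps: with a rolling upgrade, access is not interrupted.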
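The effect of maxSurge and maxUnavailable can be worked out with a few lines of Python. This is a sketch that only handles absolute numbers (Kubernetes also accepts percentages), and `rolling_update_bounds` is an illustrative name.

```python
def rolling_update_bounds(replicas: int, max_surge: int, max_unavailable: int):
    """Return (most pods that may exist, fewest pods that must stay available)."""
    max_total = replicas + max_surge
    min_available = replicas - max_unavailable
    return max_total, min_available


if __name__ == "__main__":
    # Values from the deployment above: replicas=3, maxSurge=1, maxUnavailable=1.
    print(rolling_update_bounds(3, 1, 1))
```

During the upgrade the pod count therefore moves between these two bounds, which is why some pods are always left to answer requests.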

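### Upgrading and rolling back a deployment

# create a deployment from the command line

kubectl run nginx --image=10.0.0.11:5000/nginx:1.13 --replicas=3 --record

# upgrade to a specific version from the command line; this is the preferred way to control versions

kubectl set image deployment nginx nginx=10.0.0.11:5000/nginx:1.15

# list all revisions of the deployment

kubectl rollout history deployment nginx

Roll the deployment back to the previous revision

kubectl rollout undo deployment nginx

Roll the deployment back to a specific revision

kubectl rollout undo deployment nginx --to-revision=2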

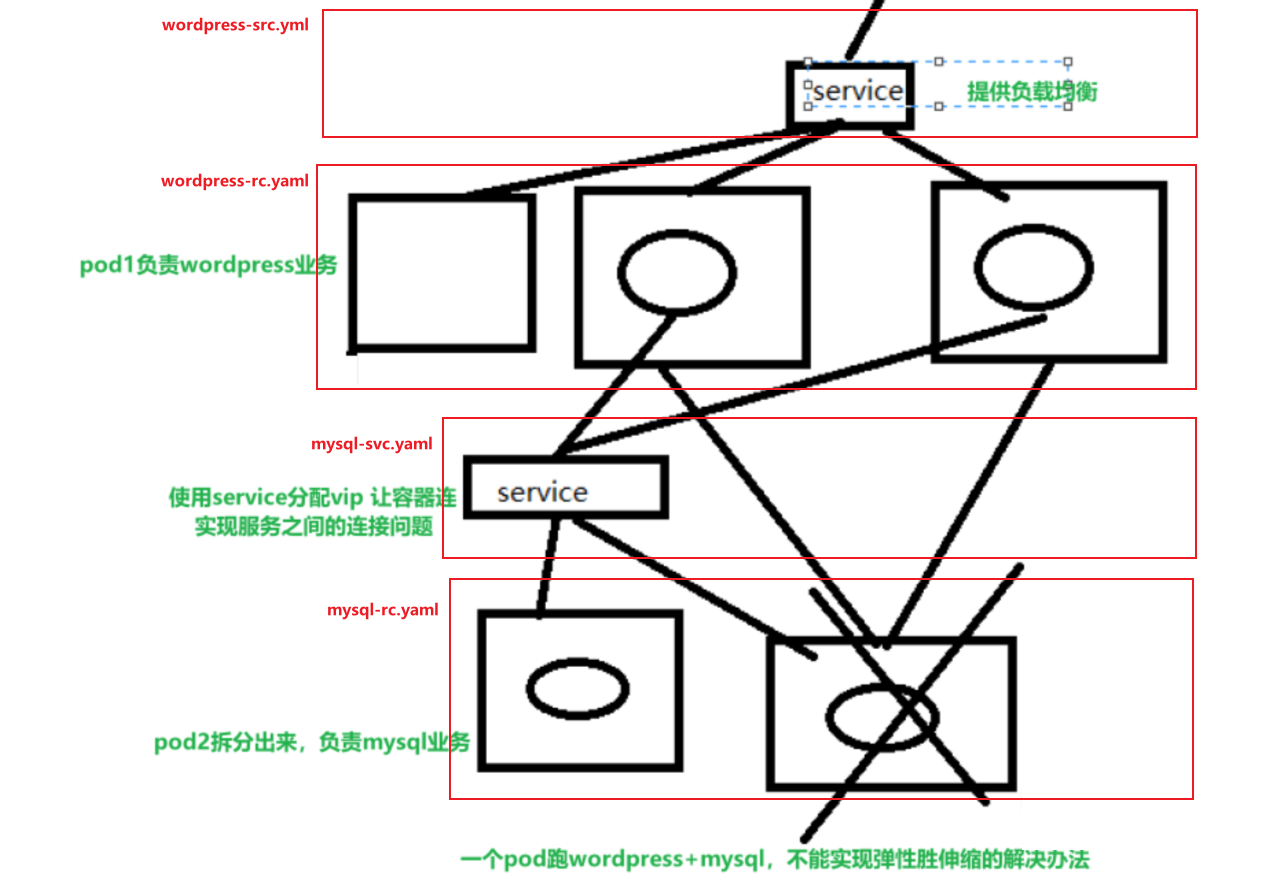

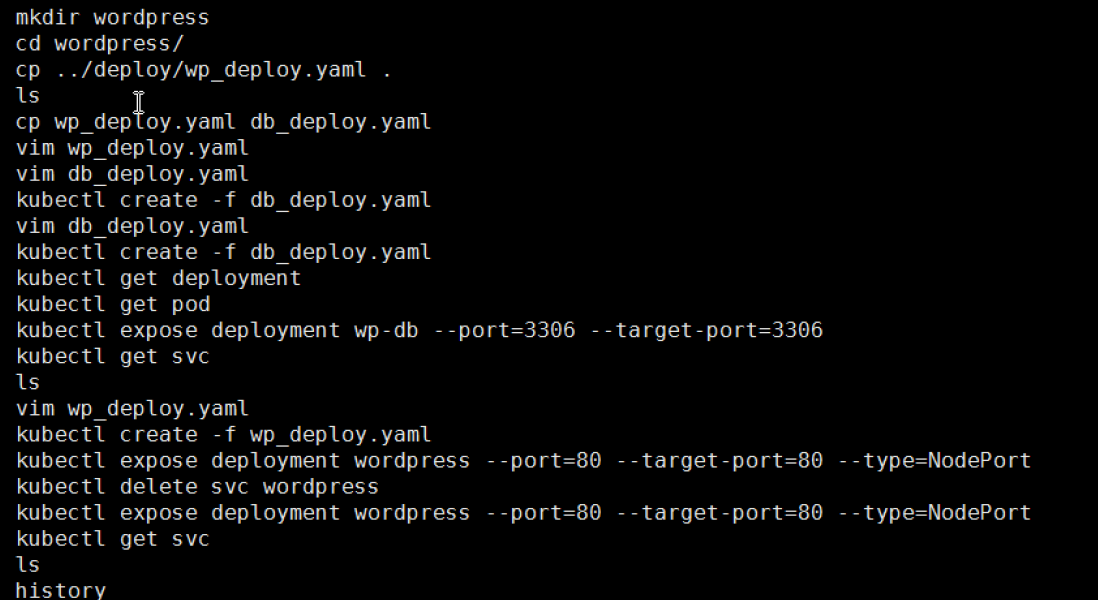

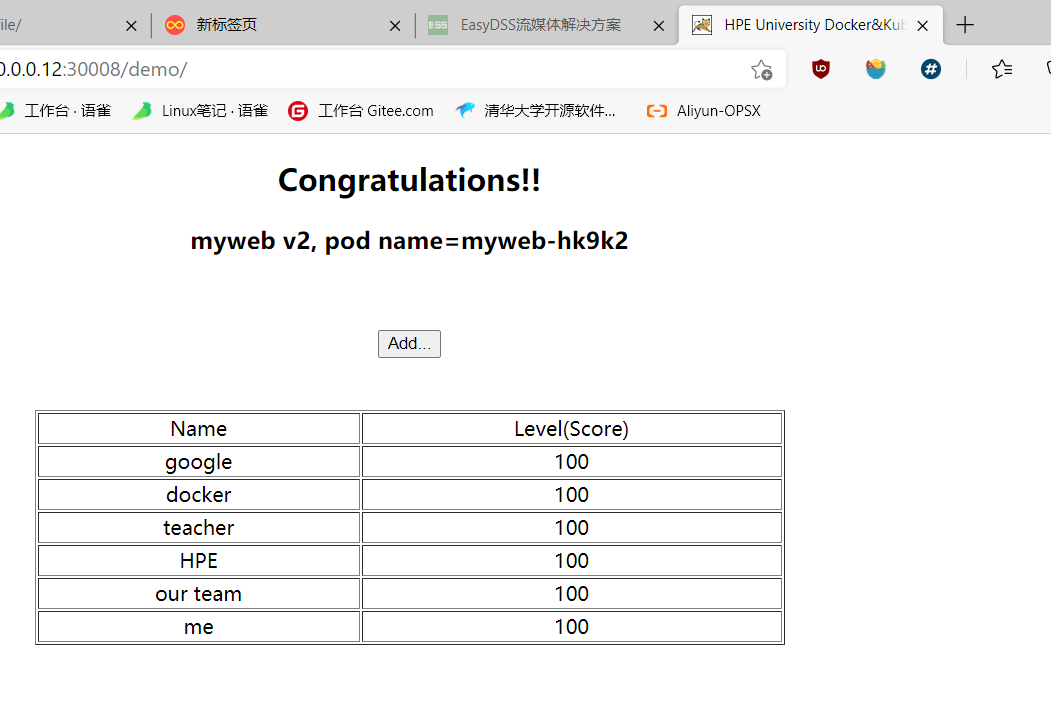

3.5 tomcat+mysql ✨

- wordpress practice example

In k8s, containers reach each other through the service's VIP address!

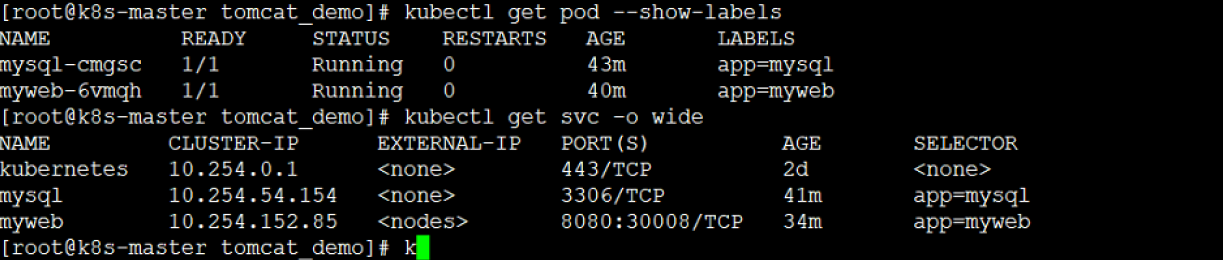

- tomcat + mysql 📎tomcat_demo.zip

Initialize the environment:

kubectl delete svc myweb # the earlier examples used this svc, and it would conflict

Download the images and push them to the private registry:

wget http://192.168.14.251/file/tomcat-app-v2.tar.gz

wget http://192.168.14.251/file/docker-mysql-5.7.tar.gz

docker load -i docker-mysql-5.7.tar.gz

docker load -i tomcat-app-v2.tar.gz

docker images

docker tag docker.io/kubeguide/tomcat-app:v2 10.0.0.11:5000/tomcat-app:v2

docker push 10.0.0.11:5000/tomcat-app:v2

docker images

docker tag docker.io/mysql:5.7 10.0.0.11:5000/mysql:5.7

docker push 10.0.0.11:5000/mysql:5.7

Create the rc and svc:

unzip tomcat_demo.zip

ll

cd tomcat_demo/

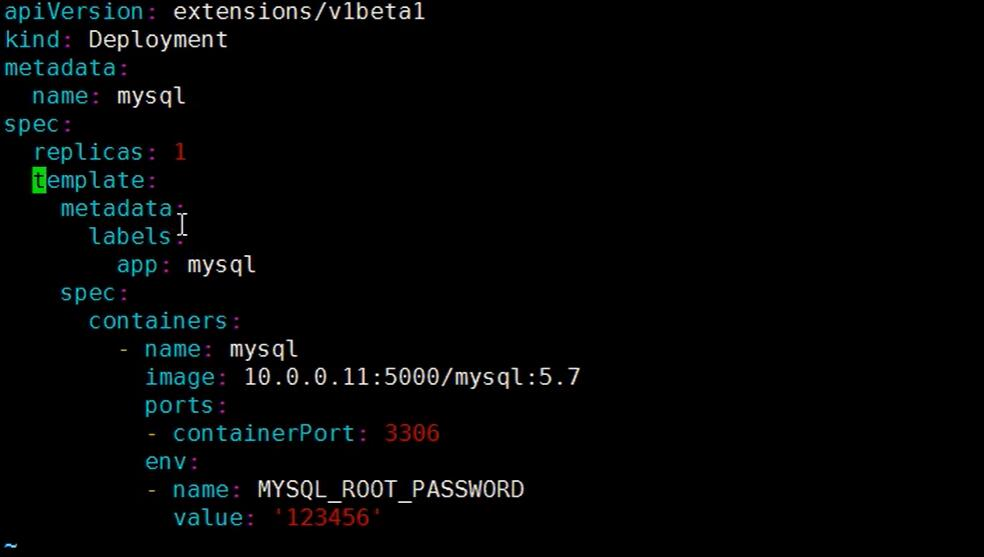

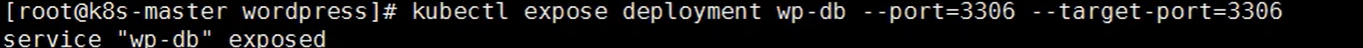

107 cat mysql-rc.yml #由于tomcat-app镜像连接数据库账号密码固定了,所以要注意root:123456 108 kubectl create -f mysql-rc.yml 109 cat mysql-svc.yml 110 kubectl create -f mysql-svc.yml

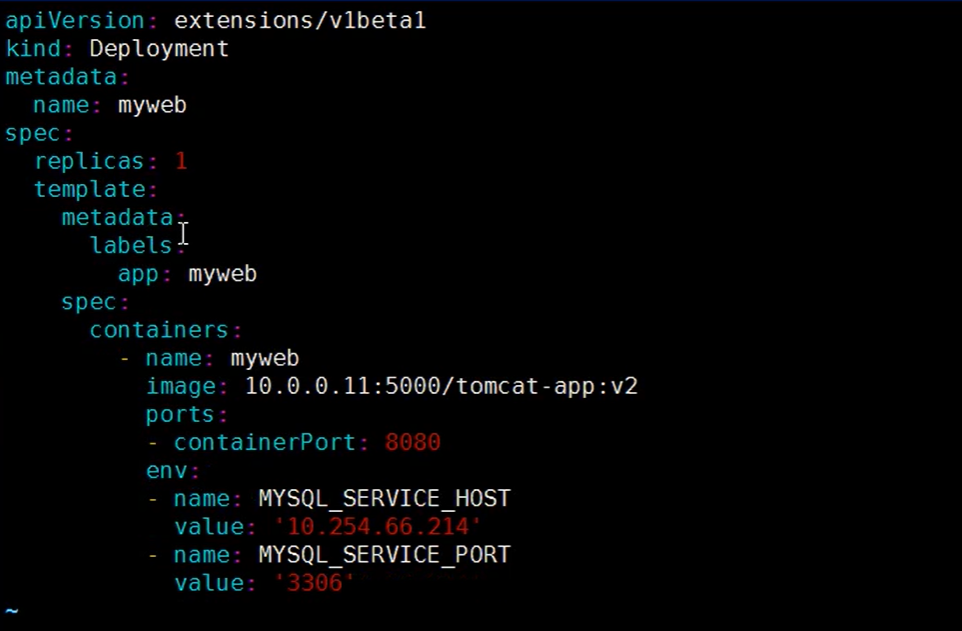

126 vim tomcat-rc.yml #修改vip连接地址 127 kubectl create -f tomcat-rc.yml 128 kubectl create -f tomcat-svc.yml

Ps:配置错误 删除对应svc/rc

kubectl delete svc mysql/tomcat

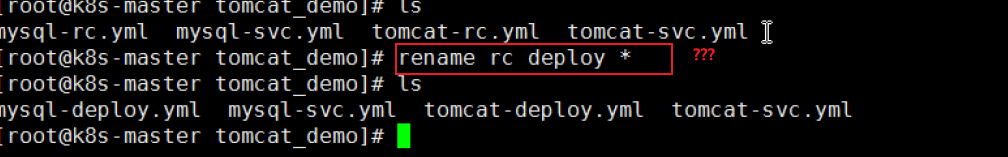

**将RC修改为deployment类型:**

vim mysql-rc-chg-deploy.yaml<br />

vim tomcat-rc-chag-deploy.yaml<br />

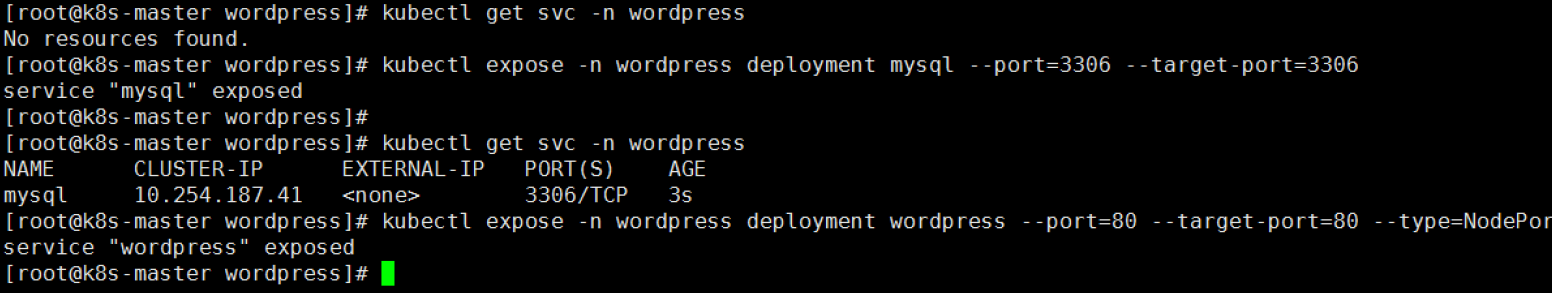

Ps:命令行创建svc<br /><br /><br />svc 持久化:

```less
[root@k8s-master wordpress]# kubectl get svc -n wordpress wordpress -o yaml >wordpress-svc.yaml

# create the resources:
kubectl create -f mysql-deploy.yaml
kubectl create -f tomcat-deploy.yaml
```

K8S pod status analysis

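ImagePullBackOff: retrying the image pull

InvalidImageName: the image name cannot be parsed

ImageInspectError: the image cannot be verified

RegistryUnavailable: the image registry cannot be reached

ErrImagePull: generic image pull error

ErrImageNeverPull: the pull policy forbids pulling the image

CreateContainerError: failed to create the container

RunContainerError: failed to start the container

CreateContainerConfigError: cannot create the container configuration used by the kubelet

CrashLoopBackOff: the container exited and the kubelet is restarting it

m.internalLifecycle.PreStartContainer: error while running a hook

PostStartHookError: error while running a hook

ContainersNotInitialized: the containers have not finished initializing

ContainersNotReady: the containers are not ready yet

ContainerCreating: the container is being created

PodInitializing: the pod is initializing

DockerDaemonNotReady: docker has not fully started

NetworkPluginNotReady: the network plugin has not fully started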

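Everyday troubleshooting commands:

- View error messages:

- View detailed logs:

Check whether the resources' label selectors match:

View the yaml of a given label selector directly

See which resources a label is associated with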

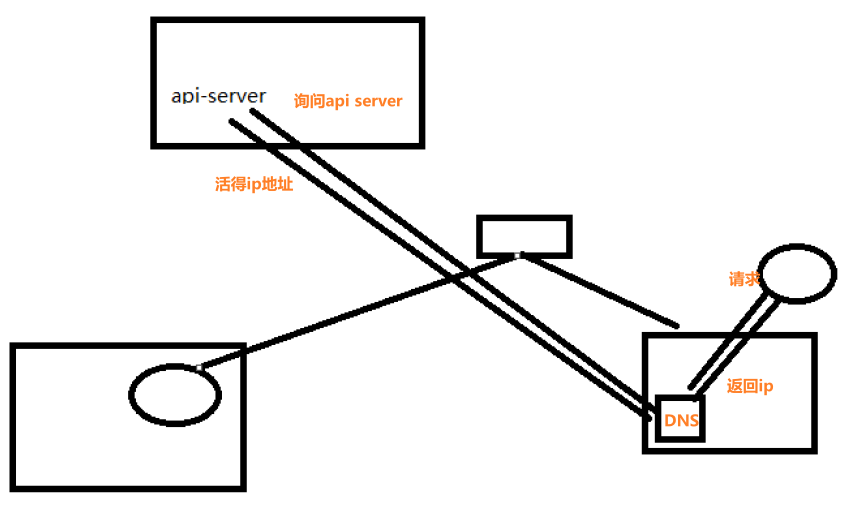

4: k8s add-ons

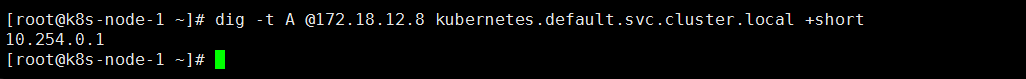

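The dns service in a k8s cluster resolves svc names to their VIP addresses.

4.1 dns service

Install the dns service

1: Download the dns docker image archive (on node2, 10.0.0.13)

[root@k8s-node-2 ~]# wget http://192.168.14.251/file/k8s_dns.tar.gz

2: Import the dns docker image archive (on node2, 10.0.0.13)

3: Create the dns service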

vi skydns-rc.yaml

...

spec:

nodeName: 10.0.0.13

containers:

kubectl create -f skydns-rc.yaml

kubectl create -f skydns-svc.yaml

4:检查

kubectl get all --namespace=kube-system

Ps: 通过DNS pod ip的方式 检测DNS服务。

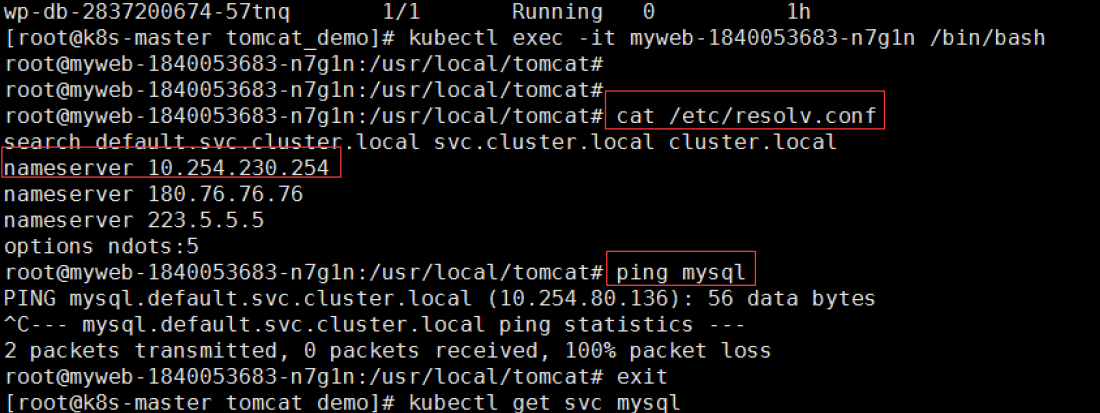

5:修改所有node节点kubelet的配置文件

vim /etc/kubernetes/kubelet

KUBELET_ARGS="--cluster_dns=10.254.230.254 --cluster_domain=cluster.local"

systemctl restart kubelet
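For orientation, the name a pod ends up resolving can be sketched in Python. The sketch assumes the usual `<service>.<namespace>.svc.<cluster domain>` layout used by the cluster DNS and the cluster.local domain configured above; `service_fqdn` is a made-up helper name.

```python
def service_fqdn(service: str, namespace: str = "default",
                 cluster_domain: str = "cluster.local") -> str:
    """Build the fully qualified DNS name of a service."""
    return f"{service}.{namespace}.svc.{cluster_domain}"


if __name__ == "__main__":
    # The short name "mysql" works from pods in the same namespace because the
    # kubelet writes matching search domains into each pod's /etc/resolv.conf.
    print(service_fqdn("mysql"))
    print(service_fqdn("mysql", namespace="tomcat"))
```

This is why a bare service name can stand in for the VIP, as long as the client pod and the service live in the same namespace.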

6: Edit tomcat-rc.yml

env:
  - name: MYSQL_SERVICE_HOST
    value: 'mysql' # was the VIP before the change

kubectl delete -f .

kubectl create -f .

7: Verify

4.2 namespace

# create a namespace

kubectl create namespace tomcat

# delete every resource defined in the tomcat directory

cd /root/k8s_yaml/tomcat_demo

kubectl delete -f .

# edit tomcat-rc.yml and the other files

# add the line "namespace: tomcat" under metadata

sed -i '3a \ \ namespace: tomcat' *

# create the pods again

kubectl create -f .

# check that they were created

kubectl get pod -n tomcat

# delete the namespace (note: every pod inside it is deleted too)

kubectl delete namespace tomcat

# Ps: convert DOS line endings to unix

[root@k8s-master tomcat_demo]# dos2unix ./*

namespaces provide resource isolation

- use -n to address the containers inside a namespace

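4.3 Health checks and readiness checks

4.3.1 Types of probes

livenessProbe: health check. It periodically checks whether the service is alive and restarts the container when the check fails.

readinessProbe: readiness check. It periodically checks whether the service is available and removes the pod from the service's endpoints when it is not.

4.3.2 Probe detection methods

- exec: run a command; exit code 0 means success, non-zero means failure (for example mysqladmin ping)

- httpGet: check the status code of an HTTP request; 2xx and 3xx are healthy, 4xx and 5xx are errors

- tcpSocket: test whether a port accepts connections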
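The httpGet rule in the list above reduces to a one-line check. The Python sketch below mirrors it: any status from 200 up to but not including 400 counts as success. The function name is illustrative and is not a kubelet API.

```python
def http_probe_succeeds(status_code: int) -> bool:
    """A 2xx or 3xx response counts as healthy; anything else is a failure."""
    return 200 <= status_code < 400


if __name__ == "__main__":
    for code in (200, 302, 404, 503):
        print(code, http_probe_succeeds(code))
```

A probe that points at a page the web server does not have therefore fails with 404 even though the server process itself is running.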

4.3.3 Using the exec liveness probe

vi nginx_pod_exec.yaml

apiVersion: v1
kind: Pod
metadata:
  name: exec
spec:
  containers:
    - name: nginx
      image: 10.0.0.11:5000/nginx:1.13
      ports:
        - containerPort: 80
      args:
        - /bin/sh
        - -c
        - touch /tmp/healthy; sleep 30; rm -rf /tmp/healthy; sleep 600
      livenessProbe:
        exec:
          command:
            - cat
            - /tmp/healthy
        initialDelaySeconds: 5
        periodSeconds: 5
        timeoutSeconds: 5
        successThreshold: 1
        failureThreshold: 1

4.3.4 Using the httpGet liveness probe

vi nginx_pod_httpGet.yaml

apiVersion: v1
kind: Pod
metadata:
  name: httpget
spec:
  containers:
    - name: nginx
      image: 10.0.0.11:5000/nginx:1.13
      ports:
        - containerPort: 80
      livenessProbe:
        httpGet:
          path: /index.html
          port: 80
        initialDelaySeconds: 3
        periodSeconds: 3

4.3.5 Using the tcpSocket liveness probe

vi nginx_pod_tcpSocket.yaml

apiVersion: v1
kind: Pod
metadata:
  name: tcpsocket
spec:
  containers:
    - name: nginx
      image: 10.0.0.11:5000/nginx:1.13
      ports:
        - containerPort: 80
      args:
        - /bin/sh
        - -c
        - tail -f /etc/hosts
      livenessProbe:
        tcpSocket:
          port: 80
        initialDelaySeconds: 10
        periodSeconds: 3

4.3.6 Using the httpGet readiness probe

vi nginx-rc-httpGet.yaml

apiVersion: v1
kind: ReplicationController
metadata:
  name: readiness
spec:
  replicas: 2
  selector:
    app: readiness
  template:
    metadata:
      labels:
        app: readiness
    spec:
      containers:
        - name: readiness
          image: 10.0.0.11:5000/nginx:1.13
          ports:
            - containerPort: 80
          readinessProbe:
            httpGet:
              path: /qiangge.html
              port: 80
            initialDelaySeconds: 3
            periodSeconds: 3

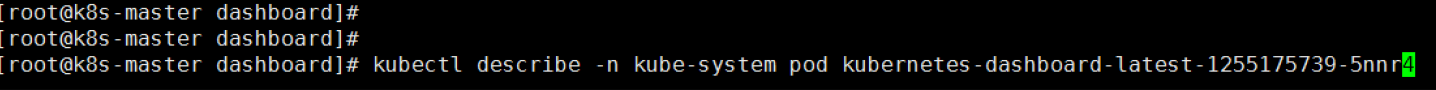

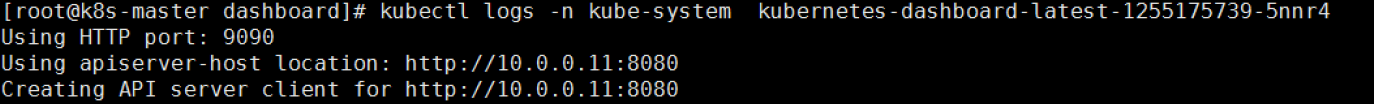

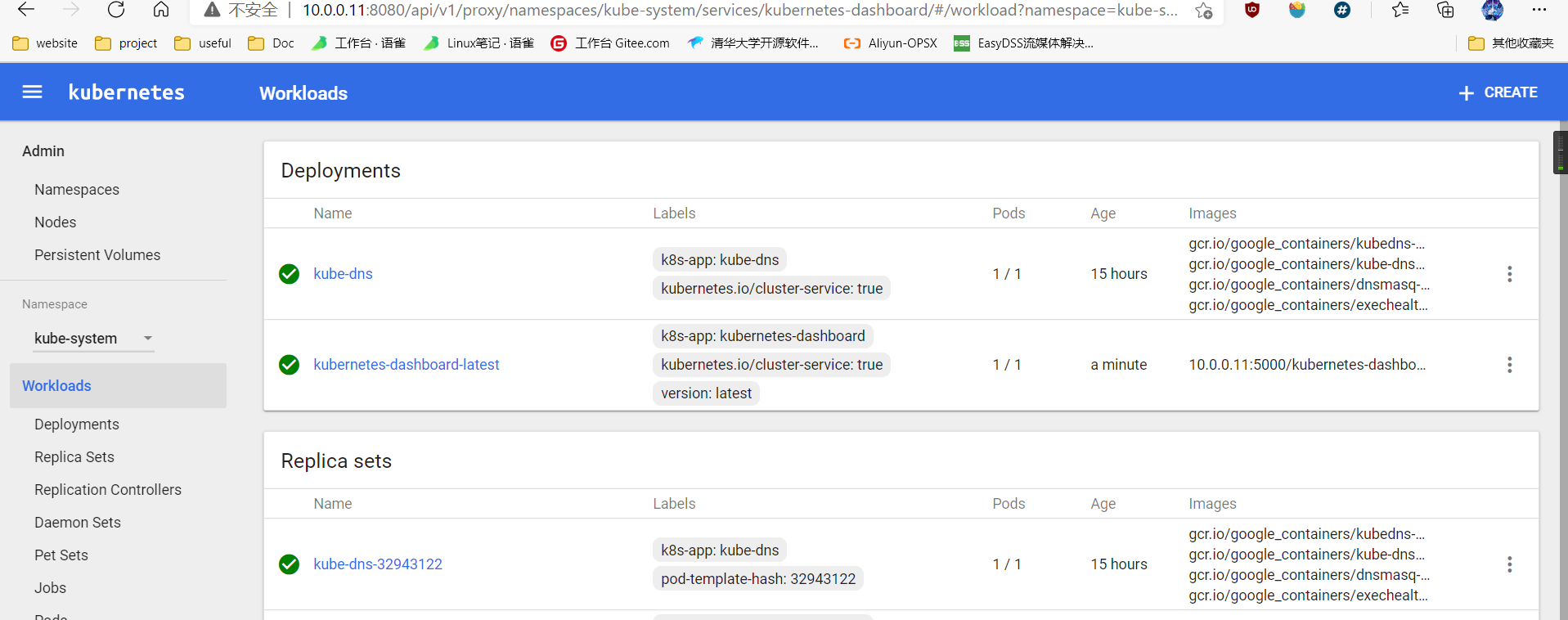

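4.4 dashboard service

1: Upload and import the image, then tag it

2: Create the dashboard deployment and service

Resource types shown in the dashboard:

daemon set: a set of daemon pods

Use case: well suited to monitoring, because one pod per node is enough; for example node-exporter for host monitoring, or log collection.

deployment: if used to run node-exporter, the pods are started on arbitrary nodes, so it is not suited to monitoring services; it is suited to application services.

job: a one-off task

Related: cronjob, for scheduled tasks

pet sets: "pet" applications, meaning applications that hold data (renamed to statefulset, for stateful applications, after 1.5)

Typical statefulset workloads: mysql, redis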

4.5 Accessing a service through the apiserver reverse proxy

Option 1: NodePort type

type: NodePort
ports:
  - port: 80
    targetPort: 80
    nodePort: 30008

Option 2: ClusterIP type

type: ClusterIP
ports:
  - port: 80
    targetPort: 80

http://10.0.0.11:8080/api/v1/proxy/namespaces/<namespace>/services/<service name>/

# Example:

http://10.0.0.11:8080/api/v1/proxy/namespaces/qiangge/services/wordpress
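The URL pattern above can be assembled with a small helper. This Python sketch follows the path layout used in these notes for the yum-installed 1.5 release; later Kubernetes versions use `/api/v1/namespaces/<namespace>/services/<name>/proxy/` instead. `service_proxy_url` is a hypothetical name.

```python
def service_proxy_url(apiserver: str, namespace: str, service: str) -> str:
    """Build the apiserver reverse-proxy URL for a service (k8s 1.5 path layout)."""
    base = apiserver.rstrip("/")
    return f"{base}/api/v1/proxy/namespaces/{namespace}/services/{service}/"


if __name__ == "__main__":
    print(service_proxy_url("http://10.0.0.11:8080", "qiangge", "wordpress"))
```

Only the apiserver has to be reachable from outside, so a ClusterIP service can be inspected this way without opening a nodePort.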

5: k8s弹性伸缩

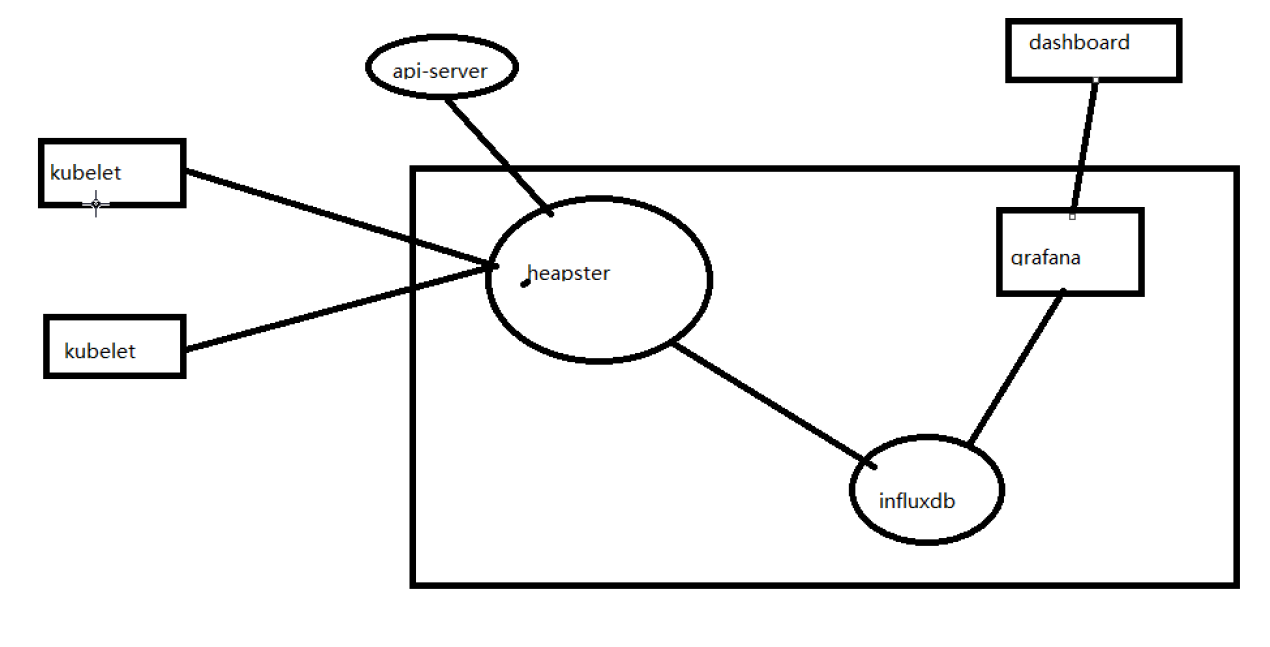

k8s弹性伸缩,需要附加插件heapster监控

5.1 安装heapster监控

1:上传并导入镜像,打标签

ls *.tar.gz

for n in `ls *.tar.gz`;do docker load -i $n ;done

docker tag docker.io/kubernetes/heapster_grafana:v2.6.0 10.0.0.11:5000/heapster_grafana:v2.6.0

docker tag docker.io/kubernetes/heapster_influxdb:v0.5 10.0.0.11:5000/heapster_influxdb:v0.5

docker tag docker.io/kubernetes/heapster:canary 10.0.0.11:5000/heapster:canary

Command available once monitoring is installed:

[root@k8s-master tomcat_demo]# kubectl top pod

2: Upload the configuration files

Edit the configuration files:

#heapster-controller.yaml

spec:

nodeName: 10.0.0.13

containers:

- name: heapster

image: 10.0.0.11:5000/heapster:canary

imagePullPolicy: IfNotPresent

#influxdb-grafana-controller.yaml

spec:

nodeName: 10.0.0.13

containers:

kubectl create -f .

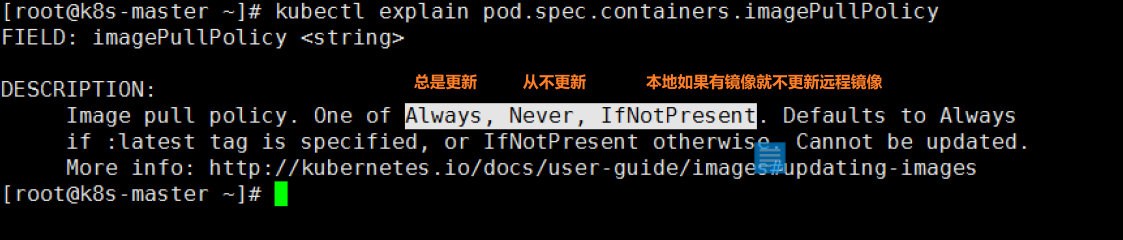

Ps: 镜像想在策略: (没有指定,系统自动补充为IfNotPresent)

- 监控架构图:

heapster采集数据

influxdb存储数据

grafana展示出图

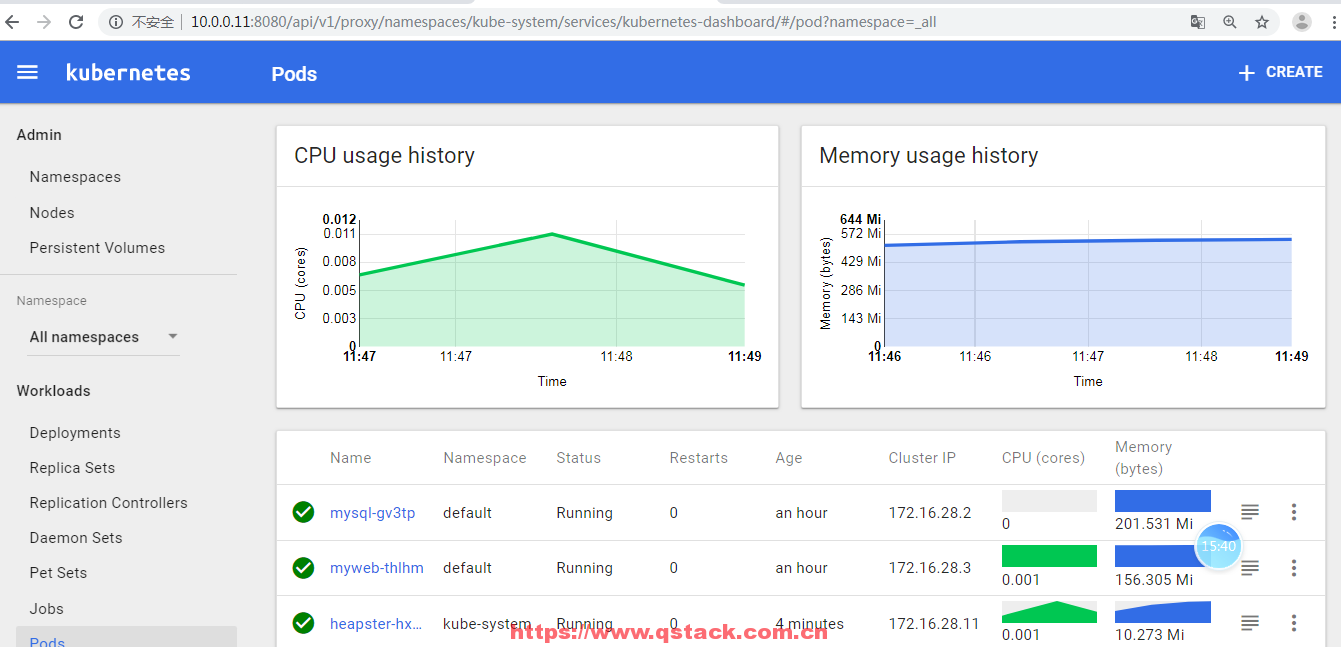

3:打开dashboard验证

压测: yum install http-tools -y ab -n 10000 -c 10 http://10.0.0.12:30008/demo/

5.2 弹性伸缩

1: Edit the rc configuration file

containers:
  - name: myweb
    image: 10.0.0.11:5000/nginx:1.13
    ports:
      - containerPort: 80
    resources:
      limits:
        cpu: 100m
      requests:
        cpu: 100m

Ps: m stands for one thousandth of a CPU core, so 100m is 0.1 core.
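The millicore notation can be converted with a few lines of Python. This is a minimal sketch that only handles plain numbers and the `m` suffix; `cpu_to_cores` is an illustrative name.

```python
def cpu_to_cores(quantity: str) -> float:
    """Convert a CPU quantity such as '100m' or '2' into a number of cores."""
    if quantity.endswith("m"):
        return int(quantity[:-1]) / 1000  # millicores -> cores
    return float(quantity)


if __name__ == "__main__":
    for value in ("100m", "500m", "2"):
        print(value, "=", cpu_to_cores(value), "cores")
```

The autoscaler's percentage target is measured against this requested amount, so a pod without a CPU request cannot be autoscaled on CPU.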

2: Create the autoscaling rule

Commands:

kubectl get pod --all-namespaces

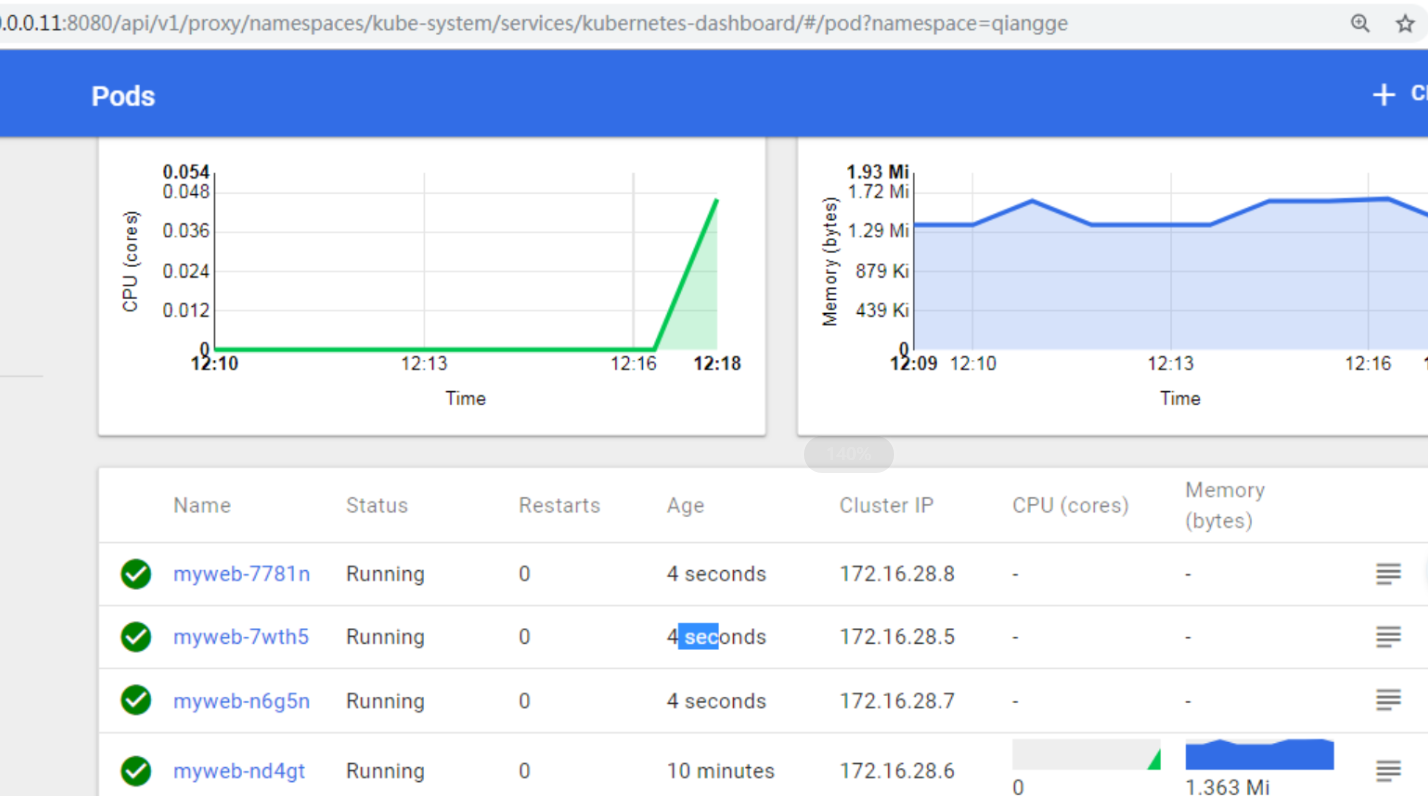

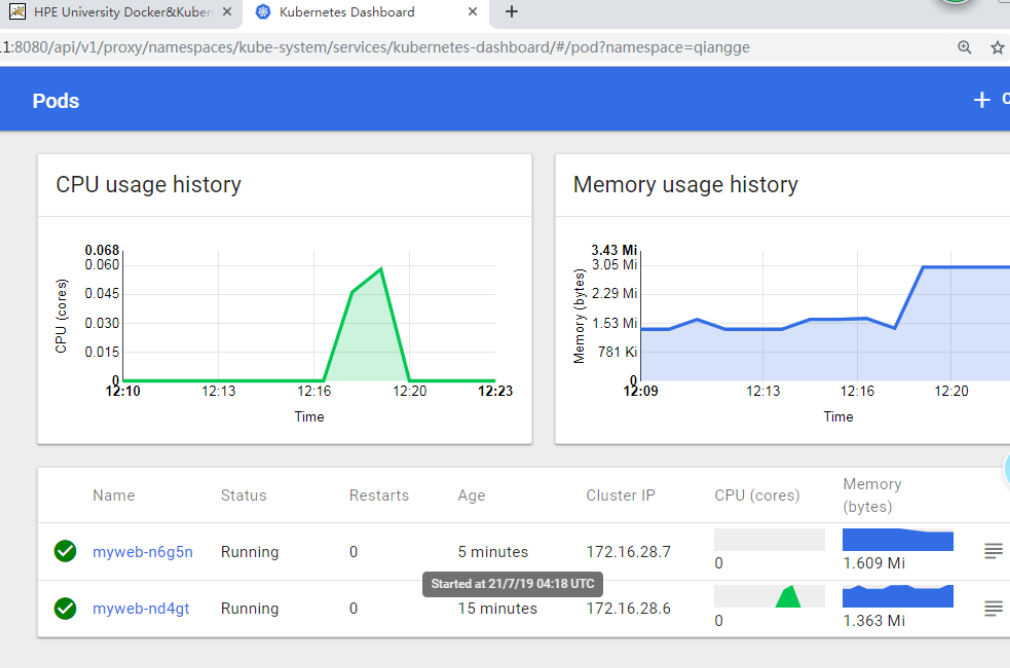

kubectl autoscale deploy myweb --max=5 --min=1 --cpu-percent=5

#Ps: -n selects the namespace; --cpu-percent=5 sets the target average CPU utilization to 5%

[root@k8s-master tomcat_demo]# kubectl -n tomcat autoscale deploy myweb --max=8 --min=1 --cpu-percent=5

deployment "myweb" autoscaled
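How the autoscaler arrives at a replica count can be sketched in Python. The function below applies the basic proportional rule, desired = ceil(current × current utilization ÷ target utilization), clamped to the --min and --max values. It is a simplification: the real controller also applies a tolerance band and waits between scaling steps, and `desired_replicas` is a made-up name.

```python
import math


def desired_replicas(current: int, current_util: float, target_util: float,
                     min_replicas: int, max_replicas: int) -> int:
    """Proportional scaling rule, clamped to the configured bounds."""
    desired = math.ceil(current * current_util / target_util)
    return max(min_replicas, min(max_replicas, desired))


if __name__ == "__main__":
    # One pod at 20% average CPU against the 5% target from the command above.
    print(desired_replicas(1, 20, 5, min_replicas=1, max_replicas=8))
    # Heavy load: the result is capped at --max.
    print(desired_replicas(2, 50, 5, min_replicas=1, max_replicas=8))
```

The low 5% target is chosen here so that a short ab run is enough to trigger scale-out during the exercise.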

3: Load test:

ab -n 1000000 -c 40 http://10.0.0.12:33218/index.html

Scale-out screenshot

Scale-in:

6: Persistent storage

Types of data persistence:

6.1 emptyDir:

spec:
  nodeName: 10.0.0.13
  volumes:
    - name: mysql
      emptyDir: {}
  containers:
    - name: wp-mysql
      image: 10.0.0.11:5000/mysql:5.7
      imagePullPolicy: IfNotPresent
      ports:
        - containerPort: 3306
      volumeMounts:
        - mountPath: /var/lib/mysql
          name: mysql

Ps: the data persists across container restarts but cannot be shared, because the directory is created and deleted together with the pod. It suits changing content such as logs.

[root@k8s-node-1 ~]# find /var/lib/kubelet/pods/ -name 'mysql' -type d

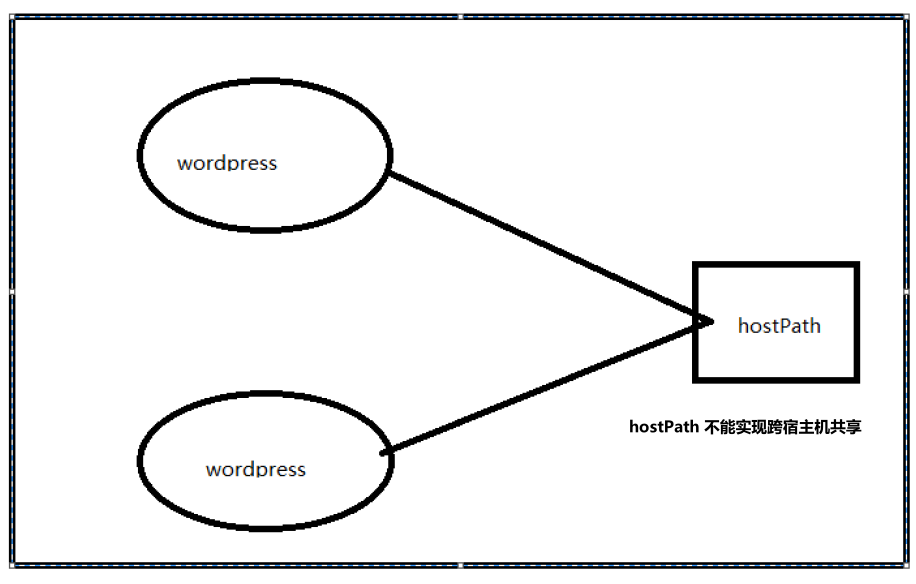

6.2 HostPath:

spec:
  nodeName: 10.0.0.12
  volumes:
    - name: mysql
      hostPath:
        path: /data/wp_mysql
  containers:
    - name: wp-mysql
      image: 10.0.0.11:5000/mysql:5.7
      imagePullPolicy: IfNotPresent
      ports:
        - containerPort: 3306
      volumeMounts:
        - mountPath: /var/lib/mysql
          name: mysql

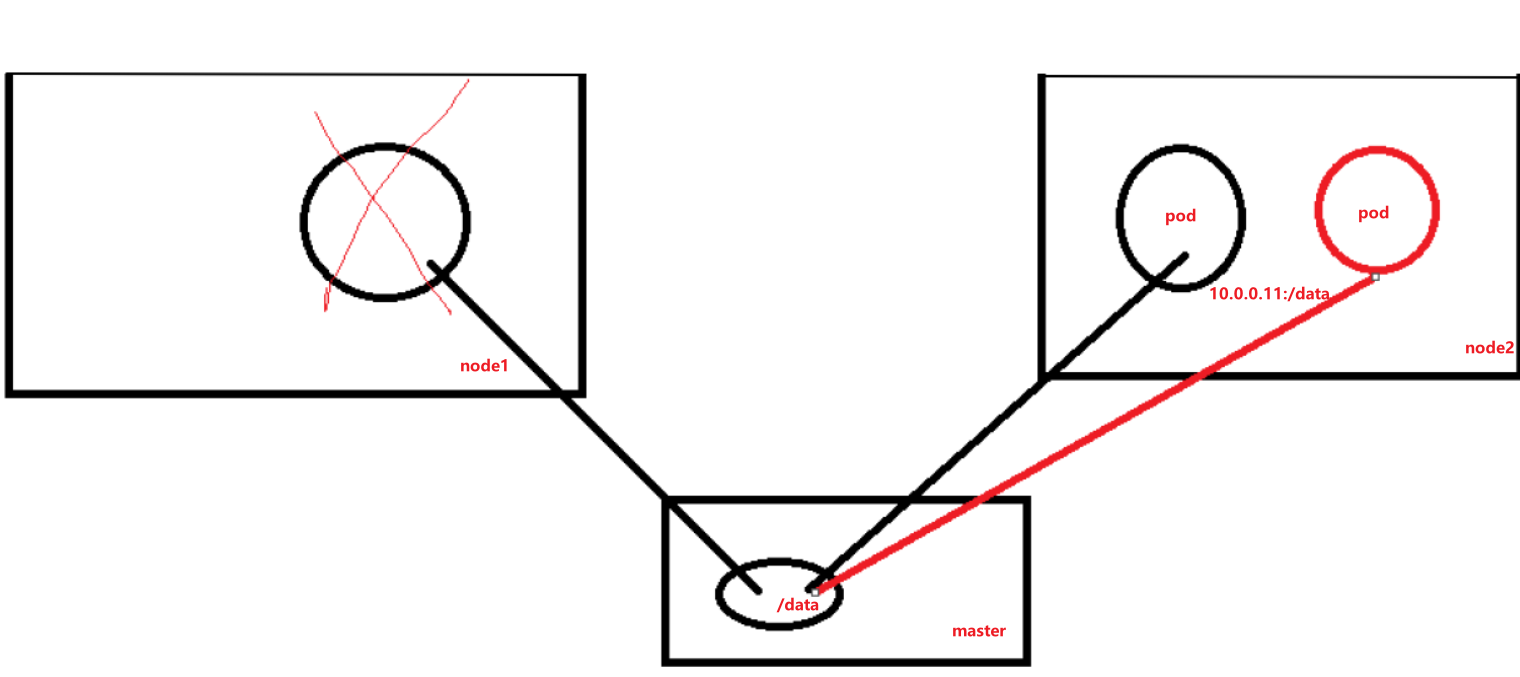

6.3 nfs:

volumes:
  - name: mysql
    nfs:
      path: /data/wp_mysql
      server: 10.0.0.11

If you use nfs storage, a hardware appliance that supports nfs is recommended for better performance and stability.

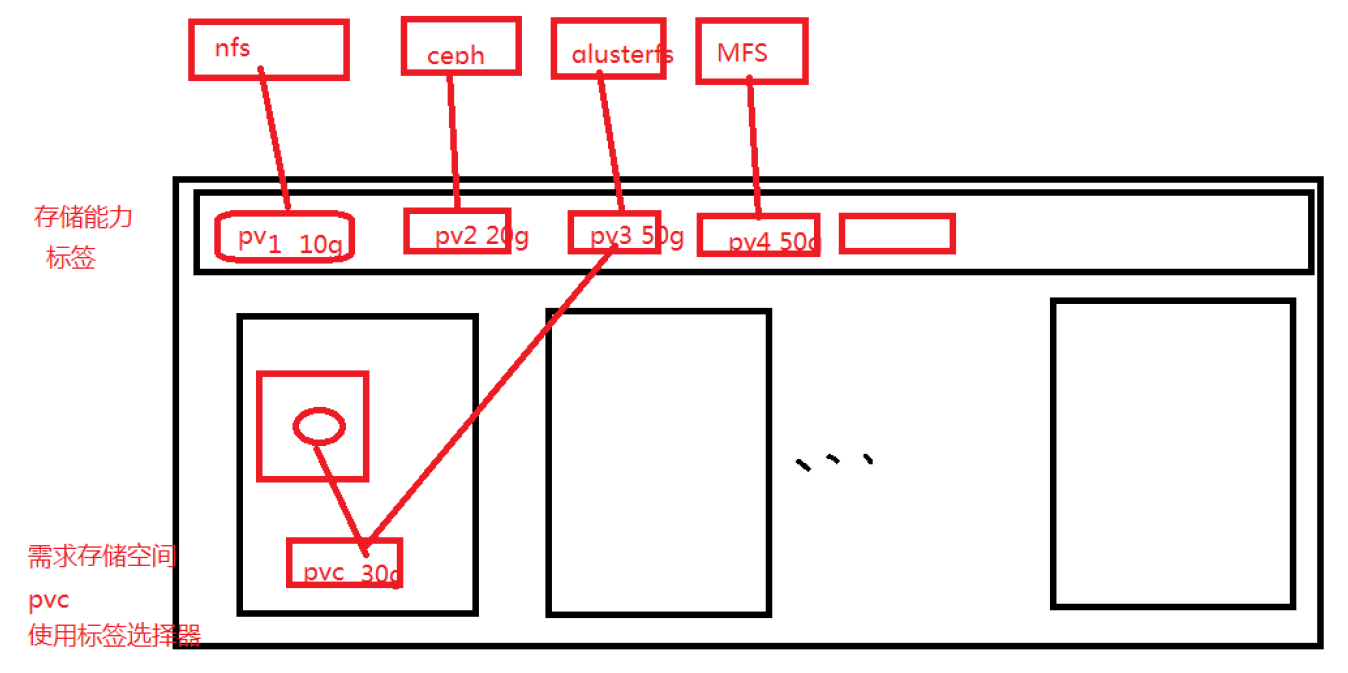

6.4 pv and pvc:

pv: persistent volume; a global resource that belongs to the whole k8s cluster

pvc: persistent volume claim; a local resource that belongs to one namespace

6.4.1: Install the nfs server (10.0.0.11)

yum install nfs-utils.x86_64 -y

mkdir /data

vim /etc/exports

/data 10.0.0.0/24(rw,async,no_root_squash,no_all_squash)

systemctl start rpcbind

systemctl start nfs

6.4.2: Install the nfs client on the node machines

yum install nfs-utils.x86_64 -y

showmount -e 10.0.0.11

6.4.3: Create the pv and pvc

Upload the yaml files and create the pv and pvc

6.4.4: Create mysql-rc and use the volume in the pod template

volumes:
  - name: mysql
    persistentVolumeClaim:
      claimName: tomcat-mysql

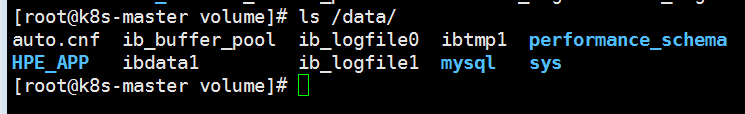

6.4.5: Verify persistence

Check 1: delete the mysql pod and confirm that the database is not lost

kubectl delete pod mysql-gt054

Check 2: look on the nfs server for the mysql data files

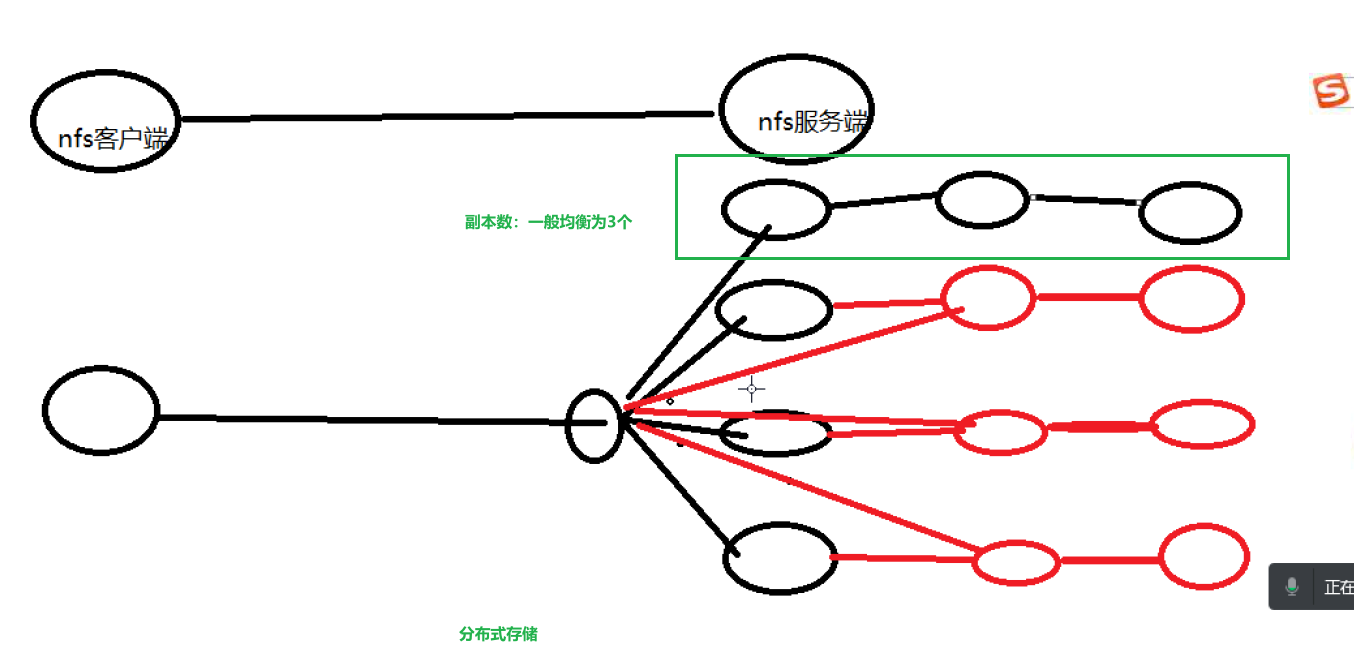

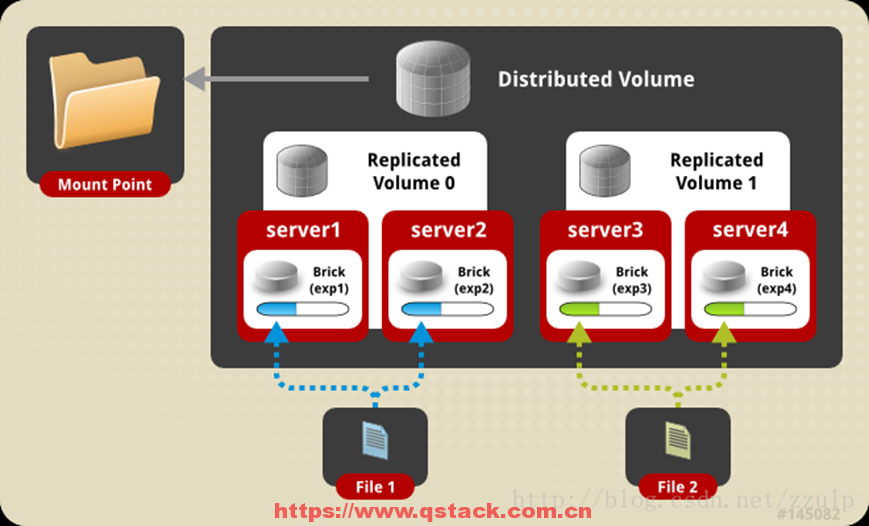

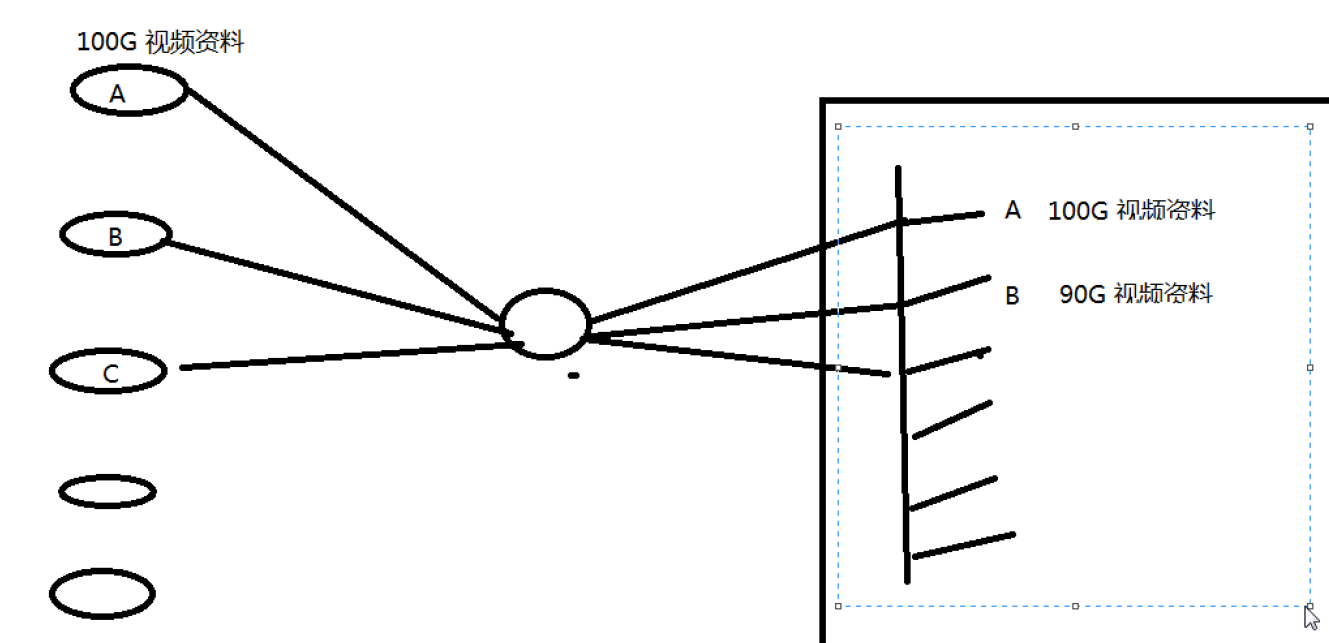

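6.5: Distributed storage with glusterfs

a: What is glusterfs

Glusterfs is an open-source distributed file system with strong scale-out capability. It can support several PB of storage and thousands of clients, joined over the network into one parallel network file system. It offers scalability, high performance, and high availability.

b: Install glusterfs

On all nodes: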

yum install centos-release-gluster6.noarch -y

yum install glusterfs-server -y

systemctl start glusterd.service

systemctl enable glusterd.service

# add storage units (bricks) for the gluster cluster

echo '- - -' >/sys/class/scsi_host/host0/scan

echo '- - -' >/sys/class/scsi_host/host1/scan

echo '- - -' >/sys/class/scsi_host/host2/scan

mkfs.xfs /dev/sdb

mkfs.xfs /dev/sdc

mkfs.xfs /dev/sdd

mkdir -p /gfs/test1

mkdir -p /gfs/test2

mkdir -p /gfs/test3

mount /dev/sdb /gfs/test1

mount /dev/sdc /gfs/test2

mount /dev/sdd /gfs/test3

c: Add nodes to the storage pool

On the master node:

gluster pool list

gluster peer probe k8s-node-1

gluster peer probe k8s-node-2

gluster pool list

d: glusterfs volume management

# create a distributed replicated volume

gluster volume create qiangge replica 2 k8s-master:/gfs/test1 k8s-node-1:/gfs/test1 k8s-master:/gfs/test2 k8s-node-1:/gfs/test2 force

# start the volume

gluster volume start qiangge

# show the volume

gluster volume info qiangge

# mount the volume

mount -t glusterfs 10.0.0.11:/qiangge /mnt

e: How a distributed replicated volume works
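One way to picture the layout: gluster groups the bricks into replica sets in the order they are listed on the command line, and files are then distributed across those sets. The Python sketch below reproduces that grouping for the create command above; `replica_sets` is an illustrative helper, not a gluster API.

```python
def replica_sets(bricks: list, replica: int) -> list:
    """Group bricks, in command-line order, into sets of `replica` mirrors."""
    if len(bricks) % replica:
        raise ValueError("brick count must be a multiple of the replica count")
    return [bricks[i:i + replica] for i in range(0, len(bricks), replica)]


if __name__ == "__main__":
    bricks = [
        "k8s-master:/gfs/test1", "k8s-node-1:/gfs/test1",
        "k8s-master:/gfs/test2", "k8s-node-1:/gfs/test2",
    ]
    for number, mirrors in enumerate(replica_sets(bricks, replica=2)):
        print(f"replica set {number}: {mirrors}")
```

Listing the bricks so that each pair spans two hosts keeps both copies of a file on different machines; usable capacity is the total brick capacity divided by the replica count.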

f: Expanding a distributed replicated volume

# check the capacity before expanding:

df -h

# expand:

gluster volume add-brick qiangge k8s-node-2:/gfs/test1 k8s-node-2:/gfs/test2 force

# check the capacity after expanding:

df -h

6.6 Connecting k8s to glusterfs storage

a: Create the endpoints

vi glusterfs-ep.yaml

apiVersion: v1
kind: Endpoints
metadata:
  name: glusterfs
  namespace: tomcat
subsets:
  - addresses:
      - ip: 10.0.0.11
      - ip: 10.0.0.12
      - ip: 10.0.0.13
    ports:
      - port: 49152
        protocol: TCP

b: Create the service (an svc that maps an external service, so it needs no label selector)

vi glusterfs-svc.yaml

apiVersion: v1
kind: Service
metadata:
  name: glusterfs
  namespace: tomcat
spec:
  ports:
    - port: 49152
      protocol: TCP
      targetPort: 49152
  type: ClusterIP

c: Create a gluster pv

apiVersion: v1
kind: PersistentVolume
metadata:
  name: gluster
  labels:
    type: glusterfs
spec:
  capacity:
    storage: 50Gi
  accessModes:
    - ReadWriteMany
  glusterfs:
    endpoints: "glusterfs"
    path: "qiangge"
    readOnly: false

d: Create the pvc

kind: PersistentVolumeClaim
apiVersion: v1
metadata:
  name: gluster
spec:
  accessModes:
    - ReadWriteMany
  resources:
    requests:
      storage: 200Gi

e: Use gluster in a pod

vi nginx_pod.yaml

……

volumeMounts:
  - name: nfs-vol2
    mountPath: /usr/share/nginx/html

volumes:
  - name: nfs-vol2
    persistentVolumeClaim:
      claimName: gluster

pvc: a local (namespaced) resource

pv: a global resource

- file storage

- distributed storage

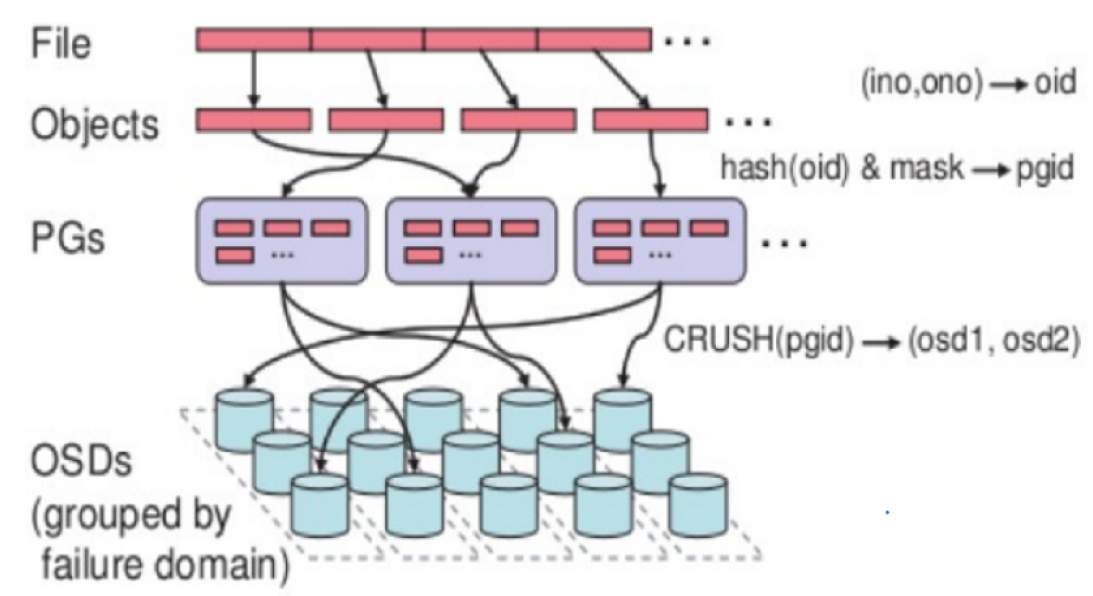

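6.7 Connecting k8s to ceph

Storage types:

1: Block storage: block devices, lvm, cinder

2: File storage: nfs, glusterfs

3: Object storage: fastdfs, Swift

Storage products:

Hardware storage: nas, san

Software storage: nfs, lvm, distributed storage

About distributed storage:

ceph: supports block storage, file storage, and object storage

ceph is deployed with ceph-deploy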

10.0.0.14 ceph01

10.0.0.15 ceph02

10.0.0.16 ceph03

Set up passwordless ssh login

Install from ceph01

# on ceph01, generate the initial ceph configuration

ceph-deploy new --public-network 10.0.0.0/24 ceph01 ceph02 ceph03

# install the rpm packages

#yum install ceph ceph-mon ceph-mgr ceph-radosgw.x86_64 ceph-mds.x86_64 ceph-osd.x86_64 -y

# install ceph-monitor

ceph-deploy mon create-initial

# configure the admin user

ceph-deploy admin ceph01 ceph02 ceph03

# install and start ceph-manager

ceph-deploy mgr create ceph01 ceph02 ceph03

# create the osds

ceph-deploy osd create ceph01 --data /dev/sdb

ceph-deploy osd create ceph02 --data /dev/sdb

ceph-deploy osd create ceph03 --data /dev/sdb

# create a pool

ceph osd pool create test_demo 128 128

# create an rbd image

rbd create --size 1024 k8s/tomcat_mysql.img

# how to use the rbd image

rbd feature disable k8s/tomcat_mysql.img object-map fast-diff deep-flatten

rbd map k8s/tomcat_mysql.img

mkfs.xfs /dev/rbd0

mount /dev/rbd0 /mnt

# grow the rbd image

rbd resize --size 2048 k8s/tomcat_mysql.img

mount /dev/rbd0 /mnt

xfs_growfs /mnt

File storage:

ceph-metadata-server (ceph-mds)

Object storage:

ceph-radosgw

openstack can also use ceph rbd as its storage backend

secret: stores passwords and keys

rbd create --size 2048 --image-feature layering k8s/test2.img

Connecting ceph to k8s:

# https://github.com/kubernetes/examples/tree/master/volumes/rbd

# install ceph-common on the node machines

# required files: ceph.client.admin.keyring ceph.conf

# get the admin user's key

# grep key /etc/ceph/ceph.client.kube.keyring |awk '{printf "%s", $NF}'|base64

QVFBTWdYaFZ3QkNlRGhBQTlubFBhRnlmVVNhdEdENGRyRldEdlE9PQ==

[root@k8s-master ceph]# cat ceph-secret.yaml

apiVersion: v1
kind: Secret
metadata:
  name: ceph-secret
  namespace: tomcat
type: "kubernetes.io/rbd"
data:
  key: QVFCczV0RmZIVG8xSEJBQUxUbm5TSWZaRFl1VDZ2aERGeEZnWXc9PQ==
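The value under `data.key` is simply the ceph key run through base64, which is what the grep/awk/base64 pipeline above produces. A minimal Python sketch of the same encoding follows; the key string used in it is a made-up placeholder, not a real ceph key.

```python
import base64


def encode_secret_value(plain: str) -> str:
    """Base64-encode a value for the `data` section of a Secret manifest."""
    return base64.b64encode(plain.encode("utf-8")).decode("ascii")


def decode_secret_value(encoded: str) -> str:
    """Reverse of encode_secret_value, handy for checking a manifest."""
    return base64.b64decode(encoded).decode("utf-8")


if __name__ == "__main__":
    placeholder_key = "not-a-real-ceph-key=="  # hypothetical value for illustration
    encoded = encode_secret_value(placeholder_key)
    print(encoded)
    print(decode_secret_value(encoded) == placeholder_key)
```

Base64 is an encoding, not encryption, so anyone who can read the Secret object can recover the key.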

# create the rbd image

rbd create --size 2048 --image-feature layering k8s/test2.img

[root@k8s-master ceph]# cat test_ceph_pod.yml

apiVersion: v1
kind: Pod
metadata:
  name: rbd3
  namespace: tomcat
spec:
  containers:
    - image: 10.0.0.11:5000/nginx:1.13
      name: rbd-rw
      volumeMounts:
        - name: rbdpd
          mountPath: /data
  volumes:
    - name: rbdpd
      rbd:
        monitors:
          - '10.0.0.14:6789'
          - '10.0.0.15:6789'
          - '10.0.0.16:6789'
        pool: k8s
        image: test2.img
        fsType: ext4
        user: admin
        secretRef:
          name: ceph-secret

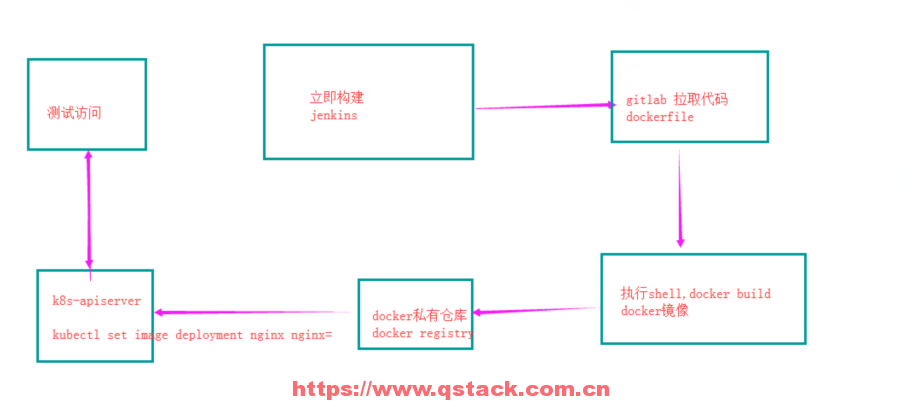

7: Continuous delivery to k8s with jenkins

| IP address | Service | Memory |
|---|---|---|
| 10.0.0.11 | kube-apiserver 8080 | 1G |
| 10.0.0.12 | kube-apiserver 8080 | 1G |
| 10.0.0.13 | jenkins(tomcat + jdk) 8080 | 3G |

The code repository is hosted on gitee

7.1: Install gitlab and upload the code

# a: install

wget https://mirrors.tuna.tsinghua.edu.cn/gitlab-ce/yum/el7/gitlab-ce-11.9.11-ce.0.el7.x86_64.rpm

yum localinstall gitlab-ce-11.9.11-ce.0.el7.x86_64.rpm -y

# b: configure

vim /etc/gitlab/gitlab.rb

external_url 'http://10.0.0.13'

prometheus_monitoring['enable'] = false

# c: apply the configuration and start the services

gitlab-ctl reconfigure

# open http://10.0.0.13 in a browser, change the root password, and create a project

# upload the code to the git repository

cd /srv/

rz -E

unzip xiaoniaofeifei.zip

rm -fr xiaoniaofeifei.zip

git config --global user.name "Administrator"

git config --global user.email "admin@example.com"

git init

git remote add origin http://10.0.0.13/root/xiaoniao.git

git add .

git commit -m "Initial commit"

git push -u origin master

7.2 Install jenkins and build docker images automatically

1: Install jenkins

cd /opt/

wget http://192.168.12.201/191216/apache-tomcat-8.0.27.tar.gz

wget http://192.168.12.201/191216/jdk-8u102-linux-x64.rpm

wget http://192.168.12.201/191216/jenkin-data.tar.gz

wget http://192.168.12.201/191216/jenkins.war

rpm -ivh jdk-8u102-linux-x64.rpm

mkdir /app -p

tar xf apache-tomcat-8.0.27.tar.gz -C /app

rm -fr /app/apache-tomcat-8.0.27/webapps/*

mv jenkins.war /app/apache-tomcat-8.0.27/webapps/ROOT.war

tar xf jenkin-data.tar.gz -C /root

/app/apache-tomcat-8.0.27/bin/startup.sh

netstat -lntup

2: Access jenkins

Open http://10.0.0.12:8080/; the default account and password are admin:123456

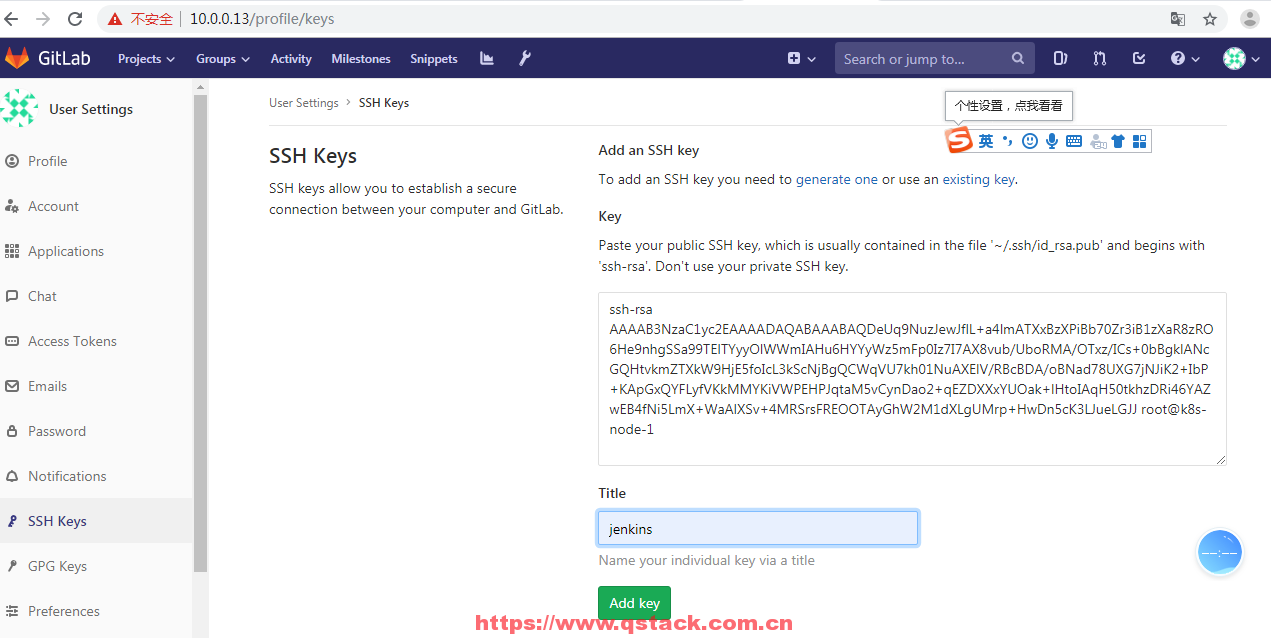

3:配置jenkins拉取gitlab代码凭据

a:在jenkins上生成秘钥对

ssh-keygen -t rsa

b:复制公钥粘贴gitlab上

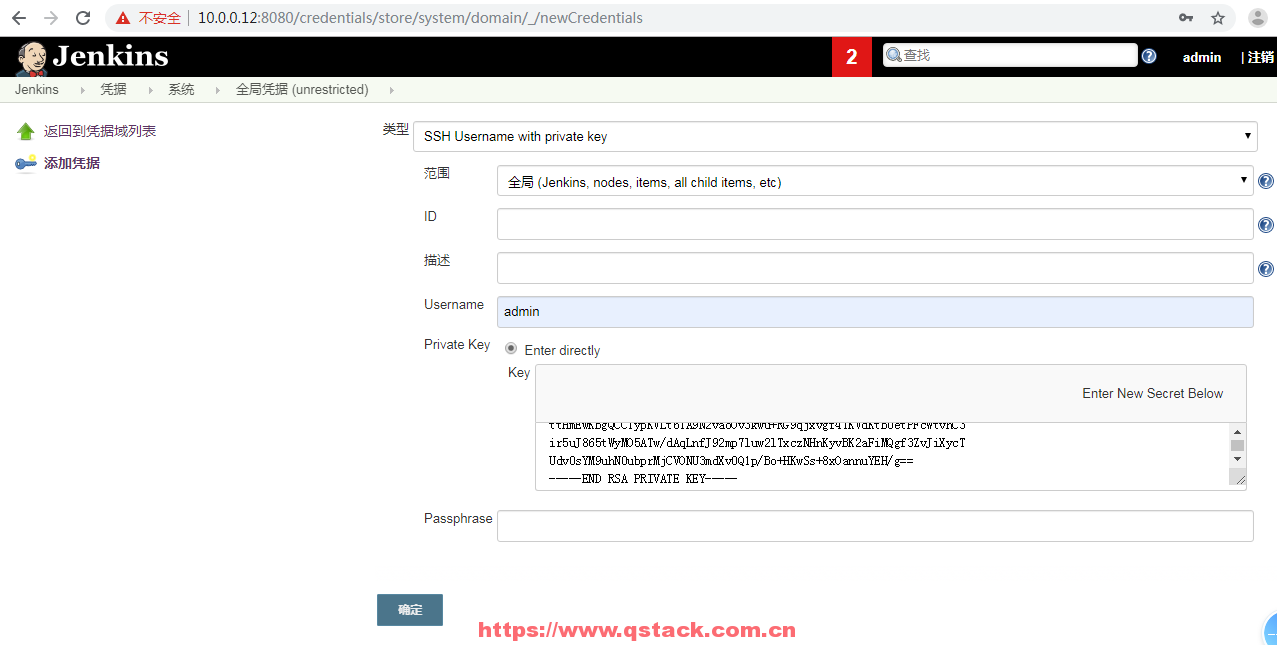

c:jenkins上创建全局凭据

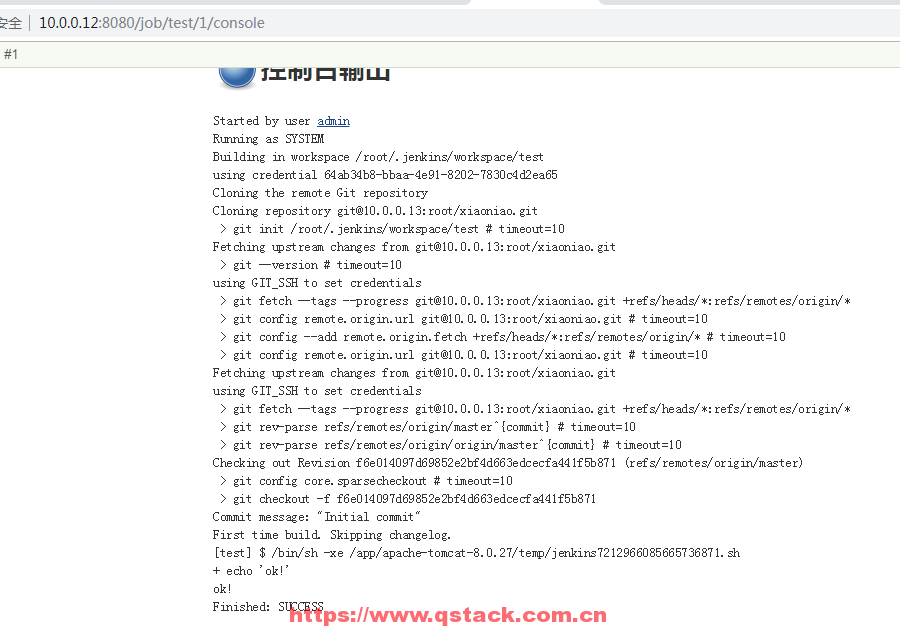

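4: Test pulling the code

5: Write the dockerfile and test it

#vim dockerfile

FROM 10.0.0.11:5000/nginx:1.13

ADD . /usr/share/nginx/html

List the files that docker build should not add:

vim .dockerignore

dockerfile

docker build -t xiaoniao:v1 .

docker run -d -p 88:80 xiaoniao:v1

Open a browser and test access to the xiaoniaofeifei project

6: Push the dockerfile and .dockerignore to the private repository

git add dockerfile .dockerignore

git commit -m "first commit"

git push -u origin master

7: Click "Build Now" in jenkins to build the docker image automatically and push it to the private registry

Edit the jenkins project configuration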

docker build -t 10.0.0.11:5000/test:v$BUILD_ID .

docker push 10.0.0.11:5000/test:v$BUILD_ID

7.3 Deploying the application to k8s automatically from jenkins

kubectl -s 10.0.0.11:8080 get nodes

if [ -f /tmp/xiaoniao.lock ];then
    # later builds: update the image of the existing deployment
    docker build -t 10.0.0.11:5000/xiaoniao:v$BUILD_ID .
    docker push 10.0.0.11:5000/xiaoniao:v$BUILD_ID
    kubectl -s 10.0.0.11:8080 set image -n xiaoniao deploy xiaoniao xiaoniao=10.0.0.11:5000/xiaoniao:v$BUILD_ID
    port=`kubectl -s 10.0.0.11:8080 get svc -n xiaoniao|grep -oP '(?<=80:)\d+'`
    echo "Your project is available at http://10.0.0.13:$port"
    echo "Update succeeded"
else
    # first build: create the namespace, deployment, and service
    docker build -t 10.0.0.11:5000/xiaoniao:v$BUILD_ID .
    docker push 10.0.0.11:5000/xiaoniao:v$BUILD_ID
    kubectl -s 10.0.0.11:8080 create namespace xiaoniao
    kubectl -s 10.0.0.11:8080 run xiaoniao -n xiaoniao --image=10.0.0.11:5000/xiaoniao:v$BUILD_ID --replicas=3 --record
    kubectl -s 10.0.0.11:8080 expose -n xiaoniao deployment xiaoniao --port=80 --type=NodePort
    port=`kubectl -s 10.0.0.11:8080 get svc -n xiaoniao|grep -oP '(?<=80:)\d+'`
    echo "Your project is available at http://10.0.0.13:$port"
    echo "Release succeeded"
    touch /tmp/xiaoniao.lock
    chattr +i /tmp/xiaoniao.lock
fi
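The `grep -oP '(?<=80:)\d+'` step in the script pulls the nodePort out of the `kubectl get svc` listing. The same lookbehind can be tried in Python; the sample output line below is invented for illustration and only mimics the `80:<nodePort>/TCP` column.

```python
import re
from typing import Optional


def extract_node_port(svc_line: str) -> Optional[str]:
    """Return the digits that follow '80:' in a service listing, if any."""
    match = re.search(r"(?<=80:)\d+", svc_line)
    return match.group(0) if match else None


if __name__ == "__main__":
    sample = "xiaoniao   10.254.10.20   <nodes>   80:31234/TCP   1m"  # hypothetical output
    print(extract_node_port(sample))
```

The lookbehind keeps `80:` out of the result, so only the randomly assigned node port is printed in the project URL.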

One-click rollback from jenkins

kubectl -s 10.0.0.11:8080 rollout undo -n xiaoniao deployment xiaoniao

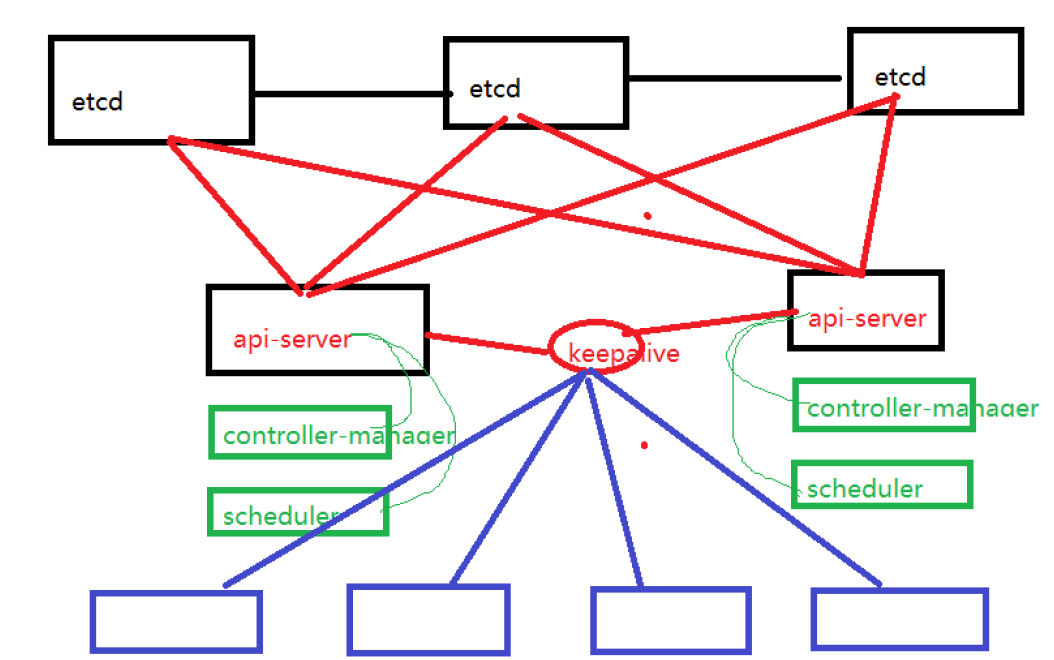

8: k8s high availability

8.1: Install and configure a highly available etcd cluster

# install etcd on all nodes

yum install etcd -y

3:ETCD_DATA_DIR="/var/lib/etcd/"

5:ETCD_LISTEN_PEER_URLS="http://0.0.0.0:2380"

6:ETCD_LISTEN_CLIENT_URLS="http://0.0.0.0:2379"

9:ETCD_NAME="node1" # name of this node

20:ETCD_INITIAL_ADVERTISE_PEER_URLS="http://10.0.0.11:2380" # address peers use to replicate data with this node

21:ETCD_ADVERTISE_CLIENT_URLS="http://10.0.0.11:2379" # address this node serves clients on

26:ETCD_INITIAL_CLUSTER="node1=http://10.0.0.11:2380,node2=http://10.0.0.12:2380,node3=http://10.0.0.13:2380"

27:ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

28:ETCD_INITIAL_CLUSTER_STATE="new"

systemctl enable etcd

systemctl restart etcd

[root@k8s-master tomcat_demo]# etcdctl cluster-health

member 9e80988e833ccb43 is healthy: got healthy result from http://10.0.0.11:2379

member a10d8f7920cc71c7 is healthy: got healthy result from http://10.0.0.13:2379

member abdc532bc0516b2d is healthy: got healthy result from http://10.0.0.12:2379

cluster is healthy
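Why three members? etcd needs a majority of members (a quorum) to accept writes. The Python sketch below computes the quorum and the number of failures a cluster of a given size can survive; the function names are illustrative.

```python
def quorum(members: int) -> int:
    """Smallest majority of a cluster with `members` nodes."""
    return members // 2 + 1


def failure_tolerance(members: int) -> int:
    """How many members can fail while a majority is still reachable."""
    return members - quorum(members)


if __name__ == "__main__":
    for size in (1, 2, 3, 4, 5):
        print(size, "members: quorum", quorum(size),
              "tolerates", failure_tolerance(size), "failure(s)")
```

An even-sized cluster tolerates no more failures than the next smaller odd size, which is why three or five members are the usual choices.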

# update flannel

vim /etc/sysconfig/flanneld

FLANNEL_ETCD_ENDPOINTS="http://10.0.0.11:2379,http://10.0.0.12:2379,http://10.0.0.13:2379"

etcdctl mk /atomic.io/network/config '{ "Network": "172.18.0.0/16" }'

systemctl restart flanneld

systemctl restart docker

8.2 Install and configure api-server, controller-manager, and scheduler on master01 (127.0.0.1:8080)

vim /etc/kubernetes/apiserver

KUBE_ETCD_SERVERS="--etcd-servers=http://10.0.0.11:2379,http://10.0.0.12:2379,http://10.0.0.13:2379"

vim /etc/kubernetes/config

KUBE_MASTER="--master=http://127.0.0.1:8080"

systemctl restart kube-apiserver.service

systemctl restart kube-controller-manager.service kube-scheduler.service

8.3 Install and configure api-server, controller-manager, and scheduler on master02 (127.0.0.1:8080)

yum install kubernetes-master.x86_64 -y

scp -rp 10.0.0.11:/etc/kubernetes/apiserver /etc/kubernetes/apiserver

scp -rp 10.0.0.11:/etc/kubernetes/config /etc/kubernetes/config

systemctl stop kubelet.service

systemctl disable kubelet.service

systemctl stop kube-proxy.service

systemctl disable kube-proxy.service

systemctl enable kube-apiserver.service

systemctl restart kube-apiserver.service

systemctl enable kube-controller-manager.service

systemctl restart kube-controller-manager.service

systemctl enable kube-scheduler.service

systemctl restart kube-scheduler.service

8.4 Install and configure Keepalived on master01 and master02

yum install keepalived.x86_64 -y

# master01 configuration:

! Configuration File for keepalived
global_defs {
    router_id LVS_DEVEL_11
}
vrrp_instance VI_1 {
    state BACKUP
    interface eth0
    virtual_router_id 51
    priority 100
    advert_int 1
    authentication {
        auth_type PASS
        auth_pass 1111
    }
    virtual_ipaddress {
        10.0.0.10
    }
}

# master02 configuration:

! Configuration File for keepalived
global_defs {
    router_id LVS_DEVEL_12
}
vrrp_instance VI_1 {
    state BACKUP
    interface eth0
    virtual_router_id 51
    priority 80
    advert_int 1
    authentication {
        auth_type PASS
        auth_pass 1111
    }
    virtual_ipaddress {
        10.0.0.10
    }
}

systemctl enable keepalived

systemctl start keepalived
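Which master holds 10.0.0.10 at any moment follows from the priorities in the two configurations. The Python sketch below models that in the simplest way: among the nodes that are still sending VRRP advertisements, the one with the highest priority owns the VIP. It assumes keepalived's default preemption, since neither config sets nopreempt, and `vip_holder` is a made-up name.

```python
from typing import Optional


def vip_holder(priorities: dict, alive: set) -> Optional[str]:
    """Return the live node with the highest VRRP priority, or None if all are down."""
    candidates = {node: prio for node, prio in priorities.items() if node in alive}
    if not candidates:
        return None
    return max(candidates, key=candidates.get)


if __name__ == "__main__":
    priorities = {"master01": 100, "master02": 80}  # values from the configs above
    print(vip_holder(priorities, {"master01", "master02"}))
    print(vip_holder(priorities, {"master02"}))  # master01 is down
```

When master01 comes back it takes the VIP again, because its priority is higher and preemption is left at the default.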

8.5: Point kubelet and kube-proxy on all node machines at the api-server VIP

vim /etc/kubernetes/kubelet

KUBELET_API_SERVER="--api-servers=http://10.0.0.10:8080"

vim /etc/kubernetes/config

KUBE_MASTER="--master=http://10.0.0.10:8080"

systemctl restart kubelet.service kube-proxy.service