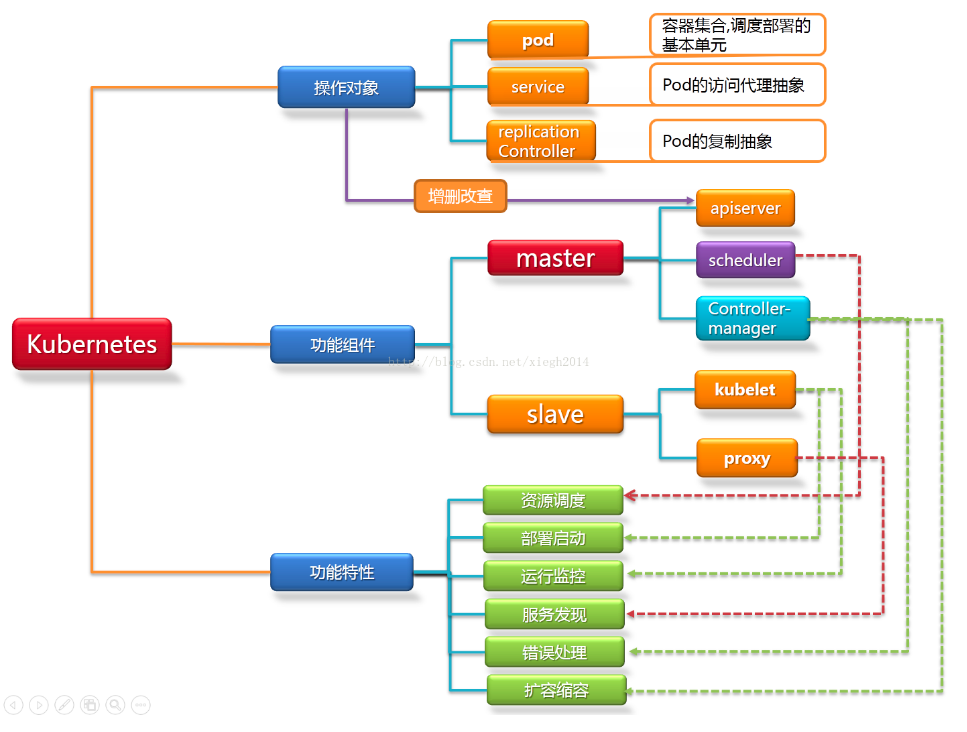

1. Architecture

1.1 Kubernetes architecture overview

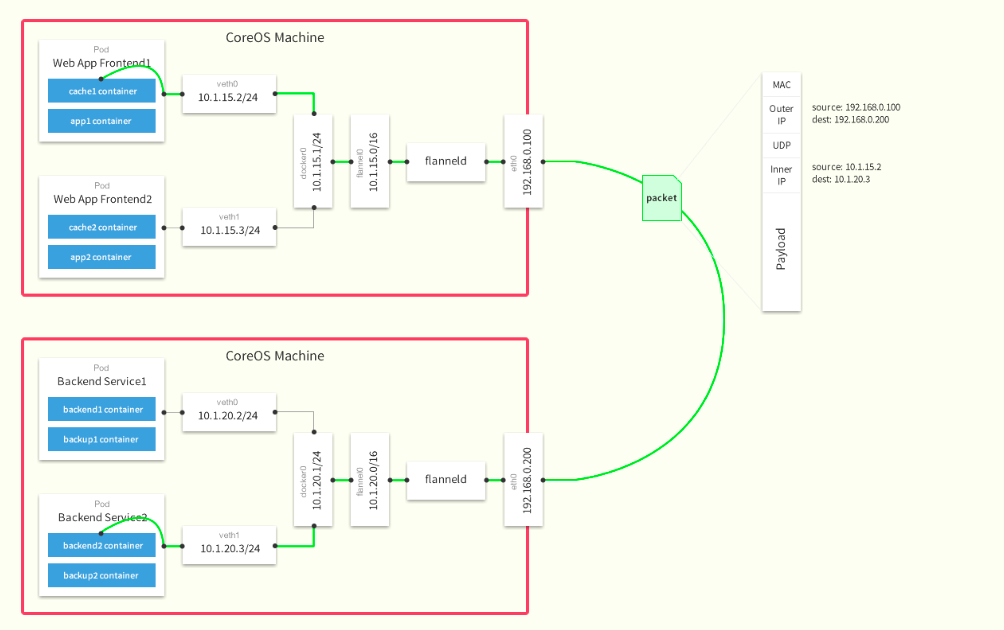

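1.2 Flannel network architecture diagram

What each cluster module does:

Master node: the master node consists mainly of four modules: APIServer, scheduler, controller-manager, and etcd.

APIServer: the APIServer exposes the Kubernetes API as a RESTful service. It is the single entry point for management commands: every create, delete, update, or query on a resource is handled by the APIServer, which then persists it to etcd. As the diagram shows, kubectl (the client tool shipped with Kubernetes, which internally just calls the Kubernetes API) talks directly to the APIServer.

scheduler: the scheduler assigns Pods to suitable Nodes. Treated as a black box, its input is a Pod plus a list of Nodes, and its output is a binding between that Pod and one Node. Kubernetes ships with scheduling algorithms and also exposes an interface, so users can define their own scheduling algorithm to suit their needs.

controller manager: if the APIServer does the front-office work, the controller manager handles the back office. Every resource has a corresponding controller, and the controller manager is responsible for managing those controllers. For example, when we create a Pod through the APIServer, the APIServer's job is done once the Pod has been created successfully; keeping the Pod in its desired state afterwards is the job of the corresponding controller.

etcd: etcd is a highly available key-value store. Kubernetes uses it to store the state of every resource, which is what backs the RESTful API.

Node: each Node consists mainly of three modules: kubelet, kube-proxy, and the container runtime (Docker in this deployment).

kube-proxy: this module implements service discovery and reverse proxying in Kubernetes. kube-proxy forwards TCP and UDP connections and, by default, distributes client traffic round-robin across the set of backend Pods behind a Service. For service discovery, kube-proxy watches Service and Endpoint objects for changes through the APIServer's watch mechanism and maintains a Service-to-Endpoint mapping, so changes to backend Pod IPs do not affect clients. kube-proxy also supports session affinity.

kubelet: the kubelet is the master's agent on each Node and the most important module on the Node. It maintains and manages all containers on that Node, except containers that were not created through Kubernetes, which it does not manage. In essence, it makes the running state of Pods match the desired state.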

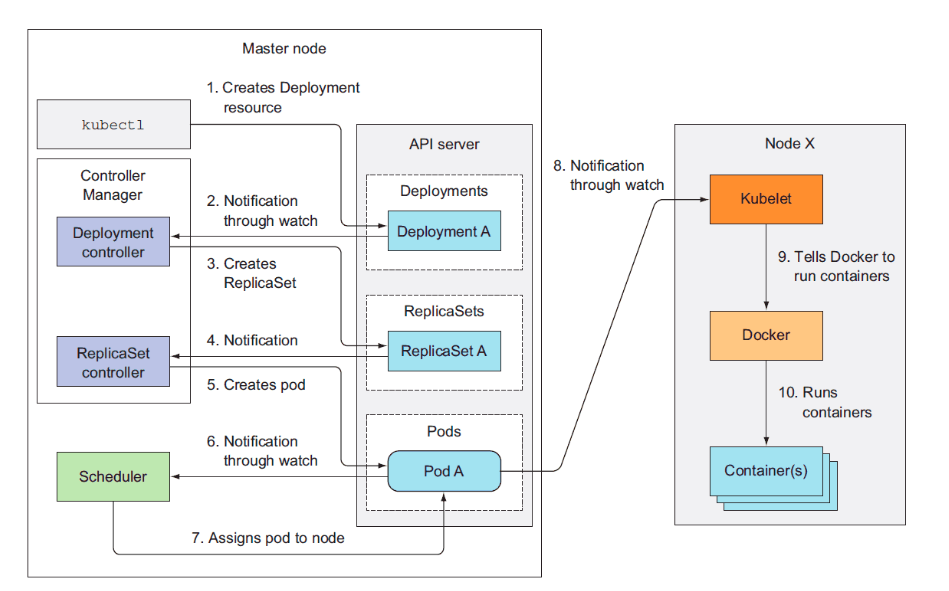

1.3 Kubernetes workflow

2. Environment

2.1 Deployment nodes

| Hostname | IP | Role | Deployed software |
|---|---|---|---|
| k8s-1 | 192.168.123.211 | master | apiserver, scheduler, controller-manager, etcd, flanneld |
| k8s-2 | 192.168.123.212 | node | kubelet, kube-proxy, etcd, flanneld |
| k8s-3 | 192.168.123.213 | node | kubelet, kube-proxy, etcd, flanneld |

2.2 Operating system

    # Identical on all three machines
    [root@k8s-1 /root] master# uname -r
    3.10.0-957.el7.x86_64
    [root@k8s-1 /root] master# cat /etc/redhat-release
    CentOS Linux release 7.6.1810 (Core)
    [root@k8s-1 /root] master# sestatus
    SELinux status: disabled

2.3 Package versions

| Package | Download URL |
|---|---|
| kubernetes-node-linux-amd64.tar.gz | https://dl.k8s.io/v1.15.1/kubernetes-node-linux-amd64.tar.gz |
| kubernetes-server-linux-amd64.tar.gz | https://dl.k8s.io/v1.15.1/kubernetes-server-linux-amd64.tar.gz |
| flannel-v0.11.0-linux-amd64.tar.gz | https://github.com/coreos/flannel/releases/download/v0.11.0/flannel-v0.11.0-linux-amd64.tar.gz |
| etcd-v3.3.10-linux-amd64.tar.gz | https://github.com/etcd-io/etcd/releases/download/v3.3.10/etcd-v3.3.10-linux-amd64.tar.gz |

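3. Kubernetes installation and configuration

3.1 Initialize the environment

3.1.1 Disable the firewall and SELinux

    systemctl stop firewalld && systemctl disable firewalld
    setenforce 0
    vi /etc/selinux/config
    # set the following line in the file:
    SELINUX=disabled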

3.1.2 Disable swap

    swapoff -a && sysctl -w vm.swappiness=0
    sed -i '/ swap / s/^\(.*\)$/#\1/g' /etc/fstab
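To confirm that swap is really off after this step, a small check along the following lines may help. It is only a sketch: `active_swap_count` is a hypothetical helper name, and it assumes the usual Linux `/proc/swaps` layout (one header line, then one line per active swap device).

```shell
# Hypothetical helper: count the active swap devices listed in a
# /proc/swaps-style file (the first line is a header and is skipped).
active_swap_count() {
  awk 'NR > 1 && NF > 0 {n++} END {print n + 0}' "${1:-/proc/swaps}"
}

# On a Linux host this should print 0 once swapoff -a has run.
if [ -r /proc/swaps ]; then
  active_swap_count
fi
```

A result of 0 means no swap device is active.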

3.1.3 Set the kernel parameters Docker requires

    cat > /etc/sysctl.d/kubernetes.conf <<EOF
    net.ipv4.ip_forward=1
    net.ipv4.tcp_tw_recycle=0
    vm.swappiness=0
    vm.overcommit_memory=1
    vm.panic_on_oom=0
    fs.inotify.max_user_watches=89100
    fs.file-max=52706963
    fs.nr_open=52706963
    net.ipv6.conf.all.disable_ipv6=1
    EOF
    sysctl -p /etc/sysctl.d/kubernetes.conf

3.1.4 Install Docker

    # Configure the yum repository
    cd /etc/yum.repos.d/
    wget https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo
    yum clean all ; yum repolist -y
    # Install Docker, version 18.06.2
    yum install docker-ce-18.06.2.ce-3.el7 -y
    # Start Docker
    systemctl start docker && systemctl enable docker

3.1.5 Create the directories

    # Directories for downloaded packages and certificate configs
    mkdir /data/{install,ssl_config} -p
    mkdir /data/ssl_config/{etcd,kubernetes} -p
    # Installation directories
    mkdir /cloud/k8s/etcd/{bin,cfg,ssl} -p
    mkdir /cloud/k8s/kubernetes/{bin,cfg,ssl} -p

3.1.6 Configure SSH mutual trust

    ssh-keygen
    ssh-copy-id k8s-1
    ssh-copy-id k8s-2
    ssh-copy-id k8s-3

3.1.7 Add the install directories to PATH

    vi /etc/profile
    export PATH=$PATH:/cloud/k8s/etcd/bin/:/cloud/k8s/kubernetes/bin/

3.2 Create the SSL certificates

3.2.1 Install and configure CFSSL

    wget https://pkg.cfssl.org/R1.2/cfssl_linux-amd64
    wget https://pkg.cfssl.org/R1.2/cfssljson_linux-amd64
    wget https://pkg.cfssl.org/R1.2/cfssl-certinfo_linux-amd64
    chmod +x cfssl_linux-amd64 cfssljson_linux-amd64 cfssl-certinfo_linux-amd64
    mv cfssl_linux-amd64 /usr/local/bin/cfssl
    mv cfssljson_linux-amd64 /usr/local/bin/cfssljson
    mv cfssl-certinfo_linux-amd64 /usr/bin/cfssl-certinfo

3.2.2 Create the etcd certificates

All of the following steps are run in /data/ssl_config/etcd/.

CA configuration for the etcd certificates

    cd /data/ssl_config/etcd/
    cat << EOF | tee ca-config.json
    {
      "signing": {
        "default": {
          "expiry": "87600h"
        },
        "profiles": {
          "www": {
            "expiry": "87600h",
            "usages": ["signing", "key encipherment", "server auth", "client auth"]
          }
        }
      }
    }
    EOF

Create the etcd CA certificate signing request

    cat << EOF | tee ca-csr.json
    {
      "CN": "etcd CA",
      "key": {
        "algo": "rsa",
        "size": 2048
      },
      "names": [
        {
          "C": "CN",
          "L": "Beijing",
          "ST": "Beijing"
        }
      ]
    }
    EOF

Create the etcd server certificate signing request

    cat << EOF | tee server-csr.json
    {
      "CN": "etcd",
      "hosts": [
        "k8s-3",
        "k8s-2",
        "k8s-1",
        "192.168.123.211",
        "192.168.123.212",
        "192.168.123.213"
      ],
      "key": {
        "algo": "rsa",
        "size": 2048
      },
      "names": [
        {
          "C": "CN",
          "L": "Beijing",
          "ST": "Beijing"
        }
      ]
    }
    EOF

Generate the etcd CA certificate, server certificate, and private keys

    cd /data/ssl_config/etcd/
    # Generate the CA certificate
    cfssl gencert -initca ca-csr.json | cfssljson -bare ca -
    # Generate the server certificate
    cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=www server-csr.json | cfssljson -bare server

3.2.3 Create the Kubernetes certificates

All of the following steps are run in /data/ssl_config/kubernetes/.

CA configuration for the Kubernetes certificates

    cd /data/ssl_config/kubernetes/
    cat << EOF | tee ca-config.json
    {
      "signing": {
        "default": {
          "expiry": "87600h"
        },
        "profiles": {
          "kubernetes": {
            "expiry": "87600h",
            "usages": ["signing", "key encipherment", "server auth", "client auth"]
          }
        }
      }
    }
    EOF

Create the CA certificate signing request

    cat << EOF | tee ca-csr.json
    {
      "CN": "kubernetes",
      "key": {
        "algo": "rsa",
        "size": 2048
      },
      "names": [
        {
          "C": "CN",
          "L": "Beijing",
          "ST": "Beijing",
          "O": "k8s",
          "OU": "System"
        }
      ]
    }
    EOF

Create the API server certificate signing request

    cat << EOF | tee server-csr.json
    {
      "CN": "kubernetes",
      "hosts": [
        "10.0.0.1",
        "127.0.0.1",
        "192.168.123.211",
        "k8s-1",
        "kubernetes",
        "kubernetes.default",
        "kubernetes.default.svc",
        "kubernetes.default.svc.cluster",
        "kubernetes.default.svc.cluster.local"
      ],
      "key": {
        "algo": "rsa",
        "size": 2048
      },
      "names": [
        {
          "C": "CN",
          "L": "Beijing",
          "ST": "Beijing",
          "O": "k8s",
          "OU": "System"
        }
      ]
    }
    EOF
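The hosts list includes 10.0.0.1 because kube-apiserver is reachable inside the cluster through the `kubernetes` Service, which receives the first address of the `--service-cluster-ip-range` (10.0.0.0/24 in the apiserver configuration used in this guide). If you pick a different service range, the address in the certificate has to change with it. The sketch below derives that address; `first_service_ip` is a hypothetical helper and assumes the CIDR's network address is already aligned to its prefix.

```shell
# Hypothetical helper: print the first usable address of an IPv4 CIDR
# whose network address is aligned (for example 10.0.0.0/24 -> 10.0.0.1).
first_service_ip() {
  net=${1%/*}          # drop the prefix length, e.g. 10.0.0.0
  last=${net##*.}      # last octet of the network address
  echo "${net%.*}.$((last + 1))"
}

first_service_ip 10.0.0.0/24
```

For the range used here this prints 10.0.0.1, the value listed in server-csr.json.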

Create the kube-proxy certificate signing request

    cat << EOF | tee kube-proxy-csr.json
    {
      "CN": "system:kube-proxy",
      "hosts": [],
      "key": {
        "algo": "rsa",
        "size": 2048
      },
      "names": [
        {
          "C": "CN",
          "L": "Beijing",
          "ST": "Beijing",
          "O": "k8s",
          "OU": "System"
        }
      ]
    }
    EOF

Generate the Kubernetes CA certificate, component certificates, and private keys

    # Generate the CA certificate
    cfssl gencert -initca ca-csr.json | cfssljson -bare ca -
    # Generate the api-server certificate
    cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes server-csr.json | cfssljson -bare server
    # Generate the kube-proxy certificate
    cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-proxy-csr.json | cfssljson -bare kube-proxy

3.3 Deploy etcd

3.3.1 Unpack the package

    cd /data/install/
    tar -xvf etcd-v3.3.10-linux-amd64.tar.gz
    cd etcd-v3.3.10-linux-amd64/
    cp etcd etcdctl /cloud/k8s/etcd/bin/

3.3.2 Edit the etcd configuration file

    vim /cloud/k8s/etcd/cfg/etcd
    #[Member]
    ETCD_NAME="etcd01"
    ETCD_DATA_DIR="/var/lib/etcd/default.etcd"
    ETCD_LISTEN_PEER_URLS="https://192.168.123.211:2380"
    ETCD_LISTEN_CLIENT_URLS="https://192.168.123.211:2379"
    #[Clustering]
    ETCD_INITIAL_ADVERTISE_PEER_URLS="https://192.168.123.211:2380"
    ETCD_ADVERTISE_CLIENT_URLS="https://192.168.123.211:2379"
    ETCD_INITIAL_CLUSTER="etcd01=https://192.168.123.211:2380,etcd02=https://192.168.123.212:2380,etcd03=https://192.168.123.213:2380"
    ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"
    ETCD_INITIAL_CLUSTER_STATE="new"

3.3.3 Create the etcd systemd unit file

    vim /usr/lib/systemd/system/etcd.service
    [Unit]
    Description=Etcd Server
    After=network.target
    After=network-online.target
    Wants=network-online.target
    [Service]
    Type=notify
    EnvironmentFile=/cloud/k8s/etcd/cfg/etcd
    ExecStart=/cloud/k8s/etcd/bin/etcd \
    --name=${ETCD_NAME} \
    --data-dir=${ETCD_DATA_DIR} \
    --listen-peer-urls=${ETCD_LISTEN_PEER_URLS} \
    --listen-client-urls=${ETCD_LISTEN_CLIENT_URLS},http://127.0.0.1:2379 \
    --advertise-client-urls=${ETCD_ADVERTISE_CLIENT_URLS} \
    --initial-advertise-peer-urls=${ETCD_INITIAL_ADVERTISE_PEER_URLS} \
    --initial-cluster=${ETCD_INITIAL_CLUSTER} \
    --initial-cluster-token=${ETCD_INITIAL_CLUSTER_TOKEN} \
    --initial-cluster-state=new \
    --cert-file=/cloud/k8s/etcd/ssl/server.pem \
    --key-file=/cloud/k8s/etcd/ssl/server-key.pem \
    --peer-cert-file=/cloud/k8s/etcd/ssl/server.pem \
    --peer-key-file=/cloud/k8s/etcd/ssl/server-key.pem \
    --trusted-ca-file=/cloud/k8s/etcd/ssl/ca.pem \
    --peer-trusted-ca-file=/cloud/k8s/etcd/ssl/ca.pem
    Restart=on-failure
    LimitNOFILE=65536
    [Install]
    WantedBy=multi-user.target

3.3.4 Install the certificate files

    cd /data/ssl_config/etcd/
    cp ca*pem server*pem /cloud/k8s/etcd/ssl

3.3.5 Copy the files to the two other nodes (k8s-2 and k8s-3)

    cd /cloud/k8s/
    scp -r etcd k8s-2:/cloud/k8s/
    scp -r etcd k8s-3:/cloud/k8s/
    scp /usr/lib/systemd/system/etcd.service k8s-2:/usr/lib/systemd/system/etcd.service
    scp /usr/lib/systemd/system/etcd.service k8s-3:/usr/lib/systemd/system/etcd.service

Edit the configuration file on the other two machines

    ### k8s-2
    cat /cloud/k8s/etcd/cfg/etcd
    #[Member]
    ETCD_NAME="etcd02"
    ETCD_DATA_DIR="/var/lib/etcd/default.etcd"
    ETCD_LISTEN_PEER_URLS="https://192.168.123.212:2380"
    ETCD_LISTEN_CLIENT_URLS="https://192.168.123.212:2379"
    #[Clustering]
    ETCD_INITIAL_ADVERTISE_PEER_URLS="https://192.168.123.212:2380"
    ETCD_ADVERTISE_CLIENT_URLS="https://192.168.123.212:2379"
    ETCD_INITIAL_CLUSTER="etcd01=https://192.168.123.211:2380,etcd02=https://192.168.123.212:2380,etcd03=https://192.168.123.213:2380"
    ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"
    ETCD_INITIAL_CLUSTER_STATE="new"

    ### k8s-3
    cat /cloud/k8s/etcd/cfg/etcd
    #[Member]
    ETCD_NAME="etcd03"
    ETCD_DATA_DIR="/var/lib/etcd/default.etcd"
    ETCD_LISTEN_PEER_URLS="https://192.168.123.213:2380"
    ETCD_LISTEN_CLIENT_URLS="https://192.168.123.213:2379"
    #[Clustering]
    ETCD_INITIAL_ADVERTISE_PEER_URLS="https://192.168.123.213:2380"
    ETCD_ADVERTISE_CLIENT_URLS="https://192.168.123.213:2379"
    ETCD_INITIAL_CLUSTER="etcd01=https://192.168.123.211:2380,etcd02=https://192.168.123.212:2380,etcd03=https://192.168.123.213:2380"
    ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"
    ETCD_INITIAL_CLUSTER_STATE="new"
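The three etcd configuration files differ only in the member name and the node's own IP address, so rather than editing each copy by hand you could generate them. The following is a minimal sketch of that idea; `render_etcd_cfg` and the `CLUSTER` variable are hypothetical names, not part of etcd.

```shell
# Shared member list, identical on every node.
CLUSTER="etcd01=https://192.168.123.211:2380,etcd02=https://192.168.123.212:2380,etcd03=https://192.168.123.213:2380"

# Hypothetical helper: print the etcd environment file for one member.
# Usage: render_etcd_cfg <member-name> <member-ip>
render_etcd_cfg() {
  name=$1
  ip=$2
  cat <<EOF
#[Member]
ETCD_NAME="${name}"
ETCD_DATA_DIR="/var/lib/etcd/default.etcd"
ETCD_LISTEN_PEER_URLS="https://${ip}:2380"
ETCD_LISTEN_CLIENT_URLS="https://${ip}:2379"
#[Clustering]
ETCD_INITIAL_ADVERTISE_PEER_URLS="https://${ip}:2380"
ETCD_ADVERTISE_CLIENT_URLS="https://${ip}:2379"
ETCD_INITIAL_CLUSTER="${CLUSTER}"
ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"
ETCD_INITIAL_CLUSTER_STATE="new"
EOF
}

# Print the file for k8s-2; on that host, redirect it to /cloud/k8s/etcd/cfg/etcd.
render_etcd_cfg etcd02 192.168.123.212
```

Generating the files this way keeps ETCD_INITIAL_CLUSTER byte-for-byte identical across members, which is the part most easily broken by manual edits.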

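3.3.6 Start the etcd service

    systemctl daemon-reload
    systemctl enable etcd
    systemctl start etcd
    # When bringing up the etcd cluster, start at least two nodes at the same time;
    # a single node cannot start normally on its own.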

3.3.7 Check that the service is healthy

    [root@k8s-1 /cloud/k8s/etcd/bin] master# etcdctl --ca-file=/cloud/k8s/etcd/ssl/ca.pem --cert-file=/cloud/k8s/etcd/ssl/server.pem --key-file=/cloud/k8s/etcd/ssl/server-key.pem --endpoints="https://192.168.123.211:2379,https://192.168.123.212:2379,https://192.168.123.213:2379" cluster-health
    member 4c6bfb873a73368c is healthy: got healthy result from https://192.168.123.213:2379
    member 622f71dbd55b34ce is healthy: got healthy result from https://192.168.123.211:2379
    member f14004aa5403b07d is healthy: got healthy result from https://192.168.123.212:2379
    cluster is healthy

3.4 Deploy the Flannel network

3.4.1 Write the cluster Pod network into etcd

    # Run from the certificate directory so the relative .pem paths resolve
    cd /cloud/k8s/etcd/ssl
    etcdctl --ca-file=ca.pem --cert-file=server.pem --key-file=server-key.pem \
      --endpoints="https://192.168.123.211:2379,https://192.168.123.212:2379,https://192.168.123.213:2379" \
      set /coreos.com/network/config \
      '{ "Network": "172.18.0.0/16", "Backend": {"Type": "vxlan"}}'

The Pod network written here (${CLUSTER_CIDR}, 172.18.0.0/16 in this setup) must be a /16 network and must match the value of kube-controller-manager's --cluster-cidr flag.
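A quick sanity check before writing the key can catch a mismatch between the flannel network and the controller manager's Pod range. The sketch below is illustrative only: `CLUSTER_CIDR` and `FLANNEL_CONFIG` are assumed variable names holding the two values you intend to use, and the JSON is parsed with sed simply to avoid depending on jq.

```shell
CLUSTER_CIDR="172.18.0.0/16"   # assumed: the value passed as --cluster-cidr
FLANNEL_CONFIG='{ "Network": "172.18.0.0/16", "Backend": {"Type": "vxlan"}}'

# Extract the "Network" value from the flannel JSON.
network=$(printf '%s\n' "$FLANNEL_CONFIG" | sed -n 's/.*"Network": *"\([^"]*\)".*/\1/p')

# The network must equal the cluster CIDR and use a /16 prefix.
if [ "$network" = "$CLUSTER_CIDR" ] && [ "${network#*/}" = "16" ]; then
  echo "ok: flannel network ${network} matches --cluster-cidr"
else
  echo "mismatch: flannel=${network} cluster-cidr=${CLUSTER_CIDR}" >&2
fi
```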

3.4.2 Install flannel

    cd /data/install/
    tar xf flannel-v0.11.0-linux-amd64.tar.gz
    mv flanneld mk-docker-opts.sh /cloud/k8s/kubernetes/bin/

3.4.3 Configure flannel

    vim /cloud/k8s/kubernetes/cfg/flanneld
    FLANNEL_OPTIONS="--etcd-endpoints=https://192.168.123.211:2379,https://192.168.123.212:2379,https://192.168.123.213:2379 -etcd-cafile=/cloud/k8s/etcd/ssl/ca.pem -etcd-certfile=/cloud/k8s/etcd/ssl/server.pem -etcd-keyfile=/cloud/k8s/etcd/ssl/server-key.pem"

3.4.4 Create the flanneld systemd unit file

    vim /usr/lib/systemd/system/flanneld.service
    [Unit]
    Description=Flanneld overlay address etcd agent
    After=network-online.target network.target
    Before=docker.service
    [Service]
    Type=notify
    EnvironmentFile=/cloud/k8s/kubernetes/cfg/flanneld
    ExecStart=/cloud/k8s/kubernetes/bin/flanneld --ip-masq $FLANNEL_OPTIONS
    ExecStartPost=/cloud/k8s/kubernetes/bin/mk-docker-opts.sh -k DOCKER_NETWORK_OPTIONS -d /run/flannel/subnet.env
    Restart=on-failure
    [Install]
    WantedBy=multi-user.target

The mk-docker-opts.sh script writes the Pod subnet allocated to flanneld into the file given by -d, here /run/flannel/subnet.env. When Docker starts later, it uses the environment variable in this file to configure the docker0 bridge.
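To make the hand-off between flanneld and Docker concrete, the sketch below mimics what happens when the Docker unit loads that file and expands `$DOCKER_NETWORK_OPTIONS`. The file contents are an assumed sample, not captured output: the real values depend on the subnet flanneld leases on each node, and the ones used here are modeled on the 172.18.30.1/24 docker0 address and 1450 MTU seen on k8s-1 in this guide.

```shell
# Assumed stand-in for /run/flannel/subnet.env as written by
# mk-docker-opts.sh -k DOCKER_NETWORK_OPTIONS (sample values only).
sample_env=$(mktemp)
cat > "$sample_env" <<'EOF'
DOCKER_NETWORK_OPTIONS=" --bip=172.18.30.1/24 --ip-masq=false --mtu=1450"
EOF

# Load the variable the same way a shell would, then show the resulting
# dockerd command line that the Docker unit's ExecStart expands to.
. "$sample_env"
echo "/usr/bin/dockerd${DOCKER_NETWORK_OPTIONS}"
rm -f "$sample_env"
```

If docker0 does not end up inside the flannel subnet after a restart, comparing the real file against this shape is a reasonable first debugging step.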

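flanneld communicates with other nodes over the interface that holds the system default route. On nodes with several network interfaces (for example an internal and a public network), use the -iface flag to pick the interface, for example -iface=eth0. flanneld must run with root privileges.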

3.4.5 Configure Docker to start on the allocated subnet

    vim /usr/lib/systemd/system/docker.service
    [Unit]
    Description=Docker Application Container Engine
    Documentation=https://docs.docker.com
    After=network-online.target firewalld.service
    Wants=network-online.target
    [Service]
    Type=notify
    EnvironmentFile=/run/flannel/subnet.env
    ExecStart=/usr/bin/dockerd $DOCKER_NETWORK_OPTIONS
    ExecReload=/bin/kill -s HUP $MAINPID
    LimitNOFILE=infinity
    LimitNPROC=infinity
    LimitCORE=infinity
    TimeoutStartSec=0
    Delegate=yes
    KillMode=process
    Restart=on-failure
    StartLimitBurst=3
    StartLimitInterval=60s
    [Install]
    WantedBy=multi-user.target

3.4.6 Copy the flanneld files and systemd units to all nodes

    cd /cloud/k8s/
    scp -r kubernetes k8s-2:/cloud/k8s/
    scp -r kubernetes k8s-3:/cloud/k8s/
    scp /cloud/k8s/kubernetes/cfg/flanneld k8s-2:/cloud/k8s/kubernetes/cfg/flanneld
    scp /cloud/k8s/kubernetes/cfg/flanneld k8s-3:/cloud/k8s/kubernetes/cfg/flanneld
    scp /usr/lib/systemd/system/docker.service k8s-2:/usr/lib/systemd/system/docker.service
    scp /usr/lib/systemd/system/docker.service k8s-3:/usr/lib/systemd/system/docker.service
    scp /usr/lib/systemd/system/flanneld.service k8s-2:/usr/lib/systemd/system/flanneld.service
    scp /usr/lib/systemd/system/flanneld.service k8s-3:/usr/lib/systemd/system/flanneld.service
    # Start the services (run on every node)
    systemctl daemon-reload
    systemctl start flanneld
    systemctl enable flanneld
    systemctl restart docker

3.4.7 Verify the flannel network configuration

    [root@k8s-1 /data/install] master# ip a
    1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000
        link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00
        inet 127.0.0.1/8 scope host lo
           valid_lft forever preferred_lft forever
    2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc mq state UP group default qlen 1000
        link/ether 00:0c:29:33:de:53 brd ff:ff:ff:ff:ff:ff
        inet 192.168.123.211/24 brd 192.168.123.255 scope global noprefixroute eth0
           valid_lft forever preferred_lft forever
    3: flannel.1: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1450 qdisc noqueue state UNKNOWN group default
        link/ether 1a:6f:5a:0b:c7:18 brd ff:ff:ff:ff:ff:ff
        inet 172.18.30.0/32 scope global flannel.1
           valid_lft forever preferred_lft forever
    4: docker0: <NO-CARRIER,BROADCAST,MULTICAST,UP> mtu 1500 qdisc noqueue state DOWN group default
        link/ether 02:42:5c:d0:df:e6 brd ff:ff:ff:ff:ff:ff
        inet 172.18.30.1/24 brd 172.18.30.255 scope global docker0
           valid_lft forever preferred_lft forever

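3.5 Deploy the master node

The Kubernetes master node runs the following components:

- kube-apiserver
- kube-scheduler
- kube-controller-manager

kube-scheduler and kube-controller-manager can run in cluster mode: leader election picks one working process, and the other processes stay blocked.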

3.5.1 Install the master node binaries

    cd /data/install/
    tar xf kubernetes-server-linux-amd64.tar.gz
    cd kubernetes/server/bin/
    cp kube-scheduler kube-apiserver kube-controller-manager kubectl /cloud/k8s/kubernetes/bin/

3.5.2 Install the Kubernetes certificates

    cd /data/ssl_config/kubernetes/
    cp *pem /cloud/k8s/kubernetes/ssl/

3.5.3 Deploy the kube-apiserver component

Create the TLS bootstrapping token

    # head -c 16 /dev/urandom | od -An -t x | tr -d ' '
    449bbeb0ea7e50f321087a123a509a19
    # vim /cloud/k8s/kubernetes/cfg/token.csv
    449bbeb0ea7e50f321087a123a509a19,kubelet-bootstrap,10001,"system:kubelet-bootstrap"
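The two manual steps above can be combined so the token never has to be copied by hand. This is a small sketch under that assumption; the variable names `BOOTSTRAP_TOKEN` and `TOKEN_LINE` are illustrative, and the line it prints has the same four columns as the token.csv entry shown above (token, user, UID, quoted group).

```shell
# Generate 16 random bytes as a 32-character hex string
# (same pipeline as above, with the trailing newline stripped as well).
BOOTSTRAP_TOKEN=$(head -c 16 /dev/urandom | od -An -t x | tr -d ' \n')

# Build the token.csv entry: token,user,uid,"group"
TOKEN_LINE="${BOOTSTRAP_TOKEN},kubelet-bootstrap,10001,\"system:kubelet-bootstrap\""

# Print the entry; on the master, redirect it to /cloud/k8s/kubernetes/cfg/token.csv.
echo "${TOKEN_LINE}"
```

The same `BOOTSTRAP_TOKEN` value must be reused later when the kubelet bootstrap kubeconfig is created.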

Create the apiserver configuration file

    vim /cloud/k8s/kubernetes/cfg/kube-apiserver
    KUBE_APISERVER_OPTS="--logtostderr=true \
    --v=4 \
    --etcd-servers=https://192.168.123.211:2379,https://192.168.123.212:2379,https://192.168.123.213:2379 \
    --bind-address=192.168.123.211 \
    --secure-port=6443 \
    --advertise-address=192.168.123.211 \
    --allow-privileged=true \
    --service-cluster-ip-range=10.0.0.0/24 \
    --enable-admission-plugins=NamespaceLifecycle,LimitRanger,SecurityContextDeny,ServiceAccount,ResourceQuota,NodeRestriction \
    --authorization-mode=RBAC,Node \
    --enable-bootstrap-token-auth \
    --token-auth-file=/cloud/k8s/kubernetes/cfg/token.csv \
    --service-node-port-range=30000-50000 \
    --tls-cert-file=/cloud/k8s/kubernetes/ssl/server.pem \
    --tls-private-key-file=/cloud/k8s/kubernetes/ssl/server-key.pem \
    --client-ca-file=/cloud/k8s/kubernetes/ssl/ca.pem \
    --service-account-key-file=/cloud/k8s/kubernetes/ssl/ca-key.pem \
    --etcd-cafile=/cloud/k8s/etcd/ssl/ca.pem \
    --etcd-certfile=/cloud/k8s/etcd/ssl/server.pem \
    --etcd-keyfile=/cloud/k8s/etcd/ssl/server-key.pem"

Create the kube-apiserver systemd unit file

    vim /usr/lib/systemd/system/kube-apiserver.service
    [Unit]
    Description=Kubernetes API Server
    Documentation=https://github.com/kubernetes/kubernetes
    [Service]
    EnvironmentFile=/cloud/k8s/kubernetes/cfg/kube-apiserver
    ExecStart=/cloud/k8s/kubernetes/bin/kube-apiserver $KUBE_APISERVER_OPTS
    Restart=on-failure
    [Install]
    WantedBy=multi-user.target

Start the service

    systemctl daemon-reload
    systemctl enable kube-apiserver
    systemctl restart kube-apiserver

Check that the apiserver is running

    [root@k8s-1 /data/ssl_config/kubernetes] master# ps -ef |grep kube-apiserver
    root 5475 1 2 Jul30 ? 01:57:41 /cloud/k8s/kubernetes/bin/kube-apiserver --logtostderr=true --v=4 --etcd-servers=https://192.168.123.211:2379,https://192.168.123.212:2379,https://192.168.123.213:2379 --bind-address=192.168.123.211 --secure-port=6443 --advertise-address=192.168.123.211 --allow-privileged=true --service-cluster-ip-range=10.0.0.0/24 --enable-admission-plugins=NamespaceLifecycle,LimitRanger,SecurityContextDeny,ServiceAccount,ResourceQuota,NodeRestriction --authorization-mode=RBAC,Node --enable-bootstrap-token-auth --token-auth-file=/cloud/k8s/kubernetes/cfg/token.csv --service-node-port-range=30000-50000 --tls-cert-file=/cloud/k8s/kubernetes/ssl/server.pem --tls-private-key-file=/cloud/k8s/kubernetes/ssl/server-key.pem --client-ca-file=/cloud/k8s/kubernetes/ssl/ca.pem --service-account-key-file=/cloud/k8s/kubernetes/ssl/ca-key.pem --etcd-cafile=/cloud/k8s/etcd/ssl/ca.pem --etcd-certfile=/cloud/k8s/etcd/ssl/server.pem --etcd-keyfile=/cloud/k8s/etcd/ssl/server-key.pem
    root 6886 2930 0 19:49 pts/0 00:00:00 grep --color=auto kube-apiserver

3.5.4 Deploy kube-scheduler

Create the kube-scheduler configuration file

    vim /cloud/k8s/kubernetes/cfg/kube-scheduler
    KUBE_SCHEDULER_OPTS="--logtostderr=true --v=4 --master=127.0.0.1:8080 --leader-elect"

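--address: the address on which kube-scheduler serves HTTP /metrics requests (127.0.0.1, port 10251).

--kubeconfig: path to the kubeconfig file that kube-scheduler uses to connect to and authenticate against kube-apiserver.

--leader-elect=true: cluster mode with leader election enabled; the node elected leader does the work while the other nodes stay blocked.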

Create the kube-scheduler systemd unit file

    vim /usr/lib/systemd/system/kube-scheduler.service
    [Unit]
    Description=Kubernetes Scheduler
    Documentation=https://github.com/kubernetes/kubernetes
    [Service]
    EnvironmentFile=/cloud/k8s/kubernetes/cfg/kube-scheduler
    ExecStart=/cloud/k8s/kubernetes/bin/kube-scheduler $KUBE_SCHEDULER_OPTS
    Restart=on-failure
    [Install]
    WantedBy=multi-user.target

Start the kube-scheduler service

    systemctl daemon-reload
    systemctl enable kube-scheduler.service
    systemctl restart kube-scheduler.service

Check that kube-scheduler is running

    [root@k8s-1 /data/ssl_config/kubernetes] master# ps -ef |grep kube-scheduler
    root 7269 2930 0 19:54 pts/0 00:00:00 grep --color=auto kube-scheduler
    root 13970 1 0 Aug02 ? 00:01:21 /cloud/k8s/kubernetes/bin/kube-scheduler --logtostderr=true --v=4 --master=127.0.0.1:8080 --leader-elect
    [root@k8s-1 /data/ssl_config/kubernetes] master# systemctl status kube-scheduler
    ● kube-scheduler.service - Kubernetes Scheduler
       Loaded: loaded (/usr/lib/systemd/system/kube-scheduler.service; enabled; vendor preset: disabled)
       Active: active (running) since Fri 2019-08-02 18:33:11 CST; 1 day 1h ago
         Docs: https://github.com/kubernetes/kubernetes
     Main PID: 13970 (kube-scheduler)
        Tasks: 10
       Memory: 19.1M
       CGroup: /system.slice/kube-scheduler.service
               └─13970 /cloud/k8s/kubernetes/bin/kube-scheduler --logtostderr=tru...
    Aug 03 19:46:52 k8s-1 kube-scheduler[13970]: I0803 19:46:52.580662 13970 ...d
    Aug 03 19:47:03 k8s-1 kube-scheduler[13970]: I0803 19:47:03.578985 13970 ...d
    Aug 03 19:47:03 k8s-1 kube-scheduler[13970]: I0803 19:47:03.583437 13970 ...d
    Aug 03 19:48:31 k8s-1 kube-scheduler[13970]: I0803 19:48:31.637472 13970 ...d
    Aug 03 19:48:48 k8s-1 kube-scheduler[13970]: I0803 19:48:48.617124 13970 ...d
    Aug 03 19:50:00 k8s-1 kube-scheduler[13970]: I0803 19:50:00.589103 13970 ...d
    Aug 03 19:50:18 k8s-1 kube-scheduler[13970]: I0803 19:50:18.616574 13970 ...d
    Aug 03 19:52:15 k8s-1 kube-scheduler[13970]: I0803 19:52:15.629976 13970 ...d
    Aug 03 19:52:45 k8s-1 kube-scheduler[13970]: I0803 19:52:45.580103 13970 ...d
    Aug 03 19:53:56 k8s-1 kube-scheduler[13970]: I0803 19:53:56.601687 13970 ...d
    Hint: Some lines were ellipsized, use -l to show in full.

3.5.5 Deploy kube-controller-manager

Create the kube-controller-manager configuration file

    vim /cloud/k8s/kubernetes/cfg/kube-controller-manager
    KUBE_CONTROLLER_MANAGER_OPTS="--logtostderr=true \
    --v=4 \
    --master=127.0.0.1:8080 \
    --leader-elect=true \
    --address=127.0.0.1 \
    --service-cluster-ip-range=10.0.0.0/24 \
    --cluster-name=kubernetes \
    --cluster-signing-cert-file=/cloud/k8s/kubernetes/ssl/ca.pem \
    --cluster-signing-key-file=/cloud/k8s/kubernetes/ssl/ca-key.pem \
    --root-ca-file=/cloud/k8s/kubernetes/ssl/ca.pem \
    --service-account-private-key-file=/cloud/k8s/kubernetes/ssl/ca-key.pem"

Create the kube-controller-manager systemd unit file

    vim /usr/lib/systemd/system/kube-controller-manager.service
    [Unit]
    Description=Kubernetes Controller Manager
    Documentation=https://github.com/kubernetes/kubernetes
    [Service]
    EnvironmentFile=/cloud/k8s/kubernetes/cfg/kube-controller-manager
    ExecStart=/cloud/k8s/kubernetes/bin/kube-controller-manager $KUBE_CONTROLLER_MANAGER_OPTS
    Restart=on-failure
    [Install]
    WantedBy=multi-user.target

Start the service

    systemctl daemon-reload
    systemctl enable kube-controller-manager
    systemctl restart kube-controller-manager

Check that kube-controller-manager is running

    [root@k8s-1 /data/ssl_config/kubernetes] master# ps -ef |grep kube-controller-manager
    root 7759 2930 0 19:59 pts/0 00:00:00 grep --color=auto kube-controller-manager
    root 13972 1 0 Aug02 ? 00:10:56 /cloud/k8s/kubernetes/bin/kube-controller-manager --logtostderr=true --v=4 --master=127.0.0.1:8080 --leader-elect=true --address=127.0.0.1 --service-cluster-ip-range=10.0.0.0/24 --cluster-name=kubernetes --cluster-signing-cert-file=/cloud/k8s/kubernetes/ssl/ca.pem --cluster-signing-key-file=/cloud/k8s/kubernetes/ssl/ca-key.pem --root-ca-file=/cloud/k8s/kubernetes/ssl/ca.pem --service-account-private-key-file=/cloud/k8s/kubernetes/ssl/ca-key.pem
    [root@k8s-1 /data/ssl_config/kubernetes] master# systemctl status kube-controller-manager
    ● kube-controller-manager.service - Kubernetes Controller Manager
       Loaded: loaded (/usr/lib/systemd/system/kube-controller-manager.service; enabled; vendor preset: disabled)
       Active: active (running) since Fri 2019-08-02 18:33:13 CST; 1 day 1h ago
         Docs: https://github.com/kubernetes/kubernetes
     Main PID: 13972 (kube-controller)
        Tasks: 10
       Memory: 51.9M
       CGroup: /system.slice/kube-controller-manager.service
               └─13972 /cloud/k8s/kubernetes/bin/kube-controller-manager --logtos...
    Aug 03 19:59:48 k8s-1 kube-controller-manager[13972]: I0803 19:59:48.522342 ...
    Aug 03 19:59:48 k8s-1 kube-controller-manager[13972]: I0803 19:59:48.526381 ...
    Aug 03 19:59:50 k8s-1 kube-controller-manager[13972]: I0803 19:59:50.211112 ...
    Aug 03 19:59:50 k8s-1 kube-controller-manager[13972]: I0803 19:59:50.455555 ...
    Aug 03 19:59:50 k8s-1 kube-controller-manager[13972]: I0803 19:59:50.738397 ...
    Aug 03 19:59:50 k8s-1 kube-controller-manager[13972]: I0803 19:59:50.767332 ...
    Aug 03 19:59:51 k8s-1 kube-controller-manager[13972]: I0803 19:59:51.502835 ...
    Aug 03 19:59:53 k8s-1 kube-controller-manager[13972]: I0803 19:59:53.567923 ...
    Aug 03 19:59:53 k8s-1 kube-controller-manager[13972]: I0803 19:59:53.567955 ...
    Aug 03 19:59:54 k8s-1 kube-controller-manager[13972]: I0803 19:59:54.321341 ...
    Hint: Some lines were ellipsized, use -l to show in full.

3.5.6 Check the master cluster status

    [root@k8s-1 /root] master# kubectl get cs,nodes
    NAME                                 STATUS    MESSAGE             ERROR
    componentstatus/controller-manager   Healthy   ok
    componentstatus/scheduler            Healthy   ok
    componentstatus/etcd-2               Healthy   {"health":"true"}
    componentstatus/etcd-1               Healthy   {"health":"true"}
    componentstatus/etcd-0               Healthy   {"health":"true"}

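3.6 Deploy the worker nodes

Kubernetes worker nodes run the following components:

- docker
- kubelet
- kube-proxy

3.6.1 Deploy the kubelet component

The kubelet runs on every worker node. It receives requests from kube-apiserver, manages Pod containers, and executes interactive commands such as exec, run, and logs.

On startup, the kubelet automatically registers the node with kube-apiserver, and its built-in cAdvisor collects and monitors the node's resource usage. For security, this document enables only the secure port that accepts HTTPS requests, authenticating and authorizing each request and rejecting unauthorized access (for example from apiserver or heapster).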

Copy the binaries to the worker nodes

    cd /data/install/kubernetes/server/bin/
    scp kubelet kube-proxy k8s-2:/cloud/k8s/kubernetes/bin/
    scp kubelet kube-proxy k8s-3:/cloud/k8s/kubernetes/bin/

Create the kubelet bootstrap.kubeconfig file

    cd /data/ssl_config/kubernetes/
    vim environment.sh
    # Create the kubelet bootstrapping kubeconfig
    BOOTSTRAP_TOKEN=449bbeb0ea7e50f321087a123a509a19
    KUBE_APISERVER="https://192.168.123.211:6443"
    # Set cluster parameters
    kubectl config set-cluster kubernetes \
      --certificate-authority=./ca.pem \
      --embed-certs=true \
      --server=${KUBE_APISERVER} \
      --kubeconfig=bootstrap.kubeconfig
    # Set client authentication parameters
    kubectl config set-credentials kubelet-bootstrap \
      --token=${BOOTSTRAP_TOKEN} \
      --kubeconfig=bootstrap.kubeconfig
    # Set context parameters
    kubectl config set-context default \
      --cluster=kubernetes \
      --user=kubelet-bootstrap \
      --kubeconfig=bootstrap.kubeconfig
    # Set the default context
    kubectl config use-context default --kubeconfig=bootstrap.kubeconfig

Run bash environment.sh to generate the bootstrap.kubeconfig file.

Create the kubelet.kubeconfig file

    vim envkubelet.kubeconfig.sh
    # Create the kubelet kubeconfig
    BOOTSTRAP_TOKEN=449bbeb0ea7e50f321087a123a509a19
    KUBE_APISERVER="https://192.168.123.211:6443"
    # Set cluster parameters
    kubectl config set-cluster kubernetes \
      --certificate-authority=./ca.pem \
      --embed-certs=true \
      --server=${KUBE_APISERVER} \
      --kubeconfig=kubelet.kubeconfig
    # Set client authentication parameters
    kubectl config set-credentials kubelet \
      --token=${BOOTSTRAP_TOKEN} \
      --kubeconfig=kubelet.kubeconfig
    # Set context parameters
    kubectl config set-context default \
      --cluster=kubernetes \
      --user=kubelet \
      --kubeconfig=kubelet.kubeconfig
    # Set the default context
    kubectl config use-context default --kubeconfig=kubelet.kubeconfig

Create the kube-proxy kubeconfig file

    vim env_proxy.sh
    # Create the kube-proxy kubeconfig file
    BOOTSTRAP_TOKEN=449bbeb0ea7e50f321087a123a509a19
    KUBE_APISERVER="https://192.168.123.211:6443"
    kubectl config set-cluster kubernetes \
      --certificate-authority=./ca.pem \
      --embed-certs=true \
      --server=${KUBE_APISERVER} \
      --kubeconfig=kube-proxy.kubeconfig
    kubectl config set-credentials kube-proxy \
      --client-certificate=./kube-proxy.pem \
      --client-key=./kube-proxy-key.pem \
      --embed-certs=true \
      --kubeconfig=kube-proxy.kubeconfig
    kubectl config set-context default \
      --cluster=kubernetes \
      --user=kube-proxy \
      --kubeconfig=kube-proxy.kubeconfig
    kubectl config use-context default --kubeconfig=kube-proxy.kubeconfig

Copy the bootstrap.kubeconfig and kube-proxy.kubeconfig files to all worker nodes

    scp -rp bootstrap.kubeconfig kube-proxy.kubeconfig k8s-2:/cloud/k8s/kubernetes/cfg/
    scp -rp bootstrap.kubeconfig kube-proxy.kubeconfig k8s-3:/cloud/k8s/kubernetes/cfg/

3.6.2 Create the kubelet configuration files on all worker nodes

Create the kubelet parameter configuration template

    [root@k8s-2 /root] node# cat /cloud/k8s/kubernetes/cfg/kubelet.config
    kind: KubeletConfiguration
    apiVersion: kubelet.config.k8s.io/v1beta1
    address: 192.168.123.212
    port: 10250
    readOnlyPort: 10255
    cgroupDriver: cgroupfs
    clusterDomain: cluster.local.
    failSwapOn: false
    authentication:
      anonymous:
        enabled: true

Create the kubelet options file

    vim /cloud/k8s/kubernetes/cfg/kubelet
    KUBELET_OPTS="--logtostderr=true \
    --v=4 \
    --hostname-override=k8s-2 \
    --kubeconfig=/cloud/k8s/kubernetes/cfg/kubelet.kubeconfig \
    --bootstrap-kubeconfig=/cloud/k8s/kubernetes/cfg/bootstrap.kubeconfig \
    --config=/cloud/k8s/kubernetes/cfg/kubelet.config \
    --cert-dir=/cloud/k8s/kubernetes/ssl \
    --pod-infra-container-image=registry.cn-hangzhou.aliyuncs.com/google-containers/pause-amd64:3.0"

Create the kubelet systemd unit file

    vim /usr/lib/systemd/system/kubelet.service
    [Unit]
    Description=Kubernetes Kubelet
    After=docker.service
    Requires=docker.service
    [Service]
    EnvironmentFile=/cloud/k8s/kubernetes/cfg/kubelet
    ExecStart=/cloud/k8s/kubernetes/bin/kubelet $KUBELET_OPTS
    Restart=on-failure
    KillMode=process
    [Install]
    WantedBy=multi-user.target

Bind the kubelet-bootstrap user to the system cluster role

    kubectl create clusterrolebinding kubelet-bootstrap \
      --clusterrole=system:node-bootstrapper \
      --user=kubelet-bootstrap

3.6.3 Start the kubelet service

    systemctl daemon-reload
    systemctl enable kubelet
    systemctl restart kubelet

3.6.4 Approve the kubelet CSR requests

    kubectl get csr
    kubectl certificate approve $NAME
    kubectl get csr
    # Done once the CSR status changes to Approved,Issued

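- Requesting User: the user that submitted the CSR; kube-apiserver authenticates and authorizes it.

- Subject: the certificate information to be signed.

- The certificate's CN is system:node:<node name> (for example system:node:k8s-2) and its Organization is system:nodes; kube-apiserver's Node authorization mode grants the corresponding permissions to this certificate.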
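With more than a couple of nodes, approving each CSR by name gets tedious. One possible shortcut, shown here as a sketch rather than a recommended practice, is to approve everything still pending. `approve_pending_csrs` is a hypothetical helper; it assumes the condition (Pending / Approved,Issued) is the last column of `kubectl get csr`, as in the v1.15 output, and that you trust every node currently requesting a certificate.

```shell
# Hypothetical helper: approve every CSR whose last column reads "Pending".
approve_pending_csrs() {
  kubectl get csr --no-headers 2>/dev/null |
    awk '$NF == "Pending" {print $1}' |
    while read -r name; do
      kubectl certificate approve "$name"
    done
}
```

Run `approve_pending_csrs` on the master after the kubelets have started, then confirm with `kubectl get csr` as above. Blanket approval skips the per-request review described in the list above, so it is best kept to lab clusters.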

3.6.5 Check the cluster status

    [root@k8s-1 /root] master# kubectl get nodes,cs
    NAME         STATUS   ROLES   AGE     VERSION
    node/k8s-2   Ready    node    3d22h   v1.15.1
    node/k8s-3   Ready    node    3d22h   v1.15.1

    NAME                                 STATUS    MESSAGE             ERROR
    componentstatus/scheduler            Healthy   ok
    componentstatus/controller-manager   Healthy   ok
    componentstatus/etcd-0               Healthy   {"health":"true"}
    componentstatus/etcd-2               Healthy   {"health":"true"}
    componentstatus/etcd-1               Healthy   {"health":"true"}

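3.7 Deploy the kube-proxy component on the worker nodes

kube-proxy runs on every worker node. It watches the apiserver for changes to Services and Endpoints and creates routing rules to load-balance traffic to Services.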

3.7.1 Create the kube-proxy configuration file

    vim /cloud/k8s/kubernetes/cfg/kube-proxy
    KUBE_PROXY_OPTS="--logtostderr=true \
    --v=4 \
    --hostname-override=k8s-2 \
    --cluster-cidr=10.0.0.0/24 \
    --kubeconfig=/cloud/k8s/kubernetes/cfg/kube-proxy.kubeconfig"

3.7.2 Create the kube-proxy systemd unit file

    vim /usr/lib/systemd/system/kube-proxy.service
    [Unit]
    Description=Kubernetes Proxy
    After=network.target
    [Service]
    EnvironmentFile=/cloud/k8s/kubernetes/cfg/kube-proxy
    ExecStart=/cloud/k8s/kubernetes/bin/kube-proxy $KUBE_PROXY_OPTS
    Restart=on-failure
    [Install]
    WantedBy=multi-user.target

3.7.3 Start the kube-proxy service

    systemctl daemon-reload
    systemctl enable kube-proxy
    systemctl restart kube-proxy

3.7.4 Check the service status

    [root@k8s-2 /root] node# systemctl status kube-proxy
    ● kube-proxy.service - Kubernetes Proxy
       Loaded: loaded (/usr/lib/systemd/system/kube-proxy.service; enabled; vendor preset: disabled)
       Active: active (running) since Tue 2019-07-30 22:30:04 CST; 3 days ago
     Main PID: 5764 (kube-proxy)
        Tasks: 0
       Memory: 11.8M
       CGroup: /system.slice/kube-proxy.service
               ‣ 5764 /cloud/k8s/kubernetes/bin/kube-proxy --logtostderr=true --v...
    Aug 03 20:51:33 k8s-2 kube-proxy[5764]: I0803 20:51:33.736946 5764 iptab...]
    Aug 03 20:51:33 k8s-2 kube-proxy[5764]: I0803 20:51:33.740738 5764 healt..."
    Aug 03 20:51:33 k8s-2 kube-proxy[5764]: I0803 20:51:33.740762 5764 proxi...s
    Aug 03 20:51:33 k8s-2 kube-proxy[5764]: I0803 20:51:33.740780 5764 bound...s
    Aug 03 20:51:34 k8s-2 kube-proxy[5764]: I0803 20:51:34.018700 5764 confi...e
    Aug 03 20:51:34 k8s-2 kube-proxy[5764]: I0803 20:51:34.028396 5764 confi...e
    Aug 03 20:51:36 k8s-2 kube-proxy[5764]: I0803 20:51:36.035812 5764 confi...e
    Aug 03 20:51:36 k8s-2 kube-proxy[5764]: I0803 20:51:36.046563 5764 confi...e
    Aug 03 20:51:38 k8s-2 kube-proxy[5764]: I0803 20:51:38.045395 5764 confi...e
    Aug 03 20:51:38 k8s-2 kube-proxy[5764]: I0803 20:51:38.061197 5764 confi...e
    Hint: Some lines were ellipsized, use -l to show in full.

This completes a simple deployment of Kubernetes 1.15.