- 1. Introduction to Cloud Computing

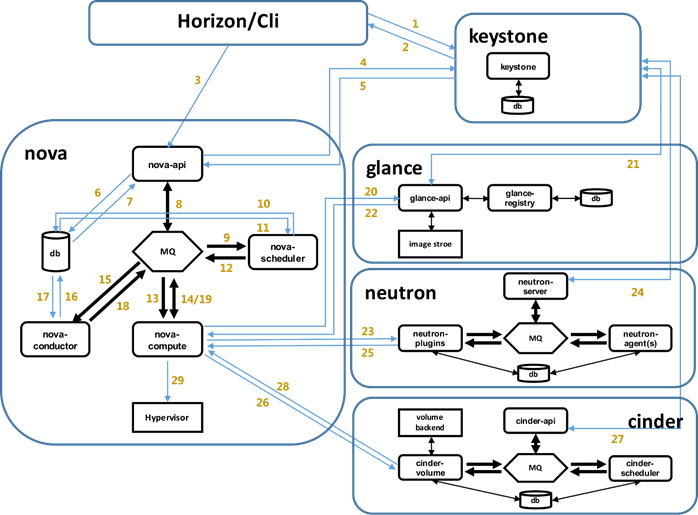

- 2. Introduction to OpenStack

- 3. OpenStack Basic Environment Services

- 4. Configuring the Keystone Identity Service

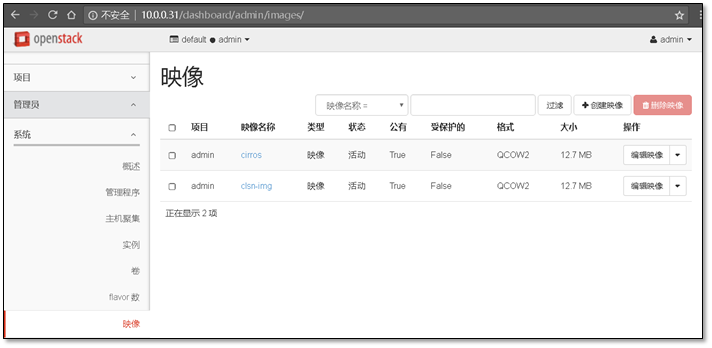

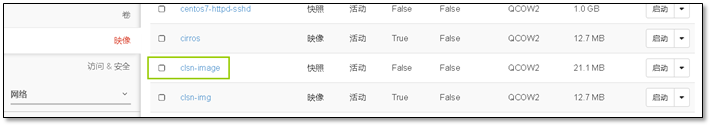

- 5. Deploying the Glance Image Service

- 6. Deploying the Compute Service (nova)

- Check the service status

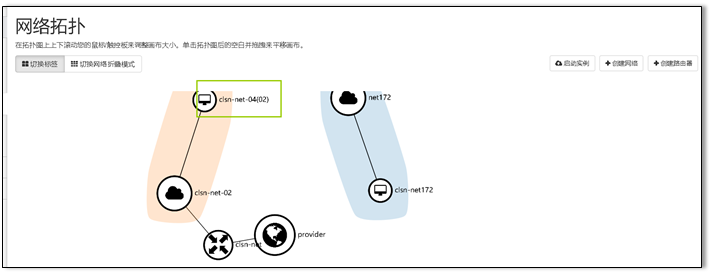

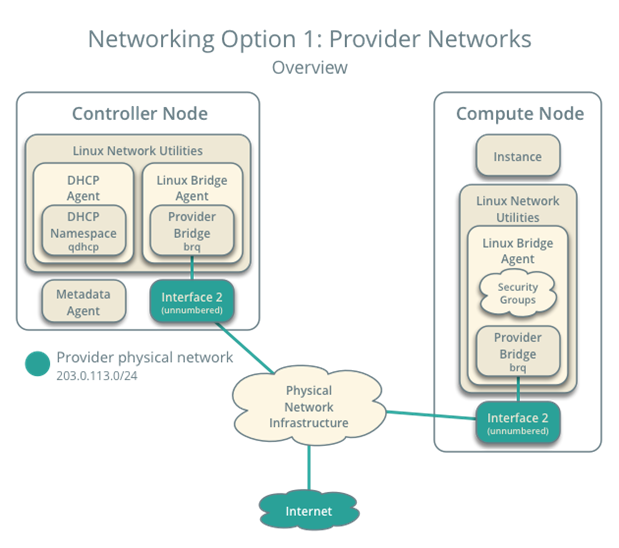

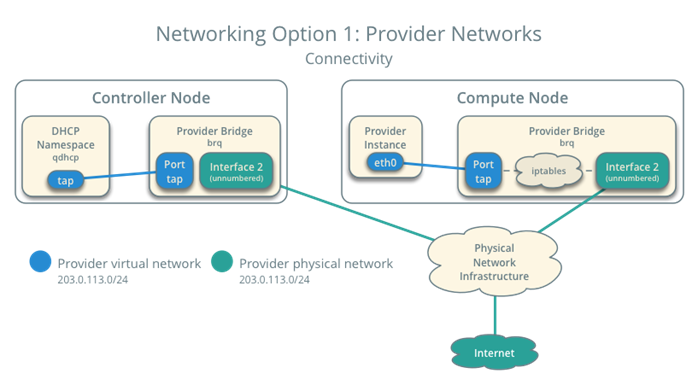

- 7. The Networking Service (neutron)

- Here I only verify networking; if the network works, the service is working.

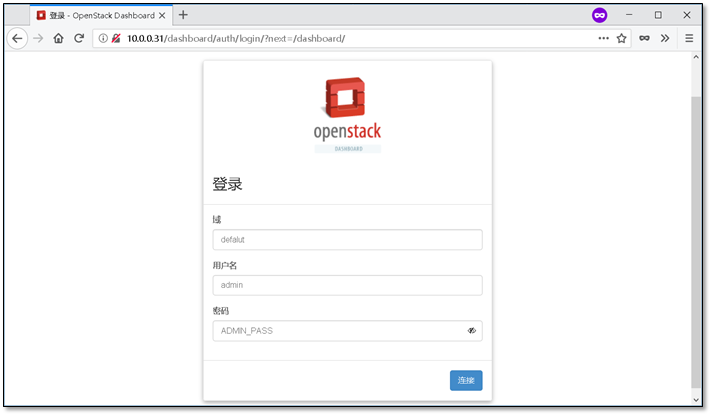

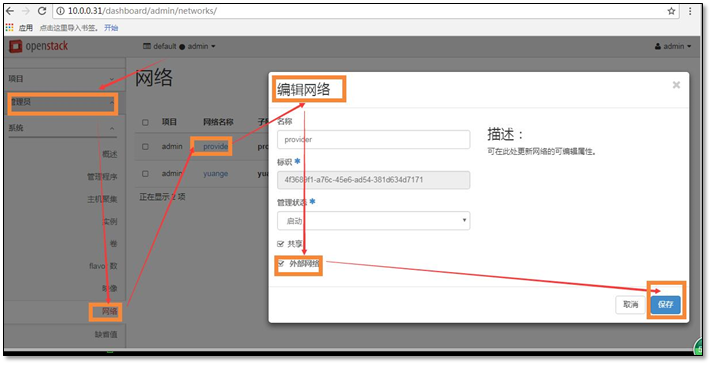

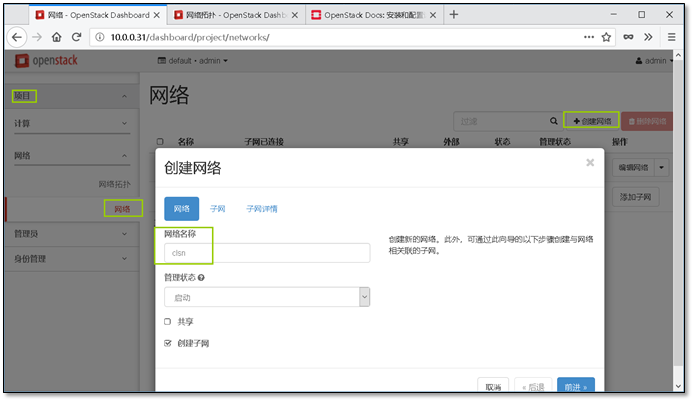

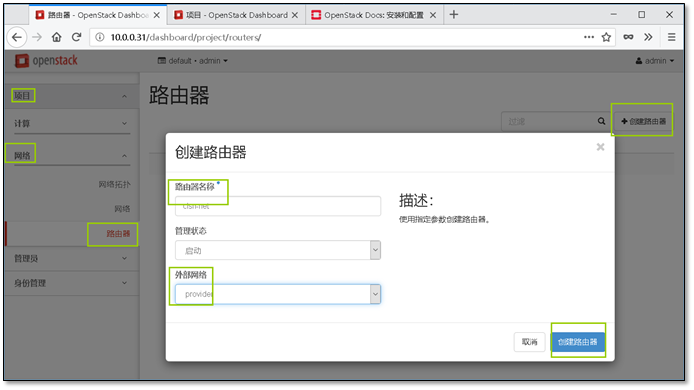

- 8. Installing the Dashboard (horizon web interface)

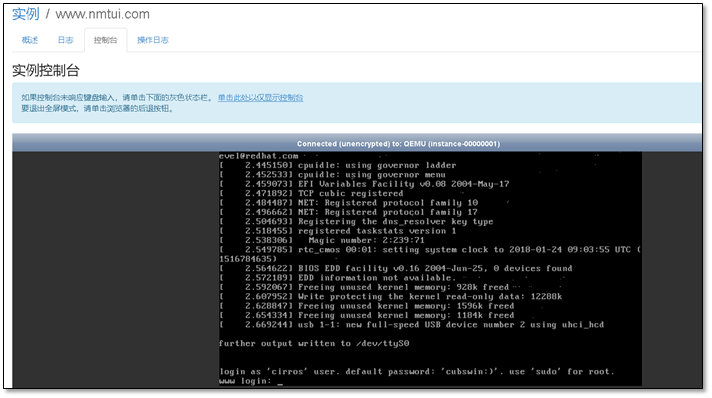

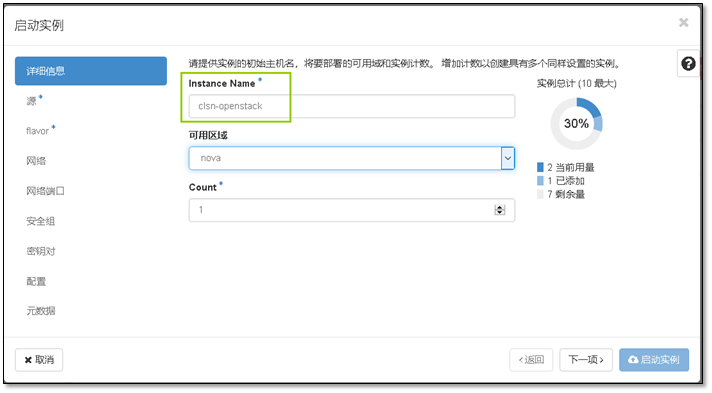

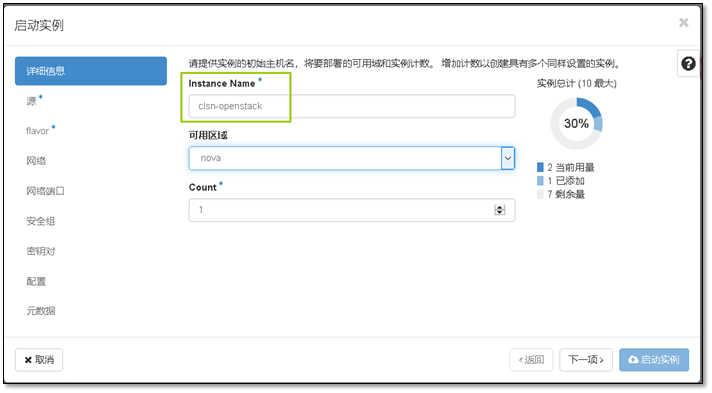

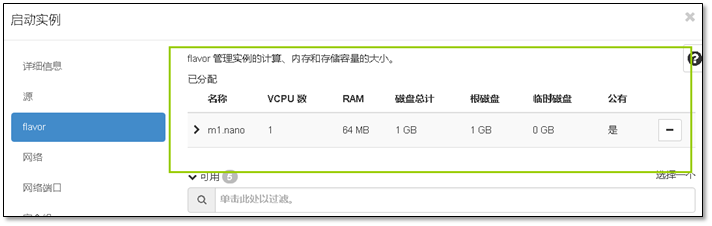

- 9. 启动第一台实例

- 使用提供者物理网络的子网CIDR标记替换

PROVIDER_NETWORK_CIDR。 - 将

START_IP_ADDRESS和END_IP_ADDRESS使用你想分配给实例的子网网段的第一个和最后一个IP地址。这个范围不能包括任何已经使用的IP地址。 - 将 DNS_RESOLVER 替换为DNS解析服务的IP地址。在大多数情况下,你可以从主机

/etc/resolv.conf文件选择一个使用。 - 将

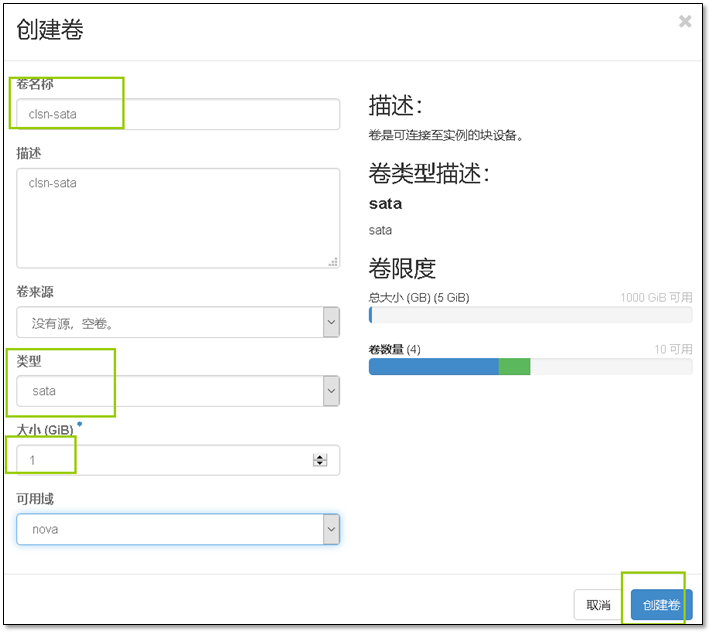

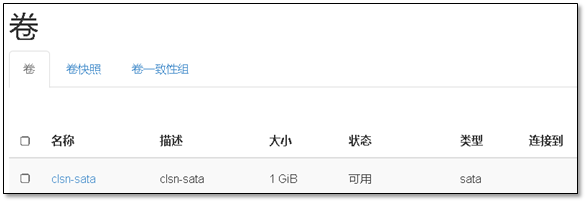

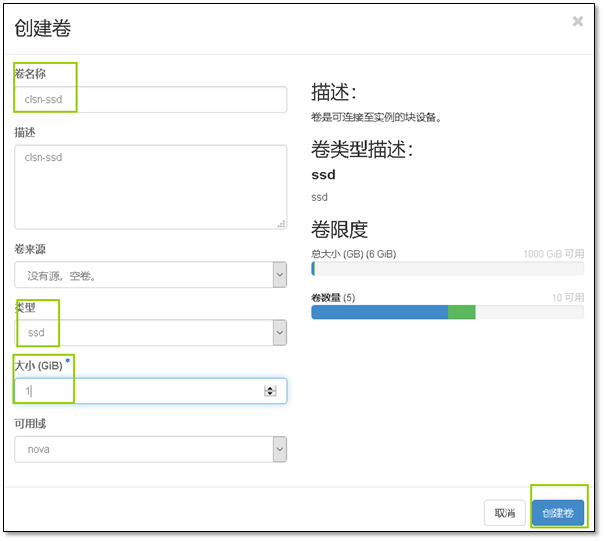

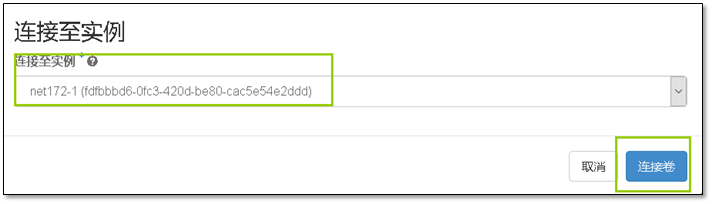

PUBLIC_NETWORK_GATEWAY替换为公共网络的网关,一般的网关IP地址以 ”.1” 结尾。 - 10 cinder块存储服务

- v2版本注册

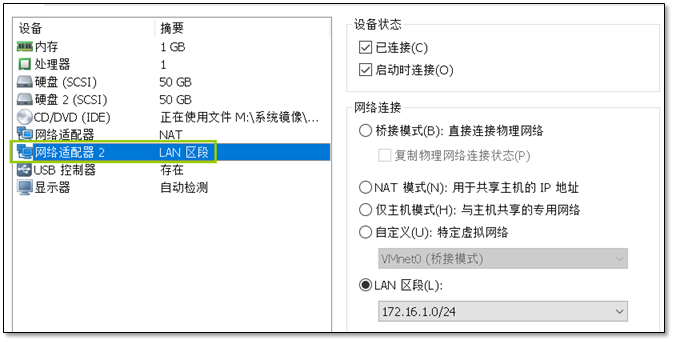

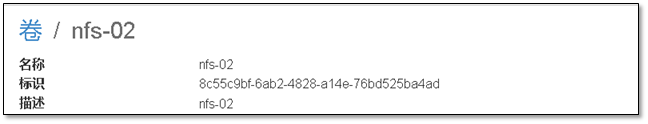

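- 11. Adding a New Compute Node

- Start the nova service and enable it at boot

- Start the Linux bridge agent and enable it at boot

- Check the status

- 12. Migrating the Glance Image Service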

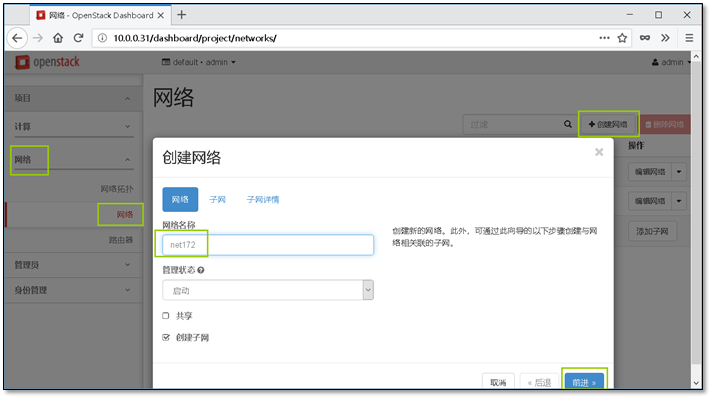

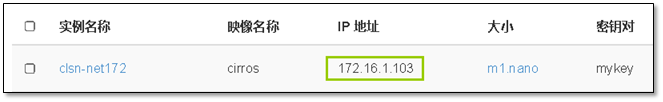

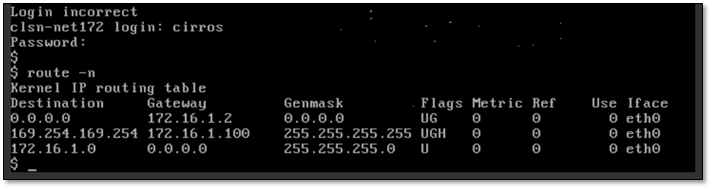

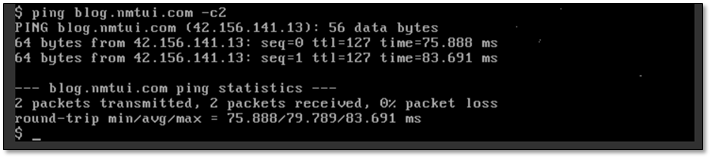

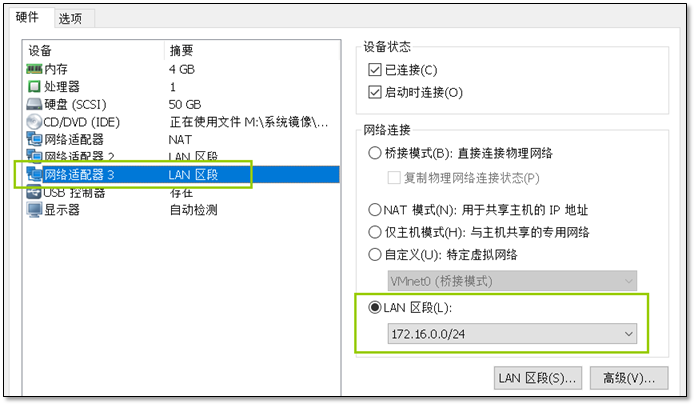

- 13. Adding a New Network Segment with Internet Access

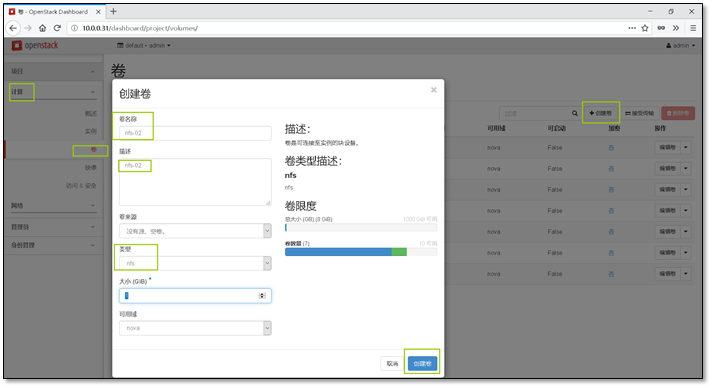

- 14. Connecting the Cinder Service to NFS

- 15. VXLAN Networks in OpenStack

- 16. Using the OpenStack API

- 17. Appendix

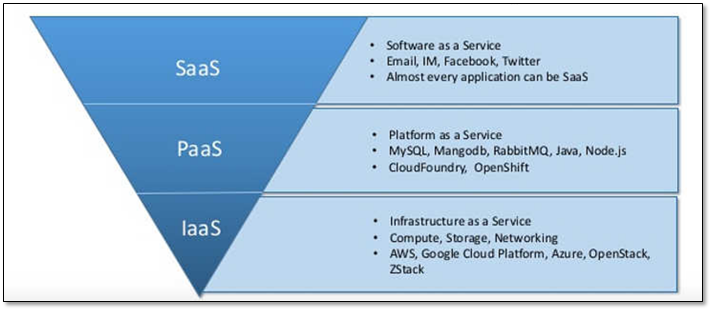

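1. Introduction to Cloud Computing

Cloud computing is an Internet-based model of computing in which shared hardware and software resources and information are provided on demand to computers and other devices.

Cloud computing is another major shift, following the 1980s move from mainframes to the client-server model. Users no longer need to know the details of the infrastructure inside the "cloud", do not need the corresponding expertise, and do not control it directly. Cloud computing describes a new Internet-based model for adding, using, and delivering IT services, usually involving dynamically scalable and often virtualized resources provided over the Internet.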

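1.1 Characteristics of Cloud Computing

Cloud services on the Internet resemble clouds and the water cycle in nature, which makes "cloud" a fitting metaphor. The U.S. National Institute of Standards and Technology (NIST) defines them as follows.

A cloud service should have the following characteristics:

- On-demand self-service.

- Access at any time, from anywhere, using any networked device.

- A resource pool shared by many users.

- Rapid elasticity (resources can be redeployed quickly and flexibly).

- Monitored and measured service.

The following characteristics are also commonly cited:

- Resources or services are provisioned quickly on top of virtualization.

- Less processing burden on user devices.

- Less dependence on users' IT expertise.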

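1.2 Cloud Computing Service Models

The definition specifies three service models:

Figure: service model details

Software as a Service (SaaS):

i.e. Software-as-a-service;

Consumers use applications but do not control the operating system, hardware, or underlying network infrastructure. The model is built on the idea of software as a service: the provider offers the software on a rental basis rather than for purchase, most commonly by giving the customer a set of account credentials.

Examples: Microsoft CRM and Salesforce.com.

Platform as a Service (PaaS):

i.e. Platform-as-a-service;

Consumers use a hosted environment to run their applications. They control the environment in which the applications run (and have partial control of the host), but not the operating system, hardware, or underlying network infrastructure. The platform is usually an application infrastructure.

Example: Google App Engine.

Infrastructure as a Service (IaaS):

i.e. Infrastructure-as-a-service;

Consumers use "basic computing resources" such as processing power, storage, network components, or middleware. They control the operating system, storage, deployed applications, and network components (such as firewalls and load balancers), but not the underlying cloud infrastructure.

Examples: Amazon AWS, Rackspace.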

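For more details on these three service models, see: https://www.zhihu.com/question/21641778

1.3 Types of Cloud Computing

Figure: examples of cloud types

Public Cloud

In short, public cloud services are offered to customers over the network by a third-party provider. "Public" does not necessarily mean "free", although it can mean free or fairly inexpensive. Nor does it mean that user data is visible to anyone: public cloud providers usually enforce access control for their users. As a solution, the public cloud is both elastic and cost-effective.

Private Cloud

A private cloud has many of the advantages of a public cloud environment, such as elasticity and suitability for delivering services. The difference is that in a private cloud the data and programs are managed within the organization and, unlike public cloud services, are not affected by network bandwidth, security concerns, or regulatory restrictions. In addition, a private cloud gives providers and users more control over the cloud infrastructure and improves security and resilience, because users and the network are specially restricted.

Hybrid Cloud

A hybrid cloud combines public and private clouds. In this model, users typically outsource non-business-critical information and process it in the public cloud, while keeping control of business-critical services and data.

1.4 Why Choose Cloud Computing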

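1. Effectively eliminates single points of hardware failure

2. Hardware resources can be added or removed on demand

3. BGP multi-line access solves the interconnection problem between China's northern and southern carrier networks

4. Bandwidth can be increased or decreased on demand

5. More attractive payment models

For details, see "The Road to Cloud Computing: Why Choose Cloud Computing": https://www.cnblogs.com/cmt/archive/2013/02/27/why-into-cloud.html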

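2. Introduction to OpenStack

OpenStack is cloud computing software developed jointly by NASA and Rackspace. It is licensed under the Apache License 2.0 and is a free and open-source software project.

OpenStack is Infrastructure-as-a-Service (IaaS) software that lets anyone build and offer cloud computing services.

OpenStack is also used to build "private clouds" behind the firewall, so that the departments of an institution or enterprise can share resources.

2.1 Market Trends

Rackspace's OpenStack-based private cloud business generates US$700 million in annual revenue, growing by more than 20% per year.

Although OpenStack is still immature in some respects, it is backed by a large number of organizations worldwide and has many contributing developers, so it is evolving quickly. Many public, private, and hybrid clouds have been built on OpenStack internationally, for example Rackspace Cloud, HP Cloud, MercadoLibre's IT infrastructure cloud, AT&T's Cloud Architect, and Dell's OpenStack solution. In China, interest in OpenStack is also growing: Teamsun, AutoNavi, JD.com, Alibaba, Baidu, ZTE, and Huawei have all shown strong interest and take part in the project.

At the time of writing, OpenStack had released 10 versions since its founding in 2010. The Icehouse release involved 120 organizations and 1,202 code contributors, and the latest release was Juno. OpenStack is likely to take a leading position in IaaS resource management and become the standard "cloud operating system" for managing public, private, and hybrid clouds.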

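2.2 Major Users

NASA (U.S. National Aeronautics and Space Administration)

The DAIR (Digital Accelerator for Innovation and Research) project of CANARIE, a semi-governmental Canadian network organization, which provides cloud computing environments for research and development to universities and small and medium-sized businesses.

HP Cloud (running Ubuntu Linux)

MercadoLibre's IT infrastructure cloud, which currently manages more than 6,000 virtual machines with OpenStack.

AT&T's "Cloud Architect", which will provide external cloud services in Dallas, San Diego, and New Jersey.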

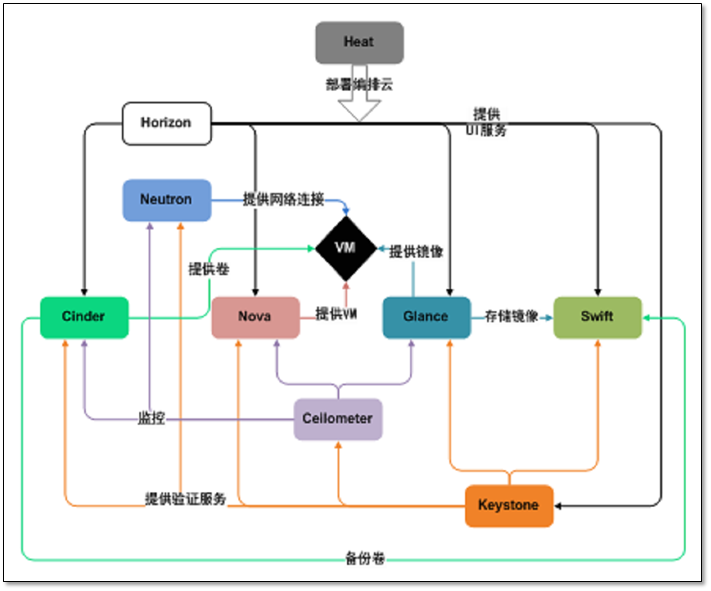

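2.3 OpenStack Projects

Figure: relationships between the projects

Details of each component:

| Service type | Project name | Description |
|---|---|---|
| Dashboard | Horizon (web interface) | Provides a web-based self-service portal for interacting with the underlying OpenStack services, for example launching an instance, assigning IP addresses, and configuring access controls. |
| Compute | Nova (compute) | Manages the lifecycle of compute instances in an OpenStack environment, including spawning, scheduling, and decommissioning virtual machines on demand. |
| Networking | Neutron (networking) | Provides network connectivity as a service for other OpenStack services, such as OpenStack Compute. Offers an API for users to define networks and their use. Its plug-in based architecture supports many networking vendors and technologies. |
| Storage | | |
| Object Storage | Swift (object storage) | Stores and retrieves arbitrary unstructured data objects through a RESTful, HTTP-based API. It is highly fault tolerant thanks to data replication and a scale-out architecture. It is not implemented like a file server with mountable directories; instead, it writes objects and files to multiple drives so that the data is replicated across servers in the cluster. |
| Block Storage | Cinder (block storage) | Provides persistent block storage to running instances. Its pluggable driver architecture facilitates the creation and management of block storage devices. |
| Shared services | | |
| Identity service | Keystone (identity) | Provides authentication and authorization for the other OpenStack services, and a catalog of endpoints for all OpenStack services. |
| Image service | Glance (images) | Stores and retrieves virtual machine disk images; OpenStack Compute uses it during instance deployment. |
| Telemetry | Ceilometer (metering) | Monitors and meters the OpenStack cloud for billing, benchmarking, scalability, and statistical purposes. |
| Higher-level services | | |
| Orchestration | Heat | Orchestrates composite cloud applications through an OpenStack-native REST API and a CloudFormation-compatible Query API, using either HOT (Heat Orchestration Template) format templates or AWS CloudFormation format templates. |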

2.4 System Environment

All hosts used in this document are set up according to the officially recommended environment: https://docs.openstack.org/mitaka/zh_CN/install-guide-rdo/environment.html

The controller node:

```
[root@controller ~]# cat /etc/redhat-release
CentOS Linux release 7.2.1511 (Core)
[root@controller ~]# uname -r
3.10.0-327.el7.x86_64
[root@controller ~]# sestatus
SELinux status:                 disabled
[root@controller ~]# systemctl status firewalld.service
● firewalld.service - firewalld - dynamic firewall daemon
   Loaded: loaded (/usr/lib/systemd/system/firewalld.service; disabled; vendor preset: enabled)
   Active: inactive (dead)
[root@controller ~]# hostname -I
10.0.0.11 172.16.1.11
[root@controller ~]# tail -3 /etc/hosts
10.0.0.11 controller
10.0.0.31 compute1
10.0.0.32 compute2
```

The compute1 and compute2 nodes are configured the same way as controller.
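Because every node reaches the others by name through /etc/hosts, a quick consistency check on each node can save debugging later. The sketch below is a hypothetical helper (`check_hosts` is not part of CentOS or OpenStack); it assumes the three name/address pairs from the listing above and takes the hosts file path as an argument, so it can also be tried on a copy.

```bash
#!/usr/bin/env bash
# Hypothetical helper: verify that a hosts file maps each node name to the
# expected management address (pairs taken from the listing above).
check_hosts() {
  local file="${1:-/etc/hosts}" pair name ip rc=0
  for pair in controller=10.0.0.11 compute1=10.0.0.31 compute2=10.0.0.32; do
    name="${pair%%=*}"
    ip="${pair#*=}"
    # Succeed only if a line starts with $ip and lists $name among its names.
    if ! awk -v ip="$ip" -v n="$name" '
          $1 == ip { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
          END { exit !found }' "$file"; then
      echo "missing or wrong: $ip $name"
      rc=1
    fi
  done
  return "$rc"
}

# Usage on each node:
#   check_hosts && echo "hosts file OK"
```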

System installation reference: http://www.cnblogs.com/clsn/p/8338099.html#_label1

System tuning notes: http://www.cnblogs.com/clsn/p/8338099.html#_label4

Note: the network interface names need to be changed.

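3. OpenStack Basic Environment Services

Note: all user names and passwords used in this document follow the document below and are kept consistent with it.

https://docs.openstack.org/mitaka/zh_CN/install-guide-rdo/environment-security.html

Installation workflow for the OpenStack services (except keystone):

1) Create the database and grant privileges;

2) Create the service user in keystone and assign it a role;

3) Create the service entity in keystone and register the API endpoints;

4) Install the packages;

5) Edit the configuration files (database connection details and so on);

6) Synchronize (populate) the database;

7) Start the services.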
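Step 1 is the same SQL for every service; only the names change. A minimal sketch, assuming the `<SERVICE>_DBPASS` naming used in this document: the hypothetical `service_db_sql` function prints the CREATE DATABASE and GRANT statements so they can be reviewed, or piped into the mysql client on the controller.

```bash
#!/usr/bin/env bash
# Hypothetical helper (not part of OpenStack): print the SQL for step 1,
# "create the database and grant privileges", for one service.
# Usage: service_db_sql <service_user> <db_password> [<database>]
service_db_sql() {
  local svc="$1" pass="$2" db="${3:-$1}" host
  printf 'CREATE DATABASE %s;\n' "$db"
  # One grant for local connections and one for connections from any host.
  for host in localhost '%'; do
    printf "GRANT ALL PRIVILEGES ON %s.* TO '%s'@'%s' IDENTIFIED BY '%s';\n" \
      "$db" "$svc" "$host" "$pass"
  done
}

# Example: print the statements for glance. On the controller they could be
# applied with:  service_db_sql glance GLANCE_DBPASS | mysql -u root
service_db_sql glance GLANCE_DBPASS
```

For nova, which uses two databases, the function would be called twice: `service_db_sql nova NOVA_DBPASS nova_api` and `service_db_sql nova NOVA_DBPASS`.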

3.1 Deployment Order of the OpenStack Services

[1] Prepare the base environment: https://docs.openstack.org/mitaka/zh_CN/install-guide-rdo/environment.html

[2] Deploy the Keystone identity service (tokens): https://docs.openstack.org/mitaka/zh_CN/install-guide-rdo/keystone.html

[3] Deploy the Glance image service: https://docs.openstack.org/mitaka/zh_CN/install-guide-rdo/glance.html

[4] Deploy the Nova compute service (KVM): https://docs.openstack.org/mitaka/zh_CN/install-guide-rdo/nova.html

[5] Deploy the Neutron networking service: https://docs.openstack.org/mitaka/zh_CN/install-guide-rdo/neutron.html

[6] Deploy Horizon, the web interface: https://docs.openstack.org/mitaka/zh_CN/install-guide-rdo/horizon.html

[7] Deploy Cinder block storage (disks): https://docs.openstack.org/mitaka/zh_CN/install-guide-rdo/cinder.html

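3.2 Configuring a Local Yum Repository

First, mount the installation image on /mnt and have it mounted again at every boot:

```bash
mount /dev/cdrom /mnt
# Append (>>) instead of overwriting (>): rc.local must keep its #!/bin/bash first line
echo 'mount /dev/cdrom /mnt' >> /etc/rc.d/rc.local
chmod +x /etc/rc.d/rc.local
```

Create the repo file (the openstack repository points at /opt/repo, a local directory holding the Mitaka packages):

```bash
cat >/etc/yum.repos.d/local.repo<<-'EOF'
[local]
name=local
baseurl=file:///mnt
gpgcheck=0

[openstack]
name=openstack-mitaka
baseurl=file:///opt/repo
gpgcheck=0
EOF
```

Build the yum cache: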

[root@controller repo]# yum makecache

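3.3 Installing the NTP Time Service

Official documentation: https://docs.openstack.org/mitaka/zh_CN/install-guide-rdo/environment-ntp.html

Controller node (provides the time service that the other machines synchronize with)

Install the package:

yum install chrony -y

Configure the controller node by editing line 22 of the file:

```
[root@controller ~]# vim /etc/chrony.conf
...
# Allow NTP client access from local network.
allow 10/8
```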
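`allow 10/8` is CIDR shorthand for 10.0.0.0/8: any client whose address starts with 10. may query this server, which covers the 10.0.0.x management network but not 172.16.1.x. The arithmetic behind such a rule can be illustrated with a small pure-bash sketch; `ip_to_int` and `in_cidr` are hypothetical helpers written for this illustration, not part of chrony.

```bash
#!/usr/bin/env bash
# Hypothetical helpers (not part of chrony) showing how a CIDR rule such as
# "allow 10/8" (= 10.0.0.0/8) decides whether a client address is covered.

# Convert dotted-quad IPv4 notation to a 32-bit integer.
ip_to_int() {
  local IFS=. a b c d
  read -r a b c d <<<"$1"
  echo $(( (a << 24) | (b << 16) | (c << 8) | d ))
}

# Usage: in_cidr <ip> <network> <prefix_length>
# Succeeds when ip and network agree on the first prefix_length bits.
in_cidr() {
  local ip net mask
  ip=$(ip_to_int "$1")
  net=$(ip_to_int "$2")
  mask=$(( (0xFFFFFFFF << (32 - $3)) & 0xFFFFFFFF ))
  (( (ip & mask) == (net & mask) ))
}

in_cidr 10.0.0.31 10.0.0.0 8 && echo "10.0.0.31 is inside 10/8 and may sync from the controller"
in_cidr 172.16.1.31 10.0.0.0 8 || echo "172.16.1.31 is outside 10/8"
```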

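Start the service and enable it at boot:

```bash
systemctl enable chronyd.service
systemctl start chronyd.service
```

Compute nodes (configure the chrony client)

Install the package:

yum install chrony -y

Edit the configuration file: comment out the unneeded upstream servers and add the controller as the server on line 3.

Make the change with sed:

sed -ri.bak '/server/s/^/#/g;2a server 10.0.0.11 iburst' /etc/chrony.conf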
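Because this sed expression edits the file in place, it can be worth previewing it on a scratch copy first. The sample content below is a shortened stand-in for the stock CentOS 7 file (an assumption; the real file has more lines). Note that the first comment line also contains the word "server", so it simply gains a second `#`, which is harmless.

```bash
#!/usr/bin/env bash
# Preview the chrony sed edit on a scratch copy before touching /etc/chrony.conf.
# The sample below is a shortened stand-in for the stock CentOS 7 file (assumption).
tmp="$(mktemp -d)"
cat > "$tmp/chrony.conf" <<'EOF'
# Use public servers from the pool.ntp.org project.
# Please consider joining the pool (http://www.pool.ntp.org/join.html).
server 0.centos.pool.ntp.org iburst
server 1.centos.pool.ntp.org iburst
EOF

# Same expression as above: prefix "#" to every line containing "server",
# then append the controller as the only server after line 2.
# -i.bak edits in place and keeps the original as chrony.conf.bak.
sed -ri.bak '/server/s/^/#/g;2a server 10.0.0.11 iburst' "$tmp/chrony.conf"
cat "$tmp/chrony.conf"
```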

The relevant part of the resulting file:

```
[root@compute1 ~]# vim /etc/chrony.conf
# Use public servers from the pool.ntp.org project.
# Please consider joining the pool (http://www.pool.ntp.org/join.html).
server 10.0.0.11 iburst
```

Start the service and enable it at boot:

```bash
systemctl enable chronyd.service
systemctl start chronyd.service
```

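3.4 OpenStack Packages (also needed when adding a new compute node)

Official documentation: https://docs.openstack.org/mitaka/zh_CN/install-guide-rdo/environment-packages.html

Install the OpenStack client:

yum -y install python-openstackclient

RHEL and CentOS enable SELinux by default.

```bash
# Install openstack-selinux so that security policies for OpenStack services are managed automatically
yum -y install openstack-selinux
```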

3.5 Installing the SQL Database (on the controller node)

Official documentation: https://docs.openstack.org/mitaka/zh_CN/install-guide-rdo/environment-sql-database.html

Install the MariaDB packages:

[root@controller ~]# yum -y install mariadb mariadb-server python2-PyMySQL

Create the configuration file:

```bash
cat > /etc/my.cnf.d/openstack.cnf <<-'EOF'
[mysqld]
bind-address = 10.0.0.11
default-storage-engine = innodb
innodb_file_per_table
max_connections = 4096
collation-server = utf8_general_ci
character-set-server = utf8
EOF
```

Enable and start MariaDB:

```bash
systemctl enable mariadb.service
systemctl start mariadb.service
```

Secure the MariaDB installation

To secure the database service, run the mysql_secure_installation script. In particular, choose a suitable password for the database root user. (In the session below the root password was left unset, which is why later steps can run mysql without a password.)

```
[root@controller ~]# mysql_secure_installation
...
Enter current password for root (enter for none):
OK, successfully used password, moving on...
Set root password? [Y/n] n
 ... skipping.
Remove anonymous users? [Y/n] Y
 ... Success!
Disallow root login remotely? [Y/n] Y
 ... Success!
Remove test database and access to it? [Y/n] Y
 - Dropping test database...
 ... Success!
 - Removing privileges on test database...
 ... Success!
Reload privilege tables now? [Y/n] Y
 ... Success!
Thanks for using MariaDB!
```

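3.6 NoSQL Database

Official documentation: https://docs.openstack.org/mitaka/zh_CN/install-guide-rdo/environment-nosql-database.html

The Telemetry service uses a NoSQL database to store information; this database typically runs on the controller node.

The guide uses MongoDB.

It is only used by ceilometer for metering. Because this deployment is a private cloud that does not need metering or billing, it is not installed here.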

3.7 Deploying the Message Queue

Official documentation: https://docs.openstack.org/mitaka/zh_CN/install-guide-rdo/environment-messaging.html

Install the message queue software:

[root@controller ~]# yum -y install rabbitmq-server

Start the message queue service and configure it to start at boot:

```bash
systemctl enable rabbitmq-server.service
systemctl start rabbitmq-server.service
```

Add the openstack user:

```
[root@controller ~]# rabbitmqctl add_user openstack RABBIT_PASS
Creating user "openstack" ...
```

Replace RABBIT_PASS with a suitable password.

Grant the openstack user configure, write, and read permissions (the three ".*" patterns, in that order):

```
[root@controller ~]# rabbitmqctl set_permissions openstack ".*" ".*" ".*"
Setting permissions for user "openstack" in vhost "/" ...
```

3.8 Deploying Memcached

Official documentation: https://docs.openstack.org/mitaka/zh_CN/install-guide-rdo/environment-memcached.html

Install the memcached packages:

[root@controller ~]# yum -y install memcached python-memcached

Edit the memcached configuration file. The line to change is OPTIONS: set it to the address memcached should listen on, here the memcached host (controller) address.

```
[root@controller ~]# cat /etc/sysconfig/memcached
PORT="11211"
USER="memcached"
MAXCONN="1024"
CACHESIZE="64"
OPTIONS="-l 10.0.0.11"
```
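The same change can be made non-interactively with sed. A sketch, tried on a scratch copy here; the sample mirrors the file above with an empty `OPTIONS=""` value, which is assumed (not confirmed by this document) to be the package default.

```bash
#!/usr/bin/env bash
# Sketch: apply the OPTIONS change with sed instead of an editor.
# Tried on a scratch copy; the sample mirrors the file above with an empty
# OPTIONS value (assumed package default).
tmp="$(mktemp)"
printf '%s\n' 'PORT="11211"' 'USER="memcached"' 'MAXCONN="1024"' \
  'CACHESIZE="64"' 'OPTIONS=""' > "$tmp"

# Replace the whole OPTIONS line so memcached listens on the controller's address.
sed -i 's/^OPTIONS=.*/OPTIONS="-l 10.0.0.11"/' "$tmp"
grep '^OPTIONS=' "$tmp"

# On the controller itself the same command would target the real file:
#   sed -i 's/^OPTIONS=.*/OPTIONS="-l 10.0.0.11"/' /etc/sysconfig/memcached
```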

Start the Memcached service and configure it to start at boot:

```bash
systemctl enable memcached.service
systemctl start memcached.service
```

3.9 Verifying the Services Deployed So Far

Check the listening ports:

```
[root@controller ~]# netstat -lntup
Active Internet connections (only servers)
Proto Recv-Q Send-Q Local Address           Foreign Address         State       PID/Program name
tcp        0      0 0.0.0.0:25672           0.0.0.0:*               LISTEN      17164/beam
tcp        0      0 10.0.0.11:3306          0.0.0.0:*               LISTEN      16985/mysqld
tcp        0      0 10.0.0.11:11211         0.0.0.0:*               LISTEN      17962/memcached
tcp        0      0 0.0.0.0:4369            0.0.0.0:*               LISTEN      1/systemd
tcp        0      0 0.0.0.0:22              0.0.0.0:*               LISTEN      1402/sshd
tcp6       0      0 :::5672                 :::*                    LISTEN      17164/beam
tcp6       0      0 :::22                   :::*                    LISTEN      1402/sshd
udp        0      0 0.0.0.0:123             0.0.0.0:*                           1681/chronyd
udp        0      0 127.0.0.1:323           0.0.0.0:*                           1681/chronyd
udp        0      0 10.0.0.11:11211         0.0.0.0:*                           17962/memcached
udp6       0      0 ::1:323                 :::*                                1681/chronyd
```

Port reference:

- chronyd: 123 (NTP service offered to the other machines), 323 (local command port used by chronyc)
- MariaDB: 3306 (database connections)
- RabbitMQ: 4369 and 25672 (used for clustering in high-availability setups), 5672 (the port the OpenStack services connect to)
- memcached: 11211 (stores tokens)
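The same check can be scripted. A minimal sketch, assuming `netstat -lntup` or `ss -lntup` output on standard input (both print local addresses as address:port); the hypothetical `check_ports` function lists every expected port that does not appear and returns non-zero in that case. The port list mirrors the reference above and would need adjusting for other setups.

```bash
#!/usr/bin/env bash
# Hypothetical helper: read "netstat -lntup" (or "ss -lntup") output on stdin
# and report every expected port that nothing is listening on.
# The list mirrors the port reference above; adjust it for other setups.
check_ports() {
  local listing port rc=0
  listing="$(cat)"
  for port in 123 323 3306 4369 5672 11211 25672; do
    # Require ":<port>" followed by whitespace, so 5672 is not confused with 25672.
    if ! grep -Eq ":${port}[[:space:]]" <<<"$listing"; then
      echo "missing: $port"
      rc=1
    fi
  done
  return "$rc"
}

# Usage on the controller:
#   netstat -lntup | check_ports && echo "all expected ports are listening"
```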

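4. Configuring the Keystone Identity Service

Official documentation: https://docs.openstack.org/mitaka/zh_CN/install-guide-rdo/keystone-install.html

Identity management: provides a single point of integration for authentication, authorization, and the service catalog.

Service catalog: comparable to a call center (the front desk).

Install and configure the OpenStack Identity service, code-named keystone, on the controller node. For performance reasons, this configuration deploys Fernet tokens and uses the Apache HTTP server to handle requests.

4.1 Creating the Database

Use the database client to connect to the database server as the root user:

[root@controller ~]# mysql -u root -p

Create the keystone database:

MariaDB [(none)]> CREATE DATABASE keystone;

Grant proper access to the keystone database:

```sql
GRANT ALL PRIVILEGES ON keystone.* TO 'keystone'@'localhost' IDENTIFIED BY 'KEYSTONE_DBPASS';
GRANT ALL PRIVILEGES ON keystone.* TO 'keystone'@'%' IDENTIFIED BY 'KEYSTONE_DBPASS';
```

Exit the database client when done.

MariaDB [(none)]> exit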

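4.2 Installing keystone

yum -y install openstack-keystone httpd mod_wsgi

The packages are the keystone service, the Apache HTTP server, and mod_wsgi, the middleware that connects Python applications to the web server.

How to understand CGI and WSGI: https://www.zhihu.com/question/19998865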

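4.3 Editing the Configuration File

Back up the configuration file:

[root@controller ~]# cp /etc/keystone/keystone.conf{,.bak}

Strip comments and blank lines to get a compact configuration file:

[root@controller ~]# egrep -v '^#|^$' /etc/keystone/keystone.conf.bak >/etc/keystone/keystone.conf

Edit the configuration file manually:

In the [DEFAULT] section, define the value of the initial administration token:

```ini
[DEFAULT]
admin_token = ADMIN_TOKEN
```

In the [database] section, configure database access:

```ini
[database]
connection = mysql+pymysql://keystone:KEYSTONE_DBPASS@controller/keystone
```

In the [token] section, configure the Fernet token provider:

```ini
[token]
provider = fernet
```

For an explanation of the token types, see: https://www.abcdocker.com/abcdocker/1797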

[Automation] Editing configuration files with a tool (used extensively in this document)

Install openstack-utils, which provides the openstack-config command:

```
[root@controller ~]# yum install openstack-utils.noarch -y
[root@controller ~]# rpm -ql openstack-utils
/usr/bin/openstack-config
```

Automated configuration commands:

```bash
cp /etc/keystone/keystone.conf{,.bak}
grep '^[a-Z\[]' /etc/keystone/keystone.conf.bak > /etc/keystone/keystone.conf
openstack-config --set /etc/keystone/keystone.conf DEFAULT admin_token ADMIN_TOKEN
openstack-config --set /etc/keystone/keystone.conf database connection mysql+pymysql://keystone:KEYSTONE_DBPASS@controller/keystone
openstack-config --set /etc/keystone/keystone.conf token provider fernet
```

4.4 Populating the Identity Service Database (database sync)

[root@controller ~]# su -s /bin/sh -c "keystone-manage db_sync" keystone

Verify that the database was synchronized:

[root@controller ~]# mysql keystone -e 'show tables'

4.5 Initializing the Fernet Keys

[root@controller ~]# keystone-manage fernet_setup --keystone-user keystone --keystone-group keystone

The command creates the fernet-keys directory under /etc/keystone/:

```
[root@controller ~]# ls /etc/keystone/
default_catalog.templates  keystone.conf.bak   policy.json
fernet-keys                keystone-paste.ini  sso_callback_template.html
keystone.conf              logging.conf
```

4.6 Configuring the Apache HTTP Server

Edit /etc/httpd/conf/httpd.conf and set ServerName:

echo 'ServerName controller' >>/etc/httpd/conf/httpd.conf

Create the configuration file /etc/httpd/conf.d/wsgi-keystone.conf.

Note: keystone is a special case here; the other services set up their own configuration files.

```
[root@controller ~]# cat /etc/httpd/conf.d/wsgi-keystone.conf
Listen 5000
Listen 35357

<VirtualHost *:5000>
    WSGIDaemonProcess keystone-public processes=5 threads=1 user=keystone group=keystone display-name=%{GROUP}
    WSGIProcessGroup keystone-public
    WSGIScriptAlias / /usr/bin/keystone-wsgi-public
    WSGIApplicationGroup %{GLOBAL}
    WSGIPassAuthorization On
    ErrorLogFormat "%{cu}t %M"
    ErrorLog /var/log/httpd/keystone-error.log
    CustomLog /var/log/httpd/keystone-access.log combined

    <Directory /usr/bin>
        Require all granted
    </Directory>
</VirtualHost>

<VirtualHost *:35357>
    WSGIDaemonProcess keystone-admin processes=5 threads=1 user=keystone group=keystone display-name=%{GROUP}
    WSGIProcessGroup keystone-admin
    WSGIScriptAlias / /usr/bin/keystone-wsgi-admin
    WSGIApplicationGroup %{GLOBAL}
    WSGIPassAuthorization On
    ErrorLogFormat "%{cu}t %M"
    ErrorLog /var/log/httpd/keystone-error.log
    CustomLog /var/log/httpd/keystone-access.log combined

    <Directory /usr/bin>
        Require all granted
    </Directory>
</VirtualHost>
```

4.7 Starting the Apache HTTP Service and Enabling It at Boot

```bash
systemctl enable httpd.service
systemctl start httpd.service
```

4.8 Creating the Service Entity and API Endpoints

Official documentation: https://docs.openstack.org/mitaka/zh_CN/install-guide-rdo/keystone-services.html

a. Set the environment variables

Set the authentication token:

export OS_TOKEN=ADMIN_TOKEN

Set the endpoint URL:

export OS_URL=http://controller:35357/v3

Set the Identity API version:

export OS_IDENTITY_API_VERSION=3

Check the environment variables:

[root@controller ~]# env |grep OS

All commands together:

```bash
export OS_TOKEN=ADMIN_TOKEN
export OS_URL=http://controller:35357/v3
export OS_IDENTITY_API_VERSION=3
env |grep OS
```
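A missing variable usually surfaces only as an authentication error from the openstack client. A small guard can fail earlier. This is a sketch with a hypothetical function name; by default it checks the three token-bootstrap variables set above, and other names (such as OS_USERNAME and OS_AUTH_URL once admin-openrc is in use) can be passed as arguments.

```bash
#!/usr/bin/env bash
# Hypothetical guard: fail early when variables the openstack client relies on
# are missing. With no arguments it checks the token-bootstrap variables above.
require_os_env() {
  local name rc=0
  if [ "$#" -eq 0 ]; then
    set -- OS_TOKEN OS_URL OS_IDENTITY_API_VERSION
  fi
  for name in "$@"; do
    # ${!name} is bash indirect expansion: the value of the variable named by $name.
    if [ -z "${!name:-}" ]; then
      echo "not set: $name" >&2
      rc=1
    fi
  done
  return "$rc"
}

# Usage:
#   require_os_env && openstack service list
#   require_os_env OS_USERNAME OS_PASSWORD OS_AUTH_URL   # after sourcing admin-openrc
```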

b. Create the service entity and API endpoints

Command:

openstack service create --name keystone --description "OpenStack Identity" identity

Session output:

```
[root@controller ~]# openstack service create \
> --name keystone --description "OpenStack Identity" identity
+-------------+----------------------------------+
| Field       | Value                            |
+-------------+----------------------------------+
| description | OpenStack Identity               |
| enabled     | True                             |
| id          | f08ec36b2b7340d6976fcb2bbd24e83b |
| name        | keystone                         |
| type        | identity                         |
+-------------+----------------------------------+
```

c. Create the Identity service API endpoints

Commands:

```bash
openstack endpoint create --region RegionOne identity public http://controller:5000/v3
openstack endpoint create --region RegionOne identity internal http://controller:5000/v3
openstack endpoint create --region RegionOne identity admin http://controller:35357/v3
```

Session output:

```
[root@controller ~]# openstack endpoint create --region RegionOne \
> identity public http://controller:5000/v3
+--------------+----------------------------------+
| Field        | Value                            |
+--------------+----------------------------------+
| enabled      | True                             |
| id           | e27dd713753f47b8a1062ac50ca33845 |
| interface    | public                           |
| region       | RegionOne                        |
| region_id    | RegionOne                        |
| service_id   | f08ec36b2b7340d6976fcb2bbd24e83b |
| service_name | keystone                         |
| service_type | identity                         |
| url          | http://controller:5000/v3        |
+--------------+----------------------------------+
[root@controller ~]# openstack endpoint create --region RegionOne \
> identity internal http://controller:5000/v3
+--------------+----------------------------------+
| Field        | Value                            |
+--------------+----------------------------------+
| enabled      | True                             |
| id           | 71b7435fa2df4c58bb6ca5cc38a434a7 |
| interface    | internal                         |
| region       | RegionOne                        |
| region_id    | RegionOne                        |
| service_id   | f08ec36b2b7340d6976fcb2bbd24e83b |
| service_name | keystone                         |
| service_type | identity                         |
| url          | http://controller:5000/v3        |
+--------------+----------------------------------+
[root@controller ~]# openstack endpoint create --region RegionOne \
> identity admin http://controller:35357/v3
+--------------+----------------------------------+
| Field        | Value                            |
+--------------+----------------------------------+
| enabled      | True                             |
| id           | cf58eee084c04777a520d487adc1a88f |
| interface    | admin                            |
| region       | RegionOne                        |
| region_id    | RegionOne                        |
| service_id   | f08ec36b2b7340d6976fcb2bbd24e83b |
| service_name | keystone                         |
| service_type | identity                         |
| url          | http://controller:35357/v3       |
+--------------+----------------------------------+
```
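Every service repeats this pattern: one endpoint per interface (public, internal, admin), usually with the same URL, except for keystone's admin endpoint on port 35357. A hypothetical helper can print the three commands for any service so they can be reviewed before running; it executes nothing itself, and the region defaults to RegionOne unless a REGION variable (an assumption of this sketch) is set.

```bash
#!/usr/bin/env bash
# Hypothetical helper: print the three "openstack endpoint create" commands
# (public, internal, admin) for one service type. Nothing is executed; review
# the output, then pipe it to bash on a host with admin credentials loaded.
# Usage: endpoint_cmds <service_type> <url> [<admin_url>]
endpoint_cmds() {
  local svc_type="$1" url="$2" admin_url="${3:-$2}" region="${REGION:-RegionOne}"
  local iface target
  for iface in public internal admin; do
    target="$url"
    if [ "$iface" = admin ]; then
      target="$admin_url"
    fi
    # Single-quote the URL so placeholders such as %(tenant_id)s survive the shell.
    printf "openstack endpoint create --region %s %s %s '%s'\n" \
      "$region" "$svc_type" "$iface" "$target"
  done
}

endpoint_cmds identity http://controller:5000/v3 http://controller:35357/v3
endpoint_cmds image http://controller:9292
```

Printing instead of executing keeps the helper harmless to try; `endpoint_cmds image http://controller:9292 | bash` would apply the commands.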

4.9 Creating a Domain, Projects, Users, and Roles

Official documentation: https://docs.openstack.org/mitaka/zh_CN/install-guide-rdo/keystone-users.html

a. Create the default domain

openstack domain create --description "Default Domain" default

b. Create an administrative project, user, and role for administrative operations in your environment

Create the admin project:

openstack project create --domain default --description "Admin Project" admin

Create the admin user:

openstack user create --domain default --password-prompt admin

Create the admin role:

openstack role create admin

Add the admin role to the admin project and user:

openstack role add --project admin --user admin admin

All commands together (here the password is passed with --password ADMIN_PASS instead of being prompted for):

```bash
openstack domain create --description "Default Domain" default
openstack project create --domain default --description "Admin Project" admin
openstack user create --domain default --password ADMIN_PASS admin
openstack role create admin
openstack role add --project admin --user admin admin
```

c. Create the service project

```
[root@controller ~]# openstack project create --domain default --description "Service Project" service
+-------------+----------------------------------+
| Field       | Value                            |
+-------------+----------------------------------+
| description | Service Project                  |
| domain_id   | df6407ae93bb407d876f2ee4787ede79 |
| enabled     | True                             |
| id          | cd2107aa3a8f4066a871ca029641cfd7 |
| is_domain   | False                            |
| name        | service                          |
| parent_id   | df6407ae93bb407d876f2ee4787ede79 |
+-------------+----------------------------------+
```

Verify all of the previous operations

Commands:

```bash
openstack service list
openstack endpoint list | grep keystone |wc -l   # expect 3: public, internal, admin
openstack domain list
openstack project list
openstack user list
openstack role list
```

List the services:

```
[root@controller ~]# openstack service list
+----------------------------------+----------+----------+
| ID                               | Name     | Type     |
+----------------------------------+----------+----------+
| f08ec36b2b7340d6976fcb2bbd24e83b | keystone | identity |
+----------------------------------+----------+----------+
```

List the domains:

```
[root@controller ~]# openstack domain list
+----------------------------------+---------+---------+----------------+
| ID                               | Name    | Enabled | Description    |
+----------------------------------+---------+---------+----------------+
| df6407ae93bb407d876f2ee4787ede79 | default | True    | Default Domain |
+----------------------------------+---------+---------+----------------+
```

List the projects:

```
[root@controller ~]# openstack project list
+----------------------------------+---------+
| ID                               | Name    |
+----------------------------------+---------+
| cd2107aa3a8f4066a871ca029641cfd7 | service |
| d0dfbdbc115b4a728c24d28bc1ce1e62 | admin   |
+----------------------------------+---------+
```

List the users:

```
[root@controller ~]# openstack user list
+----------------------------------+-------+
| ID                               | Name  |
+----------------------------------+-------+
| d8f4a1d74f52482d8ebe2184692d2c1c | admin |
+----------------------------------+-------+
```

List the roles:

```
[root@controller ~]# openstack role list
+----------------------------------+-------+
| ID                               | Name  |
+----------------------------------+-------+
| 4de514c418ee480d898773e4f543b79d | admin |
+----------------------------------+-------+
```

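About domains, projects, users, and roles:

| Type | Description |
|---|---|
| Domain | A collection of projects and users; in a public or private cloud it often represents one customer |
| Group | A collection of some of the users in a domain |
| Project | A project: a collection of IT infrastructure resources such as VMs, volumes, and images |
| Role | Authorization: a role represents a user's permissions on the resources of a project |
| Token | A time-limited identity credential of a user for a target (a project or a domain) |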

4.10 Creating the OpenStack Client Environment Scripts

Official documentation: https://docs.openstack.org/mitaka/zh_CN/install-guide-rdo/keystone-openrc.html

Edit the file admin-openrc (vi admin-openrc) and add the following content:

```bash
export OS_PROJECT_DOMAIN_NAME=default
export OS_USER_DOMAIN_NAME=default
export OS_PROJECT_NAME=admin
export OS_USERNAME=admin
export OS_PASSWORD=ADMIN_PASS
export OS_AUTH_URL=http://controller:35357/v3
export OS_IDENTITY_API_VERSION=3
export OS_IMAGE_API_VERSION=2
```

[Important] Always use the environment script

Load the environment variables with the script:

```
[root@controller ~]# source admin-openrc
[root@controller ~]# env|grep OS
HOSTNAME=controller
OS_USER_DOMAIN_NAME=default
OS_IMAGE_API_VERSION=2
OS_PROJECT_NAME=admin
OS_IDENTITY_API_VERSION=3
OS_PASSWORD=ADMIN_PASS
OS_AUTH_URL=http://controller:35357/v3
OS_USERNAME=admin
OS_PROJECT_DOMAIN_NAME=default
```
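Since admin-openrc stores OS_PASSWORD in plain text, it is reasonable to create it non-interactively and make it readable only by its owner. A sketch with a hypothetical function name; the target path is a parameter (defaulting to ~/admin-openrc, an assumption) so it can be tried on a scratch file first.

```bash
#!/usr/bin/env bash
# Sketch: write admin-openrc non-interactively and restrict it to its owner,
# because it stores OS_PASSWORD in plain text.
# Usage: write_admin_openrc [<target_path>]   (default: ~/admin-openrc)
write_admin_openrc() {
  local target="${1:-$HOME/admin-openrc}"
  # umask 077 makes a newly created file owner-only from the start.
  ( umask 077
    cat > "$target" <<'EOF'
export OS_PROJECT_DOMAIN_NAME=default
export OS_USER_DOMAIN_NAME=default
export OS_PROJECT_NAME=admin
export OS_USERNAME=admin
export OS_PASSWORD=ADMIN_PASS
export OS_AUTH_URL=http://controller:35357/v3
export OS_IDENTITY_API_VERSION=3
export OS_IMAGE_API_VERSION=2
EOF
  )
  # chmod also tightens a file that already existed with looser permissions.
  chmod 600 "$target"
}

# Usage:
#   write_admin_openrc && . ~/admin-openrc
```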

5. Deploying the Glance Image Service

Official documentation: https://docs.openstack.org/mitaka/zh_CN/install-guide-rdo/glance.html

5.1 Creating the Database and Granting Privileges

Reference: https://docs.openstack.org/mitaka/zh_CN/install-guide-rdo/glance-install.html

```
# Log in to the MySQL database
[root@controller ~]# mysql
```

Create the glance database:

CREATE DATABASE glance;

Grant proper access to the glance database:

```sql
GRANT ALL PRIVILEGES ON glance.* TO 'glance'@'localhost' IDENTIFIED BY 'GLANCE_DBPASS';
GRANT ALL PRIVILEGES ON glance.* TO 'glance'@'%' IDENTIFIED BY 'GLANCE_DBPASS';
```

5.2 Creating the glance User and Assigning a Role

[Important] Load the environment variables

Note: every openstack administration command depends on these environment variables.

[root@controller ~]# . admin-openrc

Create the glance user:

openstack user create --domain default --password GLANCE_PASS glance

Add the admin role to the glance user and the service project:

openstack role add --project service --user glance admin

5.3 Creating the Image Service Entity and API Endpoints

Create the glance service entity:

openstack service create --name glance --description "OpenStack Image" image

Session output:

```
[root@controller ~]# openstack service create --name glance --description "OpenStack Image" image
+-------------+----------------------------------+
| Field       | Value                            |
+-------------+----------------------------------+
| description | OpenStack Image                  |
| enabled     | True                             |
| id          | 30357ca18e5046b98dbc0dd4f1e7d69c |
| name        | glance                           |
| type        | image                            |
+-------------+----------------------------------+
```

Create the Image service API endpoints.

Commands:

```bash
openstack endpoint create --region RegionOne image public http://controller:9292
openstack endpoint create --region RegionOne image internal http://controller:9292
openstack endpoint create --region RegionOne image admin http://controller:9292
```

Session output:

```
[root@controller ~]# openstack endpoint create --region RegionOne image public http://controller:9292
+--------------+----------------------------------+
| Field        | Value                            |
+--------------+----------------------------------+
| enabled      | True                             |
| id           | 671486d2528448e9a4067ab04a15e015 |
| interface    | public                           |
| region       | RegionOne                        |
| region_id    | RegionOne                        |
| service_id   | 30357ca18e5046b98dbc0dd4f1e7d69c |
| service_name | glance                           |
| service_type | image                            |
| url          | http://controller:9292           |
+--------------+----------------------------------+
[root@controller ~]# openstack endpoint create --region RegionOne image internal http://controller:9292
+--------------+----------------------------------+
| Field        | Value                            |
+--------------+----------------------------------+
| enabled      | True                             |
| id           | 8ff6131b7e1b4234bb4f34daecbbd615 |
| interface    | internal                         |
| region       | RegionOne                        |
| region_id    | RegionOne                        |
| service_id   | 30357ca18e5046b98dbc0dd4f1e7d69c |
| service_name | glance                           |
| service_type | image                            |
| url          | http://controller:9292           |
+--------------+----------------------------------+
[root@controller ~]# openstack endpoint create --region RegionOne image admin http://controller:9292
+--------------+----------------------------------+
| Field        | Value                            |
+--------------+----------------------------------+
| enabled      | True                             |
| id           | 4a1b3341a0604dbfb710eaa63aab626a |
| interface    | admin                            |
| region       | RegionOne                        |
| region_id    | RegionOne                        |
| service_id   | 30357ca18e5046b98dbc0dd4f1e7d69c |
| service_name | glance                           |
| service_type | image                            |
| url          | http://controller:9292           |
+--------------+----------------------------------+
```

5.4 Installing the glance Package

yum install openstack-glance -y

Service description:

- glance-api: handles uploading, downloading, listing, and deleting images
- glance-registry: manages image metadata (the configuration each image needs)

5.5 Editing the glance Configuration Files

- /etc/glance/glance-api.conf: accepts Image API calls for image discovery, retrieval, and storage.
- /etc/glance/glance-registry.conf: stores, processes, and retrieves image metadata, such as size and type.

1. Edit the file /etc/glance/glance-api.conf

In the [database] section, configure database access:

```ini
[database]
...
connection = mysql+pymysql://glance:GLANCE_DBPASS@controller/glance
```

In the [keystone_authtoken] and [paste_deploy] sections, configure Identity service access:

```ini
[keystone_authtoken]
...
auth_uri = http://controller:5000
auth_url = http://controller:35357
memcached_servers = controller:11211
auth_type = password
project_domain_name = default
user_domain_name = default
project_name = service
username = glance
password = GLANCE_PASS

[paste_deploy]
...
flavor = keystone
```

In the [glance_store] section, configure the local file system store and the location of image files:

```ini
[glance_store]
...
stores = file,http
default_store = file
filesystem_store_datadir = /var/lib/glance/images/
```

Commands:

```bash
cp /etc/glance/glance-api.conf{,.bak}
grep '^[a-Z\[]' /etc/glance/glance-api.conf.bak >/etc/glance/glance-api.conf
openstack-config --set /etc/glance/glance-api.conf database connection mysql+pymysql://glance:GLANCE_DBPASS@controller/glance
openstack-config --set /etc/glance/glance-api.conf glance_store stores file,http
openstack-config --set /etc/glance/glance-api.conf glance_store default_store file
openstack-config --set /etc/glance/glance-api.conf glance_store filesystem_store_datadir /var/lib/glance/images/
openstack-config --set /etc/glance/glance-api.conf keystone_authtoken auth_uri http://controller:5000
openstack-config --set /etc/glance/glance-api.conf keystone_authtoken auth_url http://controller:35357
openstack-config --set /etc/glance/glance-api.conf keystone_authtoken memcached_servers controller:11211
openstack-config --set /etc/glance/glance-api.conf keystone_authtoken auth_type password
openstack-config --set /etc/glance/glance-api.conf keystone_authtoken project_domain_name default
openstack-config --set /etc/glance/glance-api.conf keystone_authtoken user_domain_name default
openstack-config --set /etc/glance/glance-api.conf keystone_authtoken project_name service
openstack-config --set /etc/glance/glance-api.conf keystone_authtoken username glance
openstack-config --set /etc/glance/glance-api.conf keystone_authtoken password GLANCE_PASS
openstack-config --set /etc/glance/glance-api.conf paste_deploy flavor keystone
```
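The same nine [keystone_authtoken] settings appear in glance-api.conf, glance-registry.conf, and later nova.conf; only the file, the service user, and its password change. A hypothetical helper can print the corresponding openstack-config commands (it runs nothing itself), assuming the controller host name and port numbers used throughout this document.

```bash
#!/usr/bin/env bash
# Hypothetical helper: print (not run) the openstack-config commands for the
# [keystone_authtoken] section shared by glance-api, glance-registry and nova.
# Usage: authtoken_cmds <config_file> <service_user> <service_password>
authtoken_cmds() {
  local conf="$1" user="$2" pass="$3" kv
  for kv in \
      auth_uri=http://controller:5000 \
      auth_url=http://controller:35357 \
      memcached_servers=controller:11211 \
      auth_type=password \
      project_domain_name=default \
      user_domain_name=default \
      project_name=service \
      "username=$user" \
      "password=$pass"; do
    # ${kv%%=*} is the key (text before the first "="), ${kv#*=} the value.
    printf 'openstack-config --set %s keystone_authtoken %s %s\n' \
      "$conf" "${kv%%=*}" "${kv#*=}"
  done
}

authtoken_cmds /etc/glance/glance-registry.conf glance GLANCE_PASS
```

Piping the output to bash would apply it; for nova the call would be `authtoken_cmds /etc/nova/nova.conf nova NOVA_PASS`.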

2. Edit the file /etc/glance/glance-registry.conf

In the [database] section, configure database access:

```ini
[database]
...
connection = mysql+pymysql://glance:GLANCE_DBPASS@controller/glance
```

In the [keystone_authtoken] and [paste_deploy] sections, configure Identity service access:

```ini
[keystone_authtoken]
...
auth_uri = http://controller:5000
auth_url = http://controller:35357
memcached_servers = controller:11211
auth_type = password
project_domain_name = default
user_domain_name = default
project_name = service
username = glance
password = GLANCE_PASS

[paste_deploy]
...
flavor = keystone
```

Commands:

```bash
cp /etc/glance/glance-registry.conf{,.bak}
grep '^[a-Z\[]' /etc/glance/glance-registry.conf.bak > /etc/glance/glance-registry.conf
openstack-config --set /etc/glance/glance-registry.conf database connection mysql+pymysql://glance:GLANCE_DBPASS@controller/glance
openstack-config --set /etc/glance/glance-registry.conf keystone_authtoken auth_uri http://controller:5000
openstack-config --set /etc/glance/glance-registry.conf keystone_authtoken auth_url http://controller:35357
openstack-config --set /etc/glance/glance-registry.conf keystone_authtoken memcached_servers controller:11211
openstack-config --set /etc/glance/glance-registry.conf keystone_authtoken auth_type password
openstack-config --set /etc/glance/glance-registry.conf keystone_authtoken project_domain_name default
openstack-config --set /etc/glance/glance-registry.conf keystone_authtoken user_domain_name default
openstack-config --set /etc/glance/glance-registry.conf keystone_authtoken project_name service
openstack-config --set /etc/glance/glance-registry.conf keystone_authtoken username glance
openstack-config --set /etc/glance/glance-registry.conf keystone_authtoken password GLANCE_PASS
openstack-config --set /etc/glance/glance-registry.conf paste_deploy flavor keystone
```

5.6 Populating the Database

[root@controller ~]# su -s /bin/sh -c "glance-manage db_sync" glance

Note: ignore any deprecation messages in the output.

Check that the database was synchronized:

```
[root@controller ~]# mysql glance -e "show tables" |wc -l
21
```

5.7 Starting the glance Services

Start the Image services and configure them to start at boot:

```bash
systemctl enable openstack-glance-api.service openstack-glance-registry.service
systemctl start openstack-glance-api.service openstack-glance-registry.service
```

5.8 Verifying the glance Service

a. Load the environment variables

. admin-openrc

b. Download the source image

wget http://download.cirros-cloud.net/0.3.4/cirros-0.3.4-x86_64-disk.img

c. Upload the image to the Image service using the QCOW2 disk format and the bare container format, and make it public so that all projects can access it:

openstack image create "cirros" --file cirros-0.3.4-x86_64-disk.img --disk-format qcow2 --container-format bare --public

Session output:

```
[root@controller ~]# openstack image create "cirros" --file cirros-0.3.4-x86_64-disk.img --disk-format qcow2 --container-format bare --public
+------------------+------------------------------------------------------+
| Field            | Value                                                |
+------------------+------------------------------------------------------+
| checksum         | ee1eca47dc88f4879d8a229cc70a07c6                     |
| container_format | bare                                                 |
| created_at       | 2018-01-23T10:20:19Z                                 |
| disk_format      | qcow2                                                |
| file             | /v2/images/9d92c601-0824-493a-bc6e-cecb10e9a4c6/file |
| id               | 9d92c601-0824-493a-bc6e-cecb10e9a4c6                 |
| min_disk         | 0                                                    |
| min_ram          | 0                                                    |
| name             | cirros                                               |
| owner            | d0dfbdbc115b4a728c24d28bc1ce1e62                     |
| protected        | False                                                |
| schema           | /v2/schemas/image                                    |
| size             | 13287936                                             |
| status           | active                                               |
| tags             |                                                      |
| updated_at       | 2018-01-23T10:20:20Z                                 |
| virtual_size     | None                                                 |
| visibility       | public                                               |
+------------------+------------------------------------------------------+
```

List the images:

```
[root@controller ~]# openstack image list
+--------------------------------------+--------+--------+
| ID                                   | Name   | Status |
+--------------------------------------+--------+--------+
| 9d92c601-0824-493a-bc6e-cecb10e9a4c6 | cirros | active |
+--------------------------------------+--------+--------+
```

Image location: after upload, the image file is named after its ID.

```
[root@controller ~]# ll -h /var/lib/glance/images/
total 13M
-rw-r----- 1 glance glance 13M Jan 23 18:20 9d92c601-0824-493a-bc6e-cecb10e9a4c6
```
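The `checksum` field in the Image API v2 output above is the MD5 digest of the uploaded data, so it can be compared with the local file to confirm the upload is intact. A minimal sketch; the function name is hypothetical.

```bash
#!/usr/bin/env bash
# Hypothetical helper: compare a local file's MD5 digest with the "checksum"
# value the Image service reported for the uploaded copy.
# Usage: verify_md5 <file> <expected_md5>
verify_md5() {
  local file="$1" expected="$2" actual
  actual="$(md5sum "$file" | awk '{print $1}')"
  if [ "$actual" = "$expected" ]; then
    echo "OK: $file"
  else
    echo "MISMATCH: $file ($actual != $expected)" >&2
    return 1
  fi
}

# Usage, with the checksum from the upload output above:
#   verify_md5 cirros-0.3.4-x86_64-disk.img ee1eca47dc88f4879d8a229cc70a07c6
```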

6. Deploying the Compute Service (nova)

Official documentation: https://docs.openstack.org/mitaka/zh_CN/install-guide-rdo/nova.html

6.1 Installing and Configuring the Controller Node

Reference: https://docs.openstack.org/mitaka/zh_CN/install-guide-rdo/nova-controller-install.html

1) Create the databases and grant privileges

Use the database client to connect to the database server as the root user:

mysql -u root -p

Create the nova_api and nova databases:

```sql
CREATE DATABASE nova_api;
CREATE DATABASE nova;
```

Grant proper access to the databases:

```sql
GRANT ALL PRIVILEGES ON nova_api.* TO 'nova'@'localhost' IDENTIFIED BY 'NOVA_DBPASS';
GRANT ALL PRIVILEGES ON nova_api.* TO 'nova'@'%' IDENTIFIED BY 'NOVA_DBPASS';
GRANT ALL PRIVILEGES ON nova.* TO 'nova'@'localhost' IDENTIFIED BY 'NOVA_DBPASS';
GRANT ALL PRIVILEGES ON nova.* TO 'nova'@'%' IDENTIFIED BY 'NOVA_DBPASS';
```

2) Create the user in Keystone and assign a role

Load the admin credentials (environment variables):

[root@controller ~]# . admin-openrc

Create the nova user:

openstack user create --domain default --password NOVA_PASS nova

Add the admin role to the nova user in the service project:

openstack role add --project service --user nova admin

3) Create the service entity and register the API endpoints in Keystone

Create the service entity:

openstack service create --name nova --description "OpenStack Compute" compute

Register the API endpoints:

openstack endpoint create --region RegionOne compute public http://controller:8774/v2.1/%\(tenant_id\)s
openstack endpoint create --region RegionOne compute internal http://controller:8774/v2.1/%\(tenant_id\)s
openstack endpoint create --region RegionOne compute admin http://controller:8774/v2.1/%\(tenant_id\)s
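The backslashes in %\(tenant_id\)s only stop the shell from treating the parentheses as syntax. The URL Keystone receives ends in %(tenant_id)s, and single quotes achieve the same result. The three endpoints differ only in the interface name, so a loop fits naturally. This is a sketch: register_endpoints and the DRY_RUN switch are hypothetical. By default the function only prints the commands.

```shell
#!/usr/bin/env bash
# Single quotes keep "%(tenant_id)s" literal, which is equivalent to the
# backslash-escaped form %\(tenant_id\)s used in the commands above.
COMPUTE_URL='http://controller:8774/v2.1/%(tenant_id)s'

# register_endpoints SERVICE_TYPE URL
# Hypothetical helper: registers the public, internal and admin endpoints.
# With DRY_RUN unset or 1 it only prints the commands; with DRY_RUN=0 it runs
# them (this requires admin-openrc to be loaded).
register_endpoints() {
  local type=$1 url=$2 iface
  for iface in public internal admin; do
    if [ "${DRY_RUN:-1}" = 1 ]; then
      printf 'openstack endpoint create --region RegionOne %s %s %s\n' "$type" "$iface" "$url"
    else
      openstack endpoint create --region RegionOne "$type" "$iface" "$url"
    fi
  done
}
```

To register the endpoints for real, load admin-openrc and run DRY_RUN=0 register_endpoints compute "$COMPUTE_URL". The same helper covers the network endpoints in 7.1 with http://controller:9696.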

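4) Install the packages

yum -y install openstack-nova-api openstack-nova-conductor openstack-nova-console openstack-nova-novncproxy openstack-nova-scheduler

Package overview:

nova-api          # provides the Compute API
nova-scheduler    # scheduling: decides which compute node runs an instance
nova-conductor    # accesses the database on behalf of the compute nodes
nova-consoleauth  # authorizes tokens for the web-based (noVNC) console
nova-novncproxy   # proxies the web-based VNC console to the instances
nova-compute      # drives libvirtd to manage the instance lifecycle (runs on the compute nodes)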

5) Edit the configuration file

Edit /etc/nova/nova.conf and complete the following actions:

In the [DEFAULT] section, enable only the compute and metadata APIs:

[DEFAULT]
...
enabled_apis = osapi_compute,metadata

In the [api_database] and [database] sections, configure database access:

[api_database]
...
connection = mysql+pymysql://nova:NOVA_DBPASS@controller/nova_api

[database]
...
connection = mysql+pymysql://nova:NOVA_DBPASS@controller/nova

In the [DEFAULT] and [oslo_messaging_rabbit] sections, configure RabbitMQ message queue access:

[DEFAULT]
...
rpc_backend = rabbit

[oslo_messaging_rabbit]
...
rabbit_host = controller
rabbit_userid = openstack
rabbit_password = RABBIT_PASS

In the [DEFAULT] and [keystone_authtoken] sections, configure Identity service access:

[DEFAULT]
...
auth_strategy = keystone

[keystone_authtoken]
...
auth_uri = http://controller:5000
auth_url = http://controller:35357
memcached_servers = controller:11211
auth_type = password
project_domain_name = default
user_domain_name = default
project_name = service
username = nova
password = NOVA_PASS

In the [DEFAULT] section, set my_ip to the IP address of the controller node's management interface:

[DEFAULT]
...
my_ip = 10.0.0.11

In the [DEFAULT] section, enable support for the Networking service:

[DEFAULT]
...
use_neutron = True
firewall_driver = nova.virt.firewall.NoopFirewallDriver

In the [vnc] section, configure the VNC proxy to use the controller node's management interface IP address:

[vnc]
...
vncserver_listen = $my_ip
vncserver_proxyclient_address = $my_ip

In the [glance] section, configure the location of the Image service API:

[glance]
...
api_servers = http://controller:9292

In the [oslo_concurrency] section, configure the lock path:

[oslo_concurrency]
...
lock_path = /var/lib/nova/tmp

Command set:

cp /etc/nova/nova.conf{,.bak}
grep '^[a-zA-Z\[]' /etc/nova/nova.conf.bak >/etc/nova/nova.conf
openstack-config --set /etc/nova/nova.conf DEFAULT enabled_apis osapi_compute,metadata
openstack-config --set /etc/nova/nova.conf DEFAULT rpc_backend rabbit
openstack-config --set /etc/nova/nova.conf DEFAULT auth_strategy keystone
openstack-config --set /etc/nova/nova.conf DEFAULT my_ip 10.0.0.11
openstack-config --set /etc/nova/nova.conf DEFAULT use_neutron True
openstack-config --set /etc/nova/nova.conf DEFAULT firewall_driver nova.virt.firewall.NoopFirewallDriver
openstack-config --set /etc/nova/nova.conf api_database connection mysql+pymysql://nova:NOVA_DBPASS@controller/nova_api
openstack-config --set /etc/nova/nova.conf database connection mysql+pymysql://nova:NOVA_DBPASS@controller/nova
openstack-config --set /etc/nova/nova.conf glance api_servers http://controller:9292
openstack-config --set /etc/nova/nova.conf keystone_authtoken auth_uri http://controller:5000
openstack-config --set /etc/nova/nova.conf keystone_authtoken auth_url http://controller:35357
openstack-config --set /etc/nova/nova.conf keystone_authtoken memcached_servers controller:11211
openstack-config --set /etc/nova/nova.conf keystone_authtoken auth_type password
openstack-config --set /etc/nova/nova.conf keystone_authtoken project_domain_name default
openstack-config --set /etc/nova/nova.conf keystone_authtoken user_domain_name default
openstack-config --set /etc/nova/nova.conf keystone_authtoken project_name service
openstack-config --set /etc/nova/nova.conf keystone_authtoken username nova
openstack-config --set /etc/nova/nova.conf keystone_authtoken password NOVA_PASS
openstack-config --set /etc/nova/nova.conf oslo_concurrency lock_path /var/lib/nova/tmp
openstack-config --set /etc/nova/nova.conf oslo_messaging_rabbit rabbit_host controller
openstack-config --set /etc/nova/nova.conf oslo_messaging_rabbit rabbit_userid openstack
openstack-config --set /etc/nova/nova.conf oslo_messaging_rabbit rabbit_password RABBIT_PASS
openstack-config --set /etc/nova/nova.conf vnc vncserver_listen '$my_ip'
openstack-config --set /etc/nova/nova.conf vnc vncserver_proxyclient_address '$my_ip'
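Each command set starts by saving a backup. It then keeps only the lines that begin with a letter or "[" (section headers and key = value settings), which drops comments and blank lines. A range such as [a-Z] depends on the collation order of the current locale and is rejected as an invalid range in the C locale. This sketch therefore spells out [a-zA-Z] and pins LC_ALL=C. strip_ini is a hypothetical name.

```shell
#!/usr/bin/env bash
# strip_ini SRC DST: write to DST only the lines of SRC that start with an
# ASCII letter or "[" (section headers and settings). Comments, blank lines
# and indented lines are dropped.
# LC_ALL=C makes the bracket expression match plain ASCII letters only.
strip_ini() {
  LC_ALL=C grep '^[a-zA-Z[]' "$1" > "$2"
}

# Usage, mirroring the command sets in this guide:
#   cp /etc/nova/nova.conf{,.bak}
#   strip_ini /etc/nova/nova.conf.bak /etc/nova/nova.conf
```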

6) Populate the databases

su -s /bin/sh -c "nova-manage api_db sync" nova
su -s /bin/sh -c "nova-manage db sync" nova

Note: ignore any deprecation messages in the output.

Check that the tables were created (wc -l also counts the header line that mysql prints):

[root@controller ~]# mysql nova_api -e 'show tables' |wc -l
10
[root@controller ~]# mysql nova -e 'show tables' |wc -l
110

7) Start the services

Enable the services to start at boot:

systemctl enable openstack-nova-api.service openstack-nova-consoleauth.service openstack-nova-scheduler.service openstack-nova-conductor.service openstack-nova-novncproxy.service

Start the services:

systemctl start openstack-nova-api.service openstack-nova-consoleauth.service openstack-nova-scheduler.service openstack-nova-conductor.service openstack-nova-novncproxy.service

Check the service status. All five services should be active (running):

[root@controller ~]# systemctl status openstack-nova-api.service openstack-nova-consoleauth.service openstack-nova-scheduler.service openstack-nova-conductor.service openstack-nova-novncproxy.service |grep 'active (running)' |wc -l
5
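Counting "active (running)" lines tells you that something is wrong, but not which unit is affected. The small sketch below names each failing unit instead. check_services is hypothetical and relies only on systemctl is-active, which prints a unit's state such as active, inactive or failed.

```shell
#!/usr/bin/env bash
# check_services UNIT...: print every unit whose state is not "active" and
# return non-zero if at least one unit failed the check.
check_services() {
  local unit state rc=0
  for unit in "$@"; do
    state=$(systemctl is-active "$unit" 2>/dev/null)
    if [ "$state" != active ]; then
      printf '%s: %s\n' "$unit" "${state:-unknown}"
      rc=1
    fi
  done
  return "$rc"
}

# Example on the controller:
#   check_services openstack-nova-api openstack-nova-consoleauth \
#     openstack-nova-scheduler openstack-nova-conductor openstack-nova-novncproxy \
#     && echo "all nova services are running"
```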

6.2 Install and configure the compute node

Reference: https://docs.openstack.org/mitaka/zh_CN/install-guide-rdo/nova-compute-install.html

1) Install the packages

yum -y install openstack-nova-compute

2) Edit the configuration file

Edit /etc/nova/nova.conf and complete the following actions:

In the [DEFAULT] and [oslo_messaging_rabbit] sections, configure RabbitMQ message queue access:

[DEFAULT]
...
rpc_backend = rabbit

[oslo_messaging_rabbit]
...
rabbit_host = controller
rabbit_userid = openstack
rabbit_password = RABBIT_PASS

In the [DEFAULT] and [keystone_authtoken] sections, configure Identity service access:

[DEFAULT]
...
auth_strategy = keystone

[keystone_authtoken]
...
auth_uri = http://controller:5000
auth_url = http://controller:35357
memcached_servers = controller:11211
auth_type = password
project_domain_name = default
user_domain_name = default
project_name = service
username = nova
password = NOVA_PASS

In the [DEFAULT] section, configure the my_ip option:

[DEFAULT]
...
my_ip = MANAGEMENT_INTERFACE_IP_ADDRESS

Note: replace MANAGEMENT_INTERFACE_IP_ADDRESS with the IP address of the management network interface on the compute node. For example, use 10.0.0.31 for the first compute node in the example architecture.

In the [DEFAULT] section, enable support for the Networking service:

[DEFAULT]
...
use_neutron = True
firewall_driver = nova.virt.firewall.NoopFirewallDriver

In the [vnc] section, enable and configure remote console access:

[vnc]
...
enabled = True
vncserver_listen = 0.0.0.0
vncserver_proxyclient_address = $my_ip
novncproxy_base_url = http://controller:6080/vnc_auto.html

In the [glance] section, configure the location of the Image service API:

[glance]
...
api_servers = http://controller:9292

In the [oslo_concurrency] section, configure the lock path:

[oslo_concurrency]
...
lock_path = /var/lib/nova/tmp

Command set:

cp /etc/nova/nova.conf{,.bak}
grep '^[a-zA-Z\[]' /etc/nova/nova.conf.bak >/etc/nova/nova.conf
openstack-config --set /etc/nova/nova.conf DEFAULT enabled_apis osapi_compute,metadata
openstack-config --set /etc/nova/nova.conf DEFAULT rpc_backend rabbit
openstack-config --set /etc/nova/nova.conf DEFAULT auth_strategy keystone
openstack-config --set /etc/nova/nova.conf DEFAULT my_ip 10.0.0.31
openstack-config --set /etc/nova/nova.conf DEFAULT use_neutron True
openstack-config --set /etc/nova/nova.conf DEFAULT firewall_driver nova.virt.firewall.NoopFirewallDriver
openstack-config --set /etc/nova/nova.conf glance api_servers http://controller:9292
openstack-config --set /etc/nova/nova.conf keystone_authtoken auth_uri http://controller:5000
openstack-config --set /etc/nova/nova.conf keystone_authtoken auth_url http://controller:35357
openstack-config --set /etc/nova/nova.conf keystone_authtoken memcached_servers controller:11211
openstack-config --set /etc/nova/nova.conf keystone_authtoken auth_type password
openstack-config --set /etc/nova/nova.conf keystone_authtoken project_domain_name default
openstack-config --set /etc/nova/nova.conf keystone_authtoken user_domain_name default
openstack-config --set /etc/nova/nova.conf keystone_authtoken project_name service
openstack-config --set /etc/nova/nova.conf keystone_authtoken username nova
openstack-config --set /etc/nova/nova.conf keystone_authtoken password NOVA_PASS
openstack-config --set /etc/nova/nova.conf oslo_concurrency lock_path /var/lib/nova/tmp
openstack-config --set /etc/nova/nova.conf oslo_messaging_rabbit rabbit_host controller
openstack-config --set /etc/nova/nova.conf oslo_messaging_rabbit rabbit_userid openstack
openstack-config --set /etc/nova/nova.conf oslo_messaging_rabbit rabbit_password RABBIT_PASS
openstack-config --set /etc/nova/nova.conf vnc enabled True
openstack-config --set /etc/nova/nova.conf vnc vncserver_listen 0.0.0.0
openstack-config --set /etc/nova/nova.conf vnc vncserver_proxyclient_address '$my_ip'
openstack-config --set /etc/nova/nova.conf vnc novncproxy_base_url http://controller:6080/vnc_auto.html

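3) Start the services

Determine whether the compute node supports hardware acceleration for virtual machines:

[root@compute1 ~]# egrep -c '(vmx|svm)' /proc/cpuinfo
1

Note: if this command returns 1 or greater, the compute node supports hardware acceleration and needs no additional configuration.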
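If the count is 0, the official Mitaka guide has libvirt use QEMU instead of KVM. You do this by setting virt_type = qemu in the [libvirt] section of /etc/nova/nova.conf on the compute node. The sketch below makes that choice automatically. pick_virt_type is a hypothetical helper, and the cpuinfo path can be overridden for testing.

```shell
#!/usr/bin/env bash
# pick_virt_type [CPUINFO]: print "kvm" if the CPU advertises Intel VT-x (vmx)
# or AMD-V (svm); otherwise print "qemu" (software emulation).
pick_virt_type() {
  local cpuinfo=${1:-/proc/cpuinfo} n
  n=$(grep -Ec '(vmx|svm)' "$cpuinfo" 2>/dev/null)
  if [ "${n:-0}" -gt 0 ]; then echo kvm; else echo qemu; fi
}

# Example on the compute node, writing the result into nova.conf:
#   openstack-config --set /etc/nova/nova.conf libvirt virt_type "$(pick_virt_type)"
```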

Enable the services at boot and start them:

systemctl enable libvirtd.service openstack-nova-compute.service
systemctl start libvirtd.service openstack-nova-compute.service
# check the status
systemctl status libvirtd.service openstack-nova-compute.service

On the controller node, check the state of the compute node:

[root@controller ~]# source admin-openrc
[root@controller ~]# openstack compute service list
+----+------------------+------------+----------+---------+-------+----------------------------+
| Id | Binary           | Host       | Zone     | Status  | State | Updated At                 |
+----+------------------+------------+----------+---------+-------+----------------------------+
| 1  | nova-scheduler   | controller | internal | enabled | up    | 2018-01-23T12:02:04.000000 |
| 2  | nova-conductor   | controller | internal | enabled | up    | 2018-01-23T12:02:03.000000 |
| 3  | nova-consoleauth | controller | internal | enabled | up    | 2018-01-23T12:02:05.000000 |
| 6  | nova-compute     | compute1   | nova     | enabled | up    | 2018-01-23T12:02:05.000000 |
+----+------------------+------------+----------+---------+-------+----------------------------+
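Reading the ASCII table by eye works for four services. For scripting, the openstack client can print plain columns with -f value and -c. The sketch below assumes the columns are selected in the order Binary, Host, State. services_down is a hypothetical filter that prints every service that is not up and fails if it finds one.

```shell
#!/usr/bin/env bash
# services_down: read "Binary Host State" triples (one per line) on stdin,
# print the lines whose State is not "up", and exit non-zero if any were found.
services_down() {
  awk '$3 != "up" { print; bad = 1 } END { exit bad }'
}

# Example on the controller (after ". admin-openrc"):
#   openstack compute service list -f value -c Binary -c Host -c State | services_down \
#     && echo "all compute services are up"
```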

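6.3 Verify the services

Before moving on, verify that the services deployed so far are working.

Note: load the environment variable script (admin-openrc) before running these commands.

# Check the Identity service
openstack user list
# Check the Image service
openstack image list
# Check the Compute service
openstack compute service list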
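The three checks above only need to succeed, so a wrapper that reports OK or FAIL for each one is enough. This is a minimal sketch. run_check is hypothetical and looks only at the exit status, not at the command output.

```shell
#!/usr/bin/env bash
# run_check LABEL COMMAND [ARGS...]: run a command quietly and report the result.
# Returns 1 (after printing FAIL) when the command exits with a non-zero status.
run_check() {
  local label=$1; shift
  if "$@" >/dev/null 2>&1; then
    printf '[ OK ] %s\n' "$label"
  else
    printf '[FAIL] %s\n' "$label"
    return 1
  fi
}

# Example (after ". admin-openrc"):
#   run_check keystone openstack user list
#   run_check glance   openstack image list
#   run_check nova     openstack compute service list
```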

7. Networking service (neutron)

Official documentation: https://docs.openstack.org/mitaka/zh_CN/install-guide-rdo/neutron.html

7.1 Install and configure the controller node

All of the following commands are run on the controller host.

Reference: https://docs.openstack.org/mitaka/zh_CN/install-guide-rdo/neutron-controller-install.html

1) Create the database and grant privileges

Connect to the database server:

mysql

Create the neutron database:

CREATE DATABASE neutron;

Grant proper access to the neutron database:

GRANT ALL PRIVILEGES ON neutron.* TO 'neutron'@'localhost' IDENTIFIED BY 'NEUTRON_DBPASS';
GRANT ALL PRIVILEGES ON neutron.* TO 'neutron'@'%' IDENTIFIED BY 'NEUTRON_DBPASS';

2) Create the user in Keystone and assign a role

Create the neutron user:

openstack user create --domain default --password NEUTRON_PASS neutron

Add the admin role to the neutron user:

openstack role add --project service --user neutron admin

3) Create the service entity and register the API endpoints in Keystone

Create the neutron service entity:

openstack service create --name neutron --description "OpenStack Networking" network

Create the Networking service API endpoints:

openstack endpoint create --region RegionOne network public http://controller:9696
openstack endpoint create --region RegionOne network internal http://controller:9696
openstack endpoint create --region RegionOne network admin http://controller:9696

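4) Install the packages

Here I chose "Networking Option 1: Provider networks", which is the simpler of the two networking options.

Official documentation: https://docs.openstack.org/mitaka/zh_CN/install-guide-rdo/neutron-controller-install-option1.html

Install the packages:

yum -y install openstack-neutron openstack-neutron-ml2 openstack-neutron-linuxbridge ebtables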

5) Edit the configuration files

① Edit /etc/neutron/neutron.conf and complete the following actions:

In the [database] section, configure database access:

[database]
...
connection = mysql+pymysql://neutron:NEUTRON_DBPASS@controller/neutron

In the [DEFAULT] section, enable the Modular Layer 2 (ML2) plug-in and disable additional plug-ins:

[DEFAULT]
...
core_plugin = ml2
service_plugins =

In the [DEFAULT] and [oslo_messaging_rabbit] sections, configure RabbitMQ message queue access:

[DEFAULT]
...
rpc_backend = rabbit

[oslo_messaging_rabbit]
...
rabbit_host = controller
rabbit_userid = openstack
rabbit_password = RABBIT_PASS

In the [DEFAULT] and [keystone_authtoken] sections, configure Identity service access:

[DEFAULT]
...
auth_strategy = keystone

[keystone_authtoken]
...
auth_uri = http://controller:5000
auth_url = http://controller:35357
memcached_servers = controller:11211
auth_type = password
project_domain_name = default
user_domain_name = default
project_name = service
username = neutron
password = NEUTRON_PASS

In the [DEFAULT] and [nova] sections, configure Networking to notify Compute of network topology changes:

[DEFAULT]
...
notify_nova_on_port_status_changes = True
notify_nova_on_port_data_changes = True

[nova]
...
auth_url = http://controller:35357
auth_type = password
project_domain_name = default
user_domain_name = default
region_name = RegionOne
project_name = service
username = nova
password = NOVA_PASS

In the [oslo_concurrency] section, configure the lock path:

[oslo_concurrency]
...
lock_path = /var/lib/neutron/tmp

Command set:

cp /etc/neutron/neutron.conf{,.bak}
grep '^[a-zA-Z\[]' /etc/neutron/neutron.conf.bak >/etc/neutron/neutron.conf
openstack-config --set /etc/neutron/neutron.conf DEFAULT core_plugin ml2
openstack-config --set /etc/neutron/neutron.conf DEFAULT service_plugins
openstack-config --set /etc/neutron/neutron.conf DEFAULT rpc_backend rabbit
openstack-config --set /etc/neutron/neutron.conf DEFAULT auth_strategy keystone
openstack-config --set /etc/neutron/neutron.conf DEFAULT notify_nova_on_port_status_changes True
openstack-config --set /etc/neutron/neutron.conf DEFAULT notify_nova_on_port_data_changes True
openstack-config --set /etc/neutron/neutron.conf database connection mysql+pymysql://neutron:NEUTRON_DBPASS@controller/neutron
openstack-config --set /etc/neutron/neutron.conf keystone_authtoken auth_uri http://controller:5000
openstack-config --set /etc/neutron/neutron.conf keystone_authtoken auth_url http://controller:35357
openstack-config --set /etc/neutron/neutron.conf keystone_authtoken memcached_servers controller:11211
openstack-config --set /etc/neutron/neutron.conf keystone_authtoken auth_type password
openstack-config --set /etc/neutron/neutron.conf keystone_authtoken project_domain_name default
openstack-config --set /etc/neutron/neutron.conf keystone_authtoken user_domain_name default
openstack-config --set /etc/neutron/neutron.conf keystone_authtoken project_name service
openstack-config --set /etc/neutron/neutron.conf keystone_authtoken username neutron
openstack-config --set /etc/neutron/neutron.conf keystone_authtoken password NEUTRON_PASS
openstack-config --set /etc/neutron/neutron.conf nova auth_url http://controller:35357
openstack-config --set /etc/neutron/neutron.conf nova auth_type password
openstack-config --set /etc/neutron/neutron.conf nova project_domain_name default
openstack-config --set /etc/neutron/neutron.conf nova user_domain_name default
openstack-config --set /etc/neutron/neutron.conf nova region_name RegionOne
openstack-config --set /etc/neutron/neutron.conf nova project_name service
openstack-config --set /etc/neutron/neutron.conf nova username nova
openstack-config --set /etc/neutron/neutron.conf nova password NOVA_PASS
openstack-config --set /etc/neutron/neutron.conf oslo_concurrency lock_path /var/lib/neutron/tmp
openstack-config --set /etc/neutron/neutron.conf oslo_messaging_rabbit rabbit_host controller
openstack-config --set /etc/neutron/neutron.conf oslo_messaging_rabbit rabbit_userid openstack
openstack-config --set /etc/neutron/neutron.conf oslo_messaging_rabbit rabbit_password RABBIT_PASS

② Configure the Modular Layer 2 (ML2) plug-in

Edit /etc/neutron/plugins/ml2/ml2_conf.ini and complete the following actions:

In the [ml2] section, enable flat and VLAN networks:

[ml2]
...
type_drivers = flat,vlan

In the [ml2] section, disable self-service (private) networks:

[ml2]
...
tenant_network_types =

In the [ml2] section, enable the Linux bridge mechanism:

[ml2]
...
mechanism_drivers = linuxbridge

In the [ml2] section, enable the port security extension driver:

[ml2]
...
extension_drivers = port_security

In the [ml2_type_flat] section, configure the provider virtual network as a flat network:

[ml2_type_flat]
...
flat_networks = provider

In the [securitygroup] section, enable ipset to make security group rules more efficient:

[securitygroup]
...
enable_ipset = True

Command set:

cp /etc/neutron/plugins/ml2/ml2_conf.ini{,.bak}
grep '^[a-zA-Z\[]' /etc/neutron/plugins/ml2/ml2_conf.ini.bak >/etc/neutron/plugins/ml2/ml2_conf.ini
openstack-config --set /etc/neutron/plugins/ml2/ml2_conf.ini ml2 type_drivers flat,vlan
openstack-config --set /etc/neutron/plugins/ml2/ml2_conf.ini ml2 tenant_network_types
openstack-config --set /etc/neutron/plugins/ml2/ml2_conf.ini ml2 mechanism_drivers linuxbridge
openstack-config --set /etc/neutron/plugins/ml2/ml2_conf.ini ml2 extension_drivers port_security
openstack-config --set /etc/neutron/plugins/ml2/ml2_conf.ini ml2_type_flat flat_networks provider
openstack-config --set /etc/neutron/plugins/ml2/ml2_conf.ini securitygroup enable_ipset True

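③ Configure the Linux bridge agent

Edit /etc/neutron/plugins/ml2/linuxbridge_agent.ini and complete the following actions:

In the [linux_bridge] section, map the provider virtual network to the provider physical network interface:

[linux_bridge]
physical_interface_mappings = provider:PROVIDER_INTERFACE_NAME

Note: replace PROVIDER_INTERFACE_NAME with the name of the underlying provider physical network interface, for example eth0.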
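To find the interface name for physical_interface_mappings, you can look up which NIC holds a known address. This sketch assumes that the provider NIC carries an IPv4 address, as it does in this setup, where eth0 holds the node's address. That assumption does not hold for the official layout, where the provider interface has no IP. iface_for_ip is a hypothetical helper that parses ip -o -4 addr show, which prints one address per line.

```shell
#!/usr/bin/env bash
# iface_for_ip IP: print the name of the interface that holds the given IPv4
# address. Each line of `ip -o -4 addr show` looks like
#   "2: eth0    inet 10.0.0.11/24 brd 10.0.0.255 scope global eth0 ..."
# so field 2 is the interface and field 4 is ADDRESS/PREFIX.
iface_for_ip() {
  ip -o -4 addr show | awk -v ip="$1" '{ split($4, a, "/"); if (a[1] == ip) print $2 }'
}

# Example on the controller:
#   openstack-config --set /etc/neutron/plugins/ml2/linuxbridge_agent.ini \
#     linux_bridge physical_interface_mappings "provider:$(iface_for_ip 10.0.0.11)"
```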

In the [vxlan] section, disable VXLAN overlay networks:

[vxlan]
enable_vxlan = False

In the [securitygroup] section, enable security groups and configure the Linux bridge iptables firewall driver:

[securitygroup]
...
enable_security_group = True
firewall_driver = neutron.agent.linux.iptables_firewall.IptablesFirewallDriver

Command set:

cp /etc/neutron/plugins/ml2/linuxbridge_agent.ini{,.bak}
grep '^[a-zA-Z\[]' /etc/neutron/plugins/ml2/linuxbridge_agent.ini.bak >/etc/neutron/plugins/ml2/linuxbridge_agent.ini
openstack-config --set /etc/neutron/plugins/ml2/linuxbridge_agent.ini linux_bridge physical_interface_mappings provider:eth0
openstack-config --set /etc/neutron/plugins/ml2/linuxbridge_agent.ini securitygroup enable_security_group True
openstack-config --set /etc/neutron/plugins/ml2/linuxbridge_agent.ini securitygroup firewall_driver neutron.agent.linux.iptables_firewall.IptablesFirewallDriver
openstack-config --set /etc/neutron/plugins/ml2/linuxbridge_agent.ini vxlan enable_vxlan False

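④ Configure the DHCP agent

Edit /etc/neutron/dhcp_agent.ini and complete the following actions:

In the [DEFAULT] section, configure the Linux bridge interface driver and the Dnsmasq DHCP driver, and enable isolated metadata. This lets instances on provider networks reach the metadata service over the network:

[DEFAULT]
...
interface_driver = neutron.agent.linux.interface.BridgeInterfaceDriver
dhcp_driver = neutron.agent.linux.dhcp.Dnsmasq
enable_isolated_metadata = True

Command set:

openstack-config --set /etc/neutron/dhcp_agent.ini DEFAULT interface_driver neutron.agent.linux.interface.BridgeInterfaceDriver
openstack-config --set /etc/neutron/dhcp_agent.ini DEFAULT dhcp_driver neutron.agent.linux.dhcp.Dnsmasq
openstack-config --set /etc/neutron/dhcp_agent.ini DEFAULT enable_isolated_metadata true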

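⑤ Configure the metadata agent

Edit /etc/neutron/metadata_agent.ini and complete the following actions:

In the [DEFAULT] section, configure the metadata host and the shared secret:

[DEFAULT]
...
nova_metadata_ip = controller
metadata_proxy_shared_secret = METADATA_SECRET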
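METADATA_SECRET is a placeholder. Replace it with a random value, and use the same value later in the [neutron] section of nova.conf. The sketch below shows one way to generate it. gen_secret is hypothetical: it uses openssl rand -hex when openssl is installed and otherwise reads /dev/urandom.

```shell
#!/usr/bin/env bash
# gen_secret [BYTES]: print a random lowercase hex string of 2*BYTES characters
# (default 10 bytes, i.e. 20 characters).
gen_secret() {
  local bytes=${1:-10}
  if command -v openssl >/dev/null 2>&1; then
    openssl rand -hex "$bytes"
  else
    od -An -tx1 -N"$bytes" /dev/urandom | tr -d ' \n'
    echo
  fi
}

# Example: METADATA_SECRET=$(gen_secret), then use "$METADATA_SECRET" in both
# metadata_agent.ini and the [neutron] section of nova.conf.
```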

Command set:

openstack-config --set /etc/neutron/metadata_agent.ini DEFAULT nova_metadata_ip controller
openstack-config --set /etc/neutron/metadata_agent.ini DEFAULT metadata_proxy_shared_secret METADATA_SECRET

⑥ Configure Compute (nova) to use the Networking service

Edit /etc/nova/nova.conf again and complete the following actions:

In the [neutron] section, configure access parameters, enable the metadata proxy, and configure the shared secret:

[neutron]
...
url = http://controller:9696
auth_url = http://controller:35357
auth_type = password
project_domain_name = default
user_domain_name = default
region_name = RegionOne
project_name = service
username = neutron
password = NEUTRON_PASS
service_metadata_proxy = True
metadata_proxy_shared_secret = METADATA_SECRET

Command set:

openstack-config --set /etc/nova/nova.conf neutron url http://controller:9696
openstack-config --set /etc/nova/nova.conf neutron auth_url http://controller:35357
openstack-config --set /etc/nova/nova.conf neutron auth_type password
openstack-config --set /etc/nova/nova.conf neutron project_domain_name default
openstack-config --set /etc/nova/nova.conf neutron user_domain_name default
openstack-config --set /etc/nova/nova.conf neutron region_name RegionOne
openstack-config --set /etc/nova/nova.conf neutron project_name service
openstack-config --set /etc/nova/nova.conf neutron username neutron
openstack-config --set /etc/nova/nova.conf neutron password NEUTRON_PASS
openstack-config --set /etc/nova/nova.conf neutron service_metadata_proxy True
openstack-config --set /etc/nova/nova.conf neutron metadata_proxy_shared_secret METADATA_SECRET
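A mismatched metadata_proxy_shared_secret between metadata_agent.ini and nova.conf is an easy mistake to miss. The sketch below checks for it. ini_get is a hypothetical, minimal INI reader written in awk, not part of openstack-utils. It expects exact [section] headers and does not handle continuation lines or inline comments.

```shell
#!/usr/bin/env bash
# ini_get FILE SECTION KEY: print the value of the first KEY found in [SECTION],
# with spaces around "=" trimmed. Prints nothing if the key is absent.
ini_get() {
  awk -v sec="[$2]" -v key="$3" '
    /^[ \t]*\[/ { in_sec = ($0 == sec); next }
    in_sec {
      i = index($0, "=")
      if (i == 0) next
      k = substr($0, 1, i - 1); v = substr($0, i + 1)
      gsub(/^[ \t]+|[ \t]+$/, "", k); gsub(/^[ \t]+|[ \t]+$/, "", v)
      if (k == key) { print v; exit }
    }' "$1"
}

# Check that both sides use the same shared secret:
#   a=$(ini_get /etc/neutron/metadata_agent.ini DEFAULT metadata_proxy_shared_secret)
#   b=$(ini_get /etc/nova/nova.conf neutron metadata_proxy_shared_secret)
#   [ -n "$a" ] && [ "$a" = "$b" ] && echo "metadata secret matches"
```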

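6) Populate the database

The Networking service initialization scripts expect a symbolic link /etc/neutron/plugin.ini that points to the ML2 plug-in configuration file /etc/neutron/plugins/ml2/ml2_conf.ini.

If the symbolic link does not exist, create it with the following command: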

ln -s /etc/neutron/plugins/ml2/ml2_conf.ini /etc/neutron/plugin.ini
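Running ln -s a second time fails with "File exists". When these steps are scripted, it helps to make link creation idempotent. This is a sketch; ensure_link is hypothetical.

```shell
#!/usr/bin/env bash
# ensure_link TARGET LINK: create the symlink LINK -> TARGET only if nothing
# exists at LINK yet, so the step can be re-run safely.
ensure_link() {
  if [ -e "$2" ] || [ -L "$2" ]; then
    printf '%s already exists, leaving it unchanged\n' "$2"
  else
    ln -s "$1" "$2"
  fi
}

# Example:
#   ensure_link /etc/neutron/plugins/ml2/ml2_conf.ini /etc/neutron/plugin.ini
```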

Populate the database:

su -s /bin/sh -c "neutron-db-manage --config-file /etc/neutron/neutron.conf --config-file /etc/neutron/plugins/ml2/ml2_conf.ini upgrade head" neutron

7) Start the services

Restart the Compute API service:

systemctl restart openstack-nova-api.service

Start the Networking services and configure them to start when the system boots:

systemctl enable neutron-server.service neutron-linuxbridge-agent.service neutron-dhcp-agent.service neutron-metadata-agent.service
systemctl start neutron-server.service neutron-linuxbridge-agent.service neutron-dhcp-agent.service neutron-metadata-agent.service
systemctl status neutron-server.service neutron-linuxbridge-agent.service neutron-dhcp-agent.service neutron-metadata-agent.service

7.2 Install and configure the compute node

Official documentation: https://docs.openstack.org/mitaka/zh_CN/install-guide-rdo/neutron-compute-install.html

1) Install the components

yum -y install openstack-neutron-linuxbridge ebtables ipset

2) Edit the configuration files

On the compute node, configure Networking Option 1 (provider networks), the same as on the controller node.

① Edit /etc/neutron/neutron.conf and complete the following actions:

In the [DEFAULT] and [oslo_messaging_rabbit] sections, configure RabbitMQ message queue access:

[DEFAULT]
...
rpc_backend = rabbit

[oslo_messaging_rabbit]
...
rabbit_host = controller
rabbit_userid = openstack
rabbit_password = RABBIT_PASS

In the [DEFAULT] and [keystone_authtoken] sections, configure Identity service access:

[DEFAULT]
...
auth_strategy = keystone

[keystone_authtoken]
...
auth_uri = http://controller:5000
auth_url = http://controller:35357
memcached_servers = controller:11211
auth_type = password
project_domain_name = default
user_domain_name = default
project_name = service
username = neutron
password = NEUTRON_PASS

In the [oslo_concurrency] section, configure the lock path:

[oslo_concurrency]
...
lock_path = /var/lib/neutron/tmp

Command set:

cp /etc/neutron/neutron.conf{,.bak}
grep -Ev '^$|#' /etc/neutron/neutron.conf.bak >/etc/neutron/neutron.conf
openstack-config --set /etc/neutron/neutron.conf DEFAULT rpc_backend rabbit
openstack-config --set /etc/neutron/neutron.conf DEFAULT auth_strategy keystone
openstack-config --set /etc/neutron/neutron.conf keystone_authtoken auth_uri http://controller:5000
openstack-config --set /etc/neutron/neutron.conf keystone_authtoken auth_url http://controller:35357
openstack-config --set /etc/neutron/neutron.conf keystone_authtoken memcached_servers controller:11211
openstack-config --set /etc/neutron/neutron.conf keystone_authtoken auth_type password
openstack-config --set /etc/neutron/neutron.conf keystone_authtoken project_domain_name default
openstack-config --set /etc/neutron/neutron.conf keystone_authtoken user_domain_name default
openstack-config --set /etc/neutron/neutron.conf keystone_authtoken project_name service
openstack-config --set /etc/neutron/neutron.conf keystone_authtoken username neutron
openstack-config --set /etc/neutron/neutron.conf keystone_authtoken password NEUTRON_PASS
openstack-config --set /etc/neutron/neutron.conf oslo_concurrency lock_path /var/lib/neutron/tmp
openstack-config --set /etc/neutron/neutron.conf oslo_messaging_rabbit rabbit_host controller
openstack-config --set /etc/neutron/neutron.conf oslo_messaging_rabbit rabbit_userid openstack
openstack-config --set /etc/neutron/neutron.conf oslo_messaging_rabbit rabbit_password RABBIT_PASS

② Configure the Linux bridge agent

Edit /etc/neutron/plugins/ml2/linuxbridge_agent.ini and complete the following actions:

In the [linux_bridge] section, map the provider virtual network to the provider physical network interface:

[linux_bridge]
physical_interface_mappings = provider:PROVIDER_INTERFACE_NAME

Note: replace PROVIDER_INTERFACE_NAME with the name of the underlying provider physical network interface, for example eth0.

In the [vxlan] section, disable VXLAN overlay networks:

[vxlan]
enable_vxlan = False

In the [securitygroup] section, enable security groups and configure the Linux bridge iptables firewall driver:

[securitygroup]
...
enable_security_group = True
firewall_driver = neutron.agent.linux.iptables_firewall.IptablesFirewallDriver

Command set:

cp /etc/neutron/plugins/ml2/linuxbridge_agent.ini{,.bak}
grep '^[a-zA-Z\[]' /etc/neutron/plugins/ml2/linuxbridge_agent.ini.bak >/etc/neutron/plugins/ml2/linuxbridge_agent.ini
openstack-config --set /etc/neutron/plugins/ml2/linuxbridge_agent.ini linux_bridge physical_interface_mappings provider:eth0
openstack-config --set /etc/neutron/plugins/ml2/linuxbridge_agent.ini securitygroup enable_security_group True
openstack-config --set /etc/neutron/plugins/ml2/linuxbridge_agent.ini securitygroup firewall_driver neutron.agent.linux.iptables_firewall.IptablesFirewallDriver
openstack-config --set /etc/neutron/plugins/ml2/linuxbridge_agent.ini vxlan enable_vxlan False

③ Configure Compute to use the Networking service

Edit /etc/nova/nova.conf and complete the following actions:

In the [neutron] section, configure access parameters:

[neutron]
...
url = http://controller:9696
auth_url = http://controller:35357
auth_type = password
project_domain_name = default
user_domain_name = default
region_name = RegionOne
project_name = service
username = neutron
password = NEUTRON_PASS

Command set:

openstack-config --set /etc/nova/nova.conf neutron url http://controller:9696
openstack-config --set /etc/nova/nova.conf neutron auth_url http://controller:35357
openstack-config --set /etc/nova/nova.conf neutron auth_type password
openstack-config --set /etc/nova/nova.conf neutron project_domain_name default
openstack-config --set /etc/nova/nova.conf neutron user_domain_name default
openstack-config --set /etc/nova/nova.conf neutron region_name RegionOne
openstack-config --set /etc/nova/nova.conf neutron project_name service
openstack-config --set /etc/nova/nova.conf neutron username neutron
openstack-config --set /etc/nova/nova.conf neutron password NEUTRON_PASS

3) Start the services

Restart the Compute service:

systemctl restart openstack-nova-compute.service

Start the Linux bridge agent and configure it to start at boot:

systemctl enable neutron-linuxbridge-agent.service
systemctl start neutron-linuxbridge-agent.service

7.3 Verify operation

Official verification procedures:

https://docs.openstack.org/mitaka/zh_CN/install-guide-rdo/neutron-verify.html
https://docs.openstack.org/mitaka/zh_CN/install-guide-rdo/neutron-verify-option1.html

Here I only verify the network agents. If every agent is reported as alive (:-)), the Networking service is working.

[root@controller ~]# neutron agent-list
+--------------------------------------+--------------------+------------+-------------------+-------+----------------+---------------------------+
| id                                   | agent_type         | host       | availability_zone | alive | admin_state_up | binary                    |
+--------------------------------------+--------------------+------------+-------------------+-------+----------------+---------------------------+
| 3ab2f17f-737e-4c3f-86f0-2289c56a541b | DHCP agent         | controller | nova              | :-)   | True           | neutron-dhcp-agent        |
| 4f64caf6-a9b0-4742-b0d1-0d961063200a | Linux bridge agent | controller |                   | :-)   | True           | neutron-linuxbridge-agent |
| 630540de-d0a0-473b-96b5-757afc1057de | Linux bridge agent | compute1   |                   | :-)   | True           | neutron-linuxbridge-agent |
| 9989ddcb-6aba-4b7f-9bd7-7d61f774f2bb | Metadata agent     | controller |                   | :-)   | True           | neutron-metadata-agent    |
+--------------------------------------+--------------------+------------+-------------------+-------+----------------+---------------------------+
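In neutron agent-list, a live agent shows :-) in the alive column and a dead one shows xxx. The sketch below extracts the agents that are not alive from the ASCII table. agents_not_alive is hypothetical. It locates the columns by header name, so it does not depend on the column order.

```shell
#!/usr/bin/env bash
# agents_not_alive: read the ASCII table printed by `neutron agent-list` on stdin,
# print "host binary" for every agent whose alive column is not ":-)", and exit
# non-zero if any such agent was found.
agents_not_alive() {
  awk -F'|' '
    function trim(s) { gsub(/^[ \t]+|[ \t]+$/, "", s); return s }
    /^\+/ || NF < 2 { next }                          # skip borders and blank lines
    !hdr { for (i = 2; i < NF; i++) col[trim($i)] = i; hdr = 1; next }
    trim($(col["alive"])) != ":-)" {
      print trim($(col["host"])), trim($(col["binary"])); bad = 1
    }
    END { exit bad }'
}

# Example on the controller:
#   neutron agent-list | agents_not_alive && echo "all agents are alive"
```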

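8. Dashboard (horizon web interface) installation

Official documentation: https://docs.openstack.org/mitaka/zh_CN/install-guide-rdo/horizon.html

8.1 Install and configure the components (on a separate host)

[root@compute1 ~]# yum -y install openstack-dashboard

The Dashboard runs under the httpd service. Installing it on the controller node could interfere with the Keystone service, which also runs under httpd there. I therefore install it on a separate host (compute1 here), which differs slightly from the official documentation.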

8.2 Edit the configuration file

Edit /etc/openstack-dashboard/local_settings and complete the following actions:

Configure the dashboard to use OpenStack services on the controller node:

OPENSTACK_HOST = "controller"  # address of the Identity service (keystone)

Allow all hosts to access the dashboard:

ALLOWED_HOSTS = ['*', ]

Configure the memcached session storage service:

SESSION_ENGINE = 'django.contrib.sessions.backends.cache'

CACHES = {
    'default': {
        'BACKEND': 'django.core.cache.backends.memcached.MemcachedCache',
        'LOCATION': 'controller:11211',
    }
}

Enable version 3 of the Identity API:

OPENSTACK_KEYSTONE_URL = "http://%s:5000/v3" % OPENSTACK_HOST

Enable support for domains:

OPENSTACK_KEYSTONE_MULTIDOMAIN_SUPPORT = True

Configure the API versions:

OPENSTACK_API_VERSIONS = {
    "identity": 3,
    "image": 2,
    "volume": 2,
}

Configure default as the default domain for users created through the dashboard:

OPENSTACK_KEYSTONE_DEFAULT_DOMAIN = "default"

Configure user as the default role for users created through the dashboard:

OPENSTACK_KEYSTONE_DEFAULT_ROLE = "user"

If you chose Networking Option 1, disable support for layer-3 networking services:

OPENSTACK_NEUTRON_NETWORK = {
    ...
    'enable_router': False,
    'enable_quotas': False,
    'enable_distributed_router': False,
    'enable_ha_router': False,
    'enable_lb': False,
    'enable_firewall': False,
    'enable_vpn': False,
    'enable_fip_topology_check': False,
}

Optionally, configure the time zone:

TIME_ZONE = "Asia/Shanghai"

The complete configuration file:

wget https://files.cnblogs.com/files/clsn/local_settings.zip

File details: