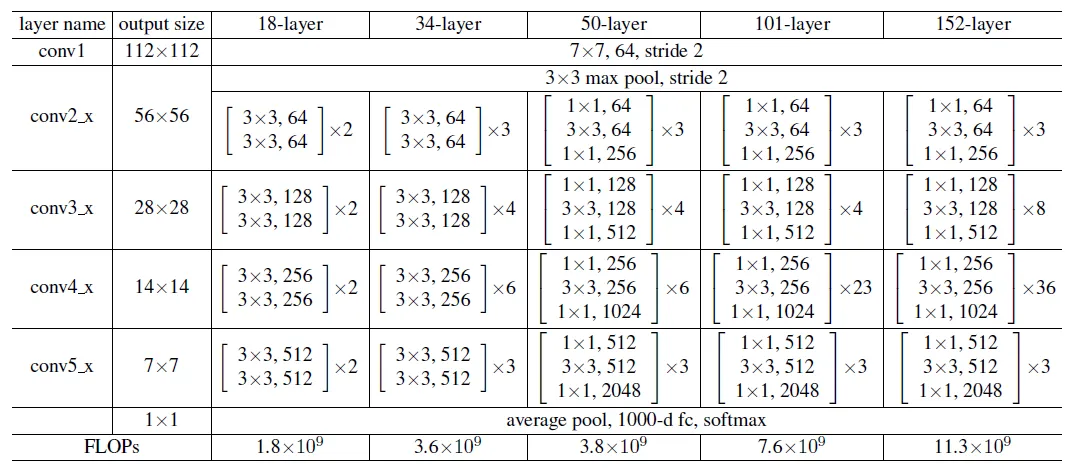

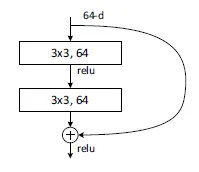

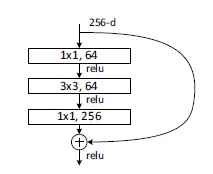

ResNet-18 and ResNet-34 are built from BasicBlock; ResNet-50, ResNet-101, and ResNet-152 are built from BottleNeck.

BasicBlock

BottleNeck

ResNet

This figure shows the sizes worked out for a 3x224x224 input. Every layer uses padding, so the first layer's output size is 224 / stride = 112 (stride = 2).
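The 224 / stride = 112 result is an instance of the usual output-size formula, floor((n + 2p - k) / s) + 1. Below is a minimal sketch that applies it stage by stage. It assumes the figure follows the original ImageNet configuration (a 7x7 stem convolution with stride 2 and padding 3, followed by a 3x3 max pool with stride 2 and padding 1); `conv_out` is a hypothetical helper, not part of the code in this post.

```python
def conv_out(size, kernel, stride, padding):
    """Spatial output size of a convolution or pooling layer."""
    return (size + 2 * padding - kernel) // stride + 1


# Assumed ImageNet-style stem: 7x7 conv, stride 2, padding 3
stem = conv_out(224, kernel=7, stride=2, padding=3)
# Assumed 3x3 max pool, stride 2, padding 1
pooled = conv_out(stem, kernel=3, stride=2, padding=1)

sizes = [stem, pooled]
size = pooled
# conv3_x, conv4_x and conv5_x each halve the resolution
# through a stride-2 3x3 convolution with padding 1
for _ in range(3):
    size = conv_out(size, kernel=3, stride=2, padding=1)
    sizes.append(size)

print(sizes)  # [112, 56, 28, 14, 7]
```

With padding chosen this way, each stride-2 layer halves the feature map exactly, which is why the sizes can be read off as 224 / 2, 112 / 2, and so on.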

Code

```python
def resnet18():
    """ return a ResNet 18 object"""
    return ResNet(BasicBlock, [2, 2, 2, 2])


def resnet34():
    """ return a ResNet 34 object"""
    return ResNet(BasicBlock, [3, 4, 6, 3])


def resnet50():
    """ return a ResNet 50 object"""
    return ResNet(BottleNeck, [3, 4, 6, 3])


def resnet101():
    """ return a ResNet 101 object"""
    return ResNet(BottleNeck, [3, 4, 23, 3])


def resnet152():
    """ return a ResNet 152 object"""
    return ResNet(BottleNeck, [3, 8, 36, 3])
```
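The number in each model name can be recovered from these block counts. A small sketch, assuming the usual counting convention (the stem convolution, every convolution inside the residual functions, and the final fully connected layer are counted; the 1x1 shortcut convolutions are not). `CONFIGS` and `depth` are hypothetical names used only for this illustration.

```python
# (convolutions per block, blocks per stage), taken from the factory functions
CONFIGS = {
    "resnet18": (2, [2, 2, 2, 2]),    # BasicBlock: two 3x3 convs
    "resnet34": (2, [3, 4, 6, 3]),
    "resnet50": (3, [3, 4, 6, 3]),    # BottleNeck: 1x1, 3x3, 1x1 convs
    "resnet101": (3, [3, 4, 23, 3]),
    "resnet152": (3, [3, 8, 36, 3]),
}


def depth(convs_per_block, num_block):
    """Weighted layers: stem conv + convs in all blocks + final fc."""
    return 1 + convs_per_block * sum(num_block) + 1


depths = {name: depth(*cfg) for name, cfg in CONFIGS.items()}
print(depths)
```

For example, ResNet-50 has 3 + 4 + 6 + 3 = 16 bottleneck blocks with three convolutions each, giving 48 + 2 = 50.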

```python
import torch.nn as nn


class BasicBlock(nn.Module):
    """Basic Block for resnet 18 and resnet 34"""

    # BasicBlock and BottleNeck blocks have different output sizes;
    # the class attribute `expansion` is used to distinguish them
    expansion = 1

    def __init__(self, in_channels, out_channels, stride=1):
        super().__init__()

        # residual function
        self.residual_function = nn.Sequential(
            nn.Conv2d(in_channels, out_channels, kernel_size=3, stride=stride, padding=1, bias=False),
            nn.BatchNorm2d(out_channels),
            nn.ReLU(inplace=True),
            nn.Conv2d(out_channels, out_channels * BasicBlock.expansion, kernel_size=3, padding=1, bias=False),
            nn.BatchNorm2d(out_channels * BasicBlock.expansion)
        )

        # shortcut (identity by default)
        self.shortcut = nn.Sequential()

        # if the shortcut output dimension differs from the residual function's,
        # use a 1x1 convolution to match the dimension
        if stride != 1 or in_channels != BasicBlock.expansion * out_channels:
            self.shortcut = nn.Sequential(
                nn.Conv2d(in_channels, out_channels * BasicBlock.expansion, kernel_size=1, stride=stride, bias=False),
                nn.BatchNorm2d(out_channels * BasicBlock.expansion)
            )

    def forward(self, x):
        return nn.ReLU(inplace=True)(self.residual_function(x) + self.shortcut(x))


class BottleNeck(nn.Module):
    """Residual block for resnet over 50 layers"""

    expansion = 4

    def __init__(self, in_channels, out_channels, stride=1):
        super().__init__()
        self.residual_function = nn.Sequential(
            nn.Conv2d(in_channels, out_channels, kernel_size=1, bias=False),
            nn.BatchNorm2d(out_channels),
            nn.ReLU(inplace=True),
            nn.Conv2d(out_channels, out_channels, stride=stride, kernel_size=3, padding=1, bias=False),
            nn.BatchNorm2d(out_channels),
            nn.ReLU(inplace=True),
            nn.Conv2d(out_channels, out_channels * BottleNeck.expansion, kernel_size=1, bias=False),
            nn.BatchNorm2d(out_channels * BottleNeck.expansion),
        )

        self.shortcut = nn.Sequential()

        if stride != 1 or in_channels != out_channels * BottleNeck.expansion:
            self.shortcut = nn.Sequential(
                nn.Conv2d(in_channels, out_channels * BottleNeck.expansion, stride=stride, kernel_size=1, bias=False),
                nn.BatchNorm2d(out_channels * BottleNeck.expansion)
            )

    def forward(self, x):
        return nn.ReLU(inplace=True)(self.residual_function(x) + self.shortcut(x))


class ResNet(nn.Module):

    def __init__(self, block, num_block, num_classes=100):
        super().__init__()

        self.in_channels = 64

        self.conv1 = nn.Sequential(
            nn.Conv2d(3, 64, kernel_size=3, padding=1, bias=False),
            nn.BatchNorm2d(64),
            nn.ReLU(inplace=True))

        # we use a different input size than the original paper,
        # so conv2_x's stride is 1
        self.conv2_x = self._make_layer(block, 64, num_block[0], 1)
        self.conv3_x = self._make_layer(block, 128, num_block[1], 2)
        self.conv4_x = self._make_layer(block, 256, num_block[2], 2)
        self.conv5_x = self._make_layer(block, 512, num_block[3], 2)
        self.avg_pool = nn.AdaptiveAvgPool2d((1, 1))
        self.fc = nn.Linear(512 * block.expansion, num_classes)

    def _make_layer(self, block, out_channels, num_blocks, stride):
        """Make a resnet layer. "Layer" here does not mean a single
        neural network layer such as a conv layer; one resnet layer
        may contain more than one residual block.

        Args:
            block: block type, basic block or bottleneck block
            out_channels: output depth channel number of this layer
            num_blocks: how many blocks per layer
            stride: the stride of the first block of this layer

        Return:
            return a resnet layer
        """

        # there are num_blocks blocks per layer; the first block's stride
        # can be 1 or 2, all other blocks always use stride 1
        strides = [stride] + [1] * (num_blocks - 1)
        layers = []
        for stride in strides:
            layers.append(block(self.in_channels, out_channels, stride))
            self.in_channels = out_channels * block.expansion

        return nn.Sequential(*layers)

    def forward(self, x):
        output = self.conv1(x)
        output = self.conv2_x(output)
        output = self.conv3_x(output)
        output = self.conv4_x(output)
        output = self.conv5_x(output)
        output = self.avg_pool(output)
        output = output.view(output.size(0), -1)  # flatten to (batch, channels)
        output = self.fc(output)

        return output
```
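Unlike the 224x224 figure, this implementation uses a 3x3, stride-1 `conv1` and no max pooling, so the feature-map sizes differ. The sketch below traces (channels, height, width) through the network in plain Python, without running PyTorch. The 32x32 input size is an assumption (the code comment only says the input size differs from the paper), and `trace_shapes` is a hypothetical helper.

```python
def trace_shapes(expansion, size=32):
    """Return (channels, height, width) after conv1 and after each stage.

    Mirrors the ResNet class above: conv1 is 3x3 with stride 1 and
    padding 1 (resolution unchanged), and the stage strides are 1, 2, 2, 2.
    """
    shapes = [(64, size, size)]  # conv1 output
    for out_channels, stride in [(64, 1), (128, 2), (256, 2), (512, 2)]:
        # only the first block of a stage is strided (3x3 conv, padding 1)
        size = (size + 2 * 1 - 3) // stride + 1
        shapes.append((out_channels * expansion, size, size))
    return shapes


basic = trace_shapes(expansion=1)       # resnet18 / resnet34
bottleneck = trace_shapes(expansion=4)  # resnet50 and deeper

print(basic)
print(bottleneck)
```

The last entry's channel count (512 for BasicBlock, 2048 for BottleNeck) equals `512 * block.expansion`, which is the input width of `self.fc` once the adaptive average pool has reduced the spatial size to 1x1.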