Scala IDE for Eclipse

Create the user hadoop1

useradd hadoop1

echo "111111" | passwd --stdin hadoop1

Configure hadoop1 so that hadoop1 and hadoop are the same user

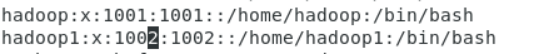

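Edit /etc/passwd

Change hadoop1's UID and GID to match those of the hadoop user

vi /etc/passwd

Change the entry to:

Change the owner of hadoop1's home directory to hadoop

chown -R hadoop:hadoop /home/hadoop1

hadoop1 now has the same UID and GID as the hadoop user.

The only difference is the home directory!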

id hadoop

id hadoop1
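The manual edit above can be rehearsed on a copy of the file before touching the real one. The following is a minimal sketch, assuming a passwd-format file whose third and fourth colon-separated fields are the UID and GID; the function names are illustrative and not part of the original steps.

```shell
#!/bin/sh
# Sketch: works on whatever passwd-format file is passed in, never on
# /etc/passwd directly. Function names are illustrative only.

# Print "uid:gid" for a user found in a passwd-format file.
uid_gid_of() {
    # $1 = passwd-format file, $2 = user name
    awk -F: -v u="$2" '$1 == u { print $3 ":" $4 }' "$1"
}

# Print the file with the UID and GID of user $2 replaced by those of user $3.
copy_uid_gid() {
    # $1 = passwd-format file, $2 = user to change, $3 = user to copy from
    ids=$(uid_gid_of "$1" "$3")
    awk -F: -v OFS=: -v u="$2" -v uid="${ids%%:*}" -v gid="${ids##*:}" \
        '$1 == u { $3 = uid; $4 = gid } { print }' "$1"
}

# Succeed only when both users resolve to the same non-empty UID:GID pair.
same_uid_gid() {
    # $1 = passwd-format file, $2 and $3 = user names
    a=$(uid_gid_of "$1" "$2")
    b=$(uid_gid_of "$1" "$3")
    [ -n "$a" ] && [ "$a" = "$b" ]
}
```

For example, `copy_uid_gid /etc/passwd hadoop1 hadoop > /tmp/passwd.new` writes the edited version to a scratch file for review, and `same_uid_gid /tmp/passwd.new hadoop hadoop1` confirms the two entries now match.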

Configure the environment file for the hadoop1 user

Log out of the graphical session, log back in as hadoop1, and then run the following commands:

cd ~hadoop1

cat > ~hadoop1/.bashrc <<EOF

# Source global definitions

if [ -f /etc/bashrc ]; then

. /etc/bashrc

fi

# User specific aliases and functions

JAVA_HOME=/usr/jdk

export JAVA_HOME

HADOOP_HOME=/opt/hadoop

export HADOOP_HOME

HADOOP_CONF_DIR=/opt/hadoop/etc/hadoop

export HADOOP_CONF_DIR

export SPARK_HOME=/opt/spark

export PATH=\$PATH:\$SPARK_HOME/bin:\$SPARK_HOME/sbin

ECLIPSE_HOME=/usr/eclipse

export ECLIPSE_HOME

PATH=\$ECLIPSE_HOME/:\$HADOOP_HOME/bin:\$HADOOP_HOME/sbin:\$SPARK_HOME/bin:\$SPARK_HOME/sbin:\$PATH

export PATH

cd ~hadoop1

EOF

cd ~hadoop1

source .bashrc
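The backslashes in front of each `$` in the .bashrc listing matter: with an unquoted heredoc delimiter the shell would otherwise expand the variables while writing the file. The sketch below illustrates the difference using scratch files in a temporary directory; the file names are made up for the demonstration.

```shell
#!/bin/sh
# Sketch: compare an unquoted heredoc delimiter (escapes needed) with a
# quoted one (no escapes needed). All files go to a temporary directory.
demo_dir=$(mktemp -d)
SPARK_HOME=/opt/spark   # same value the guide uses

# Unquoted delimiter: \$ keeps the variable reference literal in the file.
cat > "$demo_dir/unquoted.sh" <<EOF
PATH=\$PATH:\$SPARK_HOME/bin
EOF

# Quoted delimiter: nothing is expanded, so no backslashes are needed.
cat > "$demo_dir/quoted.sh" <<'EOF'
PATH=$PATH:$SPARK_HOME/bin
EOF

# Unquoted delimiter without the backslash: the current value is baked in.
cat > "$demo_dir/expanded.sh" <<EOF
SPARK_BIN=$SPARK_HOME/bin
EOF
```

The first two files end up identical, while the third contains the already-expanded path `/opt/spark/bin`. Quoting the delimiter is one way to avoid the backslashes altogether.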

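Log in to the system as hadoop1 for the first time

Create the installation directory for Scala IDE for Eclipse

As the root user, run the following commands:

mkdir /usr/eclipse

chown hadoop:hadoop /usr/eclipse

Download the Scala IDE for Eclipse installation package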

http://scala-ide.org/

http://scala-ide.org/download/current.html

Extract the downloaded Scala IDE for Eclipse archive

cd /home/hadoop1/Desktop/1

tar xvfz scala-SDK-4.7.0-vfinal-2.12-linux.gtk.x86_64.tar.gz -C /usr
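Extracting with `-C /usr` only lands in the prepared /usr/eclipse directory if the archive's top-level directory is named eclipse. That is an assumption about the tarball, so it may be worth checking before extracting. A small sketch, with an illustrative function name:

```shell
#!/bin/sh
# Sketch: list the distinct top-level entries of a gzip-compressed tarball
# without extracting it.
tar_top_level() {
    # $1 = path to a .tar.gz archive
    tar tzf "$1" | sed 's|^\./||' | cut -d/ -f1 | grep -v '^$' | sort -u
}
```

Running `tar_top_level scala-SDK-4.7.0-vfinal-2.12-linux.gtk.x86_64.tar.gz` prints one line per top-level entry, which shows where the files will end up under /usr.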

Download the jar files needed by the example programs

wget http://www.Java2s.com/Code/JarDownload/joda/joda-time-2.2.jar.zip

wget http://www.Java2s.com/Code/JarDownload/jfreechart/jfreechart-1.0.3.jar.zip

wget http://www.Java2s.com/Code/JarDownload/jcommon/jcommon-1.0.16.jar.zip

unzip jcommon-1.0.16.jar.zip

unzip jfreechart-1.0.3.jar.zip

unzip joda-time-2.2.jar.zip

mkdir ~hadoop1/lib

mv j*jar ~hadoop1/lib
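After the move, a quick check can confirm that every expected jar actually arrived in the lib directory. The sketch below assumes each zip contains a jar named like the archive minus the `.zip` suffix, which the download site does not guarantee; the function name is illustrative.

```shell
#!/bin/sh
# Sketch: print the names of expected jar files that are absent from a directory.
missing_jars() {
    # $1 = directory, remaining arguments = jar file names
    dir=$1
    shift
    for jar in "$@"; do
        [ -f "$dir/$jar" ] || echo "$jar"
    done
}
```

For example, `missing_jars ~hadoop1/lib joda-time-2.2.jar jfreechart-1.0.3.jar jcommon-1.0.16.jar` prints nothing when all three files are present.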

Start Scala IDE for Eclipse for the first time

Develop a Spark application with Scala IDE for Eclipse: WordCount

Start Eclipse

Create a Scala project named WordCount:

Create a package named com.zqf.test

Create a Scala object named com.zqf.test.RunWordCount

package com.zqf.test

import org.apache.log4j.Logger
import org.apache.log4j.Level
import org.apache.spark.{ SparkConf, SparkContext }
import org.apache.spark.rdd.RDD

object RunWordCount {
  def main(args: Array[String]): Unit = {
    // Silence Spark's log output and the console progress bar.
    Logger.getLogger("org").setLevel(Level.OFF)
    System.setProperty("spark.ui.showConsoleProgress", "false")
    println("Begin to Run RunWordCount")

    // Note: a master set here takes precedence over spark-submit's --master option.
    val sc = new SparkContext(new SparkConf().setAppName("wordCount").setMaster("local[4]"))

    println("Begin to read file...")
    val textFile = sc.textFile("data/a.txt")

    println("Begin to Create RDD...")
    // Split each line on spaces, pair every word with 1, then sum the counts per word.
    val countsRDD: RDD[(String, Int)] = textFile.flatMap(line => line.split(" "))
      .map(word => (word, 1))
      .reduceByKey(_ + _)

    println("Begin to Store file to Disk...")
    try {
      // saveAsTextFile fails if the output directory already exists.
      countsRDD.saveAsTextFile("output")
      println("Store Disk Successful")
    } catch {
      case e: Exception => println("Output Directory Existed already!")
    }
  }
}

Prepare the input data

cd /home/hadoop1/workspace/WordCount

mkdir data

cd data

cat > a.txt <<EOF

world

hello

hello

he

he

he

EOF
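To know what the Spark job should produce for this input, the same count can be computed with standard command-line tools. This is a cross-check sketch rather than part of the original procedure; unlike the Scala program, `tr -s` squeezes repeated spaces, which makes no difference for the one-word lines used here.

```shell
#!/bin/sh
# Sketch: count words with standard tools, most frequent first.
count_words() {
    # $1 = input file; prints "word count" pairs
    tr -s ' ' '\n' < "$1" | grep -v '^$' | sort | uniq -c \
        | sort -k1,1nr -k2 | awk '{ print $2, $1 }'
}
```

For the a.txt above, `count_words a.txt` prints `he 3`, `hello 2` and `world 1`. The Spark output holds the same pairs written as tuples such as `(he,3)`, in no particular order.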

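Run the sample program in the development environment

Export the jar file

Run the jar file on Spark (spark-submit)

Run the jar file in local mode

Delete the previous run's output before running

cd /home/hadoop1/workspace/WordCount

rm -rf output/

Run (use any one of the following; --class takes the fully qualified object name)

spark-submit --driver-memory 2g --master local[4] --class com.zqf.test.RunWordCount WordCount.jar

spark-submit --master local[*] --class com.zqf.test.RunWordCount WordCount.jar

spark-submit --master local --class com.zqf.test.RunWordCount WordCount.jar

spark-submit --master local[1] --class com.zqf.test.RunWordCount WordCount.jar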

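Run the jar file in Standalone cluster mode

Start a single-node Spark standalone cluster

Run the following command to start the Spark standalone cluster:

/opt/spark/sbin/start-all.sh

Run the jar file with spark-submit

Delete the previous run's output before running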

cd

rm -rf output/

spark-submit --master spark://test:7077 --class com.zqf.test.RunWordCount WordCount.jar

View the Spark Standalone Web UI

Run the jar file in YARN cluster mode

Start the Hadoop YARN cluster

Start YARN with the following command:

start-yarn.sh

Stop YARN with the following command:

stop-yarn.sh

Run the jar file with spark-submit

Delete the previous run's output before running

cd

rm -rf output/

spark-submit --master yarn --class com.zqf.test.RunWordCount WordCount.jar
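The local, standalone and YARN runs differ only in the value passed to `--master`. The sketch below prints the command line for each mode instead of executing it; the mode names and the function name are illustrative, and the master URLs are the ones used in this guide. The class name is fully qualified because the object is declared in package com.zqf.test.

```shell
#!/bin/sh
# Sketch: print the spark-submit command line for each mode used in this guide.
# Nothing is executed here.
submit_cmd() {
    # $1 = mode: local | standalone | yarn
    case "$1" in
        local)      master='local[4]' ;;
        standalone) master='spark://test:7077' ;;
        yarn)       master='yarn' ;;
        *) echo "unknown mode: $1" >&2; return 1 ;;
    esac
    # The master is quoted so the shell never treats [4] or [*] as a glob.
    echo "spark-submit --master '$master' --class com.zqf.test.RunWordCount WordCount.jar"
}
```

One caveat: the program as listed calls `setMaster("local[4]")`, and a master set on the SparkConf takes precedence over the `--master` flag, so the job may still run locally unless that call is removed.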

Browse the ResourceManager web interface

Import the Spark source code into Scala IDE for Eclipse

Download the Spark source code from GitHub

cd /home/hadoop1/

git clone https://github.com/apache/spark.git

Check out version 1.6.3

cd /home/hadoop1/spark

git branch -a

git checkout v1.6.3

Install the EGit plugin (already installed by default!)

Start Eclipse

eclipse &

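Search for the EGit plugin

The following shows that EGit is already installed!

Import the Spark 1.6.3 source code

Install the Spark development environment: IntelliJ IDEA

Create the installation directory for IntelliJ IDEA

As the root user, run the following commands: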

mkdir /usr/idea-IC-171.4694.23

chown hadoop:hadoop /usr/idea-IC-171.4694.23

ln -s /usr/idea-IC-171.4694.23 /usr/idea-IC
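Pointing a stable name at a versioned directory means a later upgrade only needs the link to be re-created, while anything that refers to /usr/idea-IC keeps working. The sketch below reproduces that layout under a scratch directory so it can be tried without root; the function name is illustrative.

```shell
#!/bin/sh
# Sketch: create a versioned directory and a stable symlink that points at it.
link_version() {
    # $1 = base directory, $2 = versioned directory name, $3 = stable link name
    mkdir -p "$1/$2"
    # -f replaces an existing link; -n stops ln from following the old link
    # and creating the new one inside the directory it points to.
    ln -sfn "$1/$2" "$1/$3"
}
```

For example, `link_version /tmp/demo idea-IC-171.4694.23 idea-IC` builds the same pair of names under /tmp/demo, and calling it again with a different version name repoints the link.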

Download the IntelliJ IDEA installation package

http://www.jetbrains.com/idea/download/#section=linux

Extract the downloaded IntelliJ IDEA archive

cd /home/hadoop/Desktop

tar xvfz ideaIC-2017.1.4.tar.gz -C /usr

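Create the user hadoop2

useradd hadoop2

echo "hadoop123" | passwd --stdin hadoop2

Log in to the system as hadoop2 for the first time

Configure hadoop2 so that hadoop2 and hadoop are the same user

Edit /etc/passwd

Change hadoop2's UID and GID to match those of the hadoop user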

vi /etc/passwd

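Change the entry to:

Change the owner of hadoop2's home directory to hadoop

chown -R hadoop:hadoop /home/hadoop2

hadoop2 now has the same UID and GID as the hadoop user.

The only difference is the home directory!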

id hadoop2

Configure the environment file for the hadoop2 user

As the hadoop1 user, run the following commands:

cd ~hadoop2

cat > ~hadoop2/.bashrc <<EOF

# Source global definitions

if [ -f /etc/bashrc ]; then

. /etc/bashrc

fi

# User specific aliases and functions

JAVA_HOME=/usr/jdk

export JAVA_HOME

HADOOP_HOME=/opt/hadoop

export HADOOP_HOME

HADOOP_CONF_DIR=/opt/hadoop/etc/hadoop

export HADOOP_CONF_DIR

export SPARK_HOME=/opt/spark

export PATH=\$PATH:\$SPARK_HOME/bin:\$SPARK_HOME/sbin

ECLIPSE_HOME=/usr/eclipse

export ECLIPSE_HOME

IDEA_HOME=/usr/idea-IC

export IDEA_HOME

PATH=\$IDEA_HOME/bin:\$ECLIPSE_HOME/:\$HADOOP_HOME/bin:\$HADOOP_HOME/sbin:\$SPARK_HOME/bin:\$SPARK_HOME/sbin:\$PATH

export PATH

cd ~hadoop2

EOF
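The .bashrc above adds the Spark bin and sbin directories to PATH twice, once appended and once prepended. That is harmless, but the resulting PATH carries duplicates. A minimal sketch of a helper that drops repeated entries while keeping the first occurrence of each; the function name is illustrative:

```shell
#!/bin/sh
# Sketch: remove repeated entries from a colon-separated PATH-style string,
# keeping the first occurrence of each entry.
dedupe_path() {
    # $1 = colon-separated list
    printf '%s' "$1" | awk -v RS=: -v ORS=: '!seen[$0]++' | sed 's/:$//'
}
```

It could be applied as `PATH=$(dedupe_path "$PATH")` at the end of the file, or simply used to inspect what the final PATH contains.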

Start IntelliJ IDEA for the first time

Log in to the system as hadoop2

/usr/idea-IC/bin/idea.sh

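Click the X in the upper-right corner to exit IntelliJ IDEA

Develop a Spark application with IntelliJ IDEA: WordCount

Log in to the system as hadoop2

Start IntelliJ IDEA

Create a Java project named WordCount:

Add the Spark libraries

Create a package named com.zqf.test

Create a Scala object named RunWordCount

Enter the following code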

package com.zqf.test

/**
 * Created by hadoop on 6/21/17.
 */

import org.apache.log4j.Logger
import org.apache.log4j.Level
import org.apache.spark.{ SparkConf, SparkContext }
import org.apache.spark.rdd.RDD

object RunWordCount {
  def main(args: Array[String]): Unit = {
    // Silence Spark's log output and the console progress bar.
    Logger.getLogger("org").setLevel(Level.OFF)
    System.setProperty("spark.ui.showConsoleProgress", "false")
    println("Begin to Run RunWordCount")

    // Note: a master set here takes precedence over spark-submit's --master option.
    val sc = new SparkContext(new SparkConf().setAppName("wordCount").setMaster("local[4]"))

    println("Begin to read file...")
    val textFile = sc.textFile("data/a.txt")

    println("Begin to Create RDD...")
    // Split each line on spaces, pair every word with 1, then sum the counts per word.
    val countsRDD: RDD[(String, Int)] = textFile.flatMap(line => line.split(" "))
      .map(word => (word, 1))
      .reduceByKey(_ + _)

    println("Begin to Store file to Disk...")
    try {
      // saveAsTextFile fails if the output directory already exists.
      countsRDD.saveAsTextFile("output")
      println("Store Disk Successful")
    } catch {
      case e: Exception => println("Output Directory Existed already!")
    }
  }
}

Prepare the input data

cd /home/hadoop/IdeaProjects/WordCount

mkdir data

cd data

cat > a.txt <<EOF

world

hello

hello

he

he

he

EOF

Compile and debug the program

Export the jar file

https://www.cnblogs.com/yangcx666/p/8723899.html
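Once the jar has been exported, it can be worth confirming that the compiled object is actually inside it, since a missing or misplaced class is a common cause of ClassNotFoundException at submit time. The sketch below assumes `unzip` is installed; the function names are illustrative.

```shell
#!/bin/sh
# Sketch: map a fully qualified class name to its expected entry path in a jar.
class_entry() {
    # $1 = fully qualified name, e.g. com.zqf.test.RunWordCount
    printf '%s.class\n' "$(printf '%s' "$1" | tr '.' '/')"
}

# Succeed if the jar listing contains that entry. Requires unzip on the PATH.
jar_has_class() {
    # $1 = jar file, $2 = fully qualified class name
    unzip -l "$1" | grep -qF "$(class_entry "$2")"
}
```

For example, `jar_has_class WordCount.jar com.zqf.test.RunWordCount` succeeds only when the listing includes `com/zqf/test/RunWordCount.class`.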

Run the jar file

Run the jar file in local mode

Delete the previous run's output before running

cd

rm -rf output/

mkdir data

cd data

cat > a.txt <<EOF

world

hello

hello

he

he

he

EOF

cd ..

Run

spark-submit --master local[1] --class com.zqf.test.RunWordCount WordCount.jar

Check the result:

cd output

ls

cat *
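The check above can be made slightly stricter. A job written with saveAsTextFile typically leaves an empty `_SUCCESS` marker next to its `part-*` files when it completes, so a missing marker suggests the run did not finish. A minimal sketch under that assumption, with an illustrative function name:

```shell
#!/bin/sh
# Sketch: print the merged part files of an output directory, but only if
# the _SUCCESS marker is present.
show_output() {
    # $1 = output directory
    if [ ! -f "$1/_SUCCESS" ]; then
        echo "no _SUCCESS marker in $1" >&2
        return 1
    fi
    cat "$1"/part-*
}
```

Running `show_output output` from the directory that contains the output folder prints the word counts, or reports the missing marker and returns a non-zero status.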

Run WordCount.jar in cluster mode

Import the Spark source code into IntelliJ IDEA

Download the Spark source code from the official website

Official website

http://spark.apache.org/

http://spark.apache.org/downloads.html

spark-1.6.3.tgz

Extract the source archive

Extract the source archive and rename the directory to spark

tar xvfz spark-1.6.3.tgz

mv spark-1.6.3 spark

Edit pom.xml

cd /home/hadoop2/spark

vi pom.xml

Start IntelliJ IDEA

Import the Spark source code