- GoogLeNet

- ResNet

- Exercises

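Video: https://www.bilibili.com/video/BV1Y7411d7Ys?p=11

Blog posts:

- https://blog.csdn.net/bit452/article/details/109693790

- https://blog.csdn.net/weixin_44841652/article/details/105256034

Main content: several improved CNN architectures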

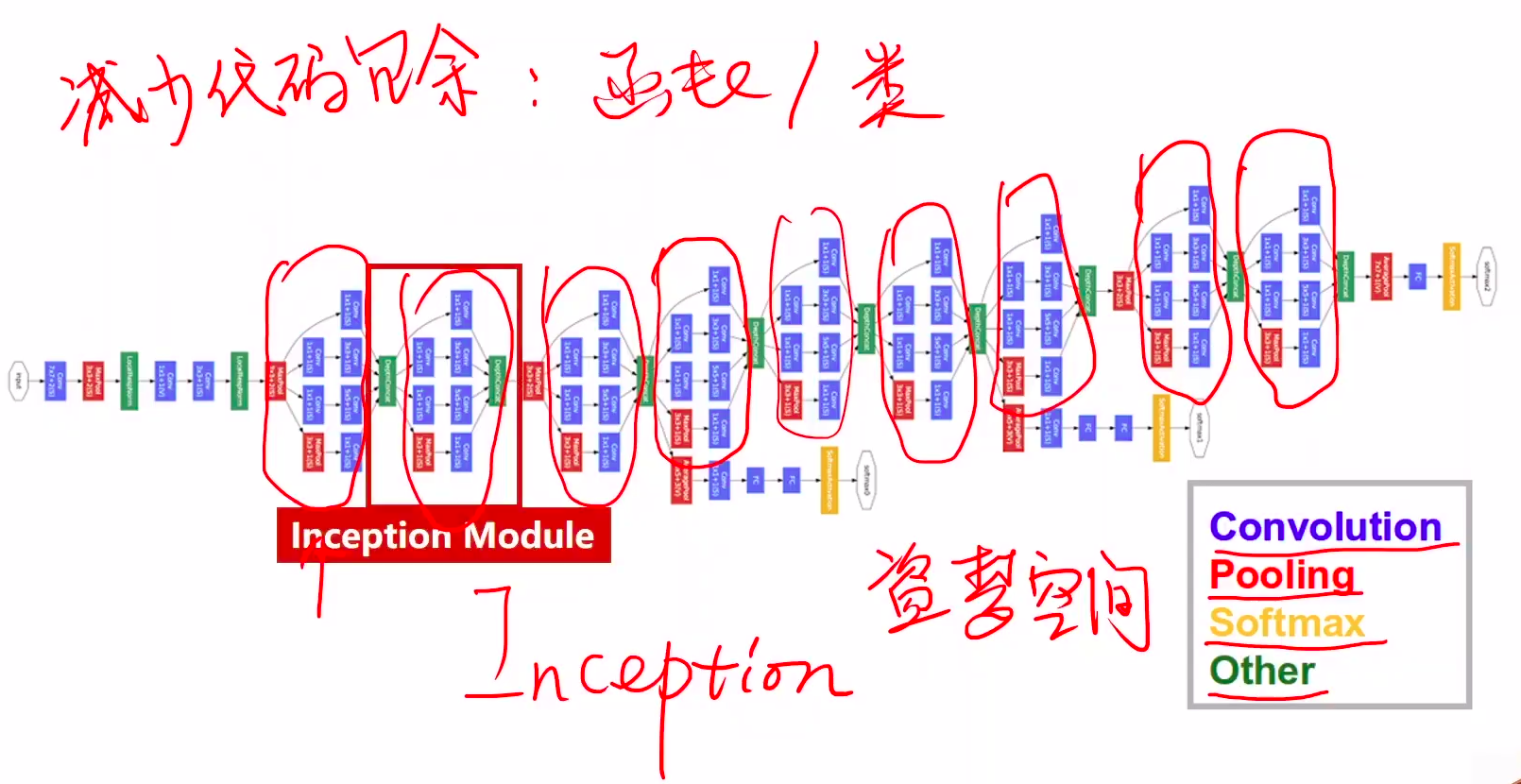

GoogLeNet

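GoogLeNet: winner of the 2014 ImageNet classification challenge. It introduces the Inception module: convolution kernels of different sizes give different receptive fields, and concatenating their outputs fuses features at different scales. It uses average pooling in place of the fully connected layers. To counter vanishing gradients, the network adds two auxiliary softmax classifiers that feed gradient into the earlier layers.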

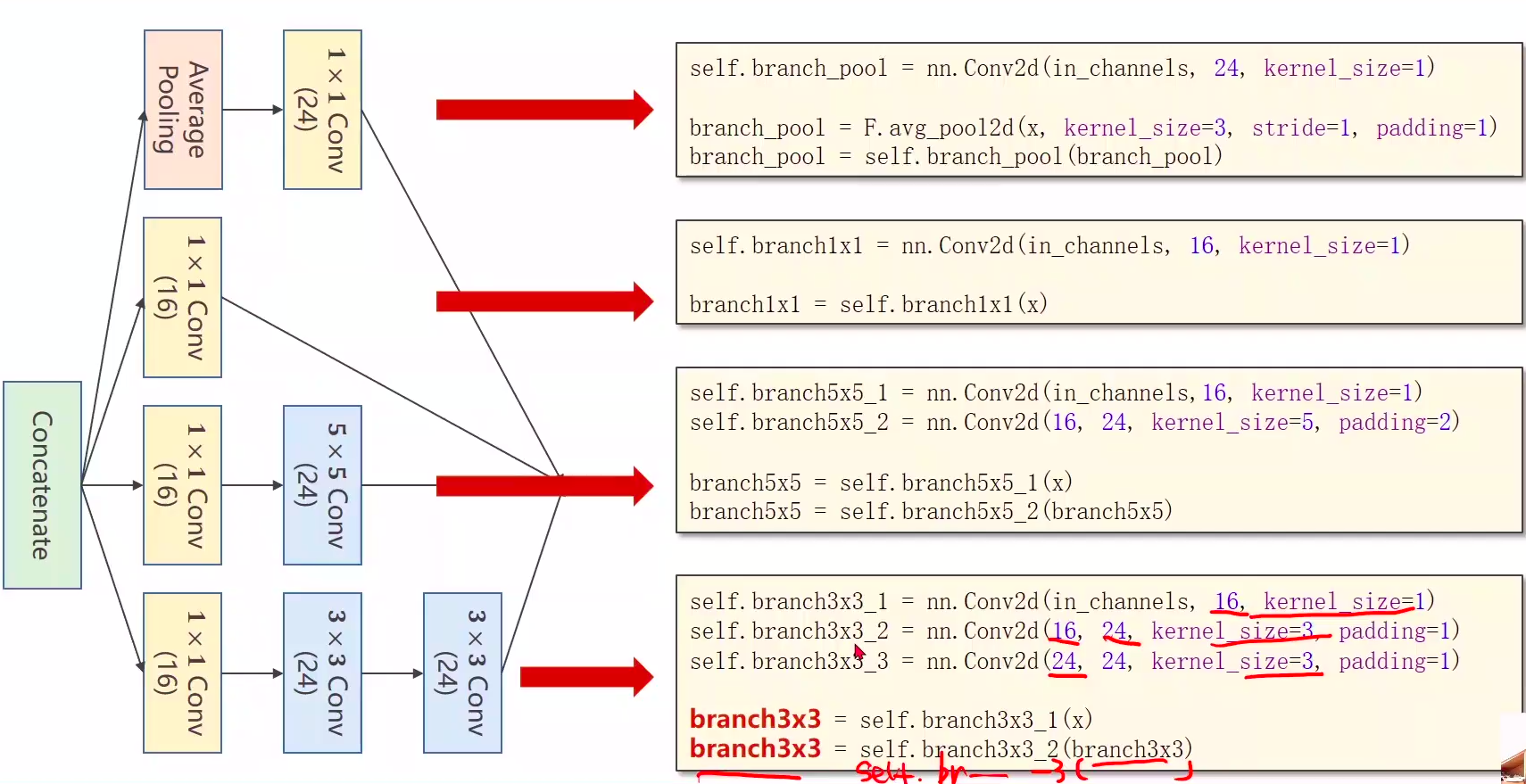

Notes: the Inception Module

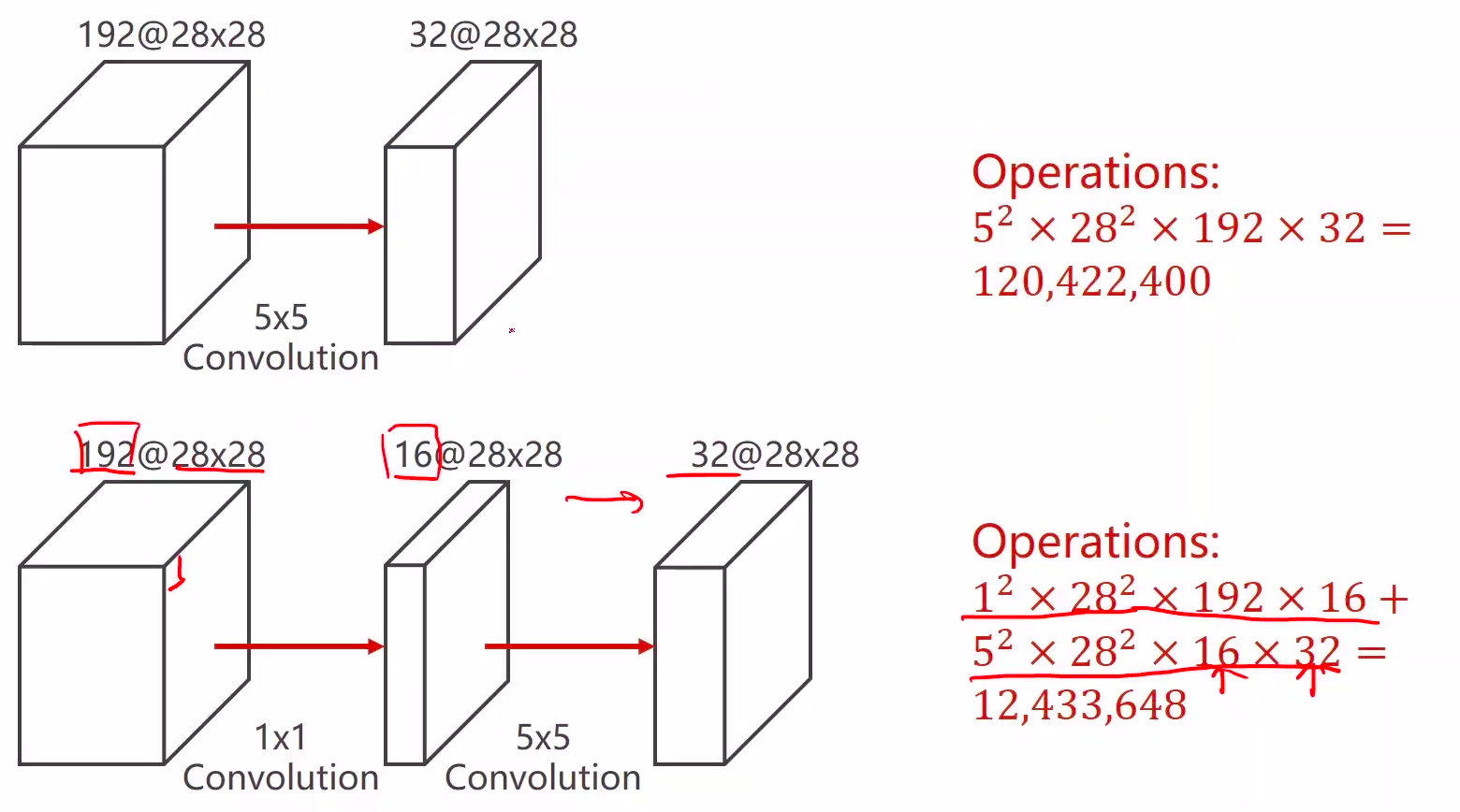

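- Choosing kernel hyperparameters by hand is hard, so the module runs several kernel sizes in parallel and lets training **find the best combination automatically: the branches that work best end up with larger weights.**

- Concatenate: joins the tensors into one, which requires that their width and height match (the average pooling branch is set up so that width and height stay unchanged).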

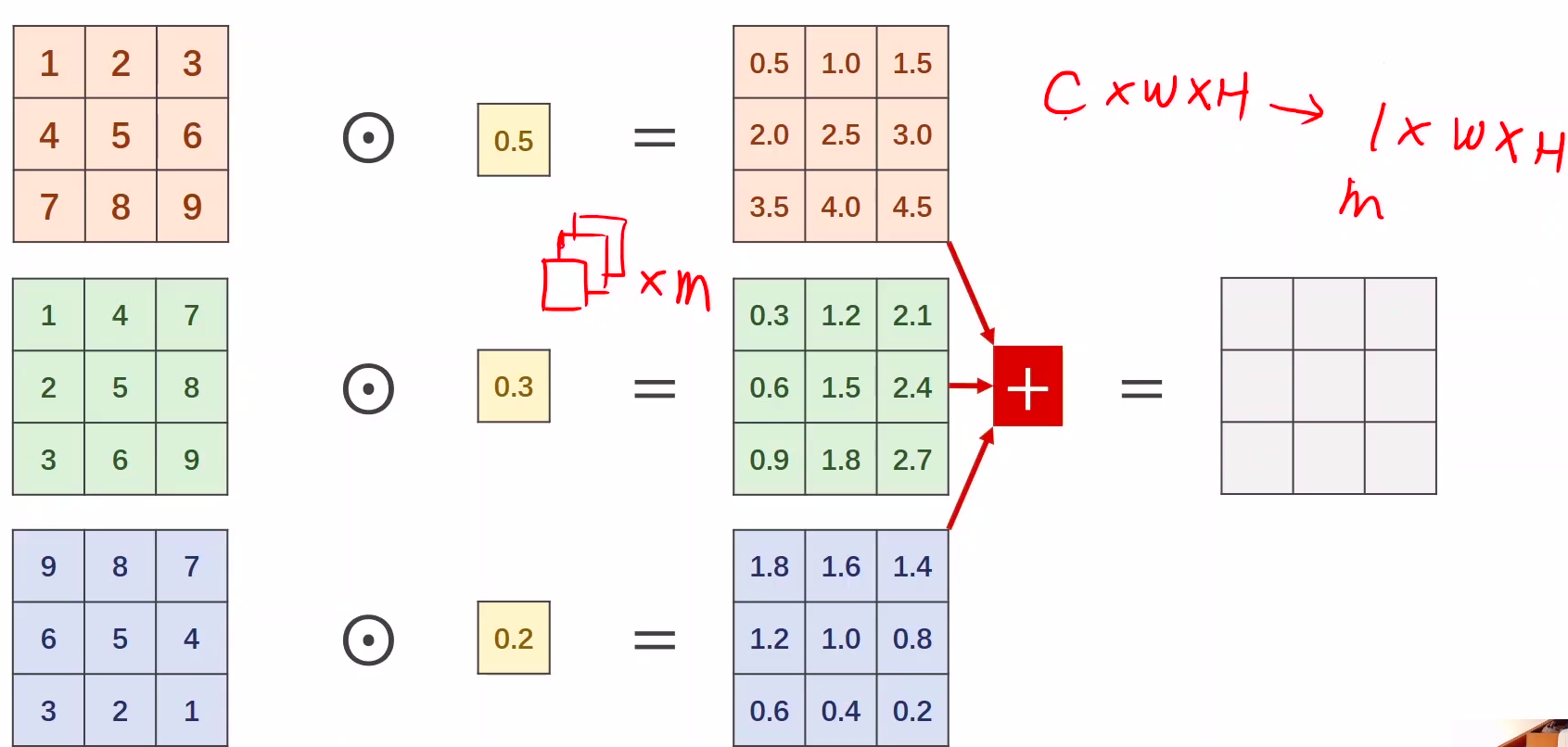

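- 1x1 convolution kernels reduce the amount of computation and fuse information across channels. The number of channels in each 1x1 kernel is determined by the channels of the input tensor.

- Convolution process: each input channel is convolved separately and the results are summed into a single channel (c × w × h → 1 × w × h).

- Each output channel therefore contains information from every input channel (fusion).

- The number of kernels equals the number of output channels.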
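To make the cost argument for 1x1 convolutions concrete, here is a rough multiplication count. The sizes (a 192-channel 28 × 28 input, 32 output channels, a 16-channel bottleneck) are illustrative assumptions rather than values read from the figures, `conv_mults` is a hypothetical helper, and padding is assumed to keep the spatial size unchanged.

```python
def conv_mults(in_ch, out_ch, kernel, height, width):
    """Multiplications for one conv layer whose output is height x width."""
    return kernel * kernel * in_ch * out_ch * height * width


H = W = 28

# 5x5 convolution applied directly to the 192-channel input
direct = conv_mults(192, 32, 5, H, W)

# 1x1 convolution down to 16 channels first, then the 5x5 convolution
bottleneck = conv_mults(192, 16, 1, H, W) + conv_mults(16, 32, 5, H, W)

print(direct, bottleneck, round(direct / bottleneck, 1))
```

Under these assumptions the bottleneck version needs roughly a tenth of the multiplications while producing an output of the same shape.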

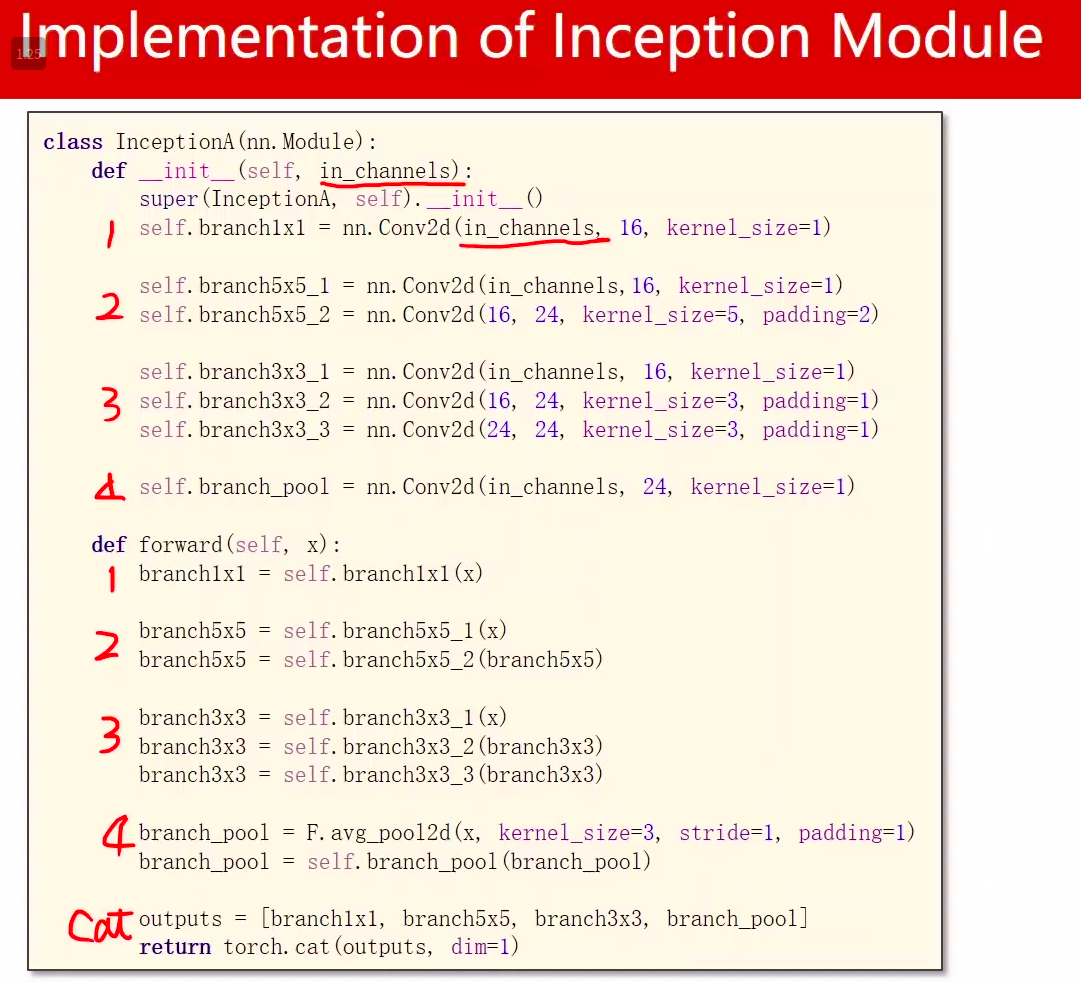

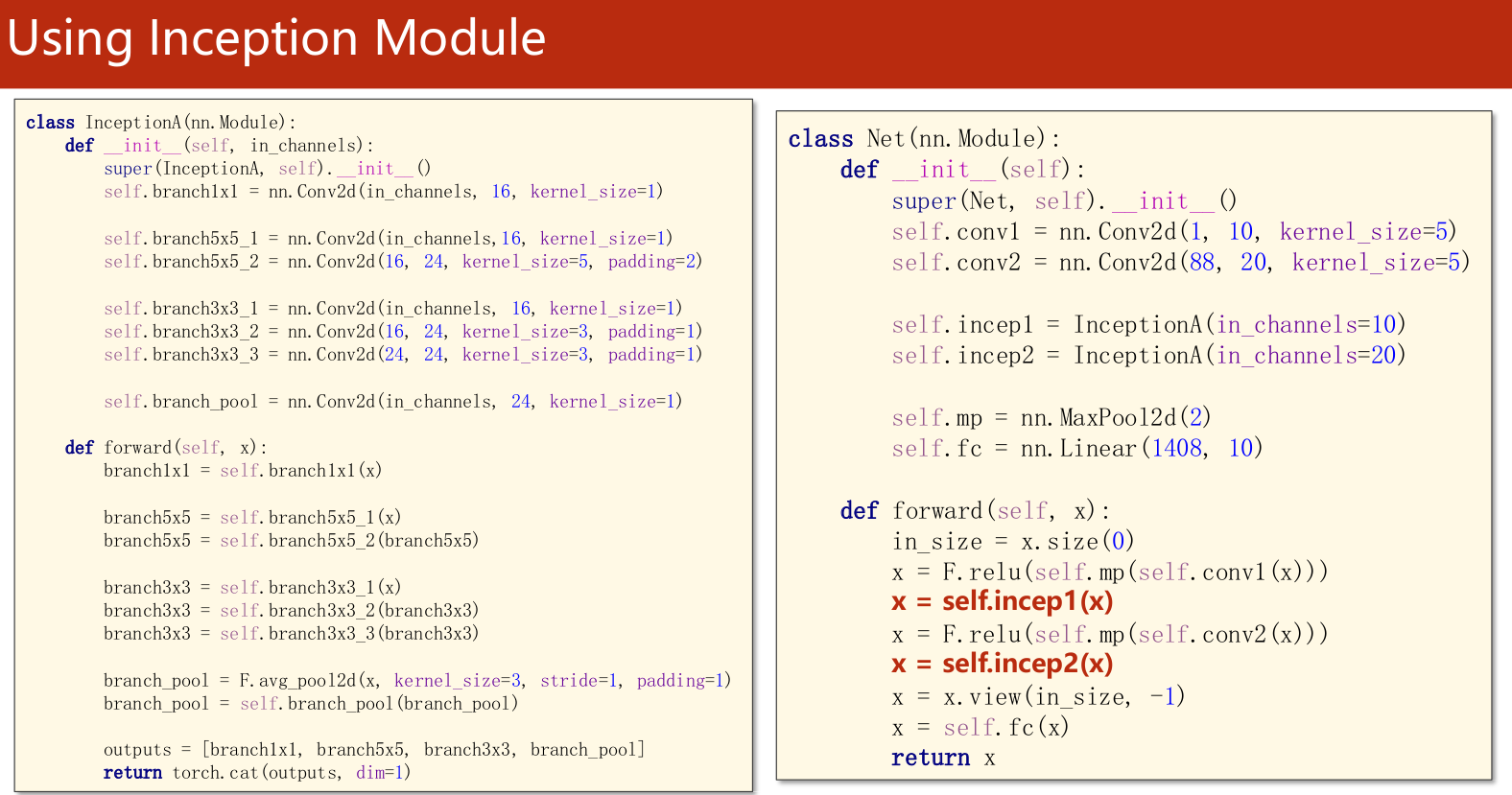

Inception Module code walkthrough

In the figure below, the numbers in parentheses are the output channels.

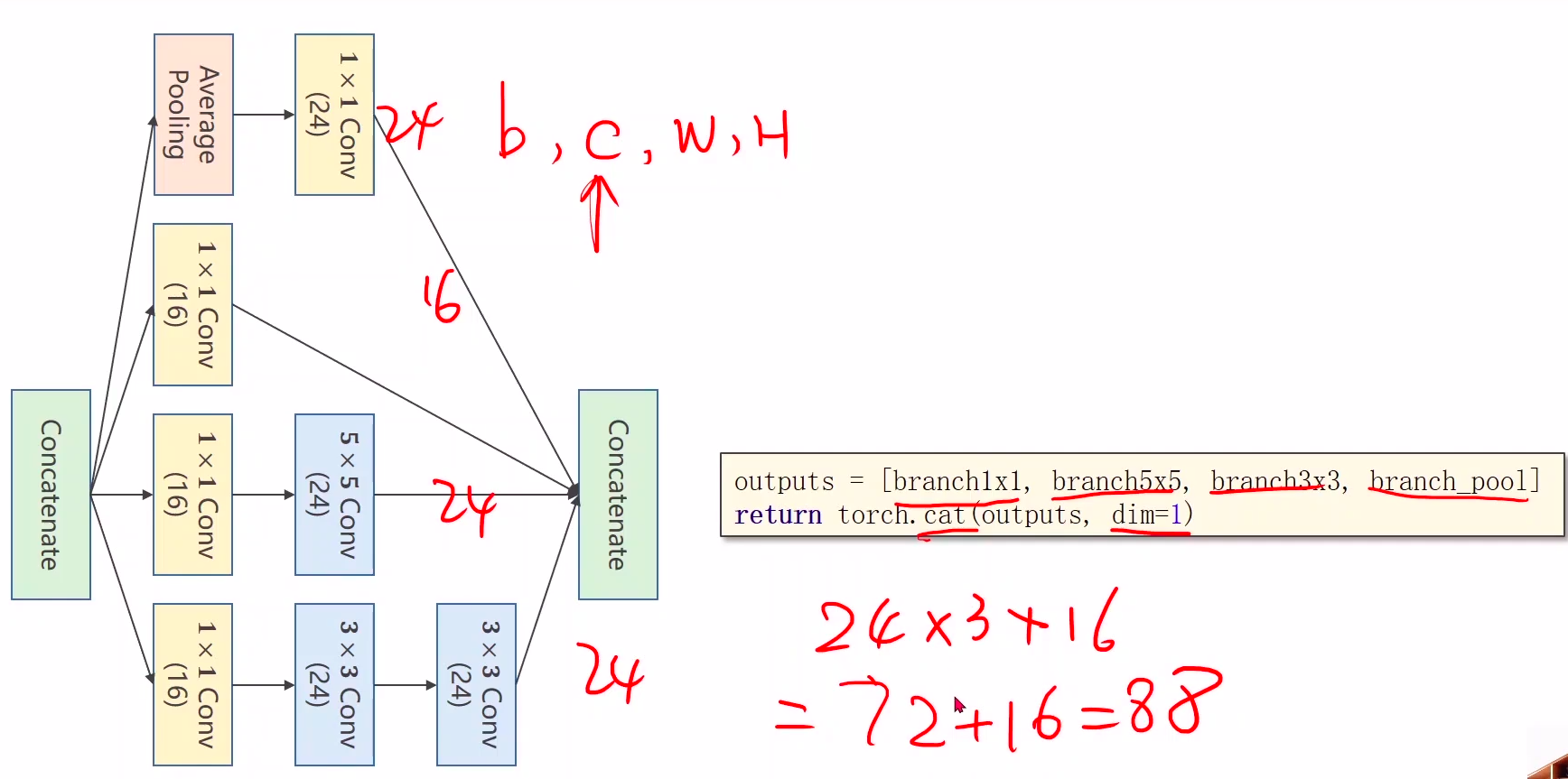

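Concatenating the branches with cat

The branches are concatenated along the channel dimension, dim=1:

    outputs = [branch1x1, branch5x5, branch3x3, branch_pool]
    return torch.cat(outputs, dim=1)

Note that the number of input channels is not hard-coded; it is passed in as in_channels.

The output of every Inception block has 88 channels (16 + 24 + 24 + 24).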
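The channel arithmetic can be checked with dummy tensors. This is a minimal sketch: the batch size and the 12 × 12 spatial size are arbitrary assumptions, and the zero tensors only stand in for the real branch outputs.

```python
import torch

batch, height, width = 2, 12, 12

# Stand-ins for the four branch outputs: same batch, height and width,
# but different channel counts (16, 24, 24, 24).
branch1x1 = torch.zeros(batch, 16, height, width)
branch5x5 = torch.zeros(batch, 24, height, width)
branch3x3 = torch.zeros(batch, 24, height, width)
branch_pool = torch.zeros(batch, 24, height, width)

# Tensors are laid out as (batch, channels, height, width), so dim=1 is channels.
out = torch.cat([branch1x1, branch5x5, branch3x3, branch_pool], dim=1)
print(out.shape)
```

If any branch had a different height or width, `torch.cat` would raise an error, which is why every branch uses padding that preserves the spatial size.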

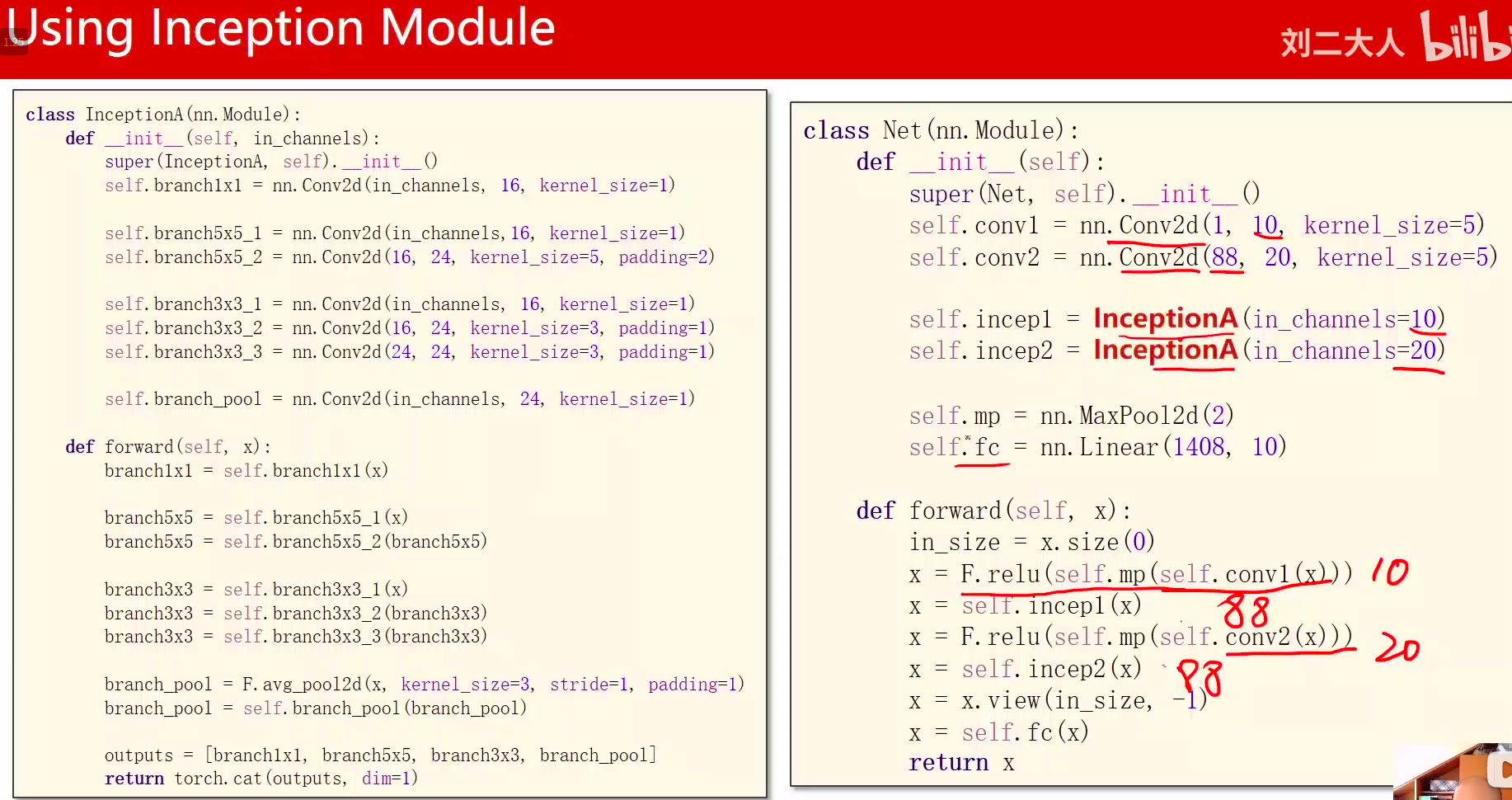

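Implementation

Notes on the code:

1. The network starts with a convolution stage (conv, max pooling, ReLU), then an InceptionA module, then another convolution stage (conv, max pooling, ReLU), then a second InceptionA module, and ends with a fully connected layer (fc).

2. The value 1408 can be found by calling x.shape right after x = x.view(in_size, -1).

1408 = channels × width × height = 88 × 4 × 4

Where the 4 × 4 comes from:

- Input image: 28 × 28

- nn.Conv2d(1, 10, kernel_size=5) shrinks each side by 4: 24 × 24; max pooling halves it: 12 × 12

- First InceptionA: size unchanged, 12 × 12 (88 channels)

- nn.Conv2d(88, 20, kernel_size=5) shrinks each side by 4: 8 × 8; max pooling halves it: 4 × 4

- Second InceptionA: size unchanged, 4 × 4 (88 channels)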
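The 4 × 4 spatial size can be re-derived with the usual output-size formula for convolution and pooling. A minimal sketch in plain Python; `conv_out` is a hypothetical helper, and the layer settings are the ones used in the listing (5 × 5 convolutions without padding, 2 × 2 max pooling, size-preserving Inception blocks).

```python
def conv_out(size, kernel, stride=1, padding=0):
    """Output side length of a square convolution or pooling window."""
    return (size + 2 * padding - kernel) // stride + 1


size = 28                                  # MNIST images are 28x28
size = conv_out(size, kernel=5)            # conv1: 28 -> 24
size = conv_out(size, kernel=2, stride=2)  # max pooling: 24 -> 12
# The first InceptionA keeps 12x12 and outputs 88 channels.
size = conv_out(size, kernel=5)            # conv2: 12 -> 8
size = conv_out(size, kernel=2, stride=2)  # max pooling: 8 -> 4
# The second InceptionA keeps 4x4 and outputs 88 channels.

channels = 16 + 24 + 24 + 24
flat_features = channels * size * size
print(size, channels, flat_features)
```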


    '''
    Description: Inception Module
    Video: https://www.bilibili.com/video/BV1Y7411d7Ys?p=11
    Blogs:
    • https://blog.csdn.net/bit452/article/details/109693790
    • https://blog.csdn.net/weixin_44841652/article/details/105256034
    Author: HCQ
    Company(School): UCAS
    Email: 1756260160@qq.com
    Date: 2020-12-12 11:32:16
    LastEditTime: 2020-12-12 15:24:18
    FilePath: /pytorch/PyTorch深度学习实践/11.1AdvancedCNN-Inception.py
    '''
    """
    1408 = channels * width * height = 88 * 4 * 4
    Where the 4 * 4 comes from:
    input image: 28 x 28
    nn.Conv2d(1, 10, kernel_size=5): 24 x 24, then max pooling: 12 x 12
    first InceptionA: 12 x 12 (size unchanged)
    nn.Conv2d(88, 20, kernel_size=5): 8 x 8, then max pooling: 4 x 4
    second InceptionA: 4 x 4 (size unchanged)
    """
    import torch
    import torch.nn as nn
    from torchvision import transforms
    from torchvision import datasets
    from torch.utils.data import DataLoader
    import torch.nn.functional as F
    import torch.optim as optim

    # prepare dataset
    batch_size = 64
    # normalize with the MNIST mean and standard deviation
    transform = transforms.Compose([transforms.ToTensor(), transforms.Normalize((0.1307,), (0.3081,))])

    train_dataset = datasets.MNIST(root='./data/mnist/', train=True, download=True, transform=transform)
    train_loader = DataLoader(train_dataset, shuffle=True, batch_size=batch_size)
    test_dataset = datasets.MNIST(root='./data/mnist/', train=False, download=True, transform=transform)
    test_loader = DataLoader(test_dataset, shuffle=False, batch_size=batch_size)


    # design model using class
    class InceptionA(nn.Module):
        def __init__(self, in_channels):
            super(InceptionA, self).__init__()
            self.branch1x1 = nn.Conv2d(in_channels, 16, kernel_size=1)

            self.branch5x5_1 = nn.Conv2d(in_channels, 16, kernel_size=1)
            self.branch5x5_2 = nn.Conv2d(16, 24, kernel_size=5, padding=2)

            self.branch3x3_1 = nn.Conv2d(in_channels, 16, kernel_size=1)
            self.branch3x3_2 = nn.Conv2d(16, 24, kernel_size=3, padding=1)
            self.branch3x3_3 = nn.Conv2d(24, 24, kernel_size=3, padding=1)

            self.branch_pool = nn.Conv2d(in_channels, 24, kernel_size=1)

        def forward(self, x):
            branch1x1 = self.branch1x1(x)

            branch5x5 = self.branch5x5_1(x)
            branch5x5 = self.branch5x5_2(branch5x5)

            branch3x3 = self.branch3x3_1(x)
            branch3x3 = self.branch3x3_2(branch3x3)
            branch3x3 = self.branch3x3_3(branch3x3)

            # stride=1 and padding=1 keep width and height unchanged
            branch_pool = F.avg_pool2d(x, kernel_size=3, stride=1, padding=1)
            branch_pool = self.branch_pool(branch_pool)

            outputs = [branch1x1, branch5x5, branch3x3, branch_pool]
            return torch.cat(outputs, dim=1)  # layout is (b, c, w, h); c is dim=1


    class Net(nn.Module):
        def __init__(self):
            super(Net, self).__init__()
            self.conv1 = nn.Conv2d(1, 10, kernel_size=5)
            self.conv2 = nn.Conv2d(88, 20, kernel_size=5)  # 88 = 24x3 + 16

            self.incep1 = InceptionA(in_channels=10)  # matches the 10 output channels of conv1
            self.incep2 = InceptionA(in_channels=20)  # matches the 20 output channels of conv2

            self.mp = nn.MaxPool2d(2)
            self.fc = nn.Linear(1408, 10)  # 1408 = channels*width*height = 88*4*4

        def forward(self, x):
            in_size = x.size(0)  # batch size (64 for a full batch)
            x = F.relu(self.mp(self.conv1(x)))
            x = self.incep1(x)
            x = F.relu(self.mp(self.conv2(x)))
            x = self.incep2(x)
            x = x.view(in_size, -1)  # flatten to (batch, 1408)
            x = self.fc(x)
            return x


    model = Net()

    # construct loss and optimizer
    criterion = torch.nn.CrossEntropyLoss()
    optimizer = optim.SGD(model.parameters(), lr=0.01, momentum=0.5)


    # training cycle: forward, backward, update
    def train(epoch):
        running_loss = 0.0
        for batch_idx, data in enumerate(train_loader, 0):
            inputs, target = data
            optimizer.zero_grad()

            outputs = model(inputs)
            loss = criterion(outputs, target)
            loss.backward()
            optimizer.step()

            running_loss += loss.item()
            if batch_idx % 300 == 299:
                print('[%d, %5d] loss: %.3f' % (epoch+1, batch_idx+1, running_loss/300))
                running_loss = 0.0


    def test():
        correct = 0
        total = 0
        with torch.no_grad():
            for data in test_loader:
                images, labels = data
                outputs = model(images)
                _, predicted = torch.max(outputs.data, dim=1)
                total += labels.size(0)
                correct += (predicted == labels).sum().item()
        print('accuracy on test set: %d %% ' % (100*correct/total))


    if __name__ == '__main__':
        for epoch in range(10):
            train(epoch)
            test()

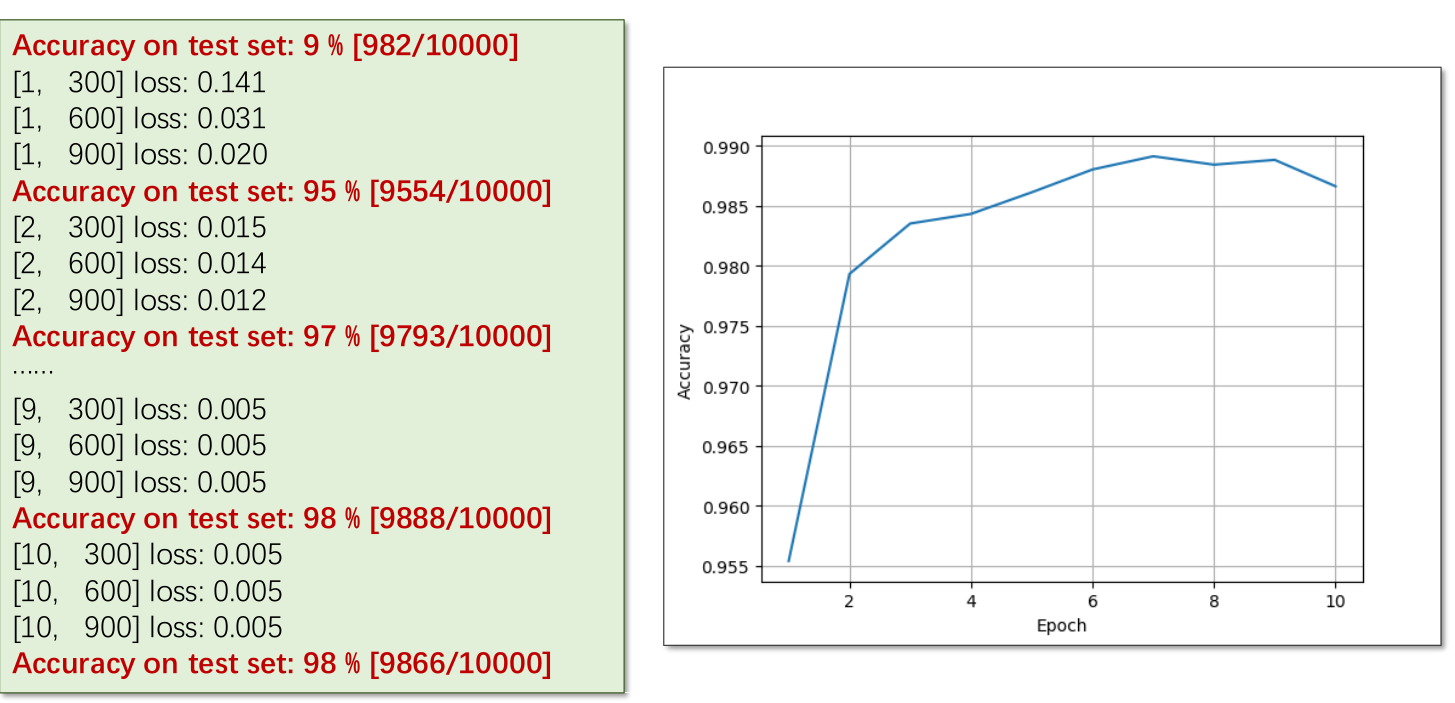

Results

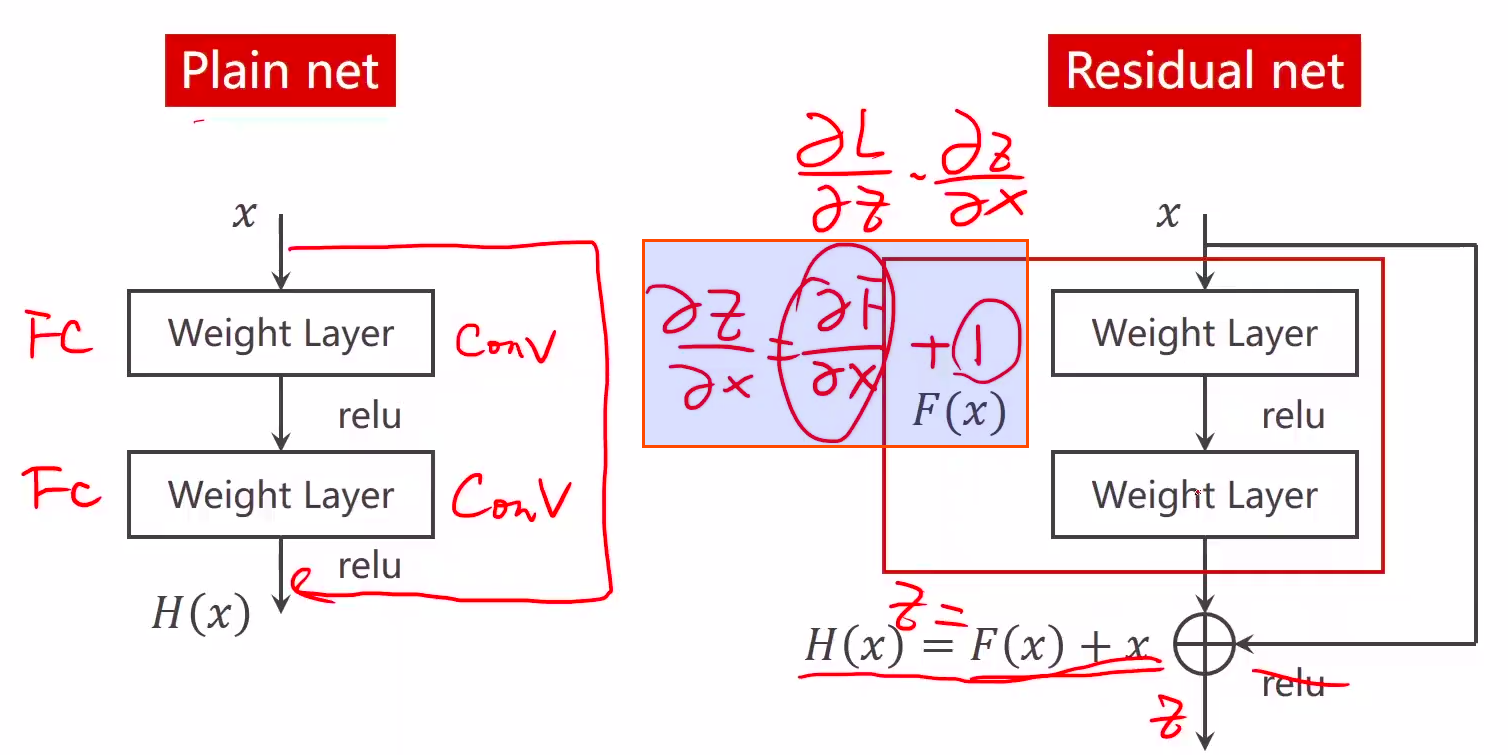

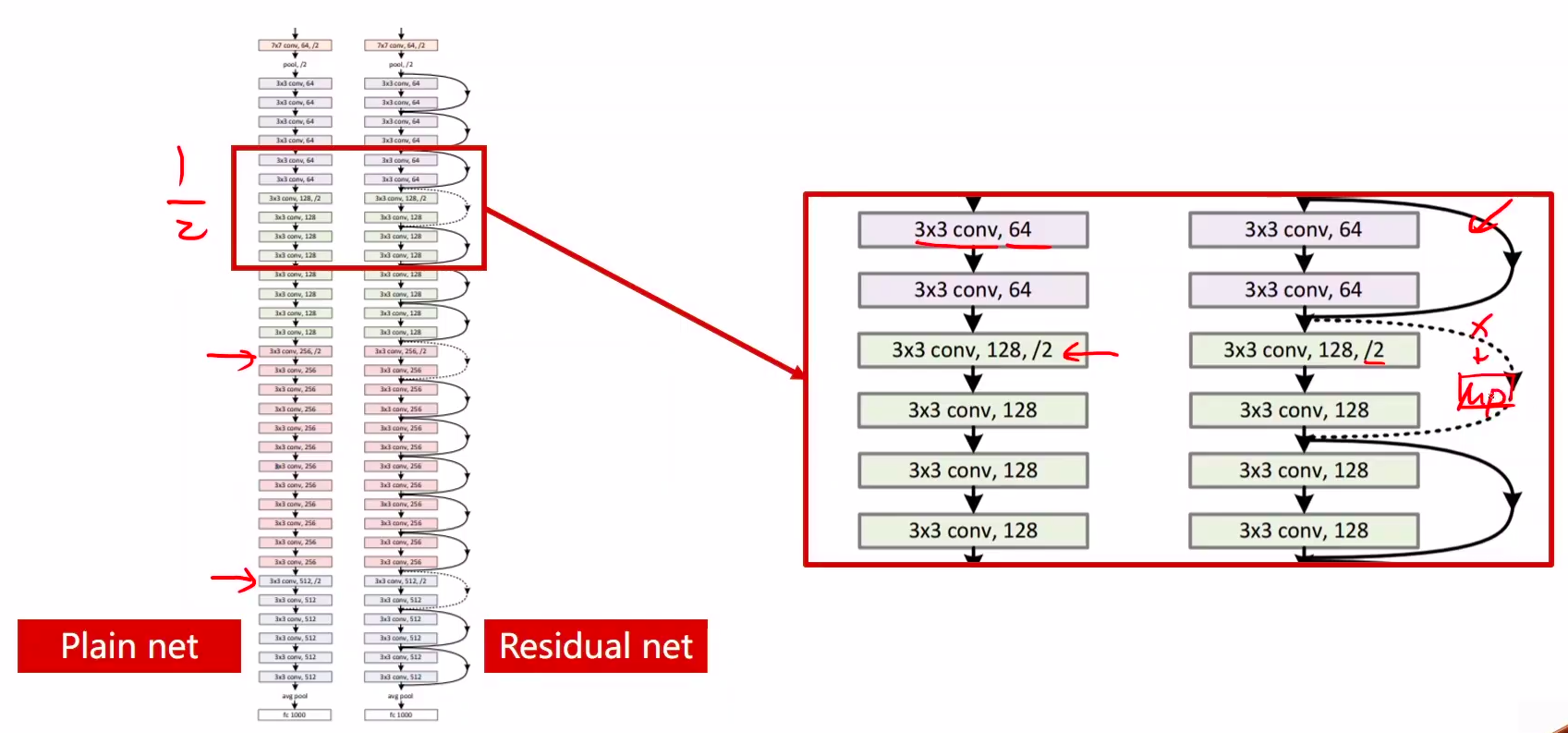

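ResNet

ResNet: introduces residual units, which simplify the learning objective and make it easier to optimize, speed up training, and avoid the degradation problem as the model gets deeper. It effectively mitigates vanishing and exploding gradients during training.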

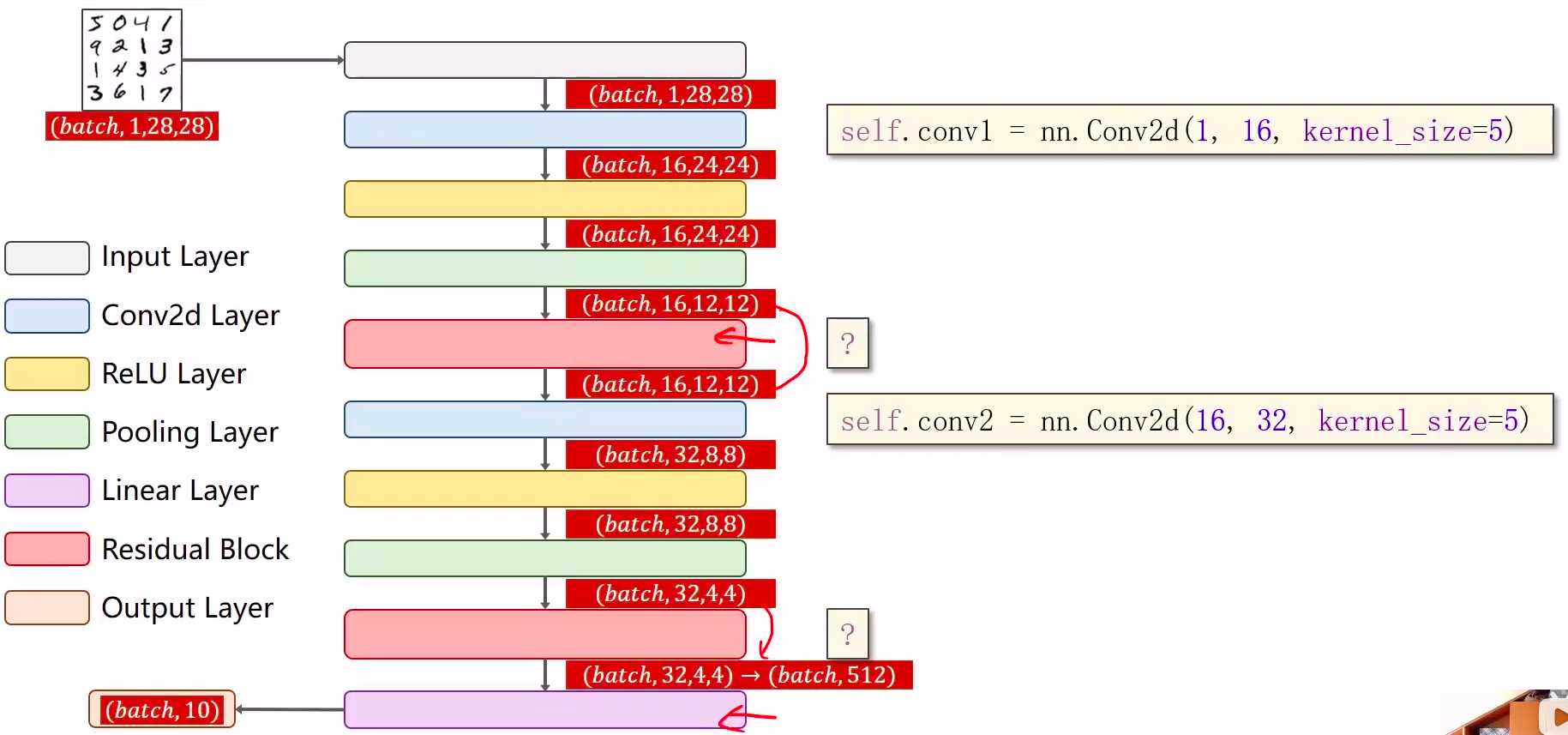

Side note, the BERT-style idea: train multiple tasks in rotation.


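Is deeper always better?

On CIFAR-10, the 20-layer network ends up with a lower loss than the 56-layer one.

The network looks more complex, but its performance is actually worse. A possible cause: vanishing gradients.

Notes

1. The problem to solve: vanishing gradients.

2. Skip connection: H(x) = F(x) + x. The two tensors must have the same shape, and the activation is applied after the addition. Do not pool inside the block, because pooling changes the tensor shape.

With the skip connection, ∂H/∂x = ∂F/∂x + 1, so even when ∂F/∂x is close to 0 the gradient stays around 1.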
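A toy calculation of why the skip connection helps. Assuming, purely for illustration, that every layer has the same small local derivative ∂F/∂x = 0.1, the gradient through a stack of plain layers is the product of those values, while with H(x) = F(x) + x each factor becomes ∂F/∂x + 1. `chain_gradient` is a hypothetical helper, and this scalar model ignores weight matrices and activations.

```python
def chain_gradient(local_grads, residual):
    """Multiply per-layer derivatives as the chain rule does during backprop."""
    grad = 1.0
    for d in local_grads:
        # With a skip connection, dH/dx = dF/dx + 1 instead of dF/dx.
        grad *= (d + 1.0) if residual else d
    return grad


local_grads = [0.1] * 10  # ten layers, each with a small local derivative

plain = chain_gradient(local_grads, residual=False)
skipped = chain_gradient(local_grads, residual=True)
print(plain, skipped)
```

The plain product collapses toward zero after only ten layers, while the residual version stays above 1, which is the effect the note above describes.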

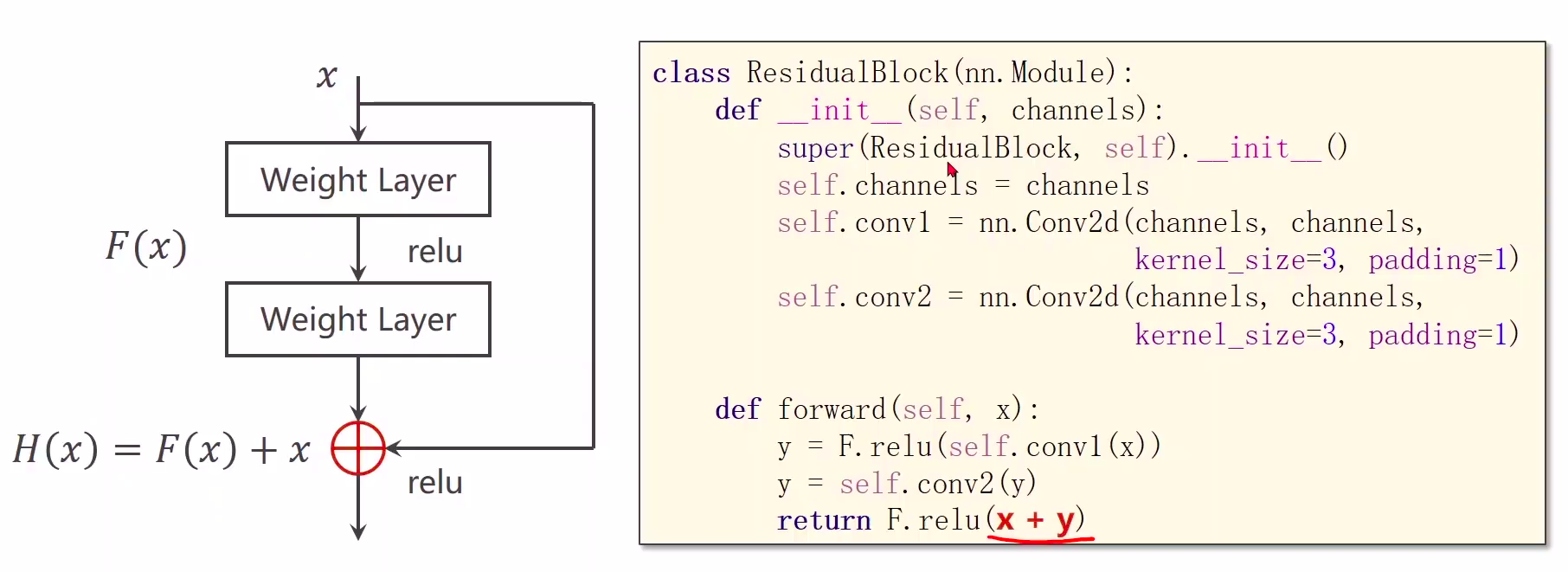

Implementation

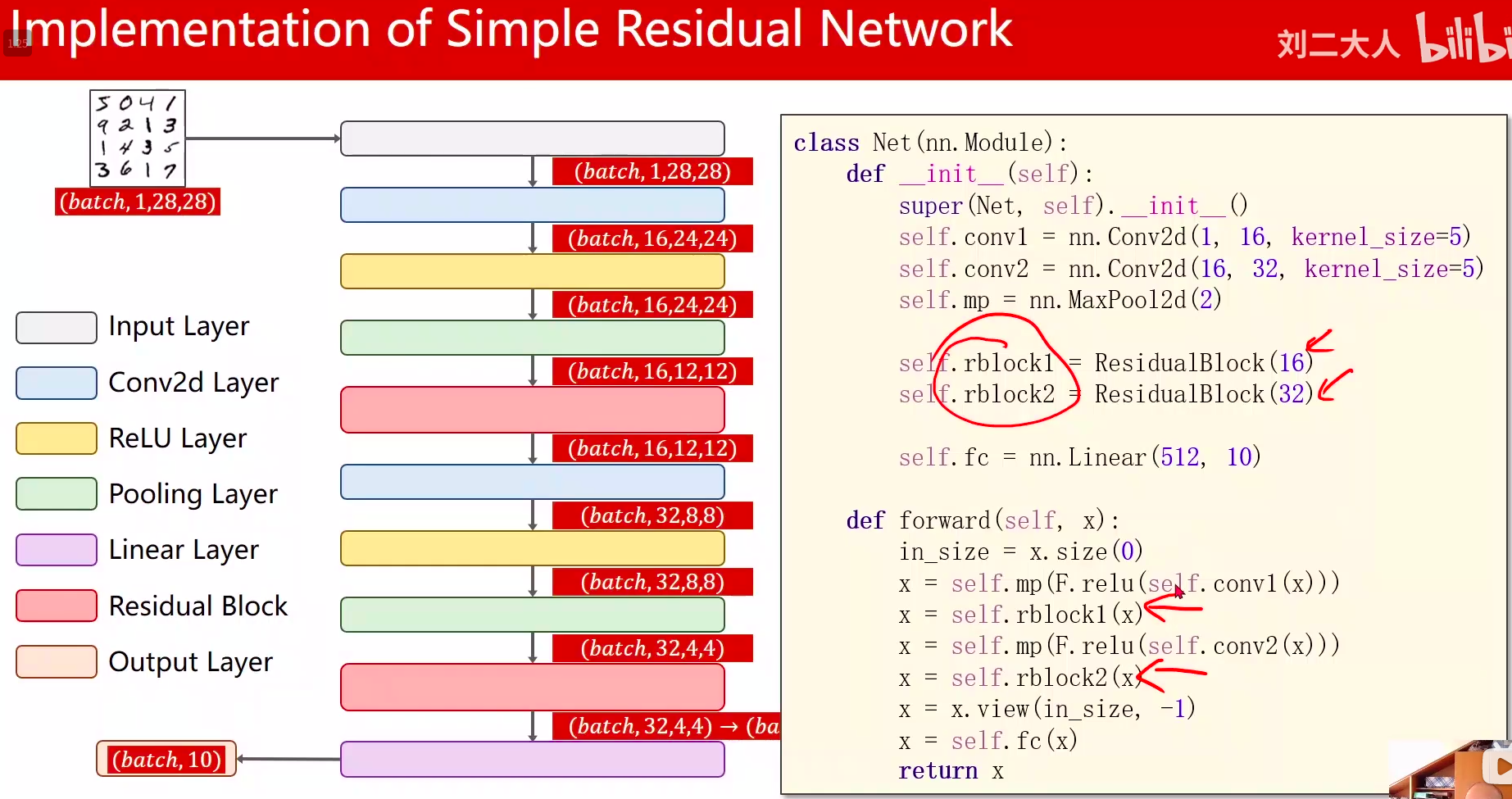

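Notes on the code:

- The network starts with a convolution stage (conv, ReLU, max pooling), then a ResidualBlock, then another convolution stage (conv, ReLU, max pooling), then a second ResidualBlock, and ends with a fully connected layer.

- The output tensor of a ResidualBlock must have the same shape as its input tensor.

The output channels equal the input channels, so the code simply uses one channels value for both.

- Convolution is something that happens within a layer; the residual connection is something that happens between layers.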
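A quick shape check for the point about matching dimensions. The block below mirrors the ResidualBlock used in these notes; the batch size, channel count and 12 × 12 spatial size of the test tensor are arbitrary assumptions.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


class ResidualBlock(nn.Module):
    def __init__(self, channels):
        super().__init__()
        # A 3x3 kernel with padding=1 keeps height and width unchanged,
        # and in/out channels are equal, so x + y is always well defined.
        self.conv1 = nn.Conv2d(channels, channels, kernel_size=3, padding=1)
        self.conv2 = nn.Conv2d(channels, channels, kernel_size=3, padding=1)

    def forward(self, x):
        y = F.relu(self.conv1(x))
        y = self.conv2(y)
        return F.relu(x + y)  # add first, then activate


x = torch.randn(4, 16, 12, 12)
out = ResidualBlock(16)(x)
print(out.shape == x.shape)
```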

Full code

When writing a network, develop incrementally: write one layer, test it, then write the next.
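One way to work incrementally is to push a dummy input through the layers one at a time and print the shape after each step. The sketch below does this for the shape-changing layers of the ResNet-style network only; the residual blocks are left out on the assumption that they preserve shape, and ReLU is omitted because it does not affect shapes. It also yields the input size needed by the final linear layer.

```python
import torch
import torch.nn as nn

# Only the layers that change the tensor shape.
features = nn.Sequential(
    nn.Conv2d(1, 16, kernel_size=5),   # 28x28 -> 24x24
    nn.MaxPool2d(2),                   # 24x24 -> 12x12
    nn.Conv2d(16, 32, kernel_size=5),  # 12x12 -> 8x8
    nn.MaxPool2d(2),                   # 8x8 -> 4x4
)

with torch.no_grad():
    x = torch.zeros(1, 1, 28, 28)  # one dummy MNIST-sized image
    for layer in features:
        x = layer(x)
        print(type(layer).__name__, tuple(x.shape))

# Flatten everything except the batch dimension to get the fc input size.
fc_in_features = x.flatten(1).shape[1]
print(fc_in_features)
```

The same trick works for the Inception network, where it replaces the manual derivation of the flattened feature count.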

    '''
    Description: ResNet
    Video: https://www.bilibili.com/video/BV1Y7411d7Ys?p=11
    Blogs:
    • https://blog.csdn.net/bit452/article/details/109693790
    • https://blog.csdn.net/weixin_44841652/article/details/105256034
    Author: HCQ
    Company(School): UCAS
    Email: 1756260160@qq.com
    Date: 2020-12-12 15:25:16
    LastEditTime: 2020-12-12 15:29:18
    FilePath: /pytorch/PyTorch深度学习实践/11.2AdvancedCNN-ResNet.py
    '''
    import torch
    import torch.nn as nn
    from torchvision import transforms
    from torchvision import datasets
    from torch.utils.data import DataLoader
    import torch.nn.functional as F
    import torch.optim as optim

    # prepare dataset
    batch_size = 64
    # normalize with the MNIST mean and standard deviation
    transform = transforms.Compose([transforms.ToTensor(), transforms.Normalize((0.1307,), (0.3081,))])

    train_dataset = datasets.MNIST(root='./data/mnist/', train=True, download=True, transform=transform)
    train_loader = DataLoader(train_dataset, shuffle=True, batch_size=batch_size)
    test_dataset = datasets.MNIST(root='./data/mnist/', train=False, download=True, transform=transform)
    test_loader = DataLoader(test_dataset, shuffle=False, batch_size=batch_size)


    # design model using class
    class ResidualBlock(nn.Module):
        def __init__(self, channels):
            super(ResidualBlock, self).__init__()
            self.channels = channels
            # same in/out channels and padding=1, so the output shape equals the input shape
            self.conv1 = nn.Conv2d(channels, channels, kernel_size=3, padding=1)
            self.conv2 = nn.Conv2d(channels, channels, kernel_size=3, padding=1)

        def forward(self, x):
            y = F.relu(self.conv1(x))
            y = self.conv2(y)
            return F.relu(x + y)  # add the skip connection, then activate


    class Net(nn.Module):
        def __init__(self):
            super(Net, self).__init__()
            self.conv1 = nn.Conv2d(1, 16, kernel_size=5)
            self.conv2 = nn.Conv2d(16, 32, kernel_size=5)

            self.rblock1 = ResidualBlock(16)
            self.rblock2 = ResidualBlock(32)

            self.mp = nn.MaxPool2d(2)
            self.fc = nn.Linear(512, 10)  # 512 = channels*width*height = 32*4*4

        def forward(self, x):
            in_size = x.size(0)  # batch size
            x = self.mp(F.relu(self.conv1(x)))
            x = self.rblock1(x)
            x = self.mp(F.relu(self.conv2(x)))
            x = self.rblock2(x)
            x = x.view(in_size, -1)  # flatten to (batch, 512)
            x = self.fc(x)
            return x


    model = Net()

    # construct loss and optimizer
    criterion = torch.nn.CrossEntropyLoss()
    optimizer = optim.SGD(model.parameters(), lr=0.01, momentum=0.5)


    # training cycle: forward, backward, update
    def train(epoch):
        running_loss = 0.0
        for batch_idx, data in enumerate(train_loader, 0):
            inputs, target = data
            optimizer.zero_grad()

            outputs = model(inputs)
            loss = criterion(outputs, target)
            loss.backward()
            optimizer.step()

            running_loss += loss.item()
            if batch_idx % 300 == 299:
                print('[%d, %5d] loss: %.3f' % (epoch+1, batch_idx+1, running_loss/300))
                running_loss = 0.0


    def test():
        correct = 0
        total = 0
        with torch.no_grad():
            for data in test_loader:
                images, labels = data
                outputs = model(images)
                _, predicted = torch.max(outputs.data, dim=1)
                total += labels.size(0)
                correct += (predicted == labels).sum().item()
        print('accuracy on test set: %d %% ' % (100*correct/total))


    if __name__ == '__main__':
        for epoch in range(10):
            train(epoch)
            test()

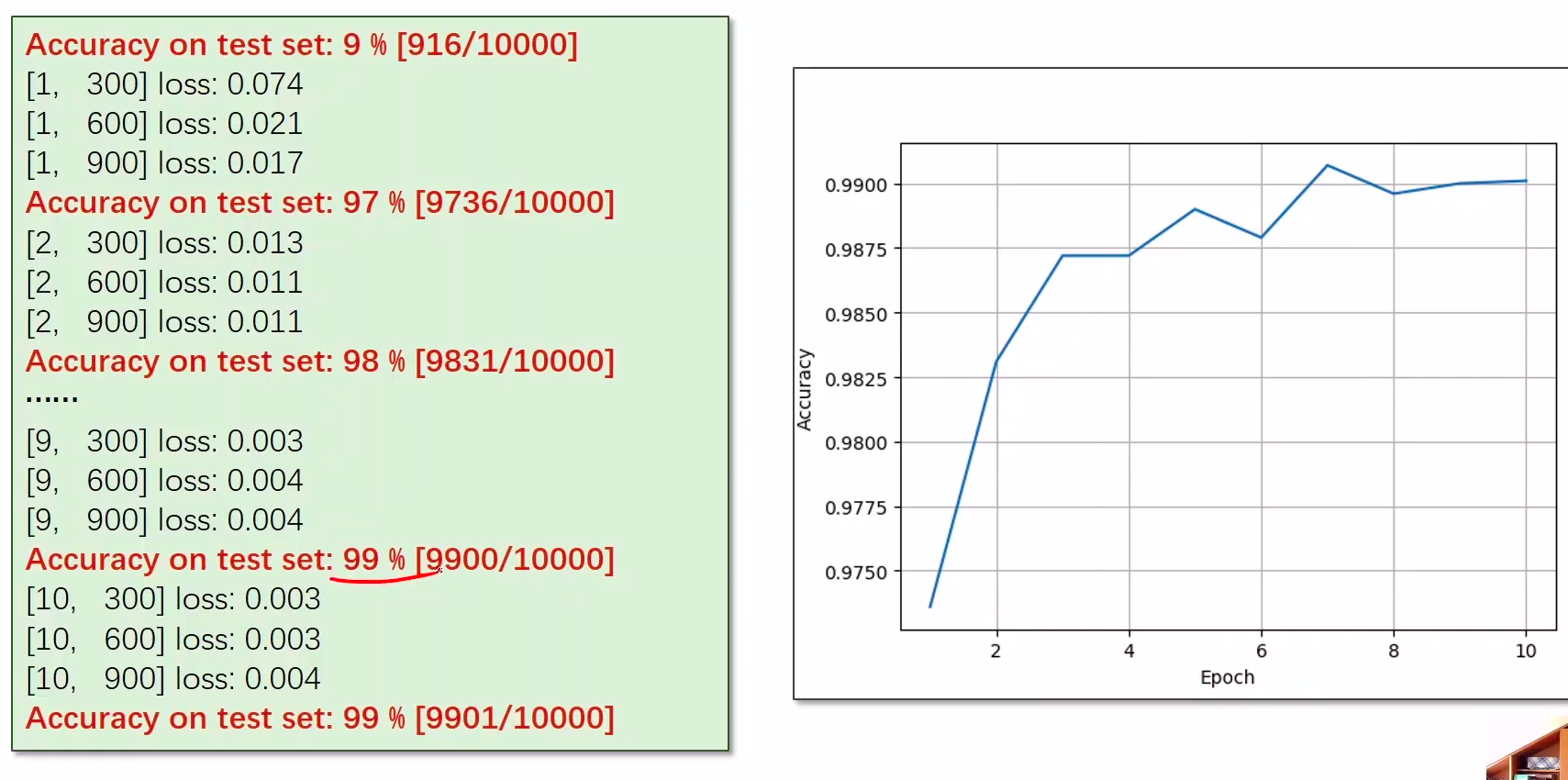

Results

Exercises

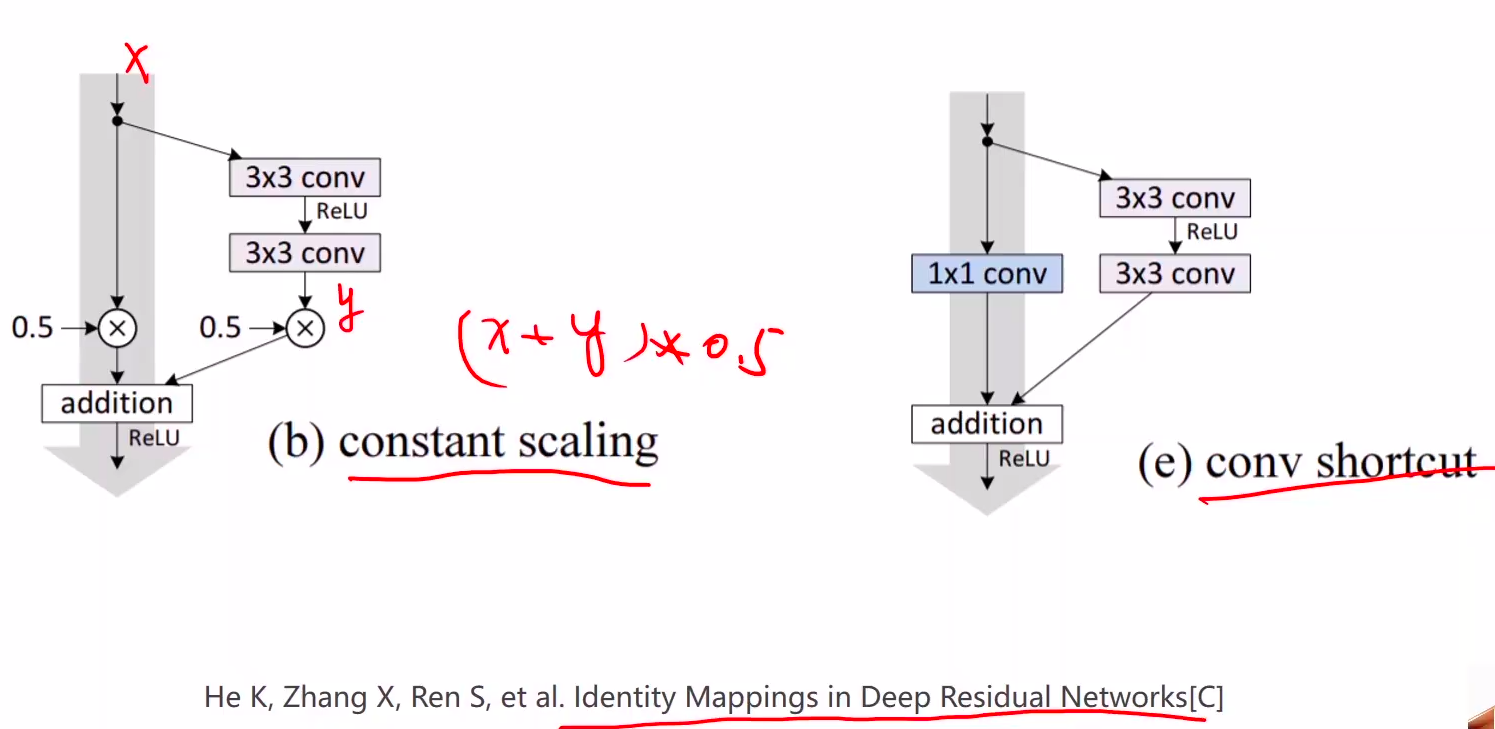

He K, Zhang X, Ren S, et al. Identity Mappings in Deep Residual Networks[C]

More residual-related designs

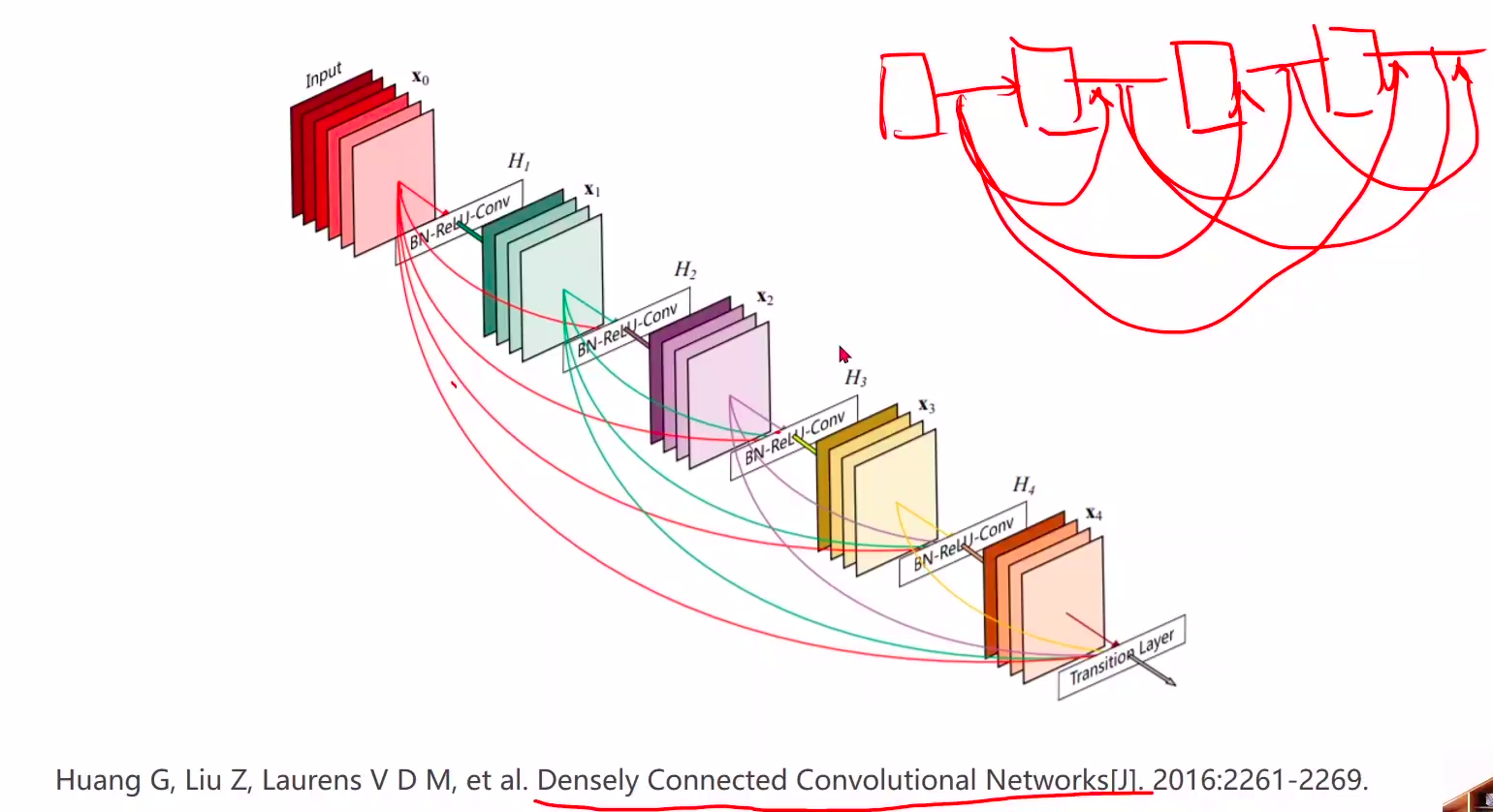

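Huang G, Liu Z, van der Maaten L, et al. Densely Connected Convolutional Networks[J]. 2016:2261-2269.

DenseNet: dense connections. They strengthen feature propagation, encourage feature reuse, and greatly reduce the number of parameters.

Compared with ResNet, it has many more skip connections.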
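As a rough illustration of dense connectivity, the sketch below feeds each layer the concatenation of all earlier feature maps. `TinyDenseBlock` is a hypothetical, heavily simplified block (no batch normalization, no bottleneck or transition layers), and the channel counts are arbitrary assumptions; it is not the architecture from the paper.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


class TinyDenseBlock(nn.Module):
    """Simplified dense block: every layer sees all previous feature maps."""

    def __init__(self, in_channels, growth_rate, num_layers):
        super().__init__()
        # Layer i receives the block input plus the outputs of the i earlier layers.
        self.layers = nn.ModuleList([
            nn.Conv2d(in_channels + i * growth_rate, growth_rate,
                      kernel_size=3, padding=1)
            for i in range(num_layers)
        ])

    def forward(self, x):
        features = [x]
        for conv in self.layers:
            # Concatenate along channels instead of adding, unlike ResNet.
            new_features = F.relu(conv(torch.cat(features, dim=1)))
            features.append(new_features)
        return torch.cat(features, dim=1)


block = TinyDenseBlock(in_channels=16, growth_rate=8, num_layers=3)
out = block(torch.randn(2, 16, 12, 12))
print(out.shape)
```

Each layer only adds `growth_rate` new channels, so the output here has 16 + 3 × 8 = 40 channels; reusing earlier features this way is what lets each layer stay narrow.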
