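Starting with v1.8, resource usage metrics can be obtained through the Metrics API, which is served by the Metrics Server component. Metrics Server replaces the earlier Heapster, which began to be deprecated in Kubernetes 1.11.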

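Metrics Server is the aggregator of the cluster's core monitoring data. Since Kubernetes 1.8 it is deployed by default as a Deployment in clusters created by the kube-up.sh script. Clusters set up any other way need to install it separately, or check with their cloud provider.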

Metrics API

Before introducing Metrics Server, we need to cover the concept of the Metrics API.

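The Metrics API takes a different approach from the earlier collection method (Heapster). The Kubernetes project wants core metrics to be stable and versioned. Like any other Kubernetes API, they should be directly accessible to users (for example through the kubectl top command) and to controllers in the cluster (such as the HPA).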

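Heapster was deprecated precisely so that core resource metrics could be treated as first-class citizens. They are accessed directly through the API server or a client, just like Pods and Services, instead of being aggregated by a separately installed and separately managed Heapster.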
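Because the Metrics API is served through the API server like any other API group, it can be queried with a raw request. The sketch below only builds the request paths. It assumes the metrics.k8s.io/v1beta1 group version (the one registered later in this walkthrough), and metrics_api_path is a hypothetical helper name.

```shell
# Hypothetical helper: build the raw Metrics API path for node or pod metrics.
# Assumes the metrics.k8s.io/v1beta1 API group registered by Metrics Server.
metrics_api_path() {
  local kind="$1" ns="${2:-}"
  local base="/apis/metrics.k8s.io/v1beta1"
  case "$kind" in
    nodes) echo "$base/nodes" ;;
    pods)
      if [ -n "$ns" ]; then
        echo "$base/namespaces/$ns/pods"   # pods in one namespace
      else
        echo "$base/pods"                  # pods across all namespaces
      fi
      ;;
    *) echo "unsupported kind: $kind" >&2; return 1 ;;
  esac
}
```

Once Metrics Server is running, a call such as kubectl get --raw "$(metrics_api_path nodes)" returns the same node data that kubectl top reads, as JSON.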

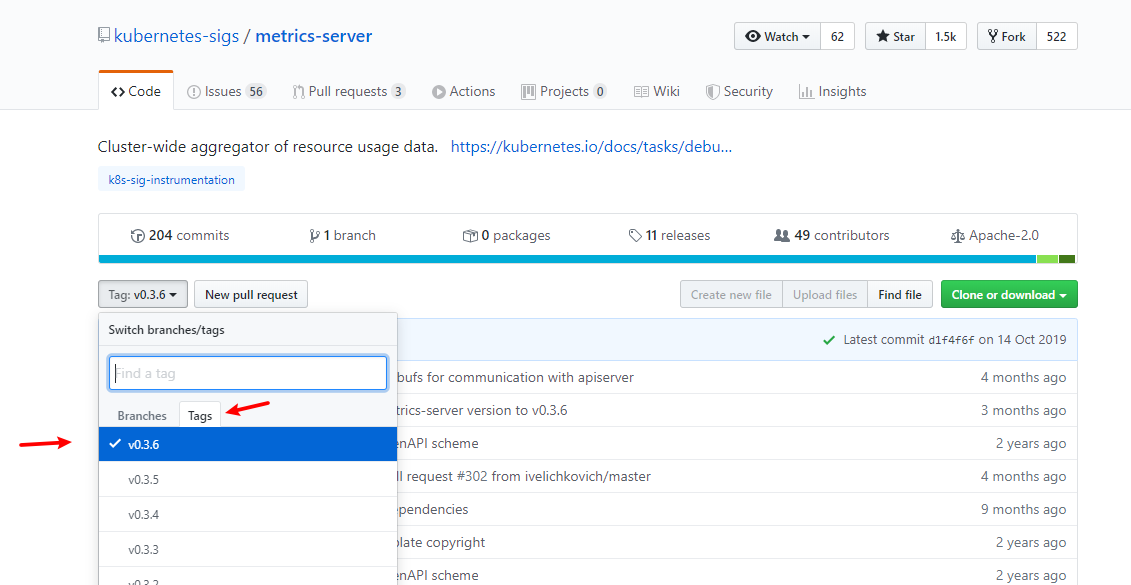

Official GitHub repository:

https://github.com/kubernetes-sigs/metrics-server

Deploying Metrics Server

This walkthrough uses Metrics Server v0.3.6. Unpack the source archive downloaded from the repository above:

unzip metrics-server-0.3.6.zip

Editing the YAML

[root@master01 ~]# cd metrics-server-0.3.6/deploy/1.8+/

[root@master01 1.8+]# ll

total 28

-rw-r--r-- 1 root root 393 Oct 14 20:42 aggregated-metrics-reader.yaml

-rw-r--r-- 1 root root 308 Oct 14 20:42 auth-delegator.yaml

-rw-r--r-- 1 root root 329 Oct 14 20:42 auth-reader.yaml

-rw-r--r-- 1 root root 298 Oct 14 20:42 metrics-apiservice.yaml

-rw-r--r-- 1 root root 804 Oct 14 20:42 metrics-server-deployment.yaml

-rw-r--r-- 1 root root 291 Oct 14 20:42 metrics-server-service.yaml

-rw-r--r-- 1 root root 517 Oct 14 20:42 resource-reader.yaml

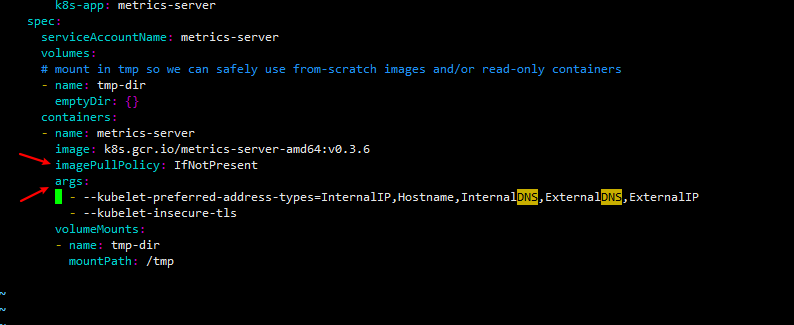

vim metrics-server-deployment.yaml

...

...

...

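image: k8s.gcr.io/metrics-server-amd64:v0.3.6
imagePullPolicy: IfNotPresent
args:
  - --kubelet-preferred-address-types=InternalIP,Hostname,InternalDNS,ExternalDNS,ExternalIP
  - --kubelet-insecure-tls

Add these two flags to the container's args and keep any entries already listed there.

- --kubelet-preferred-address-types makes Metrics Server connect to each kubelet using the first address type in the list that the node reports (here InternalIP). This avoids failures when node hostnames cannot be resolved from inside the Pod.
- --kubelet-insecure-tls skips verification of the kubelets' serving certificates. This is convenient in test clusters whose kubelets use self-signed certificates, but it is not recommended for production.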
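Before applying the manifest, it can help to confirm that both flags actually made it into the file. The following is a minimal sketch that assumes the flags appear verbatim in the YAML. has_kubelet_args is a hypothetical helper name.

```shell
# Hypothetical helper: succeed only if both kubelet-related flags are present
# in the given manifest file. "--" stops grep from treating the pattern as an option.
has_kubelet_args() {
  local file="$1"
  grep -q -- '--kubelet-preferred-address-types=' "$file" &&
    grep -q -- '--kubelet-insecure-tls' "$file"
}
```

Usage: has_kubelet_args metrics-server-deployment.yaml && echo "flags present".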

Downloading the image

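Baidu Netdisk link: https://pan.baidu.com/s/1wnKCpBcY2YNKKTPwSnVW0g (extraction code: agnv)

Alternatively, pull the image from the Aliyun mirror and retag it with the k8s.gcr.io name used in the manifest: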

docker pull registry.aliyuncs.com/k8sxio/metrics-server-amd64:v0.3.6

docker tag registry.aliyuncs.com/k8sxio/metrics-server-amd64:v0.3.6 k8s.gcr.io/metrics-server-amd64:v0.3.6
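The pull-and-retag step generalizes to any image whose last path component (name:tag) is the same on the mirror and on k8s.gcr.io. The sketch below assumes that holds for the image in question. gcr_name_for and pull_and_retag are hypothetical helper names.

```shell
# Hypothetical helper: map a mirror image reference to the k8s.gcr.io name
# by keeping only the last path component (name:tag).
gcr_name_for() {
  local src="$1"
  echo "k8s.gcr.io/${src##*/}"
}

# Pull from the mirror and retag. With imagePullPolicy: IfNotPresent a local
# image with the expected name is reused, so do this on every node that may
# run the Metrics Server Pod.
pull_and_retag() {
  local src="$1"
  docker pull "$src" && docker tag "$src" "$(gcr_name_for "$src")"
}
```

Usage: pull_and_retag registry.aliyuncs.com/k8sxio/metrics-server-amd64:v0.3.6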

Applying the manifests

[root@master01 1.8+]# pwd

/root/metrics-server-0.3.6/deploy/1.8+

[root@master01 1.8+]# kubectl apply -f .

clusterrole.rbac.authorization.k8s.io/system:aggregated-metrics-reader created

clusterrolebinding.rbac.authorization.k8s.io/metrics-server:system:auth-delegator created

rolebinding.rbac.authorization.k8s.io/metrics-server-auth-reader created

apiservice.apiregistration.k8s.io/v1beta1.metrics.k8s.io created

serviceaccount/metrics-server created

deployment.apps/metrics-server created

service/metrics-server created

clusterrole.rbac.authorization.k8s.io/system:metrics-server created

clusterrolebinding.rbac.authorization.k8s.io/system:metrics-server created

[root@master01 1.8+]# kubectl get pods -n kube-system | grep metrics

metrics-server-54fff4c8bb-6j5pk 1/1 Running 0 102s

[root@master01 1.8+]# kubectl get apiservices.apiregistration.k8s.io

NAME SERVICE AVAILABLE AGE

v1. Local True 8d

v1.admissionregistration.k8s.io Local True 8d

v1.apiextensions.k8s.io Local True 8d

v1.apps Local True 8d

v1.authentication.k8s.io Local True 8d

v1.authorization.k8s.io Local True 8d

v1.autoscaling Local True 8d

v1.batch Local True 8d

v1.coordination.k8s.io Local True 8d

v1.crd.projectcalico.org Local True 43m

v1.networking.k8s.io Local True 8d

v1.rbac.authorization.k8s.io Local True 8d

v1.scheduling.k8s.io Local True 8d

v1.storage.k8s.io Local True 8d

v1alpha1.settings.k8s.io Local True 8d

v1beta1.admissionregistration.k8s.io Local True 8d

v1beta1.apiextensions.k8s.io Local True 8d

v1beta1.authentication.k8s.io Local True 8d

v1beta1.authorization.k8s.io Local True 8d

v1beta1.batch Local True 8d

v1beta1.certificates.k8s.io Local True 8d

v1beta1.coordination.k8s.io Local True 8d

v1beta1.events.k8s.io Local True 8d

v1beta1.extensions Local True 8d

v1beta1.metrics.k8s.io kube-system/metrics-server True 14m

v1beta1.networking.k8s.io Local True 8d

v1beta1.node.k8s.io Local True 8d

v1beta1.policy Local True 8d

v1beta1.rbac.authorization.k8s.io Local True 8d

v1beta1.scheduling.k8s.io Local True 8d

v1beta1.storage.k8s.io Local True 8d

v2beta1.autoscaling Local True 8d

v2beta2.autoscaling Local True 8d
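In the long list above, the line that matters is v1beta1.metrics.k8s.io, whose AVAILABLE column must be True. You can query it directly with kubectl get apiservice v1beta1.metrics.k8s.io. For scripts, the sketch below extracts that column from the full listing. It assumes the NAME SERVICE AVAILABLE AGE layout shown above, and metrics_apiservice_available is a hypothetical name.

```shell
# Hypothetical helper: read `kubectl get apiservices` output on stdin and print
# the AVAILABLE column of the Metrics API registration (prints nothing if absent).
metrics_apiservice_available() {
  awk '$1 == "v1beta1.metrics.k8s.io" { print $3 }'
}
```

Usage: kubectl get apiservices | metrics_apiservice_available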

Checking resource usage

[root@master01 1.8+]# kubectl top nodes

NAME CPU(cores) CPU% MEMORY(bytes) MEMORY%

master01 255m 12% 874Mi 23%

master02 217m 10% 877Mi 23%

master03 187m 9% 815Mi 22%

node01 148m 7% 489Mi 13%

node02 116m 5% 468Mi 12%

node03 115m 5% 457Mi 12%
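kubectl top prints plain columns, so cluster-wide totals can be computed with ordinary text tools. This sketch sums the CPU and memory columns of kubectl top nodes. It assumes CPU is shown in millicores (m) or whole cores and memory in Mi or Gi. sum_top_nodes is a hypothetical name.

```shell
# Hypothetical helper: sum CPU (millicores) and memory (MiB) across all rows of
# `kubectl top nodes` output read from stdin. Skips the header row and blank lines.
sum_top_nodes() {
  awk 'NR > 1 && NF >= 5 {
    cpu = $2; mem = $4
    if (cpu ~ /m$/) { sub(/m$/, "", cpu); c += cpu } else { c += cpu * 1000 }
    if (mem ~ /Gi$/) { sub(/Gi$/, "", mem); m += mem * 1024 } else { sub(/Mi$/, "", mem); m += mem }
  }
  END { printf "%dm %dMi\n", c, m }'
}
```

For the six nodes listed above, kubectl top nodes | sum_top_nodes prints 1038m 3980Mi.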

[root@master01 1.8+]# kubectl top pod --all-namespaces

NAMESPACE NAME CPU(cores) MEMORY(bytes)

kube-system calico-kube-controllers-648f4868b8-pzg2d 2m 13Mi

kube-system calico-node-d4hqw 24m 48Mi

kube-system calico-node-glmcl 24m 48Mi

kube-system calico-node-qm8zz 27m 48Mi

kube-system calico-node-s64r9 25m 48Mi

kube-system calico-node-shxhv 25m 48Mi

kube-system calico-node-zx7nw 25m 48Mi

kube-system coredns-7b8f8b6cf6-gqp8s 3m 10Mi

kube-system coredns-7b8f8b6cf6-mkxdv 3m 10Mi

kube-system etcd-master01 42m 102Mi

kube-system etcd-master02 30m 80Mi

kube-system etcd-master03 33m 87Mi

kube-system kube-apiserver-master01 25m 249Mi

kube-system kube-apiserver-master02 43m 336Mi

kube-system kube-apiserver-master03 28m 267Mi

kube-system kube-controller-manager-master01 12m 40Mi

kube-system kube-controller-manager-master02 1m 14Mi

kube-system kube-controller-manager-master03 1m 12Mi

kube-system kube-proxy-2zbx4 6m 15Mi

kube-system kube-proxy-bbvqk 5m 14Mi

kube-system kube-proxy-j8899 6m 15Mi

kube-system kube-proxy-khrw5 7m 17Mi

kube-system kube-proxy-srpz9 7m 15Mi

kube-system kube-proxy-tz24q 4m 15Mi

kube-system kube-scheduler-master01 2m 13Mi

kube-system kube-scheduler-master02 1m 13Mi

kube-system kube-scheduler-master03 2m 15Mi

kube-system metrics-server-54fff4c8bb-6j5pk 1m 12Mi
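The same kind of post-processing can rank Pods. This sketch lists the N Pods using the most memory. It assumes the four-column --all-namespaces layout shown above and memory values in Mi or Gi. top_mem_pods is a hypothetical name.

```shell
# Hypothetical helper: print the N Pods with the highest memory usage from
# `kubectl top pod --all-namespaces` output on stdin, as "<MiB>Mi namespace/name".
top_mem_pods() {
  local n="${1:-5}"
  awk 'NR > 1 && NF >= 4 {
    mem = $4
    if (mem ~ /Gi$/) { sub(/Gi$/, "", mem); mem = mem * 1024 }
    else { sub(/Mi$/, "", mem) }
    print mem "Mi", $1 "/" $2
  }' | sort -rn | head -n "$n"
}
```

Usage: kubectl top pod --all-namespaces | top_mem_pods 3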

HPA (Horizontal Pod Autoscaler)

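kubectl api-versions shows three autoscaling API versions available at the moment:

- autoscaling/v1 #scales the replica count based on CPU only

- autoscaling/v2beta1 #can also scale on memory, connection counts, and user-defined custom metrics

- autoscaling/v2beta2 #same as v2beta1, with minor changes (for example, the metric target syntax)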
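A script that creates HPAs can check which of these versions the cluster actually serves. This sketch reads kubectl api-versions output and picks the newest of the three versions listed above. It does not know about any later versions, and pick_hpa_version is a hypothetical name.

```shell
# Hypothetical helper: read `kubectl api-versions` output on stdin and print the
# newest of the three autoscaling versions named above; fail if none is served.
pick_hpa_version() {
  local versions v
  versions=$(cat)
  for v in autoscaling/v2beta2 autoscaling/v2beta1 autoscaling/v1; do
    if grep -qx -- "$v" <<< "$versions"; then
      echo "$v"
      return 0
    fi
  done
  return 1
}
```

Usage: kubectl api-versions | pick_hpa_version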

Deploying the HPA

Service and Deployment manifest

cat > hpa-deploy.yaml << EOF
apiVersion: v1
kind: Service
metadata:
  name: svc-hpa
  namespace: default
spec:
  selector:
    app: myapp
  type: NodePort
  ports:
  - name: http
    port: 80
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: myapp-hpa
  namespace: default
spec:
  replicas: 1
  selector:
    matchLabels:
      app: myapp
  template:
    metadata:
      name: myapp-demo
      namespace: default
      labels:
        app: myapp
    spec:
      containers:
      - name: myapp
        image: ikubernetes/myapp:v1
        imagePullPolicy: IfNotPresent
        ports:
        - name: http
          containerPort: 80
        resources:
          requests:
            cpu: 50m
            memory: 50Mi
          limits:
            cpu: 50m
            memory: 50Mi
EOF

kubectl apply -f hpa-deploy.yaml
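The resource requests in this Deployment matter for the HPA. A utilization target is a percentage of the container's request. With requests of 50m CPU and 50Mi memory, a 50% target therefore corresponds to about 25m CPU or 25Mi memory per Pod on average. The sketch below does that arithmetic. It is simplified: it supports only m or plain-core CPU values and whole Mi/Gi memory values, and it truncates to an integer percentage. The function names are hypothetical.

```shell
# Convert a CPU quantity ("50m" or "0.5") to integer millicores.
cpu_millicores() {
  local q="$1"
  case "$q" in
    *m) echo "${q%m}" ;;
    *)  awk -v c="$q" 'BEGIN { printf "%d\n", c * 1000 + 0.5 }' ;;
  esac
}

# Convert a memory quantity ("50Mi" or "1Gi", whole numbers only) to MiB.
mem_mib() {
  local q="$1"
  case "$q" in
    *Mi) echo "${q%Mi}" ;;
    *Gi) echo $(( ${q%Gi} * 1024 )) ;;
    *) echo "unsupported unit: $q" >&2; return 1 ;;
  esac
}

# Utilization as an integer percentage of the request: usage * 100 / request.
utilization_pct() {
  local usage="$1" request="$2"
  echo $(( usage * 100 / request ))
}
```

For example, utilization_pct "$(cpu_millicores 25m)" "$(cpu_millicores 50m)" gives 50, exactly the CPU target set in the HPA manifest.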

HPA manifest

cat > hpa-myapp.yaml << EOF
apiVersion: autoscaling/v2beta1
kind: HorizontalPodAutoscaler
metadata:
  name: myapp-hpa-v2
  namespace: default
spec:
  minReplicas: 1  ##at least 1 replica
  maxReplicas: 8  ##at most 8 replicas
  scaleTargetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: myapp-hpa
  metrics:
  - type: Resource
    resource:
      name: cpu
      targetAverageUtilization: 50  ##scales on utilization (percent of the request); use targetAverageValue to scale on absolute usage instead
  - type: Resource
    resource:
      name: memory
      targetAverageUtilization: 50  ##scales on utilization (percent of the request); use targetAverageValue to scale on absolute usage instead
EOF

kubectl apply -f hpa-myapp.yaml
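For comparison, the "minor changes" in autoscaling/v2beta2 are mostly syntactic: each metric's target moves into a target block with an explicit type. Below is a sketch of the same HPA in v2beta2 syntax. It keeps the name myapp-hpa-v2, so applying it instead of hpa-myapp.yaml would update the same HPA object. The file name hpa-myapp-v2beta2.yaml is an illustrative choice.

```shell
# Same HPA expressed in autoscaling/v2beta2 syntax (file name is illustrative).
# Each metric's target is a "target" block with type Utilization.
cat > hpa-myapp-v2beta2.yaml << EOF
apiVersion: autoscaling/v2beta2
kind: HorizontalPodAutoscaler
metadata:
  name: myapp-hpa-v2
  namespace: default
spec:
  minReplicas: 1
  maxReplicas: 8
  scaleTargetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: myapp-hpa
  metrics:
  - type: Resource
    resource:
      name: cpu
      target:
        type: Utilization
        averageUtilization: 50
  - type: Resource
    resource:
      name: memory
      target:
        type: Utilization
        averageUtilization: 50
EOF
```

It would be applied the same way: kubectl apply -f hpa-myapp-v2beta2.yaml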

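Check the resources. Right after creation, the HPA shows <unknown> targets and 0 replicas until the HPA controller has fetched the first metrics for the target Pods:

[root@master01 ~]# kubectl get all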

NAME READY STATUS RESTARTS AGE

pod/myapp-hpa-6cf986669d-vhtb5 1/1 Running 0 2m5s

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

service/kubernetes ClusterIP 10.96.0.1 <none> 443/TCP 8d

service/myapp-svc NodePort 10.106.201.169 <none> 80:30080/TCP 17h

service/svc-hpa NodePort 10.108.166.226 <none> 80:32465/TCP 2m6s

NAME READY UP-TO-DATE AVAILABLE AGE

deployment.apps/myapp-hpa 1/1 1 1 2m5s

NAME DESIRED CURRENT READY AGE

replicaset.apps/myapp-hpa-6cf986669d 1 1 1 2m5s

NAME REFERENCE TARGETS MINPODS MAXPODS REPLICAS AGE

horizontalpodautoscaler.autoscaling/myapp-hpa-v2 Deployment/myapp <unknown>/50%, <unknown>/50% 1 8 0 21s

Load testing

Simulate load with the wrk HTTP benchmarking tool. Build it from source first:

yum install -y gcc make openssl-devel git

git clone https://github.com/wg/wrk.git

cd wrk

make

mv wrk /usr/bin

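Run the test against the svc-hpa NodePort (32465) with 20 threads and 30 open connections for 120 seconds. The --latency flag prints latency statistics:

wrk -t20 -c30 -d120s --latency http://master01:32465/hostname.html

While the test runs, watch the new Pods being created from another terminal: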

[root@master01 ~]# kubectl get pods -w

NAME READY STATUS RESTARTS AGE

myapp-hpa-6cf986669d-vhtb5 1/1 Running 0 4h52m

myapp-hpa-6cf986669d-txkrv 0/1 Pending 0 0s

myapp-hpa-6cf986669d-txkrv 0/1 Pending 0 0s

myapp-hpa-6cf986669d-txkrv 0/1 ContainerCreating 0 0s

myapp-hpa-6cf986669d-txkrv 0/1 ContainerCreating 0 3s

myapp-hpa-6cf986669d-txkrv 1/1 Running 0 4s

myapp-hpa-6cf986669d-lfq4l 0/1 Pending 0 0s

myapp-hpa-6cf986669d-lfq4l 0/1 Pending 0 0s

myapp-hpa-6cf986669d-rgsww 0/1 Pending 0 0s

myapp-hpa-6cf986669d-rgsww 0/1 Pending 0 0s

myapp-hpa-6cf986669d-rgsww 0/1 ContainerCreating 0 0s

myapp-hpa-6cf986669d-lfq4l 0/1 ContainerCreating 0 0s

myapp-hpa-6cf986669d-lfq4l 0/1 ContainerCreating 0 2s

myapp-hpa-6cf986669d-rgsww 0/1 ContainerCreating 0 3s

myapp-hpa-6cf986669d-lfq4l 1/1 Running 0 3s

myapp-hpa-6cf986669d-rgsww 1/1 Running 0 4s

myapp-hpa-6cf986669d-8tn4x 0/1 Pending 0 0s

myapp-hpa-6cf986669d-8tn4x 0/1 Pending 0 0s

myapp-hpa-6cf986669d-8tn4x 0/1 ContainerCreating 0 0s

myapp-hpa-6cf986669d-8tn4x 0/1 ContainerCreating 0 2s

myapp-hpa-6cf986669d-8tn4x 1/1 Running 0 3s

[root@master01 ~]# kubectl get hpa

NAME REFERENCE TARGETS MINPODS MAXPODS REPLICAS AGE

myapp-hpa-v2 Deployment/myapp-hpa 2%/50%, 93%/50% 1 8 8 6m41s
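The final numbers follow from the scaling rule in the Kubernetes HPA documentation. For each metric, desiredReplicas = ceil(currentReplicas * currentUtilization / targetUtilization). The HPA then takes the largest proposal across metrics and clamps it to [minReplicas, maxReplicas].

Applied to the output above:

- CPU (2%) alone would propose ceil(8 * 2 / 50) = 1 Pod.
- Memory (93% of the 50Mi request) proposes ceil(8 * 93 / 50) = 15 Pods, which is capped at maxReplicas = 8.

That is why the Deployment stays at 8 replicas even though CPU usage has dropped.

The shell sketch below is a simplified model, not the controller's code. It uses the default 0.1 tolerance and ignores stabilization windows, Pod readiness and missing metrics. The function names are hypothetical.

```shell
# Hypothetical helper sketching the documented per-metric HPA rule:
#   desired = ceil(current * currentUtilization / targetUtilization)
# A usage/target ratio within the default 0.1 tolerance leaves the count unchanged.
hpa_desired_for_metric() {
  local current="$1" usage="$2" target="$3"
  awk -v c="$current" -v u="$usage" -v t="$target" 'BEGIN {
    ratio = u / t
    if (ratio >= 0.9 && ratio <= 1.1) { print c; exit }  # within tolerance
    d = c * u / t
    # integer ceiling of a non-negative value
    printf "%d\n", (d > int(d)) ? int(d) + 1 : d
  }'
}

# Across metrics, take the largest proposal, then clamp to [min, max].
# Each metric argument is "usage/target", e.g. "93/50" for 93% against a 50% target.
hpa_desired() {
  local current="$1" min="$2" max="$3"
  shift 3
  local best=0 pair d
  for pair in "$@"; do
    d=$(hpa_desired_for_metric "$current" "${pair%/*}" "${pair#*/}")
    if [ "$d" -gt "$best" ]; then best="$d"; fi
  done
  if [ "$best" -lt "$min" ]; then best="$min"; fi
  if [ "$best" -gt "$max" ]; then best="$max"; fi
  echo "$best"
}
```

With the values above, hpa_desired 8 1 8 2/50 93/50 prints 8.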