The curse of dimensionality

- Properties of high-dimensional problems

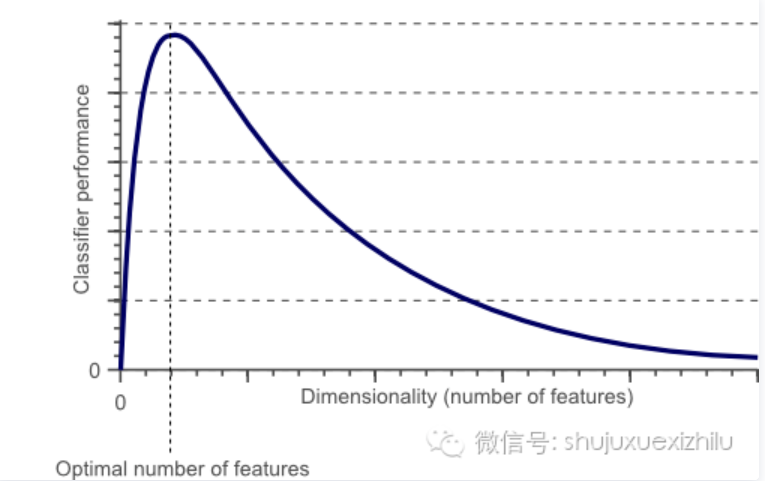

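- As the number of dimensions increases, classifier performance improves until the optimal number of features is reached. Increasing the dimensionality further without also increasing the number of training samples degrades classifier performance.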

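High-dimensional features cause several problems:

- First, in the K-nearest-neighbors algorithm, the distance between two points in a high-dimensional space is hard to measure meaningfully;

- Second, in a logistic regression model, the number of parameters grows with the dimensionality, which easily leads to overfitting;

- Third, usually only some of the dimensions help with classification or prediction, so feature selection can be used to reduce the dimensionality.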
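The first point (distances losing meaning) can be checked numerically. The sketch below is an illustration, not taken from the source: it assumes points drawn uniformly from the unit hypercube and Euclidean distance, and the helper name `relative_contrast` is hypothetical. It measures how much farther the farthest point is than the nearest one, relative to the nearest.

```python
import numpy as np

def relative_contrast(n_points, dim, seed=0):
    """Return (max - min) / min of the distances from a random query to random points."""
    rng = np.random.default_rng(seed)
    points = rng.random((n_points, dim))   # assumption: uniform data in [0, 1]^dim
    query = rng.random(dim)
    dists = np.linalg.norm(points - query, axis=1)
    return (dists.max() - dists.min()) / dists.min()

# The contrast should shrink as the dimension grows, so "nearest" means less and less.
for dim in (2, 10, 100, 1000):
    print(dim, round(relative_contrast(500, dim), 3))
```

When the contrast approaches zero, all neighbors are almost equally far away, which is why K-nearest-neighbors struggles in high dimensions.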

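Source: 百面机器学习. Further reading: 高维度究竟有什么危害?深入讨论维度诅咒(Curse of Dimensionality)的三大特点 ("What harm does high dimensionality actually do? An in-depth discussion of three characteristics of the curse of dimensionality")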

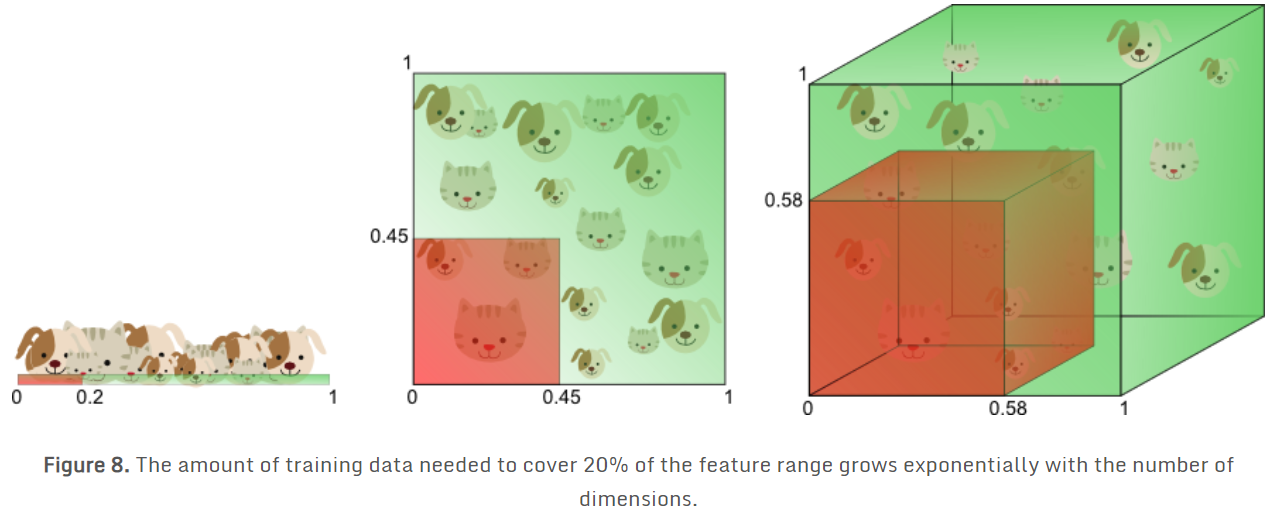

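Causes

- Sparsity is introduced. As we add dimensions, the feature space grows and the density of the training samples falls exponentially, so the data become sparser [concrete (low-dimensional) → abstract, independent (high-dimensional)].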
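A small calculation makes the exponential loss of density concrete. It is a sketch under the assumption that the data are uniform in the unit hypercube; the function name `edge_length` is hypothetical. To capture a fixed fraction of the data, a sub-cube needs an edge of length `fraction ** (1 / dim)`.

```python
def edge_length(fraction, dim):
    """Edge of the sub-cube of [0, 1]^dim that holds `fraction` of uniformly spread data."""
    return fraction ** (1.0 / dim)

# Edge needed to capture 10% of the data in 1, 2, 10 and 100 dimensions.
for dim in (1, 2, 10, 100):
    print(dim, round(edge_length(0.10, dim), 3))
```

In 10 dimensions the edge is already about 0.79, so a "local" neighborhood that contains 10% of the samples spans most of the range of every feature.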

References:

Blessings of dimensionality

- Several features will be correlated and we can average over them

- The underlying distribution will be finite; informative data will lie on a low-dimensional manifold

- Underlying structure in the data (samples from continuous processes, images, etc.) will give an approximately finite dimensionality.

Dimension reduction

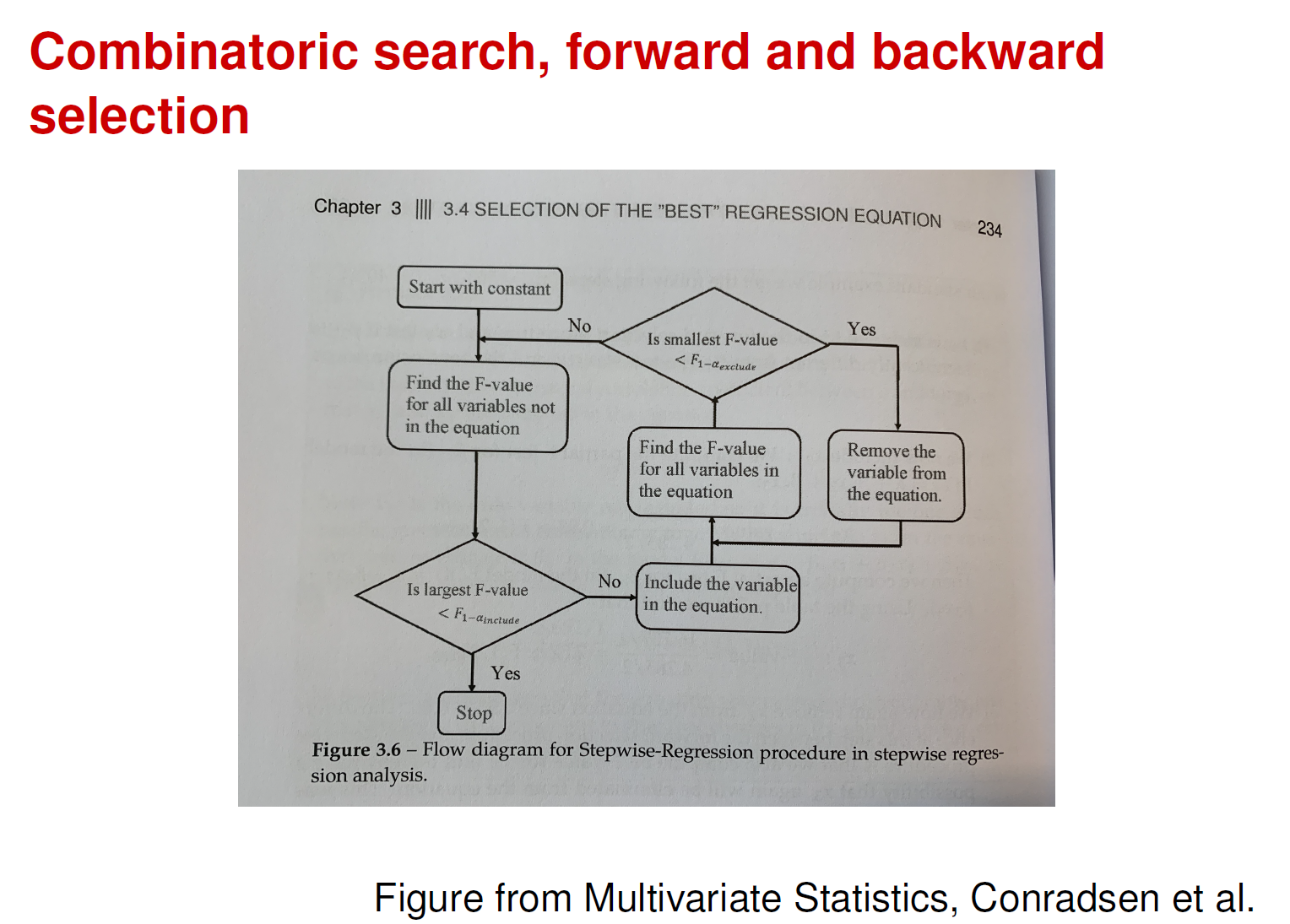

Combinatoric search

Forward selection

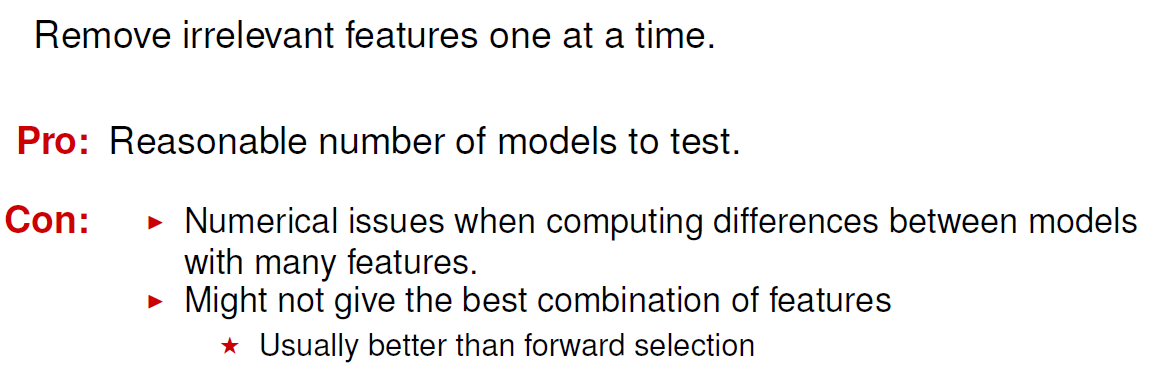
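A minimal sketch of forward selection for linear regression, assuming the selection criterion is the training residual sum of squares (RSS) and that the number of features `k` is given. Both are assumptions made for illustration; in practice the stopping point is usually chosen by cross-validation. The helper names `rss` and `forward_selection` are hypothetical.

```python
import numpy as np

def rss(X, y, cols):
    """RSS of a least-squares fit on the chosen columns plus an intercept."""
    A = np.column_stack([np.ones(len(y))] + [X[:, j] for j in cols])
    coef, *_ = np.linalg.lstsq(A, y, rcond=None)
    resid = y - A @ coef
    return float(resid @ resid)

def forward_selection(X, y, k):
    """Start empty and greedily add the feature that lowers the RSS most."""
    selected = []
    remaining = list(range(X.shape[1]))
    while remaining and len(selected) < k:
        best = min(remaining, key=lambda j: rss(X, y, selected + [j]))
        selected.append(best)
        remaining.remove(best)
    return selected
```

Each step fits one model per remaining feature, so selecting `k` of `p` features costs on the order of `k * p` fits rather than the `2 ** p` fits of an exhaustive search.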

Backward elimination

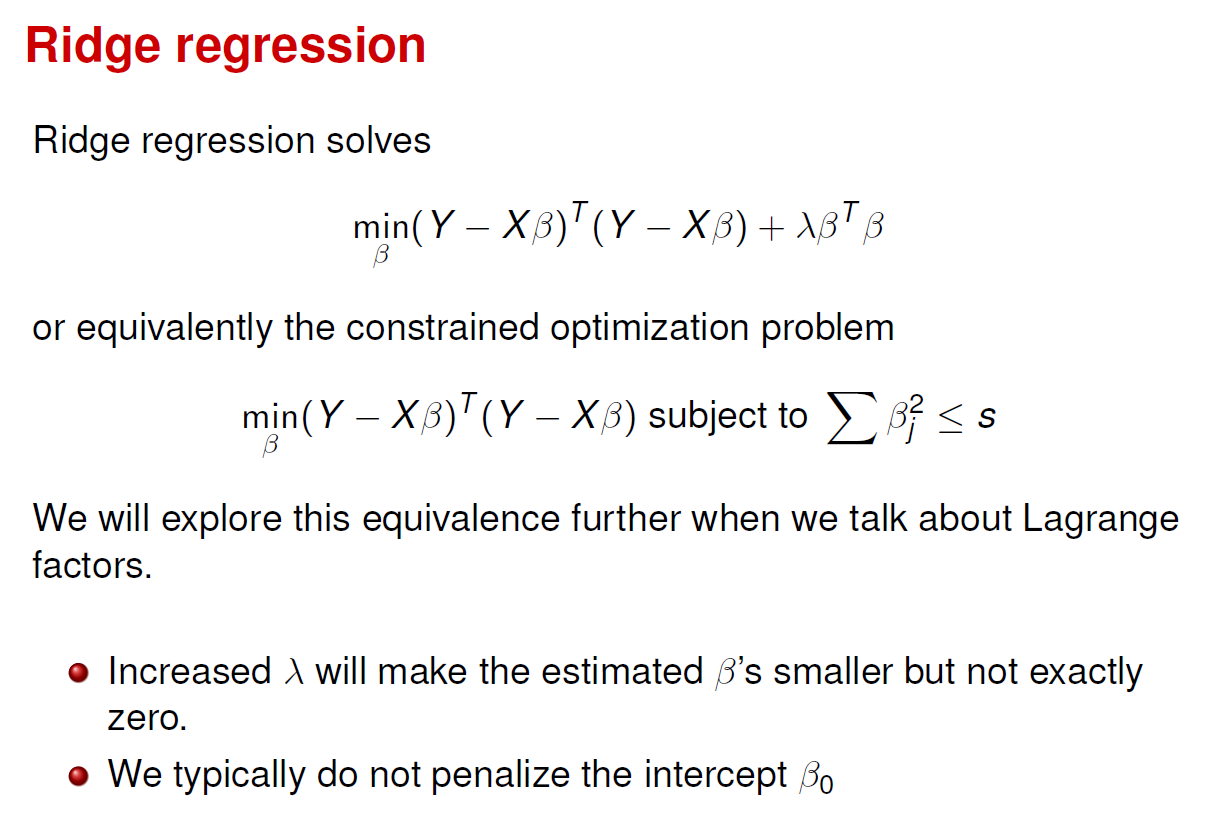
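Backward elimination runs the same greedy idea in the opposite direction. The sketch below again assumes training RSS as the criterion and a given target size `k` (both illustrative assumptions), and it needs more samples than features so that the full model can be fitted. The helper names are hypothetical.

```python
import numpy as np

def rss(X, y, cols):
    """RSS of a least-squares fit on the chosen columns plus an intercept."""
    A = np.column_stack([np.ones(len(y))] + [X[:, j] for j in cols])
    coef, *_ = np.linalg.lstsq(A, y, rcond=None)
    resid = y - A @ coef
    return float(resid @ resid)

def backward_elimination(X, y, k):
    """Start from all features and drop the one whose removal raises the RSS least."""
    selected = list(range(X.shape[1]))
    while len(selected) > k:
        drop = min(selected, key=lambda j: rss(X, y, [c for c in selected if c != j]))
        selected.remove(drop)
    return selected
```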

Regularization

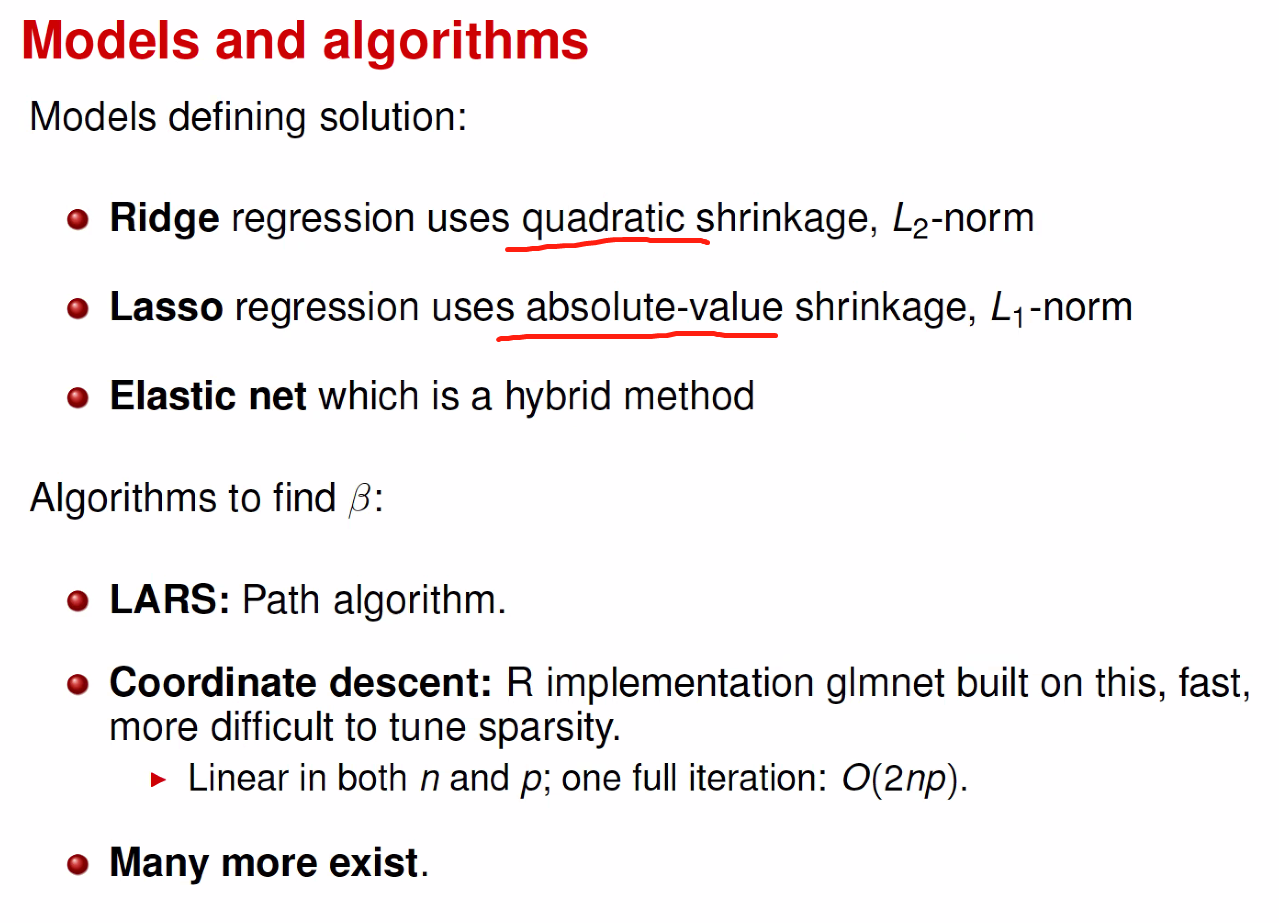

Models and algorithms

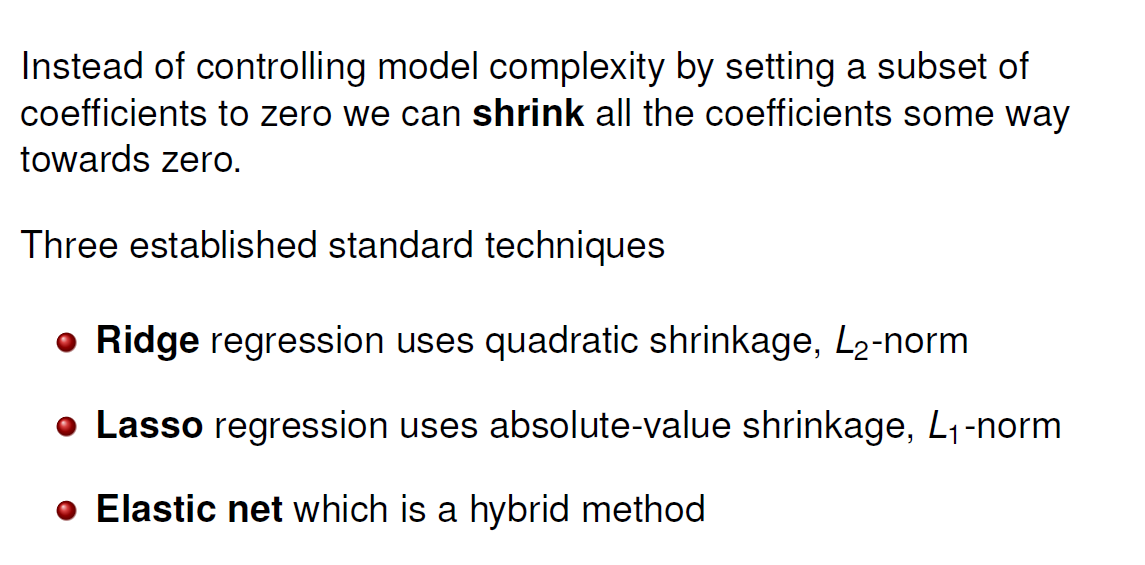

Shrink methods

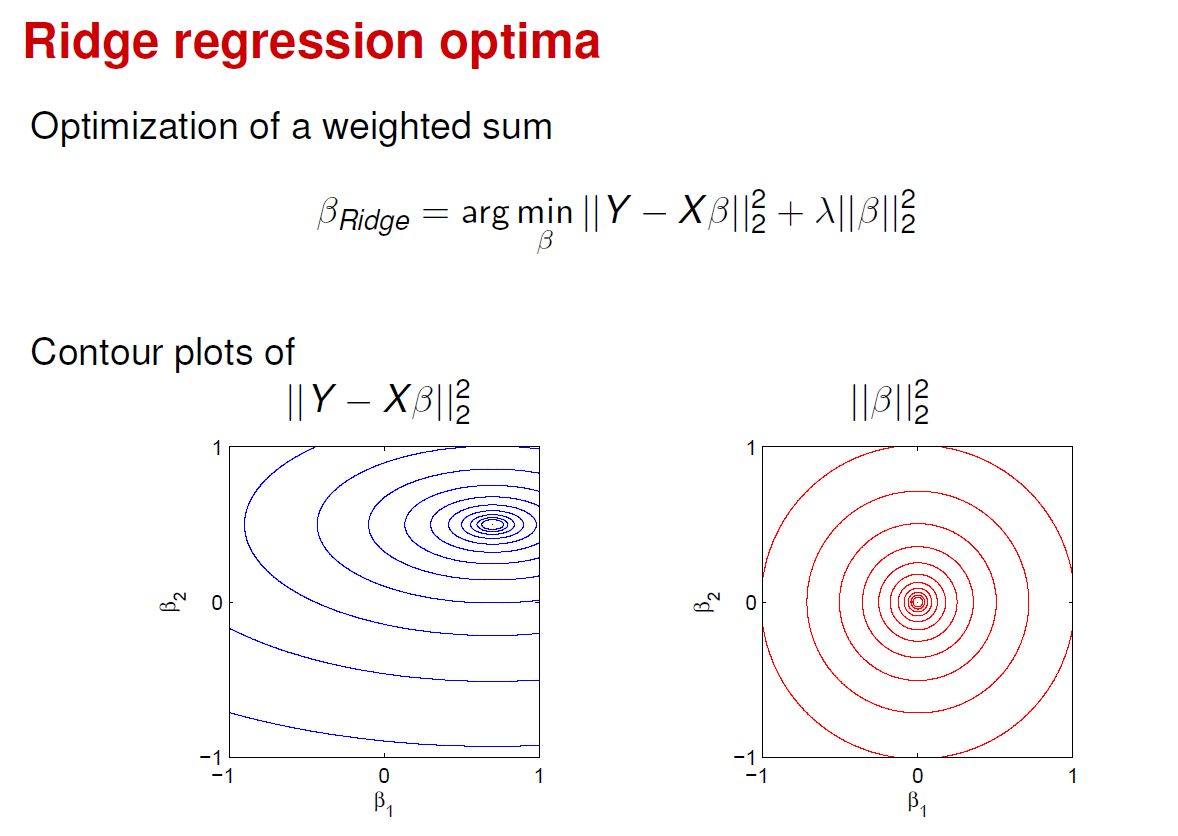

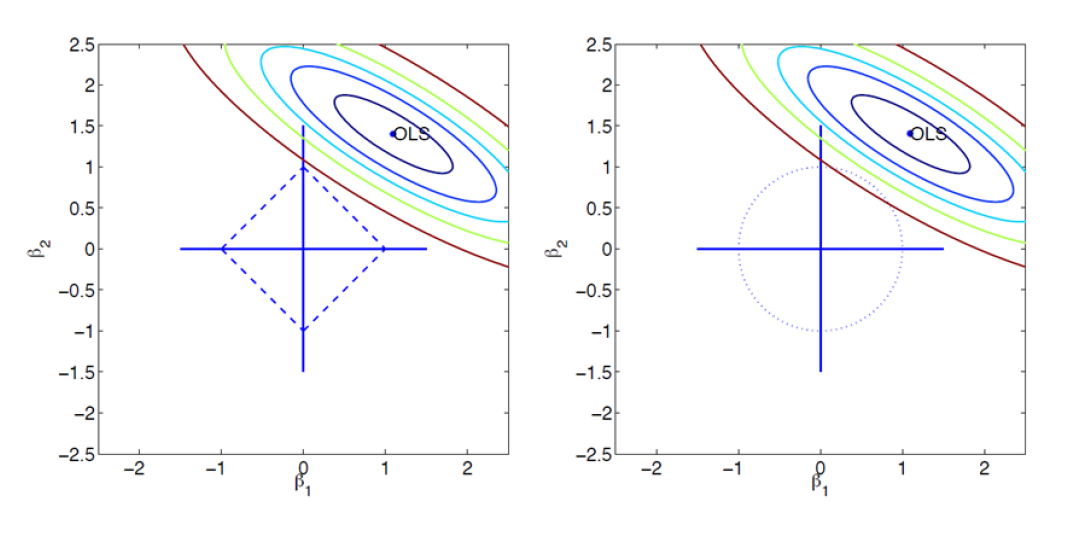

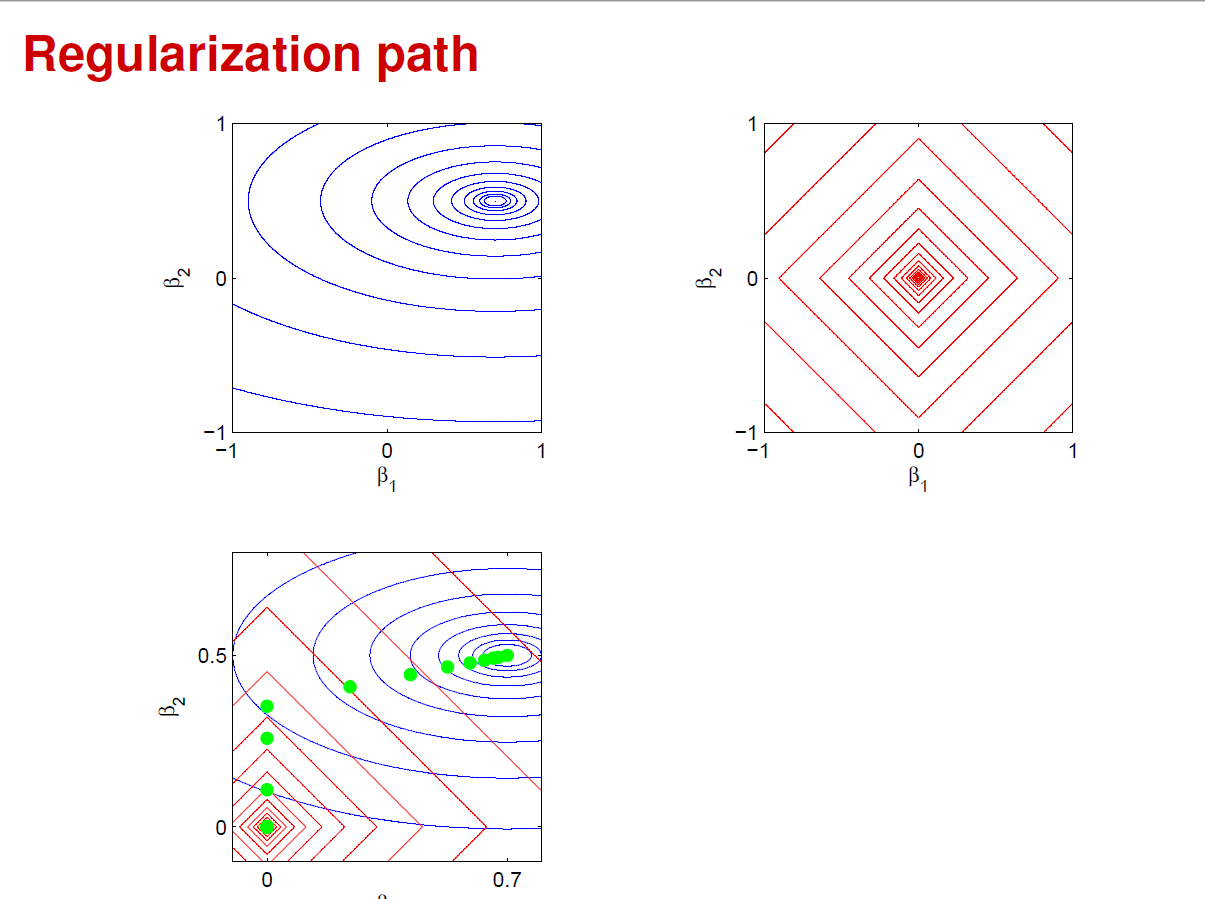

Ridge

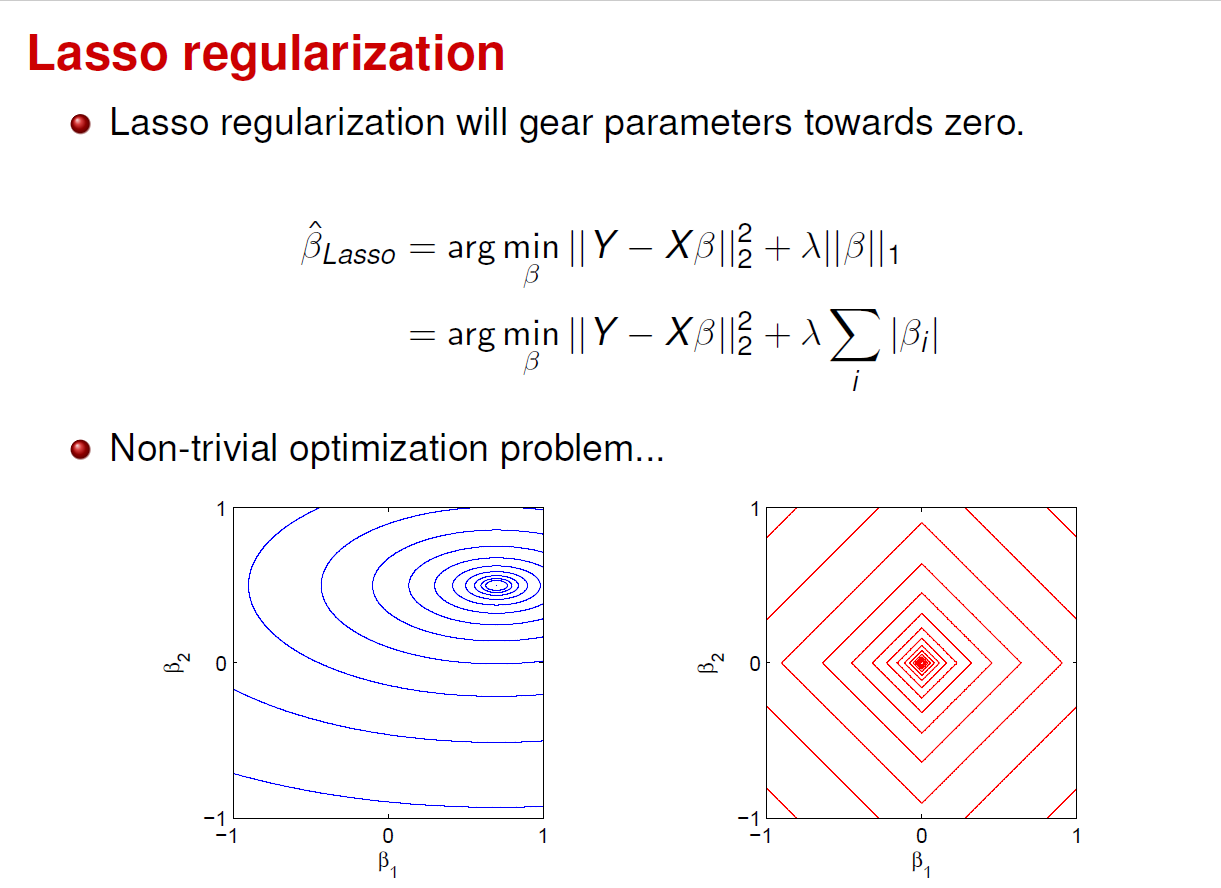
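Ridge regression has a closed-form solution, sketched below under the assumption that the objective is the usual penalized least squares, `||y - X b||^2 + lam * ||b||^2`, with an unpenalized intercept handled by centering. The function name `ridge_fit` is hypothetical.

```python
import numpy as np

def ridge_fit(X, y, lam):
    """Solve (Xc^T Xc + lam * I) beta = Xc^T yc on centered data; return (intercept, beta)."""
    x_mean, y_mean = X.mean(axis=0), y.mean()
    Xc, yc = X - x_mean, y - y_mean            # centering keeps the intercept unpenalized
    p = X.shape[1]
    beta = np.linalg.solve(Xc.T @ Xc + lam * np.eye(p), Xc.T @ yc)
    intercept = y_mean - x_mean @ beta
    return intercept, beta
```

With `lam = 0` this reduces to ordinary least squares; increasing `lam` shrinks the coefficient vector toward zero without setting individual coefficients exactly to zero.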

The Lasso

Algorithms for Lasso

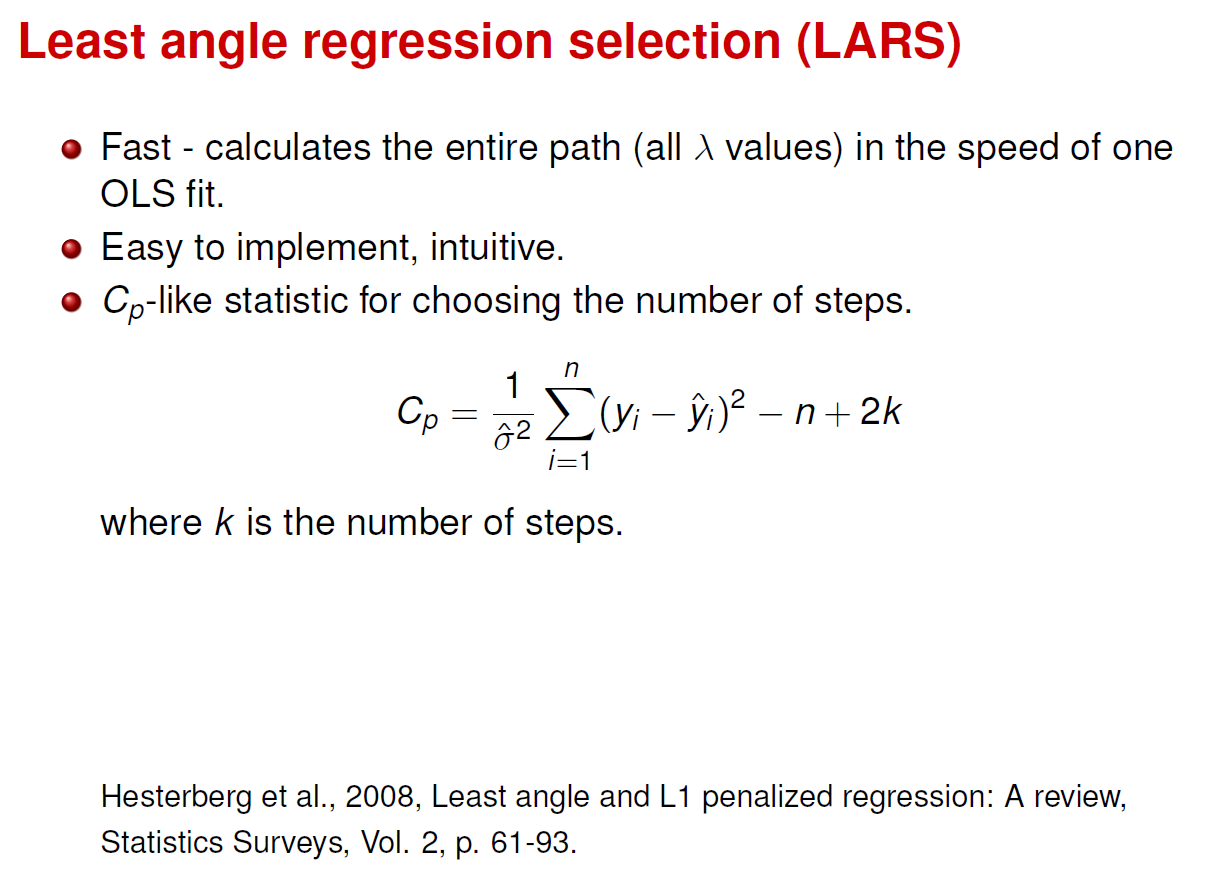

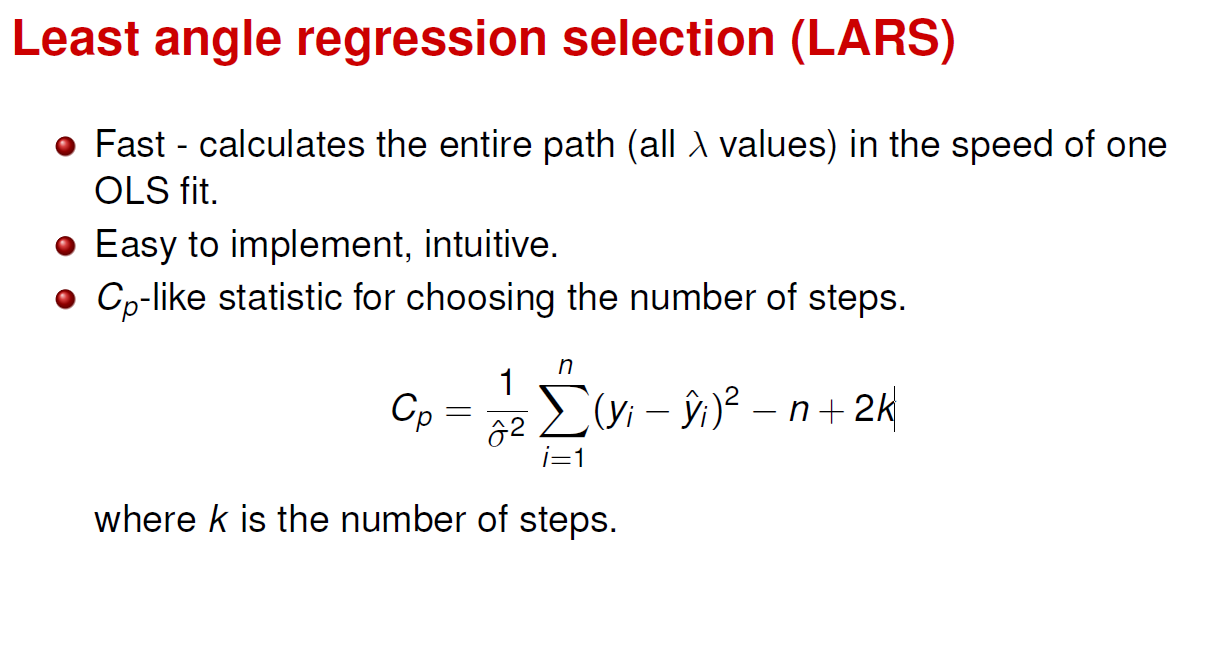

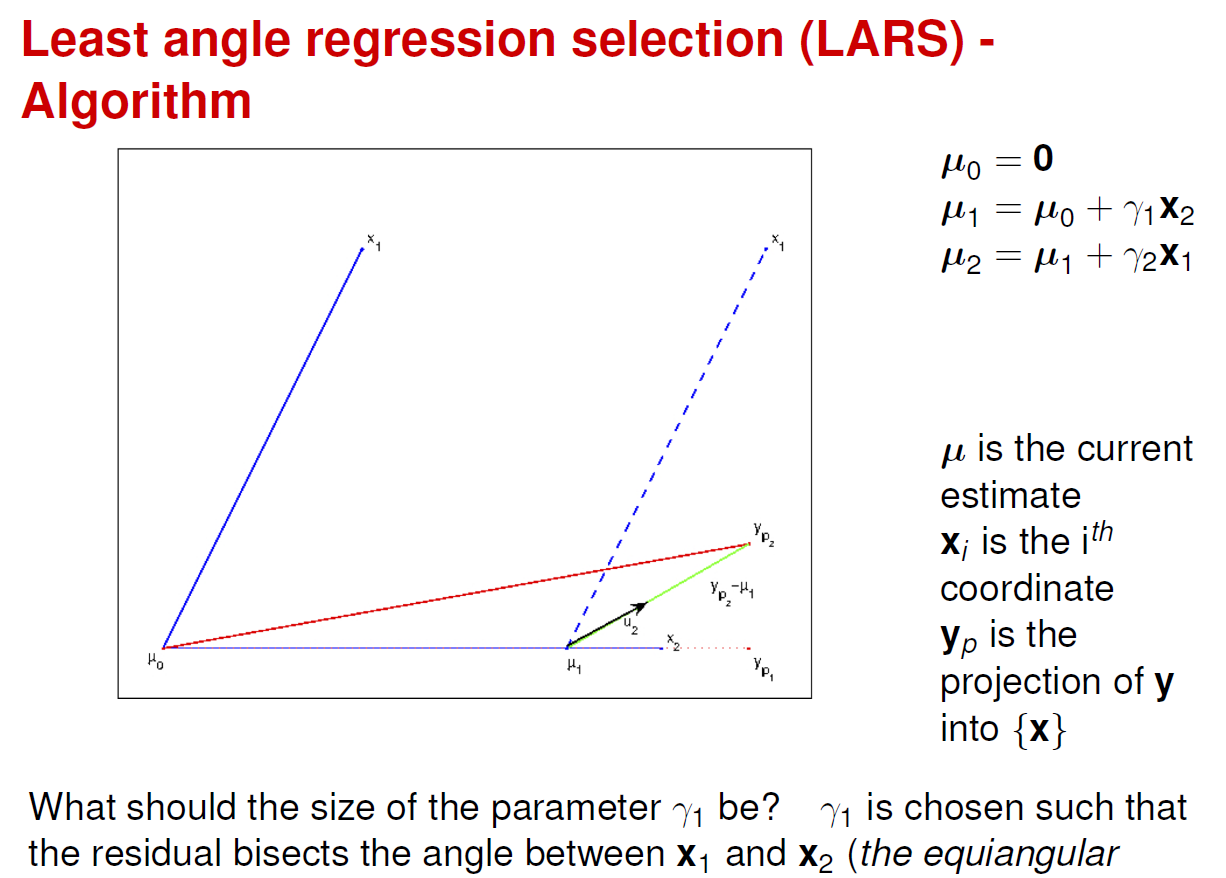

LARS

https://learn.inside.dtu.dk/d2l/le/content/103753/viewContent/409418/View

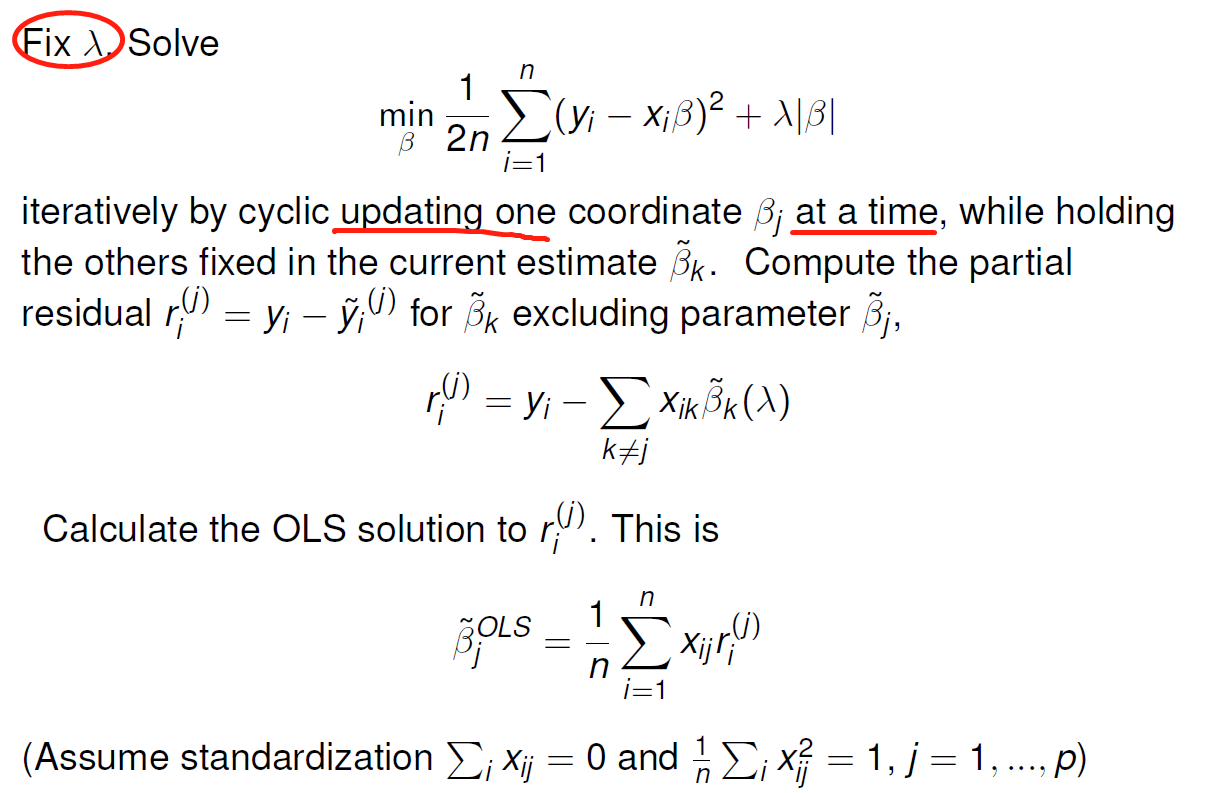

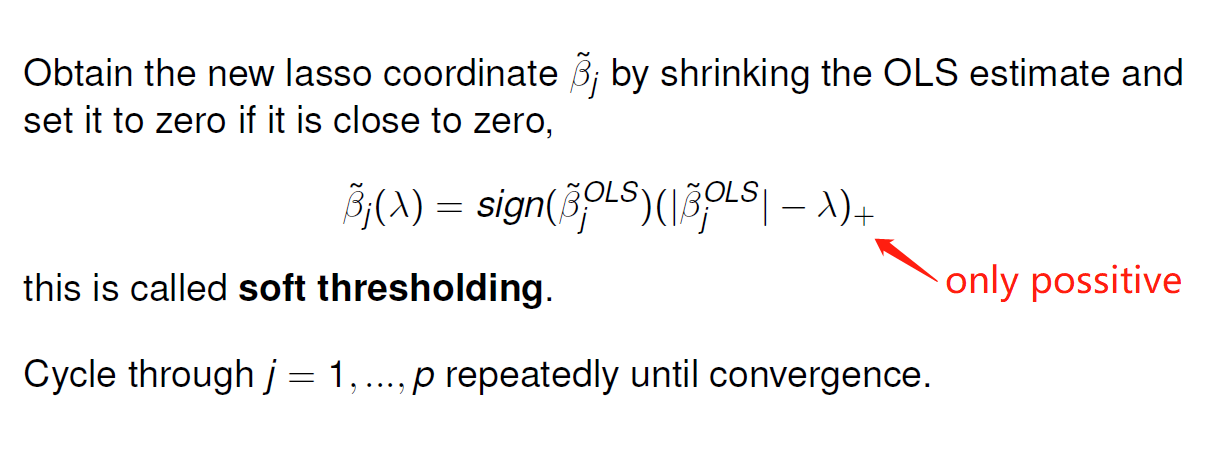

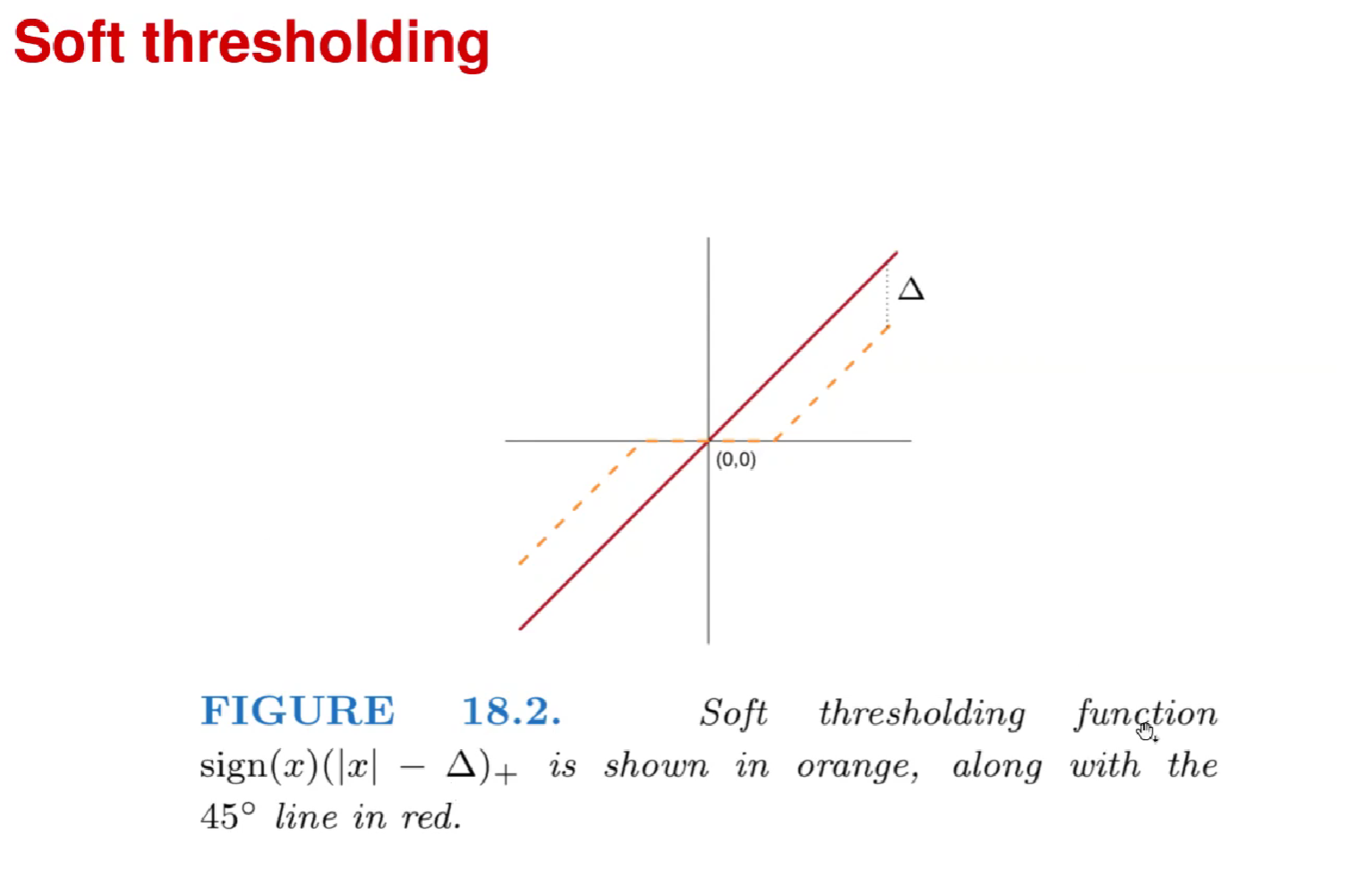

Cyclical coordinate descent

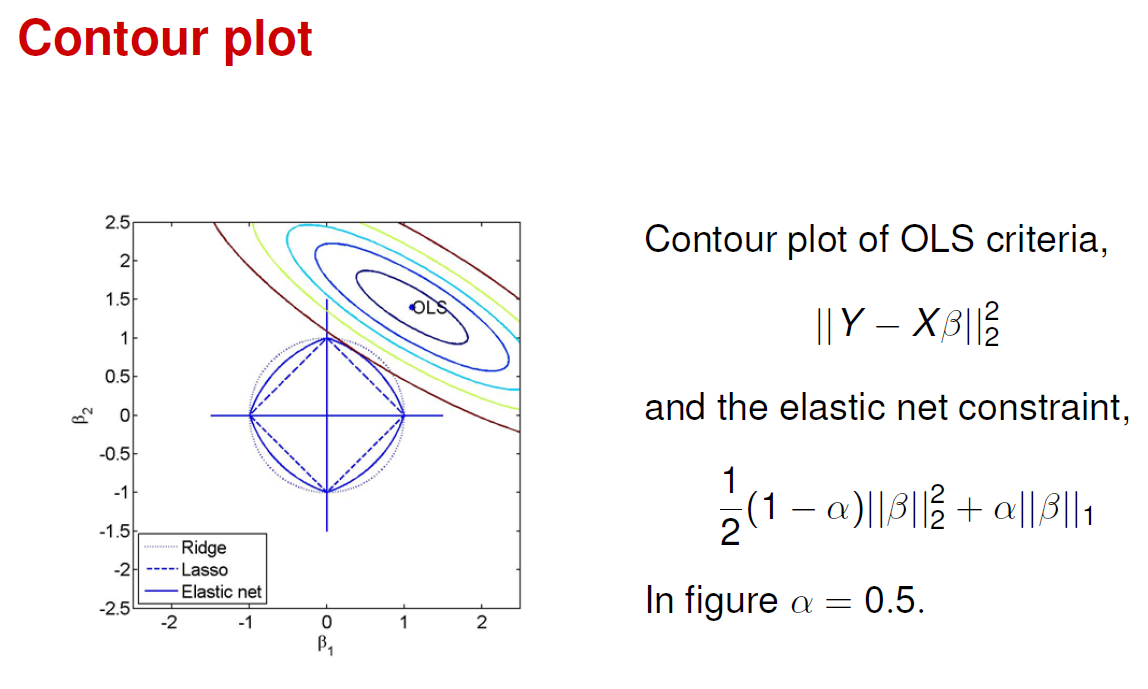

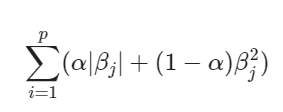
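A minimal sketch of cyclical coordinate descent for the lasso. It assumes the objective is scaled as `(1/2n) * ||y - X b||^2 + lam * ||b||_1`, that the columns of `X` and `y` are centered (so no intercept is fitted), and that no column is identically zero. The scaling and the function names are assumptions for illustration. Each coordinate update is a soft-thresholding step.

```python
import numpy as np

def soft_threshold(z, t):
    """Shrink z toward zero by t, returning 0 when |z| <= t."""
    return np.sign(z) * max(abs(z) - t, 0.0)

def lasso_cd(X, y, lam, n_sweeps=200):
    """Cycle over the coordinates, minimizing the objective in one coefficient at a time."""
    n, p = X.shape
    beta = np.zeros(p)
    col_sq = (X ** 2).sum(axis=0) / n          # (1/n) * x_j^T x_j for each column
    resid = y.astype(float)                    # residual y - X @ beta, kept up to date
    for _ in range(n_sweeps):
        for j in range(p):
            # Correlation of x_j with the partial residual that excludes feature j.
            rho = X[:, j] @ resid / n + col_sq[j] * beta[j]
            new = soft_threshold(rho, lam) / col_sq[j]
            resid += X[:, j] * (beta[j] - new)
            beta[j] = new
    return beta
```

A fixed number of sweeps is used here for brevity; a practical implementation would stop when the largest coefficient change falls below a tolerance.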

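The elastic net (coordinate descent)

The lasso penalty is somewhat indifferent to the choice among a set of strongly correlated variables; the ridge penalty, on the other hand, tends to shrink the coefficients of correlated variables toward each other. The elastic net penalty is a compromise and has the form $\sum_{j=1}^{p}\left(\alpha|\beta_j| + (1-\alpha)\beta_j^2\right)$: the second term encourages highly correlated features to be averaged, while the first term encourages a sparse solution in the coefficients of these averaged features.

The elastic net penalty can be used with any linear model, in particular for regression or classification.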

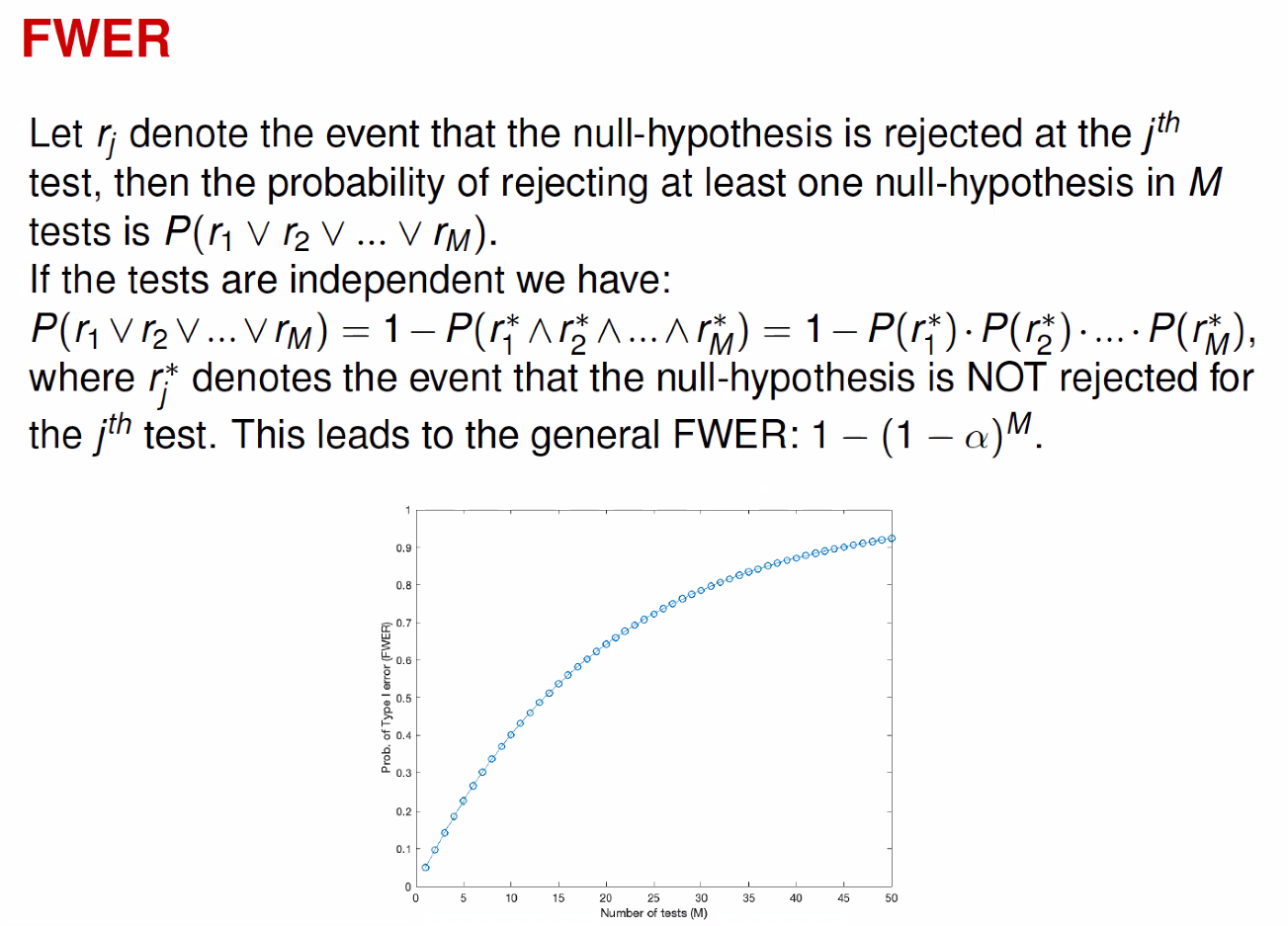
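The coordinate descent update for the elastic net differs from the lasso update only in its denominator. The sketch below assumes the objective `(1/2n) * ||y - X b||^2 + lam * sum(alpha * |b_j| + (1 - alpha) * b_j^2)` with centered data. The `1/2n` scaling and the function name `elastic_net_cd` are assumptions for illustration, and libraries often parametrize the penalty differently.

```python
import numpy as np

def elastic_net_cd(X, y, lam, alpha, n_sweeps=500):
    """Cyclical coordinate descent for the elastic net objective stated above."""
    n, p = X.shape
    beta = np.zeros(p)
    col_sq = (X ** 2).sum(axis=0) / n
    resid = y.astype(float)                    # residual y - X @ beta, kept up to date
    for _ in range(n_sweeps):
        for j in range(p):
            rho = X[:, j] @ resid / n + col_sq[j] * beta[j]
            # L1 part: soft-threshold; L2 part: extra shrinkage through the denominator.
            shrunk = np.sign(rho) * max(abs(rho) - lam * alpha, 0.0)
            new = shrunk / (col_sq[j] + 2.0 * lam * (1.0 - alpha))
            resid += X[:, j] * (beta[j] - new)
            beta[j] = new
    return beta
```

With two identical columns and `alpha < 1`, the objective is strictly convex in those two coefficients, so they converge to the same value. This is the averaging effect attributed to the second penalty term.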

The family-wise error rate (FWER)

FWER is the probability of at least one false rejection, and is a commonly used overall measure of error.
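To see why the FWER needs controlling, consider `m` tests that are each run at level `alpha`. Under the simplifying assumptions that the tests are independent and every null hypothesis is true (assumptions added here for illustration), the FWER is `1 - (1 - alpha) ** m`.

```python
def fwer_independent(alpha, m):
    """Probability of at least one false rejection among m independent true nulls."""
    return 1.0 - (1.0 - alpha) ** m

# The error rate grows quickly with the number of tests.
for m in (1, 5, 20, 100):
    print(m, round(fwer_independent(0.05, m), 3))
```

With 20 tests at level 0.05 the chance of at least one false rejection is already about 0.64.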

Multiple hypothesis testing

Find p-value (significance) in scikit-learn LinearRegression
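scikit-learn's `LinearRegression` exposes the fitted coefficients but not their p-values, so they have to be computed separately. The sketch below does this with NumPy from the standard OLS formulas. As a simplification it uses a normal approximation to the t distribution, which is only reasonable when the number of samples is much larger than the number of features; exact p-values would come from a Student t distribution with `n - p - 1` degrees of freedom. The function name `ols_p_values` is hypothetical.

```python
import math
import numpy as np

def ols_p_values(X, y):
    """Return OLS coefficients (intercept first), t statistics and approximate p-values."""
    n = len(y)
    A = np.column_stack([np.ones(n), X])           # add the intercept column
    coef, *_ = np.linalg.lstsq(A, y, rcond=None)
    resid = y - A @ coef
    dof = n - A.shape[1]
    sigma2 = resid @ resid / dof                   # unbiased estimate of the noise variance
    cov = sigma2 * np.linalg.inv(A.T @ A)          # covariance of the coefficient estimates
    t_stats = coef / np.sqrt(np.diag(cov))
    # Two-sided p-value under the normal approximation: 2 * (1 - Phi(|t|)).
    p_values = np.array([math.erfc(abs(t) / math.sqrt(2.0)) for t in t_stats])
    return coef, t_stats, p_values
```

Testing every coefficient this way is exactly the multiple testing situation discussed here: with many features, some p-values will fall below 0.05 by chance.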

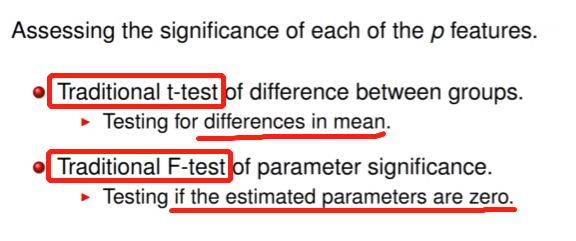

Feature assessment

Bonferroni correction: Controls FWER
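A minimal sketch of the Bonferroni correction: with `m` hypotheses, each one is tested at level `alpha / m`, which keeps the FWER at or below `alpha`. The function name and the example p-values are made up for illustration.

```python
def bonferroni_reject(p_values, alpha=0.05):
    """Reject hypothesis i when p_i <= alpha / m, where m is the number of tests."""
    m = len(p_values)
    return [p <= alpha / m for p in p_values]

# Illustrative p-values; the per-test threshold is 0.05 / 4 = 0.0125.
print(bonferroni_reject([0.001, 0.02, 0.04, 0.30]))
```

The correction is simple but conservative, since the threshold shrinks in proportion to the number of tests.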

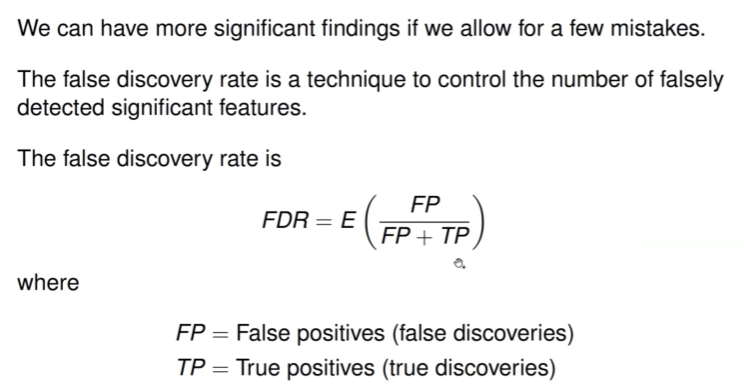

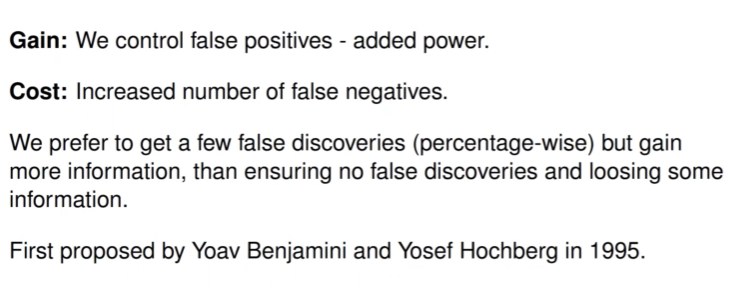

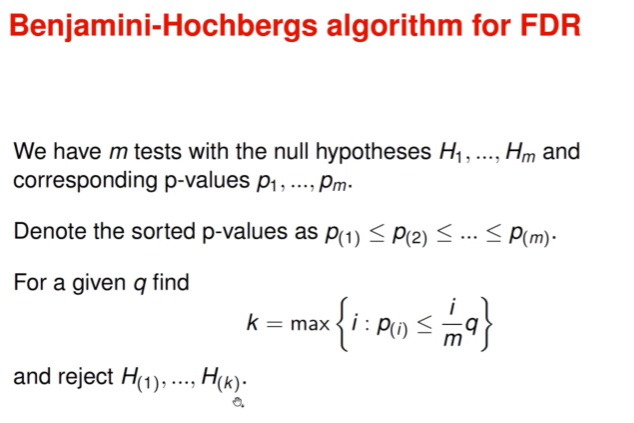

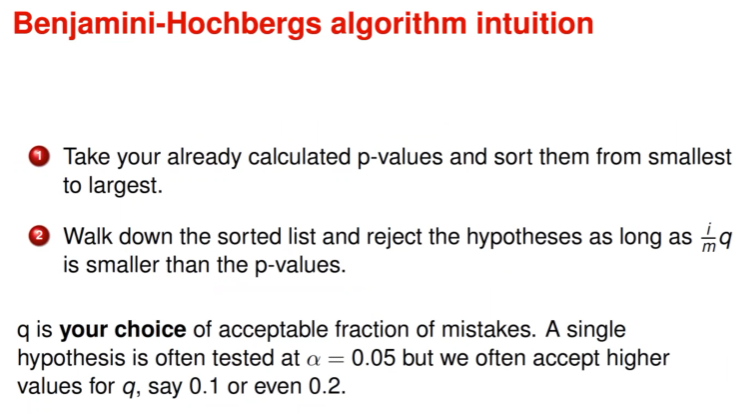

False Discovery Rate (FDR): Controls FDR
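The notes do not name a specific procedure here. A common choice for controlling the FDR is the Benjamini-Hochberg step-up procedure, sketched below as an assumption about what is intended. Sort the p-values, find the largest rank `k` with `p_(k) <= k * q / m`, and reject the `k` smallest. The function name is hypothetical.

```python
def benjamini_hochberg(p_values, q=0.05):
    """Return a list of booleans marking the hypotheses rejected at FDR level q."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    cutoff = 0                                  # largest rank k with p_(k) <= k * q / m
    for rank, i in enumerate(order, start=1):
        if p_values[i] <= rank * q / m:
            cutoff = rank
    reject = [False] * m
    for i in order[:cutoff]:                    # reject the cutoff smallest p-values
        reject[i] = True
    return reject
```

Because the threshold grows with the rank, this procedure typically rejects more hypotheses than Bonferroni at the same nominal level, at the price of controlling the expected proportion of false discoveries instead of the probability of any false rejection.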