Title

xDeepFM: Combining Explicit and Implicit Feature Interactions for Recommender Systems

Summary

xDeepFM combines an explicit high-order interaction module, the Compressed Interaction Network (CIN), with an implicit interaction module and a traditional FM module.

CIN aims to learn high-order feature interactions explicitly, with two virtues:

- It can learn certain bounded-degree feature interactions effectively.

- It learns feature interactions at a vector-wise level.

Research Objective

xDeepFM model with:

- No manual feature engineering

- Neural network based model learns feature interactions explicitly.

Taking into account both memorization and generalization.

Problem Statement

Learning feature interactions without manual engineering is a meaningful task, and it is promising to exploit DNNs to learn sophisticated and selective feature interactions.

- DNNs focus more on high-order feature interactions while capturing few low-order interactions. Hybrid architectures such as Wide&Deep and DeepFM overcome this by combining memorization and generalization.

- DNNs model high-order feature interactions in an implicit fashion, and there is no theoretical conclusion on what the maximum degree of the learned feature interactions is.

- DNNs model feature interactions at the bit-wise level, unlike the traditional FM framework, which models feature interactions at the vector-wise level.

Method(s)

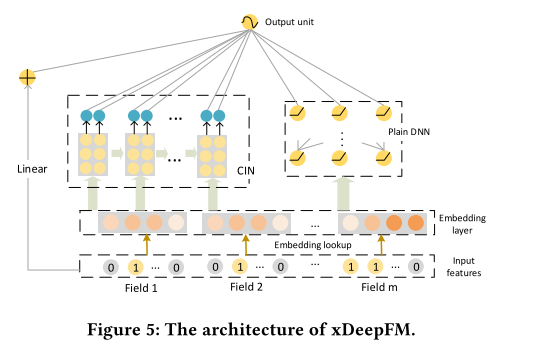

The explicit high-order interaction module is combined with the implicit interaction module and the traditional FM module, and the joint model is named eXtreme Deep Factorization Machine (xDeepFM).

CIN

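We formulate the output of field embedding as a matrix $\mathbf{X}^0 \in \mathbb{R}^{m \times D}$, where $m$ is the number of rows (one per field), $D$ is the dimension of the field embedding, and the $i$-th row of $\mathbf{X}^0$ is the embedding vector of the $i$-th field: $\mathbf{X}^0_{i,*} = \mathbf{e}_i$.

The output of the $k$-th layer in CIN is also a matrix $\mathbf{X}^k \in \mathbb{R}^{H_k \times D}$, where $H_k$ denotes the number of (embedding) feature vectors in the $k$-th layer, and we let $H_0 = m$.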

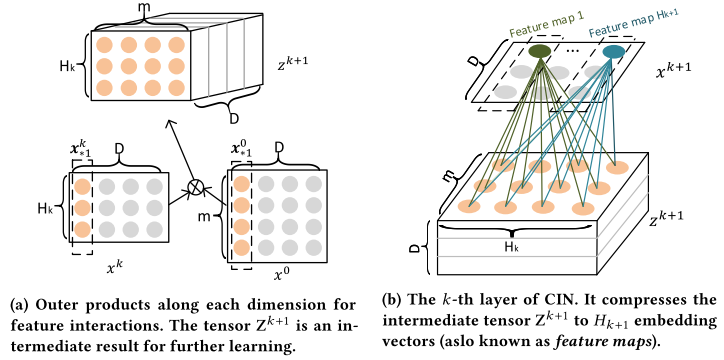

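For each layer, $\mathbf{X}^k$ is calculated via:

$$\mathbf{X}^k_{h,*} = \sum_{i=1}^{H_{k-1}} \sum_{j=1}^{m} \mathbf{W}^{k,h}_{ij} \left( \mathbf{X}^{k-1}_{i,*} \circ \mathbf{X}^0_{j,*} \right)$$

where $1 \le h \le H_k$, $\mathbf{W}^{k,h} \in \mathbb{R}^{H_{k-1} \times m}$ is the parameter matrix for the $h$-th feature vector, and $\circ$ denotes the Hadamard product.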
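The layer update can be sketched in plain Python as a weighted sum of Hadamard products between every row of the previous layer and every row of the field-embedding matrix. This is a minimal, unoptimized illustration rather than the authors' implementation; the function name `cin_layer` and the toy inputs are assumptions made for the example.

```python
def cin_layer(x_prev, x0, weights):
    """Compute one CIN layer (illustrative sketch, not the reference code).

    x_prev:  H_{k-1} x D matrix (list of rows), the previous layer.
    x0:      m x D matrix, the field embeddings.
    weights: list of H_k matrices, each H_{k-1} x m (one per output row).
    Returns an H_k x D matrix.
    """
    dim = len(x0[0])
    x_next = []
    for w_h in weights:
        row = [0.0] * dim
        for i, prev_vec in enumerate(x_prev):
            for j, field_vec in enumerate(x0):
                # Hadamard product of two D-dimensional vectors,
                # scaled by the scalar weight w_h[i][j].
                for d in range(dim):
                    row[d] += w_h[i][j] * prev_vec[d] * field_vec[d]
        x_next.append(row)
    return x_next


# Toy input (hypothetical): m = 2 fields, D = 3, one all-ones weight matrix.
x0 = [[1.0, 2.0, 3.0],
      [4.0, 5.0, 6.0]]
w1 = [[[1.0, 1.0],
       [1.0, 1.0]]]
x1 = cin_layer(x0, x0, w1)  # first layer: the previous layer is x0 itself
```

With all-ones weights, each output entry is the square of the column sum of `x0`, which makes the second-order (pairwise) nature of the first layer easy to check by hand. Each weight multiplies a whole vector rather than a single element, which is what "vector-wise" refers to.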

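We can introduce an intermediate tensor $\mathbf{Z}^{k+1}$, which holds the outer products (along each embedding dimension) of the hidden layer $\mathbf{X}^k$ and the original feature matrix $\mathbf{X}^0$. Then $\mathbf{Z}^{k+1}$ can be regarded as a special type of image and $\mathbf{W}^{k+1,h}$ is a filter.

There are $H_k \times H_{k-1} \times m$ parameters at the $k$-th layer.

We slide the filter across $\mathbf{Z}^{k+1}$ along the embedding dimension ($D$), as shown in Figure (b), and get a hidden vector $\mathbf{X}^{k+1}_{h,*}$, like a feature map. Therefore, $\mathbf{X}^k$ is a collection of $H_k$ different feature maps.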
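The "image and filter" view can be illustrated in two steps: first build the intermediate tensor of per-dimension outer products, then apply one filter at every position along the embedding dimension. The sketch below assumes a tensor layout of (previous-layer rows) x (fields) x (embedding dimension); the helper names are hypothetical.

```python
def outer_product_tensor(x_prev, x0):
    """z[i][j][d] = x_prev[i][d] * x0[j][d]; shape H_{k-1} x m x D."""
    return [[[p * f for p, f in zip(prev_vec, field_vec)]
             for field_vec in x0]
            for prev_vec in x_prev]


def apply_filter(z, w_h):
    """Slide one H_{k-1} x m filter along the embedding dimension D.

    At each position d, the filter is multiplied element-wise with the
    H_{k-1} x m slice z[:, :, d] and summed, giving one entry of the
    feature map.
    """
    dim = len(z[0][0])
    return [sum(w_h[i][j] * z[i][j][d]
                for i in range(len(z))
                for j in range(len(z[0])))
            for d in range(dim)]


# Toy input (hypothetical): m = 2 fields, D = 3.
x0 = [[1.0, 2.0, 3.0],
      [4.0, 5.0, 6.0]]
z = outer_product_tensor(x0, x0)      # shape 2 x 2 x 3
diag_filter = [[1.0, 0.0],
               [0.0, 1.0]]            # keeps only the i == j products
feature_map = apply_filter(z, diag_filter)
```

One filter yields one feature map of length D, so a layer with several filters yields a stack of feature maps, and each filter contributes (previous-layer rows) x (fields) weights to the layer's parameter count.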

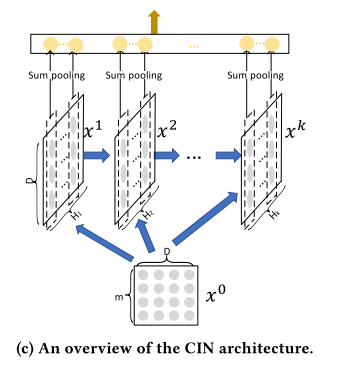

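Figure (c) provides an overview of the architecture of CIN. Let $T$ denote the depth of the network. Every hidden layer $\mathbf{X}^k$, $k \in [1, T]$, has a connection with the output units.

First, apply sum pooling on each feature map of the hidden layer: $p^k_i = \sum_{j=1}^{D} \mathbf{X}^k_{i,j}$ for $i \in [1, H_k]$.

Thus, we have a pooling vector $\mathbf{p}^k = [p^k_1, p^k_2, \ldots, p^k_{H_k}]$ with length $H_k$ for the $k$-th hidden layer.

Then concatenate all the pooling vectors from the hidden layers: $\mathbf{p}^+ = [\mathbf{p}^1, \mathbf{p}^2, \ldots, \mathbf{p}^T] \in \mathbb{R}^{\sum_{i=1}^{T} H_i}$.

If we use CIN directly for binary classification, the output unit is a sigmoid node on $\mathbf{p}^+$: $y = \sigma(\mathbf{p}^{+\top} \mathbf{w}^o)$, where $\sigma$ is the sigmoid function and $\mathbf{w}^o$ are the regression parameters.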
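The pooling and output steps can be sketched as follows: sum each feature map over the embedding dimension, concatenate the pooled values from all hidden layers, and feed the result to a sigmoid unit. The function names and toy values are assumptions for illustration, and the standard sigmoid $1 / (1 + e^{-z})$ is assumed for the output node.

```python
import math


def sum_pool(x_k):
    """Sum each feature map (row) over the embedding dimension."""
    return [sum(row) for row in x_k]


def cin_output(hidden_layers, w_out):
    """Concatenate pooled vectors of all hidden layers and apply a sigmoid.

    hidden_layers: list of matrices, one per CIN hidden layer.
    w_out:         one output weight per feature map across all layers.
    """
    p_plus = [p for x_k in hidden_layers for p in sum_pool(x_k)]
    logit = sum(w * p for w, p in zip(w_out, p_plus))
    # Standard sigmoid; assumed here, see the lead-in.
    return p_plus, 1.0 / (1.0 + math.exp(-logit))


# Toy input (hypothetical): two hidden layers with 2 and 1 feature maps, D = 2.
layers = [[[1.0, 2.0], [3.0, 4.0]],
          [[0.5, 0.5]]]
p_plus, y = cin_output(layers, [0.0, 0.0, 0.0])
```

The length of the concatenated vector equals the total number of feature maps across all hidden layers, so the output weight vector needs exactly that many entries.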

Combination with Implicit Networks

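The resulting output unit of xDeepFM becomes:

$$\hat{y} = \sigma\left( \mathbf{w}_{linear}^{\top} \mathbf{a} + \mathbf{w}_{dnn}^{\top} \mathbf{x}^k_{dnn} + \mathbf{w}_{cin}^{\top} \mathbf{p}^+ + b \right)$$

where $\sigma$ is the sigmoid function, $\mathbf{a}$ is the raw features, and $\mathbf{x}^k_{dnn}$ and $\mathbf{p}^+$ are the outputs of the plain DNN and CIN respectively. $\mathbf{w}_*$ and $b$ are learnable parameters.

For binary classification, the loss function is the log loss:

$$\mathcal{L} = -\frac{1}{N} \sum_{i=1}^{N} \left[ y_i \log \hat{y}_i + (1 - y_i) \log (1 - \hat{y}_i) \right]$$

where $N$ is the total number of training instances.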
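As a rough illustration of the combined output unit and the loss, the sketch below adds a linear term over the raw features, a term over the DNN output, and a term over the CIN pooling vector before a sigmoid, then scores predictions with the mean log loss (binary cross-entropy). All function and parameter names are hypothetical, and regularization is omitted.

```python
import math


def dot(w, v):
    """Inner product of two equal-length lists."""
    return sum(wi * vi for wi, vi in zip(w, v))


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def xdeepfm_output(a, x_dnn, p_plus, w_linear, w_dnn, w_cin, b):
    """Sigmoid over the sum of the linear, DNN and CIN contributions."""
    return sigmoid(dot(w_linear, a) + dot(w_dnn, x_dnn) + dot(w_cin, p_plus) + b)


def log_loss(y_true, y_pred):
    """Mean binary cross-entropy over N instances."""
    n = len(y_true)
    total = sum(y * math.log(p) + (1 - y) * math.log(1 - p)
                for y, p in zip(y_true, y_pred))
    return -total / n


# Toy check (hypothetical values): zero weights give a prediction of 0.5.
y_hat = xdeepfm_output(a=[1.0, 0.0], x_dnn=[0.3], p_plus=[2.0, 1.0],
                       w_linear=[0.0, 0.0], w_dnn=[0.0], w_cin=[0.0, 0.0],
                       b=0.0)
loss = log_loss([1, 0], [y_hat, y_hat])
```

With every prediction at 0.5 the loss equals $\ln 2$, the usual baseline for an uninformative binary classifier.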

Evaluation

CIN Analysis

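- Space complexity

- CIN: $\sum_{k=1}^{T} H_k \times (1 + H_{k-1} \times m)$ parameters, i.e. $O(mTH^2)$ when every hidden layer has $H$ feature maps; independent of the embedding dimension $D$

- when necessary, can exploit an $L$-order decomposition and replace $\mathbf{W}^{k,h}$ with two smaller matrices $\mathbf{U}^{k,h} \in \mathbb{R}^{H_{k-1} \times L}$ and $\mathbf{V}^{k,h} \in \mathbb{R}^{m \times L}$, where $\mathbf{W}^{k,h} = \mathbf{U}^{k,h} (\mathbf{V}^{k,h})^{\top}$ with $L \ll H$ and $L \ll m$; the complexity becomes $O(mTHL + TH^2L)$

- DNN: $O(mDH + TH^2)$, which is sensitive to the embedding dimension $D$

- Time complexity (major downside)

- CIN: $O(mH^2DT)$, versus $O(mHD + H^2T)$ for a plain DNN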
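To make the space comparison concrete, the sketch below counts parameters under stated assumptions: each CIN layer holds one (previous-layer rows) x (fields) weight matrix per feature map plus one output weight per feature map, and the plain DNN count ignores bias terms. The function names and example sizes are hypothetical.

```python
def cin_param_count(m, hidden_sizes):
    """Parameters of a CIN with the given feature-map counts per layer."""
    total = 0
    h_prev = m  # layer 0 is the field-embedding matrix with m rows
    for h_k in hidden_sizes:
        total += h_k * (1 + h_prev * m)  # filters plus one output weight each
        h_prev = h_k
    return total


def dnn_param_count(m, dim, hidden_sizes):
    """Weights of a plain DNN on the flattened m x dim embeddings (no biases)."""
    total = m * dim * hidden_sizes[0] + hidden_sizes[-1]
    for h_prev, h_k in zip(hidden_sizes, hidden_sizes[1:]):
        total += h_k * h_prev
    return total


def low_rank_filter_params(h_prev, m, rank):
    """Size of one filter after replacing it by two rank-limited factors."""
    return rank * (h_prev + m)


# Toy sizes (hypothetical): 3 fields, embedding dimension 4, two layers of 2.
cin_total = cin_param_count(m=3, hidden_sizes=[2, 2])
dnn_total = dnn_param_count(m=3, dim=4, hidden_sizes=[2, 2])
full_filter = 100 * 40
factored_filter = low_rank_filter_params(h_prev=100, m=40, rank=5)
```

The CIN count does not involve the embedding dimension at all, whereas the first DNN layer grows linearly with it. The factored filter is smaller only when the rank is well below both the previous layer width and the number of fields.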

The results indicate that, for practical datasets, higher-order interactions over sparse features are necessary: DNN, CrossNet and CIN all outperform FM significantly on all three datasets.

CIN is the best individual model, which demonstrates its effectiveness in modeling explicit high-order feature interactions.

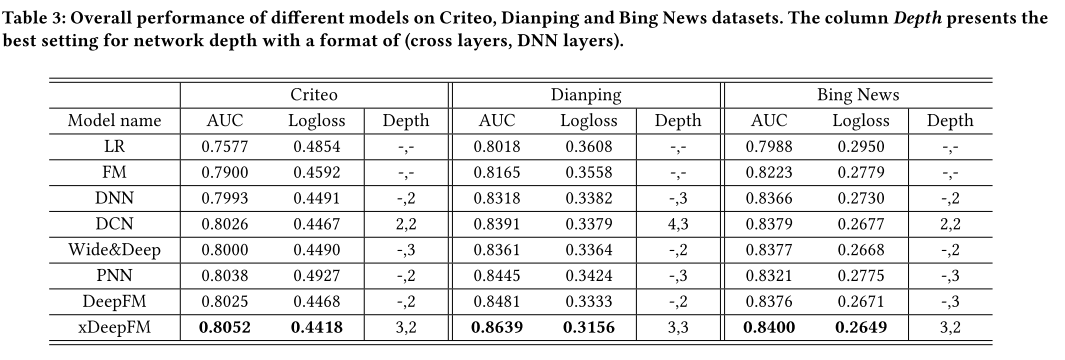

Overall performance of different models

- LR is far worse than all the other models, which demonstrates that factorization-based models are essential for measuring sparse features.

- Wide&Deep, DCN, DeepFM and xDeepFM are significantly better than DNN, which directly reflects that, despite their simplicity, incorporating hybrid components is important for boosting the accuracy of predictive systems.

- xDeepFM achieves the best performance on all datasets, which demonstrates that combining explicit and implicit high-order feature interaction is necessary.

Hyper-Parameter Study

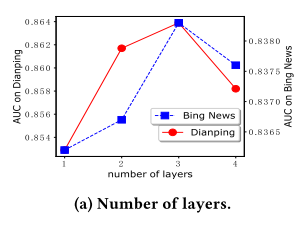

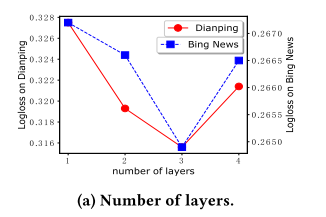

Depth of Network

Model performance degrades when the depth of the network is set greater than 3. This is caused by overfitting, as evidenced by the training loss still decreasing when more hidden layers are added.

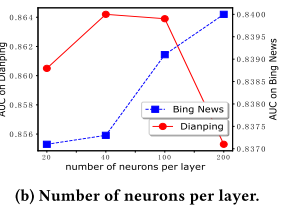

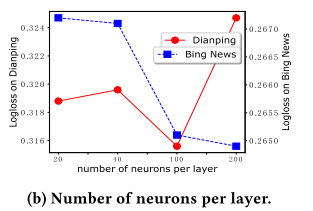

Number of Neurons per Layer

In this experiment we fix the depth of the network at 3.

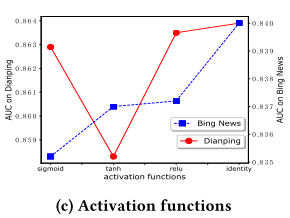

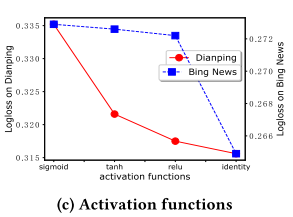

Activation Function

The identity function is indeed the most suitable activation function for neurons in CIN.

Conclusion

xDeepFM can automatically learn high-order feature interactions in both explicit and implicit fashions, which is of great significance to reducing manual feature engineering work. We conduct comprehensive experiments and the results demonstrate that our xDeepFM outperforms state-of-the-art models consistently on three real-world datasets.

Notes(optional)

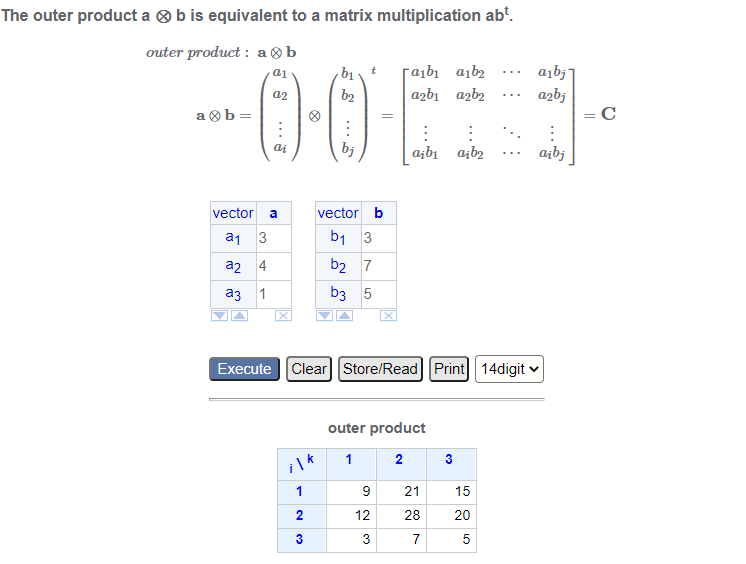

Outer Product

Reference(optional)

DeepFM

Huifeng Guo, Ruiming Tang, Yunming Ye, Zhenguo Li, and Xiuqiang He. 2017. DeepFM: A factorization-machine based neural network for CTR prediction. arXiv preprint arXiv:1703.04247 (2017).

DCN

Ruoxi Wang, Bin Fu, Gang Fu, and Mingliang Wang. 2017. Deep & Cross Network for Ad Click Predictions. arXiv preprint arXiv:1708.05123 (2017).