
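Lab environment: Alibaba Cloud ECS instances

Lab objectives

- Deploy a Kubernetes cluster from binaries, with containerd as the container runtime

- Gain an in-depth understanding of how each k8s component is configured

- Build a highly available cluster behind a load balancer (nginx + keepalived)

Lab steps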

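1. Planning

1.1 Environment preparation

- Recommended minimum hardware: 2 CPU cores, 2 GB RAM, 30 GB disk

- Container images are pulled from the internet (in an offline environment, download the images in advance and import them into each node)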

| Operating system | CentOS 7.x |
|---|---|
| Container runtime | Containerd v1.6.9 |
| Kubernetes | Kubernetes v1.25.2 |
| Etcd | Etcd v3.5.1 |
| Cfssl | Cfssl 1.2.0 |

- Server plan: 1 Master + 1 Node, or 2 Masters + 1 Node + 1 LB

| HostName | IP | Components |
|---|---|---|
| k8s-master01 | 192.168.22.88 | kube-apiserver, kube-controller-manager, kube-scheduler, kubelet, kube-proxy, containerd, etcd, nginx, keepalived |
| k8s-master02 (optional) | 192.168.22.89 | kube-apiserver, kube-controller-manager, kube-scheduler, kubelet, kube-proxy, containerd, etcd, nginx, keepalived |
| k8s-node01 | 192.168.22.90 | kubelet, kube-proxy, containerd, etcd |
| LbVip (optional) | 192.168.22.91 | SLB, or a layer-4 LB such as nginx |

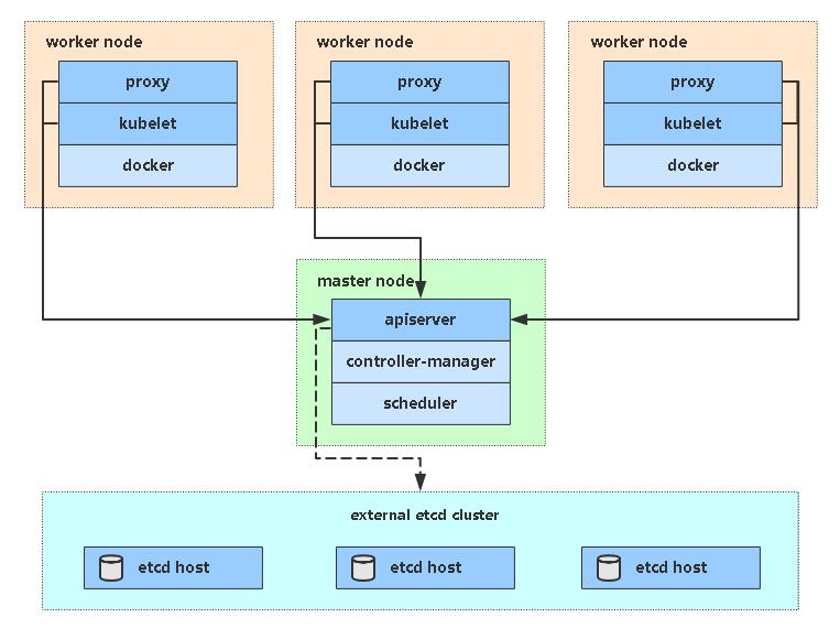

- Simple k8s architecture diagram

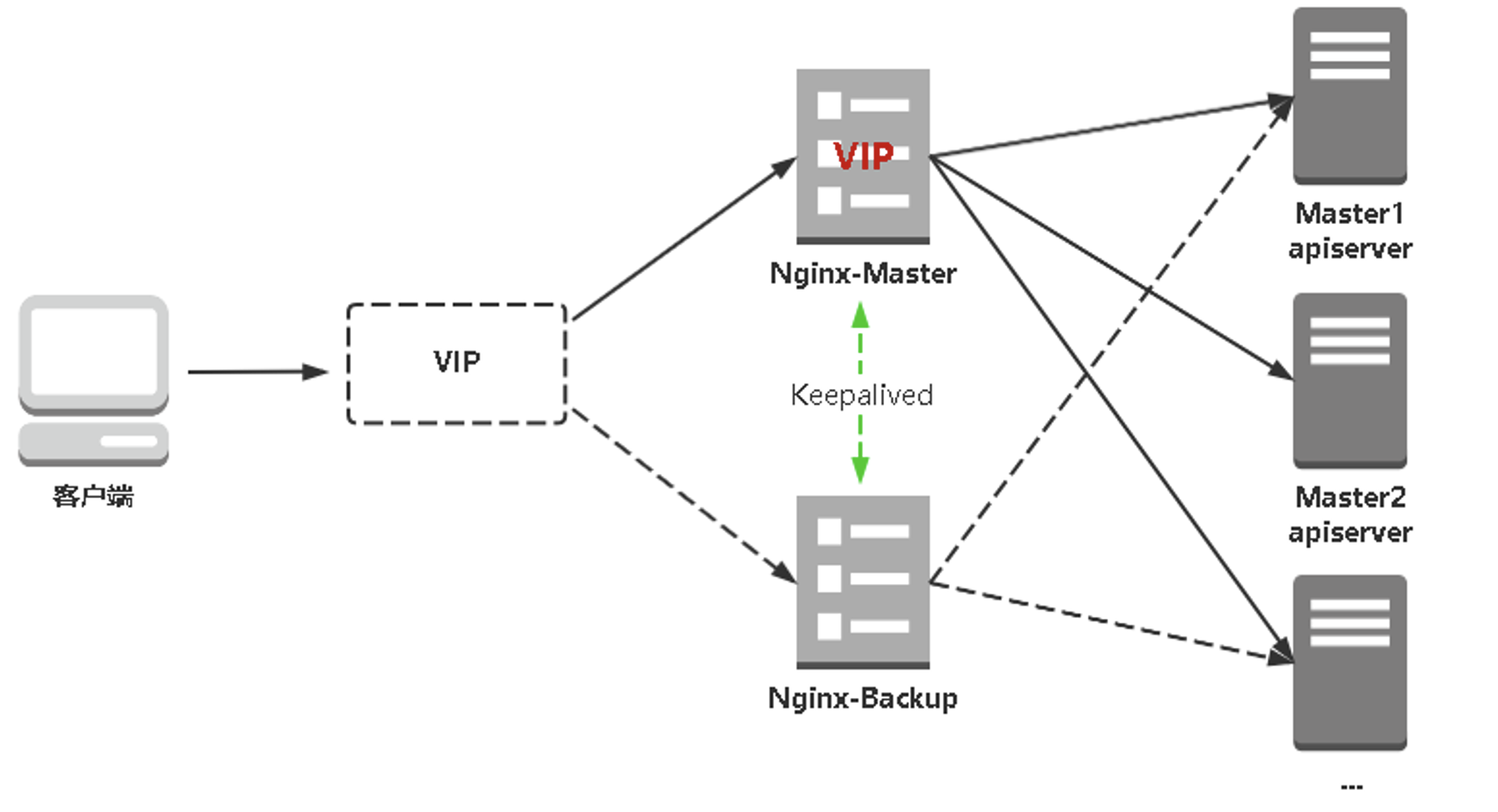

- Highly available cluster architecture diagram

1.2 Server initialization

```shell
# Disable the firewall
systemctl stop firewalld
systemctl disable firewalld

# Disable SELinux
sed -i 's/enforcing/disabled/' /etc/selinux/config  # permanent
setenforce 0  # temporary

# Disable swap
swapoff -a  # temporary
sed -ri 's/.*swap.*/#&/' /etc/fstab  # permanent

# Set the hostname according to the plan
hostnamectl set-hostname <hostname>

# Add hosts entries (on the master)
cat >> /etc/hosts << EOF
192.168.22.88 k8s-master01
192.168.22.89 k8s-master02
192.168.22.90 k8s-node01
EOF

# Install dependencies
yum -y install wget jq psmisc vim net-tools nfs-utils telnet yum-utils device-mapper-persistent-data lvm2 git network-scripts tar curl

# Enable IPVS
yum install ipvsadm ipset sysstat conntrack libseccomp -y
mkdir -p /etc/modules-load.d/
cat >> /etc/modules-load.d/ipvs.conf << EOF
ip_vs
ip_vs_rr
ip_vs_wrr
ip_vs_sh
nf_conntrack
ip_tables
ip_set
xt_set
ipt_set
ipt_rpfilter
ipt_REJECT
ipip
EOF
systemctl restart systemd-modules-load.service
lsmod | grep -e ip_vs -e nf_conntrack

# Pass bridged IPv4 traffic to the iptables chains
cat >> /etc/sysctl.d/k8s.conf << EOF
net.ipv4.ip_forward = 1
net.bridge.bridge-nf-call-iptables = 1
vm.overcommit_memory = 1
vm.panic_on_oom = 0
fs.inotify.max_user_watches = 89100
fs.file-max = 52706963
fs.nr_open = 52706963
net.netfilter.nf_conntrack_max = 2310720
net.ipv4.tcp_keepalive_time = 600
net.ipv4.tcp_keepalive_probes = 3
net.ipv4.tcp_keepalive_intvl = 15
net.ipv4.tcp_max_tw_buckets = 36000
net.ipv4.tcp_tw_reuse = 1
net.ipv4.tcp_max_orphans = 327680
net.ipv4.tcp_orphan_retries = 3
net.ipv4.tcp_syncookies = 1
net.ipv4.tcp_max_syn_backlog = 16384
net.ipv4.tcp_timestamps = 0
net.core.somaxconn = 16384
net.ipv6.conf.all.disable_ipv6 = 0
net.ipv6.conf.default.disable_ipv6 = 0
net.ipv6.conf.lo.disable_ipv6 = 0
net.ipv6.conf.all.forwarding = 1
EOF
modprobe br_netfilter
lsmod | grep conntrack
modprobe ip_conntrack
sysctl -p /etc/sysctl.d/k8s.conf  # apply

# Time synchronization
yum install ntpdate -y
ntpdate time.windows.com
```
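A mistake in the settings above often goes unnoticed until kubelet or kube-proxy misbehaves. The sketch below is a minimal read-back check written for bash. k8s_preflight is a hypothetical helper and not part of the original steps. Its optional root-prefix argument exists only so it can be tried against a fake directory tree.

```shell
#!/usr/bin/env bash
# Hypothetical preflight helper: checks that swap is off and that the key
# sysctl values from /etc/sysctl.d/k8s.conf are active.
# $1 = optional root prefix (default "" = the real /proc).
k8s_preflight() {
  local root="${1:-}" ok=0 key path val

  # /proc/swaps always has a header line; any further line is an active swap device.
  if [ "$(wc -l < "$root/proc/swaps")" -gt 1 ]; then
    echo "FAIL swap is still enabled"
    ok=1
  fi

  # Each sysctl key maps to a file under /proc/sys (dots become slashes).
  for key in net.ipv4.ip_forward net.bridge.bridge-nf-call-iptables; do
    path="$root/proc/sys/${key//.//}"
    if [ ! -r "$path" ]; then
      echo "FAIL $key not available (is br_netfilter loaded?)"
      ok=1
      continue
    fi
    val="$(cat "$path")"
    if [ "$val" != "1" ]; then
      echo "FAIL $key = $val (expected 1)"
      ok=1
    fi
  done

  [ "$ok" -eq 0 ] && echo "OK preflight passed"
  return "$ok"
}
```

Run `k8s_preflight` with no argument on each server after finishing 1.2. A non-zero exit status means at least one setting still needs attention.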

2. Deploy Containerd (on every server)

2.1 Install containerd

```shell
# Load the kernel modules containerd needs
cat <<EOF | sudo tee /etc/modules-load.d/containerd.conf
overlay
br_netfilter
EOF
systemctl restart systemd-modules-load.service

cat <<EOF | sudo tee /etc/sysctl.d/99-kubernetes-cri.conf
net.bridge.bridge-nf-call-iptables = 1
net.ipv4.ip_forward = 1
net.bridge.bridge-nf-call-ip6tables = 1
EOF
# Apply the kernel parameters
sysctl --system
```

```shell
# Add the Alibaba Cloud docker-ce repository
wget -O /etc/yum.repos.d/docker-ce.repo https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo
yum list | grep containerd
yum install -y containerd.io

# Generate the default containerd configuration file
mkdir /etc/containerd -p
containerd config default > /etc/containerd/config.toml

# Edit the configuration file
vim /etc/containerd/config.toml
# Change SystemdCgroup = false to SystemdCgroup = true
# Change sandbox_image = "k8s.gcr.io/pause:3.6"
# to     sandbox_image = "registry.aliyuncs.com/google_containers/pause:3.6"

systemctl enable containerd
systemctl start containerd
ctr version
runc --version
```
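The two vim edits can also be made with sed, which is easier to repeat on every server. The sketch below assumes GNU sed, as shipped with CentOS 7. patch_containerd_config is a hypothetical helper. It rewrites whatever sandbox_image value the generated default config contains, because that default can differ between containerd releases.

```shell
#!/usr/bin/env bash
# Hypothetical helper: applies the two config.toml edits described above.
# $1 = config path, $2 = pause image (both optional).
patch_containerd_config() {
  local cfg="${1:-/etc/containerd/config.toml}"
  local pause="${2:-registry.aliyuncs.com/google_containers/pause:3.6}"

  # Switch the runc shim to the systemd cgroup driver.
  sed -i 's/SystemdCgroup = false/SystemdCgroup = true/' "$cfg"
  # Replace the sandbox (pause) image, keeping the original indentation.
  sed -i "s#^\([[:space:]]*sandbox_image = \).*#\1\"${pause}\"#" "$cfg"

  # Print the resulting lines so the change can be checked by eye.
  grep -E 'SystemdCgroup|sandbox_image' "$cfg"
}
```

After running it, restart containerd with `systemctl restart containerd` so the new settings take effect.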

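Two options in the containerd systemd unit (containerd.service) matter here:

- **Delegate**: lets containerd and the runtime manage the cgroups of the containers they create. If it is not set, systemd moves the processes into its own cgroups, and containerd can then no longer report the containers' resource usage correctly.

- **KillMode**: controls how the containerd process is killed. By default, systemd looks in the process's cgroup and kills all of containerd's child processes. KillMode accepts the following values:

  - **control-group** (default): every child process in the current control group is killed
  - **process**: only the main process is killed
  - **mixed**: the main process receives SIGTERM, and the child processes receive SIGKILL
  - **none**: no process is killed; only the service's stop command is run

KillMode must be set to process so that upgrading or restarting containerd does not kill running containers.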
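The upstream containerd.service usually sets both options already, and `systemctl cat containerd` shows what your package ships. To pin them explicitly, you can use a systemd drop-in. The sketch below assumes that approach. The function name and the file name 10-k8s.conf are hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: writes a systemd drop-in that pins Delegate and
# KillMode for containerd. $1 = drop-in directory (defaults to the standard
# override location for containerd.service).
write_containerd_dropin() {
  local dir="${1:-/etc/systemd/system/containerd.service.d}"
  mkdir -p "$dir"
  cat > "$dir/10-k8s.conf" << 'EOF'
[Service]
Delegate=yes
KillMode=process
EOF
  # Print the path of the file that was written.
  echo "$dir/10-k8s.conf"
}
```

After writing the drop-in, run `systemctl daemon-reload && systemctl restart containerd`.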

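2.2 Configure registry mirrors

```toml
# vim /etc/containerd/config.toml
# The default config generated above already contains the registry and
# registry.mirrors tables: edit them in place instead of appending a second
# copy, because TOML rejects a table that is defined twice.
[plugins."io.containerd.grpc.v1.cri".registry]
  [plugins."io.containerd.grpc.v1.cri".registry.mirrors]
    [plugins."io.containerd.grpc.v1.cri".registry.mirrors."docker.io"]
      endpoint = ["https://gaatfjuv.mirror.aliyuncs.com"]
    [plugins."io.containerd.grpc.v1.cri".registry.mirrors."k8s.gcr.io"]
      endpoint = ["https://registry.aliyuncs.com/k8sxio"]
```

- **registry.mirrors."xxx"**: the registry to configure a mirror for; for example, registry.mirrors."docker.io" configures a mirror for docker.io.

- **endpoint**: the mirror service that accelerates pulls; for example, you can register an Alibaba Cloud image accelerator and use it as the mirror for docker.io.

```shell
# Restart after changing the configuration
systemctl restart containerd
```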

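3. Deploy the Etcd cluster

Etcd is a distributed key-value store, and Kubernetes keeps its data in Etcd, so we prepare an Etcd database first. To avoid a single point of failure, Etcd should run as a cluster. Here 3 machines form the cluster, which tolerates the failure of 1 machine. You can also use 5 machines, which tolerates the failure of 2.

3.1 Use the cfssl certificate tool

cfssl is an open-source certificate management tool. It generates certificates from JSON files and is easier to use than openssl. These steps are run on k8s-master01.

```shell
# Assumes the cfssl 1.2.0 binaries below have already been downloaded to the current directory
chmod +x cfssl_linux-amd64 cfssljson_linux-amd64 cfssl-certinfo_linux-amd64
mv cfssl_linux-amd64 /usr/local/bin/cfssl
mv cfssljson_linux-amd64 /usr/local/bin/cfssljson
mv cfssl-certinfo_linux-amd64 /usr/bin/cfssl-certinfo
```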

3.2 Generate the Etcd certificates

3.2.1 Create a working directory:

```shell
mkdir -p ~/TLS/{etcd,k8s}
cd ~/TLS/etcd
```

Create the self-signed CA configuration:

```shell
cat > ca-config.json << EOF
{
  "signing": {
    "default": {"expiry": "87600h"},
    "profiles": {
      "www": {
        "expiry": "87600h",
        "usages": ["signing", "key encipherment", "server auth", "client auth"]
      }
    }
  }
}
EOF

cat > ca-csr.json << EOF
{
  "CN": "etcd CA",
  "key": {"algo": "rsa", "size": 2048},
  "names": [{"C": "CN", "L": "Beijing", "ST": "Beijing"}]
}
EOF
```

Generate the CA certificate:

```shell
cfssl gencert -initca ca-csr.json | cfssljson -bare ca -
# Produces ca.pem and ca-key.pem
```

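3.2.2 Issue the Etcd HTTPS certificate with the self-signed CA

Create the certificate signing request file:

```shell
cat > server-csr.json << EOF
{
  "CN": "etcd",
  "hosts": [
    "192.168.22.88",
    "192.168.22.89",
    "192.168.22.90",
    "192.168.22.91",
    "192.168.22.92"
  ],
  "key": {"algo": "rsa", "size": 2048},
  "names": [{"C": "CN", "L": "BeiJing", "ST": "BeiJing"}]
}
EOF
```

Note: the hosts field must contain the internal cluster-communication IP of every etcd node, with none missing. To make later scale-out easier, you can add a few spare IPs. JSON does not allow a trailing comma after the last entry.

Generate the certificate:

```shell
cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=www server-csr.json | cfssljson -bare server
# Produces server.pem and server-key.pem
```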
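A missing IP in the hosts field only shows up later, as a TLS error between etcd members. You can catch it early by reading the Subject Alternative Names back from server.pem with openssl. check_cert_ips is a hypothetical helper that assumes openssl prints IP SANs in its usual "IP Address:x.x.x.x" form.

```shell
#!/usr/bin/env bash
# Hypothetical helper: prints the SANs of a PEM certificate and fails if any
# expected IP is missing.
# Usage: check_cert_ips server.pem 192.168.22.88 192.168.22.89 ...
check_cert_ips() {
  local cert="$1"; shift
  local sans ip missing=0
  # The SAN list is on the line after the "Subject Alternative Name" header.
  sans="$(openssl x509 -in "$cert" -noout -text | grep -A1 'Subject Alternative Name' | tail -n 1)"
  echo "SANs:${sans}"
  for ip in "$@"; do
    # Split on commas, trim leading spaces, then require an exact entry match.
    if ! tr ',' '\n' <<< "$sans" | sed 's/^[[:space:]]*//' | grep -qxF "IP Address:${ip}"; then
      echo "missing: $ip"
      missing=1
    fi
  done
  return "$missing"
}
```

For example, `check_cert_ips server.pem 192.168.22.88 192.168.22.89 192.168.22.90` should succeed for the etcd certificate generated above.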

3.3 Deploy Etcd

The following steps are run on k8s-master01. To keep things simple, all files generated on k8s-master01 are copied to the other nodes afterwards.

3.3.1 Create the working directories and extract the binary package

```shell
mkdir /opt/etcd/{bin,cfg,ssl} -p
tar zxvf etcd-v3.5.1-linux-amd64.tar.gz
mv etcd-v3.5.1-linux-amd64/{etcd,etcdctl} /opt/etcd/bin/
```

3.3.2 Create the etcd configuration file

- ETCD_NAME: node name, unique within the cluster
- ETCD_DATA_DIR: data directory
- ETCD_LISTEN_PEER_URLS: listen address for cluster (peer) traffic
- ETCD_LISTEN_CLIENT_URLS: listen address for client access
- ETCD_INITIAL_ADVERTISE_PEER_URLS: peer address advertised to the cluster
- ETCD_ADVERTISE_CLIENT_URLS: client address advertised to clients
- ETCD_INITIAL_CLUSTER: addresses of all cluster members
- ETCD_INITIAL_CLUSTER_TOKEN: cluster token
- ETCD_INITIAL_CLUSTER_STATE: state when joining; new means a new cluster, existing means joining an existing cluster

```shell
cat > /opt/etcd/cfg/etcd.conf << EOF
#[Member]
ETCD_NAME="etcd-1"
ETCD_DATA_DIR="/var/lib/etcd/default.etcd"
ETCD_LISTEN_PEER_URLS="https://192.168.22.88:2380"
ETCD_LISTEN_CLIENT_URLS="https://192.168.22.88:2379"
#[Clustering]
ETCD_INITIAL_ADVERTISE_PEER_URLS="https://192.168.22.88:2380"
ETCD_ADVERTISE_CLIENT_URLS="https://192.168.22.88:2379"
ETCD_INITIAL_CLUSTER="etcd-1=https://192.168.22.88:2380,etcd-2=https://192.168.22.89:2380,etcd-3=https://192.168.22.90:2380"
ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"
ETCD_INITIAL_CLUSTER_STATE="new"
EOF
```

3.3.3 Manage etcd with systemd

```shell
cat > /usr/lib/systemd/system/etcd.service << EOF
[Unit]
Description=Etcd Server
After=network.target
After=network-online.target
Wants=network-online.target

[Service]
Type=notify
EnvironmentFile=/opt/etcd/cfg/etcd.conf
ExecStart=/opt/etcd/bin/etcd \
--cert-file=/opt/etcd/ssl/server.pem \
--key-file=/opt/etcd/ssl/server-key.pem \
--peer-cert-file=/opt/etcd/ssl/server.pem \
--peer-key-file=/opt/etcd/ssl/server-key.pem \
--trusted-ca-file=/opt/etcd/ssl/ca.pem \
--peer-trusted-ca-file=/opt/etcd/ssl/ca.pem \
--logger=zap
Restart=on-failure
LimitNOFILE=65536

[Install]
WantedBy=multi-user.target
EOF
```

3.3.4 Copy the certificates generated earlier

```shell
# Copy the certificates to the paths referenced in the configuration
cp ~/TLS/etcd/ca*pem ~/TLS/etcd/server*pem /opt/etcd/ssl/
```

3.3.5 Start etcd and enable it at boot

```shell
systemctl daemon-reload
systemctl start etcd
systemctl enable etcd
# On this first member, "systemctl start etcd" may appear to hang until the
# other members are started in 3.4, because a new cluster first needs a quorum.
```

3.4 Deploy the Etcd cluster

3.4.1 Copy all files generated on k8s-master01 to k8s-master02 and k8s-node01, then configure them

```shell
scp -r /opt/etcd/ root@192.168.22.89:/opt/
scp /usr/lib/systemd/system/etcd.service root@192.168.22.89:/usr/lib/systemd/system/
scp -r /opt/etcd/ root@192.168.22.90:/opt/
scp /usr/lib/systemd/system/etcd.service root@192.168.22.90:/usr/lib/systemd/system/
```

On k8s-master02 and k8s-node01, change the node name and the current server's IP in etcd.conf:

```shell
# On k8s-master02: ETCD_NAME becomes etcd-2, and every URL except
# ETCD_INITIAL_CLUSTER uses this server's IP
vim /opt/etcd/cfg/etcd.conf
#[Member]
ETCD_NAME="etcd-2"
ETCD_DATA_DIR="/var/lib/etcd/default.etcd"
ETCD_LISTEN_PEER_URLS="https://192.168.22.89:2380"
ETCD_LISTEN_CLIENT_URLS="https://192.168.22.89:2379"
#[Clustering]
ETCD_INITIAL_ADVERTISE_PEER_URLS="https://192.168.22.89:2380"
ETCD_ADVERTISE_CLIENT_URLS="https://192.168.22.89:2379"
ETCD_INITIAL_CLUSTER="etcd-1=https://192.168.22.88:2380,etcd-2=https://192.168.22.89:2380,etcd-3=https://192.168.22.90:2380"
ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"
ETCD_INITIAL_CLUSTER_STATE="new"
```

```shell
# On k8s-node01: ETCD_NAME becomes etcd-3, and every URL except
# ETCD_INITIAL_CLUSTER uses this server's IP
vim /opt/etcd/cfg/etcd.conf
#[Member]
ETCD_NAME="etcd-3"
ETCD_DATA_DIR="/var/lib/etcd/default.etcd"
ETCD_LISTEN_PEER_URLS="https://192.168.22.90:2380"
ETCD_LISTEN_CLIENT_URLS="https://192.168.22.90:2379"
#[Clustering]
ETCD_INITIAL_ADVERTISE_PEER_URLS="https://192.168.22.90:2380"
ETCD_ADVERTISE_CLIENT_URLS="https://192.168.22.90:2379"
ETCD_INITIAL_CLUSTER="etcd-1=https://192.168.22.88:2380,etcd-2=https://192.168.22.89:2380,etcd-3=https://192.168.22.90:2380"
ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"
ETCD_INITIAL_CLUSTER_STATE="new"
```
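Because only ETCD_NAME and the member's own IP differ between nodes, you can render the file instead of editing it by hand. render_etcd_conf is a hypothetical helper. It assumes the same three-member ETCD_INITIAL_CLUSTER string and data directory used throughout this section.

```shell
#!/usr/bin/env bash
# Hypothetical helper: renders etcd.conf for one member.
# Usage: render_etcd_conf <name> <ip> > /opt/etcd/cfg/etcd.conf
ETCD_CLUSTER="etcd-1=https://192.168.22.88:2380,etcd-2=https://192.168.22.89:2380,etcd-3=https://192.168.22.90:2380"

render_etcd_conf() {
  local name="$1" ip="$2"
  cat << EOF
#[Member]
ETCD_NAME="${name}"
ETCD_DATA_DIR="/var/lib/etcd/default.etcd"
ETCD_LISTEN_PEER_URLS="https://${ip}:2380"
ETCD_LISTEN_CLIENT_URLS="https://${ip}:2379"
#[Clustering]
ETCD_INITIAL_ADVERTISE_PEER_URLS="https://${ip}:2380"
ETCD_ADVERTISE_CLIENT_URLS="https://${ip}:2379"
ETCD_INITIAL_CLUSTER="${ETCD_CLUSTER}"
ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"
ETCD_INITIAL_CLUSTER_STATE="new"
EOF
}
```

For example, on k8s-node01 you would run `render_etcd_conf etcd-3 192.168.22.90 > /opt/etcd/cfg/etcd.conf`.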

3.4.2 Start etcd and enable it at boot

```shell
systemctl daemon-reload
systemctl start etcd
systemctl enable etcd
```

3.4.3 Check the cluster status

```shell
ETCDCTL_API=3 /opt/etcd/bin/etcdctl --cacert=/opt/etcd/ssl/ca.pem --cert=/opt/etcd/ssl/server.pem --key=/opt/etcd/ssl/server-key.pem --endpoints="https://192.168.22.88:2379,https://192.168.22.89:2379,https://192.168.22.90:2379" endpoint health --write-out=table
+----------------------------+--------+-------------+-------+
|          ENDPOINT          | HEALTH |    TOOK     | ERROR |
+----------------------------+--------+-------------+-------+
| https://192.168.22.88:2379 |   true | 10.301506ms |       |
| https://192.168.22.89:2379 |   true |  12.87467ms |       |
| https://192.168.22.90:2379 |   true | 13.225954ms |       |
+----------------------------+--------+-------------+-------+
# If there are errors, check the logs with: journalctl -u etcd
```
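The fault-tolerance figures at the start of section 3 (3 members tolerate 1 failure, 5 tolerate 2) follow from the quorum rule: a quorum is floor(n/2) + 1, and the cluster tolerates n minus quorum failures. The hypothetical helper below applies that rule to the JSON form of the health check above. It assumes jq, which was installed in 1.2. It also assumes that `--write-out=json` prints an array of objects with a boolean health field, so confirm that against your etcdctl version.

```shell
#!/usr/bin/env bash
# Hypothetical helper: reads `etcdctl endpoint health --write-out=json` on
# stdin and succeeds only if at least a quorum of members is healthy.
etcd_quorum_report() {
  local json total healthy quorum
  json="$(cat)"
  total="$(jq 'length' <<< "$json")"
  healthy="$(jq '[.[] | select(.health == true)] | length' <<< "$json")"
  # Raft quorum for n members is floor(n/2) + 1.
  quorum=$(( total / 2 + 1 ))
  echo "members=${total} healthy=${healthy} quorum=${quorum} tolerates=$(( total - quorum ))"
  [ "$healthy" -ge "$quorum" ]
}
```

Usage: take the etcdctl command above, replace `--write-out=table` with `--write-out=json`, and pipe the output into `etcd_quorum_report`.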

4. Deploy the Master node

4.1 Generate the kube-apiserver certificates

```shell
# Self-signed certificate authority (CA)
cd ~/TLS/k8s
cat > ca-config.json << EOF
{
  "signing": {
    "default": {"expiry": "87600h"},
    "profiles": {
      "kubernetes": {
        "expiry": "87600h",
        "usages": ["signing", "key encipherment", "server auth", "client auth"]
      }
    }
  }
}
EOF
cat > ca-csr.json << EOF
{
  "CN": "kubernetes",
  "key": {"algo": "rsa", "size": 2048},
  "names": [{"C": "CN", "L": "Beijing", "ST": "Beijing", "O": "k8s", "OU": "System"}]
}
EOF
```

Generate the CA certificate:

```shell
cfssl gencert -initca ca-csr.json | cfssljson -bare ca -
```

4.2 Issue the kube-apiserver HTTPS certificate with the self-signed CA

Create the certificate signing request file. The IPs in the hosts field are all Master/LB/VIP IPs. To make later scale-out easier, you can add a few spare IPs.

```shell
cat > server-csr.json << EOF
{
  "CN": "kubernetes",
  "hosts": [
    "10.0.0.1",
    "127.0.0.1",
    "192.168.22.88",
    "192.168.22.89",
    "192.168.22.90",
    "192.168.22.91",
    "192.168.22.92",
    "kubernetes",
    "kubernetes.default",
    "kubernetes.default.svc",
    "kubernetes.default.svc.cluster",
    "kubernetes.default.svc.cluster.local"
  ],
  "key": {"algo": "rsa", "size": 2048},
  "names": [{"C": "CN", "L": "BeiJing", "ST": "BeiJing", "O": "k8s", "OU": "System"}]
}
EOF
```

Generate the certificate:

```shell
cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes server-csr.json | cfssljson -bare server
# Produces server.pem and server-key.pem
```
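The 10.0.0.1 entry in the hosts list is the first address of --service-cluster-ip-range=10.0.0.0/24, which is configured in 4.4.1. That address becomes the ClusterIP of the built-in kubernetes Service, so the certificate must cover it. If you change the Service range, you can recompute the address with the hypothetical helper below, which uses plain bash arithmetic. The clusterDNS address used later (10.0.0.2) must also lie inside this range.

```shell
#!/usr/bin/env bash
# Hypothetical helper: prints the first usable address of an IPv4 CIDR,
# e.g. the "kubernetes" Service ClusterIP for a --service-cluster-ip-range.
first_service_ip() {
  local cidr="$1" ip prefix a b c d n mask
  ip="${cidr%/*}"
  prefix="${cidr#*/}"
  IFS=. read -r a b c d <<< "$ip"
  # Pack the four octets into one integer (bash arithmetic is 64-bit).
  n=$(( (a << 24) | (b << 16) | (c << 8) | d ))
  # Network mask for the prefix, truncated to 32 bits.
  mask=$(( prefix == 0 ? 0 : (0xFFFFFFFF << (32 - prefix)) & 0xFFFFFFFF ))
  # Network address + 1 = first usable address.
  n=$(( (n & mask) + 1 ))
  echo "$(( (n >> 24) & 255 )).$(( (n >> 16) & 255 )).$(( (n >> 8) & 255 )).$(( n & 255 ))"
}
```

For example, `first_service_ip 10.0.0.0/24` prints 10.0.0.1, which matches the first entry in server-csr.json.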

4.3 Extract the binary package

```shell
# Downloads and release notes: https://github.com/kubernetes/kubernetes/blob/master/CHANGELOG/CHANGELOG-1.25.md
mkdir -p /opt/kubernetes/{bin,cfg,ssl,logs}
tar zxvf kubernetes-server-linux-amd64.tar.gz
cd kubernetes/server/bin
cp kube-apiserver kube-scheduler kube-controller-manager /opt/kubernetes/bin
cp kubectl /usr/bin/
```

4.4 Deploy kube-apiserver

4.4.1 Create the configuration file

- --logtostderr: log to stderr; false here, so logs are written to files under --log-dir
- --v: log verbosity level
- --log-dir: log directory
- --etcd-servers: Etcd cluster addresses
- --bind-address: listen address
- --secure-port: HTTPS secure port
- --advertise-address: address advertised to the cluster
- --allow-privileged: allow privileged containers
- --service-cluster-ip-range: virtual IP range for Services
- --enable-admission-plugins: admission control plugins
- --authorization-mode: authorization modes; enables RBAC and Node authorization (which limits each kubelet to its own node's objects)
- --enable-bootstrap-token-auth: enable the TLS bootstrap mechanism
- --token-auth-file: bootstrap token file
- --service-node-port-range: default port range allocated to NodePort Services
- --kubelet-client-xxx: client certificate the apiserver uses to access kubelets
- --tls-xxx-file: apiserver HTTPS certificate
- Required since 1.20: --service-account-issuer, --service-account-signing-key-file
- --etcd-xxxfile: certificates for connecting to the Etcd cluster
- --audit-log-xxx: audit log
- Aggregation layer settings: --requestheader-client-ca-file, --proxy-client-cert-file, --proxy-client-key-file, --requestheader-allowed-names, --requestheader-extra-headers-prefix, --requestheader-group-headers, --requestheader-username-headers, --enable-aggregator-routing

```shell
cat > /opt/kubernetes/cfg/kube-apiserver.conf << EOF
KUBE_APISERVER_OPTS="--logtostderr=false \\
--v=2 \\
--log-dir=/opt/kubernetes/logs \\
--etcd-servers=https://192.168.22.88:2379,https://192.168.22.89:2379,https://192.168.22.90:2379 \\
--bind-address=192.168.22.88 \\
--secure-port=6443 \\
--advertise-address=192.168.22.88 \\
--allow-privileged=true \\
--service-cluster-ip-range=10.0.0.0/24 \\
--enable-admission-plugins=NodeRestriction \\
--authorization-mode=RBAC,Node \\
--enable-bootstrap-token-auth=true \\
--token-auth-file=/opt/kubernetes/cfg/token.csv \\
--service-node-port-range=30000-32767 \\
--kubelet-client-certificate=/opt/kubernetes/ssl/server.pem \\
--kubelet-client-key=/opt/kubernetes/ssl/server-key.pem \\
--tls-cert-file=/opt/kubernetes/ssl/server.pem \\
--tls-private-key-file=/opt/kubernetes/ssl/server-key.pem \\
--client-ca-file=/opt/kubernetes/ssl/ca.pem \\
--service-account-key-file=/opt/kubernetes/ssl/ca-key.pem \\
--service-account-issuer=api \\
--service-account-signing-key-file=/opt/kubernetes/ssl/ca-key.pem \\
--etcd-cafile=/opt/etcd/ssl/ca.pem \\
--etcd-certfile=/opt/etcd/ssl/server.pem \\
--etcd-keyfile=/opt/etcd/ssl/server-key.pem \\
--requestheader-client-ca-file=/opt/kubernetes/ssl/ca.pem \\
--proxy-client-cert-file=/opt/kubernetes/ssl/server.pem \\
--proxy-client-key-file=/opt/kubernetes/ssl/server-key.pem \\
--requestheader-allowed-names=kubernetes \\
--requestheader-extra-headers-prefix=X-Remote-Extra- \\
--requestheader-group-headers=X-Remote-Group \\
--requestheader-username-headers=X-Remote-User \\
--enable-aggregator-routing=true \\
--audit-log-maxage=30 \\
--audit-log-maxbackup=3 \\
--audit-log-maxsize=100 \\
--audit-log-path=/opt/kubernetes/logs/k8s-audit.log"
EOF
```

Copy the certificates generated earlier:

```shell
cp ~/TLS/k8s/ca*pem ~/TLS/k8s/server*pem /opt/kubernetes/ssl/
```

4.4.2 启用 TLS Bootstrapping 机制

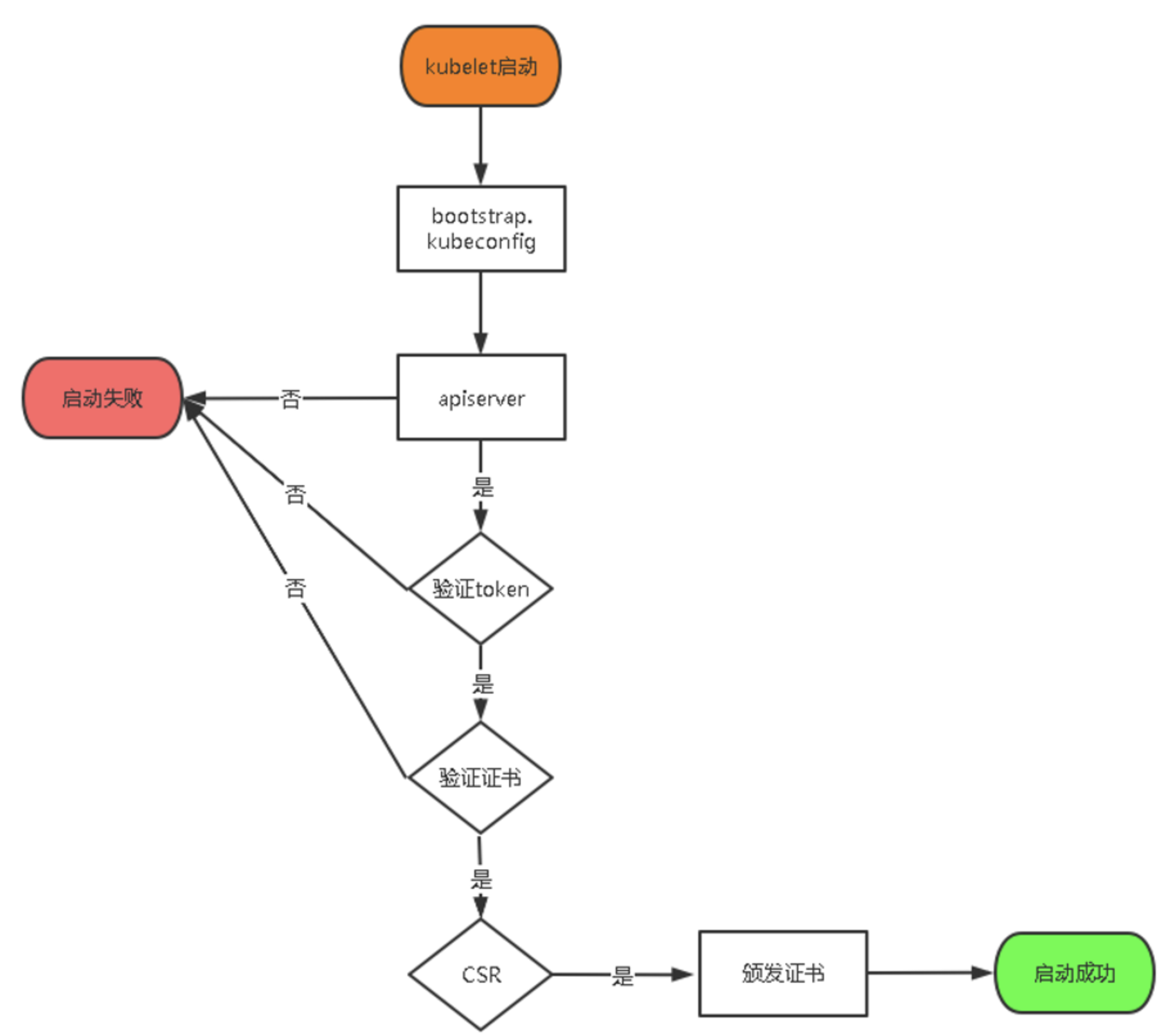

TLS Bootstraping:Master apiserver启用TLS认证后,Node节点kubelet和kube-proxy要与kube-apiserver进行通信,必须使用CA签发的有效证书才可以,当Node节点很多时,这种客户端证书颁发需要大量工作,同样也会增加集群扩展复杂度。为了简化流程,Kubernetes引入了TLS bootstraping机制来自动颁发客户端证书,kubelet会以一个低权限用户自动向apiserver申请证书,kubelet的证书由apiserver动态签署。所以强烈建议在Node上使用这种方式,目前主要用于kubelet,kube-proxy还是由我们统一颁发一个证书。 TLS bootstraping 工作流程:

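Create the token file (format: token,user name,UID,user group):

```shell
cat > /opt/kubernetes/cfg/token.csv << EOF
c47ffb939f5ca36231d9e3121a252940,kubelet-bootstrap,10001,"system:node-bootstrapper"
EOF
```

You can also generate your own token and use it instead. It must then also be used as TOKEN in 4.7.4:

```shell
head -c 16 /dev/urandom | od -An -t x | tr -d ' '
```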
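The two steps can be combined so that a fresh token and token.csv are always produced together. write_bootstrap_token is a hypothetical helper. It uses the same generator command as above and the same user, UID and group as the token.csv shown earlier.

```shell
#!/usr/bin/env bash
# Hypothetical helper: generates a random 32-hex-character bootstrap token and
# writes token.csv as: token,user name,UID,"group"
# $1 = output file (defaults to /opt/kubernetes/cfg/token.csv).
write_bootstrap_token() {
  local out="${1:-/opt/kubernetes/cfg/token.csv}" token
  token="$(head -c 16 /dev/urandom | od -An -t x | tr -d ' ')"
  echo "${token},kubelet-bootstrap,10001,\"system:node-bootstrapper\"" > "$out"
  # Print the token: reuse it as TOKEN when building bootstrap.kubeconfig.
  echo "$token"
}
```

Restart kube-apiserver after replacing token.csv so that it loads the new token.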

4.4.3 Manage kube-apiserver with systemd

```shell
cat > /usr/lib/systemd/system/kube-apiserver.service << EOF
[Unit]
Description=Kubernetes API Server
Documentation=https://github.com/kubernetes/kubernetes

[Service]
EnvironmentFile=/opt/kubernetes/cfg/kube-apiserver.conf
ExecStart=/opt/kubernetes/bin/kube-apiserver \$KUBE_APISERVER_OPTS
Restart=on-failure

[Install]
WantedBy=multi-user.target
EOF
```

4.4.4 Start kube-apiserver and enable it at boot

```shell
systemctl daemon-reload
systemctl start kube-apiserver
systemctl enable kube-apiserver
```

4.5 Deploy kube-controller-manager

4.5.1 Create the configuration file

- --kubeconfig: kubeconfig file used to connect to the apiserver
- --leader-elect: automatic leader election when several instances run (HA)
- --cluster-signing-cert-file/--cluster-signing-key-file: CA used to issue kubelet certificates automatically; keep it the same as the apiserver's CA

```shell
cat > /opt/kubernetes/cfg/kube-controller-manager.conf << EOF
KUBE_CONTROLLER_MANAGER_OPTS="--logtostderr=false \\
--v=2 \\
--log-dir=/opt/kubernetes/logs \\
--leader-elect=true \\
--kubeconfig=/opt/kubernetes/cfg/kube-controller-manager.kubeconfig \\
--bind-address=127.0.0.1 \\
--allocate-node-cidrs=true \\
--cluster-cidr=10.244.0.0/16 \\
--service-cluster-ip-range=10.0.0.0/24 \\
--cluster-signing-cert-file=/opt/kubernetes/ssl/ca.pem \\
--cluster-signing-key-file=/opt/kubernetes/ssl/ca-key.pem \\
--root-ca-file=/opt/kubernetes/ssl/ca.pem \\
--service-account-private-key-file=/opt/kubernetes/ssl/ca-key.pem \\
--cluster-signing-duration=87600h0m0s"
EOF
```

4.5.2 Generate the kubeconfig file

Generate the kube-controller-manager certificate:

```shell
# Switch to the working directory
cd ~/TLS/k8s
# Create the certificate signing request file
cat > kube-controller-manager-csr.json << EOF
{
  "CN": "system:kube-controller-manager",
  "hosts": [],
  "key": {"algo": "rsa", "size": 2048},
  "names": [{"C": "CN", "L": "BeiJing", "ST": "BeiJing", "O": "system:masters", "OU": "System"}]
}
EOF
# Generate the certificate
cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-controller-manager-csr.json | cfssljson -bare kube-controller-manager
```

4.5.3 Run the following commands in a terminal (from ~/TLS/k8s, where the certificates were generated)

```shell
KUBE_CONFIG="/opt/kubernetes/cfg/kube-controller-manager.kubeconfig"
KUBE_APISERVER="https://192.168.22.88:6443"

kubectl config set-cluster kubernetes \
  --certificate-authority=/opt/kubernetes/ssl/ca.pem \
  --embed-certs=true \
  --server=${KUBE_APISERVER} \
  --kubeconfig=${KUBE_CONFIG}
kubectl config set-credentials kube-controller-manager \
  --client-certificate=./kube-controller-manager.pem \
  --client-key=./kube-controller-manager-key.pem \
  --embed-certs=true \
  --kubeconfig=${KUBE_CONFIG}
kubectl config set-context default \
  --cluster=kubernetes \
  --user=kube-controller-manager \
  --kubeconfig=${KUBE_CONFIG}
kubectl config use-context default --kubeconfig=${KUBE_CONFIG}
```
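This sequence of four `kubectl config` calls is repeated for kube-scheduler, the admin user and kube-proxy. Wrapping it in a function makes typos such as a wrong apiserver IP less likely. make_kubeconfig is a hypothetical helper. It calls only the kubectl subcommands and flags already used above, and it defaults the server to k8s-master01's apiserver address.

```shell
#!/usr/bin/env bash
# Hypothetical helper wrapping the four `kubectl config` calls used for every
# component kubeconfig in this guide.
# Usage: make_kubeconfig <out-file> <user> <cert.pem> <key.pem> [server]
make_kubeconfig() {
  local out="$1" user="$2" cert="$3" key="$4"
  local server="${5:-https://192.168.22.88:6443}"

  kubectl config set-cluster kubernetes \
    --certificate-authority=/opt/kubernetes/ssl/ca.pem \
    --embed-certs=true \
    --server="${server}" \
    --kubeconfig="${out}"
  kubectl config set-credentials "${user}" \
    --client-certificate="${cert}" \
    --client-key="${key}" \
    --embed-certs=true \
    --kubeconfig="${out}"
  kubectl config set-context default \
    --cluster=kubernetes \
    --user="${user}" \
    --kubeconfig="${out}"
  kubectl config use-context default --kubeconfig="${out}"
}
```

For example, running `make_kubeconfig /opt/kubernetes/cfg/kube-scheduler.kubeconfig kube-scheduler ./kube-scheduler.pem ./kube-scheduler-key.pem` from ~/TLS/k8s reproduces the kube-scheduler kubeconfig built in 4.6.2.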

4.5.4 Manage kube-controller-manager with systemd

```shell
cat > /usr/lib/systemd/system/kube-controller-manager.service << EOF
[Unit]
Description=Kubernetes Controller Manager
Documentation=https://github.com/kubernetes/kubernetes

[Service]
EnvironmentFile=/opt/kubernetes/cfg/kube-controller-manager.conf
ExecStart=/opt/kubernetes/bin/kube-controller-manager \$KUBE_CONTROLLER_MANAGER_OPTS
Restart=on-failure

[Install]
WantedBy=multi-user.target
EOF
```

4.5.5 Start kube-controller-manager and enable it at boot

```shell
systemctl daemon-reload
systemctl start kube-controller-manager
systemctl enable kube-controller-manager
```

4.6 Deploy kube-scheduler

4.6.1 Create the configuration file

- --kubeconfig: kubeconfig file used to connect to the apiserver
- --leader-elect: automatic leader election when several instances run (HA)

```shell
cat > /opt/kubernetes/cfg/kube-scheduler.conf << EOF
KUBE_SCHEDULER_OPTS="--logtostderr=false \\
--v=2 \\
--log-dir=/opt/kubernetes/logs \\
--leader-elect \\
--kubeconfig=/opt/kubernetes/cfg/kube-scheduler.kubeconfig \\
--bind-address=127.0.0.1"
EOF
```

4.6.2 Generate the kubeconfig file

Generate the kube-scheduler certificate:

```shell
# Switch to the working directory
cd ~/TLS/k8s
# Create the certificate signing request file
cat > kube-scheduler-csr.json << EOF
{
  "CN": "system:kube-scheduler",
  "hosts": [],
  "key": {"algo": "rsa", "size": 2048},
  "names": [{"C": "CN", "L": "BeiJing", "ST": "BeiJing", "O": "system:masters", "OU": "System"}]
}
EOF
# Generate the certificate
cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-scheduler-csr.json | cfssljson -bare kube-scheduler
```

Generate the kubeconfig file:

```shell
KUBE_CONFIG="/opt/kubernetes/cfg/kube-scheduler.kubeconfig"
KUBE_APISERVER="https://192.168.22.88:6443"

kubectl config set-cluster kubernetes \
  --certificate-authority=/opt/kubernetes/ssl/ca.pem \
  --embed-certs=true \
  --server=${KUBE_APISERVER} \
  --kubeconfig=${KUBE_CONFIG}
kubectl config set-credentials kube-scheduler \
  --client-certificate=./kube-scheduler.pem \
  --client-key=./kube-scheduler-key.pem \
  --embed-certs=true \
  --kubeconfig=${KUBE_CONFIG}
kubectl config set-context default \
  --cluster=kubernetes \
  --user=kube-scheduler \
  --kubeconfig=${KUBE_CONFIG}
kubectl config use-context default --kubeconfig=${KUBE_CONFIG}
```

4.6.3 Manage kube-scheduler with systemd

```shell
cat > /usr/lib/systemd/system/kube-scheduler.service << EOF
[Unit]
Description=Kubernetes Scheduler
Documentation=https://github.com/kubernetes/kubernetes

[Service]
EnvironmentFile=/opt/kubernetes/cfg/kube-scheduler.conf
ExecStart=/opt/kubernetes/bin/kube-scheduler \$KUBE_SCHEDULER_OPTS
Restart=on-failure

[Install]
WantedBy=multi-user.target
EOF
```

4.6.4 Start kube-scheduler and enable it at boot

```shell
systemctl daemon-reload
systemctl start kube-scheduler
systemctl enable kube-scheduler
```

4.6.5 Check the cluster status

Generate the certificate kubectl uses to connect to the cluster:

```shell
cat > admin-csr.json << EOF
{
  "CN": "admin",
  "hosts": [],
  "key": {"algo": "rsa", "size": 2048},
  "names": [{"C": "CN", "L": "BeiJing", "ST": "BeiJing", "O": "system:masters", "OU": "System"}]
}
EOF
cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes admin-csr.json | cfssljson -bare admin
```

Generate the kubeconfig file:

```shell
mkdir /root/.kube
KUBE_CONFIG="/root/.kube/config"
KUBE_APISERVER="https://192.168.22.88:6443"

kubectl config set-cluster kubernetes \
  --certificate-authority=/opt/kubernetes/ssl/ca.pem \
  --embed-certs=true \
  --server=${KUBE_APISERVER} \
  --kubeconfig=${KUBE_CONFIG}
kubectl config set-credentials cluster-admin \
  --client-certificate=./admin.pem \
  --client-key=./admin-key.pem \
  --embed-certs=true \
  --kubeconfig=${KUBE_CONFIG}
kubectl config set-context default \
  --cluster=kubernetes \
  --user=cluster-admin \
  --kubeconfig=${KUBE_CONFIG}
kubectl config use-context default --kubeconfig=${KUBE_CONFIG}
```

Use kubectl to view the status of the cluster components:

```shell
kubectl get cs
NAME                 STATUS    MESSAGE             ERROR
scheduler            Healthy   ok
controller-manager   Healthy   ok
etcd-2               Healthy   {"health":"true"}
etcd-1               Healthy   {"health":"true"}
etcd-0               Healthy   {"health":"true"}
# Output like the above means the Master components are running normally.
```

4.6.6 Authorize the kubelet-bootstrap user to request certificates

```shell
kubectl create clusterrolebinding kubelet-bootstrap \
  --clusterrole=system:node-bootstrapper \
  --user=kubelet-bootstrap
```

4.7 Deploy kubelet

4.7.1 Create the working directories and copy the binaries

```shell
mkdir -p /opt/kubernetes/{bin,cfg,ssl,logs}
cd kubernetes/server/bin
cp kubelet kube-proxy /opt/kubernetes/bin  # local copy
```

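4.7.2 Create the configuration file

- --hostname-override: display name, unique in the cluster
- --network-plugin: enable CNI (not set in the configuration below)
- --kubeconfig: path to a file that does not exist yet; it is generated automatically and then used to connect to the apiserver
- --bootstrap-kubeconfig: used on first start to request a certificate from the apiserver
- --config: configuration parameter file
- --cert-dir: directory where kubelet certificates are generated
- --pod-infra-container-image: image of the container that manages Pod networking (not set below; the pause image comes from sandbox_image in the containerd configuration)

```shell
cat > /opt/kubernetes/cfg/kubelet.conf << EOF
KUBELET_OPTS="--logtostderr=false \\
--v=2 \\
--log-dir=/opt/kubernetes/logs \\
--hostname-override=k8s-master01 \\
--kubeconfig=/opt/kubernetes/cfg/kubelet.kubeconfig \\
--bootstrap-kubeconfig=/opt/kubernetes/cfg/bootstrap.kubeconfig \\
--config=/opt/kubernetes/cfg/kubelet-config.yml \\
--cert-dir=/opt/kubernetes/ssl \\
--container-runtime=remote \\
--runtime-request-timeout=15m \\
--container-runtime-endpoint=unix:///run/containerd/containerd.sock \\
--cgroup-driver=systemd \\
--node-labels=node.kubernetes.io/node='' \\
--feature-gates=IPv6DualStack=true"
EOF
```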

4.7.3 Configuration parameter file

```shell
cat > /opt/kubernetes/cfg/kubelet-config.yml << EOF
kind: KubeletConfiguration
apiVersion: kubelet.config.k8s.io/v1beta1
address: 0.0.0.0
port: 10250
readOnlyPort: 10255
# Must match SystemdCgroup = true in containerd and --cgroup-driver=systemd in kubelet.conf
cgroupDriver: systemd
clusterDNS:
- 10.0.0.2
clusterDomain: cluster.local
failSwapOn: false
authentication:
  anonymous:
    enabled: false
  webhook:
    cacheTTL: 2m0s
    enabled: true
  x509:
    clientCAFile: /opt/kubernetes/ssl/ca.pem
authorization:
  mode: Webhook
  webhook:
    cacheAuthorizedTTL: 5m0s
    cacheUnauthorizedTTL: 30s
evictionHard:
  imagefs.available: 15%
  memory.available: 100Mi
  nodefs.available: 10%
  nodefs.inodesFree: 5%
maxOpenFiles: 1000000
maxPods: 110
EOF
```
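containerd (SystemdCgroup in 2.1) and kubelet (cgroupDriver above) must agree on the cgroup driver. A mismatch is a common cause of kubelet and Pod start-up failures. check_cgroup_driver is a hypothetical helper that compares the two files. It treats a missing cgroupDriver field as kubelet's default, cgroupfs. It does not look at the --cgroup-driver flag in kubelet.conf, which takes precedence over the file when set.

```shell
#!/usr/bin/env bash
# Hypothetical helper: checks that containerd and kubelet-config.yml agree on
# the cgroup driver. $1 = containerd config.toml, $2 = kubelet config file.
check_cgroup_driver() {
  local toml="${1:-/etc/containerd/config.toml}"
  local yml="${2:-/opt/kubernetes/cfg/kubelet-config.yml}"
  local runtime kubelet

  if grep -qE '^[[:space:]]*SystemdCgroup[[:space:]]*=[[:space:]]*true' "$toml"; then
    runtime=systemd
  else
    runtime=cgroupfs
  fi
  kubelet="$(awk '$1 == "cgroupDriver:" {print $2}' "$yml")"
  # kubelet falls back to cgroupfs when the field is absent.
  kubelet="${kubelet:-cgroupfs}"

  echo "containerd=${runtime} kubelet=${kubelet}"
  [ "$runtime" = "$kubelet" ]
}
```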

4.7.4 Generate the bootstrap kubeconfig kubelet uses to join the cluster for the first time

```shell
KUBE_CONFIG="/opt/kubernetes/cfg/bootstrap.kubeconfig"
KUBE_APISERVER="https://192.168.22.88:6443"  # apiserver IP:PORT
TOKEN="c47ffb939f5ca36231d9e3121a252940"    # must match token.csv

# Generate the kubelet bootstrap kubeconfig file
kubectl config set-cluster kubernetes \
  --certificate-authority=/opt/kubernetes/ssl/ca.pem \
  --embed-certs=true \
  --server=${KUBE_APISERVER} \
  --kubeconfig=${KUBE_CONFIG}
kubectl config set-credentials "kubelet-bootstrap" \
  --token=${TOKEN} \
  --kubeconfig=${KUBE_CONFIG}
kubectl config set-context default \
  --cluster=kubernetes \
  --user="kubelet-bootstrap" \
  --kubeconfig=${KUBE_CONFIG}
kubectl config use-context default --kubeconfig=${KUBE_CONFIG}
```

4.7.5 Manage kubelet with systemd

```shell
# kubelet talks to containerd here, so order it after containerd.service (not docker.service)
cat > /usr/lib/systemd/system/kubelet.service << EOF
[Unit]
Description=Kubernetes Kubelet
After=containerd.service

[Service]
EnvironmentFile=/opt/kubernetes/cfg/kubelet.conf
ExecStart=/opt/kubernetes/bin/kubelet \$KUBELET_OPTS
Restart=on-failure
LimitNOFILE=65536

[Install]
WantedBy=multi-user.target
EOF
```

4.7.6 Start kubelet and enable it at boot

```shell
systemctl daemon-reload
systemctl start kubelet
systemctl enable kubelet
```

4.7.7 Approve the kubelet certificate request and join the cluster

```shell
# List kubelet certificate requests
kubectl get csr
NAME                                                   AGE    SIGNERNAME                                    REQUESTOR           CONDITION
node-csr-uCEGPOIiDdlLODKts8J658HrFq9CZ--K6M4G7bjhk8A   6m3s   kubernetes.io/kube-apiserver-client-kubelet   kubelet-bootstrap   Pending

# Approve the request
kubectl certificate approve node-csr-uCEGPOIiDdlLODKts8J658HrFq9CZ--K6M4G7bjhk8A

# List nodes
kubectl get node
NAME          STATUS     ROLES    AGE   VERSION
k8s-master1   NotReady   <none>   7s    v1.25.2
# The node stays NotReady because the network plugin has not been deployed yet
```
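When several nodes join at once, copying each CSR name by hand becomes tedious. The hypothetical helper below approves every request whose last column (CONDITION) reads Pending. It relies only on `kubectl get csr --no-headers` and `kubectl certificate approve`. Approving blindly is only reasonable in a lab; on a real cluster, review the REQUESTOR of each request first.

```shell
#!/usr/bin/env bash
# Hypothetical lab-only helper: approves every CSR whose CONDITION column
# (the last column of `kubectl get csr`) is Pending.
approve_pending_csrs() {
  kubectl get csr --no-headers \
    | awk '$NF == "Pending" {print $1}' \
    | while read -r name; do
        kubectl certificate approve "$name"
      done
}
```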

4.8 Deploy kube-proxy

4.8.1 Create the configuration file

```shell
cat > /opt/kubernetes/cfg/kube-proxy.conf << EOF
KUBE_PROXY_OPTS="--logtostderr=false \\
--v=2 \\
--log-dir=/opt/kubernetes/logs \\
--config=/opt/kubernetes/cfg/kube-proxy-config.yml"
EOF
```

4.8.2 Configuration parameter file

```shell
cat > /opt/kubernetes/cfg/kube-proxy-config.yml << EOF
kind: KubeProxyConfiguration
apiVersion: kubeproxy.config.k8s.io/v1alpha1
bindAddress: 0.0.0.0
metricsBindAddress: 0.0.0.0:10249
clientConnection:
  kubeconfig: /opt/kubernetes/cfg/kube-proxy.kubeconfig
hostnameOverride: k8s-master01
clusterCIDR: 10.244.0.0/16
EOF
```

4.8.3 Generate the kube-proxy.kubeconfig file

```shell
# Switch to the working directory
cd ~/TLS/k8s
# Create the certificate signing request file
cat > kube-proxy-csr.json << EOF
{
  "CN": "system:kube-proxy",
  "hosts": [],
  "key": {"algo": "rsa", "size": 2048},
  "names": [{"C": "CN", "L": "BeiJing", "ST": "BeiJing", "O": "k8s", "OU": "System"}]
}
EOF
# Generate the certificate
cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-proxy-csr.json | cfssljson -bare kube-proxy
```

Generate the kubeconfig file:

```shell
KUBE_CONFIG="/opt/kubernetes/cfg/kube-proxy.kubeconfig"
KUBE_APISERVER="https://192.168.22.88:6443"

kubectl config set-cluster kubernetes \
  --certificate-authority=/opt/kubernetes/ssl/ca.pem \
  --embed-certs=true \
  --server=${KUBE_APISERVER} \
  --kubeconfig=${KUBE_CONFIG}
kubectl config set-credentials kube-proxy \
  --client-certificate=./kube-proxy.pem \
  --client-key=./kube-proxy-key.pem \
  --embed-certs=true \
  --kubeconfig=${KUBE_CONFIG}
kubectl config set-context default \
  --cluster=kubernetes \
  --user=kube-proxy \
  --kubeconfig=${KUBE_CONFIG}
kubectl config use-context default --kubeconfig=${KUBE_CONFIG}
```

4.8.4 Manage kube-proxy with systemd

```shell
cat > /usr/lib/systemd/system/kube-proxy.service << EOF
[Unit]
Description=Kubernetes Proxy
After=network.target

[Service]
EnvironmentFile=/opt/kubernetes/cfg/kube-proxy.conf
ExecStart=/opt/kubernetes/bin/kube-proxy \$KUBE_PROXY_OPTS
Restart=on-failure
LimitNOFILE=65536

[Install]
WantedBy=multi-user.target
EOF
```

4.8.5 Start kube-proxy and enable it at boot

```shell
systemctl daemon-reload
systemctl start kube-proxy
systemctl enable kube-proxy
```

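4.8.6 Deploy the network plugin

Calico is a pure layer-3 data-center networking solution and one of the mainstream network options for Kubernetes today. Deploy Calico:

```shell
kubectl apply -f calico.yaml
kubectl get pods -n kube-system
```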
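The Calico IP pool should match the Pod CIDR used elsewhere in this guide: --cluster-cidr=10.244.0.0/16 in 4.5.1 and clusterCIDR in 4.8.2. In the upstream calico.yaml, this setting (CALICO_IPV4POOL_CIDR) ships commented out with a value of 192.168.0.0/16. The manifest itself notes that changing the value after installation has no effect, so set it before applying. The hypothetical helper below assumes exactly that commented-out layout, so check your copy of calico.yaml before relying on it.

```shell
#!/usr/bin/env bash
# Hypothetical helper: uncomments CALICO_IPV4POOL_CIDR in calico.yaml and sets
# it to the Pod CIDR. Assumes the upstream commented-out form:
#   # - name: CALICO_IPV4POOL_CIDR
#   #   value: "192.168.0.0/16"
set_calico_pool_cidr() {
  local file="$1" cidr="${2:-10.244.0.0/16}"
  sed -i \
    -e 's|^\([[:space:]]*\)# - name: CALICO_IPV4POOL_CIDR|\1- name: CALICO_IPV4POOL_CIDR|' \
    -e "s|^\([[:space:]]*\)#   value: \"192.168.0.0/16\"|\1  value: \"${cidr}\"|" \
    "$file"
  # Show the edited entry for a quick visual check.
  grep -A1 'CALICO_IPV4POOL_CIDR' "$file"
}
```

Run `set_calico_pool_cidr calico.yaml` and then `kubectl apply -f calico.yaml`.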

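Troubleshooting

① If the calico images cannot be pulled because of network restrictions, offline images are also provided in the course package. Import them locally with containerd's ctr command; the namespace -n=k8s.io is required:

```shell
ctr -n=k8s.io images import pause.tar
```

② A wrong IP or host address anywhere in the steps above makes the cluster components fail with errors. Check the logs of the affected component with journalctl -u <component>, for example journalctl -u kube-scheduler.

```shell
kubectl get node
NAME         STATUS   ROLES    AGE   VERSION
k8s-master   Ready    <none>   37m   v1.25.2
# Once the network plugin is deployed, the node becomes Ready
```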

4.8.7 Authorize the apiserver to access kubelet

```shell
cat > apiserver-to-kubelet-rbac.yaml << EOF
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  annotations:
    rbac.authorization.kubernetes.io/autoupdate: "true"
  labels:
    kubernetes.io/bootstrapping: rbac-defaults
  name: system:kube-apiserver-to-kubelet
rules:
  - apiGroups:
      - ""
    resources:
      - nodes/proxy
      - nodes/stats
      - nodes/log
      - nodes/spec
      - nodes/metrics
      - pods/log
    verbs:
      - "*"
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: system:kube-apiserver
  namespace: ""
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: system:kube-apiserver-to-kubelet
subjects:
  - apiGroup: rbac.authorization.k8s.io
    kind: User
    name: kubernetes
EOF
kubectl apply -f apiserver-to-kubelet-rbac.yaml
```

5. Deploy the Node

5.1 Copy the already deployed Node files to the new node

On k8s-master01, copy the files a Worker Node needs to the new node 192.168.22.90:

```shell
scp -r /opt/kubernetes root@192.168.22.90:/opt/
scp -r /usr/lib/systemd/system/{kubelet,kube-proxy}.service root@192.168.22.90:/usr/lib/systemd/system
```

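5.2 Delete the kubelet certificate and kubeconfig file

```shell
# Run on the new node
rm -f /opt/kubernetes/cfg/kubelet.kubeconfig
rm -f /opt/kubernetes/ssl/kubelet*
# Note: these files are generated automatically once the certificate request is approved.
# They differ on every Node, so they must be deleted.
```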

5.3 Change the node name

```shell
vim /opt/kubernetes/cfg/kubelet.conf
# --hostname-override=k8s-node01

vim /opt/kubernetes/cfg/kube-proxy-config.yml
# hostnameOverride: k8s-node01
```
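Forgetting one of these two edits is easy, especially when more Nodes are added later. The hypothetical helper below sets the name in both files with GNU sed. It assumes the line layouts created in 4.7.2 and 4.8.2.

```shell
#!/usr/bin/env bash
# Hypothetical helper: sets the node name in kubelet.conf and
# kube-proxy-config.yml. $1 = node name, $2 = config dir.
set_node_name() {
  local name="$1" dir="${2:-/opt/kubernetes/cfg}"
  # kubelet.conf: replace the value up to the next space (the line ends with " \").
  sed -i "s/--hostname-override=[^ ]*/--hostname-override=${name}/" "$dir/kubelet.conf"
  # kube-proxy-config.yml: replace the whole hostnameOverride line.
  sed -i "s/^hostnameOverride:.*/hostnameOverride: ${name}/" "$dir/kube-proxy-config.yml"
  grep -h -e '--hostname-override' -e 'hostnameOverride' "$dir/kubelet.conf" "$dir/kube-proxy-config.yml"
}
```

On the new node, run `set_node_name k8s-node01` before starting kubelet and kube-proxy.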

5.4 Start the services and enable them at boot

```shell
systemctl daemon-reload
systemctl start kubelet kube-proxy
systemctl enable kubelet kube-proxy
```

5.5 Approve the new Node's kubelet certificate request on the Master

```shell
# List certificate requests
kubectl get csr
NAME                                                   AGE   SIGNERNAME                                    REQUESTOR           CONDITION
node-csr-4zTjsaVSrhuyhIGqsefxzVoZDCNKei-aE2jyTP81Uro   89s   kubernetes.io/kube-apiserver-client-kubelet   kubelet-bootstrap   Pending

# Approve the request
kubectl certificate approve node-csr-4zTjsaVSrhuyhIGqsefxzVoZDCNKei-aE2jyTP81Uro
```

5.6 Check the Node status

```shell
kubectl get node
NAME           STATUS   ROLES    AGE     VERSION
k8s-master01   Ready    <none>   47m     v1.25.2
k8s-node01     Ready    <none>   6m49s   v1.25.2
```

Add any further Nodes the same way. Remember to change the node name!

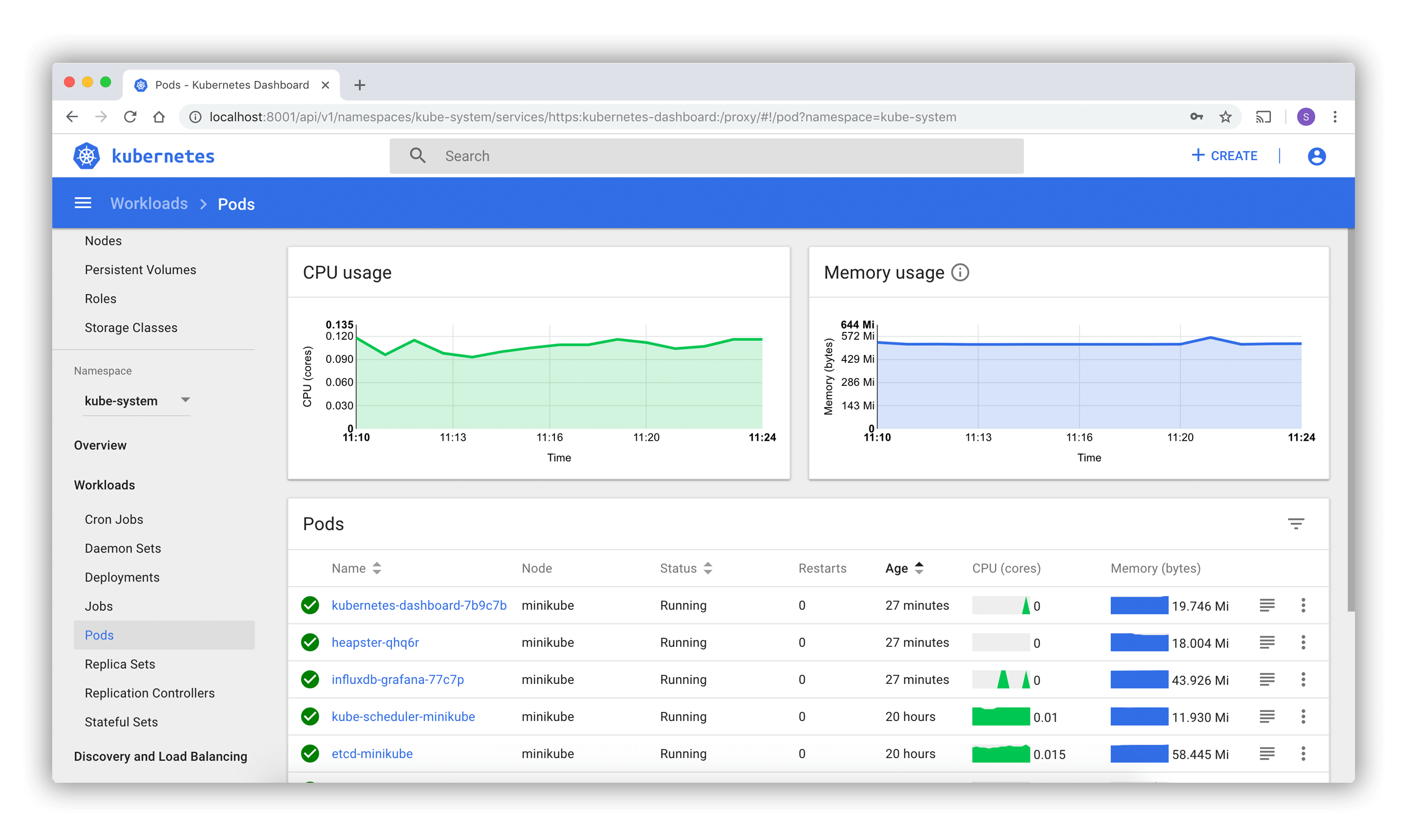

6. Deploy Dashboard and CoreDNS

6.1 Deploy the Dashboard

```shell
kubectl apply -f kubernetes-dashboard.yaml
# Check the deployment
kubectl get pods,svc -n kubernetes-dashboard
# Access URL: https://NodeIP:30001
```

Create a service account and bind it to the default cluster-admin ClusterRole. Since v1.24, ServiceAccounts no longer get a token Secret automatically, so request the token with kubectl create token:

```shell
kubectl create serviceaccount dashboard-admin -n kube-system
kubectl create clusterrolebinding dashboard-admin --clusterrole=cluster-admin --serviceaccount=kube-system:dashboard-admin
kubectl create token dashboard-admin -n kube-system
```

Log in to the Dashboard with the token that is printed.

6.2 Deploy CoreDNS

CoreDNS resolves Service names inside the cluster.

```shell
kubectl apply -f coredns.yaml
kubectl get pods -n kube-system
NAME                       READY   STATUS    RESTARTS   AGE
coredns-5ffbfd976d-j6shb   1/1     Running   0          32s
```

6.2.1 DNS resolution test:

```shell
kubectl run -it --rm dns-test --image=busybox:1.28.4 sh
If you don't see a command prompt, try pressing enter.
/ # nslookup kubernetes
Server:    10.0.0.2
Address 1: 10.0.0.2 kube-dns.kube-system.svc.cluster.local

Name:      kubernetes
Address 1: 10.0.0.1 kubernetes.default.svc.cluster.local
```

That completes a single-Master cluster, which is enough for the labs that follow.

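7. Extension: scale out to multiple Master nodes (high availability)

As a container cluster system, Kubernetes gives Pods self-healing through health checks and restart policies. Its scheduler spreads Pods across nodes while keeping the desired replica count, and it starts Pods on other Nodes when a Node fails. Together these provide high availability at the application layer.

For the Kubernetes cluster itself, high availability has two further aspects: the Etcd database and the Kubernetes Master components. Etcd already runs as a 3-node cluster, so this section explains and implements high availability for the Master.

The Master is the control center. It keeps the whole cluster healthy by constantly communicating with kubelet and kube-proxy on the worker nodes. If the Master fails, you can no longer manage the cluster with kubectl or the API.

The Master runs three main services: kube-apiserver, kube-controller-manager and kube-scheduler. kube-controller-manager and kube-scheduler already achieve high availability through leader election, so Master HA is mainly about kube-apiserver. Because kube-apiserver serves an HTTP API, making it highly available works much as it does for a web server: put a load balancer in front of it, after which it can also be scaled horizontally.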

7.1 Deploy k8s-master02

k8s-master02 is set up exactly like k8s-master01. We therefore only need to copy all the K8s files from Master1, change the server IP and node name, and start the services.

7.1.1 Copy the files

```shell
scp -r /opt/kubernetes root@192.168.22.89:/opt
scp -r /opt/etcd/ssl root@192.168.22.89:/opt/etcd
scp /usr/lib/systemd/system/kube* root@192.168.22.89:/usr/lib/systemd/system
scp /usr/bin/kubectl root@192.168.22.89:/usr/bin
scp -r ~/.kube root@192.168.22.89:~
```

7.1.2 Delete the certificate files

```shell
# On k8s-master02: delete the kubelet certificate and kubeconfig file
rm -f /opt/kubernetes/cfg/kubelet.kubeconfig
rm -f /opt/kubernetes/ssl/kubelet*
```

7.1.3 Change the apiserver, kubelet and kube-proxy configuration files to the local IP

```shell
vi /opt/kubernetes/cfg/kube-apiserver.conf
...
--bind-address=192.168.22.89 \
--advertise-address=192.168.22.89 \
...

vi /opt/kubernetes/cfg/kube-controller-manager.kubeconfig
server: https://192.168.22.89:6443

vi /opt/kubernetes/cfg/kube-scheduler.kubeconfig
server: https://192.168.22.89:6443

vi /opt/kubernetes/cfg/kubelet.conf
--hostname-override=k8s-master02

vi /opt/kubernetes/cfg/kube-proxy-config.yml
hostnameOverride: k8s-master02

vi ~/.kube/config
...
server: https://192.168.22.89:6443
```

7.1.4 Start the services and enable them at boot

```shell
systemctl daemon-reload
systemctl start kube-apiserver kube-controller-manager kube-scheduler kubelet kube-proxy
systemctl enable kube-apiserver kube-controller-manager kube-scheduler kubelet kube-proxy
```

7.1.5 Check the cluster status

```shell
kubectl get cs
NAME                 STATUS    MESSAGE             ERROR
scheduler            Healthy   ok
controller-manager   Healthy   ok
etcd-1               Healthy   {"health":"true"}
etcd-2               Healthy   {"health":"true"}
etcd-0               Healthy   {"health":"true"}
```

7.1.6 Approve the kubelet certificate request

```shell
# List certificate requests
kubectl get csr
NAME                                                   AGE   SIGNERNAME                                    REQUESTOR           CONDITION
node-csr-JYNknakEa_YpHz797oKaN-ZTk43nD51Zc9CJkBLcASU   85m   kubernetes.io/kube-apiserver-client-kubelet   kubelet-bootstrap   Pending

# Approve the request
kubectl certificate approve node-csr-JYNknakEa_YpHz797oKaN-ZTk43nD51Zc9CJkBLcASU

# List nodes
kubectl get node
NAME           STATUS   ROLES    AGE   VERSION
k8s-master01   Ready    <none>   34h   v1.25.2
k8s-master02   Ready    <none>   2m    v1.25.2
k8s-node01     Ready    <none>   33h   v1.25.2
```

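7.2 Highly available load balancer (Nginx + Keepalived, SLB, etc.)

This part is not covered in detail here. The main idea is to make kube-apiserver highly available by having all clients reach it through a load balancer.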
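As one possible starting point, the hypothetical helper below renders an nginx layer-4 (stream) configuration that balances kube-apiserver across the masters. It assumes nginx is built with the stream module. The upstream name and the max_fails/fail_timeout values are illustrative. stream blocks belong in nginx's main context, next to (not inside) the http block. If nginx runs on the masters themselves, pick a listen address that does not collide with the apiserver's own --bind-address:6443.

```shell
#!/usr/bin/env bash
# Hypothetical helper: prints an nginx stream config that load-balances
# kube-apiserver. Usage: render_apiserver_lb <listen addr:port> <master-ip>...
render_apiserver_lb() {
  local listen="$1"; shift
  local ip
  echo "stream {"
  echo "    upstream kube_apiserver {"
  for ip in "$@"; do
    echo "        server ${ip}:6443 max_fails=3 fail_timeout=30s;"
  done
  echo "    }"
  echo "    server {"
  echo "        listen ${listen};"
  echo "        proxy_pass kube_apiserver;"
  echo "    }"
  echo "}"
}
```

For example, `render_apiserver_lb 192.168.22.91:6443 192.168.22.88 192.168.22.89` produces a block for the VIP and both masters in this guide. Validate the result with `nginx -t` before reloading nginx.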

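After the LB VIP (192.168.22.91) is configured, run the following on all nodes:

```shell
sed -i 's#192.168.22.88:6443#192.168.22.91:6443#' /opt/kubernetes/cfg/*
systemctl restart kubelet kube-proxy
# On k8s-master01 the sed also rewrites the controller-manager and scheduler
# kubeconfigs; restart those services there too so the change takes effect:
# systemctl restart kube-controller-manager kube-scheduler
kubectl get node
```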
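The sed only touches /opt/kubernetes/cfg, so it is worth listing every kubeconfig whose server: entry still points somewhere other than the VIP. find_stale_servers is a hypothetical helper. Some differences are intentional: on k8s-master02, the controller-manager and scheduler kubeconfigs point at the local apiserver (192.168.22.89), as set in 7.1.3. ~/.kube/config is outside the sed's reach and keeps its old address.

```shell
#!/usr/bin/env bash
# Hypothetical helper: prints kubeconfig "server:" entries that differ from
# the expected endpoint; returns 1 if any were found.
# Usage: find_stale_servers [expected-url] [kubeconfig...]
find_stale_servers() {
  local want="${1:-https://192.168.22.91:6443}"; shift
  local files=("$@") stale
  # Default to the kubeconfigs used in this guide.
  [ "${#files[@]}" -eq 0 ] && files=(/opt/kubernetes/cfg/*.kubeconfig "$HOME/.kube/config")
  stale="$(grep -H 'server:' "${files[@]}" 2>/dev/null | grep -vF "server: ${want}")"
  if [ -n "$stale" ]; then
    echo "$stale"
    return 1
  fi
  echo "all kubeconfigs point at ${want}"
}
```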

Lab summary:

Working through this lab gives an in-depth understanding of how each k8s component is configured.