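I wrote this article at the start of my sophomore year and first published it on my blog; I am now moving it to Yuque. Original blog post: Setting up a Hadoop environment on Ubuntu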

Note: my Ubuntu system is version 18.04. The JDK used here is jdk-8u162-linux-x64 and the Hadoop package is hadoop-2.7.1_64bit.

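1. Preparation

1. Switch to the root user

# Set the root user's password

sudo passwd

# Then switch to root

su -

2. Check the firewall status

inactive means the firewall is off; active means it is on.

If it is on, stop the firewall:

systemctl stop firewalld.service

Keep the firewall from starting at boot:

systemctl disable firewalld.service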

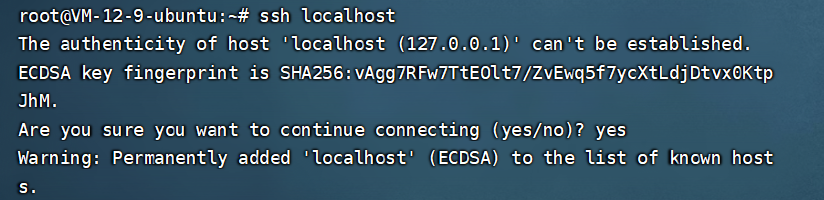
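The words inactive and active match the output of `ufw status`, the default firewall front end on Ubuntu. The firewalld service used in the commands above exists only if it was installed separately. The following is a minimal sketch, assuming ufw is the firewall in use; the helper name `firewall_state` is made up for illustration.

```shell
# firewall_state: read `ufw status` output on stdin and print
# "active", "inactive", or "unknown" (hypothetical helper).
firewall_state() {
    awk '/^Status:/ { print $2; found = 1; exit }
         END { if (!found) print "unknown" }'
}

# Usage on the server (as root):
#   ufw status | firewall_state
#   ufw disable    # stops the firewall and keeps it off after reboot
```

On a machine that really runs firewalld, the two systemctl commands above remain the right ones.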

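3. Set up passwordless login for ssh localhost

ssh localhost

Enter the password to log in to the local machine, then type exit to log out.

After this first login, a .ssh folder exists in the current user's home directory. Change into it:

cd ~/.ssh/

Generate a private/public key pair with the RSA algorithm:

ssh-keygen -t rsa

Press Enter at every prompt. The first prompt asks where to save the key pair; the default is:

.ssh/id_rsa

Then add the public key to the authorized keys:

cat ./id_rsa.pub >> ./authorized_keys

After that, ssh localhost logs in without asking for a password.

The process is as follows: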
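As a rough, non-interactive equivalent of the key setup above, the steps can be wrapped in one function. This is a sketch, not the author's exact procedure: the function name `setup_local_key` is hypothetical, and the empty passphrase (`-N ''`) mirrors pressing Enter at every prompt.

```shell
# setup_local_key [DIR]: create an RSA key pair in DIR (default ~/.ssh)
# if none exists, and authorize its public key for this account.
setup_local_key() {
    local dir="${1:-$HOME/.ssh}"
    mkdir -p "$dir" && chmod 700 "$dir"
    # -q: quiet, -N '': empty passphrase, -f: key file location
    if [ ! -f "$dir/id_rsa" ]; then
        ssh-keygen -q -t rsa -N '' -f "$dir/id_rsa"
    fi
    # Append the public key only if it is not already authorized
    if ! grep -qxF -- "$(cat "$dir/id_rsa.pub")" "$dir/authorized_keys" 2>/dev/null; then
        cat "$dir/id_rsa.pub" >> "$dir/authorized_keys"
    fi
    # With StrictModes on, sshd rejects an authorized_keys file that
    # group or others can write to
    chmod 600 "$dir/authorized_keys"
}

# Usage:
#   setup_local_key
#   ssh localhost    # should no longer ask for a password
```

Running the function a second time leaves the existing key and authorized_keys entry untouched.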

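2. Install the JDK

1. Create a java folder to hold the Java files

mkdir /usr/local/java

After refreshing the file view, the new java folder is visible under /usr/local.

2. Upload the JDK package to the new java directory

# Change to the java directory

root@VM-12-9-ubuntu:~# cd /usr/local/java

# Upload the file (rz comes from the lrzsz package and needs a terminal that supports ZMODEM)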

root@VM-12-9-ubuntu:/usr/local/java# rz

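3. As the figure shows, the JDK package was uploaded successfully

4. Extract the JDK archive (mind the file name and JDK version)

# You can also use ls to check that the file is in this directory

root@VM-12-9-ubuntu:/usr/local/java# ls

jdk-8u162-linux-x64.tar.gz

# Extract the JDK archive (mind the file name and JDK version; the Tab key completes the file name)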

root@VM-12-9-ubuntu:/usr/local/java# tar -vxzf jdk-8u162-linux-x64.tar.gz

jdk1.8.0_162/

jdk1.8.0_162/javafx-src.zip

jdk1.8.0_162/bin/

jdk1.8.0_162/bin/jmc

jdk1.8.0_162/bin/serialver

jdk1.8.0_162/bin/jmc.ini

jdk1.8.0_162/bin/jstack

jdk1.8.0_162/bin/rmiregistry

jdk1.8.0_162/bin/unpack200

jdk1.8.0_162/bin/jar

jdk1.8.0_162/bin/jps

jdk1.8.0_162/bin/wsimport

# ..... the archive contains many more files, which are not all listed here

5. Configure the environment variables

root@VM-12-9-ubuntu:/usr/local/java# vim ~/.bashrc

## Add the following lines

JAVA_HOME=/usr/local/java/jdk1.8.0_162

PATH=$PATH:$HOME/bin:$JAVA_HOME/bin

export JAVA_HOME

export PATH

# Apply the configuration file

root@VM-12-9-ubuntu:~# source .bashrc

# Check the Java version

root@VM-12-9-ubuntu:~# java -version

java version "1.8.0_162"

Java(TM) SE Runtime Environment (build 1.8.0_162-b12)

Java HotSpot(TM) 64-Bit Server VM (build 25.162-b12, mixed mode)

# Print the Java path

root@VM-12-9-ubuntu:~# echo $JAVA_HOME

/usr/local/java/jdk1.8.0_162

# You can also run javac and java to verify the installation and check their output
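This guide edits .bashrc several times, so the same export lines can easily end up in the file more than once. A small sketch of adding them from the command line without duplicates; the helper name `add_line` is hypothetical, and the paths are the ones used in this article.

```shell
# add_line FILE LINE: append LINE to FILE unless an identical line
# is already present (hypothetical helper).
add_line() {
    grep -qxF -- "$2" "$1" 2>/dev/null || printf '%s\n' "$2" >> "$1"
}

# Usage (single quotes keep $PATH and $JAVA_HOME unexpanded in the file):
#   add_line ~/.bashrc 'export JAVA_HOME=/usr/local/java/jdk1.8.0_162'
#   add_line ~/.bashrc 'export PATH=$PATH:$JAVA_HOME/bin'
#   source ~/.bashrc
```

Because the check compares whole lines, calling it again with the same arguments changes nothing.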

3. Install Hadoop

1. Create a hadoop folder to hold the Hadoop files (same steps as above)

# Create the hadoop directory

root@VM-12-9-ubuntu:~# mkdir /usr/local/hadoop

root@VM-12-9-ubuntu:~# cd /usr/local/hadoop

# Upload the file

root@VM-12-9-ubuntu:/usr/local/hadoop# rz

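2. Extract Hadoop (mind the file name and Hadoop version)

root@VM-12-9-ubuntu:/usr/local/hadoop# tar -vxzf hadoop-2.7.1_64bit.tar.gz

3. Move the files inside the hadoop-2.7.1 folder into the current directory

root@VM-12-9-ubuntu:/usr/local/hadoop# mv ./hadoop-2.7.1/* ./

4. Set the environment variables

# Set the environment variables:

root@VM-12-9-ubuntu:~# vim .bashrc

## Add the following lines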

JAVA_HOME=/usr/local/java/jdk1.8.0_162

HADOOP_HOME=/usr/local/hadoop

PATH=$PATH:$HOME/bin:$JAVA_HOME/bin:$HADOOP_HOME/bin:$HADOOP_HOME/sbin

export JAVA_HOME

export PATH

export HADOOP_HOME

# Apply the configuration file

root@VM-12-9-ubuntu:~# source .bashrc

# Verify:

root@VM-12-9-ubuntu:~# hadoop version

Hadoop 2.7.1

Subversion https://git-wip-us.apache.org/repos/asf/hadoop.git -r 15ecc87ccf4a0228f35af08fc56de536e6ce657a

Compiled by jenkins on 2015-06-29T06:04Z

Compiled with protoc 2.5.0

From source with checksum fc0a1a23fc1868e4d5ee7fa2b28a58a

This command was run using /usr/local/hadoop/share/hadoop/common/hadoop-common-2.7.1.jar

4. Edit the Hadoop configuration files

1. In /usr/local/hadoop/etc/hadoop, find core-site.xml and hdfs-site.xml

The configuration in core-site.xml is as follows:

<configuration>

<property>

<name>hadoop.tmp.dir</name>

<value>file:/usr/local/hadoop/tmp</value>

<description>A base for other temporary directories.</description>

</property>

<property>

<name>fs.defaultFS</name>

<value>hdfs://localhost:9000</value>

</property>

</configuration>

The configuration in hdfs-site.xml is as follows:

<configuration>

<property>

<name>dfs.replication</name>

<value>1</value>

</property>

<property>

<name>dfs.namenode.name.dir</name>

<value>file:/usr/local/hadoop/tmp/dfs/name</value>

</property>

<property>

<name>dfs.datanode.data.dir</name>

<value>file:/usr/local/hadoop/tmp/dfs/data</value>

</property>

</configuration>
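One related setting lives in the same directory: in Hadoop 2.x, etc/hadoop/hadoop-env.sh contains its own `export JAVA_HOME=` line, and daemons launched over ssh may not see the value exported in ~/.bashrc. If startup later reports that JAVA_HOME is not set, writing the path explicitly into that file is a common fix. The sketch below assumes the paths used in this article; `set_java_home` is a hypothetical helper.

```shell
# set_java_home FILE JAVA_DIR: make FILE export JAVA_HOME=JAVA_DIR,
# replacing an existing export line or appending one.
# Assumes JAVA_DIR contains no '|' or '&' characters.
set_java_home() {
    local file="$1" java_dir="$2" tmp
    if grep -q '^export JAVA_HOME=' "$file"; then
        tmp="$(mktemp)"
        sed "s|^export JAVA_HOME=.*|export JAVA_HOME=$java_dir|" "$file" > "$tmp"
        cat "$tmp" > "$file"    # overwrite in place, keeping file permissions
        rm -f "$tmp"
    else
        printf 'export JAVA_HOME=%s\n' "$java_dir" >> "$file"
    fi
}

# Usage:
#   set_java_home /usr/local/hadoop/etc/hadoop/hadoop-env.sh /usr/local/java/jdk1.8.0_162
```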

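5. Test and start

1. Format the NameNode:

root@VM-12-9-ubuntu:/usr/local/hadoop/etc/hadoop# hadoop namenode -format

If the output contains the two key phrases (for Hadoop 2.x these are typically "successfully formatted" and "Exiting with status 0"), the format succeeded.

2. Start HDFS (start-all.sh also starts YARN)

root@VM-12-9-ubuntu:/usr/local/hadoop/etc/hadoop# start-all.sh

3. Check the corresponding processes: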

root@VM-12-9-ubuntu:/usr/local/hadoop/etc/hadoop# jps
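For a single-node setup started with start-all.sh, jps should list the five daemons that appear in the startup log at the end of this article: NameNode, DataNode, SecondaryNameNode, ResourceManager, and NodeManager. A small sketch that checks for them automatically; the function name `check_daemons` is hypothetical.

```shell
# check_daemons: read `jps` output ("<pid> <name>" per line) on stdin,
# print each expected Hadoop daemon that is missing, and return
# non-zero if any is missing.
check_daemons() {
    local output daemon missing=0
    output="$(cat)"
    for daemon in NameNode DataNode SecondaryNameNode ResourceManager NodeManager; do
        # Compare the second field exactly, so "NameNode" does not
        # match the "SecondaryNameNode" line
        if ! printf '%s\n' "$output" |
                awk -v d="$daemon" '$2 == d { found = 1 } END { exit !found }'; then
            echo "missing: $daemon"
            missing=1
        fi
    done
    return "$missing"
}

# Usage:
#   jps | check_daemons && echo "all daemons are running"
```

If a daemon is reported missing, its log file under /usr/local/hadoop/logs is the first place to look.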

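4. Access test

1. First make sure port 50070 is open on your server. If it is not, open port 50070 in the server's firewall by adding a rule.

<your IP address>:50070

The following page should appear: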
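Before opening the page in a browser, it can help to test whether the port accepts connections at all. The following is a sketch that relies on bash's built-in /dev/tcp redirection and on timeout from GNU coreutils; `port_open` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# port_open HOST PORT: succeed if a TCP connection to HOST:PORT can be
# opened within 3 seconds.
port_open() {
    # /dev/tcp/HOST/PORT is interpreted by bash itself; it is not a real file
    timeout 3 bash -c "exec 3<>/dev/tcp/$1/$2" 2>/dev/null
}

# Usage:
#   port_open localhost 50070 && echo "NameNode web UI port is reachable"
```

Running the check on the server against localhost and again from your own machine against the public IP shows whether a failure comes from Hadoop or from a firewall rule in between.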

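2. Edit the configuration file so that the Hadoop services can also be started and stopped from the home directory

root@VM-12-9-ubuntu:~# vim ~/.bashrc

# Add the following line

export PATH=$PATH:/usr/local/hadoop/sbin:/usr/local/hadoop/bin

# Apply the configuration file

root@VM-12-9-ubuntu:~# source ~/.bashrc

# Test the startup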

root@VM-12-9-ubuntu:~# start-all.sh

This script is Deprecated. Instead use start-dfs.sh and start-yarn.sh

Starting namenodes on [localhost]

localhost: namenode running as process 25883. Stop it first.

localhost: starting datanode, logging to /usr/local/hadoop/logs/hadoop-root-datanode-VM-12-9-ubuntu.out

Starting secondary namenodes [0.0.0.0]

0.0.0.0: secondarynamenode running as process 26521. Stop it first.

starting yarn daemons

resourcemanager running as process 26773. Stop it first.

localhost: nodemanager running as process 27193. Stop it first.
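The log above shows two things: start-all.sh is deprecated in favor of start-dfs.sh and start-yarn.sh, and the "Stop it first" lines mean those daemons were already running from the earlier start. A minimal sketch of a clean restart with the non-deprecated scripts, assuming /usr/local/hadoop/sbin is on the PATH; the function name `restart_hadoop` is hypothetical.

```shell
# restart_hadoop: stop YARN and HDFS, then start them again using the
# scripts shipped in Hadoop's sbin directory.
restart_hadoop() {
    stop-yarn.sh     # stops ResourceManager and NodeManager
    stop-dfs.sh      # stops NameNode, DataNode and SecondaryNameNode
    start-dfs.sh     # start HDFS first
    start-yarn.sh    # then start YARN
}

# Usage:
#   restart_hadoop
#   jps
```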