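This is my first article on generative models. I consulted many sources, and because notation differs across papers and references, this article follows the notation used in Lil’s Log and the DDPM paper.

The derivations here are hard and took me a long time to work through. Before reading, you should be familiar with variational inference, VAEs, GANs, and related topics. My understanding is limited, so please call out any mistakes you find. 2022/4/25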

References and links

Zhihu:

Score matching derivation

Denoising Diffusion Probabilistic Model (DDPM) - Zhihu (zhihu.com)

From DDPM to GLIDE: Advances in Diffusion-Model-Based Image Generation Algorithms - Zhihu (zhihu.com)

Blogs (highly recommended):

Yang Song | Generative Modeling by Estimating Gradients of the Data Distribution

What are Diffusion Models? | Lil’Log

Paper:

Denoising Diffusion Probabilistic Models

Background

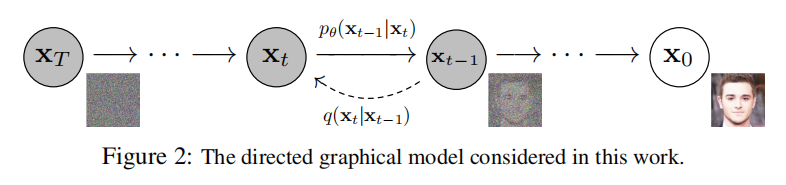

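A diffusion model is a latent variable model in which the latents $\mathbf x_1, \dots, \mathbf x_T$ have the same dimensionality as the data $\mathbf x_0 \sim q(\mathbf x_0)$.

It can be defined as a Markov chain with a forward and a reverse direction: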

Forward process

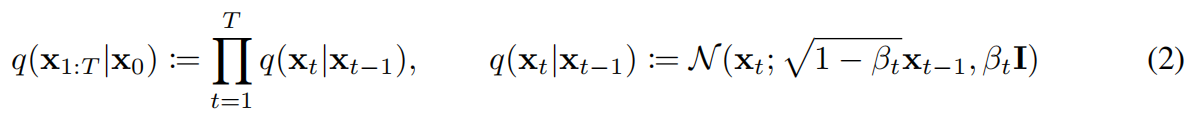

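The forward process gradually adds noise to an image, where the noise is Gaussian.

Each step of the forward process is a Gaussian transition with mean $\sqrt{1-\beta_t}\,\mathbf x_{t-1}$ and variance $\beta_t \mathbf I$:

$$q(\mathbf x_t \vert \mathbf x_{t-1}) = \mathcal N(\mathbf x_t; \sqrt{1-\beta_t}\,\mathbf x_{t-1}, \beta_t \mathbf I), \qquad q(\mathbf x_{1:T} \vert \mathbf x_0) = \prod_{t=1}^{T} q(\mathbf x_t \vert \mathbf x_{t-1})$$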

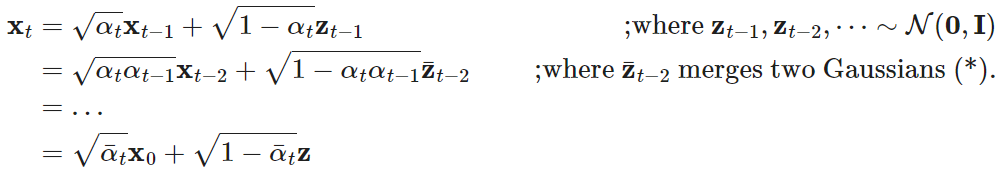

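Reparameterization trick

The Markov chain above lets us sample $\mathbf x_t$ at every time step, and with the following notation we can do so in closed form for any $t$. Let $\alpha_t = 1 - \beta_t$ and $\overline\alpha_t = \prod_{i=1}^{t}\alpha_i$.

Then

$$\begin{aligned}
\mathbf x_t &= \sqrt{\alpha_t}\,\mathbf x_{t-1} + \sqrt{1-\alpha_t}\,\mathbf z_{t-1} \\
&= \sqrt{\alpha_t\alpha_{t-1}}\,\mathbf x_{t-2} + \sqrt{1-\alpha_t\alpha_{t-1}}\,\overline{\mathbf z}_{t-2} \\
&= \dots \\
&= \sqrt{\overline\alpha_t}\,\mathbf x_0 + \sqrt{1-\overline\alpha_t}\,\mathbf z
\end{aligned}$$

where $\mathbf z_{t-1}, \mathbf z_{t-2}, \dots \sim \mathcal N(\mathbf 0, \mathbf I)$. This gives the conditional distribution

$$q(\mathbf x_t \vert \mathbf x_0) = \mathcal N(\mathbf x_t; \sqrt{\overline\alpha_t}\,\mathbf x_0, (1-\overline\alpha_t)\mathbf I)$$

Here $\overline{\mathbf z}_{t-2}$ denotes the sum of two Gaussians. For independent noise terms, $\mathcal N(\mathbf 0, \sigma_1^2\mathbf I) + \mathcal N(\mathbf 0, \sigma_2^2\mathbf I) = \mathcal N(\mathbf 0, (\sigma_1^2+\sigma_2^2)\mathbf I)$, so the merged variance is $\alpha_t(1-\alpha_{t-1}) + (1-\alpha_t) = 1-\alpha_t\alpha_{t-1}$, and so on for the earlier steps.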
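To make the closed form concrete, here is a minimal sketch that compares sampling $\mathbf x_t$ in one shot against running the chain step by step. It is only an illustration under stated assumptions: the data is a single scalar, the schedule is linear from $10^{-4}$ to $0.02$ (the range used in the DDPM paper), and the function names `linear_betas`, `alpha_bars`, `q_sample`, and `q_sample_stepwise` are my own, not from any library.

```python
import math
import random

def linear_betas(T, beta_start=1e-4, beta_end=0.02):
    """beta_1..beta_T, linearly spaced; list index t-1 holds beta_t."""
    step = (beta_end - beta_start) / (T - 1)
    return [beta_start + i * step for i in range(T)]

def alpha_bars(betas):
    """alpha_bar_t = prod_{i<=t} (1 - beta_i); list index t-1 holds alpha_bar_t."""
    out, prod = [], 1.0
    for b in betas:
        prod *= 1.0 - b
        out.append(prod)
    return out

def q_sample(x0, t, abar, rng):
    """Draw x_t ~ q(x_t | x_0) in one shot via the closed form."""
    z = rng.gauss(0.0, 1.0)
    return math.sqrt(abar[t - 1]) * x0 + math.sqrt(1.0 - abar[t - 1]) * z

def q_sample_stepwise(x0, t, betas, rng):
    """Draw x_t by running the forward Markov chain for t steps."""
    x = x0
    for b in betas[:t]:
        x = math.sqrt(1.0 - b) * x + math.sqrt(b) * rng.gauss(0.0, 1.0)
    return x

T = 1000
betas = linear_betas(T)
abar = alpha_bars(betas)

# Compare the two samplers at t = 200 for a scalar x_0 = 2.0 (toy values).
rng = random.Random(0)
n = 2000
one_shot = [q_sample(2.0, 200, abar, rng) for _ in range(n)]
stepwise = [q_sample_stepwise(2.0, 200, betas, rng) for _ in range(n)]
print("closed-form mean:", sum(one_shot) / n)
print("stepwise mean:   ", sum(stepwise) / n)
print("expected mean:   ", math.sqrt(abar[199]) * 2.0)
```

Both empirical means should land close to $\sqrt{\overline\alpha_t}\,x_0$; the one-shot version is what makes training cheap, since any $t$ can be sampled without simulating the chain.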

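Connection to SGLD

SGLD stands for stochastic gradient Langevin dynamics.

Langevin dynamics is a concept from physics that originated in modeling molecular motion. It provides an MCMC (Markov chain Monte Carlo) procedure that samples from a distribution $p(\mathbf x)$ iteratively, using only the gradient of its log density, $\nabla_{\mathbf x}\log p(\mathbf x)$. Concretely, the chain is initialized from a prior $\mathbf x_0 \sim \pi(\mathbf x)$ and then updated as

$$\mathbf x_{i+1} \leftarrow \mathbf x_i + \epsilon\,\nabla_{\mathbf x}\log p(\mathbf x_i) + \sqrt{2\epsilon}\,\mathbf z_i, \qquad \mathbf z_i \sim \mathcal N(\mathbf 0, \mathbf I), \quad i = 0, 1, \dots, K$$

As $\epsilon \to 0$ and $K \to \infty$, $\mathbf x_K$ converges to a sample from $p(\mathbf x)$ under some regularity conditions.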

Compared to standard SGD, stochastic gradient Langevin dynamics injects Gaussian noise into the parameter updates to avoid collapses into local minima.
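As a small illustration of Langevin sampling, the sketch below draws samples from a 1-D Gaussian using only its score $\nabla_x \log p(x)$. The update used is $x \leftarrow x + \epsilon\,\nabla_x \log p(x) + \sqrt{2\epsilon}\,z$ with $z \sim \mathcal N(0, 1)$. The target $\mathcal N(3, 0.5^2)$, the step size, and the names `score_gaussian` and `langevin_sample` are assumptions chosen for the example; in a real model the score would come from a trained network.

```python
import math
import random

MU, SIGMA = 3.0, 0.5  # toy target distribution N(MU, SIGMA^2)

def score_gaussian(x):
    """Exact score d/dx log p(x) of the toy Gaussian target."""
    return -(x - MU) / SIGMA ** 2

def langevin_sample(score, x_init, step=2e-3, n_steps=1000, rng=None):
    """Run one Langevin chain and return its final state."""
    rng = rng or random.Random()
    x = x_init
    for _ in range(n_steps):
        noise = rng.gauss(0.0, 1.0)
        x = x + step * score(x) + math.sqrt(2.0 * step) * noise
    return x

rng = random.Random(0)
# Each chain starts from a standard-normal "prior" and is run independently.
samples = [langevin_sample(score_gaussian, rng.gauss(0.0, 1.0), rng=rng)
           for _ in range(400)]
mean = sum(samples) / len(samples)
var = sum((s - mean) ** 2 for s in samples) / len(samples)
print("sample mean:", mean, "sample variance:", var)
```

With a finite step size the chain is only approximately correct, so the sample mean and variance should be close to, but not exactly, 3 and 0.25.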

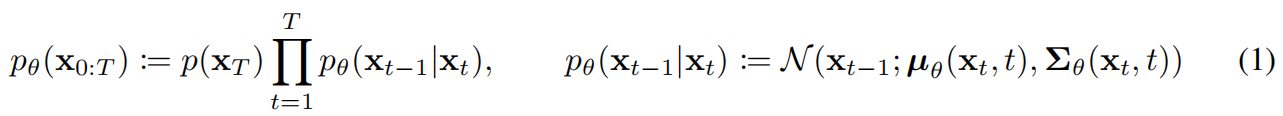

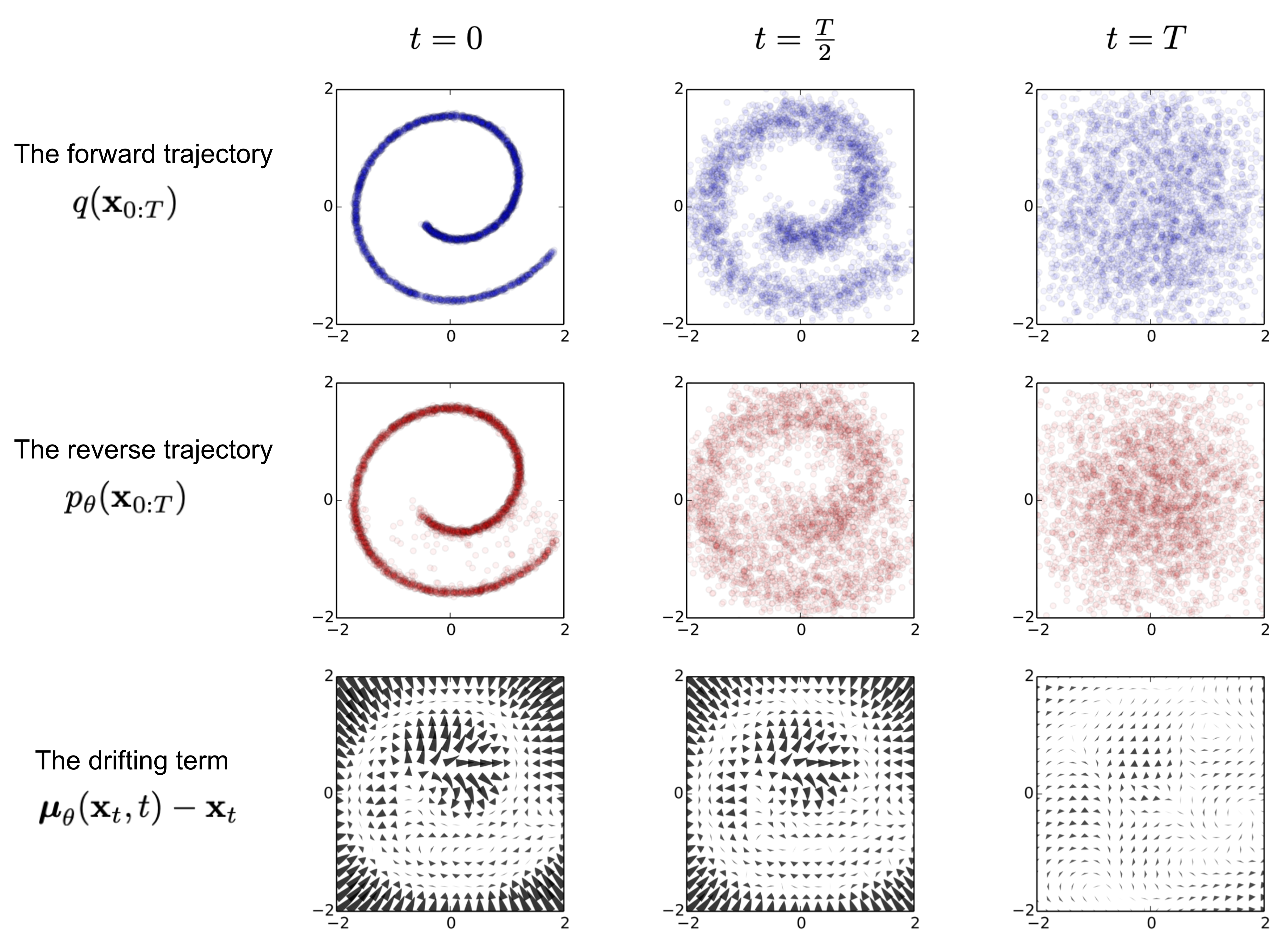

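Reverse process

The reverse process starts from the prior $p(\mathbf x_T) = \mathcal N(\mathbf x_T; \mathbf 0, \mathbf I)$ and gradually denoises.

Each denoising step is defined as a Gaussian, $p_\theta(\mathbf x_{t-1} \vert \mathbf x_t) = \mathcal N(\mathbf x_{t-1}; \boldsymbol\mu_\theta(\mathbf x_t, t), \boldsymbol\Sigma_\theta(\mathbf x_t, t))$, so the forward and reverse processes amount to adding and removing Gaussian noise, respectively.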

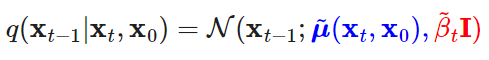

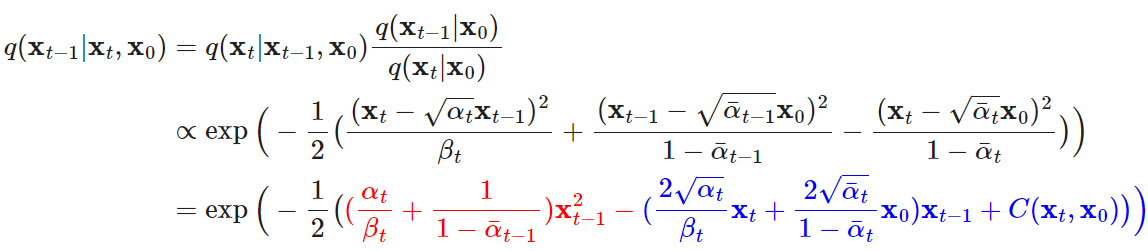

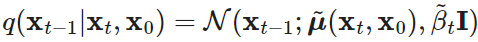

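Notably, the reverse conditional is tractable when it is additionally conditioned on $\mathbf x_0$: $q(\mathbf x_{t-1} \vert \mathbf x_t, \mathbf x_0) = \mathcal N(\mathbf x_{t-1}; \tilde{\boldsymbol\mu}(\mathbf x_t, \mathbf x_0), \tilde\beta_t \mathbf I)$.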

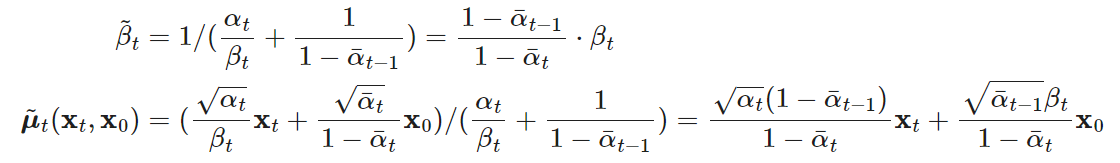

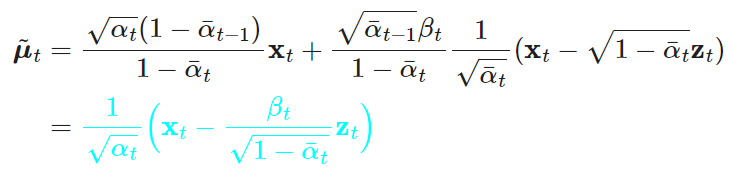

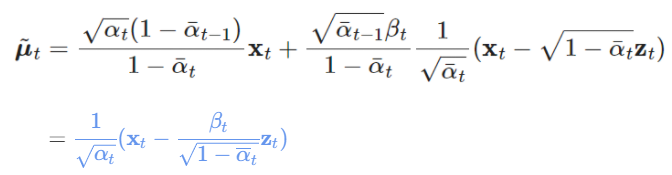

We can therefore write out $\tilde{\boldsymbol\mu}_t$ and $\tilde\beta_t$:

$$\tilde\beta_t = \cfrac{1-\overline\alpha_{t-1}}{1-\overline\alpha_t}\,\beta_t, \qquad \tilde{\boldsymbol\mu}_t(\mathbf x_t, \mathbf x_0) = \cfrac{\sqrt{\alpha_t}\,(1-\overline\alpha_{t-1})}{1-\overline\alpha_t}\,\mathbf x_t + \cfrac{\sqrt{\overline\alpha_{t-1}}\,\beta_t}{1-\overline\alpha_t}\,\mathbf x_0$$

Substituting $\mathbf x_0 = \cfrac{1}{\sqrt{\overline\alpha_t}}\left(\mathbf x_t - \sqrt{1-\overline\alpha_t}\,\mathbf z_t\right)$ gives

$$\tilde{\boldsymbol\mu}_t = \cfrac{1}{\sqrt{\alpha_t}}\left(\mathbf x_t - \cfrac{\beta_t}{\sqrt{1-\overline\alpha_t}}\,\mathbf z_t\right)$$

This result will be used later.
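The equivalence of the two forms of the posterior mean is easy to check numerically. The sketch below computes the mean of $q(\mathbf x_{t-1} \vert \mathbf x_t, \mathbf x_0)$ once from $(\mathbf x_t, \mathbf x_0)$ and once from $(\mathbf x_t, \mathbf z_t)$, where $\mathbf x_t = \sqrt{\overline\alpha_t}\,\mathbf x_0 + \sqrt{1-\overline\alpha_t}\,\mathbf z_t$. The scalar data, the linear schedule, and the helper names `posterior_from_x0` and `posterior_mean_from_noise` are assumptions made for illustration.

```python
import math

T = 1000
# Assumed linear schedule; list index t-1 holds beta_t / alpha_bar_t.
betas = [1e-4 + i * (0.02 - 1e-4) / (T - 1) for i in range(T)]
abar, prod = [], 1.0
for b in betas:
    prod *= 1.0 - b
    abar.append(prod)

def posterior_from_x0(x_t, x0, t):
    """Mean and variance of q(x_{t-1} | x_t, x_0), valid for 2 <= t <= T."""
    beta_t, alpha_t = betas[t - 1], 1.0 - betas[t - 1]
    abar_t, abar_prev = abar[t - 1], abar[t - 2]
    var = (1.0 - abar_prev) / (1.0 - abar_t) * beta_t
    mean = (math.sqrt(alpha_t) * (1.0 - abar_prev) / (1.0 - abar_t) * x_t
            + math.sqrt(abar_prev) * beta_t / (1.0 - abar_t) * x0)
    return mean, var

def posterior_mean_from_noise(x_t, z_t, t):
    """Same mean, written in terms of the noise z_t that produced x_t."""
    beta_t = betas[t - 1]
    return (x_t - beta_t / math.sqrt(1.0 - abar[t - 1]) * z_t) / math.sqrt(1.0 - beta_t)

# Toy values: pick x_0 and z_t, build x_t with the closed-form forward step.
x0, z, t = 0.7, -1.3, 300
x_t = math.sqrt(abar[t - 1]) * x0 + math.sqrt(1.0 - abar[t - 1]) * z
mean_a, var = posterior_from_x0(x_t, x0, t)
mean_b = posterior_mean_from_noise(x_t, z, t)
print("mean from x_0:", mean_a)
print("mean from z_t:", mean_b)
print("posterior variance:", var)
```

The noise-based form is the one a network can imitate, because at sampling time $\mathbf x_0$ is unknown but a noise estimate can be predicted from $\mathbf x_t$.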

Training objective

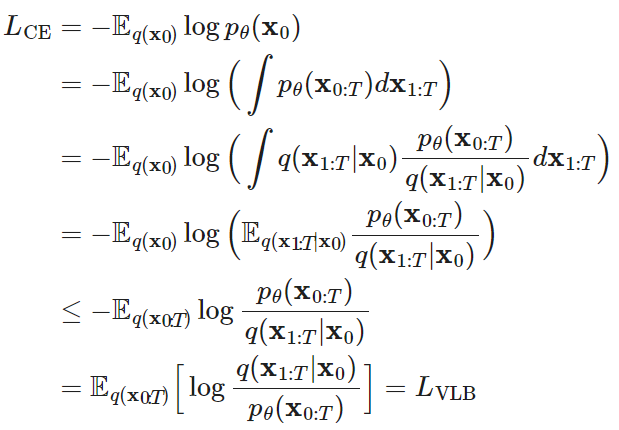

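Our training objective is to minimize the cross entropy (CE):

$$\begin{aligned}
L_\text{CE} &= -\mathbb E_{q(\mathbf x_0)}\log p_\theta(\mathbf x_0) \\
&= -\mathbb E_{q(\mathbf x_0)}\log\left(\int p_\theta(\mathbf x_{0:T})\,d\mathbf x_{1:T}\right) \\
&= -\mathbb E_{q(\mathbf x_0)}\log\left(\int q(\mathbf x_{1:T} \vert \mathbf x_0)\,\cfrac{p_\theta(\mathbf x_{0:T})}{q(\mathbf x_{1:T} \vert \mathbf x_0)}\,d\mathbf x_{1:T}\right) \\
&= -\mathbb E_{q(\mathbf x_0)}\log\left(\mathbb E_{q(\mathbf x_{1:T} \vert \mathbf x_0)}\cfrac{p_\theta(\mathbf x_{0:T})}{q(\mathbf x_{1:T} \vert \mathbf x_0)}\right) \\
&\le -\mathbb E_{q(\mathbf x_{0:T})}\log\cfrac{p_\theta(\mathbf x_{0:T})}{q(\mathbf x_{1:T} \vert \mathbf x_0)} \\
&= \mathbb E_{q(\mathbf x_{0:T})}\left[\log\cfrac{q(\mathbf x_{1:T} \vert \mathbf x_0)}{p_\theta(\mathbf x_{0:T})}\right] = L_\text{VLB}
\end{aligned}$$

The second equality is marginalization over the latents:

$$p_\theta(\mathbf x_0) = \int p_\theta(\mathbf x_{0:T})\,d\mathbf x_{1:T}$$

The second-to-last step, the "$\le$", follows from Jensen's inequality, which lets us swap

$\log$ and

$\mathbb E_{q(\mathbf x_{1:T} \vert \mathbf x_0)}$.

VLB stands for variational lower bound; this part requires some background in variational inference.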

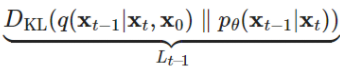

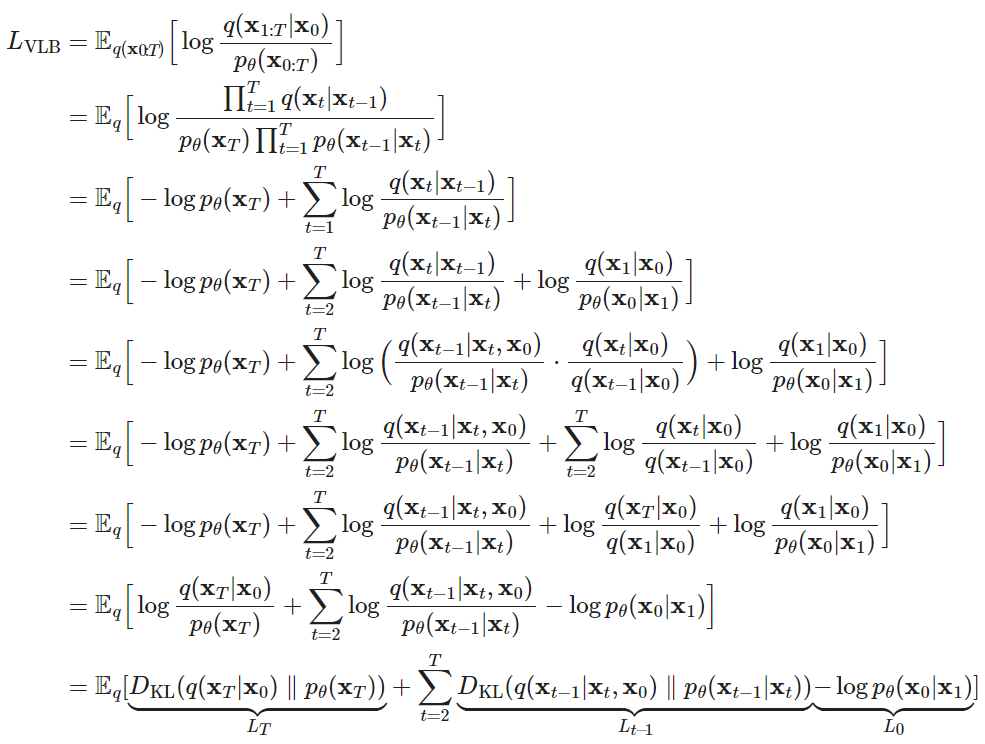

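To make every term computable, the bound is rewritten in terms of KL divergences and entropies:

$$L_\text{VLB} = \mathbb E_q\Big[\underbrace{D_\text{KL}(q(\mathbf x_T \vert \mathbf x_0)\,\|\,p_\theta(\mathbf x_T))}_{L_T} + \sum_{t=2}^{T}\underbrace{D_\text{KL}(q(\mathbf x_{t-1} \vert \mathbf x_t, \mathbf x_0)\,\|\,p_\theta(\mathbf x_{t-1} \vert \mathbf x_t))}_{L_{t-1}}\ \underbrace{-\log p_\theta(\mathbf x_0 \vert \mathbf x_1)}_{L_0}\Big]$$

The training objective thus splits into $T+1$ terms. Every term except $L_0$ is a KL divergence between two Gaussians, so it can be computed in closed form rather than with a high-variance Monte Carlo estimate.

For $L_T$: $q$ has no learnable parameters and $p(\mathbf x_T)$ is the fixed prior, so $L_T$ is a constant and can be ignored.

In DDPM, $L_0$ is modeled with a separate discrete decoder derived from $\mathcal N(\mathbf x_0; \boldsymbol\mu_\theta(\mathbf x_1, 1), \boldsymbol\Sigma_\theta(\mathbf x_1, 1))$.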
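The closed-form KL divergence between two Gaussians is what makes these terms cheap to evaluate. As a rough sketch for the scalar case, the helper below (the name `kl_gauss` is mine) implements $D_\text{KL}(\mathcal N(\mu_1, \sigma_1^2)\,\|\,\mathcal N(\mu_2, \sigma_2^2)) = \tfrac12\left[\log\tfrac{\sigma_2^2}{\sigma_1^2} + \tfrac{\sigma_1^2 + (\mu_1-\mu_2)^2}{\sigma_2^2} - 1\right]$ and uses it to show that $L_T$ is negligible under an assumed linear schedule from $10^{-4}$ to $0.02$ with $T = 1000$.

```python
import math

def kl_gauss(mu1, var1, mu2, var2):
    """KL( N(mu1, var1) || N(mu2, var2) ) for scalar Gaussians."""
    return 0.5 * (math.log(var2 / var1) + (var1 + (mu1 - mu2) ** 2) / var2 - 1.0)

# With equal variances the KL reduces to a scaled squared distance between
# the means, which is why the per-step loss becomes a mean-matching loss.
equal_var_kl = kl_gauss(1.0, 2.0, 0.0, 2.0)
print("equal-variance KL:", equal_var_kl, "vs (mu1-mu2)^2/(2 var):", 1.0 / (2 * 2.0))

# L_T for a toy scalar x_0 = 1.0: q(x_T | x_0) against the N(0, 1) prior.
T = 1000
abar_T = 1.0
for i in range(T):
    abar_T *= 1.0 - (1e-4 + i * (0.02 - 1e-4) / (T - 1))
x0 = 1.0
L_T = kl_gauss(math.sqrt(abar_T) * x0, 1.0 - abar_T, 0.0, 1.0)
print("alpha_bar_T:", abar_T, "L_T:", L_T)
```

Because $\overline\alpha_T$ is tiny, $q(\mathbf x_T \vert \mathbf x_0)$ is almost exactly the prior, which is consistent with treating $L_T$ as a constant.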

DDPM

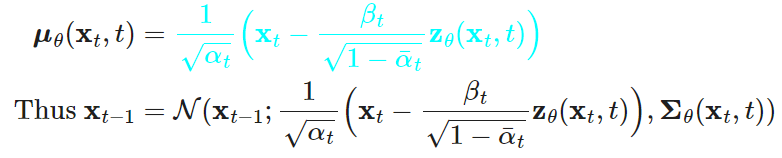

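Parameterization

Using the $\tilde{\boldsymbol\mu}_t$ derived in the previous section and the KL divergence between two Gaussians, $L_{t-1}$ can be parameterized as shown below (plugging in the distribution $p_\theta(\mathbf x_{t-1} \vert \mathbf x_t) = \mathcal N(\mathbf x_{t-1}; \boldsymbol\mu_\theta(\mathbf x_t, t), \boldsymbol\Sigma_\theta(\mathbf x_t, t))$), so as to minimize the gap between $\boldsymbol\mu_\theta$ and $\tilde{\boldsymbol\mu}_t$:

$$\boldsymbol\mu_\theta(\mathbf x_t, t) = \cfrac{1}{\sqrt{\alpha_t}}\left(\mathbf x_t - \cfrac{\beta_t}{\sqrt{1-\overline\alpha_t}}\,\mathbf z_\theta(\mathbf x_t, t)\right)$$

$$\begin{aligned}
L_{t-1} &= \mathbb E_{\mathbf x_0, \mathbf z}\left[\cfrac{1}{2\|\boldsymbol\Sigma_\theta(\mathbf x_t, t)\|_2^2}\,\|\tilde{\boldsymbol\mu}_t(\mathbf x_t, \mathbf x_0) - \boldsymbol\mu_\theta(\mathbf x_t, t)\|^2\right] \\
&= \mathbb E_{\mathbf x_0, \mathbf z}\left[\cfrac{\beta_t^2}{2\alpha_t(1-\overline\alpha_t)\|\boldsymbol\Sigma_\theta\|_2^2}\,\|\mathbf z_t - \mathbf z_\theta(\sqrt{\overline\alpha_t}\,\mathbf x_0 + \sqrt{1-\overline\alpha_t}\,\mathbf z_t, t)\|^2\right]
\end{aligned}$$

I have collected the formulas needed here so that readers can work through the derivation themselves: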

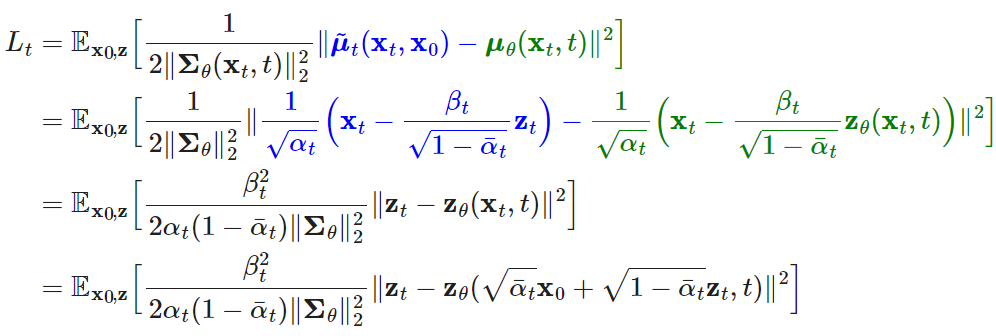

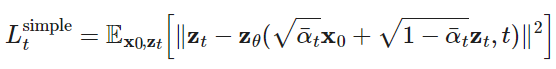

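Simplification

The DDPM authors found that training works better when the weighting factor inside the expectation is dropped, so $L_{t-1}$ is rewritten as:

$$L_\text{simple} = \mathbb E_{t \sim [1, T],\, \mathbf x_0,\, \mathbf z_t}\left[\|\mathbf z_t - \mathbf z_\theta(\sqrt{\overline\alpha_t}\,\mathbf x_0 + \sqrt{1-\overline\alpha_t}\,\mathbf z_t, t)\|^2\right]$$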

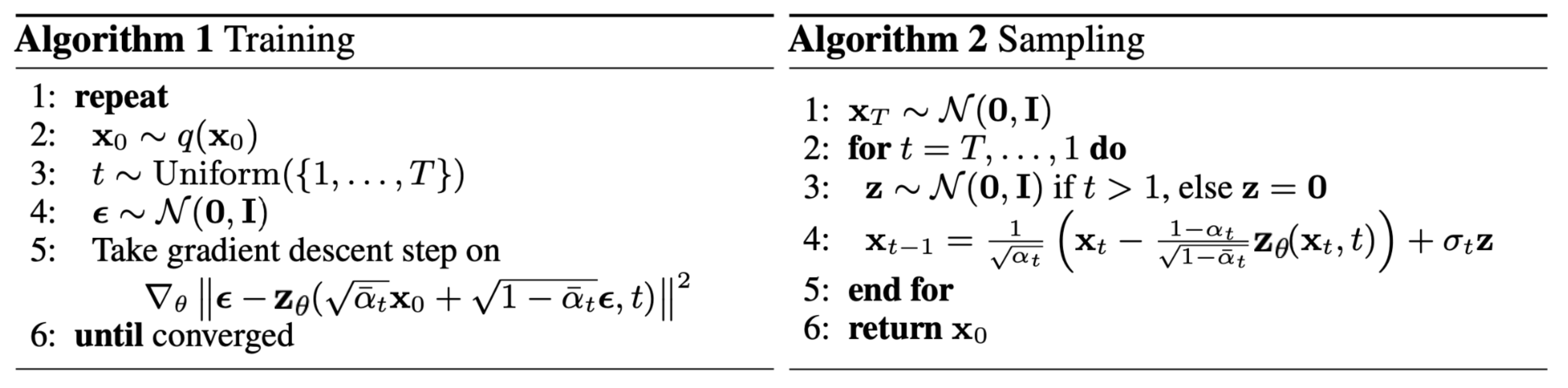

Training and sampling

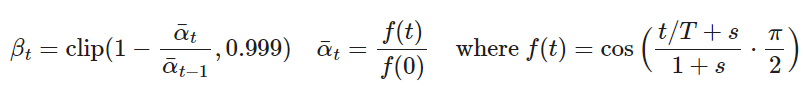
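The two procedures can be sketched in a few lines. This is not the authors' code, only a toy under loud assumptions: the data is a single scalar point `X0`, the "network" is replaced by an oracle `oracle_noise` that returns the exact noise for that point (a real model would be a trained neural network), the schedule is linear from $10^{-4}$ to $0.02$, and $\sigma_t^2 = \beta_t$. One training term is the squared error between the true and predicted noise at a random step; sampling applies $\mathbf x_{t-1} = \frac{1}{\sqrt{\alpha_t}}\big(\mathbf x_t - \frac{\beta_t}{\sqrt{1-\overline\alpha_t}}\,\mathbf z_\theta(\mathbf x_t, t)\big) + \sigma_t \mathbf z$, with no noise added at the last step.

```python
import math
import random

T = 1000
# Assumed linear schedule; list index t-1 holds beta_t / alpha_bar_t.
betas = [1e-4 + i * (0.02 - 1e-4) / (T - 1) for i in range(T)]
abar, prod = [], 1.0
for b in betas:
    prod *= 1.0 - b
    abar.append(prod)

X0 = 1.5  # toy "dataset": a single scalar data point

def oracle_noise(x_t, t):
    """Stand-in for a trained z_theta(x_t, t): exact noise when all data equals X0."""
    return (x_t - math.sqrt(abar[t - 1]) * X0) / math.sqrt(1.0 - abar[t - 1])

def simple_loss_term(noise_model, x0, rng):
    """One Monte Carlo term of the simplified loss (what a training step would minimize)."""
    t = rng.randint(1, T)                      # t ~ Uniform{1..T}
    z = rng.gauss(0.0, 1.0)                    # z ~ N(0, 1)
    x_t = math.sqrt(abar[t - 1]) * x0 + math.sqrt(1.0 - abar[t - 1]) * z
    return (z - noise_model(x_t, t)) ** 2

def sample(noise_model, rng):
    """Ancestral sampling from x_T ~ N(0, 1) down to x_0, with sigma_t^2 = beta_t."""
    x = rng.gauss(0.0, 1.0)
    for t in range(T, 0, -1):
        beta_t = betas[t - 1]
        mean = (x - beta_t / math.sqrt(1.0 - abar[t - 1]) * noise_model(x, t)) \
            / math.sqrt(1.0 - beta_t)
        noise = rng.gauss(0.0, 1.0) if t > 1 else 0.0  # no noise at the final step
        x = mean + math.sqrt(beta_t) * noise
    return x

rng = random.Random(0)
loss = simple_loss_term(oracle_noise, X0, rng)
generated = sample(oracle_noise, rng)
print("loss with oracle model:", loss)
print("generated sample:", generated, "(data point is", X0, ")")
```

With a perfect noise predictor the loss term is zero up to rounding and the sampler returns the data point, which is a handy sanity check before swapping in a real network.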

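Choice of $\beta_t$

In DDPM, the authors let $\beta_t$ increase linearly from $\beta_1 = 10^{-4}$ to

$\beta_T = 0.02$. However, experiments show that the NLL (negative log-likelihood) of the resulting model is not competitive with other generative models.

Several tricks have been proposed for choosing $\beta_t$.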

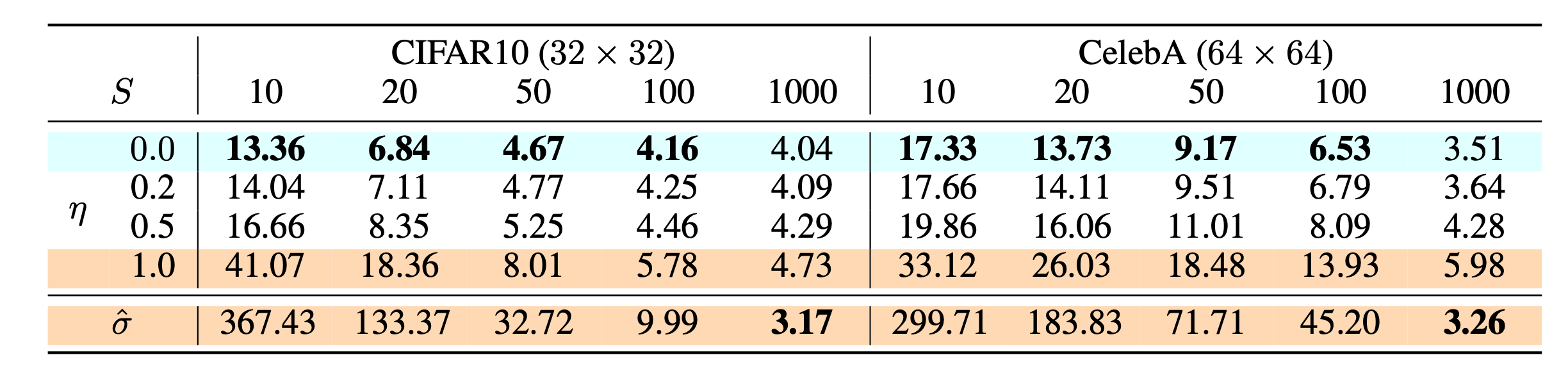
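For comparison, here is a sketch of two schedules side by side: the linear one from DDPM and, as an assumed example of such a trick, the cosine schedule proposed by Nichol & Dhariwal (2021), where $\overline\alpha_t = f(t)/f(0)$ with $f(t) = \cos^2\!\big(\tfrac{t/T + s}{1+s}\cdot\tfrac{\pi}{2}\big)$, $s = 0.008$, and $\beta_t$ clipped at $0.999$. The function names are mine.

```python
import math

def linear_beta_schedule(T, beta_start=1e-4, beta_end=0.02):
    """Linearly increasing beta_1..beta_T (list index t-1 holds beta_t)."""
    step = (beta_end - beta_start) / (T - 1)
    return [beta_start + i * step for i in range(T)]

def cosine_beta_schedule(T, s=0.008, max_beta=0.999):
    """Cosine schedule: beta_t = 1 - f(t)/f(t-1), clipped at max_beta."""
    def f(t):
        return math.cos((t / T + s) / (1.0 + s) * math.pi / 2.0) ** 2
    return [min(1.0 - f(t) / f(t - 1), max_beta) for t in range(1, T + 1)]

def alpha_bars(betas):
    """Cumulative product of (1 - beta_t)."""
    out, prod = [], 1.0
    for b in betas:
        prod *= 1.0 - b
        out.append(prod)
    return out

T = 1000
lin_abar = alpha_bars(linear_beta_schedule(T))
cos_abar = alpha_bars(cosine_beta_schedule(T))
for t in (1, 250, 500, 750, 1000):
    print(f"t={t:4d}  linear alpha_bar={lin_abar[t - 1]:.4f}  cosine alpha_bar={cos_abar[t - 1]:.4f}")
```

The printout shows the general pattern: under the linear schedule $\overline\alpha_t$ drops toward zero well before the end of the chain, while the cosine schedule destroys information more gradually in the middle steps.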

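Choice of $\boldsymbol\Sigma_\theta$

In DDPM, the authors keep $\beta_t$ fixed and set

$\boldsymbol\Sigma_\theta(\mathbf x_t, t) = \sigma_t^2 \mathbf I$, where $\sigma_t^2$ is not learned but set to $\beta_t$ or $\tilde\beta_t = \cfrac{1-\overline\alpha_{t-1}}{1-\overline\alpha_t}\,\beta_t$, because making $\boldsymbol\Sigma_\theta$ learnable leads to unstable training.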

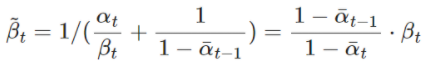

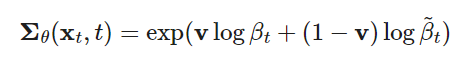

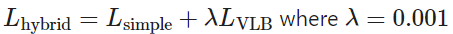

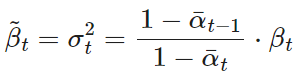

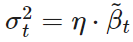

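Nichol & Dhariwal (2021) set $\boldsymbol\Sigma_\theta$ to an interpolation between $\beta_t$ and $\tilde\beta_t$, with the model predicting a mixing vector $\mathbf v$:

$$\boldsymbol\Sigma_\theta(\mathbf x_t, t) = \exp\left(\mathbf v \log\beta_t + (1-\mathbf v)\log\tilde\beta_t\right)$$

However, $L_\text{simple}$ does not depend on $\boldsymbol\Sigma_\theta$, so they add a term to the loss, changing it to $L_\text{hybrid} = L_\text{simple} + \lambda L_\text{VLB}$ with $\lambda = 0.001$, and stop the gradient on $\boldsymbol\mu_\theta$ in the $L_\text{VLB}$ term so that it is only used to optimize $\boldsymbol\Sigma_\theta$.

The authors observed that $L_\text{VLB}$ is hard to optimize (noisy gradients), so they use a time-averaged version of $L_\text{VLB}$ with importance sampling.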
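The interpolation is a log-domain blend between the two fixed choices of variance, $\exp(v \log\beta_t + (1-v)\log\tilde\beta_t)$, combined with a hybrid loss $L_\text{simple} + \lambda L_\text{VLB}$. A minimal scalar sketch follows, assuming the mixing value $v$ lies in $[0, 1]$; the numeric values and the names `interpolated_variance` and `hybrid_loss` are purely illustrative.

```python
import math

def interpolated_variance(v, beta_t, beta_tilde_t):
    """exp(v * log(beta_t) + (1 - v) * log(beta_tilde_t)) for a scalar v in [0, 1]."""
    return math.exp(v * math.log(beta_t) + (1.0 - v) * math.log(beta_tilde_t))

def hybrid_loss(l_simple, l_vlb, lam=0.001):
    """L_hybrid = L_simple + lambda * L_VLB, with a small weight on the VLB term."""
    return l_simple + lam * l_vlb

beta_t, beta_tilde_t = 0.01, 0.008  # illustrative values with beta_tilde_t < beta_t
for v in (0.0, 0.5, 1.0):
    print("v =", v, "-> variance =", interpolated_variance(v, beta_t, beta_tilde_t))
print("hybrid loss:", hybrid_loss(1.0, 10.0))
```

At $v = 0$ the variance is $\tilde\beta_t$, at $v = 1$ it is $\beta_t$, and values in between give a geometric interpolation. The stop-gradient on $\boldsymbol\mu_\theta$ is not shown here because it depends on the autodiff framework in use.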

DDIM

Sampling from DDPM is slow, because generating a single image requires many iterations.

“For example, it takes around 20 hours to sample 50k images of size 32 × 32 from a DDPM, but less than a minute to do so from a GAN on an Nvidia 2080 Ti GPU.”

A very intuitive idea is to take a sampling update only every $\lceil T/S \rceil$ steps, which reduces the number of sampling steps to $S$.

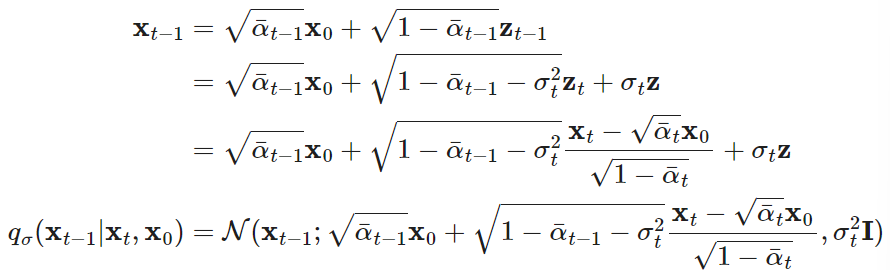

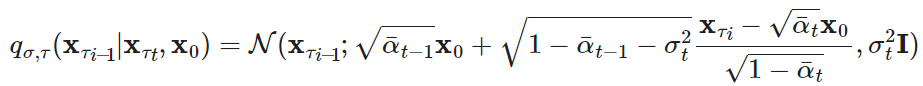

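Let the sampling schedule be $\{\tau_1, \dots, \tau_S\}$, where $\tau_1 < \tau_2 < \dots < \tau_S \in [1, T]$ and $S < T$.

The DDIM authors (Song et al., 2020) rewrite $q_\sigma(\mathbf x_{t-1} \vert \mathbf x_t, \mathbf x_0)$ as:

$$\begin{aligned}
\mathbf x_{t-1} &= \sqrt{\overline\alpha_{t-1}}\,\mathbf x_0 + \sqrt{1-\overline\alpha_{t-1}}\,\mathbf z_{t-1} \\
&= \sqrt{\overline\alpha_{t-1}}\,\mathbf x_0 + \sqrt{1-\overline\alpha_{t-1}-\sigma_t^2}\,\mathbf z_t + \sigma_t \mathbf z \\
&= \sqrt{\overline\alpha_{t-1}}\,\mathbf x_0 + \sqrt{1-\overline\alpha_{t-1}-\sigma_t^2}\,\cfrac{\mathbf x_t - \sqrt{\overline\alpha_t}\,\mathbf x_0}{\sqrt{1-\overline\alpha_t}} + \sigma_t \mathbf z
\end{aligned}$$

$$q_\sigma(\mathbf x_{t-1} \vert \mathbf x_t, \mathbf x_0) = \mathcal N\left(\mathbf x_{t-1}; \sqrt{\overline\alpha_{t-1}}\,\mathbf x_0 + \sqrt{1-\overline\alpha_{t-1}-\sigma_t^2}\,\cfrac{\mathbf x_t - \sqrt{\overline\alpha_t}\,\mathbf x_0}{\sqrt{1-\overline\alpha_t}},\ \sigma_t^2 \mathbf I\right)$$

Comparing with $q(\mathbf x_{t-1} \vert \mathbf x_t, \mathbf x_0) = \mathcal N(\mathbf x_{t-1}; \tilde{\boldsymbol\mu}(\mathbf x_t, \mathbf x_0), \tilde\beta_t \mathbf I)$ gives $\tilde\beta_t = \sigma_t^2 = \cfrac{1-\overline\alpha_{t-1}}{1-\overline\alpha_t}\,\beta_t$. Let $\sigma_t^2 = \eta \cdot \tilde\beta_t$, where $\eta \in \mathbb R^+$ controls the stochasticity of sampling. When $\eta = 0$, sampling is deterministic.

DDIM has the same marginal noise distribution as DDPM, but it maps noise back to the original data domain deterministically.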

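Generation

When generating images, we only need to sample a subset $\{\tau_1, \dots, \tau_S\}$ of the $T$ reverse-process steps. The generation process then becomes:

$$q_{\sigma,\tau}(\mathbf x_{\tau_{i-1}} \vert \mathbf x_{\tau_i}, \mathbf x_0) = \mathcal N\left(\mathbf x_{\tau_{i-1}}; \sqrt{\overline\alpha_{\tau_{i-1}}}\,\mathbf x_0 + \sqrt{1-\overline\alpha_{\tau_{i-1}}-\sigma_{\tau_i}^2}\,\cfrac{\mathbf x_{\tau_i} - \sqrt{\overline\alpha_{\tau_i}}\,\mathbf x_0}{\sqrt{1-\overline\alpha_{\tau_i}}},\ \sigma_{\tau_i}^2 \mathbf I\right)$$

When $S$ is small, $\eta = 0$ (deterministic, DDIM) produces the best-quality samples, while $\eta = 1$ (DDPM) produces poor-quality ones.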
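A strided DDIM sampler can be sketched as follows. The assumptions are the same toy setup as elsewhere in this kind of illustration: scalar data, a linear schedule from $10^{-4}$ to $0.02$, and an oracle `oracle_noise` standing in for a trained noise predictor. Each step first estimates $\mathbf x_0$ from the predicted noise, then jumps to the previous step of the sub-schedule with $\sigma^2 = \eta\,\tilde\beta$, where for a strided jump $\tilde\beta = \frac{1-\overline\alpha_\text{prev}}{1-\overline\alpha_t}\big(1 - \frac{\overline\alpha_t}{\overline\alpha_\text{prev}}\big)$. All function names are mine.

```python
import math
import random

T = 1000
# Assumed linear schedule; abar[t-1] holds alpha_bar_t.
betas = [1e-4 + i * (0.02 - 1e-4) / (T - 1) for i in range(T)]
abar, prod = [], 1.0
for b in betas:
    prod *= 1.0 - b
    abar.append(prod)

X0 = 1.5  # toy data point used by the oracle below

def oracle_noise(x_t, t):
    """Stand-in for a trained z_theta(x_t, t): exact noise when all data equals X0."""
    return (x_t - math.sqrt(abar[t - 1]) * X0) / math.sqrt(1.0 - abar[t - 1])

def strided_schedule(T, S):
    """Increasing steps tau_1 < ... ending at T, spaced ceil(T/S) apart (at most S steps)."""
    stride = math.ceil(T / S)
    return list(range(T, 0, -stride))[::-1]

def ddim_step(x_t, z_pred, abar_t, abar_prev, eta, rng):
    """One jump from step t to the previous step of the sub-schedule."""
    x0_pred = (x_t - math.sqrt(1.0 - abar_t) * z_pred) / math.sqrt(abar_t)
    beta_tilde = (1.0 - abar_prev) / (1.0 - abar_t) * (1.0 - abar_t / abar_prev)
    sigma2 = eta * beta_tilde
    noise = rng.gauss(0.0, 1.0) if sigma2 > 0.0 else 0.0  # eta = 0 uses no randomness
    direction = math.sqrt(max(1.0 - abar_prev - sigma2, 0.0)) * z_pred
    return math.sqrt(abar_prev) * x0_pred + direction + math.sqrt(sigma2) * noise

def ddim_sample(noise_model, x_T, taus, eta=0.0, rng=None):
    """Run the reverse process over the sub-schedule taus, starting from x_T."""
    rng = rng or random.Random()
    x = x_T
    for i in range(len(taus) - 1, -1, -1):
        t = taus[i]
        abar_prev = abar[taus[i - 1] - 1] if i > 0 else 1.0  # alpha_bar_0 = 1
        x = ddim_step(x, noise_model(x, t), abar[t - 1], abar_prev, eta, rng)
    return x

taus = strided_schedule(T, 50)
x_T = random.Random(0).gauss(0.0, 1.0)
result = ddim_sample(oracle_noise, x_T, taus, eta=0.0)
print("steps used:", len(taus), "result:", result, "(data point is", X0, ")")
```

With $\eta = 0$ the output depends only on the starting noise $\mathbf x_T$, so the same $\mathbf x_T$ always maps to the same sample; setting $\eta = 1$ reintroduces the DDPM-style noise at every jump.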