paper: One-Class Graph Neural Networks for Anomaly Detection in Attributed Networks

codebase: https://github.com/WangXuhongCN/OCGNN

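This paper comes from Shanghai Jiao Tong University and Berkeley and tackles graph anomaly detection. The idea is simple and easy to follow. I do not know which journal or conference it was accepted to.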

Intuition

In some datasets the labeled data is anomaly-free: every labeled node is normal, and the model has to predict whether each of the remaining nodes is normal or abnormal.

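The authors' idea is to model a hypersphere: points inside the sphere are normal, points outside it are abnormal. A GNN is used to extract the features of the graph.

In effect, the method fuses the two ideas.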
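The decision rule described above can be sketched in a few lines. This is a minimal illustration, not the authors' code: the function names, the toy 2-D embeddings, and the choice of squared distance minus squared radius as the score are assumptions made for the example.

```python
def anomaly_score(embedding, center, radius):
    """Squared distance to the center minus the squared radius.

    Positive means outside the hypersphere (flagged abnormal);
    zero or negative means inside or on it (treated as normal).
    """
    dist_sq = sum((e - c) ** 2 for e, c in zip(embedding, center))
    return dist_sq - radius ** 2


def is_anomaly(embedding, center, radius):
    return anomaly_score(embedding, center, radius) > 0


# Hypothetical 2-D embeddings; in the paper they would come from a GNN.
center = [0.0, 0.0]
radius = 1.0
inside = [0.3, 0.4]    # distance 0.5 from the center
outside = [3.0, 4.0]   # distance 5.0 from the center
```

Calling `is_anomaly(inside, center, radius)` and `is_anomaly(outside, center, radius)` separates the two toy points by which side of the sphere they fall on.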

Theoretical derivation

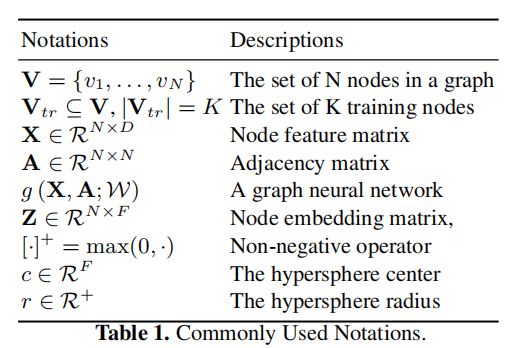

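Some notation first: the graph is $\mathcal{G} = (\mathcal{V}, \mathcal{E}, \bm X)$, where $\mathcal{V}$ is the set of nodes, $\mathcal{E}$ is the set of edges, and $\bm X$ is the data attached to the nodes. $\mathcal{V}_{tr}$ denotes the nodes used for training, $\bm c$ is the center of the sphere, and $r$ is its radius.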

The authors want this sphere to be as compact as possible while still enclosing as many normal nodes as possible.

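This leads to the following objective:

$$\min_{r, \bm c, \bm \xi}\; r^2 + \frac{1}{\beta K}\sum_{i=1}^{K}\xi_i \quad \text{s.t.}\quad \left\|\phi(\bm x_i) - \bm c\right\|^2 \le r^2 + \xi_i,\;\; \xi_i \ge 0$$

Here $\phi$ is a mapping from the data space into another Hilbert space, $K$ is the number of training nodes, and $\xi_i$ are slack variables. The first term asks for the sphere (its volume) to be as small as possible. The second term relaxes the requirement, so that not every normal point has to lie inside the sphere.

$\beta$ controls the trade-off between the volume of the sphere and the penalty.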

GCN

Readers who are familiar with GNNs can skip this section.

For the $l$-th layer of a graph neural network, let the input be $\bm H^{(l)}$ and the output be $\bm H^{(l+1)}$, that is,

$$\bm H^{(l+1)}=g\left(\bm H^{(l)},\bm A;\bm W^{(l)}\right)$$

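GCN itself is not covered in detail in these notes. Its propagation rule is

$$\bm H^{(l+1)} = \sigma\left(\tilde{\bm D}^{-\frac{1}{2}}\tilde{\bm A}\tilde{\bm D}^{-\frac{1}{2}}\bm H^{(l)}\bm W^{(l)}\right)$$

where $\tilde{\bm A} = \bm A + \bm I$ is the adjacency matrix with self-loops added, and $\tilde{\bm D}$ is a diagonal matrix computed from it, $\tilde{\bm D}_{ii} = \sum_j \tilde{\bm A}_{ij}$.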
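To make the GCN propagation rule concrete, here is a dependency-free sketch of a single layer on a dense adjacency matrix. The helper names, the toy path graph, the weight values, and the use of ReLU as the activation are assumptions for illustration; a real implementation would use a library such as DGL or PyTorch Geometric with sparse operations.

```python
import math


def matmul(a, b):
    """Plain-Python matrix product of two lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def normalized_adjacency(adj):
    """Return D~^{-1/2} (A + I) D~^{-1/2} for a dense adjacency matrix."""
    n = len(adj)
    a_tilde = [[adj[i][j] + (1.0 if i == j else 0.0) for j in range(n)] for i in range(n)]
    deg = [sum(row) for row in a_tilde]  # diagonal of D~, always >= 1 due to self-loops
    return [[a_tilde[i][j] / math.sqrt(deg[i] * deg[j]) for j in range(n)] for i in range(n)]


def gcn_layer(h, adj, weight):
    """One propagation step: ReLU(A_hat @ H @ W)."""
    a_hat = normalized_adjacency(adj)
    z = matmul(matmul(a_hat, h), weight)
    return [[max(0.0, v) for v in row] for row in z]


# Toy graph: 3 nodes on a path 0 - 1 - 2, with 2 features per node.
adj = [[0, 1, 0], [1, 0, 1], [0, 1, 0]]
h0 = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
w0 = [[1.0, -1.0], [0.5, 0.5]]  # hypothetical weights mapping 2 -> 2 dimensions
h1 = gcn_layer(h0, adj, w0)
```

Stacking several such layers, each with its own weight matrix, gives the node embeddings that are later compared against the sphere center.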

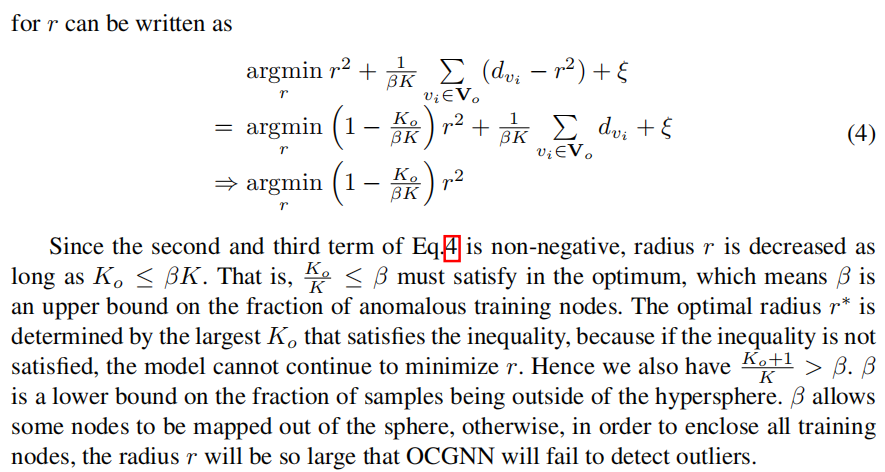

Optimization objective

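With the GCN in place, the loss can be designed:

$$L(r, \bm W) = \frac{1}{\beta K}\sum_{v_i \in \mathcal{V}_{tr}}\left[\left\|g(\bm X, \bm A; \bm W)_{v_i} - \bm c\right\|^2 - r^2\right]^+ + r^2 + \frac{\lambda}{2}\sum_{l}\left\|\bm W^{(l)}\right\|_F^2$$

where $K$ is the number of training nodes in $\mathcal{V}_{tr}$ and $[\cdot]^+ = \max(0, \cdot)$. Because of the $[\cdot]^+$ in the first term, only points that fall outside the sphere incur a loss. The second term keeps the sphere compact so that it does not grow too large. The third term is a regularizer that guards against overfitting.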
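The three terms of the loss can be checked on a toy example. The sketch below assumes the loss has the hinge, squared-radius, and weight-decay form described in the text; the function name `ocgnn_loss`, its parameters, and the toy embeddings are hypothetical and not taken from the authors' repository.

```python
def ocgnn_loss(embeddings, center, radius, beta, weights=(), weight_decay=0.0):
    """Hinge penalty for nodes outside the sphere + r^2 + L2 penalty on weights."""
    k = len(embeddings)
    hinge = 0.0
    for z in embeddings:
        dist_sq = sum((zi - ci) ** 2 for zi, ci in zip(z, center))
        hinge += max(0.0, dist_sq - radius ** 2)  # zero for nodes inside the sphere
    l2 = sum(w ** 2 for matrix in weights for row in matrix for w in row)
    return hinge / (beta * k) + radius ** 2 + 0.5 * weight_decay * l2


center = [0.0, 0.0]
embeddings = [[0.0, 0.5], [2.0, 0.0]]  # first node inside r = 1, second outside
loss = ocgnn_loss(embeddings, center, radius=1.0, beta=0.5)
```

Only the second node contributes to the first term here; if every node sat inside the sphere, the loss would reduce to the squared radius plus the regularizer.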

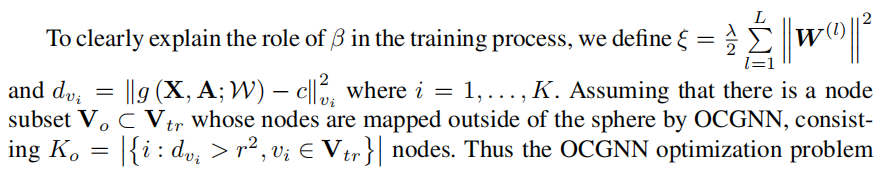

The role of $\beta$

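The authors explain the role of this hyperparameter in detail; I will not go into it here.

In short, it controls the proportion of normal points that are allowed to fall outside the sphere.

In their experiments, the authors set $\beta$ to 0.1.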
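One way to see how such a hyperparameter bounds the fraction of points outside the sphere is to pick the radius as a quantile of the training distances. The sketch below is an assumption about how this could be done (a quantile-based radius in the style of soft-boundary Deep SVDD), not a statement of the authors' exact procedure; `radius_from_quantile` and the toy data are hypothetical.

```python
import math


def distance(z, center):
    """Euclidean distance between an embedding and the center."""
    return math.sqrt(sum((zi - ci) ** 2 for zi, ci in zip(z, center)))


def radius_from_quantile(embeddings, center, beta):
    """Pick r so that roughly a beta fraction of nodes falls outside the sphere."""
    dists = sorted(distance(z, center) for z in embeddings)
    # Position of the (1 - beta) quantile, clamped to a valid index.
    idx = min(len(dists) - 1, max(0, math.ceil((1 - beta) * len(dists)) - 1))
    return dists[idx]


center = [0.0]
embeddings = [[float(i)] for i in range(1, 11)]  # distances 1, 2, ..., 10
r = radius_from_quantile(embeddings, center, beta=0.1)
outside = sum(1 for z in embeddings if distance(z, center) > r)
```

With ten training nodes and a value of 0.1, the radius lands on the ninth-largest distance, leaving a single node outside the sphere.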

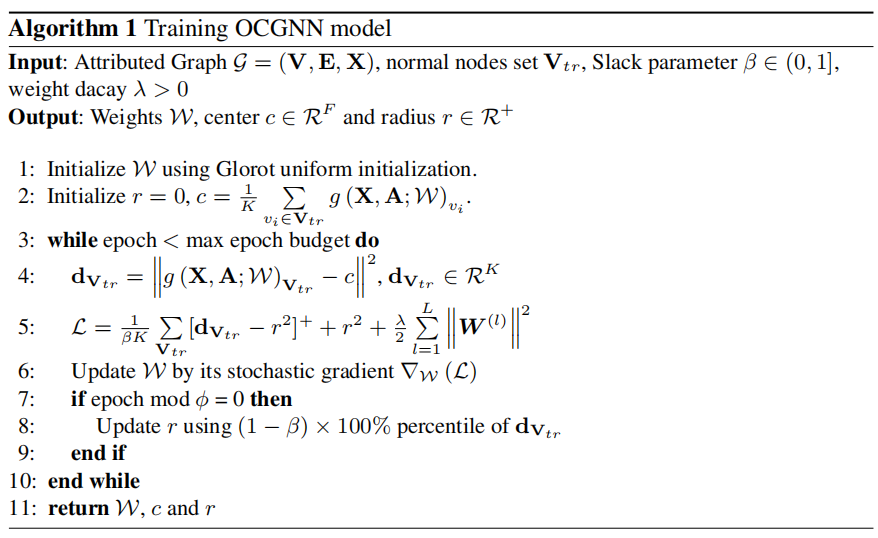

Pseudocode

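It is worth noting that the authors fix the center of the sphere $\bm c$ outright and update only the remaining quantities (the radius $r$, alongside the network weights). They argue that the initial $\bm c$ is already enough to ensure that all training samples lie around $\bm c$.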
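These notes do not say how the initial center is obtained. A common choice in Deep SVDD-style methods, assumed here purely for illustration, is the mean of the training-node embeddings produced by the untrained network, computed once and then frozen. The names `init_center` and `mean_sq_distance` and the toy embeddings are hypothetical.

```python
def init_center(initial_embeddings):
    """Mean of the training-node embeddings from the untrained network."""
    n = len(initial_embeddings)
    dim = len(initial_embeddings[0])
    return [sum(z[d] for z in initial_embeddings) / n for d in range(dim)]


def mean_sq_distance(embeddings, center):
    """Average squared distance of the embeddings to the fixed center."""
    total = sum(
        sum((zi - ci) ** 2 for zi, ci in zip(z, center)) for z in embeddings
    )
    return total / len(embeddings)


# Hypothetical embeddings from the initial forward pass.
initial_embeddings = [[1.0, 2.0], [3.0, 4.0], [5.0, 0.0]]
center = init_center(initial_embeddings)  # computed once, then kept fixed
spread = mean_sq_distance(initial_embeddings, center)
```

Because the center is the mean of the initial embeddings, the training nodes start out scattered around it, and training then only has to pull them closer rather than move the center itself.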

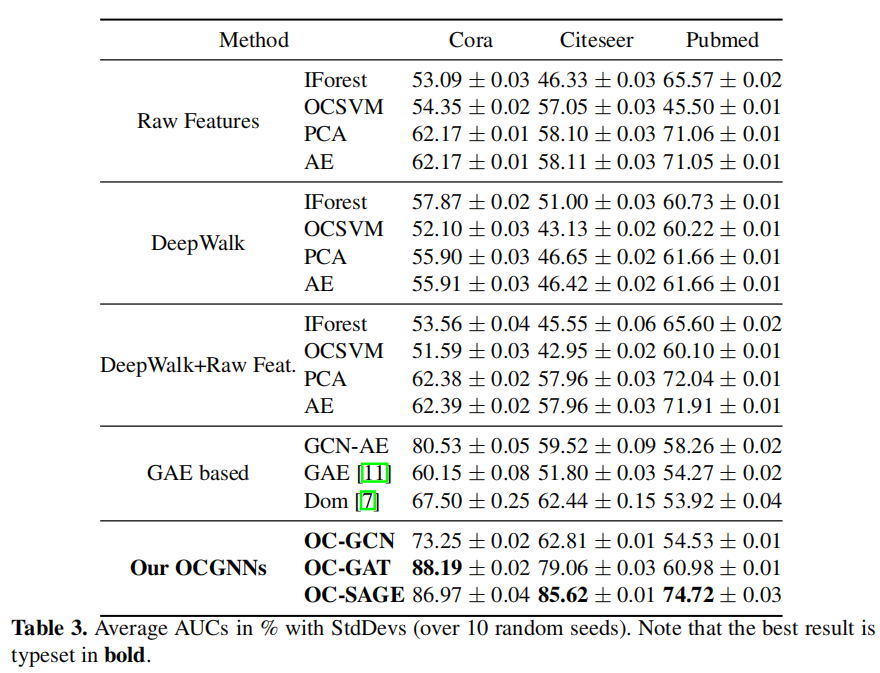

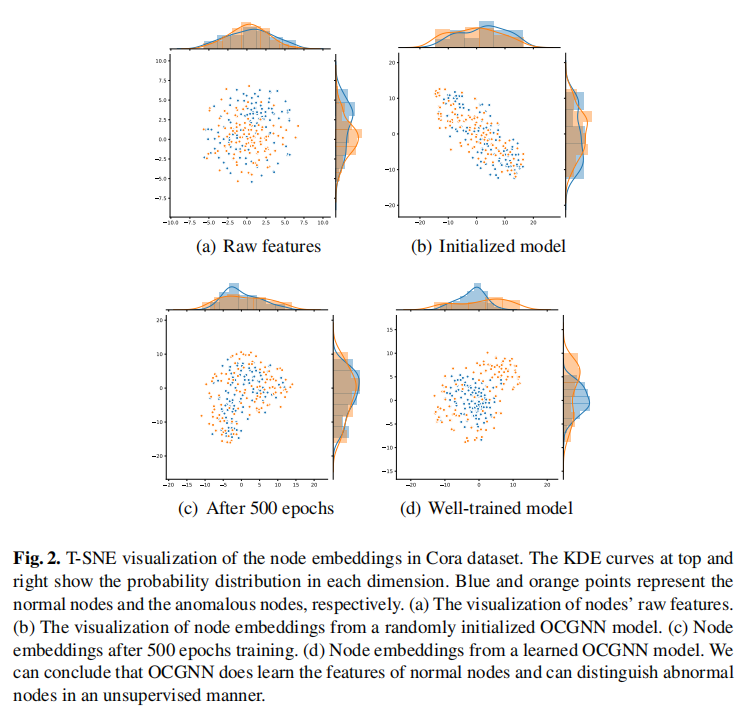

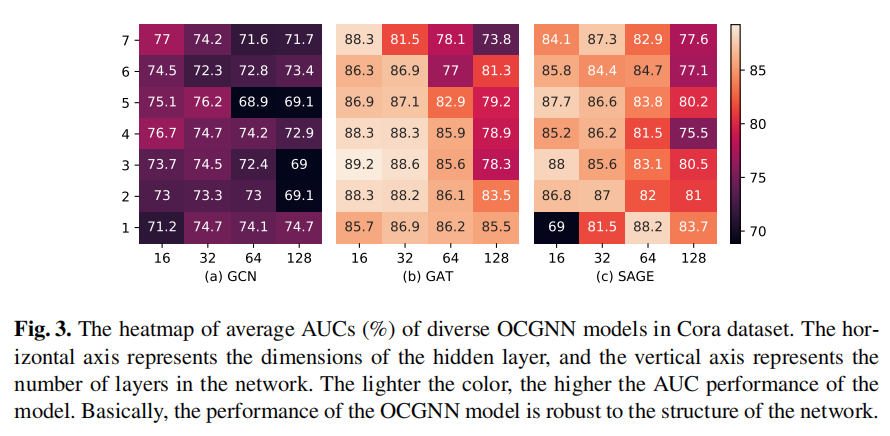

Nice-looking numbers and figures