1. 支持向量机

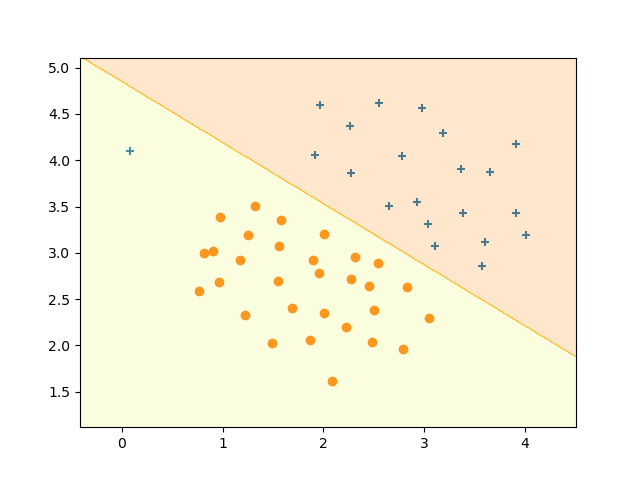

1.1 可视化数据集1

data = load_mat_file('./data/ex6data1.mat')X = data['X']y = data['y']plt.ion()plt.figure()plot_data(X, y)plt.pause(1)plt.close()def plot_data(x, y):# 取出那些行中,列号为0的元素等于1的行号。返回的是一个元组,其中存在一个元素,类型为ndarray,使用[0]取出这个这个矩阵(一维向量)。pos = np.where(y[:, 0] == 1)[0]neg = np.where(y[:, 0] == 0)[0]# pos代表那些正样本的行索引,列号为0代表横坐标。1代表那些正样本的纵坐标。plt.scatter(x[pos, 0], x[pos, 1], marker='+')plt.scatter(x[neg, 0], x[neg, 1], marker='o')

1.2 调用sklearn中的svm

C = 1Classification = SVC(C=C, kernel='linear')# fit(X, y, sample_weight=None), y : array-like, shape (n_samples,)# y.raval返回y的一维向量形式,按照每行降维,返回的是一个视图,修改会影响原值。Classification.fit(X, y.ravel())plot_pad = 0.5plot_x_min, plot_x_max = X[:, 0].min() - plot_pad, X[:, 0].max() + plot_padplot_y_min, plot_y_max = X[:, 1].min() - plot_pad, X[:, 1].max() + plot_padplot_step = 0.01# 按照0.01的间隔生成min到max之间的一系列网格矩阵。plot_x, plot_y = np.meshgrid(np.arange(plot_x_min, plot_x_max, plot_step),np.arange(plot_y_min, plot_y_max, plot_step))#np.c_,左右连接,要求行数相等plot_z = Classification.predict(np.c_[plot_x.ravel(), plot_y.ravel()]).reshape(plot_x.shape)#绘制等高线图,alpha代表透明度,1为完全透明plt.contourf(plot_x, plot_y, plot_z, cmap="Wistia", alpha=0.2)plt.pause(1)plt.close()

有高斯核的SVM

高斯核实现

theta参数决定着,相似程度的下降速度。theta越大,高斯曲线越平缓,相似度下降度慢。反之,相似度下降越快。

def gaussian_kernel(x1, x2, sigma):#linalg.norm,求向量的2范数return np.exp(-((linalg.norm(x1 - x2)) ** 2) / (2 * (sigma ** 2)))

可视化数据集2

RBF指的就是高斯核函数。当C为100时,产生过拟合,其表现为:决策边界强制区分正负样本。

Classification = SVC(C=100, kernel='rbf', gamma=6)# fit(X, y, sample_weight=None), y : array-like, shape (n_samples,)Classification.fit(X, y.ravel())plot_pad = 0.5plot_x_min, plot_x_max = X[:, 0].min() - plot_pad, X[:, 0].max() + plot_padplot_y_min, plot_y_max = X[:, 1].min() - plot_pad, X[:, 1].max() + plot_padplot_step = 0.01plot_x, plot_y = np.meshgrid(np.arange(plot_x_min, plot_x_max, plot_step),np.arange(plot_y_min, plot_y_max, plot_step))plot_z = Classification.predict(np.c_[plot_x.ravel(), plot_y.ravel()]).reshape(plot_x.shape)plt.contourf(plot_x, plot_y, plot_z, cmap="Wistia", alpha=0.2)plt.axis([-0.1, 1.1, 0.3, 1.05])plt.pause(1)plt.close()

可视化数据集3

Classification = SVC(C=1, kernel='poly', degree=3, gamma=10)# fit(X, y, sample_weight=None), y : array-like, shape (n_samples,)Classification.fit(X, y.ravel())plot_pad = 0.5plot_x_min, plot_x_max = X[:, 0].min() - plot_pad, X[:, 0].max() + plot_padplot_y_min, plot_y_max = X[:, 1].min() - plot_pad, X[:, 1].max() + plot_padplot_step = 0.01plot_x, plot_y = np.meshgrid(np.arange(plot_x_min, plot_x_max, plot_step),np.arange(plot_y_min, plot_y_max, plot_step))plot_z = Classification.predict(np.c_[plot_x.ravel(), plot_y.ravel()]).reshape(plot_x.shape)plt.contourf(plot_x, plot_y, plot_z, cmap="Wistia", alpha=0.2)plt.axis([-0.8, 0.4, -0.8, 0.8])plt.pause(1)plt.close()

垃圾邮件分类

邮件预处理

def process_email(email_contents):# Load Vocabularyvocab_list = get_vocab_list()[:, 1]word_indices = []# ========================== Preprocess Email ===========================# Lower caseemail_contents = str(email_contents)email_contents = email_contents.lower()# Strip all HTML# Looks for any expression that starts with < and ends with > and replace# and does not have any < or > in the tag it with a space# [^<>]表示除了<>的字符匹配1次或者多次email_contents = re.sub(r'<[^<>]+>', ' ', email_contents)# Handle Numbers# Look for one or more characters between 0-9email_contents = re.sub(r'[0-9]+', 'number', email_contents)# Handle URLS# Look for strings starting with http:// or https://email_contents = re.sub(r'(http|https)://[^\s]*', 'httpaddr', email_contents)# Handle Email Addresses# Look for strings with @ in the middleemail_contents = re.sub(r'[^\s]+@[^\s]+', 'emailaddr', email_contents)# Handle $ signemail_contents = re.sub(r'[$]+', 'dollar', email_contents)# ========================== Tokenize Email ===========================# Output the email to screen as wellprint('\n==== Processed Email ====\n\n')# Process filel_count = 0# Tokenize and also get rid of any punctuationpartition_text = re.split(r'[ @$/#.-:&*+=\[\]?!(){},\'\">_<;%\n\f]', email_contents)stemmer = PorterStemmer()for one_word in partition_text:if one_word != '':# Remove any non alphanumeric charactersone_word = re.sub(r'[^a-zA-Z0-9]', '', one_word)# Stem the word# (the porterStemmer sometimes has issues, so we use a try catch block)one_word = stemmer.stem(one_word)# % Skip the word if it is too short.if str == '':continuetemp = np.argwhere(vocab_list == one_word)if temp.size == 1:word_indices.append(temp.min())# % Print to screen, ensuring that the output lines are not too longif (l_count + len(one_word) + 1) > 78:print('\n')l_count = 0print('%s' % one_word, end=' ')l_count = l_count + len(one_word) + 1print('\n')# Print footerprint('\n\n=========================\n')return np.array(word_indices)

特征提取

def email_features(word_indices):

# Total number of words in the dictionary

n = 1899

# You need to return the following variables correctly.

x = np.zeros((n, 1))

for i in range(word_indices.size):

x[word_indices[i]] = 1

return x

为垃圾邮件分类训练SVM

# =========== Part 3: Train Linear SVM for Spam Classification ========

# In this section, you will train a linear classifier to determine if an

# email is Spam or Not-Spam.

# Load the Spam Email dataset

data = load_mat_file('../data/spamTrain.mat')

print('\nTraining Linear SVM (Spam Classification)\n')

print('(this may take 1 to 2 minutes) ...\n')

X = data['X']

y = data['y'].ravel()

C = 0.1

Classification = SVC(C=C, kernel='linear')

Classification.fit(X, y)

p = Classification.predict(X)

print('Training Accuracy: {:.2f}\n'.format((np.mean((p == y)) * 100)))

print('Program paused. Press enter to continue.\n')

# pause_func()

# =================== Part 4: Test Spam Classification ================

data = load_mat_file('../data/spamTest.mat')

Xtest = data['Xtest']

ytest = data['ytest'].ravel()

p = Classification.predict(Xtest)

print('Test Accuracy: {:.2f}\n'.format((np.mean((p == ytest)) * 100)))

print('Program paused. Press enter to continue.\n')

测试

# =================== Part 4: Test Spam Classification ================

data = load_mat_file('../data/spamTest.mat')

Xtest = data['Xtest']

ytest = data['ytest'].ravel()

p = Classification.predict(Xtest)

print('Test Accuracy: {:.2f}\n'.format((np.mean((p == ytest)) * 100)))

print('Program paused. Press enter to continue.\n')

# pause_func()

# ================= Part 5: Top Predictors of Spam ====================

# np.argsort用于排序分类器的参数大小

index_array = np.argsort(Classification.coef_).ravel()[::-1]

vocab_list = get_vocab_list()[:, 1]

for i in range(15):

print(' %-15s (%f) \n' % (vocab_list[index_array[i]], Classification.coef_[:, index_array[i]]))

print('Program paused. Press enter to continue.\n')