RAID (Redundant Array of Independent Disks)

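The concept of RAID was first proposed and defined at the University of California, Berkeley, in 1988. RAID combines multiple disks into a single array with greater capacity and better fault tolerance. Data is split into segments that are stored on different physical disks, so reads and writes can be spread across the disks to improve overall throughput, and copies of important data can be kept on separate physical disks to provide redundancy.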

RAID 0

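RAID 0 joins two or more physical disks, in hardware or software, into one large volume and writes data to the member disks in turn (striping). In the ideal case, read and write throughput scales with the number of disks, but the failure of any single disk destroys the data on the whole array. In short, RAID 0 improves throughput effectively but provides no redundancy and no error recovery.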
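
To make the striping idea concrete, here is a minimal sketch in shell. It only simulates where chunks would land; the function name stripe_layout and the chunk numbering are assumptions made for illustration, not how the md driver is implemented.

```shell
# Simulate RAID 0 placement: chunk i goes to disk (i mod N).
# stripe_layout is a hypothetical helper for illustration only.
stripe_layout() {
    local disks="$1" chunks="$2" i=0
    while [ "$i" -lt "$chunks" ]; do
        echo "chunk $i -> disk $(( i % disks ))"
        i=$(( i + 1 ))
    done
}

# Four chunks over two disks alternate between disk 0 and disk 1.
stripe_layout 2 4
```

Because consecutive chunks sit on different disks, they can be read or written in parallel, and losing one disk leaves holes in every large file.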

RAID 1

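RAID 1 binds two or more disks together and writes the same data to all of them at once, so each disk is a mirror (a backup copy) of the others. When one disk fails, the array normally keeps serving data from the surviving copy, and the failed disk can usually be hot-swapped to restore redundancy.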
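
The mirroring behaviour can be sketched with two directories standing in for two disks. This is a toy model under that assumption; mirror_write and the directory names are hypothetical.

```shell
# Two directories stand in for two mirrored disks (illustration only).
work=$(mktemp -d)
mkdir "$work/disk_a" "$work/disk_b"

# mirror_write BLOCK DATA: write the same block to every mirror.
mirror_write() {
    printf '%s\n' "$2" > "$work/disk_a/$1"
    printf '%s\n' "$2" > "$work/disk_b/$1"
}

mirror_write 0 "hello"
mirror_write 1 "world"

rm -r "$work/disk_a"              # simulate losing one disk
survivor=$(cat "$work/disk_b/1")  # the data is still readable
echo "block 1 from surviving disk: $survivor"
rm -r "$work"
```

The cost of this safety is capacity: every block is stored once per mirror, so the usable space equals that of a single disk.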

RAID 5

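RAID 5 stores parity information for the data rather than a full copy of it. The parity is not kept on one dedicated disk; it is distributed across the member disks, so that the parity for a given stripe lives on a different disk from that stripe's data, and the loss of any single disk is not fatal. In Figure 7-3, the parity blocks hold this information. In other words, RAID 5 does not back up the real data; when a disk fails, it rebuilds the lost data from the parity and the surviving blocks. This design is a compromise among read/write speed, data safety, and storage cost.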
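
Parity-based recovery can be illustrated with XOR, the operation commonly used for RAID 5 parity. In this sketch each "block" is a single byte value, and xor_all is a hypothetical helper.

```shell
# Parity for one stripe is the XOR of its data blocks.
# Each "block" here is a single byte value, for illustration only.
xor_all() {
    local acc=0 v
    for v in "$@"; do
        acc=$(( acc ^ v ))
    done
    echo "$acc"
}

d0=165   # 0xA5, stored on disk 0
d1=60    # 0x3C, stored on disk 1
parity=$(xor_all "$d0" "$d1")   # stored on disk 2

# Pretend the disk holding d1 failed: rebuild it from the survivors.
rebuilt=$(xor_all "$d0" "$parity")
echo "parity=$parity rebuilt_d1=$rebuilt"   # parity=153 rebuilt_d1=60
```

XOR-ing the surviving blocks with the parity always yields the missing block, which is why one parity block per stripe is enough to survive a single disk failure.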

RAID 10

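RAID 10 is a combination of RAID 1 and RAID 0. As shown in Figure 7-4, it needs at least four disks: the disks are first paired into RAID 1 mirrors for safety, and the mirrors are then striped together with RAID 0 for speed. In theory, as long as the failed disks are not all in the same mirror pair, up to 50% of the disks can fail without data loss. Because RAID 10 inherits the high throughput of RAID 0 and the data safety of RAID 1, it outperforms RAID 5 when cost is not a concern, and it is widely used today.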
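
The capacity trade-offs of the four levels can be compared with a small calculation. This is a rough sketch that assumes equal-sized disks, an even disk count for RAID 10, and no metadata overhead; usable_gib is a hypothetical helper.

```shell
# usable_gib LEVEL DISKS SIZE_GIB: approximate usable capacity.
# Assumes equal-sized disks and ignores metadata overhead.
usable_gib() {
    local level="$1" n="$2" size="$3"
    case "$level" in
        0)  echo $(( n * size )) ;;          # all space, no redundancy
        1)  echo "$size" ;;                  # every disk holds the same data
        5)  echo $(( (n - 1) * size )) ;;    # one disk's worth of parity
        10) echo $(( n / 2 * size )) ;;      # half the disks are mirrors
        *)  echo "unsupported level: $level" >&2; return 1 ;;
    esac
}

usable_gib 10 4 20   # 40
usable_gib 5 3 20    # 40
```

Both results match the roughly 40G arrays that the mdadm examples in this section build from 20 GB disks.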

mdadm

The mdadm command manages software RAID arrays on Linux. Its command line begins with "mdadm [mode]"; the options used in this section are:

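| Option | Purpose |
|---|---|
| -a | Detect the device name (-a yes creates the device file automatically) |
| -n | Number of devices |
| -l | RAID level |
| -x | Number of spare devices |
| -C | Create an array |
| -v | Show verbose progress |
| -f | Mark a device as faulty (simulate a failure) |
| -r | Remove a device |
| -Q | Show summary information |
| -D | Show detailed information |
| -S | Stop the array |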

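In the command below, -C creates a RAID array and -v shows the creation process; the device name that follows, /dev/md0, becomes the name of the new array. -a yes creates the device file automatically, -n 4 uses four disks for the array, and -l 10 selects RAID 10. The names of the four member disks come last. The array is then formatted with XFS and mounted on /RAID: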

[root@linux Desktop]# mdadm -Cv /dev/md0 -a yes -n 4 -l 10 /dev/sdb /dev/sdc /dev/sdd /dev/sde

mdadm: layout defaults to n2

mdadm: chunk size defaults to 512K

mdadm: /dev/sdb appears to be part of a raid array:

level=raid0 devices=0 ctime=Thu Jan 1 07:00:00 1970

mdadm: partition table exists on /dev/sdb but will be lost or

meaningless after creating array

mdadm: size set to 20954624K

Continue creating array? (y/n) y

mdadm: Defaulting to version 1.2 metadata

mdadm: array /dev/md0 started.

[root@linux Desktop]# mkfs.xfs /dev/md0

log stripe unit (524288 bytes) is too large (maximum is 256KiB)

log stripe unit adjusted to 32KiB

meta-data=/dev/md0 isize=256 agcount=16, agsize=654720 blks

= sectsz=512 attr=2, projid32bit=1

= crc=0

data = bsize=4096 blocks=10475520, imaxpct=25

= sunit=128 swidth=256 blks

naming =version 2 bsize=4096 ascii-ci=0 ftype=0

log =internal log bsize=4096 blocks=5120, version=2

= sectsz=512 sunit=8 blks, lazy-count=1

realtime =none extsz=4096 blocks=0, rtextents=0

[root@linux Desktop]# mkdir /RAID

[root@linux Desktop]# mount /dev/md0 /RAID/

[root@linux Desktop]# df

Filesystem 1K-blocks Used Available Use% Mounted on

/dev/mapper/rhel-root 39294212 4224984 35069228 11% /

devtmpfs 1008544 0 1008544 0% /dev

tmpfs 1017824 140 1017684 1% /dev/shm

tmpfs 1017824 8980 1008844 1% /run

tmpfs 1017824 0 1017824 0% /sys/fs/cgroup

/dev/sda1 508588 127372 381216 26% /boot

/dev/sr0 3654720 3654720 0 100% /run/media/root/RHEL-7.0 Server.x86_64

/dev/md0 41881600 33312 41848288 1% /RAID

[root@linux Desktop]# df -h

Filesystem Size Used Avail Use% Mounted on

/dev/mapper/rhel-root 38G 4.1G 34G 11% /

devtmpfs 985M 0 985M 0% /dev

tmpfs 994M 140K 994M 1% /dev/shm

tmpfs 994M 8.8M 986M 1% /run

tmpfs 994M 0 994M 0% /sys/fs/cgroup

/dev/sda1 497M 125M 373M 26% /boot

/dev/sr0 3.5G 3.5G 0 100% /run/media/root/RHEL-7.0 Server.x86_64

/dev/md0 40G 33M 40G 1% /RAID
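
The create, format, and mount sequence above can be captured as a dry run that prints the commands instead of executing them, which is a safe way to review them before touching real disks. The helper name raid_plan and its argument order are assumptions of this sketch.

```shell
# Dry run: print the commands instead of executing them.
# raid_plan MD_DEVICE LEVEL MOUNTPOINT MEMBER... (hypothetical helper)
raid_plan() {
    local md="$1" level="$2" mountpoint="$3"
    shift 3
    # After the shift, $# is the member count and $* the member list.
    echo "mdadm -Cv $md -a yes -n $# -l $level $*"
    echo "mkfs.xfs $md"
    echo "mkdir -p $mountpoint"
    echo "mount $md $mountpoint"
}

raid_plan /dev/md0 10 /RAID /dev/sdb /dev/sdc /dev/sdd /dev/sde
```

Deriving -n from the number of member arguments avoids a mismatch between the count and the device list.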

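RAID 5 array with a spare disk. In the command below, -n 3 sets the number of disks in the RAID 5 array, -l 5 sets the RAID level, and -x 1 adds one spare disk. Viewing the details of /dev/md0 (the name of the RAID 5 array) shows the spare waiting on standby. If /dev/sdb is then marked as faulty with -f and the state of /dev/md0 is checked right away, the spare has already taken its place automatically and data synchronization has begun.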

[root@linux Desktop]# mdadm -Cv /dev/md0 -n 3 -l 5 -x 1 /dev/sdb /dev/sdc /dev/sdd /dev/sde

mdadm: layout defaults to left-symmetric

mdadm: chunk size defaults to 512K

mdadm: size set to 20954624K

mdadm: Defaulting to version 1.2 metadata

mdadm: array /dev/md0 started.

[root@linux Desktop]# df

Filesystem 1K-blocks Used Available Use% Mounted on

/dev/mapper/rhel-root 39294212 4223352 35070860 11% /

devtmpfs 1008544 0 1008544 0% /dev

tmpfs 1017824 136 1017688 1% /dev/shm

tmpfs 1017824 8988 1008836 1% /run

tmpfs 1017824 0 1017824 0% /sys/fs/cgroup

/dev/sda1 508588 121220 387368 24% /boot

/dev/sr0 3654720 3654720 0 100% /run/media/root/RHEL-7.0 Server.x86_64

[root@linux Desktop]# mdadm -D /dev/md0

/dev/md0:

Version : 1.2

Creation Time : Tue Jun 26 15:49:26 2018

Raid Level : raid5

Array Size : 41909248 (39.97 GiB 42.92 GB)

Used Dev Size : 20954624 (19.98 GiB 21.46 GB)

Raid Devices : 3

Total Devices : 4

Persistence : Superblock is persistent

Update Time : Tue Jun 26 15:49:59 2018

State : clean, degraded, recovering

Active Devices : 2

Working Devices : 4

Failed Devices : 0

Spare Devices : 2

Layout : left-symmetric

Chunk Size : 512K

Rebuild Status : 37% complete

Name : linux.com:0 (local to host linux.com)

UUID : da679816:74800333:e1979bf2:b85b0abc

Events : 6

Number Major Minor RaidDevice State

0 8 16 0 active sync /dev/sdb

1 8 32 1 active sync /dev/sdc

4 8 48 2 spare rebuilding /dev/sdd

3 8 64 - spare /dev/sde

[root@linux Desktop]# mkfs.xfs /dev/md0

log stripe unit (524288 bytes) is too large (maximum is 256KiB)

log stripe unit adjusted to 32KiB

meta-data=/dev/md0 isize=256 agcount=16, agsize=654720 blks

= sectsz=512 attr=2, projid32bit=1

= crc=0

data = bsize=4096 blocks=10475520, imaxpct=25

= sunit=128 swidth=256 blks

naming =version 2 bsize=4096 ascii-ci=0 ftype=0

log =internal log bsize=4096 blocks=5120, version=2

= sectsz=512 sunit=8 blks, lazy-count=1

realtime =none extsz=4096 blocks=0, rtextents=0

[root@linux Desktop]# mkdir /RAID

[root@linux Desktop]# mount /dev/md0 /RAID/

[root@linux Desktop]# df -h

Filesystem Size Used Avail Use% Mounted on

/dev/mapper/rhel-root 38G 4.1G 34G 11% /

devtmpfs 985M 0 985M 0% /dev

tmpfs 994M 136K 994M 1% /dev/shm

tmpfs 994M 8.8M 986M 1% /run

tmpfs 994M 0 994M 0% /sys/fs/cgroup

/dev/sda1 497M 119M 379M 24% /boot

/dev/sr0 3.5G 3.5G 0 100% /run/media/root/RHEL-7.0 Server.x86_64

/dev/md0 40G 33M 40G 1% /RAID

[root@linux Desktop]# mdadm /dev/md0 -f /dev/sdb

mdadm: set /dev/sdb faulty in /dev/md0

[root@linux Desktop]# mdadm -D /dev/md0

/dev/md0:

Version : 1.2

Creation Time : Tue Jun 26 15:49:26 2018

Raid Level : raid5

Array Size : 41909248 (39.97 GiB 42.92 GB)

Used Dev Size : 20954624 (19.98 GiB 21.46 GB)

Raid Devices : 3

Total Devices : 4

Persistence : Superblock is persistent

Update Time : Tue Jun 26 15:51:52 2018

State : clean, degraded, recovering

Active Devices : 2

Working Devices : 3

Failed Devices : 1

Spare Devices : 1

Layout : left-symmetric

Chunk Size : 512K

Rebuild Status : 13% complete

Name : linux.com:0 (local to host linux.com)

UUID : da679816:74800333:e1979bf2:b85b0abc

Events : 28

Number Major Minor RaidDevice State

3 8 64 0 spare rebuilding /dev/sde

1 8 32 1 active sync /dev/sdc

4 8 48 2 active sync /dev/sdd

0 8 16 - faulty /dev/sdb

[root@linux Desktop]# mdadm -D /dev/md0

/dev/md0:

Version : 1.2

Creation Time : Tue Jun 26 15:49:26 2018

Raid Level : raid5

Array Size : 41909248 (39.97 GiB 42.92 GB)

Used Dev Size : 20954624 (19.98 GiB 21.46 GB)

Raid Devices : 3

Total Devices : 4

Persistence : Superblock is persistent

Update Time : Tue Jun 26 15:52:12 2018

State : clean, degraded, recovering

Active Devices : 2

Working Devices : 3

Failed Devices : 1

Spare Devices : 1

Layout : left-symmetric

Chunk Size : 512K

Rebuild Status : 35% complete

Name : linux.com:0 (local to host linux.com)

UUID : da679816:74800333:e1979bf2:b85b0abc

Events : 33

Number Major Minor RaidDevice State

3 8 64 0 spare rebuilding /dev/sde

1 8 32 1 active sync /dev/sdc

4 8 48 2 active sync /dev/sdd

0 8 16 - faulty /dev/sdb
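
When watching a rebuild like the one above, it can help to pull just the progress and the rebuilding device out of the mdadm -D output. The sketch below parses text in that format with awk; rebuild_info is a hypothetical helper, and the sample lines are copied from the output above.

```shell
# rebuild_info reads `mdadm -D` style text on stdin and prints
# "<percent> <device>" when the array is rebuilding.
rebuild_info() {
    awk '
        /Rebuild Status/   { pct = $4 }   # e.g. "Rebuild Status : 35% complete"
        /spare rebuilding/ { dev = $NF }  # last field is the device path
        END { if (pct != "" && dev != "") print pct, dev }
    '
}

# Sample lines from the output above; on a live system one might pipe
# `mdadm -D /dev/md0` into the function instead.
rebuild_info <<'EOF'
Rebuild Status : 35% complete
3 8 64 0 spare rebuilding /dev/sde
EOF
```

For the sample input this prints "35% /dev/sde"; for a healthy array it prints nothing.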

LVM (Logical Volume Manager)

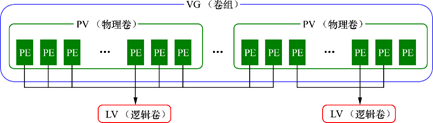

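LVM lets users adjust disk resources dynamically. It adds a logical layer between disk partitions and file systems and provides an abstract volume group that can merge several disks. Users can then resize storage dynamically without caring about the underlying layout of the physical disks. Deploying LVM means configuring physical volumes, a volume group, and logical volumes, in that order.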

| Function | Physical volumes | Volume groups | Logical volumes |
|---|---|---|---|
| Scan | pvscan | vgscan | lvscan |
| Create | pvcreate | vgcreate | lvcreate |
| Display | pvdisplay | vgdisplay | lvdisplay |
| Remove | pvremove | vgremove | lvremove |
| Extend | | vgextend | lvextend |
| Reduce | | vgreduce | lvreduce |

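- Initialize the disks as physical volumes
- Add the physical volumes to a volume group
- Carve space out of the volume group as a logical volume
- Format the logical volume, then mount and use it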

Initializing physical volumes

[root@linuxprobe ~]# pvcreate /dev/sdb /dev/sdc

Physical volume "/dev/sdb" successfully created

Physical volume "/dev/sdc" successfully created

Adding the physical volumes to a volume group

[root@linuxprobe ~]# vgcreate storage /dev/sdb /dev/sdc

Volume group "storage" successfully created

[root@linuxprobe ~]# vgdisplay

--- Volume group ---

VG Name storage

System ID

Format lvm2

Metadata Areas 2

Metadata Sequence No 1

VG Access read/write

VG Status resizable

MAX LV 0

Cur LV 0

Open LV 0

Max PV 0

Cur PV 2

Act PV 2

VG Size 39.99 GiB

PE Size 4.00 MiB

Total PE 10238

Alloc PE / Size 0 / 0

Free PE / Size 10238 / 39.99 GiB

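Carving out a logical volume

Logical volumes can be sized in two ways. The first is by capacity, with -L; for example, -L 150M requests a 150 MB logical volume (LVM rounds the size up to a whole number of extents). The second is by number of physical extents, with -l. Each extent is 4 MB by default, so -l 37 creates a logical volume of 37 × 4 MB = 148 MB.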
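
The relationship between the two units is simple arithmetic. The sketch below assumes the default 4 MiB extent size and rounds up to whole extents; extents_for_mib is a hypothetical helper.

```shell
# extents_for_mib SIZE_MIB [PE_MIB]: round a size up to whole extents.
# PE_MIB defaults to 4, the default physical extent size.
extents_for_mib() {
    local size="$1" pe="${2:-4}"
    echo $(( (size + pe - 1) / pe ))
}

n=$(extents_for_mib 150)
echo "-L 150M -> $n extents = $(( n * 4 )) MiB"   # 38 extents = 152 MiB
echo "-l 37   -> 37 extents = $(( 37 * 4 )) MiB"  # 37 extents = 148 MiB
```

The same rounding explains why the lvextend -L 290M example in this section reports 292.00 MiB: 290 MiB needs 73 extents of 4 MiB.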

[root@linuxprobe ~]# lvcreate -n vo -l 37 storage

Logical volume "vo" created

[root@linuxprobe ~]# lvdisplay

--- Logical volume ---

LV Path /dev/storage/vo

LV Name vo

VG Name storage

LV UUID D09HYI-BHBl-iXGr-X2n4-HEzo-FAQH-HRcM2I

LV Write Access read/write

LV Creation host, time localhost.localdomain, 2017-02-01 01:22:54 -0500

LV Status available

# open 0

LV Size 148.00 MiB

Current LE 37

Segments 1

Allocation inherit

Read ahead sectors auto

- currently set to 8192

Block device 253:2

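Linux exposes each LVM logical volume under /dev (in practice as a symbolic link): a directory named after the volume group holds the device mapping files for its logical volumes, giving paths of the form /dev/<volume group>/<logical volume>.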
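
The same volume is also reachable under /dev/mapper, where the volume group and logical volume names are joined by a hyphen and any hyphen inside a name is doubled. That is why /dev/kali-vg/home appears as /dev/mapper/kali--vg-home in the Kali example later in this section. A small sketch of that naming rule, with dm_path as a hypothetical helper:

```shell
# dm_path VG LV: print the /dev/mapper path for a logical volume.
# Hyphens inside either name are doubled; one hyphen joins the two.
dm_path() {
    local vg lv
    vg=$(printf '%s' "$1" | sed 's/-/--/g')
    lv=$(printf '%s' "$2" | sed 's/-/--/g')
    echo "/dev/mapper/${vg}-${lv}"
}

dm_path storage vo      # /dev/mapper/storage-vo
dm_path kali-vg home    # /dev/mapper/kali--vg-home
```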

[root@linuxprobe ~]# mkfs.ext4 /dev/storage/vo

mke2fs 1.42.9 (28-Dec-2013)

Filesystem label=

OS type: Linux

Block size=1024 (log=0)

Fragment size=1024 (log=0)

Stride=0 blocks, Stripe width=0 blocks

38000 inodes, 151552 blocks

7577 blocks (5.00%) reserved for the super user

First data block=1

Maximum filesystem blocks=33816576

19 block groups

8192 blocks per group, 8192 fragments per group

2000 inodes per group

Superblock backups stored on blocks:

8193, 24577, 40961, 57345, 73729

Allocating group tables: done

Writing inode tables: done

Creating journal (4096 blocks): done

Writing superblocks and filesystem accounting information: done

[root@linuxprobe ~]# mkdir /linuxprobe

[root@linuxprobe ~]# mount /dev/storage/vo /linuxprobe

Extending a logical volume

[root@linuxprobe ~]# lvextend -L 290M /dev/storage/vo

Rounding size to boundary between physical extents: 292.00 MiB

Extending logical volume vo to 292.00 MiB

Logical volume vo successfully resized

With the file system unmounted, check its integrity, then resize it to fill the extended volume.

[root@linuxprobe ~]# e2fsck -f /dev/storage/vo

e2fsck 1.42.9 (28-Dec-2013)

Pass 1: Checking inodes, blocks, and sizes

Pass 2: Checking directory structure

Pass 3: Checking directory connectivity

Pass 4: Checking reference counts

Pass 5: Checking group summary information

/dev/storage/vo: 11/38000 files (0.0% non-contiguous), 10453/151552 blocks

[root@linuxprobe ~]# resize2fs /dev/storage/vo

resize2fs 1.42.9 (28-Dec-2013)

Resizing the filesystem on /dev/storage/vo to 299008 (1k) blocks.

The filesystem on /dev/storage/vo is now 299008 blocks long.

Shrinking a logical volume

[root@linuxprobe ~]# resize2fs /dev/storage/vo 120M

resize2fs 1.42.9 (28-Dec-2013)

Resizing the filesystem on /dev/storage/vo to 122880 (1k) blocks.

The filesystem on /dev/storage/vo is now 122880 blocks long.

[root@linuxprobe ~]# lvreduce -L 120M /dev/storage/vo

WARNING: Reducing active logical volume to 120.00 MiB

THIS MAY DESTROY YOUR DATA (filesystem etc.)

Do you really want to reduce vo? [y/n]: y

Reducing logical volume vo to 120.00 MiB

Logical volume vo successfully resized
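
The order of operations differs between growing and shrinking: when growing, the logical volume is extended first and the file system follows; when shrinking, the file system must be reduced before the logical volume, or data beyond the new end is cut off. The dry-run sketch below prints the steps in that order for an unmounted ext4 volume. resize_plan is a hypothetical helper and sizes are in MiB.

```shell
# resize_plan DEVICE CURRENT_MIB TARGET_MIB: print steps in a safe order.
# Assumes an ext4 file system that has already been unmounted.
resize_plan() {
    local dev="$1" cur="$2" target="$3"
    if [ "$target" -gt "$cur" ]; then
        echo "lvextend -L ${target}M $dev"   # grow the volume first
        echo "e2fsck -f $dev"
        echo "resize2fs $dev"                # then grow the file system
    elif [ "$target" -lt "$cur" ]; then
        echo "e2fsck -f $dev"
        echo "resize2fs $dev ${target}M"     # shrink the file system first
        echo "lvreduce -L ${target}M $dev"   # then shrink the volume
    else
        echo "# nothing to do"
    fi
}

resize_plan /dev/storage/vo 148 290
resize_plan /dev/storage/vo 292 120
```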

Logical volume snapshots

The -s option creates a snapshot volume and -L sets its size. The logical volume to snapshot is given at the end of the command.

[root@linuxprobe ~]# lvcreate -L 120M -s -n SNAP /dev/storage/vo

Logical volume "SNAP" created

[root@linuxprobe ~]# lvdisplay

--- Logical volume ---

LV Path /dev/storage/SNAP

LV Name SNAP

VG Name storage

LV UUID BC7WKg-fHoK-Pc7J-yhSd-vD7d-lUnl-TihKlt

LV Write Access read/write

LV Creation host, time localhost.localdomain, 2017-02-01 07:42:31 -0500

LV snapshot status active destination for vo

LV Status available

# open 0

LV Size 120.00 MiB

Current LE 30

COW-table size 120.00 MiB

COW-table LE 30

Allocated to snapshot 0.01%

Snapshot chunk size 4.00 KiB

Segments 1

Allocation inherit

Read ahead sectors auto

- currently set to 8192

Block device 253:3
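
The "Allocated to snapshot" figure shows how much of the snapshot's copy-on-write space is in use; a snapshot that fills up becomes invalid, so it is worth watching. The sketch below extracts that figure from lvdisplay-style text and compares it with a limit. Both helper names are hypothetical, and the 80% default is an arbitrary threshold chosen for this example.

```shell
# snapshot_usage reads lvdisplay-style text on stdin and prints the
# "Allocated to snapshot" percentage without the % sign.
snapshot_usage() {
    awk '/Allocated to snapshot/ { sub(/%/, "", $NF); print $NF }'
}

# warn_if_full USAGE [LIMIT]: LIMIT defaults to 80 (arbitrary).
warn_if_full() {
    local usage="$1" limit="${2:-80}"
    # awk does the comparison because shell arithmetic is integer-only.
    if awk -v u="$usage" -v l="$limit" 'BEGIN { exit !(u >= l) }'; then
        echo "snapshot ${usage}% full: extend or merge it"
    else
        echo "snapshot ${usage}% full: ok"
    fi
}

usage=$(echo "Allocated to snapshot 0.01%" | snapshot_usage)
warn_if_full "$usage"
```

On a live system the input would come from lvdisplay /dev/storage/SNAP rather than from echo.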

Restoring a logical volume snapshot

[root@linuxprobe ~]# umount /linuxprobe

[root@linuxprobe ~]# lvconvert --merge /dev/storage/SNAP

Merging of volume SNAP started.

vo: Merged: 21.4%

vo: Merged: 100.0%

Merge of snapshot into logical volume vo has finished.

Logical volume "SNAP" successfully removed

Shrinking one logical volume and giving the space to another:

root@kali:~# umount /home

root@kali:~# resize2fs -p /dev/mapper/kali--vg-home 40G

resize2fs 1.45.6 (20-Mar-2020)

Please run 'e2fsck -f /dev/mapper/kali--vg-home' first.

root@kali:~# e2fsck -f /dev/mapper/kali--vg-home

e2fsck 1.45.6 (20-Mar-2020)

Pass 1: Checking inodes, blocks, and sizes

Pass 2: Checking directory structure

Pass 3: Checking directory connectivity

Pass 4: Checking reference counts

Pass 5: Checking group summary information

/dev/mapper/kali--vg-home: 273/65961984 files (4.0% non-contiguous), 4429796/263821312 blocks

root@kali:~# resize2fs -p /dev/mapper/kali--vg-home 40G

resize2fs 1.45.6 (20-Mar-2020)

Resizing the filesystem on /dev/mapper/kali--vg-home to 10485760 (4k) blocks.

Begin pass 2 (max = 266003)

Relocating blocks XXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXX

Begin pass 3 (max = 8052)

Scanning inode table XXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXX

Begin pass 4 (max = 77)

Updating inode references XXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXX

The filesystem on /dev/mapper/kali--vg-home is now 10485760 (4k) blocks long.

root@kali:~# mount /home

root@kali:~# df -h

Filesystem Size Used Avail Use% Mounted on

udev 951M 0 951M 0% /dev

tmpfs 197M 1.2M 196M 1% /run

/dev/mapper/kali--vg-root 11G 9.7G 766M 93% /

tmpfs 982M 0 982M 0% /dev/shm

tmpfs 5.0M 0 5.0M 0% /run/lock

tmpfs 982M 0 982M 0% /sys/fs/cgroup

/dev/sda1 236M 148M 76M 66% /boot

/dev/mapper/kali--vg-tmp 1.8G 5.7M 1.7G 1% /tmp

/dev/mapper/kali--vg-var 2.3G 995M 1.2G 47% /var

tmpfs 197M 8.0K 197M 1% /run/user/0

/dev/mapper/kali--vg-home 39G 76M 37G 1% /home

root@kali:~# lvreduce -L 40G /dev/kali-vg/home

WARNING: Reducing active and open logical volume to 40.00 GiB.

THIS MAY DESTROY YOUR DATA (filesystem etc.)

Do you really want to reduce kali-vg/home? [y/n]: y

Size of logical volume kali-vg/home changed from <1006.40 GiB (257638 extents) to 40.00 GiB (10240 extents).

Logical volume kali-vg/home successfully resized.

root@kali:~# lvextend -L 900G /dev/kali-vg/root

Size of logical volume kali-vg/root changed from <11.18 GiB (2861 extents) to 900.00 GiB (230400 extents).

Logical volume kali-vg/root successfully resized.

root@kali:~# resize2fs /dev/mapper/kali--vg-root

resize2fs 1.45.6 (20-Mar-2020)

Filesystem at /dev/mapper/kali--vg-root is mounted on /; on-line resizing required

old_desc_blocks = 2, new_desc_blocks = 113

The filesystem on /dev/mapper/kali--vg-root is now 235929600 (4k) blocks long.

root@kali:~# df -h

Filesystem Size Used Avail Use% Mounted on

udev 951M 0 951M 0% /dev

tmpfs 197M 1.2M 196M 1% /run

/dev/mapper/kali--vg-root 886G 9.7G 841G 2% /

tmpfs 982M 0 982M 0% /dev/shm

tmpfs 5.0M 0 5.0M 0% /run/lock

tmpfs 982M 0 982M 0% /sys/fs/cgroup

/dev/sda1 236M 148M 76M 66% /boot

/dev/mapper/kali--vg-tmp 1.8G 5.7M 1.7G 1% /tmp

/dev/mapper/kali--vg-var 2.3G 995M 1.2G 47% /var

tmpfs 197M 8.0K 197M 1% /run/user/0

/dev/mapper/kali--vg-home 39G 76M 37G 1% /home
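
The block and extent counts reported in the transcript above can be checked with a little arithmetic, which is a useful sanity test before confirming an lvreduce. The sketch assumes 4 KiB file system blocks and 4 MiB extents, as the output above shows; both helper names are hypothetical.

```shell
# gib_to_blocks GIB [BLOCK_KIB]: file system blocks (default 4 KiB each).
gib_to_blocks() {
    local gib="$1" block_kib="${2:-4}"
    echo $(( gib * 1024 * 1024 / block_kib ))
}

# gib_to_extents GIB [PE_MIB]: physical extents (default 4 MiB each).
gib_to_extents() {
    local gib="$1" pe_mib="${2:-4}"
    echo $(( gib * 1024 / pe_mib ))
}

echo "home: $(gib_to_blocks 40) blocks, $(gib_to_extents 40) extents"
echo "root: $(gib_to_blocks 900) blocks, $(gib_to_extents 900) extents"
```

The results, 10485760 blocks and 10240 extents for /home and 235929600 blocks and 230400 extents for /, match what resize2fs, lvreduce, and lvextend printed, so the file system and the logical volume agree on size in both cases.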