Simulating logistic regression

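```python
import numpy as np
from matplotlib import pyplot as plt


def sigmoid(t):
    # Logistic (sigmoid) function: maps any real t into (0, 1)
    return 1 / (1 + np.exp(-t))


x = np.linspace(-10, 10, 500)  # input range chosen for illustration
y = sigmoid(x)
plt.plot(x, y)
plt.show()
```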

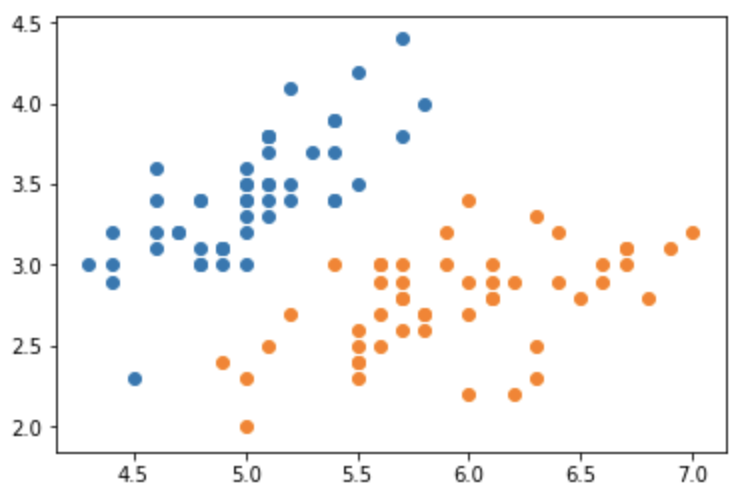

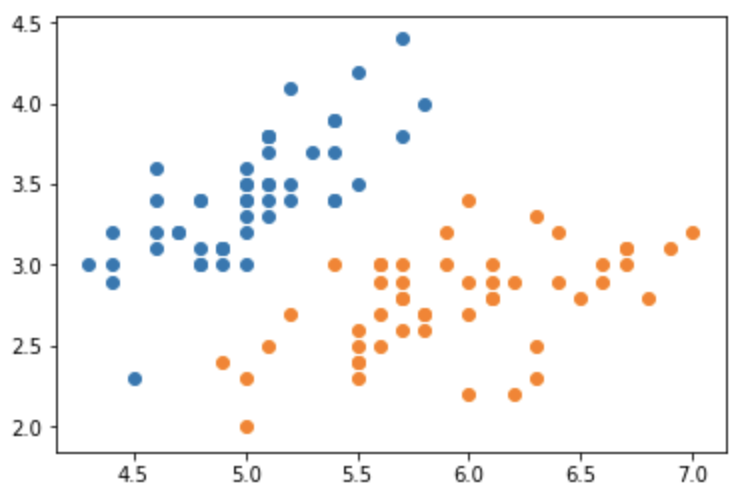
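The notes only plot the sigmoid. To "simulate" logistic regression end to end, here is a minimal from-scratch sketch, assuming plain batch gradient descent on the mean log loss with no regularization. The helper names `fit_logistic_gd` and `predict`, the learning rate, the iteration count and the toy data are all illustrative choices, not taken from the original notes.

```python
import numpy as np


def sigmoid(t):
    """Logistic function 1 / (1 + e^{-t})."""
    return 1.0 / (1.0 + np.exp(-t))


def fit_logistic_gd(X, y, lr=0.1, n_iters=5000):
    """Hypothetical helper: batch gradient descent on the mean log loss (no penalty)."""
    X_b = np.hstack([np.ones((len(X), 1)), X])  # prepend a column of 1s for the intercept
    theta = np.zeros(X_b.shape[1])
    for _ in range(n_iters):
        p_hat = sigmoid(X_b @ theta)          # predicted P(y = 1 | x)
        grad = X_b.T @ (p_hat - y) / len(y)   # gradient of the mean log loss
        theta -= lr * grad
    return theta


def predict(theta, X):
    """Hypothetical helper: class 1 when the predicted probability is at least 0.5."""
    X_b = np.hstack([np.ones((len(X), 1)), X])
    return (sigmoid(X_b @ theta) >= 0.5).astype(int)


rng = np.random.default_rng(0)
X = rng.normal(size=(200, 2))
y = (X[:, 0] + 2 * X[:, 1] > 0).astype(int)  # linearly separable toy labels

theta = fit_logistic_gd(X, y)
accuracy = np.mean(predict(theta, X) == y)
print(theta, accuracy)
```

The gradient `X_b.T @ (p_hat - y) / n` is the standard log-loss gradient for logistic regression; scikit-learn's `LogisticRegression` used below solves a regularized version of the same problem with a more robust optimizer.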

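```python
from sklearn import datasets

iris = datasets.load_iris()
X = iris.data
y = iris.target
# Keep only classes 0 and 1 and the first two features (sepal length, sepal width)
X = X[y < 2, :2]
y = y[y < 2]
plt.scatter(X[y==0, 0], X[y==0, 1])
plt.scatter(X[y==1, 0], X[y==1, 1])
plt.show()
```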

```python
from sklearn.model_selection import train_test_split
from sklearn.linear_model import LogisticRegression

X_train, X_test, y_train, y_test = train_test_split(X, y)
log_reg = LogisticRegression()
log_reg.fit(X_train, y_train)
log_reg.coef_  # array([[ 1.95815223, -3.28951035]]); exact values depend on the random split


def x2(x1):
    # On the boundary, intercept + coef[0] * x1 + coef[1] * x2 = 0; solve for x2
    return (-log_reg.coef_[0][0] * x1 - log_reg.intercept_[0]) / log_reg.coef_[0][1]


x1_plot = np.linspace(4, 8, 1000)
x2_plot = x2(x1_plot)
plt.scatter(X[y==0, 0], X[y==0, 1])
plt.scatter(X[y==1, 0], X[y==1, 1])
plt.plot(x1_plot, x2_plot)
plt.show()
```

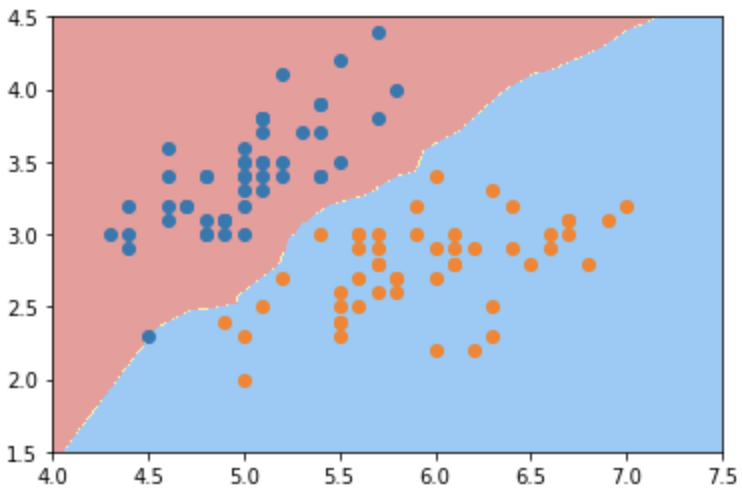

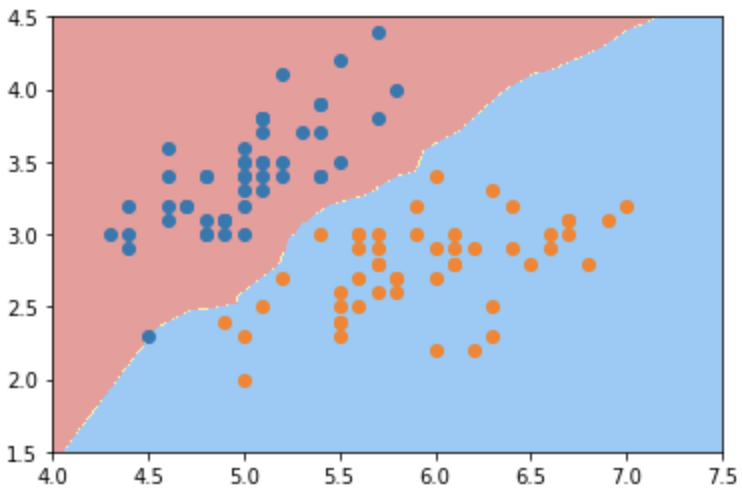
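Why `x2()` gives the boundary: logistic regression predicts class 1 when sigmoid(θ0 + θ1·x1 + θ2·x2) ≥ 0.5, which happens exactly when θ0 + θ1·x1 + θ2·x2 ≥ 0. Setting that expression to 0 and solving gives x2 = (−θ1·x1 − θ0) / θ2. The sketch below checks this numerically on synthetic data (an assumption, so it runs without the iris cells): every point on the computed line should get a predicted probability of 0.5.

```python
import numpy as np
from sklearn.linear_model import LogisticRegression

rng = np.random.default_rng(1)
X = rng.normal(size=(200, 2))
y = (X[:, 0] - X[:, 1] + 0.3 * rng.normal(size=200) > 0).astype(int)

clf = LogisticRegression().fit(X, y)
theta0 = clf.intercept_[0]
theta1, theta2 = clf.coef_[0]

# Points on the line theta0 + theta1 * x1 + theta2 * x2 = 0
x1 = np.linspace(-2, 2, 5)
x2 = (-theta1 * x1 - theta0) / theta2
proba_on_line = clf.predict_proba(np.c_[x1, x2])[:, 1]
print(proba_on_line)  # every entry should be (numerically) 0.5
```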

Decision boundary

```python
from matplotlib.colors import ListedColormap


def plot_decision_boundary(model, axis):
    # Dense grid over axis = [x_min, x_max, y_min, y_max], about 100 points per unit
    x0, x1 = np.meshgrid(
        np.linspace(axis[0], axis[1], int((axis[1] - axis[0]) * 100)),
        np.linspace(axis[2], axis[3], int((axis[3] - axis[2]) * 100)),
    )
    X_new = np.c_[x0.ravel(), x1.ravel()]
    # Predict a class for every grid point, then color each region by its class
    y_predict = model.predict(X_new)
    zz = y_predict.reshape(x0.shape)
    custom_cmap = ListedColormap(['#EF9A9A', '#FFF59D', '#90CAF9'])
    plt.contourf(x0, x1, zz, cmap=custom_cmap)


plot_decision_boundary(log_reg, axis=[4, 7.5, 1.5, 4.5])
plt.scatter(X[y==0, 0], X[y==0, 1])
plt.scatter(X[y==1, 0], X[y==1, 1])
plt.show()
```

```python
from sklearn.neighbors import KNeighborsClassifier

knn = KNeighborsClassifier(n_neighbors=3)
knn.fit(X_train, y_train)
plot_decision_boundary(knn, axis=[4, 7.5, 1.5, 4.5])
plt.scatter(X[y==0, 0], X[y==0, 1])
plt.scatter(X[y==1, 0], X[y==1, 1])
plt.show()
```

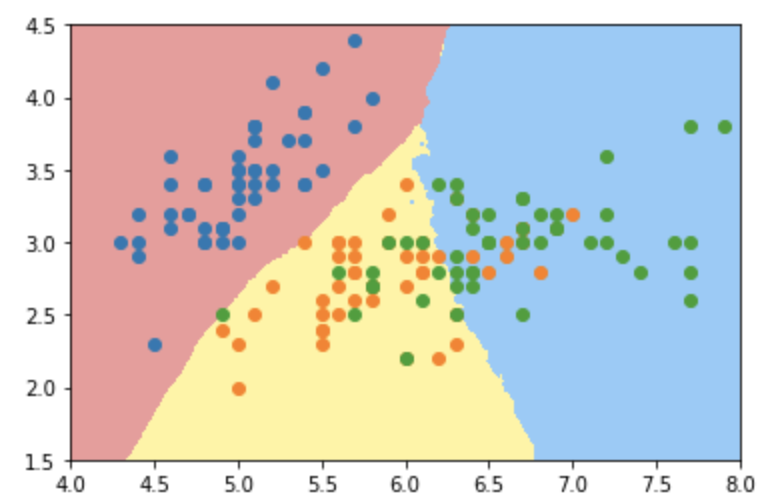

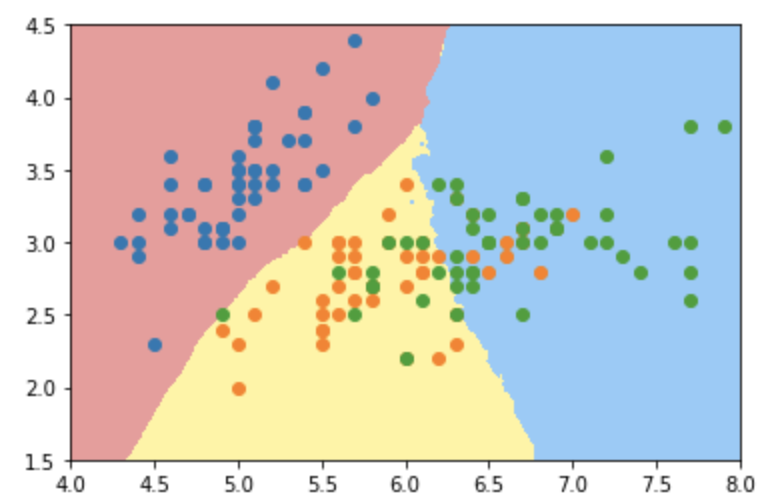
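Unlike logistic regression, KNN's boundary is not a straight line, because each prediction is a local majority vote among the k nearest training points. A minimal sketch of that vote, assuming Euclidean distance and uniform weights (scikit-learn's defaults); `knn_predict` is a hypothetical helper, and the comparison with `KNeighborsClassifier` assumes no ties in the distances, which is practically certain with continuous random data.

```python
from collections import Counter

import numpy as np
from sklearn.neighbors import KNeighborsClassifier


def knn_predict(X_train, y_train, X_query, k=3):
    """Hypothetical helper: majority vote among the k nearest training points."""
    preds = []
    for x in X_query:
        dists = np.linalg.norm(X_train - x, axis=1)  # Euclidean distance to every training point
        nearest = np.argsort(dists)[:k]              # indices of the k closest points
        votes = Counter(y_train[nearest])
        preds.append(votes.most_common(1)[0][0])
    return np.array(preds)


rng = np.random.default_rng(2)
X_train = rng.normal(size=(100, 2))
y_train = (X_train[:, 0] * X_train[:, 1] > 0).astype(int)  # XOR-like labels, not linearly separable
X_query = rng.normal(size=(20, 2))

mine = knn_predict(X_train, y_train, X_query, k=3)
ref = KNeighborsClassifier(n_neighbors=3).fit(X_train, y_train).predict(X_query)
print((mine == ref).all())
```

With two classes and an odd k the vote can never tie, which keeps the comparison clean.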

```python
# All three iris classes, first two features, k = 5
knn2 = KNeighborsClassifier(n_neighbors=5)
knn2.fit(iris.data[:, :2], iris.target)
plot_decision_boundary(knn2, axis=[4, 10, 1.5, 4.5])
plt.scatter(iris.data[iris.target==0, 0], iris.data[iris.target==0, 1])
plt.scatter(iris.data[iris.target==1, 0], iris.data[iris.target==1, 1])
plt.scatter(iris.data[iris.target==2, 0], iris.data[iris.target==2, 1])
plt.show()
```

```python
# k = 50: a larger k averages over more neighbors, giving a smoother boundary
knn3 = KNeighborsClassifier(n_neighbors=50)
knn3.fit(iris.data[:, :2], iris.target)
plot_decision_boundary(knn3, axis=[4, 8, 1.5, 4.5])
plt.scatter(iris.data[iris.target==0, 0], iris.data[iris.target==0, 1])
plt.scatter(iris.data[iris.target==1, 0], iris.data[iris.target==1, 1])
plt.scatter(iris.data[iris.target==2, 0], iris.data[iris.target==2, 1])
plt.show()
```

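```python
# Non-linear data: class 1 lies inside the circle x1^2 + x2^2 < 1.5
np.random.seed(6666)
X = np.random.normal(0, 1, size=(300, 2))
y = np.array(X[:, 0] ** 2 + X[:, 1] ** 2 < 1.5, dtype='int')
plt.scatter(X[y==0, 0], X[y==0, 1])
plt.scatter(X[y==1, 0], X[y==1, 1])
plt.show()

# A plain (linear) logistic regression can only draw a straight boundary here
log_reg2 = LogisticRegression()
log_reg2.fit(X, y)
plot_decision_boundary(log_reg2, axis=[-4, 4, -4, 4])
plt.scatter(X[y==0, 0], X[y==0, 1])
plt.scatter(X[y==1, 0], X[y==1, 1])
plt.show()
```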
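The plot shows the straight line failing on circular data. One way to put a number on it, sketched here under the assumption that training accuracy is a good enough yardstick for this toy dataset, is to compare `score()` (mean accuracy) for the plain model against a degree-2 polynomial pipeline built the same way as the one in the next cell.

```python
import numpy as np
from sklearn.linear_model import LogisticRegression
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import PolynomialFeatures, StandardScaler

np.random.seed(6666)
X = np.random.normal(0, 1, size=(300, 2))
y = np.array(X[:, 0] ** 2 + X[:, 1] ** 2 < 1.5, dtype=int)

# Straight-line boundary
linear_acc = LogisticRegression().fit(X, y).score(X, y)

# Quadratic features make the circular boundary linear in the expanded space
poly = Pipeline([
    ("poly", PolynomialFeatures(degree=2)),
    ("std", StandardScaler()),
    ("logistic", LogisticRegression()),
])
poly_acc = poly.fit(X, y).score(X, y)
print(linear_acc, poly_acc)
```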

```python
from sklearn.preprocessing import PolynomialFeatures, StandardScaler
from sklearn.pipeline import Pipeline


def PolynomialLogisticRegression(degree, C=1):
    # Polynomial feature expansion -> standardization -> logistic regression
    return Pipeline([
        ('poly', PolynomialFeatures(degree=degree)),
        ('std', StandardScaler()),
        ('logistic', LogisticRegression(C=C)),
    ])


poly_reg = PolynomialLogisticRegression(2)
poly_reg.fit(X, y)
plot_decision_boundary(poly_reg, axis=[-4, 4, -4, 4])
plt.scatter(X[y==0, 0], X[y==0, 1])
plt.scatter(X[y==1, 0], X[y==1, 1])
plt.show()
```
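Why degree 2 is enough here: for two inputs, `PolynomialFeatures(degree=2)` produces the columns [1, x1, x2, x1², x1·x2, x2²]. In that space the circle rule x1² + x2² < 1.5 is a linear rule with weights (1.5, 0, 0, −1, 0, −1), so a linear classifier on the expanded features can represent it exactly. The hand-picked weights and test points below are illustrative, not fitted values.

```python
import numpy as np
from sklearn.preprocessing import PolynomialFeatures

poly = PolynomialFeatures(degree=2)
X_poly = poly.fit_transform(np.array([[2.0, 3.0]]))
print(X_poly)  # [[1. 2. 3. 4. 6. 9.]] -> 1, x1, x2, x1^2, x1*x2, x2^2

# A linear rule in the expanded space: 1.5 - x1^2 - x2^2 > 0
theta = np.array([1.5, 0.0, 0.0, -1.0, 0.0, -1.0])
points = np.array([[0.5, 0.5], [1.5, 1.0], [0.0, 1.2], [-1.0, -1.0]])
inside_linear = poly.fit_transform(points) @ theta > 0
inside_circle = points[:, 0] ** 2 + points[:, 1] ** 2 < 1.5
print(inside_linear, inside_circle)  # identical: [True False True False]
```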

Regularization

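```python
# Degree-8 features with C=0.01. In scikit-learn, C is the inverse of the
# regularization strength, so a small C applies a strong (default L2) penalty
poly_reg2 = PolynomialLogisticRegression(8, 0.01)
poly_reg2.fit(X, y)
plot_decision_boundary(poly_reg2, axis=[-4, 4, -4, 4])
plt.scatter(X[y==0, 0], X[y==0, 1])
plt.scatter(X[y==1, 0], X[y==1, 1])
plt.show()
```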
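With the default L2 penalty, scikit-learn documents the objective as ½‖w‖² + C · Σ log-loss, so C scales the data-fit term and a smaller C lets the penalty dominate. A minimal sketch of the visible effect, assuming the same circle data and degree-8 pipeline as above (the helper `poly_logistic` is a hypothetical stand-in for `PolynomialLogisticRegression`, with `max_iter` raised to avoid convergence warnings): the norm of the learned weights should shrink as C decreases.

```python
import numpy as np
from sklearn.linear_model import LogisticRegression
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import PolynomialFeatures, StandardScaler


def poly_logistic(degree, C):
    # Hypothetical helper mirroring PolynomialLogisticRegression from the notes
    return Pipeline([
        ("poly", PolynomialFeatures(degree=degree)),
        ("std", StandardScaler()),
        ("logistic", LogisticRegression(C=C, max_iter=10000)),
    ])


np.random.seed(6666)
X = np.random.normal(0, 1, size=(300, 2))
y = np.array(X[:, 0] ** 2 + X[:, 1] ** 2 < 1.5, dtype=int)

norms = {}
for C in (0.01, 1, 100):
    model = poly_logistic(8, C).fit(X, y)
    # L2 norm of the learned weights (the intercept is not included)
    norms[C] = np.linalg.norm(model.named_steps["logistic"].coef_)
print(norms)  # the norm should grow as C grows (weaker regularization)
```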

From binary to multiclass classification: OvR and OvO

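```python
from sklearn.multiclass import OneVsRestClassifier, OneVsOneClassifier

# Wrap a binary classifier; each sub-problem is fitted on a clone of it
ovr = OneVsRestClassifier(log_reg)  # OvR: one classifier per class (that class vs. the rest)
ovo = OneVsOneClassifier(log_reg)   # OvO: one classifier per pair of classes
```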
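The two strategies differ in how many binary models they train: for K classes, OvR fits K classifiers while OvO fits K(K − 1)/2, one per pair. A small sketch on synthetic 4-class data (the `make_classification` settings are illustrative assumptions) makes the counts visible through the fitted `estimators_` attribute.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.multiclass import OneVsOneClassifier, OneVsRestClassifier

X, y = make_classification(
    n_samples=400, n_features=6, n_informative=4, n_redundant=0,
    n_classes=4, random_state=0,
)

ovr = OneVsRestClassifier(LogisticRegression(max_iter=1000)).fit(X, y)
ovo = OneVsOneClassifier(LogisticRegression(max_iter=1000)).fit(X, y)
print(len(ovr.estimators_), len(ovo.estimators_))  # 4 6 -> K and K(K-1)/2 with K = 4
print(ovr.score(X, y), ovo.score(X, y))
```

OvO trains more models, but each one only sees the samples of its two classes, which can make individual fits cheaper.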