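Accuracy

The problem with accuracy: on heavily skewed data it can be misleading. For example, if one outcome occurs 99.9999% of the time, a model with 99% accuracy is not necessarily a good model, because always predicting the common outcome would already be 99.9999% accurate.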
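To make this concrete, here is a minimal sketch in plain Python. It assumes a made-up dataset of 1,000,000 samples with exactly one positive, so the negative class has a frequency of 99.9999%. The names `always_negative` and `accuracy` are illustrative and not part of the original notes.

```python
# Hypothetical data: 1,000,000 samples, exactly one of them positive.
n_samples = 1_000_000
y_true = [0] * n_samples
y_true[0] = 1

# A "model" that ignores its input and always predicts the majority class.
always_negative = [0] * n_samples


def accuracy(y_true, y_pred):
    """Fraction of predictions that match the labels."""
    correct = sum(1 for t, p in zip(y_true, y_pred) if t == p)
    return correct / len(y_true)


baseline_accuracy = accuracy(y_true, always_negative)
positives_found = sum(
    1 for t, p in zip(y_true, always_negative) if t == 1 and p == 1
)

print(f"accuracy = {baseline_accuracy:.6f}")
print(f"positives found = {positives_found}")
```

The baseline scores higher than a 99%-accurate model while never detecting the one case of interest, which is why the metrics below look at the positive class specifically.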

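Precision:

The number of samples correctly predicted as 1, divided by the number of all samples predicted as 1 (correctly or incorrectly): TP / (TP + FP)

Recall:

The number of samples correctly predicted as 1, divided by the number of all samples that really are 1 (whether predicted as 1 or wrongly predicted as 0): TP / (TP + FN)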
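As a small worked example of the two formulas, the sketch below counts TP, FP, FN and TN by hand on ten made-up labels. The data and the helper `count` are assumptions for illustration only.

```python
# Hypothetical labels and predictions for 10 samples (1 = positive class).
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
y_pred = [1, 1, 0, 0, 1, 0, 0, 0, 0, 0]


def count(y_true, y_pred, true_value, pred_value):
    """Number of samples with the given (true label, predicted label) pair."""
    return sum(
        1 for t, p in zip(y_true, y_pred) if t == true_value and p == pred_value
    )


tp = count(y_true, y_pred, 1, 1)  # really 1, predicted 1
fp = count(y_true, y_pred, 0, 1)  # really 0, predicted 1
fn = count(y_true, y_pred, 1, 0)  # really 1, predicted 0
tn = count(y_true, y_pred, 0, 0)  # really 0, predicted 0

precision = tp / (tp + fp)  # of everything predicted 1, how much was right
recall = tp / (tp + fn)     # of everything really 1, how much was found

print(f"TP={tp}, FP={fp}, FN={fn}, TN={tn}")
print(f"precision={precision:.3f}, recall={recall:.3f}")
```

Here three samples are predicted as 1 and two of them are correct, so precision is 2/3; four samples are really 1 and two of them are found, so recall is 2/4.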

    import numpy as np
    from sklearn import datasets

    # Turn the 10-class digits dataset into a skewed binary problem: "is it a 9?"
    digits = datasets.load_digits()
    X = digits.data
    y = digits.target.copy()
    y[digits.target == 9] = 1
    y[digits.target != 9] = 0

    from sklearn.linear_model import LogisticRegression
    from sklearn.model_selection import train_test_split

    X_train, X_test, y_train, y_test = train_test_split(X, y, random_state=666)

    log_reg = LogisticRegression()
    log_reg.fit(X_train, y_train)
    log_reg.score(X_test, y_test)  # accuracy on the test set
    log_reg_predict = log_reg.predict(X_test)

    def TN(y_true, y_predict):
        return np.sum((y_true == 0) & (y_predict == 0))

    def FP(y_true, y_predict):
        return np.sum((y_true == 0) & (y_predict == 1))

    def FN(y_true, y_predict):
        return np.sum((y_true == 1) & (y_predict == 0))

    def TP(y_true, y_predict):
        return np.sum((y_true == 1) & (y_predict == 1))

    # Confusion matrix: rows are true labels, columns are predicted labels
    def confusion_matrix(y_true, y_predict):
        return np.array([
            [TN(y_true, y_predict), FP(y_true, y_predict)],
            [FN(y_true, y_predict), TP(y_true, y_predict)],
        ])

    confusion_matrix(y_test, log_reg_predict)

    # Precision: TP / (FP + TP)
    def precision_score(y_true, y_predict):
        confusion_matrix_ = confusion_matrix(y_true, y_predict)
        return confusion_matrix_[1, 1] / (confusion_matrix_[0, 1] + confusion_matrix_[1, 1])

    # Recall: TP / (TP + FN)
    def recall_score(y_true, y_predict):
        confusion_matrix_ = confusion_matrix(y_true, y_predict)
        return confusion_matrix_[1, 1] / (confusion_matrix_[1, 1] + confusion_matrix_[1, 0])

    # The same metrics from scikit-learn (these imports shadow the functions above)
    from sklearn.metrics import confusion_matrix, precision_score, recall_score

    confusion_matrix(y_test, log_reg_predict)
    ps = precision_score(y_test, log_reg_predict)
    rs = recall_score(y_test, log_reg_predict)

F1 score:

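The F1 score takes both precision and recall into account: it is their harmonic mean, 2 · precision · recall / (precision + recall).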

    def f1_score(precision, recall):
        # Harmonic mean of precision and recall; defined as 0.0 when both are zero
        if precision + recall == 0:
            return 0.0
        return 2 * precision * recall / (precision + recall)
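The formula 2PR / (P + R) is the harmonic mean of precision P and recall R. The sketch below compares it with a plain arithmetic mean on a few made-up precision and recall pairs; the helper name `f1` and the pairs are illustrative assumptions.

```python
def f1(precision, recall):
    """Harmonic mean of precision and recall; 0.0 when both are zero."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Hypothetical (precision, recall) pairs with the same arithmetic mean of 0.5.
pairs = [(0.5, 0.5), (0.9, 0.1), (1.0, 0.0)]

for precision, recall in pairs:
    arithmetic = (precision + recall) / 2
    print(
        f"precision={precision}, recall={recall}: "
        f"arithmetic mean={arithmetic:.2f}, F1={f1(precision, recall):.2f}"
    )
```

All three pairs average to 0.5, but F1 drops as the two values drift apart and reaches 0 when either one is 0, so a model cannot score well by maximizing only one of the two.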

Balancing precision and recall

    log_reg.decision_function(X_test)[:10]
    log_reg.predict(X_test)[:10]

    # Decision scores: predict() labels a sample 1 when its score is above 0
    dec_scor = log_reg.decision_function(X_test)

    # Raise the threshold to 5
    y_predict_2 = np.array(dec_scor >= 5, dtype='int')
    confusion_matrix(y_test, y_predict_2)
    recall_score(y_test, y_predict_2)

    # Lower the threshold to -5
    y_predict_3 = np.array(dec_scor >= -5, dtype='int')
    confusion_matrix(y_test, y_predict_3)
    precision_score(y_test, y_predict_3)
    recall_score(y_test, y_predict_3)

    # Sweep the threshold over the whole range of scores
    thr = np.arange(np.min(dec_scor), np.max(dec_scor), 0.1)
    precisions = []
    recalls = []
    for th in thr:
        y_predict = np.array(dec_scor >= th, dtype='int')
        precisions.append(precision_score(y_test, y_predict))
        recalls.append(recall_score(y_test, y_predict))

    from matplotlib import pyplot as plt

    # Precision and recall as functions of the threshold
    plt.plot(thr, precisions)
    plt.plot(thr, recalls)

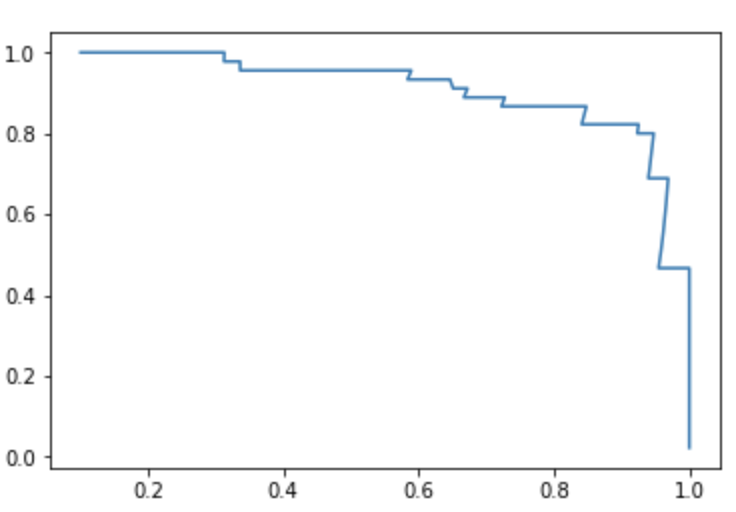

    # Precision on the x-axis, recall on the y-axis
    plt.plot(precisions, recalls)
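The trade-off can also be checked by hand on a tiny example. The sketch below uses seven made-up decision scores and the same three thresholds as above (-5, 0 and 5); the data and the helper `precision_recall_at` are assumptions for illustration, not output of the model in these notes.

```python
# Hypothetical decision scores (higher means "more likely positive") and labels.
scores = [-6.0, -3.0, -1.0, 0.5, 2.0, 4.0, 7.0]
y_true = [0, 0, 1, 0, 1, 1, 1]


def precision_recall_at(threshold):
    """Precision and recall when samples with score >= threshold are labelled 1."""
    y_pred = [1 if s >= threshold else 0 for s in scores]
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    # Assumed convention: precision is 0.0 when nothing is predicted positive.
    precision = tp / (tp + fp) if (tp + fp) > 0 else 0.0
    recall = tp / (tp + fn)
    return precision, recall


results = {th: precision_recall_at(th) for th in (-5, 0, 5)}
for th, (precision, recall) in results.items():
    print(f"threshold={th:>2}: precision={precision:.2f}, recall={recall:.2f}")
```

On this toy data, moving the threshold from -5 to 5 raises precision from 2/3 to 1 while recall falls from 1 to 1/4.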

Precision-Recall curve

    from sklearn.metrics import precision_recall_curve

    precision, recall, thresholds = precision_recall_curve(y_test, dec_scor)

    # precision and recall have one more element than thresholds, so drop the last one
    plt.plot(thresholds, precision[:-1])
    plt.plot(thresholds, recall[:-1])

ROC

    from sklearn.metrics import roc_curve

    fprs, tprs, thresholds = roc_curve(y_test, dec_scor)

    # False positive rate on the x-axis, true positive rate on the y-axis
    plt.plot(fprs, tprs)
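The ROC curve plots the true positive rate, TPR = TP / (TP + FN), against the false positive rate, FPR = FP / (FP + TN), as the threshold varies. As a rough sketch of what each point on the curve means, the code below computes a few (FPR, TPR) pairs by hand on made-up scores; the data and the helper `fpr_tpr_at` are illustrative assumptions and do not reproduce how `roc_curve` chooses its thresholds.

```python
# Hypothetical decision scores (higher means "more likely positive") and labels.
scores = [-6.0, -3.0, -1.0, 0.5, 2.0, 4.0, 7.0]
y_true = [0, 0, 1, 0, 1, 1, 1]


def fpr_tpr_at(threshold):
    """(FPR, TPR) when samples with score >= threshold are labelled 1."""
    y_pred = [1 if s >= threshold else 0 for s in scores]
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    return fp / (fp + tn), tp / (tp + fn)


# Sweep from "predict nothing as 1" down to "predict everything as 1".
thresholds = [float("inf"), 5, 0, -5, float("-inf")]
roc_points = [fpr_tpr_at(th) for th in thresholds]

for th, (fpr, tpr) in zip(thresholds, roc_points):
    print(f"threshold={th}: FPR={fpr:.2f}, TPR={tpr:.2f}")
```

Lowering the threshold can only add predicted positives, so both rates move from (0, 0) towards (1, 1); a better classifier gains TPR faster than FPR along the way.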