Weekly Report

- Further tests show that the PatchLevelRotation (PLR) method, on its own, yields decent results. However, MoCo+PLR doesn’t seem to be as effective as expected.

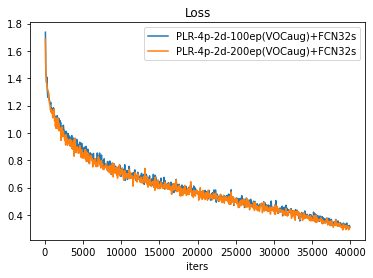

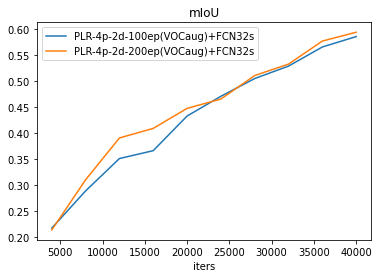

- PatchLevelRotation may not be very sensitive to augmentations, and longer pre-training leads to only a marginal improvement in loss descent at the early stage.

Test Results

*All FCN32s are in fact MoCo-style 2-layer 3x3 conv with stride=1 and dilation=6.

Comparison with MoCo on ImageNet
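
For reference, the head described in the FCN32s note (two 3x3 convs, stride=1, dilation=6) can be sketched in PyTorch roughly as follows. Only the kernel size, stride, dilation and layer count come from the note. The class name `MoCoStyleFCNHead`, the 2048 input channels, the 256 hidden channels, the BN + ReLU after each conv, the final 1x1 classifier and the 21 VOC classes are assumptions made for illustration, not details confirmed by these experiments.

```python
import torch
import torch.nn as nn


class MoCoStyleFCNHead(nn.Module):
    """Hypothetical head: two dilated 3x3 convs followed by a 1x1 classifier."""

    def __init__(self, in_channels=2048, mid_channels=256, num_classes=21):
        super().__init__()
        layers = []
        channels = in_channels
        for _ in range(2):  # "2 layer 3x3 conv"
            layers += [
                # For a 3x3 kernel at stride 1, padding == dilation keeps H and W unchanged.
                nn.Conv2d(channels, mid_channels, kernel_size=3, stride=1,
                          padding=6, dilation=6, bias=False),
                nn.BatchNorm2d(mid_channels),  # assumption: BN + ReLU after each conv
                nn.ReLU(inplace=True),
            ]
            channels = mid_channels
        self.convs = nn.Sequential(*layers)
        # assumption: per-pixel classification with a 1x1 conv
        self.classifier = nn.Conv2d(mid_channels, num_classes, kernel_size=1)

    def forward(self, features):
        return self.classifier(self.convs(features))


head = MoCoStyleFCNHead().eval()
with torch.no_grad():
    logits = head(torch.randn(1, 2048, 16, 16))
print(logits.shape)  # torch.Size([1, 21, 16, 16])
```

With dilation=6, each 3x3 conv spans 13 pixels, so the two stacked convs cover a 25x25 window of the backbone feature map while the spatial resolution stays the same.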

MoCov1(IN-200ep) vs. MoCov1(IN-200ep) + PLR(VOCaug)

MoCov1(IN-200ep) vs. PLR-augplus-200ep(VOCaug)

Patch Level Rotation on VOC dataset

PLR vs. PLR-augplus

Without augplus, the MoCov1 augmentation is used; with augplus, the MoCov2 augmentation is used, which is the same as SimCLR's.

PLR-100ep vs. PLR-200ep