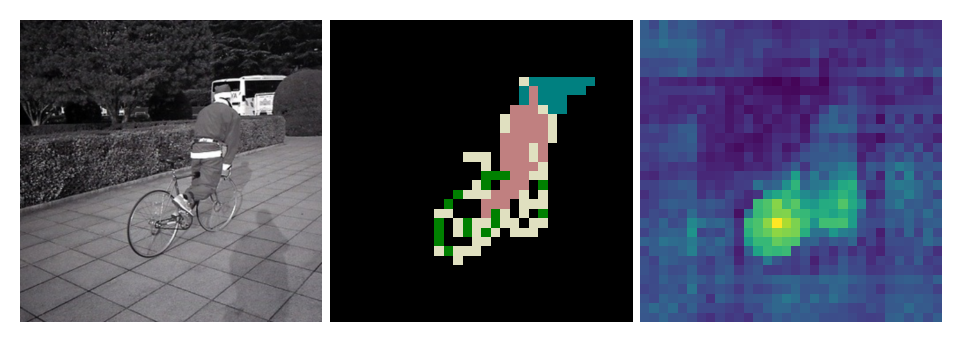

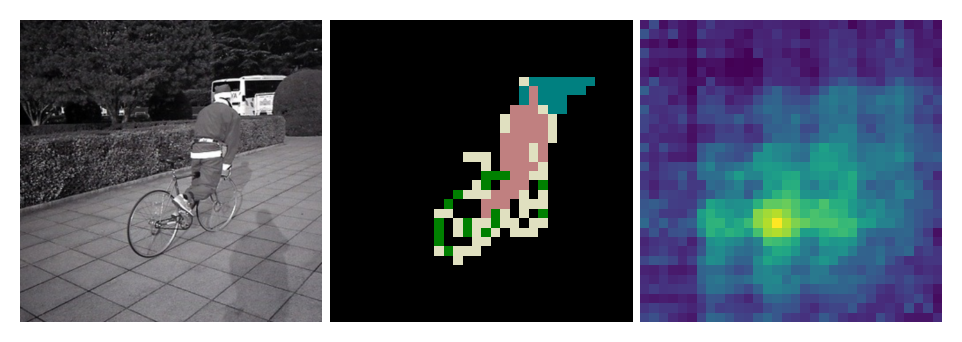

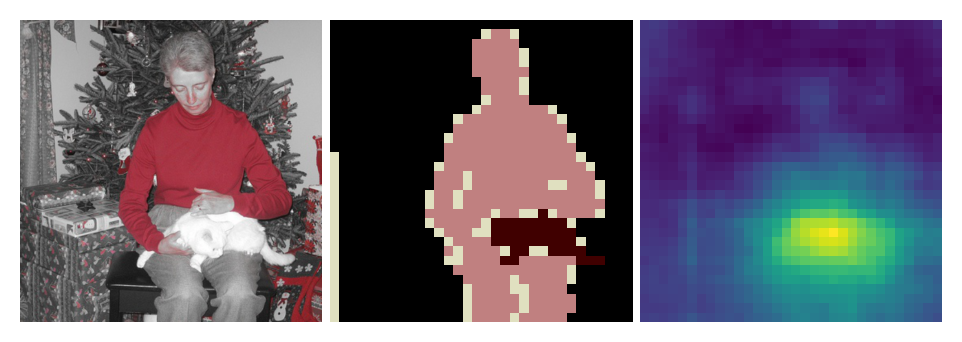

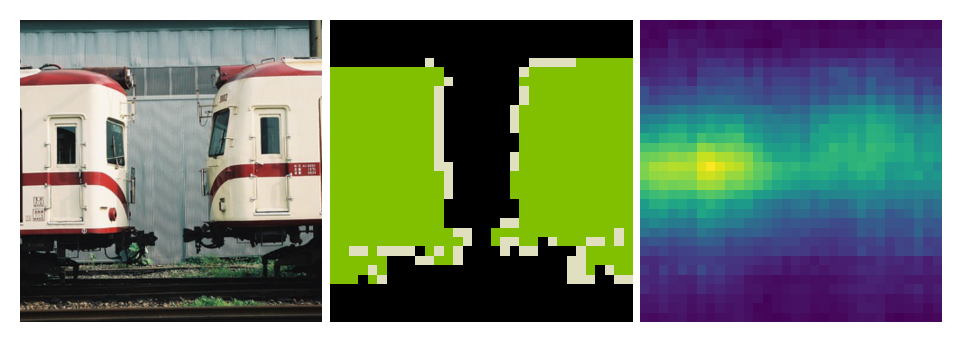

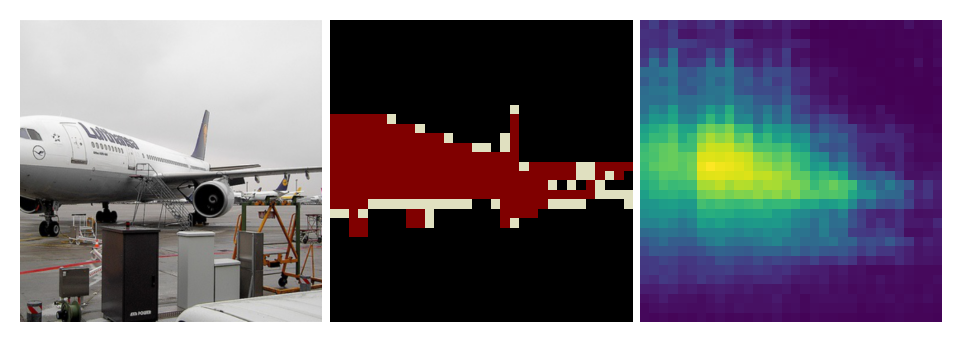

Attention Map

Global

Cosine similarity (should have high value in areas except background, as t-SNE visualization reveals that background labeled feature vectors still sparsely distributed in supervise-trained representation space) as attention heat map, calculated between

- global average pooling of feature map, size [1, channel]

feature map, size [(height, width), channel]

Supervised Learning

MoCo

PLMoCo

PLMoCoPlus (MoCo+PLMoCo)

PLSiam

Local

Cosine similarity as attention heat map, calculated between

selected point on feature map, size [1, channel] (brightest dot on attention map)

- feature map, size [(height, width), channel]

Supervised Learning

MoCo

非常明显的dilated convolution

PLMoCo

PLMoCoPlus

PLSiam

P2Vec 🙁

PRCL 🤷♂️

Recent Progress in SSL

ViT — an alternative to ResNet, a step closer to NLP

MoCo-v3

大胆猜测:gradient问题由first layer出现,逐渐引入后面层,然后instability,于是把projection固定成了random

然后就好多了。。。

算是把contrastive learning与vit结合的头一篇吧

DINO

self-DIstillation with NO labels

loss跟InfoNCE有些不同,key feature直接作为了label,而不是先cos sim再给定0/1的label,整体还是cross entropy

another day, another state of the art. 😄