Basic environment

Operating system

```
$ cat /etc/issue
Ubuntu 16.04.6 LTS \n \l
```

CPU

```
$ lscpu
Architecture:          x86_64
CPU op-mode(s):        32-bit, 64-bit
Byte Order:            Little Endian
CPU(s):                2
```

Memory

```
$ free -h
              total        used        free      shared  buff/cache   available
Mem:           3.9G        293M        2.9G        4.0M        656M        3.3G
Swap:            0B          0B          0B
```
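By default the kubelet refuses to run while swap is enabled, and kubeadm's preflight checks also report it. The Swap row above already shows 0B on this machine. The sketch below checks this from a script. It assumes a Linux host where /proc/swaps has one header line followed by one line per active swap area. The helper name `swap_active` is hypothetical.

```bash
#!/usr/bin/env bash
set -euo pipefail

# Hypothetical helper: exit status 0 if any swap area is active.
# /proc/swaps contains a header line plus one line per active swap device/file.
swap_active() {
  local swaps_file="${1:-/proc/swaps}"
  [ "$(wc -l < "$swaps_file")" -gt 1 ]
}

if swap_active; then
  echo "swap is ON: run 'sudo swapoff -a' before kubeadm init" >&2
else
  echo "swap is off"
fi
```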

Docker

Install the packages needed to use an HTTPS apt repository:

```
$ sudo apt-get update
$ sudo apt-get install -y apt-transport-https ca-certificates curl gnupg-agent software-properties-common
```

Add Docker's GPG key and apt repository

```
$ curl -fsSL https://download.docker.com/linux/ubuntu/gpg | sudo apt-key add -
$ sudo add-apt-repository "deb [arch=amd64] https://download.docker.com/linux/ubuntu $(lsb_release -cs) stable"
```

Install Docker

```
$ sudo apt-get update
$ sudo apt-get install -y docker-ce docker-ce-cli containerd.io
```

Check the Docker version

```
$ sudo docker version
Client: Docker Engine - Community
 Version:           19.03.12
 API version:       1.40
 Go version:        go1.13.10
 Git commit:        48a66213fe
 Built:             Mon Jun 22 15:45:49 2020
 OS/Arch:           linux/amd64
 Experimental:      false

Server: Docker Engine - Community
 Engine:
  Version:          19.03.12
  API version:      1.40 (minimum version 1.12)
  Go version:       go1.13.10
  Git commit:       48a66213fe
  Built:            Mon Jun 22 15:44:20 2020
  OS/Arch:          linux/amd64
  Experimental:     false
 containerd:
  Version:          1.2.13
  GitCommit:        7ad184331fa3e55e52b890ea95e65ba581ae3429
 runc:
  Version:          1.0.0-rc10
  GitCommit:        dc9208a3303feef5b3839f4323d9beb36df0a9dd
 docker-init:
  Version:          0.18.0
  GitCommit:        fec3683
```
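Later in this walkthrough, kubeadm init prints `[WARNING IsDockerSystemdCheck]` because this Docker installation uses the cgroupfs driver. One way to follow that recommendation is to set Docker's `exec-opts` in /etc/docker/daemon.json. This is best done before running kubeadm init, because changing the driver afterwards can leave Docker and the kubelet disagreeing.

The sketch below assumes no daemon.json exists yet, since the function overwrites it. `write_docker_daemon_json` and its root-prefix argument are hypothetical. The prefix exists only so the function can be tried on a scratch directory.

```bash
#!/usr/bin/env bash
set -euo pipefail

# Hypothetical helper: write a Docker daemon.json that selects the systemd
# cgroup driver. $1 is a root prefix ("/" on a real host).
# NOTE: overwrites any existing daemon.json under that prefix.
write_docker_daemon_json() {
  local root="${1:-/}"
  mkdir -p "$root/etc/docker"
  cat > "$root/etc/docker/daemon.json" <<'EOF'
{
  "exec-opts": ["native.cgroupdriver=systemd"]
}
EOF
}
```

To use it on a real host, run `write_docker_daemon_json / && systemctl restart docker` as root. Afterwards, `docker info --format '{{.CgroupDriver}}'` should print `systemd`.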

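Turn off swap and prepare kernel settings

```
$ sudo vi /etc/modules                       # kernel modules loaded at boot
$ sudo vi /etc/sysctl.d/k8s.conf             # kernel parameters for Kubernetes networking
$ sudo swapoff -a                            # turn swap off now
$ sudo sed -i 's/.*swap.*/#&/' /etc/fstab    # comment out swap entries so it stays off after reboot
```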
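The contents of /etc/modules and /etc/sysctl.d/k8s.conf are not shown above. The sketch below assumes the settings listed in the upstream kubeadm prerequisites:

- load `br_netfilter` so iptables sees bridged traffic;
- set the two `bridge-nf-call` sysctls;
- enable IP forwarding.

`write_k8s_net_conf` and its root-prefix argument are hypothetical. The prefix only lets the function be tried on a scratch directory.

```bash
#!/usr/bin/env bash
set -euo pipefail

# Hypothetical helper: write the module list and sysctl file under a root
# prefix ("/" on a real host). Values assumed from the kubeadm prerequisites.
write_k8s_net_conf() {
  local root="${1:-/}"
  mkdir -p "$root/etc/sysctl.d"
  # /etc/modules holds one module name per line; append br_netfilter only once.
  if ! grep -qx 'br_netfilter' "$root/etc/modules" 2>/dev/null; then
    echo 'br_netfilter' >> "$root/etc/modules"
  fi
  # Let iptables see bridged IPv4/IPv6 traffic and enable IP forwarding.
  cat > "$root/etc/sysctl.d/k8s.conf" <<'EOF'
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
net.ipv4.ip_forward = 1
EOF
}
```

On a real host, run it as root: first `write_k8s_net_conf /`, then `modprobe br_netfilter` and `sysctl --system`. This applies the settings without a reboot.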

Switch to root and install kubeadm, kubelet and kubectl

```
$ sudo su root
# curl -s https://mirrors.aliyun.com/kubernetes/apt/doc/apt-key.gpg | apt-key add -
# cat > /etc/apt/sources.list.d/aliyun-k8s.list <<EOF
deb https://mirrors.aliyun.com/kubernetes/apt/ kubernetes-xenial main
EOF
# apt update
# apt install -y kubeadm kubelet kubectl ipvsadm ipset
```

Initialize the control plane

```
# kubeadm init --image-repository registry.aliyuncs.com/google_containers --pod-network-cidr=10.244.0.0/16
W1027 22:51:15.001922    5101 configset.go:348] WARNING: kubeadm cannot validate component configs for API groups [kubelet.config.k8s.io kubeproxy.config.k8s.io]
[init] Using Kubernetes version: v1.19.3
[preflight] Running pre-flight checks
	[WARNING IsDockerSystemdCheck]: detected "cgroupfs" as the Docker cgroup driver. The recommended driver is "systemd". Please follow the guide at https://kubernetes.io/docs/setup/cri/
[preflight] Pulling images required for setting up a Kubernetes cluster
[preflight] This might take a minute or two, depending on the speed of your internet connection
[preflight] You can also perform this action in beforehand using 'kubeadm config images pull'
[certs] Using certificateDir folder "/etc/kubernetes/pki"
[certs] Generating "ca" certificate and key
[certs] Generating "apiserver" certificate and key
[certs] apiserver serving cert is signed for DNS names [izbp1dmodzug132aiko240z kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc.cluster.local] and IPs [10.96.0.1 10.111.118.193]
[certs] Generating "apiserver-kubelet-client" certificate and key
[certs] Generating "front-proxy-ca" certificate and key
[certs] Generating "front-proxy-client" certificate and key
[certs] Generating "etcd/ca" certificate and key
[certs] Generating "etcd/server" certificate and key
[certs] etcd/server serving cert is signed for DNS names [izbp1dmodzug132aiko240z localhost] and IPs [10.111.118.193 127.0.0.1 ::1]
[certs] Generating "etcd/peer" certificate and key
[certs] etcd/peer serving cert is signed for DNS names [izbp1dmodzug132aiko240z localhost] and IPs [10.111.118.193 127.0.0.1 ::1]
[certs] Generating "etcd/healthcheck-client" certificate and key
[certs] Generating "apiserver-etcd-client" certificate and key
[certs] Generating "sa" key and public key
[kubeconfig] Using kubeconfig folder "/etc/kubernetes"
[kubeconfig] Writing "admin.conf" kubeconfig file
[kubeconfig] Writing "kubelet.conf" kubeconfig file
[kubeconfig] Writing "controller-manager.conf" kubeconfig file
[kubeconfig] Writing "scheduler.conf" kubeconfig file
[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"
[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"
[kubelet-start] Starting the kubelet
[control-plane] Using manifest folder "/etc/kubernetes/manifests"
[control-plane] Creating static Pod manifest for "kube-apiserver"
[control-plane] Creating static Pod manifest for "kube-controller-manager"
[control-plane] Creating static Pod manifest for "kube-scheduler"
[etcd] Creating static Pod manifest for local etcd in "/etc/kubernetes/manifests"
[wait-control-plane] Waiting for the kubelet to boot up the control plane as static Pods from directory "/etc/kubernetes/manifests". This can take up to 4m0s
[apiclient] All control plane components are healthy after 18.502143 seconds
[upload-config] Storing the configuration used in ConfigMap "kubeadm-config" in the "kube-system" Namespace
[kubelet] Creating a ConfigMap "kubelet-config-1.19" in namespace kube-system with the configuration for the kubelets in the cluster
[upload-certs] Skipping phase. Please see --upload-certs
[mark-control-plane] Marking the node izbp1dmodzug132aiko240z as control-plane by adding the label "node-role.kubernetes.io/master=''"
[mark-control-plane] Marking the node izbp1dmodzug132aiko240z as control-plane by adding the taints [node-role.kubernetes.io/master:NoSchedule]
[bootstrap-token] Using token: o0runy.uikvs46vtfxidq0s
[bootstrap-token] Configuring bootstrap tokens, cluster-info ConfigMap, RBAC Roles
[bootstrap-token] configured RBAC rules to allow Node Bootstrap tokens to get nodes
[bootstrap-token] configured RBAC rules to allow Node Bootstrap tokens to post CSRs in order for nodes to get long term certificate credentials
[bootstrap-token] configured RBAC rules to allow the csrapprover controller automatically approve CSRs from a Node Bootstrap Token
[bootstrap-token] configured RBAC rules to allow certificate rotation for all node client certificates in the cluster
[bootstrap-token] Creating the "cluster-info" ConfigMap in the "kube-public" namespace
[kubelet-finalize] Updating "/etc/kubernetes/kubelet.conf" to point to a rotatable kubelet client certificate and key
[addons] Applied essential addon: CoreDNS
[addons] Applied essential addon: kube-proxy

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

  mkdir -p $HOME/.kube
  sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
  sudo chown $(id -u):$(id -g) $HOME/.kube/config

You should now deploy a pod network to the cluster.
Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:
  https://kubernetes.io/docs/concepts/cluster-administration/addons/

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 10.111.118.193:6443 --token o0runy.uikvs46vtfxidq0s \
    --discovery-token-ca-cert-hash sha256:0fc36ea0a82cca6d155ef893f4646ecba10e67b355a54a9cfbca27fbea1a210b
```
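The bootstrap token in the join command expires (24 hours by default), so the command above cannot be reused indefinitely. `kubeadm token create --print-join-command` prints a fresh join command.

The `--discovery-token-ca-cert-hash` value is the SHA-256 of the cluster CA's DER-encoded public key. The kubeadm documentation gives an openssl pipeline that recomputes it from /etc/kubernetes/pki/ca.crt. The sketch below wraps that pipeline. The function name `ca_cert_hash` is hypothetical, and the sketch assumes an RSA CA key, which is what kubeadm generates by default.

```bash
#!/usr/bin/env bash
set -euo pipefail

# Hypothetical helper: print the hex digest that follows "sha256:" in
# --discovery-token-ca-cert-hash, computed from a PEM CA certificate.
# Same openssl pipeline as the kubeadm docs; assumes an RSA public key.
ca_cert_hash() {
  local ca_crt="${1:-/etc/kubernetes/pki/ca.crt}"
  openssl x509 -pubkey -noout -in "$ca_crt" \
    | openssl rsa -pubin -outform der 2>/dev/null \
    | openssl dgst -sha256 -hex \
    | sed 's/^.* //'
}
```

On the master, `echo "sha256:$(ca_cert_hash)"` should reproduce the hash shown in the join command above.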

Set up the kubeconfig file

```
# mkdir -p $HOME/.kube
# cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
# chown $(id -u):$(id -g) $HOME/.kube/config
```

Verify the cluster

```
# kubectl get nodes
NAME                      STATUS     ROLES    AGE     VERSION
izbp1dmodzug132aiko240z   NotReady   master   2m26s   v1.19.3
# kubectl get pod -n kube-system
NAME                                              READY   STATUS    RESTARTS   AGE
coredns-6d56c8448f-4krmb                          0/1     Pending   0          2m25s
coredns-6d56c8448f-9svcn                          0/1     Pending   0          2m25s
etcd-izbp1dmodzug132aiko240z                      1/1     Running   0          2m34s
kube-apiserver-izbp1dmodzug132aiko240z            1/1     Running   0          2m35s
kube-controller-manager-izbp1dmodzug132aiko240z   1/1     Running   0          2m35s
kube-proxy-hntxx                                  1/1     Running   0          2m25s
kube-scheduler-izbp1dmodzug132aiko240z            1/1     Running   0          2m35s
```

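Only the two CoreDNS Pods, which depend on the Pod network, are Pending, meaning they have not been scheduled. The control-plane Pods (etcd, kube-apiserver, kube-controller-manager, kube-scheduler) and kube-proxy use the host network and are already Running. This is expected: no network plugin has been installed yet, so the master node still reports NotReady.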
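To see which Pods are still Pending, filter the STATUS column of the `kubectl get pod` output. The sketch below assumes the default (non-wide) column layout shown above, where STATUS is the third column. `pending_pods` is a hypothetical helper. As a server-side alternative, `kubectl get pod -n kube-system --field-selector=status.phase=Pending` does the same filtering.

```bash
#!/usr/bin/env bash
set -euo pipefail

# Hypothetical helper: read `kubectl get pod` output on stdin and print the
# NAME of every Pod whose STATUS (3rd column) is "Pending"; skips the header.
pending_pods() {
  awk 'NR > 1 && $3 == "Pending" { print $1 }'
}
```

Usage: `kubectl get pod -n kube-system | pending_pods`. At this stage it would list the two coredns Pods.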

Install the flannel network plugin

Check which flannel version the manifest uses:

https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml

```yaml
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds
  namespace: kube-system
  labels:
    tier: node
    app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
            - matchExpressions:
              - key: kubernetes.io/os
                operator: In
                values:
                - linux
      hostNetwork: true
      priorityClassName: system-node-critical
      tolerations:
      - operator: Exists
        effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
      - name: install-cni
        image: quay.io/coreos/flannel:v0.13.0
```

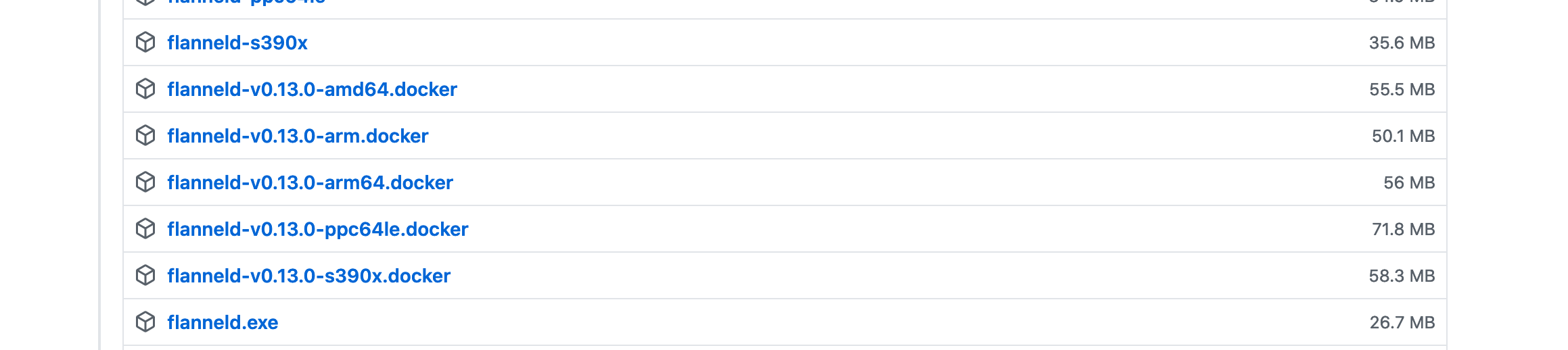
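The flannel version in use is the image tag referenced in the manifest. The sketch below lists the distinct images a manifest file references. It assumes unquoted `image:` keys, as in the excerpt above, and `manifest_images` is a hypothetical helper.

Note that the `--pod-network-cidr=10.244.0.0/16` passed to kubeadm init matches the default `Network` in this manifest's net-conf.json. If a different CIDR is used, the manifest must be edited to match.

```bash
#!/usr/bin/env bash
set -euo pipefail

# Hypothetical helper: print each distinct container image referenced by a
# Kubernetes manifest file ($1). Handles "image: x" and "- image: x" lines.
manifest_images() {
  sed -nE 's/^[[:space:]]*-?[[:space:]]*image:[[:space:]]*([^[:space:]]+).*/\1/p' "$1" \
    | sort -u
}
```

Usage: `curl -fsSLO https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml && manifest_images kube-flannel.yml`, then `kubectl apply -f kube-flannel.yml` to install the plugin. Because the URL points at the `master` branch, the file it serves today may differ from the excerpt above.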

Pulling quay.io/coreos/flannel:v0.13.0 may fail. In that case, download flanneld-v0.13.0-amd64.docker from https://github.com/coreos/flannel/releases instead and load it into Docker:

```
docker load < flanneld-v0.13.0-amd64.docker
```
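GitHub serves release assets from `https://github.com/<owner>/<repo>/releases/download/<tag>/<asset>`, so the tarball can be fetched directly on each node that will run flannel. The sketch below assumes the asset naming seen above (`flanneld-<version>-<arch>.docker`) also holds for other versions. `flannel_asset_url` is hypothetical.

After loading, compare the tag reported by `docker images quay.io/coreos/flannel` with the one in the manifest. If they differ (for example by an `-amd64` suffix), retag with `docker tag` so that the DaemonSet can use the local image.

```bash
#!/usr/bin/env bash
set -euo pipefail

# Hypothetical helper: build the release download URL for a flannel image
# tarball. Assumes the "flanneld-<version>-<arch>.docker" asset naming.
flannel_asset_url() {
  local version="${1:-v0.13.0}" arch="${2:-amd64}"
  printf 'https://github.com/coreos/flannel/releases/download/%s/flanneld-%s-%s.docker\n' \
    "$version" "$version" "$arch"
}
```

Usage: `curl -fLO "$(flannel_asset_url)" && docker load < flanneld-v0.13.0-amd64.docker`.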

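Remove the master taint

Once flannel is running, the node becomes Ready. Remove the taint from it:

```
# kubectl get nodes
NAME                      STATUS   ROLES    AGE   VERSION
izbp1dmodzug132aiko240z   Ready    master   19m   v1.19.3
# kubectl taint nodes izbp1dmodzug132aiko240z node-role.kubernetes.io/master-
```

Or, for all nodes at once:

```
$ kubectl taint nodes --all node-role.kubernetes.io/master-
```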

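The trailing hyphen "-" after the key node-role.kubernetes.io/master means "remove": it deletes every taint whose key is node-role.kubernetes.io/master. Ordinary Pods can then be scheduled on the master node.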
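To confirm the taint is gone, check the Taints field of `kubectl describe node`. The sketch below assumes the describe format where that field is printed as `Taints:` followed by the first taint or `<none>`. `node_taints` is a hypothetical helper, and it reads only that first value; further taints appear on continuation lines. Alternatively, `kubectl get node <name> -o jsonpath='{.spec.taints}'` prints nothing when the node has no taints.

```bash
#!/usr/bin/env bash
set -euo pipefail

# Hypothetical helper: read `kubectl describe node` output on stdin and print
# the first value of the Taints field (e.g. "<none>").
node_taints() {
  awk '/^Taints:/ { print $2; exit }'
}
```

Usage: `kubectl describe node izbp1dmodzug132aiko240z | node_taints`. After the taint command above, this should print `<none>`.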

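Hello, nginx

Create an nginx Deployment and expose it through a NodePort Service (Service port 8080 forwarded to container port 80):

```
# kubectl create deployment nginx --image=nginx
# kubectl expose deploy nginx --type=NodePort --port=8080 --target-port=80 --name=nginx-svc
```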

Pods

```
# kubectl get pods
NAME                     READY   STATUS    RESTARTS   AGE
nginx-6799fc88d8-xnd4t   1/1     Running   0          28s
```

Service

```
# kubectl get svc
NAME        TYPE       CLUSTER-IP      EXTERNAL-IP   PORT(S)          AGE
nginx-svc   NodePort   10.111.49.102   <none>        8080:30232/TCP   82s
```

Request the Service through its ClusterIP and port:

```
# curl 10.111.49.102:8080
.....
<h1>Welcome to nginx!</h1>
<p>If you see this page, the nginx web server is successfully installed and
working. Further configuration is required.</p>
....
```
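The ClusterIP used above is reachable only from inside the cluster (here, from the node itself). Because the Service has type NodePort, it is also exposed on each node's IP at the port after the colon in PORT(S), which is 30232 above.

The sketch below extracts that port from `kubectl get svc` output. `node_port_of` is hypothetical. `kubectl get svc nginx-svc -o jsonpath='{.spec.ports[0].nodePort}'` returns the same value without parsing text.

```bash
#!/usr/bin/env bash
set -euo pipefail

# Hypothetical helper: read `kubectl get svc` output on stdin and print the
# node port of Service $1, taken from a PORT(S) value like "8080:30232/TCP".
node_port_of() {
  awk -v svc="$1" '$1 == svc { split($5, p, "[:/]"); print p[2] }'
}
```

Usage: `curl "http://10.111.118.193:$(kubectl get svc | node_port_of nginx-svc)"`. This uses the node IP from the kubeadm output. On a cloud VM, the security group may also need to allow that port.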

Problems

```
The connection to the server 127.0.0.1:32768 was refused - did you specify the right host or port?
```
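The cause of this error is not stated above. The message shows that kubectl tried to reach an API server at 127.0.0.1:32768, while the control plane set up here listens on 10.111.118.193:6443. So one thing worth checking is which server the active kubeconfig points to; a leftover config from another local cluster is one possibility.

The sketch below prints the first `server:` entry of a kubeconfig file. It assumes the usual YAML layout and a single file (not a colon-separated KUBECONFIG list). `kubeconfig_server` is hypothetical. `kubectl config view --minify -o jsonpath='{.clusters[0].cluster.server}'` reports the server of the current context directly.

```bash
#!/usr/bin/env bash
set -euo pipefail

# Hypothetical helper: print the first "server:" URL in a kubeconfig file
# (default: $KUBECONFIG, else ~/.kube/config). Assumes a single file path.
kubeconfig_server() {
  local cfg="${1:-${KUBECONFIG:-$HOME/.kube/config}}"
  awk '$1 == "server:" { print $2; exit }' "$cfg"
}
```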