import math
import pandas as pd
import torch
from torch import nn
from d2l import torch as d2l

10.7.2. Positionwise Feed-Forward Networks

class PositionWiseFFN(nn.Module):
    def __init__(self, ffn_num_input, ffn_num_hiddens, ffn_num_outputs,
                 **kwargs):
        super(PositionWiseFFN, self).__init__(**kwargs)
        self.dense1 = nn.Linear(ffn_num_input, ffn_num_hiddens)
        self.relu = nn.ReLU()
        self.dense2 = nn.Linear(ffn_num_hiddens, ffn_num_outputs)

    def forward(self, X):
        return self.dense2(self.relu(self.dense1(X)))

ffn = PositionWiseFFN(4, 4, 8)
ffn.eval()
ffn(torch.ones((2, 3, 4)))[0]

tensor([[ 0.0860,  0.1906,  0.1094, -0.7480, -0.6123,  0.2456, -0.3660, -0.5613],
        [ 0.0860,  0.1906,  0.1094, -0.7480, -0.6123,  0.2456, -0.3660, -0.5613],
        [ 0.0860,  0.1906,  0.1094, -0.7480, -0.6123,  0.2456, -0.3660, -0.5613]],
       grad_fn=<SelectBackward>)
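The three identical rows in the output above arise because the same two-layer MLP is applied to every position, and every position received the same all-ones input. A minimal sketch of that property follows; the `mlp` module is a hypothetical stand-in for the layers inside `PositionWiseFFN`, not the class itself.

```python
import torch
from torch import nn

torch.manual_seed(0)

# Hypothetical stand-in for the two dense layers inside PositionWiseFFN.
mlp = nn.Sequential(nn.Linear(4, 4), nn.ReLU(), nn.Linear(4, 8))
mlp.eval()

X = torch.randn(2, 3, 4)  # (batch_size, num_steps, feature_dim)

with torch.no_grad():
    # Apply the MLP to the whole tensor at once: only the last axis changes.
    out_all = mlp(X)
    # Apply the same MLP to each position separately, then stack on the step axis.
    out_per_pos = torch.stack(
        [mlp(X[:, t, :]) for t in range(X.shape[1])], dim=1)

print(out_all.shape)  # torch.Size([2, 3, 8])
print(torch.allclose(out_all, out_per_pos, atol=1e-5))
```

Because `nn.Linear` acts only on the last axis, both computations should agree up to floating-point tolerance.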

10.7.3. Residual Connection and Layer Normalization

ln = nn.LayerNorm(2)
bn = nn.BatchNorm1d(2)
X = torch.tensor([[1, 2], [2, 3]], dtype=torch.float32)
# Compute the mean and variance of `X` in training mode
print('layer norm:', ln(X), '\nbatch norm:', bn(X))

layer norm: tensor([[-1.0000,  1.0000],
        [-1.0000,  1.0000]], grad_fn=<NativeLayerNormBackward>)
batch norm: tensor([[-1.0000, -1.0000],
        [ 1.0000,  1.0000]], grad_fn=<NativeBatchNormBackward>)
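The two results differ only in the axis over which the statistics are taken. The following sketch reproduces both by hand, assuming freshly constructed modules (scale 1, shift 0) and the default `eps=1e-5`; the names `ln_manual` and `bn_manual` are introduced here for illustration.

```python
import torch
from torch import nn

X = torch.tensor([[1, 2], [2, 3]], dtype=torch.float32)
eps = 1e-5  # default eps of nn.LayerNorm and nn.BatchNorm1d

# Layer normalization: one mean and variance per example (row),
# computed over the feature axis.
ln_manual = (X - X.mean(dim=1, keepdim=True)) / torch.sqrt(
    X.var(dim=1, unbiased=False, keepdim=True) + eps)

# Batch normalization in training mode: one mean and variance per feature
# (column), computed over the batch axis.
bn_manual = (X - X.mean(dim=0, keepdim=True)) / torch.sqrt(
    X.var(dim=0, unbiased=False, keepdim=True) + eps)

ln, bn = nn.LayerNorm(2), nn.BatchNorm1d(2)  # new modules start in training mode
print(torch.allclose(ln_manual, ln(X), atol=1e-6))
print(torch.allclose(bn_manual, bn(X), atol=1e-6))
```

Layer normalization does not depend on the other examples in the minibatch, which is one reason it is convenient for variable-length sequence inputs.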

class AddNorm(nn.Module):
    def __init__(self, normalized_shape, dropout, **kwargs):
        super(AddNorm, self).__init__(**kwargs)
        self.dropout = nn.Dropout(dropout)
        self.ln = nn.LayerNorm(normalized_shape)

    def forward(self, X, Y):
        return self.ln(self.dropout(Y) + X)

add_norm = AddNorm([3, 4], 0.5)  # `normalized_shape` is input.size()[1:]
add_norm.eval()
add_norm(torch.ones((2, 3, 4)), torch.ones((2, 3, 4))).shape

torch.Size([2, 3, 4])
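In evaluation mode dropout is the identity, so `AddNorm` reduces to layer normalization of the residual sum `X + Y`. The sketch below repeats the class definition so that it runs on its own and checks this with random inputs; the variable names `out_eval`, `reference`, and `out_train` are illustrative.

```python
import torch
from torch import nn

class AddNorm(nn.Module):
    """Same logic as the AddNorm class above, repeated to keep this runnable."""
    def __init__(self, normalized_shape, dropout):
        super().__init__()
        self.dropout = nn.Dropout(dropout)
        self.ln = nn.LayerNorm(normalized_shape)

    def forward(self, X, Y):
        return self.ln(self.dropout(Y) + X)

torch.manual_seed(0)
X, Y = torch.randn(2, 3, 4), torch.randn(2, 3, 4)
add_norm = AddNorm([3, 4], 0.5)

# Evaluation mode: dropout does nothing, so the output is LayerNorm(X + Y).
add_norm.eval()
out_eval = add_norm(X, Y)
reference = add_norm.ln(X + Y)
print(torch.allclose(out_eval, reference))

# Each example is normalized over its last two axes, so its mean is about 0.
print(out_eval.mean(dim=(1, 2)))

# Training mode: dropout randomly zeroes entries of Y, the shape is unchanged.
add_norm.train()
out_train = add_norm(X, Y)
print(out_train.shape)  # torch.Size([2, 3, 4])
```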

10.7.4. Encoder

class EncoderBlock(nn.Module):
    def __init__(self, key_size, query_size, value_size, num_hiddens,
                 norm_shape, ffn_num_input, ffn_num_hiddens, num_heads,
                 dropout, use_bias=False, **kwargs):
        super(EncoderBlock, self).__init__(**kwargs)
        self.attention = d2l.MultiHeadAttention(
            key_size, query_size, value_size, num_hiddens, num_heads, dropout,
            use_bias)
        self.addnorm1 = AddNorm(norm_shape, dropout)
        self.ffn = PositionWiseFFN(
            ffn_num_input, ffn_num_hiddens, num_hiddens)
        self.addnorm2 = AddNorm(norm_shape, dropout)

    def forward(self, X, valid_lens):
        Y = self.addnorm1(X, self.attention(X, X, X, valid_lens))
        return self.addnorm2(Y, self.ffn(Y))

X = torch.ones((2, 100, 24))
valid_lens = torch.tensor([3, 2])
encoder_blk = EncoderBlock(24, 24, 24, 24, [100, 24], 24, 48, 8, 0.5)
encoder_blk.eval()
encoder_blk(X, valid_lens).shape

torch.Size([2, 100, 24])

class TransformerEncoder(d2l.Encoder):
    def __init__(self, vocab_size, key_size, query_size, value_size,
                 num_hiddens, norm_shape, ffn_num_input, ffn_num_hiddens,
                 num_heads, num_layers, dropout, use_bias=False, **kwargs):
        super(TransformerEncoder, self).__init__(**kwargs)
        self.num_hiddens = num_hiddens
        self.embedding = nn.Embedding(vocab_size, num_hiddens)
        self.pos_encoding = d2l.PositionalEncoding(num_hiddens, dropout)
        self.blks = nn.Sequential()
        for i in range(num_layers):
            self.blks.add_module("block"+str(i),
                EncoderBlock(key_size, query_size, value_size, num_hiddens,
                             norm_shape, ffn_num_input, ffn_num_hiddens,
                             num_heads, dropout, use_bias))

    def forward(self, X, valid_lens, *args):
        # Since positional encoding values are between -1 and 1, the
        # embedding values are multiplied by the square root of the embedding
        # dimension to rescale them before they are added to the positional
        # encoding.
        X = self.pos_encoding(self.embedding(X) * math.sqrt(self.num_hiddens))
        self.attention_weights = [None] * len(self.blks)
        for i, blk in enumerate(self.blks):
            X = blk(X, valid_lens)
            self.attention_weights[
                i] = blk.attention.attention.attention_weights
        return X

encoder = TransformerEncoder(
    200, 24, 24, 24, 24, [100, 24], 24, 48, 8, 2, 0.5)
encoder.eval()
encoder(torch.ones((2, 100), dtype=torch.long), valid_lens).shape

torch.Size([2, 100, 24])
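The comment in `forward` relies on positional encoding values lying in [-1, 1]. The sketch below checks this for a sinusoidal encoding. It assumes that `d2l.PositionalEncoding` uses the standard sine/cosine form, which the code above does not show; the table `P` and the variable names are illustrative.

```python
import math
import torch

num_hiddens, max_len = 24, 100

# Assumed sinusoidal form: even columns use sine, odd columns use cosine,
# with frequencies 1 / 10000^(2j / num_hiddens).
position = torch.arange(max_len, dtype=torch.float32).reshape(-1, 1)
div = torch.pow(
    10000, torch.arange(0, num_hiddens, 2, dtype=torch.float32) / num_hiddens)
P = torch.zeros(max_len, num_hiddens)
P[:, 0::2] = torch.sin(position / div)
P[:, 1::2] = torch.cos(position / div)

# Every entry is a sine or a cosine, so it lies in [-1, 1].
print(P.min().item(), P.max().item())

# Factor by which the embeddings are multiplied before the sum.
scale = math.sqrt(num_hiddens)
print(scale)
```

Under this assumption the scaling keeps the token embeddings from being dominated by the positional term when the two are added.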

10.7.5. Decoder

class DecoderBlock(nn.Module):
    """The `i`-th block in the decoder."""
    def __init__(self, key_size, query_size, value_size, num_hiddens,
                 norm_shape, ffn_num_input, ffn_num_hiddens, num_heads,
                 dropout, i, **kwargs):
        super(DecoderBlock, self).__init__(**kwargs)
        self.i = i
        self.attention1 = d2l.MultiHeadAttention(
            key_size, query_size, value_size, num_hiddens, num_heads, dropout)
        self.addnorm1 = AddNorm(norm_shape, dropout)
        self.attention2 = d2l.MultiHeadAttention(
            key_size, query_size, value_size, num_hiddens, num_heads, dropout)
        self.addnorm2 = AddNorm(norm_shape, dropout)
        self.ffn = PositionWiseFFN(ffn_num_input, ffn_num_hiddens,
                                   num_hiddens)
        self.addnorm3 = AddNorm(norm_shape, dropout)

    def forward(self, X, state):
        enc_outputs, enc_valid_lens = state[0], state[1]
        # During training, all the tokens of an output sequence are processed
        # at the same time, so `state[2][self.i]` keeps its initial value
        # `None`. During prediction, the output sequence is decoded token by
        # token, so `state[2][self.i]` contains the representations decoded
        # by the `i`-th block up to the current time step
        if state[2][self.i] is None:
            key_values = X
        else:
            key_values = torch.cat((state[2][self.i], X), axis=1)
        state[2][self.i] = key_values
        if self.training:
            batch_size, num_steps, _ = X.shape
            # Shape of `dec_valid_lens`: (`batch_size`, `num_steps`), where
            # every row is [1, 2, ..., `num_steps`]
            dec_valid_lens = torch.arange(
                1, num_steps + 1, device=X.device).repeat(batch_size, 1)
        else:
            dec_valid_lens = None

        # Self-attention
        X2 = self.attention1(X, key_values, key_values, dec_valid_lens)
        Y = self.addnorm1(X, X2)
        # Encoder-decoder attention. Shape of `enc_outputs`:
        # (`batch_size`, `num_steps`, `num_hiddens`)
        Y2 = self.attention2(Y, enc_outputs, enc_outputs, enc_valid_lens)
        Z = self.addnorm2(Y, Y2)
        return self.addnorm3(Z, self.ffn(Z)), state

decoder_blk = DecoderBlock(24, 24, 24, 24, [100, 24], 24, 48, 8, 0.5, 0)
decoder_blk.eval()
X = torch.ones((2, 100, 24))
state = [encoder_blk(X, valid_lens), valid_lens, [None]]
decoder_blk(X, state)[0].shape
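During training, `dec_valid_lens` is what keeps each query from attending to later positions. The following sketch builds it for a small case and converts it into an explicit boolean mask. How `d2l.MultiHeadAttention` consumes valid lengths internally is an assumption here (a masked softmax over keys), and `causal_mask` is a name introduced only for illustration.

```python
import torch

batch_size, num_steps = 2, 4

# Same construction as in DecoderBlock.forward: row b is [1, 2, ..., num_steps],
# i.e. the query at step t (0-based) may see the first t + 1 keys.
dec_valid_lens = torch.arange(1, num_steps + 1).repeat(batch_size, 1)
print(dec_valid_lens)

# Equivalent boolean mask: entry [b, i, j] is True when query i of example b
# is allowed to attend to key j.
key_positions = torch.arange(num_steps)
causal_mask = key_positions[None, None, :] < dec_valid_lens[:, :, None]
print(causal_mask[0].int())
```

The resulting mask is lower triangular, which preserves the autoregressive property even though all output tokens are processed in parallel.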

class TransformerDecoder(d2l.AttentionDecoder):
    def __init__(self, vocab_size, key_size, query_size, value_size,
                 num_hiddens, norm_shape, ffn_num_input, ffn_num_hiddens,
                 num_heads, num_layers, dropout, **kwargs):
        super(TransformerDecoder, self).__init__(**kwargs)
        self.num_hiddens = num_hiddens
        self.num_layers = num_layers
        self.embedding = nn.Embedding(vocab_size, num_hiddens)
        self.pos_encoding = d2l.PositionalEncoding(num_hiddens, dropout)
        self.blks = nn.Sequential()
        for i in range(num_layers):
            self.blks.add_module("block"+str(i),
                DecoderBlock(key_size, query_size, value_size, num_hiddens,
                             norm_shape, ffn_num_input, ffn_num_hiddens,
                             num_heads, dropout, i))
        self.dense = nn.Linear(num_hiddens, vocab_size)

    def init_state(self, enc_outputs, enc_valid_lens, *args):
        return [enc_outputs, enc_valid_lens, [None] * self.num_layers]

    def forward(self, X, state):
        X = self.pos_encoding(self.embedding(X) * math.sqrt(self.num_hiddens))
        self._attention_weights = [[None] * len(self.blks) for _ in range(2)]
        for i, blk in enumerate(self.blks):
            X, state = blk(X, state)
            # Decoder self-attention weights
            self._attention_weights[0][
                i] = blk.attention1.attention.attention_weights
            # Encoder-decoder attention weights
            self._attention_weights[1][
                i] = blk.attention2.attention.attention_weights
        return self.dense(X), state

    @property
    def attention_weights(self):
        return self._attention_weights
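During prediction the third element of the state, initialized by `init_state` to `[None] * num_layers`, grows by one time step per decoded token. The sketch below simulates only that bookkeeping. As a simplification, every layer receives the same dummy input `X`, whereas in the real decoder each block transforms `X` before passing it on; `cache` is a hypothetical name for `state[2]`.

```python
import torch

batch_size, num_hiddens, num_layers = 2, 24, 2
cache = [None] * num_layers  # plays the role of state[2]

for step in range(3):
    # One new token representation per decoding step (dummy values).
    X = torch.full((batch_size, 1, num_hiddens), float(step))
    for i in range(num_layers):
        # Same rule as in DecoderBlock.forward: start with X, then append
        # along the time axis.
        cache[i] = X if cache[i] is None else torch.cat((cache[i], X), dim=1)
    print(step, [tuple(c.shape) for c in cache])
```

After three steps each entry has shape `(2, 3, 24)`, so the self-attention at the next step can use all previously decoded positions as keys and values.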

10.7.6. Training

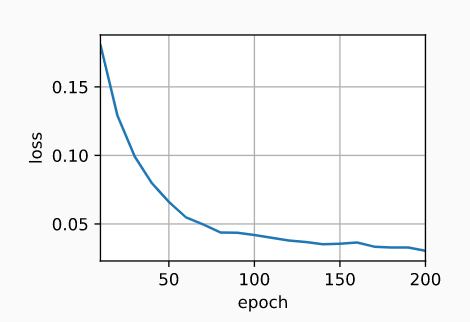

num_hiddens, num_layers, dropout, batch_size, num_steps = 32, 2, 0.1, 64, 10
lr, num_epochs, device = 0.005, 200, d2l.try_gpu()
ffn_num_input, ffn_num_hiddens, num_heads = 32, 64, 4
key_size, query_size, value_size = 32, 32, 32
norm_shape = [32]

train_iter, src_vocab, tgt_vocab = d2l.load_data_nmt(batch_size, num_steps)

encoder = TransformerEncoder(
    len(src_vocab), key_size, query_size, value_size, num_hiddens,
    norm_shape, ffn_num_input, ffn_num_hiddens, num_heads,
    num_layers, dropout)
decoder = TransformerDecoder(
    len(tgt_vocab), key_size, query_size, value_size, num_hiddens,
    norm_shape, ffn_num_input, ffn_num_hiddens, num_heads,
    num_layers, dropout)
net = d2l.EncoderDecoder(encoder, decoder)
d2l.train_seq2seq(net, train_iter, lr, num_epochs, tgt_vocab, device)

loss 0.030, 5244.8 tokens/sec on cuda:0

After training, we use the Transformer model to translate a few English sentences into French and compute their BLEU scores.

engs = ['go .', "i lost .", 'he\'s calm .', 'i\'m home .']
fras = ['va !', 'j\'ai perdu .', 'il est calme .', 'je suis chez moi .']
for eng, fra in zip(engs, fras):
    translation, dec_attention_weight_seq = d2l.predict_seq2seq(
        net, eng, src_vocab, tgt_vocab, num_steps, device, True)
    print(f'{eng} => {translation}, ',
          f'bleu {d2l.bleu(translation, fra, k=2):.3f}')

go . => va !,  bleu 1.000
i lost . => j'ai perdu .,  bleu 1.000
he's calm . => il est calme .,  bleu 1.000
i'm home . => je suis chez moi .,  bleu 1.000
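To see why an exact translation scores 1.000, it helps to compute a BLEU-style score by hand. The function `bleu_sketch` below is a hypothetical, self-contained variant: a brevity penalty multiplied by clipped n-gram precisions, with the precision for n-grams of length n raised to the power 1/2^n. Whether `d2l.bleu` uses exactly this weighting is an assumption, and the early return for very short predictions is an addition made here to avoid a division by zero.

```python
import collections
import math

def bleu_sketch(pred_seq, label_seq, k):
    """BLEU-style score over space-separated tokens (illustrative only)."""
    pred_tokens, label_tokens = pred_seq.split(' '), label_seq.split(' ')
    len_pred, len_label = len(pred_tokens), len(label_tokens)
    # Brevity penalty: predictions shorter than the label are penalized.
    score = math.exp(min(0, 1 - len_label / len_pred))
    for n in range(1, k + 1):
        num_pred_ngrams = len_pred - n + 1
        if num_pred_ngrams <= 0:
            # Assumption: a prediction with no n-grams of this length scores 0.
            return 0.0
        label_counts = collections.Counter(
            ' '.join(label_tokens[i:i + n])
            for i in range(len_label - n + 1))
        num_matches = 0
        for i in range(num_pred_ngrams):
            ngram = ' '.join(pred_tokens[i:i + n])
            if label_counts[ngram] > 0:
                # Clipped matching: each label n-gram can be matched once.
                num_matches += 1
                label_counts[ngram] -= 1
        score *= math.pow(num_matches / num_pred_ngrams, math.pow(0.5, n))
    return score

print(f"{bleu_sketch('va !', 'va !', k=2):.3f}")                      # 1.000
print(f"{bleu_sketch('il est riche .', 'il est calme .', k=2):.3f}")  # 0.658
```

In the second call three of four unigrams and one of three bigrams match, giving 0.75^(1/2) × (1/3)^(1/4) ≈ 0.658, while an exact match makes every precision equal to 1.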

enc_attention_weights = torch.cat(net.encoder.attention_weights, 0).reshape(
    (num_layers, num_heads, -1, num_steps))
enc_attention_weights.shape

torch.Size([2, 4, 10, 10])
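The reshape above regroups the per-layer weights so that they can be indexed by layer and head. A minimal sketch with dummy tensors follows. It assumes that each layer stores weights of shape (`batch_size * num_heads`, number of queries, number of keys) and that a single sentence is being translated, so the batch size is 1; `per_layer` is a hypothetical stand-in for `net.encoder.attention_weights`.

```python
import torch

torch.manual_seed(0)
num_layers, num_heads, num_steps = 2, 4, 10

# Dummy attention weights, one tensor per layer, each row summing to 1.
per_layer = [
    torch.softmax(torch.randn(num_heads, num_steps, num_steps), dim=-1)
    for _ in range(num_layers)]

# Concatenate along axis 0, then split that axis into (layer, head).
stacked = torch.cat(per_layer, 0)  # (num_layers * num_heads, 10, 10)
weights = stacked.reshape((num_layers, num_heads, -1, num_steps))
print(weights.shape)  # torch.Size([2, 4, 10, 10])

# weights[l, h] is the attention map of head h in layer l.
print(torch.equal(weights[1, 2], per_layer[1][2]))
```

With this layout, `weights[l, h]` can be plotted directly as a heatmap of queries against keys for one head.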