I. Preparing the Data

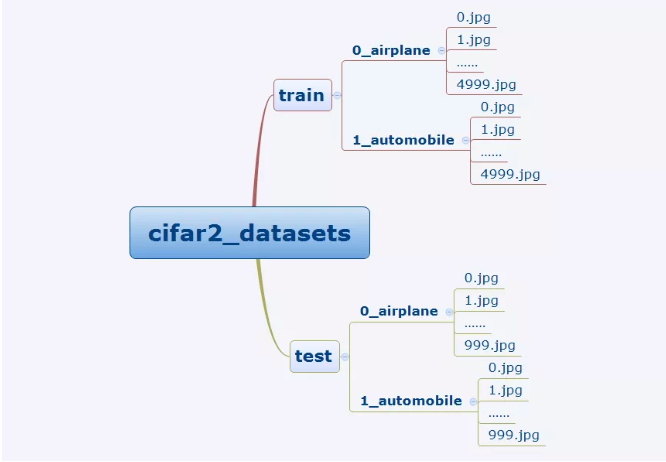

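The cifar2 dataset is a subset of the cifar10 dataset that contains only the first two classes, airplane and automobile.

The training set has 5,000 images each of airplane and automobile, and the test set has 1,000 images of each.

The goal of the cifar2 task is to train a model that classifies images as either airplane or automobile.

The Cifar2 dataset we prepared is laid out as follows: `./data/cifar2/train/` and `./data/cifar2/test/` each contain one subdirectory per class holding `.jpg` files, which is the layout the file patterns later in this section assume.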

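There are two common ways to prepare image data in TensorFlow. The first is to build an image data generator with the ImageDataGenerator utility in tf.keras.

The second is to build a data pipeline with tf.data.Dataset combined with the image processing functions in tf.image.

The first approach is simpler; the following article gives a usage example.

https://zhuanlan.zhihu.com/p/67466552

The second is TensorFlow's native approach. It is more flexible and, when used well, can also deliver better performance.

We cover the second approach here.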

    import tensorflow as tf
    from tensorflow.keras import datasets, layers, models

    BATCH_SIZE = 100

    def load_image(img_path, size=(32, 32)):
        # Label 1 for automobile, 0 for airplane, inferred from the file path
        label = tf.constant(1, tf.int8) if tf.strings.regex_full_match(img_path, ".*automobile.*") \
                else tf.constant(0, tf.int8)
        img = tf.io.read_file(img_path)
        img = tf.image.decode_jpeg(img)  # note: the files are in JPEG format
        img = tf.image.resize(img, size) / 255.0  # resize and scale pixels to [0, 1]
        return (img, label)
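
The labeling rule inside `load_image` can be checked without TensorFlow. The sketch below mirrors it with Python's `re.fullmatch`, on the assumption that the simple pattern `.*automobile.*` behaves the same way under both regex engines. The helper name `label_from_path` and the example file names are made up for illustration.

```python
import re

def label_from_path(img_path: str) -> int:
    """Return 1 if the whole path matches '.*automobile.*', else 0 (airplane)."""
    return 1 if re.fullmatch(r".*automobile.*", img_path) else 0

# Hypothetical file names; only the directory layout follows this section.
print(label_from_path("./data/cifar2/train/automobile/0.jpg"))
print(label_from_path("./data/cifar2/train/airplane/0.jpg"))
```

Because the label comes from the path alone, any file whose path contains the string `automobile` is labeled 1, whatever the image shows.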

    # Use parallel preprocessing (num_parallel_calls) and prefetching (prefetch) to improve performance
    ds_train = tf.data.Dataset.list_files("./data/cifar2/train/*/*.jpg") \
               .map(load_image, num_parallel_calls=tf.data.experimental.AUTOTUNE) \
               .shuffle(buffer_size=1000).batch(BATCH_SIZE) \
               .prefetch(tf.data.experimental.AUTOTUNE)

    ds_test = tf.data.Dataset.list_files("./data/cifar2/test/*/*.jpg") \
               .map(load_image, num_parallel_calls=tf.data.experimental.AUTOTUNE) \
               .batch(BATCH_SIZE) \
               .prefetch(tf.data.experimental.AUTOTUNE)
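
The training pipeline shuffles through a buffer of 1000 elements rather than over the whole dataset. The following is a simplified pure-Python model of that idea, not TensorFlow's actual implementation; the function name `buffered_shuffle` and the small sizes are chosen only for illustration.

```python
import random

def buffered_shuffle(items, buffer_size, seed=0):
    """Simplified model of shuffling a stream through a fixed-size buffer."""
    rng = random.Random(seed)
    buffer = []
    for item in items:
        if len(buffer) < buffer_size:
            buffer.append(item)           # fill phase
            continue
        idx = rng.randrange(buffer_size)  # pick a random slot
        yield buffer[idx]                 # emit its content
        buffer[idx] = item                # refill the slot with the new item
    rng.shuffle(buffer)                   # drain whatever is left at the end
    yield from buffer

out = list(buffered_shuffle(range(20), buffer_size=5))
print(out)
```

In this model an element can move at most `buffer_size - 1` positions earlier than where it started, so a small buffer only mixes locally. In the pipeline of this section, `list_files` also shuffles the file names by default, so the two classes are already interleaved before they reach the buffer.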

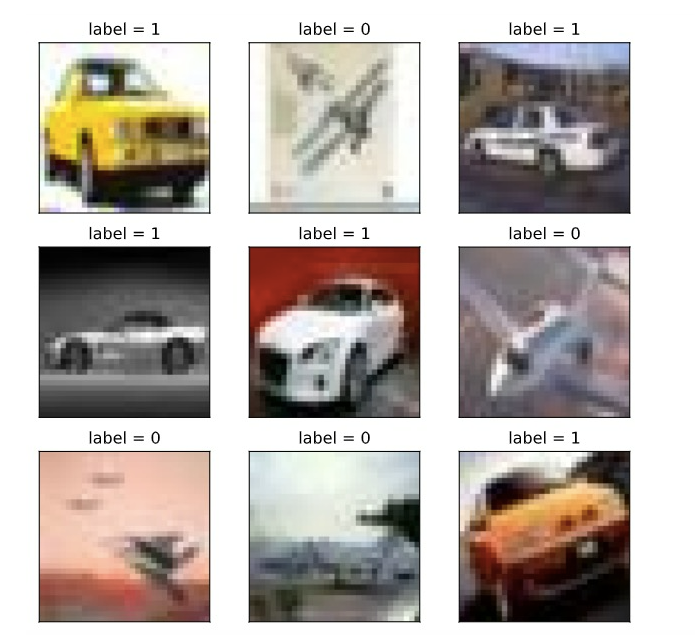

    %matplotlib inline
    %config InlineBackend.figure_format = 'svg'

    # Preview a few samples
    from matplotlib import pyplot as plt

    plt.figure(figsize=(8, 8))
    for i, (img, label) in enumerate(ds_train.unbatch().take(9)):
        ax = plt.subplot(3, 3, i + 1)
        ax.imshow(img.numpy())
        ax.set_title("label = %d" % label)
        ax.set_xticks([])
        ax.set_yticks([])
    plt.show()

    for x, y in ds_train.take(1):
        print(x.shape, y.shape)

(100, 32, 32, 3) (100,)
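
The leading 100 in both shapes is the batch size. As a small arithmetic check, assuming the image counts stated at the start of this section, the number of batches per pass over each dataset can be computed as follows (`num_batches` is a helper name introduced here):

```python
import math

BATCH_SIZE = 100
N_TRAIN = 2 * 5000  # airplane + automobile training images
N_TEST = 2 * 1000   # airplane + automobile test images

def num_batches(n_samples: int, batch_size: int) -> int:
    # batch() keeps a final partial batch by default, hence the ceiling
    return math.ceil(n_samples / batch_size)

print(num_batches(N_TRAIN, BATCH_SIZE), num_batches(N_TEST, BATCH_SIZE))
```

This gives 100 training batches and 20 test batches, which agrees with the step counts reported in the training log later in the article.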

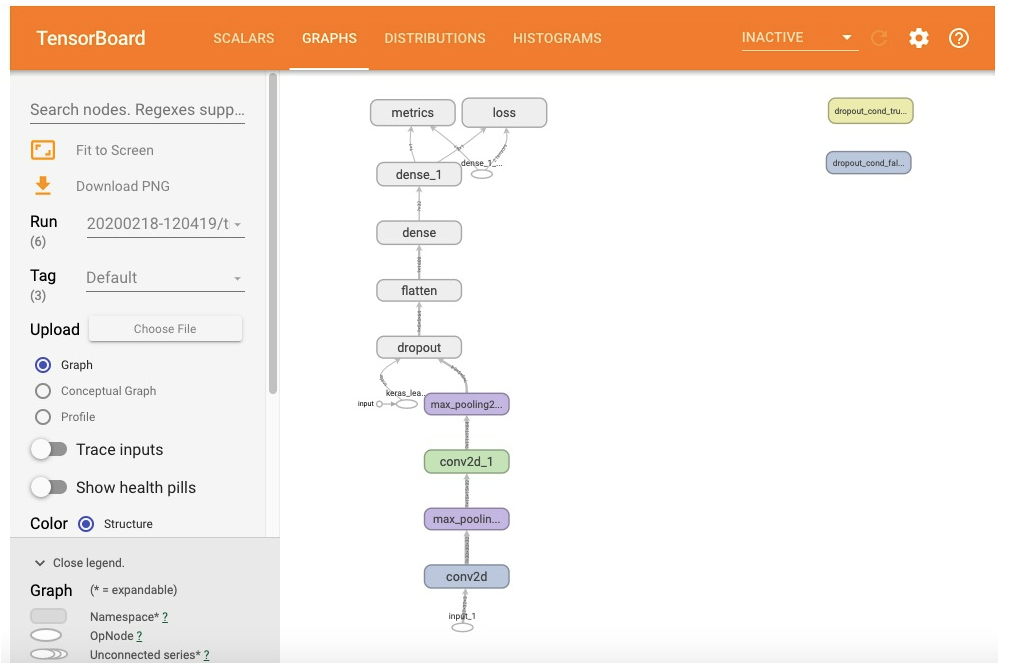

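II. Defining the Model

The Keras API offers three ways to build a model: stacking layers in order with Sequential, building models of arbitrary structure with the functional API, and subclassing the Model base class to build a custom model.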
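
For comparison, the first of these options might look like the sketch below. It is not taken from the original article: it simply restates the layer stack used in this section with `Sequential`, and `model_seq` is a name chosen for this example.

```python
import tensorflow as tf
from tensorflow.keras import layers, models

# Sketch only: the same layer stack as this section's model,
# expressed as an ordered list of layers.
model_seq = models.Sequential([
    layers.Input(shape=(32, 32, 3)),
    layers.Conv2D(32, kernel_size=(3, 3)),
    layers.MaxPool2D(),
    layers.Conv2D(64, kernel_size=(5, 5)),
    layers.MaxPool2D(),
    layers.Dropout(rate=0.1),
    layers.Flatten(),
    layers.Dense(32, activation='relu'),
    layers.Dense(1, activation='sigmoid'),
])
model_seq.summary()
```

Sequential is enough when the network is a single chain of layers like this one. The functional API becomes necessary once a model has branches, multiple inputs, or multiple outputs.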

Here we build the model with the functional API.

    tf.keras.backend.clear_session()  # clear the session

    inputs = layers.Input(shape=(32, 32, 3))
    x = layers.Conv2D(32, kernel_size=(3, 3))(inputs)
    x = layers.MaxPool2D()(x)
    x = layers.Conv2D(64, kernel_size=(5, 5))(x)
    x = layers.MaxPool2D()(x)
    x = layers.Dropout(rate=0.1)(x)
    x = layers.Flatten()(x)
    x = layers.Dense(32, activation='relu')(x)
    outputs = layers.Dense(1, activation='sigmoid')(x)

    model = models.Model(inputs=inputs, outputs=outputs)

    model.summary()

    Model: "model"
    _________________________________________________________________
    Layer (type)                 Output Shape              Param #
    =================================================================
    input_1 (InputLayer)         [(None, 32, 32, 3)]       0
    _________________________________________________________________
    conv2d (Conv2D)              (None, 30, 30, 32)        896
    _________________________________________________________________
    max_pooling2d (MaxPooling2D) (None, 15, 15, 32)        0
    _________________________________________________________________
    conv2d_1 (Conv2D)            (None, 11, 11, 64)        51264
    _________________________________________________________________
    max_pooling2d_1 (MaxPooling2 (None, 5, 5, 64)          0
    _________________________________________________________________
    dropout (Dropout)            (None, 5, 5, 64)          0
    _________________________________________________________________
    flatten (Flatten)            (None, 1600)              0
    _________________________________________________________________
    dense (Dense)                (None, 32)                51232
    _________________________________________________________________
    dense_1 (Dense)              (None, 1)                 33
    =================================================================
    Total params: 103,425
    Trainable params: 103,425
    Non-trainable params: 0
    _________________________________________________________________
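
The output shapes and parameter counts in this summary can be reproduced by hand. The sketch below does the arithmetic in plain Python, assuming the Keras defaults the model relies on (unpadded convolutions with stride 1, and 2x2 max pooling with stride 2); the helper names are introduced here for illustration.

```python
def conv2d(shape, filters, k):
    """Unpadded convolution, stride 1: each spatial dim shrinks by k - 1."""
    h, w, c = shape
    params = k * k * c * filters + filters  # kernel weights + biases
    return (h - k + 1, w - k + 1, filters), params

def max_pool(shape, pool=2):
    """2x2 pooling with stride 2; odd sizes are rounded down."""
    h, w, c = shape
    return (h // pool, w // pool, c)

def dense(n_in, units):
    return units, n_in * units + units      # weights + biases

total = 0
shape = (32, 32, 3)
shape, p = conv2d(shape, 32, 3)   # first Conv2D
total += p
shape = max_pool(shape)
shape, p = conv2d(shape, 64, 5)   # second Conv2D
total += p
shape = max_pool(shape)
flat = shape[0] * shape[1] * shape[2]
n, p = dense(flat, 32)
total += p
n, p = dense(n, 1)
total += p
print(shape, flat, total)
```

The final feature map is 5 x 5 x 64, which flattens to 1600 values, and the parameters add up to 896 + 51264 + 51232 + 33 = 103,425, matching the summary.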

III. Training the Model

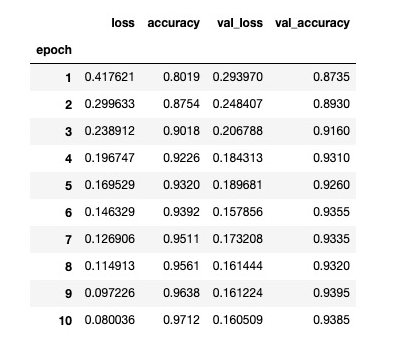

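There are generally three ways to train a model: the built-in fit method, the built-in train_on_batch method, and a custom training loop. Here we use the built-in fit method, the most common and simplest of the three.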

    import datetime
    import os

    stamp = datetime.datetime.now().strftime("%Y%m%d-%H%M%S")
    # Log under data/keras_model, the directory passed to TensorBoard via --logdir when evaluating
    logdir = os.path.join('data', 'keras_model', stamp)

    ## Under Python 3, pathlib is recommended for handling paths across operating systems
    # from pathlib import Path
    # stamp = datetime.datetime.now().strftime("%Y%m%d-%H%M%S")
    # logdir = str(Path('./data/keras_model/' + stamp))

    tensorboard_callback = tf.keras.callbacks.TensorBoard(logdir, histogram_freq=1)

    model.compile(
        optimizer=tf.keras.optimizers.Adam(learning_rate=0.001),
        loss=tf.keras.losses.binary_crossentropy,
        metrics=["accuracy"]
    )

    # ds_train is a tf.data.Dataset, so the `workers` argument (used only for
    # generator or keras.utils.Sequence input) is omitted
    history = model.fit(ds_train, epochs=10, validation_data=ds_test,
                        callbacks=[tensorboard_callback])

    Train for 100 steps, validate for 20 steps
    Epoch 1/10
    100/100 [==============================] - 16s 156ms/step - loss: 0.4830 - accuracy: 0.7697 - val_loss: 0.3396 - val_accuracy: 0.8475
    Epoch 2/10
    100/100 [==============================] - 14s 142ms/step - loss: 0.3437 - accuracy: 0.8469 - val_loss: 0.2997 - val_accuracy: 0.8680
    Epoch 3/10
    100/100 [==============================] - 13s 131ms/step - loss: 0.2871 - accuracy: 0.8777 - val_loss: 0.2390 - val_accuracy: 0.9015
    Epoch 4/10
    100/100 [==============================] - 12s 117ms/step - loss: 0.2410 - accuracy: 0.9040 - val_loss: 0.2005 - val_accuracy: 0.9195
    Epoch 5/10
    100/100 [==============================] - 13s 130ms/step - loss: 0.1992 - accuracy: 0.9213 - val_loss: 0.1949 - val_accuracy: 0.9180
    Epoch 6/10
    100/100 [==============================] - 14s 136ms/step - loss: 0.1737 - accuracy: 0.9323 - val_loss: 0.1723 - val_accuracy: 0.9275
    Epoch 7/10
    100/100 [==============================] - 14s 139ms/step - loss: 0.1531 - accuracy: 0.9412 - val_loss: 0.1670 - val_accuracy: 0.9310
    Epoch 8/10
    100/100 [==============================] - 13s 134ms/step - loss: 0.1299 - accuracy: 0.9525 - val_loss: 0.1553 - val_accuracy: 0.9340
    Epoch 9/10
    100/100 [==============================] - 14s 137ms/step - loss: 0.1158 - accuracy: 0.9556 - val_loss: 0.1581 - val_accuracy: 0.9340
    Epoch 10/10
    100/100 [==============================] - 14s 142ms/step - loss: 0.1006 - accuracy: 0.9617 - val_loss: 0.1614 - val_accuracy: 0.9345
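
The `loss` and `val_loss` columns report binary cross-entropy averaged over samples. As a rough illustration of what that number measures, here is a plain-Python version of the formula. The clipping constant `eps` and the sample values are assumptions made for this sketch, not values from the training run.

```python
import math

def binary_crossentropy(y_true, y_pred, eps=1e-7):
    """Mean of -[y*log(p) + (1-y)*log(1-p)]; clipping avoids log(0)."""
    total = 0.0
    for y, p in zip(y_true, y_pred):
        p = min(max(p, eps), 1.0 - eps)
        total += -(y * math.log(p) + (1 - y) * math.log(1.0 - p))
    return total / len(y_true)

# Made-up labels and predicted probabilities
print(binary_crossentropy([1, 0, 1], [0.9, 0.2, 0.6]))
print(binary_crossentropy([1], [0.5]))  # an uninformative guess costs ln 2
```

A model that always outputs 0.5 would score ln 2, roughly 0.693, so the first-epoch loss of 0.4830 is already better than guessing, and the later values show the predictions becoming more confident and more often correct.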

IV. Evaluating the Model

    %load_ext tensorboard
    #%tensorboard --logdir ./data/keras_model

    from tensorboard import notebook
    notebook.list()

    # View the model in TensorBoard
    notebook.start("--logdir ./data/keras_model")

    import pandas as pd

    dfhistory = pd.DataFrame(history.history)
    dfhistory.index = range(1, len(dfhistory) + 1)
    dfhistory.index.name = 'epoch'

    dfhistory

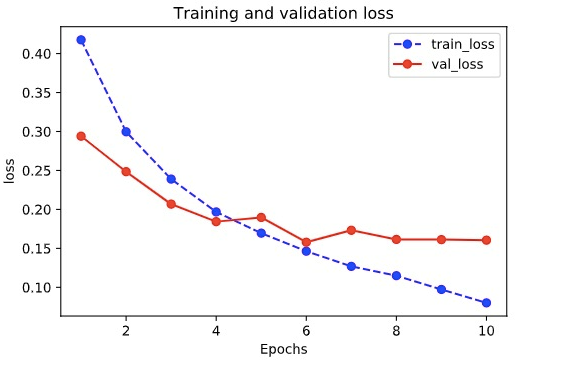

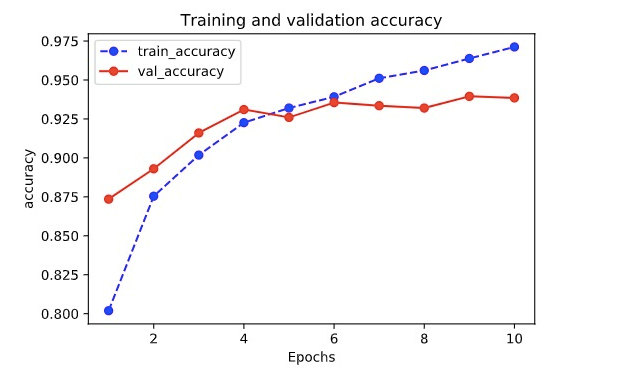

    %matplotlib inline
    %config InlineBackend.figure_format = 'svg'

    import matplotlib.pyplot as plt

    def plot_metric(history, metric):
        train_metrics = history.history[metric]
        val_metrics = history.history['val_' + metric]
        epochs = range(1, len(train_metrics) + 1)
        plt.plot(epochs, train_metrics, 'bo--')
        plt.plot(epochs, val_metrics, 'ro-')
        plt.title('Training and validation ' + metric)
        plt.xlabel("Epochs")
        plt.ylabel(metric)
        plt.legend(["train_" + metric, 'val_' + metric])
        plt.show()

    plot_metric(history, "loss")

    plot_metric(history, "accuracy")
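
The same curves can be read numerically. The sketch below copies the validation columns from the training log in this article and finds the best epoch for each metric; in a live session the same lists would be available as `history.history['val_loss']` and `history.history['val_accuracy']`.

```python
# Validation metrics copied from the training log, one entry per epoch
val_loss = [0.3396, 0.2997, 0.2390, 0.2005, 0.1949,
            0.1723, 0.1670, 0.1553, 0.1581, 0.1614]
val_accuracy = [0.8475, 0.8680, 0.9015, 0.9195, 0.9180,
                0.9275, 0.9310, 0.9340, 0.9340, 0.9345]

# Epochs are numbered from 1, list indices from 0
best_loss_epoch = min(range(len(val_loss)), key=val_loss.__getitem__) + 1
best_acc_epoch = max(range(len(val_accuracy)), key=val_accuracy.__getitem__) + 1
print(best_loss_epoch, best_acc_epoch)
```

Validation loss is lowest at epoch 8 and creeps up afterwards while training loss keeps falling, which may hint at the onset of overfitting. Validation accuracy is essentially flat over the last three epochs.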

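    # evaluate can be used to score the model on a dataset
    # (`workers` is omitted because it applies only to generator or Sequence input)
    val_loss, val_accuracy = model.evaluate(ds_test)
    print(val_loss, val_accuracy)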

0.16139143370091916 0.9345

V. Using the Model

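Use model.predict(ds_test) to run prediction over the whole test dataset.

Alternatively, use model.predict_on_batch(x_test) to predict on a single batch, where x_test stands for one batch of inputs.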

    model.predict(ds_test)

    array([[9.9996173e-01],
           [9.5104784e-01],
           [2.8648047e-04],
           ...,
           [1.1484033e-03],
           [3.5589080e-02],
           [9.8537153e-01]], dtype=float32)

    for x, y in ds_test.take(1):
        print(model.predict_on_batch(x[0:20]))

    tf.Tensor(
    [[3.8065155e-05]
     [8.8236779e-01]
     [9.1433197e-01]
     [9.9921846e-01]
     [6.4052093e-01]
     [4.9970779e-03]
     [2.6735585e-04]
     [9.9842811e-01]
     [7.9198682e-01]
     [7.4823302e-01]
     [8.7208226e-03]
     [9.3951421e-03]
     [9.9790359e-01]
     [9.9998581e-01]
     [2.1642199e-05]
     [1.7915063e-02]
     [2.5839690e-02]
     [9.7538447e-01]
     [9.7393811e-01]
     [9.7333014e-01]], shape=(20, 1), dtype=float32)
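
Each value is the sigmoid output of the model, which can be read as the predicted probability of class 1 (automobile). To turn these into class labels, one common choice is a 0.5 cutoff. The threshold below is an assumption of this sketch; the article itself does not fix one.

```python
# Probabilities copied from the predict_on_batch output in this section
probs = [3.8065155e-05, 8.8236779e-01, 9.1433197e-01, 9.9921846e-01,
         6.4052093e-01, 4.9970779e-03, 2.6735585e-04, 9.9842811e-01,
         7.9198682e-01, 7.4823302e-01, 8.7208226e-03, 9.3951421e-03,
         9.9790359e-01, 9.9998581e-01, 2.1642199e-05, 1.7915063e-02,
         2.5839690e-02, 9.7538447e-01, 9.7393811e-01, 9.7333014e-01]

THRESHOLD = 0.5  # assumed cutoff
CLASS_NAMES = {0: "airplane", 1: "automobile"}

labels = [1 if p > THRESHOLD else 0 for p in probs]
print(labels)
print(sum(labels), "of", len(labels), "predicted as", CLASS_NAMES[1])
```

With this cutoff, 12 of the 20 images are labeled automobile and 8 airplane. Most probabilities sit close to 0 or 1; the value 0.6405 is the least confident prediction in this batch.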

VI. Saving the Model

Saving the model in TensorFlow's native format is recommended.

    # Save weights only; this stores just the weight tensors
    model.save_weights('./data/tf_model_weights.ckpt', save_format="tf")

    # Save the model architecture and parameters to disk; a model saved this way
    # is portable across platforms and convenient to deploy
    model.save('./data/tf_model_savedmodel', save_format="tf")
    print('export saved model.')

    model_loaded = tf.keras.models.load_model('./data/tf_model_savedmodel')
    model_loaded.evaluate(ds_test)

[0.16139124035835267, 0.9345]
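
A quick way to confirm that the reloaded model behaves like the original is to compare the two `evaluate` results printed in this article. The tolerance used below is an arbitrary choice for this sketch.

```python
import math

# [loss, accuracy] before saving and after reloading, copied from the outputs above
before = (0.16139143370091916, 0.9345)
after = (0.16139124035835267, 0.9345)

loss_matches = math.isclose(before[0], after[0], rel_tol=1e-5)
accuracy_matches = before[1] == after[1]
correct = round(after[1] * 2000)  # the test set holds 2 x 1000 images

print(loss_matches, accuracy_matches, correct)
```

The losses agree to about six decimal places and the accuracies are identical, which corresponds to 1869 of the 2000 test images being classified correctly.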