1. Installing Hadoop

Extract the archive:

```bash
tar -zxvf /usr/local/moudle/hadoop-2.7.6.tar.gz -C /usr/local/soft/
```

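Add the environment variables to the shell profile (for example `/etc/profile`) and reload it with `source`. Keep `$PATH` in the new value, otherwise the existing system path is lost:

```bash
# hadoop
export HADOOP_HOME=/usr/local/soft/hadoop-2.7.6
export PATH=$PATH:$HADOOP_HOME/bin:$HADOOP_HOME/sbin
```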
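A minimal sketch for making the variables persistent. It assumes they go into a profile file such as `/etc/profile`, which the original does not specify, and the function name `add_hadoop_env` is hypothetical. The lines are appended only if the file has no `export HADOOP_HOME=` yet, so running the function twice does not duplicate them.

```bash
#!/usr/bin/env bash
# Hypothetical helper: append the Hadoop environment variables to a profile
# file (e.g. /etc/profile) unless HADOOP_HOME is already exported there.
add_hadoop_env() {
  local profile="$1"
  local hadoop_home="${2:-/usr/local/soft/hadoop-2.7.6}"
  # Idempotence check: do nothing if the export is already present
  if grep -q '^export HADOOP_HOME=' "$profile" 2>/dev/null; then
    return 0
  fi
  {
    echo '# hadoop'
    echo "export HADOOP_HOME=$hadoop_home"
    # Single quotes: $PATH and $HADOOP_HOME expand when the profile is sourced
    echo 'export PATH=$PATH:$HADOOP_HOME/bin:$HADOOP_HOME/sbin'
  } >> "$profile"
}
```

Usage: `add_hadoop_env /etc/profile && source /etc/profile`. After that, `hadoop version` should print the installed version.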

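Configuration files

Edit the following files in `/usr/local/soft/hadoop-2.7.6/etc/hadoop/`:

- slaves (the worker nodes)
- hadoop-env.sh
- core-site.xml
- hdfs-site.xml
- yarn-site.xml
- mapred-site.xml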

slaves

List the worker nodes, one hostname per line:

```
node1
node2
```
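The `slaves` file can also be written from a script. `start-all.sh` reads this file and starts a DataNode and a NodeManager on each listed host. The sketch below overwrites the file, which replaces the default `localhost` entry. It assumes the configuration directory shown above, and `write_slaves` is a hypothetical name.

```bash
#!/usr/bin/env bash
# Hypothetical helper: overwrite the slaves file with one worker hostname per line.
write_slaves() {
  local conf_dir="$1"
  shift
  # printf reuses its format for every remaining argument
  printf '%s\n' "$@" > "$conf_dir/slaves"
}
```

Usage: `write_slaves /usr/local/soft/hadoop-2.7.6/etc/hadoop node1 node2`.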

hadoop-env.sh

Set the path of the Java JDK:

```bash
export JAVA_HOME=/usr/local/soft/jdk1.8.0_171
```

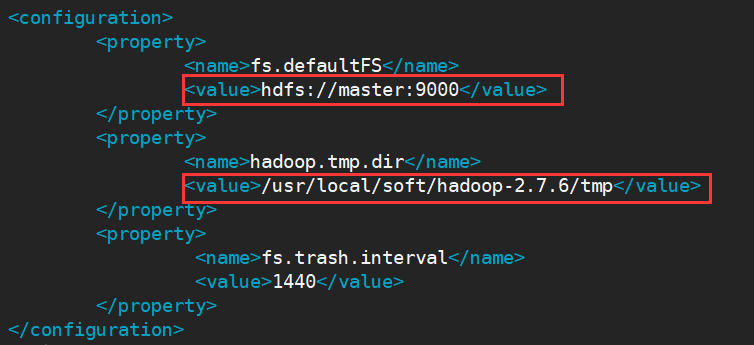
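The `JAVA_HOME` line can also be replaced with `sed` instead of an editor. An explicit path matters because the daemons are started over SSH, and they may not inherit the login shell's `JAVA_HOME`. The sketch assumes GNU sed (for `-i`) and an existing `export JAVA_HOME=` line in the file. The stock `hadoop-env.sh` contains `export JAVA_HOME=${JAVA_HOME}`. The name `set_java_home` is hypothetical.

```bash
#!/usr/bin/env bash
# Hypothetical helper: point hadoop-env.sh at a fixed JDK.
# Assumes GNU sed and an existing "export JAVA_HOME=" line in the file.
set_java_home() {
  local env_file="$1" jdk="$2"
  # "|" is the sed delimiter because the JDK path contains slashes
  sed -i "s|^export JAVA_HOME=.*|export JAVA_HOME=$jdk|" "$env_file"
}
```

Usage: `set_java_home /usr/local/soft/hadoop-2.7.6/etc/hadoop/hadoop-env.sh /usr/local/soft/jdk1.8.0_171`.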

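core-site.xml

```xml
<configuration>
    <!-- NameNode address: the default file system URI used by clients -->
    <property>
        <name>fs.defaultFS</name>
        <value>hdfs://master:9000</value>
    </property>
    <!-- Base directory for local data; by default the NameNode and DataNode storage lives under it -->
    <property>
        <name>hadoop.tmp.dir</name>
        <value>/usr/local/soft/hadoop-2.7.6/tmp</value>
    </property>
    <!-- Minutes a deleted file stays in the trash: 1440 = 24 hours -->
    <property>
        <name>fs.trash.interval</name>
        <value>1440</value>
    </property>
</configuration>
```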

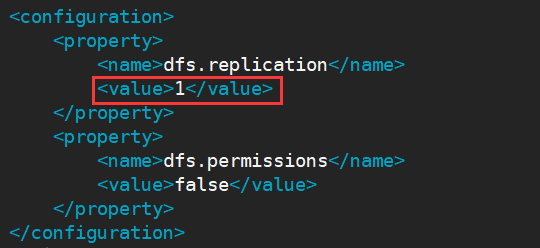
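All the `*-site.xml` files share the same `<configuration>` and `<property>` layout, so they can be generated from name=value pairs. This is a minimal sketch. `hadoop_conf` is a hypothetical helper, and it does no XML escaping, so values must not contain `<`, `>` or `&`.

```bash
#!/usr/bin/env bash
# Hypothetical helper: print a Hadoop *-site.xml from name=value arguments.
# No XML escaping is done, so values must not contain <, > or &.
hadoop_conf() {
  local pair
  echo '<?xml version="1.0"?>'
  echo '<configuration>'
  for pair in "$@"; do
    # Name is the text before the first "=", value is everything after it
    printf '  <property>\n    <name>%s</name>\n    <value>%s</value>\n  </property>\n' \
      "${pair%%=*}" "${pair#*=}"
  done
  echo '</configuration>'
}
```

Usage: `hadoop_conf fs.defaultFS=hdfs://master:9000 hadoop.tmp.dir=/usr/local/soft/hadoop-2.7.6/tmp fs.trash.interval=1440 > core-site.xml`. Once the configuration is in place, `hdfs getconf -confKey fs.defaultFS` shows the value Hadoop actually uses.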

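hdfs-site.xml

```xml
<configuration>
    <!-- Number of copies kept of each block -->
    <property>
        <name>dfs.replication</name>
        <value>1</value>
    </property>
    <!-- Turn off HDFS permission checks (older name of dfs.permissions.enabled) -->
    <property>
        <name>dfs.permissions</name>
        <value>false</value>
    </property>
</configuration>
```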

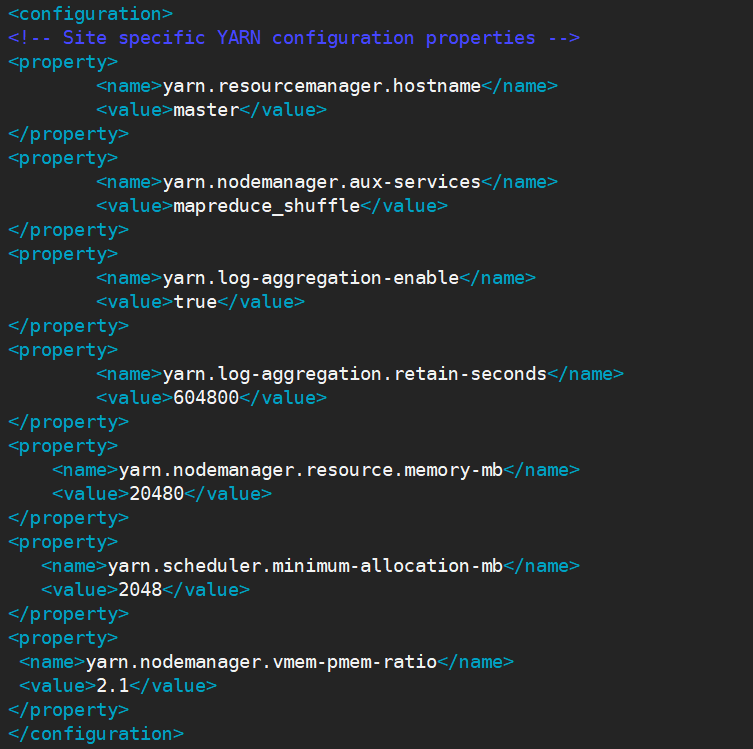

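yarn-site.xml

```xml
<configuration>
    <!-- Site specific YARN configuration properties -->
    <!-- Host that runs the ResourceManager -->
    <property>
        <name>yarn.resourcemanager.hostname</name>
        <value>master</value>
    </property>
    <!-- Auxiliary shuffle service that MapReduce jobs need on YARN -->
    <property>
        <name>yarn.nodemanager.aux-services</name>
        <value>mapreduce_shuffle</value>
    </property>
    <!-- Collect container logs into HDFS when an application finishes -->
    <property>
        <name>yarn.log-aggregation-enable</name>
        <value>true</value>
    </property>
    <!-- Keep aggregated logs for 604800 s = 7 days -->
    <property>
        <name>yarn.log-aggregation.retain-seconds</name>
        <value>604800</value>
    </property>
    <!-- Memory each NodeManager offers to containers: 20480 MB = 20 GB -->
    <property>
        <name>yarn.nodemanager.resource.memory-mb</name>
        <value>20480</value>
    </property>
    <!-- Smallest container, in MB, that the scheduler allocates -->
    <property>
        <name>yarn.scheduler.minimum-allocation-mb</name>
        <value>2048</value>
    </property>
    <!-- Virtual memory a container may use per unit of physical memory -->
    <property>
        <name>yarn.nodemanager.vmem-pmem-ratio</name>
        <value>2.1</value>
    </property>
</configuration>
```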
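The three memory settings work together:

- The NodeManager offers `yarn.nodemanager.resource.memory-mb` to containers.
- No container is smaller than `yarn.scheduler.minimum-allocation-mb`.
- The NodeManager kills a container whose virtual memory exceeds its physical allocation times `yarn.nodemanager.vmem-pmem-ratio`. Virtual memory checking is on by default.

The sketch below does that arithmetic. `yarn_capacity` is a hypothetical helper. The container count is an upper bound that assumes every container uses the minimum size.

```bash
#!/usr/bin/env bash
# Hypothetical helper: summarise what the yarn-site.xml memory settings allow
# on one NodeManager. Args: memory-mb, minimum-allocation-mb, vmem-pmem-ratio.
yarn_capacity() {
  awk -v mem="$1" -v min="$2" -v ratio="$3" 'BEGIN {
    # Upper bound: every container gets at least the minimum allocation
    printf "max_containers=%d\n", int(mem / min)
    # Virtual memory a minimum-size container may use before it is killed
    printf "vmem_limit_mb=%.1f\n", min * ratio
  }'
}
```

With the values above, `yarn_capacity 20480 2048 2.1` prints `max_containers=10` and `vmem_limit_mb=4300.8`.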

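mapred-site.xml

This file does not exist by default. Create it by copying `mapred-site.xml.template` in the same directory (`cp mapred-site.xml.template mapred-site.xml`):

```xml
<configuration>
    <!-- Run MapReduce jobs on YARN -->
    <property>
        <name>mapreduce.framework.name</name>
        <value>yarn</value>
    </property>
    <!-- JobHistory server RPC address -->
    <property>
        <name>mapreduce.jobhistory.address</name>
        <value>master:10020</value>
    </property>
    <!-- JobHistory server web UI address -->
    <property>
        <name>mapreduce.jobhistory.webapp.address</name>
        <value>master:19888</value>
    </property>
</configuration>
```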
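If the file is created only when it is missing, an already edited copy is never overwritten. This sketch assumes the Hadoop 2.x layout, where only `mapred-site.xml.template` ships in `etc/hadoop`. `make_mapred_site` is a hypothetical name.

```bash
#!/usr/bin/env bash
# Hypothetical helper: create mapred-site.xml from the shipped template,
# but never overwrite a file that already exists.
make_mapred_site() {
  local conf_dir="$1"
  if [ -e "$conf_dir/mapred-site.xml" ]; then
    echo "mapred-site.xml already exists, leaving it unchanged" >&2
    return 0
  fi
  cp "$conf_dir/mapred-site.xml.template" "$conf_dir/mapred-site.xml"
}
```

Usage: `make_mapred_site /usr/local/soft/hadoop-2.7.6/etc/hadoop`, then add the properties above.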

Copy to the worker nodes

Copy the configured Hadoop directory from master to each worker:

```bash
scp -r /usr/local/soft/hadoop-2.7.6 node1:/usr/local/soft/
scp -r /usr/local/soft/hadoop-2.7.6 node2:/usr/local/soft/
```
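With more workers, the `slaves` file can drive the copy instead of one `scp` command per node. The sketch makes these assumptions:

- master has passwordless SSH to each worker, which `start-all.sh` needs anyway.
- Every host uses the same `/usr/local/soft` layout.

`distribute_hadoop` and the `DRY_RUN` switch are hypothetical. With `DRY_RUN=1`, the function only prints the commands.

```bash
#!/usr/bin/env bash
# Hypothetical helper: copy the Hadoop directory to every host listed in slaves.
# Set DRY_RUN=1 to print the scp commands instead of running them.
run() {
  if [ "${DRY_RUN:-0}" = 1 ]; then echo "$*"; else "$@"; fi
}

distribute_hadoop() {
  local slaves_file="$1"
  local src="${2:-/usr/local/soft/hadoop-2.7.6}"
  local host
  # One hostname per line; blank lines and comments are skipped
  while IFS= read -r host || [ -n "$host" ]; do
    case "$host" in ''|'#'*) continue ;; esac
    # </dev/null stops scp from consuming the loop's input
    run scp -r "$src" "$host:$(dirname "$src")/" </dev/null
  done < "$slaves_file"
}
```

Usage: `distribute_hadoop /usr/local/soft/hadoop-2.7.6/etc/hadoop/slaves`.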

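Start

Check whether a `tmp` directory exists under the Hadoop directory. If it does not, format the NameNode once before the first start. On a fresh install it usually does not exist. Do not format again on an existing cluster, because formatting discards the HDFS metadata.

```bash
cd /usr/local/soft/hadoop-2.7.6
./bin/hdfs namenode -format
```

Then start HDFS and YARN. In Hadoop 2.x, `start-all.sh` is deprecated but still works; it calls `start-dfs.sh` and `start-yarn.sh`:

```bash
./sbin/start-all.sh
```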
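The check-then-format logic can be wrapped in a function so the NameNode is formatted only on a fresh install. The sketch assumes that `hadoop.tmp.dir` is `$HADOOP_HOME/tmp`, as in core-site.xml. It also starts the JobHistory server, which serves the `master:19888` address from mapred-site.xml; `start-all.sh` does not start it. `start_cluster` and `DRY_RUN` are hypothetical. With `DRY_RUN=1`, the function only prints the commands.

```bash
#!/usr/bin/env bash
# Hypothetical helper: format the NameNode only on a fresh install, then start
# HDFS, YARN and the JobHistory server. Set DRY_RUN=1 to only print commands.
run() {
  if [ "${DRY_RUN:-0}" = 1 ]; then echo "$*"; else "$@"; fi
}

start_cluster() {
  local home="${1:-/usr/local/soft/hadoop-2.7.6}"
  # hadoop.tmp.dir is created by the first format, so its absence means a
  # fresh install; re-formatting an existing cluster would discard its metadata
  if [ ! -d "$home/tmp" ]; then
    run "$home/bin/hdfs" namenode -format
  fi
  run "$home/sbin/start-all.sh"
  # start-all.sh does not start the JobHistory server (master:19888)
  run "$home/sbin/mr-jobhistory-daemon.sh" start historyserver
}
```

After startup, `jps` on master should list NameNode, SecondaryNameNode, ResourceManager and JobHistoryServer. On node1 and node2 it should list DataNode and NodeManager.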

2. Operations

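Basic operations

In Hadoop 2.x, `hadoop dfs` still works but prints a deprecation warning. `hdfs dfs` takes the same options.

- Create a directory

  **hadoop dfs -mkdir /root**

- Create nested directories

  **hadoop dfs -mkdir -p /root/a/b/c**

Delete

- Delete a file

  **hadoop dfs -rm FILE_PATH**

  The file is moved to the trash rather than removed:

  ```
  Moved: 'hdfs://master:9000/root/a/b/c' to trash at: hdfs://master:9000/user/root/.Trash/Current
  ```

  Deleted files are kept under hdfs://master:9000/user/root/.Trash/Current.

- Delete permanently, skipping the trash

  **hadoop dfs -rm -skipTrash FILE_PATH**

- Delete a directory

  **hadoop dfs -rm -r DIR_PATH**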
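Files in the trash keep their full original path under `.Trash/Current`. A file deleted by mistake can therefore be restored with `-mv`, as long as it is still under `Current`. The trash emptier periodically rolls `Current` into timestamped checkpoints and removes them after `fs.trash.interval` (1440 minutes in core-site.xml). The sketch builds the trash path for a deleted file. It assumes the default HDFS home directory `/user/<user>`, and `trash_path` is a hypothetical name.

```bash
#!/usr/bin/env bash
# Hypothetical helper: print where "-rm" (without -skipTrash) moves a path,
# assuming the default HDFS home directory /user/<user>.
trash_path() {
  local user="$1" path="$2"
  # The absolute original path is kept below .Trash/Current
  echo "/user/$user/.Trash/Current/${path#/}"
}
```

For example, `hdfs dfs -mv "$(trash_path root /root/a/b/c)" /root/a/b/c` restores the directory from the message above.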

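Move and copy

- Move a file

  **hadoop dfs -mv SRC_PATH DEST_PATH**

- Upload a local file

  **hadoop dfs -put LOCAL_PATH HDFS_PATH**

- Download a file

  **hadoop dfs -get HDFS_PATH LOCAL_PATH**

- Copy a file

  **hadoop dfs -cp SRC_PATH DEST_PATH**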
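The basic commands can be combined into a round trip: create a directory, upload a local file, list and print it, download it again, and remove the directory. This sketch uses `hdfs dfs`, which has the same options as `hadoop dfs`. The default directory `/root/demo` and the function `hdfs_round_trip` are hypothetical examples. With `DRY_RUN=1`, the function prints the commands instead of running them.

```bash
#!/usr/bin/env bash
# Hypothetical walkthrough of the basic HDFS shell commands.
# Set DRY_RUN=1 to print the commands instead of running them.
run() {
  if [ "${DRY_RUN:-0}" = 1 ]; then echo "$*"; else "$@"; fi
}

hdfs_round_trip() {
  local local_file="$1" hdfs_dir="${2:-/root/demo}"
  local name
  name="$(basename "$local_file")"
  run hdfs dfs -mkdir -p "$hdfs_dir"                      # nested directories
  run hdfs dfs -put "$local_file" "$hdfs_dir/"            # upload
  run hdfs dfs -ls "$hdfs_dir"                            # list
  run hdfs dfs -cat "$hdfs_dir/$name"                     # print contents
  run hdfs dfs -get "$hdfs_dir/$name" "$local_file.copy"  # download
  run hdfs dfs -rm -r "$hdfs_dir"                         # delete (to trash)
}
```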

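View files

- Print a file, print its last kilobyte, or show disk usage

  **hadoop dfs -cat FILE_PATH** / **hadoop dfs -tail FILE_PATH** / **hadoop dfs -du HDFS_PATH**

Safe mode

- Enter safe mode

  **hadoop dfsadmin -safemode enter**

- Leave safe mode

  **hadoop dfsadmin -safemode leave**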
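HDFS is read-only in safe mode, and the NameNode also enters it automatically after startup until enough blocks have been reported. Scripts that write right after startup can therefore check the state first. `hdfs dfsadmin -safemode get` prints a line such as `Safe mode is ON` or `Safe mode is OFF`, and `hdfs dfsadmin -safemode wait` blocks until safe mode is off. The sketch parses the `get` output. The function name `safemode_state` is hypothetical.

```bash
#!/usr/bin/env bash
# Hypothetical helper: read "hdfs dfsadmin -safemode get" output on stdin
# and print on, off or unknown.
safemode_state() {
  local out
  out="$(cat)"
  case "$out" in
    *"Safe mode is ON"*)  echo on ;;
    *"Safe mode is OFF"*) echo off ;;
    *)                    echo unknown ;;
  esac
}
```

Usage: `hdfs dfsadmin -safemode get | safemode_state`.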