1. Environment preparation

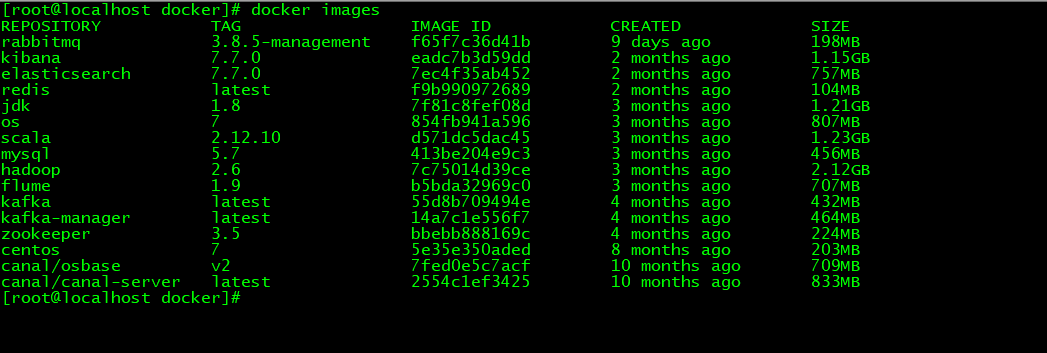

1.1 A working Docker installation

Check that Docker is installed:

docker --version

1.2 Host directory

Create a top-level docker directory. It will hold the Docker-related data directories later on; the name is arbitrary.

mkdir docker

1.3 Pull the zookeeper 3.5 image

docker pull zookeeper:3.5

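1.4 Pull the latest kafka image

The latest tag is in fact Kafka 2.6 here. Note that the image has to be renamed to kafka, because the compose file in section 5 refers to it as image: kafka.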

docker pull wurstmeister/kafka

1.5 Pull the manager image

Note that this image has to be renamed as well, to kafka-manager.

docker pull kafkamanager/kafka-manager:latest
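The renaming mentioned in 1.4 and 1.5 can be done with docker tag, which adds a second name to an image that is already pulled. A minimal sketch, assuming both pulls above succeeded; the function name retag_images is only an illustration, and the commands are wrapped in a function so they run when you call it rather than when you paste it:

```shell
# Give the pulled images the short names used by the compose file.
# docker tag adds an extra name; the original names stay available.
retag_images() {
  docker tag wurstmeister/kafka kafka
  docker tag kafkamanager/kafka-manager:latest kafka-manager
}
```

Run retag_images once, then docker images should list both kafka and kafka-manager.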

2. Create the Kafka files and folders

2.1 Log mount directories

mkdir -p /docker/kafka/kafka1/logs

mkdir -p /docker/kafka/kafka2/logs

mkdir -p /docker/kafka/kafka3/logs

3. Create the ZooKeeper configuration files and directories

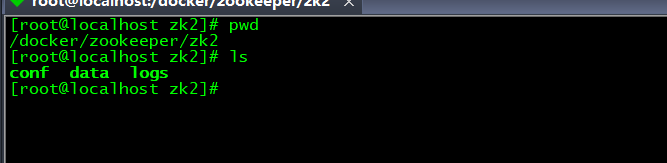

3.1 Data and log mount directories

mkdir -p /docker/zookeeper/zk2/data

mkdir -p /docker/zookeeper/zk2/logs

mkdir -p /docker/zookeeper/zk3/data

mkdir -p /docker/zookeeper/zk3/logs

mkdir -p /docker/zookeeper/zk4/data

mkdir -p /docker/zookeeper/zk4/logs

3.2 Create the zk configuration files

mkdir -p /docker/zookeeper/zk2/conf

Create zoo.cfg in this directory with the following contents:

tickTime=2000
initLimit=5
syncLimit=2
dataDir=/data
dataLogDir=/datalog
clientPort=2181
maxClientCnxns=60
server.1=172.19.0.2:2888:3888
server.2=172.19.0.3:2888:3888
server.3=172.19.0.4:2888:3888

mkdir -p /docker/zookeeper/zk3/conf

Create zoo.cfg in this directory with the following contents:

tickTime=2000
initLimit=5
syncLimit=2
dataDir=/data
dataLogDir=/datalog
clientPort=2181
maxClientCnxns=60
server.1=172.19.0.2:2888:3888
server.2=172.19.0.3:2888:3888
server.3=172.19.0.4:2888:3888

mkdir -p /docker/zookeeper/zk4/conf

Create zoo.cfg in this directory with the following contents:

tickTime=2000
initLimit=5
syncLimit=2
dataDir=/data
dataLogDir=/datalog
clientPort=2181
maxClientCnxns=60
server.1=172.19.0.2:2888:3888
server.2=172.19.0.3:2888:3888
server.3=172.19.0.4:2888:3888
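Since the three configuration files are identical, they can also be written in one loop instead of by hand. A minimal sketch: write_zoo_cfg is a hypothetical helper name, and its argument is the base directory, assumed here to be /docker/zookeeper as in the mkdir commands above. The server addresses are the static IPs that the compose file assigns to zoo2, zoo3 and zoo4.

```shell
# Write the same zoo.cfg into the conf directory of every ZooKeeper node.
# Argument 1: base directory (defaults to /docker/zookeeper).
write_zoo_cfg() {
  base="${1:-/docker/zookeeper}"
  for node in zk2 zk3 zk4; do
    mkdir -p "$base/$node/conf"
    cat > "$base/$node/conf/zoo.cfg" <<'EOF'
tickTime=2000
initLimit=5
syncLimit=2
dataDir=/data
dataLogDir=/datalog
clientPort=2181
maxClientCnxns=60
server.1=172.19.0.2:2888:3888
server.2=172.19.0.3:2888:3888
server.3=172.19.0.4:2888:3888
EOF
  done
}
```

Calling write_zoo_cfg /docker/zookeeper creates the three conf directories if needed and overwrites any existing zoo.cfg in them.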

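4. Folder permissions

Grant permissions on the directories (the -R option goes before the mode so that the command also works with BSD/macOS chmod):

cd /docker

chmod -R 777 kafka

chmod -R 777 zookeeper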

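5. Orchestrate the Kafka and ZooKeeper containers

A Docker cluster deployment is usually described in a compose file. Compared with starting every container by hand with docker run, this is less error-prone and more efficient. In the file below, replace the host paths under /Users/hezhaoming/Documents/docker and the advertised address 192.168.0.112 with the values for your own machine.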

vi docker-compose.yml

version: '3'

services:

  zoo2:
    image: zookeeper:3.5
    restart: always
    hostname: zoo2
    container_name: zoo2
    privileged: true
    ports:
      - "2184:2181"
    volumes:
      - /Users/hezhaoming/Documents/docker/zookeeper/zk2/conf/zoo.cfg:/conf/zoo.cfg
      - /Users/hezhaoming/Documents/docker/zookeeper/zk2/data:/data
      - /Users/hezhaoming/Documents/docker/zookeeper/zk2/logs:/datalog
    environment:
      ZOO_MY_ID: 1
      ZOO_SERVERS: server.1=0.0.0.0:2888:3888 server.2=zoo3:2888:3888 server.3=zoo4:2888:3888
    networks:
      zk-net:
        ipv4_address: 172.19.0.2

  zoo3:
    image: zookeeper:3.5
    restart: always
    hostname: zoo3
    container_name: zoo3
    privileged: true
    ports:
      - "2185:2181"
    volumes:
      - /Users/hezhaoming/Documents/docker/zookeeper/zk3/conf/zoo.cfg:/conf/zoo.cfg
      - /Users/hezhaoming/Documents/docker/zookeeper/zk3/data:/data
      - /Users/hezhaoming/Documents/docker/zookeeper/zk3/logs:/datalog
    environment:
      ZOO_MY_ID: 2
      ZOO_SERVERS: server.1=zoo2:2888:3888 server.2=0.0.0.0:2888:3888 server.3=zoo4:2888:3888
    networks:
      zk-net:
        ipv4_address: 172.19.0.3

  zoo4:
    image: zookeeper:3.5
    restart: always
    hostname: zoo4
    container_name: zoo4
    privileged: true
    ports:
      - "2186:2181"
    volumes:
      - /Users/hezhaoming/Documents/docker/zookeeper/zk4/conf/zoo.cfg:/conf/zoo.cfg
      - /Users/hezhaoming/Documents/docker/zookeeper/zk4/data:/data
      - /Users/hezhaoming/Documents/docker/zookeeper/zk4/logs:/datalog
    environment:
      ZOO_MY_ID: 3
      ZOO_SERVERS: server.1=zoo2:2888:3888 server.2=zoo3:2888:3888 server.3=0.0.0.0:2888:3888
    networks:
      zk-net:
        ipv4_address: 172.19.0.4

  kafka1:
    image: kafka
    restart: always
    hostname: kafka1
    container_name: kafka1
    privileged: true
    ports:
      - "9092:9092"
    environment:
      KAFKA_ADVERTISED_LISTENERS: PLAINTEXT://192.168.0.112:9092 # address advertised to external clients
      KAFKA_ZOOKEEPER_CONNECT: zoo2:2181,zoo3:2181,zoo4:2181
      KAFKA_LISTENERS: PLAINTEXT://0.0.0.0:9092
      KAFKA_BROKER_ID: 1
      KAFKA_MESSAGE_MAX_BYTES: 1000000
      # retention (deletion) policy
      KAFKA_LOG_RETENTION_HOURS: 1
      # maps to the broker property log.retention.check.interval.ms
      KAFKA_LOG_RETENTION_CHECK_INTERVAL_MS: 60000
      KAFKA_LOG_CLEANER_ENABLE: "false"
      # defaults for new topics: 3 partitions, replication factor 1
      KAFKA_NUM_PARTITIONS: 3
      KAFKA_DEFAULT_REPLICATION_FACTOR: 1
    volumes:
      - /Users/hezhaoming/Documents/docker/kafka/kafka1/logs:/kafka
    external_links:
      - zoo2
      - zoo3
      - zoo4
    networks:
      zk-net:
        ipv4_address: 172.19.0.14

  kafka2:
    image: kafka
    restart: always
    hostname: kafka2
    container_name: kafka2
    privileged: true
    ports:
      - "9093:9092"
    environment:
      KAFKA_ADVERTISED_LISTENERS: PLAINTEXT://192.168.0.112:9093 # address advertised to external clients
      KAFKA_ZOOKEEPER_CONNECT: zoo2:2181,zoo3:2181,zoo4:2181
      KAFKA_LISTENERS: PLAINTEXT://0.0.0.0:9092
      KAFKA_BROKER_ID: 2
      KAFKA_MESSAGE_MAX_BYTES: 1000000
      # retention (deletion) policy
      KAFKA_LOG_RETENTION_HOURS: 1
      # maps to the broker property log.retention.check.interval.ms
      KAFKA_LOG_RETENTION_CHECK_INTERVAL_MS: 60000
      KAFKA_LOG_CLEANER_ENABLE: "false"
      # defaults for new topics: 3 partitions, replication factor 1
      KAFKA_NUM_PARTITIONS: 3
      KAFKA_DEFAULT_REPLICATION_FACTOR: 1
    volumes:
      - /Users/hezhaoming/Documents/docker/kafka/kafka2/logs:/kafka
    external_links:
      - zoo2
      - zoo3
      - zoo4
    networks:
      zk-net:
        ipv4_address: 172.19.0.15

  kafka3:
    image: kafka
    restart: always
    hostname: kafka3
    container_name: kafka3
    privileged: true
    ports:
      - "9094:9092"
    environment:
      KAFKA_ADVERTISED_LISTENERS: PLAINTEXT://192.168.0.112:9094 # address advertised to external clients
      KAFKA_ZOOKEEPER_CONNECT: zoo2:2181,zoo3:2181,zoo4:2181
      KAFKA_LISTENERS: PLAINTEXT://0.0.0.0:9092
      KAFKA_BROKER_ID: 3
      KAFKA_MESSAGE_MAX_BYTES: 1000000
      # retention (deletion) policy
      KAFKA_LOG_RETENTION_HOURS: 1
      # maps to the broker property log.retention.check.interval.ms
      KAFKA_LOG_RETENTION_CHECK_INTERVAL_MS: 60000
      KAFKA_LOG_CLEANER_ENABLE: "false"
      # defaults for new topics: 3 partitions, replication factor 1
      KAFKA_NUM_PARTITIONS: 3
      KAFKA_DEFAULT_REPLICATION_FACTOR: 1
    volumes:
      - /Users/hezhaoming/Documents/docker/kafka/kafka3/logs:/kafka
    external_links:
      - zoo2
      - zoo3
      - zoo4
    networks:
      zk-net:
        ipv4_address: 172.19.0.16

  kafka-manager:
    image: kafka-manager
    restart: always
    container_name: kafka-manager
    hostname: kafka-manager
    privileged: true
    ports:
      - "9000:9000"
    links: # connect to containers created by this compose file
      - kafka1
      - kafka2
      - kafka3
    external_links: # connect to containers outside this compose file
      - zoo2
      - zoo3
      - zoo4
    environment:
      ZK_HOSTS: zoo2:2181,zoo3:2181,zoo4:2181
      KAFKA_BROKERS: 192.168.0.112:9092,192.168.0.112:9093,192.168.0.112:9094
      APPLICATION_SECRET: letmein
      KM_ARGS: -Djava.net.preferIPv4Stack=true
    networks:
      zk-net:
        ipv4_address: 172.19.0.17

networks:
  zk-net:
    driver: bridge
    ipam:
      driver: default
      config:
        - subnet: 172.19.0.0/24
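Because indentation mistakes are easy to make in a file of this size, it can help to validate it before starting anything. A minimal sketch, assuming docker-compose is on the PATH; check_compose is a hypothetical helper name. It relies on docker-compose config -q, which parses and validates the file without printing it or creating containers.

```shell
# Validate a compose file without starting any container.
# Argument 1: path to the compose file (defaults to docker-compose.yml).
check_compose() {
  file="${1:-docker-compose.yml}"
  if docker-compose -f "$file" config -q; then
    echo "compose file OK: $file"
  else
    echo "compose file has errors: $file" >&2
    return 1
  fi
}
```

Run check_compose in the directory that contains docker-compose.yml; a non-zero exit status means the file should be fixed before moving on.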

6. Start the containers

docker-compose up -d
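Once the containers are up, a quick check confirms that the ZooKeeper ensemble and the brokers actually work together. The following is a sketch under several assumptions: the container names are those from the compose file, zkServer.sh and kafka-topics.sh are assumed to be on the PATH inside the respective images, the topic name smoke-test is made up for illustration, and the --zookeeper option is used because it is available in Kafka 2.x (it was removed in Kafka 3.0).

```shell
# Basic health check after `docker-compose up -d`.
check_cluster() {
  # All seven containers should be listed as Up.
  docker-compose ps
  # Each ZooKeeper node should report Mode: leader or Mode: follower.
  for zk in zoo2 zoo3 zoo4; do
    docker exec "$zk" zkServer.sh status
  done
  # Create a test topic replicated across the three brokers, then inspect it.
  docker exec kafka1 kafka-topics.sh --zookeeper zoo2:2181 --create \
    --topic smoke-test --partitions 3 --replication-factor 3
  docker exec kafka1 kafka-topics.sh --zookeeper zoo2:2181 --describe \
    --topic smoke-test
}
```

If the describe output lists three partitions with replicas on brokers 1, 2 and 3, the cluster is formed. The kafka-manager UI should then be reachable on port 9000 of the host.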