| - Topic: CNN for Time-Series Forecasting - Paper title: An Empirical Evaluation of Generic Convolutional and Recurrent Networks for Sequence Modeling - Paper link: https://arxiv.org/pdf/1803.01271.pdf - Presenter: 唐共勇 |

|---|

1. Summary

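[Required] Checking the grammar with Grammarly is recommended; follow the writing style of the paper's introduction as closely as possible. The summary should cover:

- What problem does this paper solve?

- What method did the authors use to solve it (no need for fine detail)?

- Which concepts do you need to study further to deepen your understanding of the paper?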

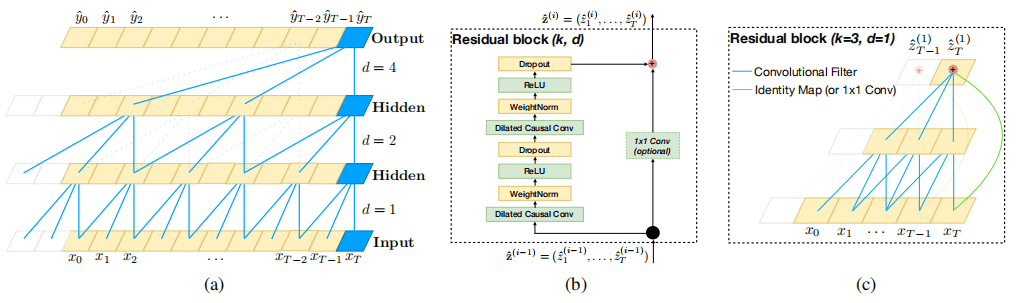

Time-series problems are usually modeled with a recurrent neural network (RNN), because the recurrent, autoregressive structure of an RNN is a natural fit for sequential data. This paper instead models sequences with a convolutional neural network (CNN) and describes a generic architecture, the temporal convolutional network (TCN). The term TCN was not introduced by this paper; the paper uses it as a name for a family of architectures that process sequence data with convolutions. The key component of a TCN is causal convolution: the output at time t may use only the inputs at time t and earlier, so the network captures historical information without leaking the future. In addition, residual connections make it possible to train a very deep network, and dilated convolution lets the network see a sufficiently long history. Causal convolution, dilated convolution, and residual connections are therefore the key concepts for understanding TCN.

2. Your thoughts on the paper

Write down your own thoughts on the paper, for example its strengths and weaknesses and your takeaways.

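- Advantages:

- Speed. A major advantage of convolutional networks over recurrent networks is that the convolution can be computed for all time steps in parallel, which greatly reduces running time.

- Flexible receptive field. The receptive field of a TCN depends on the number of layers, the kernel size, and the dilation factors, so the length of history the network sees can be adjusted by tuning these parameters.

- More stable gradients. Backpropagation runs through the depth of the network rather than along the time axis, so a TCN avoids the vanishing and exploding gradient problems that affect RNNs.

- Disadvantages: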

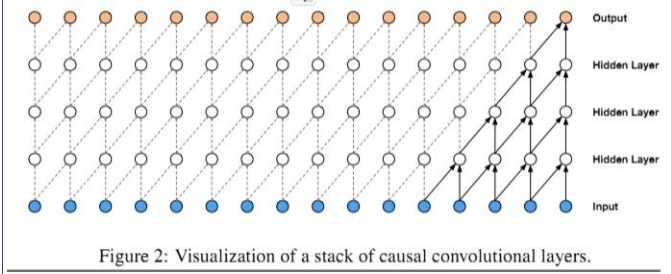

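- Causal convolution

As the figure above shows, with causal convolution the value at time t in a layer depends only on the values at time t and earlier in the layer below. Note that every hidden layer has the same length as the input layer; zero padding is used to ensure that subsequent layers keep this length.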
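The causal convolution described above can be sketched in a few lines of plain Python. This is a minimal illustration, not the paper's implementation: the function name `causal_conv1d` and the convention that the last filter tap multiplies the current input are assumptions made for this example.

```python
def causal_conv1d(x, weights):
    """Causal 1-D convolution: output[t] depends only on x[t], x[t-1], ...

    x       -- list of floats (the input sequence)
    weights -- filter taps; weights[-1] multiplies x[t] and weights[0]
               multiplies the oldest input in the window (assumed convention)
    """
    k = len(weights)
    # Left-pad with k-1 zeros so the output has the same length as the input
    # and no output position can look at future inputs.
    padded = [0.0] * (k - 1) + list(x)
    return [
        sum(w * padded[t + i] for i, w in enumerate(weights))
        for t in range(len(x))
    ]


x = [1.0, 2.0, 3.0, 4.0]
y = causal_conv1d(x, [0.5, 0.5])  # average of the previous and current value
print(y)  # [0.5, 1.5, 2.5, 3.5]
```

Changing `x[3]` changes only `y[3]`; the earlier outputs stay the same, which is exactly the causality constraint.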

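- Dilated convolution

Dilated convolution is also known as atrous convolution. When plain causal convolution is used to capture historical dependencies, the longer the history required, the more hidden layers are needed. Dilated convolution was proposed to address this problem.

Dilated convolution lets the network see a longer history by skipping some of the inputs, which is equivalent to building a larger filter from the original one by inserting zeros. This reduces, to some extent, the need to stack a large number of hidden layers.

- Residual network

Residual connections allow a deep network to still perform well.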
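To make the last two ideas concrete, the sketch below combines a dilated causal convolution, a receptive-field calculation, and a simplified residual block. It rests on several assumptions: all names (`dilated_causal_conv1d`, `receptive_field`, `residual_block`) are hypothetical, each layer is a single convolution, and the block computes `relu(x + relu(conv(x)))`. The residual block in the paper is richer (two convolutions per block, weight normalization, dropout), so this is an illustration rather than a reproduction.

```python
def dilated_causal_conv1d(x, weights, dilation=1):
    """Causal convolution whose taps are `dilation` steps apart."""
    k = len(weights)
    pad = (k - 1) * dilation  # left padding keeps the output length equal to len(x)
    padded = [0.0] * pad + list(x)
    return [
        sum(w * padded[t + i * dilation] for i, w in enumerate(weights))
        for t in range(len(x))
    ]


def receptive_field(kernel_size, dilations):
    """History length seen by a stack with one convolution per layer."""
    return 1 + sum((kernel_size - 1) * d for d in dilations)


def relu(values):
    return [max(0.0, v) for v in values]


def residual_block(x, weights, dilation):
    """Simplified residual block: relu(x + F(x)) with F = relu(dilated conv)."""
    fx = relu(dilated_causal_conv1d(x, weights, dilation))
    return relu([a + b for a, b in zip(x, fx)])


# Four layers with kernel size 3: dilations 1, 2, 4, 8 versus no dilation.
print(receptive_field(3, [1, 2, 4, 8]))  # 31
print(receptive_field(3, [1, 1, 1, 1]))  # 9

# An impulse at t=0 pushed through three dilated layers (kernel size 2)
# stays visible for exactly receptive_field(2, [1, 2, 4]) == 8 steps.
h = [1.0] + [0.0] * 11
for d in [1, 2, 4]:
    h = dilated_causal_conv1d(h, [1.0, 1.0], dilation=d)
print(h)
```

With the same depth and kernel size, doubling the dilation at each layer widens the visible history from 9 to 31 steps, which is why fewer stacked layers are needed.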