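- Preface
- Environment preparation
- 1. System initialization
  - 1. Change the hostname
  - 2. Disable swap
  - 3. Disable SELinux
  - 4. Disable the firewall
  - 5. Raise the open-file and related limits
  - 6. yum update, then install whatever tools you're used to
  - 7. Load IPVS modules (CentOS 8's default kernel is 4.18; this list is for kernels below 4.19)
  - 8. Tune kernel parameters (not necessarily optimal; take what suits you)
  - 9. Install containerd
  - 10. Configure the CRI client crictl
  - 11. Install kubeadm (there is no CentOS 8 repo, so use Aliyun's CentOS 7 repo)
  - 12. Modify the kubelet configuration
  - 13. journald and related settings: avoid collecting logs twice, raise systemd's default limits, disable SSH reverse DNS, cap logs at 200 MB
- 2. Master node operations
- 3. Install Helm, deploy Cilium and Hubble (Helm 3 by default)
- 4. Worker node deployment
- 5. Verify on a master node
- 6. Other
- Summary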

Preface

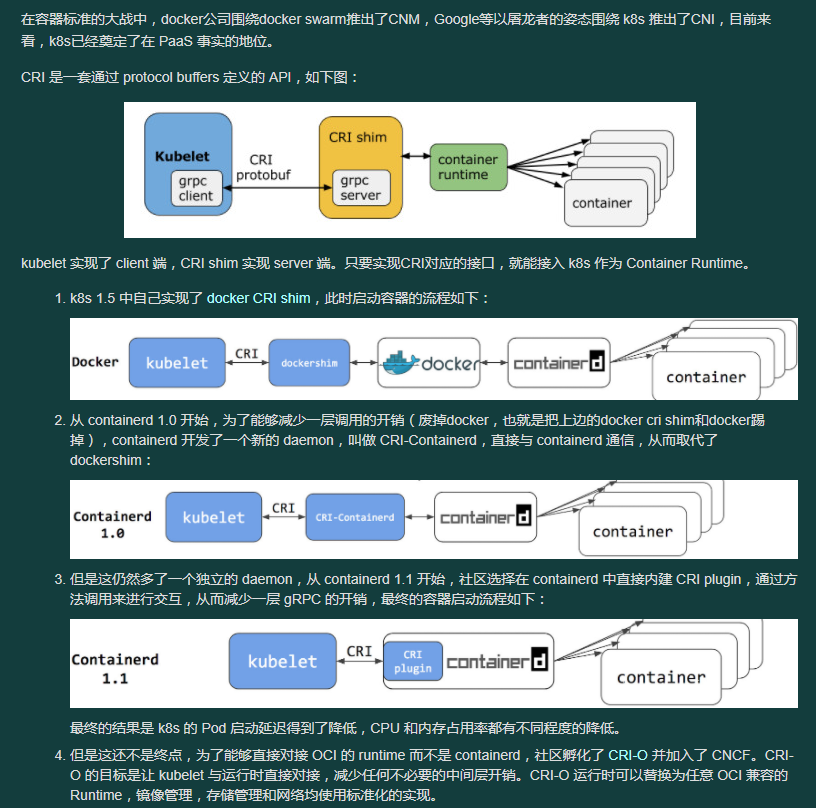

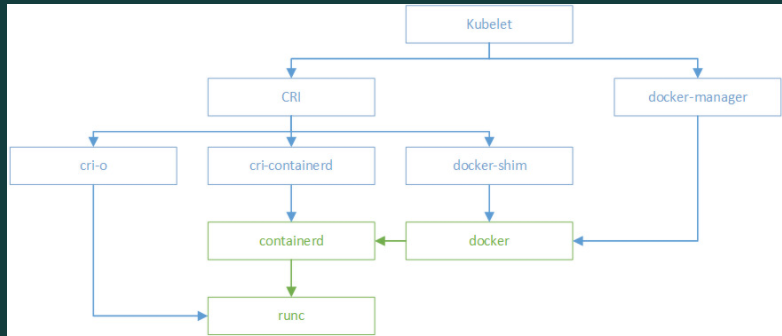

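I'm a committed Tencent Cloud user and started out on TKE 1.10. For various reasons we moved to a self-built cluster: production runs Kubernetes 1.16 built with kubeadm (patch-upgraded to 1.16.15), a highly available setup of kubeadm + HAProxy + SLB + flannel with IPVS enabled. External services go through an SLB that maps TCP ports 80 and 443 to Traefik. That is a historical leftover: Tencent Cloud SLB used to not support attaching multiple certificates, which also meant we couldn't use the SLB's log delivery feature; SLB supports multiple certificates now, so HTTP/HTTPS listeners could be used directly. When production was set up we didn't use Tencent Cloud's CBS block storage for volumes, only local disks plus NFS shared storage. A few days ago we load-tested a project and IO on the NFS-backed workloads shot straight up, so NFS is not recommended for production. The plan now is to install Kubernetes 1.20 with Cilium as the network plugin, letting Cilium replace kube-proxy (with Hubble for observability) to try out eBPF, and to go straight to containerd: the dockershim path really does waste resources, and dropping it cuts overhead and speeds up deployment. In short, this is a chance to try all the latest features: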

Images taken from: https://blog.kelu.org/tech/2020/10/09/the-diff-between-docker-containerd-runc-docker-shim.html

Environment preparation:

| Hostname | IP | OS | Kernel |
|---|---|---|---|
| sh-master-01 | 10.3.2.5 | centos8 | 4.18.0-240.15.1.el8_3.x86_64 |
| sh-master-02 | 10.3.2.13 | centos8 | 4.18.0-240.15.1.el8_3.x86_64 |
| sh-master-03 | 10.3.2.16 | centos8 | 4.18.0-240.15.1.el8_3.x86_64 |
| sh-work-01 | 10.3.2.2 | centos8 | 4.18.0-240.15.1.el8_3.x86_64 |
| sh-work-02 | 10.3.2.2 | centos8 | 4.18.0-240.15.1.el8_3.x86_64 |
| sh-work-03 | 10.3.2.4 | centos8 | 4.18.0-240.15.1.el8_3.x86_64 |

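Note: I chose CentOS 8 so I wouldn't have to bother upgrading the kernel; CentOS 7's 3.10 kernel really is old. The catch is that there is no Kubernetes yum repo for CentOS 8, so the CentOS 7 repo has to be used.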

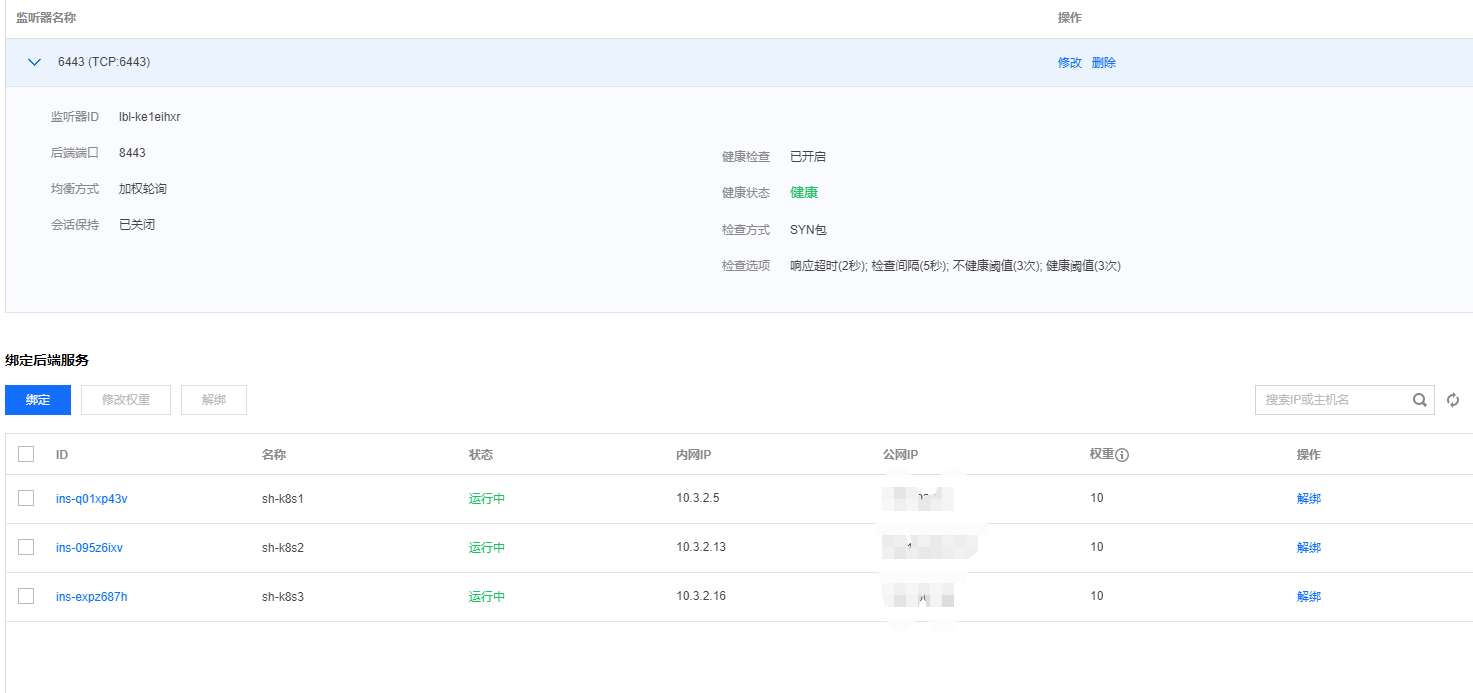

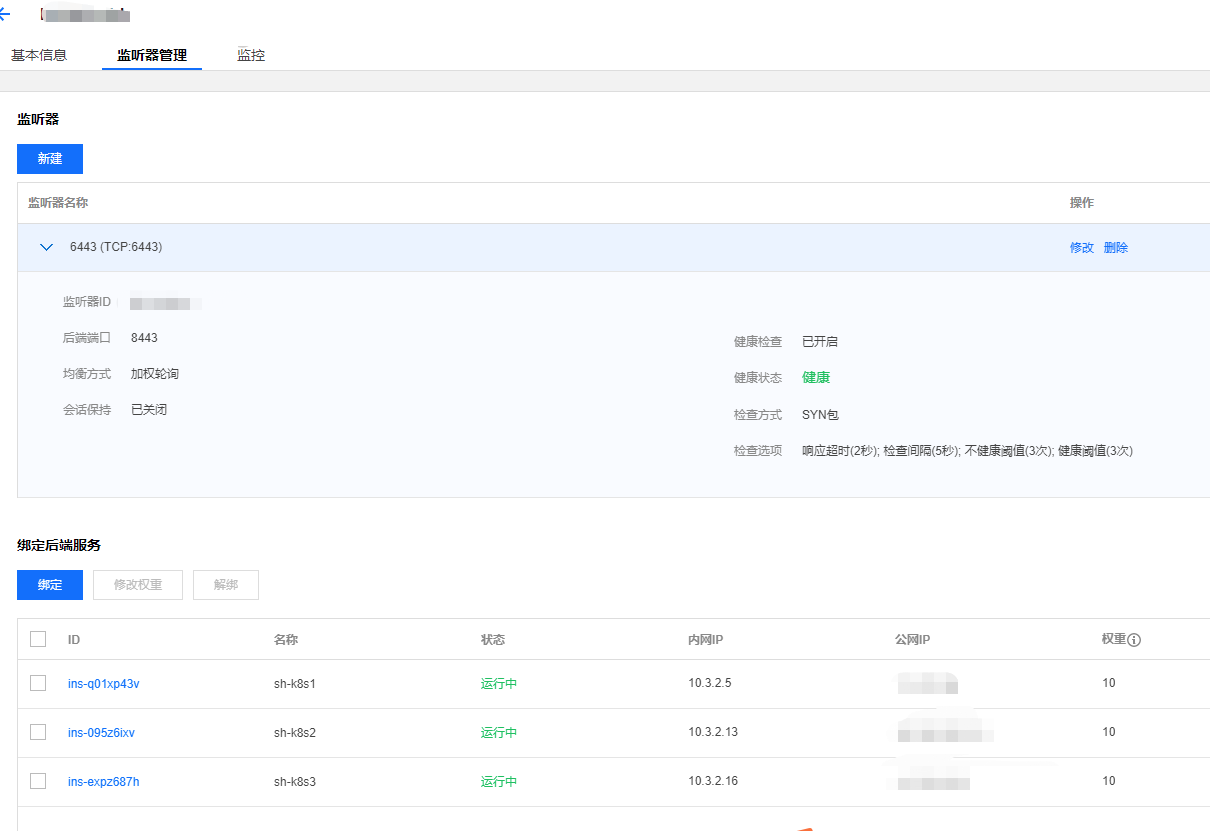

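VIP (SLB) address: 10.3.2.12. The internal network has no need for a domain name, so I used a classic internal load balancer. To keep the SLB listener port the same as the local port, HAProxy sits in between: it listens on 8443 on each master and proxies to the apiservers on 6443, and the SLB forwards its port 6443 to 8443 on the masters.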

1. System initialization:

Note: since the environment runs on a public cloud, I took the lazy route: initialize one server, then build all the others by cloning it.

1. Change the hostname

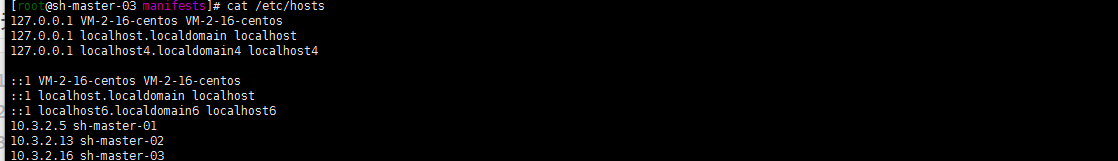

```
hostnamectl set-hostname sh-master-01
cat /etc/hosts
```

This is just an example. I only wrote hosts entries on the three master nodes; none of the worker nodes have them.
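If you do want identical entries on every node, an idempotent append keeps re-runs (or cloned servers) from piling up duplicate lines. A minimal sketch: `add_host` is a hypothetical helper, and only the three masters from the table are used, matching what was done here.

```shell
# add_host FILE IP NAME: append "IP NAME" to FILE unless NAME is already present.
# Hypothetical helper; FILE is normally /etc/hosts.
add_host() {
    file=$1 ip=$2 name=$3
    # -w matches NAME as a whole word, so sh-master-01 does not match sh-master-010
    if ! grep -qw -- "$name" "$file" 2>/dev/null; then
        printf '%s %s\n' "$ip" "$name" >> "$file"
    fi
}

# Usage on a master node (not run here):
#   add_host /etc/hosts 10.3.2.5  sh-master-01
#   add_host /etc/hosts 10.3.2.13 sh-master-02
#   add_host /etc/hosts 10.3.2.16 sh-master-03
```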

2. Disable swap

```
swapoff -a
sed -i 's/.*swap.*/#&/' /etc/fstab
```
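The sed above comments out every line that merely contains "swap", and a line that is already commented gains another `#` each time it runs. A slightly stricter sketch, assuming the usual fstab layout where the third whitespace-separated field is the filesystem type; `comment_swap` is a hypothetical name and GNU sed is assumed:

```shell
# comment_swap FSTAB: prefix "#" to active entries whose type field is "swap".
# Hypothetical helper; FSTAB is normally /etc/fstab.
comment_swap() {
    # Skip lines that are already comments; otherwise comment out lines
    # whose 3rd field is exactly "swap"
    sed -ri '/^[[:space:]]*#/!{/^[^[:space:]]+[[:space:]]+[^[:space:]]+[[:space:]]+swap[[:space:]]/s/^/#/}' "$1"
}

# Usage (not run here):
#   swapoff -a
#   comment_swap /etc/fstab
#   free -h    # the Swap line should show 0B
```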

3. Disable SELinux

```
setenforce 0
sed -i "s/^SELINUX=enforcing/SELINUX=disabled/g" /etc/sysconfig/selinux
sed -i "s/^SELINUX=enforcing/SELINUX=disabled/g" /etc/selinux/config
sed -i "s/^SELINUX=permissive/SELINUX=disabled/g" /etc/sysconfig/selinux
sed -i "s/^SELINUX=permissive/SELINUX=disabled/g" /etc/selinux/config
```
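The four sed lines can be folded into one extended regex covering both `enforcing` and `permissive`. On CentOS, `/etc/sysconfig/selinux` is normally a symlink to `/etc/selinux/config`, and plain `sed -i` replaces a symlink with a regular file; GNU sed's `--follow-symlinks` edits the target instead. A sketch with a hypothetical helper name:

```shell
# disable_selinux_config FILE...: set SELINUX=disabled in each given config file.
disable_selinux_config() {
    for f in "$@"; do
        # --follow-symlinks (GNU sed) edits the link target and keeps the symlink
        sed -ri --follow-symlinks 's/^SELINUX=(enforcing|permissive)/SELINUX=disabled/' "$f"
    done
}

# Usage (not run here):
#   setenforce 0                                 # permissive until the next reboot
#   disable_selinux_config /etc/selinux/config
#   getenforce                                   # Permissive now, Disabled after reboot
```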

4. Disable the firewall

```
systemctl disable --now firewalld
# On CentOS 8 chkconfig just forwards to systemctl, so this line is redundant
chkconfig firewalld off
```

5. Raise the open-file and related limits

```
cat > /etc/security/limits.conf <<EOF
* soft nproc 1000000
* hard nproc 1000000
* soft nofile 1000000
* hard nofile 1000000
* soft memlock unlimited
* hard memlock unlimited
EOF
```

Of course, the better approach is to create a new file under /etc/security/limits.d rather than overwrite the main configuration file; that is the recommended way.
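A sketch of that drop-in approach, reusing the values from the heredoc above. The file name `99-k8s.conf` and the helper name are assumptions; the `99-` prefix is only there so the file sorts after any other files in the directory.

```shell
# write_k8s_limits [DIR]: write the step-5 limits as a drop-in file.
# DIR defaults to /etc/security/limits.d; 99-k8s.conf is a hypothetical name.
write_k8s_limits() {
    dir=${1:-/etc/security/limits.d}
    cat > "$dir/99-k8s.conf" <<'EOF'
* soft nproc 1000000
* hard nproc 1000000
* soft nofile 1000000
* hard nofile 1000000
* soft memlock unlimited
* hard memlock unlimited
EOF
}

# Usage (not run here): write_k8s_limits, then log in again and check ulimit -n
```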

6. yum update, then install whatever tools you're used to (to each their own)

```
yum -y update
# htop, iftop, nload, jemalloc-devel and libmcrypt come from EPEL
yum -y install epel-release
yum -y install gcc bc gcc-c++ ncurses ncurses-devel cmake elfutils-libelf-devel openssl-devel \
    flex* bison* autoconf automake zlib* libxml* libmcrypt* libtool-ltdl-devel* make \
    pcre pcre-devel openssl jemalloc-devel tcl libtool vim unzip wget lrzsz bash-comp* \
    ipvsadm ipset jq sysstat conntrack libseccomp conntrack-tools socat curl git psmisc \
    nfs-utils tree bash-completion net-tools crontabs iftop nload strace bind-utils \
    tcpdump htop telnet lsof
```

7. Load IPVS modules (CentOS 8's default kernel is 4.18; this list is for kernels below 4.19)

```
:> /etc/modules-load.d/ipvs.conf
module=(
ip_vs
ip_vs_rr
ip_vs_wrr
ip_vs_sh
br_netfilter
)
# bash: record each module that exists for the running kernel
for kernel_module in "${module[@]}"; do
    /sbin/modinfo -F filename $kernel_module |& grep -qv ERROR && echo $kernel_module >> /etc/modules-load.d/ipvs.conf || :
done
```

For kernels 4.19 and later:

```
:> /etc/modules-load.d/ipvs.conf
module=(
ip_vs
ip_vs_rr
ip_vs_wrr
ip_vs_sh
nf_conntrack
br_netfilter
)
for kernel_module in "${module[@]}"; do
    /sbin/modinfo -F filename $kernel_module |& grep -qv ERROR && echo $kernel_module >> /etc/modules-load.d/ipvs.conf || :
done
```
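Rather than keeping two copies of the loop, the module list can be chosen from the running kernel version. A sketch of the rule stated above (add `nf_conntrack` only on 4.19 and later), using `sort -V` for the version comparison; `ipvs_modules` is a hypothetical helper:

```shell
# ipvs_modules KERNEL_VERSION: print the step-7 module list for that kernel.
ipvs_modules() {
    kver=${1%%-*}            # strip the distro suffix: 4.18.0-240... -> 4.18.0
    mods="ip_vs ip_vs_rr ip_vs_wrr ip_vs_sh"
    # sort -V prints the smaller version first; if that is 4.19, kver >= 4.19
    if [ "$(printf '%s\n' 4.19 "$kver" | sort -V | head -n1)" = "4.19" ]; then
        mods="$mods nf_conntrack"
    fi
    echo "$mods br_netfilter"
}

# Usage (not run here), feeding the loop above:
#   for kernel_module in $(ipvs_modules "$(uname -r)"); do
#       /sbin/modinfo -F filename $kernel_module |& grep -qv ERROR &&
#           echo $kernel_module >> /etc/modules-load.d/ipvs.conf || :
#   done
```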

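I suspect it hardly matters whether IPVS is enabled here: the network plugin is Cilium (with Hubble), and with kube-proxy replaced, Services are handled in eBPF rather than by iptables or IPVS. Configuring IPVS is just a habit from earlier deployments.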

Load the modules

```
systemctl daemon-reload
systemctl enable --now systemd-modules-load.service
```

Check that the IPVS modules are loaded

```
# lsmod | grep ip_vs
ip_vs_sh               16384  0
ip_vs_wrr              16384  0
ip_vs_rr               16384  0
ip_vs                 172032  6 ip_vs_rr,ip_vs_sh,ip_vs_wrr
nf_conntrack          172032  6 xt_conntrack,nf_nat,xt_state,ipt_MASQUERADE,xt_CT,ip_vs
nf_defrag_ipv6         20480  4 nf_conntrack,xt_socket,xt_TPROXY,ip_vs
libcrc32c              16384  3 nf_conntrack,nf_nat,ip_vs
```
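Eyeballing lsmod works for a handful of modules; this sketch prints only the ones that are missing, so empty output means everything is loaded. `missing_modules` is a hypothetical helper that reads lsmod output on stdin:

```shell
# missing_modules MODULE...: read lsmod output on stdin and print each
# requested module that is not listed in the first column.
missing_modules() {
    # first column of every line except the "Module Size Used by" header
    loaded=$(awk '$1 != "Module" { print $1 }')
    for m in "$@"; do
        printf '%s\n' "$loaded" | grep -qx -- "$m" || echo "$m"
    done
}

# Usage (not run here):
#   lsmod | missing_modules ip_vs ip_vs_rr ip_vs_wrr ip_vs_sh br_netfilter
```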

8. Tune kernel parameters (not necessarily optimal; take what suits you)

```
cat <<EOF > /etc/sysctl.d/k8s.conf
net.ipv6.conf.all.disable_ipv6 = 1
net.ipv6.conf.default.disable_ipv6 = 1
net.ipv6.conf.lo.disable_ipv6 = 1
net.ipv4.neigh.default.gc_stale_time = 120
net.ipv4.conf.all.rp_filter = 0
net.ipv4.conf.default.rp_filter = 0
net.ipv4.conf.default.arp_announce = 2
net.ipv4.conf.lo.arp_announce = 2
net.ipv4.conf.all.arp_announce = 2
net.ipv4.ip_forward = 1
net.ipv4.tcp_max_tw_buckets = 5000
net.ipv4.tcp_syncookies = 1
net.ipv4.tcp_max_syn_backlog = 1024
net.ipv4.tcp_synack_retries = 2
# Pass bridged traffic through iptables/ip6tables/arptables (needs br_netfilter loaded)
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
net.bridge.bridge-nf-call-arptables = 1
net.netfilter.nf_conntrack_max = 2310720
fs.inotify.max_user_watches=89100
fs.may_detach_mounts = 1
fs.file-max = 52706963
fs.nr_open = 52706963
vm.overcommit_memory=1
vm.panic_on_oom=0
vm.swappiness = 0
EOF
sysctl --system
```
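`sysctl --system` keeps going when a key can't be applied: the `net.bridge.*` keys only exist once br_netfilter is loaded, and `net.netfilter.nf_conntrack_max` needs nf_conntrack. A sketch that compares each key in the file with the live value under `/proc/sys`; `check_sysctl` and its optional root argument (there only so it can be tested against a fake tree) are assumptions:

```shell
# check_sysctl CONF [PROC_ROOT]: compare "key = value" lines in CONF with the
# live values under PROC_ROOT/sys (default /proc) and print any mismatch.
check_sysctl() {
    conf=$1 root=${2:-/proc}
    # drop comments and blank lines, then split each line on "="
    grep -Ev '^[[:space:]]*(#|;|$)' "$conf" | while IFS='=' read -r key val; do
        key=$(echo "$key" | tr -d '[:space:]')
        val=$(echo "$val" | sed 's/^[[:space:]]*//;s/[[:space:]]*$//')
        path="$root/sys/$(echo "$key" | tr . /)"   # net.ipv4.ip_forward -> net/ipv4/ip_forward
        if [ ! -r "$path" ]; then
            echo "missing: $key"
        elif [ "$(cat "$path")" != "$val" ]; then
            echo "differs: $key = $(cat "$path"), want $val"
        fi
    done
}

# Usage (not run here): check_sysctl /etc/sysctl.d/k8s.conf
```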

9. Install containerd

CentOS 8 switched from yum to dnf; look up the details yourself, in practice they're much the same.

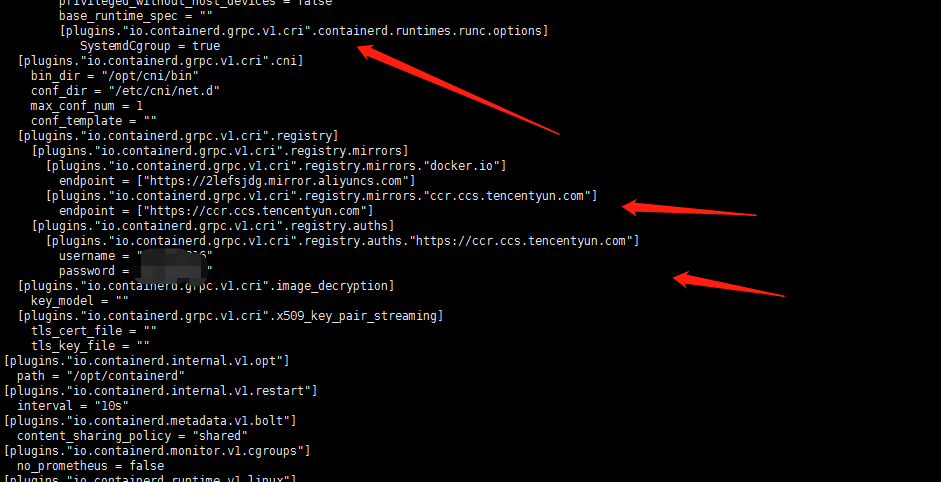

```
dnf install -y dnf-utils device-mapper-persistent-data lvm2
yum-config-manager --add-repo http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo
sudo yum update -y && sudo yum install -y containerd.io
containerd config default > /etc/containerd/config.toml
# Replace containerd's default sandbox (pause) image; in /etc/containerd/config.toml set:
#   sandbox_image = "registry.aliyuncs.com/google_containers/pause:3.2"
# Restart containerd (and start it at boot)
systemctl daemon-reload
systemctl enable containerd
systemctl restart containerd
```

Two other changes: enabling SystemdCgroup, and adding a private image registry with its username and password (I use Tencent Cloud's registry directly).
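The SystemdCgroup change and the sandbox_image edit can be scripted with sed. A minimal sketch, assuming the generated config.toml already contains a `sandbox_image = ...` line and a `SystemdCgroup = false` line; recent containerd releases print the latter in `containerd config default`, older ones may not, in which case add `SystemdCgroup = true` under `[plugins."io.containerd.grpc.v1.cri".containerd.runtimes.runc.options]` by hand. The helper name is hypothetical, and registry credentials are left out.

```shell
# set_containerd_opts CONFIG PAUSE_IMAGE: switch runc to the systemd cgroup
# driver and point sandbox_image at PAUSE_IMAGE. Only rewrites existing lines.
set_containerd_opts() {
    config=$1 pause=$2
    sed -ri \
        -e "s|^([[:space:]]*)sandbox_image = .*|\1sandbox_image = \"$pause\"|" \
        -e 's|^([[:space:]]*)SystemdCgroup = false|\1SystemdCgroup = true|' \
        "$config"
    # fail if there was no SystemdCgroup line to rewrite
    grep -q 'SystemdCgroup = true' "$config"
}

# Usage (not run here):
#   set_containerd_opts /etc/containerd/config.toml registry.aliyuncs.com/google_containers/pause:3.2
#   systemctl restart containerd
```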

10. Configure the CRI client crictl

```
VERSION="v1.21.0"
wget https://github.com/kubernetes-sigs/cri-tools/releases/download/$VERSION/crictl-$VERSION-linux-amd64.tar.gz
sudo tar zxvf crictl-$VERSION-linux-amd64.tar.gz -C /usr/local/bin
rm -f crictl-$VERSION-linux-amd64.tar.gz
```

```
cat <<EOF > /etc/crictl.yaml
runtime-endpoint: unix:///run/containerd/containerd.sock
image-endpoint: unix:///run/containerd/containerd.sock
timeout: 10
debug: false
EOF
# Verify that it works (you can test the private registry at the same time)
crictl pull nginx:alpine
crictl rmi nginx:alpine
crictl images
```

11. Install kubeadm (there is no CentOS 8 repo, so use Aliyun's CentOS 7 repo)

```
cat <<EOF > /etc/yum.repos.d/kubernetes.repo
[kubernetes]
name=Kubernetes
baseurl=http://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64
enabled=1
gpgcheck=0
repo_gpgcheck=0
gpgkey=http://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg http://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
EOF
# Remove old versions, if installed
yum remove kubeadm kubectl kubelet kubernetes-cni cri-tools socat
# List all installable versions
# yum list --showduplicates kubeadm --disableexcludes=kubernetes
# Install a specific version; either of the two commands below works
# yum -y install kubeadm-1.20.5 kubectl-1.20.5 kubelet-1.20.5
# or
# yum install -y kubelet kubeadm kubectl --disableexcludes=kubernetes
# Installs the latest stable release by default (1.20.5 at the time of writing)
yum install kubeadm
# Start kubelet at boot
systemctl enable kubelet.service
```
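The upstream Kubernetes install instructions add `exclude=kubelet kubeadm kubectl` to this repo file so that a routine `yum update` can't upgrade the cluster components by surprise; that is what `--disableexcludes=kubernetes` in the commented commands is for. A sketch, assuming the repo file contains only the `[kubernetes]` section so an appended line lands in it; `pin_k8s_repo` is a hypothetical name. Once the exclude is in place, installs and upgrades need `--disableexcludes=kubernetes`, including the plain `yum install kubeadm` above.

```shell
# pin_k8s_repo REPO_FILE: append an exclude line (once) so "yum update"
# skips kubelet/kubeadm/kubectl. Assumes the file holds only [kubernetes].
pin_k8s_repo() {
    repo=$1
    grep -q '^exclude=' "$repo" || echo 'exclude=kubelet kubeadm kubectl' >> "$repo"
}

# Usage (not run here):
#   pin_k8s_repo /etc/yum.repos.d/kubernetes.repo
#   yum install -y kubelet-1.20.5 kubeadm-1.20.5 kubectl-1.20.5 --disableexcludes=kubernetes
```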

12. Modify the kubelet configuration

```
vi /etc/sysconfig/kubelet
# set this line in the file:
KUBELET_EXTRA_ARGS="--cgroup-driver=systemd --container-runtime=remote --container-runtime-endpoint=unix:///run/containerd/containerd.sock"
```

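13. journald and related settings: stop rsyslog from collecting the journal a second time (which wastes resources), raise systemd's default limits for open files and core dumps, disable SSH reverse DNS lookups, and vacuum the journal down to 200 MB (adjust as needed)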

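```
# Stop rsyslog from reading the journal. These match the CentOS 7 style rsyslog.conf;
# CentOS 8 loads imjournal with module(load="imjournal" ...), so check the file by hand
sed -ri 's/^\$ModLoad imjournal/#&/' /etc/rsyslog.conf
sed -ri 's/^\$IMJournalStateFile/#&/' /etc/rsyslog.conf
# Default core-dump and open-file limits for services started by systemd
sed -ri 's/^#(DefaultLimitCORE)=/\1=100000/' /etc/systemd/system.conf
sed -ri 's/^#(DefaultLimitNOFILE)=/\1=100000/' /etc/systemd/system.conf
# Disable reverse DNS lookups in sshd
sed -ri 's/^#(UseDNS )yes/\1no/' /etc/ssh/sshd_config
# Trim the journal to 200 MB
journalctl --vacuum-size=200M
# Apply the changes
systemctl daemon-reexec
systemctl restart rsyslog sshd
```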
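`journalctl --vacuum-size=200M` trims the journal once; it does not stop it from growing again. To keep a 200 MB cap permanently, journald's `SystemMaxUse=` setting (for the persistent journal; `RuntimeMaxUse=` is the equivalent for a volatile one) can go in a drop-in under `/etc/systemd/journald.conf.d/`. A sketch; the helper name and the file name `99-size.conf` are assumptions:

```shell
# cap_journal SIZE [DIR]: write a journald drop-in limiting persistent
# journal disk usage to SIZE (for example 200M).
cap_journal() {
    size=$1 dir=${2:-/etc/systemd/journald.conf.d}
    mkdir -p "$dir"
    printf '[Journal]\nSystemMaxUse=%s\n' "$size" > "$dir/99-size.conf"
}

# Usage (not run here):
#   cap_journal 200M
#   systemctl restart systemd-journald
```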

2. Master node operations

1. Install HAProxy

```
yum install -y haproxy
```

```
cat <<EOF > /etc/haproxy/haproxy.cfg
#---------------------------------------------------------------------
# Global settings
#---------------------------------------------------------------------
global
    # To get logs into /var/log/haproxy.log, let syslog accept network
    # log events and route local2.* to that file
    log         127.0.0.1 local2
    chroot      /var/lib/haproxy
    pidfile     /var/run/haproxy.pid
    maxconn     4000
    user        haproxy
    group       haproxy
    daemon
    # turn on stats unix socket
    stats socket /var/lib/haproxy/stats

#---------------------------------------------------------------------
# common defaults that all the 'listen' and 'backend' sections will
# use if not designated in their block
#---------------------------------------------------------------------
defaults
    mode                    tcp
    log                     global
    option                  tcplog
    option                  dontlognull
    option http-server-close
    option forwardfor       except 127.0.0.0/8
    option                  redispatch
    retries                 3
    timeout http-request    10s
    timeout queue           1m
    timeout connect         10s
    timeout client          1m
    timeout server          1m
    timeout http-keep-alive 10s
    timeout check           10s
    maxconn                 3000

#---------------------------------------------------------------------
# frontend: listen on port 8443 on every master
#---------------------------------------------------------------------
frontend kubernetes
    bind *:8443
    mode tcp
    default_backend kubernetes

#---------------------------------------------------------------------
# backend: the three apiservers. Requests to the SLB (10.3.2.12:6443)
# reach port 8443 here and are spread across the three masters on 6443,
# which gives us load balancing.
#---------------------------------------------------------------------
backend kubernetes
    balance roundrobin
    server master1 10.3.2.5:6443 check maxconn 2000
    server master2 10.3.2.13:6443 check maxconn 2000
    server master3 10.3.2.16:6443 check maxconn 2000
EOF
systemctl enable haproxy && systemctl start haproxy && systemctl status haproxy
```

2. Initialize sh-master-01

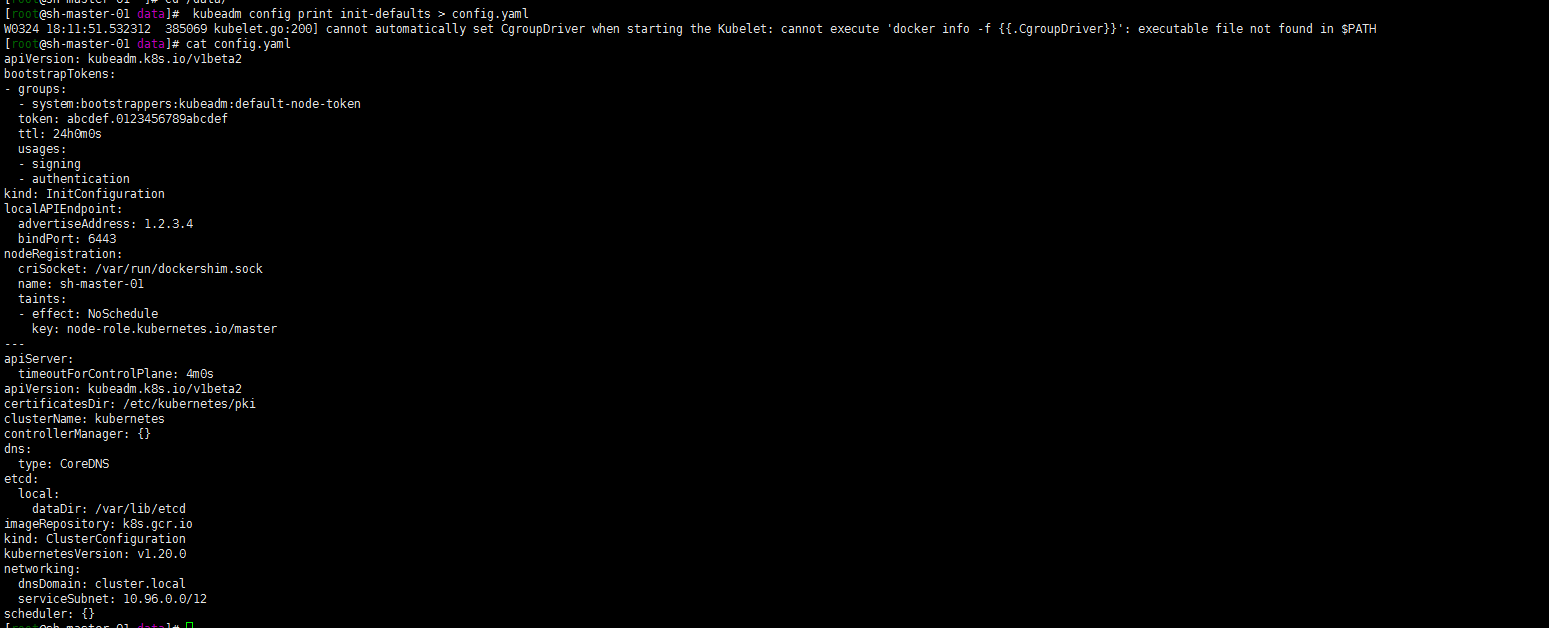

1. Generate the config file

```
kubeadm config print init-defaults > config.yaml
```

2. Edit the kubeadm init configuration

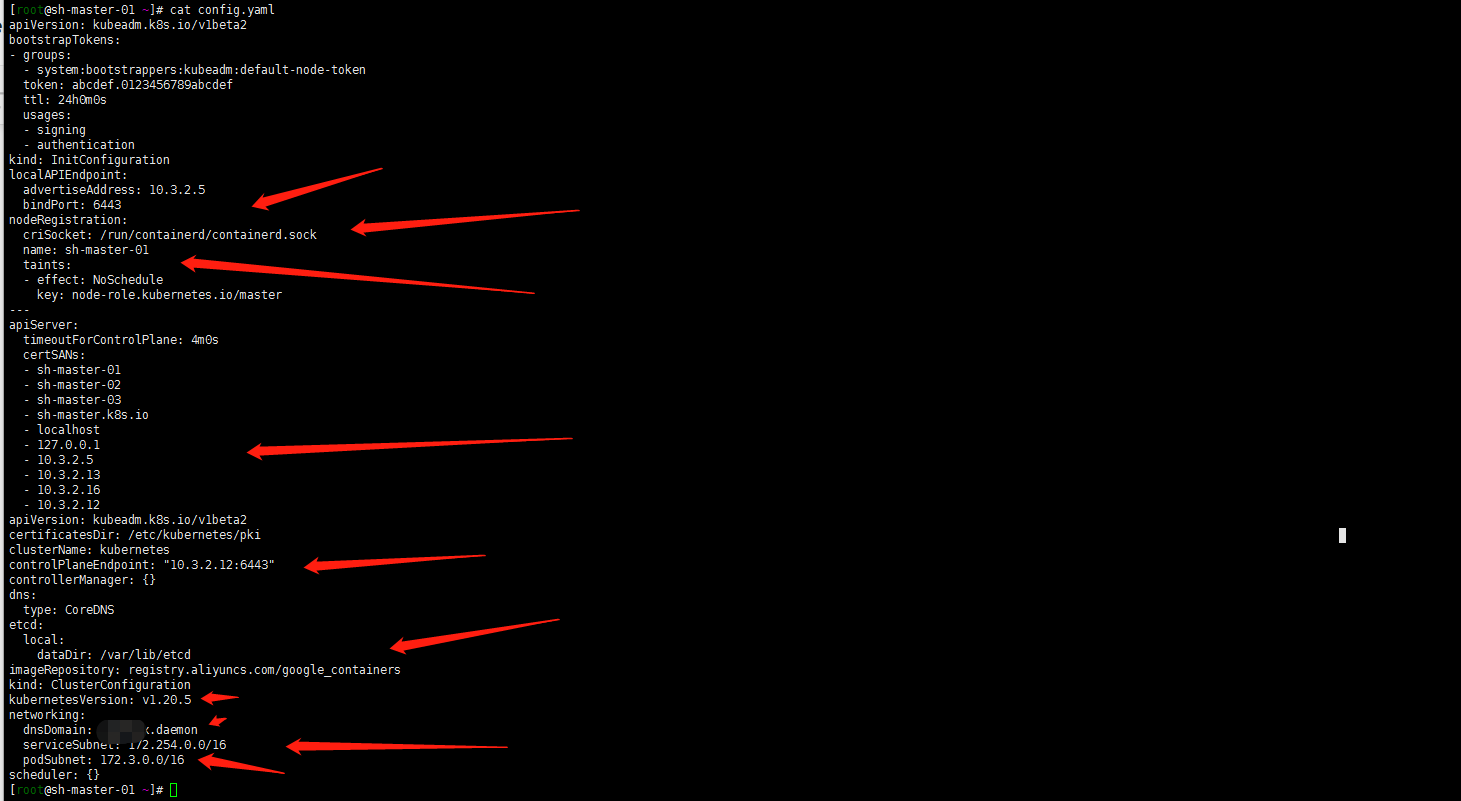

```
apiVersion: kubeadm.k8s.io/v1beta2
bootstrapTokens:
- groups:
  - system:bootstrappers:kubeadm:default-node-token
  token: abcdef.0123456789abcdef
  ttl: 24h0m0s
  usages:
  - signing
  - authentication
kind: InitConfiguration
localAPIEndpoint:
  advertiseAddress: 10.3.2.5
  bindPort: 6443
nodeRegistration:
  criSocket: /run/containerd/containerd.sock
  name: sh-master-01
  taints:
  - effect: NoSchedule
    key: node-role.kubernetes.io/master
---
apiServer:
  timeoutForControlPlane: 4m0s
  certSANs:
  - sh-master-01
  - sh-master-02
  - sh-master-03
  - sh-master.k8s.io
  - localhost
  - 127.0.0.1
  - 10.3.2.5
  - 10.3.2.13
  - 10.3.2.16
  - 10.3.2.12
apiVersion: kubeadm.k8s.io/v1beta2
certificatesDir: /etc/kubernetes/pki
clusterName: kubernetes
controlPlaneEndpoint: "10.3.2.12:6443"
controllerManager: {}
dns:
  type: CoreDNS
etcd:
  local:
    dataDir: /var/lib/etcd
imageRepository: registry.aliyuncs.com/google_containers
kind: ClusterConfiguration
kubernetesVersion: v1.20.5
networking:
  dnsDomain: xx.daemon
  serviceSubnet: 172.254.0.0/16
  podSubnet: 172.3.0.0/16
scheduler: {}
```

3. Initialize sh-master-01 with kubeadm (skipping kube-proxy)

```
kubeadm init --skip-phases=addon/kube-proxy --config=config.yaml
```

I'm skipping the success screenshot; these notes were written afterwards and I didn't keep one. The success log includes:

```
mkdir -p $HOME/.kube
sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
sudo chown $(id -u):$(id -g) $HOME/.kube/config
```

Following that output, join sh-master-02 and sh-master-03 to the cluster. First package ca.*, sa.*, front-proxy-ca.* and etcd/ca* from /etc/kubernetes/pki on sh-master-01 and copy them into /etc/kubernetes/pki on sh-master-02 and sh-master-03, then run:

```
kubeadm join 10.3.2.12:6443 --token abcdef.0123456789abcdef \
    --discovery-token-ca-cert-hash sha256:eb0fe00b59fa27f82c62c91def14ba294f838cd0731c91d0d9c619fe781286b6 \
    --control-plane
```

Then run the same commands as on sh-master-01:

```
mkdir -p $HOME/.kube
sudo cp /etc/kubernetes/admin.conf $HOME/.kube/config
sudo chown $(id -u):$(id -g) $HOME/.kube/config
```

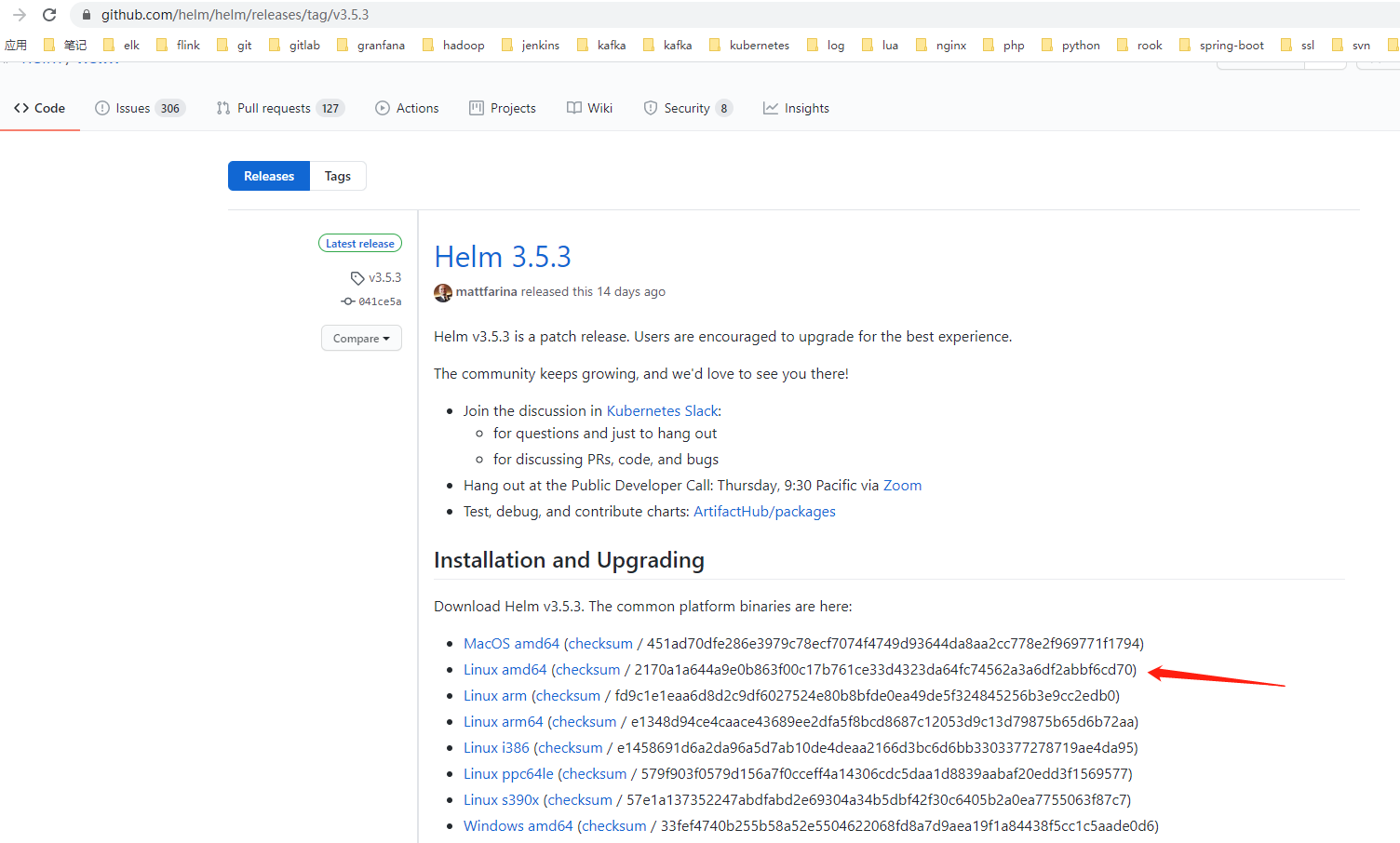
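Copying the shared certificates file by file is easy to get wrong, so here is a sketch that packs exactly the files listed above into one tarball. `pack_shared_certs` and the archive path are hypothetical. As an alternative not used in this article, kubeadm can distribute these certificates itself via `kubeadm init --upload-certs` and `--certificate-key` on join.

```shell
# pack_shared_certs PKI_DIR ARCHIVE: tar the CA, SA, front-proxy CA and etcd CA
# files every control-plane node must share. Paths inside the archive are
# relative to PKI_DIR; ARCHIVE should be absolute because of the cd.
pack_shared_certs() {
    pki=$1 archive=$2
    (
        cd "$pki" || exit 1
        tar czf "$archive" ca.crt ca.key sa.key sa.pub \
            front-proxy-ca.crt front-proxy-ca.key etcd/ca.crt etcd/ca.key
    )
}

# Usage on sh-master-01 (not run here):
#   pack_shared_certs /etc/kubernetes/pki /tmp/k8s-pki.tgz
#   for h in 10.3.2.13 10.3.2.16; do
#       scp /tmp/k8s-pki.tgz root@$h:/tmp/ &&
#       ssh root@$h 'mkdir -p /etc/kubernetes/pki && tar xzf /tmp/k8s-pki.tgz -C /etc/kubernetes/pki'
#   done
```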

3. Install Helm, deploy Cilium and Hubble (Helm 3 by default)

1. Download and install Helm

Note: because of network problems the Helm download often stalls, so I downloaded the package from GitHub to my local machine first.

```
tar zxvf helm-v3.5.3-linux-amd64.tar.gz
cp linux-amd64/helm /usr/bin/
```

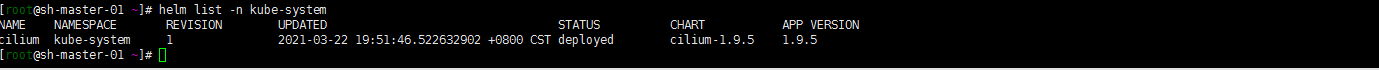
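Since the tarball was fetched by hand, it's worth checking it against the SHA-256 checksum published with the Helm release before installing. A sketch; `verify_sha256` is a hypothetical helper, and the expected hash has to be copied from the release page.

```shell
# verify_sha256 FILE EXPECTED_HEX: succeed only if FILE's SHA-256 matches.
verify_sha256() {
    # reading from stdin keeps the file name out of sha256sum's output
    actual=$(sha256sum < "$1" | cut -d' ' -f1)
    if [ "$actual" = "$2" ]; then
        echo "OK: $1"
    else
        echo "MISMATCH: $1 ($actual)" >&2
        return 1
    fi
}

# Usage (not run here):
#   verify_sha256 helm-v3.5.3-linux-amd64.tar.gz "<hash from the release page>" &&
#       tar zxvf helm-v3.5.3-linux-amd64.tar.gz
```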

2. Install Cilium and Hubble with Helm

In earlier versions Cilium and Hubble were installed separately; now they seem to be integrated, so everything goes in one pass:

```
helm repo add cilium https://helm.cilium.io/
helm install cilium cilium/cilium --version 1.9.5 \
    --namespace kube-system \
    --set nodeinit.enabled=true \
    --set externalIPs.enabled=true \
    --set nodePort.enabled=true \
    --set hostPort.enabled=true \
    --set pullPolicy=IfNotPresent \
    --set config.ipam=cluster-pool \
    --set hubble.enabled=true \
    --set hubble.listenAddress=":4244" \
    --set hubble.relay.enabled=true \
    --set hubble.metrics.enabled="{dns,drop,tcp,flow,port-distribution,icmp,http}" \
    --set prometheus.enabled=true \
    --set operator.prometheus.enabled=true \
    --set hubble.ui.enabled=true \
    --set kubeProxyReplacement=strict \
    --set k8sServiceHost=10.3.2.12 \
    --set k8sServicePort=6443
```

A successful deployment looks like this:

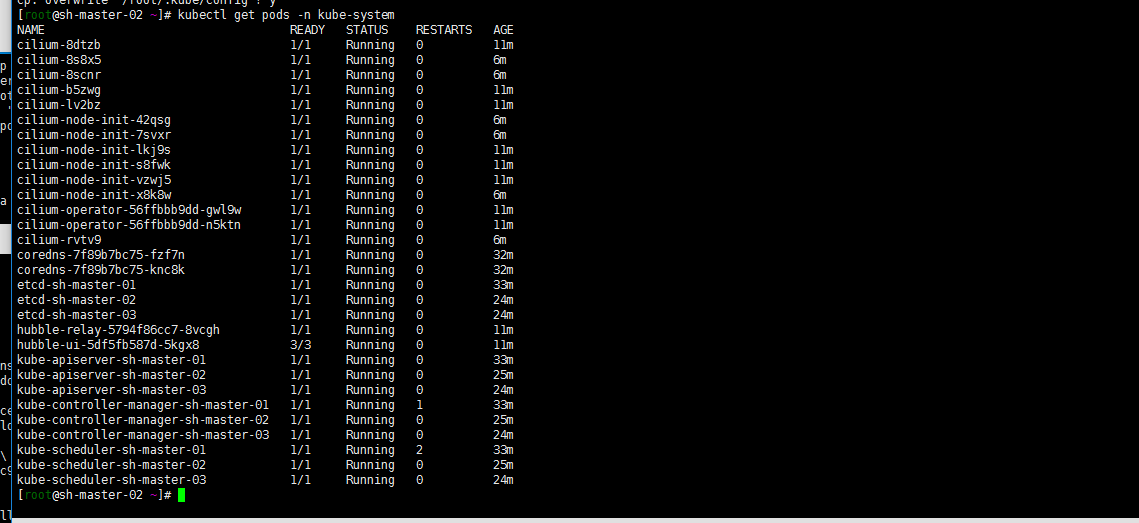

Note that there is no kube-proxy. (The screenshot was taken after the worker nodes joined, which is why there are six node-init and six cilium pods.)

4. Worker node deployment

Join sh-work-01, sh-work-02 and sh-work-03 to the cluster:

```
kubeadm join 10.3.2.12:6443 --token abcdef.0123456789abcdef \
    --discovery-token-ca-cert-hash sha256:eb0fe00b59fa27f82c62c91def14ba294f838cd0731c91d0d9c619fe781286b6
```

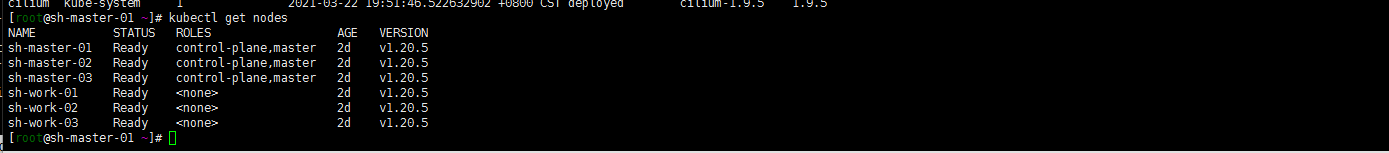
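The bootstrap token in config.yaml has `ttl: 24h0m0s`, so a worker added later needs a fresh token (`kubeadm token create --print-join-command` prints a complete join command). The `sha256:` value is the hash of the cluster CA's public key; the kubeadm documentation computes it with the openssl pipeline below, wrapped here in a hypothetical helper and assuming an RSA CA key (the kubeadm default).

```shell
# ca_cert_hash CA_CRT: print the SHA-256 of the CA certificate's DER-encoded
# public key, i.e. the value kubeadm join expects after "sha256:".
ca_cert_hash() {
    openssl x509 -pubkey -noout -in "$1" |
        openssl rsa -pubin -outform der 2>/dev/null |
        openssl dgst -sha256 -hex |
        sed 's/^.* //'
}

# Usage on a master (not run here):
#   echo "sha256:$(ca_cert_hash /etc/kubernetes/pki/ca.crt)"
```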

5. Verify on a master node

Any master node will do; master-01 by default.

Common pitfalls

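- SLB binding: with only one server bound to the SLB, kubeadm init tends to fail, because the SLB port is the same as the host port and a node can't reach itself through the SLB. I don't fully understand why. After several attempts I bound all three masters and started HAProxy on all of them first.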

- Cilium depends on BPF, so first confirm that the BPF filesystem is mounted (on my systems it was mounted by default):

```
[root@sh-master-01 manifests]# mount | grep bpf
bpf on /sys/fs/bpf type bpf (rw,nosuid,nodev,noexec,relatime,mode=700)
```

- The cgroup driver must match between Kubernetes and containerd; both use systemd here, so remember to check:

```
KUBELET_EXTRA_ARGS="--cgroup-driver=systemd --container-runtime=remote --container-runtime-endpoint=unix:///run/containerd/containerd.sock"
```
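Both checks from this list can be scripted. A sketch: `bpffs_mounted` reads /proc/mounts (the optional file argument is only there for testing), and if it fails, Cilium's documentation mounts the filesystem with `mount bpffs /sys/fs/bpf -t bpf`; `cgroup_drivers_match` just greps the two files configured earlier. Both helper names are hypothetical, and the grep-based check only looks for the exact strings used in this article.

```shell
# bpffs_mounted [MOUNTS_FILE]: succeed if a bpf filesystem is mounted at
# /sys/fs/bpf. /proc/mounts fields: device, mountpoint, type, options, ...
bpffs_mounted() {
    awk '$2 == "/sys/fs/bpf" && $3 == "bpf" { found = 1 } END { exit !found }' "${1:-/proc/mounts}"
}

# cgroup_drivers_match CONTAINERD_TOML KUBELET_SYSCONFIG: succeed if containerd
# has SystemdCgroup = true and kubelet is started with --cgroup-driver=systemd.
cgroup_drivers_match() {
    grep -Eq '^[[:space:]]*SystemdCgroup[[:space:]]*=[[:space:]]*true' "$1" &&
        grep -q -- '--cgroup-driver=systemd' "$2"
}

# Usage (not run here):
#   bpffs_mounted || mount bpffs /sys/fs/bpf -t bpf
#   cgroup_drivers_match /etc/containerd/config.toml /etc/sysconfig/kubelet && echo OK
```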

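Enable PodCIDR allocation in kube-controller-manager

Add the following to the controller-manager configuration (in a kubeadm cluster that is the static pod manifest /etc/kubernetes/manifests/kube-controller-manager.yaml; kubeadm normally adds this flag itself when networking.podSubnet is set, so check before editing):

```
--allocate-node-cidrs=true
```

6. Other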

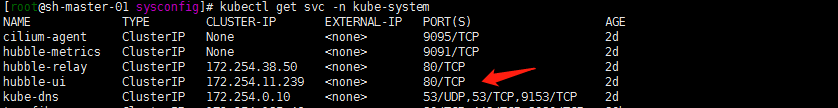

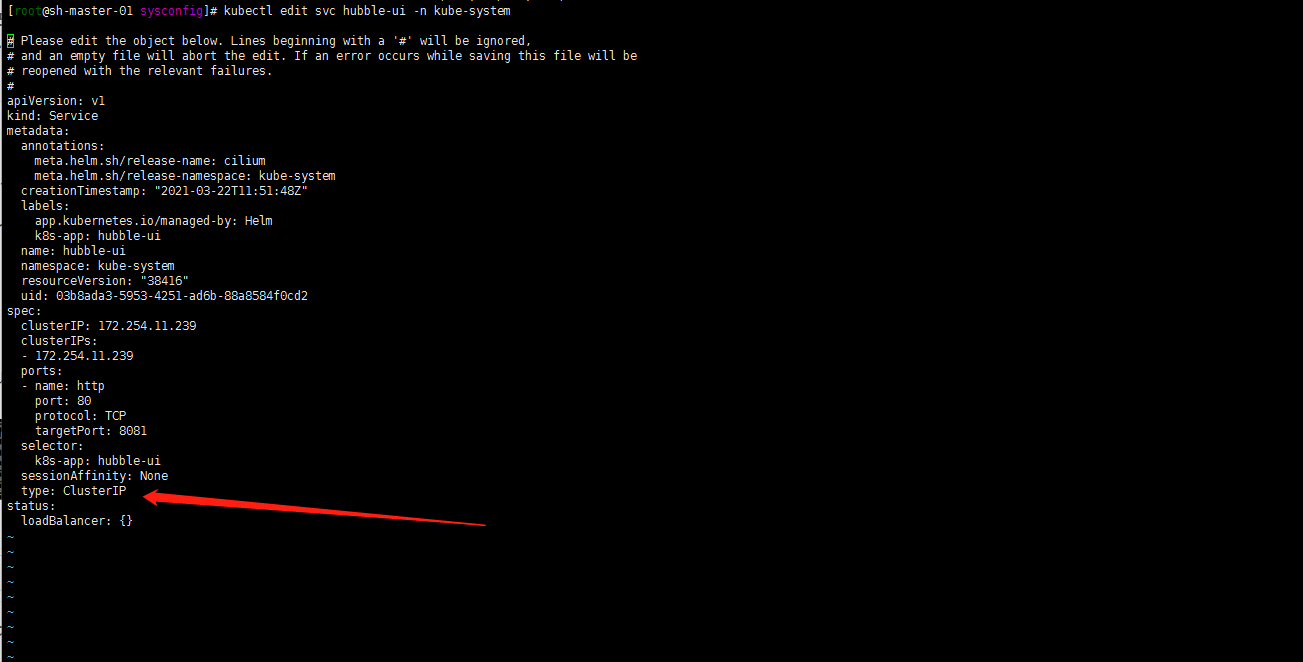

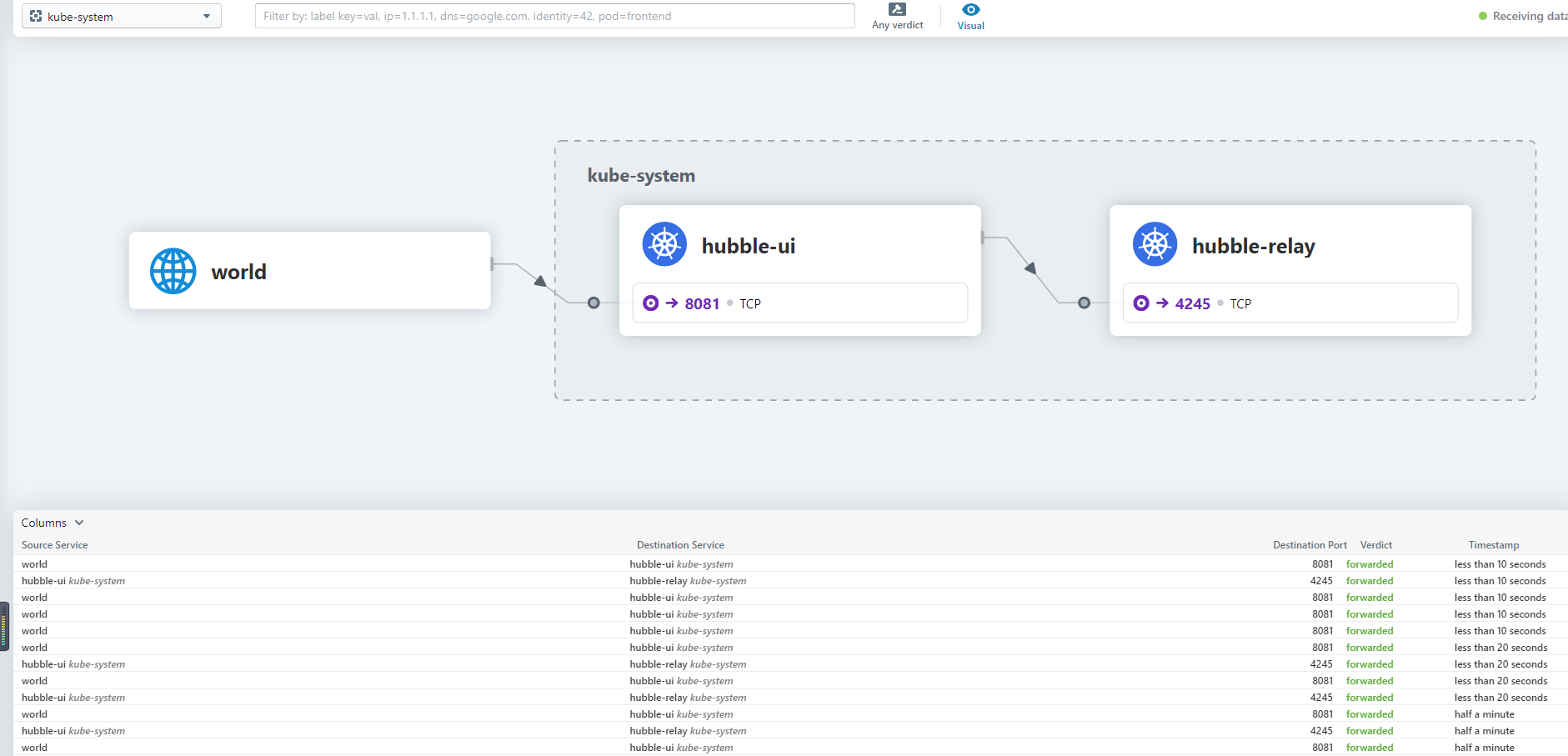

1. Check Hubble and the Hubble UI

```
kubectl edit svc hubble-ui -n kube-system
```

Change the type to NodePort to test it for now; later it will be proxied through Traefik.

Open it at the public IP of any worker or master node plus the NodePort.

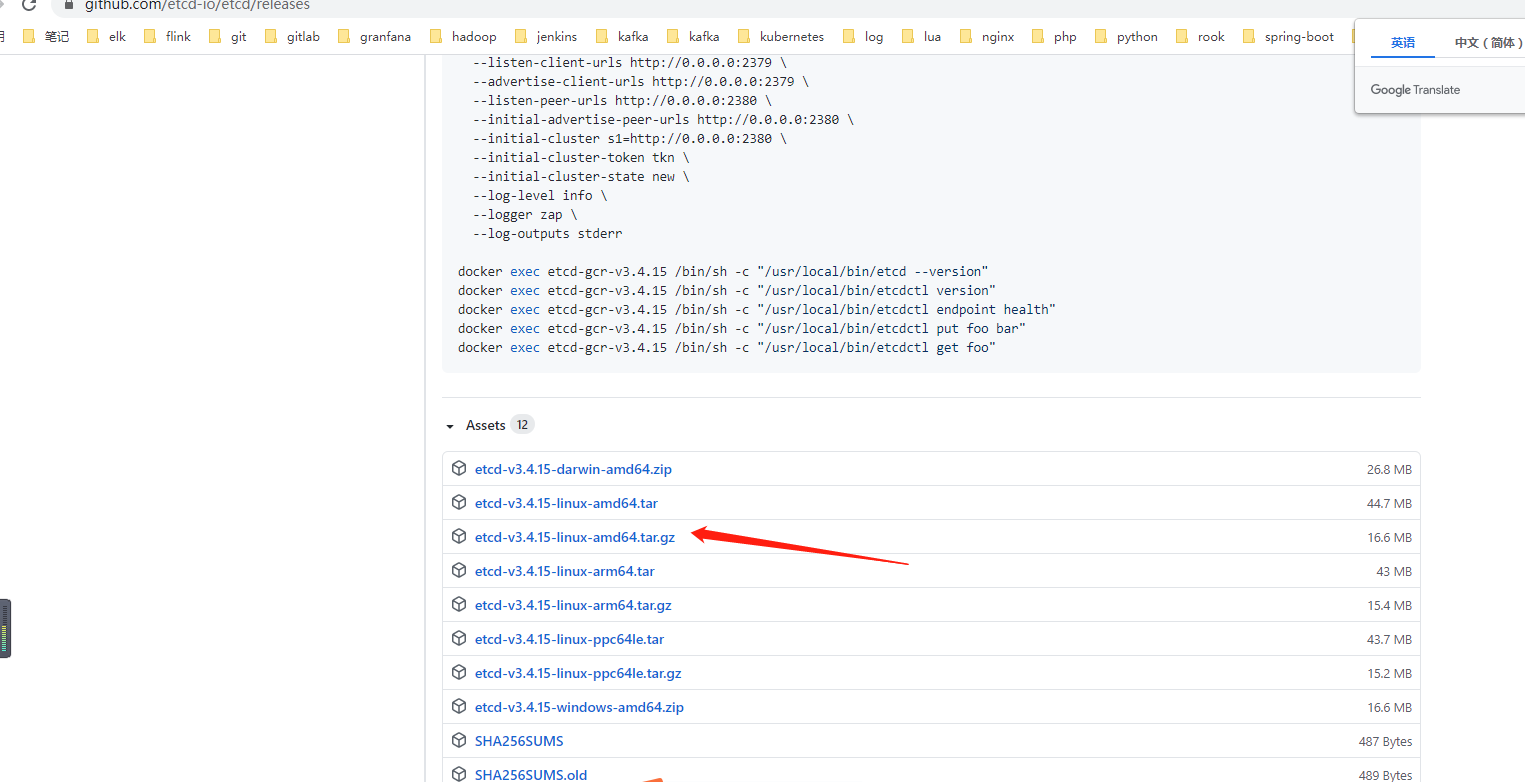

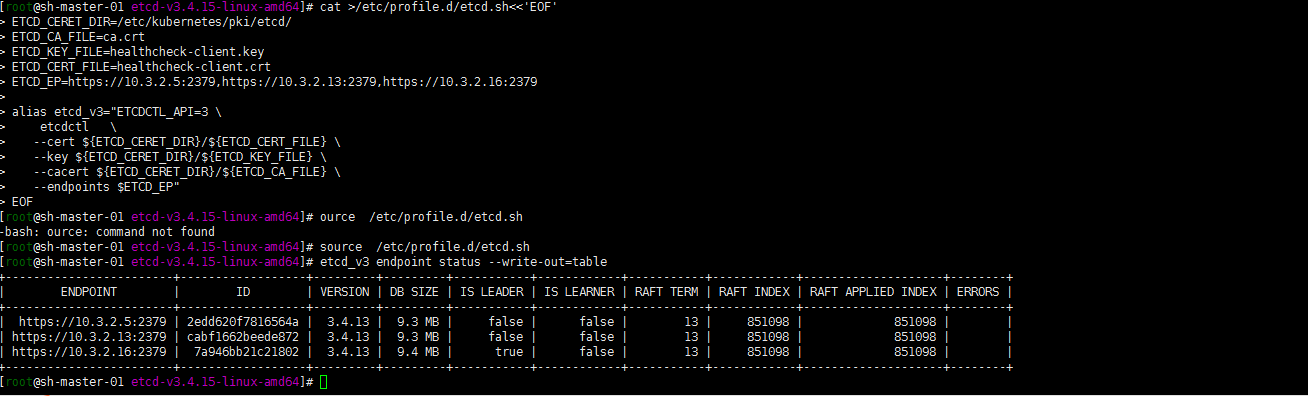

2. Install etcdctl outside the container

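Using etcdctl often means exec-ing into the etcd container, which is a hassle, so put the etcdctl binary directly on master-01. Docker has a copy command for this; I haven't worked out the containerd equivalent, so I just downloaded etcdctl from the GitHub releases.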

```
wget https://github.com/etcd-io/etcd/releases/download/v3.4.15/etcd-v3.4.15-linux-amd64.tar.gz
tar zxvf etcd-v3.4.15-linux-amd64.tar.gz
cd etcd-v3.4.15-linux-amd64/
cp etcdctl /usr/local/bin/etcdctl
```

```
cat > /etc/profile.d/etcd.sh <<'EOF'
ETCD_CERT_DIR=/etc/kubernetes/pki/etcd
ETCD_CA_FILE=ca.crt
ETCD_KEY_FILE=healthcheck-client.key
ETCD_CERT_FILE=healthcheck-client.crt
ETCD_EP=https://10.3.2.5:2379,https://10.3.2.13:2379,https://10.3.2.16:2379

alias etcd_v3="ETCDCTL_API=3 \
etcdctl \
--cert ${ETCD_CERT_DIR}/${ETCD_CERT_FILE} \
--key ${ETCD_CERT_DIR}/${ETCD_KEY_FILE} \
--cacert ${ETCD_CERT_DIR}/${ETCD_CA_FILE} \
--endpoints $ETCD_EP"
EOF
source /etc/profile.d/etcd.sh
```

Verify etcd

```
etcd_v3 endpoint status --write-out=table
```
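Aliases are only expanded in interactive shells, so `etcd_v3` won't work inside scripts or cron jobs. A shell function does the same job everywhere; this sketch also builds the endpoint list from the master IPs. The function names are hypothetical, and the certificate paths are the kubeadm defaults already used above.

```shell
# etcd_endpoints IP...: build the comma-separated https://IP:2379 list.
etcd_endpoints() {
    out=
    for ip in "$@"; do
        out="${out:+$out,}https://$ip:2379"
    done
    printf '%s\n' "$out"
}

ETCD_CERT_DIR=/etc/kubernetes/pki/etcd
ETCD_EP=$(etcd_endpoints 10.3.2.5 10.3.2.13 10.3.2.16)

# etcd_v3 ARGS...: run etcdctl (API v3) against all members using the
# kubeadm-generated healthcheck client certificate; replaces the alias.
etcd_v3() {
    ETCDCTL_API=3 etcdctl \
        --cert "$ETCD_CERT_DIR/healthcheck-client.crt" \
        --key "$ETCD_CERT_DIR/healthcheck-client.key" \
        --cacert "$ETCD_CERT_DIR/ca.crt" \
        --endpoints "$ETCD_EP" \
        "$@"
}

# Usage (not run here):
#   etcd_v3 endpoint status --write-out=table
#   etcd_v3 endpoint health
```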

Summary

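That's about it: the basic environment is installed. Since this article was written after the fact, some parts may not be entirely clear; I'll fill them in as I remember.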