Hadoop DataNode multi-directory configuration

- Configuration items

vim /opt/module/hadoop-3.1.3/etc/hadoop/hdfs-site.xml

<property>
  <name>dfs.datanode.data.dir</name>
  <value>file:///home/hadoop/dfs/data,file:///opt/module/hadoop-3.1.3/data/dfs/data</value>
</property>
<!-- Choose the disk for each block replica by remaining free space, so that every disk gets used and usage stays balanced across disks -->
<property>
  <name>dfs.datanode.fsdataset.volume.choosing.policy</name>
  <value>org.apache.hadoop.hdfs.server.datanode.fsdataset.AvailableSpaceVolumeChoosingPolicy</value>
</property>
<property>
  <name>dfs.datanode.available-space-volume-choosing-policy.balanced-space-threshold</name>
  <value>10737418240</value>
</property>
<property>
  <name>dfs.datanode.available-space-volume-choosing-policy.balanced-space-preference-fraction</name>
  <value>0.75f</value>
</property>
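As a quick sanity check on these settings, the following minimal Python sketch parses a configuration fragment and extracts the data directories and the two tuning values. The embedded XML mirrors the values in this note; the `<configuration>` wrapper is the usual root element of hdfs-site.xml, and the helper names `load_properties` and `data_dirs` are hypothetical, not part of Hadoop.

```python
import xml.etree.ElementTree as ET

# Sample fragment copied from the values in this note (assumed to sit
# inside the usual <configuration> root of hdfs-site.xml).
SAMPLE = """
<configuration>
  <property>
    <name>dfs.datanode.data.dir</name>
    <value>file:///home/hadoop/dfs/data,file:///opt/module/hadoop-3.1.3/data/dfs/data</value>
  </property>
  <property>
    <name>dfs.datanode.available-space-volume-choosing-policy.balanced-space-threshold</name>
    <value>10737418240</value>
  </property>
  <property>
    <name>dfs.datanode.available-space-volume-choosing-policy.balanced-space-preference-fraction</name>
    <value>0.75f</value>
  </property>
</configuration>
"""

PREFIX = "dfs.datanode.available-space-volume-choosing-policy."


def load_properties(xml_text):
    """Return a {name: value} dict for every <property> element."""
    root = ET.fromstring(xml_text.strip())
    props = {}
    for prop in root.iter("property"):
        name = prop.findtext("name")
        value = prop.findtext("value")
        if name is not None and value is not None:
            props[name.strip()] = value.strip()
    return props


def data_dirs(props):
    """Split the comma-separated dfs.datanode.data.dir value into a list."""
    raw = props.get("dfs.datanode.data.dir", "")
    return [d.strip() for d in raw.split(",") if d.strip()]


props = load_properties(SAMPLE)
dirs = data_dirs(props)
threshold = int(props[PREFIX + "balanced-space-threshold"])
# The value carries a Java-style float suffix ("0.75f"); strip it for Python.
fraction = float(props[PREFIX + "balanced-space-preference-fraction"].rstrip("fF"))

print(dirs)
print(threshold, fraction)
```

To check a real file instead of the sample, read it with `open(path).read()` and pass the text to `load_properties`.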

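dfs.datanode.fsdataset.volume.choosing.policy must be set explicitly, because without it the datanode falls back to plain round-robin volume selection. For details see: https://blog.csdn.net/bigdatahappy/article/details/39992075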

dfs.datanode.available-space-volume-choosing-policy.balanced-space-threshold defaults to 10737418240, i.e. 10 GiB, and the default is usually fine. The official description of this option:

This setting controls how much DN volumes are allowed to differ in terms of bytes of free disk space before they are considered imbalanced. If the free space of all the volumes are within this range of each other, the volumes will be considered balanced and block assignments will be done on a pure round robin basis.

In other words, two values are computed first: the largest free space among all disks and the smallest free space among all disks. If the difference between them is less than the threshold set by this option, disks are chosen for block replicas in round-robin fashion.
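The threshold check described here can be sketched in a few lines of Python. This is an illustration of the rule as stated in this note, not Hadoop's actual code; the function name `volumes_balanced` and the sample free-space figures are hypothetical.

```python
GIB = 1024 ** 3
DEFAULT_THRESHOLD = 10 * GIB  # 10737418240 bytes, the default quoted in this note


def volumes_balanced(free_bytes, threshold=DEFAULT_THRESHOLD):
    """Return True when the gap between the most and least free volume
    is below the threshold, i.e. round-robin placement would be used."""
    return max(free_bytes) - min(free_bytes) < threshold


# Two disks with 100 GiB and 95 GiB free differ by 5 GiB: treated as balanced.
print(volumes_balanced([100 * GIB, 95 * GIB]))
# Two disks with 100 GiB and 20 GiB free differ by 80 GiB: treated as imbalanced.
print(volumes_balanced([100 * GIB, 20 * GIB]))
```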

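dfs.datanode.available-space-volume-choosing-policy.balanced-space-preference-fraction defaults to 0.75f, and the default is usually fine. The official description of this option:

This setting controls what percentage of new block allocations will be sent to volumes with more available disk space than others. This setting should be in the range 0.0 - 1.0, though in practice 0.5 - 1.0, since there should be no reason to prefer that volumes with less available disk space receive more block allocations.

In other words, this is the share of new block replicas that should go to the disks with more free space. The value ranges from 0.0 to 1.0 and in practice is set between 0.5 and 1.0. If it is set too low, disks with plenty of free space receive too few replicas while disks that are short on space have to store more, which leaves the disks unbalanced.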
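To make the effect of the preference fraction concrete, here is a deliberately simplified Python model. It assumes volumes are split into a "low" group (free space within the threshold of the emptiest-of-space volume) and a "high" group (everything above that), and that a new block goes to the high group with probability equal to the fraction. The real policy has further details that this sketch leaves out (for example, it uses round-robin inside a group, where this sketch picks randomly). The names `split_volumes` and `pick_volume` are hypothetical.

```python
import random

GIB = 1024 ** 3


def split_volumes(free_bytes, threshold):
    """Split volume indexes into (low, high) relative to the smallest free space."""
    floor = min(free_bytes)
    low = [i for i, f in enumerate(free_bytes) if f <= floor + threshold]
    high = [i for i, f in enumerate(free_bytes) if f > floor + threshold]
    return low, high


def pick_volume(free_bytes, threshold=10 * GIB, fraction=0.75, rng=random):
    """Pick a volume index for one new block under the simplified model."""
    low, high = split_volumes(free_bytes, threshold)
    if not high:
        # Every volume is within the threshold: treated as balanced.
        return rng.choice(low)
    # Send roughly `fraction` of new blocks to the volumes with more free space.
    group = high if rng.random() < fraction else low
    return rng.choice(group)


# One roomy disk (index 0) and one nearly full disk (index 1).
rng = random.Random(0)
picks = [pick_volume([200 * GIB, 20 * GIB], rng=rng) for _ in range(10000)]
print("share sent to the roomy disk:", picks.count(0) / len(picks))
```

With a fraction of 0.75 the roomy disk should receive roughly three quarters of the simulated blocks; lowering the fraction toward 0.5 evens the split out, which is why very low values defeat the purpose of the policy.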

If a disk directory is added later, the existing data needs to be rebalanced across the disks. The steps are:

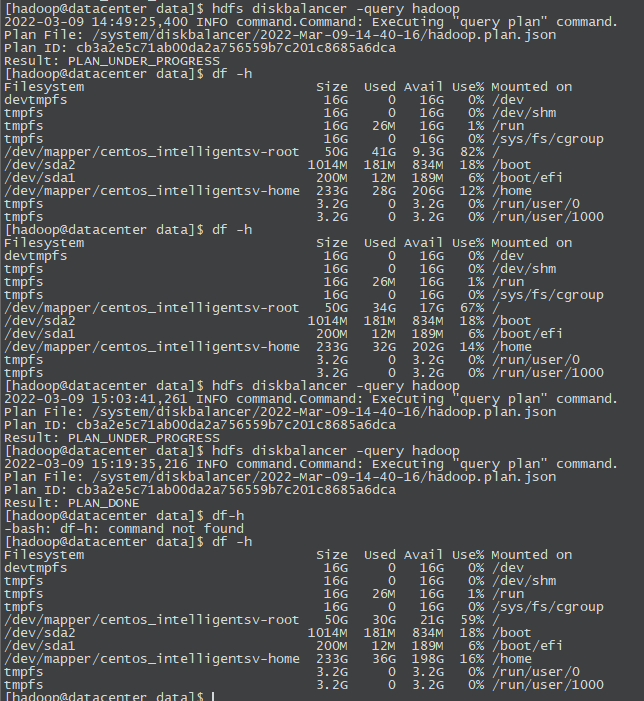

a. hdfs diskbalancer -plan hadoop # -plan is followed by the node name; the command generates a plan.json file and prints its path

b. hdfs diskbalancer -execute /system/diskbalancer/2022-Mar-09-14-40-16/hadoop.plan.json

c. hdfs diskbalancer -query hadoop # Result:PLAN_UNDER_PROGRESS means balancing is still running, PLAN_DONE means it has finished

d. If disk usage on the node does not differ much, the command reports "DiskBalancing not needed for node:"
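The plan / execute / query sequence above could be scripted roughly as follows. This Python sketch only builds the three command lines from this note and parses the `Result:` field of the query output; it does not run anything itself. The helper names are hypothetical, and the sample output strings are assumptions that contain only the `Result:` token mentioned above, not real captured logs.

```python
import re


def plan_cmd(node):
    """Step a: generate a plan for the given node name."""
    return ["hdfs", "diskbalancer", "-plan", node]


def execute_cmd(plan_path):
    """Step b: execute the plan file whose path step a printed."""
    return ["hdfs", "diskbalancer", "-execute", plan_path]


def query_cmd(node):
    """Step c: query the progress on the given node."""
    return ["hdfs", "diskbalancer", "-query", node]


def parse_result(query_output):
    """Extract the status after 'Result:' or return None if it is absent."""
    match = re.search(r"Result:\s*(\w+)", query_output)
    return match.group(1) if match else None


def is_done(query_output):
    """True once the query reports PLAN_DONE."""
    return parse_result(query_output) == "PLAN_DONE"


# Assumed output shapes, for illustration only.
print(parse_result("Result:PLAN_UNDER_PROGRESS"))
print(is_done("Result: PLAN_DONE"))
print(" ".join(plan_cmd("hadoop")))
```

On a host where the `hdfs` command is available, each list can be passed to `subprocess.run(cmd, capture_output=True, text=True)` and the captured stdout fed to `parse_result`, polling step c until `is_done` returns true.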

- The problem this caused

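When Hadoop was first initialized, the configured datanode directory had too little capacity, so multiple datanode directories were set up. The first attempt, however, only added the extra directory to dfs.datanode.data.dir and did not set the datanode volume choosing policy. As a result, MapReduce jobs hung as soon as they were submitted.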

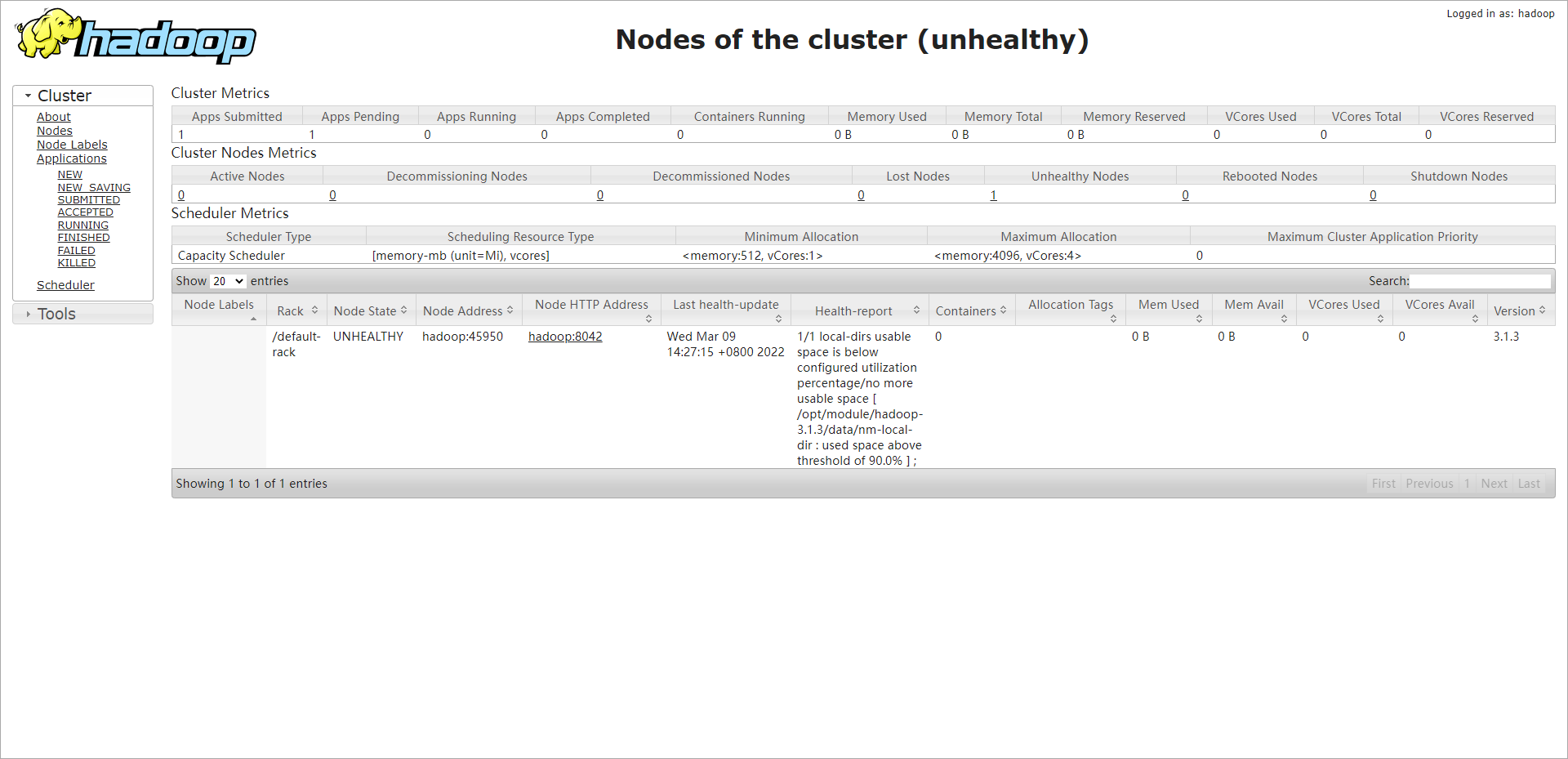

The YARN logs showed an Unhealthy Nodes problem: 1/1 local-dirs usable space is below configured utilization percentage/no more usable space [ /opt/module/hadoop-3.1.3/data/nm-local-dir : used space above threshold of 90.0% ] ; similar to the screenshot.
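The message above says the directory was rejected because its used space exceeded 90.0%. A rough way to check a directory against that limit before submitting jobs is sketched below in Python. It assumes the 90.0 figure is the NodeManager per-disk utilization limit (in YARN this is typically controlled by `yarn.nodemanager.disk-health-checker.max-disk-utilization-per-disk-percentage`); the function names are hypothetical, and the check is an approximation of the idea, not the NodeManager's own logic.

```python
import shutil


def used_percent(used, total):
    """Percentage of the filesystem that is in use."""
    return 100.0 * used / total


def dir_is_usable(used, total, max_pct=90.0):
    """True while usage stays at or below the limit (90.0% in the log message)."""
    return used_percent(used, total) <= max_pct


def check_path(path, max_pct=90.0):
    """Measure the filesystem holding `path` and apply the same rule."""
    usage = shutil.disk_usage(path)
    return dir_is_usable(usage.used, usage.total, max_pct)


# Check the filesystem of the current directory; on the cluster this would be
# the nm-local-dir path from the log message.
print(check_path("."))
```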

Balancing data across nodes and across disks within a node

Across nodes: https://blog.csdn.net/qq_34477362/article/details/82662378

Across disks within a node: https://blog.csdn.net/Androidlushangderen/article/details/51776103