Understanding Docker Networking

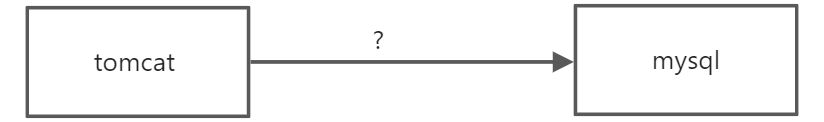

Question: how does Docker handle network access?

#Start a Tomcat container
docker run -d -P --name tomcat01 tomcat
#Check the container's internal network addresses with ip addr: on startup the container gets an eth0@ifN interface (eth0@if5 below) whose IP address is assigned by Docker
#If this fails with: OCI runtime exec failed: exec failed: unable to start container process: exec: "ip": executable file not found in $PATH: unknown
#Fix: enter the container and run apt update && apt install -y iproute2
[root@VM-24-6-centos ~]# docker exec -it tomcat01 ip addr
1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000
    link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00
    inet 127.0.0.1/8 scope host lo
       valid_lft forever preferred_lft forever
4: eth0@if5: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UP group default
    link/ether 02:42:ac:11:00:02 brd ff:ff:ff:ff:ff:ff link-netnsid 0
    inet 172.17.0.2/16 brd 172.17.255.255 scope global eth0
       valid_lft forever preferred_lft forever
#Question: can the Linux host ping this IP?
[root@VM-24-6-centos ~]# ping 172.17.0.2
PING 172.17.0.2 (172.17.0.2) 56(84) bytes of data.
64 bytes from 172.17.0.2: icmp_seq=1 ttl=64 time=0.045 ms
64 bytes from 172.17.0.2: icmp_seq=2 ttl=64 time=0.042 ms
64 bytes from 172.17.0.2: icmp_seq=3 ttl=64 time=0.050 ms
64 bytes from 172.17.0.2: icmp_seq=4 ttl=64 time=0.053 ms
64 bytes from 172.17.0.2: icmp_seq=5 ttl=64 time=0.051 ms
#The Linux host can ping the container

:::info How it works :::

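- Each time we start a Docker container, Docker assigns it an IP address. Once Docker is installed, the host has a network interface named docker0. It works in bridge mode and relies on veth-pair technology.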

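Run ip addr again to test.

Start another container, tomcat02, and check ip addr once more.

#We find that the interfaces that come with the containers always appear in pairs
#A veth pair is a pair of virtual device interfaces that always come together: one end attaches to the protocol stack, and the two ends are connected to each other
#Because of this property, a veth pair can act as a bridge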
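The pairing can be checked from the interface names themselves: `eth0@if5` with index 4 inside the container means its peer is interface 5 on the host, and that host interface should in turn point back at index 4. The following is a minimal sketch that extracts both numbers from an `ip addr` header line. The function name `veth_peer_index` and the host interface name `veth6a1f2c3` are made up for illustration; only the `4: eth0@if5` line comes from the output above.

```shell
# veth_peer_index LINE: given an interface header line from `ip addr`, e.g.
#   "4: eth0@if5: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 ..."
# print "<own index> <peer index>". Returns 1 when the interface has no
# "@ifN" suffix (the loopback device, for instance).
veth_peer_index() {
  pair=$(printf '%s\n' "$1" |
    sed -n 's/^\([0-9][0-9]*\): [^@:]*@if\([0-9][0-9]*\):.*/\1 \2/p')
  [ -n "$pair" ] && printf '%s\n' "$pair"
}

# Container side, copied from the tomcat01 output.
veth_peer_index "4: eth0@if5: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500"
# Host side; the name veth6a1f2c3 is hypothetical, the mirrored indexes are the point.
veth_peer_index "5: veth6a1f2c3@if4: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500"
```

The two calls print `4 5` and `5 4`: each end of the pair names the other as its peer.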

- Test whether tomcat01 and tomcat02 can ping each other

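docker exec -it tomcat02 ping 172.17.0.2
#Conclusion: containers can ping each other

Drawing the network model leads to this conclusion: tomcat01 and tomcat02 share the same bridge, docker0, which acts as their router.

Unless a network is specified, every container is routed through docker0, and Docker assigns each container an available IP address by default.

Summary: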

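All network interfaces in Docker are virtual [virtual interfaces forward packets efficiently]

As soon as a container is removed, its veth pair on the bridge disappears as well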

--link

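:::info Consider this problem: we wrote a microservice configured with database url=ip. If the database IP changes while the project keeps running, the connection breaks. Can we handle this by reaching the container by name instead? :::

Before that, we write our own Dockerfiles for centos7 and tomcat to make the later steps easier, because the stock Docker images would be inconvenient for what follows.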

FROM centos:7
MAINTAINER houyifan<1614397071@qq.com>
ENV MYPATH /
WORKDIR $MYPATH
RUN yum install -y tomcat vim net-tools iputils
EXPOSE 80
#Only the last CMD in a Dockerfile takes effect, so the earlier echo commands are dropped
CMD /bin/bash

FROM centos7:1
MAINTAINER houyifan<16143970701@qq.com>
COPY readme.txt /usr/local/readme.txt
ADD jdk-8u331-linux-x64.tar.gz /usr/local/
ADD apache-tomcat-9.0.22.tar.gz /usr/local/
ENV MYPATH /usr/local
WORKDIR $MYPATH
ENV JAVA_HOME /usr/local/jdk1.8.0_331
ENV CLASSPATH $JAVA_HOME/lib/dt.jar:$JAVA_HOME/lib/tools.jar
ENV CATALINA_HOME /usr/local/apache-tomcat-9.0.22
ENV CATALINA_BASE /usr/local/apache-tomcat-9.0.22
ENV PATH $PATH:$JAVA_HOME/bin:$CATALINA_HOME/lib:$CATALINA_HOME/bin
EXPOSE 8080
#catalina.sh run keeps Tomcat in the foreground; startup.sh alone returns immediately and the container would exit
ENTRYPOINT ["/usr/local/apache-tomcat-9.0.22/bin/catalina.sh", "run"]

With the images built, we can begin.

[root@VM-24-6-centos tomcat]# docker exec -it tomcat03 ping tomcat02
ping: tomcat02: Name or service not known
#How can we solve this?
#--link solves it
[root@VM-24-6-centos tomcat]# docker run -d -P --name tomcat03 --link tomcat02 tomcat:1.0
cae72aa0ca086b97bfeb0e1ed2c532a0a748d72997cbeaa0321af73cf904d48f
[root@VM-24-6-centos tomcat]# docker exec -it tomcat03 ping tomcat02
PING tomcat02 (172.17.0.3) 56(84) bytes of data.
64 bytes from tomcat02 (172.17.0.3): icmp_seq=1 ttl=64 time=0.107 ms
64 bytes from tomcat02 (172.17.0.3): icmp_seq=2 ttl=64 time=0.061 ms
64 bytes from tomcat02 (172.17.0.3): icmp_seq=3 ttl=64 time=0.052 ms
64 bytes from tomcat02 (172.17.0.3): icmp_seq=4 ttl=64 time=0.060 ms
#Does it work in the reverse direction?
[root@VM-24-6-centos tomcat]# docker exec -it tomcat02 ping tomcat03
ping: tomcat03: Name or service not known
#No, it does not

Investigation:

Is it simply that tomcat03 has an entry for tomcat02 configured locally?

#Inspect the hosts file; this is where the mechanism shows up
[root@VM-24-6-centos tomcat]# docker exec -it tomcat03 cat /etc/hosts
127.0.0.1 localhost
::1 localhost ip6-localhost ip6-loopback
fe00::0 ip6-localnet
ff00::0 ip6-mcastprefix
ff02::1 ip6-allnodes
ff02::2 ip6-allrouters
172.17.0.3 tomcat02 e28643de30e4
172.17.0.4 cae72aa0ca08

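Root cause: --link simply adds the entry 172.17.0.3 tomcat02 e28643de30e4 to the hosts file of tomcat03. Nothing is written on the tomcat02 side, which is why the reverse lookup fails.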
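Because the mechanism is just a static hosts entry, the lookup can be imitated with a few lines of shell. This is a rough sketch of a hosts-file lookup, not the resolver's actual implementation; the function name `lookup_host` is hypothetical, and the sample file reuses the entries shown in the tomcat03 output.

```shell
# lookup_host NAME [HOSTS_FILE]: print the IP of the first entry that lists
# NAME as a hostname or alias. Returns 1 when no entry matches.
lookup_host() (
  name="$1"
  file="${2:-/etc/hosts}"
  awk -v n="$name" '
    /^[[:space:]]*#/ { next }   # skip comment lines
    { for (i = 2; i <= NF; i++) if ($i == n) { print $1; found = 1; exit } }
    END { exit (found ? 0 : 1) }
  ' "$file"
)

# Sample file with the entries that --link produced inside tomcat03.
hosts_file=$(mktemp)
cat > "$hosts_file" <<'EOF'
127.0.0.1 localhost
172.17.0.3 tomcat02 e28643de30e4
172.17.0.4 cae72aa0ca08
EOF

lookup_host tomcat02 "$hosts_file"
lookup_host tomcat03 "$hosts_file" || echo "tomcat03: no entry"
rm -f "$hosts_file"
```

The first lookup succeeds because of the linked entry. A name that was never written to the file cannot be resolved, which mirrors the failed ping from tomcat02 to tomcat03.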

Docker no longer recommends using --link!

Custom Networks

[root@VM-24-6-centos ~]# docker network ls
NETWORK ID     NAME      DRIVER    SCOPE
7276891c6bf8   bridge    bridge    local
564033f348d0   host      host      local
4d839be2fc1a   none      null      local

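Network modes:

bridge: bridge mode, attached through docker0 (the default network)

none: no networking configured

host: shares the host's network stack

container: joins another container's network (rarely used, very limited)

Test: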

#A container started without a network option gets --net bridge by default; that is our docker0
docker run -d -P --name tomcat01 tomcat:1.1
#The command above is equivalent to this one, with the default written out
docker run -d -P --name tomcat01 --net bridge tomcat:1.1
#Properties of docker0: it is the default, container names cannot be resolved, and --link can be used to connect containers
#We can define our own network
#--driver bridge: bridge mode (the default)
#--subnet 192.168.0.0/16: the subnet; container addresses run from 192.168.0.2 to 192.168.255.254
#--gateway 192.168.0.1: the gateway address
[root@VM-24-6-centos tomcat]# docker network create --driver bridge --subnet 192.168.0.0/16 --gateway 192.168.0.1 mynet
d8496e6216a0a5621e01b1d9715416090fc164cdf1b253219bcc0e67d326a918
[root@VM-24-6-centos tomcat]# docker network ls
NETWORK ID     NAME      DRIVER    SCOPE
7276891c6bf8   bridge    bridge    local
564033f348d0   host      host      local
d8496e6216a0   mynet     bridge    local
4d839be2fc1a   none      null      local
#Inspect the network we just created
[root@VM-24-6-centos tomcat]# docker network inspect mynet
[
    {
        "Name": "mynet",
        "Id": "d8496e6216a0a5621e01b1d9715416090fc164cdf1b253219bcc0e67d326a918",
        "Created": "2022-07-05T17:00:09.179458974+08:00",
        "Scope": "local",
        "Driver": "bridge",
        "EnableIPv6": false,
        "IPAM": {
            "Driver": "default",
            "Options": {},
            "Config": [
                {
                    "Subnet": "192.168.0.0/16",
                    "Gateway": "192.168.0.1"
                }
            ]
        },
        "Internal": false,
        "Attachable": false,
        "Ingress": false,
        "ConfigFrom": {
            "Network": ""
        },
        "ConfigOnly": false,
        "Containers": {},
        "Options": {},
        "Labels": {}
    }
]
#Next, start two Tomcat containers on mynet
docker run -d -P --name tomcat01-net01 --net mynet tomcat:1.1
docker run -d -P --name tomcat02-net01 --net mynet tomcat:1.1
[root@VM-24-6-centos tomcat]# docker inspect mynet
[
    {
        "Name": "mynet",
        "Id": "d8496e6216a0a5621e01b1d9715416090fc164cdf1b253219bcc0e67d326a918",
        "Created": "2022-07-05T17:00:09.179458974+08:00",
        "Scope": "local",
        "Driver": "bridge",
        "EnableIPv6": false,
        "IPAM": {
            "Driver": "default",
            "Options": {},
            "Config": [
                {
                    "Subnet": "192.168.0.0/16",
                    "Gateway": "192.168.0.1"
                }
            ]
        },
        "Internal": false,
        "Attachable": false,
        "Ingress": false,
        "ConfigFrom": {
            "Network": ""
        },
        "ConfigOnly": false,
        "Containers": {
            "472fe351df020183d89faf54829d1b1a0648ce436f66707bebded1cb8ea2e582": {
                "Name": "tomcat02-net01",
                "EndpointID": "685559af0451249e020f9ff8668c3b73cbccf71a01900fbe736ce901504322f4",
                "MacAddress": "02:42:c0:a8:00:03",
                "IPv4Address": "192.168.0.3/16",
                "IPv6Address": ""
            },
            "9bddd08a80d9ad4ca1d272362a2a2b2e0ba79cceeac7a6a4b4db5a18f1a95357": {
                "Name": "tomcat01-net01",
                "EndpointID": "1dfa54cc4d49478fdd4d6b52faf42e1c5ac20dfe05b5c5e633f379b7ce4a6816",
                "MacAddress": "02:42:c0:a8:00:02",
                "IPv4Address": "192.168.0.2/16",
                "IPv6Address": ""
            }
        },
        "Options": {},
        "Labels": {}
    }
]
#Now test connectivity again on our own mynet network
[root@VM-24-6-centos tomcat]# docker exec -it tomcat01-net01 ping 192.168.0.3
PING 192.168.0.3 (192.168.0.3) 56(84) bytes of data.
64 bytes from 192.168.0.3: icmp_seq=1 ttl=64 time=0.093 ms
64 bytes from 192.168.0.3: icmp_seq=2 ttl=64 time=0.052 ms
64 bytes from 192.168.0.3: icmp_seq=3 ttl=64 time=0.053 ms
#Without --link, containers on this network can now also be reached by name (for example ping tomcat01-net01); the test below uses the IP
[root@VM-24-6-centos tomcat]# docker exec -it tomcat02-net01 ping 192.168.0.2
PING 192.168.0.2 (192.168.0.2) 56(84) bytes of data.
64 bytes from 192.168.0.2: icmp_seq=1 ttl=64 time=0.063 ms
64 bytes from 192.168.0.2: icmp_seq=2 ttl=64 time=0.056 ms

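For a custom network, Docker already maintains the mapping between container names and addresses for us, so this is the recommended way to set up networking.

Benefit: different clusters use different networks, which keeps each cluster isolated, safe and healthy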
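The size of a custom network follows directly from the prefix length passed to --subnet. As a small worked example, the sketch below computes the number of usable host addresses for a prefix; the function name `usable_hosts` is hypothetical and the calculation assumes a prefix of at most 30. For mynet (192.168.0.0/16) one of these addresses, 192.168.0.1, is taken by the gateway.

```shell
# usable_hosts PREFIX: usable IPv4 host addresses in a subnet with the given
# prefix length (assumes PREFIX <= 30). Two addresses are excluded:
# the network address and the broadcast address.
usable_hosts() {
  echo $(( (1 << (32 - $1)) - 2 ))
}

usable_hosts 16   # 192.168.0.0/16, as used for mynet
usable_hosts 24   # a smaller subnet, for comparison
```

The two calls print 65534 and 254. A /16 is far larger than a handful of containers needs, so a narrower prefix is often enough for a small cluster.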

Connecting Networks

#Test connecting tomcat01 to mynet
docker network connect mynet tomcat01
#After connecting, tomcat01 is attached to the mynet network as well
#The official docs describe this as one container with two IP addresses
#It is similar to a cloud server having both a public IP and a private IP

Test:

[root@VM-24-6-centos tomcat]# docker exec -it tomcat01 ping tomcat01-net01
PING tomcat01-net01 (192.168.0.2) 56(84) bytes of data.
64 bytes from tomcat01-net01.mynet (192.168.0.2): icmp_seq=1 ttl=64 time=0.095 ms
64 bytes from tomcat01-net01.mynet (192.168.0.2): icmp_seq=2 ttl=64 time=0.041 ms
64 bytes from tomcat01-net01.mynet (192.168.0.2): icmp_seq=3 ttl=64 time=0.040 ms
--- tomcat01-net01 ping statistics ---
3 packets transmitted, 3 received, 0% packet loss, time 2000ms
rtt min/avg/max/mdev = 0.040/0.058/0.095/0.027 ms
#tomcat01 and tomcat01-net01 can now reach each other
[root@VM-24-6-centos tomcat]# docker exec -it tomcat02 ping tomcat01-net01
ping: tomcat01-net01: Name or service not known
#tomcat02 has not been connected to mynet, so it cannot ping tomcat01-net01

Conclusion:

To reach containers on another network, attach the container to that network with docker network connect!
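Before connecting a container it can be handy to check whether it is already attached to the target network. The following is a minimal sketch under stated assumptions: the helper name `connect_if_needed` and the `DOCKER` override variable are inventions for this example, while `docker inspect -f` with a Go template over `.NetworkSettings.Networks` and `docker network connect` are the standard CLI calls.

```shell
# DOCKER can be overridden (for example with a stub) to try the logic
# without a running daemon. This variable is a convention of this sketch.
DOCKER="${DOCKER:-docker}"

# connect_if_needed NETWORK CONTAINER
# Attach CONTAINER to NETWORK unless it is attached already.
connect_if_needed() {
  network="$1"
  container="$2"
  # Names of all networks the container is attached to, space separated.
  attached=$($DOCKER inspect -f \
    '{{range $k, $v := .NetworkSettings.Networks}}{{$k}} {{end}}' \
    "$container") || return 1
  case " $attached " in
    *" $network "*) echo "$container is already attached to $network" ;;
    *) $DOCKER network connect "$network" "$container" ;;
  esac
}

# Usage (needs a running Docker daemon, so it is left commented out):
#   connect_if_needed mynet tomcat01
#   connect_if_needed mynet tomcat02
```

With this helper, giving tomcat02 access to mynet is a single call, and running it a second time is harmless.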

Hands-on: Deploying a Redis Cluster

Shell script

#Create the network

docker network create redis --subnet 172.38.0.0/16

#Use a script to create the six Redis configurations

for port in $(seq 1 6); do
mkdir -p /mydata/redis/node-${port}/conf
touch /mydata/redis/node-${port}/conf/redis.conf
cat << EOF >/mydata/redis/node-${port}/conf/redis.conf
port 6379
bind 0.0.0.0
cluster-enabled yes
cluster-config-file nodes.conf
cluster-node-timeout 5000
cluster-announce-ip 172.38.0.1${port}
cluster-announce-port 6379
cluster-announce-bus-port 16379
appendonly yes
EOF
done

#Start one container per node in a loop; this creates the same containers as the six explicit docker run commands, so use only one of the two forms
for port in $(seq 1 6); do
docker run -p 637${port}:6379 -p 1637${port}:16379 --name redis-${port} \
-v /mydata/redis/node-${port}/data:/data \
-v /mydata/redis/node-${port}/conf/redis.conf:/etc/redis/redis.conf \
-d --net redis --ip 172.38.0.1${port} redis:5.0.9-alpine3.11 redis-server /etc/redis/redis.conf
done

#Start Redis: the six containers written out explicitly, one command per node

docker run -p 6371:6379 -p 16371:16379 --name redis-1 \
-v /mydata/redis/node-1/data:/data \
-v /mydata/redis/node-1/conf/redis.conf:/etc/redis/redis.conf \
-d --net redis --ip 172.38.0.11 redis:5.0.9-alpine3.11 redis-server /etc/redis/redis.conf

docker run -p 6372:6379 -p 16372:16379 --name redis-2 \
-v /mydata/redis/node-2/data:/data \
-v /mydata/redis/node-2/conf/redis.conf:/etc/redis/redis.conf \
-d --net redis --ip 172.38.0.12 redis:5.0.9-alpine3.11 redis-server /etc/redis/redis.conf

docker run -p 6373:6379 -p 16373:16379 --name redis-3 \
-v /mydata/redis/node-3/data:/data \
-v /mydata/redis/node-3/conf/redis.conf:/etc/redis/redis.conf \
-d --net redis --ip 172.38.0.13 redis:5.0.9-alpine3.11 redis-server /etc/redis/redis.conf

docker run -p 6374:6379 -p 16374:16379 --name redis-4 \
-v /mydata/redis/node-4/data:/data \
-v /mydata/redis/node-4/conf/redis.conf:/etc/redis/redis.conf \
-d --net redis --ip 172.38.0.14 redis:5.0.9-alpine3.11 redis-server /etc/redis/redis.conf

docker run -p 6375:6379 -p 16375:16379 --name redis-5 \
-v /mydata/redis/node-5/data:/data \
-v /mydata/redis/node-5/conf/redis.conf:/etc/redis/redis.conf \
-d --net redis --ip 172.38.0.15 redis:5.0.9-alpine3.11 redis-server /etc/redis/redis.conf

docker run -p 6376:6379 -p 16376:16379 --name redis-6 \
-v /mydata/redis/node-6/data:/data \
-v /mydata/redis/node-6/conf/redis.conf:/etc/redis/redis.conf \
-d --net redis --ip 172.38.0.16 redis:5.0.9-alpine3.11 redis-server /etc/redis/redis.conf

#Create the cluster: enter redis-1 with docker exec -it redis-1 /bin/sh. Note that the Redis image has no bash, so sh must be used here

/data # redis-cli --cluster create 172.38.0.11:6379 172.38.0.12:6379 172.38.0.13:6379 172.38.0.14:6379 172.38.0.15:6379 172.38.0.16:6379 --cluster-replicas 1
>>> Performing hash slots allocation on 6 nodes...
Master[0] -> Slots 0 - 5460
Master[1] -> Slots 5461 - 10922
Master[2] -> Slots 10923 - 16383
Adding replica 172.38.0.15:6379 to 172.38.0.11:6379
Adding replica 172.38.0.16:6379 to 172.38.0.12:6379
Adding replica 172.38.0.14:6379 to 172.38.0.13:6379
M: 918d0b2598975a9d5f99b4ba103eb283cc12eb54 172.38.0.11:6379
   slots:[0-5460] (5461 slots) master
M: f9b8cf2bea2a4385dcb1b985c686b3811facef12 172.38.0.12:6379
   slots:[5461-10922] (5462 slots) master
M: 522c023534a66772bd916e79a0b0305e6afa2314 172.38.0.13:6379
   slots:[10923-16383] (5461 slots) master
S: de2bf7d41bfe48ebb5f031e6550f1a1da7d979ac 172.38.0.14:6379
   replicates 522c023534a66772bd916e79a0b0305e6afa2314
S: eb089036ed6f48aa43cfae90b961a455d5595801 172.38.0.15:6379
   replicates 918d0b2598975a9d5f99b4ba103eb283cc12eb54
S: 0fcfdb126e58fe1d6e9efc2303969dfaede6fdff 172.38.0.16:6379
   replicates f9b8cf2bea2a4385dcb1b985c686b3811facef12
Can I set the above configuration? (type 'yes' to accept): yes
>>> Nodes configuration updated
>>> Assign a different config epoch to each node
>>> Sending CLUSTER MEET messages to join the cluster
Waiting for the cluster to join
...
>>> Performing Cluster Check (using node 172.38.0.11:6379)
M: 918d0b2598975a9d5f99b4ba103eb283cc12eb54 172.38.0.11:6379
   slots:[0-5460] (5461 slots) master
   1 additional replica(s)
S: 0fcfdb126e58fe1d6e9efc2303969dfaede6fdff 172.38.0.16:6379
   slots: (0 slots) slave
   replicates f9b8cf2bea2a4385dcb1b985c686b3811facef12
S: eb089036ed6f48aa43cfae90b961a455d5595801 172.38.0.15:6379
   slots: (0 slots) slave
   replicates 918d0b2598975a9d5f99b4ba103eb283cc12eb54
M: f9b8cf2bea2a4385dcb1b985c686b3811facef12 172.38.0.12:6379
   slots:[5461-10922] (5462 slots) master
   1 additional replica(s)
M: 522c023534a66772bd916e79a0b0305e6afa2314 172.38.0.13:6379
   slots:[10923-16383] (5461 slots) master
   1 additional replica(s)
S: de2bf7d41bfe48ebb5f031e6550f1a1da7d979ac 172.38.0.14:6379
   slots: (0 slots) slave
   replicates 522c023534a66772bd916e79a0b0305e6afa2314
[OK] All nodes agree about slots configuration.
>>> Check for open slots...
>>> Check slots coverage...
[OK] All 16384 slots covered.
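The node list passed to redis-cli --cluster create follows the same 172.38.0.1N pattern as the container IPs, so it can be generated instead of typed by hand. This is a small sketch under that assumption; the function name `cluster_nodes` is hypothetical, and the address scheme only works for up to nine nodes.

```shell
# cluster_nodes COUNT [PORT]: print a space-separated node list such as
#   172.38.0.11:6379 172.38.0.12:6379 ...
# following the 172.38.0.1N addressing used for the redis network (COUNT <= 9).
cluster_nodes() (
  count="$1"
  port="${2:-6379}"
  list=""
  n=1
  while [ "$n" -le "$count" ]; do
    # Add a separating space only when the list already has an entry.
    list="$list${list:+ }172.38.0.1$n:$port"
    n=$((n + 1))
  done
  printf '%s\n' "$list"
)

cluster_nodes 6
# Inside redis-1 the result could be passed on unquoted so that it splits
# into separate arguments:
#   redis-cli --cluster create $(cluster_nodes 6) --cluster-replicas 1
```

The function uses only POSIX shell features, so it also runs under the sh of the Alpine-based Redis image.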

The Redis cluster on Docker is now set up.

Once we start using Docker, all of these technologies gradually become simpler to work with.