View container logs

kubectl logs <pod-name> -n <namespace>

View events

kubectl describe pod <pod-name> -n <namespace>

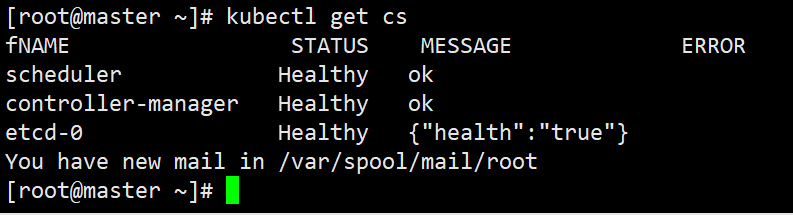

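View the status of the master components

kubectl get cs

View node status

kubectl get node

View the URLs proxied by the API server

kubectl cluster-info

List the available resource types

kubectl api-resources

View detailed cluster information

kubectl cluster-info dump

View the details of a resource

kubectl describe <resource> <name>

List resources

kubectl get pod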

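Question: why does kubectl get cs not show the status of the api-server component?

Answer: the command itself is handled by the api-server, so when it runs successfully the api-server is necessarily working.

View the master IP

kubectl get ep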

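Resource monitoring

metrics-server + cAdvisor monitor the resource consumption of the cluster

kubectl top -> apiserver -> metrics-server -> kubelet (cAdvisor)

Metrics Server is a cluster-wide aggregator of resource usage data, deployed as an application inside the cluster. It collects metrics from the kubelet API on every node and is registered with the master API server through the Kubernetes aggregation layer.

Deploy metrics-server

1) Download the metrics-server YAML file

wget https://github.com/kubernetes-sigs/metrics-server/releases/latest/download/components.yaml

2) Edit the file

3) Apply the YAML file

kubectl apply -f components.yaml

View Pod resource consumption

kubectl top pod

View node resource consumption

kubectl top node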
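
The output of kubectl top pod can be post-processed with ordinary shell tools. The sketch below assumes the usual three-column --no-headers layout (name, CPU in millicores such as 250m, memory) and prints the Pod with the highest CPU value. The function name top_cpu_pod and the sample rows are hypothetical.

```shell
# Read `kubectl top pod --no-headers` style rows on stdin and print the
# name of the row with the highest CPU value.
# Assumption: column 2 is CPU in millicores, e.g. "250m".
top_cpu_pod() {
  awk '{
    cpu = $2
    sub(/m$/, "", cpu)            # strip the millicore suffix
    if (NR == 1 || cpu + 0 > max) { max = cpu + 0; name = $1 }
  } END { if (NR > 0) print name }'
}

# Hypothetical sample rows standing in for real cluster output.
printf '%s\n' 'web-a 12m 20Mi' 'web-b 250m 64Mi' 'web-c 7m 10Mi' | top_cpu_pod
```

Against a live cluster with metrics-server running, the same function could be fed with kubectl top pod -l app=web --no-headers | top_cpu_pod.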

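K8S component logs

Categories:

Logs of the K8S system components

Logs of applications deployed in the K8S cluster

- Standard output (the logs shown by kubectl logs)

- Log files (logs kept inside the container)

Log types

Components managed by the systemd daemon

journalctl -u kubelet

Components deployed as Pods

kubectl logs POD_NAME -n NAMESPACE

System logs

/var/log/messages

Flow for viewing standard output logs in K8S:

kubectl logs -> apiserver -> kubelet -> docker (which captures the container's standard output and writes it under /var/lib/docker/containers/)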
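
Because kubectl logs prints the container's standard output, it can be piped into ordinary text tools. A minimal sketch, assuming the lines of interest contain the literal string Error. The function name save_error_lines and the sample lines are hypothetical.

```shell
# Copy every stdin line that contains "Error" into the file given as $1.
save_error_lines() {
  # grep exits with 1 when nothing matches; treat that as "no errors found".
  grep 'Error' > "$1" || true
}

# Hypothetical log lines standing in for `kubectl logs` output.
out="$(mktemp)"
printf '%s\n' 'GET / 200' 'Error: upstream timed out' 'GET /health 200' \
  | save_error_lines "$out"
cat "$out"
rm -f "$out"
```

With a real Pod, the input would come from kubectl logs POD_NAME -n NAMESPACE | save_error_lines <file>.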

K8S application log management

Application log files inside a container can be written to an emptyDir volume, which stores them on the host for the lifetime of the Pod

Host path:

/var/lib/kubelet/pods/

    [root@master ~]# cat web-log.yaml
    apiVersion: v1
    kind: Pod
    metadata:
      name: my-pod
    spec:
      containers:
      - name: web
        image: lizhenliang/nginx-php
        volumeMounts:
        - name: logs
          mountPath: /usr/local/nginx/logs
      - name: log
        image: busybox
        args: [/bin/sh,-c,'tail -f /opt/access.log']
        volumeMounts:
        - name: logs
          mountPath: /opt
      volumes:
      - name: logs
        emptyDir: {}

Building a logging platform

ELK (heavyweight): Elasticsearch + Logstash + Kibana

Graylog, Loki: lightweight

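Exercises:

1. View the logs of a Pod and save the lines containing Error to the specified file

Pod name: web

File: /opt/web

2. Find the Pod with the highest CPU usage among those carrying the given label and record it in the specified file

Label: app=web

File: /opt/cpu

3. Create a sidecar container in a Pod that reads the application container's logs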

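Application deployment workflow

Basic ways to write a Pod template YAML file

Generate it with the create command

kubectl create deployment nginx --image=nginx:1.16 -o yaml --dry-run=client > my-deploy.yaml

Export it with the get command

kubectl get deployment nginx -o yaml > my-deploy.yaml

If you have forgotten how a Pod container field is spelled

kubectl explain pods.spec.containers

kubectl explain deployment

Trade-offs between the command line and YAML

1. The command line is mostly used for ad hoc testing

2. YAML files are easy to reuse and are recommended for more complex deployments

Application deployment workflow

Application -> deploy -> upgrade -> roll back -> take offline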

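Three ways to upgrade an application

1. kubectl apply -f xx.yaml

2. kubectl set image deployment/web nginx=nginx:1.16

3. kubectl edit deployment/web

Rolling upgrade: the default K8S strategy for upgrading Pods. Old-version Pods are replaced step by step with new-version Pods, which gives a zero-downtime release that users do not notice

Rollback:

When a new version is faulty, roll back to an earlier working version

kubectl rollout history deployment/web    # view the revision history

kubectl rollout undo deployment/web    # roll back to the previous revision

kubectl rollout undo deployment/web --to-revision=2    # roll back to a specific revision

Note: a rollback redeploys the state of an earlier deployment, that is, the complete configuration of that revision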
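
The upgrade and rollback commands above can be chained so that a rollout that does not complete is undone automatically. This is a sketch under assumptions, not a prescribed procedure: the function name upgrade_with_rollback, the KUBECTL override variable and the 120s timeout are all hypothetical choices.

```shell
# KUBECTL can be overridden (for example with `echo`) for a dry run.
KUBECTL="${KUBECTL:-kubectl}"

# upgrade_with_rollback DEPLOYMENT CONTAINER IMAGE
# Update the image, wait for the rollout, and undo it if it does not finish.
upgrade_with_rollback() {
  deploy="$1"; container="$2"; image="$3"
  $KUBECTL set image "deployment/$deploy" "$container=$image" || return 1
  if ! $KUBECTL rollout status "deployment/$deploy" --timeout=120s; then
    # Rollout failed or timed out: return to the previous revision.
    $KUBECTL rollout undo "deployment/$deploy"
    return 1
  fi
}

# Dry run in a subshell: print the commands instead of contacting a cluster.
( KUBECTL=echo; upgrade_with_rollback web nginx nginx:1.17 )
```

With the default KUBECTL value, upgrade_with_rollback web nginx nginx:1.17 would run the same steps against the current cluster context.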

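The kinds of containers in a Pod:

Infrastructure Container: the base container, which maintains the network namespace of the whole Pod

InitContainer: init containers, which run before the application containers start

Containers: application containers, which are started in parallel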

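Homework

1. Create a Deployment with 3 replicas, perform a rolling update of the image version and record the update, then roll back to the previous version

Name: nginx

Image version: 1.16

Updated image version: 1.17

2. Scale the web Deployment to 3 replicas

3. Create a Pod that runs four containers: nginx, redis, memcached and consul

4. Export the Deployment to a JSON file, then delete the Deployment you created

5. Generate a Deployment YAML file and save it to /opt/deploy.yaml

Name: web

Label: app_env_stage=dev

6. Configure the kubelet on a node to host and start a Pod

Node: k8s-node1

Pod name: web

Image: nginx

7. Add an init container to a Pod. The init container creates an empty file; if the empty file is not detected, the po...

(Hint: share the directory containing the empty file through an emptyDir volume, plus a health check)

Pod name: web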

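Kubernetes scheduling

Resource limits

nodeSelector

Schedules a Pod onto a Node whose labels match. If no Node carries a matching label, scheduling fails

Purpose:

Matches node labels exactly and pins the Pod to specific nodes

Example:

1) Label node01 as ssd

kubectl label nodes node01 disktype=ssd

2) YAML file

    apiVersion: v1
    kind: Pod
    metadata:
      name: nginx-bx
    spec:
      nodeSelector:
        disktype: ssd
      containers:
      - image: nginx:1.16
        name: nginx-name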

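Taints

Taints keep Pods from being scheduled onto specific Nodes. Use cases: dedicated nodes (for example nodes fitted with special hardware) and taint-based eviction

Set a taint:

kubectl taint node NODE key=value:[effect]

[effect] can take the following values:

NoSchedule: Pods must not be scheduled onto the node

PreferNoSchedule: avoid scheduling onto the node where possible

NoExecute: no new Pods are scheduled, and Pods already running on the Node are evicted

Remove a taint:

kubectl taint node NODE key:[effect]-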
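
The add and remove forms differ only in the last argument, which is easy to mistype. The sketch below wraps both and rejects effect values other than the three listed above. The function name taint_node and the KUBECTL override variable are hypothetical.

```shell
# KUBECTL can be overridden (for example with `echo`) for a dry run.
KUBECTL="${KUBECTL:-kubectl}"

# taint_node add|remove NODE KEY VALUE EFFECT
taint_node() {
  action="$1"; node="$2"; key="$3"; value="$4"; effect="$5"
  case "$effect" in
    NoSchedule|PreferNoSchedule|NoExecute) ;;
    *) echo "unknown effect: $effect" >&2; return 2 ;;
  esac
  case "$action" in
    add)    $KUBECTL taint node "$node" "$key=$value:$effect" ;;
    remove) $KUBECTL taint node "$node" "$key:$effect-" ;;
    *) echo "unknown action: $action" >&2; return 2 ;;
  esac
}

# Dry run in a subshell: print the commands instead of contacting a cluster.
( KUBECTL=echo
  taint_node add node02 disktype ssd NoExecute
  taint_node remove node02 disktype ssd NoExecute )
```

The current taints of a node can be inspected with kubectl describe node NODE.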

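Example:

1) Taint node02 so that Pods cannot be scheduled onto it (NoExecute also evicts Pods that are already running)

kubectl taint node node02 disktype=ssd:NoExecute

2) Without a matching toleration, the Pod stays in the Pending state

3) With the toleration below, the Pod can be scheduled onto node02 normally

    apiVersion: v1
    kind: Pod
    metadata:
      name: nginx-bx
    spec:
      nodeSelector:
        disktype: ssd
      containers:
      - image: nginx:1.16
        name: nginx-name
      tolerations:
      - key: "disktype"
        operator: "Equal"
        value: "ssd"
        effect: "NoExecute"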

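Homework

1. Create a Pod and assign it to a node with the specified label

Pod name: web

Image: nginx

Node label: disk=ssd

2. Make sure one Pod runs on every node

Pod name: nginx

Image: nginx

3. Count the nodes in the cluster whose status is Ready and write the result to the specified file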

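Service

Switch to ipvs mode

kubectl edit configmap kube-proxy -n kube-system

...

mode: "ipvs"

...

kubectl delete pod kube-proxy-btz4p -n kube-system

Notes:

1. The kube-proxy configuration file is stored as a ConfigMap

2. For the change to take effect on all nodes, the kube-proxy Pod on every node has to be recreated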

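CoreDNS: a DNS service and the K8S default, deployed in the cluster as Pods. CoreDNS watches the K8S API and creates a DNS record for every Service so that it can be resolved by name

ClusterIP A record format: <service-name>.<namespace-name>.svc.cluster.local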
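
A ClusterIP Service gets an A record of the form <service-name>.<namespace-name>.svc.<cluster-domain>, where the cluster domain defaults to cluster.local. The helper below only assembles that name; svc_fqdn is a hypothetical function, and the Service names are examples.

```shell
# svc_fqdn SERVICE [NAMESPACE] [CLUSTER_DOMAIN]
# Print the DNS name CoreDNS serves for a ClusterIP Service.
svc_fqdn() {
  printf '%s.%s.svc.%s\n' "$1" "${2:-default}" "${3:-cluster.local}"
}

svc_fqdn web            # web.default.svc.cluster.local
svc_fqdn web prod       # web.prod.svc.cluster.local
```

From inside a busybox Pod, the resulting name can be checked with nslookup web.default.svc.cluster.local.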

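Homework

1. Create a Service for a Pod so that it can be reached through ClusterIP/NodePort

Name: web-service

Pod name: web

Container port: 80

2. Create a Deployment and a Service with any names, and resolve the Service with nslookup from a busybox container

3. List all Pods associated with a Service in a namespace and write the Pod names to /opt/pod.txt (filter by label)

Namespace: default

Service name: web

4. Use Ingress to expose the "beauty" sample application for external access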

Storage volumes

emptyDir

hostPath

NFS

PVC, PV

Example

    ---
    apiVersion: v1
    kind: Pod
    metadata:
      name: my-pod
    spec:
      containers:
      - name: nginx
        image: nginx:latest
        ports:
        - containerPort: 80
        volumeMounts:
        - name: www
          mountPath: /usr/share/nginx/html
      volumes:
      - name: www
        persistentVolumeClaim:
          claimName: my-pvc
    ---
    # Create the PVC
    apiVersion: v1
    kind: PersistentVolumeClaim
    metadata:
      name: my-pvc
    spec:
      accessModes:
      - ReadWriteMany
      resources:
        requests:
          storage: 5Gi
    ---
    # Create the PV
    apiVersion: v1
    kind: PersistentVolume
    metadata:
      name: my-pv
    spec:
      capacity:
        storage: 5Gi
      accessModes:
      - ReadWriteMany
      nfs:
        path: /ifs/k8s
        server: 10.0.0.12

Dynamic PV provisioning

1. Create dynamic PVs backed by NFS. NFS does not support dynamic PV provisioning out of the box, so a plug-in, nfs-client-provisioner, has to be installed

    [root@master storage-class]# cat deployment.yaml
    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: nfs-client-provisioner
      labels:
        app: nfs-client-provisioner
      # replace with namespace where provisioner is deployed
      namespace: default
    spec:
      replicas: 1
      strategy:
        type: Recreate
      selector:
        matchLabels:
          app: nfs-client-provisioner
      template:
        metadata:
          labels:
            app: nfs-client-provisioner
        spec:
          serviceAccountName: nfs-client-provisioner
          containers:
            - name: nfs-client-provisioner
              image: lizhenliang/nfs-subdir-external-provisioner:v4.0.1
              volumeMounts:
                - name: nfs-client-root
                  mountPath: /persistentvolumes
              env:
                - name: PROVISIONER_NAME
                  value: k8s-sigs.io/nfs-subdir-external-provisioner
                - name: NFS_SERVER
                  value: 10.0.0.12
                - name: NFS_PATH
                  value: /ifs/k8s
          volumes:
            - name: nfs-client-root
              nfs:
                server: 10.0.0.12
                path: /ifs/k8s

2. To access the api-server, the plug-in needs a ServiceAccount that is authorized through RBAC

    [root@master storage-class]# cat rbac.yaml
    apiVersion: v1
    kind: ServiceAccount
    metadata:
      name: nfs-client-provisioner
      # replace with namespace where provisioner is deployed
      namespace: default
    ---
    kind: ClusterRole
    apiVersion: rbac.authorization.k8s.io/v1
    metadata:
      name: nfs-client-provisioner-runner
    rules:
      - apiGroups: [""]
        resources: ["persistentvolumes"]
        verbs: ["get", "list", "watch", "create", "delete"]
      - apiGroups: [""]
        resources: ["persistentvolumeclaims"]
        verbs: ["get", "list", "watch", "update"]
      - apiGroups: ["storage.k8s.io"]
        resources: ["storageclasses"]
        verbs: ["get", "list", "watch"]
      - apiGroups: [""]
        resources: ["events"]
        verbs: ["create", "update", "patch"]
    ---
    kind: ClusterRoleBinding
    apiVersion: rbac.authorization.k8s.io/v1
    metadata:
      name: run-nfs-client-provisioner
    subjects:
      - kind: ServiceAccount
        name: nfs-client-provisioner
        # replace with namespace where provisioner is deployed
        namespace: default
    roleRef:
      kind: ClusterRole
      name: nfs-client-provisioner-runner
      apiGroup: rbac.authorization.k8s.io
    ---
    kind: Role
    apiVersion: rbac.authorization.k8s.io/v1
    metadata:
      name: leader-locking-nfs-client-provisioner
      # replace with namespace where provisioner is deployed
      namespace: default
    rules:
      - apiGroups: [""]
        resources: ["endpoints"]
        verbs: ["get", "list", "watch", "create", "update", "patch"]
    ---
    kind: RoleBinding
    apiVersion: rbac.authorization.k8s.io/v1
    metadata:
      name: leader-locking-nfs-client-provisioner
      # replace with namespace where provisioner is deployed
      namespace: default
    subjects:
      - kind: ServiceAccount
        name: nfs-client-provisioner
        # replace with namespace where provisioner is deployed
        namespace: default
    roleRef:
      kind: Role
      name: leader-locking-nfs-client-provisioner
      apiGroup: rbac.authorization.k8s.io

3. Create the StorageClass

    [root@master storage-class]# cat class.yaml
    apiVersion: storage.k8s.io/v1
    kind: StorageClass
    metadata:
      name: managed-nfs-storage
    # or choose another name, must match deployment's env PROVISIONER_NAME
    provisioner: k8s-sigs.io/nfs-subdir-external-provisioner
    parameters:
      archiveOnDelete: "false"

4. Create a test Pod that uses a dynamically provisioned PV

    [root@master storage-class]# cat test-claim.yaml
    apiVersion: v1
    kind: PersistentVolumeClaim
    metadata:
      name: test-claim
    spec:
      storageClassName: "managed-nfs-storage"
      accessModes:
        - ReadWriteMany
      resources:
        requests:
          storage: 1Gi
    ---
    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: test-pod
    spec:
      replicas: 1
      selector:
        matchLabels:
          app: test-pod
      template:
        metadata:
          labels:
            app: test-pod
        spec:
          containers:
          - name: test-pod
            image: nginx
            volumeMounts:
              - name: nfs-pvc
                mountPath: "/usr/share/nginx/html"
          volumes:
            - name: nfs-pvc
              persistentVolumeClaim:
                claimName: test-claim

RBAC-based authorization

1. Generate a client certificate signed by the cluster's root certificate

    [root@master rbac]# cat cert.sh
    cat > ca-config.json <<EOF
    {
      "signing": {
        "default": {
          "expiry": "87600h"
        },
        "profiles": {
          "kubernetes": {
            "usages": [
              "signing",
              "key encipherment",
              "server auth",
              "client auth"
            ],
            "expiry": "87600h"
          }
        }
      }
    }
    EOF

    cat > scxiang-csr.json <<EOF
    {
      "CN": "scxiang",
      "hosts": [],
      "key": {
        "algo": "rsa",
        "size": 2048
      },
      "names": [
        {
          "C": "CN",
          "ST": "BeiJing",
          "L": "BeiJing",
          "O": "k8s",
          "OU": "System"
        }
      ]
    }
    EOF

    cfssl gencert -ca=/etc/kubernetes/pki/ca.crt -ca-key=/etc/kubernetes/pki/ca.key -config=ca-config.json -profile=kubernetes scxiang-csr.json | cfssljson -bare scxiang

2. Generate the kubeconfig file

    [root@master rbac]# cat kubeconfig.sh
    kubectl config set-cluster kubernetes \
      --certificate-authority=/etc/kubernetes/pki/ca.crt \
      --embed-certs=true \
      --server=https://10.0.0.10:6443 \
      --kubeconfig=scxiang.kubeconfig

    # Set the client credentials
    kubectl config set-credentials scxiang \
      --client-key=scxiang-key.pem \
      --client-certificate=scxiang.pem \
      --embed-certs=true \
      --kubeconfig=scxiang.kubeconfig

    # Set the default context
    kubectl config set-context kubernetes \
      --cluster=kubernetes \
      --user=scxiang \
      --kubeconfig=scxiang.kubeconfig

    # Select the context to use
    kubectl config use-context kubernetes --kubeconfig=scxiang.kubeconfig

3. Create the access policy

    [root@master rbac]# cat rbac.yaml
    kind: Role
    apiVersion: rbac.authorization.k8s.io/v1
    metadata:
      namespace: default
      name: pod-reader
    rules:
    - apiGroups: [""]
      resources: ["pods","services"]
      verbs: ["get", "watch", "list"]
    ---
    kind: RoleBinding
    apiVersion: rbac.authorization.k8s.io/v1
    metadata:
      name: read-pods
      namespace: default
    subjects:
    - kind: User
      name: scxiang
      apiGroup: rbac.authorization.k8s.io
    roleRef:
      kind: Role
      name: pod-reader
      apiGroup: rbac.authorization.k8s.io

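4. Copy the generated kubeconfig file to the machine that needs to access the api-server

scp scxiang.kubeconfig 10.0.0.12:/root/config

To avoid passing the kubeconfig file on every command, move it to the default path

mv scxiang.kubeconfig .kube/config

After that, resources can be accessed according to the rules defined in the RBAC policy above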
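
Before handing out the kubeconfig, an administrator can check what the bound Role permits by impersonating the user with kubectl auth can-i. The sketch assumes it is run with an admin kubeconfig. The function name can_user and the KUBECTL override variable are hypothetical.

```shell
# KUBECTL can be overridden (for example with `echo`) for a dry run.
KUBECTL="${KUBECTL:-kubectl}"

# can_user USER VERB RESOURCE [NAMESPACE]
# Ask the API server whether USER may perform VERB on RESOURCE.
can_user() {
  $KUBECTL auth can-i "$2" "$3" --as "$1" -n "${4:-default}"
}

# Dry run in a subshell: print the commands instead of contacting a cluster.
( KUBECTL=echo
  can_user scxiang list pods
  can_user scxiang delete pods )
```

With the pod-reader Role shown earlier, the first check would be expected to answer yes and the second no, since the Role grants only get, watch and list.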

NetworkPolicy

Example: isolate the Pods labeled app=web in the default namespace and allow only Pods labeled run=client1 in the default namespace to reach port 80

1. Prepare the test environment

kubectl create deployment web --image=nginx

kubectl run client1 --image=busybox -- sleep 36000

kubectl run client2 --image=busybox -- sleep 36000

2. Create the network policy

    [root@master ~]# cat np1.yaml
    apiVersion: networking.k8s.io/v1
    kind: NetworkPolicy
    metadata:
      name: test-network-policy
      namespace: default
    spec:
      podSelector:
        matchLabels:
          app: web
      policyTypes:
      - Ingress
      ingress:
      - from:
        # separate entries under "from" are ORed
        - namespaceSelector:
            matchLabels:
              project: default
        - podSelector:
            matchLabels:
              run: client1
        ports:
        - protocol: TCP
          port: 80