Machine preparation

| Role | Hostname | IP address |
|---|---|---|
| master | k8s-master | 192.168.31.100 |
| node | k8s-node1 | 192.168.31.101 |
| node | k8s-node2 | 192.168.31.102 |

[root@k8s-master ~]# nmcli connection modify ens33 ipv4.addresses 192.168.31.100/24 ipv4.gateway 192.168.31.2 ipv4.dns 114.114.114.114 ipv4.method manual autoconnect yes

[root@k8s-node1 ~]# nmcli connection modify ens33 ipv4.addresses 192.168.31.101/24 ipv4.gateway 192.168.31.2 ipv4.dns 114.114.114.114 ipv4.method manual autoconnect yes

[root@k8s-node2 ~]# nmcli connection modify ens33 ipv4.addresses 192.168.31.102/24 ipv4.gateway 192.168.31.2 ipv4.dns 114.114.114.114 ipv4.method manual autoconnect yes
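`nmcli connection modify` only rewrites the stored profile; the new address normally takes effect once the connection is brought up again. A minimal sketch that wraps both steps, assuming the connection is named ens33 as above (`set_static_ip` is a hypothetical helper name, and the gateway and DNS values are the ones used in this guide):

```shell
# Hypothetical helper: store a static IPv4 configuration, then re-activate
# the connection so the change is applied.
# Gateway and DNS are the values used in this guide; adjust for other networks.
set_static_ip() {
  local conn="$1" addr="$2"
  nmcli connection modify "$conn" \
    ipv4.addresses "$addr" ipv4.gateway 192.168.31.2 \
    ipv4.dns 114.114.114.114 ipv4.method manual autoconnect yes || return 1
  # Re-activating the profile may drop an SSH session that uses the old address.
  nmcli connection up "$conn"
}

# Usage (as root, one address per machine):
#   set_static_ip ens33 192.168.31.100/24
```

Running this from the machine console rather than over SSH avoids losing the session when the address changes.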

Environment preparation

- On all machines, disable the firewall and SELinux

systemctl stop firewalld

systemctl disable firewalld

sed -i 's/^SELINUX=enforcing/SELINUX=disabled/' /etc/selinux/config

setenforce 0

- On all machines, disable the swap partition

swapoff -a # disable temporarily

sed -ri 's/.*swap.*/#&/' /etc/fstab # disable permanently
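The `sed` expression above prepends another `#` every time it is run and also touches any line that merely mentions "swap". A slightly stricter variant, offered as a sketch and assuming GNU sed: it comments out only active entries that have a `swap` field, and is safe to re-run (`disable_swap_in_fstab` is a hypothetical helper name):

```shell
# Hypothetical helper: comment out active swap entries in an fstab file.
# Only lines that do not already start with '#' and contain a
# whitespace-delimited "swap" field are changed, so re-running is harmless.
disable_swap_in_fstab() {
  local fstab="${1:-/etc/fstab}"
  sed -i '/^[^#].*[[:space:]]swap[[:space:]]/ s/^/#/' "$fstab"
}

# Usage (as root):
#   swapoff -a
#   disable_swap_in_fstab
#   swapon --show    # prints nothing when no swap is active
```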

- On all machines, map each hostname to its IP address so that the machines can reach one another by hostname

[root@k8s-master ~]# vim /etc/hosts

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

192.168.31.100 k8s-master

192.168.31.101 k8s-node1

192.168.31.102 k8s-node2
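Editing `/etc/hosts` by hand on three machines is easy to get wrong, so the same entries can also be appended from a script. The sketch below adds an entry only when the hostname is not yet listed; `add_host_entry` and the `HOSTS_FILE` override are hypothetical names introduced for illustration:

```shell
# Hypothetical helper: append "IP hostname" to a hosts file unless the
# hostname already appears as a field on a non-comment line.
# HOSTS_FILE is an illustrative override; the default is /etc/hosts.
add_host_entry() {
  local ip="$1" name="$2" file="${HOSTS_FILE:-/etc/hosts}"
  if ! awk -v n="$name" '
        !/^[[:space:]]*#/ { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
        END { exit !found }' "$file"; then
    printf '%s %s\n' "$ip" "$name" >> "$file"
  fi
}

# Usage (as root, on every machine):
#   add_host_entry 192.168.31.100 k8s-master
#   add_host_entry 192.168.31.101 k8s-node1
#   add_host_entry 192.168.31.102 k8s-node2
```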

- On all machines, pass bridged IPv4 traffic to the iptables chains

cat > /etc/sysctl.d/k8s.conf << EOF

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

EOF
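Writing the file does not by itself change the running kernel. The two `net.bridge.*` keys exist only while the `br_netfilter` module is loaded, and the settings are read when `sysctl --system` runs or at boot. A sketch of the remaining steps, where `enable_bridge_netfilter` is a hypothetical helper name and the modules-load path is an assumption based on the usual systemd layout:

```shell
# Hypothetical helper: load br_netfilter now and at boot, then apply every
# sysctl configuration file, including /etc/sysctl.d/k8s.conf.
enable_bridge_netfilter() {
  local modules_conf="${1:-/etc/modules-load.d/k8s.conf}"
  modprobe br_netfilter || return 1                  # load the module now
  echo br_netfilter > "$modules_conf" || return 1    # load it again after a reboot
  sysctl --system                                    # re-read all sysctl config files
}

# Usage (as root, on every machine):
#   enable_bridge_netfilter
#   sysctl net.bridge.bridge-nf-call-iptables    # should report "= 1"
```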

- On all machines, install Docker

yum install wget.x86_64 -y

rm -rf /etc/yum.repos.d/*

wget -O /etc/yum.repos.d/centos7.repo http://mirrors.aliyun.com/repo/Centos-7.repo

wget -O /etc/yum.repos.d/epel-7.repo http://mirrors.aliyun.com/repo/epel-7.repo

wget -O /etc/yum.repos.d/docker-ce.repo https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

yum install docker-ce -y

- Start Docker

systemctl enable docker.service

systemctl start docker.service

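Cluster deployment

Configure the package repository, following the Kubernetes mirror page on the Alibaba Cloud open-source mirror site (aliyun.com).

- On all machines, add the repository and install kubeadm, kubelet, and kubectl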

cat <<EOF > /etc/yum.repos.d/kubernetes.repo

[kubernetes]

name=Kubernetes

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/

enabled=1

gpgcheck=1

repo_gpgcheck=1

gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

EOF

yum install -y kubelet-1.23.5 kubeadm-1.23.5 kubectl-1.23.5

systemctl enable kubelet && systemctl start kubelet

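Note: the upstream site does not offer a sync mechanism, so the GPG check of the repository index may fail. If it does, install with:

yum install -y --nogpgcheck kubelet-1.23.5 kubeadm-1.23.5 kubectl-1.23.5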

- On all machines, change the Docker configuration so that Docker uses the systemd cgroup driver

cat > /etc/docker/daemon.json <<EOF

{

"exec-opts": ["native.cgroupdriver=systemd"]

}

EOF

systemctl daemon-reload

systemctl restart docker.service

systemctl restart kubelet.service
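Before running `kubeadm init`, it can be worth confirming that Docker really picked up the new cgroup driver, since a mismatch between Docker and the kubelet is a common reason for the kubelet failing to start. A small check, assuming Docker is running (`check_cgroup_driver` is a hypothetical helper name):

```shell
# Hypothetical helper: report whether Docker uses the systemd cgroup driver.
check_cgroup_driver() {
  local driver
  driver="$(docker info --format '{{.CgroupDriver}}')" || return 1
  if [ "$driver" = "systemd" ]; then
    echo "cgroup driver ok: $driver"
  else
    echo "cgroup driver mismatch: docker uses $driver, expected systemd" >&2
    return 1
  fi
}

# Usage (on every machine, after restarting Docker):
#   check_cgroup_driver
```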

- Deploy the master

[root@k8s-master ~]# kubeadm init \
--apiserver-advertise-address=192.168.31.100 \
--image-repository registry.aliyuncs.com/google_containers \
--kubernetes-version v1.23.5 \
--control-plane-endpoint k8s-master \
--service-cidr=172.16.0.0/16 \
--pod-network-cidr=10.244.0.0/16

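If a version error like the following appears:

Kubelet version: "1.23.5" Control plane version: "1.22.2"

the cause is that the control plane version must not be lower than the kubelet version. Change the value after --kubernetes-version to the kubelet version shown in the error message.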
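The rule behind that error (control plane version greater than or equal to the kubelet version) can be checked before running `kubeadm init`. The sketch below compares two version strings with GNU `sort -V`; `version_ge` is a hypothetical helper name:

```shell
# Hypothetical helper: succeed when version $1 is greater than or equal to
# version $2. A leading "v" is ignored. Relies on GNU sort -V.
version_ge() {
  local a="${1#v}" b="${2#v}"
  # After a version sort, the smaller version comes first; $1 >= $2 holds
  # exactly when that first line is $2.
  [ "$(printf '%s\n%s\n' "$a" "$b" | sort -V | head -n 1)" = "$b" ]
}

# Usage: compare the intended --kubernetes-version with the installed kubelet.
#   kubelet_version="$(kubelet --version | awk '{print $2}')"   # e.g. v1.23.5
#   version_ge v1.23.5 "$kubelet_version" || echo "raise --kubernetes-version"
```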

If the output ends with the following message, the installation is complete

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

# Commands you can run

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

Alternatively, if you are the root user, you can run:

# If you are root, you can run this instead

export KUBECONFIG=/etc/kubernetes/admin.conf

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

You can now join any number of control-plane nodes by copying certificate authorities

and service account keys on each node and then running the following as root:

# Use the following to join additional control-plane (master) nodes

kubeadm join k8s-master:6443 --token sbw7mf.owh7u211d7fit94h \
--discovery-token-ca-cert-hash sha256:074aa998614125525a58ca30a78337c26f6d5dac305691a35eeb819a7496c8bc \
--control-plane

Then you can join any number of worker nodes by running the following on each as root:

# Use the following to join additional worker (k8s-node) nodes

kubeadm join k8s-master:6443 --token sbw7mf.owh7u211d7fit94h \
--discovery-token-ca-cert-hash sha256:074aa998614125525a58ca30a78337c26f6d5dac305691a35eeb819a7496c8bc

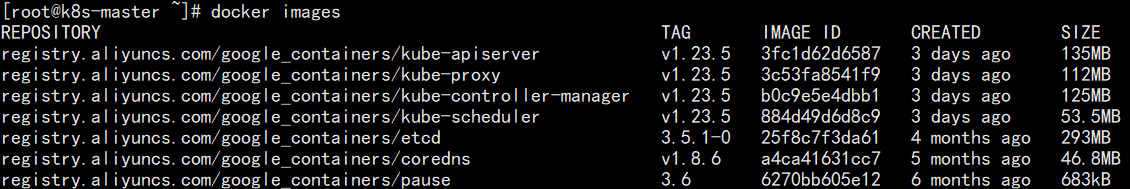

此时k8s-master中的镜像有下面这些

- Run the commands suggested in the completion message

[root@k8s-master ~]# mkdir -p $HOME/.kube

[root@k8s-master ~]# sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

[root@k8s-master ~]# sudo chown $(id -u):$(id -g) $HOME/.kube/config

[root@k8s-master ~]# export KUBECONFIG=/etc/kubernetes/admin.conf

- Several k8s-node machines will be joined later, so save the join command from the completion message

[root@k8s-master ~]# vim k8s-node.token

kubeadm join k8s-master:6443 --token sbw7mf.owh7u211d7fit94h \
--discovery-token-ca-cert-hash sha256:074aa998614125525a58ca30a78337c26f6d5dac305691a35eeb819a7496c8bc
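The saved token is only useful for a limited time: bootstrap tokens created by `kubeadm init` expire after 24 hours by default. If the worker nodes are joined later than that, a fresh join command can be generated on the master. A sketch, where `save_join_command` is a hypothetical helper name and the default file name follows the one used above:

```shell
# Hypothetical helper: create a new bootstrap token and write the complete
# "kubeadm join ..." command for worker nodes to a file.
save_join_command() {
  local out="${1:-k8s-node.token}"
  kubeadm token create --print-join-command > "$out"
}

# Usage (as root on k8s-master):
#   save_join_command
#   cat k8s-node.token
```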

- Check the node status; it is NotReady

[root@k8s-master ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-master NotReady control-plane,master 5m20s v1.23.5

- Kubernetes does not ship with a pod network plugin by default, so one has to be installed

# Pulling the required image manually beforehand is recommended

[root@k8s-master ~]# docker pull quay.io/coreos/flannel:v0.14.0

[root@k8s-master ~]# wget https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml

[root@k8s-master ~]# kubectl apply -f kube-flannel.yml

# After a short wait, the status becomes Ready

[root@k8s-master ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-master Ready control-plane,master 9m54s v1.23.5

- Join the k8s-node machines to the cluster, using the join command and token saved earlier

[root@k8s-node1 ~]# docker pull quay.io/coreos/flannel:v0.14.0

[root@k8s-node2 ~]# docker pull quay.io/coreos/flannel:v0.14.0

[root@k8s-node1 ~]# kubeadm join k8s-master:6443 --token sbw7mf.owh7u211d7fit94h \
--discovery-token-ca-cert-hash sha256:074aa998614125525a58ca30a78337c26f6d5dac305691a35eeb819a7496c8bc

[root@k8s-node2 ~]# kubeadm join k8s-master:6443 --token sbw7mf.owh7u211d7fit94h \
--discovery-token-ca-cert-hash sha256:074aa998614125525a58ca30a78337c26f6d5dac305691a35eeb819a7496c8bc

- Check the status of every node

[root@k8s-master ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-master Ready control-plane,master 40m v1.23.5

k8s-node1 Ready <none> 10m v1.23.5

k8s-node2 Ready <none> 10m v1.23.5
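Reading the STATUS column by eye works for three nodes; for a script, the same check can be done by filtering the output. The sketch below lists nodes whose status does not start with `Ready`, assuming the default column layout of `kubectl get nodes --no-headers` (`not_ready_nodes` is a hypothetical helper name):

```shell
# Hypothetical helper: read "kubectl get nodes --no-headers" output on stdin
# and print the names of nodes whose STATUS (column 2) does not start with
# "Ready". "Ready,SchedulingDisabled" still counts as ready here.
not_ready_nodes() {
  awk '$2 !~ /^Ready/ { print $1 }'
}

# Usage (on k8s-master):
#   kubectl get nodes --no-headers | not_ready_nodes
# An empty result means every node reports Ready.
```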

Quick tour

- Create an Nginx deployment

[root@k8s-master ~]# kubectl create deployment nginx --image=nginx

deployment.apps/nginx created

- Expose a port to the outside

[root@k8s-master ~]# kubectl expose deployment nginx --port=80 --type=NodePort

service/nginx exposed

- Check that Nginx is running

[root@k8s-master ~]# kubectl get pod

NAME READY STATUS RESTARTS AGE

nginx-85b98978db-p5mpm 1/1 Running 0 116s

[root@k8s-master ~]# kubectl get svc

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes ClusterIP 172.16.0.1 <none> 443/TCP 36m

nginx NodePort 172.16.184.54 <none> 80:31625/TCP 59s

- Test access. The service answers on the IP address of every machine, because kube-proxy sets up iptables rules for the NodePort on each node

[root@k8s-master ~]# curl 192.168.31.100:31625

[root@k8s-master ~]# curl 192.168.31.101:31625

[root@k8s-master ~]# curl 192.168.31.102:31625
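The NodePort (31625 here) is assigned when the service is created, so it will differ between clusters. A sketch that checks the port on every node in one go, where `check_nodeport` is a hypothetical helper name and the 5-second timeout is an arbitrary choice:

```shell
# Hypothetical helper: request http://IP:PORT for each given IP and report
# whether the request succeeded.
check_nodeport() {
  local port="$1" ip
  shift
  for ip in "$@"; do
    if curl -fsS -o /dev/null --max-time 5 "http://${ip}:${port}"; then
      echo "${ip}:${port} ok"
    else
      echo "${ip}:${port} FAILED"
    fi
  done
}

# Usage (on k8s-master): look up the assigned port, then test all three nodes.
#   port="$(kubectl get svc nginx -o jsonpath='{.spec.ports[0].nodePort}')"
#   check_nodeport "$port" 192.168.31.100 192.168.31.101 192.168.31.102
```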

- Scale out

[root@k8s-master ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

nginx-85b98978db-p5mpm 1/1 Running 0 20m

[root@k8s-master ~]# kubectl scale deployment nginx --replicas=3

deployment.apps/nginx scaled

[root@k8s-master ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

nginx-85b98978db-cvj8k 1/1 Running 0 27s

nginx-85b98978db-p5mpm 1/1 Running 0 21m

nginx-85b98978db-sk9s5 1/1 Running 0 27s

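Two of the pods run on node1 and one runs on node2. The plain kubectl get pods output above does not show the placement; kubectl get pods -o wide adds a NODE column.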
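To see the distribution as numbers rather than scanning a table, the node name of each pod can be printed and counted. A sketch, assuming the `app=nginx` label that `kubectl create deployment nginx` puts on its pods (`pods_per_node` is a hypothetical helper name):

```shell
# Hypothetical helper: read one node name per line on stdin and print
# "node count" pairs, sorted by node name.
pods_per_node() {
  awk '{ count[$1]++ } END { for (n in count) print n, count[n] }' | sort
}

# Usage (on k8s-master):
#   kubectl get pods -l app=nginx \
#     -o custom-columns=NODE:.spec.nodeName --no-headers | pods_per_node
```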