6.1- Introduction

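A block is a sequence of bytes (for example, a 512-byte block of data). Block-based storage interfaces are the most common way to store data on rotating media such as hard disks, CDs, floppy disks and even traditional 9-track tape. Because block device interfaces are so ubiquitous, a virtual block device is an ideal way to interact with a mass data storage system such as Ceph.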

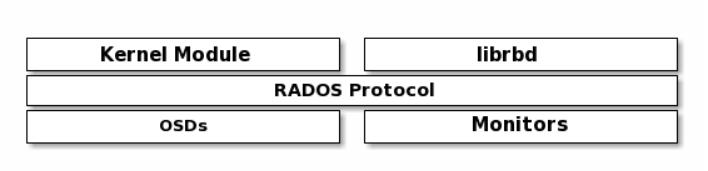

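Ceph block devices are thin-provisioned and resizable, and they store data striped over multiple OSDs in a Ceph cluster. They take advantage of RADOS capabilities such as snapshots, replication and consistency. Ceph's RADOS Block Device (RBD) interacts with the OSDs through either the kernel module or the librbd library.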

Note

The kernel module can use the Linux page cache. For librbd-based applications, Ceph supports RBD caching.

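Ceph block devices deliver high performance with vast scalability. They serve the kernel module, KVM/QEMU, and cloud computing systems such as OpenStack and CloudStack, which rely on libvirt and QEMU to integrate with Ceph block devices. A single cluster can run the Ceph RADOS Gateway, the Ceph File System and Ceph block devices simultaneously.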

6.2- Create a block storage pool

```bash
[root@mon1 ~]# ceph -s
  cluster:
    id:     8785bb23-6770-4f83-9235-279c7d34c76f
    health: HEALTH_WARN
            Degraded data redundancy: 487/1527 objects degraded (31.893%), 122 pgs degraded

  services:
    mon: 3 daemons, quorum mon1,mon2,mon3 (age 2m)
    mgr: mon1(active, since 3h), standbys: mon2, mon3
    osd: 9 osds: 9 up (since 4h), 9 in (since 4h)

  data:
    pools:   1 pools, 128 pgs
    objects: 509 objects, 1.9 GiB
    usage:   13 GiB used, 527 GiB / 540 GiB avail
    pgs:     487/1527 objects degraded (31.893%)
             120 active+recovery_wait+degraded
             6   active+clean
             2   active+recovering+degraded

  io:
    recovery: 3.3 MiB/s, 0 objects/s
```
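The `ceph -s` output above shows the cluster still recovering (HEALTH_WARN, 120 PGs in `recovery_wait`). In the degraded figure, the denominator counts object copies, not objects: 1527 = 509 objects × 3. This suggests the existing pool keeps three replicas, which is an inference from the numbers and is not printed anywhere in the output. The minimal bash sketch below reproduces the figure. `degraded_pct` is a hypothetical helper, not a Ceph command.

```bash
#!/usr/bin/env bash
# Reproduce the "degraded" ratio from `ceph -s`: degraded copies divided by
# total copies (objects x replica size). Hypothetical helper for illustration.
degraded_pct() {
    local degraded=$1 objects=$2 size=$3
    awk -v d="$degraded" -v o="$objects" -v s="$size" \
        'BEGIN { printf "%d/%d (%.3f%%)\n", d, o * s, 100 * d / (o * s) }'
}

degraded_pct 487 509 3   # 509 objects, assumed replica size 3
```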

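Create the pool (the two numbers are pg_num and pgp_num):

```bash
ceph osd pool create influxdata 512 512
```

The Ceph documentation also recommends initializing a newly created pool for RBD with `rbd pool init influxdata` before creating images in it.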
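The Ceph placement-group documentation gives a common rule of thumb for sizing a pool: (number of OSDs × about 100) / replica count, rounded up to a power of two. Assuming the default of 3 replicas, the 9 OSDs in this cluster give 9 × 100 / 3 = 300, which rounds up to 512. The bash sketch below applies that rule. The helper `pg_count` and the 100-PGs-per-OSD target are assumptions for illustration. On Nautilus and later, the `pg_autoscaler` manager module can also choose pg_num automatically.

```bash
#!/usr/bin/env bash
# Rule-of-thumb pg_num: (OSDs * target PGs per OSD) / replica size,
# rounded up to the next power of two.
# Assumed defaults (not from the original): 100 PGs per OSD target.
pg_count() {
    local osds=$1 size=$2 target=${3:-100}
    local raw=$(( osds * target / size ))
    local pg=1
    # Double until we reach or exceed the raw estimate.
    while (( pg < raw )); do
        pg=$(( pg * 2 ))
    done
    echo "$pg"
}

pg_count 9 3   # 9 OSDs, assumed replica size 3: 300 rounds up to 512
```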

Verify:

```bash
[root@mon1 ~]# ceph osd lspools
22 influxdata
```

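Create the block image:

```bash
# Image spec is pool_name/image_name; if the pool is omitted, the default pool "rbd" is used.
# --size defaults to MB, so 10240 = 10 GiB.
# Only "layering" is enabled: older kernel RBD clients (e.g. the CentOS 7 kernel)
# cannot map images that use features such as object-map or fast-diff.
rbd create influxdata/influx_data --size 10240 --image-feature layering
```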

Verify:

```bash
[root@mon1 ~]# rbd list influxdata
influx_data
[root@mon1 ~]# rbd info influxdata/influx_data
rbd image 'influx_data':
    size 10 GiB in 2560 objects
    order 22 (4 MiB objects)
    snapshot_count: 0
    id: 1982a32f770f0
    block_name_prefix: rbd_data.1982a32f770f0
    format: 2
    features: layering
    op_features:
    flags:
    create_timestamp: Wed Jan  6 13:28:27 2021
    access_timestamp: Wed Jan  6 13:28:27 2021
    modify_timestamp: Wed Jan  6 13:28:27 2021
```
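In the `rbd info` output, `order 22 (4 MiB objects)` means the image is split into 2^22-byte RADOS objects. Each object is named `<block_name_prefix>.<object number as 16 hex digits>`. The sketch below assumes the default striping (stripe unit equal to the object size, stripe count 1), which is what applies when no striping options are passed to `rbd create`. It works out the 2560 objects and which object backs a given byte offset. The helper names are hypothetical. Because the image is thin-provisioned, an object only appears in the pool (for example in `rados -p influxdata ls`) once that range has been written.

```bash
#!/usr/bin/env bash
# Upper bound on the number of RADOS objects backing an RBD image,
# rounding up so a partial trailing object is still counted.
rbd_object_count() {
    local size_mib=$1 order=$2
    local size_bytes=$(( size_mib * 1024 * 1024 ))
    local obj_bytes=$(( 1 << order ))
    echo $(( (size_bytes + obj_bytes - 1) / obj_bytes ))
}

# Name of the data object holding a byte offset, assuming default striping
# (stripe unit = object size, stripe count = 1).
rbd_object_for_offset() {
    local prefix=$1 order=$2 offset=$3
    printf '%s.%016x\n' "$prefix" $(( offset >> order ))
}

rbd_object_count 10240 22                                               # 10 GiB / 4 MiB
rbd_object_for_offset rbd_data.1982a32f770f0 22 $(( 5 * 1024 ** 3 ))   # byte offset 5 GiB
```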

Create the client user and its key:

```bash
ceph auth get-or-create client.influx mon 'allow r' osd 'allow rwx pool=influxdata' | tee /etc/ceph/ceph.client.influx.keyring

[root@mon1 ~]# cat /etc/ceph/ceph.client.influx.keyring
[client.influx]
        key = AQCgTfVfogPQNBAAsisDrblyxPYNg5WKk1XzLg==
```

- Copy the keyring to the client

```bash
[root@mon1 ~]# scp /etc/ceph/ceph.client.influx.keyring 10.68.3.91:/etc/ceph/
```

- Map the block device on the client

```bash
yum install ceph-common -y
```

Create ceph.conf:

```bash
[root@monitor1 ~]# cat /etc/ceph/ceph.conf
[global]
mon_host = 10.68.3.121,10.68.3.122,10.68.3.123

[root@monitor1 ~]# rbd map --image influxdata/influx_data --name client.influx
/dev/rbd0
```

- Format, mount and use

```bash
mkfs.xfs /dev/rbd0
mount /dev/rbd0 /var/lib/influxdb/

[root@monitor1 ~]# df -h
Filesystem               Size  Used Avail Use% Mounted on
devtmpfs                 899M     0  899M   0% /dev
tmpfs                    910M     0  910M   0% /dev/shm
tmpfs                    910M   33M  878M   4% /run
tmpfs                    910M     0  910M   0% /sys/fs/cgroup
/dev/mapper/centos-root   14G  2.2G   12G  17% /
/dev/sda1               1014M  149M  866M  15% /boot
tmpfs                    182M     0  182M   0% /run/user/0
/dev/rbd0                 10G   33M   10G   1% /var/lib/influxdb
```

- Mount at boot

```bash
[root@monitor1 ~]# cat /etc/fstab

#
# /etc/fstab
# Created by anaconda on Mon Jan  4 07:43:22 2021
#
# Accessible filesystems, by reference, are maintained under '/dev/disk'
# See man pages fstab(5), findfs(8), mount(8) and/or blkid(8) for more info
#
/dev/mapper/centos-root /                       xfs     defaults        0 0
UUID=68c4209c-d479-4804-ac96-406a547d6168 /boot                   xfs     defaults        0 0
/dev/mapper/centos-swap swap                    swap    defaults        0 0
/dev/rbd0 /var/lib/influxdb xfs defaults 0 0
```
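The `/dev/rbd0 ... defaults` line above can stall or fail at boot. `/dev/rbd0` only exists after `rbd map` has run, and nothing in fstab performs that mapping. One way to handle this uses the `rbdmap` script and `rbdmap.service` unit provided by Ceph (see rbdmap(8)):

- List the image in `/etc/ceph/rbdmap`.
- In `/etc/fstab`, refer to the stable udev symlink `/dev/rbd/<pool>/<image>` and add `noauto`, so the init system does not try to mount the device before it is mapped.
- Enable `rbdmap.service`, which maps the listed images and then mounts their fstab entries.

Below is a sketch of the two file entries for this image, under those assumptions and not tested against this particular setup:

```bash
# /etc/ceph/rbdmap: one image per line, followed by rbd map options
influxdata/influx_data id=influx,keyring=/etc/ceph/ceph.client.influx.keyring

# /etc/fstab: replaces the "/dev/rbd0 ... defaults" line; the udev symlink
# /dev/rbd/<pool>/<image> is stable, and noauto leaves mounting to rbdmap.service
/dev/rbd/influxdata/influx_data /var/lib/influxdb xfs noauto 0 0
```

Then enable the service with `systemctl enable rbdmap.service`.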