Reference: https://www.kancloud.cn/willseecloud/ceph/1788314

Official documentation: https://docs.ceph.com/en/latest/cephadm/install/#enable-ceph-cli

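# 0- Introduction to cephadm

## Cephadm

The goal of cephadm is to provide a fully featured, robust, and well-maintained installation and management layer for any environment that does not run Ceph in Kubernetes. Its main characteristics are:

- Deploy all components in containers. Containers simplify dependency handling and packaging across distributions. RPM and Deb packages are still built, but as more users move to cephadm (or Rook) and run containers, fewer OS-specific bugs show up.
- Tight integration with the Orchestrator API. Ceph's Orchestrator interface evolved extensively during cephadm development so that it matches the implementation and cleanly abstracts the (slightly different) functionality found in Rook. The result looks and feels like a part of Ceph.
- No dependency on management tools. Tools such as Salt and Ansible are excellent for large-scale deployments, but making Ceph depend on one of them means users must learn that tool as well. More importantly, deployments that rely on such tools can end up more complex, harder to debug, and (most notably) slower than with a deployment tool built specifically for managing Ceph.
- Minimal operating system dependencies. Cephadm needs Python 3, LVM, and a container runtime (Podman or Docker). Any current Linux distribution will do.
- Isolate clusters from one another. Running several Ceph clusters on the same host has always been a niche scenario, but it does exist. Isolating clusters in a robust, general way makes testing and redeploying clusters a safe and natural process for developers and users alike.
- Automated upgrades. Once Ceph "owns" its own deployment, it can upgrade Ceph in a safe and automated way.
- Easy migration from "legacy" deployment tools. Existing Ceph deployments made with tools such as ceph-ansible, ceph-deploy, and DeepSea need an easy transition path to cephadm.

The purpose of all this is to focus the attention of Ceph developers and the user community on just two platforms: cephadm for "bare metal" deployments and Rook for running Ceph in Kubernetes, with a similar management experience on both.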

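## Bootstrap

The cephadm model has a simple "bootstrap" step, started from the command line, that brings up a minimal Ceph cluster (one monitor daemon and one manager daemon) on the local host. The rest of the cluster is then deployed with orchestrator commands that add further hosts, consume storage devices, and deploy the daemons for cluster services.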

# 1- Environment planning

| Node role | Hostname/DNS | Spec | Disks | OS | IP |
|---|---|---|---|---|---|
| cephadm, monitor, mgr, rgw, mds, osd, nfs | mon1 | 2C4G | OS: 16G, OSD1: 8G, OSD4: 8G, OSD7: 8G | CentOS 8.2 minimal, kernel 4.18.0 | public: 10.68.3.121 |
| monitor, mgr, rgw, mds, osd, nfs | mon2 | 2C4G | OS: 16G, OSD2: 8G, OSD5: 8G, OSD8: 8G | CentOS 8.2 minimal, kernel 4.18.0 | public: 10.68.3.122 |
| monitor, mgr, rgw, mds, osd, nfs | mon3 | 2C4G | OS: 16G, OSD3: 8G, OSD6: 8G, OSD9: 8G | CentOS 8.2 minimal, kernel 4.18.0 | public: 10.68.3.123 |
| osd | node1 | 2C4G | OS: 16G, OSD10: 8G, OSD12: 8G, OSD14: 8G | CentOS 8.2 minimal, kernel 4.18.0 | public: 10.68.3.131 |
| osd | node2 | 2C4G | OS: 16G, OSD11: 8G, OSD13: 8G, OSD15: 8G | CentOS 8.2 minimal, kernel 4.18.0 | public: 10.68.3.132 |

NTP server: 10.68.3.101

Installation notes:

- Ceph version: 15.2.x Octopus (stable); the `v15` container image pulled in this guide reports 15.2.8.
- Octopus requires Python 3, so CentOS 8.2 minimal is used as the operating system.
- The first node of the cluster also serves as the cephadm deployment node.

# 2- Environment configuration

## 2.1- IP configuration

- mon1

```bash
[root@localhost ~]# vim /etc/sysconfig/network-scripts/ifcfg-ens32
TYPE=Ethernet
BOOTPROTO=static
DEFROUTE=yes
NAME=ens32
DEVICE=ens32
ONBOOT=yes
IPADDR=10.68.3.121
NETMASK=255.255.255.0
GATEWAY=10.68.3.1
DNS1=10.68.15.10

# Restart networking
nmcli c reload    # reload the configuration files
nmcli c up ens32
```

- mon2

```bash
[root@localhost ~]# vim /etc/sysconfig/network-scripts/ifcfg-ens32
TYPE=Ethernet
BOOTPROTO=static
DEFROUTE=yes
NAME=ens32
DEVICE=ens32
ONBOOT=yes
IPADDR=10.68.3.122
NETMASK=255.255.255.0
GATEWAY=10.68.3.1
DNS1=10.68.15.10

# Restart networking
nmcli c reload    # reload the configuration files
nmcli c up ens32
```

- mon3

```bash
[root@localhost ~]# vi /etc/sysconfig/network-scripts/ifcfg-ens32
TYPE=Ethernet
BOOTPROTO=static
DEFROUTE=yes
NAME=ens32
DEVICE=ens32
ONBOOT=yes
IPADDR=10.68.3.123
NETMASK=255.255.255.0
GATEWAY=10.68.3.1
DNS1=10.68.15.10

# Restart networking
nmcli c reload    # reload the configuration files
nmcli c up ens32
```
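The three interface files differ only in `IPADDR`, so they could be rendered from one template. A minimal sketch, assuming the interface name `ens32` and the netmask, gateway, and DNS values shown above; `render_ifcfg` is a hypothetical helper name, and it only prints the file content rather than writing it:

```bash
#!/usr/bin/env bash
# Hypothetical helper: print an ifcfg file for one host.
# Interface name, netmask, gateway and DNS are copied from the examples above.
render_ifcfg() {
    local ip="$1" dev="${2:-ens32}"
    cat <<EOF
TYPE=Ethernet
BOOTPROTO=static
DEFROUTE=yes
NAME=${dev}
DEVICE=${dev}
ONBOOT=yes
IPADDR=${ip}
NETMASK=255.255.255.0
GATEWAY=10.68.3.1
DNS1=10.68.15.10
EOF
}

# Print the file content for mon2.
render_ifcfg 10.68.3.122
```

To apply it, the output would be redirected to `/etc/sysconfig/network-scripts/ifcfg-ens32` on the target host, followed by the `nmcli` commands shown above.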

## 2.2- Disable firewalld and SELinux

```bash
systemctl stop firewalld
systemctl disable firewalld
sed -i 's/^SELINUX=.*/SELINUX=disabled/' /etc/selinux/config
reboot
```

## 2.3- Hostnames

Run the matching command on each host:

```bash
# mon1
hostnamectl set-hostname mon1
# mon2
hostnamectl set-hostname mon2
# mon3
hostnamectl set-hostname mon3
# node1
hostnamectl set-hostname node1
# node2
hostnamectl set-hostname node2
```

## 2.4- Configure the Alibaba Cloud yum mirror for CentOS 8

```bash
curl -o /etc/yum.repos.d/CentOS-Base.repo https://mirrors.aliyun.com/repo/Centos-8.repo
dnf install -y epel-release
sed -i 's|^#baseurl=https://download.fedoraproject.org/pub|baseurl=https://mirrors.tuna.tsinghua.edu.cn|' /etc/yum.repos.d/epel*
sed -i 's|^metalink|#metalink|' /etc/yum.repos.d/epel*
```

## 2.5- Configure time synchronization

```bash
yum install -y chrony
systemctl enable --now chronyd

vi /etc/chrony.conf
# In /etc/chrony.conf, point at the local NTP server and comment out the default pool:
server 10.68.3.101 iburst
#pool 2.centos.pool.ntp.org iburst

systemctl restart chronyd
chronyc sources
```

## 2.6- Configure the Ceph repository

```bash
cat >>/etc/yum.repos.d/ceph.repo<< eof
# baseurl points at the x86_64 directory of the mirror
[ceph]
name=ceph
baseurl=https://mirrors.aliyun.com/ceph/rpm-octopus/el8/x86_64/
gpgcheck=0
enabled=1
# baseurl points at the noarch directory of the mirror
[ceph-noarch]
name=ceph-noarch
baseurl=https://mirrors.aliyun.com/ceph/rpm-octopus/el8/noarch/
gpgcheck=0
enabled=1
eof
```

## 2.7- Configure the hosts file on mon1

```bash
cat << eof > /etc/hosts
127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4
::1 localhost localhost.localdomain localhost6 localhost6.localdomain6
10.68.3.121 mon1
10.68.3.122 mon2
10.68.3.123 mon3
10.68.3.131 node1
10.68.3.132 node2
eof

scp /etc/hosts mon2:/etc/
scp /etc/hosts mon3:/etc/
scp /etc/hosts node1:/etc/
scp /etc/hosts node2:/etc/
```

## 2.8- Set up passwordless SSH from mon1 to mon1, mon2, and mon3

```bash
[root@harbor ~]# ssh mon1
Last login: Sat Dec 26 14:07:31 2020 from 10.68.3.101
[root@mon1 ~]# ssh-keygen
Generating public/private rsa key pair.
Enter file in which to save the key (/root/.ssh/id_rsa):
Enter passphrase (empty for no passphrase):
Enter same passphrase again:
Your identification has been saved in /root/.ssh/id_rsa.
Your public key has been saved in /root/.ssh/id_rsa.pub.
The key fingerprint is:
SHA256:N1G3WMgZ4VU9KGdG2QNXfmAXovwf8ahaqpxcTU2nALA root@mon1.shz.scom
The key's randomart image is:
+---[RSA 3072]----+
| ....=O@+B|
| ..==%oO.|
| E .oO o.*|
| ..+ ++|
| S o ..+..|
| . + .. .|
| . + . |
| o o + |
| =.o |
+----[SHA256]-----+
[root@mon1 ~]#

ssh-copy-id mon1
ssh-copy-id mon2
ssh-copy-id mon3
```

## 2.9- Install required packages

```bash
# Base tools
dnf install vim net-tools nmap wget curl telnet bind-utils -y
# Container packages
dnf install python3 podman -y
```

## 2.10- Configure a registry mirror for podman

```bash
cat > /etc/containers/registries.conf <<eof
unqualified-search-registries = ["docker.io"]

[[registry]]
prefix = "docker.io"
insecure = true
location = "ung2thfc.mirror.aliyuncs.com"
eof

scp /etc/containers/registries.conf mon2:/etc/containers/
scp /etc/containers/registries.conf mon3:/etc/containers/
```
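The repeated `scp` steps in this section could be wrapped in a small loop. A minimal sketch, assuming root SSH access to the listed hosts; `push_file` and the `DRY_RUN` switch are hypothetical names introduced here, and with `DRY_RUN=1` (the default in this sketch) the commands are only printed:

```bash
#!/usr/bin/env bash
# Hypothetical helper: copy one file to the same path on several hosts.
# With DRY_RUN=1 (the default here) it only prints the scp commands.
DRY_RUN="${DRY_RUN:-1}"

push_file() {
    local file="$1"; shift
    local host
    for host in "$@"; do
        if [ "$DRY_RUN" = "1" ]; then
            echo "scp ${file} ${host}:${file}"
        else
            scp "${file}" "${host}:${file}"
        fi
    done
}

# Same files and targets as in sections 2.7 and 2.10.
push_file /etc/hosts mon2 mon3 node1 node2
push_file /etc/containers/registries.conf mon2 mon3
```

Setting `DRY_RUN=0` before calling the function would perform the copies.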

# 3- Install the cluster hosts

## 3.1- Install cephadm on mon1

```bash
dnf install -y cephadm
```

## 3.2- Deploy the mon node

mkdir -p /opt/ceph

cd /opt/ceph

cephadm bootstrap -h # show help

podman pull docker.io/ceph/ceph:v15 # pull the images on all nodes first

podman pull docker.io/prom/node-exporter:v0.18.1

cephadm bootstrap --mon-ip 10.68.3.121 --mon-id mon1 \

--initial-dashboard-user admin \

--initial-dashboard-password admin \

--output-dir /opt/ceph/ \

--mon-addrv 10.68.3.0/24

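Notes

`--mon-addrv` is documented as taking a bracketed address vector such as `[v2:10.68.3.121:3300,v1:10.68.3.121:6789]`, not a CIDR. Because `--mon-ip` is also given, the monitor address in the log below comes from `--mon-ip`, so the `--mon-addrv 10.68.3.0/24` argument appears to have no effect here and can probably be dropped.

Required images: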

prom/prometheus latest 396dc3b4e717 31 hours ago 142MB

prom/alertmanager latest c876f5897d7b 2 days ago 55.5MB

prom/node-exporter latest 0e0218889c33 3 days ago 26.4MB

ceph/ceph v15 d72755c420bc 3 weeks ago 1.11GB

ceph/ceph-grafana latest 87a51ecf0b1c 3 months ago 509MB

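- ceph-mgr: Ceph manager daemon
- ceph-monitor: Ceph monitor
- ceph-crash: crash data collection module
- prometheus: Prometheus monitoring component
- grafana: dashboard for displaying monitoring data
- alertmanager: Prometheus alerting component
- node_exporter: Prometheus node metrics collector

Bootstrap output: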

[root@mon1 ceph]# cephadm bootstrap --mon-ip 10.68.3.121 --mon-id mon1 \

> --initial-dashboard-user admin \

> --initial-dashboard-password admin \

> --output-dir /opt/ceph/ \

> --mon-addrv 10.68.3.0/24

Verifying podman|docker is present...

Verifying lvm2 is present...

Verifying time synchronization is in place...

Unit chronyd.service is enabled and running

Repeating the final host check...

podman|docker (/usr/bin/podman) is present

systemctl is present

lvcreate is present

Unit chronyd.service is enabled and running

Host looks OK

Cluster fsid: 4eec4012-4793-11eb-8510-000c2937b6b2

Verifying IP 10.68.3.121 port 3300 ...

Verifying IP 10.68.3.121 port 6789 ...

Mon IP 10.68.3.121 is in CIDR network 10.68.3.0/24

Pulling container image docker.io/ceph/ceph:v15...

Extracting ceph user uid/gid from container image...

Creating initial keys...

Creating initial monmap...

Creating mon...

Waiting for mon to start...

Waiting for mon...

mon is available

Assimilating anything we can from ceph.conf...

Generating new minimal ceph.conf...

Restarting the monitor...

Setting mon public_network...

Creating mgr...

Verifying port 9283 ...

Wrote keyring to /opt/ceph/ceph.client.admin.keyring

Wrote config to /opt/ceph/ceph.conf

Waiting for mgr to start...

Waiting for mgr...

mgr not available, waiting (1/10)...

mgr not available, waiting (2/10)...

mgr not available, waiting (3/10)...

mgr not available, waiting (4/10)...

mgr is available

Enabling cephadm module...

Waiting for the mgr to restart...

Waiting for Mgr epoch 5...

Mgr epoch 5 is available

Setting orchestrator backend to cephadm...

Generating ssh key...

Wrote public SSH key to to /opt/ceph/ceph.pub

Adding key to root@localhost's authorized_keys...

Adding host mon1...

Deploying mon service with default placement...

Deploying mgr service with default placement...

Deploying crash service with default placement...

Enabling mgr prometheus module...

Deploying prometheus service with default placement...

Deploying grafana service with default placement...

Deploying node-exporter service with default placement...

Deploying alertmanager service with default placement...

Enabling the dashboard module...

Waiting for the mgr to restart...

Waiting for Mgr epoch 13...

Mgr epoch 13 is available

Generating a dashboard self-signed certificate...

Creating initial admin user...

Fetching dashboard port number...

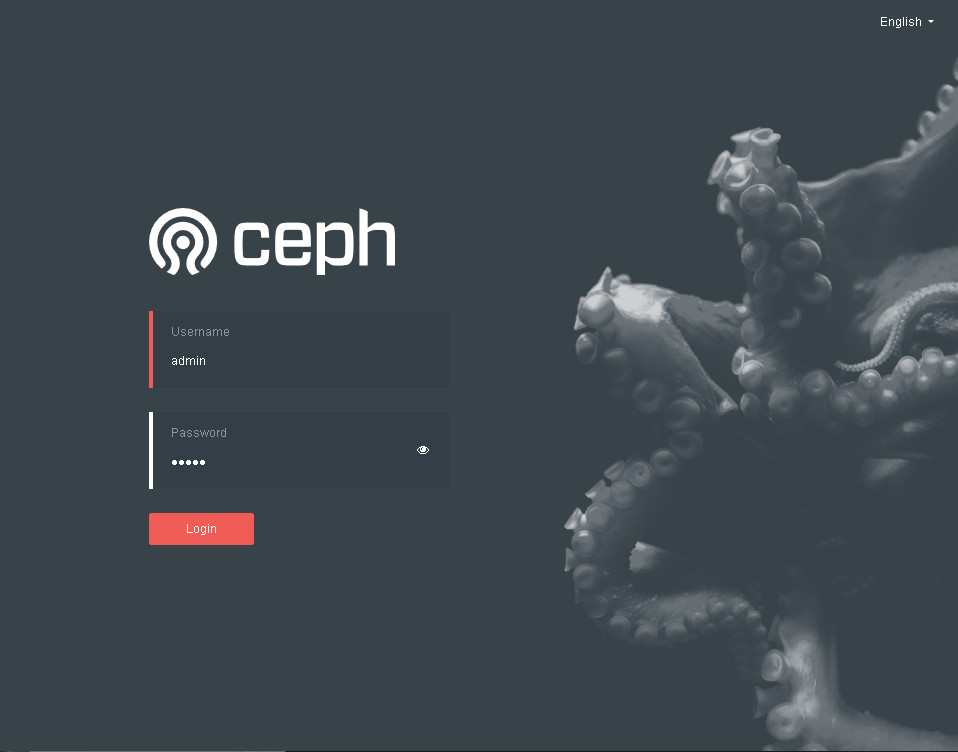

Ceph Dashboard is now available at:

URL: https://mon1:8443/

User: admin

Password: admin

You can access the Ceph CLI with:

sudo /usr/sbin/cephadm shell --fsid 4eec4012-4793-11eb-8510-000c2937b6b2 -c /opt/ceph/ceph.conf -k /opt/ceph/ceph.client.admin.keyring

Please consider enabling telemetry to help improve Ceph:

ceph telemetry on

For more information see:

https://docs.ceph.com/docs/master/mgr/telemetry/

Bootstrap complete.

Check the deployed version:

cephadm shell -- ceph -v

Inferring fsid a7a318dc-4759-11eb-afff-000c29b1c7bb

Inferring config /var/lib/ceph/a7a318dc-4759-11eb-afff-000c29b1c7bb/mon.mon1.shz.scom/config

Using recent ceph image docker.io/ceph/ceph:v15

ceph version 15.2.8 (bdf3eebcd22d7d0b3dd4d5501bee5bac354d5b55) octopus (stable)

Show the running status of all daemons:

ceph orch ps

[ceph: root@mon1 /]# ceph orch ps

NAME HOST STATUS REFRESHED AGE VERSION IMAGE NAME IMAGE ID CONTAINER ID

crash.mon1 mon1 running (10h) 8m ago 10h 15.2.8 docker.io/ceph/ceph:v15 5553b0cb212c c3eb9b8ef11b

crash.mon2 mon2 running (9h) 9m ago 9h 15.2.8 docker.io/ceph/ceph:v15 5553b0cb212c 5d7a6dc7b7bf

crash.mon3 mon3 running (9h) 9m ago 9h 15.2.8 docker.io/ceph/ceph:v15 5553b0cb212c 580b9209408a

crash.node1 node1 running (68m) 19m ago 68m 15.2.8 docker.io/ceph/ceph:v15 5553b0cb212c 830ae455edd2

crash.node2 node2 running (68m) 9m ago 68m 15.2.8 docker.io/ceph/ceph:v15 5553b0cb212c b32bfe41437b

mds.cephfs.mon1.gqfzjd mon1 running (37m) 8m ago 37m 15.2.8 docker.io/ceph/ceph:v15 5553b0cb212c cec0c2fabba2

mds.cephfs.mon2.ofbuhd mon2 running (37m) 9m ago 37m 15.2.8 docker.io/ceph/ceph:v15 5553b0cb212c b2ce742bdb25

mds.cephfs.mon3.scdcas mon3 running (37m) 9m ago 37m 15.2.8 docker.io/ceph/ceph:v15 5553b0cb212c 1665d7ea6458

mgr.mon1.gxivji mon1 running (10h) 8m ago 10h 15.2.8 docker.io/ceph/ceph:v15 5553b0cb212c 9895e70a88e5

mgr.mon3.wcdgws mon3 running (9h) 9m ago 9h 15.2.8 docker.io/ceph/ceph:v15 5553b0cb212c 9671cfcc4879

mon.mon1 mon1 running (10h) 8m ago 10h 15.2.8 docker.io/ceph/ceph:v15 5553b0cb212c c6d2edfe749e

mon.mon2 mon2 running (90m) 9m ago 90m 15.2.8 docker.io/ceph/ceph:v15 5553b0cb212c 6831474ae296

mon.mon3 mon3 running (8h) 9m ago 8h 15.2.8 docker.io/ceph/ceph:v15 5553b0cb212c 1dad20cfb8cb

node-exporter.mon1 mon1 running (9h) 8m ago 9h 0.18.1 docker.io/prom/node-exporter:v0.18.1 e5a616e4b9cf a1769a2c96e3

node-exporter.mon2 mon2 running (8h) 9m ago 8h 0.18.1 docker.io/prom/node-exporter:v0.18.1 e5a616e4b9cf a263814973cf

node-exporter.mon3 mon3 running (9h) 9m ago 9h 0.18.1 docker.io/prom/node-exporter:v0.18.1 e5a616e4b9cf a98474474801

node-exporter.node1 node1 running (100m) 19m ago 8h 0.18.1 docker.io/prom/node-exporter:v0.18.1 e5a616e4b9cf 43c2b9c1b577

node-exporter.node2 node2 running (55m) 9m ago 8h 0.18.1 docker.io/prom/node-exporter:v0.18.1 e5a616e4b9cf 001c9c481fde

osd.0 mon1 running (108m) 8m ago 108m 15.2.8 docker.io/ceph/ceph:v15 5553b0cb212c 69ed8a7c2674

osd.1 mon2 running (107m) 9m ago 107m 15.2.8 docker.io/ceph/ceph:v15 5553b0cb212c c6582ada37ea

osd.10 node2 running (63m) 9m ago 63m 15.2.8 docker.io/ceph/ceph:v15 5553b0cb212c 3fbb521b4de8

osd.11 node1 running (59m) 19m ago 59m 15.2.8 docker.io/ceph/ceph:v15 5553b0cb212c f04a44147db3

osd.12 node2 running (58m) 9m ago 58m 15.2.8 docker.io/ceph/ceph:v15 5553b0cb212c ac57964f9918

osd.13 node1 running (57m) 19m ago 57m 15.2.8 docker.io/ceph/ceph:v15 5553b0cb212c 48b64e0c65bb

osd.14 node2 running (57m) 9m ago 57m 15.2.8 docker.io/ceph/ceph:v15 5553b0cb212c 0255eaf95a17

osd.2 mon3 running (106m) 9m ago 106m 15.2.8 docker.io/ceph/ceph:v15 5553b0cb212c 8c29e7a27e5f

osd.3 mon1 running (104m) 8m ago 104m 15.2.8 docker.io/ceph/ceph:v15 5553b0cb212c b1ac841836d7

osd.4 mon2 running (103m) 9m ago 103m 15.2.8 docker.io/ceph/ceph:v15 5553b0cb212c dcf2b0c46391

osd.5 mon3 running (102m) 9m ago 102m 15.2.8 docker.io/ceph/ceph:v15 5553b0cb212c c35535ed359f

osd.6 mon1 running (101m) 8m ago 101m 15.2.8 docker.io/ceph/ceph:v15 5553b0cb212c 33337487b7dc

osd.7 mon2 running (101m) 9m ago 101m 15.2.8 docker.io/ceph/ceph:v15 5553b0cb212c 9e401f42c863

osd.8 mon3 running (100m) 9m ago 100m 15.2.8 docker.io/ceph/ceph:v15 5553b0cb212c c5a821d0a425

osd.9 node1 running (66m) 19m ago 66m 15.2.8 docker.io/ceph/ceph:v15 5553b0cb212c 7ec2e34b3f2c

rgw.myorg.cn-east-1.mon1.mmloiz mon1 running (10m) 8m ago 10m 15.2.8 docker.io/ceph/ceph:v15 5553b0cb212c a2e23fd30cd8

rgw.myorg.cn-east-1.mon2.epselk mon2 running (10m) 9m ago 10m 15.2.8 docker.io/ceph/ceph:v15 5553b0cb212c 073eea9b742c

rgw.myorg.cn-east-1.mon3.zxoura mon3 running (10m) 9m ago 10m 15.2.8 docker.io/ceph/ceph:v15

Show the running status of one daemon type:

ceph orch ps --daemon-type mds

[ceph: root@mon1 /]# ceph orch ps --daemon-type mds

NAME HOST STATUS REFRESHED AGE VERSION IMAGE NAME IMAGE ID CONTAINER ID

mds.cephfs.mon1.gqfzjd mon1 running (40m) 51s ago 40m 15.2.8 docker.io/ceph/ceph:v15 5553b0cb212c cec0c2fabba2

mds.cephfs.mon2.ofbuhd mon2 running (40m) 69s ago 40m 15.2.8 docker.io/ceph/ceph:v15 5553b0cb212c b2ce742bdb25

mds.cephfs.mon3.scdcas mon3 running (39m) 63s ago 39m 15.2.8 docker.io/ceph/ceph:v15 5553b0cb212c 1665d7ea6458

Inspect the container status:

[root@mon1 ~]# cephadm ls

[

{

"style": "cephadm:v1",

"name": "mon.mon1",

"fsid": "4eec4012-4793-11eb-8510-000c2937b6b2",

"systemd_unit": "ceph-4eec4012-4793-11eb-8510-000c2937b6b2@mon.mon1",

"enabled": true,

"state": "running",

"container_id": "c6d2edfe749e8c519c113321ca688e242fb32e074067523e86285ce33c10e627",

"container_image_name": "docker.io/ceph/ceph:v15",

"container_image_id": "5553b0cb212ca2aa220d33ba39d9c602c8412ce6c5febc57ef9cdc9c5844b185",

"version": "15.2.8",

"started": "2020-12-26T15:59:40.475291",

"created": "2020-12-26T15:59:33.022058",

"deployed": "2020-12-26T15:59:30.108049",

"configured": "2020-12-27T00:51:17.543723"

},

{

"style": "cephadm:v1",

"name": "mgr.mon1.gxivji",

"fsid": "4eec4012-4793-11eb-8510-000c2937b6b2",

"systemd_unit": "ceph-4eec4012-4793-11eb-8510-000c2937b6b2@mgr.mon1.gxivji",

"enabled": true,

"state": "running",

"container_id": "9895e70a88e5ec18567c4beb5014d6bce641196a4a5fc67705b474fc7f41eac5",

"container_image_name": "docker.io/ceph/ceph:v15",

"container_image_id": "5553b0cb212ca2aa220d33ba39d9c602c8412ce6c5febc57ef9cdc9c5844b185",

"version": "15.2.8",

"started": "2020-12-26T15:59:44.195130",

"created": "2020-12-26T15:59:45.021095",

"deployed": "2020-12-26T15:59:42.846089",

"configured": "2020-12-27T00:51:23.328741"

},

{

"style": "cephadm:v1",

"name": "crash.mon1",

"fsid": "4eec4012-4793-11eb-8510-000c2937b6b2",

"systemd_unit": "ceph-4eec4012-4793-11eb-8510-000c2937b6b2@crash.mon1",

"enabled": true,

"state": "running",

"container_id": "c3eb9b8ef11b197c7490b9d5c1beacfd661428e8e1a9871d45cd23402af67cfd",

"container_image_name": "docker.io/ceph/ceph:v15",

"container_image_id": "5553b0cb212ca2aa220d33ba39d9c602c8412ce6c5febc57ef9cdc9c5844b185",

"version": "15.2.8",

"started": "2020-12-26T16:05:38.062720",

"created": "2020-12-26T16:05:38.795192",

"deployed": "2020-12-26T16:05:36.739186",

"configured": "2020-12-27T00:51:29.302760"

},

{

"style": "cephadm:v1",

"name": "node-exporter.mon1",

"fsid": "4eec4012-4793-11eb-8510-000c2937b6b2",

"systemd_unit": "ceph-4eec4012-4793-11eb-8510-000c2937b6b2@node-exporter.mon1",

"enabled": true,

"state": "running",

"container_id": "a1769a2c96e3dbfc3db13b4a0459c675863f8fc82f7fb4cf56ca9694e2cd250f",

"container_image_name": "docker.io/prom/node-exporter:v0.18.1",

"container_image_id": "e5a616e4b9cf68dfcad7782b78e118be4310022e874d52da85c55923fb615f87",

"version": "0.18.1",

"started": "2020-12-26T16:07:43.203186",

"created": "2020-12-26T16:07:44.077578",

"deployed": "2020-12-26T16:07:41.836571",

"configured": "2020-12-26T16:07:44.077578"

},

{

"style": "cephadm:v1",

"name": "osd.0",

"fsid": "4eec4012-4793-11eb-8510-000c2937b6b2",

"systemd_unit": "ceph-4eec4012-4793-11eb-8510-000c2937b6b2@osd.0",

"enabled": true,

"state": "running",

"container_id": "69ed8a7c26747918ecf99f57c1f9ff541f3c27ced1fe09511da13249d71beba2",

"container_image_name": "docker.io/ceph/ceph:v15",

"container_image_id": "5553b0cb212ca2aa220d33ba39d9c602c8412ce6c5febc57ef9cdc9c5844b185",

"version": "15.2.8",

"started": "2020-12-27T00:18:32.224978",

"created": "2020-12-27T00:18:32.846564",

"deployed": "2020-12-27T00:18:05.535499",

"configured": "2020-12-27T00:51:33.663774"

},

{

"style": "cephadm:v1",

"name": "osd.3",

"fsid": "4eec4012-4793-11eb-8510-000c2937b6b2",

"systemd_unit": "ceph-4eec4012-4793-11eb-8510-000c2937b6b2@osd.3",

"enabled": true,

"state": "running",

"container_id": "b1ac841836d75a055ef40136673b8ee856b0cb4879e8cec6633d825894efdbe2",

"container_image_name": "docker.io/ceph/ceph:v15",

"container_image_id": "5553b0cb212ca2aa220d33ba39d9c602c8412ce6c5febc57ef9cdc9c5844b185",

"version": "15.2.8",

"started": "2020-12-27T00:22:58.278257",

"created": "2020-12-27T00:22:58.768322",

"deployed": "2020-12-27T00:22:51.641300",

"configured": "2020-12-27T00:51:39.002791"

},

{

"style": "cephadm:v1",

"name": "osd.6",

"fsid": "4eec4012-4793-11eb-8510-000c2937b6b2",

"systemd_unit": "ceph-4eec4012-4793-11eb-8510-000c2937b6b2@osd.6",

"enabled": true,

"state": "running",

"container_id": "33337487b7dce1161b86ab205a87fdff4e51d562bfb6bb6601a1297faa82377e",

"container_image_name": "docker.io/ceph/ceph:v15",

"container_image_id": "5553b0cb212ca2aa220d33ba39d9c602c8412ce6c5febc57ef9cdc9c5844b185",

"version": "15.2.8",

"started": "2020-12-27T00:25:45.436339",

"created": "2020-12-27T00:25:46.036788",

"deployed": "2020-12-27T00:25:38.117763",

"configured": "2020-12-27T00:51:43.363805"

},

{

"style": "cephadm:v1",

"name": "mds.cephfs.mon1.gqfzjd",

"fsid": "4eec4012-4793-11eb-8510-000c2937b6b2",

"systemd_unit": "ceph-4eec4012-4793-11eb-8510-000c2937b6b2@mds.cephfs.mon1.gqfzjd",

"enabled": true,

"state": "running",

"container_id": "cec0c2fabba2ac6ae4a6f9f347bc85b7615d7f5d56d3346edabe9cf90d938be8",

"container_image_name": "docker.io/ceph/ceph:v15",

"container_image_id": "5553b0cb212ca2aa220d33ba39d9c602c8412ce6c5febc57ef9cdc9c5844b185",

"version": "15.2.8",

"started": "2020-12-27T01:29:50.644960",

"created": "2020-12-27T01:29:51.786702",

"deployed": "2020-12-27T01:29:47.058688",

"configured": "2020-12-27T01:29:51.787702"

},

{

"style": "cephadm:v1",

"name": "rgw.myorg.cn-east-1.mon1.mmloiz",

"fsid": "4eec4012-4793-11eb-8510-000c2937b6b2",

"systemd_unit": "ceph-4eec4012-4793-11eb-8510-000c2937b6b2@rgw.myorg.cn-east-1.mon1.mmloiz",

"enabled": true,

"state": "running",

"container_id": "a2e23fd30cd8f0c228a9b15a24e122c861d3e184f75cb22798272889a341c370",

"container_image_name": "docker.io/ceph/ceph:v15",

"container_image_id": "5553b0cb212ca2aa220d33ba39d9c602c8412ce6c5febc57ef9cdc9c5844b185",

"version": "15.2.8",

"started": "2020-12-27T01:56:51.577001",

"created": "2020-12-27T01:56:53.006791",

"deployed": "2020-12-27T01:56:47.806775",

"configured": "2020-12-27T01:56:53.006791"

},

{

"style": "cephadm:v1",

"name": "nfs.foo.mon1",

"fsid": "4eec4012-4793-11eb-8510-000c2937b6b2",

"systemd_unit": "ceph-4eec4012-4793-11eb-8510-000c2937b6b2@nfs.foo.mon1",

"enabled": true,

"state": "running",

"container_id": "e032427d71431ecc1106feaf40795b5f6ed3ac071cf5a91ed0e9952945fc0676",

"container_image_name": "docker.io/ceph/ceph:v15",

"container_image_id": "5553b0cb212ca2aa220d33ba39d9c602c8412ce6c5febc57ef9cdc9c5844b185",

"version": "3.3",

"started": "2020-12-27T02:08:18.607214",

"created": "2020-12-27T02:08:19.757851",

"deployed": "2020-12-27T02:08:10.168822",

"configured": "2020-12-27T02:08:19.757851"

}

]
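The JSON listing is long, so a compact view can be easier to scan. A rough sketch, assuming the output keeps one key per line as in the listing above; `summarize_daemons` is a hypothetical helper name, and a real JSON tool would be more robust than this line-based `awk` filter:

```bash
#!/usr/bin/env bash
# Print "name state version" for each daemon from `cephadm ls`-style JSON
# read on stdin. Assumes one key per line, as in the listing above.
summarize_daemons() {
    awk -F'"' '
        /"name":/    { name = $4 }
        /"state":/   { state = $4 }
        /"version":/ { print name, state, $4 }
    '
}

# On a cluster host this would be: cephadm ls | summarize_daemons
# Demo on a fragment copied from the listing above:
summarize_daemons <<'EOF'
"style": "cephadm:v1",
"name": "mon.mon1",
"state": "running",
"container_image_name": "docker.io/ceph/ceph:v15",
"version": "15.2.8",
EOF
# prints: mon.mon1 running 15.2.8
```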

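## 3.3- Log in to the web dashboard and check the result

The dashboard URL and credentials are printed at the end of the bootstrap output (https://mon1:8443/, user admin).

## 3.4- Connect to Ceph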

/usr/sbin/cephadm shell --fsid a7a318dc-4759-11eb-afff-000c29b1c7bb -c /opt/ceph/ceph.conf -k /opt/ceph/ceph.client.admin.keyring

[root@mon1 ceph]# /usr/sbin/cephadm shell --fsid a7a318dc-4759-11eb-afff-000c29b1c7bb -c /opt/ceph/ceph.conf -k /opt/ceph/ceph.client.admin.keyring

Using recent ceph image docker.io/ceph/ceph:v15

Check the cluster:

[ceph: root@mon1 /]# ceph -s

cluster:

id: a7a318dc-4759-11eb-afff-000c29b1c7bb

health: HEALTH_WARN

OSD count 0 < osd_pool_default_size 3

services:

mon: 1 daemons, quorum mon1.shz.scom (age 22m)

mgr: mon1.shz.scom.tdlifn(active, since 21m)

osd: 0 osds: 0 up, 0 in

data:

pools: 0 pools, 0 pgs

objects: 0 objects, 0 B

usage: 0 B used, 0 B / 0 B avail

pgs:

[ceph: root@mon1 /]# ceph status

cluster:

id: a7a318dc-4759-11eb-afff-000c29b1c7bb

health: HEALTH_WARN

OSD count 0 < osd_pool_default_size 3

services:

mon: 1 daemons, quorum mon1.shz.scom (age 23m)

mgr: mon1.shz.scom.tdlifn(active, since 21m)

osd: 0 osds: 0 up, 0 in

data:

pools: 0 pools, 0 pgs

objects: 0 objects, 0 B

usage: 0 B used, 0 B / 0 B avail

pgs:

Exit:

exit
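The long `cephadm shell` invocation recurs throughout this guide, so a small wrapper can save typing. A minimal sketch, assuming the fsid and file paths from the transcripts; `ceph_sh`, `FSID`, `CONF`, `KEYRING`, and `DRY_RUN` are hypothetical names introduced here:

```bash
#!/usr/bin/env bash
# Hypothetical wrapper around the long `cephadm shell` invocation used in this guide.
# FSID, CONF and KEYRING default to the values from the transcripts; adjust as needed.
FSID="${FSID:-a7a318dc-4759-11eb-afff-000c29b1c7bb}"
CONF="${CONF:-/opt/ceph/ceph.conf}"
KEYRING="${KEYRING:-/opt/ceph/ceph.client.admin.keyring}"

ceph_sh() {
    # Build the full command; everything after "--" runs inside the container.
    local cmd=(/usr/sbin/cephadm shell --fsid "$FSID" -c "$CONF" -k "$KEYRING" -- "$@")
    if [ "${DRY_RUN:-0}" = "1" ]; then
        echo "${cmd[*]}"      # only print the command
    else
        "${cmd[@]}"           # run it
    fi
}

# Show what would run for `ceph -s` without executing it.
DRY_RUN=1 ceph_sh ceph -s
```

Without `DRY_RUN=1`, `ceph_sh ceph orch host ls` would run the same command form that appears in the transcripts of section 3.5.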

## 3.5- Add more nodes

Copy the cluster's public key to the new hosts:

ssh-copy-id -f -i /opt/ceph/ceph.pub root@mon2

ssh-copy-id -f -i /opt/ceph/ceph.pub root@mon3

Enter the Ceph shell:

/usr/sbin/cephadm shell --fsid a7a318dc-4759-11eb-afff-000c29b1c7bb -c /opt/ceph/ceph.conf -k /opt/ceph/ceph.client.admin.keyring

List the existing hosts:

[ceph: root@mon1 /]# ceph orch host ls

HOST ADDR LABELS STATUS

mon1.shz.scom mon1.shz.scom

Mark the mon service as unmanaged so that newly added hosts do not automatically become mon nodes:

ceph orch apply mon --unmanaged

[ceph: root@mon1 /]# ceph orch apply mon --unmanaged

Scheduled mon update...

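Add the hosts

Command syntax:

ceph orch host add <hostname> [<addr>] [<labels>...]

Note: the hostname and the IP address should both be supplied when adding a host. The commands below give only the hostname, which relies on the /etc/hosts entries from section 2.7 for resolution.

ceph orch host add mon2

ceph orch host add mon3

Transcript: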

[root@mon1 ceph]# /usr/sbin/cephadm shell --fsid a7a318dc-4759-11eb-afff-000c29b1c7bb -c /opt/ceph/ceph.conf -k /opt/ceph/ceph.client.admin.keyring -- ceph orch host add mon2

Using recent ceph image docker.io/ceph/ceph:v15

Added host 'mon2'

[root@mon1 ceph]# /usr/sbin/cephadm shell --fsid a7a318dc-4759-11eb-afff-000c29b1c7bb -c /opt/ceph/ceph.conf -k /opt/ceph/ceph.client.admin.keyring -- ceph orch host add mon3

Using recent ceph image docker.io/ceph/ceph:v15

Added host 'mon3'

To remove a host, use `ceph orch host rm <hostname>`.

Confirm the result:

[root@mon1 ceph]# /usr/sbin/cephadm shell --fsid a7a318dc-4759-11eb-afff-000c29b1c7bb -c /opt/ceph/ceph.conf -k /opt/ceph/ceph.client.admin.keyring -- ceph orch host ls

Using recent ceph image docker.io/ceph/ceph:v15

HOST ADDR LABELS STATUS

mon1 mon1

mon2 mon2

mon3 mon3
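Adding a host always takes the same two steps (install the cluster's public key, then register the host), so they can be looped. A minimal sketch, assuming the `ceph` CLI is reachable from the host where it runs (for example after installing ceph-common as in section 5.1); `add_hosts`, `run`, `PUBKEY`, and `DRY_RUN` are hypothetical names, and the default here only prints the commands:

```bash
#!/usr/bin/env bash
# Print (DRY_RUN=1, the default here) or run the two steps needed per new host:
# install the cluster's SSH public key, then register the host with the orchestrator.
DRY_RUN="${DRY_RUN:-1}"
PUBKEY="${PUBKEY:-/opt/ceph/ceph.pub}"

run() {
    if [ "$DRY_RUN" = "1" ]; then echo "$*"; else "$@"; fi
}

add_hosts() {
    local host
    for host in "$@"; do
        run ssh-copy-id -f -i "$PUBKEY" "root@${host}"
        run ceph orch host add "$host"
    done
}

add_hosts mon2 mon3
```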

## 3.6- Add mon nodes

Use host labels to control which hosts run monitors:

[root@mon1 ceph]# /usr/sbin/cephadm shell --fsid a7a318dc-4759-11eb-afff-000c29b1c7bb -c /opt/ceph/ceph.conf -k /opt/ceph/ceph.client.admin.keyring -- ceph orch host label add mon2 mon

Using recent ceph image docker.io/ceph/ceph:v15

Added label mon to host mon2

[root@mon1 ceph]# /usr/sbin/cephadm shell --fsid a7a318dc-4759-11eb-afff-000c29b1c7bb -c /opt/ceph/ceph.conf -k /opt/ceph/ceph.client.admin.keyring -- ceph orch host label add mon3 mon

Using recent ceph image docker.io/ceph/ceph:v15

Added label mon to host mon3

[root@mon1 ceph]# /usr/sbin/cephadm shell --fsid a7a318dc-4759-11eb-afff-000c29b1c7bb -c /opt/ceph/ceph.conf -k /opt/ceph/ceph.client.admin.keyring -- ceph orch host ls

Using recent ceph image docker.io/ceph/ceph:v15

HOST ADDR LABELS STATUS

mon1 mon1

mon2 mon2 mon

mon3 mon3 mon

Deploy monitors according to the label:

[root@mon1 ceph]# /usr/sbin/cephadm shell --fsid a7a318dc-4759-11eb-afff-000c29b1c7bb \

-c /opt/ceph/ceph.conf -k /opt/ceph/ceph.client.admin.keyring \

-- ceph orch apply mon label:mon

Using recent ceph image docker.io/ceph/ceph:v15

Scheduled mon update...

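## 3.7- Add the node hosts

ssh-copy-id -f -i /opt/ceph/ceph.pub root@node1

ssh-copy-id -f -i /opt/ceph/ceph.pub root@node2

Then register both hosts with `ceph orch host add node1` and `ceph orch host add node2`, as was done for mon2 and mon3 in section 3.5; they appear in the `ceph orch host ls` output in section 4.1.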

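# 4- Deploy OSDs

A storage device is considered available only if all of the following conditions are met:

- The device must have no partitions.
- The device must not have any LVM state.
- The device must not be mounted.
- The device must not contain a file system.
- The device must not contain a Ceph BlueStore OSD.
- The device must be larger than 5 GB.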
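The conditions above can be approximated from `lsblk` output before asking the orchestrator. A rough sketch, not cephadm's own check (`ceph orch device ls` remains the authority): it reads `lsblk -b -P` pair lines on stdin and prints whole disks that have no child devices, no filesystem or LVM signature, no mount point, and more than 5 GB (taken here as 5,000,000,000 bytes, which is an assumption). `list_candidate_disks` is a hypothetical name.

```bash
#!/usr/bin/env bash
# Hypothetical pre-check: print disk names that look unused.
# Input: lines from `lsblk -b -P -o NAME,SIZE,TYPE,FSTYPE,MOUNTPOINT,PKNAME`.
list_candidate_disks() {
    awk -v min=5000000000 '
    {
        split("", f); line = $0
        # Parse KEY="VALUE" pairs into the array f.
        while (match(line, /[A-Z]+="[^"]*"/)) {
            pair = substr(line, RSTART, RLENGTH)
            line = substr(line, RSTART + RLENGTH)
            eq = index(pair, "=")
            f[substr(pair, 1, eq - 1)] = substr(pair, eq + 2, length(pair) - eq - 2)
        }
        # Any device with a parent marks that parent as in use (partitions, LVs).
        if (f["PKNAME"] != "") busy[f["PKNAME"]] = 1
        if (f["TYPE"] == "disk" && f["FSTYPE"] == "" && f["MOUNTPOINT"] == "" && f["SIZE"] + 0 > min)
            cand[++n] = f["NAME"]
    }
    END { for (i = 1; i <= n; i++) if (!(cand[i] in busy)) print cand[i] }'
}

# Demo with made-up sample data; on a real host pipe lsblk into the function.
list_candidate_disks <<'EOF'
NAME="sda" SIZE="17179869184" TYPE="disk" FSTYPE="" MOUNTPOINT="" PKNAME=""
NAME="sda1" SIZE="1073741824" TYPE="part" FSTYPE="xfs" MOUNTPOINT="/boot" PKNAME="sda"
NAME="sdb" SIZE="8589934592" TYPE="disk" FSTYPE="" MOUNTPOINT="" PKNAME=""
NAME="sdc" SIZE="8589934592" TYPE="disk" FSTYPE="LVM2_member" MOUNTPOINT="" PKNAME=""
NAME="sdd" SIZE="4294967296" TYPE="disk" FSTYPE="" MOUNTPOINT="" PKNAME=""
EOF
# prints: sdb
```

In the sample, `sda` is skipped because it has a partition, `sdc` because it carries an LVM signature, and `sdd` because it is too small.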

## 4.1- Deploy OSDs

- List the available devices

```bash
/usr/sbin/cephadm shell --fsid a7a318dc-4759-11eb-afff-000c29b1c7bb -c /opt/ceph/ceph.conf \
  -k /opt/ceph/ceph.client.admin.keyring

[ceph: root@mon1 /]# ceph orch device ls
Hostname  Path      Type  Serial  Size   Health   Ident  Fault  Available
mon1      /dev/sdb  hdd           8589M  Unknown  N/A    N/A    No
mon1      /dev/sdc  hdd           8589M  Unknown  N/A    N/A    No
mon1      /dev/sdd  hdd           8589M  Unknown  N/A    N/A    No
mon3      /dev/sdb  hdd           8589M  Unknown  N/A    N/A    No
mon3      /dev/sdc  hdd           8589M  Unknown  N/A    N/A    No
mon3      /dev/sdd  hdd           8589M  Unknown  N/A    N/A    No
mon2      /dev/sdb  hdd           8589M  Unknown  N/A    N/A    No
mon2      /dev/sdc  hdd           8589M  Unknown  N/A    N/A    No
mon2      /dev/sdd  hdd           8589M  Unknown  N/A    N/A    No
node2     /dev/sdb  hdd           8589M  Unknown  N/A    N/A    Yes
node2     /dev/sdc  hdd           8589M  Unknown  N/A    N/A    Yes
node2     /dev/sdd  hdd           8589M  Unknown  N/A    N/A    Yes
node1     /dev/sdb  hdd           8589M  Unknown  N/A    N/A    Yes
node1     /dev/sdc  hdd           8589M  Unknown  N/A    N/A    Yes
node1     /dev/sdd  hdd           8589M  Unknown  N/A    N/A    Yes
```

- Add the OSDs (mind the order in which they are added)

```bash
ceph orch daemon add osd mon1:/dev/sdb
ceph orch daemon add osd mon2:/dev/sdb
ceph orch daemon add osd mon3:/dev/sdb
ceph orch daemon add osd mon1:/dev/sdc
ceph orch daemon add osd mon2:/dev/sdc
ceph orch daemon add osd mon3:/dev/sdc
ceph orch daemon add osd mon1:/dev/sdd
ceph orch daemon add osd mon2:/dev/sdd
ceph orch daemon add osd mon3:/dev/sdd
```

- Add the OSDs on the node hosts

```bash
ceph orch host label add node1 osd
ceph orch host label add node2 osd
ceph orch host label add mon1 osd
ceph orch host label add mon2 osd
ceph orch host label add mon3 osd

[ceph: root@mon1 /]# ceph orch host ls
HOST   ADDR   LABELS   STATUS
mon1   mon1   mon osd
mon2   mon2   mon osd
mon3   mon3   mon osd
node1  node1  osd
node2  node2  osd

ceph orch daemon add osd node1:/dev/sdb
ceph orch daemon add osd node2:/dev/sdb
ceph orch daemon add osd node1:/dev/sdc
ceph orch daemon add osd node2:/dev/sdc
ceph orch daemon add osd node1:/dev/sdd
ceph orch daemon add osd node2:/dev/sdd
```
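The order matters because OSD IDs appear to be handed out in creation order: in the `ceph orch ps` listing earlier, osd.0, osd.1, and osd.2 sit on mon1, mon2, and mon3. A minimal sketch that generates the commands device by device across hosts; `osd_add_commands` is a hypothetical helper that only prints the commands:

```bash
#!/usr/bin/env bash
# Print the `ceph orch daemon add osd` commands device by device across hosts,
# matching the order used above (sdb on every host, then sdc, then sdd).
osd_add_commands() {
    local hosts="$1" devices="$2" host dev
    for dev in $devices; do          # outer loop: one device slot at a time
        for host in $hosts; do       # inner loop: every host for that slot
            echo "ceph orch daemon add osd ${host}:${dev}"
        done
    done
}

osd_add_commands "mon1 mon2 mon3" "/dev/sdb /dev/sdc /dev/sdd"
```

The printed lines can be reviewed first and then run inside the cephadm shell.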

## 4.2- Deploy MDS

Using the CephFS file system requires one or more MDS daemons.

- Deploy the metadata servers

```bash
ceph osd pool create cephfs_data 64 64
ceph osd pool create cephfs_metadata 64 64
ceph fs new cephfs cephfs_metadata cephfs_data
ceph fs ls
[ceph: root@mon1 /]# ceph fs ls
name: cephfs, metadata pool: cephfs_metadata, data pools: [cephfs_data ]

ceph orch apply mds cephfs --placement="3 mon1 mon2 mon3"
[ceph: root@mon1 /]# ceph orch apply mds cephfs --placement="3 mon1 mon2 mon3"
Scheduled mds.cephfs update...
```

Confirm the result:

```bash
[ceph: root@mon1 /]# ceph -s
  cluster:
    id:     4eec4012-4793-11eb-8510-000c2937b6b2
    health: HEALTH_OK

  services:
    mon: 3 daemons, quorum mon1,mon3,mon2 (age 17m)
    mgr: mon1.gxivji(active, since 9h), standbys: mon3.wcdgws
    mds: cephfs:1 {0=cephfs.mon1.gqfzjd=up:active} 2 up:standby
    osd: 15 osds: 15 up (since 33m), 15 in (since 33m)

  data:
    pools:   3 pools, 81 pgs
    objects: 22 objects, 2.2 KiB
    usage:   16 GiB used, 104 GiB / 120 GiB avail
    pgs:     81 active+clean
```

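## 4.3- Deploy RGW

Cephadm deploys radosgw as a collection of daemons that manage a particular realm and zone.

With cephadm, radosgw daemons are configured through the monitor configuration database rather than through ceph.conf or the command line. If that configuration is not yet in place (typically in the `client.rgw.<realmname>.<zonename>` section), the radosgw daemons start with default settings (for example, binding to port 80).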
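To make the section naming concrete, here is a small sketch that builds the section name for a realm and zone and prints a matching `ceph config set` command. The realm and zone are the ones used in this guide; port 8080 and the helper names `rgw_section` and `rgw_port_cmd` are assumptions for illustration, and the command is only printed, not run:

```bash
#!/usr/bin/env bash
# Build the config section name for an RGW realm/zone and print a
# `ceph config set` command for it. The port value is only an example.
rgw_section() {
    echo "client.rgw.$1.$2"
}

rgw_port_cmd() {
    local realm="$1" zone="$2" port="$3"
    echo "ceph config set $(rgw_section "$realm" "$zone") rgw_frontends \"beast port=${port}\""
}

rgw_port_cmd myorg cn-east-1 8080
# prints: ceph config set client.rgw.myorg.cn-east-1 rgw_frontends "beast port=8080"
```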

- To deploy 3 rgw daemons serving the myorg realm and the cn-east-1 zone on mon1, mon2, and mon3:

```bash
# If the realm has not been created yet, create it first:
radosgw-admin realm create --rgw-realm=myorg --default

# Next, create a new zonegroup:
radosgw-admin zonegroup create --rgw-zonegroup=default --master --default

# Next, create a zone:
radosgw-admin zone create --rgw-zonegroup=default --rgw-zone=cn-east-1 --master --default

# Deploy a set of radosgw daemons for the realm and zone:
ceph orch apply rgw myorg cn-east-1 --placement="3 mon1 mon2 mon3"
```

Creation transcript:

```bash
[ceph: root@mon1 /]# radosgw-admin realm create --rgw-realm=myorg --default
{
    "id": "27899407-1b2f-43c3-811a-bc154cd07f5d",
    "name": "myorg",
    "current_period": "3dd84558-82f3-471c-9dae-cf72fef06511",
    "epoch": 1
}
[ceph: root@mon1 /]# radosgw-admin zonegroup create --rgw-zonegroup=default --master --default
{
    "id": "1526f5f6-d591-4fa1-91aa-ebc0f1a228c3",
    "name": "default",
    "api_name": "default",
    "is_master": "true",
    "endpoints": [],
    "hostnames": [],
    "hostnames_s3website": [],
    "master_zone": "",
    "zones": [],
    "placement_targets": [],
    "default_placement": "",
    "realm_id": "27899407-1b2f-43c3-811a-bc154cd07f5d",
    "sync_policy": {
        "groups": []
    }
}
[ceph: root@mon1 /]# radosgw-admin zone create --rgw-zonegroup=default --rgw-zone=cn-east-1 --master --default
{
    "id": "053c82a4-13d2-4a7f-a491-37a337598bb3",
    "name": "cn-east-1",
    "domain_root": "cn-east-1.rgw.meta:root",
    "control_pool": "cn-east-1.rgw.control",
    "gc_pool": "cn-east-1.rgw.log:gc",
    "lc_pool": "cn-east-1.rgw.log:lc",
    "log_pool": "cn-east-1.rgw.log",
    "intent_log_pool": "cn-east-1.rgw.log:intent",
    "usage_log_pool": "cn-east-1.rgw.log:usage",
    "roles_pool": "cn-east-1.rgw.meta:roles",
    "reshard_pool": "cn-east-1.rgw.log:reshard",
    "user_keys_pool": "cn-east-1.rgw.meta:users.keys",
    "user_email_pool": "cn-east-1.rgw.meta:users.email",
    "user_swift_pool": "cn-east-1.rgw.meta:users.swift",
    "user_uid_pool": "cn-east-1.rgw.meta:users.uid",
    "otp_pool": "cn-east-1.rgw.otp",
    "system_key": {
        "access_key": "",
        "secret_key": ""
    },
    "placement_pools": [
        {
            "key": "default-placement",
            "val": {
                "index_pool": "cn-east-1.rgw.buckets.index",
                "storage_classes": {
                    "STANDARD": {
                        "data_pool": "cn-east-1.rgw.buckets.data"
                    }
                },
                "data_extra_pool": "cn-east-1.rgw.buckets.non-ec",
                "index_type": 0
            }
        }
    ],
    "realm_id": "27899407-1b2f-43c3-811a-bc154cd07f5d"
}
[ceph: root@mon1 /]# ceph orch apply rgw myorg cn-east-1 --placement="3 mon1 mon2 mon3"
Scheduled rgw.myorg.cn-east-1 update...
```

## 4.4- Deploy NFS Ganesha

Cephadm deploys NFS Ganesha using a predefined RADOS pool and an optional namespace.

To deploy an NFS Ganesha gateway, for example with the service ID foo, using the RADOS pool nfs-ganesha and the namespace nfs-ns:

```bash
ceph osd pool create nfs-ganesha 64 64
ceph orch apply nfs foo nfs-ganesha nfs-ns --placement="3 mon1 mon2 mon3"
ceph osd pool application enable nfs-ganesha cephfs
```

Creation transcript:

```bash
[ceph: root@mon1 /]# ceph osd pool create nfs-ganesha 64 64
pool 'nfs-ganesha' created
[ceph: root@mon1 /]# ceph orch apply nfs foo nfs-ganesha nfs-ns --placement="3 mon1 mon2 mon3"
Scheduled nfs.foo update...
[ceph: root@mon1 /]# ceph osd pool application enable nfs-ganesha cephfs
enabled application 'cephfs' on pool 'nfs-ganesha'
```

# 5- Services

## 5.1- Using the ceph command outside the container

Install the Ceph command-line tools.

- Install ceph-common

```bash
dnf install ceph-common -y
[root@mon1 ~]# ceph -v
ceph version 15.2.8 (bdf3eebcd22d7d0b3dd4d5501bee5bac354d5b55) octopus (stable)
```

- Create the configuration files for connecting to the cluster

```bash
mkdir -p /etc/ceph
cp /opt/ceph/ceph.conf /etc/ceph/
cp /opt/ceph/ceph.client.admin.keyring /etc/ceph/
```

- Verify

```bash
[root@mon1 ~]# ceph -s
  cluster:
    id:     4eec4012-4793-11eb-8510-000c2937b6b2
    health: HEALTH_OK

  services:
    mon: 3 daemons, quorum mon1,mon3,mon2 (age 90s)
    mgr: mon1.gxivji(active, since 10h), standbys: mon3.wcdgws
    mds: cephfs:1 {0=cephfs.mon1.gqfzjd=up:active} 2 up:standby
    osd: 15 osds: 15 up (since 100m), 15 in (since 100m)
    rgw: 3 daemons active (myorg.cn-east-1.mon1.mmloiz, myorg.cn-east-1.mon2.epselk, myorg.cn-east-1.mon3.zxoura)

  task status:

  data:
    pools:   8 pools, 249 pgs
    objects: 254 objects, 8.2 KiB
    usage:   16 GiB used, 104 GiB / 120 GiB avail
    pgs:     249 active+clean

  io:
    client:   511 B/s rd, 0 op/s rd, 0 op/s wr
```

## 5.2- Check services

查看服务状态 ```bash [root@mon1 ~]# ceph orch ls NAME RUNNING REFRESHED AGE PLACEMENT IMAGE NAME IMAGE ID alertmanager 0/1 - - count:1

crash 5/5 12m ago 10h docker.io/ceph/ceph:v15 5553b0cb212c grafana 0/1 - - count:1 mds.cephfs 3/3 12m ago 89m mon1;mon2;mon3;count:3 docker.io/ceph/ceph:v15 5553b0cb212c mgr 2/2 11m ago 10h count:2 docker.io/ceph/ceph:v15 5553b0cb212c mon 3/3 12m ago 2h label:mon docker.io/ceph/ceph:v15 5553b0cb212c nfs.foo 3/3 12m ago 42m mon1;mon2;mon3;count:3 docker.io/ceph/ceph:v15 5553b0cb212c node-exporter 5/5 12m ago 10h docker.io/ceph/ceph:v15 5553b0cb212c prometheus 0/1 - - count:1 rgw.myorg.cn-east-1 3/3 12m ago 54m mon1;mon2;mon3;count:3 docker.io/ceph/ceph:v15 5553b0cb212c

- View daemon status

```bash

[root@mon1 ~]# ceph orch ps

NAME HOST STATUS REFRESHED AGE VERSION IMAGE NAME IMAGE ID CONTAINER ID

crash.mon1 mon1 running (10h) 16m ago 10h 15.2.8 docker.io/ceph/ceph:v15 5553b0cb212c c3eb9b8ef11b

crash.mon2 mon2 running (9h) 17m ago 9h 15.2.8 docker.io/ceph/ceph:v15 5553b0cb212c 5d7a6dc7b7bf

crash.mon3 mon3 running (9h) 17m ago 9h 15.2.8 docker.io/ceph/ceph:v15 5553b0cb212c 580b9209408a

crash.node1 node1 running (117m) 18m ago 117m 15.2.8 docker.io/ceph/ceph:v15 5553b0cb212c 830ae455edd2

crash.node2 node2 running (117m) 18m ago 117m 15.2.8 docker.io/ceph/ceph:v15 5553b0cb212c b32bfe41437b

mds.cephfs.mon1.gqfzjd mon1 running (86m) 16m ago 86m 15.2.8 docker.io/ceph/ceph:v15 5553b0cb212c cec0c2fabba2

mds.cephfs.mon2.ofbuhd mon2 running (86m) 17m ago 86m 15.2.8 docker.io/ceph/ceph:v15 5553b0cb212c b2ce742bdb25

mds.cephfs.mon3.scdcas mon3 running (86m) 17m ago 86m 15.2.8 docker.io/ceph/ceph:v15 5553b0cb212c 1665d7ea6458

mgr.mon1.gxivji mon1 running (10h) 16m ago 10h 15.2.8 docker.io/ceph/ceph:v15 5553b0cb212c 9895e70a88e5

mgr.mon3.wcdgws mon3 running (9h) 17m ago 9h 15.2.8 docker.io/ceph/ceph:v15 5553b0cb212c 9671cfcc4879

mon.mon1 mon1 running (10h) 16m ago 10h 15.2.8 docker.io/ceph/ceph:v15 5553b0cb212c c6d2edfe749e

mon.mon2 mon2 running (2h) 17m ago 2h 15.2.8 docker.io/ceph/ceph:v15 5553b0cb212c 6831474ae296

mon.mon3 mon3 running (9h) 17m ago 9h 15.2.8 docker.io/ceph/ceph:v15 5553b0cb212c 1dad20cfb8cb

nfs.foo.mon1 mon1 running (48m) 16m ago 48m 3.3 docker.io/ceph/ceph:v15 5553b0cb212c e032427d7143

nfs.foo.mon2 mon2 running (47m) 17m ago 47m 3.3 docker.io/ceph/ceph:v15 5553b0cb212c a5ca131bdea4

nfs.foo.mon3 mon3 running (47m) 17m ago 47m 3.3 docker.io/ceph/ceph:v15 5553b0cb212c 1891fa0049a8

node-exporter.mon1 mon1 running (10h) 16m ago 10h 0.18.1 docker.io/prom/node-exporter:v0.18.1 e5a616e4b9cf a1769a2c96e3

node-exporter.mon2 mon2 running (9h) 17m ago 9h 0.18.1 docker.io/prom/node-exporter:v0.18.1 e5a616e4b9cf a263814973cf

node-exporter.mon3 mon3 running (9h) 17m ago 9h 0.18.1 docker.io/prom/node-exporter:v0.18.1 e5a616e4b9cf a98474474801

node-exporter.node1 node1 running (2h) 18m ago 9h 0.18.1 docker.io/prom/node-exporter:v0.18.1 e5a616e4b9cf 43c2b9c1b577

node-exporter.node2 node2 running (104m) 18m ago 9h 0.18.1 docker.io/prom/node-exporter:v0.18.1 e5a616e4b9cf 001c9c481fde

osd.0 mon1 running (2h) 16m ago 2h 15.2.8 docker.io/ceph/ceph:v15 5553b0cb212c 69ed8a7c2674

osd.1 mon2 running (2h) 17m ago 2h 15.2.8 docker.io/ceph/ceph:v15 5553b0cb212c c6582ada37ea

osd.10 node2 running (112m) 18m ago 112m 15.2.8 docker.io/ceph/ceph:v15 5553b0cb212c 3fbb521b4de8

osd.11 node1 running (108m) 18m ago 108m 15.2.8 docker.io/ceph/ceph:v15 5553b0cb212c f04a44147db3

osd.12 node2 running (107m) 18m ago 107m 15.2.8 docker.io/ceph/ceph:v15 5553b0cb212c ac57964f9918

osd.13 node1 running (107m) 18m ago 107m 15.2.8 docker.io/ceph/ceph:v15 5553b0cb212c 48b64e0c65bb

osd.14 node2 running (106m) 18m ago 106m 15.2.8 docker.io/ceph/ceph:v15 5553b0cb212c 0255eaf95a17

osd.2 mon3 running (2h) 17m ago 2h 15.2.8 docker.io/ceph/ceph:v15 5553b0cb212c 8c29e7a27e5f

osd.3 mon1 running (2h) 16m ago 2h 15.2.8 docker.io/ceph/ceph:v15 5553b0cb212c b1ac841836d7

osd.4 mon2 running (2h) 17m ago 2h 15.2.8 docker.io/ceph/ceph:v15 5553b0cb212c dcf2b0c46391

osd.5 mon3 running (2h) 17m ago 2h 15.2.8 docker.io/ceph/ceph:v15 5553b0cb212c c35535ed359f

osd.6 mon1 running (2h) 16m ago 2h 15.2.8 docker.io/ceph/ceph:v15 5553b0cb212c 33337487b7dc

osd.7 mon2 running (2h) 17m ago 2h 15.2.8 docker.io/ceph/ceph:v15 5553b0cb212c 9e401f42c863

osd.8 mon3 running (2h) 17m ago 2h 15.2.8 docker.io/ceph/ceph:v15 5553b0cb212c c5a821d0a425

osd.9 node1 running (115m) 18m ago 115m 15.2.8 docker.io/ceph/ceph:v15 5553b0cb212c 7ec2e34b3f2c

rgw.myorg.cn-east-1.mon1.mmloiz mon1 running (59m) 16m ago 59m 15.2.8 docker.io/ceph/ceph:v15 5553b0cb212c a2e23fd30cd8

rgw.myorg.cn-east-1.mon2.epselk mon2 running (59m) 17m ago 59m 15.2.8 docker.io/ceph/ceph:v15 5553b0cb212c 073eea9b742c

rgw.myorg.cn-east-1.mon3.zxoura mon3 running (59m) 17m ago 59m 15.2.8 docker.io/ceph/ceph:v15

```

View the status of a specific daemon

ceph orch ps --daemon_type osd --daemon_id 0

For example:

[root@mon1 ~]# ceph orch ps --daemon_type mon

NAME HOST STATUS REFRESHED AGE VERSION IMAGE NAME IMAGE ID CONTAINER ID

mon.mon1 mon1 running (11h) 7m ago 11h 15.2.8 docker.io/ceph/ceph:v15 5553b0cb212c c6d2edfe749e

mon.mon2 mon2 running (2h) 7m ago 2h 15.2.8 docker.io/ceph/ceph:v15 5553b0cb212c 6831474ae296

mon.mon3 mon3 running (9h) 7m ago 9h 15.2.8 docker.io/ceph/ceph:v15 5553b0cb212c 1dad20cfb8cb

[root@mon1 ~]#

[root@mon1 ~]# ceph orch ps --daemon_type mon --daemon_id mon1

NAME HOST STATUS REFRESHED AGE VERSION IMAGE NAME IMAGE ID CONTAINER ID

mon.mon1 mon1 running (11h) 7m ago 11h 15.2.8 docker.io/ceph/ceph:v15 5553b0cb212c c6d2edfe749e

[root@mon1 ~]#
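With this many daemons the full `ceph orch ps` listing is long, so a per-host tally can be easier to scan. The helper below is a small sketch that assumes the column order shown above (NAME, HOST, STATUS first); the function name `daemons_per_host` is made up for this example.

```bash
#!/usr/bin/env bash
# Sketch: read `ceph orch ps` output on stdin and print how many daemons
# are in the "running" state on each host, one "host count" pair per line.
# Assumes HOST is the 2nd field and STATUS the 3rd, as in the listing above.
daemons_per_host() {
  awk '$3 == "running" { count[$2]++ }
       END { for (h in count) print h, count[h] }' | sort
}

# Usage on a cluster node (not run here):
#   ceph orch ps | daemons_per_host
```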

5.3- Troubleshooting

- List services

```bash
[root@mon1 ~]# ceph orch ls
NAME                 RUNNING  REFRESHED  AGE   PLACEMENT               IMAGE NAME               IMAGE ID
alertmanager         0/1      -          -     count:1
crash                5/5      10m ago    11h                           docker.io/ceph/ceph:v15  5553b0cb212c
grafana              0/1      -          -     count:1
mds.cephfs           3/3      9m ago     107m  mon1;mon2;mon3;count:3  docker.io/ceph/ceph:v15  5553b0cb212c
mgr                  2/2      9m ago     11h   count:2                 docker.io/ceph/ceph:v15  5553b0cb212c
mon                  3/3      9m ago     2h    label:mon               docker.io/ceph/ceph:v15  5553b0cb212c
nfs.foo              3/3      9m ago     60m   mon1;mon2;mon3;count:3  docker.io/ceph/ceph:v15  5553b0cb212c
node-exporter        5/5      10m ago    11h                           docker.io/ceph/ceph:v15  5553b0cb212c
prometheus           0/1      -          -     count:1
rgw.myorg.cn-east-1  3/3      9m ago     71m   mon1;mon2;mon3;count:3  docker.io/ceph/ceph:v15  5553b0cb212c
[root@mon1 ~]#
```

> Services that have not started:
> - alertmanager
> - grafana
> - prometheus
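The list of stopped services can be derived from the RUNNING column instead of being read off by eye. A minimal sketch, assuming the `running/expected` format shown in the listing above; the function name `services_below_target` is illustrative.

```bash
#!/usr/bin/env bash
# Sketch: read `ceph orch ls` output on stdin and print every service whose
# RUNNING column (running/expected) reports fewer daemons than expected.
# Lines whose 2nd field is not of the form N/M (header, prompts) are skipped.
services_below_target() {
  awk '$2 ~ /^[0-9]+\/[0-9]+$/ {
         split($2, n, "/")
         if (n[1] + 0 < n[2] + 0) print $1
       }'
}

# Usage on a cluster node (not run here):
#   ceph orch ls | services_below_target
```

Entries such as `osd.None 15/0` are not reported, because the running count is not below the expected count.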

- Inspect a service that has not started

```bash
[root@mon1 ~]# ceph orch ps --daemon_type prometheus
No daemons reported
```

- Restart the services

Start, stop, or restart a whole service or a single daemon:

```bash
ceph orch {start,stop,restart} <service_name>
ceph orch daemon {start,stop,restart} <daemon_name>

ceph orch restart prometheus
ceph orch restart alertmanager
ceph orch restart grafana
```

Check the result

```bash
[root@mon1 ~]# ceph orch ls
NAME                 RUNNING  REFRESHED  AGE  PLACEMENT               IMAGE NAME                            IMAGE ID
alertmanager         0/1      -          -    count:1                 <unknown>                             <unknown>
crash                5/5      3m ago     11h  *                       docker.io/ceph/ceph:v15               5553b0cb212c
grafana              0/1      -          -    count:1                 <unknown>                             <unknown>
mds.cephfs           3/3      3m ago     2h   mon1;mon2;mon3;count:3  docker.io/ceph/ceph:v15               5553b0cb212c
mgr                  2/2      3m ago     11h  count:2                 docker.io/ceph/ceph:v15               5553b0cb212c
mon                  3/3      3m ago     2h   label:mon               docker.io/ceph/ceph:v15               5553b0cb212c
nfs.foo              3/3      3m ago     73m  mon1;mon2;mon3;count:3  docker.io/ceph/ceph:v15               5553b0cb212c
node-exporter        5/5      3m ago     11h  *                       docker.io/prom/node-exporter:v0.18.1  e5a616e4b9cf
osd.None             15/0     3m ago     -    <unmanaged>             docker.io/ceph/ceph:v15               5553b0cb212c
prometheus           0/1      -          -    count:1                 <unknown>                             <unknown>
rgw.myorg.cn-east-1  3/3      3m ago     85m  mon1;mon2;mon3;count:3  docker.io/ceph/ceph:v15               5553b0cb212c
```

The three monitoring services are still at 0/1 after the restart, so check the logs:

/var/log/ceph/cephadm.log

[root@mon1 ~]# grep error /var/log/ceph/cephadm.log

2020-12-27 10:26:11,375 DEBUG stat:stderr Error: unable to pull docker.io/ceph/ceph-grafana:6.6.2: Error initializing source docker://ceph/ceph-grafana:6.6.2: error pinging docker registry ung2thfc.mirror.aliyuncs.com: Get "http://ung2thfc.mirror.aliyuncs.com/v2/": dial tcp 116.62.81.173:80: i/o timeout

2020-12-27 10:26:11,377 INFO stat:stderr Error: unable to pull docker.io/ceph/ceph-grafana:6.6.2: Error initializing source docker://ceph/ceph-grafana:6.6.2: error pinging docker registry ung2thfc.mirror.aliyuncs.com: Get "http://ung2thfc.mirror.aliyuncs.com/v2/": dial tcp 116.62.81.173:80: i/o timeout

2020-12-27 10:45:49,756 DEBUG stat:stderr Error: unable to pull docker.io/ceph/ceph-grafana:6.6.2: Error initializing source docker://ceph/ceph-grafana:6.6.2: error pinging docker registry ung2thfc.mirror.aliyuncs.com: Get "http://ung2thfc.mirror.aliyuncs.com/v2/": dial tcp 116.62.81.173:80: i/o timeout

2020-12-27 10:45:49,757 INFO stat:stderr Error: unable to pull docker.io/ceph/ceph-grafana:6.6.2: Error initializing source docker://ceph/ceph-grafana:6.6.2: error pinging docker registry ung2thfc.mirror.aliyuncs.com: Get "http://ung2thfc.mirror.aliyuncs.com/v2/": dial tcp 116.62.81.173:80: i/o timeout

2020-12-27 11:06:09,985 DEBUG stat:stderr Error: unable to pull docker.io/ceph/ceph-grafana:6.6.2: Error initializing source docker://ceph/ceph-grafana:6.6.2: error pinging docker registry ung2thfc.mirror.aliyuncs.com: Get "http://ung2thfc.mirror.aliyuncs.com/v2/": dial tcp 116.62.81.173:80: i/o timeout

2020-12-27 11:06:09,987 INFO stat:stderr Error: unable to pull docker.io/ceph/ceph-grafana:6.6.2: Error initializing source docker://ceph/ceph-grafana:6.6.2: error pinging docker registry ung2thfc.mirror.aliyuncs.com: Get "http://ung2thfc.mirror.aliyuncs.com/v2/": dial tcp 116.62.81.173:80: i/o timeout
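The same pull failure repeats many times in the log, so it helps to reduce it to the distinct image names. A minimal sketch that assumes the `unable to pull <image>:` wording seen in the excerpt above; the function name `failed_pull_images` is illustrative.

```bash
#!/usr/bin/env bash
# Sketch: read cephadm log lines on stdin and print each image that failed
# to pull, once. Relies on the "unable to pull <image>: " text in the log.
failed_pull_images() {
  sed -n 's/.*unable to pull \([^ ]*\): .*/\1/p' | sort -u
}

# Usage on the affected host (not run here):
#   failed_pull_images < /var/log/ceph/cephadm.log
```

The output is one image reference per line, which can then be pulled by hand once the registry is reachable.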

- Pull the missing images manually

```bash
podman pull docker.io/ceph/ceph-grafana:6.6.2
podman pull docker.io/prom/prometheus:v2.18.1
```

<a name="NSDTW"></a>

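# 6- Supplement

<a name="rZwCX"></a>

## 6.1- Upgrades

Once a new cluster has been deployed (or an existing cluster has been upgraded and converted), one of the best features of `cephadm` is its ability to automate upgrades. In most cases this is as simple as the command below. Substitute the release you are upgrading to: the cluster in this walkthrough already runs 15.2.8, so 15.2.1 is shown only as an example.

```bash
ceph orch upgrade start --ceph-version 15.2.1
```

Upgrade progress can be monitored with the `ceph status` command, whose output includes a progress bar such as:

Upgrade to docker.io/ceph/ceph:v15.2.1 (3m) [===.........................] (remaining: 21m)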
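Because the upgrade command takes the target version as an argument, it can be worth checking that the target is actually newer than what `ceph -v` reports before starting. The following is a sketch, assuming GNU `sort -V` is available; the function name `is_newer_version` and the target `15.2.9` in the usage comment are placeholders for illustration.

```bash
#!/usr/bin/env bash
# Sketch: succeed only if the target version ($2) is strictly newer than
# the running version ($1). Uses GNU sort's version ordering (-V).
is_newer_version() {
  local current="$1" target="$2" newest
  if [ "$current" = "$target" ]; then
    return 1
  fi
  newest="$(printf '%s\n%s\n' "$current" "$target" | sort -V | tail -n 1)"
  [ "$newest" = "$target" ]
}

# Usage on a cluster node (not run here); 15.2.9 is a placeholder target:
#   current="$(ceph -v | awk '{print $3}')"
#   is_newer_version "$current" 15.2.9 &&
#     ceph orch upgrade start --ceph-version 15.2.9
```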

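7- Usability testing

7.1- CephFS [1]

CephFS is built on RADOS, specifically on two RADOS pools: one stores file data and the other stores file metadata. Information such as the directory structure therefore lives in the metadata pool. If SSDs are available, place the metadata pool on them: this speeds up metadata operations, and because the metadata is not particularly large it needs little SSD capacity. The file data pool should be placed on HDDs.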

- List the pools

```bash
[root@mon1 ceph]# ceph osd pool ls
device_health_metrics
cephfs_data

[root@mon1 ceph]# ceph osd pool ls detail
pool 2 'cephfs_data' replicated size 3 min_size 2 crush_rule 0 object_hash rjenkins pg_num 64 pgp_num 64 autoscale_mode on last_change 92 flags hashpspool stripe_width 0 application cephfs
pool 3 'cephfs_metadata' replicated size 3 min_size 2 crush_rule 0 object_hash rjenkins pg_num 16 pgp_num 16 autoscale_mode on last_change 306 lfor 0/306/304 flags hashpspool stripe_width 0 pg_autoscale_bias 4 pg_num_min 16 recovery_priority 5 application cephfs
```

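- Create a pool

> Before creating a pool you usually need to override the default `pg_num`. The official recommendation is:
> - Fewer than 5 OSDs: set pg_num to 128.
> - 5 to 10 OSDs: set pg_num to 512.
> - 10 to 50 OSDs: set pg_num to 4096.
> - More than 50 OSDs: calculate it with [pgcalc](http://ceph.com/pgcalc/).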

Pool creation syntax:

```bash
# Replicated pool
ceph osd pool create {pool-name} {pg-num} [{pgp-num}] [replicated] \
     [crush-rule-name] [expected-num-objects]

# Erasure-coded pool
ceph osd pool create {pool-name} {pg-num} {pgp-num} erasure \
     [erasure-code-profile] [crush-rule-name] [expected-num-objects]
```

- Create a CephFS manually

```bash
# Syntax
ceph fs new <fs-name> <metadata-pool> <data-pool>

# Example
ceph fs new mycephfs cephfs_metadata cephfs_data
```

<a name="Ztpkw"></a>

## 7.2- CephFS [2]

Use Ceph's orchestration to create a file system automatically (named `mycephfs-1`).

- List the existing file systems, then create the new one

```bash
[root@mon1 ceph]# ceph fs ls
name: cephfs, metadata pool: cephfs_metadata, data pools: [cephfs_data ]

ceph fs volume create mycephfs-1

# Failure: a second file system is refused by default
[root@mon1 ceph]# ceph fs volume create mycephfs-1
Error EINVAL: Creation of multiple filesystems is disabled. To enable this experimental feature, use 'ceph fs flag set enable_multiple true'

ceph fs flag set enable_multiple true

[root@mon1 ceph]# ceph fs flag set enable_multiple true
Warning! This feature is experimental.It may cause problems up to and including data loss.Consult the documentation at ceph.com, and if unsure, do not proceed.Add --yes-i-really-mean-it if you are certain.

# The transcript does not show the confirmed command; per the warning it is:
ceph fs flag set enable_multiple true --yes-i-really-mean-it

[root@mon1 ceph]# ceph fs volume create mycephfs-1

[root@mon1 ceph]# ceph fs ls
name: cephfs, metadata pool: cephfs_metadata, data pools: [cephfs_data ]
name: mycephfs-1, metadata pool: cephfs.mycephfs-1.meta, data pools: [cephfs.mycephfs-1.data ]
```

- Mount

```bash
mkdir -p /mnt/cephfs

# Look up the key
[root@mon1 ceph]# cat /etc/ceph/ceph.client.admin.keyring
[client.admin]
	key = AQDdXedfhBp9AxAARE9O/BBHH43QGBzPCL3/fA==

mount -t ceph mon1:6789,mon2:6789,mon3:6789:/ /mnt/cephfs/ -o name=admin,secret=AQDdXedfhBp9AxAARE9O/BBHH43QGBzPCL3/fA==
```

- Verify

```bash
df -Th

10.68.3.121:6789,10.68.3.122:6789,10.68.3.123:6789:/ ceph 33G 0 33G 0% /mnt/cephfs

# By default this mounts cephfs, not mycephfs-1
```
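Since the mount above lands on `cephfs`, mounting `mycephfs-1` needs an extra option, and passing the key on the command line leaves it in the shell history. The sketch below addresses both. It assumes the kernel client's `mds_namespace=` option and the `secretfile=` option of the `mount.ceph` helper; newer Ceph documentation describes `fs=<name>` for the same purpose, so verify which one your kernel accepts. The function names and the secret file path `/etc/ceph/admin.secret` are hypothetical.

```bash
#!/usr/bin/env bash
# Sketch: print the key stored in a Ceph keyring file ($1).
keyring_key() {
  awk '$1 == "key" && $2 == "=" { print $3; exit }' "$1"
}

# Sketch: build the -o option string for `mount -t ceph`.
#   $1 = client name, $2 = secret file path, $3 = optional file system name
# mds_namespace is assumed to select a non-default file system.
cephfs_mount_opts() {
  local opts="name=$1,secretfile=$2"
  if [ -n "${3:-}" ]; then
    opts="$opts,mds_namespace=$3"
  fi
  echo "$opts"
}

# Usage on a client node (not run here); the secret path is an assumption:
#   keyring_key /etc/ceph/ceph.client.admin.keyring > /etc/ceph/admin.secret
#   chmod 600 /etc/ceph/admin.secret
#   mount -t ceph mon1:6789,mon2:6789,mon3:6789:/ /mnt/cephfs \
#     -o "$(cephfs_mount_opts admin /etc/ceph/admin.secret mycephfs-1)"
```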