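1. Understanding docker0

Clear out all existing networks first.

Check the container's internal network address with ip addr. When a container starts, it gets an eth0@if43 interface whose IP address is assigned by Docker.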

[root@localhost /]# docker exec -it tomcat01 ip addr

1: lo:

Question: can the Linux host ping the inside of a Docker container?

    [root@localhost /]# ping 172.17.0.2
    PING 172.17.0.2 (172.17.0.2) 56(84) bytes of data.
    64 bytes from 172.17.0.2: icmp_seq=1 ttl=64 time=0.476 ms
    64 bytes from 172.17.0.2: icmp_seq=2 ttl=64 time=0.099 ms
    64 bytes from 172.17.0.2: icmp_seq=3 ttl=64 time=0.105 ms
    …

Yes, the Linux host can ping the inside of a Docker container.

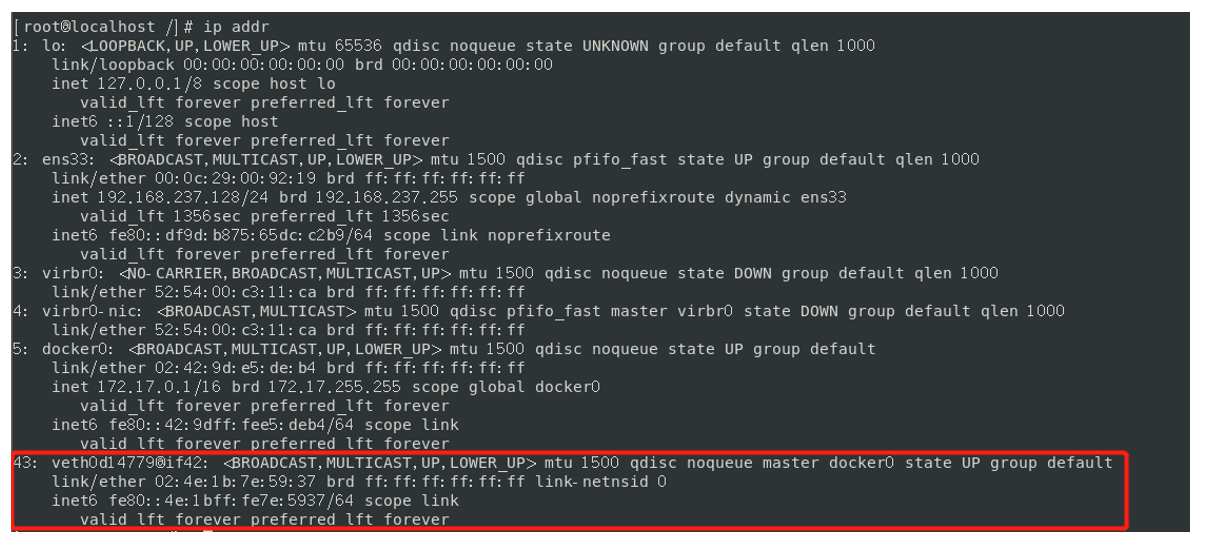

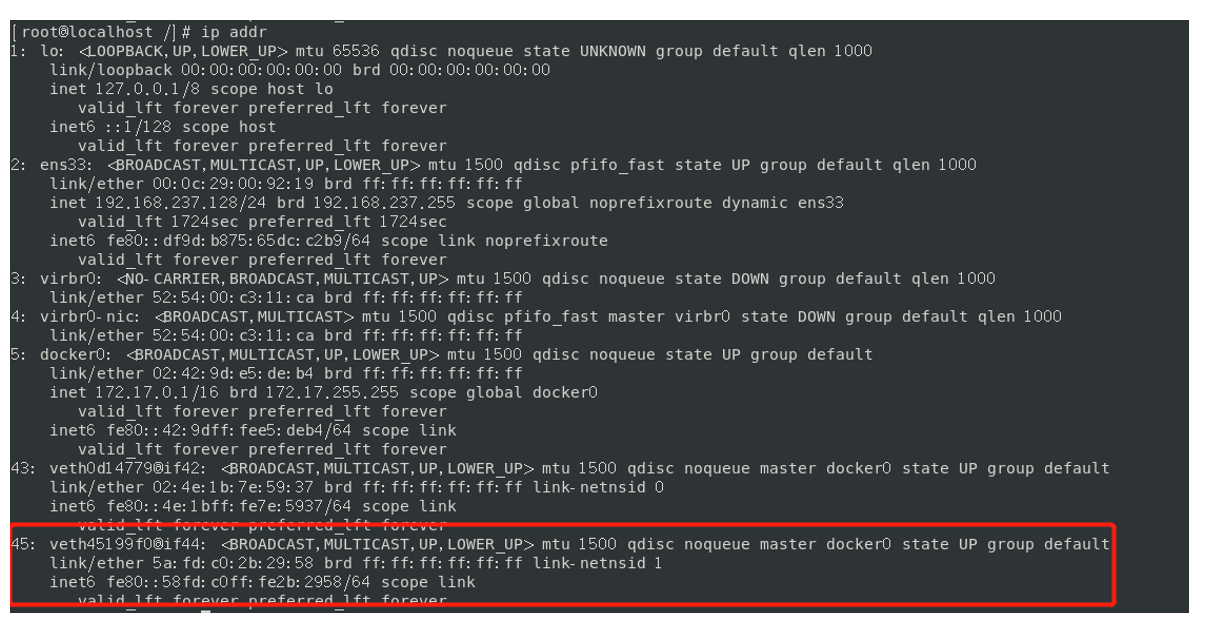

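How it works:

1. Every time we start a container, Docker assigns it an IP address. As soon as Docker is installed, the host has a docker0 interface. It works in bridge mode, and the underlying technology is the veth pair. Running ip addr on the host again shows one more pair of interfaces.

2. Start another container and test again: yet another pair of interfaces appears.

```shell
# The interfaces that come with a container always appear in pairs
# A veth pair is a pair of virtual device interfaces that always come together: one end attaches to the protocol stack, and the two ends are connected to each other
# Because of this property, a veth pair acts as a bridge that connects virtual network devices
# OpenStack, connections between Docker containers, and OVS connections all use veth pairs
```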

3. Now test whether tomcat01 and tomcat02 can ping each other.

    [root@localhost /]# docker exec -it tomcat02 ping 172.17.0.2
    PING 172.17.0.2 (172.17.0.2) 56(84) bytes of data.
    64 bytes from 172.17.0.2: icmp_seq=1 ttl=64 time=0.556 ms
    64 bytes from 172.17.0.2: icmp_seq=2 ttl=64 time=0.096 ms
    64 bytes from 172.17.0.2: icmp_seq=3 ttl=64 time=0.111 ms
    ...
    # Conclusion: containers can ping each other

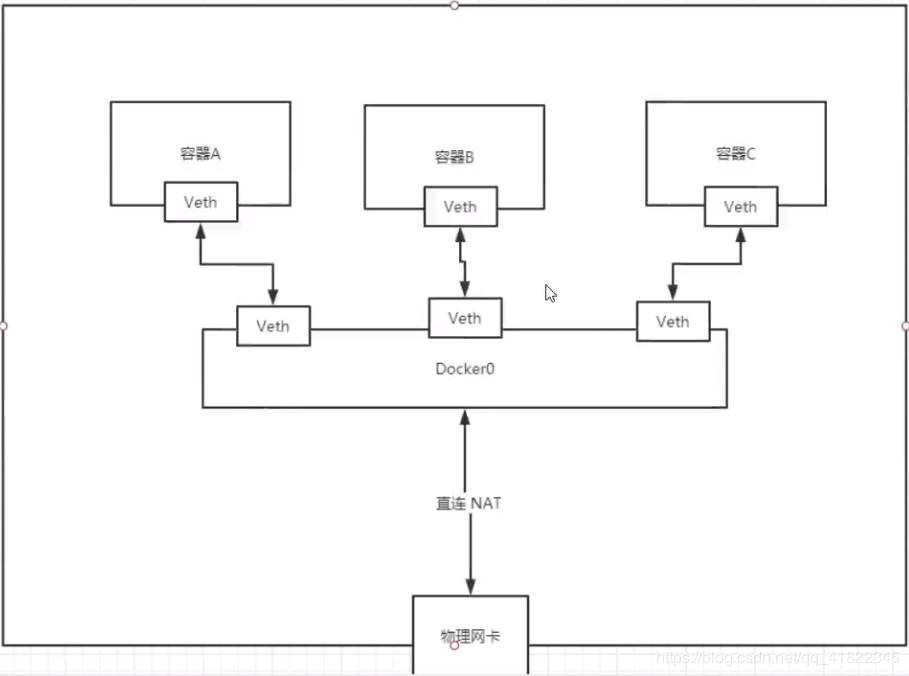

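Sketching a network model:

Conclusion: tomcat01 and tomcat02 share one router, the docker0 bridge.

When no network is specified, every container is routed through docker0, and Docker assigns each container a default available IP.

Summary: Docker uses Linux bridging; docker0 on the host serves as the bridge for the Docker containers.

All network interfaces in Docker are virtual, and virtual interfaces forward traffic efficiently (for example, when transferring files over the internal network).

As soon as a container is removed, its veth pair disappears with it.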
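The number after @if in a name such as eth0@if43 is the interface index of the other end of the veth pair, which is how a container interface can be matched to its peer on the host. Below is a minimal sketch of pulling that index out of the name, assuming bash; the function name peer_ifindex is hypothetical and used only for illustration.

```shell
#!/usr/bin/env bash
# Extract the peer interface index from a name such as "eth0@if43".
# The part after "@if" is the index of the other end of the veth pair.
peer_ifindex() {
  local name="$1"
  case "$name" in
    *@if*) printf '%s\n' "${name##*@if}" ;;  # strip everything up to and including "@if"
    *)     return 1 ;;                       # not a paired interface name
  esac
}

peer_ifindex "eth0@if43"   # prints 43
```

On the host, the interface with that index can then be looked up, for example with ip -o link | grep '^43:'.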

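2. --link

Consider a scenario: we have written a microservice configured with database url=ip:. The database IP changes while the project keeps running without a restart. We would like to handle this case. Can we reach a container by name instead?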

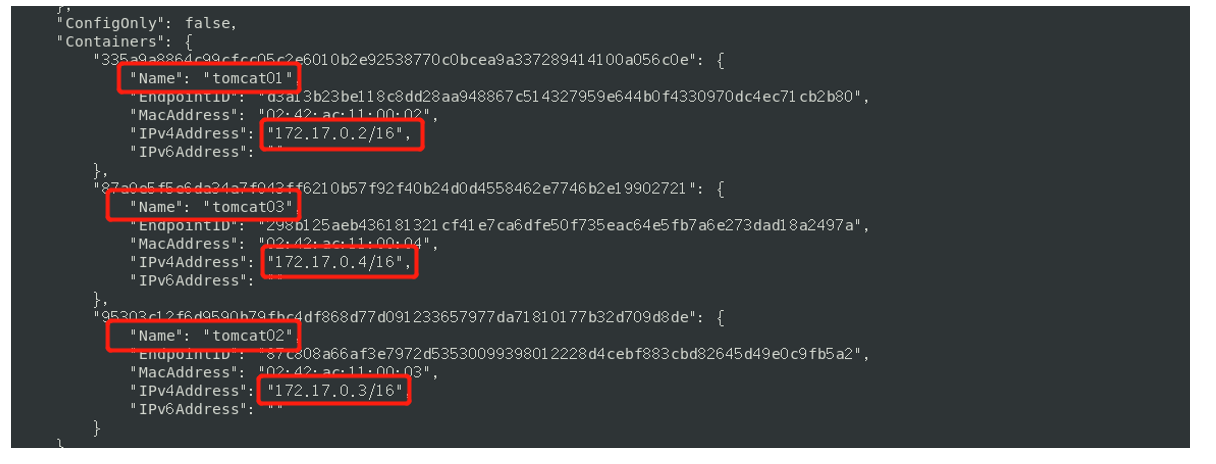

    # tomcat02 tries to ping tomcat01 by container name instead of by IP, and fails
    [root@localhost /]# docker exec -it tomcat02 ping tomcat01
    ping: tomcat01: Name or service not known
    # How do we solve this? --link fixes the name lookup: a new tomcat03 linked to tomcat02 can ping tomcat02 by name
    [root@localhost /]# docker run -d -P --name tomcat03 --link tomcat02 tomcat
    87a0e5f5e6da34a7f043ff6210b57f92f40b24d0d4558462e7746b2e19902721
    [root@localhost /]# docker exec -it tomcat03 ping tomcat02
    PING tomcat02 (172.17.0.3) 56(84) bytes of data.
    64 bytes from tomcat02 (172.17.0.3): icmp_seq=1 ttl=64 time=0.132 ms
    64 bytes from tomcat02 (172.17.0.3): icmp_seq=2 ttl=64 time=0.116 ms
    64 bytes from tomcat02 (172.17.0.3): icmp_seq=3 ttl=64 time=0.116 ms
    64 bytes from tomcat02 (172.17.0.3): icmp_seq=4 ttl=64 time=0.116 ms
    # Does it work in reverse? No: tomcat02 cannot ping tomcat03
    [root@localhost /]# docker exec -it tomcat02 ping tomcat03
    ping: tomcat03: Name or service not known

Investigating with inspect:

In fact, tomcat03 simply has a local mapping to tomcat02 configured:

    # Look at the hosts file; this is where the mechanism shows
    [root@localhost /]# docker exec -it tomcat03 cat /etc/hosts
    127.0.0.1 localhost
    ::1 localhost ip6-localhost ip6-loopback
    fe00::0 ip6-localnet
    ff00::0 ip6-mcastprefix
    ff02::1 ip6-allnodes
    ff02::2 ip6-allrouters
    172.17.0.3 tomcat02 95303c12f6d9 # an address binding, just like the hosts file on Windows
    172.17.0.4 87a0e5f5e6da

The underlying mechanism:

--link simply adds a mapping to the container's hosts file.
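To make the hosts-file mechanism concrete, here is a small sketch that resolves a name the way a hosts file does: the first field on a line is the address, and every following field is a name or alias for it. It assumes bash and awk; the function name lookup_host is hypothetical, and the sample entries are copied from the tomcat03 output above into a temporary file.

```shell
#!/usr/bin/env bash
# Print the address mapped to a name in a hosts-format file.
# Returns non-zero when the name has no entry.
lookup_host() {
  local name="$1" hosts_file="$2"
  awk -v n="$name" '
    /^[ \t]*#/ { next }                        # skip comment lines
    { for (i = 2; i <= NF; i++)                # fields 2..NF are names and aliases
        if ($i == n) { print $1; found = 1; exit } }
    END { exit !found }                        # status 0 only if a match was printed
  ' "$hosts_file"
}

# Sample entries taken from the tomcat03 hosts file shown above.
hosts_file="$(mktemp)"
cat > "$hosts_file" <<'EOF'
127.0.0.1 localhost
172.17.0.3 tomcat02 95303c12f6d9
172.17.0.4 87a0e5f5e6da
EOF

lookup_host tomcat02 "$hosts_file"                          # prints 172.17.0.3
lookup_host tomcat03 "$hosts_file" || echo "tomcat03: not found"
rm -f "$hosts_file"
```

The second lookup fails for the same reason the reverse ping fails: no line in the file names tomcat03, because the mapping is written only into the container that was started with --link.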

Using --link is no longer recommended in Docker.

Use a custom network instead of docker0.

The problem with docker0: it does not support access by container name.

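3. Custom networks

Network modes

bridge: bridged through docker0 (the default; networks you create yourself also use bridge mode)

none: no networking configured; rarely used

host: shares the host's network

container: connects to another container's network (rarely used; very limited)

Test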

    # The command we used earlier; --net bridge is the default and can be omitted. This bridge network is docker0.
    docker run -d -P --name tomcat01 tomcat
    docker run -d -P --name tomcat01 --net bridge tomcat
    # The two commands above are equivalent
    # docker0 (the default bridge) does not support access by name; --link can add it
    # We can define our own network instead
    # --driver bridge            network mode: bridge
    # --subnet 192.168.0.0/16    subnet; container addresses range from 192.168.0.2 to 192.168.255.254 (192.168.255.255 is the broadcast address)
    # --gateway 192.168.0.1      subnet gateway: 192.168.0.1
    [root@localhost /]# docker network create --driver bridge --subnet 192.168.0.0/16 --gateway 192.168.0.1 mynet
    7ee3adf259c8c3d86fce6fd2c2c9f85df94e6e57c2dce5449e69a5b024efc28c
    [root@localhost /]# docker network ls
    NETWORK ID          NAME                DRIVER              SCOPE
    461bf576946c        bridge              bridge              local
    c501704cf28e        host                host                local
    7ee3adf259c8        mynet               bridge              local    # our custom network
    9354fbcc160f        none                null                local

Our own network is now created:

    [root@localhost /]# docker run -d -P --name tomcat-net-01 --net mynet tomcat
    b168a37d31fcdc2ff172fd969e4de6de731adf53a2960eeae3dd9c24e14fac67
    [root@localhost /]# docker run -d -P --name tomcat-net-02 --net mynet tomcat
    c07d634e17152ca27e318c6fcf6c02e937e6d5e7a1631676a39166049a44c03c
    [root@localhost /]# docker network inspect mynet
    [
        {
            "Name": "mynet",
            "Id": "7ee3adf259c8c3d86fce6fd2c2c9f85df94e6e57c2dce5449e69a5b024efc28c",
            "Created": "2020-06-14T01:03:53.767960765+08:00",
            "Scope": "local",
            "Driver": "bridge",
            "EnableIPv6": false,
            "IPAM": {
                "Driver": "default",
                "Options": {},
                "Config": [
                    {
                        "Subnet": "192.168.0.0/16",
                        "Gateway": "192.168.0.1"
                    }
                ]
            },
            "Internal": false,
            "Attachable": false,
            "Ingress": false,
            "ConfigFrom": {
                "Network": ""
            },
            "ConfigOnly": false,
            "Containers": {
                "b168a37d31fcdc2ff172fd969e4de6de731adf53a2960eeae3dd9c24e14fac67": {
                    "Name": "tomcat-net-01",
                    "EndpointID": "f0af1c33fc5d47031650d07d5bc769e0333da0989f73f4503140151d0e13f789",
                    "MacAddress": "02:42:c0:a8:00:02",
                    "IPv4Address": "192.168.0.2/16",
                    "IPv6Address": ""
                },
                "c07d634e17152ca27e318c6fcf6c02e937e6d5e7a1631676a39166049a44c03c": {
                    "Name": "tomcat-net-02",
                    "EndpointID": "ba114b9bd5f3b75983097aa82f71678653619733efc1835db857b3862e744fbc",
                    "MacAddress": "02:42:c0:a8:00:03",
                    "IPv4Address": "192.168.0.3/16",
                    "IPv6Address": ""
                }
            },
            "Options": {},
            "Labels": {}
        }
    ]
    # Test the connection again with ping
    [root@localhost /]# docker exec -it tomcat-net-01 ping 192.168.0.3
    PING 192.168.0.3 (192.168.0.3) 56(84) bytes of data.
    64 bytes from 192.168.0.3: icmp_seq=1 ttl=64 time=0.199 ms
    64 bytes from 192.168.0.3: icmp_seq=2 ttl=64 time=0.121 ms
    ^C
    --- 192.168.0.3 ping statistics ---
    2 packets transmitted, 2 received, 0% packet loss, time 2ms
    rtt min/avg/max/mdev = 0.121/0.160/0.199/0.039 ms
    # Pinging by name now works without --link
    [root@localhost /]# docker exec -it tomcat-net-01 ping tomcat-net-02
    PING tomcat-net-02 (192.168.0.3) 56(84) bytes of data.
    64 bytes from tomcat-net-02.mynet (192.168.0.3): icmp_seq=1 ttl=64 time=0.145 ms
    64 bytes from tomcat-net-02.mynet (192.168.0.3): icmp_seq=2 ttl=64 time=0.117 ms
    ^C
    --- tomcat-net-02 ping statistics ---
    2 packets transmitted, 2 received, 0% packet loss, time 3ms
    rtt min/avg/max/mdev = 0.117/0.131/0.145/0.014 ms

With a custom network, Docker maintains the name-to-address mapping for us. This is the recommended way to set up container networking.
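The --subnet 192.168.0.0/16 option used above fixes which addresses the network can hand out. As a rough sketch of the arithmetic, assuming bash with 64-bit integer arithmetic, the following computes the first and last usable host address of a CIDR block (network address + 1 through broadcast address - 1). The helper names ip_to_int, int_to_ip and host_range are hypothetical.

```shell
#!/usr/bin/env bash
# Convert a dotted-quad IPv4 address to a 32-bit integer.
ip_to_int() {
  local a b c d
  IFS=. read -r a b c d <<< "$1"
  echo $(( (a << 24) | (b << 16) | (c << 8) | d ))
}

# Convert a 32-bit integer back to dotted-quad notation.
int_to_ip() {
  echo "$(( ($1 >> 24) & 255 )).$(( ($1 >> 16) & 255 )).$(( ($1 >> 8) & 255 )).$(( $1 & 255 ))"
}

# Print the first and last usable host address of a CIDR block.
host_range() {
  local net="${1%/*}" prefix="${1#*/}"
  local mask=$(( (0xFFFFFFFF << (32 - prefix)) & 0xFFFFFFFF ))
  local base=$(( $(ip_to_int "$net") & mask ))       # network address
  local bcast=$(( base | (~mask & 0xFFFFFFFF) ))     # broadcast address
  echo "$(int_to_ip $((base + 1))) - $(int_to_ip $((bcast - 1)))"
}

host_range 192.168.0.0/16   # prints 192.168.0.1 - 192.168.255.254
```

Because 192.168.0.1 is taken by the gateway, the first container on mynet receives 192.168.0.2, which matches the inspect output for tomcat-net-01.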

Benefits:

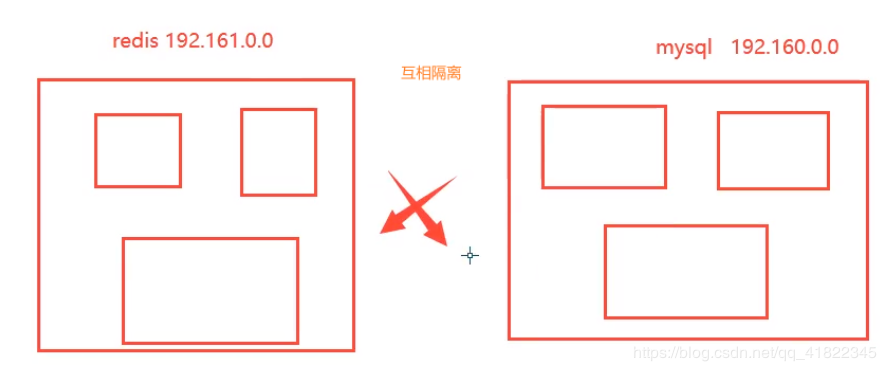

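redis: different clusters use different networks, which keeps each cluster isolated and healthy

mysql: different clusters use different networks, which keeps each cluster isolated and healthy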

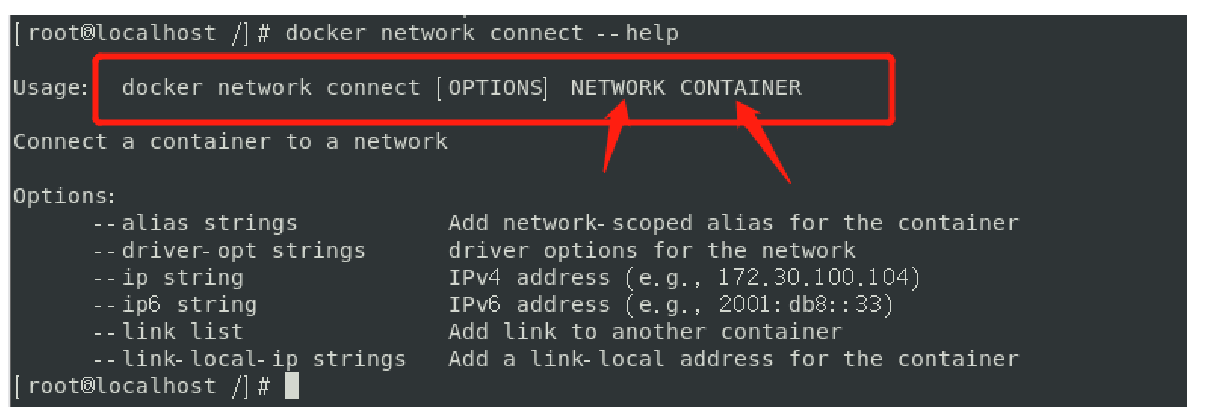

4. Connecting networks

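    # Connect tomcat01 to mynet
    [root@localhost /]# docker network connect mynet tomcat01
    # After connecting, tomcat01 is placed directly on the mynet network (see the figure below)
    # One container now has two IP addresses, much like a public and a private IP on Alibaba Cloud
    [root@localhost /]# docker network inspect mynet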

    # tomcat01 can now reach the network
    [root@localhost /]# docker exec -it tomcat01 ping tomcat-net-01
    PING tomcat-net-01 (192.168.0.2) 56(84) bytes of data.
    64 bytes from tomcat-net-01.mynet (192.168.0.2): icmp_seq=1 ttl=64 time=0.124 ms
    64 bytes from tomcat-net-01.mynet (192.168.0.2): icmp_seq=2 ttl=64 time=0.162 ms
    64 bytes from tomcat-net-01.mynet (192.168.0.2): icmp_seq=3 ttl=64 time=0.107 ms
    ^C
    --- tomcat-net-01 ping statistics ---
    3 packets transmitted, 3 received, 0% packet loss, time 3ms
    rtt min/avg/max/mdev = 0.107/0.131/0.162/0.023 ms
    # tomcat02 still cannot get through
    [root@localhost /]# docker exec -it tomcat02 ping tomcat-net-01
    ping: tomcat-net-01: Name or service not known

Conclusion: to reach containers on another network, connect the container to that network with docker network connect.

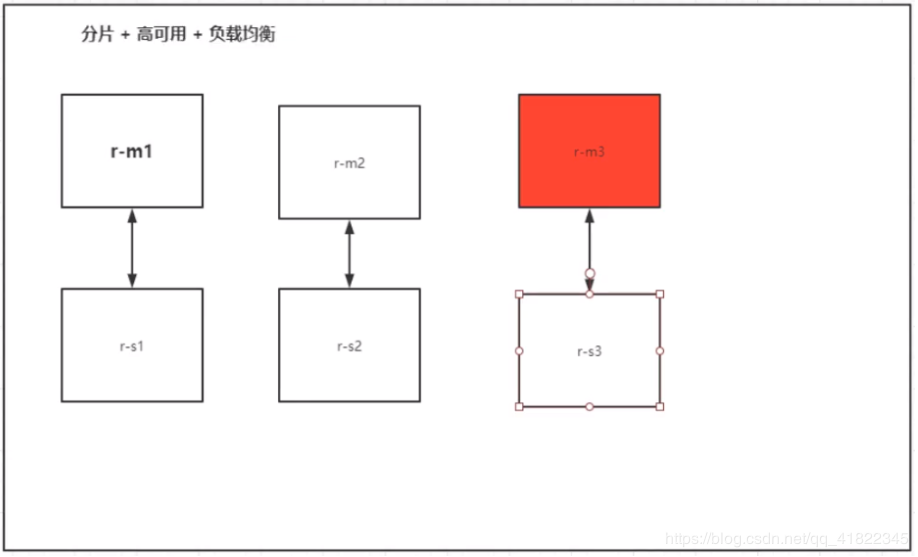

5. Hands-on: deploying a Redis cluster

Stop all running containers

docker stop $(docker ps -aq)

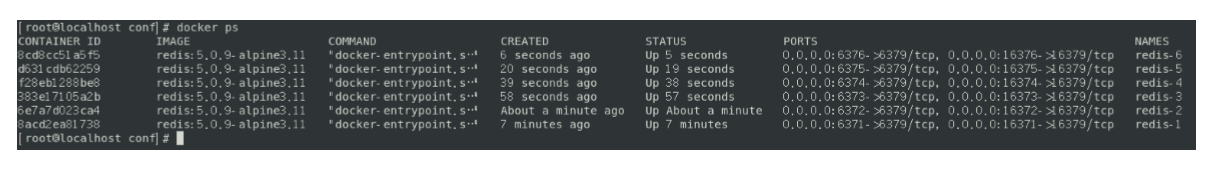

Start six Redis containers: the top three are masters and the bottom three are replicas.

Start them with a shell script.

    # Create the network for the Redis cluster
    docker network create redis --subnet 172.38.0.0/16
    # Generate the six Redis configs with a script
    [root@localhost /]# for port in $(seq 1 6); \
    do \
    mkdir -p /mydata/redis/node-${port}/conf
    touch /mydata/redis/node-${port}/conf/redis.conf
    cat <<EOF >>/mydata/redis/node-${port}/conf/redis.conf
    port 6379
    bind 0.0.0.0
    cluster-enabled yes
    cluster-config-file nodes.conf
    cluster-node-timeout 5000
    cluster-announce-ip 172.38.0.1${port}
    cluster-announce-port 6379
    cluster-announce-bus-port 16379
    appendonly yes
    EOF
    done
    # Check the six node directories that were created
    [root@localhost /]# cd /mydata/
    [root@localhost mydata]# \ls
    redis
    [root@localhost mydata]# cd redis/
    [root@localhost redis]# ls
    node-1  node-2  node-3  node-4  node-5  node-6
    # Check the configuration of node-1
    [root@localhost redis]# cd node-1
    [root@localhost node-1]# ls
    conf
    [root@localhost node-1]# cd conf/
    [root@localhost conf]# ls
    redis.conf
    [root@localhost conf]# cat redis.conf
    port 6379
    bind 0.0.0.0
    cluster-enabled yes
    cluster-config-file nodes.conf
    cluster-node-timeout 5000
    cluster-announce-ip 172.38.0.11
    cluster-announce-port 6379
    cluster-announce-bus-port 16379
    appendonly yes

    docker run -p 6371:6379 -p 16371:16379 --name redis-1 \
    -v /mydata/redis/node-1/data:/data \
    -v /mydata/redis/node-1/conf/redis.conf:/etc/redis/redis.conf \
    -d --net redis --ip 172.38.0.11 redis:5.0.9-alpine3.11 redis-server /etc/redis/redis.conf

    docker run -p 6372:6379 -p 16372:16379 --name redis-2 \
    -v /mydata/redis/node-2/data:/data \
    -v /mydata/redis/node-2/conf/redis.conf:/etc/redis/redis.conf \
    -d --net redis --ip 172.38.0.12 redis:5.0.9-alpine3.11 redis-server /etc/redis/redis.conf

    docker run -p 6373:6379 -p 16373:16379 --name redis-3 \
    -v /mydata/redis/node-3/data:/data \
    -v /mydata/redis/node-3/conf/redis.conf:/etc/redis/redis.conf \
    -d --net redis --ip 172.38.0.13 redis:5.0.9-alpine3.11 redis-server /etc/redis/redis.conf

    docker run -p 6374:6379 -p 16374:16379 --name redis-4 \
    -v /mydata/redis/node-4/data:/data \
    -v /mydata/redis/node-4/conf/redis.conf:/etc/redis/redis.conf \
    -d --net redis --ip 172.38.0.14 redis:5.0.9-alpine3.11 redis-server /etc/redis/redis.conf

    docker run -p 6375:6379 -p 16375:16379 --name redis-5 \
    -v /mydata/redis/node-5/data:/data \
    -v /mydata/redis/node-5/conf/redis.conf:/etc/redis/redis.conf \
    -d --net redis --ip 172.38.0.15 redis:5.0.9-alpine3.11 redis-server /etc/redis/redis.conf

    docker run -p 6376:6379 -p 16376:16379 --name redis-6 \
    -v /mydata/redis/node-6/data:/data \
    -v /mydata/redis/node-6/conf/redis.conf:/etc/redis/redis.conf \
    -d --net redis --ip 172.38.0.16 redis:5.0.9-alpine3.11 redis-server /etc/redis/redis.conf
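The six commands differ only in the node number, so they can also be generated in a loop. The sketch below, assuming bash, only prints each command as a dry run and executes nothing; the function name redis_run_cmd is hypothetical, and every path, port, and image tag is taken from the commands above.

```shell
#!/usr/bin/env bash
# Build the `docker run` command for Redis node N (1-6) as a single line.
# Nothing is executed here; the command is only printed for review.
redis_run_cmd() {
  local n="$1"
  printf 'docker run -p 637%s:6379 -p 1637%s:16379 --name redis-%s ' "$n" "$n" "$n"
  # `--` stops bash's printf from treating the leading -v / -d as its own options
  printf -- '-v /mydata/redis/node-%s/data:/data ' "$n"
  printf -- '-v /mydata/redis/node-%s/conf/redis.conf:/etc/redis/redis.conf ' "$n"
  printf -- '-d --net redis --ip 172.38.0.1%s ' "$n"
  printf 'redis:5.0.9-alpine3.11 redis-server /etc/redis/redis.conf\n'
}

for n in $(seq 1 6); do
  redis_run_cmd "$n"
done
```

Once the printed commands look right, one way to run them is to pipe the loop's output into sh.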

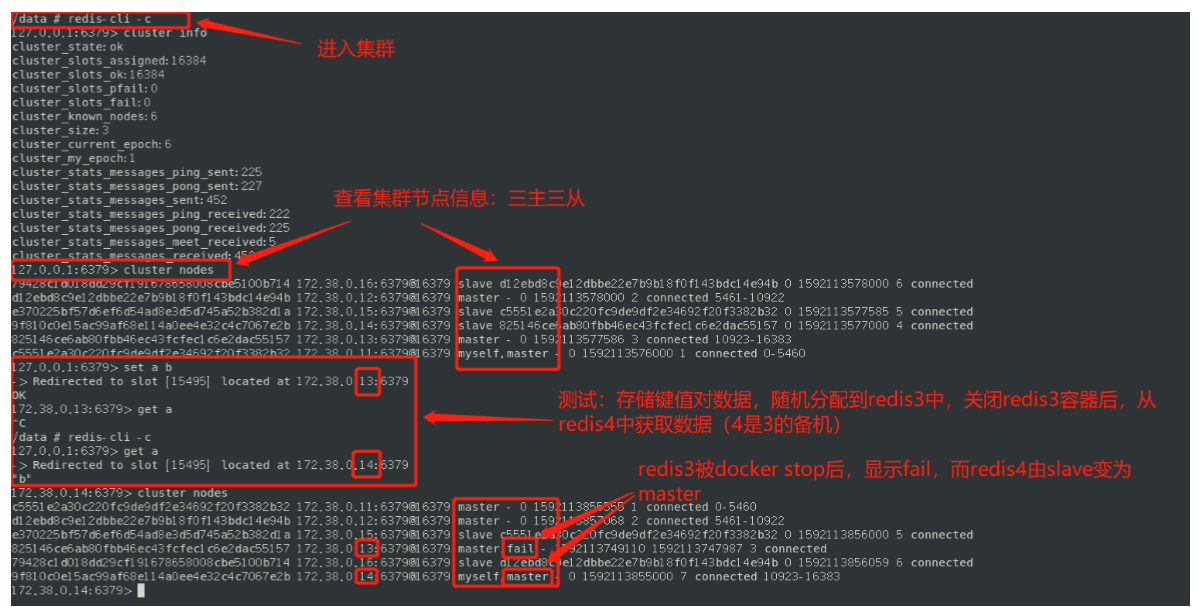

    [root@localhost conf]# docker exec -it redis-1 /bin/sh
    /data # ls
    appendonly.aof  nodes.conf
    /data # redis-cli --cluster create 172.38.0.11:6379 172.38.0.12:6379 172.38.0.13:6379 172.38.0.14:6379 172.38.0.15:6379 172.38.0.16:6379 --cluster-replicas 1
    >>> Performing hash slots allocation on 6 nodes...
    Master[0] -> Slots 0 - 5460
    Master[1] -> Slots 5461 - 10922
    Master[2] -> Slots 10923 - 16383
    Adding replica 172.38.0.15:6379 to 172.38.0.11:6379
    Adding replica 172.38.0.16:6379 to 172.38.0.12:6379
    Adding replica 172.38.0.14:6379 to 172.38.0.13:6379
    M: c5551e2a30c220fc9de9df2e34692f20f3382b32 172.38.0.11:6379
       slots:[0-5460] (5461 slots) master
    M: d12ebd8c9e12dbbe22e7b9b18f0f143bdc14e94b 172.38.0.12:6379
       slots:[5461-10922] (5462 slots) master
    M: 825146ce6ab80fbb46ec43fcfec1c6e2dac55157 172.38.0.13:6379
       slots:[10923-16383] (5461 slots) master
    S: 9f810c0e15ac99af68e114a0ee4e32c4c7067e2b 172.38.0.14:6379
       replicates 825146ce6ab80fbb46ec43fcfec1c6e2dac55157
    S: e370225bf57d6ef6d54ad8e3d5d745a52b382d1a 172.38.0.15:6379
       replicates c5551e2a30c220fc9de9df2e34692f20f3382b32
    S: 79428c1d018dd29cf191678658008cbe5100b714 172.38.0.16:6379
       replicates d12ebd8c9e12dbbe22e7b9b18f0f143bdc14e94b
    Can I set the above configuration? (type 'yes' to accept): yes
    >>> Nodes configuration updated
    >>> Assign a different config epoch to each node
    >>> Sending CLUSTER MEET messages to join the cluster
    Waiting for the cluster to join
    ....
    >>> Performing Cluster Check (using node 172.38.0.11:6379)
    M: c5551e2a30c220fc9de9df2e34692f20f3382b32 172.38.0.11:6379
       slots:[0-5460] (5461 slots) master
       1 additional replica(s)
    S: 79428c1d018dd29cf191678658008cbe5100b714 172.38.0.16:6379
       slots: (0 slots) slave
       replicates d12ebd8c9e12dbbe22e7b9b18f0f143bdc14e94b
    M: d12ebd8c9e12dbbe22e7b9b18f0f143bdc14e94b 172.38.0.12:6379
       slots:[5461-10922] (5462 slots) master
       1 additional replica(s)
    S: e370225bf57d6ef6d54ad8e3d5d745a52b382d1a 172.38.0.15:6379
       slots: (0 slots) slave
       replicates c5551e2a30c220fc9de9df2e34692f20f3382b32
    S: 9f810c0e15ac99af68e114a0ee4e32c4c7067e2b 172.38.0.14:6379
       slots: (0 slots) slave
       replicates 825146ce6ab80fbb46ec43fcfec1c6e2dac55157
    M: 825146ce6ab80fbb46ec43fcfec1c6e2dac55157 172.38.0.13:6379
       slots:[10923-16383] (5461 slots) master
       1 additional replica(s)
    [OK] All nodes agree about slots configuration.
    >>> Check for open slots...
    >>> Check slots coverage...
    [OK] All 16384 slots covered.
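The slot ranges in the output split the 16384 hash slots as evenly as possible across the three masters. The sketch below reproduces those ranges with integer arithmetic by rounding each boundary to the nearest slot. It is one way to arrive at the same numbers and is not claimed to be the exact code redis-cli runs; the function name slot_ranges is hypothetical, and bash is assumed.

```shell
#!/usr/bin/env bash
# Split the 16384 hash slots across N masters, rounding each upper
# boundary to the nearest integer using integer arithmetic only.
slot_ranges() {
  local masters="$1" total=16384 first=0 last k
  for k in $(seq 1 "$masters"); do
    # last = round(k * total / masters) - 1
    last=$(( (2 * k * total + masters) / (2 * masters) - 1 ))
    echo "Master[$((k - 1))] -> Slots $first - $last"
    first=$(( last + 1 ))
  done
}

slot_ranges 3
```

For three masters this prints the ranges 0 - 5460, 5461 - 10922 and 10923 - 16383, the same as in the cluster creation output, where the middle master holds 5462 slots and the other two hold 5461 each.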

The Redis cluster is now up and running on Docker.

Once we start using Docker, all of these technologies gradually become simpler to work with.