Method 1

docker-compose.yml

```yaml
version: "3"
services:
  mysql:
    container_name: mysql8
    image: mysql/mysql-server:8.0.18-1.1.13
    command: --default-authentication-plugin=mysql_native_password
    ports:
      - 3306:3306
      - 33060:33060
    environment:
      MYSQL_ROOT_PASSWORD: 123456
      MYSQL_USER: hxy
      MYSQL_PASSWORD: hxy
    volumes:
      - ./conf:/etc/mysql/conf.d
      - ./data:/var/lib/mysql
    networks:
      - mysql-monitor-network

  mysqld-exporter:
    container_name: mysqld-exporter
    image: prom/mysqld-exporter:v0.12.1
    ports:
      - 9104:9104
    environment:
      DATA_SOURCE_NAME: "hxy:hxy@(mysql:3306)/"
    networks:
      - mysql-monitor-network
    depends_on:
      - mysql

  prometheus:
    container_name: prometheus
    image: prom/prometheus:v2.23.0
    ports:
      - 9090:9090
    volumes:
      - ./prometheus/prometheus.yml:/etc/prometheus/prometheus.yml
      - ./prometheus/data:/prometheus
    networks:
      - mysql-monitor-network

  grafana:
    container_name: grafana
    image: grafana/grafana:7.3.4
    ports:
      - 3000:3000
    volumes:
      # Data storage location
      - ./grafana/data:/var/lib/grafana
    networks:
      - mysql-monitor-network

networks:
  mysql-monitor-network:
```

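All containers must be on the same network. Otherwise their networks are isolated from each other and the containers cannot reach one another.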

The contents of prometheus.yml are as follows:

```yaml
# my global config
global:
  scrape_interval: 15s # Set the scrape interval to every 15 seconds. Default is every 1 minute.
  evaluation_interval: 15s # Evaluate rules every 15 seconds. The default is every 1 minute.
  # scrape_timeout is set to the global default (10s).

# Alertmanager configuration
alerting:
  alertmanagers:
    - static_configs:
        - targets:
          # - alertmanager:9093

# Load rules once and periodically evaluate them according to the global 'evaluation_interval'.
rule_files:
  # - "first_rules.yml"
  # - "second_rules.yml"

# A scrape configuration containing exactly one endpoint to scrape:
# Here it's Prometheus itself.
scrape_configs:
  # The job name is added as a label `job=<job_name>` to any timeseries scraped from this config.
  - job_name: 'prometheus'

    # metrics_path defaults to '/metrics'
    # scheme defaults to 'http'.

    static_configs:
      - targets: ['localhost:9090']

  - job_name: 'mysql8'
    static_configs:
      - targets: ['mysqld-exporter:9104']
```

For the mysql8 job, the target address can be either the VIP of the mysqld-exporter container or, as above, its container name.
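For comparison, here is a sketch of the same job written with an IP address instead of the container name. The address `172.18.0.3` is a hypothetical placeholder; look up the real one with `docker inspect mysqld-exporter`. Docker may hand out a different IP when the container is recreated, which is why the container name is usually the more robust choice.

```yaml
scrape_configs:
  - job_name: 'mysql8'
    static_configs:
      # Hypothetical IP of the mysqld-exporter container on mysql-monitor-network;
      # replace it with the address reported by `docker inspect mysqld-exporter`
      - targets: ['172.18.0.3:9104']
```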

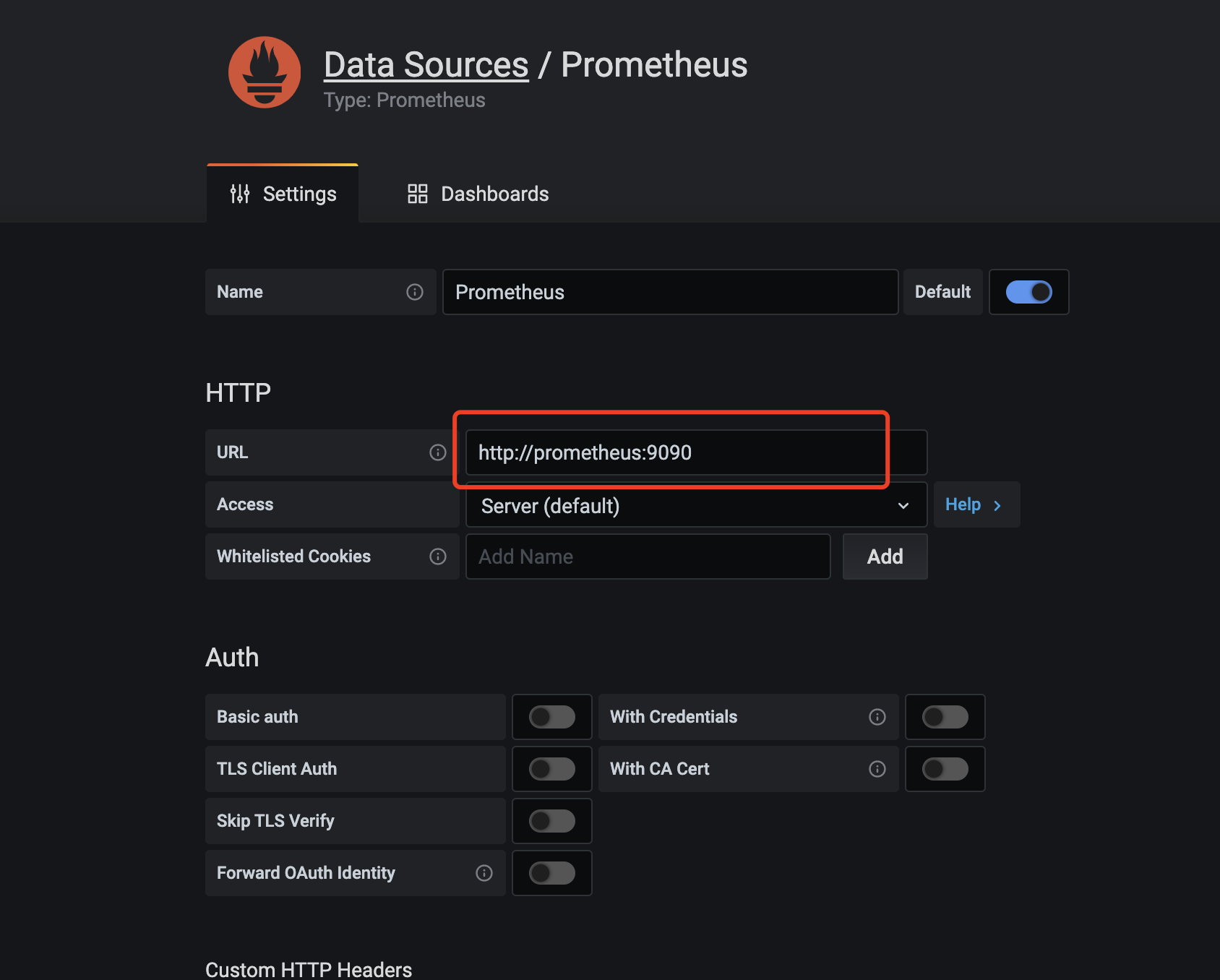

Next, log in to Grafana and add a data source: open localhost:3000 and add the data source under the settings (Configuration > Data Sources).

Likewise, the URL can use either the VIP or the container name (for example `http://prometheus:9090`). Then add a MySQL dashboard template.

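Use the template with ID 7362. Avoid 12826 and 9777: those two templates do not seem to be intended for the MySQL master version and show no data because of problems in the templates themselves. Once the template is imported, the data shows up.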
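As an alternative to adding the data source by hand in the UI, Grafana can also provision it from a file at startup. A minimal sketch, assuming a hypothetical file `grafana/provisioning/datasources/datasource.yml` on the host; the data source name `Prometheus` is also just an assumption, while the URL reuses the container name from the compose file.

```yaml
# grafana/provisioning/datasources/datasource.yml (hypothetical path)
apiVersion: 1

datasources:
  - name: Prometheus              # display name, chosen for illustration
    type: prometheus
    access: proxy                 # Grafana's backend queries Prometheus
    url: http://prometheus:9090   # container name on the shared network
    isDefault: true
```

To load it, mount the directory into the grafana service, e.g. `./grafana/provisioning/datasources:/etc/grafana/provisioning/datasources`. The dashboard itself still has to be imported by ID.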

Method 2

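Instead of putting all the containers into a single docker-compose.yml, you can also split them into separate files, which makes them easier to manage.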

First, create an external network so that containers from the different compose files can communicate:

```shell
$ docker network create grafana-monitor-network
```

mysql docker-compose.yml

```yaml
version: "3"
services:
  mysql:
    container_name: mysql8
    image: mysql/mysql-server:8.0.18-1.1.13
    command: --default-authentication-plugin=mysql_native_password
    ports:
      - 3306:3306
      - 33060:33060
    environment:
      MYSQL_ROOT_PASSWORD: 123456
      MYSQL_USER: hxy
      MYSQL_PASSWORD: hxy
    volumes:
      - ./conf:/etc/mysql/conf.d
      - ./data:/var/lib/mysql
    networks:
      - mysql-network
      - grafana-monitor-network

  mysqld-exporter:
    container_name: mysqld-exporter
    image: prom/mysqld-exporter:v0.12.1
    ports:
      - 9104:9104
    environment:
      DATA_SOURCE_NAME: "hxy:hxy@(mysql:3306)/"
    networks:
      - mysql-network
      - grafana-monitor-network
    depends_on:
      - mysql

networks:
  mysql-network:
  grafana-monitor-network:
    external: true
```

Here mysql and mysqld-exporter are kept in the same file; mysqld-exporter depends on mysql, so the two are started together.
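Note that the short form of `depends_on` only controls start order; it does not wait until MySQL accepts connections. If that matters, a sketch with a health check follows. It assumes a Docker Compose release that implements the Compose Specification, because the `version: "3"` file format originally dropped the `condition` form of `depends_on`. The interval, timeout, and retry values are arbitrary choices.

```yaml
services:
  mysql:
    # ...existing mysql settings from the file above...
    healthcheck:
      # mysqladmin ping exits 0 once the server answers,
      # even if the login itself is rejected
      test: ["CMD", "mysqladmin", "ping", "-h", "localhost"]
      interval: 10s
      timeout: 5s
      retries: 5

  mysqld-exporter:
    # ...existing mysqld-exporter settings from the file above...
    depends_on:
      mysql:
        condition: service_healthy   # start only after the health check passes
```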

prometheus docker-compose.yml

```yaml
version: "3"
services:
  prometheus:
    container_name: prometheus
    image: prom/prometheus:v2.23.0
    ports:
      - 9090:9090
    volumes:
      - ./prometheus.yml:/etc/prometheus/prometheus.yml
      - ./data:/prometheus
    networks:
      - grafana-monitor-network

networks:
  grafana-monitor-network:
    external: true
```

prometheus.yml

```yaml
# my global config
global:
  scrape_interval: 15s # Set the scrape interval to every 15 seconds. Default is every 1 minute.
  evaluation_interval: 15s # Evaluate rules every 15 seconds. The default is every 1 minute.
  # scrape_timeout is set to the global default (10s).

# Alertmanager configuration
alerting:
  alertmanagers:
    - static_configs:
        - targets:
          # - alertmanager:9093

# Load rules once and periodically evaluate them according to the global 'evaluation_interval'.
rule_files:
  # - "first_rules.yml"
  # - "second_rules.yml"

# A scrape configuration containing exactly one endpoint to scrape:
# Here it's Prometheus itself.
scrape_configs:
  # The job name is added as a label `job=<job_name>` to any timeseries scraped from this config.
  - job_name: 'prometheus'

    # metrics_path defaults to '/metrics'
    # scheme defaults to 'http'.

    static_configs:
      - targets: ['localhost:9090']

  - job_name: 'mysql8'
    static_configs:
      - targets: ['mysqld-exporter:9104']
```

grafana docker-compose.yml

```yaml
version: "3"
services:
  grafana:
    container_name: grafana
    image: grafana/grafana:7.3.4
    ports:
      - 3000:3000
    volumes:
      # Data storage location
      - ./data:/var/lib/grafana
    networks:
      - grafana-monitor-network

networks:
  grafana-monitor-network:
    external: true
```

Grafana's default username and password are admin/admin.
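Grafana asks you to change this password at the first login. The initial admin account can also be set through environment variables that override the `[security]` section of grafana.ini. A sketch, where `change-me` is a placeholder. Because `./data` is persisted, these values only take effect when Grafana creates its database for the first time; after that, change the password in the UI.

```yaml
services:
  grafana:
    # ...other grafana settings from the file above...
    environment:
      # Override [security] admin_user / admin_password from grafana.ini;
      # only applied when the Grafana database is first created
      GF_SECURITY_ADMIN_USER: admin
      GF_SECURITY_ADMIN_PASSWORD: change-me   # placeholder, pick your own
```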