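PV and PVC

PersistentVolume (PV)

A cluster-level resource; it is not namespaced.

Its lifecycle is independent of any pod.

It is an abstraction over the underlying shared storage.

PersistentVolumeClaim (PVC)

A namespaced resource.

A declaration of a storage request; it consumes a PV.

Details

A PVC can be created before any PV exists. With no matching PV it stays Pending, and it becomes Bound as soon as a suitable PV appears.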

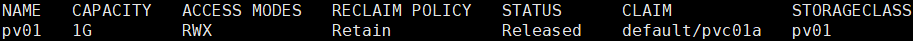

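A PV in the Released state cannot be bound by a new claim until it has been cleaned up manually.

Deleting a pod does not affect the PVC it references.

A PVC that is in use by a running pod is not removed right away: the delete request leaves it in Terminating until no pod uses it any more.

What happens to the PV when its PVC is deleted depends on the PV's reclaim policy.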

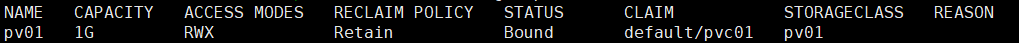

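Retain: after the PVC is deleted, the PV stays Released and still records the previous claim (spec.claimRef). It cannot be bound again until it is handled manually.

Recycle: after the PVC is deleted, the PV goes from Released back to Available.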
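To make the Retain case concrete, here is a rough sketch of what a Released PV might look like. It is an illustrative excerpt, not captured output: the names pv01 and pvc01 are borrowed from the static provisioning example in these notes, the namespace is assumed to be default, and server-populated fields such as uid are left out.

```yaml
# Illustrative excerpt of a Released PV under the Retain policy.
apiVersion: v1
kind: PersistentVolume
metadata:
  name: pv01
spec:
  persistentVolumeReclaimPolicy: Retain
  claimRef:                  # still points at the deleted claim
    apiVersion: v1
    kind: PersistentVolumeClaim
    name: pvc01
    namespace: default       # assumed namespace
status:
  phase: Released
```

"Handling it manually" usually means deciding what to do with the data that is still on the volume and then removing the claimRef block (for example with kubectl edit pv pv01), after which the PV should report Available again.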

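PV access modes

pv.spec.accessModes <[]string>

ReadWriteOnce (RWO): mounted read-write by a single node

ReadOnlyMany (ROX): mounted read-only by many nodes

ReadWriteMany (RWX): mounted read-write by many nodes

A volume can be mounted with only one access mode at a time, even if it supports several.

Supported access modes differ by underlying storage type:

NFS, AzureFile and CephFS support all three.

HostPath supports only RWO.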
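A minimal sketch of how these modes appear in a manifest, assuming an NFS-backed PV. The PV name and the export subdirectory are hypothetical; the server address is the one used elsewhere in these notes.

```yaml
apiVersion: v1
kind: PersistentVolume
metadata:
  name: pv-modes-demo            # hypothetical name
spec:
  capacity:
    storage: 1Gi
  accessModes:                   # every mode this volume can offer
    - ReadWriteOnce
    - ReadOnlyMany
    - ReadWriteMany
  nfs:
    server: 192.168.80.99
    path: /data/nfs/modes-demo   # hypothetical subdirectory
```

The list only advertises what the volume is capable of. A PVC asks for the modes it needs, and the volume is still mounted with a single mode at any given time.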

PV storage class

pv.spec.storageClassName

A PV of a given class can only be bound to a PVC that requests that class.

A PV with no class can only be bound to a PVC that requests no class.
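One detail worth sketching: when a default StorageClass exists, leaving storageClassName out of a PVC is not the same as requesting no class. Setting it to an empty string is the explicit way to ask for a class-less PV. The claim name and size below are hypothetical.

```yaml
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: pvc-no-class         # hypothetical name
spec:
  accessModes: ["ReadWriteOnce"]
  storageClassName: ""       # explicitly request a PV that has no class
  resources:
    requests:
      storage: 1Gi
```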

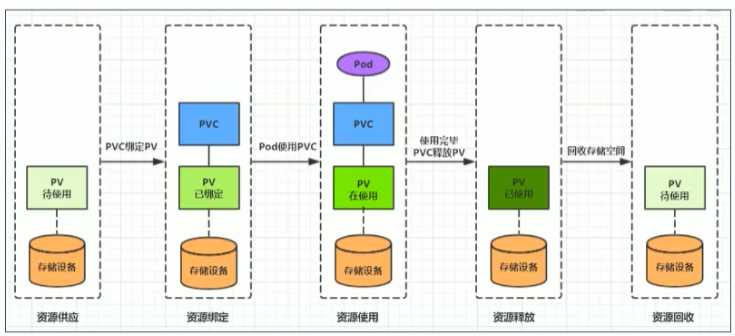

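PV reclaim policy

pv.spec.persistentVolumeReclaimPolicy

Retain: keep the volume for manual reclamation; the default for manually created PVs

Recycle: basic scrub; the PV itself is kept and only the volume contents are wiped (deprecated in favour of dynamic provisioning)

Delete: delete the PV together with the associated storage asset; the default for dynamically provisioned PVs

Supported reclaim policies differ by underlying storage type:

NFS and hostPath support Recycle.

AWS EBS, GCE PD, Azure Disk and Cinder support Delete.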
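As a sketch of where the policy is set: a manually created PV carries it in persistentVolumeReclaimPolicy, while dynamically provisioned PVs inherit the reclaimPolicy of their StorageClass. Both object names below are hypothetical; the provisioner string is the one used in the dynamic provisioning example in these notes.

```yaml
# Static PV: the policy is set on the PV itself.
apiVersion: v1
kind: PersistentVolume
metadata:
  name: pv-retain-demo                  # hypothetical name
spec:
  capacity:
    storage: 1Gi
  accessModes: ["ReadWriteMany"]
  persistentVolumeReclaimPolicy: Retain
  nfs:
    server: 192.168.80.99
    path: /data/nfs/retain-demo         # hypothetical subdirectory
---
# Dynamic PVs: the policy comes from the StorageClass (Delete if omitted).
apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: nfs-client-retain               # hypothetical name
provisioner: k8s-sigs.io/nfs-subdir-external-provisioner
reclaimPolicy: Retain
```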

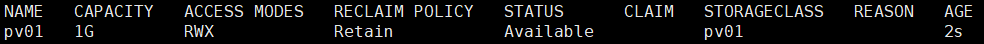

PV phases

Available: free, not yet bound to any PVC

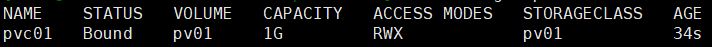

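Bound: bound to a PVC

Released: the claim has been deleted, but the cluster has not yet reclaimed the resource

Failed: automatic reclamation failed

Manually releasing a PV

If a deleted PV is stuck in Terminating, clear its finalizers:

kubectl patch pv <pv-name> -p '{"metadata":{"finalizers":null}}'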

Static provisioning example

Prepare NFS

NFS server

yum -y install nfs-utils

mkdir -p /data/nfs/

vim /etc/exports

/data/nfs *(rw,async,no_root_squash,no_all_squash)

systemctl start rpcbind nfs

showmount -e

Install the NFS client tools on every node

yum -y install nfs-utils

Create the PV

    apiVersion: v1
    kind: PersistentVolume
    metadata:
      name: pv01
    spec:
      capacity:
        storage: 1G
      accessModes:
        - ReadWriteMany
      storageClassName: pv01
      nfs:
        path: /data/nfs/01
        server: 192.168.80.99

Create the PVC

    apiVersion: v1
    kind: PersistentVolumeClaim
    metadata:
      name: pvc01
    spec:
      accessModes: ["ReadWriteMany"]
      storageClassName: pv01
      resources:
        requests:
          storage: 500Mi   # Mi = mebibytes; a lowercase "m" suffix would mean milli

Use the PVC in a pod

    apiVersion: v1
    kind: Pod
    metadata:
      name: pod-pvc
    spec:
      volumes:
        - name: webroot
          persistentVolumeClaim:
            claimName: pvc01
            readOnly: false
      containers:
        - image: nginx
          name: pod-pvc-nginx
          volumeMounts:
            - name: webroot
              mountPath: /usr/share/nginx/html

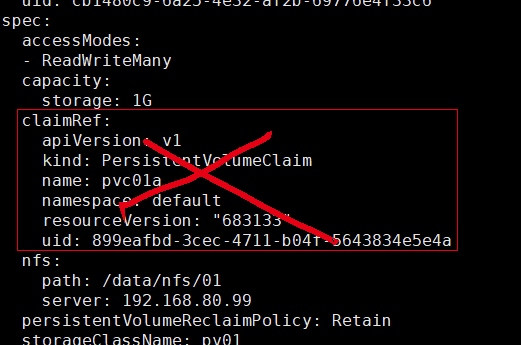

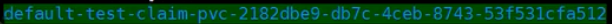

Dynamic provisioning example

NFS

StorageClass

    apiVersion: storage.k8s.io/v1
    kind: StorageClass
    metadata:
      name: nfs-client
    provisioner: k8s-sigs.io/nfs-subdir-external-provisioner # or choose another name; must match the Deployment's PROVISIONER_NAME env
    parameters:
      archiveOnDelete: "false"

Set the default StorageClass

    metadata:
      annotations:
        storageclass.kubernetes.io/is-default-class: "true"

A PVC that does not specify storageClassName uses the default StorageClass.
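A minimal sketch of such a claim, assuming a default StorageClass has been marked as described in these notes. The claim name and size are hypothetical.

```yaml
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: pvc-default-sc       # hypothetical name
spec:
  accessModes: ["ReadWriteMany"]
  # storageClassName is intentionally omitted, so the default class is applied
  resources:
    requests:
      storage: 100Mi
```

After the claim is created, spec.storageClassName on the stored object should show the name of the default class.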

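Archive the data when a PV is deleted

With archiveOnDelete set to "true", the provisioner archives the backing directory on the NFS share instead of deleting it.

    parameters:
      archiveOnDelete: "true"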

RBAC

    apiVersion: v1
    kind: ServiceAccount
    metadata:
      name: nfs-client-provisioner
      # replace with namespace where provisioner is deployed
      namespace: default
    ---
    kind: ClusterRole
    apiVersion: rbac.authorization.k8s.io/v1
    metadata:
      name: nfs-client-provisioner-runner
    rules:
      - apiGroups: [""]
        resources: ["nodes"]
        verbs: ["get", "list", "watch"]
      - apiGroups: [""]
        resources: ["persistentvolumes"]
        verbs: ["get", "list", "watch", "create", "delete"]
      - apiGroups: [""]
        resources: ["persistentvolumeclaims"]
        verbs: ["get", "list", "watch", "update"]
      - apiGroups: ["storage.k8s.io"]
        resources: ["storageclasses"]
        verbs: ["get", "list", "watch"]
      - apiGroups: [""]
        resources: ["events"]
        verbs: ["create", "update", "patch"]
    ---
    kind: ClusterRoleBinding
    apiVersion: rbac.authorization.k8s.io/v1
    metadata:
      name: run-nfs-client-provisioner
    subjects:
      - kind: ServiceAccount
        name: nfs-client-provisioner
        # replace with namespace where provisioner is deployed
        namespace: default
    roleRef:
      kind: ClusterRole
      name: nfs-client-provisioner-runner
      apiGroup: rbac.authorization.k8s.io
    ---
    kind: Role
    apiVersion: rbac.authorization.k8s.io/v1
    metadata:
      name: leader-locking-nfs-client-provisioner
      # replace with namespace where provisioner is deployed
      namespace: default
    rules:
      - apiGroups: [""]
        resources: ["endpoints"]
        verbs: ["get", "list", "watch", "create", "update", "patch"]
    ---
    kind: RoleBinding
    apiVersion: rbac.authorization.k8s.io/v1
    metadata:
      name: leader-locking-nfs-client-provisioner
      # replace with namespace where provisioner is deployed
      namespace: default
    subjects:
      - kind: ServiceAccount
        name: nfs-client-provisioner
        # replace with namespace where provisioner is deployed
        namespace: default
    roleRef:
      kind: Role
      name: leader-locking-nfs-client-provisioner
      apiGroup: rbac.authorization.k8s.io

Deployment

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: nfs-client-provisioner
      labels:
        app: nfs-client-provisioner
      # replace with namespace where provisioner is deployed
      namespace: default
    spec:
      replicas: 1
      strategy:
        type: Recreate
      selector:
        matchLabels:
          app: nfs-client-provisioner
      template:
        metadata:
          labels:
            app: nfs-client-provisioner
        spec:
          serviceAccountName: nfs-client-provisioner
          containers:
            - name: nfs-client-provisioner
              image: k8s.gcr.io/sig-storage/nfs-subdir-external-provisioner:v4.0.2
              volumeMounts:
                - name: nfs-client-root
                  mountPath: /persistentvolumes
              env:
                - name: PROVISIONER_NAME
                  value: k8s-sigs.io/nfs-subdir-external-provisioner
                - name: NFS_SERVER
                  value: 192.168.80.99
                - name: NFS_PATH
                  value: /data/nfs/k8s
          volumes:
            - name: nfs-client-root
              nfs:
                server: 192.168.80.99
                path: /data/nfs/k8s
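To check that the provisioner works, one option is a small claim plus a pod that writes a file into the provisioned volume. This is only a sketch: the names test-claim and test-pod, the busybox image tag and the 1Mi size are assumptions, while nfs-client is the StorageClass defined in these notes.

```yaml
kind: PersistentVolumeClaim
apiVersion: v1
metadata:
  name: test-claim                 # hypothetical name
spec:
  storageClassName: nfs-client     # StorageClass from these notes
  accessModes:
    - ReadWriteMany
  resources:
    requests:
      storage: 1Mi
---
kind: Pod
apiVersion: v1
metadata:
  name: test-pod                   # hypothetical name
spec:
  restartPolicy: Never
  containers:
    - name: test-pod
      image: busybox:stable        # assumed image tag
      # Write a marker file into the volume, then exit.
      command: ["/bin/sh", "-c", "touch /mnt/SUCCESS"]
      volumeMounts:
        - name: nfs-pvc
          mountPath: /mnt
  volumes:
    - name: nfs-pvc
      persistentVolumeClaim:
        claimName: test-claim
```

If provisioning succeeds, the claim should become Bound without any PV having been created by hand, and a new subdirectory containing the SUCCESS file should appear under /data/nfs/k8s on the NFS server.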