Part 1. Hadoop Run Modes

1. Single Node Cluster: local (standalone) mode

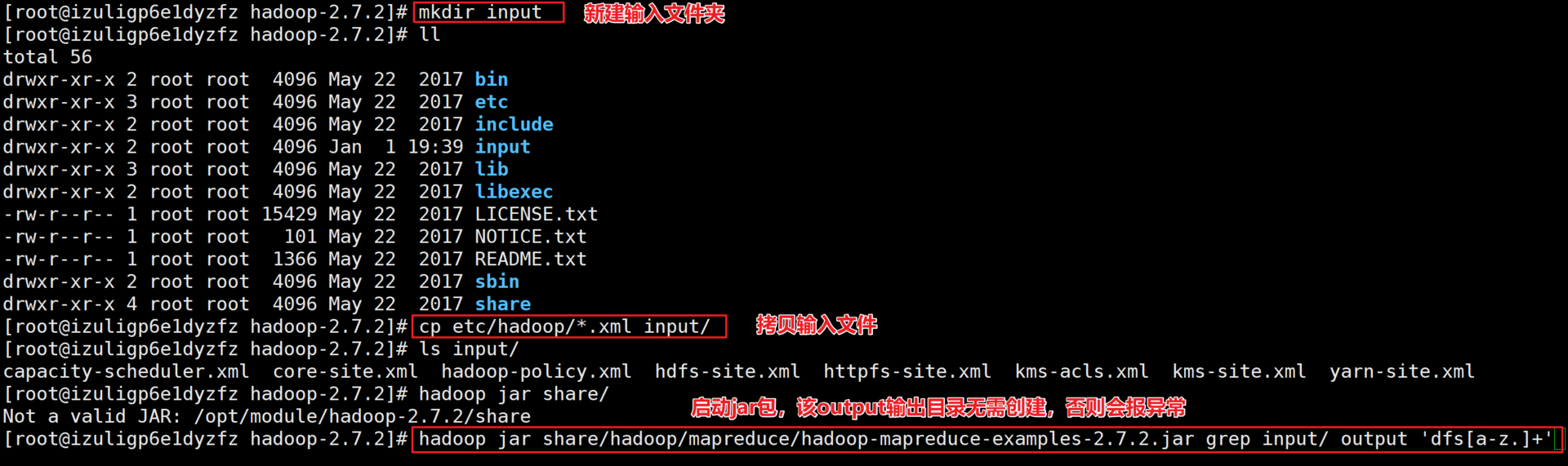

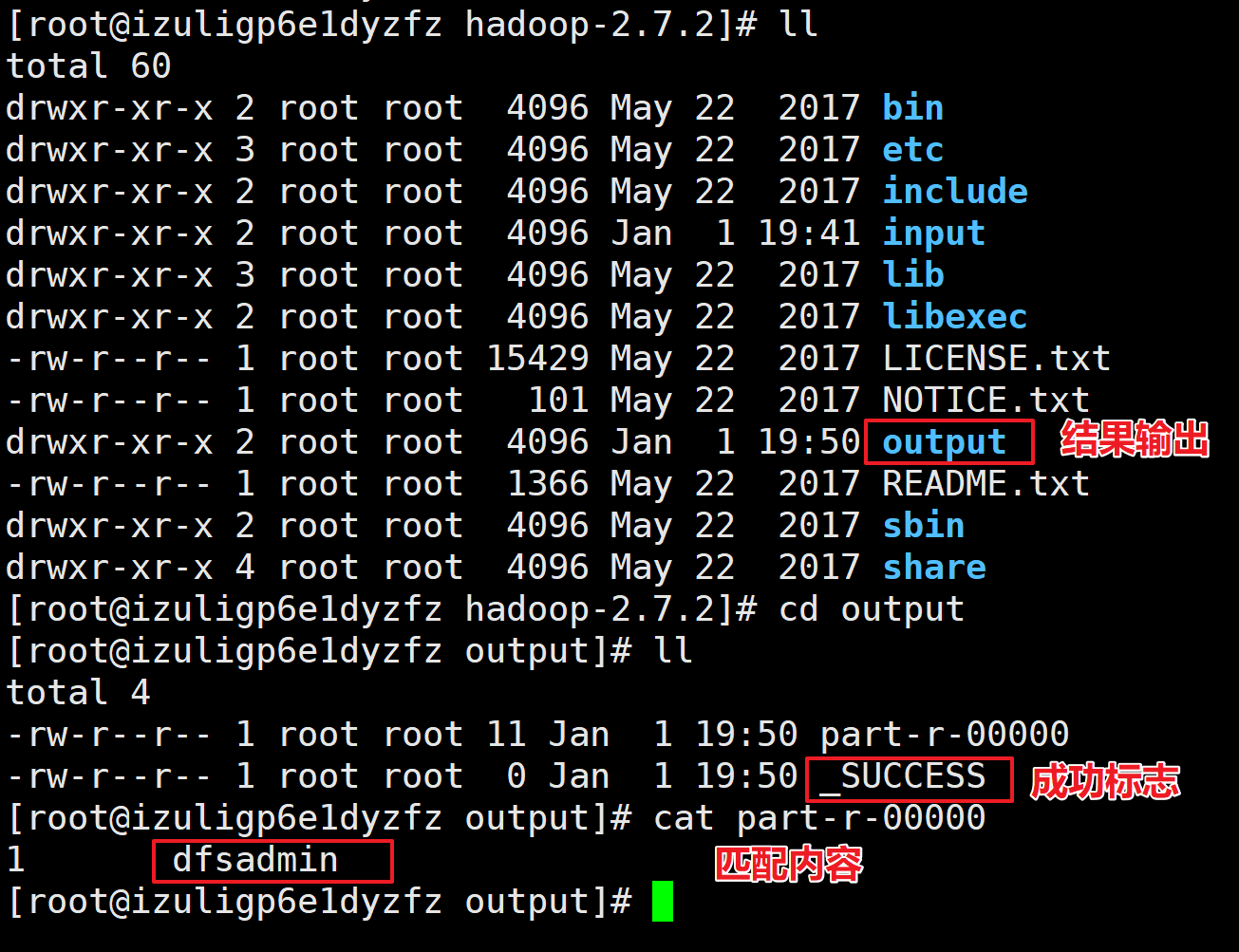

Create an `input` directory, copy Hadoop's XML configuration files into it, and run the bundled `grep` example. The job finds every string in the input files that matches the regular expression `dfs[a-z.]+`, counts the matches, and writes the result to the `output` directory:

```shell
[root@izuligp6e1dyzfz hadoop-2.7.2]# mkdir input
[root@izuligp6e1dyzfz hadoop-2.7.2]# ll
total 56
drwxr-xr-x 2 root root 4096 May 22 2017 bin
drwxr-xr-x 3 root root 4096 May 22 2017 etc
drwxr-xr-x 2 root root 4096 May 22 2017 include
drwxr-xr-x 2 root root 4096 Jan 1 19:39 input
drwxr-xr-x 3 root root 4096 May 22 2017 lib
drwxr-xr-x 2 root root 4096 May 22 2017 libexec
-rw-r--r-- 1 root root 15429 May 22 2017 LICENSE.txt
-rw-r--r-- 1 root root 101 May 22 2017 NOTICE.txt
-rw-r--r-- 1 root root 1366 May 22 2017 README.txt
drwxr-xr-x 2 root root 4096 May 22 2017 sbin
drwxr-xr-x 4 root root 4096 May 22 2017 share
[root@izuligp6e1dyzfz hadoop-2.7.2]# cp etc/hadoop/*.xml input/
[root@izuligp6e1dyzfz hadoop-2.7.2]# ls input/
capacity-scheduler.xml core-site.xml hadoop-policy.xml hdfs-site.xml httpfs-site.xml kms-acls.xml kms-site.xml yarn-site.xml
[root@izuligp6e1dyzfz hadoop-2.7.2]# hadoop jar share/
Not a valid JAR: /opt/module/hadoop-2.7.2/share
[root@izuligp6e1dyzfz hadoop-2.7.2]# hadoop jar share/hadoop/mapreduce/hadoop-mapreduce-examples-2.7.2.jar grep input/ output 'dfs[a-z.]+'
```

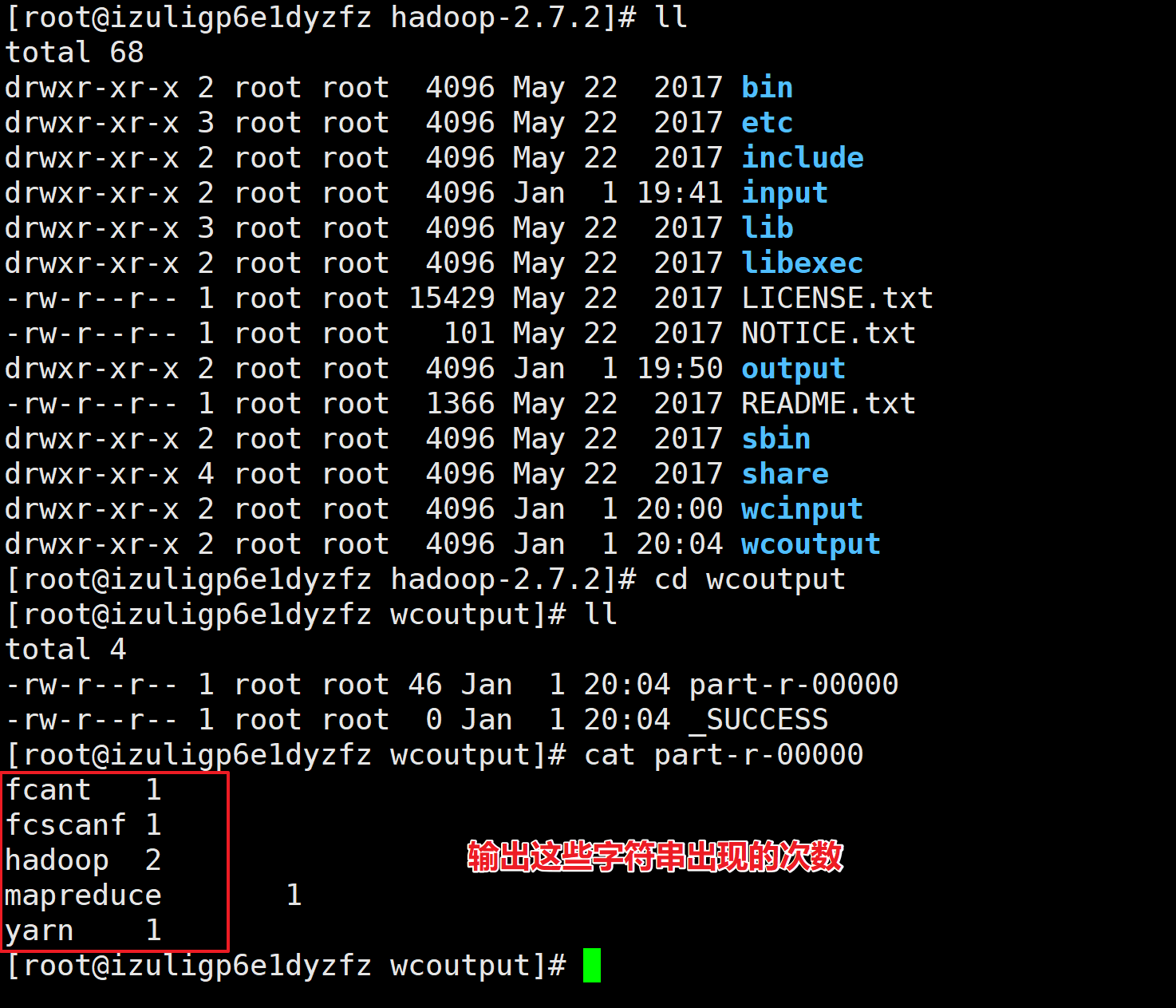

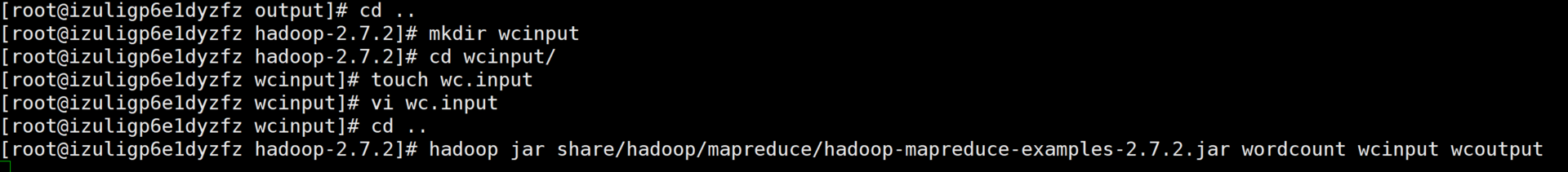
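To sanity-check what the grep job reports, the same counting can be approximated locally with standard tools. This is a minimal sketch, assuming GNU grep's `-E` syntax matches Java's regex behavior for this simple pattern; `local_grep_count` is a hypothetical helper, and the order of ties may differ from Hadoop's output.

```shell
#!/usr/bin/env bash
# Hypothetical helper (not part of Hadoop): count regex matches across all
# files in a directory and print "count<TAB>match", most frequent first.
local_grep_count() {
  local dir=$1 regex=$2
  # -o prints each match on its own line, -h drops the file names
  grep -ohE "$regex" "$dir"/* \
    | LC_ALL=C sort \
    | uniq -c \
    | LC_ALL=C sort -k1,1nr -k2,2 \
    | awk '{ printf "%s\t%s\n", $1, $2 }'
}
```

For example, `local_grep_count input 'dfs[a-z.]+'` can be compared with `cat output/*` after the job finishes.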

2. WordCount example

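Create a local input file, write some words into it with vi, and run the `wordcount` example. The output directory (`wcoutput`) must not exist beforehand, otherwise the job fails.

```shell
[root@izuligp6e1dyzfz hadoop-2.7.2]# mkdir wcinput
[root@izuligp6e1dyzfz hadoop-2.7.2]# cd wcinput/
[root@izuligp6e1dyzfz wcinput]# touch wc.input
[root@izuligp6e1dyzfz wcinput]# vi wc.input
[root@izuligp6e1dyzfz wcinput]# cd ..
[root@izuligp6e1dyzfz hadoop-2.7.2]# hadoop jar share/hadoop/mapreduce/hadoop-mapreduce-examples-2.7.2.jar wordcount wcinput wcoutput
```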
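WordCount splits each line on whitespace, counts every token, and (with its single default reducer) writes `word<TAB>count` lines sorted by word. For a small input, the result in `wcoutput/part-r-00000` can be cross-checked with a local approximation. This is a sketch, not Hadoop's implementation: awk splits on spaces and tabs, whereas WordCount's `StringTokenizer` also splits on `\r` and `\f`; `local_wordcount` is a hypothetical name.

```shell
#!/usr/bin/env bash
# Hypothetical helper: approximate the WordCount example locally.
# Prints "word<TAB>count", sorted by word in byte order (C locale).
local_wordcount() {
  awk '{ for (i = 1; i <= NF; i++) count[$i]++ }
       END { for (w in count) printf "%s\t%d\n", w, count[w] }' "$@" \
    | LC_ALL=C sort -t "$(printf '\t')" -k1,1
}
```

Usage: `local_wordcount wcinput/wc.input`, then compare with `cat wcoutput/part-r-00000`.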

Part 2. Pseudo-Distributed Operation

I. Start HDFS and run MapReduce

1. Configure HDFS

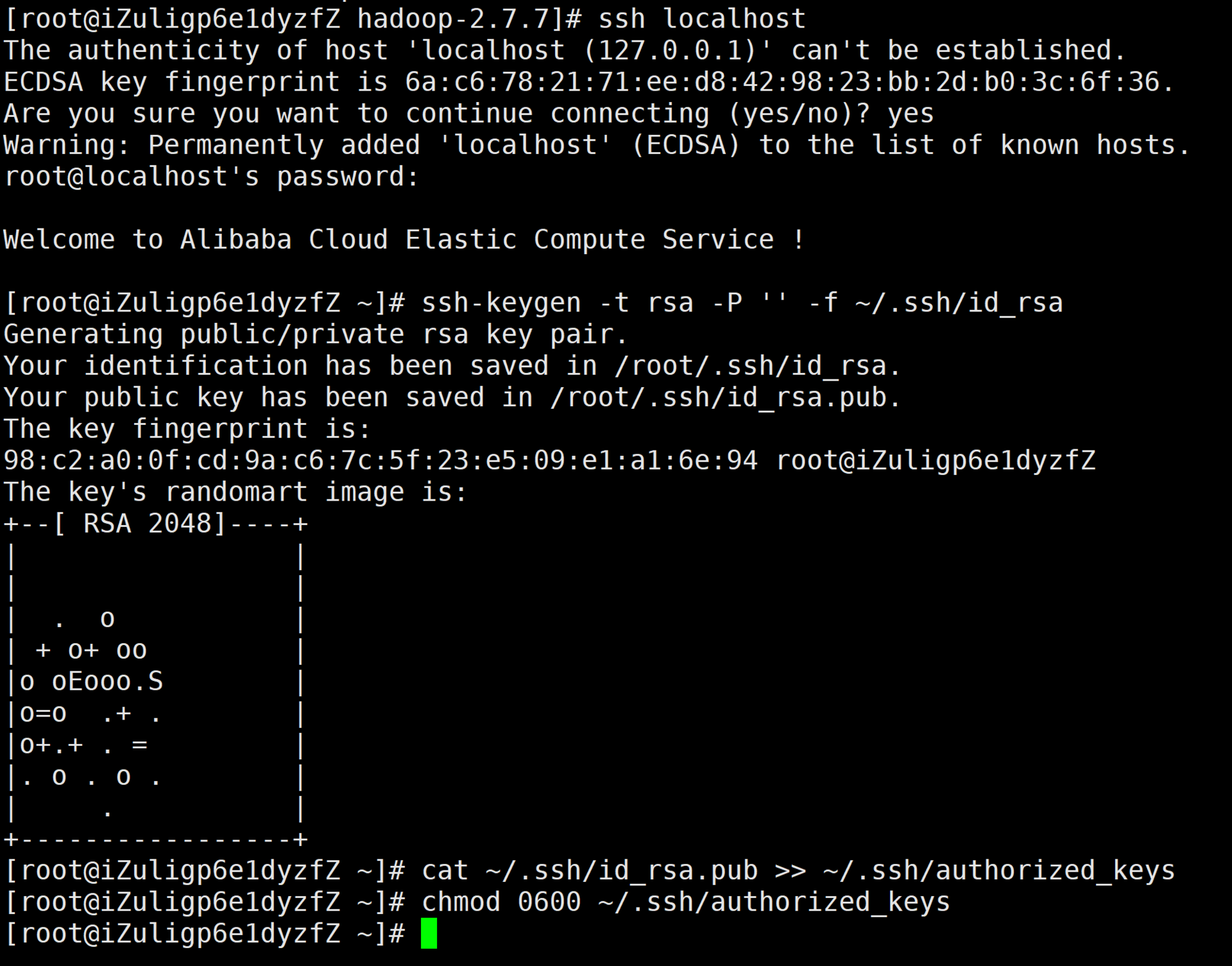

a. Set up passwordless SSH login

```shell
[root@iZuligp6e1dyzfZ ~]# ssh-keygen -t rsa -P '' -f ~/.ssh/id_rsa
Generating public/private rsa key pair.
Your identification has been saved in /root/.ssh/id_rsa.
Your public key has been saved in /root/.ssh/id_rsa.pub.
The key fingerprint is:
98:c2:a0:0f:cd:9a:c6:7c:5f:23:e5:09:e1:a1:6e:94 root@iZuligp6e1dyzfZ
[root@iZuligp6e1dyzfZ ~]# cat ~/.ssh/id_rsa.pub >> ~/.ssh/authorized_keys
[root@iZuligp6e1dyzfZ ~]# chmod 0600 ~/.ssh/authorized_keys
```
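With its default `StrictModes yes`, sshd ignores `authorized_keys` if the file or `~/.ssh` is writable by group or others, and the key login then silently falls back to a password prompt. A minimal check, assuming GNU coreutils `stat`; `check_ssh_perms` is a hypothetical helper, and it enforces the conventional 700/600 modes, which is stricter than sshd itself requires.

```shell
#!/usr/bin/env bash
# Hypothetical helper: verify the conventional permissions on ~/.ssh (700)
# and ~/.ssh/authorized_keys (600). Returns non-zero otherwise.
check_ssh_perms() {
  local ssh_dir=${1:-$HOME/.ssh}
  local dir_mode key_mode
  dir_mode=$(stat -c '%a' "$ssh_dir") || return 1
  key_mode=$(stat -c '%a' "$ssh_dir/authorized_keys") || return 1
  if [ "$dir_mode" != 700 ] || [ "$key_mode" != 600 ]; then
    echo "expected 700 on $ssh_dir and 600 on authorized_keys, got $dir_mode/$key_mode" >&2
    return 1
  fi
}
```

After the check passes, `ssh -o BatchMode=yes localhost true` should succeed without prompting; BatchMode makes ssh fail instead of asking for a password.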

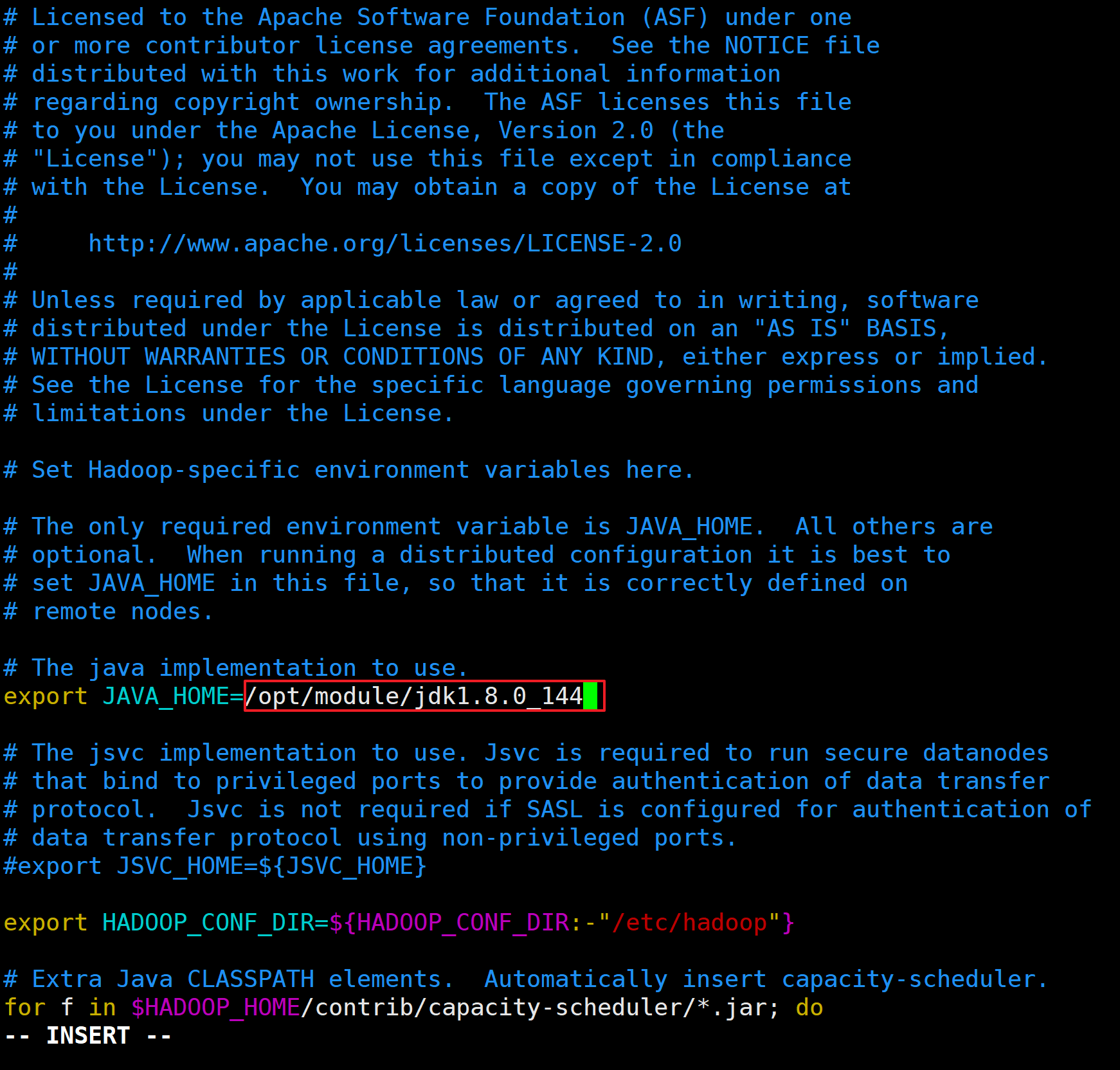

b. Configure hadoop-env.sh

```shell
[root@izuligp6e1dyzfz hadoop]# echo $JAVA_HOME
/opt/module/jdk1.8.0_144
```

Set the following line in `hadoop-env.sh`:

```shell
export JAVA_HOME=/opt/module/jdk1.8.0_144
```
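Setting an explicit path matters because daemons launched over SSH (for example by `start-dfs.sh`) do not inherit the interactive shell's `JAVA_HOME`, so the stock 2.7.x line `export JAVA_HOME=${JAVA_HOME}` can expand to nothing. A non-interactive way to make the edit, assuming GNU `sed -i`; `set_java_home` is a hypothetical helper.

```shell
#!/usr/bin/env bash
# Hypothetical helper: pin JAVA_HOME in a Hadoop env script
# (e.g. etc/hadoop/hadoop-env.sh) to a fixed JDK path.
set_java_home() {
  local env_file=$1 java_home=$2
  if grep -q '^export JAVA_HOME=' "$env_file"; then
    # Rewrite the existing, uncommented export line in place
    sed -i "s|^export JAVA_HOME=.*|export JAVA_HOME=${java_home}|" "$env_file"
  else
    # Only a commented-out line (or none): append an active export
    printf 'export JAVA_HOME=%s\n' "$java_home" >> "$env_file"
  fi
}
```

Usage: `set_java_home etc/hadoop/hadoop-env.sh /opt/module/jdk1.8.0_144`. Where the export line is commented out, as is typical in `yarn-env.sh` and `mapred-env.sh`, the function appends a new line instead.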


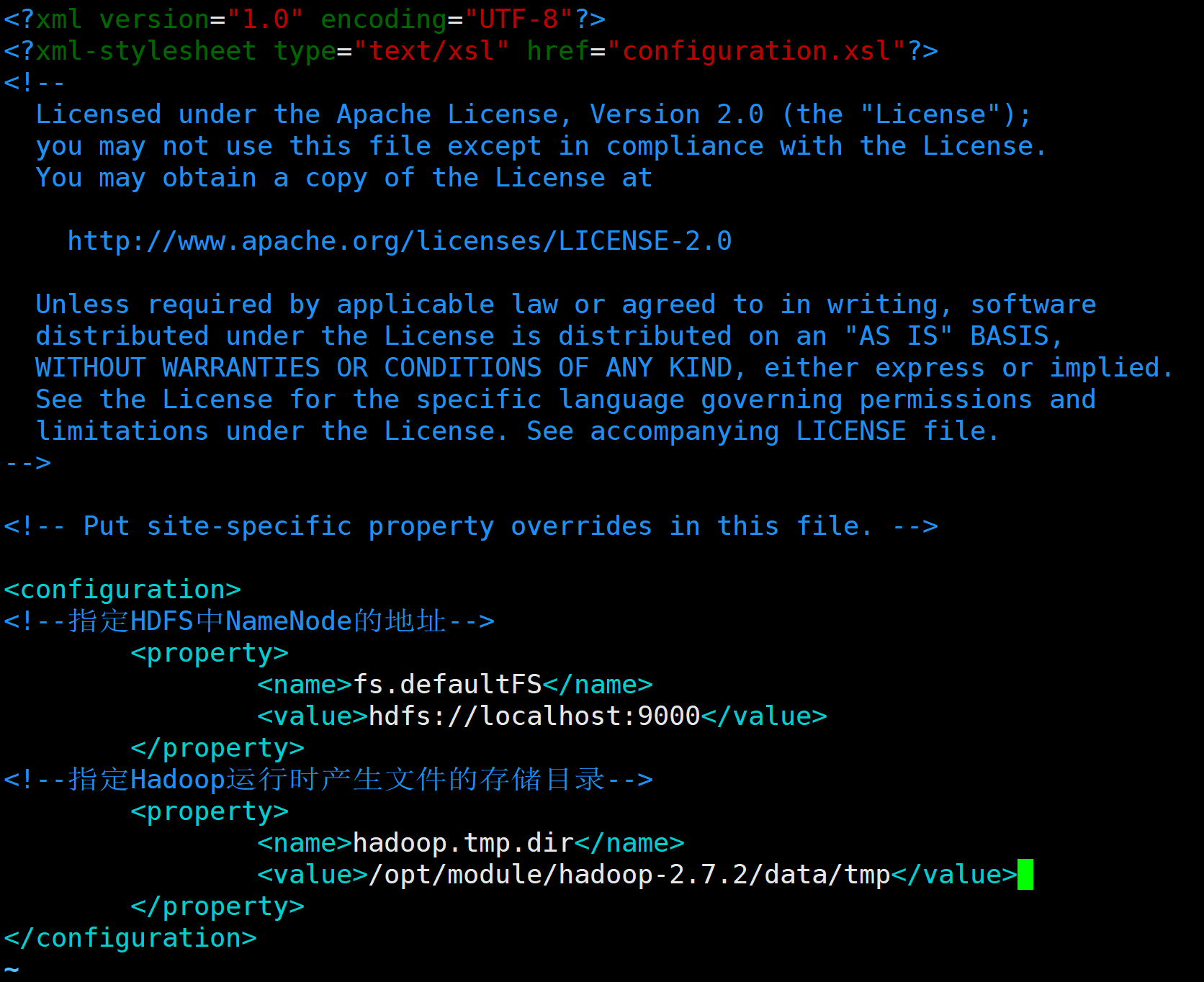

c. Configure core-site.xml

```shell
[root@izuligp6e1dyzfz hadoop]# vim core-site.xml
```

```xml
<configuration>
  <!-- Address of the HDFS NameNode -->
  <property>
    <name>fs.defaultFS</name>
    <value>hdfs://localhost:9000</value>
  </property>
  <!-- Directory where Hadoop stores files generated at runtime -->
  <property>
    <name>hadoop.tmp.dir</name>
    <value>/opt/module/hadoop-2.7.2/data/tmp</value>
  </property>
</configuration>
```
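When the same setup has to be repeated on several machines, the file can be generated instead of edited by hand. A minimal sketch; `write_core_site` is a hypothetical helper, and note that it overwrites any existing `core-site.xml` in the target directory.

```shell
#!/usr/bin/env bash
# Hypothetical helper: write a minimal core-site.xml into a config directory.
# WARNING: overwrites an existing core-site.xml there.
write_core_site() {
  local conf_dir=$1 namenode_uri=$2 tmp_dir=$3
  cat > "$conf_dir/core-site.xml" <<EOF
<?xml version="1.0" encoding="UTF-8"?>
<configuration>
  <!-- Address of the HDFS NameNode -->
  <property>
    <name>fs.defaultFS</name>
    <value>${namenode_uri}</value>
  </property>
  <!-- Directory where Hadoop stores files generated at runtime -->
  <property>
    <name>hadoop.tmp.dir</name>
    <value>${tmp_dir}</value>
  </property>
</configuration>
EOF
}
```

Usage: `write_core_site etc/hadoop hdfs://localhost:9000 /opt/module/hadoop-2.7.2/data/tmp`. Afterwards, `bin/hdfs getconf -confKey fs.defaultFS` prints the value Hadoop actually picks up.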

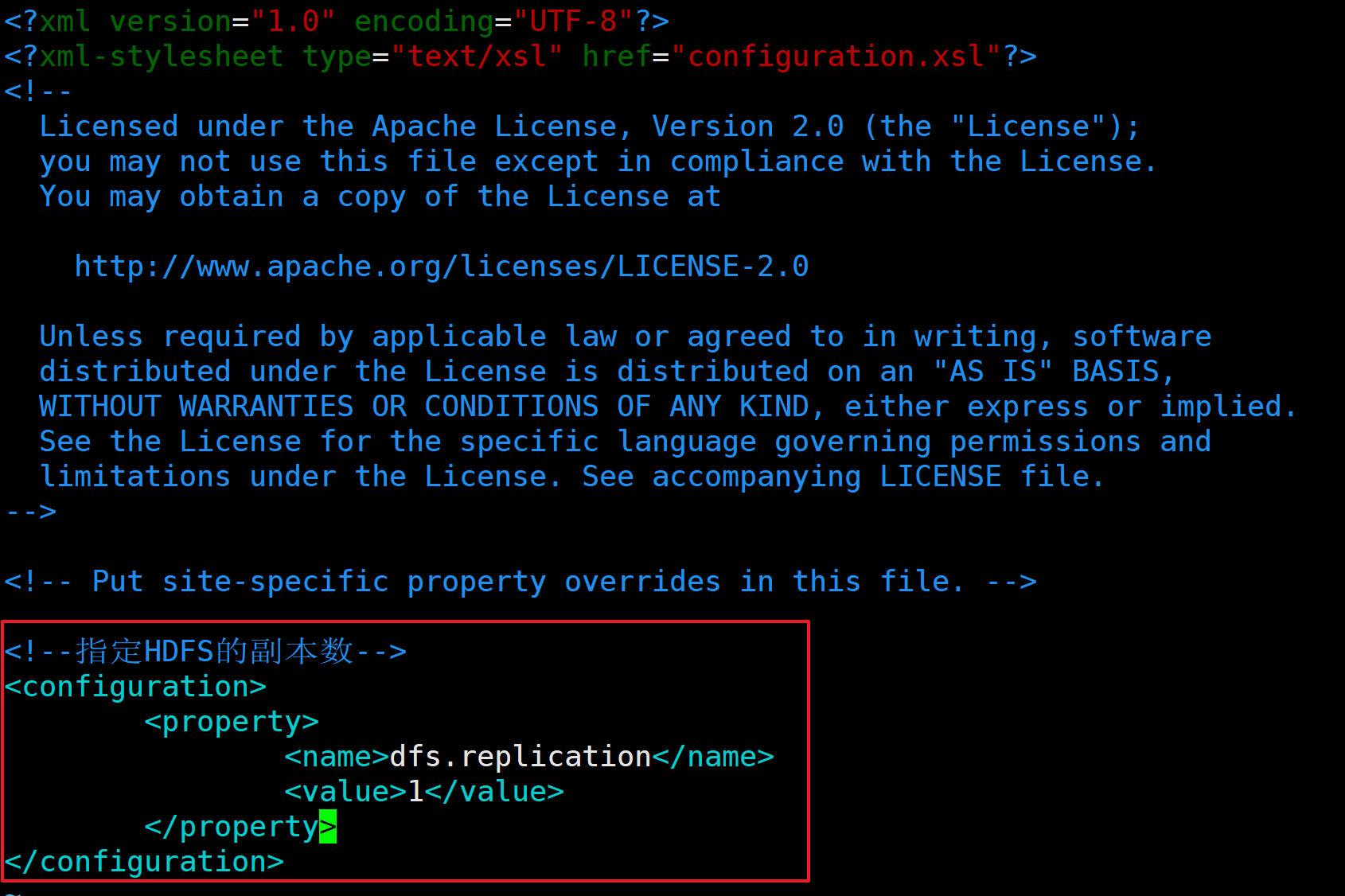

d. Configure hdfs-site.xml

```shell
[root@izuligp6e1dyzfz hadoop]# vim hdfs-site.xml
```

```xml
<configuration>
  <!-- Number of HDFS block replicas -->
  <property>
    <name>dfs.replication</name>
    <value>1</value>
  </property>
</configuration>
```

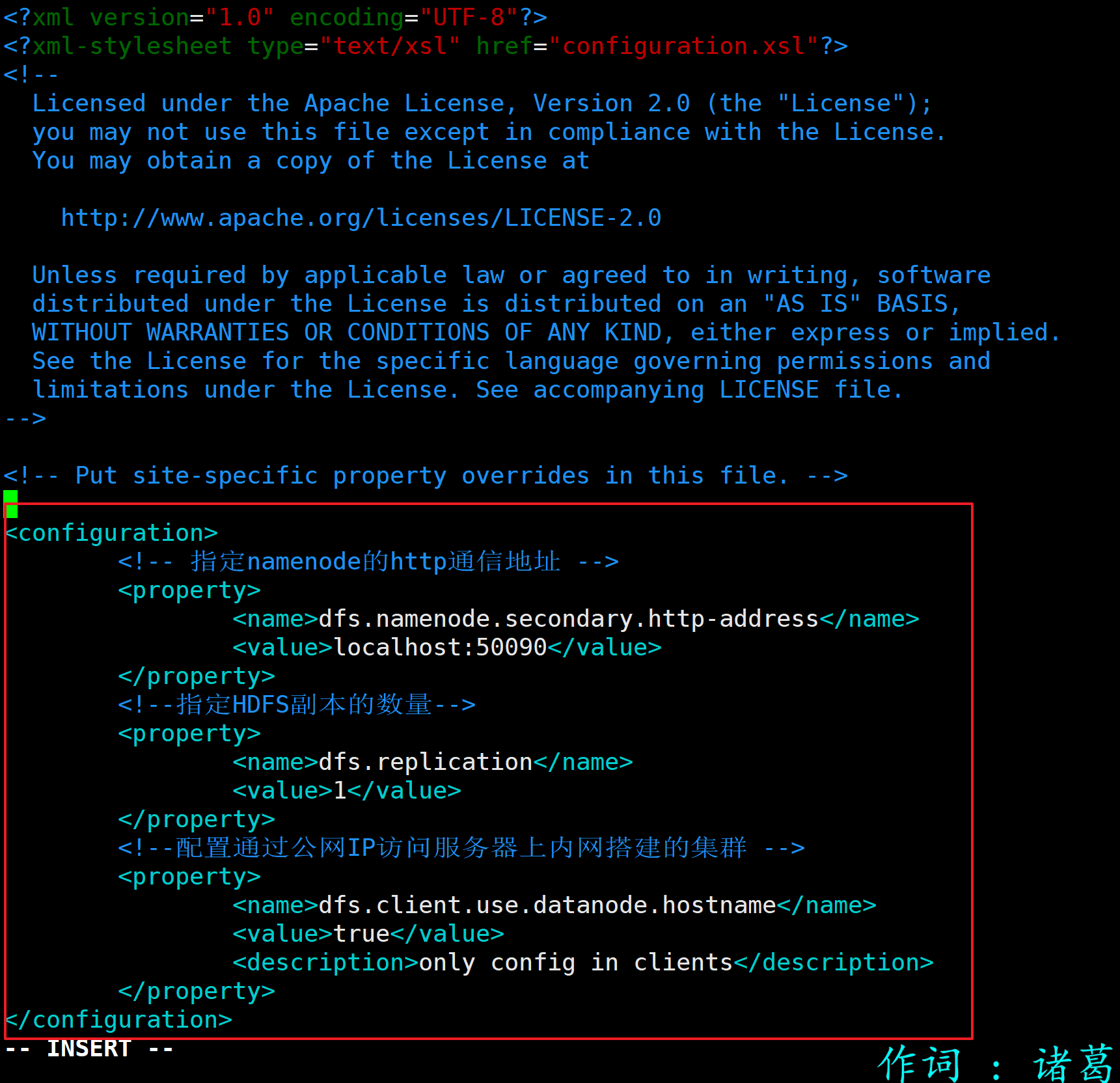

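e. (Optional) Configure hdfs-site.xml so that the cluster can be accessed from outside through the server's public IP. Skip this if you only access HDFS from the server itself.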

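```xml
<configuration>
  <!-- HTTP address of the SecondaryNameNode -->
  <property>
    <name>dfs.namenode.secondary.http-address</name>
    <value>localhost:50090</value>
  </property>
  <!-- Number of HDFS block replicas -->
  <property>
    <name>dfs.replication</name>
    <value>1</value>
  </property>
  <!-- Allow access via the public IP to a cluster built on the server's internal network -->
  <property>
    <name>dfs.client.use.datanode.hostname</name>
    <value>true</value>
    <description>only config in clients</description>
  </property>
</configuration>
```

`dfs.client.use.datanode.hostname` is read by HDFS clients: it makes the client connect to DataNodes by hostname instead of the internal IP reported by the NameNode, so it must also be set on the client side (for example in the client's hdfs-site.xml).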
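Because the client now connects to DataNodes by hostname, the client machine must resolve the server's hostname (here `iZuligp6e1dyzfZ`) to the server's public IP, typically through its hosts file. A minimal idempotent sketch; `add_host_entry` is a hypothetical helper, and `203.0.113.10` is a documentation placeholder, not a real server address.

```shell
#!/usr/bin/env bash
# Hypothetical helper: append "IP<TAB>HOSTNAME" to a hosts file unless the
# hostname is already mapped on a non-comment line.
add_host_entry() {
  local hosts_file=$1 ip=$2 host=$3
  if awk -v h="$host" '$1 !~ /^#/ { for (i = 2; i <= NF; i++) if ($i == h) found = 1 }
                       END { exit !found }' "$hosts_file"; then
    return 0   # already present: do nothing
  fi
  printf '%s\t%s\n' "$ip" "$host" >> "$hosts_file"
}
```

On a Linux client this would be run as root against `/etc/hosts`, substituting the server's real public IP for the placeholder.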

2. Start the cluster

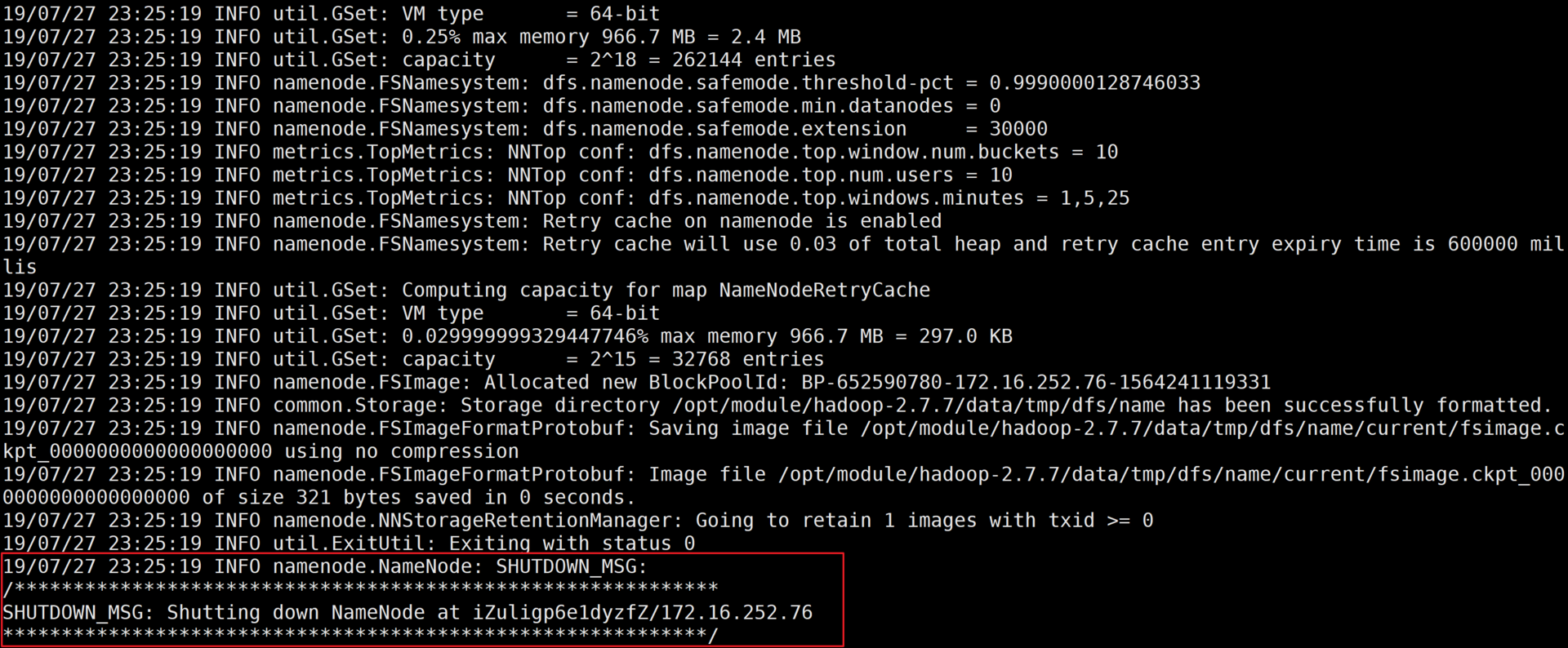

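a. Format the NameNode (only before the first start; do not format it again later). Reformatting generates a new cluster ID, and a DataNode that still holds data from the old ID will refuse to start.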

```shell
[root@izuligp6e1dyzfz hadoop-2.7.2]# bin/hdfs namenode -format
```

Output of the format command:

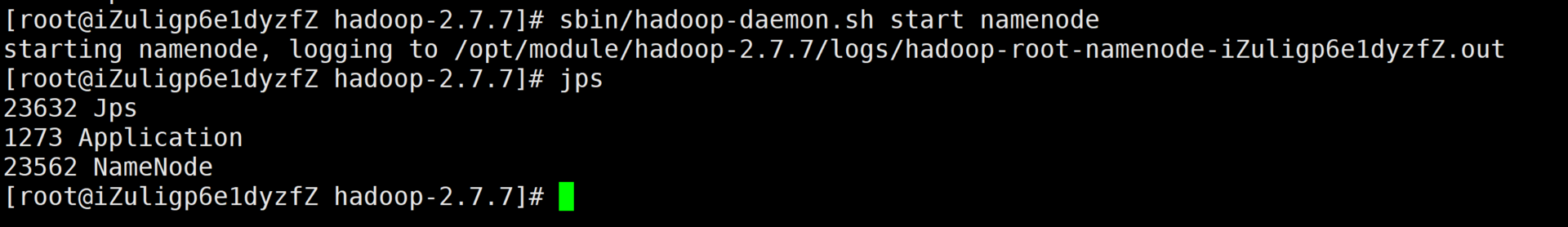
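To avoid reformatting by accident, the format step can be guarded by a check for existing NameNode metadata. With the core-site.xml above and no explicit `dfs.namenode.name.dir`, that metadata lives in `${hadoop.tmp.dir}/dfs/name`, and a formatted directory contains `current/VERSION`. A sketch; `format_namenode_once` is a hypothetical helper that takes the format command as its remaining arguments.

```shell
#!/usr/bin/env bash
# Hypothetical helper: run the given format command only if the NameNode
# metadata directory has not been initialised yet.
format_namenode_once() {
  local name_dir=$1
  shift   # remaining arguments: the format command itself
  if [ -f "$name_dir/current/VERSION" ]; then
    echo "NameNode already formatted ($name_dir/current/VERSION exists); skipping" >&2
    return 0
  fi
  "$@"
}
```

Usage: `format_namenode_once /opt/module/hadoop-2.7.2/data/tmp/dfs/name bin/hdfs namenode -format`. If a reformat is really needed, stop all daemons and delete the whole `hadoop.tmp.dir` (NameNode and DataNode data) first.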

b. Start the NameNode

```shell
[root@izuligp6e1dyzfz hadoop-2.7.2]# sbin/hadoop-daemon.sh start namenode
```

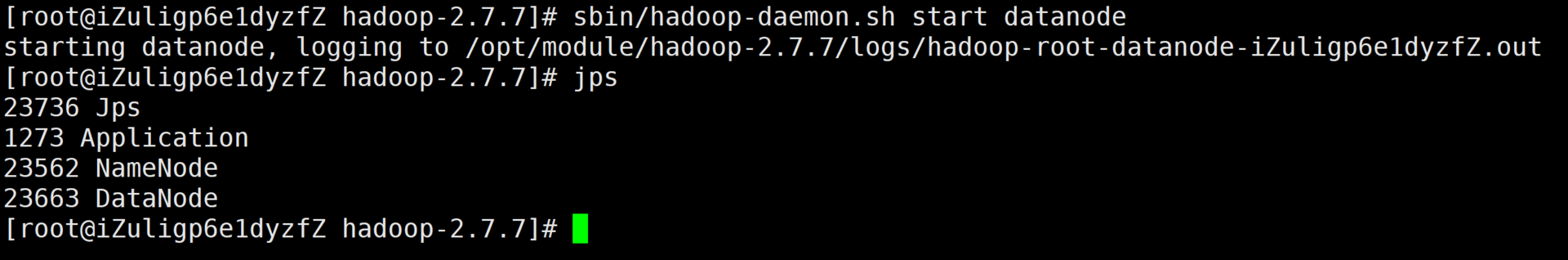

c. Start the DataNode

```shell
[root@izuligp6e1dyzfz hadoop-2.7.2]# sbin/hadoop-daemon.sh start datanode
```

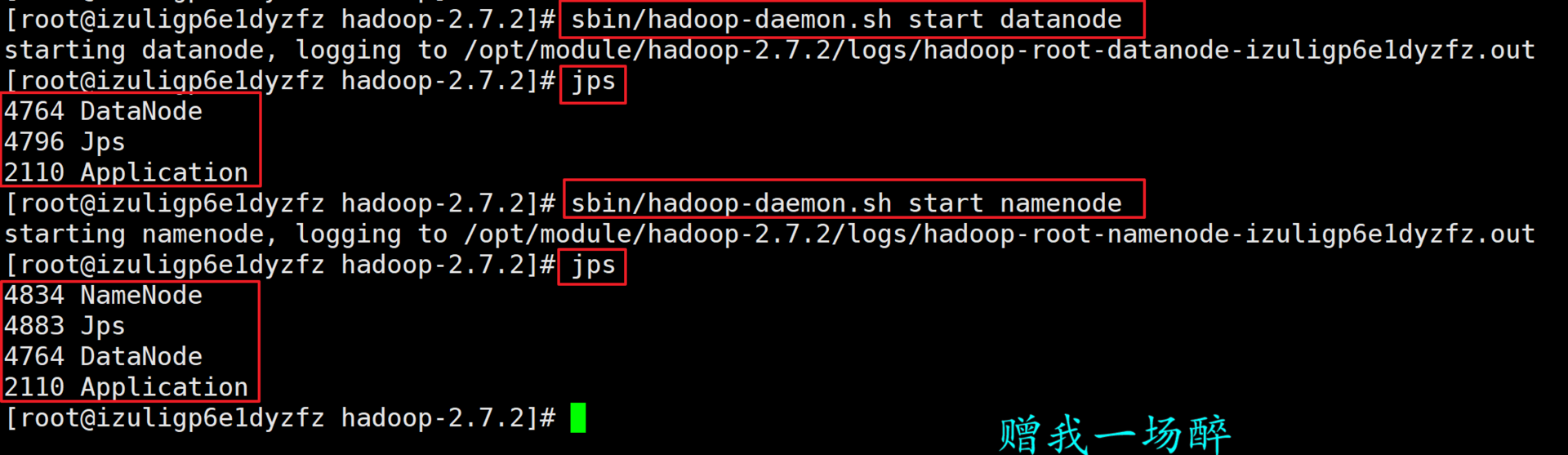

3. Check the cluster

a. Check whether the daemons started successfully

```shell
[root@izuligp6e1dyzfz hadoop-2.7.2]# sbin/hadoop-daemon.sh start datanode
starting datanode, logging to /opt/module/hadoop-2.7.2/logs/hadoop-root-datanode-izuligp6e1dyzfz.out
[root@izuligp6e1dyzfz hadoop-2.7.2]# jps
4764 DataNode
4796 Jps
2110 Application
[root@izuligp6e1dyzfz hadoop-2.7.2]# sbin/hadoop-daemon.sh start namenode
starting namenode, logging to /opt/module/hadoop-2.7.2/logs/hadoop-root-namenode-izuligp6e1dyzfz.out
[root@izuligp6e1dyzfz hadoop-2.7.2]# jps
4834 NameNode
4883 Jps
4764 DataNode
2110 Application
```

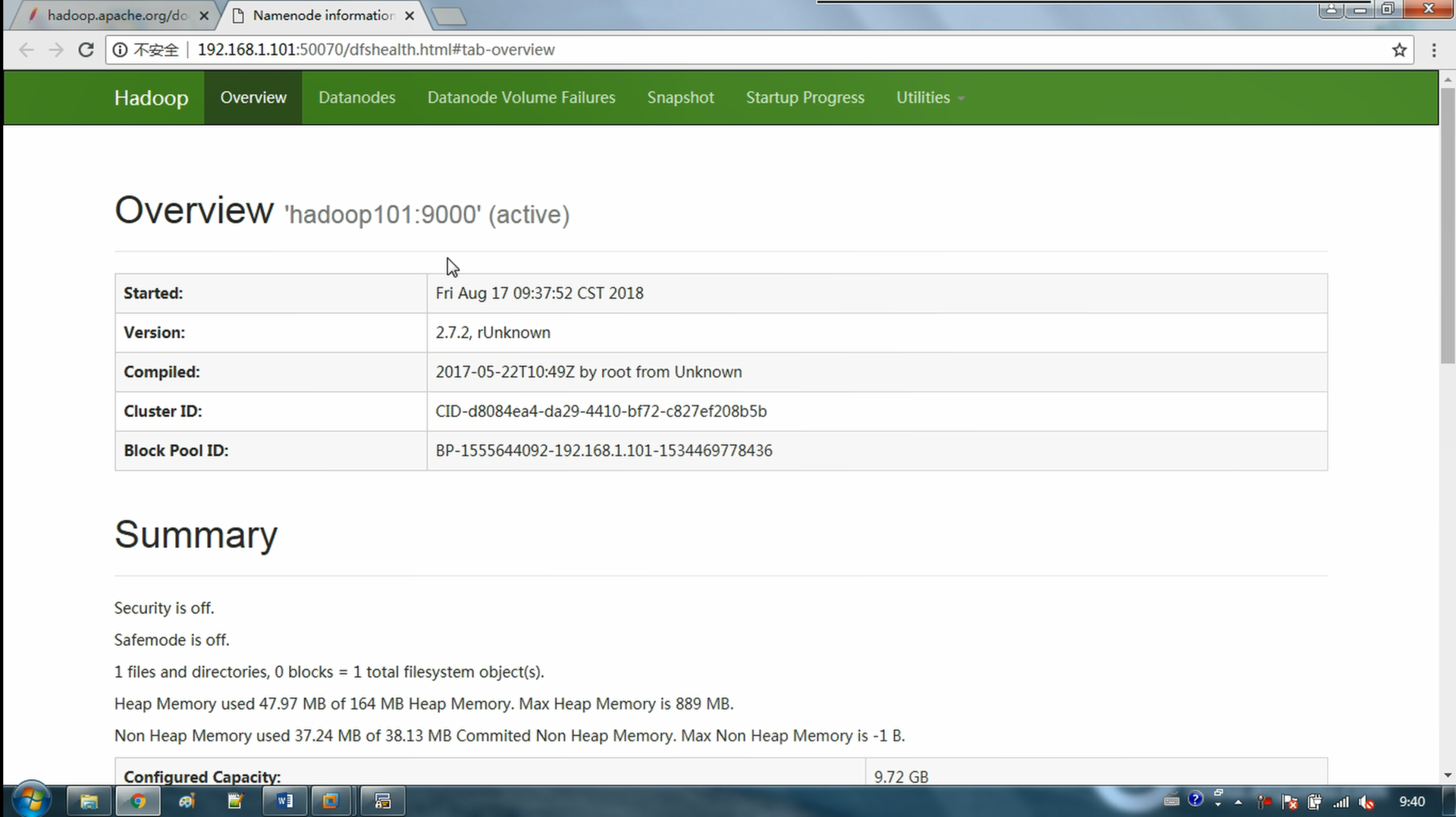

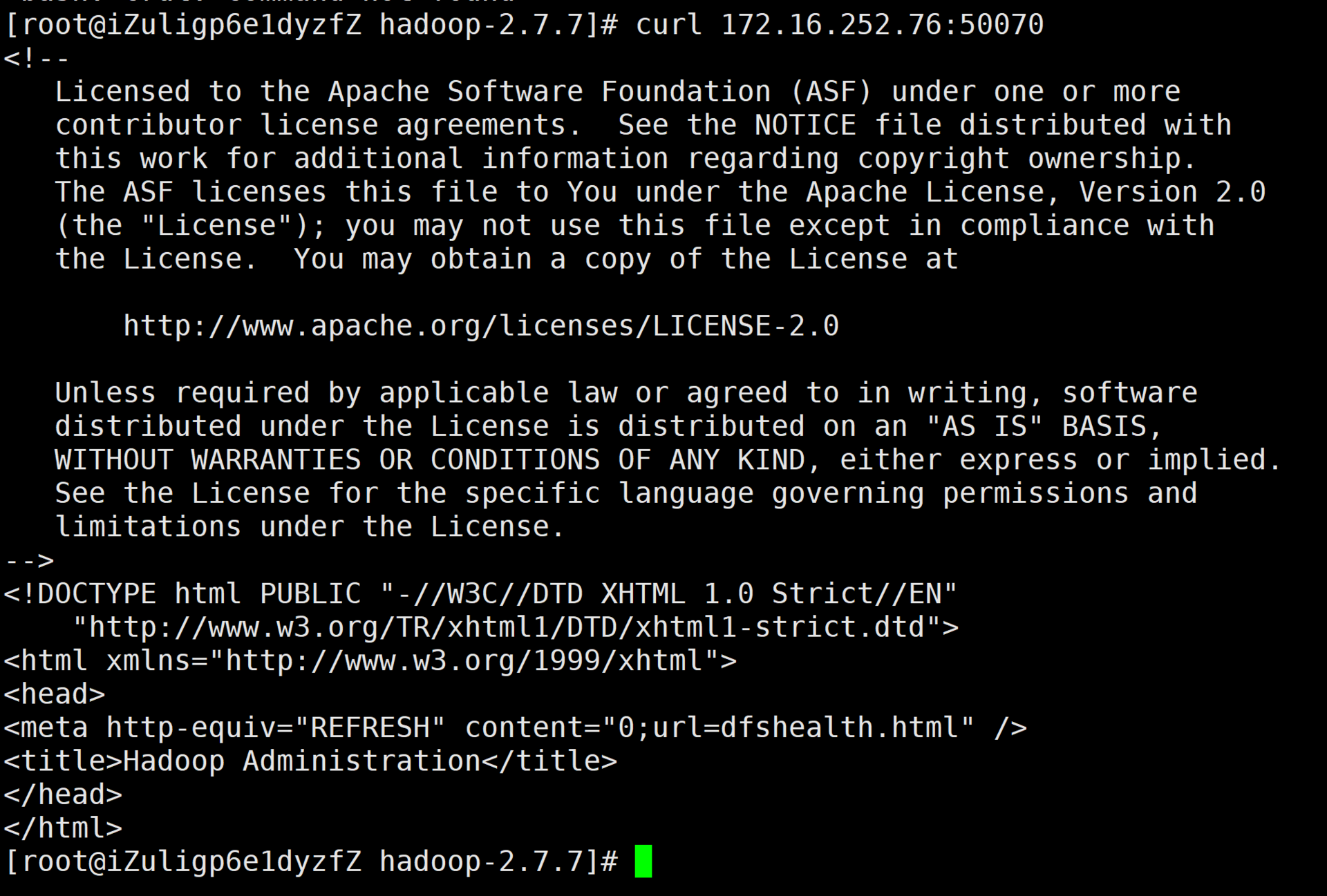
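Rather than reading the `jps` list by eye, the check can be scripted. A minimal sketch, assuming the JDK's `jps` is on the PATH; `require_daemons` is a hypothetical helper, not part of Hadoop.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read `jps` output on stdin and report which of the
# given daemon class names are missing. Returns non-zero if any are absent.
require_daemons() {
  local running d missing=0
  # jps prints "<pid> <MainClassName>"; keep only the class names
  running=$(awk '{ print $2 }')
  for d in "$@"; do
    if ! printf '%s\n' "$running" | grep -qx "$d"; then
      echo "missing: $d" >&2
      missing=1
    fi
  done
  return "$missing"
}
```

Usage: `jps | require_daemons NameNode DataNode`. Later, after YARN and the history server are started, the list can be extended with `ResourceManager NodeManager JobHistoryServer`.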

b. Open the cluster's web UI

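Enter the NameNode web UI URL in a browser (in Hadoop 2.x the default port is 50070, for example http://<server-ip>:50070). On Linux you can also request the page with curl, e.g. `curl http://localhost:50070`, and check the response.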

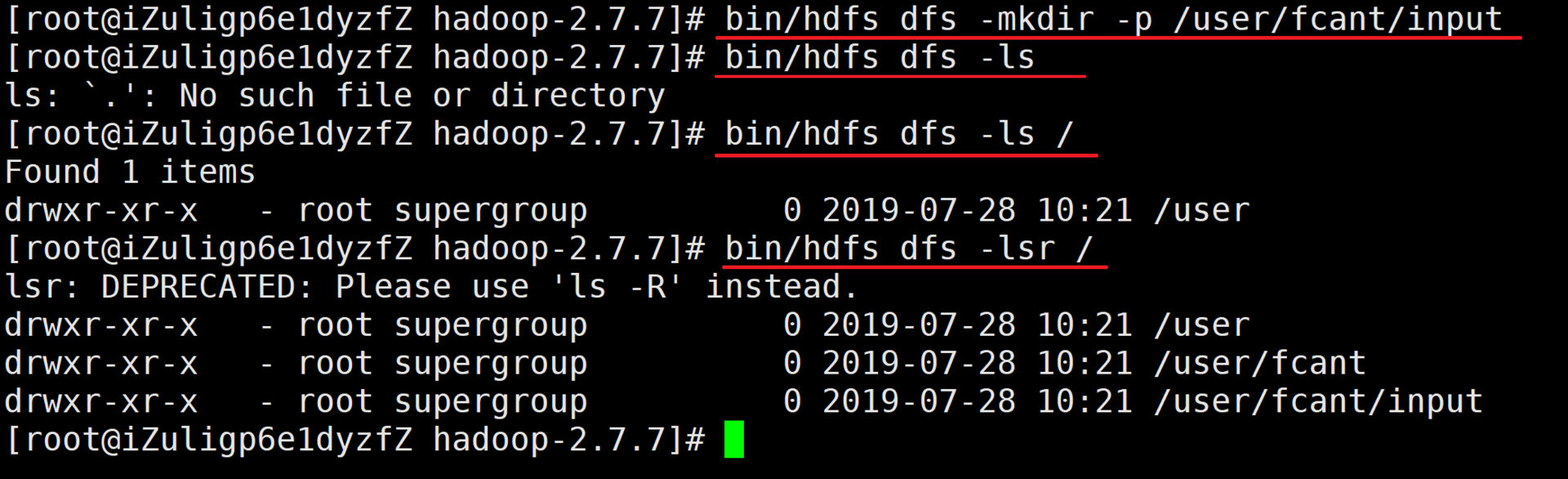

4. Work with the pseudo-distributed cluster

a. Create a directory in HDFS

Create the directory, then list the HDFS tree recursively to confirm it exists:

```shell
[root@fcant hadoop-2.7.2]# bin/hdfs dfs -mkdir -p /user/fcant/input
[root@fcant hadoop-2.7.2]# bin/hdfs dfs -ls -R /
```

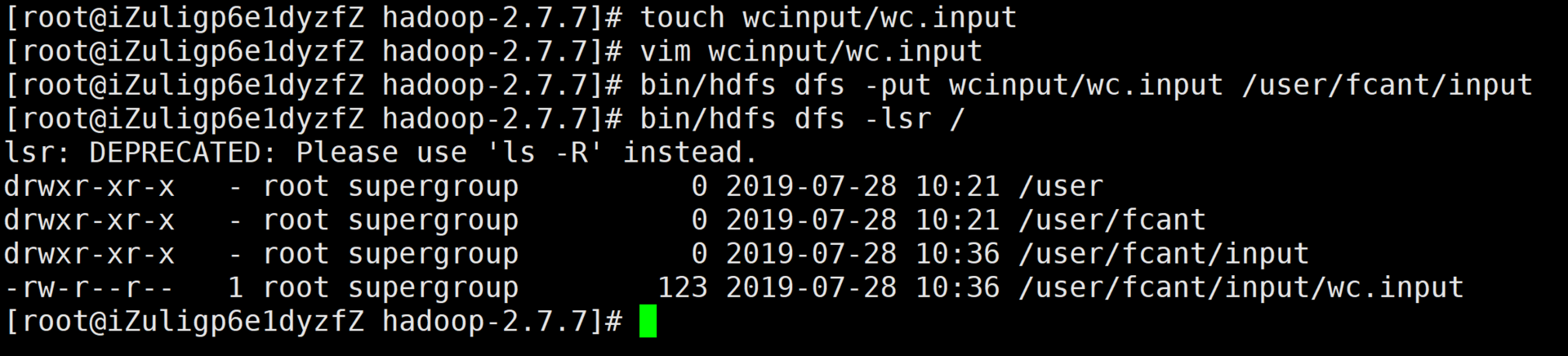

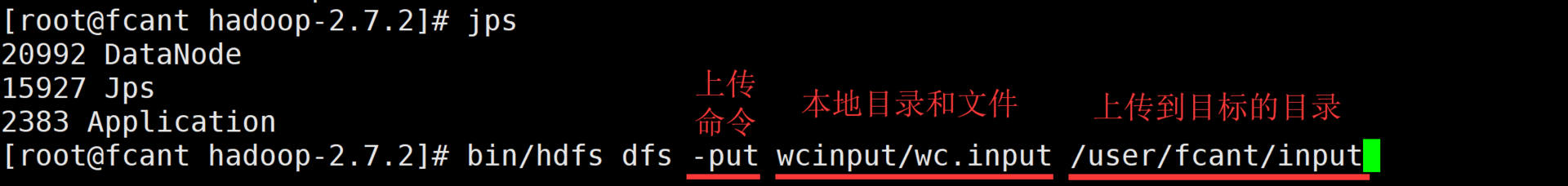

b. Upload a local file to HDFS

```shell
[root@fcant hadoop-2.7.2]# bin/hdfs dfs -put wcinput/wc.input /user/fcant/input
```

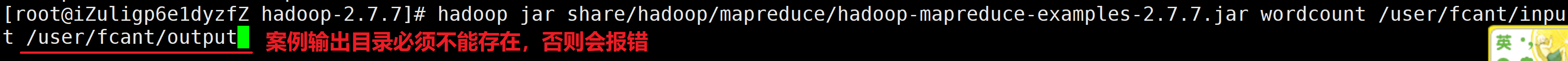

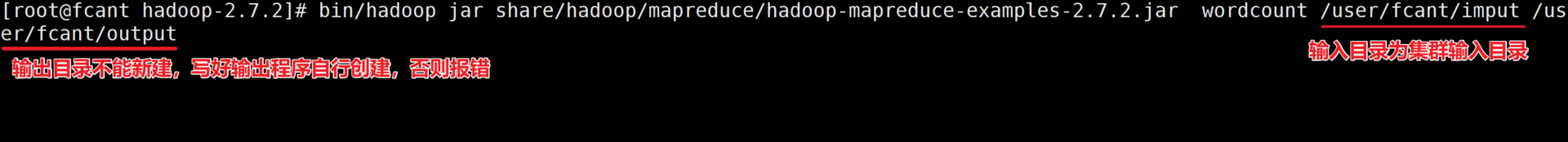
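The create-and-upload steps can be combined into one rerunnable function. This sketch differs slightly from the commands above: it uses `-put -f`, which overwrites an existing target instead of failing. `upload_to_hdfs` and the `HDFS_CMD` override are hypothetical; the override exists only so the sequence can be dry-run (e.g. with `echo`).

```shell
#!/usr/bin/env bash
# Hypothetical helper: create an HDFS directory (with parents), upload a
# local file into it, then list it. HDFS_CMD defaults to bin/hdfs.
upload_to_hdfs() {
  local local_file=$1 hdfs_dir=$2
  local hdfs=${HDFS_CMD:-bin/hdfs}
  $hdfs dfs -mkdir -p "$hdfs_dir" || return 1
  # -f overwrites the target file if it already exists
  $hdfs dfs -put -f "$local_file" "$hdfs_dir" || return 1
  $hdfs dfs -ls "$hdfs_dir"
}
```

Usage: `upload_to_hdfs wcinput/wc.input /user/fcant/input`.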

c. Run the example job on the cluster

```shell
[root@fcant hadoop-2.7.2]# bin/hadoop jar share/hadoop/mapreduce/hadoop-mapreduce-examples-2.7.7.jar wordcount /user/fcant/input /user/fcant/output
```

Make sure the version in the examples JAR name matches your Hadoop installation.

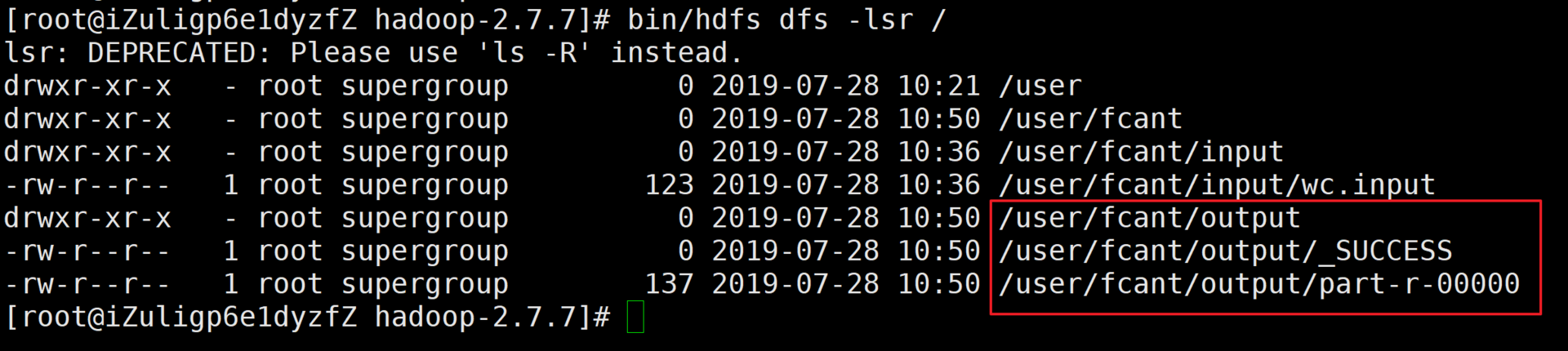

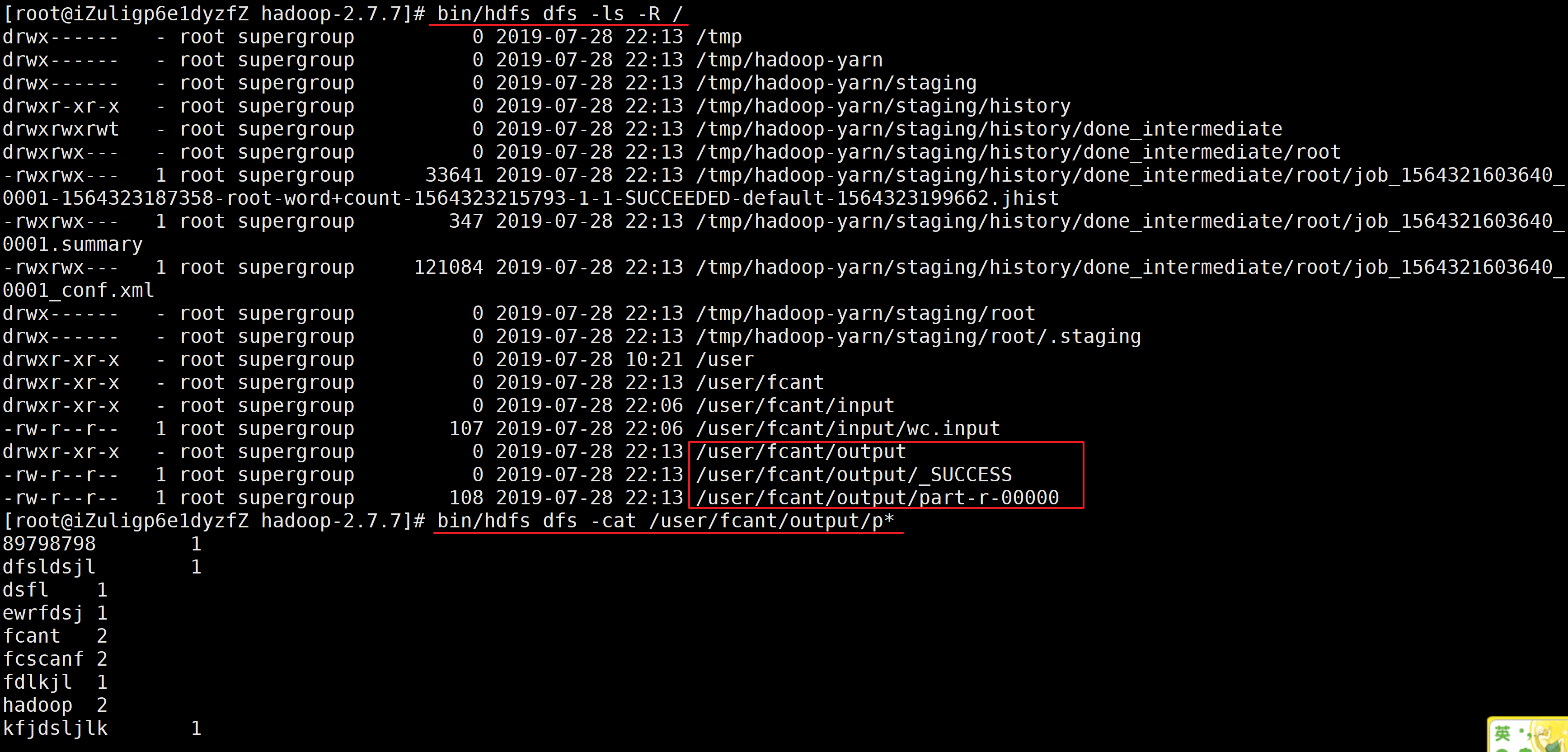

d. Output of a successful run

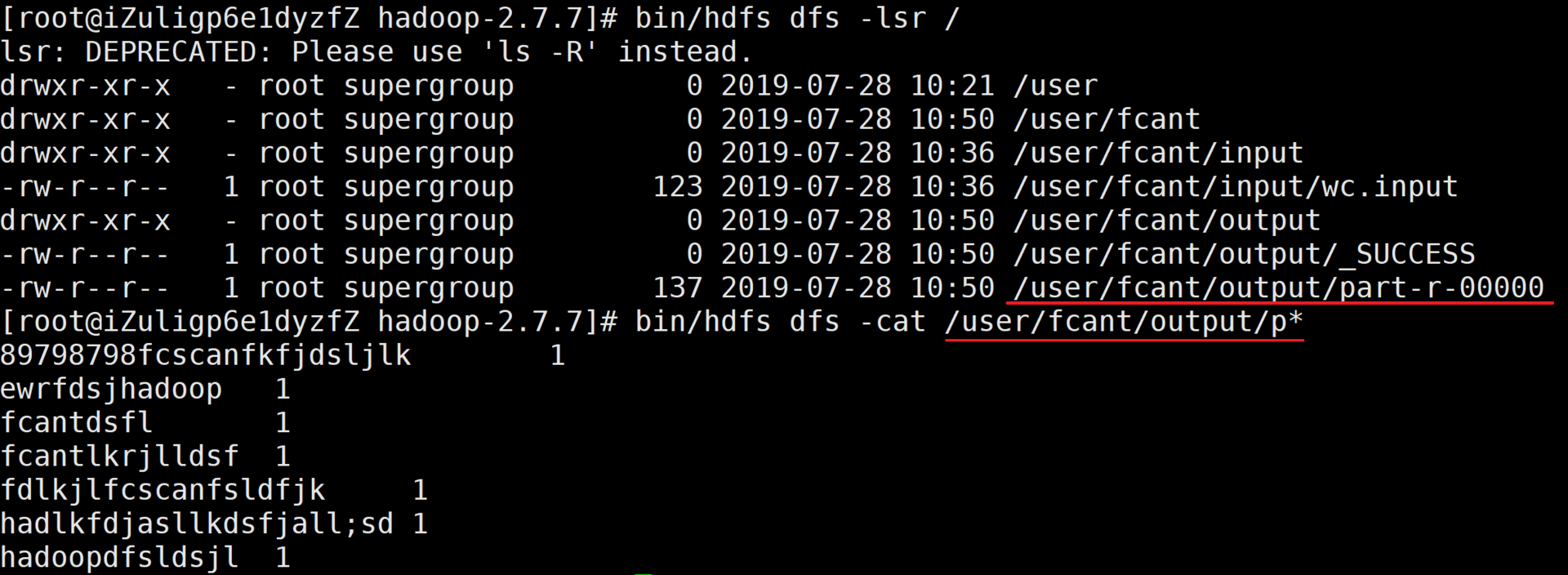

e. View and download the results

```shell
[root@iZuligp6e1dyzfZ hadoop-2.7.7]# bin/hdfs dfs -lsr /
lsr: DEPRECATED: Please use 'ls -R' instead.
drwxr-xr-x - root supergroup 0 2019-07-28 10:21 /user
drwxr-xr-x - root supergroup 0 2019-07-28 10:50 /user/fcant
drwxr-xr-x - root supergroup 0 2019-07-28 10:36 /user/fcant/input
-rw-r--r-- 1 root supergroup 123 2019-07-28 10:36 /user/fcant/input/wc.input
drwxr-xr-x - root supergroup 0 2019-07-28 10:50 /user/fcant/output
-rw-r--r-- 1 root supergroup 0 2019-07-28 10:50 /user/fcant/output/_SUCCESS
-rw-r--r-- 1 root supergroup 137 2019-07-28 10:50 /user/fcant/output/part-r-00000
[root@iZuligp6e1dyzfZ hadoop-2.7.7]# bin/hdfs dfs -cat /user/fcant/output/p*
89798798fcscanfkfjdsljlk 1
ewrfdsjhadoop 1
fcantdsfl 1
fcantlkrjlldsf 1
fdlkjlfcscanfsldfjk 1
hadlkfdjasllkdsfjall;sd 1
hadoopdfsldsjl 1
[root@iZuligp6e1dyzfZ hadoop-2.7.7]#
```

To download the results to the local file system, use `bin/hdfs dfs -get /user/fcant/output <local-dir>`.
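On a larger input, the WordCount output is easier to read when sorted by frequency rather than by word. A small post-processing sketch; `top_words` is a hypothetical helper that reads `word<TAB>count` lines on stdin.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the N most frequent words from WordCount output
# ("word<TAB>count" lines on stdin), highest count first, ties by word.
top_words() {
  local n=$1
  LC_ALL=C sort -t "$(printf '\t')" -k2,2nr -k1,1 | head -n "$n"
}
```

Usage: `bin/hdfs dfs -cat /user/fcant/output/part-r-* | top_words 5`.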

II. Start YARN and run MapReduce

1. Configure YARN

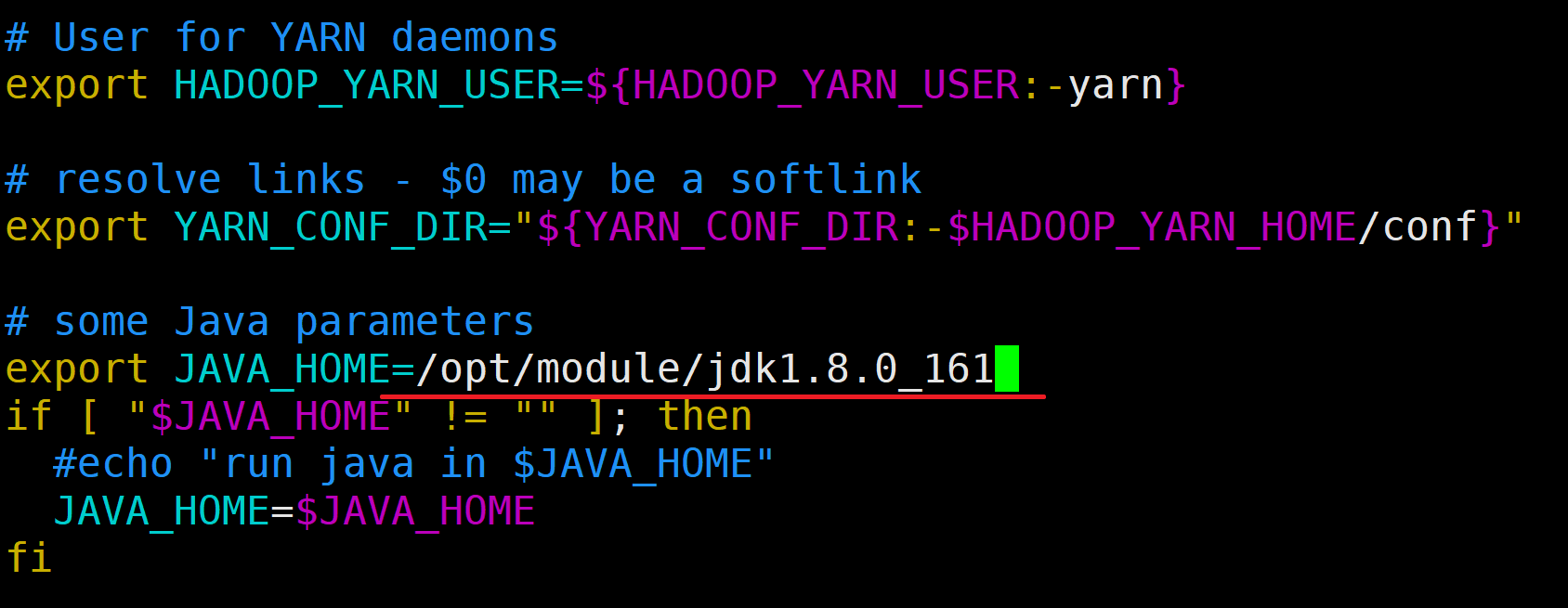

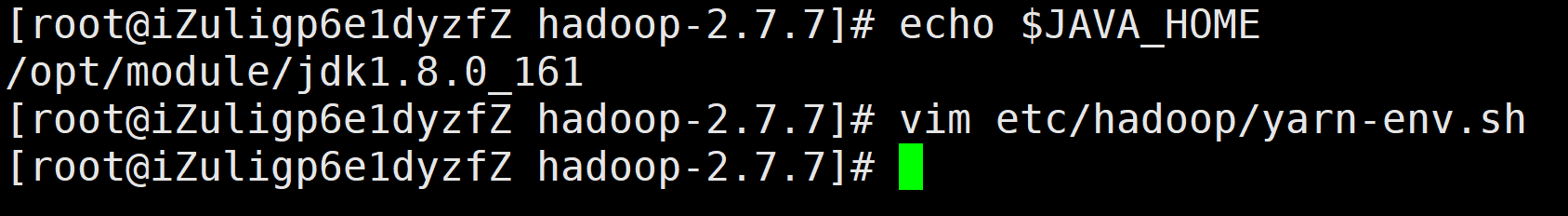

a. Configure yarn-env.sh

```shell
[root@iZuligp6e1dyzfZ hadoop-2.7.7]# echo $JAVA_HOME
/opt/module/jdk1.8.0_161
[root@iZuligp6e1dyzfZ hadoop-2.7.7]# vim etc/hadoop/yarn-env.sh
```

Set the following line:

```shell
export JAVA_HOME=/opt/module/jdk1.8.0_161
```

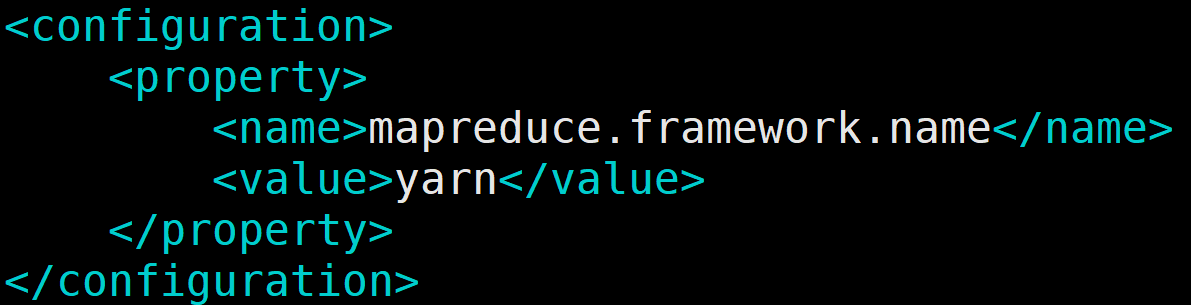

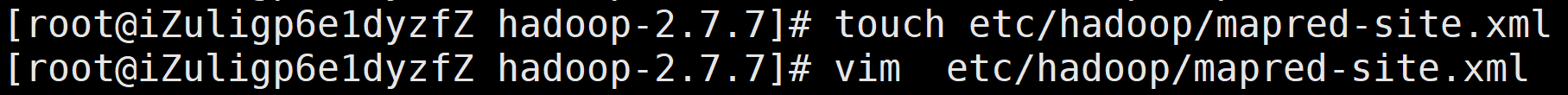

b. Configure mapred-site.xml

```shell
[root@iZuligp6e1dyzfZ hadoop-2.7.7]# touch etc/hadoop/mapred-site.xml
[root@iZuligp6e1dyzfZ hadoop-2.7.7]# vim etc/hadoop/mapred-site.xml
```

Contents:

```xml
<configuration>
  <!-- Run MapReduce jobs on YARN -->
  <property>
    <name>mapreduce.framework.name</name>
    <value>yarn</value>
  </property>
</configuration>
```

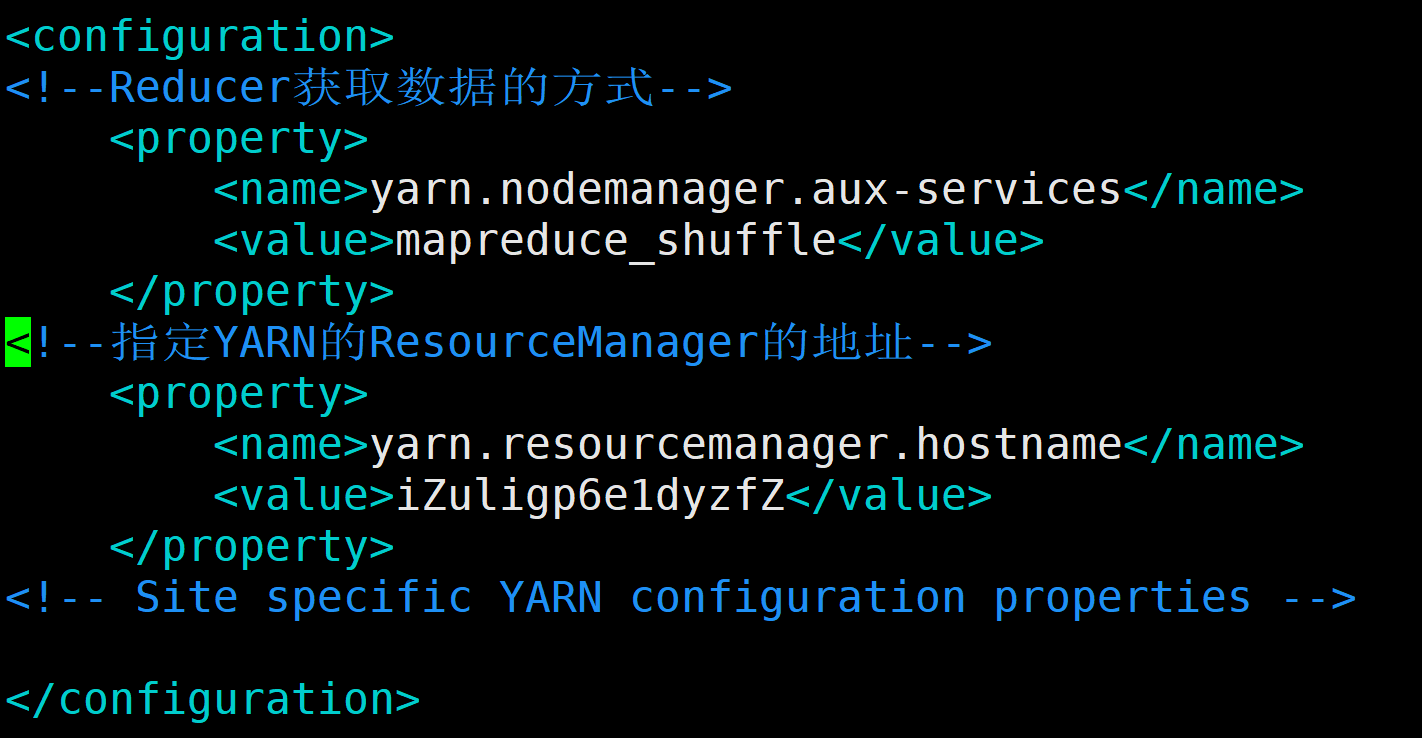
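Hadoop 2.x distributions ship `etc/hadoop/mapred-site.xml.template` rather than `mapred-site.xml`, so an alternative to `touch` is to start from the template, which already contains the XML header and an empty `<configuration>` element. A sketch; `init_mapred_site` is a hypothetical helper that leaves a non-empty existing file untouched.

```shell
#!/usr/bin/env bash
# Hypothetical helper: create mapred-site.xml from the bundled template
# unless a non-empty mapred-site.xml already exists.
init_mapred_site() {
  local conf_dir=$1
  if [ -s "$conf_dir/mapred-site.xml" ]; then
    return 0   # keep an existing, non-empty configuration
  fi
  cp "$conf_dir/mapred-site.xml.template" "$conf_dir/mapred-site.xml"
}
```

Usage: `init_mapred_site etc/hadoop`, then add the `mapreduce.framework.name` property inside `<configuration>`.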

c. Configure yarn-site.xml

```shell
[root@iZuligp6e1dyzfZ hadoop-2.7.7]# vim etc/hadoop/yarn-site.xml
```

Contents:

```xml
<configuration>
  <!-- How reducers fetch map output (shuffle service) -->
  <property>
    <name>yarn.nodemanager.aux-services</name>
    <value>mapreduce_shuffle</value>
  </property>
  <!-- Hostname of the YARN ResourceManager -->
  <property>
    <name>yarn.resourcemanager.hostname</name>
    <value>iZuligp6e1dyzfZ</value>
  </property>
  <!-- Site specific YARN configuration properties -->
</configuration>
```

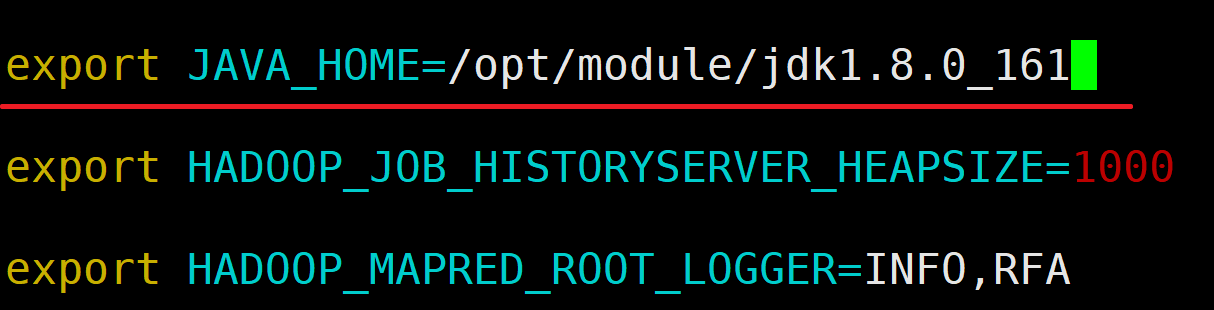
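`yarn.resourcemanager.hostname` is a hostname, so it must resolve on every node that talks to the ResourceManager; otherwise the NodeManager cannot register. A quick check, assuming a glibc-style `getent`; `check_resolvable` is a hypothetical helper.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print "host -> ip" for each hostname that resolves
# (via /etc/hosts or DNS); return non-zero if any hostname does not.
check_resolvable() {
  local host ip rc=0
  for host in "$@"; do
    ip=$(getent hosts "$host" | awk '{ print $1; exit }')
    if [ -n "$ip" ]; then
      echo "$host -> $ip"
    else
      echo "$host does not resolve" >&2
      rc=1
    fi
  done
  return "$rc"
}
```

Usage: `check_resolvable iZuligp6e1dyzfZ`.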

d. Configure mapred-env.sh

```shell
[root@iZuligp6e1dyzfZ hadoop-2.7.7]# vim etc/hadoop/mapred-env.sh
```

Set the following line:

```shell
export JAVA_HOME=/opt/module/jdk1.8.0_161
```

2. Start the cluster

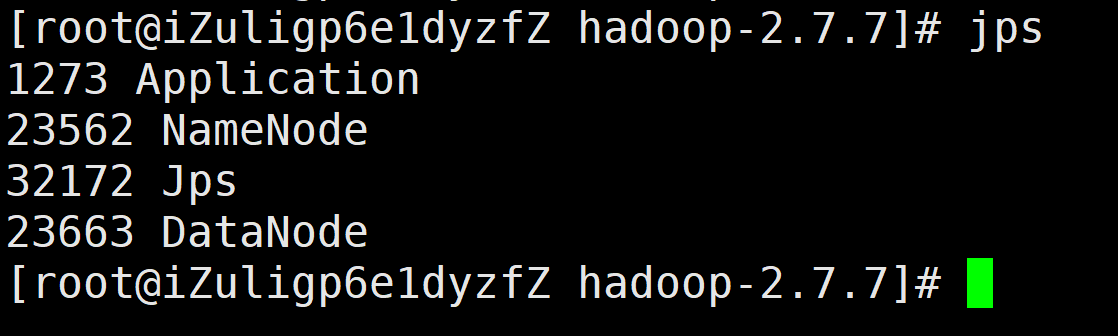

a. Make sure the NameNode and DataNode are running

```shell
[root@iZuligp6e1dyzfZ hadoop-2.7.7]# jps
1273 Application
23562 NameNode
32172 Jps
23663 DataNode
[root@iZuligp6e1dyzfZ hadoop-2.7.7]#
```

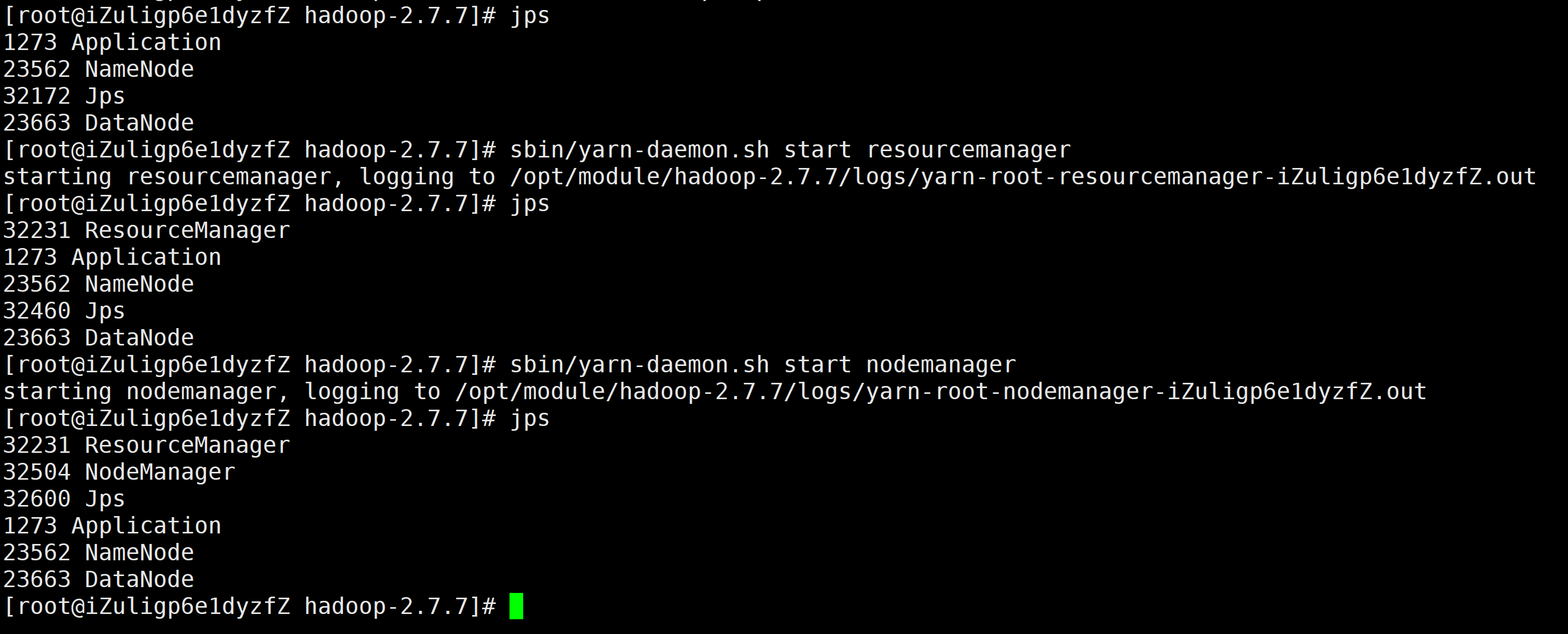

b. Start the YARN ResourceManager and NodeManager


```shell
[root@iZuligp6e1dyzfZ hadoop-2.7.7]# sbin/yarn-daemon.sh start resourcemanager
starting resourcemanager, logging to /opt/module/hadoop-2.7.7/logs/yarn-root-resourcemanager-iZuligp6e1dyzfZ.out
[root@iZuligp6e1dyzfZ hadoop-2.7.7]# jps
32231 ResourceManager
1273 Application
23562 NameNode
32460 Jps
23663 DataNode
[root@iZuligp6e1dyzfZ hadoop-2.7.7]# sbin/yarn-daemon.sh start nodemanager
starting nodemanager, logging to /opt/module/hadoop-2.7.7/logs/yarn-root-nodemanager-iZuligp6e1dyzfZ.out
[root@iZuligp6e1dyzfZ hadoop-2.7.7]# jps
32231 ResourceManager
32504 NodeManager
32600 Jps
1273 Application
23562 NameNode
23663 DataNode
[root@iZuligp6e1dyzfZ hadoop-2.7.7]#
```

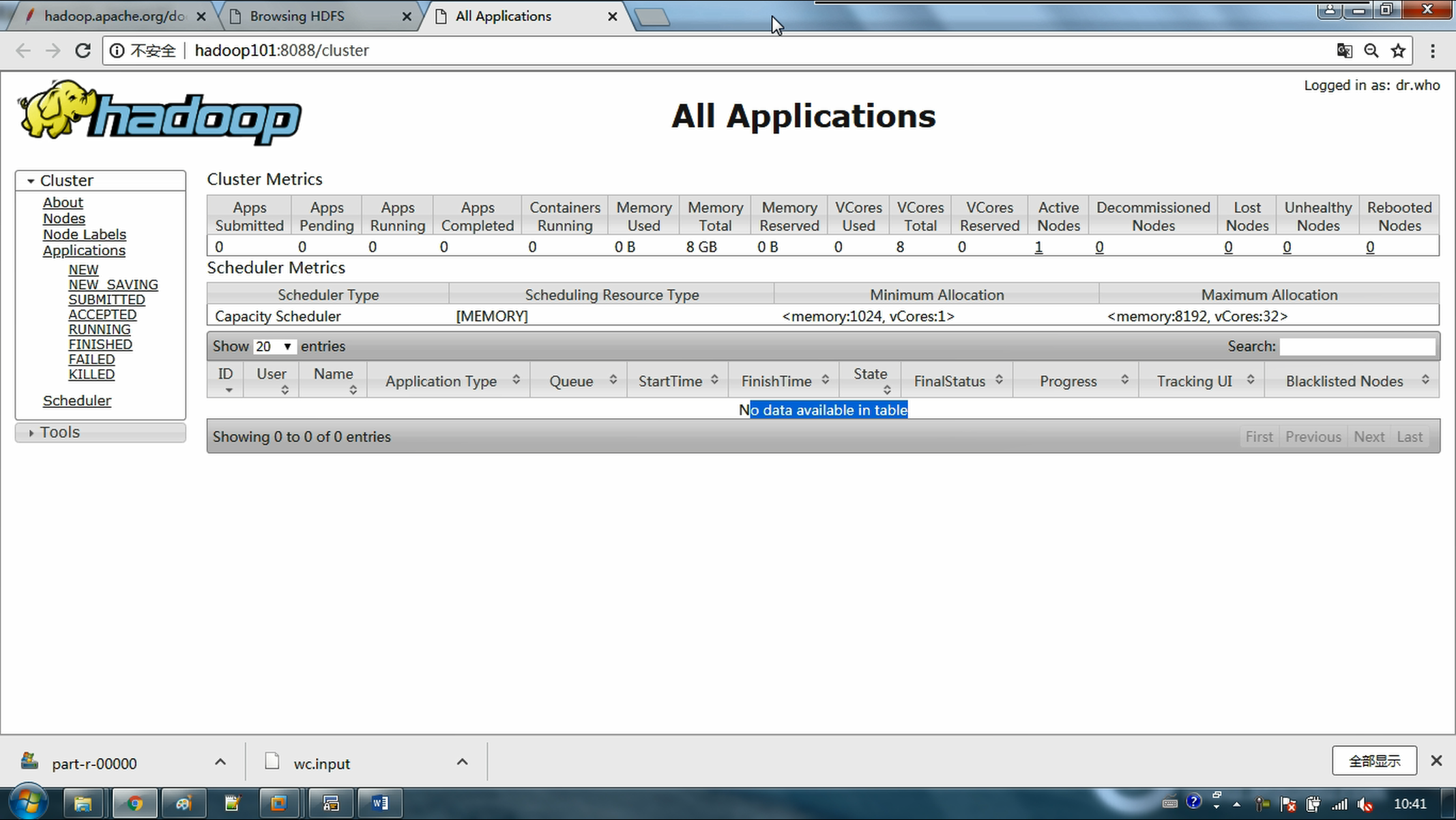

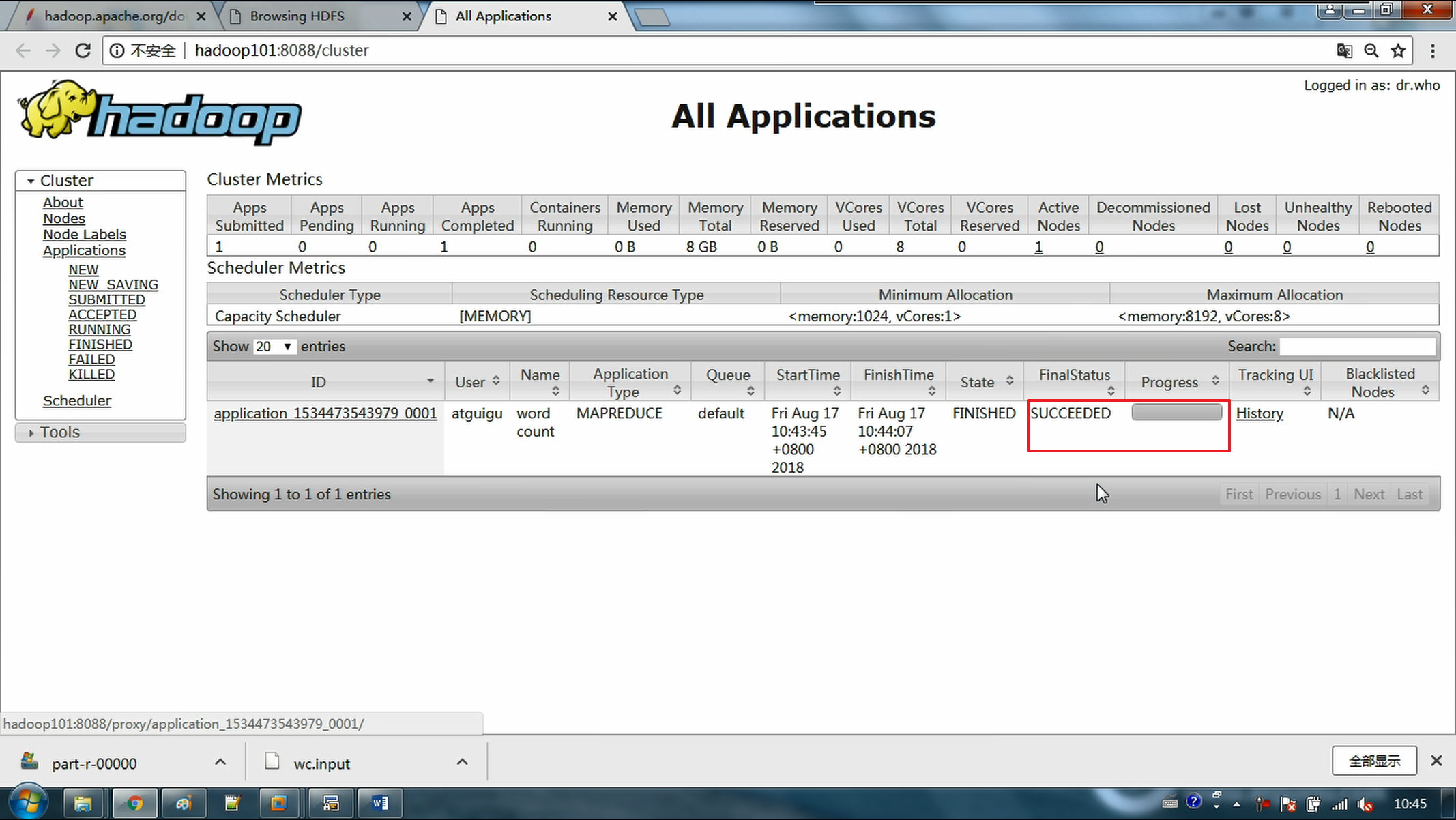

c. Once YARN is running, you can view applications in the ResourceManager web UI on port 8088 (http://<server-ip>:8088).

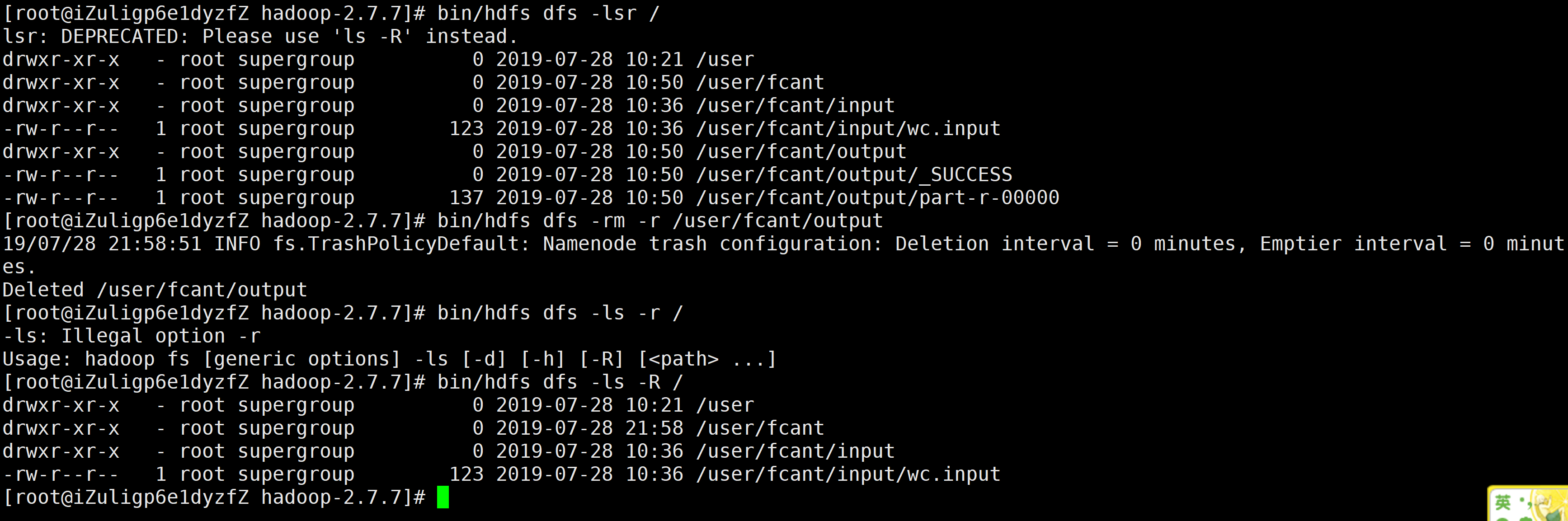

d. Delete the output directory (a MapReduce job fails if its output directory already exists)

```shell
[root@iZuligp6e1dyzfZ hadoop-2.7.7]# bin/hdfs dfs -lsr /
lsr: DEPRECATED: Please use 'ls -R' instead.
drwxr-xr-x - root supergroup 0 2019-07-28 10:21 /user
drwxr-xr-x - root supergroup 0 2019-07-28 10:50 /user/fcant
drwxr-xr-x - root supergroup 0 2019-07-28 10:36 /user/fcant/input
-rw-r--r-- 1 root supergroup 123 2019-07-28 10:36 /user/fcant/input/wc.input
drwxr-xr-x - root supergroup 0 2019-07-28 10:50 /user/fcant/output
-rw-r--r-- 1 root supergroup 0 2019-07-28 10:50 /user/fcant/output/_SUCCESS
-rw-r--r-- 1 root supergroup 137 2019-07-28 10:50 /user/fcant/output/part-r-00000
[root@iZuligp6e1dyzfZ hadoop-2.7.7]# bin/hdfs dfs -rm -r /user/fcant/output
19/07/28 21:58:51 INFO fs.TrashPolicyDefault: Namenode trash configuration: Deletion interval = 0 minutes, Emptier interval = 0 minutes.
Deleted /user/fcant/output
[root@iZuligp6e1dyzfZ hadoop-2.7.7]# bin/hdfs dfs -ls -r /
-ls: Illegal option -r
Usage: hadoop fs [generic options] -ls [-d] [-h] [-R] [<path> ...]
[root@iZuligp6e1dyzfZ hadoop-2.7.7]# bin/hdfs dfs -ls -R /
drwxr-xr-x - root supergroup 0 2019-07-28 10:21 /user
drwxr-xr-x - root supergroup 0 2019-07-28 21:58 /user/fcant
drwxr-xr-x - root supergroup 0 2019-07-28 10:36 /user/fcant/input
-rw-r--r-- 1 root supergroup 123 2019-07-28 10:36 /user/fcant/input/wc.input
```

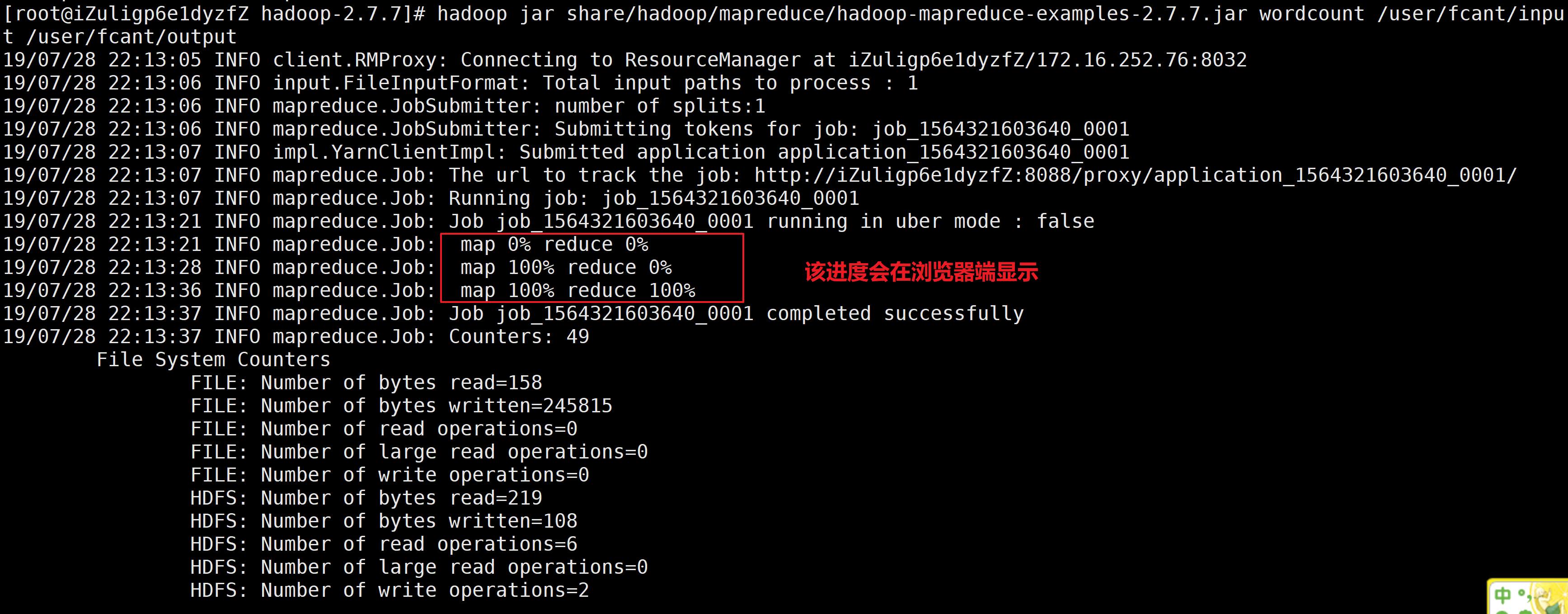

e. Run the example job

```shell
[root@iZuligp6e1dyzfZ hadoop-2.7.7]# hadoop jar share/hadoop/mapreduce/hadoop-mapreduce-examples-2.7.7.jar wordcount /user/fcant/input /user/fcant/output
```
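Since every rerun needs the output directory removed first, the two steps can be wrapped together. A sketch; `rerun_wordcount` and the `HDFS_CMD`, `HADOOP_CMD` and `EXAMPLES_JAR` overrides are hypothetical, and `-rm -r -f` is used so that a missing output directory is not treated as an error.

```shell
#!/usr/bin/env bash
# Hypothetical helper: delete the job's HDFS output directory (if present)
# and rerun the WordCount example.
rerun_wordcount() {
  local input=$1 output=$2
  local hdfs=${HDFS_CMD:-bin/hdfs} hadoop=${HADOOP_CMD:-bin/hadoop}
  local jar=${EXAMPLES_JAR:-share/hadoop/mapreduce/hadoop-mapreduce-examples-2.7.7.jar}
  # -f: do not fail when the output directory does not exist yet
  $hdfs dfs -rm -r -f "$output" || return 1
  $hadoop jar "$jar" wordcount "$input" "$output"
}
```

Usage: `rerun_wordcount /user/fcant/input /user/fcant/output`.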

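f. Differences between running on YARN and running locally, and following progress in the browser

When the job runs on YARN, it appears as an application in the ResourceManager web UI on port 8088, where its progress can be followed; in local mode it runs inside a single JVM (LocalJobRunner) and does not appear there.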

g. Results

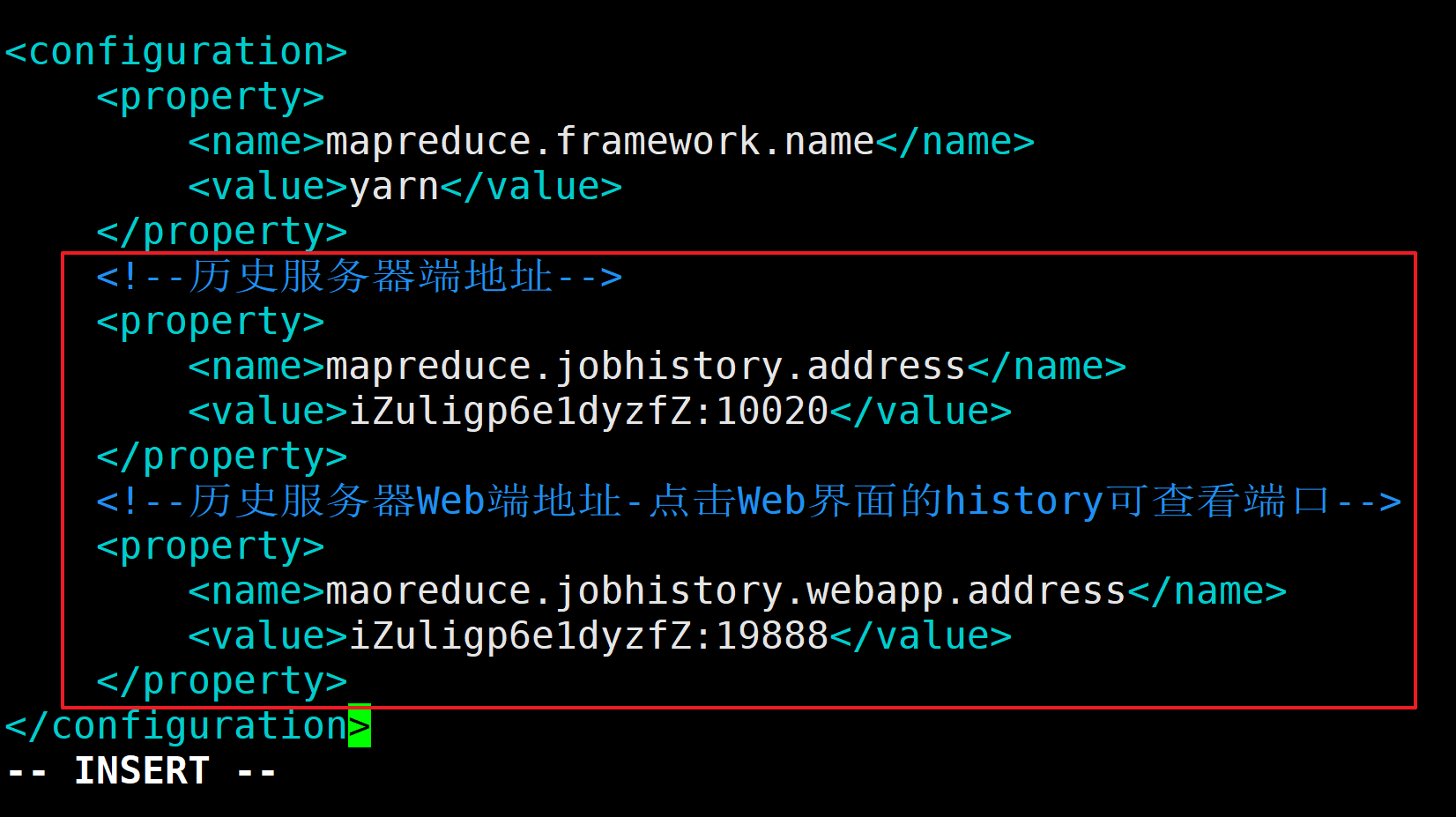

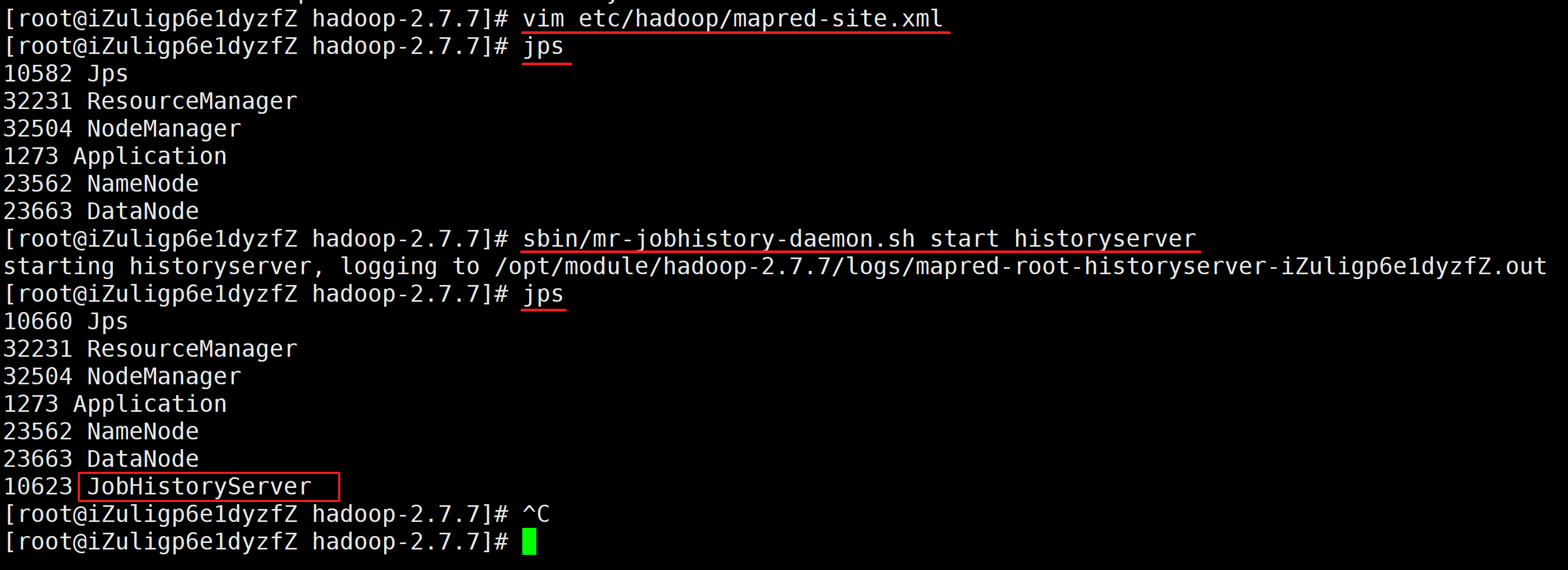

III. Configure the JobHistory server

To view the history of jobs that have already run, configure the JobHistory server.

1. Configure mapred-site.xml

```shell
[root@iZuligp6e1dyzfZ hadoop-2.7.7]# vim etc/hadoop/mapred-site.xml
```

Add the following properties inside `<configuration>`:

```xml
<!-- JobHistory server address -->
<property>
  <name>mapreduce.jobhistory.address</name>
  <value>iZuligp6e1dyzfZ:10020</value>
</property>
<!-- JobHistory server web UI address; the History link in the YARN web UI points to this port -->
<property>
  <name>mapreduce.jobhistory.webapp.address</name>
  <value>iZuligp6e1dyzfZ:19888</value>
</property>
```
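Hadoop silently ignores property names it does not recognise, so a misspelled key (such as `maoreduce.jobhistory.webapp.address`) just leaves the default in effect (`0.0.0.0:19888` for the web UI address). A quick way to spot such typos is to list every `<name>` that is not in an expected set. A sketch using grep and sed, assuming one `<name>` element per occurrence; `unexpected_properties` is a hypothetical helper, not an XML parser.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print property names found in a Hadoop XML config
# file that are not in the expected list given as remaining arguments.
unexpected_properties() {
  local file=$1
  shift
  # Extract every property name, one per line
  grep -o '<name>[^<]*</name>' "$file" \
    | sed -e 's|<name>||' -e 's|</name>||' \
    | while IFS= read -r name; do
        case " $* " in
          *" $name "*) ;;                # expected name: ignore
          *) printf '%s\n' "$name" ;;    # unknown name: report it
        esac
      done
}
```

Usage: `unexpected_properties etc/hadoop/mapred-site.xml mapreduce.framework.name mapreduce.jobhistory.address mapreduce.jobhistory.webapp.address` prints nothing when all names are spelled as expected.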

2. Start the JobHistory server

```shell
[root@iZuligp6e1dyzfZ hadoop-2.7.7]# vim etc/hadoop/mapred-site.xml
[root@iZuligp6e1dyzfZ hadoop-2.7.7]# jps
10582 Jps
32231 ResourceManager
32504 NodeManager
1273 Application
23562 NameNode
23663 DataNode
[root@iZuligp6e1dyzfZ hadoop-2.7.7]# sbin/mr-jobhistory-daemon.sh start historyserver
starting historyserver, logging to /opt/module/hadoop-2.7.7/logs/mapred-root-historyserver-iZuligp6e1dyzfZ.out
[root@iZuligp6e1dyzfZ hadoop-2.7.7]# jps
10660 Jps
32231 ResourceManager
32504 NodeManager
1273 Application
23562 NameNode
23663 DataNode
10623 JobHistoryServer
[root@iZuligp6e1dyzfZ hadoop-2.7.7]#
```

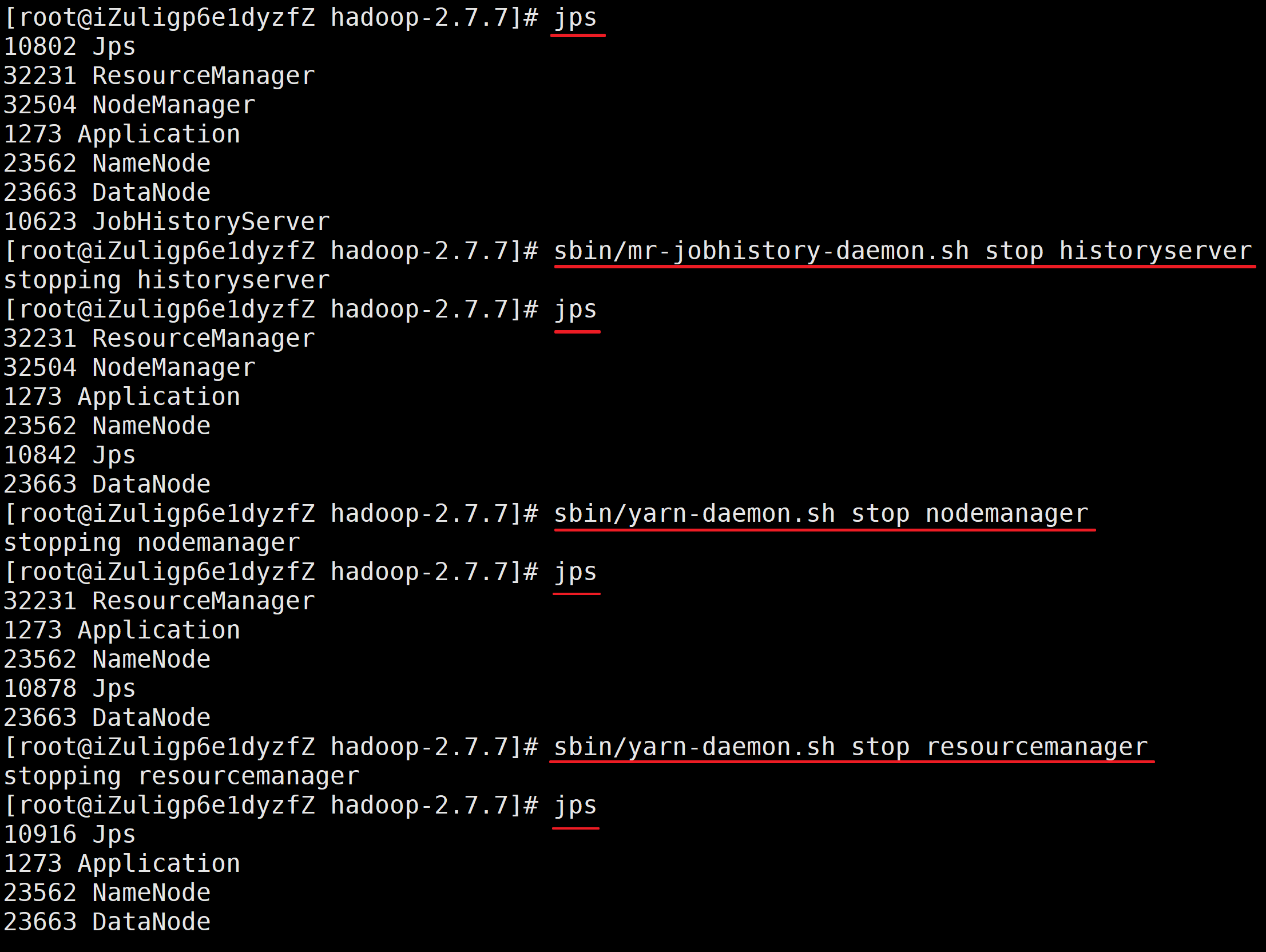

IV. Configure log aggregation

What log aggregation is: after an application finishes, its run logs are uploaded to HDFS.

Benefit: you can easily see how a program ran, which helps during development and testing.

Note: enabling log aggregation requires restarting the NodeManager, ResourceManager and JobHistoryServer.

Steps to enable log aggregation:

1. Stop the JobHistoryServer, NodeManager and ResourceManager

```shell
[root@iZuligp6e1dyzfZ hadoop-2.7.7]# jps
10802 Jps
32231 ResourceManager
32504 NodeManager
1273 Application
23562 NameNode
23663 DataNode
10623 JobHistoryServer
[root@iZuligp6e1dyzfZ hadoop-2.7.7]# sbin/mr-jobhistory-daemon.sh stop historyserver
stopping historyserver
[root@iZuligp6e1dyzfZ hadoop-2.7.7]# jps
32231 ResourceManager
32504 NodeManager
1273 Application
23562 NameNode
10842 Jps
23663 DataNode
[root@iZuligp6e1dyzfZ hadoop-2.7.7]# sbin/yarn-daemon.sh stop nodemanager
stopping nodemanager
[root@iZuligp6e1dyzfZ hadoop-2.7.7]# jps
32231 ResourceManager
1273 Application
23562 NameNode
10878 Jps
23663 DataNode
[root@iZuligp6e1dyzfZ hadoop-2.7.7]# sbin/yarn-daemon.sh stop resourcemanager
stopping resourcemanager
[root@iZuligp6e1dyzfZ hadoop-2.7.7]# jps
10916 Jps
1273 Application
23562 NameNode
23663 DataNode
[root@iZuligp6e1dyzfZ hadoop-2.7.7]#
```

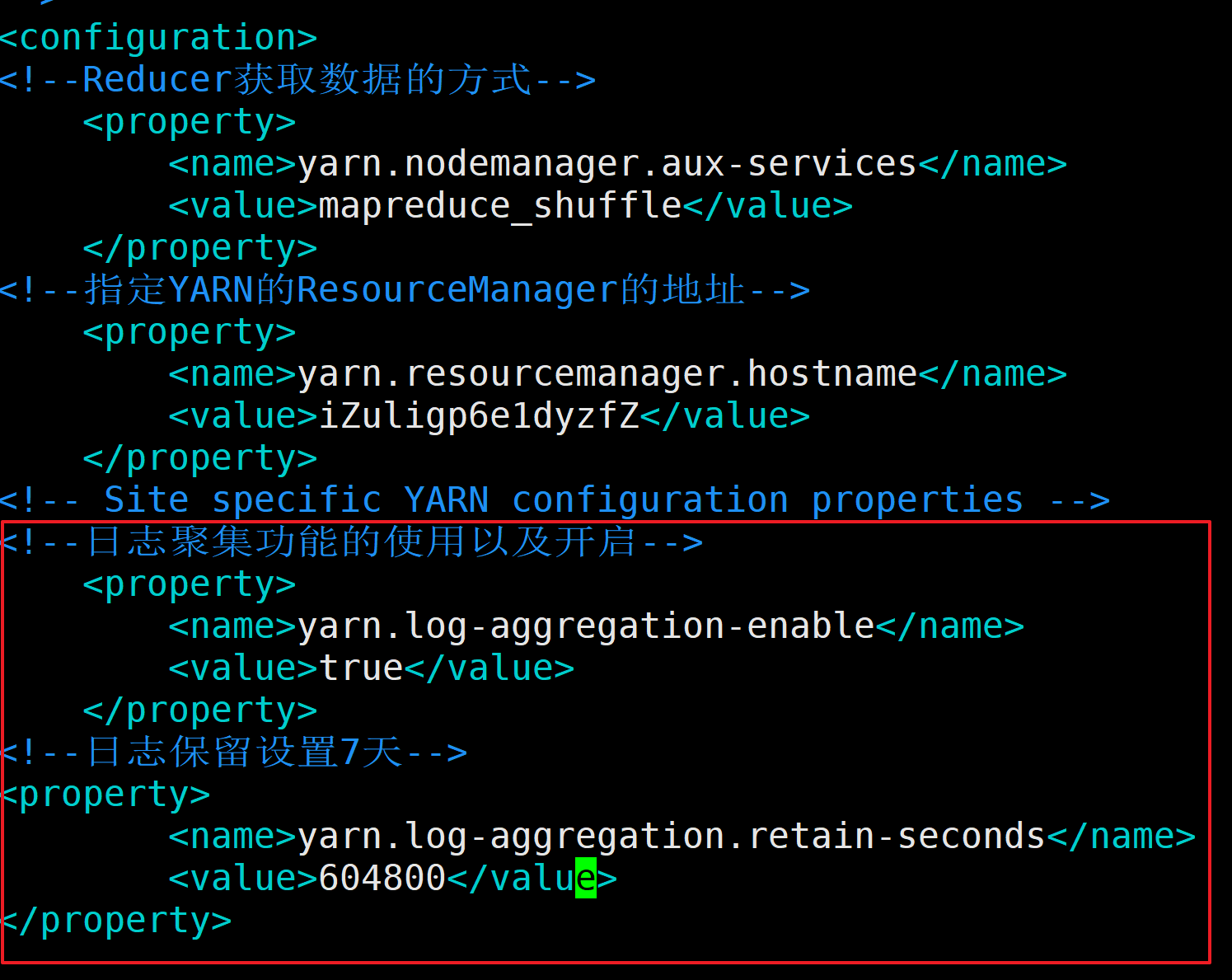

2. Configure yarn-site.xml

```shell
[root@iZuligp6e1dyzfZ hadoop-2.7.7]# vim etc/hadoop/yarn-site.xml
```

Add the following properties inside `<configuration>`:

```xml
<!-- Enable log aggregation -->
<property>
  <name>yarn.log-aggregation-enable</name>
  <value>true</value>
</property>
<!-- Keep aggregated logs for 7 days -->
<property>
  <name>yarn.log-aggregation.retain-seconds</name>
  <value>604800</value>
</property>
```

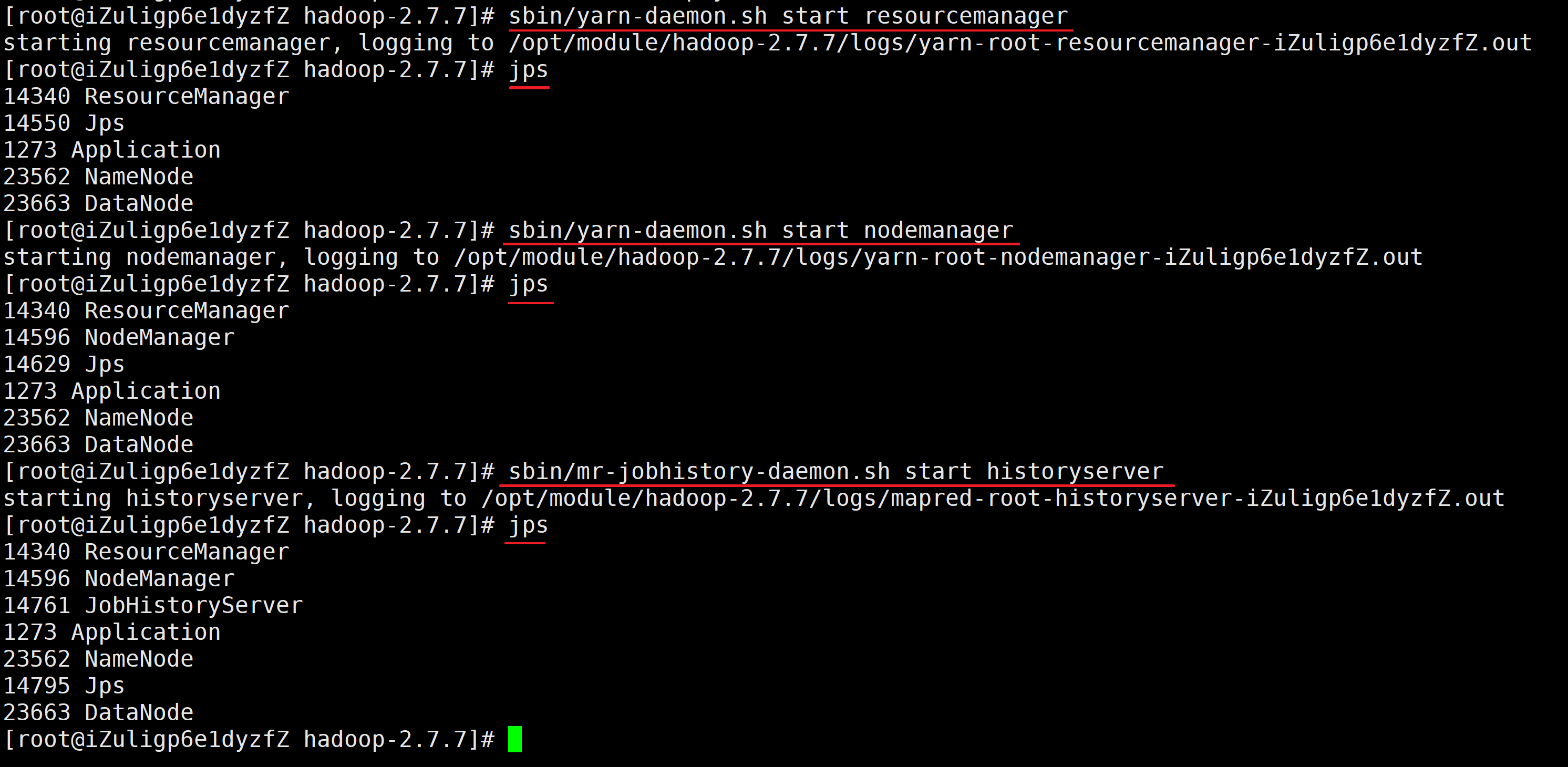
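`yarn.log-aggregation.retain-seconds` is given in seconds, so the 7-day retention above is 7 × 24 × 60 × 60 = 604800; its default of -1 keeps aggregated logs indefinitely. A one-line conversion for other retention periods; `days_to_seconds` is a hypothetical helper.

```shell
#!/usr/bin/env bash
# Hypothetical helper: convert a retention period in days into the value
# expected by yarn.log-aggregation.retain-seconds.
days_to_seconds() {
  echo $(( $1 * 24 * 60 * 60 ))
}
```

Usage: `days_to_seconds 7` prints `604800`. Once aggregation is active, the logs of a finished application can also be read from the command line with `bin/yarn logs -applicationId <application ID>`.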

3. Start the ResourceManager, NodeManager and JobHistoryServer

```shell
[root@iZuligp6e1dyzfZ hadoop-2.7.7]# sbin/yarn-daemon.sh start resourcemanager
starting resourcemanager, logging to /opt/module/hadoop-2.7.7/logs/yarn-root-resourcemanager-iZuligp6e1dyzfZ.out
[root@iZuligp6e1dyzfZ hadoop-2.7.7]# jps
14340 ResourceManager
14550 Jps
1273 Application
23562 NameNode
23663 DataNode
[root@iZuligp6e1dyzfZ hadoop-2.7.7]# sbin/yarn-daemon.sh start nodemanager
starting nodemanager, logging to /opt/module/hadoop-2.7.7/logs/yarn-root-nodemanager-iZuligp6e1dyzfZ.out
[root@iZuligp6e1dyzfZ hadoop-2.7.7]# jps
14340 ResourceManager
14596 NodeManager
14629 Jps
1273 Application
23562 NameNode
23663 DataNode
[root@iZuligp6e1dyzfZ hadoop-2.7.7]# sbin/mr-jobhistory-daemon.sh start historyserver
starting historyserver, logging to /opt/module/hadoop-2.7.7/logs/mapred-root-historyserver-iZuligp6e1dyzfZ.out
[root@iZuligp6e1dyzfZ hadoop-2.7.7]# jps
14340 ResourceManager
14596 NodeManager
14761 JobHistoryServer
1273 Application
23562 NameNode
14795 Jps
23663 DataNode
[root@iZuligp6e1dyzfZ hadoop-2.7.7]#
```
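The stop and start sequence used in this section can be captured in one function so that later configuration changes are applied the same way. A sketch; `restart_yarn_and_history` and the `SBIN` override are hypothetical (the override allows a dry run against stub scripts), and for YARN alone the bundled `sbin/stop-yarn.sh` and `sbin/start-yarn.sh` are an alternative.

```shell
#!/usr/bin/env bash
# Hypothetical helper: restart the YARN daemons and the JobHistoryServer in
# the order used above. SBIN defaults to the sbin directory of the install.
restart_yarn_and_history() {
  local sbin=${SBIN:-sbin}
  # Stop: history server, then NodeManager, then ResourceManager
  "$sbin/mr-jobhistory-daemon.sh" stop historyserver
  "$sbin/yarn-daemon.sh" stop nodemanager
  "$sbin/yarn-daemon.sh" stop resourcemanager
  # Start the ResourceManager first so the NodeManager has something to register with
  "$sbin/yarn-daemon.sh" start resourcemanager || return 1
  "$sbin/yarn-daemon.sh" start nodemanager || return 1
  "$sbin/mr-jobhistory-daemon.sh" start historyserver
}
```

Usage, from the Hadoop installation directory: `restart_yarn_and_history`, followed by `jps` to confirm the three daemons are back.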