- 1. Build Linkis from source

- 2. Preparation and configuration changes


- 3. Installation and debugging

Before installing, set up LDAP on Linux; see https://www.yuque.com/zosimer/vibszg/emzotb

Big data component versions used: Hadoop 3.1.1.3.1.4.0-315, Spark 2.3.2.3.1.4.0-315, Hive 3.1.0.3.1.4.0-315

Operating system: CentOS 7

Machine nodes:

| hdp01 | hdp02 | hdp03 |
|---|---|---|
| hiveclient, sparkclient, hadoopclient, mysql, etc. | hiveclient, sparkclient, hadoopclient, etc. | hiveclient, sparkclient, hadoopclient, linkis, dataspherestudio, nginx, etc. |

1.编译linkis源码

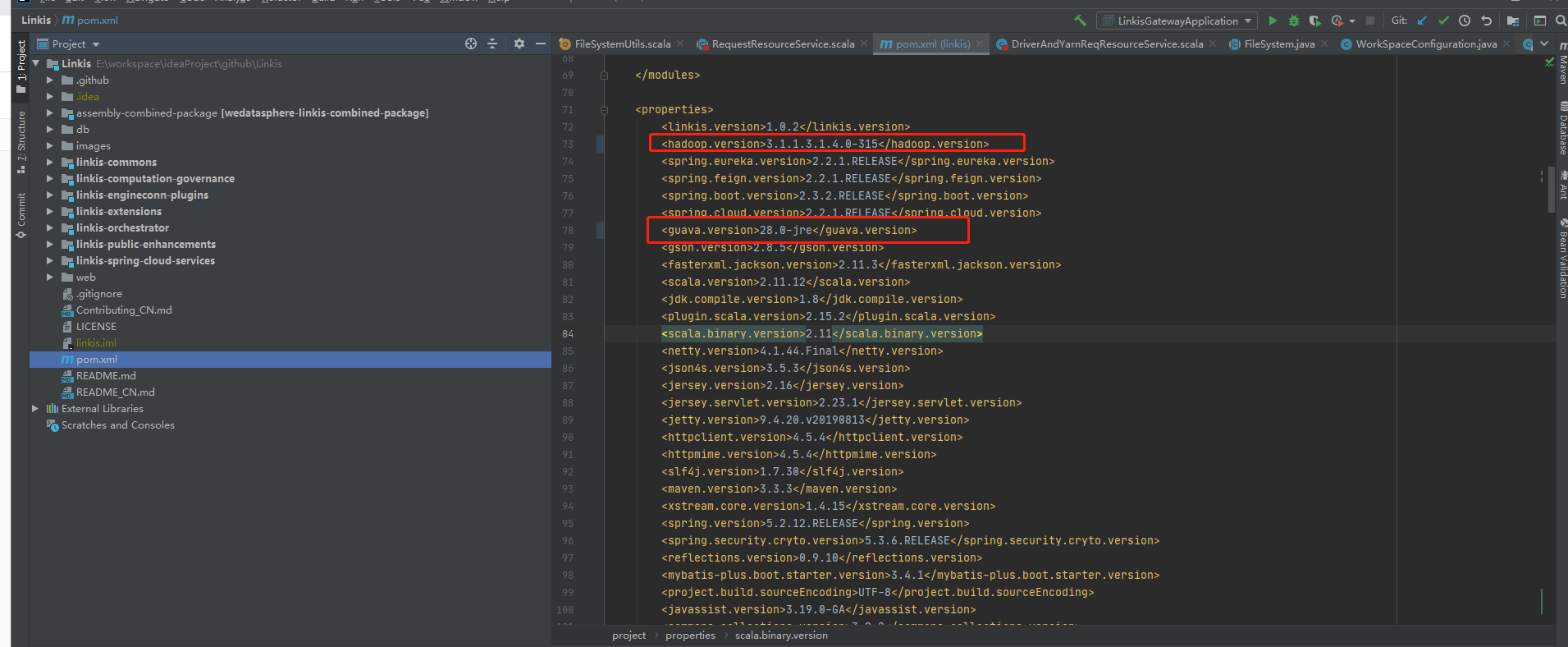

a. hadoop版本号修改

需要修改两个文件,一个是项目根目录下的pom文件

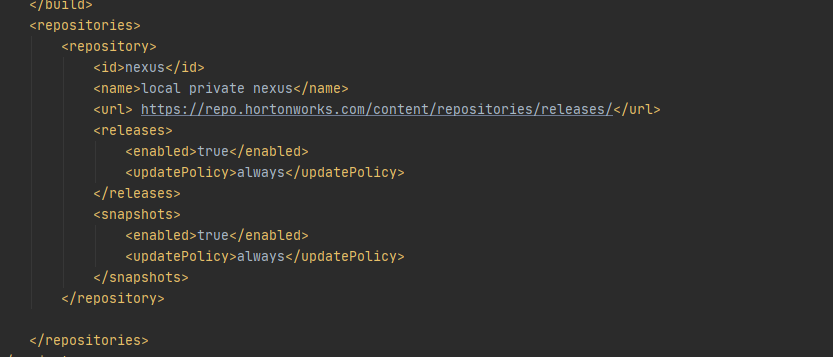

Add the HDP Maven repository:

<repositories>
  <repository>
    <id>nexus</id>
    <name>local private nexus</name>
    <url>https://repo.hortonworks.com/content/repositories/releases/</url>
    <releases>
      <enabled>true</enabled>
      <updatePolicy>always</updatePolicy>
    </releases>
    <snapshots>
      <enabled>true</enabled>
      <updatePolicy>always</updatePolicy>
    </snapshots>
  </repository>
</repositories>

The second is Linkis/linkis-commons/linkis-hadoop-common/pom.xml, where the hadoop-hdfs dependency must be changed to hadoop-hdfs-client.

b. hive版本号修改

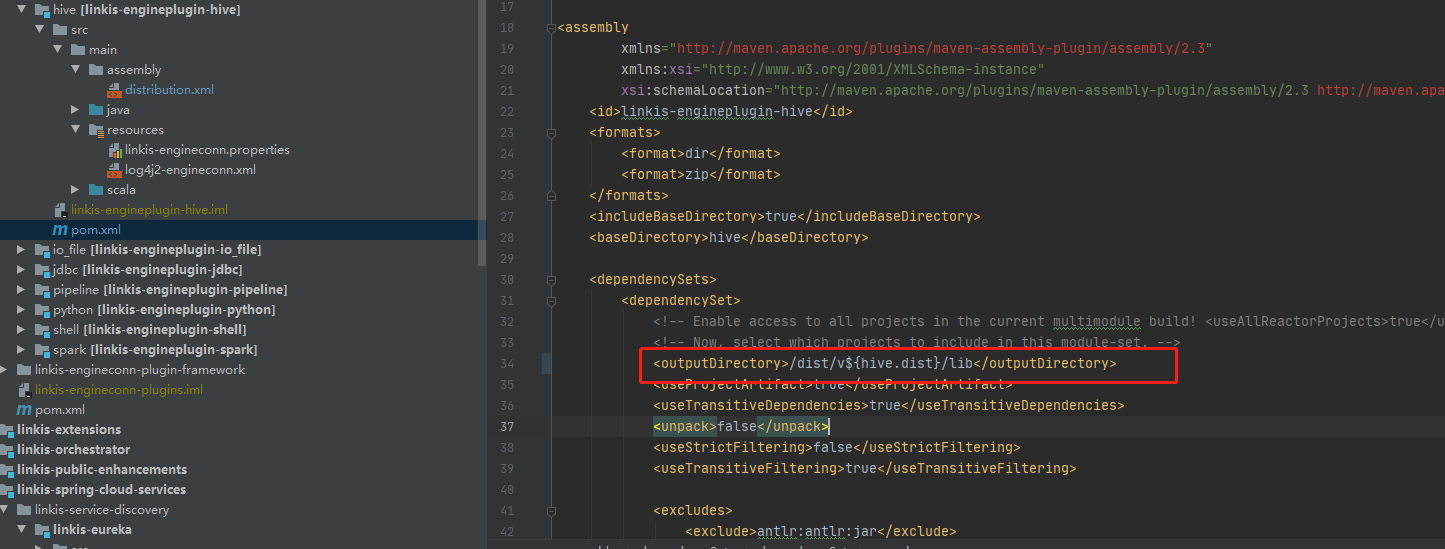

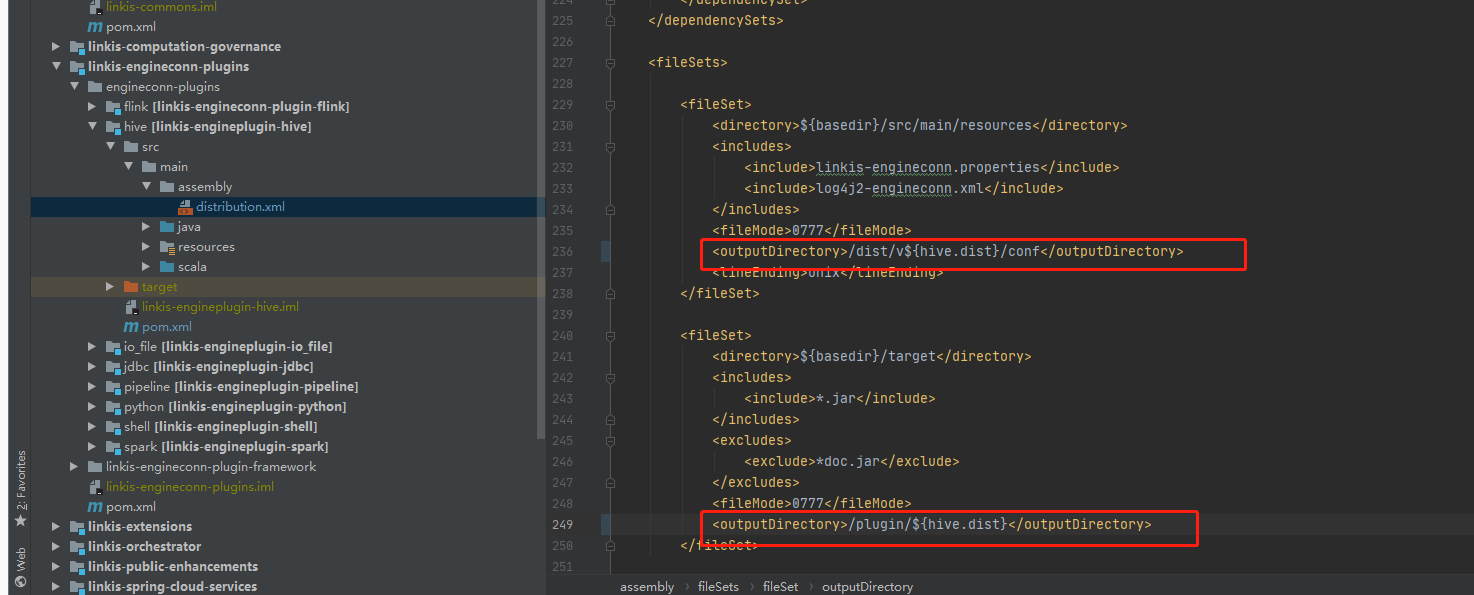

hive需要修改两个文件,Linkis\linkis-engineconn-plugins\engineconn-plugins\hive\pom.xml和Linkis\linkis-engineconn-plugins\engineconn-plugins\hive\src\main\assembly\distribution.xml

pom文件中除了需要修改hive的版本号

此外,还需修改\Linkis\linkis-engineconn-plugins\engineconn-plugins\hive\src\main\assembly\distribution.xml

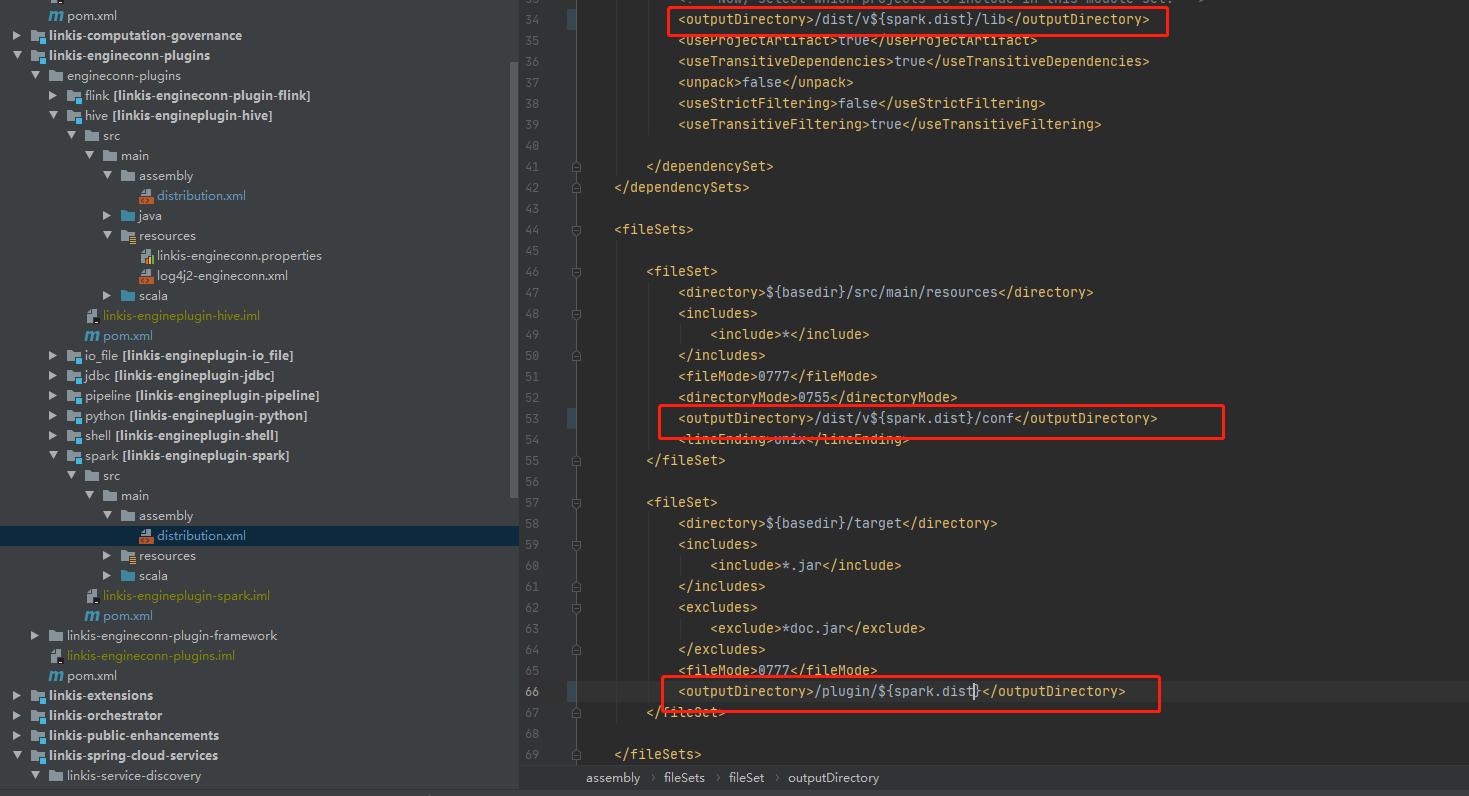

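c. Change the Spark version

The Spark changes follow the same pattern as the Hive version changes:

Linkis\linkis-engineconn-plugins\engineconn-plugins\spark\pom.xml

Linkis\linkis-engineconn-plugins\engineconn-plugins\spark\src\main\assembly\distribution.xml

Change the version constant to match your version.

Change the version in the SQL file to match your version as well.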

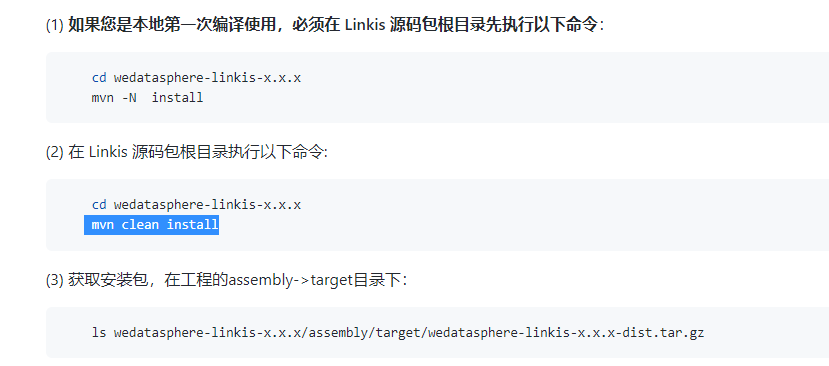

After these changes you can build: run mvn -N install and then mvn clean install in the project root. See the official documentation for details.
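Before starting a long build it can help to confirm that the pom edits actually took effect. The sketch below is only an illustration: `pom_property` is a hypothetical helper, and the property name `hadoop.version` is an assumption about the root pom, so check your own pom for the real property names.

```shell
# Print the value of the first <NAME>...</NAME> element found in a pom file.
# Hypothetical helper; the property names you pass in are assumptions.
pom_property() {
  # $1 = path to pom.xml, $2 = property name (for example hadoop.version)
  sed -n "s|.*<$2>\(.*\)</$2>.*|\1|p" "$1" | head -n 1
}

# Example, run from the Linkis source root:
#   pom_property pom.xml hadoop.version
#   mvn -N install && mvn clean install
```

If the printed value is not the HDP version you expect, fix the pom before running the two Maven commands.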

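2. Preparation and configuration changes

a. Install the basic software

Command-line tools required by Linkis (before the actual installation, the script checks whether these commands are available and tries to install any that are missing; if that fails, install the following basic shell tools manually):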

- telnet

- tar

- sed

- dos2unix

- mysql

- yum

- java

- unzip

- expect
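The same check can be run by hand before launching the installer. This is a minimal sketch, not the installer's own logic; `missing_commands` is a hypothetical helper name.

```shell
# Print every command from the argument list that is not found on PATH.
missing_commands() {
  for cmd in "$@"; do
    command -v "$cmd" >/dev/null 2>&1 || printf '%s\n' "$cmd"
  done
}

# Example:
#   missing_commands telnet tar sed dos2unix mysql yum java unzip expect
```

An empty result means all listed tools are available to the current user.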

Software to install:

- MySQL (5.5+)

- JDK (1.8.0_141 or later)

- Python (both 2.x and 3.x are supported)

- Nginx

The command-line tools and Nginx can be installed with yum:

yum install mysql unzip expect telnet tar sed dos2unix nginx

I am using MySQL 5.7 here, which needs one extra line at the end of /etc/my.cnf. Without it, running Hive later fails with: Expression #1 of SELECT list is not in GROUP BY clause and contains nonaggregated column 'test.user.id' which is not functionally dependent on columns in GROUP BY clause; this is incompatible with sql_mode=only_full_group_by

Add the configuration as follows:

```shell
# For advice on how to change settings please see
# http://dev.mysql.com/doc/refman/5.7/en/server-configuration-defaults.html

[mysqld]
#
# Remove leading # and set to the amount of RAM for the most important data
# cache in MySQL. Start at 70% of total RAM for dedicated server, else 10%.
# innodb_buffer_pool_size = 128M
#
# Remove leading # to turn on a very important data integrity option: logging
# changes to the binary log between backups.
# log_bin
#
# Remove leading # to set options mainly useful for reporting servers.
# The server defaults are faster for transactions and fast SELECTs.
# Adjust sizes as needed, experiment to find the optimal values.
# join_buffer_size = 128M
# sort_buffer_size = 2M
# read_rnd_buffer_size = 2M
datadir=/var/lib/mysql
socket=/var/lib/mysql/mysql.sock

# Disabling symbolic-links is recommended to prevent assorted security risks
symbolic-links=0

log-error=/var/log/mysqld.log
pid-file=/var/run/mysqld/mysqld.pid

# Add the setting here, then restart MySQL
sql_mode=STRICT_TRANS_TABLES,NO_ZERO_IN_DATE,NO_ZERO_DATE,ERROR_FOR_DIVISION_BY_ZERO,NO_AUTO_CREATE_USER,NO_ENGINE_SUBSTITUTION
```

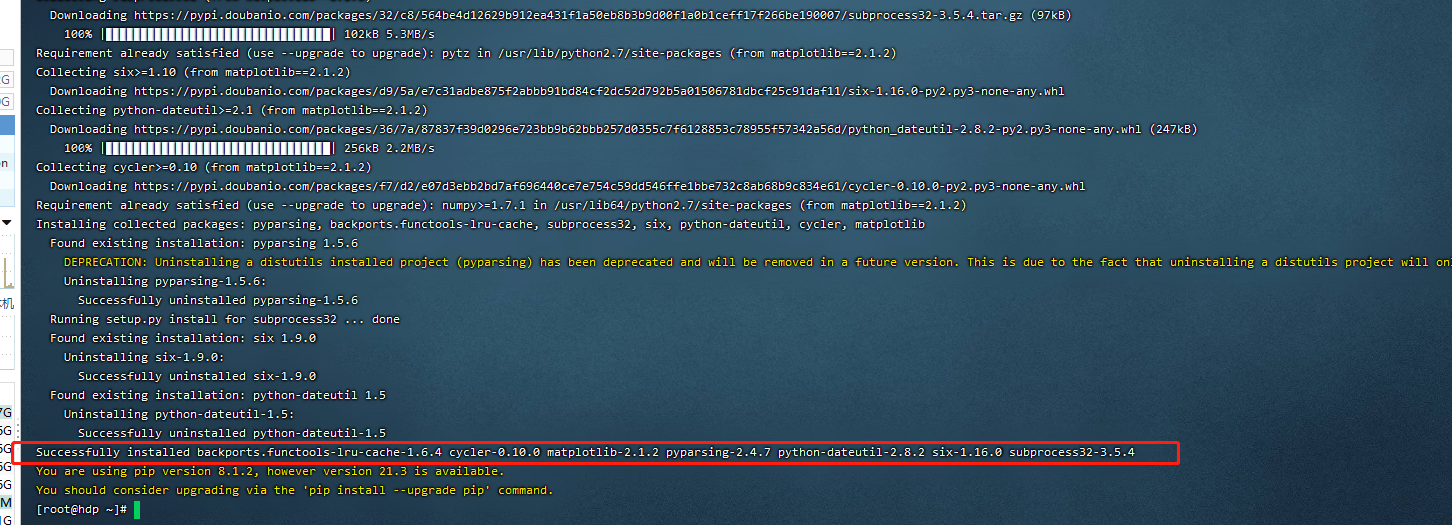
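The edit to /etc/my.cnf can also be scripted so that it is safe to run more than once. The following is a sketch under two assumptions: `[mysqld]` is the last section in the file (as in the listing above), and the service is named `mysqld`. `ensure_sql_mode` is a hypothetical helper name.

```shell
# Append the sql_mode line to a my.cnf-style file unless one is already set.
# Assumes [mysqld] is the last section of the file.
ensure_sql_mode() {
  # $1 = path to my.cnf
  if grep -q '^[[:space:]]*sql_mode[[:space:]]*=' "$1"; then
    return 0
  fi
  printf '%s\n' 'sql_mode=STRICT_TRANS_TABLES,NO_ZERO_IN_DATE,NO_ZERO_DATE,ERROR_FOR_DIVISION_BY_ZERO,NO_AUTO_CREATE_USER,NO_ENGINE_SUBSTITUTION' >> "$1"
}

# Example (as root); the service name mysqld is an assumption:
#   ensure_sql_mode /etc/my.cnf && systemctl restart mysqld
```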

The Linkis installer also checks whether matplotlib is installed, so matplotlib has to be installed first. I was stuck here for quite a while: Ambari does not support Python 3, and upgrading to Python 3 stopped Ambari from starting. Below are the steps I used to install matplotlib with Python 2, for reference only:

```shell
yum install https://dl.fedoraproject.org/pub/epel/epel-release-latest-7.noarch.rpm
yum install -y python-pip
yum install python-devel
yum install gcc-c++

pip install cppy==1.1.0 -i https://pypi.douban.com/simple
pip install future -i https://pypi.douban.com/simple
pip install numpy==1.9.2 -i https://pypi.douban.com/simple
# -i was missing on the next three commands, so pip treated the index URL as a package
pip install setuptools==33.1.1 -i https://pypi.douban.com/simple
pip install pyparsing==2.2.2 -i https://pypi.douban.com/simple
pip install pandas -i https://pypi.douban.com/simple
pip uninstall cycler
pip install cycler==0.10 -i https://pypi.douban.com/simple
pip install matplotlib==1.5.1 -i https://pypi.douban.com/simple
```

b. Create the user

For example, the deployment user is the hadoop account (it does not have to be hadoop, but deploying with the Hadoop superuser is recommended; this is only an example).

Create the deployment user on every machine to be deployed:

useradd hadoop

Linkis services switch engines with sudo -u ${linux-user} to run jobs, so the deployment user needs passwordless sudo privileges.

vi /etc/sudoers

hadoop ALL=(ALL) NOPASSWD: ALL

c. Configure environment variables

sudo vi /etc/profile

export JAVA_HOME=/usr/local/jdk1.8.0_201

## If you do not use engines such as Hive or Spark and do not depend on Hadoop, the environment variables below need no changes

#HADOOP

export HADOOP_HOME=/usr/hdp/current/hadoop-client

export HADOOP_CONF_DIR=/etc/hadoop/conf

#Hive

export HIVE_HOME=/usr/hdp/current/hive-client

export HIVE_CONF_DIR=/etc/hive/conf

#Spark

export SPARK_HOME=/usr/hdp/current/spark2-client

export SPARK_CONF_DIR=/etc/spark2/conf

export FLINK_HOME=/opt/flink-1.12.2

export PYSPARK_ALLOW_INSECURE_GATEWAY=1 # required for PySpark

export JRE_HOME=${JAVA_HOME}/jre

export CLASSPATH=.:${JAVA_HOME}/lib:${JRE_HOME}/lib

export PATH=${JAVA_HOME}/bin:$PATH:$FLINK_HOME/bin

d. Installation preparation

Download the all-in-one installation bundle: click to download (skip this if you already have it)

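Download the DSS & LINKIS one-click installation package:

```
├── dss_linkis                                                   # root directory of the one-click deployment
    ├── bin                                                      # one-click install and one-click start of DSS + Linkis
    ├── conf                                                     # parameter configuration for the one-click deployment
    ├── wedatasphere-dss-1.0.0-dist.tar.gz                       # DSS backend package
    ├── wedatasphere-dss-web-1.0.0-dist.zip                      # DSS frontend package
    ├── wedatasphere-linkis-1.0.2-combined-package-dist.tar.gz   # Linkis package
```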

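2. Replace the Linkis installation package

As the hadoop user, create a linkis_dss directory under /opt/:

```shell
sudo mkdir /opt/linkis_dss
sudo chown hadoop:hadoop /opt/linkis_dss -R
```

Put the downloaded DSS & LINKIS package into this linkis_dss directory and extract it, then replace wedatasphere-linkis-1.0.2-combined-package-dist.tar.gz with the package you built earlier.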
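The swap can be sketched as a small function that keeps a backup of the bundled archive. `replace_linkis_package` is a hypothetical helper, and the location of your locally built tarball is not given in this guide, so the path in the example is a placeholder.

```shell
# Replace the bundled Linkis package with a locally built one, keeping a backup.
replace_linkis_package() {
  # $1 = locally built tarball, $2 = directory that holds the bundled tarball
  bundled="$2/wedatasphere-linkis-1.0.2-combined-package-dist.tar.gz"
  [ -f "$1" ] || { echo "built package not found: $1" >&2; return 1; }
  if [ -f "$bundled" ]; then
    mv "$bundled" "$bundled.bak"
  fi
  cp "$1" "$bundled"
}

# Example (the source path is a placeholder for wherever your build put the archive):
#   replace_linkis_package /path/to/your-built-linkis.tar.gz /opt/linkis_dss
```

Keeping the original file name means the one-click scripts find the package where they expect it.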

e. 修改配置

打开conf/config.sh,按需修改相关配置参数:

vi conf/config.sh

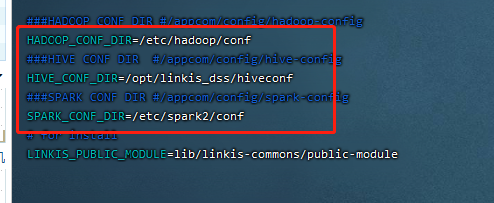

参数说明如下:(下面配置比较重点的点是:LINKIS_VERSION,DSS_VERSION,LDAP_URL,LDAP_BASEDN,LDAP_USER_NAME_FORMAT,WORKSPACE_USER_ROOT_PATH,DSS_WEB_PORT,HADOOP_CONF_DIR,HIVE_CONF_DIR,SPARK_CONF_DIR)

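#################### Basic settings for the one-click deployment ####################

# Deployment user; defaults to the currently logged-in user. Changing it is not recommended unless necessary.

# deployUser=hadoop

# Changing this is not recommended unless necessary.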

LINKIS_VERSION=1.0.3

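### DSS Web; no change needed for a local installation

#DSS_NGINX_IP=127.0.0.1

### On a single-node cluster, or when installed on the same node as YARN, 8088 conflicts with the YARN port, so I changed it to 8090

DSS_WEB_PORT=8090

# Changing this is not recommended unless necessary.

DSS_VERSION=1.0.1

## Heap size of the Java applications. With less than 8 GB of memory on the deployment machine, 128M is recommended; with 16 GB, at least 256M; for a very good user experience, the machine should have at least 32 GB of memory.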

export SERVER_HEAP_SIZE="128M"

############################################################

##################### Linkis configuration begins #####################

########### Uncommented parameters must be set; commented ones can be changed as needed ##########

############################################################

### DSS workspace directory

WORKSPACE_USER_ROOT_PATH=file:///appcomm/linkis/

### User HDFS root path

HDFS_USER_ROOT_PATH=hdfs:///tmp/linkis

### Result set path: file or hdfs path

RESULT_SET_ROOT_PATH=hdfs:///tmp/linkis

### Path to store started engines and engine logs, must be local

ENGINECONN_ROOT_PATH=/appcom/tmp

#ENTRANCE_CONFIG_LOG_PATH=hdfs:///tmp/linkis/

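### Hadoop configuration directory (required); this is the HDP Hadoop configuration path

HADOOP_CONF_DIR=/etc/hadoop/conf

### HIVE CONF DIR

### Note: do not point this at the HDP Hive configuration directory. Copy the configuration files to a separate location; I put them in /opt/linkis_dss/hiveconf

### The separate copy is needed because Tez-related settings must be added here; how to do that is covered later

HIVE_CONF_DIR=/opt/linkis_dss/hiveconf

### SPARK CONF DIR

## Note: this is the HDP Spark configuration directory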

SPARK_CONF_DIR=/etc/spark2/conf

# for install

#LINKIS_PUBLIC_MODULE=lib/linkis-commons/public-module

## YARN REST URL

YARN_RESTFUL_URL=http://127.0.0.1:8088

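## Engine version settings; defaults are used if not set

#SPARK_VERSION

### Enter the value from the pom file used at build time: <spark.dist>2.3.2.3.1.4.0_315</spark.dist>

SPARK_VERSION=2.3.2.3.1.4.0_315

##HIVE_VERSION

### Enter the value from the pom file used at build time: <hive.dist>3.1.0.3.1.4.0_315</hive.dist>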

HIVE_VERSION=3.1.0.3.1.4.0_315

PYTHON_VERSION=python2

## LDAP is for enterprise authorization, if you just want to have a try, ignore it.

LDAP_URL=ldap://localhost:389/

LDAP_BASEDN=dc=c,dc=citic

LDAP_USER_NAME_FORMAT=uid=%s,ou=People,dc=c,dc=citic

# Microservices Service Registration Discovery Center

#LINKIS_EUREKA_INSTALL_IP=127.0.0.1

#LINKIS_EUREKA_PORT=20303

#LINKIS_EUREKA_PREFER_IP=true

### Gateway install information

#LINKIS_GATEWAY_INSTALL_IP=127.0.0.1

#LINKIS_GATEWAY_PORT=9001

### ApplicationManager

#LINKIS_MANAGER_INSTALL_IP=127.0.0.1

#LINKIS_MANAGER_PORT=9101

### EngineManager

#LINKIS_ENGINECONNMANAGER_INSTALL_IP=127.0.0.1

#LINKIS_ENGINECONNMANAGER_PORT=9102

### EnginePluginServer

#LINKIS_ENGINECONN_PLUGIN_SERVER_INSTALL_IP=127.0.0.1

#LINKIS_ENGINECONN_PLUGIN_SERVER_PORT=9103

### LinkisEntrance

#LINKIS_ENTRANCE_INSTALL_IP=127.0.0.1

#LINKIS_ENTRANCE_PORT=9104

### publicservice

#LINKIS_PUBLICSERVICE_INSTALL_IP=127.0.0.1

#LINKIS_PUBLICSERVICE_PORT=9105

### cs

#LINKIS_CS_INSTALL_IP=127.0.0.1

#LINKIS_CS_PORT=9108

##################### Linkis configuration ends #####################

############################################################

####################### DSS configuration begins #######################

########### Uncommented parameters must be set; commented ones can be changed as needed ##########

############################################################

# Stores the temporary ZIP packages published to Schedulis

WDS_SCHEDULER_PATH=file:///appcom/tmp/wds/scheduler

### This service is used to provide dss-framework-project-server capability.

#DSS_FRAMEWORK_PROJECT_SERVER_INSTALL_IP=127.0.0.1

#DSS_FRAMEWORK_PROJECT_SERVER_PORT=9002

### This service is used to provide dss-framework-orchestrator-server capability.

#DSS_FRAMEWORK_ORCHESTRATOR_SERVER_INSTALL_IP=127.0.0.1

#DSS_FRAMEWORK_ORCHESTRATOR_SERVER_PORT=9003

### This service is used to provide dss-apiservice-server capability.

#DSS_APISERVICE_SERVER_INSTALL_IP=127.0.0.1

#DSS_APISERVICE_SERVER_PORT=9004

### This service is used to provide dss-workflow-server capability.

#DSS_WORKFLOW_SERVER_INSTALL_IP=127.0.0.1

#DSS_WORKFLOW_SERVER_PORT=9005

### dss-flow-Execution-Entrance

### This service is used to provide flow execution capability.

#DSS_FLOW_EXECUTION_SERVER_INSTALL_IP=127.0.0.1

#DSS_FLOW_EXECUTION_SERVER_PORT=9006

### This service is used to provide dss-datapipe-server capability.

#DSS_DATAPIPE_SERVER_INSTALL_IP=127.0.0.1

#DSS_DATAPIPE_SERVER_PORT=9008

## sendemail settings; these only affect the email-sending feature in DSS workflows

EMAIL_HOST=smtp.163.com

EMAIL_PORT=25

EMAIL_USERNAME=xxx@163.com

EMAIL_PASSWORD=xxxxx

EMAIL_PROTOCOL=smtp

####################### DSS configuration ends #######################
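Since a wrong configuration directory only shows up much later as runtime errors, it may be worth checking the three `*_CONF_DIR` values right after editing. This is a sketch only: `conf_value` and `check_conf_dirs` are hypothetical helpers that read plain `KEY=value` lines and ignore commented ones.

```shell
# Read KEY=value from a shell-style config file (first uncommented match).
conf_value() {
  # $1 = config file, $2 = key
  sed -n "s/^[[:space:]]*$2=//p" "$1" | head -n 1
}

# Print each *_CONF_DIR setting whose directory does not exist on this machine.
check_conf_dirs() {
  # $1 = config file
  for key in HADOOP_CONF_DIR HIVE_CONF_DIR SPARK_CONF_DIR; do
    dir=$(conf_value "$1" "$key")
    [ -d "$dir" ] || printf '%s=%s (missing)\n' "$key" "$dir"
  done
}

# Example:
#   check_conf_dirs conf/config.sh
```

No output means all three directories exist; HIVE_CONF_DIR will be reported until the separate hiveconf directory has been created.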

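f. Modify the database configuration

Make sure the installation machine can reach the configured databases; otherwise the DDL and DML imports will fail.

vi conf/db.sh

### DSS database settings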

MYSQL_HOST=127.0.0.1

MYSQL_PORT=3306

MYSQL_DB=dss

MYSQL_USER=xxx

MYSQL_PASSWORD=xxx

## Hive metastore database settings, used by Linkis to read Hive metadata

HIVE_HOST=127.0.0.1

HIVE_PORT=3306

HIVE_DB=xxx

HIVE_USER=xxx

HIVE_PASSWORD=xxx
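Reachability can be checked before running the installer by issuing a trivial query with the values from db.sh. The sketch below assumes the `mysql` client is on PATH; `check_dss_db` is a hypothetical helper. Note that passing the password with `-p` on the command line exposes it to other local users while the command runs.

```shell
# Run "SELECT 1" against the DSS database server using the values in db.sh.
# The function body runs in a subshell so the sourced variables do not leak.
check_dss_db() (
  # $1 = path to db.sh
  . "$1"
  mysql -h"$MYSQL_HOST" -P"$MYSQL_PORT" -u"$MYSQL_USER" -p"$MYSQL_PASSWORD" -e 'SELECT 1'
)

# Example (use a path containing a slash so that "." does not search PATH):
#   check_dss_db ./conf/db.sh && echo "DSS database reachable"
```

The same idea applies to the Hive metastore database by substituting the HIVE_* variables.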

g. Modify the hive-site.xml configuration file

As noted earlier for conf/config.sh, the hive-site.xml path cannot point directly at the HDP Hive path; it has to be set up separately.

Create a hiveconf directory under /opt/linkis_dss/ and copy the configuration from /etc/hive/conf into /opt/linkis_dss/hiveconf:

sudo mkdir /opt/linkis_dss/hiveconf

sudo cp /etc/hive/conf/* /opt/linkis_dss/hiveconf

Then edit /opt/linkis_dss/hiveconf/hive-site.xml and add the following two properties inside it:

<property>

<name>tez.lib.uris</name>

<!-- replace ${hdp.version} with your own version -->

<value>/hdp/apps/3.1.4.0-315/tez/tez.tar.gz</value>

</property>

<property>

<name>hive.tez.container.size</name>

<value>10240</value>

</property>
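To confirm that the copied hive-site.xml really contains these properties, a small awk lookup is enough. This is a sketch that assumes `<name>` and `<value>` sit on separate lines, as in the snippet above; `xml_property` is a hypothetical helper, not a Hadoop tool.

```shell
# Print the <value> that follows <name>NAME</name> in a Hadoop-style XML file.
# Assumes <name> and <value> are on separate lines.
xml_property() {
  # $1 = XML file, $2 = property name
  awk -v name="$2" '
    index($0, "<name>" name "</name>") { found = 1; next }
    found && /<value>/ {
      sub(/.*<value>/, ""); sub(/<\/value>.*/, "")
      print; exit
    }
  ' "$1"
}

# Example:
#   xml_property /opt/linkis_dss/hiveconf/hive-site.xml tez.lib.uris
```

An empty result means the property is missing from the file.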

3. Installation and debugging

a. Run the installation script:

sh bin/install.sh

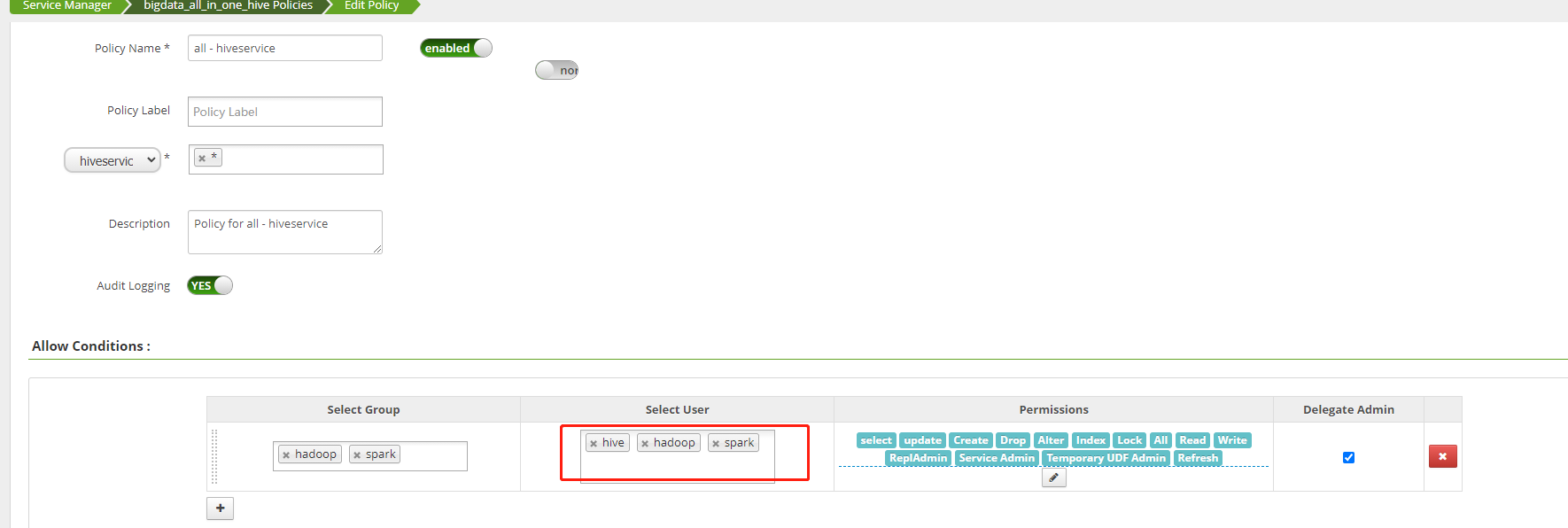

If the hadoop user has no permission to connect to Hive, grant the hadoop user access to Hive in Ranger.

b. Run the startup script

1. Before running the startup script, add the following to /opt/linkis_dss/linkis/conf/linkis-mg-gateway.properties:

wds.linkis.ldap.proxy.userNameFormat=uid=%s,ou=People,dc=c,dc=citic

2. Copy the MySQL driver jar to the following locations:

/opt/linkis_dss/linkis/lib/linkis-spring-cloud-services/linkis-mg-gateway/mysql-connector-java-5.1.49.jar

/opt/linkis_dss/linkis/lib/linkis-commons/public-module/mysql-connector-java-5.1.49.jar

/opt/linkis_dss/dss/dss-appconns/exchange/lib/mysql-connector-java-5.1.48.jar

/opt/linkis_dss/dss/lib/dss-commons/mysql-connector-java-5.1.49.jar

3. Run the startup script:

sh bin/start-all.sh
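The driver copy in step 2 can be scripted so that no directory is forgotten. This is a minimal sketch; `install_driver` is a hypothetical helper, and the driver jar itself has to be downloaded separately.

```shell
# Copy one MySQL driver jar into every directory given after it.
install_driver() {
  # $1 = driver jar, remaining arguments = target directories
  jar="$1"; shift
  for dir in "$@"; do
    [ -d "$dir" ] || { echo "skip (no such directory): $dir" >&2; continue; }
    cp "$jar" "$dir/"
  done
}

# Example, using the directories listed in step 2:
#   install_driver mysql-connector-java-5.1.49.jar \
#     /opt/linkis_dss/linkis/lib/linkis-spring-cloud-services/linkis-mg-gateway \
#     /opt/linkis_dss/linkis/lib/linkis-commons/public-module \
#     /opt/linkis_dss/dss/dss-appconns/exchange/lib \
#     /opt/linkis_dss/dss/lib/dss-commons
```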

c. Resolve jar conflicts

On startup, linkis-ps-publicservice reports the following error:

27.9105/],UNAVAILABLE} java.lang.NoSuchMethodError: org.eclipse.jetty.server.session.SessionHandler.getSessionManager()Lorg/eclipse/jetty/server/SessionManager;

at org.eclipse.jetty.servlet.ServletContextHandler$Context.getSessionCookieConfig(ServletContextHandler.java:1415) ~[jetty-servlet-9.3.24.v20180605.jar:9.3.24.v20180605]

at org.springframework.boot.web.servlet.server.AbstractServletWebServerFactory$SessionConfiguringInitializer.onStartup(AbstractServletWebServerFactory.java:303) ~[spring-boot-2.3.2.RELEASE.jar:2.3.2.RELEASE]

at org.springframework.boot.web.embedded.jetty.ServletContextInitializerConfiguration.callInitializers(ServletContextInitializerConfiguration.java:65) ~[spring-boot-2.3.2.RELEASE.jar:2.3.2.RELEASE]

at org.springframework.boot.web.embedded.jetty.ServletContextInitializerConfiguration.configure(ServletContextInitializerConfiguration.java:54) ~[spring-boot-2.3.2.RELEASE.jar:2.3.2.RELEASE]

at org.eclipse.jetty.webapp.WebAppContext.configure(WebAppContext.java:498) ~[jetty-webapp-9.4.20.v20190813.jar:9.4.20.v20190813]

at org.eclipse.jetty.webapp.WebAppContext.startContext(WebAppContext.java:1402) ~[jetty-webapp-9.4.20.v20190813.jar:9.4.20.v20190813]

at org.eclipse.jetty.server.handler.ContextHandler.doStart(ContextHandler.java:821) ~[jetty-server-9.4.20.v20190813.jar:9.4.20.v20190813]

at org.eclipse.jetty.servlet.ServletContextHandler.doStart(ServletContextHandler.java:262) ~[jetty-servlet-9.3.24.v20180605.jar:9.3.24.v20180605]

at org.eclipse.jetty.webapp.WebAppContext.doStart(WebAppContext.java:524) [jetty-webapp-9.4.20.v20190813.jar:9.4.20.v20190813]

at org.eclipse.jetty.util.component.AbstractLifeCycle.start(AbstractLifeCycle.java:72) [jetty-util-9.4.30.v20200611.jar:9.4.30.v20200611]

at org.eclipse.jetty.util.component.ContainerLifeCycle.start(ContainerLifeCycle.java:169) [jetty-util-9.4.30.v20200611.jar:9.4.30.v20200611]

at org.eclipse.jetty.server.Server.start(Server.java:407) [jetty-server-9.4.20.v20190813.jar:9.4.20.v20190813]

at org.eclipse.jetty.util.component.ContainerLifeCycle.doStart(ContainerLifeCycle.java:110) [jetty-util-9.4.30.v20200611.jar:9.4.30.v20200611]

at org.eclipse.jetty.server.handler.AbstractHandler.doStart(AbstractHandler.java:106) [jetty-server-9.4.20.v20190813.jar:9.4.20.v20190813]

at org.eclipse.jetty.server.Server.doStart(Server.java:371) [jetty-server-9.4.20.v20190813.jar:9.4.20.v20190813]

at org.eclipse.jetty.util.component.AbstractLifeCycle.start(AbstractLifeCycle.java:72) [jetty-util-9.4.30.v20200611.jar:9.4.30.v20200611]

at org.springframework.boot.web.embedded.jetty.JettyWebServer.initialize(JettyWebServer.java:136) [spring-boot-2.3.2.RELEASE.jar:2.3.2.RELEASE]

at org.springframework.boot.web.embedded.jetty.JettyWebServer.<init>(JettyWebServer.java:92) [spring-boot-2.3.2.RELEASE.jar:2.3.2.RELEASE]

at org.springframework.boot.web.embedded.jetty.JettyServletWebServerFactory.getJettyWebServer(JettyServletWebServerFactory.java:408) [spring-boot-2.3.2.RELEASE.jar:2.3.2.RELEASE]

at org.springframework.boot.web.embedded.jetty.JettyServletWebServerFactory.getWebServer(JettyServletWebServerFactory.java:162) [spring-boot-2.3.2.RELEASE.jar:2.3.2.RELEASE]

at org.springframework.boot.web.servlet.context.ServletWebServerApplicationContext.createWebServer(ServletWebServerApplicationContext.java:178) [spring-boot-2.3.2.RELEASE.jar:2.3.2.RELEASE]

at org.springframework.boot.web.servlet.context.ServletWebServerApplicationContext.onRefresh(ServletWebServerApplicationContext.java:158) [spring-boot-2.3.2.RELEASE.jar:2.3.2.RELEASE]

at org.springframework.context.support.AbstractApplicationContext.refresh(AbstractApplicationContext.java:545) [spring-context-5.2.8.RELEASE.jar:5.2.8.RELEASE]

at org.springframework.boot.web.servlet.context.ServletWebServerApplicationContext.refresh(ServletWebServerApplicationContext.java:143) [spring-boot-2.3.2.RELEASE.jar:2.3.2.RELEASE]

at org.springframework.boot.SpringApplication.refresh(SpringApplication.java:758) [spring-boot-2.3.2.RELEASE.jar:2.3.2.RELEASE]

at org.springframework.boot.SpringApplication.refresh(SpringApplication.java:750) [spring-boot-2.3.2.RELEASE.jar:2.3.2.RELEASE]

See Q21 in https://docs.qq.com/doc/DWlN4emlJeEJxWlR0 : jetty-servlet, jetty-security, and jetty-util need to be upgraded from 9.3.20 to 9.4.20.

### List all jetty jar files under the Linkis lib directory

find /opt/linkis_dss/linkis/lib -name "jetty*"

/opt/linkis_dss/linkis/lib/linkis-engineconn-plugins/spark/dist/v2.3.2.3.1.4.0_315/lib/jetty-annotations-9.4.30.v20200611.jar

/opt/linkis_dss/linkis/lib/linkis-engineconn-plugins/spark/dist/v2.3.2.3.1.4.0_315/lib/jetty-client-9.4.20.v20190813.jar

/opt/linkis_dss/linkis/lib/linkis-engineconn-plugins/spark/dist/v2.3.2.3.1.4.0_315/lib/jetty-continuation-9.4.30.v20200611.jar

/opt/linkis_dss/linkis/lib/linkis-engineconn-plugins/spark/dist/v2.3.2.3.1.4.0_315/lib/jetty-http-9.4.20.v20190813.jar

/opt/linkis_dss/linkis/lib/linkis-engineconn-plugins/spark/dist/v2.3.2.3.1.4.0_315/lib/jetty-io-9.4.20.v20190813.jar

/opt/linkis_dss/linkis/lib/linkis-engineconn-plugins/spark/dist/v2.3.2.3.1.4.0_315/lib/jetty-plus-9.4.30.v20200611.jar

/opt/linkis_dss/linkis/lib/linkis-engineconn-plugins/spark/dist/v2.3.2.3.1.4.0_315/lib/jetty-security-9.4.20.v20190813.jar

/opt/linkis_dss/linkis/lib/linkis-engineconn-plugins/spark/dist/v2.3.2.3.1.4.0_315/lib/jetty-server-9.4.20.v20190813.jar

/opt/linkis_dss/linkis/lib/linkis-engineconn-plugins/spark/dist/v2.3.2.3.1.4.0_315/lib/jetty-servlet-9.3.24.v20180605.jar

/opt/linkis_dss/linkis/lib/linkis-engineconn-plugins/spark/dist/v2.3.2.3.1.4.0_315/lib/jetty-servlets-9.4.30.v20200611.jar

/opt/linkis_dss/linkis/lib/linkis-engineconn-plugins/spark/dist/v2.3.2.3.1.4.0_315/lib/jetty-util-9.3.24.v20180605.jar

/opt/linkis_dss/linkis/lib/linkis-engineconn-plugins/spark/dist/v2.3.2.3.1.4.0_315/lib/jetty-webapp-9.3.24.v20180605.jar

/opt/linkis_dss/linkis/lib/linkis-engineconn-plugins/spark/dist/v2.3.2.3.1.4.0_315/lib/jetty-xml-9.4.20.v20190813.jar

/opt/linkis_dss/linkis/lib/linkis-engineconn-plugins/hive/dist/v3.1.0.3.1.4.0-315/lib/jetty-rewrite-9.3.25.v20180904.jar

/opt/linkis_dss/linkis/lib/linkis-spring-cloud-services/linkis-mg-gateway/jetty-client-9.4.20.v20190813.jar

/opt/linkis_dss/linkis/lib/linkis-spring-cloud-services/linkis-mg-gateway/jetty-http-9.4.20.v20190813.jar

/opt/linkis_dss/linkis/lib/linkis-spring-cloud-services/linkis-mg-gateway/jetty-io-9.4.20.v20190813.jar

/opt/linkis_dss/linkis/lib/linkis-spring-cloud-services/linkis-mg-gateway/jetty-util-9.4.20.v20190813.jar

/opt/linkis_dss/linkis/lib/linkis-spring-cloud-services/linkis-mg-gateway/jetty-xml-9.4.20.v20190813.jar

/opt/linkis_dss/linkis/lib/linkis-commons/public-module/jetty-annotations-9.4.30.v20200611.jar

/opt/linkis_dss/linkis/lib/linkis-commons/public-module/jetty-client-9.4.20.v20190813.jar

/opt/linkis_dss/linkis/lib/linkis-commons/public-module/jetty-continuation-9.4.30.v20200611.jar

/opt/linkis_dss/linkis/lib/linkis-commons/public-module/jetty-http-9.4.20.v20190813.jar

/opt/linkis_dss/linkis/lib/linkis-commons/public-module/jetty-io-9.4.20.v20190813.jar

/opt/linkis_dss/linkis/lib/linkis-commons/public-module/jetty-plus-9.4.30.v20200611.jar

/opt/linkis_dss/linkis/lib/linkis-commons/public-module/jetty-security-9.3.24.v20180605.jar

/opt/linkis_dss/linkis/lib/linkis-commons/public-module/jetty-server-9.4.20.v20190813.jar

/opt/linkis_dss/linkis/lib/linkis-commons/public-module/jetty-servlet-9.3.24.v20180605.jar

/opt/linkis_dss/linkis/lib/linkis-commons/public-module/jetty-servlets-9.4.30.v20200611.jar

/opt/linkis_dss/linkis/lib/linkis-commons/public-module/jetty-util-9.4.30.v20200611.jar

/opt/linkis_dss/linkis/lib/linkis-commons/public-module/jetty-webapp-9.4.20.v20190813.jar

/opt/linkis_dss/linkis/lib/linkis-commons/public-module/jetty-xml-9.4.20.v20190813.jar

/opt/linkis_dss/linkis/lib/linkis-computation-governance/linkis-cg-linkismanager/jetty-util-9.3.24.v20180605.jar

Upgrade every jetty-servlet, jetty-security, and jetty-util jar under lib from 9.3.x (9.3.24 in the listing above) to 9.4.20.
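Rather than scanning the long listing by eye, the jars that still need replacing can be filtered directly. A minimal sketch; `old_jetty_jars` is a hypothetical helper name.

```shell
# List jetty-servlet, jetty-security and jetty-util jars still on a 9.3.x version.
# The pattern jetty-servlet-9.3.* does not match the separate jetty-servlets artifact.
old_jetty_jars() {
  # $1 = lib directory to scan
  find "$1" -type f \( -name 'jetty-servlet-9.3.*.jar' \
    -o -name 'jetty-security-9.3.*.jar' \
    -o -name 'jetty-util-9.3.*.jar' \) | sort
}

# Example:
#   old_jetty_jars /opt/linkis_dss/linkis/lib
```

Each file printed should be replaced by the 9.4.20 jar of the same artifact; an empty result means the upgrade is complete.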

A second conflict shows up as the following error; deleting the bundled asm-5.0.4.jar resolves it:

Caused by: java.lang.VerifyError: class net.sf.cglib.core.DebuggingClassWriter overrides final method visit.(IILjava/lang/String;Ljava/lang/String;Ljava/lang/String;[Ljava/lang/String;)V

rm -rf linkis/lib/linkis-commons/public-module/asm-5.0.4.jar

d. Resolve the nginx port conflict and fix the nginx configuration

Port 80 used by httpd conflicts with the nginx port, so stop the httpd service and disable it at boot:

systemctl stop httpd.service

systemctl disable httpd.service

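The microservice gateway port in the nginx configuration generated by the default installation is wrong; the port must be changed in two places (this fixes the 502 status code):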

vi /etc/nginx/conf.d/dss.conf

server {

listen 8090;# access port

server_name localhost;

#charset koi8-r;

#access_log /var/log/nginx/host.access.log main;

location /dss/visualis {

root /opt/linkis_dss/web; # static file directory

autoindex on;

}

location /dss/linkis {

root /opt/linkis_dss/web; # static files of the Linkis management console

autoindex on;

}

location / {

root /opt/linkis_dss/web/dist; # static file directory

index index.html index.html;

}

location /ws {

proxy_pass http://localhost:9001;# backend Linkis address, default 20401

proxy_http_version 1.1;

proxy_set_header Upgrade $http_upgrade;

proxy_set_header Connection upgrade;

}

location /api {

proxy_pass http://localhost:9001; # backend Linkis address, default 20401

proxy_set_header Host $host;

proxy_set_header X-Real-IP $remote_addr;

proxy_set_header x_real_ipP $remote_addr;

proxy_set_header remote_addr $remote_addr;

proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;

proxy_http_version 1.1;

proxy_connect_timeout 4s;

proxy_read_timeout 600s;

proxy_send_timeout 12s;

proxy_set_header Upgrade $http_upgrade;

proxy_set_header Connection upgrade;

}

#error_page 404 /404.html;

# redirect server error pages to the static page /50x.html

#

error_page 500 502 503 504 /50x.html;

location = /50x.html {

root /usr/share/nginx/html;

}

}
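A quick way to confirm that both proxy_pass lines now point at the gateway is to extract their ports and compare them with LINKIS_GATEWAY_PORT (9001 in this setup). This is a sketch; `proxy_ports` is a hypothetical helper that only understands `proxy_pass http://host:port` lines.

```shell
# Print the distinct upstream ports used by proxy_pass directives in an nginx config.
proxy_ports() {
  # $1 = nginx config file
  sed -n 's|^[[:space:]]*proxy_pass[[:space:]]*http://[^:/;]*:\([0-9][0-9]*\).*|\1|p' "$1" | sort -u
}

# Example: expect a single line equal to the gateway port,
# then validate the configuration and reload nginx:
#   proxy_ports /etc/nginx/conf.d/dss.conf
#   nginx -t && nginx -s reload
```

More than one line of output means the /ws and /api locations disagree about the backend port.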

In the browser, open http://192.168.0.120:8090/#/login and log in with hadoop/hadoop. Login succeeds, but once inside, many API calls return 404.

e. Fix the 404 errors

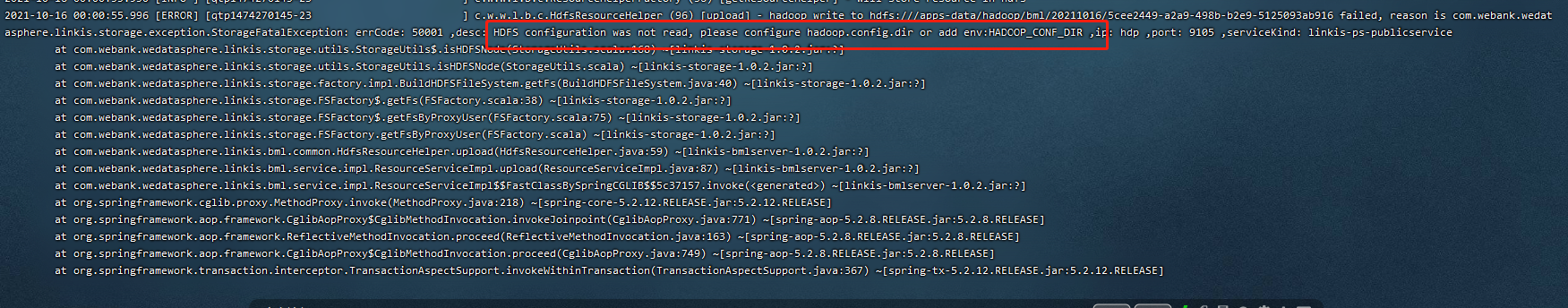

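The log /opt/linkis_dss/linkis/logs/linkis-ps-publicservice.log shows two situations that lead to the 404:

Case 1: the Hadoop configuration path was entered incorrectly during installation, which causes the error below.

Case 2: the hadoop user has no write permission on HDFS.

Solution: either of the following two options works.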

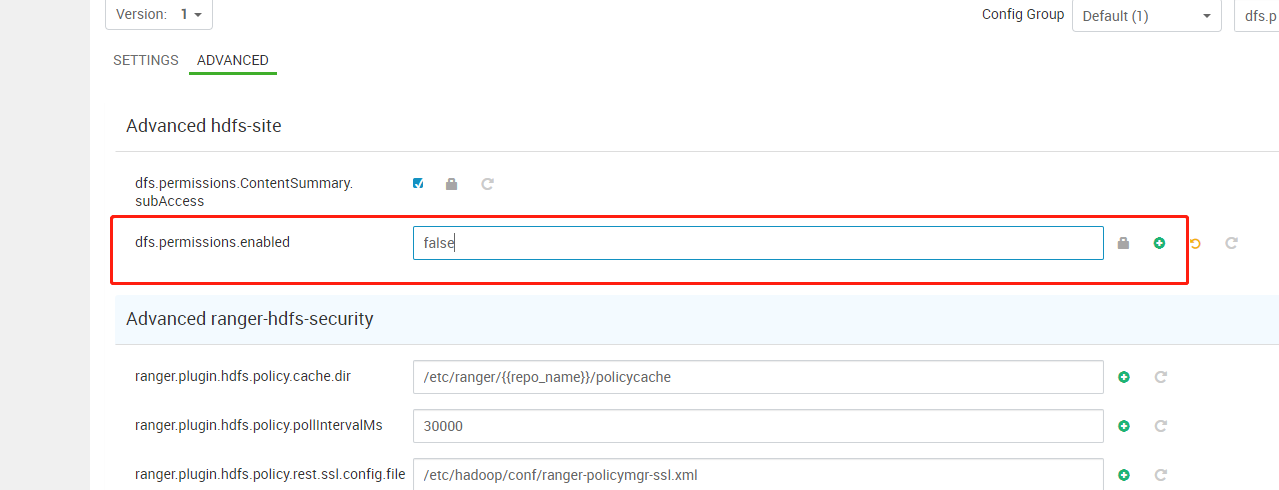

Option 1: disable HDFS permission checking

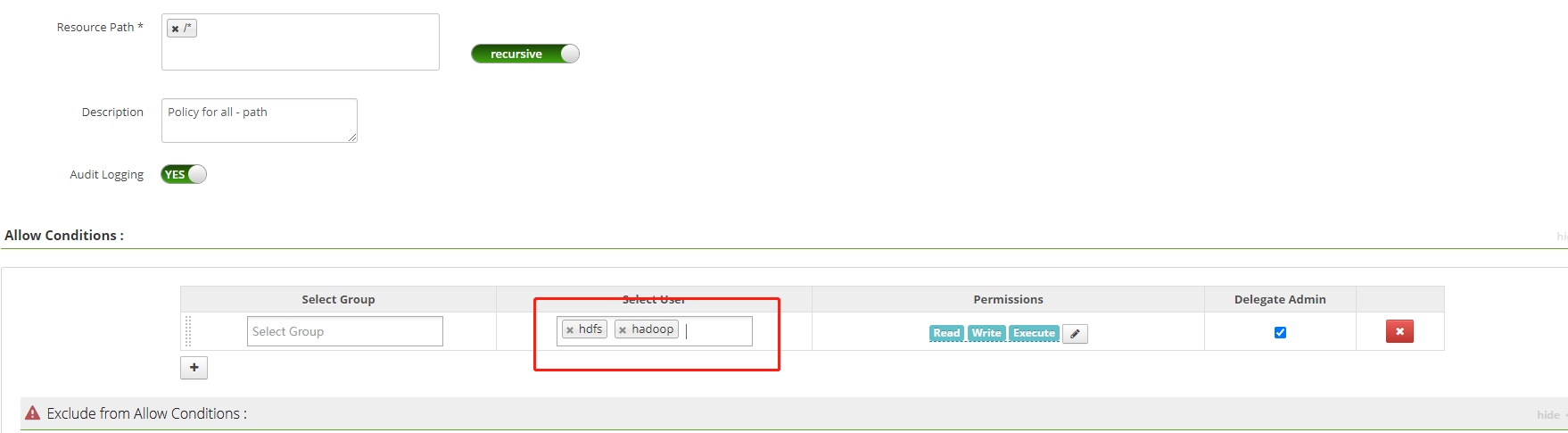

Option 2: use Ranger to grant the hadoop user write access to HDFS

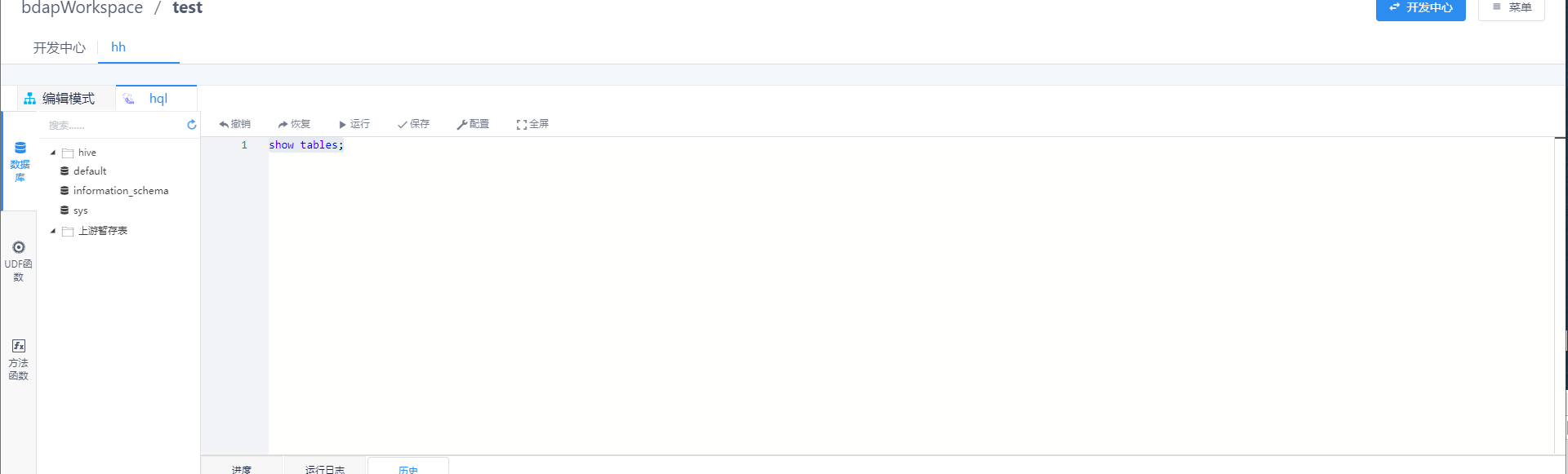
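Whichever option is chosen, the result can be confirmed by probing the HDFS path as the deployment user. The sketch below assumes the `hdfs` client is on PATH; `can_write_hdfs` and the probe file name are hypothetical.

```shell
# Succeed if the current user can create and delete a file under an HDFS directory.
can_write_hdfs() {
  # $1 = HDFS directory, for example hdfs:///tmp/linkis
  probe="$1/.linkis_write_probe_$$"
  hdfs dfs -touchz "$probe" && hdfs dfs -rm -skipTrash "$probe" >/dev/null
}

# Example (run as the hadoop user):
#   can_write_hdfs hdfs:///tmp/linkis && echo "writable"
```

The path in the example is the HDFS_USER_ROOT_PATH value from conf/config.sh.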

然后重新启动所有微服务后,登录进去,一顿操作猛如虎,终于看见胜利的曙光了。

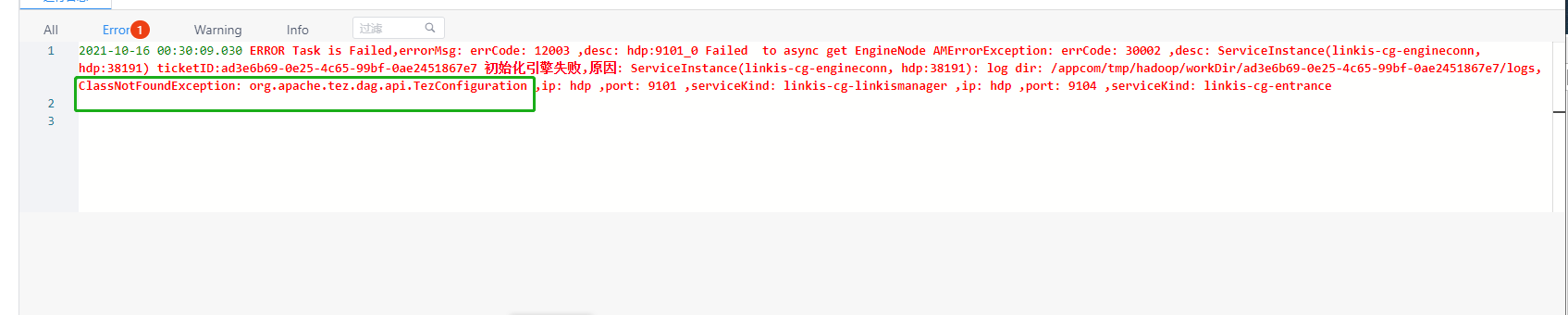

但是,高兴似乎有点太早,会出现tez相关的类找不到

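f. Adapt to the Tez engine

Earlier we modified hive-site.xml and kept it outside the default HDP Hive configuration path. The main reason was to adapt to the Tez engine. If we start things as they are now, an exception is thrown saying that a Tez-related class cannot be found.

The Tez jars are missing, so copy the Tez jar files from HDP into /opt/linkis_dss/linkis/lib/linkis-engineconn-plugins/hive/dist/v3.1.0.3.1.4.0_315/lib: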

cp /usr/hdp/3.1.4.0-315/tez/tez-*.jar /opt/linkis_dss/linkis/lib/linkis-engineconn-plugins/hive/dist/v3.1.0.3.1.4.0_315/lib/

Then simply restart the Hive engine:

sh /opt/linkis_dss/linkis/sbin/linkis-daemon.sh restart cg-engineplugin

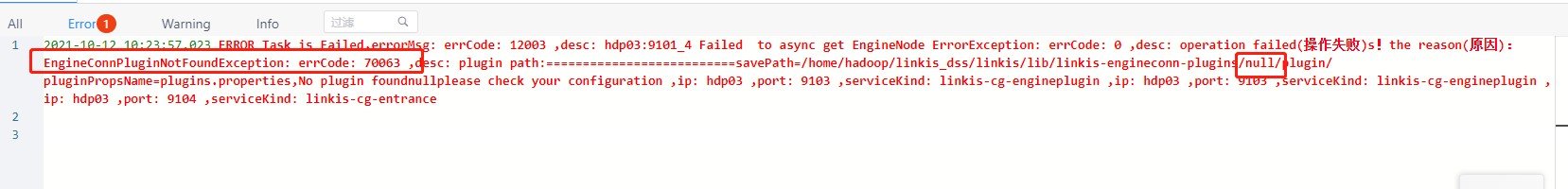

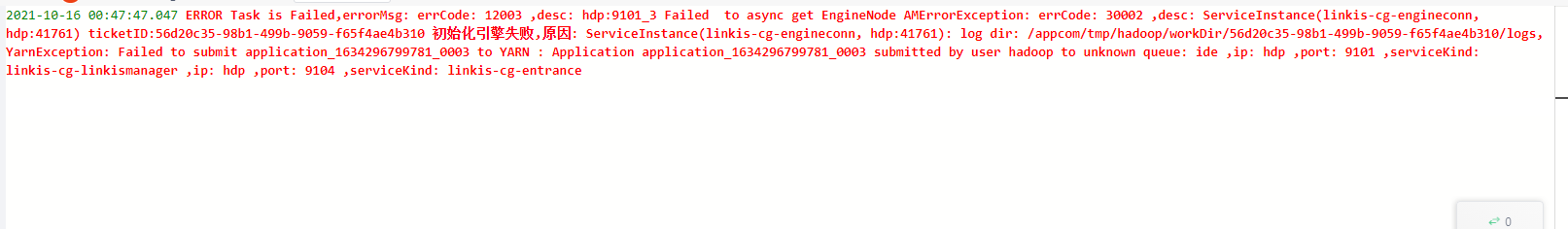

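At this point you might think everything is finally fine, but after running a SQL statement, success is still one step away. The error is as follows: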

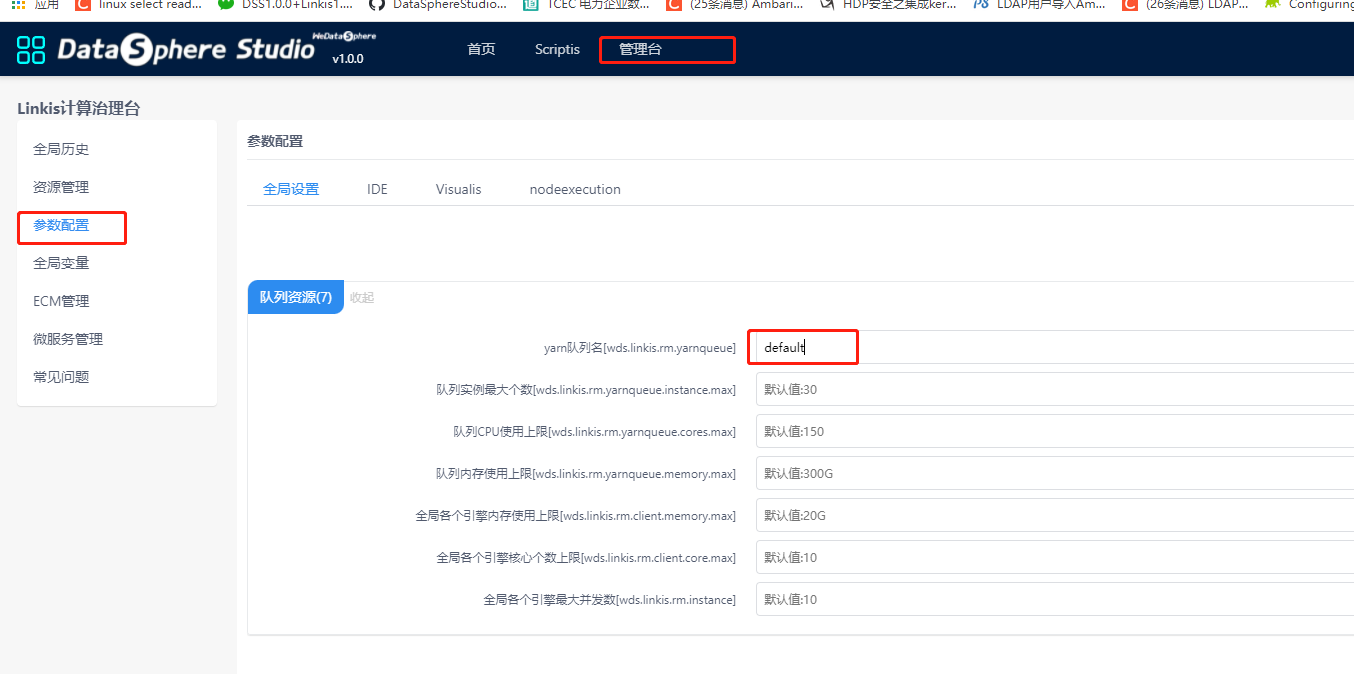

This error occurs because the platform's default YARN queue is ide, but YARN has no such queue; YARN's default queue is default. It can be changed as follows:

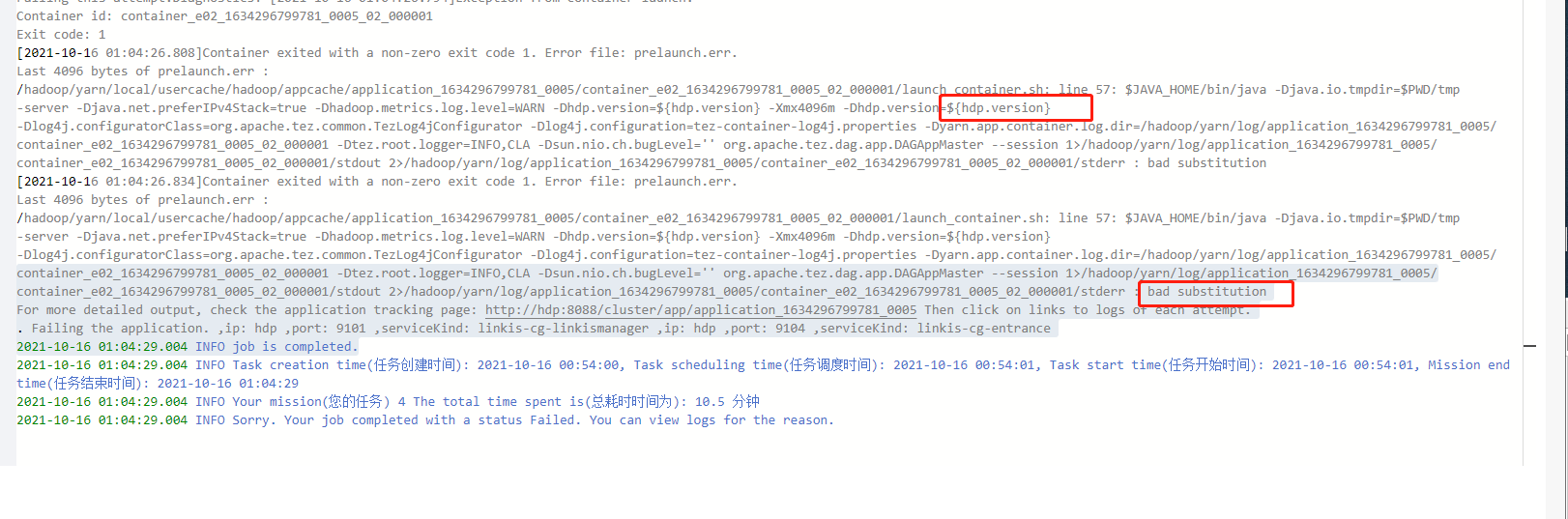

Running again produces the error below, because YARN cannot resolve the ${hdp.version} parameter.

g. YARN cannot resolve ${hdp.version}

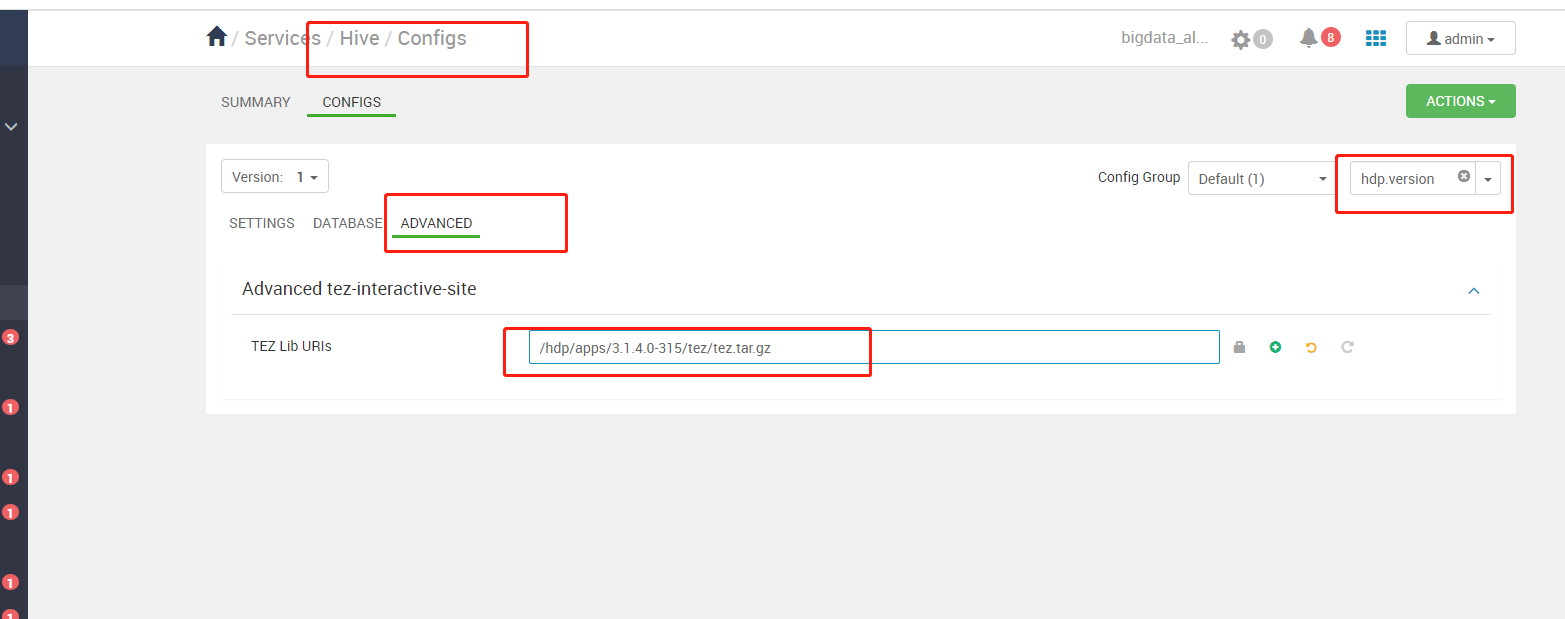

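In Ambari, replace every ${hdp.version} in the Tez, Hive, YARN, and MapReduce configurations with 3.1.4.0-315.

Hive configuration

Tez configuration

Apply the same change to YARN and MapReduce. Once that is done, copy the HDP Hive configuration files to /opt/linkis_dss/hiveconf again and re-add the Tez-related settings: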

cp /etc/hive/conf/* /opt/linkis_dss/hiveconf

vi /opt/linkis_dss/hiveconf/hive-site.xml

Add the following configuration:

<property>

<name>tez.lib.uris</name>

<!-- replace ${hdp.version} below with your own version, for example 3.1.4.0-315 -->

<value>/hdp/apps/${hdp.version}/tez/tez.tar.gz</value>

</property>

<property>

<name>hive.tez.container.size</name>

<value>10240</value>

</property>
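For the local copy under /opt/linkis_dss/hiveconf, the placeholder can also be replaced in one pass instead of by hand. This is a sketch only; `pin_hdp_version` is a hypothetical helper, and it rewrites the file in place, so keep a backup if in doubt.

```shell
# Replace every literal ${hdp.version} in a file with a concrete version string.
pin_hdp_version() {
  # $1 = file to rewrite, $2 = version, for example 3.1.4.0-315
  tmp="$1.tmp.$$"
  sed 's|\${hdp\.version}|'"$2"'|g' "$1" > "$tmp" && mv "$tmp" "$1"
}

# Example:
#   pin_hdp_version /opt/linkis_dss/hiveconf/hive-site.xml 3.1.4.0-315
```

This only touches the copied file; the values managed by Ambari still have to be changed in Ambari as described above.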

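h. Remove the Atlas configuration from hive-site.xml (skip this if Atlas is not installed)

Find the Atlas-related properties in /opt/linkis_dss/hiveconf/hive-site.xml and delete them; if they are left in place, an error occurs.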

Then restart the Hive engine:

sh /opt/linkis_dss/linkis/sbin/linkis-daemon.sh restart cg-engineplugin

At this point Hive is fully working.

——————————————————————————————————————————————————————

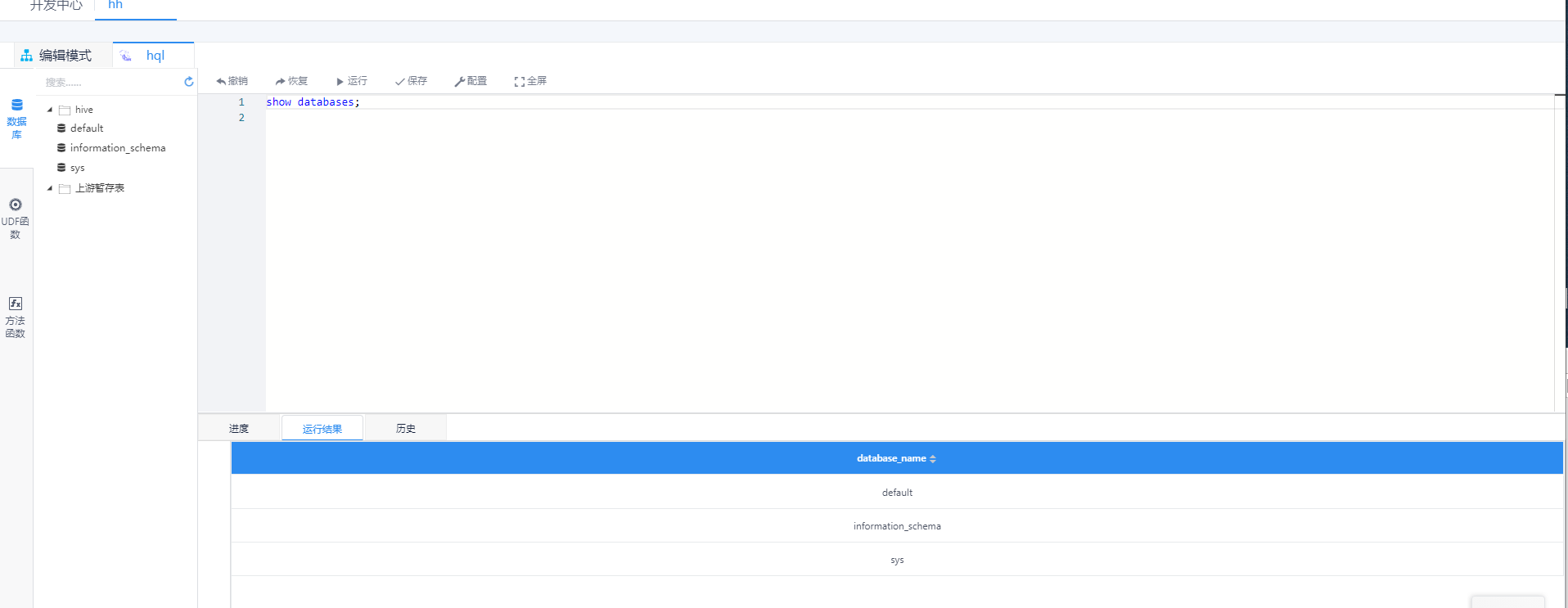

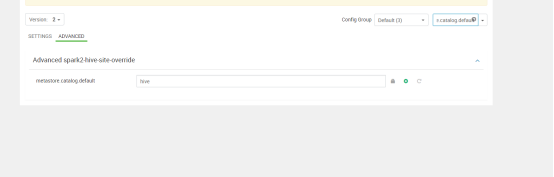

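i. Verify Spark

By default, HDP Spark does not share table metadata with Hive, so tables and databases you created will not be visible when running the Spark engine. Changing one setting in HDP and restarting fixes this:

Change metastore.catalog.default=spark to metastore.catalog.default=hive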

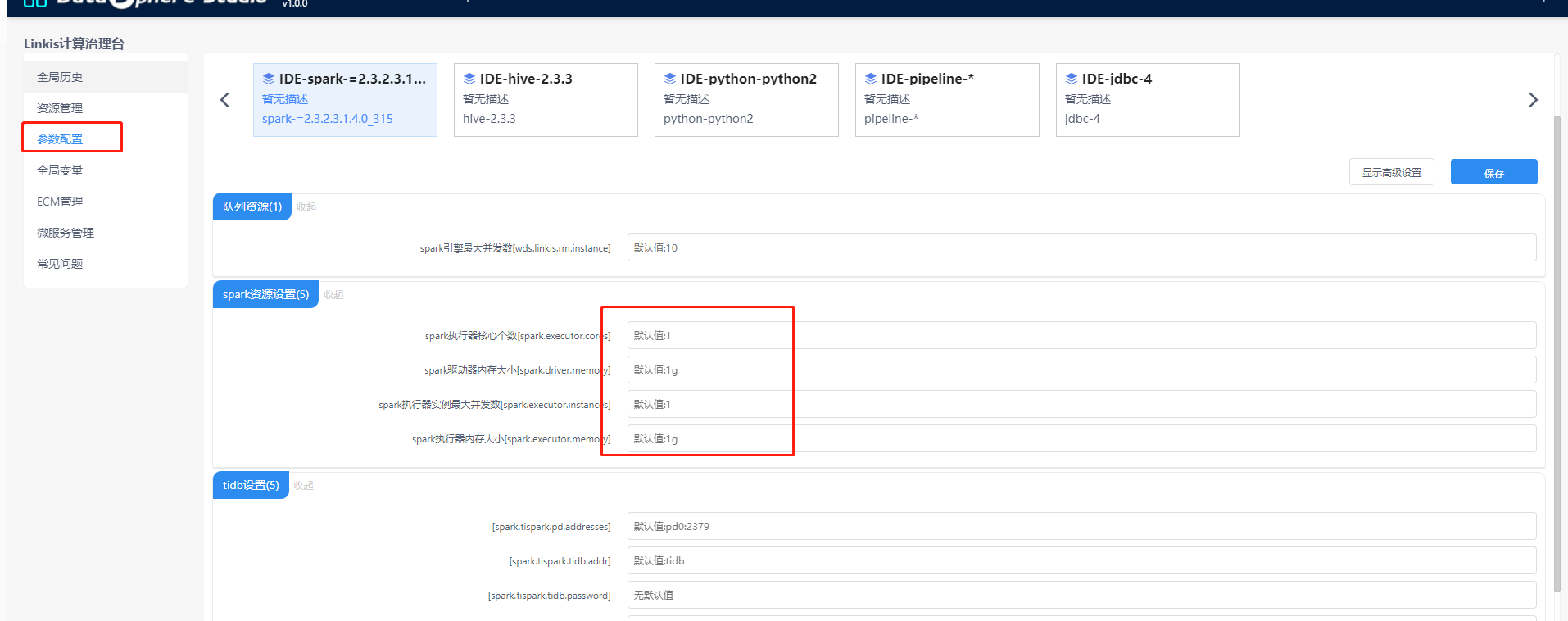

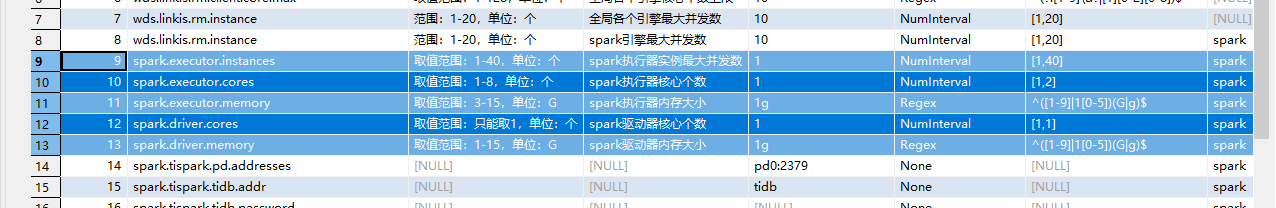

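j. Change the default resource sizes (for reference when resources are insufficient)

The resource sizes of the Linkis execution engines are configured in the linkis_ps_configuration_config_key table. When resources are insufficient, lower the default and minimum values there, or adjust the corresponding validation regular expressions.

You can also clean up the resource records or request smaller resources.

Alternatively, disable the check by setting this in the linkismanager service: wds.linkis.manager.rm.request.enable=false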

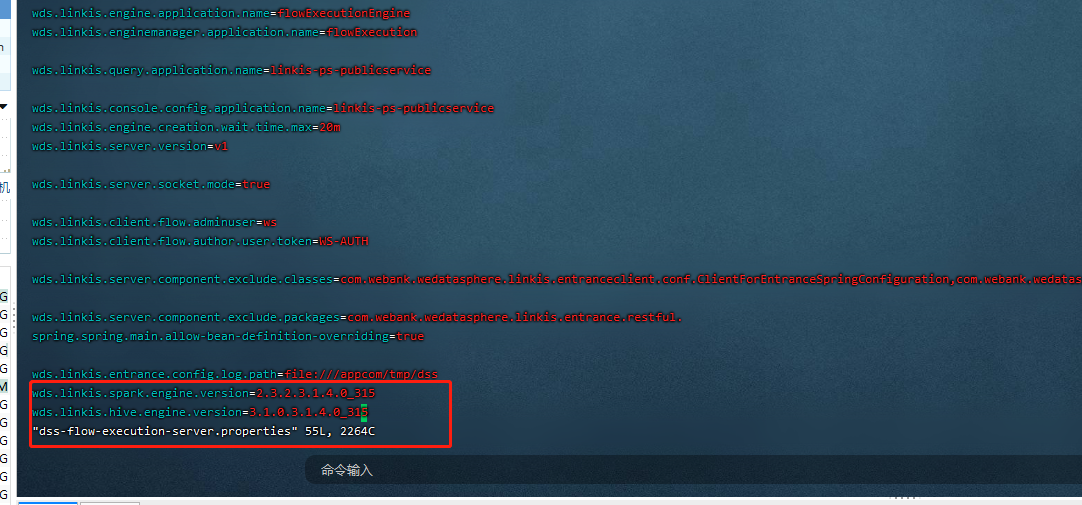

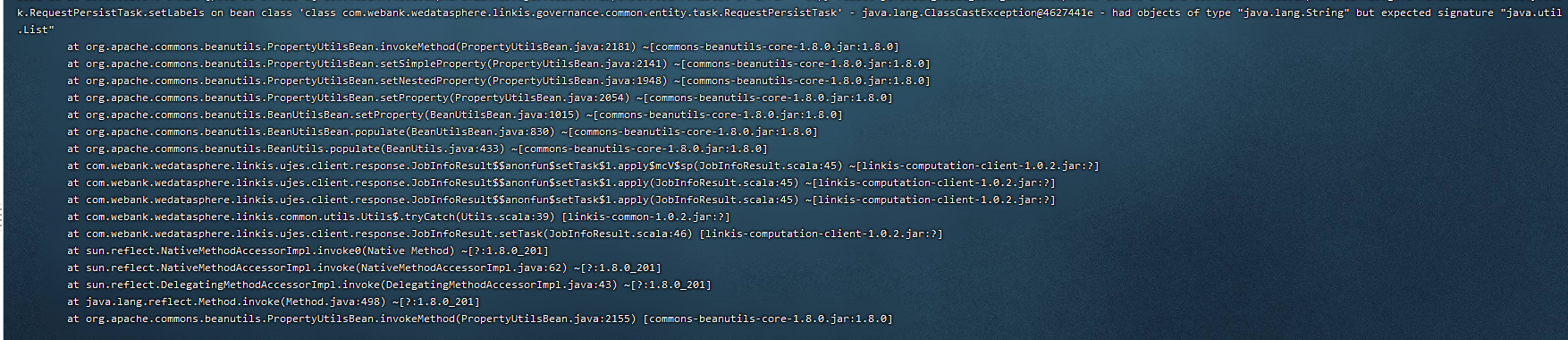

k. Running a whole workflow fails with a "plugin not found" error

vi /opt/linkis_dss/dss/conf/dss-flow-execution-server.properties

Add the following two settings:

wds.linkis.spark.engine.version=2.3.2.3.1.4.0_315

wds.linkis.hive.engine.version=3.1.0.3.1.4.0_315
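Property edits like these are easy to apply twice by accident when a deployment is repeated. The sketch below adds a key if it is missing and updates it otherwise. `set_property` is a hypothetical helper; it treats dots in the key as regex wildcards and assumes the value contains no `|` or `&` characters.

```shell
# Set key=value in a .properties file: update the line if the key exists, else append.
# Limitation: the value must not contain "|" or "&".
set_property() {
  # $1 = properties file, $2 = key, $3 = value
  if grep -q "^$2=" "$1"; then
    tmp="$1.tmp.$$"
    sed "s|^$2=.*|$2=$3|" "$1" > "$tmp" && mv "$tmp" "$1"
  else
    printf '%s=%s\n' "$2" "$3" >> "$1"
  fi
}

# Example:
#   f=/opt/linkis_dss/dss/conf/dss-flow-execution-server.properties
#   set_property "$f" wds.linkis.spark.engine.version 2.3.2.3.1.4.0_315
#   set_property "$f" wds.linkis.hive.engine.version 3.1.0.3.1.4.0_315
```

The affected DSS service needs a restart before the new values take effect.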